1. Introduction

Returning straw to the field is a highly effective conservation tillage measure because it prevents the environmental pollution and resource waste caused by straw burning [1]. Moreover, it contributes significantly to maintaining soil moisture, improving soil structure, increasing organic matter content, and promoting robust crop growth. The practice of incorporating straw into the field plays a pivotal role in environmental protection and the sustainable development of modern agriculture. Under suitable soil, water, air, and temperature conditions, conservation tillage with straw incorporation can enhance surface activity in the soil while reducing weed growth [2,3]. When corn straw is returned to the field, numerous nutrients are released that can partially substitute for chemical fertilizers [4]. This not only enhances crop yields but also improves the soil environment by decreasing the reliance on fertilizers [5,6,7]. The straw coverage rate is an important consideration when determining no-tillage levels and subsidies for straw incorporation [8]. Excessive residue left in fields may adversely affect the germination of soybean seedlings in subsequent seasons. Furthermore, growers commonly perceive returning straw as a time-consuming and labor-intensive task, which makes the practice difficult to promote. To encourage growers to participate in straw returning, Heilongjiang Province (in northern China) has implemented a land protection policy [9], which includes a subsidy based on the measured straw coverage rate. In this context, the detection and supervision of straw incorporation into the field have been put in place. The precise identification of straw morphology in the field can serve as a foundation for the swift assessment of corn straw coverage, which plays a pivotal role in facilitating straw return to the field.

The traditional methods of detecting the straw coverage rate rely primarily on manual techniques, such as the pull-string method [10] and the manual estimation method [11]. Because these methods depend heavily on manual execution, they are time-consuming and labor-intensive, leading to low testing efficiency and high costs, which in turn hampers the overall development of straw coverage testing. UAV low-altitude remote sensing (UAV-LARS) technology offers many benefits for crop information collection, including enhanced aerial coverage, accurate and real-time data acquisition, and rapid data transmission [12]. It is widely used to detect crop coverage [13,14,15,16]. With the increasing popularity of machine vision recognition technology, the use of image-processing methods to identify straw and detect straw coverage has become a prominent research area. Gausman et al. [17] first used multi-spectral scanner (MSS) images to distinguish between the spectral characteristics of soil and straw. Muhammad et al. [18] utilized multi-temporal satellite remote sensing data from Landsat-7 (ETM+) and Landsat-8 (OLI/TIRS) to assess straw coverage. The coefficient of determination (R²) between the predicted and measured values of straw coverage was 0.86. Precision agriculture demands the accurate delineation of crop fields at an agricultural scale, which is a significant challenge for satellite remote sensing because of data quality, atmospheric interference, and modeling methods. Despite its advantages, such as extensive coverage and high efficiency, these constraints limit satellite remote sensing and result in low accuracy.

The traditional pull-string method and manual estimation are time-consuming and laborious in detecting straw coverage, while the timeliness of satellite remote sensing images is inadequate and the resolution of the extracted images is insufficient for accurate discrimination between straw and soil. Therefore, it can be concluded that all of these methods have inherent limitations.

To address these problems, researchers have used conventional image-processing techniques for straw recognition. Yu et al. [19] addressed the sensitivity of straw recognition accuracy to illumination and soil color by proposing a method that recognizes straw-covered images using the support vector machine (SVM) algorithm and a grayscale transformation function. However, this method can easily misclassify background soil as straw, depending on the parameters selected for the prediction function during SVM training. Li et al. [20] combined the fast Fourier transform (FFT) and SV algorithms to achieve the automatic recognition of straw coverage, with an average error rate of 4.55%. Wang et al. [21] combined the Sauvola and Otsu algorithms to address the inaccurate recognition of straw images caused by thin straws. They also conducted field experiments, and the results showed a straw coverage detection error rate of 3.6%. Although conventional image-processing methods can address factors such as illumination and soil color in straw image recognition, they are susceptible to inconsistent collection angles and inaccurate image capture. These techniques also could not isolate chopped straw from complex field backgrounds.
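To make the thresholding idea concrete, the following is a minimal NumPy sketch of Otsu's method applied to a grayscale field image, followed by a pixel-ratio coverage estimate. It is an illustration only, not the implementation used in the cited studies. The function names `otsu_threshold` and `straw_coverage` are hypothetical, and the sketch assumes 8-bit input and that straw appears brighter than soil.

```python
import numpy as np


def otsu_threshold(gray: np.ndarray) -> int:
    """Return the 8-bit threshold t that maximizes between-class variance.

    Pixels <= t form one class and pixels > t form the other.
    Assumes `gray` is a uint8 array.
    """
    hist = np.bincount(gray.ravel(), minlength=256).astype(np.float64)
    prob = hist / hist.sum()
    omega = np.cumsum(prob)                  # weight of the lower class for each t
    mu = np.cumsum(prob * np.arange(256))    # cumulative first moment for each t
    mu_total = mu[-1]
    denom = omega * (1.0 - omega)
    valid = denom > 0                        # skip thresholds that leave a class empty
    sigma_b = np.zeros(256)
    sigma_b[valid] = (mu_total * omega[valid] - mu[valid]) ** 2 / denom[valid]
    return int(np.argmax(sigma_b))


def straw_coverage(gray: np.ndarray) -> float:
    """Fraction of pixels classified as straw (assumption: straw is brighter than soil)."""
    mask = gray > otsu_threshold(gray)
    return float(mask.mean())


# Synthetic example: 40 bright "straw" pixels on a darker "soil" background of 100 pixels.
field = np.full((10, 10), 50, dtype=np.uint8)
field[:4, :] = 200
coverage = straw_coverage(field)
```

Because a single global threshold depends on the image histogram, changes in acquisition angle or lighting shift the result, which is consistent with the sensitivity described above.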

Although these methods improve on traditional manual detection and satellite remote sensing, other challenges remain. For instance, the SV and FFT algorithms generally lack robust generalization ability and struggle to accurately identify finely chopped straw in complex backgrounds. The Otsu thresholding algorithm, on the other hand, can effectively segment straw; however, it is susceptible to variations in acquisition angle and equipment and requires feature engineering for proper characterization. Manual feature extraction is time-consuming and fails to capture high-level image features, which limits its accuracy.

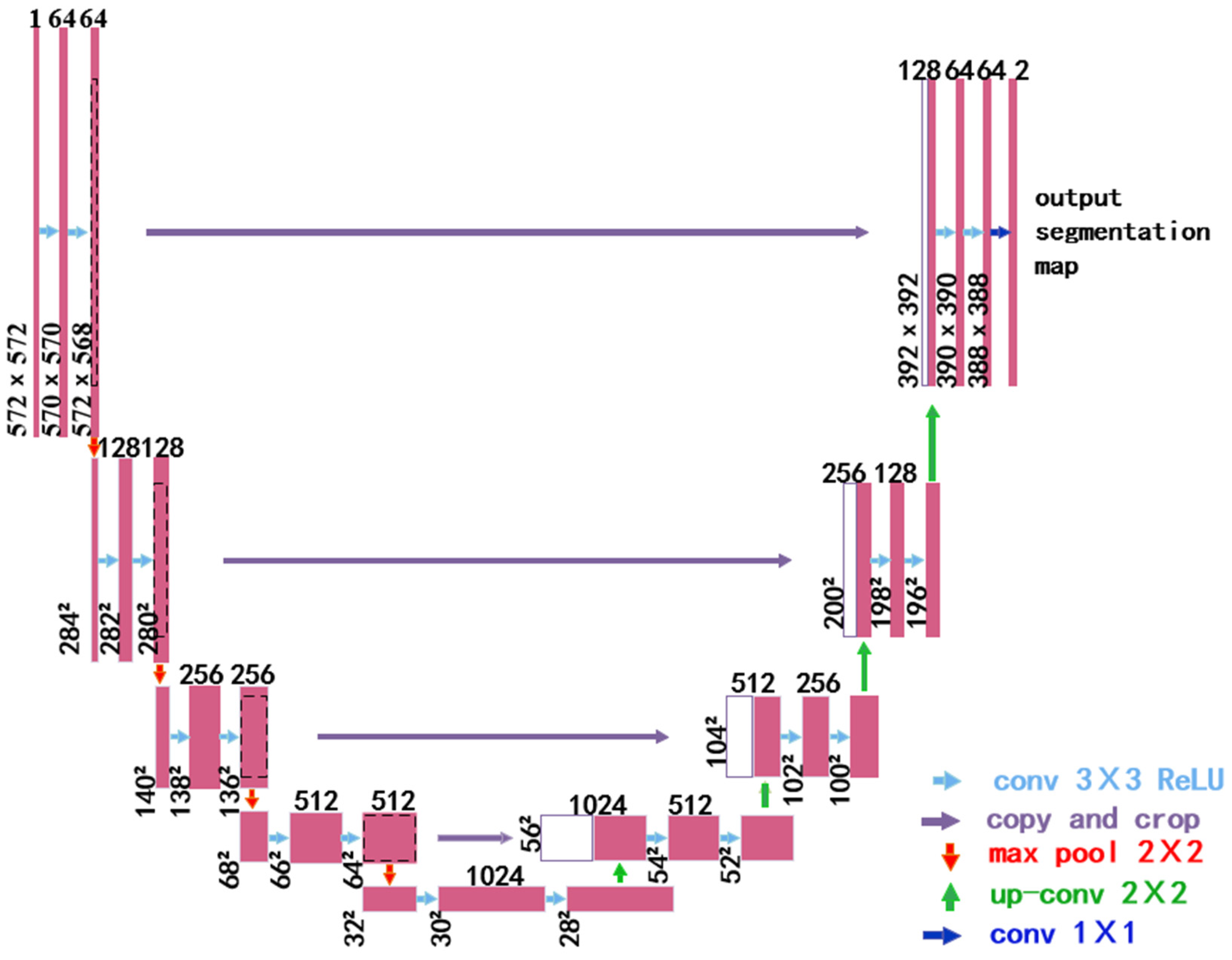

In recent years, scholars have employed various devices to capture images of straw and have studied straw coverage detection using deep learning techniques. For example, the enhanced U-Net model proposed by Ma et al. [22] addresses the challenges of light interference, soil color changes, and shadow effects in traditional straw image recognition. They employed vehicle-mounted monitoring equipment to collect straw images and used a feature pyramid network to enhance the extraction of straw features, achieving a mean intersection over union (mIoU) of 84.78% on the straw dataset; however, the images collected by the vehicle-mounted camera are distorted by inconsistent shooting angles. Zhou et al. [23] applied the UAV-LARS technique to capture images and proposed segmenting straw images with an improved ResNet18-U-Net semantic segmentation model. The algorithm achieves an average segmentation accuracy of 93.70%, but the images suffer from imprecise cropping. Liu et al. [24] proposed the large-scale collection of straw images using UAVs. They used a multi-threshold differential gray wolf optimization algorithm to enhance the precision of straw coverage detection, achieving a detection error rate of less than 8%; however, the algorithm places high demands on image quality and hardware, making it suitable only for detecting large areas of straw coverage. These studies suggest that using diverse devices to acquire straw data and deep learning algorithms to detect straw coverage significantly enhances segmentation accuracy. Nevertheless, drawbacks remain, such as the influence of inconsistent acquisition angles on straw segmentation and limited applicability to other field sizes.
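Since the studies above report segmentation quality as an intersection-over-union score, a short sketch of how a mean IoU can be computed from label masks may help. This is a generic formulation and not the evaluation code of any cited work. The function name `mean_iou` and the label convention (0 for soil, 1 for straw) are assumptions made for illustration.

```python
import numpy as np


def mean_iou(pred: np.ndarray, target: np.ndarray, num_classes: int = 2) -> float:
    """Average the per-class intersection over union across classes.

    Classes absent from both prediction and target are skipped.
    """
    ious = []
    for c in range(num_classes):
        p = pred == c
        t = target == c
        union = np.logical_or(p, t).sum()
        if union == 0:
            continue
        ious.append(np.logical_and(p, t).sum() / union)
    if not ious:
        raise ValueError("no class from range(num_classes) is present in the masks")
    return float(np.mean(ious))


# Assumed label convention: 0 = soil, 1 = straw.
target = np.array([[1, 1], [0, 0]])
pred = np.array([[1, 0], [0, 0]])
score = mean_iou(pred, target)  # soil IoU = 2/3, straw IoU = 1/2
```

Averaging over classes keeps the score from being dominated by the majority class, which matters when fine straw occupies only a small share of the pixels.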

In summary, an analysis of existing research on straw coverage detection and image segmentation shows that traditional image segmentation algorithms have limited accuracy. Moreover, they are susceptible to lighting conditions and low-resolution image acquisition, which hinders the accurate extraction of soil and straw from complex backgrounds. In contrast, deep learning algorithms demonstrate high accuracy and enable the automatic extraction of intricate features from straw images even against challenging backgrounds. The use of local receptive fields and weight sharing also reduces the number of parameters during model training. UAV platforms offer new opportunities for the efficient, high-throughput detection of straw coverage. UAV low-altitude remote sensing allows images to be acquired quickly and without the shooting-angle inconsistencies of other platforms. Combining these technologies enables faster and more accurate assessment of straw coverage. Therefore, this study combines UAV low-altitude remote sensing with deep learning to measure straw coverage accurately. Furthermore, an extensive search of related studies found no articles on detecting straw coverage in conservation tillage using UAVs or VGG19 with U-Net models, which highlights the significance and novelty of the topic addressed by this study.

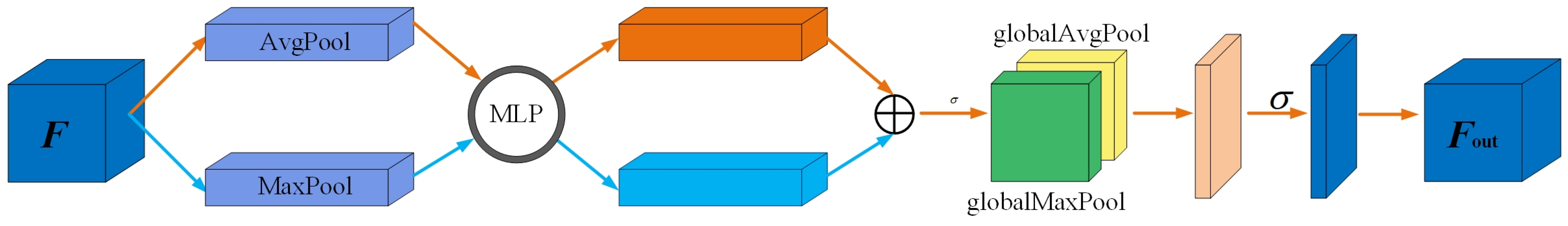

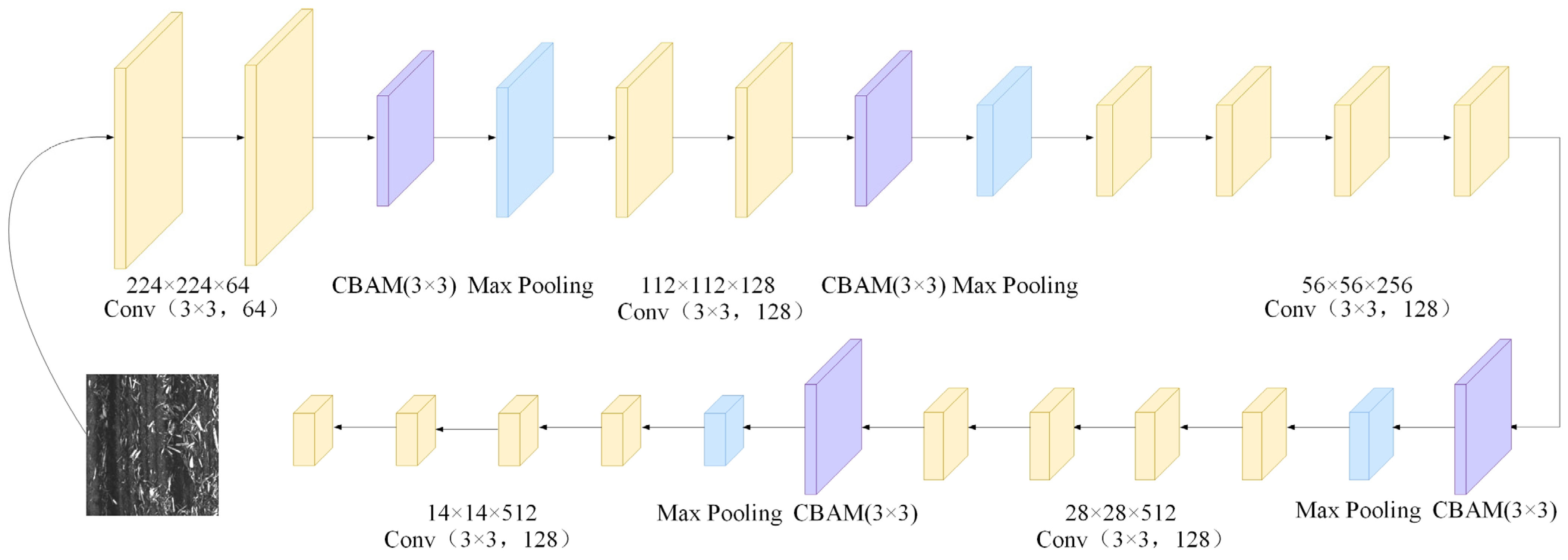

The primary objective of this study is to accurately extract corn stover from the captured images and to achieve the precise segmentation of fine straw. Additionally, it aims to assess the quality of straw segmentation and provide a more accurate basis for measuring the degree of no-tillage straw return in conservation tillage and determining subsidies for straw return. Building upon previous research, this paper uses UAVs as the data acquisition platform and corn fields as the research subject. High-definition visible-light images of corn stover cover are captured, followed by data enhancement and other operations to obtain ultra-high-resolution stover maps. Furthermore, a novel image segmentation method for field corn stover is proposed. This method enhances the U-Net model by replacing the encoder with a VGG19 backbone through transfer learning and adding an attention mechanism, thereby improving the recognition and segmentation of fine straw. The Focal-Dice Loss function is selected, along with network optimization techniques, to achieve cost-effective and highly accurate recognition and segmentation of field corn stover and fine straw. These advancements offer new perspectives on segmenting and extracting corn stover in large fields while also providing reference material and technical support for improving the efficiency of straw coverage detection in conservation tillage.
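As a rough illustration of a combined focal and Dice objective, the sketch below implements a binary version in NumPy. The exact formulation used in this study is not given in this section, so the equal weighting, the `gamma` and `alpha` defaults, the smoothing constant, and all function names are assumptions rather than the authors' settings.

```python
import numpy as np


def focal_loss(prob, target, gamma=2.0, alpha=0.25, eps=1e-7):
    """Binary focal loss: down-weights pixels that are already classified well."""
    prob = np.clip(prob, eps, 1.0 - eps)
    p_t = np.where(target == 1, prob, 1.0 - prob)       # probability of the true class
    alpha_t = np.where(target == 1, alpha, 1.0 - alpha)  # class-balancing weight
    return float(np.mean(-alpha_t * (1.0 - p_t) ** gamma * np.log(p_t)))


def dice_loss(prob, target, smooth=1.0):
    """Soft Dice loss: one minus the overlap ratio between prediction and label."""
    intersection = np.sum(prob * target)
    dice = (2.0 * intersection + smooth) / (np.sum(prob) + np.sum(target) + smooth)
    return float(1.0 - dice)


def focal_dice_loss(prob, target, weight=0.5):
    """Weighted sum of the two terms; the 0.5 weight is an illustrative assumption."""
    return weight * focal_loss(prob, target) + (1.0 - weight) * dice_loss(prob, target)


# Label mask (1 = straw) with a perfect and a fully inverted prediction.
label = np.array([[1.0, 0.0], [0.0, 1.0]])
loss_good = focal_dice_loss(label.copy(), label)
loss_bad = focal_dice_loss(1.0 - label, label)
```

The focal term emphasizes hard pixels such as thin straw edges, while the Dice term counteracts the imbalance between straw and soil pixels, which is the usual motivation for pairing the two.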

5. Conclusions

The identification and segmentation of corn stalks in the field are crucial for meeting the technical requirements of reduced tillage and qualifying for subsidies related to returning crop residues. Numerous researchers have utilized machine learning, deep learning, and other techniques to extract features from images; however, there is a lack of research focusing on using deep learning techniques to segment fragmented stalks. Most existing methods for stalk segmentation suffer from certain drawbacks that hinder the accurate identification and segmentation of fragmented stalks. The latest research conducted by scholars [

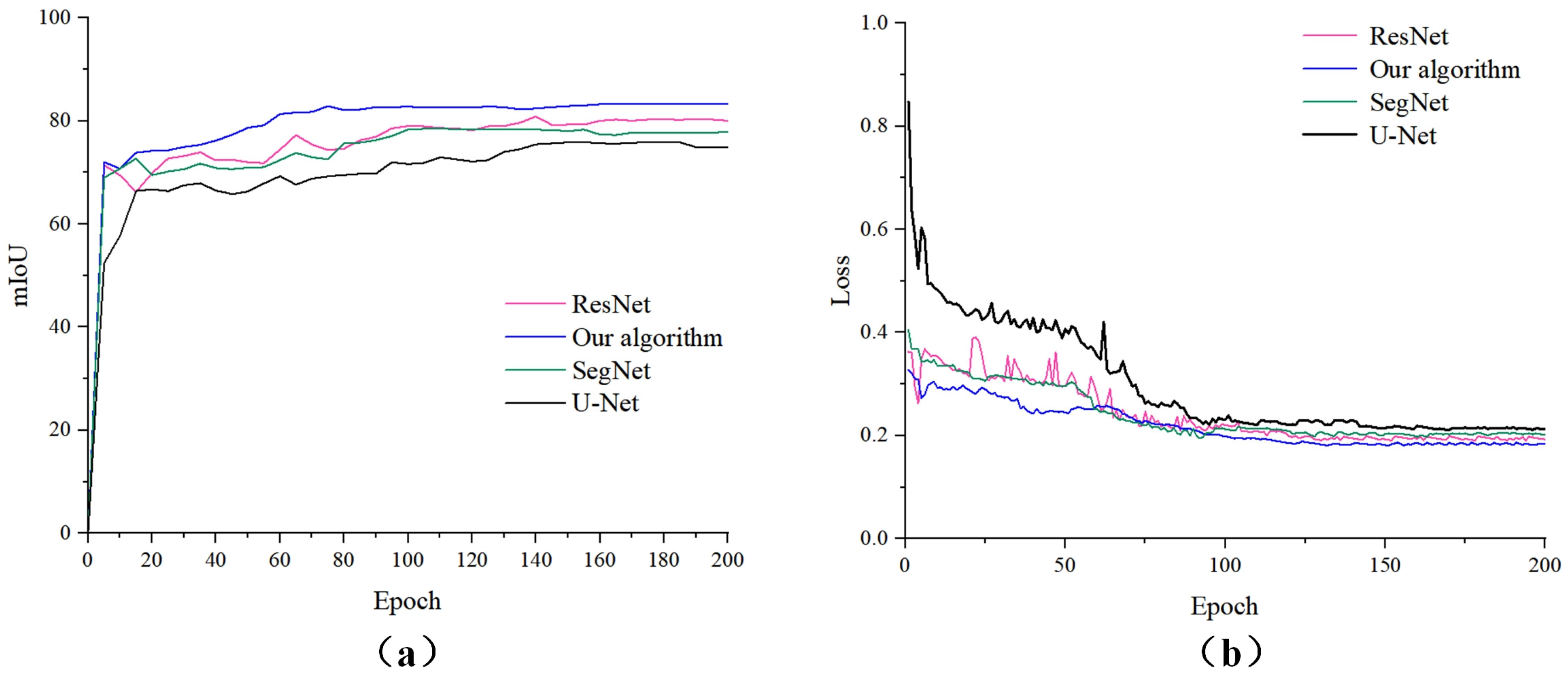

22] involved the utilization of onboard cameras and deep learning algorithms for straw coverage detection. To enhance the capability of extracting straw features, they replaced the U-Net encoder part with a ResNet34 structure. On a dataset consisting of straw images captured by onboard cameras, our algorithm achieved an mIoU performance of 84.78%, which is comparable to the results obtained in their study on straw segmentation; however, during the process of dividing fine straw, the camera acquisition angle is easily influenced by the forward movement of the locomotive, resulting in a substantial number of non-farmland scenes. Consequently, this hampers the effectiveness of extracting straw data and necessitates the subsequent manual removal of irrelevant background elements, such as the sky and ground, leading to a significant workload. In a study conducted by scholars [

23], images capturing farmland straw under conservation tillage were obtained using a low-altitude UAV. They developed an improved U-Net (ResNet18-UNet) semantic segmentation algorithm for straw cover without incorporating any additional optimization modules. Nevertheless, this algorithm proved ineffective in accurately distinguishing fine straw from soil amidst complex backgrounds and failed to achieve accurate extraction with an mIoU performance segmentation rate of 81.04%. Our algorithm surpasses theirs. Therefore, based on a comprehensive review of scholarly research, we propose a novel U-Net straw segmentation method. Our innovative approach to straw segmentation encompasses several key aspects: Firstly, unlike conventional data acquisition devices such as satellite remote sensing, airborne cameras, or handheld cameras that are susceptible to factors like shooting angles, this study employs low-altitude remote sensing technology UAVs for the precise and high-resolution collection of straw data in farmland. Secondly, previous studies have utilized methods such as BP neural networks and threshold segmentation for segmenting collected straw data within the realm of deep learning models; however, these approaches suffer from lengthy training times and limited generalization capabilities when it comes to accurate straw segmentation. In contrast with these methodologies, our paper leverages the U-Net model as the foundation network while integrating the latest VGG19 network into the encoding section of the U-Net model to reduce training parameters and incorporate CBAM modules. This not only enables our algorithm to focus on global features but also significantly enhances its ability to extract fine-grained straws from complex backgrounds. Thirdly, prior segmentation models merely specified input data sizes without comparing their impact on model training results across different sizes. 
To establish universally applicable data sizes for various models, this study selects three distinct dimensions: 128 × 128 pixels, 256 × 256 pixels, and 512 × 512 pixels. Furthermore, the influence of these dimensions on segmentation accuracy during the model training process is thoroughly examined and discussed. Fourthly, all convolutional kernel sizes in our model are set at a size of 3 × 3, which optimizes network design further, enhancing both the generalization ability of our model and the accuracy of straw segmentation.
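To illustrate how a large aerial image could be prepared at the three input sizes compared in this study, the sketch below cuts an image into non-overlapping square tiles. It is a hypothetical preprocessing helper, not the authors' pipeline. The name `tile_image`, the zero padding at the bottom and right edges, and the 300 × 500 example image are assumptions.

```python
import numpy as np


def tile_image(image: np.ndarray, tile: int) -> np.ndarray:
    """Split an (H, W) or (H, W, C) image into non-overlapping tile x tile patches.

    The image is zero-padded on the bottom and right so that both sides become
    multiples of `tile`. Patches are returned in row-major order.
    """
    h, w = image.shape[:2]
    pad_h = (-h) % tile
    pad_w = (-w) % tile
    pad_spec = [(0, pad_h), (0, pad_w)] + [(0, 0)] * (image.ndim - 2)
    padded = np.pad(image, pad_spec, mode="constant")
    rows = padded.shape[0] // tile
    cols = padded.shape[1] // tile
    extra = padded.shape[2:]
    # Separate the row and column indices into (block, offset) pairs, then group blocks.
    blocks = padded.reshape(rows, tile, cols, tile, *extra).swapaxes(1, 2)
    return blocks.reshape(rows * cols, tile, tile, *extra)


# Hypothetical 300 x 500 single-channel image whose pixel value encodes its position.
image = np.arange(300 * 500, dtype=np.int32).reshape(300, 500)
tiles_by_size = {size: tile_image(image, size) for size in (128, 256, 512)}
```

Smaller tiles yield more training samples with less spatial context per sample, while larger tiles retain context at a higher memory cost, which is one reason the choice of input size can influence segmentation accuracy.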

The original U-Net model is enhanced in four aspects: the encoder, attention mechanism, loss function, and network optimization. This algorithm effectively segments and extracts fragmented stalks from complex field images. The main conclusions are as follows:

- (1) To address the issue of the inaccurate segmentation of straw images caused by shadows and the challenges in identifying fragmented straws due to varying shooting angles during data collection, we propose utilizing a transfer learning approach. This method replaces the encoding phase of the original U-Net architecture with the first five layers of the VGG19 backbone network. Additionally, we integrate the CBAM convolutional attention mechanism and optimize the entire network using the Focal-Dice Loss function. This enhancement focuses on segmenting fine-grained straw edge details, reducing parameters and computational complexity, and improving the accuracy of segmenting corn straws in complex backgrounds.

- (2) During the training process, the model demonstrates the expected performance when the input data size is 256 × 256. Compared to the other three algorithms, this algorithm demonstrates superior performance in corn stalk segmentation tasks. Consequently, our algorithm is suitable for segmenting the stalks of various crops and other tasks where there is minimal distinction between the foreground and background.

- (3) The straw segmentation algorithm advanced in this study fulfills the processing requirements for capturing aerial images of straw coverage; however, it is exclusively designed for the low-altitude, small-scale, and high-precision detection of straw coverage, making it unsuitable for large-scale detection.

- (4) Our algorithm serves as a technical reference for detecting the straw coverage rate of corn and other crops in the field, and it provides ideas for further enhancing the U-Net model.
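The CBAM attention mechanism named in conclusion (1) can be sketched in NumPy as a channel attention step followed by a spatial attention step. This forward pass is for illustration only: the weights `w1`, `w2`, and `kernel` are untrained placeholders, the reduction ratio and 7 × 7 kernel size are assumed defaults, and the code is not taken from the model trained in this study.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def channel_attention(x, w1, w2):
    """Reweight channels of x (C, H, W) using a shared two-layer MLP.

    w1 has shape (C // r, C) and w2 has shape (C, C // r); the MLP is applied
    to both the average-pooled and max-pooled channel descriptors.
    """
    def mlp(v):
        return w2 @ np.maximum(w1 @ v, 0.0)

    scale = sigmoid(mlp(x.mean(axis=(1, 2))) + mlp(x.max(axis=(1, 2))))
    return x * scale[:, None, None]


def spatial_attention(x, kernel):
    """Reweight spatial positions using a k x k filter over channel-wise mean and max maps.

    kernel has shape (2, k, k) with odd k; zero padding keeps the H x W size.
    """
    k = kernel.shape[-1]
    pad = k // 2
    pooled = np.stack([x.mean(axis=0), x.max(axis=0)])             # (2, H, W)
    padded = np.pad(pooled, ((0, 0), (pad, pad), (pad, pad)))
    windows = sliding_window_view(padded, (k, k), axis=(1, 2))     # (2, H, W, k, k)
    attention = sigmoid(np.einsum("chwij,cij->hw", windows, kernel))
    return x * attention[None, :, :]


def cbam(x, w1, w2, kernel):
    """Channel attention followed by spatial attention."""
    return spatial_attention(channel_attention(x, w1, w2), kernel)


# Placeholder feature map and random, untrained weights (reduction ratio 4).
rng = np.random.default_rng(0)
features = rng.normal(size=(8, 16, 16))
w1 = rng.normal(size=(2, 8))
w2 = rng.normal(size=(8, 2))
kernel = rng.normal(size=(2, 7, 7))
refined = cbam(features, w1, w2, kernel)
```

Both attention maps lie between 0 and 1, so the module only rescales existing features. It adds few parameters relative to the encoder, which fits the stated aim of emphasizing fine straw edges without greatly increasing model size.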

The challenges encountered in the research on straw coverage detection using UAVs can be summarized as follows: Firstly, measurement accuracy is crucial for obtaining the distribution and density of straw and directly affects the accuracy of detection results; however, the current precision of UAVs in monitoring straw coverage remains limited. Different UAV devices exhibit varying levels of precision, so UAV detection accuracy must be improved and measurement errors reduced. Secondly, dataset annotation plays a vital role in ensuring data quality by precisely labeling straw. Before model training, the straw data collected from UAVs must be processed and analyzed effectively. Currently, manual annotation continues to dominate dataset construction but consumes significant time and resources during data processing. Extracting valuable information from large volumes of raw data, establishing sound data analysis models, and achieving fast transmission and real-time analysis are urgent issues that need to be addressed. Thirdly, complex scenarios make it difficult to detect and predict straw coverage rates because of occlusion, lighting variations, and complex backgrounds in actual agricultural environments; therefore, the relevant algorithms require further refinement and optimization.

This article proposes the application of UAVs and deep learning technology for the recognition and segmentation of corn stalk images in fields. Although the proposed algorithm has been experimentally demonstrated to segment stalk images effectively, certain limitations remain. Future research can be pursued in the following directions. First, we will continue to enhance the model: despite achieving a test set accuracy of 93.87% and reducing the average coverage error to 0.35%, there is still room for improvement in precision. Second, the algorithm requires high-performance hardware, and its running efficiency without GPU acceleration needs to be improved. Third, to achieve more comprehensive and accurate straw coverage detection, we will integrate multimodal data by combining multispectral or thermal infrared images obtained from UAVs with traditional visible-light images.
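For reference, one plausible way to compute an average coverage error from predicted and labeled masks is sketched below. The precise definition behind the 0.35% figure is not stated in this section, so treating it as the mean absolute difference in coverage rate is an assumption, as are the function names and the toy masks.

```python
import numpy as np


def coverage_rate(mask) -> float:
    """Share of pixels labeled as straw in a binary mask."""
    return float(np.asarray(mask, dtype=bool).mean())


def mean_coverage_error(pred_masks, true_masks) -> float:
    """Mean absolute difference between predicted and labeled coverage rates."""
    errors = [
        abs(coverage_rate(p) - coverage_rate(t))
        for p, t in zip(pred_masks, true_masks)
    ]
    return float(np.mean(errors))


# Two toy 4 x 4 masks: the first prediction misses two straw pixels, the second is exact.
true_a = np.zeros((4, 4), dtype=int)
true_a[:2, :] = 1                      # 8 of 16 pixels are straw
pred_a = true_a.copy()
pred_a[0, :2] = 0                      # 6 of 16 pixels predicted as straw
true_b = np.eye(4, dtype=int)
pred_b = true_b.copy()
error = mean_coverage_error([pred_a, pred_b], [true_a, true_b])
```

A coverage error of this kind can be small even when individual pixels are misclassified, because false positives and false negatives cancel, so it is best read alongside a pixel-level metric.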