2.1.1. From Shannon’s Mutual Information to Semantic Mutual Information

Definition 1. x: an instance or data point; X: a discrete random variable taking a value x∈U = {x1, x2, …, xm}.

y: a hypothesis or label; Y: a discrete random variable taking a value y∈V = {y1, y2, …, yn}.

P(yj|x) (with fixed yj and variable x): a Transition Probability Function (TPF) (named as such by Shannon [7]).

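Shannon named P(X) the source, P(Y) the destination, and P(Y|X) the channel. A Shannon channel is a transition probability matrix or a group of transition probability functions:

$$
P(Y|X) \Leftrightarrow
\begin{bmatrix}
P(y_1|x_1) & P(y_1|x_2) & \cdots & P(y_1|x_m) \\
P(y_2|x_1) & P(y_2|x_2) & \cdots & P(y_2|x_m) \\
\vdots & \vdots & \ddots & \vdots \\
P(y_n|x_1) & P(y_n|x_2) & \cdots & P(y_n|x_m)
\end{bmatrix}
\Leftrightarrow
\begin{bmatrix}
P(y_1|x) \\ P(y_2|x) \\ \vdots \\ P(y_n|x)
\end{bmatrix}
$$

where ⇔ indicates equivalence. Note that the TPF P(yj|x) is not normalized, unlike the conditional probability function P(y|xi), in which y is variable and xi is constant. We will discuss how the TPF can be used for the traditional Bayes prediction in Section 2.2.1.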

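The Shannon entropies of X and Y are

$$
H(X) = -\sum_i P(x_i)\log P(x_i), \qquad H(Y) = -\sum_j P(y_j)\log P(y_j).
$$

The Shannon posterior entropies of X and Y are

$$
H(X|Y) = -\sum_j\sum_i P(x_i, y_j)\log P(x_i|y_j), \qquad H(Y|X) = -\sum_j\sum_i P(x_i, y_j)\log P(y_j|x_i).
$$

The Shannon mutual information is

$$
I(X;Y) = H(X) - H(X|Y) = H(Y) - H(Y|X) = \sum_j\sum_i P(x_i, y_j)\log\frac{P(x_i|y_j)}{P(x_i)}.
$$

If Y = yj, the mutual information I(X; Y) will become the Kullback–Leibler (KL) divergence:

$$
I(X; y_j) = \sum_i P(x_i|y_j)\log\frac{P(x_i|y_j)}{P(x_i)}.
$$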

Some researchers have used the following formula to measure the information between xi and yj:

$$
I(x_i; y_j) = \log\frac{P(x_i|y_j)}{P(x_i)}.
$$

However, because I(xi; yj) may be negative, Shannon did not use this formulation. Shannon explained that information is the reduced uncertainty or the saved average codeword length. The author believes that the above formula is meaningful, because negative information indicates that a bad prediction may increase the uncertainty or the codeword length.
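The Shannon quantities discussed so far can be checked numerically. The following is a minimal sketch, assuming a small hypothetical joint distribution `P_xy` (the numbers are illustrative and do not come from the paper). It computes the mutual information in two ways and shows that the pointwise measure between a single xi and yj can be negative, whereas the KL divergence for each yj cannot.

```python
import math

# Hypothetical joint distribution P(x_i, y_j): rows are x_i, columns are y_j.
P_xy = [
    [0.30, 0.05],
    [0.15, 0.10],
    [0.05, 0.35],
]
m, n = len(P_xy), len(P_xy[0])
P_x = [sum(row) for row in P_xy]                             # source P(X)
P_y = [sum(P_xy[i][j] for i in range(m)) for j in range(n)]  # destination P(Y)

def entropy(p):
    """Shannon entropy in bits, skipping zero-probability terms."""
    return -sum(q * math.log2(q) for q in p if q > 0)

def pointwise_info(i, j):
    """I(x_i; y_j) = log2[P(x_i|y_j) / P(x_i)]; this may be negative."""
    return math.log2(P_xy[i][j] / P_y[j] / P_x[i])

def kl_information(j):
    """I(X; y_j): KL divergence between P(x|y_j) and P(x)."""
    return sum(P_xy[i][j] / P_y[j] * pointwise_info(i, j)
               for i in range(m) if P_xy[i][j] > 0)

# Posterior entropy H(X|Y), then I(X;Y) = H(X) - H(X|Y).
H_X_given_Y = -sum(P_xy[i][j] * math.log2(P_xy[i][j] / P_y[j])
                   for i in range(m) for j in range(n) if P_xy[i][j] > 0)
I_XY = entropy(P_x) - H_X_given_Y

# The same quantity as the average of the KL divergences over y_j.
I_XY_avg = sum(P_y[j] * kl_information(j) for j in range(n))

print(f"I(X;Y) = {I_XY:.4f} bits (entropy form), {I_XY_avg:.4f} bits (averaged KL)")
print(f"I(x_3; y_1) = {pointwise_info(2, 0):.4f} bits")
```

In this hypothetical example, P(x3|y1) = 0.1 is smaller than P(x3) = 0.4, so the pointwise information between x3 and y1 is negative even though the averaged quantities are not.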

As Shannon’s information theory cannot measure semantic information, Carnap and Bar-Hillel proposed a semantic information formula

$$
I(p) = \log\frac{1}{m_p},
$$

where p is a proposition and mp is its logical probability. Because I(p) does not depend on whether the prediction is correct, this formula is not practical.

Zhong [12] made use of the fuzzy entropy of DeLuca and Termini [14] to define the semantic information measure

$$
I(y_j) = \log 2 + t_j\log t_j + (1 - t_j)\log(1 - t_j),
$$

where tj is “the logical truth” of yj. However, according to this formula, whenever tj = 1 or tj = 0, the information reaches its maximum of 1 bit. This result is counterintuitive, because a hypothesis that is certainly false should not convey as much information as one that is certainly true; therefore, this formula is unreasonable. This problem is also found in other semantic or fuzzy information theories that use DeLuca and Termini’s fuzzy entropy [14].
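The objection above can be illustrated with a short sketch. It assumes that the measure has the form "one bit minus the fuzzy entropy of tj", which is the form implied by the stated maximum of 1 bit; the function names are hypothetical and are not taken from the cited works.

```python
import math

def fuzzy_entropy_bits(t):
    """Fuzzy entropy of DeLuca and Termini for one truth value t, in bits."""
    return -sum(p * math.log2(p) for p in (t, 1 - t) if p > 0)

def entropy_based_information(t):
    """Assumed form of the measure: 1 bit minus the fuzzy entropy of t."""
    return 1.0 - fuzzy_entropy_bits(t)

# The measure is symmetric in t and 1 - t, so a certainly false hypothesis
# (t = 0) scores the same as a certainly true one (t = 1).
for t in (0.0, 0.25, 0.5, 0.75, 1.0):
    print(f"t = {t:.2f}: {entropy_based_information(t):.4f} bits")
```

Because the fuzzy entropy is symmetric around tj = 1/2, any measure built on it in this way cannot separate truth from falsity.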

Floridi’s semantic information formula [11,36] is somewhat complicated. It can ensure that the information conveyed by a tautology or a contradiction reaches its minimum of 0. However, according to common sense, a wrong prediction or a lie is worse than a tautology. His formula does not give clear answers as to how the semantic information is related to the deviation, or how the amount of semantic information of a correct prediction differs from that of a wrong prediction.

The author proposed an improved semantic information measure in 1990 [21] and developed G theory later.

According to Tarski’s truth theory [28], P(X∈θj) is equivalent to P(“X∈θj” is true) = P(yj is true). The truth function of yj ascertains the semantic meaning of yj, according to Davidson’s truth-conditional semantics [29]. Following Tarski and Davidson, we define the following:

Definition 2. θj is a fuzzy subset of U which is used to explain the semantic meaning of a predicate yj(X) = “X∈θj”. If θj is non-fuzzy, we may replace it with Aj. θj is also treated as a model or a group of model parameters.

A probability that is defined with “=”, such as P(yj) = P(Y = yj), is a statistical probability; a probability that is defined with “∈”, such as P(X∈θj), is a logical probability. To distinguish P(Y = yj) and P(X∈θj), we define T(θj) = P(X∈θj) as the logical probability of yj.

T(θj|x) = P(x∈θj) = P(X∈θj|X = x) is the conditional logical probability function of yj; this is also called the (fuzzy) truth function of yj or the membership function of θj.

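A group of TPFs P(yj|x), j = 1, 2, …, n, forms a Shannon channel, whereas a group of membership functions T(θj|x), j = 1, 2, …, n, forms a semantic channel:

$$
T(\theta|X) \Leftrightarrow
\begin{bmatrix}
T(\theta_1|x_1) & T(\theta_1|x_2) & \cdots & T(\theta_1|x_m) \\
T(\theta_2|x_1) & T(\theta_2|x_2) & \cdots & T(\theta_2|x_m) \\
\vdots & \vdots & \ddots & \vdots \\
T(\theta_n|x_1) & T(\theta_n|x_2) & \cdots & T(\theta_n|x_m)
\end{bmatrix}
\Leftrightarrow
\begin{bmatrix}
T(\theta_1|x) \\ T(\theta_2|x) \\ \vdots \\ T(\theta_n|x)
\end{bmatrix}
$$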

Figure 1 illustrates the Shannon channel P(Y|X) and the semantic channel T(θ|X).

The Shannon channel indicates the correlation between X and Y, whereas the semantic channel indicates the fuzzy denotations of a group of labels. For the weather forecasts between an observatory and its audience, the Shannon channel indicates the rule by which the observatory selects forecasts, whereas the semantic channel indicates the semantic meanings of these forecasts as understood by the audience.

The expectation of the truth function is the logical probability:

$$
T(\theta_j) = \sum_i P(x_i)\,T(\theta_j|x_i),
$$

which was proposed earlier by Zadeh [35] as the probability of a fuzzy event. This logical probability is slightly different from the mp that was defined by Carnap and Bar-Hillel [9]. The latter depends only on the denotation of a hypothesis. For example, y1 is a hypothesis (such as “X is infected by the Human Immunodeficiency Virus (HIV)”) or a label (such as “HIV-infected”). Its logical probability T(θ1) is very small in the general population, because HIV-infected people are rare. However, mp is independent of P(x); it may be 1/2.

Note that the statistical probability is normalized, whereas the logical probability is not, in general. When θ0, θ1, …, θn form a cover of U, we have that P(y0) + P(y1) + …+P(yn) = 1 and T(θ0) + T(θ1) + … + T(θn) ≥ 1.

For example, let U be a group of people of different ages with the subsets A1 = {adults} = {x|x ≥ 18}, A0 = {juveniles} = {x|x < 18}, and A2 = {young people} = {x|15 ≤ x ≤ 35}. The three sets form a cover of U, and T(A0) + T(A1) = 1. If T(A2) = 0.3, the sum of the three logical probabilities is 1.3 > 1. However, the sum of the three statistical probabilities P(y0) + P(y1) + P(y2) must be less than or equal to 1. If y2 is correctly used, P(y2) will change from 0 to 0.3. If A0, A1, and A2 become fuzzy sets, the conclusion is the same.
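The age example can be reproduced with a few lines of code. This is a sketch under stated assumptions: the coarse age groups and the distribution `P_x` are hypothetical and were chosen only so that T(A2) comes out as 0.3. Each logical probability is computed as the expectation of the corresponding truth function.

```python
# Hypothetical age distribution P(x) over four coarse age groups,
# chosen so that T(A2) = 0.3 as in the example in the text.
ages = [5, 16, 25, 50]             # representative age of each group
P_x = [0.20, 0.05, 0.25, 0.50]

# Truth (membership) functions of the three non-fuzzy sets.
truth = {
    "A0 juveniles": lambda x: 1.0 if x < 18 else 0.0,
    "A1 adults": lambda x: 1.0 if x >= 18 else 0.0,
    "A2 young people": lambda x: 1.0 if 15 <= x <= 35 else 0.0,
}

def logical_probability(T):
    """T(theta_j) = sum_i P(x_i) T(theta_j|x_i)."""
    return sum(p * T(x) for x, p in zip(ages, P_x))

T_theta = {name: logical_probability(T) for name, T in truth.items()}
total = sum(T_theta.values())

for name, value in T_theta.items():
    print(f"T({name}) = {value:.2f}")
print(f"sum of logical probabilities = {total:.2f}")
```

The sum exceeds 1 because the sets overlap: the 16-year-olds and the 25-year-olds each belong to two sets, so their probability mass is counted twice.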

Consider the tautology “There will be rain or will not be rain tomorrow”. Its logical probability is 1, whereas its statistical probability is close to 0 because it is rarely selected.

We can put T(θj|x) and P(x) into Bayes’ formula to obtain a likelihood function [21]:

$$
P(x|\theta_j) = \frac{T(\theta_j|x)\,P(x)}{T(\theta_j)}, \qquad T(\theta_j) = \sum_i T(\theta_j|x_i)\,P(x_i). \tag{13}
$$

P(x|θj) can be called the semantic Bayes prediction or the semantic likelihood function. According to Dubois and Prade [45], Thomas [46] and others have proposed similar formulae.

Assume that the maximum of T(θj|x) is 1. From P(x) and P(x|θj), we can obtain

$$
T(\theta_j|x) = \frac{P(x|\theta_j)/P(x)}{\max_x\left[P(x|\theta_j)/P(x)\right]}. \tag{14}
$$

The author [41] proposed the third type of Bayes’ theorem, which consists of the above two formulae. This theorem can convert the likelihood function and the membership function (or the truth function) from one to the other when P(x) is given. Equation (14) is compatible with Wang’s fuzzy set falling shadow theory [41,47].
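The two directions of this conversion can be sketched as a round trip. The prior `P_x` and the truth function `T` below are hypothetical values chosen for illustration; the only assumption carried over from the text is that the maximum of the truth function is 1.

```python
def semantic_bayes(T, P_x):
    """P(x|theta_j) = T(theta_j|x) P(x) / T(theta_j)."""
    T_theta = sum(t * p for t, p in zip(T, P_x))   # logical probability
    return [t * p / T_theta for t, p in zip(T, P_x)]

def truth_from_likelihood(P_x_theta, P_x):
    """T(theta_j|x) = [P(x|theta_j)/P(x)] / max_x [P(x|theta_j)/P(x)]."""
    ratio = [q / p for q, p in zip(P_x_theta, P_x)]
    peak = max(ratio)
    return [r / peak for r in ratio]

P_x = [0.1, 0.2, 0.4, 0.2, 0.1]   # hypothetical prior P(x)
T = [0.0, 0.5, 1.0, 0.5, 0.0]     # hypothetical truth function with maximum 1

likelihood = semantic_bayes(T, P_x)               # truth function -> likelihood
T_back = truth_from_likelihood(likelihood, P_x)   # likelihood -> truth function

print("P(x|theta_j) =", [round(q, 4) for q in likelihood])
print("recovered T  =", [round(t, 4) for t in T_back])
```

The round trip recovers the original truth function only because its maximum is 1; a truth function with a smaller maximum would be rescaled by the second step.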

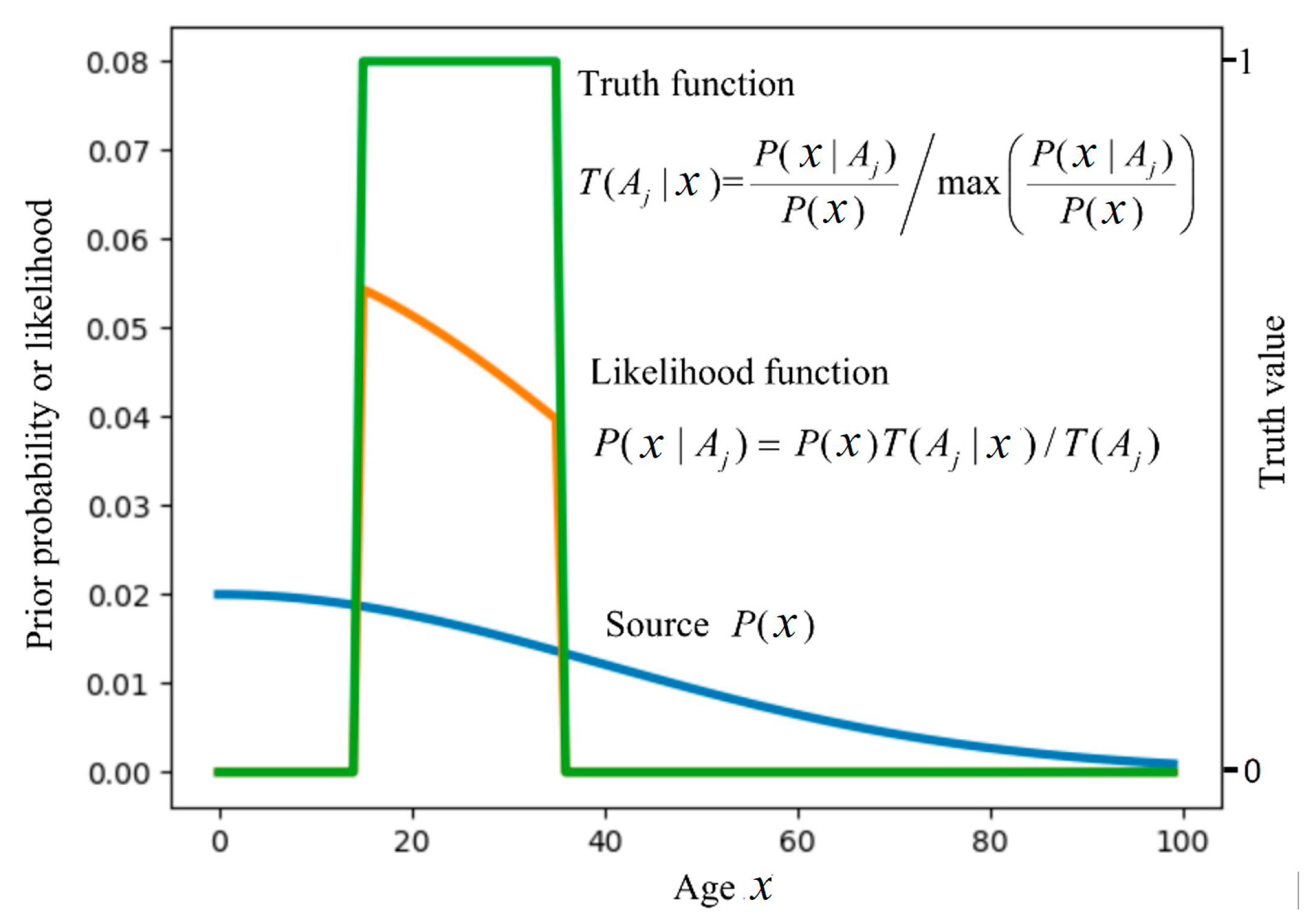

Figure 2 illustrates the relationship between P(x|θj) and T(θj|x) for a given P(x), where x is an age, the label yj = “Youth”, and θj is a non-fuzzy set and, hence, becomes Aj.

We use Global Positioning System (GPS) data as an example to demonstrate a semantic Bayes prediction.

Example 1. A GPS device is used in a train, and hence P(x) is uniformly distributed on a line (see Figure 3). The GPS pointer has a deviation. Try to find the most probable position of the GPS device. The semantic meaning of the GPS pointer can be expressed by

$$
T(\theta_j|x) = \exp\left[-\frac{(x - x_j)^2}{2\sigma^2}\right], \tag{15}
$$

where xj is the position pointed to by yj and σ is the Root Mean Square (RMS) deviation. For simplicity, we assume that x is one-dimensional.

According to Equation (13), we can predict that the position indicated by the star in Figure 3 is the most probable position. Most people would make the same prediction without using any mathematical formula. It seems that human brains must automatically use a similar method: making predictions according to the fuzzy denotation of yj.
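A semantic Bayes prediction of this kind can be sketched in one dimension. The setup below is hypothetical and is not the configuration of Figure 3: the track is assumed to occupy integer positions 0 to 100 with a uniform prior, and the pointer is assumed to indicate position 104, which lies beyond the end of the track. The truth function is the Gaussian-shaped function described in Example 1.

```python
import math

def gps_truth(x, x_j, sigma):
    """Truth function of the pointer: exp(-(x - x_j)^2 / (2 sigma^2))."""
    return math.exp(-((x - x_j) ** 2) / (2 * sigma ** 2))

# Hypothetical setup: uniform prior on track positions 0..100, pointer at 104.
track = list(range(0, 101))
P_x = [1 / len(track)] * len(track)
x_j, sigma = 104.0, 5.0

T = [gps_truth(x, x_j, sigma) for x in track]
T_theta = sum(t * p for t, p in zip(T, P_x))            # logical probability
posterior = [t * p / T_theta for t, p in zip(T, P_x)]   # P(x|theta_j)

# The most probable position is the track point with the largest posterior.
best_index = max(range(len(track)), key=posterior.__getitem__)
most_probable = track[best_index]
print("most probable position on the track:", most_probable)
```

Because the prior is zero outside the track, the prediction falls on the track point nearest to the pointed position rather than on the pointed position itself.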

In semantic communication, we often see hypotheses or predictions, such as “the temperature is about 10 °C”, “the time is about seven o’clock”, or “the stock index will go up about 10% next month”. Each one of these may be represented by yj = “X is about xj”. We can also express their truth functions by Equation (15).

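The author defines the (amount of) semantic information that is conveyed by yj about xi with the log-normalized-likelihood:

$$
I(x_i; \theta_j) = \log\frac{P(x_i|\theta_j)}{P(x_i)} = \log\frac{T(\theta_j|x_i)}{T(\theta_j)}.
$$

Introducing Equation (15) into this formula (with log taken as the natural logarithm), we have

$$
I(x_i; \theta_j) = \log\frac{1}{T(\theta_j)} - \frac{(x_i - x_j)^2}{2\sigma^2},
$$

which shows that this information is equal to the Carnap–Bar-Hillel information minus the squared relative deviation.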

Figure 4 illustrates this formula.

Figure 4 indicates that the smaller the logical probability, the more information there is, and the larger the deviation, the less information there is. Thus, a wrong hypothesis will convey negative information. These conclusions accord with Popper’s thought (see [32], p. 294).
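These two tendencies can be checked with a small numerical sketch. It assumes the Gaussian-shaped truth function of Example 1, the natural logarithm, and hypothetical values for the logical probability `T_theta`, the pointed value `x_j`, and `sigma`.

```python
import math

def semantic_information(x_i, x_j, sigma, T_theta):
    """I(x_i; theta_j) = log[T(theta_j|x_i) / T(theta_j)] in nats,
    with the truth function exp(-(x_i - x_j)^2 / (2 sigma^2))."""
    truth = math.exp(-((x_i - x_j) ** 2) / (2 * sigma ** 2))
    return math.log(truth / T_theta)

T_theta = 0.1           # hypothetical logical probability of y_j
x_j, sigma = 10.0, 1.0  # hypothetical pointed value and deviation

exact = semantic_information(10.0, x_j, sigma, T_theta)   # no deviation
close = semantic_information(11.0, x_j, sigma, T_theta)   # one sigma away
wrong = semantic_information(14.0, x_j, sigma, T_theta)   # four sigma away

print(f"exact: {exact:.4f}, close: {close:.4f}, wrong: {wrong:.4f} nats")
```

With no deviation, the information equals log(1/T(θj)); each additional deviation subtracts (xi − xj)²/(2σ²), so a prediction four σ away from the fact conveys negative information in this example.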

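To average I(xi; θj), we have the generalized KL information

$$
I(X; \theta_j) = \sum_i P(x_i|y_j)\log\frac{P(x_i|\theta_j)}{P(x_i)} = \sum_i P(x_i|y_j)\log\frac{T(\theta_j|x_i)}{T(\theta_j)}. \tag{18}
$$

In Equation (18), P(xi|yj) (i = 1, 2, …) is the sampling distribution, which may be non-smooth or discontinuous.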

Theil proposed a generalized KL formula with three probability distributions [37]. However, in Equation (18), T(θj) is constant. If T(θj|x) is an exponential function with e as the base, then Equation (18) will become the Donsker–Varadhan representation [19,38].

Akaike [4] proved that the least KL divergence criterion is equivalent to the Maximum Likelihood (ML) criterion. Following Akaike, we can prove that the Maximum Semantic Information (MSI) criterion (i.e., the maximum generalized KL information criterion) is also equivalent to the ML criterion.

Definition 3. D is a sample with labels {(x(t), y(t))|t = 1 to N; x(t)∈U; y(t)∈V}, which includes n different sub-samples or conditional samples Xj, j = 1,2,…,n. Every sub-sample includes data points x(1), x(2), …, x(Nj)∈U with label yj. If Xj is large enough, we can obtain the distribution P(x|yj) from Xj. If yj in Xj is unknown, we replace Xj with X and P(x|yj) with P(x|.).

Assume that there are Nj data points in Xj, of which Nji are xi. When the Nj data points in Xj come from Independent and Identically Distributed (IID) random variables, we have the log-likelihood

$$
\log P(\mathbf{X}_j|\theta_j) = \log\prod_i P(x_i|\theta_j)^{N_{ji}} = N_j\sum_i P(x_i|y_j)\log P(x_i|\theta_j).
$$

This log-likelihood differs from I(X; θj) only by the factor Nj and a term that does not depend on θj. Hence, as θj changes, I(X; θj) and log P(Xj|θj) reach their maxima at the same time, and the two criteria are equivalent. It is easy to prove that, when P(x|θj) = P(x|yj), both I(X; θj) and log P(Xj|θj) reach their maxima.
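The equivalence of the two criteria can be illustrated with a grid search. This is a minimal sketch, not the author's procedure: the universe `xs`, the counts `counts`, the uniform prior, the fixed `sigma`, and the Gaussian-shaped truth function with an adjustable center are all hypothetical choices made for illustration.

```python
import math

xs = list(range(11))                          # hypothetical universe U
counts = [0, 1, 2, 5, 9, 12, 9, 6, 3, 1, 0]   # hypothetical N_ji for label y_j
N_j = sum(counts)
P_x = [1 / len(xs)] * len(xs)                 # hypothetical uniform prior P(x)
P_x_given_y = [c / N_j for c in counts]       # sampling distribution P(x|y_j)

def semantic_likelihood(center, sigma=2.0):
    """P(x|theta_j) obtained from a Gaussian-shaped truth function and P(x)."""
    T = [math.exp(-((x - center) ** 2) / (2 * sigma ** 2)) for x in xs]
    T_theta = sum(t * p for t, p in zip(T, P_x))
    return [t * p / T_theta for t, p in zip(T, P_x)]

def log_likelihood(center):
    """log P(X_j|theta_j) = sum_i N_ji log P(x_i|theta_j)."""
    q = semantic_likelihood(center)
    return sum(c * math.log(qi) for c, qi in zip(counts, q))

def generalized_kl(center):
    """I(X; theta_j) = sum_i P(x_i|y_j) log[P(x_i|theta_j) / P(x_i)]."""
    q = semantic_likelihood(center)
    return sum(s * math.log(qi / p)
               for s, qi, p in zip(P_x_given_y, q, P_x) if s > 0)

candidates = [c / 10 for c in range(0, 101)]  # grid over the model parameter
best_ml = max(candidates, key=log_likelihood)
best_msi = max(candidates, key=generalized_kl)
print("ML estimate:", best_ml, " MSI estimate:", best_msi)
```

Both searches select the same center because the two objectives differ only by the positive factor Nj and a term that does not depend on the model parameter.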

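When the sample Xj is very large, letting P(x|θj) = P(x|yj), we can obtain the optimized truth function:

$$
T^*(\theta_j|x) = \frac{P(x|y_j)/P(x)}{\max_x\left[P(x|y_j)/P(x)\right]} = \frac{P(y_j|x)}{\max_x P(y_j|x)}.
$$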

This formula clearly indicates how the semantic channel matches the Shannon channel, which indicates the use rule of Y. It is also compatible with Wittgenstein’s thought: meaning lies in uses (see [39], p. 80).

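To average I(X; θj) for different y, we use the Semantic Mutual Information (SMI) formula

$$
I(X; \theta) = \sum_j P(y_j)\sum_i P(x_i|y_j)\log\frac{P(x_i|\theta_j)}{P(x_i)} = \sum_j\sum_i P(x_i, y_j)\log\frac{T(\theta_j|x_i)}{T(\theta_j)}.
$$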

If P(x|θj) = P(x|yj) or T(θj|x)∝P(yj|x) for different yj, the SMI will be equal to the Shannon Mutual Information (SHMI).
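This matching condition can be verified numerically. The sketch below assumes a hypothetical joint distribution `P_xy`, builds truth functions proportional to the TPFs (normalized so that their maxima are 1), and compares the resulting SMI with the SHMI. A second, deliberately mismatched set of truth functions (also hypothetical) is included for contrast.

```python
import math

# Hypothetical joint distribution P(x_i, y_j): rows are x_i, columns are y_j.
P_xy = [
    [0.30, 0.05],
    [0.15, 0.10],
    [0.05, 0.35],
]
m, n = len(P_xy), len(P_xy[0])
P_x = [sum(row) for row in P_xy]
P_y = [sum(P_xy[i][j] for i in range(m)) for j in range(n)]

def optimized_truth(j):
    """Truth function proportional to the TPF: P(y_j|x) / max_x P(y_j|x)."""
    tpf = [P_xy[i][j] / P_x[i] for i in range(m)]
    peak = max(tpf)
    return [v / peak for v in tpf]

def semantic_mutual_information(truth):
    """SMI = sum_j sum_i P(x_i, y_j) log2[T(theta_j|x_i) / T(theta_j)]."""
    total = 0.0
    for j in range(n):
        T_theta = sum(P_x[i] * truth[j][i] for i in range(m))
        total += sum(P_xy[i][j] * math.log2(truth[j][i] / T_theta)
                     for i in range(m) if P_xy[i][j] > 0)
    return total

shannon_mi = sum(P_xy[i][j] * math.log2(P_xy[i][j] / (P_x[i] * P_y[j]))
                 for i in range(m) for j in range(n) if P_xy[i][j] > 0)

matched = [optimized_truth(j) for j in range(n)]
mismatched = [[1.0, 0.5, 0.5], [0.5, 0.5, 1.0]]   # hypothetical truth functions

smi_matched = semantic_mutual_information(matched)
smi_mismatched = semantic_mutual_information(mismatched)
print(f"SHMI = {shannon_mi:.4f}, matched SMI = {smi_matched:.4f}, "
      f"mismatched SMI = {smi_mismatched:.4f} bits")
```

In this example the matched truth functions reproduce the SHMI, whereas the mismatched ones give a smaller SMI, because each generalized KL term is largest when P(x|θj) = P(x|yj).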

Introducing Equation (15) into the above formula, we have

$$
I(X; \theta) = H(\theta) - H(\theta|X) = -\sum_j P(y_j)\log T(\theta_j) - \sum_j\sum_i P(x_i, y_j)\frac{(x_i - x_j)^2}{2\sigma_j^2},
$$

where σj is the σ of Equation (15) for label yj. It is clear that the maximum SMI criterion is a special Regularized Least Squares (RLS) criterion [33]: H(θ) is the regularization term and H(θ|X) is the relative error term. However, H(θ) only penalizes the deviations without penalizing the means. The importance of this is that the maximum SMI criterion is also compatible with the ML criterion.