1. Introduction

Lithium-ion (Li-ion) batteries are widely used as a power source in mobile devices thanks to their longer lifespan and higher energy density compared with conventional batteries [1]. Their popularity is not limited to consumer electronics; they are also attracting increasing attention in other real-time systems such as Electric Vehicles (EVs), Unmanned Aerial Vehicles (UAVs), and satellites.

However, Li-ion batteries suffer from limitations such as safety, non-linear performance, and aging issues. In particular, battery aging is directly related to the lifetime of a system when physical maintenance is infeasible, which is the main challenge addressed in this paper. Battery aging is usually represented by capacity degradation, which is caused by a large number of repeated charging and discharging cycles. It is common for electronic devices to become unusable due to battery aging rather than due to failure of electronic or mechanical parts. In other words, the lifespan of the battery has become a main bottleneck of the lifetime of battery-powered systems.

The impact of this aging effect depends on the characteristics of the system. While aging only causes some inconvenience in mobile devices, the same level of aging can have more critical consequences in other domains. In the case of an EV, which is equipped with a large number of batteries, the battery replacement cost due to aging may be excessive. Moreover, deteriorated battery health sometimes makes system operation unpredictable, which can lead to catastrophic consequences in safety-critical systems.

Among various systems, the impact of aging is particularly fatal to satellite systems, where no maintenance is available after the initial deployment. Since Low Earth Orbit (LEO) satellites revolve around the Earth, the sunlight period, in which the system is exposed to sunlight, and the eclipse period, in which the Sun is blocked by the Earth, alternate repeatedly. Note that energy can be harvested from the solar panels of the satellite only during the sunlight period. Thus, during the energy-constrained eclipse period, the battery is the only power source of the entire system. This implies that the lifespan of satellite systems, which are costly to launch, can be compromised by the aging of their batteries.

There are a handful of works that mitigate battery aging in satellite systems. For a longer battery lifespan, Maheshwari et al. [2] proposed a transmission power control technique that distributes traffic between adjacent satellites on the same orbit plane. This study utilized the fact that the smaller the amount of charge required per cycle, i.e., the shallower the depth of discharge (DOD), the longer the expected battery lifespan. In addition to DOD minimization, Lami et al. [3] claimed that minimizing the state of charge (SOC) swing, i.e., the range of the used capacity, can extend the battery lifespan. However, the above works simply estimated the lifespan as a function of DOD, and this modeling is only valid under the narrow conditions in which the modeling data were obtained or measured. How the highly varying temperature conditions of satellite systems affect the battery lifetime was not investigated in these studies.

There have been some studies in other industrial domains to mitigate the aging of Li-ion batteries. Chon et al. [4] proposed a battery use guide scheme, targeting the smartphone domain, that is based on large-scale use-case profiling with crowdsensing. Wegmann et al. [5] investigated the aging of a hybrid battery with two different cell types and reported that proper load splits between the cell types could improve the battery lifetime at long recuperation phases in EVs. However, none of these works can be directly applied to satellite systems due to the heterogeneity in workload and the lack of large-scale profiling data.

Recently, Kwak et al. [6] proposed a real-time scheduling framework, called Reserved Execution Time (RET), that tries to maximize the battery lifetime in UAV systems. Based on the Li-ion battery aging model proposed in [7], they tried to reduce the internal heat dissipation of the battery to mitigate the aging factors. While they did consider the effects of temperature on battery aging, they basically assumed that an elevated temperature always results in a worse battery lifetime. This does not always hold true even for systems operating on the Earth [8]. Furthermore, this assumption is increasingly unrealistic when considering the radical temperature changes often observed in satellite systems.

Indeed, several works have demonstrated that low temperatures can also have adverse effects on battery aging [9,10]. Wu et al. [11] also investigated the aging of a number of batteries cycled with different discharge profiles at low temperature and showed that the aging can be mitigated by increasing the battery temperature. However, they did not propose any specific method for increasing the battery temperature. This work focuses on how a real-time task scheduling approach can mitigate this adverse effect within the given timing and power budget imposed in satellite systems.

In this regard, we argue that the existing scheduling policy [6], which was proposed to lengthen the battery lifetime, may actually cause the opposite result in satellite systems. To clearly demonstrate this anomaly, we evaluate the lifetimes of batteries used in satellite systems on top of an open-source Li-ion battery aging simulator [12]. In doing so, we first model the hardware and software behaviors of the target LEO satellites and their ambient temperature changes throughout the revolution period in Section 2. Section 3 reviews a number of aging factors of Li-ion batteries that have been considered or ignored in the existing techniques and how they can be affected by temperature changes. Based on the discussed aging factors, we propose a modified scheduling policy that better suits satellite systems in Section 4. It is shown in Section 5 that the proposed technique outperforms the existing technique in terms of the expected lifetime of the Li-ion battery in satellite systems, followed by concluding remarks in Section 6.

4. Proposed Scheduling Method

In this section, we propose a new scheduling policy that maximizes the expected lifespan of the Li-ion battery used in the target system modeled in Section 2. Kwak et al. [6] proposed a scheduling policy, called RET, to maximize the battery lifespan for real-time systems. They assumed a fixed normal ambient temperature, which is usually about 300 K, and did not take the lithium plating effect into consideration in estimating the battery lifetime. Therefore, in their modeling, it is always best to keep the temperature as low as possible. Accordingly, they proposed to use scheduling to minimize the heat dissipated by the battery, specifically the term that is known as the most dominant factor in Equation (3).

Given that the workloads of the target system are fixed, the sum of the currents discharged by all subsystems remains unchanged for any scheduling decision. (As no dynamic voltage or frequency scaling technique is considered, the amount of power (current) dissipated by all tasks is constant across different scheduling results.) Based on the thermal dynamics modeled in [6], it was proven that reducing the sum of the squared currents consumed by all tasks results in less heat generation. In other words, they tried to minimize the variance of current dissipation over time in the scheduling decision in order to maximize the battery lifetime.

However, such evenly distributed current dissipation loads may not always result in an extended battery lifetime for satellite systems. As elaborated in the previous section, satellites operate in highly varying temperature environments, and the ambient temperatures mostly stay lower than the typical optimal temperature derived by Waldmann et al. [32], a regime in which the lithium plating effect may play a critical role in battery lifetime. In particular, the battery temperature is expected to decrease continuously during the eclipse period. Charging a Li-ion battery at low temperatures, e.g., right after the eclipse period, may accelerate the lithium plating effect [32].

As will be shown in the evaluations, if the lithium plating effect is additionally considered in the lifetime estimation, the reduced battery cell temperature caused by the RET scheduling policy results in rather shortened battery lifetime. Based on the observation of this anomaly, we propose an alternative battery-aware scheduling policy which better suits to the LEO satellite systems. It is hard to find out a single optimal operating temperature since it may vary from one battery characteristic to another [

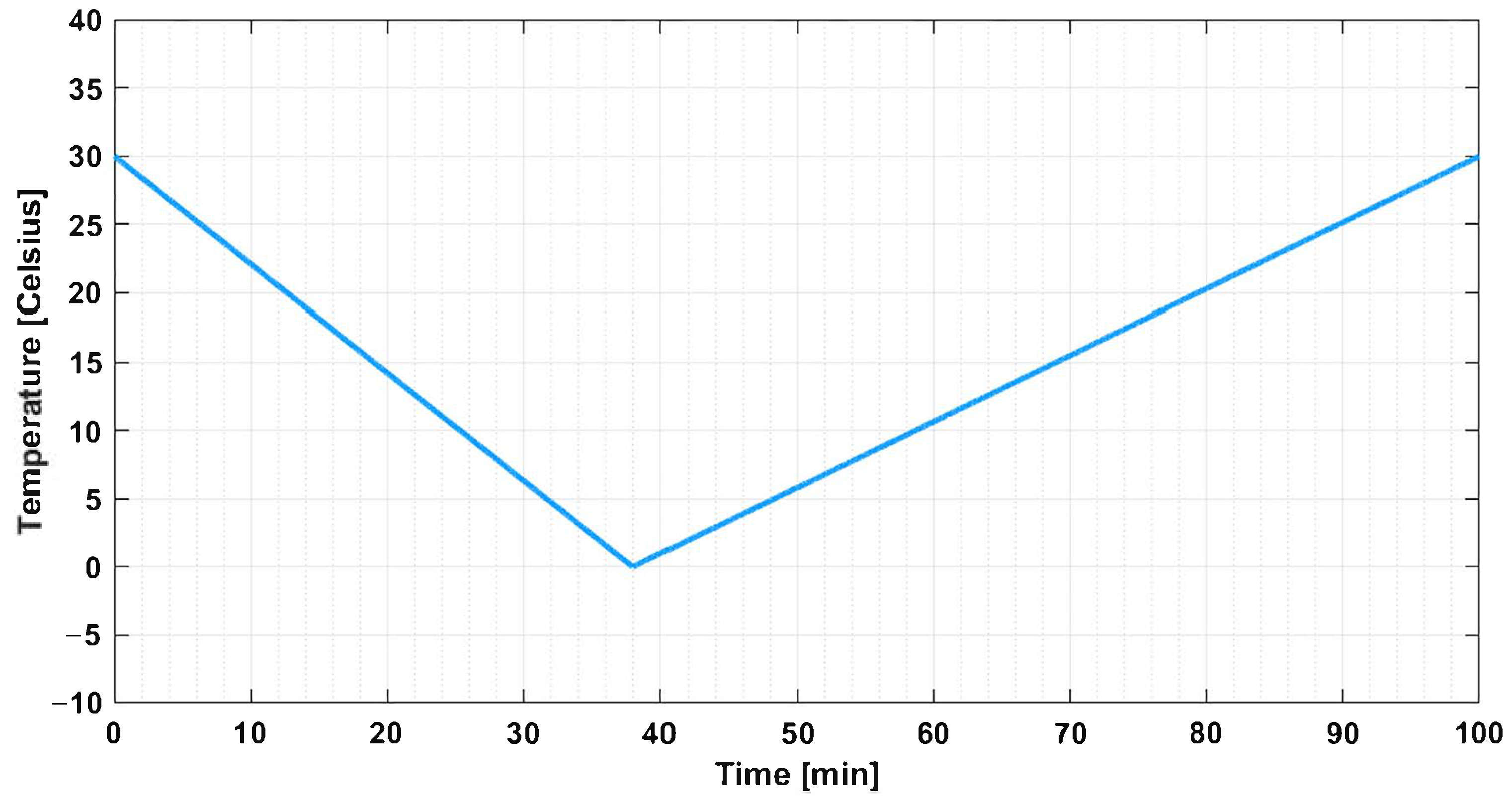

8]. Even if the optimal temperature point is known, it is not trivial to keep the battery cell temperature at the optimal point with respect to a set of heterogeneous workloads and the varying ambient temperatures. Note in

Figure 1 that the satellite ambient temperature is mostly below than the normal room temperature (300 K) that normal systems operated on the Earth have. In this case, the lithium plating effect is alleviated in a higher temperature [

8]. Therefore, in this work, we simply take the opposite approach to the RET framework; we try to place the current discharge loads as unbalanced as possible with respect to the real-time constraints in favor of increased temperature.

It is worth mentioning that, in this work, we restrict ourselves to heating up the battery cell by means of scheduling, which means that the total amount of discharged current remains the same regardless of the scheduling decision. In the case that the harvested energy is sufficient to power all subsystems and charge the battery for a certain amount of time during the sunlight period, external thermal management techniques [33,34], e.g., using heaters, could take advantage of the extra power (current) to heat up the battery. However, due to the additional weight and manufacturing costs, it is not common to adopt such external thermal management approaches for small satellites [35]. Note that the proposed scheduling approach can be applied independently of, or together with, these external thermal management techniques. In the latter case, when and how much to actively heat up could be considered as yet another problem to be optimized with respect to the power availability.

Similarly to the RET framework [6], the proposed scheduling takes a three-step hybrid off-line/on-line approach. First, as an off-line procedure, it calculates virtual execution times, called reservation times (RV), for all tasks. These reservation times are assigned in such a way that the reservation time of each task is maximized but never causes a deadline violation.

Then, at run time, as a second step, it chooses the task to be scheduled next using a conventional real-time scheduling policy, e.g., Earliest Deadline First (EDF). If a task is chosen for a subsystem in this step at time t, this task is said to be reserved for the subsystem at time t, and the time range that starts at t and spans its reservation time is occupied by this task, i.e., no other tasks can be executed in this range.

Finally, for a reserved task, the time at which it is actually executed should be fixed at run time within its reserved time range. That is, in the previous example, the actual starting time should lie within the reserved time range that starts at t.

4.1. Off-Line Calculation of Reservation Time (Common for the RET Framework and the Proposed Policies)

Algorithm 1 delineates the off-line calculation procedure of reservation times that the proposed algorithm and the RET framework [6] have in common. For each subsystem (processor), the reservation times of the tasks are initialized to their WCETs and all tasks are stored in Q in a descending order of current consumption (lines 2–3). Then, for each task popped from the head of Q, the reservation time is increased by 1 time quantum (line 6) and the schedulability is checked with this increased reservation time being the WCET (line 7). Once successful, this task is queued again into the tail of Q (line 8), and this procedure is repeated until there is no further room for an increment in the reservation time of any task (lines 4–12). The idea is to find the largest time range for the task execution that guarantees real-time schedulability even in the unluckiest case. For the schedulability analysis, the uni-core Non-Preemptive Earliest Deadline First (NP-EDF) scheduling condition derived by Jeffay et al. [36] is used.

This off-line scheduling algorithm takes a time complexity of

for subsystem

. Note that this reservation time calculation procedure is performed off-line, thus has no impact on the runtime behavior of the system.

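| Algorithm 1 Calculation of reservation times |

- 1: for every subsystem do
- 2: Q ← ∅; ▹ Initialized as an empty FIFO queue
- 3: for all tasks of the subsystem, initialize their reservation times to their WCETs and enqueue them into Q in a descending order of current consumption;
- 4: while Q is not empty do
- 5: pop a task from the head of Q
- 6: increase the reservation time of the task by 1 time quantum;
- 7: if the task set is schedulable with the increased reservation time being the WCET then
- 8: enqueue the task into the tail of Q;
- 9: else
- 10: restore the previous reservation time of the task;
- 11: end if
- 12: end while
- 13: end for

|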
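The procedure of Algorithm 1 can be sketched in Python as follows. This is a minimal sketch under stated assumptions, not the authors' implementation: the task fields (`wcet`, `period`, `current`), the function names, integer time quanta, and deadlines equal to periods are all assumed here. The schedulability check follows the NP-EDF condition of Jeffay et al. as it is commonly stated for periodic tasks, and any other test can be passed in through the hypothetical `is_schedulable` parameter.

```python
from collections import deque
from fractions import Fraction


def np_edf_schedulable(tasks):
    """NP-EDF test for (exec_time, period) pairs with integer values.

    Assumption: deadlines equal periods and time is discrete.
    """
    tasks = sorted(tasks, key=lambda t: t[1])  # non-decreasing periods
    # Condition 1: total utilization must not exceed 1 (exact arithmetic).
    if sum(Fraction(c, p) for c, p in tasks) > 1:
        return False
    # Condition 2: blocking by a longer-period task must fit in every interval L.
    p_min = tasks[0][1]
    for i in range(1, len(tasks)):
        c_i, p_i = tasks[i]
        for L in range(p_min + 1, p_i):
            demand = c_i + sum(((L - 1) // p_j) * c_j for c_j, p_j in tasks[:i])
            if L < demand:
                return False
    return True


def reservation_times(tasks, quantum=1, is_schedulable=np_edf_schedulable):
    """Grow each task's reservation time until schedulability would break."""
    rv = [t["wcet"] for t in tasks]  # start from the WCETs
    # Queue task indices in descending order of current consumption.
    q = deque(sorted(range(len(tasks)), key=lambda i: -tasks[i]["current"]))
    while q:
        i = q.popleft()
        rv[i] += quantum  # tentative increment
        candidate = [(rv[j], tasks[j]["period"]) for j in range(len(tasks))]
        if is_schedulable(candidate):
            q.append(i)  # still schedulable: try to grow it again later
        else:
            rv[i] -= quantum  # revert; this task is not enqueued again
    return rv
```

Because a task leaves the queue permanently after its first failed increment, the loop terminates once no task can grow any further, which mirrors the stopping condition described for lines 4–12.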

4.2. On-Line Scheduling in RET

At run time, it should first be decided which task is reserved, using an ordinary scheduling policy. We call this the default scheduling decision and use an EDF scheduler for it. Then, for each reserved task, the precise scheduling step of the RET framework judiciously determines when to start its actual execution within the reserved time range in such a way that the variation of current dissipation over time is minimized.

Being an on-line procedure, it is computationally intractable to investigate all possible scheduling cases when making this decision. Instead, it is decided by a heuristic algorithm illustrated in Algorithm 2 whenever a new task reservation occurs.

The procedure takes as input the current time stamp and the array of the sum of the currents dissipated by the subsystems at each time step (lines 1–2), which extends up to the biggest time stamp at which an actual scheduling decision has been made among all subsystems. If a task is newly reserved by the default scheduling (line 3), all tasks that are reserved but not yet fixed in scheduling are enqueued into the tail of Q in a descending order of power consumption (lines 5–10). Note that a task whose execution time has already been fixed should also be enqueued if it is yet to be in actual execution, i.e., the current time stamp is smaller than its given starting time. Then, for each enqueued task, a feasible starting time within its reserved time range that minimizes the interval sum of system-wide current consumption is searched for (lines 14–19). Once found, the task is decided to be executed from that time on, and the current (power) dissipation trace is updated as per the scheduling decision (lines 20–22). Note also that, if the actual task execution is completed earlier than the end of the reserved time range, the remaining reserved time should not be used for other tasks' executions.

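| Algorithm 2 Run time scheduling of the reserved tasks in the RET framework [6] |

- 1: Input: the current time step index
- 2: Input: the current dissipation trace over all time steps
- 3: if a new task is reserved then ▹ By the default scheduling decision
- 4: Q ← ∅; ▹ Initialized as an empty queue
- 5: for each task do
- 6: if the task is reserved and not in execution then
- 7: enqueue the task into Q;
- 8: end if
- 9: end for
- 10: Sort Q in a descending order of power consumption; ▹ From more power-consuming ones to lesser
- 11: while Q is not empty do
- 12: pop a task from the head of Q;
- 13: initialize the minimum interval sum; initialize the starting time;
- 14: for m in the feasible starting times of the reserved time range do
- 15: compute the interval sum of the trace over the execution of the task started from m;
- 16: if the interval sum is smaller than the minimum then
- 17: update the minimum; set the starting time to m;
- 18: end if
- 19: end for
- 20: for each time step of the execution do ▹ Update the trace as per the decided starting time of the task
- 21: add the current of the task to the trace;
- 22: end for
- 23: end while
- 24: end if

|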
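The on-line step of the RET policy described above can be sketched in Python as follows. This is an illustrative sketch, not the code of [6]: the names `ret_place` and `ret_schedule`, the dictionary fields, the use of the WCET as the execution length, and the assumption that the trace list already covers the whole reserved range are all choices made here.

```python
def ret_place(trace, reserved_at, rv, wcet, current):
    """Pick the start that minimizes the interval sum, then update the trace.

    Assumes len(trace) >= reserved_at + rv and that the task runs for `wcet`.
    """
    best_start, best_sum = reserved_at, float("inf")
    last_start = reserved_at + rv - wcet  # assumed latest feasible start
    for m in range(reserved_at, last_start + 1):
        interval_sum = sum(trace[m:m + wcet])
        if interval_sum < best_sum:  # strict comparison: earliest start wins ties
            best_start, best_sum = m, interval_sum
    for k in range(best_start, best_start + wcet):
        trace[k] += current  # record the task's current in the trace
    return best_start


def ret_schedule(trace, reserved):
    """Place all reserved tasks, the most power-consuming ones first."""
    starts = {}
    for task in sorted(reserved, key=lambda x: -x["current"]):
        starts[task["name"]] = ret_place(
            trace, task["reserved_at"], task["rv"], task["wcet"], task["current"]
        )
    return starts
```

Placing the most power-consuming tasks first gives them the widest choice of quiet intervals, and the lighter tasks then fill the remaining gaps.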

Figure 4 illustrates scheduling examples from the EDF and RET policies in comparison. In the EDF policy, the scheduling decision is made in a work-conserving manner, i.e., no task is allowed to defer its execution when the processor is idle. Thus, in the EDF example, two tasks of the same subsystem are executed back-to-back without any slack in between at the beginning. On the contrary, the RET framework first assigns the reservation time ranges for the two tasks and separates them in time within their own reservation time ranges, so that the total current dissipation workloads are more evenly distributed. For instance, under the RET policy, a task is discouraged from overlapping with tasks in other subsystems, which is why the two tasks are executed separately in their subsystem and another task is scheduled in the slack caused by these two tasks.

As stated earlier, the heat generation is minimized when the variance of current dissipation over time is minimized. Note that this is performed alternatively by minimizing the sum of current consumption, as revealed in lines 14–19 of Algorithm 2. For the originally given trace, the variance over time is the mean squared deviation of the trace from the average current dissipation, taken over all time stamps up to the maximum time stamp N. When a task is scheduled to start from m, the variance changes: the current of the task is added to the trace at every time stamp k at which the task is in execution, as indicated by a binary variable that is 1 only if the task is in execution at time k, and the average current dissipation changes accordingly. Note that the changed average and the number of time stamps during which the task executes are not sensitive to the scheduling decision. By expanding the changed variance, it can be easily noticed that minimizing it is equivalent to minimizing the sum of the original trace over the time stamps at which the task is in execution. Therefore, the minimization of the variance can be safely replaced with minimizing this interval sum in line 15 of Algorithm 2.
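The equivalence argued above can be checked numerically. Writing the task current as c and its duration as d (names assumed here for illustration), starting the task at m adds 2c times the interval sum of the original trace, plus the constant c²d, to the sum of squares, while the average is unaffected by m. The following Python sketch, whose function names are hypothetical, ranks all candidate starting times both ways using exact integer arithmetic.

```python
def scaled_variance(trace):
    """Return N^2 times the variance of the trace, computed with integers only."""
    n = len(trace)
    return n * sum(x * x for x in trace) - sum(trace) ** 2


def place(trace, start, length, current):
    """Return a copy of the trace with the task's current added over its execution."""
    out = list(trace)
    for k in range(start, start + length):
        out[k] += current
    return out


def rank_by_variance(trace, length, current):
    """Order candidate starts by the variance of the resulting trace."""
    starts = range(len(trace) - length + 1)
    return sorted(
        starts, key=lambda m: (scaled_variance(place(trace, m, length, current)), m)
    )


def rank_by_interval_sum(trace, length):
    """Order candidate starts by the interval sum of the original trace."""
    starts = range(len(trace) - length + 1)
    return sorted(starts, key=lambda m: (sum(trace[m:m + length]), m))
```

For a positive task current, both functions return the same ordering of starting times, so the cheaper interval sum can stand in for the variance when comparing candidates.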

4.3. Proposed On-Line Scheduling Policies

Based on empirical observations of the optimal temperature derived by Waldmann et al. [32], unlike the RET framework, we argue that reduced heat generation is not beneficial to the battery lifetime in satellite systems, as they operate mostly at low temperatures. Hence, we take alternative approaches for the on-line scheduling decision. The first alternative, called MAX_VAR, is to find a starting time for each task within its reserved time that simply maximizes the sum of current dissipation, as shown in Figure 5a. In the example, the tasks are reserved one after another, and it can be seen that later-reserved tasks are determined to be overlapped in time with previously placed tasks to maximize the variance. However, this approach inevitably causes idle time slack post-fixed to the actual execution in most cases. Due to this, some chances of overlapping remain out of consideration. For example, once the second job of one task is scheduled at the beginning of its reserved time, the second job of another task will never be able to be executed at the same time as it. To compensate for these lost possibilities of overlapping, we propose the second alternative, referred to as MAX_VAR_ALAP, which also searches for the starting time that maximizes the variance but keeps the schedule as late as possible. As illustrated in Figure 5b, this approach allows the two tasks to be overlapped in time for their second jobs too.

Algorithm 3 illustrates the on-line scheduling procedure of the MAX_VAR approach. The default scheduling decision is made in the same way as in the RET policy, using EDF. However, in determining the starting time of the reserved task, it considers the opposite objective: it searches for the starting time that results in the maximum variance. Note that the running maximum is initialized as 0, instead of the initial value used for the minimum in the RET policy, as shown in line 13. Whenever a new maximum variance point is found (line 14), the starting time is updated (line 17).

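| Algorithm 3 Run time scheduling of the reserved tasks in the MAX_VAR approach |

- 1: Input: the current time step index
- 2: Input: the current dissipation trace over all time steps
- 3: if a new task is reserved then ▹ By the default scheduling decision
- 4: Q ← ∅; ▹ Initialized as an empty queue
- 5: for each task do
- 6: if the task is reserved and not in execution then
- 7: enqueue the task into Q;
- 8: end if
- 9: end for
- 10: Sort Q in a descending order of power consumption; ▹ From more power-consuming ones to lesser
- 11: while Q is not empty do
- 12: pop a task from the head of Q
- 13: initialize the maximum interval sum to 0;
- 14: for m in the feasible starting times of the reserved time range do
- 15: compute the interval sum of the trace over the execution of the task started from m
- 16: if the interval sum is larger than the maximum then
- 17: update the maximum and set the starting time to m;
- 18: end if
- 19: end for
- 20: for each time step of the execution do ▹ Update the trace as per the decided start time of the task
- 21: add the current of the task to the trace;
- 22: end for
- 23: end while
- 24: end if

|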
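The starting-time search of MAX_VAR can be sketched in Python as follows. This is a hedged illustration, not the authors' code: the function name `max_var_place`, its parameters, the use of the WCET as the execution length, and the assumption that the trace already covers the reserved range are introduced here. The running maximum starts at 0, as stated in the text, and the strict comparison keeps the earliest candidate on ties.

```python
def max_var_place(trace, reserved_at, rv, wcet, current):
    """Pick the start that maximizes the interval sum, then update the trace.

    Assumes len(trace) >= reserved_at + rv and that the task runs for `wcet`.
    """
    best_start, best_sum = reserved_at, 0  # running maximum initialized to 0
    last_start = reserved_at + rv - wcet   # assumed latest feasible start
    for m in range(reserved_at, last_start + 1):
        interval_sum = sum(trace[m:m + wcet])
        if interval_sum > best_sum:  # strict comparison: earliest start wins ties
            best_start, best_sum = m, interval_sum
    for k in range(best_start, best_start + wcet):
        trace[k] += current  # stack the task on top of the existing load
    return best_start
```

With an idle trace every candidate ties at 0, so the task stays at the beginning of its reserved range, which is the behavior that leaves idle slack after the execution.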

Our goal in this on-line scheduling is to distribute the current dissipation workloads as unevenly as possible. The calculated reserved time range safely keeps the scheduling decision free of any deadline violations. From the perspective of a single subsystem, one may think that it would be beneficial to place the task execution times consecutively, forming horizontal execution bursts in Figure 5. However, as shown mathematically in the previous subsection, the variance depends on the sum of current dissipation of all subsystems over a certain period of time, i.e., it is the vertical bursts in Figure 5 that matter. As exemplified in Figure 5, MAX_VAR may result in sub-optimal scheduling in terms of vertical bursts by losing some possible overlaps.

In order to maximize the possibility of a task being overlapped with tasks in other subsystems, we propose another alternative on-line scheduling policy, called MAX_VAR_ALAP, that places the task execution as late as possible when the sums of current dissipation are tied for different starting points. For instance, in Figure 5b, the second invocation of a task is placed at the end of its reserved time range, unlike in the MAX_VAR approach. Afterwards, when the second invocation of another task is reserved, it can be overlapped in time with the former, as shown in the figure.

Algorithm 4 shows how MAX_VAR_ALAP differs from the previous two on-line scheduling policies. The overall flow of the algorithm stays the same, but it searches for the maximum variance point in reverse order. The starting index of the search loop (lines 14–20) is initialized to the latest possible starting point in the reserved time range, as shown in line 13, and the index of the loop advances in the opposite direction (line 19).

The time complexities of the proposed on-line scheduling policies, i.e., Algorithm 3 and Algorithm 4, are identical to that of the RET approach (Algorithm 2). It is worthwhile to mention that, unlike the off-line procedure, the scheduling overhead of this on-line part can possibly affect the timing and current discharging behaviors. However, in this work, we assume that this run-time overhead is insignificant, as in [6], and do not explicitly consider its effects on either timing or battery aging.

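| Algorithm 4 Run time scheduling of the reserved tasks in the MAX_VAR_ALAP approach |

- 1: Input: the current time step index
- 2: Input: the current dissipation trace over all time steps
- 3: if a new task is reserved then ▹ By the default scheduling decision
- 4: Q ← ∅; ▹ Initialized as an empty queue
- 5: for each task do
- 6: if the task is reserved and not in execution then
- 7: enqueue the task into Q;
- 8: end if
- 9: end for
- 10: Sort Q in a descending order of power consumption; ▹ From more power-consuming ones to lesser
- 11: while Q is not empty do
- 12: pop a task from the head of Q
- 13: initialize the maximum interval sum to 0; set m to the latest possible starting point in the reserved time range;
- 14: while m is within the reserved time range do
- 15: compute the interval sum of the trace over the execution of the task started from m
- 16: if the interval sum is larger than the maximum then
- 17: update the maximum and set the starting time to m;
- 18: end if
- 19: decrease m by one time step;
- 20: end while
- 21: for each time step of the execution do ▹ Update the trace as per the decided start time of the task
- 22: add the current of the task to the trace;
- 23: end for
- 24: end while
- 25: end if

|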
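The reverse-order search of MAX_VAR_ALAP can be sketched in Python as follows. As before, this is a sketch under assumptions rather than the authors' implementation: the function name `max_var_alap_place`, its parameters, the use of the WCET as the execution length, and a trace that already covers the reserved range are all introduced here. Scanning from the latest feasible start with a strict comparison makes the latest candidate win whenever interval sums are tied.

```python
def max_var_alap_place(trace, reserved_at, rv, wcet, current):
    """Pick the latest start that maximizes the interval sum, then update the trace.

    Assumes len(trace) >= reserved_at + rv and that the task runs for `wcet`.
    """
    m = reserved_at + rv - wcet  # assumed latest feasible starting point
    best_start, best_sum = m, 0  # running maximum initialized to 0
    while m >= reserved_at:
        interval_sum = sum(trace[m:m + wcet])
        if interval_sum > best_sum:  # strict comparison: latest start wins ties
            best_start, best_sum = m, interval_sum
        m -= 1  # walk the reserved range backwards
    for k in range(best_start, best_start + wcet):
        trace[k] += current  # stack the task on top of the existing load
    return best_start
```

On an idle trace the task is pushed to the end of its reserved range, which keeps the later part of the range loaded for tasks that are reserved afterwards in other subsystems.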