1. Introduction

Robots in the industry are starting to transfer from confined spaces into areas that are shared with humans, which reduces the operational cost for several industrial applications [

1]. The co-existence of the human and the robot, however, raises many critical challenges regarding the safety of the human, tasks scheduling, and system evaluations [

2]. To tackle these challenges, researchers in human-robot collaboration (HRC) focus on improving the production efficiency, safety, and collaboration quality between humans and robots.

Until now, the human often remains in a superior guidance role, which is due to human perception, cognition, and dexterity exceeding the capability of robots [

3]. Robots, on the other hand, cope well with high payloads and repetitive tasks, while delivering high precision. Henceforth, several approaches established a combination of both human cognitive and perceptual abilities with the robot’s endurance to perform a collaborative task. This included intended physical contact between humans and robots during actions, such as hand-overs, co-manipulation, co-drilling, and many other applications [

4,

5].

To ensure human health and safety in such scenarios, robots operate at limited speeds and torque settings and perform a full stop in the case of a collision [

6]. According to [

5], however, these collisions are permissible when limiting the impact forces. Therefore, another group of researchers proposed to control unavoidable collision by limiting the impact forces, such as the work presented by [

7]. These methods rely on the kinematic and dynamic behaviour of the robot and human during the task. Such approaches require accurate models of the given setup [

7,

8,

9]. Thus, there is a growing interest in measuring physical interactions between humans and robots [

10]. Moreover, these interfaces have been utilised to establish intuitive human-robot interactions.

This paper presents a novel approach based on human-human co-manipulation to teach an industrial robot how to react to a human leader in a co-manipulation task. The main benefit of such an approach is the intuitive modelling that allows the robot to have similar behaviour to the human. This also allows the robot to isolate human haptic input. Hence, it is possible to estimate the force originating from the human guidance and the forces caused by contact with the environment (third contact point).

This paper’s proposed approach is based on two hypotheses investigated and validated on unseen test datasets. The first hypothesis includes adding a muscle activity sensor, namely an electromyograph (EMG), to improve the data-driven model quality. The second hypothesis is that data-driven approaches can achieve higher accuracy compared with mathematical modelling and hybrid modelling approaches, despite adding the complexity of human data processing. In order to validate the hypotheses, a simple leader–follower demonstration scenario was conducted. It provided the required data to model the follower behaviour and, thus, allowed teaching the robot to react similarly.

The paper is structured as follows: a brief literature review is presented in

Section 2,

Section 3 describes the co-manipulation problem from a mathematical point of view. Then,

Section 4 details the equipment utilised during the human-human demonstration and data collection. The adopted research methodology in this research is outlined in

Section 5, followed by the simulation setup and control-loop scheme in

Section 6.

Section 7 presents the results and discussion of the obtained outcome from the conducted experiment. Finally, the conclusions and future work are drawn in

Section 8.

2. Literature Review

HRC is widely considered a critical concept to advance the quality and performance in several domains [

9]. However, despite intensive research in this field, there are still many unsolved challenges that must be tackled to fully establish a safe and effective collaboration [

11]. This includes vital questions such as: How can a robot predict human intentions? What role should the robot perform? At what time? Finally, how can a HRC setup be evaluated [

12]? Whereas some companies have invested in HRC and have collaborative robots (cobots) on their manufacturing lines; many others are still waiting for more mature solutions.

The authors in [

13] claimed that the reason behind this is the lack of knowledge when integrating cobots into manufacturing and business. Therefore, Ref. [

13] examined current training programs that inform manufacturers about finances and tools that support decision makers to analyse assembly workstations and determine whether HRC would be beneficial to their applications. The study concluded with the fact that there is a lack of knowledge about the integration of cobots into the manufacturing processes. This could also be due to several definitions of HRC being available. In some cases, HRC is treated as a synonym for human-robot interaction in general.

In order to distinguish different kinds of human-robot interactions, Ref. [

14] established criteria, such as a workplace, working time, aim, and contact. At the lowest level, human-robot coexistence includes a shared working environment, where tasks of the human and the robot do not interfere [

15]. In addition to a shared working environment and working time, human-robot cooperation also includes a shared aim of the overall task [

14]. On the highest level in HRC, physical contact between humans and robots is also permitted, which makes it the most challenging method of interaction among the three [

15]. Hence, human-robot mutual awareness is required, as well as the exact timing of tasks and activities [

11].

To understand the required mutual awareness, Ref. [

16] introduced a generic definition of several types of human-human and human-robot interactions, in which interaction was considered as a function of physical distances between the human and the robot. Therefore, these interactions were categorised into avoiding, passing, following, approaching, and touching. Hence, the parties in a co-manipulation context can be two humans, a human and a robot, or two robots who manipulate a shared object from point

A in the workspace to point

B. According to [

16], this concept can be extended to multiple humans with a single robot (multiple–single) or multiple humans with multiple robots (multiple–multiple).

Using this analogy, therefore, human-human co-manipulation can provide useful insights on how humans perform a task and consequently teach robots to perform such tasks with humans. Subsequently, various controllers have been designed to allow multiple robots to work together when manipulating an object [

17]. Other researchers proposed controllers that are inspired by human anatomy and behaviour, and such research takes the damping and impedance characteristics of human movement into consideration [

18]. The authors in [

19] stated that human-friendly robot cooperation requires an adaptive impedance control that adjusts the robot impedance based on human characteristics. Hence, motion data from a human-human co-manipulation experiment was conducted.

Using dynamic model identification techniques, damping and stiffness factors have been estimated based on a simplified mathematical model (MM). The method mentioned above, however, requires an accurate MM to be conceived, which is often complicated and time-consuming. The collected data only contains position, speed, and acceleration, while the forces are estimated accordingly. Another approach to model human-human co-manipulation, similar to [

18,

19], can be found in [

20]. An analysis was performed on the leader and follower human, in which one human provided the haptic guidance and the other human followed the haptic clues. The study concluded that most forces originated from the leader, while the follower focused on tracking the co-manipulated object.

A control scheme that enables a humanoid robot to perform a co-manipulation task with a human partner was proposed by [

21]. The proposed control scheme consisted of three phases. At first, the robot predicted the human’s intentions to move based on a Force/Torque (F/T) sensor attached to the robot. In a second phase, the human and the robot switched roles, where the robot provided guidance and the human followed. In the third phase, the robot was controlled remotely with a joystick. The main problem with this work that it ha a discrete nature, and it required initiation through a joystick.

Since HRC requires the human and the robot to be co-existing in the same workspace, the design and development of a suitable HRC space is still an open challenge [

22]. The authors in [

23] examined the HRC shared workspace requirements based on a case study of disassembling press fitted components using collaborative robots. The study concluded that the compliance behaviour of a collaborative robot enabled the operator to work closely with the robot, which means lower installation costs and increasing efficiency as the robot and the human can work simultaneously.

However, many challenges need to be addressed regarding the performance evaluation, task assignments, and role management. Researchers proposed to equip the workspace with sensors that can improve the communication between the human and the robot to address the workspace challenges. These sensors can be classified as contextual sensors, such as cameras, motion trackers, biomechanical and psychological sensors, and motion sensors. These combined sensors are believed to improve human-robot communication as they can be used to infer the physical and psychological states of the human during the execution of the HRC task.

In more recent years, approaches have utilised Machine Learning (ML), including advanced classifiers such as Artificial Neural Networks (ANNs), to process sensory data [

24]. In [

25], an ANN was employed in human-human co-manipulation to predict the required motion based on the F/T input from the leader. The controllers used in this approach followed the desired trajectory to some degree of accuracy. However, the predicted trajectory was described as jerky, and the maximum error reached was 0.1–0.2 m. In further research, Ref. [

26] investigated human-human co-manipulation and proposed the use of a Deep Neural Network (DNN) to accurately estimate the velocity and acceleration of the human over 50 time steps of 0.25 s. The trained model showed higher accuracy in comparison with the work presented in [

25], although it was prone to noise interference.

In addition to haptic interfaces and F/T sensors, a growing interest in wearable sensors can be observed, which is intended to improve communication between humans and robots [

27]. These approaches also aim to improve the mutual awareness of both parties in HRC [

28]. Combining wearable sensors with existing technologies is believed to significantly improve HRC, as they can be used to infer the physical and psychological states of the human during the execution task [

29,

30].

However, processing signals originating from wearable sensors is considered challenging due to large amounts of subject-specific noise within the data. For example EMG signal depends on the individual internal structure, such as skin temperature, blood flow rate, muscle structure, and many other factors [

31]. Moreover, signals can even vary during different recording sessions for the same individual [

32]. Nevertheless, advanced ML classifiers can be deployed to demonstrate their characteristic strengths of coping well with noise [

33].

A common wearable sensor for detecting muscular fatigue, as well as applied muscular contraction, can be found in EMGs. The EMG signal directly correlates with the forces applied [

34]. For instance, Ref. [

35] proposed the use of EMG to estimate the required stiffness during HRC tasks since stiffness is an essential component in cooperative leader–follower tasks. The estimated stiffness was employed in a hybrid force/impedance controller. EMG signals in conjunction with an Online Random Forest were used to detect muscular fatigue/or a human operator struggling with a high payload in order to trigger an assistance request for a cobot [

27].

Additionally, Brain-Computer Interfaces (BCIs) have gained research interest in HRC. In one approach, a BCI was utilised for communicating human movement intentions to a robot [

36]. A DNN was deployed to process electroencephalogram (EEG) signals, which allowed for measuring and classifying human upper-limb movements up to 0.5 s prior to the actual movements. A similar result have also been reported in [

37]. Another approach based on a DNN to predict human intentions to move a limb was presented by [

38]. In this paper, the human limb position was estimated based on torque readings collected from a F/T sensor attached to the robot end-effector. In general, estimating human-intention-based different sensors is still at an early stage, and further research is required.

Overall, there is an eminent research interest in HRC, of which co-manipulation can be viewed as a small building block. Strategies for classical co-manipulation control vary between force-based and motion-based; however, almost all are limited as they require accurate mathematical modelling pre-knowledge about the desired trajectory. Nevertheless, based on the literature, promising potential towards more accurate models can be found in ML, including ANNs.

Advanced ML algorithms enable the classification of bio-sensory data, such as EMGs. Utilising EMGs, in conjunction with F/T data to predict human motions for co-manipulation tasks is still at an early stage. This would allow the collaborative robot to learn a behaviour, based on human-human co-manipulation within different data-driven models. Moreover, the performance of such data-driven models can be compared with previous mathematical models and hybrid models. This paper provides a comprehensive study on human-human co-manipulation, including a data-driven model, which also considers EMG signals.

4. Experimental Setup and Data Collection

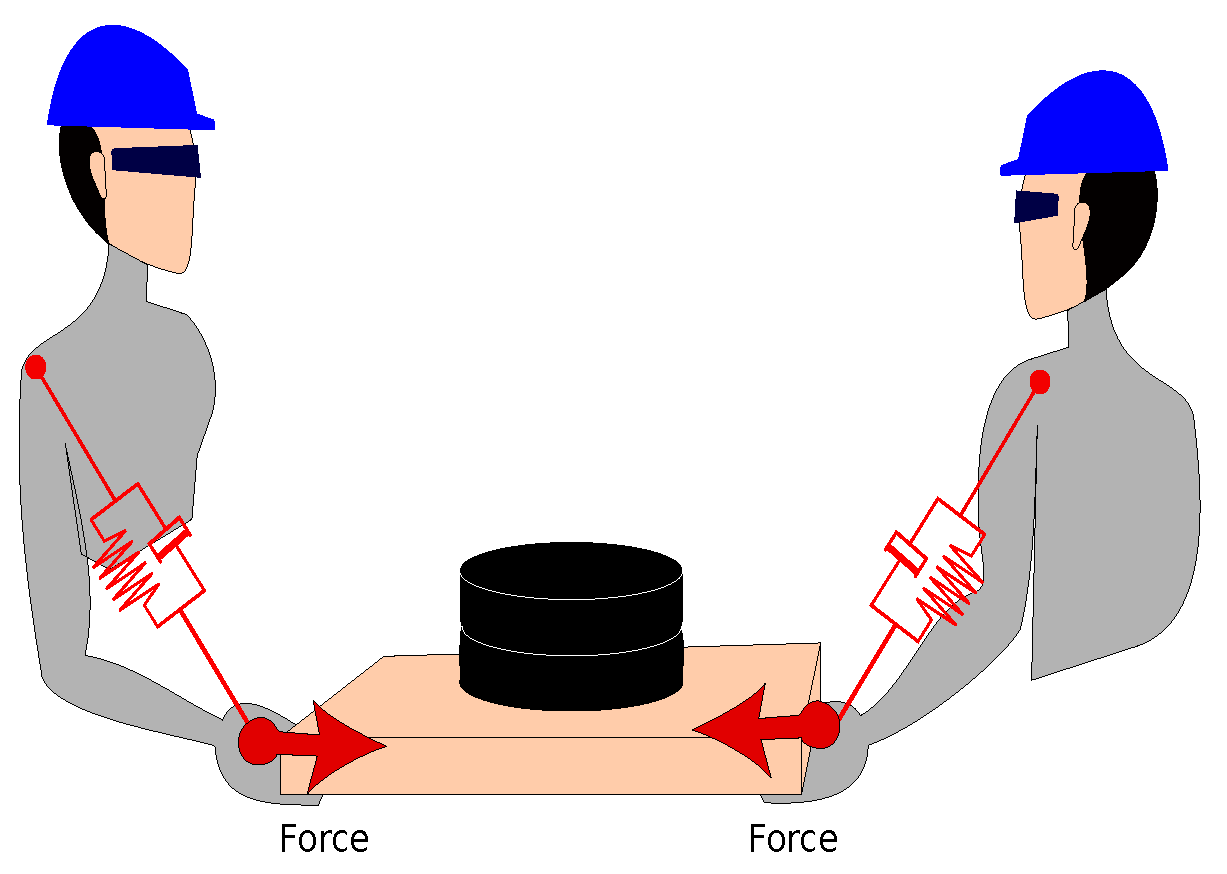

To test the proposed hypotheses in this paper, an instrumented load was used to collect data during the co-manipulation task, as shown in

Figure 2. Two sEMG sensors were utilised, which were fitted on the arm and the forearm as illustrated in

Figure 3. For the biceps muscle, the electrodes of the sensors must be aligned with the muscle axial, which was identified as shown in

Figure 3a, while the reference electrode must be shifted away from the muscle axial.

Similarly, the sensor electrodes on the forearm muscle must be fitted on the forearm muscle, specifically on the brachioradialis muscle [

40], while the reference electrode placed on the outside of the forearm close the bone side; as illustrated in

Figure 3b. The Myoware sensor used in this paper has on-board functionality that permitted the reading of the rectified signal, making the signal suitable to be integrated with the presented setup. The signals were sampled using a 12-bit Analogue-To-Digital Converter with a

Hz sampling frequency. The instrumented load is a wooden board with a

kg weight attached to it in the centre. The following sensors were utilised:

A six-axis F/T (F/T) sensor [

41].

Surface electromyography (sEMG). [

42], which is worn by the follower human

The F/T, VICON, and sEMG sensors were sampled at different frequencies; therefore, these sensory data were synchronised using an adaptive algorithm implemented in the

messageFilter-ApproximateTime tool (

http://wiki.ros.org/message_filters/ApproximateTime). The bottleneck of this tool is the slowest signal, the sEMG. Thus, the synchronised data will almost have the same frequency as the sEMG, which is around (

Hz).

For the experiment presented in this paper, two participants were asked to co-manipulate the load described above while avoiding an obstacle within the workspace.

Figure 4 depicts the floor plan for the experiment and the path that the leader and the follower had to follow. The participants were assigned a leader or follower role and were not allowed to communicate during the experiment. This prevents the participants from verbally sharing their intention. The follower was equipped with muscle activity sensors, which were integrated with the Robot Operating System (ROS) network [

43].

The kg load, in this scenario, provided some resistance while manipulating the object to emulate a realistic scenario. Additionally, each participant was only permitted to use one arm whilst carrying the object. This enabled the F/T readings to be mapped to a single local reference point. The leader guided the manipulation within the workspace while avoiding the obstacle and towards the endpoint. At the same time, the follower reacted to the leader’s movements and mimicked his/her motions until the endpoint was reached. The experiment was designed in this way to replicate human-robot co-manipulation.

Sensor Placement

The F/T sensor was placed on the follower side in the centre of the object. The correct EMG sensor placement is essential as the quality of the signal can be affected. As such, the sensor must be placed over the centre of the muscle as shown in

Figure 3 [

42]. Finally, the motion capture reference point was placed on the leader’s side in the object’s centre, while the F/T sensor was located between the leader’s handle and the load. Hence, a transformation between the F/T coordinate into the workspace coordinate is required.

Figure 5 illustrates the required transformation; also, it can be noticed that the coordinate system of the F/T sensor in which the

Z-axis is aligned with the handle axial and

plane was parallel to the surface of the sensor.

5. Methodology

Four different sets of features were used to predict the required displacements based on leader guidance to validate the proposed hypotheses. The fitted models were evaluated based on unseen test datasets using the root mean square error (RMSE). An overall displacement error (overall RMSE) was calculated to determine the resultant error (

). The employed feature sets are shown in

Table 1.

The F/T features were the F/T data (

), EMG arm and forearm (

, respectively) and previous 3D displacement, which is the displacement from thge previous timestamp (

). Then, the performance of the fitted models on the unseen test dataset was calculated using RMSE. This allows

for testing the impact of including EMG sensory data in such a context. Finally, the performance of the best data-driven model was compared against the performance of the MM and the hybrid model. Another important feature in the co-manipulation tasks is the time, in which the human intention to move the shared object at time point

t not only depends on the F/T and EMG at the same point but also depends on the displacements at the previous timestamp (

), as summarised in Equation (

4).

where

is the mapping function of the given features to the intended displacement

. The displacement

is measured using the VICON system by comparing the Cartesian position at

t with the Cartesian position at

.

5.1. Mathematical Modelling

The problem described in

Section 3, can be simplified as a mass–spring–damper system, as shown in

Figure 6. The human arms can be simplified as a spring–damper on both sides of the transported object in 3D space.

Using Newton’s second law (

), the problem in

Figure 6 can be described as shown in Equation (

5).

The superposition concept can be used for further simplification, as the 3D space system can be split into three separated equations (vector form), as shown in Equation (

6). Based on the experimental setup and the problem at hand, the resultant force is measured using the F/T sensor. Furthermore, the total mass of the moving object is known; thus, the model described in Equation (

5) can be simplified by omitting the muscle stiffness and damping effect as shown in Equations (

5) and (

6). To determine the displacements based on the measured forces, Equation (

6) can be rewritten as in Equation (

7).

In Equation (

7),

and

are the displacements in the F/T sensors. Therefore, they must be transformed into the VICON coordinate system using a transformation matrix that was calculated based on the alignment of the F/T sensor with respect to the VICON markers (

Figure 2). The final MM is shown in Equation (

8), where

is the transformation matrix from the F/T sensor into the VICON coordinate system.

5.2. Model-Free Approaches: Data-Driven Models

Modern manufacturing systems are complicated since they integrate a wide variety of equipment that extends from machinery and automation equipment on the shopfloor up to cloud systems and data-acquisition tools. In addition, the complexity on the shopfloor level due to the fast development of communication is exponentially increasing, which means higher data flows between different elements on the shopfloor. Consequently, equipment has higher nonlinearities, disturbances, and uncertainties. Therefore, it is almost impossible to describe these complicated systems using conventional mathematical models, such as differential equations or statistical models, in real applications. Nonetheless, with the fast advancement of sensing technology and data collection technologies, data-driven modelling (DDM) becomes more feasible in comparison with mathematical modelling [

44].

Based on the regression problem explained in

Section 3, we propose the use of Linear Regression (LR), Random Forest (RF) regression, Boosted-Trees (BT), and Recurrent Neural Networks (RNN) as these methods represent state-of-the-art approaches [

45]. LR is well-known for simplicity and its ease of use. In contrast, BT and RF are ensemble ML approaches that are powerful and have shown high performance on several datasets. Finally, an RNN as part of a Deep Learning Neural Network is utilised. Hence, in this paper, we compared the performance of each ML algorithm and the performance of the data-driven approaches with the mathematical and hybrid models. In the following subsection, the data-driven approaches are explained in the co-manipulation context.

5.3. Hybrid Modelling Approach (HM)

As described in the mathematical modelling section, mathematical models are often derived from the fundamental laws of physics, such as Newton’s laws of motion. Physical models extrapolate well by design and, therefore, are preferred for model-based control approaches. In realistic scenarios, however, modelling errors exist due to omitted dynamics, modelling approximations, lack of suitable friction models, backlash, or unmodelled elasticity in the mechanism. Classically, these problems can be tackled with assumptions, linearisation around an operation point, and enhancing the model parameters based on theoretical or experimental methods. In the case of a very complex mechanism, however, these solutions might not be feasible.

On the contrary, data-driven modelling approaches utilise the behavioural response of the system for different inputs and then extract a set of generic rules that describe the correlations amongst the inputs and outputs without omitting or simplifying the system. The main drawback of such systems is that they are not always interpretable as in physical/mathematical modelling. The quality of the data-driven model depends on the size and quality of the collected data.

Furthermore, data can barely ever deplete all possible configurations. Thus, a hybrid model can be used that combines simplified MM with a data-driven error model [

46]. The target is to capture the main physical attributes of the given system (e.g., the robot) while substituting for model approximations and inaccuracies. Hence, the hybrid model has a grey-box character due to the mixture of a physical and data-driven (black-box) error model. In this paper, we decided to model the error within a mathematical model,

Section 5.1, using the best data-driven approach from the previous section. Then, the problem description can be now rewritten, as shown in Equation (

9).

where

is the error between the real displacement measured using VICON and the estimated displacement using the mathematical models. The error function

can be seen as a regression problem that can be tackled using the best data-driven approach. Consequently, the final model is a combination of MM and data-driven models. For evaluation purposes, the hybrid model was compared with all the approaches above on unseen test data.

8. Conclusions and Future Work

This paper utilised a data-driven approach to extract and model a human-human (demonstration) co-manipulation skill, which can be utilised to teach an industrial robot a human-like behaviour. The collected experimental data included F/T data, the manipulated object’s Cartesian position, and the EMG signal from the human muscles (follower). The collected data were then used to fit an RNN, an RF, an LR, and BT using four sets of features (F1, F2, F3, and F4). The accuracy of the fitted models was tested using unseen, randomly split test data, which illustrated that the sequential RNN trained on F1 features had the lowest RMSE compared to other ML models.

Moreover, this showed that the use of EMG sensory data positively impacted such a model’s accuracy. After that, the best data-driven model was compared with a simplified mathematical model. The results illustrated that the RNN outperformed the mathematical model; in fact, all data-driven approaches outperformed the mathematical models. Finally, the best data-driven models were validated in a simulated environment with an impedance controller to evaluate these approaches compared to MM and HM in a more realistic scenario. The results exhibited that data-driven models had higher accuracy and smoother trajectories in comparison with HM and MM.

In order to extend the work completed in this paper, it is essential first to outline the vision for the work. This project’s desired outcome was to create a controller that allowed co-manipulation tasks, including multiple robots following a human leader. However, substantial work needs to be completed until this vision can be established. The data-driven approach offers promising potential for more intuitive interfaces in HRC and, thus, more effective collaboration between humans and robots. Hence, testing the model experimentally in a human-robot manipulation scenario will be conducted in the future.