In this section, we propose a new general word-level advanced attack framework that uses the information in adversarial examples generated by a local model and transfers part of the attack process to the local model for completion ahead of time.

3.2. Basic Ideas

This framework is based on three basic ideas, listed as follows.

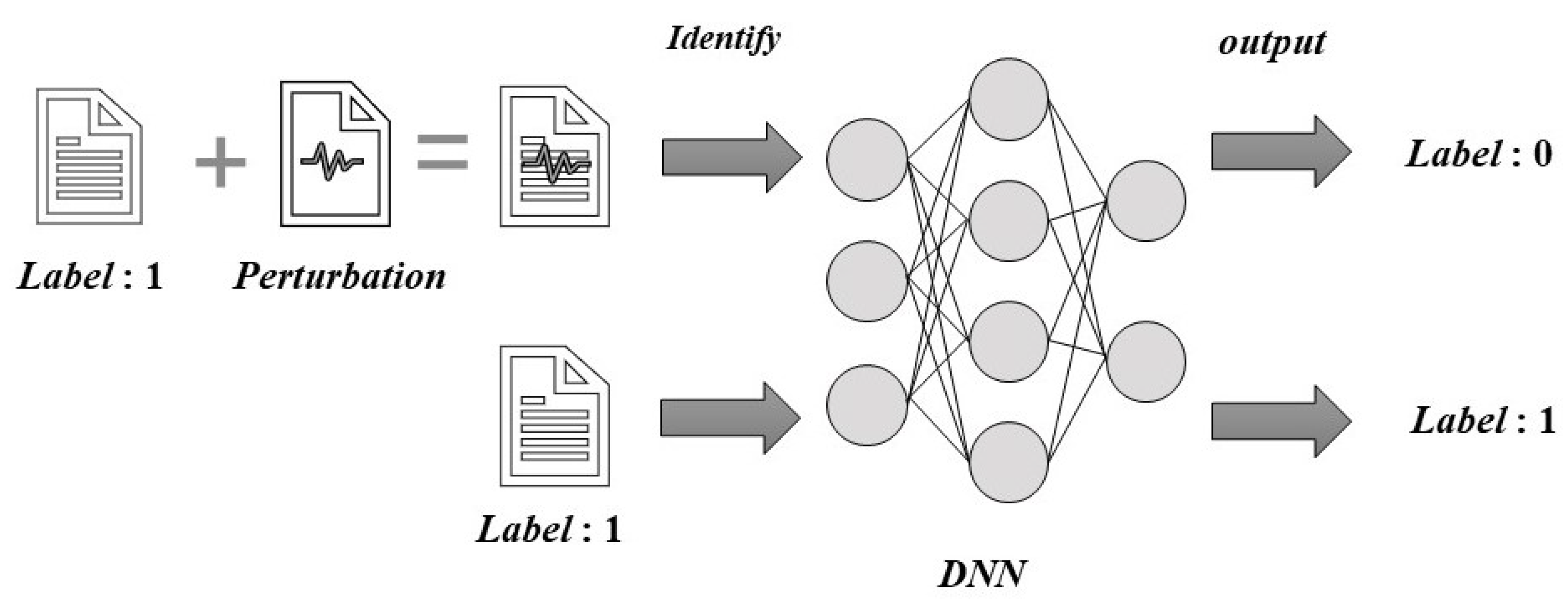

The use of transferability of adversarial examples: Research shows that neural-network models based on different structures may misclassify the same adversarial example, i.e., the adversarial example is transferable. This is because different models used for similar tasks often have similar decision boundaries [14]. Therefore, we first conducted an adversarial attack against a local model to obtain candidate adversarial examples, which are expected to transfer to the target model. Although not every candidate adversarial example generated on the local model transfers, those that fail are still more adversarial than the unperturbed originals. Therefore, we used candidate adversarial examples that fail to transfer as the starting point for the subsequent attack against the target.
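The split between candidates that transfer and candidates that do not can be illustrated with a minimal sketch. The function names, the toy target model, and the label encoding (0 = negative, 1 = positive) are assumptions made for illustration only; they are not taken from the paper.

```python
from typing import Callable, List, Tuple

Example = List[str]


def toy_target_predict(words: Example) -> int:
    """Hypothetical black-box target model: 0 = negative, 1 = positive."""
    return 0 if "awful" in words else 1


def split_by_transfer(
    candidates: List[Example],
    true_label: int,
    target_predict: Callable[[Example], int],
) -> Tuple[List[Example], List[Example]]:
    """Separate candidates that already fool the target from those that do not."""
    transferred, failed = [], []
    for example in candidates:
        if target_predict(example) != true_label:
            transferred.append(example)  # misclassified: the example transfers
        else:
            failed.append(example)  # kept as a starting point for the target attack
    return transferred, failed


# Candidates generated on a local model for an originally positive (label 1) text.
candidates = [["an", "awful", "film"], ["a", "bad", "film"]]
transferred, failed = split_by_transfer(candidates, 1, toy_target_predict)
transfer_rate = len(transferred) / len(candidates)
```

In this toy setting one of the two candidates transfers; the other is not discarded but carried forward to the attack against the target.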

The use of location information of vulnerable words searched by local models: Because different models may have similar decision boundaries for similar tasks, the same sample is likely to contain the same vulnerable words, determined by the characteristics of its textual expression, regardless of whether the model is local or target; perturbing these words can cause decision errors in the model. Therefore, we recorded the positions of the vulnerable words found when each local model generates candidate adversarial examples, especially for candidates that fail to transfer. This information is passed to the next step of the attack process, which reduces the cost of searching for vulnerable words again.

The use of decision information of the target model to optimize the local model in real time: We continue to attack the target model, starting from the candidate adversarial examples, to obtain the final perturbed samples. The target model's predictions on these samples may contain richer information about its decision boundary. Therefore, we used these perturbed samples, including both successful adversarial examples and failed texts, to tune the local model in real time so that it more closely approximates the target model.

3.3. Attack Method

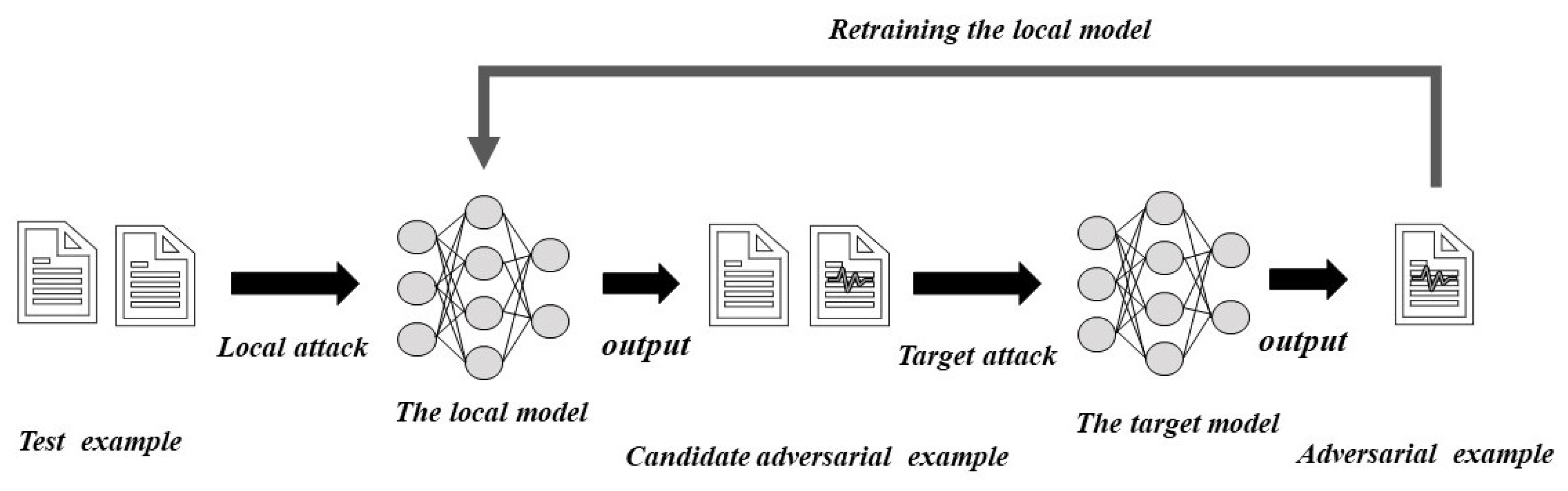

Our advanced attack combines the three aforementioned ideas in a word-level adversarial attack. We made full use of the prior information obtained by the local model while building adversarial examples, including the candidate examples along with the position information of their key words. We used the labeled inputs obtained during the target-model attack to optimize the local model and thereby continuously improve the transfer rate. The proposed advanced attack method is shown in Algorithm 1 and is composed of three main steps. In addition, Figure 2 shows the overall flow of our method.

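| Algorithm 1: Advance Attack |
| --- |
| **Input:** Sample text X, the corresponding ground-truth label Y, the local model, and the target black-box model |
| **Output:** Adversarial example |
| Initialization |
| **while** each sentence in X **do** |
| Determine the candidate replacement word space according to the sememe of each word in the sentence. |
| Find the candidate word having the highest prediction score for each word in the sentence. |
| Optimize the combination to find a suitable replacement and record the replacement word positions. |
| **if** the candidate example does not fool the target model **then** |
| Calculate the replacement words using the highest prediction score of the target model at the recorded positions in the candidate example. |
| **if** the updated example still does not fool the target model **then** |
| Continue to combine and optimize over the words at positions other than the recorded ones. |
| **end if** |
| **else** |
| **return** the candidate example. |
| **end if** |
| **end while** |
| **return** the adversarial example |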

Local attack: This step generates adversarial examples against the local model. Specifically, the given input text contains n words, each with a variable number of replaceable words in the selected search space (e.g., thesaurus, semantics, or word-embedding space), so that every word has its own set of replacement words. We then queried the local model to find, within each replaceable word space, the candidate replacement word with the highest target-label prediction score; this gives the optimal replacement word for each word in the sentence. For the local model, we used combinatorial optimization methods to screen out an appropriate combination of these optimal replacement words. We then used the combination to replace the words at the corresponding positions of the original sentence, thereby generating a candidate adversarial example. This process can be repeated to obtain the required number of candidate adversarial examples. Finally, we recorded the position information of the replacement words of each candidate example.
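The local attack step can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the scoring function `toy_local_score`, the substitute dictionary, and the success threshold are hypothetical, and a simple greedy pass stands in for the unspecified combinatorial optimization method.

```python
from typing import Callable, Dict, List, Tuple

Scorer = Callable[[List[str]], float]  # score of the attacker's target label


def toy_local_score(words: List[str]) -> float:
    """Hypothetical local model: higher means closer to the target label."""
    weights = {"bad": 0.4, "dull": 0.3}
    return min(1.0, 0.1 + sum(weights.get(w, 0.0) for w in words))


def local_attack(
    words: List[str],
    substitutes: Dict[str, List[str]],
    score: Scorer,
    threshold: float = 0.6,
) -> Tuple[List[str], List[int]]:
    """Return a candidate adversarial example and the replaced positions."""
    # Step 1: for each position, keep the substitute with the highest score.
    best: Dict[int, Tuple[float, str]] = {}
    for i, word in enumerate(words):
        options = substitutes.get(word, [])
        if options:
            best[i] = max(
                (score(words[:i] + [s] + words[i + 1:]), s) for s in options
            )
    # Step 2 (greedy stand-in for combinatorial optimization): apply the
    # strongest substitutes first until the local model is fooled.
    candidate, positions = list(words), []
    for i, (_, sub) in sorted(best.items(), key=lambda kv: -kv[1][0]):
        if score(candidate) >= threshold:
            break
        candidate[i] = sub
        positions.append(i)  # recorded for reuse in the target attack
    return candidate, positions


sentence = ["a", "good", "and", "fine", "film"]
subs = {"good": ["bad", "decent"], "fine": ["dull", "okay"]}
candidate, positions = local_attack(sentence, subs, toy_local_score)
```

The returned `positions` list is the piece of prior information that the target attack reuses, so it is recorded alongside the candidate rather than recomputed later.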

Target attack: This step generates adversarial examples against the target model. If a candidate adversarial example transfers directly, the attack moves on to the next example. If it fails to transfer directly, the candidate adversarial example is used as the starting point for attacking the target model. According to Step 1, candidate adversarial examples that fail to transfer are still closer to the target model's decision boundary than the original examples. Therefore, for a candidate adversarial example that failed to transfer, we used the recorded replacement word position information to look up the replaceable word space of the word at each recorded position. By querying the target model, we obtained the candidate replacement word with the target model's highest prediction score and substituted it directly at the recorded positions of the candidate example to obtain a new example. If the attack is successful, the iteration ends; otherwise, we continue to select replacement words for the remaining positions.
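A minimal sketch of the target attack step follows. The toy target scorer, the threshold, and the choice to look up substitutes by the original word at each position are assumptions for illustration; the paper's exact search procedure may differ.

```python
from typing import Callable, Dict, List, Tuple

Scorer = Callable[[List[str]], float]  # score of the attacker's target label


def toy_target_score(words: List[str]) -> float:
    """Hypothetical black-box target model, queried only for its score."""
    weights = {"awful": 0.5, "dull": 0.2, "bad": 0.1}
    return min(1.0, 0.1 + sum(weights.get(w, 0.0) for w in words))


def target_attack(
    original: List[str],
    candidate: List[str],
    positions: List[int],
    substitutes: Dict[str, List[str]],
    target_score: Scorer,
    threshold: float = 0.6,
) -> Tuple[List[str], bool]:
    """Start from a local candidate and refine it against the target model."""
    adv = list(candidate)
    if target_score(adv) >= threshold:
        return adv, True  # the candidate transfers directly
    # Visit the positions recorded by the local attack first, then the rest.
    remaining = [i for i in range(len(original)) if i not in positions]
    for i in list(positions) + remaining:
        # Keep the current word in the pool so a swap never lowers the score.
        pool = [adv[i]] + substitutes.get(original[i], [])
        adv[i] = max(pool, key=lambda s: target_score(adv[:i] + [s] + adv[i + 1:]))
        if target_score(adv) >= threshold:
            return adv, True
    return adv, False


original = ["a", "good", "and", "fine", "film"]
candidate = ["a", "bad", "and", "dull", "film"]  # failed to transfer
subs = {"good": ["bad", "awful"], "fine": ["dull", "okay"]}
adv, success = target_attack(original, candidate, [1, 3], subs, toy_target_score)
```

Visiting the recorded positions first is what saves queries in this sketch: the search over vulnerable positions has already been paid for on the local model.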

Tuning the local model: After performing word replacement on the original input example, if the target model can be deceived, a successful adversarial example is returned. All examples produced during the target attack, whether successful or not, receive a prediction score from the target model during the search process. We added these examples, together with the labels predicted by the target model, to the training dataset of the local model to further train it.
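The tuning step can be illustrated with a small sketch. Everything here is hypothetical: `ToyLocalModel` is a bag-of-words perceptron standing in for whatever local model is actually used, and `tune_local_model` simply appends target-labeled examples to the training set and continues training.

```python
from collections import defaultdict
from typing import Callable, List, Tuple

Example = List[str]


class ToyLocalModel:
    """Hypothetical local model: a bag-of-words perceptron (labels 0 and 1)."""

    def __init__(self) -> None:
        self.weights = defaultdict(float)
        self.bias = 0.0

    def predict(self, words: Example) -> int:
        return 1 if self.bias + sum(self.weights[w] for w in words) > 0 else 0

    def fit(self, data: List[Tuple[Example, int]], epochs: int = 10) -> None:
        # Continues from the current weights, so repeated calls act as tuning.
        for _ in range(epochs):
            for words, label in data:
                error = label - self.predict(words)
                if error:
                    self.bias += error
                    for w in words:
                        self.weights[w] += error


def tune_local_model(
    model: ToyLocalModel,
    train_set: List[Tuple[Example, int]],
    perturbed: List[Example],
    target_predict: Callable[[Example], int],
) -> ToyLocalModel:
    """Label perturbed examples with the target model and retrain the local model."""
    for words in perturbed:
        train_set.append((list(words), target_predict(words)))
    model.fit(train_set)
    return model


train_set = [(["a", "good", "film"], 0), (["a", "bad", "film"], 1)]
perturbed = [["a", "awful", "film"], ["a", "dull", "film"]]  # success and failure
model = tune_local_model(
    ToyLocalModel(), train_set, perturbed, lambda ws: 1 if "awful" in ws else 0
)
```

Both successful and failed examples are added, since each carries a target-model label that constrains where the local decision boundary should lie.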