1. Introduction

Eye blink detection is an important technology that has been applied in a variety of areas, including drowsiness detection [1,2], driver safety [3,4,5], computer vision [6,7], and anti-spoofing protection in face recognition systems [8,9]. Drowsiness is one of the most significant factors jeopardizing road safety, and it contributes to serious injuries, deaths, and economic losses. Driving performance decreases as drowsiness increases, and accidents involving serious injury or death occur because of inattention caused by an involuntary shift from wakefulness to sleep. Individuals with normal vision blink spontaneously at a characteristic frequency. Advances in information and signal processing technologies, together with newly developed artificial intelligence (AI) techniques, have had a positive impact on autonomous driving (AD), making driving safer while reducing the challenges faced by human drivers [10,11]. Over the decades, the development of autonomous vehicles has resulted in life-changing breakthroughs, and their adoption is expected to have noticeable societal effects in the areas of accessibility, safety, security, and ecology [12].

Eye blinking is influenced by various factors, including eyelid conditions, eye conditions, the presence of disease, contact lenses, psychological state, the surrounding environment, drugs, and other stimuli. The number of blinks per minute ranges between 6 and 30 [13]. According to [14], the human blink rate varies with the circumstances. During normal activity, the average blink rate is 17 blinks per minute, with a highest rate of 26 and a lowest rate of 4–5 blinks per minute. A person’s blink rate therefore depends on the environment and on how strongly the person concentrates on the task at hand.

Driving requires sustained concentration on the road, which reduces the blink rate: while driving, the average blink rate is about 8–10 blinks per minute. A person’s blink rate is also affected by age, gender, and the amount of time they spend blinking. Real-time facial landmark detectors can capture most of the distinctive features of human face images, including the corners of the eyes and the eyelids [15,16].

The size of an individual’s eyes does not correspond to the size of his or her body. Two people who are otherwise physically identical may differ in eye size: one can have large eyes and the other small eyes. The eye height when closed, however, is the same for all people, regardless of eye size. This difference inevitably influences experimental findings. In response, we present a simple but effective method for detecting eye blinks using a facial landmark detector with the Eye Aspect Ratio (EAR). The EAR requires only basic calculations based on the ratio of the distances between the eye’s facial landmarks, so this technique is fast, efficient, and easy to implement. Dewi et al. [17] built their own eye dataset, which poses several challenges, including small eyes, subjects wearing glasses, and subjects driving a car. This dataset is adapted to the characteristics of small eyes, and we used it in our experiment.

The model proposed in [18] models the eye in conjunction with its surrounding context. The approach consists of two steps: a visual-context-pattern-based eye model, followed by semi-supervised boosting for high-precision detection. Lee et al. [19] estimated the state of an eye, that is, whether it is open or closed.

When analyzing patterns in a visual setting, it is important to keep what is visible as consistent as possible. Another approach is presented in [20], where an eye filter is used to find all eye candidate points. The reconstruction error of the non-negative matrix factorization (NMF) is then minimized.

Pioneering work was performed by Fan Li et al. [21], who investigated the effects of data quality on eye-tracking-based fatigue indicators and proposed a hierarchical-based interpolation approach to extract these indicators from low-quality eye-tracking data. Gracia et al. [22] estimated eye closure in individual frames and then used the resulting sequence to detect blinks. We built our algorithm upon the successful methods of Eye Aspect Ratio [23,24] and facial landmarks [25,26].

Templates of open and/or closed eyes can be created using learning and normalized cross-correlation. Eye blinks can also be detected by monitoring ocular parameters, for example, by fitting ellipses to the eye pupils using a variation of the algebraic distance algorithm for conic approximation [27,28].

The most significant contributions of this article are as follows: (1) We propose a method to automatically classify blink types by evaluating different EAR thresholds (0.18, 0.2, 0.225, 0.25). (2) We adjust the Eye Aspect Ratio to improve the detection of eye blinking based on facial landmarks. (3) We conduct an in-depth analysis of the experimental findings using the TalkingFace, Eyeblink8, and Eye Blink datasets. (4) Our experimental results show that using 0.18 as the EAR threshold provides the best performance.

This article is structured as follows. The Materials and Methods section covers related work and the methodology applied in this research. The Results and Discussion sections describe our experimental setting and results. In the final section, conclusions are drawn and suggestions for future research are made.

2. Materials and Methods

Drowsiness is characterized by yawning, heavy eyelids, daydreaming, eye rubbing, an inability to concentrate, and lack of attention. The percentage of eyelid closure over the pupil over time (PERCLOS) [29] is one of the most widely used parameters in computer-vision-based drowsiness detection in driving scenarios [30]. In [31], a convolutional neural network (CNN) was used to develop a drowsiness detection system: a first network was trained to distinguish between human and non-human eyes, and a second network was then used to locate the eye feature points and calculate the degree of eye opening. This article presents efficient algorithms for detecting drowsiness. Facial landmarks are retrieved using the Dlib toolkit, and by evaluating different EAR thresholds, we present a method for automatically classifying blink types.
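As a rough illustration of how a PERCLOS-style measure can be derived from per-frame eye states, the following sketch computes the percentage of closed-eye frames over a sliding window. The function name `perclos`, the window length, and the boolean per-frame input are assumptions made for this example; they are not taken from [29] or [30].

```python
from collections import deque


def perclos(closed_flags, window):
    """Yield the percentage of closed-eye frames over a sliding window.

    closed_flags: iterable of booleans, True when the eye is judged closed
                  in that frame (how this is judged is an assumption here).
    window:       number of most recent frames to consider (hypothetical).
    """
    recent = deque(maxlen=window)
    for flag in closed_flags:
        recent.append(bool(flag))
        # Early frames use a shorter window until `window` frames are seen.
        yield 100.0 * sum(recent) / len(recent)


# Illustrative data only: 10 frames, eyes closed in frames 3 to 5.
flags = [False, False, False, True, True, True, False, False, False, False]
values = list(perclos(flags, window=5))
print([round(v, 1) for v in values])
```

A sustained high value of such a measure would indicate prolonged eye closure; the threshold at which this signals drowsiness is application-specific and is not specified here.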

2.1. Facial Landmarks for Eye Blink Detection

Deep-learning-based facial landmark detection systems have made impressive strides in recent years [32,33]. The cascaded convolutional network model proposed by Sun et al. [34] consists of a total of 23 CNN models and therefore has very high computational complexity during training and testing.

To detect and track important facial features, facial landmarks must first be identified on the subject. Because of head movements and facial expressions, facial tracking is more robust for rigid facial deformations. Facial landmark identification is a computer vision task that aims to identify and track key points on the human face [35]. Multi-Block Color-Binarized Statistical Image Features (MB-C-BSIF), discussed in [36], is a novel approach to single-sample facial recognition (SSFR) that makes use of local, regional, global, and textured-color properties [37,38].

Drowsiness can be measured with computational eyeglasses that continually sense fine-grained measures such as blink duration and percentage of eye closure (PERCLOS) at high frame rates of about 100 fps. Such measurements can be applied to a variety of problems. Facial landmarks are used to localize and represent salient regions of the face, including the eyes, eyebrows, nose, mouth, and jawline.

Blinking occurs repeatedly and involuntarily throughout the day to maintain a certain thickness of the tear film on the cornea [39]. Blinking is a reflex that involves the fast closure and reopening of the eyelids. It is performed subconsciously and requires the synchronization of several different muscles. It should not be confused with blepharospasm, which is an abnormal, involuntary spasm of the eyelids.

While keeping the cornea healthy is an important function of blinking, there are other benefits as well [40], as suggested by the fact that adults and infants blink at different rates. A person’s blink frequency changes in response to their level of activity. The number of blinks increases when a person reads a phrase aloud or performs a task based on visually presented information, whereas it decreases when a person focuses on visual information or reads silently [41].

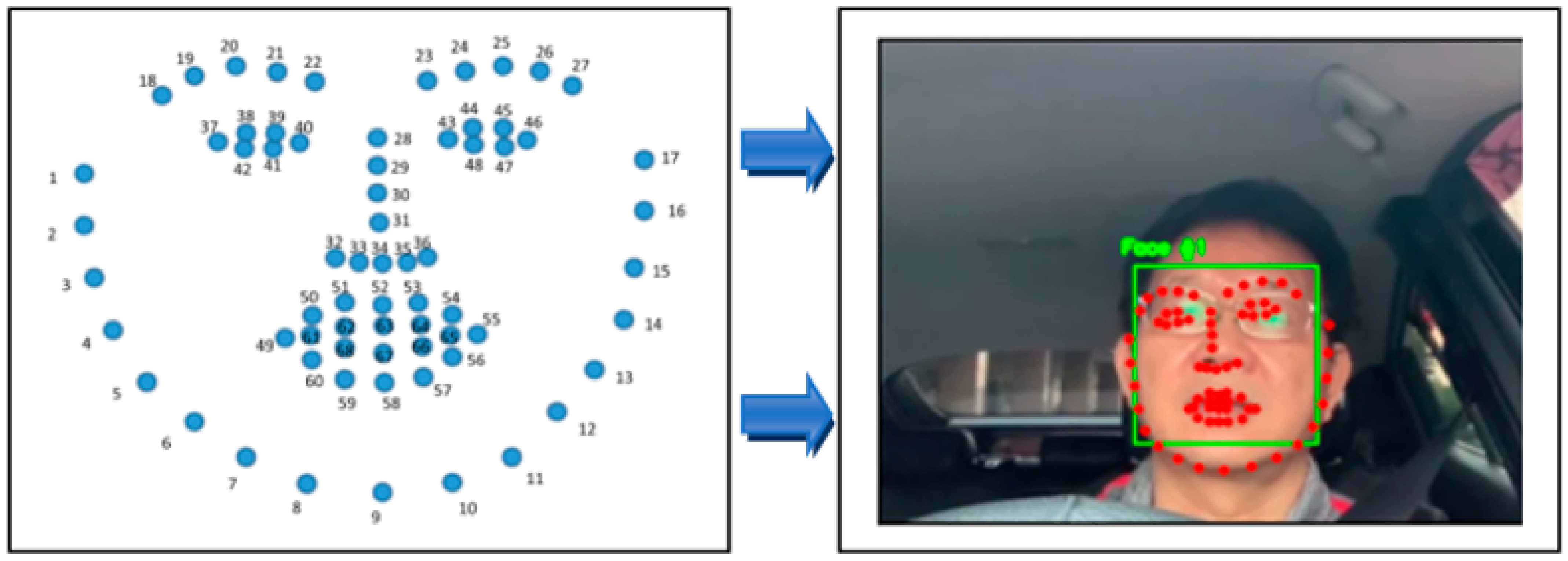

In our investigation, we used the 68 facial landmarks from Dlib [42]. The 68 (x, y)-coordinates corresponding to the facial structure were estimated with the pre-trained facial landmark detector in the Dlib library.

Figure 1 shows that the jaw points range from 0 to 16, the right brow points from 17 to 21, and the left brow points from 22 to 26. The nose points range from 27 to 35, the right eye points from 36 to 41, and the left eye points from 42 to 47. The mouth points range from 48 to 60, and the lip points from 61 to 67. Dlib is a library for implementing computer vision and machine learning techniques, and it is written in C++.
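The index ranges listed for Figure 1 can be captured in a small lookup table. The sketch below is only an illustration: the dictionary name, the helper `region_points`, and the placeholder coordinates are hypothetical, and the ranges are copied from the text rather than from the Dlib source.

```python
# Index ranges as stated in the text for Figure 1 (0-based, inclusive).
LANDMARK_RANGES = {
    "jaw": (0, 16),
    "right_brow": (17, 21),
    "left_brow": (22, 26),
    "nose": (27, 35),
    "right_eye": (36, 41),
    "left_eye": (42, 47),
    "mouth": (48, 60),
    "lips": (61, 67),
}


def region_points(landmarks, region):
    """Return the sub-list of landmark points belonging to one face region."""
    start, end = LANDMARK_RANGES[region]
    return landmarks[start:end + 1]


# Placeholder coordinates standing in for a detector's 68 (x, y) outputs.
landmarks = [(i, i) for i in range(68)]
right_eye = region_points(landmarks, "right_eye")
left_eye = region_points(landmarks, "left_eye")
print(len(right_eye), len(left_eye))
```

Each eye is described by six points, which is the input expected by the EAR computation.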

Locating facial landmark points with Dlib’s 68-landmark model consists of two stages: (1) Face detection: the face is located in the image, and its position is returned as x, y, w, and h values, which together define a rectangle. (2) Facial landmark detection: once the location of the face within the image is known, the landmark points are placed within the rectangle. The 68-point annotation comes from the iBUG 300-W dataset, on which the Dlib facial landmark predictor was trained. The Dlib framework can also be used to train shape predictors on other training data, regardless of the dataset selected.

2.2. Eye Aspect Ratio (EAR)

The Eye Aspect Ratio (EAR) is a scalar value that responds specifically to the opening and closing of the eyes [43]. During a blink, the EAR value falls rapidly and then rises again. Interesting findings in terms of robustness were obtained when EAR was used to detect blinks in [44]. Previous studies have employed a predetermined EAR threshold (0.2) to establish when subjects blink. This approach is impractical when dealing with a wide range of individuals because of inter-subject variation in appearance and in features such as natural eye openness, as in this study. Based on the findings of previous studies, our work uses an EAR threshold to detect the rapid decrease and increase in the EAR value caused by blinking.

We used varying EAR thresholds (0.18, 0.2, 0.225, 0.25) to automatically categorize the various types of blinks. We then analyzed the experimental results and determined the best EAR threshold for our dataset. The EAR is estimated for each frame of the video stream. When the user closes their eyes, the EAR drops, and it returns to its regular level when the eyes are opened again. This behavior is used to detect both blinks and eye opening. Because the EAR formula is insensitive to both the orientation of the face and its distance from the camera, it can be used to detect blinks from a considerable distance. The EAR value is calculated from six landmark coordinates surrounding the eye, as shown in Figure 2 and Equations (1) and (2) [30,45].
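For reference, the EAR is commonly defined in the literature as the ratio below, where P1 to P6 are the six 2D landmarks of one eye and the double bars denote the Euclidean distance. It is given here on the assumption that Equation (1) follows this standard form.

```latex
\mathrm{EAR} = \frac{\lVert P_2 - P_6 \rVert + \lVert P_3 - P_5 \rVert}{2\,\lVert P_1 - P_4 \rVert}
```

The factor of 2 in the denominator balances the two vertical distances in the numerator against the single horizontal distance.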

The EAR is described by Equation (1), where P1 through P6 are the 2D landmark locations around the eye. P2, P3, P5, and P6 are used to measure the height of the eye, whereas P1 and P4 are used to measure its width, as depicted in Figure 2. While the eyes are open, the EAR value remains approximately constant; when the eyes close, it quickly drops to nearly zero. This behavior is seen in Figure 2b.
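A minimal sketch of this computation is shown below, assuming the standard EAR formulation in which the two vertical distances (P2 to P6 and P3 to P5) are divided by twice the horizontal distance (P1 to P4). The function name and the coordinates are made up for illustration and do not come from the dataset.

```python
import math


def eye_aspect_ratio(eye):
    """Compute the EAR from six (x, y) points ordered P1..P6.

    P1 and P4 are the eye corners; P2, P3 lie on the upper eyelid and
    P6, P5 on the lower eyelid (assumed ordering).
    """
    p1, p2, p3, p4, p5, p6 = eye
    vertical = math.dist(p2, p6) + math.dist(p3, p5)
    horizontal = math.dist(p1, p4)
    return vertical / (2.0 * horizontal)


# Made-up coordinates for an open and a nearly closed eye.
open_eye = [(0, 0), (2, 1), (4, 1), (6, 0), (4, -1), (2, -1)]
closed_eye = [(0, 0), (2, 0.05), (4, 0.05), (6, 0), (4, -0.05), (2, -0.05)]

print(round(eye_aspect_ratio(open_eye), 3))
print(round(eye_aspect_ratio(closed_eye), 3))
```

Because the value is a ratio of distances, scaling all six points by the same factor leaves it unchanged, which is why the measure tolerates changes in camera distance.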

2.3. Research Workflow

Our system architecture is divided into two steps, namely, data preprocessing and eye blink detection, as described in Figure 3. In the data preprocessing step, the videos are labeled using Eyeblink Annotator 3.0 by Andrej Fogelton [46], as shown in Figure 4. The annotation tool uses OpenCV version 2.4.6. Video 1 and video 3 were recorded at 27.97 frames per second (fps), and video 2 was captured at 24 fps. Video 1 has 1829 frames and a file size of 29.6 MB, video 2 has 1302 frames and a file size of 12.4 MB, and video 3 has 2195 frames and a file size of 38.6 MB. The Talking Face and Eyeblink8 datasets contain 5000 frames and 10,712 frames, respectively. The video information is summarized in Table 1.

Our dataset is distinctive in that it represents people who wear glasses and have relatively small eyes, recorded while driving a car. To the best of our knowledge, it is difficult to find a dataset of people who have small eyes, wear glasses, and drive cars, so this dataset may be useful for further research. The footage was recorded by a dashboard camera installed in a vehicle in the Wufeng District of Taichung, Taiwan. Informed consent was obtained from each individual who participated in the video dataset collection. Our data collection includes five videos of one individual performing a driving scenario.

The annotations start with the line “#start,” and each row consists of the following fields: frame ID: blink ID: NF: LE_FC: LE_NV: RE_FC: RE_NV: F_X: F_Y: F_W: F_H: LE_LX: LE_LY: LE_RX: LE_RY: RE_LX: RE_LY: RE_RX: RE_RY. An example row is: 118: −1: X: X: X: X: X: 394: 287: 220: 217: 442: 369: 468: 367: 516: 364: 546: 363. The eyes may or may not be completely closed during a blink. According to the website blinkmatters.com, the eyes are considered fully closed during a blink when they are between 90% and 100% closed [46]. For a frame in which both eyes are fully closed, the row looks like: 415: 5: X: C: X: C: X: 451: 294: 182: 191: 491: 362: 513: 363: 554: 365: 577: 367. Our experiments used only the blink ID and eye fully closed (FC) columns; all other fields were ignored.

Table 2 provides an explanation of the features included in the dataset.
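The row format described above can be read with a few lines of code. The sketch below is an illustration only: the function names are hypothetical, and the interpretation that "C" marks a fully closed eye and "X" an unset flag is inferred from the two example rows rather than from the annotation tool's documentation.

```python
FIELDS = [
    "frame_id", "blink_id", "NF", "LE_FC", "LE_NV", "RE_FC", "RE_NV",
    "F_X", "F_Y", "F_W", "F_H",
    "LE_LX", "LE_LY", "LE_RX", "LE_RY",
    "RE_LX", "RE_LY", "RE_RX", "RE_RY",
]


def parse_row(line):
    """Split one colon-separated annotation row into a field dictionary."""
    # Normalize a typographic minus sign (U+2212) to an ASCII hyphen.
    values = [v.strip().replace("\u2212", "-") for v in line.split(":")]
    if len(values) != len(FIELDS):
        raise ValueError(f"expected {len(FIELDS)} fields, got {len(values)}")
    row = dict(zip(FIELDS, values))
    row["frame_id"] = int(row["frame_id"])
    row["blink_id"] = int(row["blink_id"])
    return row


def both_eyes_fully_closed(row):
    """Assumed convention: 'C' in an FC column marks a fully closed eye."""
    return row["LE_FC"] == "C" and row["RE_FC"] == "C"


first = parse_row("118: -1: X: X: X: X: X: 394: 287: 220: 217: "
                  "442: 369: 468: 367: 516: 364: 546: 363")
second = parse_row("415: 5: X: C: X: C: X: 451: 294: 182: 191: "
                   "491: 362: 513: 363: 554: 365: 577: 367")
print(first["blink_id"], both_eyes_fully_closed(first))
print(second["blink_id"], both_eyes_fully_closed(second))
```

Only the blink ID and the two FC columns are inspected here, mirroring the subset of fields used in the experiments.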

The Eyeblink8 dataset is more complex because it includes facial expressions, head gestures, and subjects looking down at a keyboard. According to [46], this dataset has a total of 408 blinks across 70,992 video frames at 640 × 480-pixel resolution. The clips have an average length of between 5000 and 11,000 frames and were shot at 30 frames per second. The Talking Face dataset contains a single video of one participant talking to the camera. In the video, the person can be seen smiling, laughing, and making a “funny face” in a variety of situations. The frame rate is 30 frames per second, the resolution is 720 × 576, and 61 blinks have been labeled.

2.4. Eye Blink Detection Flowchart

Figure 5 illustrates the blink detection method. The first stage is splitting the video into individual frames. The facial landmarks feature [47] was implemented with Dlib to detect the face. The face detector combines the classic histogram of oriented gradients (HOG) [48] feature with a linear classifier. A facial landmark detector built into Dlib is then used to identify facial features, including the eyes and nose [49,50]. Our method identifies blinks with the help of two lines drawn across the eye, one horizontal and one vertical. Blinking is the natural act of briefly closing and reopening the eyelids.

The eyes are considered closed or blinking when the eyeballs are not visible and the upper and lower eyelids meet. When the eyes are open, the vertical and horizontal lines are almost the same, but the vertical line shortens or almost disappears when the eyes are closed. We register an eye blink if the EAR stays below the adjusted EAR threshold for three consecutive frames. In our experiment, we used four alternative threshold values (0.18, 0.2, 0.225, and 0.25) and evaluated these EAR cutoffs on both video datasets.
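The thresholding rule can be sketched as follows, assuming the duration criterion is expressed as a minimum number of consecutive frames below the threshold. The function name `count_blinks`, the parameter `min_frames` (set to 3 here), and the EAR series are hypothetical and serve only to show how the four thresholds behave on the same signal.

```python
def count_blinks(ear_values, threshold=0.18, min_frames=3):
    """Count runs of at least `min_frames` consecutive frames with EAR < threshold."""
    blinks = 0
    run = 0  # length of the current below-threshold run
    for ear in ear_values:
        if ear < threshold:
            run += 1
        else:
            if run >= min_frames:
                blinks += 1
            run = 0
    # Account for a run that reaches the end of the sequence.
    if run >= min_frames:
        blinks += 1
    return blinks


# Illustrative per-frame EAR values, not measured data.
ear_series = [0.30, 0.29, 0.15, 0.12, 0.14, 0.28, 0.30, 0.19, 0.19, 0.19, 0.31]

for threshold in (0.18, 0.2, 0.225, 0.25):
    print(threshold, count_blinks(ear_series, threshold=threshold))
```

In this toy series, the shallow dip to 0.19 is ignored at a threshold of 0.18 but counted at the higher thresholds, which illustrates how a higher cutoff can produce additional detections.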

3. Results

Table 3 summarizes the statistics for the prediction and test sets of video 1, video 2, and video 3. For the EAR threshold of 0.18, the prediction set of video 1 has a total frame count of 1829, with 23 closed frames and 2 blinks detected. The test set, in contrast, contains 58 closed frames and 14 blinks. This experiment exhibited an accuracy of 95.5% and an area under the curve (AUC) of 0.613. Video 2 has 1302 frames with 182 closed frames, and its maximum accuracy, 86.1%, was obtained with the 0.18 EAR threshold. Video 3 contains 2192 frames, totaling 73.24 s, and an EAR threshold of 0.18 resulted in 89% accuracy. The lowest results for video 3, 47.5% accuracy and 0.594 AUC, were obtained with an EAR threshold of 0.25.

Table 4 describes the statistics for the prediction and test sets of the Talking Face and Eyeblink8 datasets. Talking Face has 5000 frames with 227 closed frames. The optimum accuracy was achieved with the 0.18 EAR threshold: 97.1% accuracy and 0.974 AUC. For Eyeblink8, video 8, the highest accuracy was obtained with the 0.18 EAR threshold: 86.1% accuracy and 0.732 AUC. This dataset has 1302 frames with 182 closed frames and 18 blinks.

The best EAR threshold in our experiment was 0.18, which provided the best accuracy and AUC values in all experiments. Conversely, 0.25 was the worst EAR threshold because it obtained the minimum accuracy and AUC values. Based on the experimental results, the higher the EAR threshold, the lower the accuracy and AUC. Previous studies reported that an EAR threshold of 0.2 is the best value, but this was not the case in our experiment. Our dataset is unique because of the small eyes, and eye size certainly affects the EAR and the appropriate EAR threshold. Our dataset also poses additional challenges, namely, people driving cars and people wearing glasses.

Figure 6 shows the confusion matrices for video 1, video 2, and video 3. For video 1 with an EAR threshold of 0.18, Figure 6a shows that 58 of the 58 positive labels were misclassified (100%), which by the usual definition are false negatives (FN), and that 23 of the 1771 negative labels were misclassified (1.30%), which are false positives (FP). Figure 6c shows that, for video 3, 35 of the 61 positive labels (57.38%) and 206 of the 2131 negative labels (9.67%) were misclassified.
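The confusion-matrix counts can be related to frame-level accuracy with simple arithmetic. The sketch below uses the counts quoted for Figure 6a and Figure 6c; the function name and the neutral argument names are hypothetical, chosen so that the calculation does not depend on how the two error types are labeled.

```python
def accuracy(n_positive, n_negative, wrong_on_positive, wrong_on_negative):
    """Fraction of frames classified correctly, given per-class error counts."""
    total = n_positive + n_negative
    correct = total - wrong_on_positive - wrong_on_negative
    return correct / total


# Counts quoted in the text for an EAR threshold of 0.18.
video1_acc = accuracy(58, 1771, 58, 23)    # Figure 6a
video3_acc = accuracy(61, 2131, 35, 206)   # Figure 6c

print(f"video 1: {video1_acc:.4f}")
print(f"video 3: {video3_acc:.4f}")
```

The resulting values, roughly 0.956 and 0.890, are in line with the accuracies reported for these two videos at the same threshold.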

In our experiment, we analyzed the videos frame by frame and identified eye blinks every three frames, as shown in Figure 7. The results show only the beginning, middle, and end frames of each blink. For instance, Figure 7a illustrates that the 1st blink started in the 3rd frame, reached its middle in the 5th frame, and ended in the 7th frame. Figure 7b shows that the 2nd blink started in the 1555th frame, reached its middle in the 1556th frame, and ended in the 1557th frame. Figure 7c shows that the 3rd blink started in the 1563rd frame, reached its middle in the 1564th frame, and ended in the 1565th frame.

Figure 8 shows the frame-by-frame eye blink prediction results for video 3 with the EAR threshold of 0.18. The 1st blink started in the 69th frame, reached its middle in the 71st frame, and ended in the 72nd frame, as shown in Figure 8a. The 2nd blink started in the 227th frame, reached its middle in the 230th frame, and ended in the 232nd frame, as shown in Figure 8b. Figure 8c illustrates that the 3rd blink started in the 272nd frame, reached its middle in the 273rd frame, and ended in the 274th frame.

Table 5 and Table 6 present the specific results obtained for each video dataset, including the precision, recall, and F1-score measurements. In our tests, the best EAR threshold was 0.18, which yielded the highest accuracy and AUC values in all tests. As mentioned, 0.25 was the worst EAR threshold, since it achieved the lowest accuracy and AUC.

Researchers normally choose 0.2 or 0.3 as the EAR threshold, despite the fact that not everyone’s eye size is the same. It is therefore better to recalculate the EAR threshold used to decide whether the eye is closed or open in order to identify blinks more accurately. We achieved 96% accuracy for video 1, followed by 89% for video 3 and 86% for video 2, as shown in Table 5. Using the Talking Face and Eyeblink8 datasets, we obtained the same accuracy, 97%, with the 0.18 EAR threshold, as shown in Table 6.

4. Discussion

The EAR and error analysis of the video 1 dataset is presented in Figure 9. The EAR threshold for this dataset was set to 0.18. Assume that the optimum slope of the linear regression is m ≥ 0. All the data from video 1 were plotted in our experiment, and the result is m = 0. Infrequent blinking has only a small impact on the overall trend in the EAR measurements depicted in Figure 10a. The cumulative error is not informative for blinks because of its delayed effect. The errors, however, are closer to normally distributed data than the EAR values, as shown in Figure 10b.

The effectiveness of the proposed method for detecting eye blinks was evaluated by comparing the detected blinks with the ground-truth blinks in the three video datasets. Overall, the output samples may be divided into three categories. Samples that were correctly identified are referred to as true positives (TP), samples that were wrongly identified are referred to as false positives (FP), and samples that were correctly not recognized are referred to as true negatives (TN) [51,52]. Precision (P) and recall (R) [53,54] are given in Equations (3) and (4).

Another evaluation index, F1 [55], is given in Equation (5).
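Assuming Equations (3) to (5) follow the standard definitions, with FN denoting false negatives (positive samples that were missed), precision, recall, and F1 can be written as:

```latex
\begin{align}
P &= \frac{TP}{TP + FP} \tag{3} \\
R &= \frac{TP}{TP + FN} \tag{4} \\
F1 &= \frac{2 \times P \times R}{P + R} \tag{5}
\end{align}
```

F1 is the harmonic mean of precision and recall, so it is high only when both are high.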

If the driver does not blink for a long time and the EAR value decreases without any blinking in the initial period, the algorithm will not return an error. Our work calculates the errors as errors = calibration − linear and accumulates them with cumsum(). This function returns the cumulative sum of the elements along a given axis. A new array holding the result is returned unless out is specified, in which case a reference to out is returned. The result has the same size as the input, and the same shape as the input if the axis is not None or the input is a 1-D array. Cumulative errors are not very important for blink detection, as their effects are delayed. However, the plain errors can be exploited to detect anomalies.
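The error calculation can be sketched as follows, under the assumption that "calibration" denotes the measured per-frame EAR values and "linear" the values of a least-squares line fitted to them. The function names and the data are hypothetical, and `itertools.accumulate` is used here as a standard-library stand-in for a cumulative-sum routine.

```python
from itertools import accumulate


def fit_line(values):
    """Least-squares slope and intercept for values sampled at x = 0, 1, 2, ..."""
    n = len(values)
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def ear_errors(values):
    """Return the slope, the residual errors, and their cumulative sum."""
    slope, intercept = fit_line(values)
    linear = [slope * x + intercept for x in range(len(values))]
    errors = [y - fitted for y, fitted in zip(values, linear)]
    return slope, errors, list(accumulate(errors))


# Illustrative EAR series with a single dip at frame 3, not measured data.
ear = [0.30, 0.31, 0.29, 0.10, 0.30, 0.31, 0.29]
slope, errors, cumulative = ear_errors(ear)
print(round(slope, 4))
print(errors.index(min(errors)))
```

In this toy series the fitted slope is close to zero, and the most negative residual falls on the frame with the dip, which is the kind of anomaly the plain errors can expose. The cumulative sum, by contrast, returns to zero by the end of the series and reflects the dip only with a delay.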

Figure 10 describes the EAR and error analysis of the video 3 dataset with an EAR threshold of 0.18. The average EAR value for video 3 was 0.25, as shown in Figure 9b. This average value differs slightly from the average value in Figure 10a, which is close to 0.30. During our tests, we found that the optimal EAR threshold is 0.18, which yielded the highest accuracy and AUC values in all tests. The statistics are listed in Table 5 and Table 6.

Table 7 compares the proposed method with existing research. Our proposed method achieved peak average accuracies of 97.10% on the TalkingFace dataset, 97.00% on the Eyeblink8 dataset, and 96% on the Eye Video 1 dataset, improving on the performance of previous methods.