Abstract

Impulse noise removal is an important problem in image processing. Although many methods exist to remove impulse noise, there is still room for improvement. This paper proposes a new method for removing impulse noise that combines the nuclear norm with the detection $\ell_0$TV model, taking into account the low-rank structure commonly found in visual images. The nuclear norm preserves this structure, while the $\ell_0$TV criterion promotes sparsity in the gradient domain, effectively removing impulse noise while preserving edges and other vital features. To handle the resulting non-convex and non-smooth optimization problem, we reformulate it as a mathematical program with equilibrium constraints (MPEC). The transformed problem is then solved with the proximal alternating direction method of multipliers (PADMM). The convergence of the algorithm is proven under mild conditions. Numerical experiments in denoising and deblurring show that, for low-rank images, the proposed method outperforms $\ell_1$TV with detection, $\ell_0$TV and OGSTV.

MSC: 65K05; 65K15; 90C25

1. Introduction

Impulse noise removal is a critical issue in image processing, where the goal is to estimate the original image from a degraded observation. In general, the restoration problem for degraded images can be expressed as

$$b = Ku + \varepsilon, \tag{1}$$

where $\varepsilon$ denotes noise, $K$ is a linear operator, such as an identity operator, a convolution, or a wavelet transform, $b$ is the observed image, and $u$ is the unknown true image. For convenience, we stack a two-dimensional image of size $M \times N$ into a column vector $u \in \mathbb{R}^{n}$, where $n = MN$. During image acquisition and transmission, images can become blurred due to factors such as inaccurate focus, relative object movement, and degraded optical performance. Additionally, impulse noise may occur due to faulty memory locations, transmission errors, or defective sensor pixels. Impulse noise includes two common types: Salt-and-Pepper (SP) impulse noise and Random-valued (RV) impulse noise. Let the dynamic range of the image $u$ lie between $u_{\min}$ and $u_{\max}$, i.e., $u_i \in [u_{\min}, u_{\max}]$.

Salt-and-Pepper impulse noise: The i-th position of the observed image is denoted by $b_i$, where $i = 1, \dots, n$, and it satisfies

$$b_i = \begin{cases} u_{\min}, & \text{with probability } r/2, \\ u_{\max}, & \text{with probability } r/2, \\ (Ku)_i, & \text{with probability } 1 - r, \end{cases} \tag{2}$$

where $r$ denotes the level of the SP impulse noise.

Random-valued impulse noise: The i-th position of the observed image satisfies

$$b_i = \begin{cases} d_i, & \text{with probability } r, \\ (Ku)_i, & \text{with probability } 1 - r, \end{cases} \tag{3}$$

where $d_i$ follows a uniform distribution on $[u_{\min}, u_{\max}]$, and $r$ denotes the level of the RV impulse noise. The pixels corrupted by impulse noise are randomly distributed, and since the corrupted pixels are difficult to distinguish from their neighbouring pixels, it is challenging to remove the noise.

The most classical method for removing impulse noise is the median filter, which has inspired many derivative methods such as the adaptive centre weighted median filter (ACWMF) [1], the adaptive weighted mean filter (AWMF) [2], and the adaptive switching median filter with pre-detection based on evidential reasoning (ASMFER) [3]. With the development of artificial intelligence, deep learning-based methods have also been widely used for impulse noise removal. Convolutional neural networks (CNN) are a common type of network structure in deep learning and can be used for impulse noise removal tasks; see, for example, [4,5]. Zhang et al. [6] were the first to use a denoising CNN (DnCNN) for image denoising. The network consists of convolutions, rectified linear units (ReLU), batch normalization and residual learning. In addition, methods such as FFDNet [7] and NERNet [8] are also widely used for noise reduction. These methods can effectively remove impulse noise while preserving more detailed information in the image. However, they are typically sensitive to the noise level: at high noise levels the model may fail or over-smooth image details.
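As an illustration of the Salt-and-Pepper and Random-valued impulse noise models described above, the following Python/NumPy sketch corrupts an image in the pure denoising case, i.e., assuming $K$ is the identity. The function names, the default dynamic range $[0, 1]$ and the use of NumPy (rather than the MATLAB used in the experiments) are assumptions made for this example only.

```python
import numpy as np

def add_salt_and_pepper(img, level, u_min=0.0, u_max=1.0, rng=None):
    """Set each pixel to u_min or u_max with probability level/2 each."""
    rng = np.random.default_rng() if rng is None else rng
    noisy = np.array(img, dtype=float, copy=True)
    draw = rng.random(noisy.shape)
    noisy[draw < level / 2] = u_min                       # "pepper" pixels
    noisy[(draw >= level / 2) & (draw < level)] = u_max   # "salt" pixels
    return noisy

def add_random_valued(img, level, u_min=0.0, u_max=1.0, rng=None):
    """Replace each pixel, with probability `level`, by a uniform draw on [u_min, u_max]."""
    rng = np.random.default_rng() if rng is None else rng
    noisy = np.array(img, dtype=float, copy=True)
    mask = rng.random(noisy.shape) < level
    noisy[mask] = rng.uniform(u_min, u_max, size=int(mask.sum()))
    return noisy
```

For example, `add_salt_and_pepper(img, 0.3)` corrupts about 30% of the pixels, roughly half of them to each extreme value. For the deblurring setting, the same functions would be applied to the blurred image instead of the clean one.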

The variational approach is a significant method for image restoration. The variational model can be expressed as follows:

$$\min_{u} \; \Phi(Ku, b) + \lambda R(u), \tag{4}$$

where $\lambda > 0$ is the regularization parameter used to balance the regularization term $R(u)$ and the data fidelity term $\Phi(Ku, b)$. The data fidelity term is commonly expressed in different forms, such as the $\ell_1$-norm [9,10,11,12,13], the $\ell_2$-norm [14,15], and non-convex data fidelity terms [16,17,18,19], among others. The regularization term includes various forms, such as total variation regularization [20,21,22], total generalized variation [23,24,25], and total variation with overlapping group sparsity (OGSTV) [26]. The $\ell_2$ norm, commonly used as a data fidelity term, is sensitive to outliers and tends to perform poorly when they are present. Therefore, it is not suitable for removing impulse noise. Earlier studies [27,28] have shown that the $\ell_1$ norm is more robust to outliers than the $\ell_2$ norm. The $\ell_1$TV model is the most popular method for removing impulse noise from images. Its mathematical expression is as follows:

$$\min_{u} \; \|Ku - b\|_1 + \lambda \, \mathrm{TV}_p(u). \tag{5}$$

In particular, if the parameter $p = 1$, $\mathrm{TV}_p(u)$ denotes the anisotropic total variation. If the parameter $p = 2$, then $\mathrm{TV}_p(u)$ denotes the isotropic total variation. It can be expressed as:

$$\mathrm{TV}_p(u) = \sum_{i} \left( |(\nabla_x u)_i|^p + |(\nabla_y u)_i|^p \right)^{1/p}, \tag{6}$$

where the sum runs over all pixels $i$, and $\nabla_x$ and $\nabla_y$ denote the horizontal and vertical first-order differences, respectively.
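To make the distinction between the anisotropic and isotropic total variation concrete, the sketch below evaluates both for a small image using horizontal and vertical first-order forward differences. The zero boundary handling (last column and last row of the differences set to zero) and the function names are assumptions of this example; the paper does not state which boundary condition is used.

```python
import numpy as np

def forward_differences(u):
    """Horizontal (dx) and vertical (dy) forward differences, zero at the far boundary."""
    u = np.asarray(u, dtype=float)
    dx = np.zeros_like(u)
    dy = np.zeros_like(u)
    dx[:, :-1] = u[:, 1:] - u[:, :-1]
    dy[:-1, :] = u[1:, :] - u[:-1, :]
    return dx, dy

def total_variation(u, p=1):
    """Anisotropic (p=1) or isotropic (p=2) discrete total variation."""
    dx, dy = forward_differences(u)
    if p == 1:
        return float(np.sum(np.abs(dx) + np.abs(dy)))
    if p == 2:
        return float(np.sum(np.sqrt(dx ** 2 + dy ** 2)))
    raise ValueError("p must be 1 or 2")
```

The two variants differ only where a pixel has non-zero differences in both directions: for the image `[[1, 0], [0, 0]]` the anisotropic value is 2, while the isotropic value is $\sqrt{2}$.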

Although the $\ell_1$TV model (5) is widely used for impulse noise removal, it does not take into account whether the pixels themselves are affected by the noise. The performance of $\ell_1$TV [9,12] is usually poor when the noise level is high. To address this issue, Ma et al. [29] proposed one-phase and two-phase models that incorporate box constraints, and solved them using a primal-dual Chambolle–Pock algorithm. Since the $\ell_0$-norm provides an exact measure of sparsity, it avoids the biased estimates that may arise from using the $\ell_1$-norm. Based on this, Yuan and Ghanem [30] proposed a detection $\ell_0$TV model with box constraints, which they solved using the proximal alternating direction method of multipliers (PADMM). Kang et al. [31] proposed a model combining a sparse representation prior based on a learned dictionary with TV, and they used a variable splitting scheme followed by a penalty method and an alternating minimization algorithm to solve the model. More recently, Yin et al. [32] proposed a detection $\ell_0$-OGSTV model, replacing the TV regularization term in $\ell_0$TV with the OGSTV regularization term. They solved this model by using a mathematical program with equilibrium constraints and a majorization-minimization method, as well as PADMM.

In recent years, low-rank prior has been widely used for image restoration. For example, Ji et al. [33] studied video denoising based on nuclear norm minimization. Gu et al. [34] proposed an image denoising algorithm based on weighted nuclear norm minimization (WNNM), which achieved state-of-the-art results. Meanwhile, low-rank prior has also been applied to various image restoration tasks, such as image super-resolution [35], dynamic MRI [36], functional MRI [37], etc. Recently, low-rank prior methods have also been widely applied and developed in deep learning-based image denoising. See, for example, [38,39,40]. These successful works show that low-rank minimization-based methods are effective in preserving the low-rank prior information of images. Inspired by the above works, in this paper, we propose a new optimization model that combines the detection TV model with nuclear norm minimization for impulse noise image restoration. The new model is as follows:

where , indicates the presence of impulse noise interference at the i-th position, while indicates the absence of impulse noise interference at the i-th position. As the proposed model involves the $\ell_0$-norm, a TV regularization term, and the nuclear norm, we first transform the non-convex optimization problem using a mathematical program with equilibrium constraints (MPEC), and then solve it using PADMM.

We summarise the contributions of this paper as follows: (i) We propose a new model for image deblurring under impulse noise and use a combination of MPEC and PADMM to solve it. (ii) We prove the convergence of the algorithm under certain conditions. (iii) Our numerical experiments demonstrate that the low-rank prior is effective in preserving the low-rank structure of the image. (iv) The proposed model outperforms the compared models in terms of PSNR and visual quality when recovering low-rank images.

The remaining sections of this paper are organized as follows: Section 2 briefly presents some preliminaries. In Section 3, we provide the derivation of the algorithm used to solve the proposed optimization problem, along with the proof of its convergence. Section 4 presents the experimental results and a discussion of the findings. Finally, in Section 5, we conclude the paper.

2. Preliminaries

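In this section, we introduce some of the symbols, concepts and lemmas used throughout this paper. Let X be a finite-dimensional vector space equipped with the inner product $\langle \cdot, \cdot \rangle$ and the associated norm $\|\cdot\| = \sqrt{\langle \cdot, \cdot \rangle}$.

Let C be a non-empty closed convex subset of X. The indicator function of C is defined as

$$\delta_C(x) = \begin{cases} 0, & x \in C, \\ +\infty, & x \notin C. \end{cases}$$

Let $f: X \to (-\infty, +\infty]$ be a proper closed convex function, whose effective domain is $\mathrm{dom}\, f = \{x \in X : f(x) < +\infty\}$. The proximity operator of f with index $\gamma > 0$ is defined by

$$\mathrm{prox}_{\gamma f}(x) = \arg\min_{y \in X} \left\{ f(y) + \frac{1}{2\gamma} \|y - x\|^2 \right\}.$$

For a convex function, the proximity operator can be characterized through the sub-differential: $y = \mathrm{prox}_{\gamma f}(x)$ if and only if

$$x - y \in \gamma \, \partial f(y),$$

where $\partial f(x)$ is the sub-differential of f at x, defined by

$$\partial f(x) = \{ g \in X : f(z) \ge f(x) + \langle g, z - x \rangle \ \text{for all } z \in X \}.$$

The proximity operator and the sub-differential operator are related as follows:

$$\mathrm{prox}_{\gamma f} = (I + \gamma \, \partial f)^{-1};$$

the mapping $(I + \gamma \, \partial f)^{-1}$ is called the resolvent of the operator $\partial f$ with parameter $\gamma$.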
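Two standard instances of the proximity operator may help fix ideas; the sketch below is illustrative only and its function names are hypothetical. For the indicator function of the box $[0, 1]^n$ the proximity operator is the orthogonal projection (clipping), whatever the index; for $\gamma \|\cdot\|_1$ it is componentwise soft-thresholding. The helper `in_l1_subdifferential` checks the well-known characterization that $y$ is the proximal point of $x$ exactly when $(x - y)/\gamma$ belongs to the sub-differential of the function at $y$.

```python
import numpy as np

def prox_box_indicator(x, lo=0.0, hi=1.0):
    """Prox of the indicator of [lo, hi]^n: orthogonal projection onto the box."""
    return np.clip(x, lo, hi)

def prox_l1(x, gamma):
    """Prox of gamma * ||.||_1: componentwise soft-thresholding."""
    return np.sign(x) * np.maximum(np.abs(x) - gamma, 0.0)

def in_l1_subdifferential(g, y, tol=1e-12):
    """True if g lies in the sub-differential of ||.||_1 at y."""
    nz = y != 0
    on_support = np.all(np.abs(g[nz] - np.sign(y[nz])) <= tol)   # g_i = sign(y_i)
    off_support = np.all(np.abs(g[~nz]) <= 1.0 + tol)            # |g_i| <= 1
    return bool(on_support and off_support)
```

With `x = np.array([-2.0, -0.3, 0.0, 0.4, 1.5])` and `gamma = 0.5`, `prox_l1` zeroes the three small entries and shrinks the other two towards zero by 0.5, and `(x - y) / gamma` passes the sub-differential check.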

The nuclear norm $\|Z\|_*$, i.e., the sum of the singular values of a matrix Z, is the convex envelope of the rank function on the unit ball of the spectral norm, and it has been widely used for problems involving low-rank matrices.

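Lemma 1.

Let $Y \in \mathbb{R}^{M \times N}$. The proximity operator of $\|\cdot\|_*$ with index $\gamma > 0$ is

$$\mathrm{prox}_{\gamma \|\cdot\|_*}(Y) = U \, \mathcal{S}_{\gamma}(\Sigma) \, V^{\top},$$

where $Y = U \Sigma V^{\top}$ is a singular value decomposition of Y, and $\mathcal{S}_{\gamma}(\Sigma) = \mathrm{diag}(\max\{\sigma_i - \gamma, 0\})$ is the soft-thresholding operator applied to the singular values $\sigma_i$.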
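The singular value thresholding step of Lemma 1 can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' implementation; the function name `prox_nuclear` and the parameter name `tau` (the threshold, i.e., the index of the proximity operator) are chosen for this example.

```python
import numpy as np

def prox_nuclear(Y, tau):
    """Proximity operator of tau * (nuclear norm): soft-threshold the singular values."""
    U, s, Vt = np.linalg.svd(Y, full_matrices=False)
    s_shrunk = np.maximum(s - tau, 0.0)
    # Scale the columns of U by the shrunken singular values, then recombine.
    return (U * s_shrunk) @ Vt
```

Singular values below the threshold are set to zero, so the output has rank at most that of the input. The cost is dominated by the SVD, which is why this step becomes expensive for large matrices.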

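Lemma 2 ([30]).

For any $x \in \mathbb{R}^n$, the following formula holds

$$\|x\|_0 = \min_{0 \le v \le 1} \; \langle \mathbf{1}, \mathbf{1} - v \rangle \quad \text{s.t.} \quad |x| \odot v = 0, \tag{9}$$

where $v^* = \mathbf{1} - \mathrm{sign}(|x|)$ is the unique optimal solution of the problem in (9).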

3. Main Algorithm

In this section, we mainly solve Problem (7). As this problem is non-convex and non-smooth, we rely on Lemma 2 and the proximal ADMM to solve it. Let , and . Then, we can reformulate (7) as follows:

where , , and . The augmented Lagrangian function of (10) is

where are the Lagrangian multipliers and are the penalty parameters. The proximal ADMM for solving Problem (10) is as follows,

Next, we present how to solve the sub-problems of (11).

For the sub-problem , we add a proximal term , where . Therefore,

According to the optimality condition, we have,

After simple calculation, we obtain

where , and denotes the orthogonal projection onto the closed convex set C.

For the sub-problem , we have

Let . According to the first-order optimality condition, we have

Thus,

For the sub-problem , we have

For the sub-problem , we have

For , we have

For , we have

where .

For the sub-problem , we have

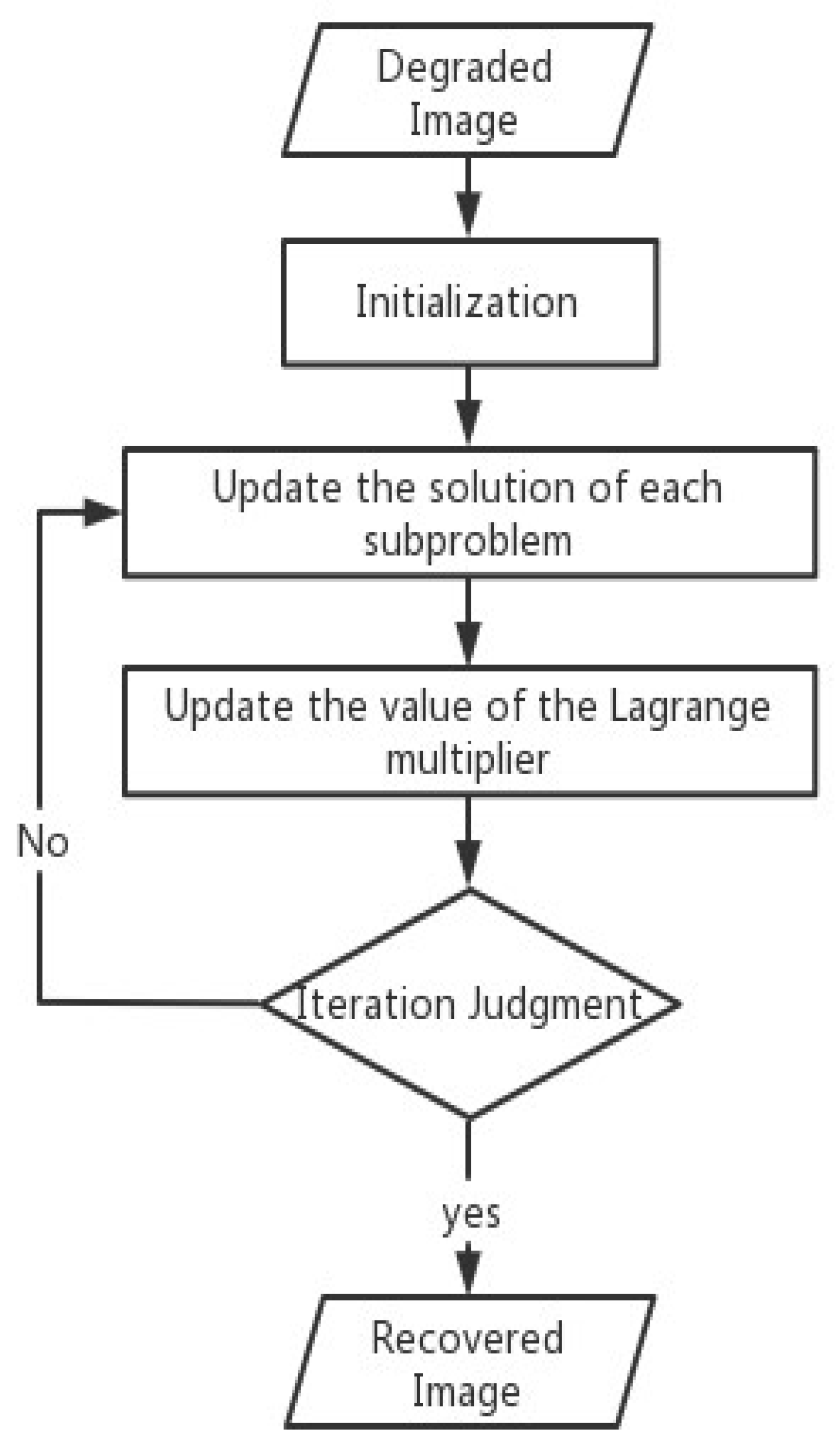

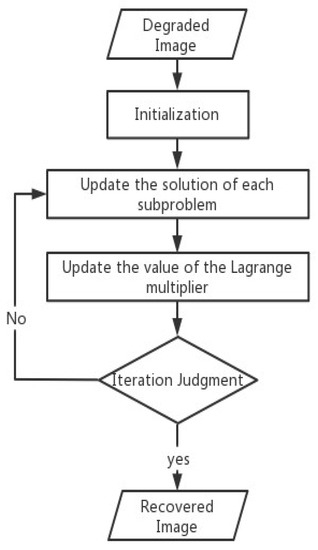

We summarize the complete procedure in Algorithm 1 and provide its flowchart in Figure 1.

Figure 1.

Flowchart of Algorithm 1.

In the following, we prove the convergence of Algorithm 1. The proof follows from the main idea of Theorem 1 of [30].

| Algorithm 1 Proximal alternating direction method of multipliers (PADMM) for solving Problem (10). |

| Input: For arbitrary , and . Output: . |

Theorem 1.

Let , , and be the sequence generated by Algorithm 1. If the sequence is bounded and satisfies , then any accumulation point in the sequence is the KKT point of Problem (10).

Proof.

According to the augmented Lagrangian function, the KKT condition at can be derived:

First, let us prove that converges, that is, when , we have . Its augmented Lagrange function can be written as

Since is bounded, then is bounded. Let

where is the value of the previous iteration. is strongly convex with respect to u and v. Therefore, the second-order growth condition is satisfied for u and v, that is, there is such that

and similarly for , there is also a second-order growth condition, that is, the existence of such that

On the other hand, according to the update rule for the Lagrange multipliers,

Let , we have

Therefore,

As a result, , i.e., , which implies and hold. For , its KKT conditions at are

Combining , we obtain

which is the same as the KKT condition derived from Problem (10). Therefore, is the KKT point of the problem. □

4. Numerical Experiments

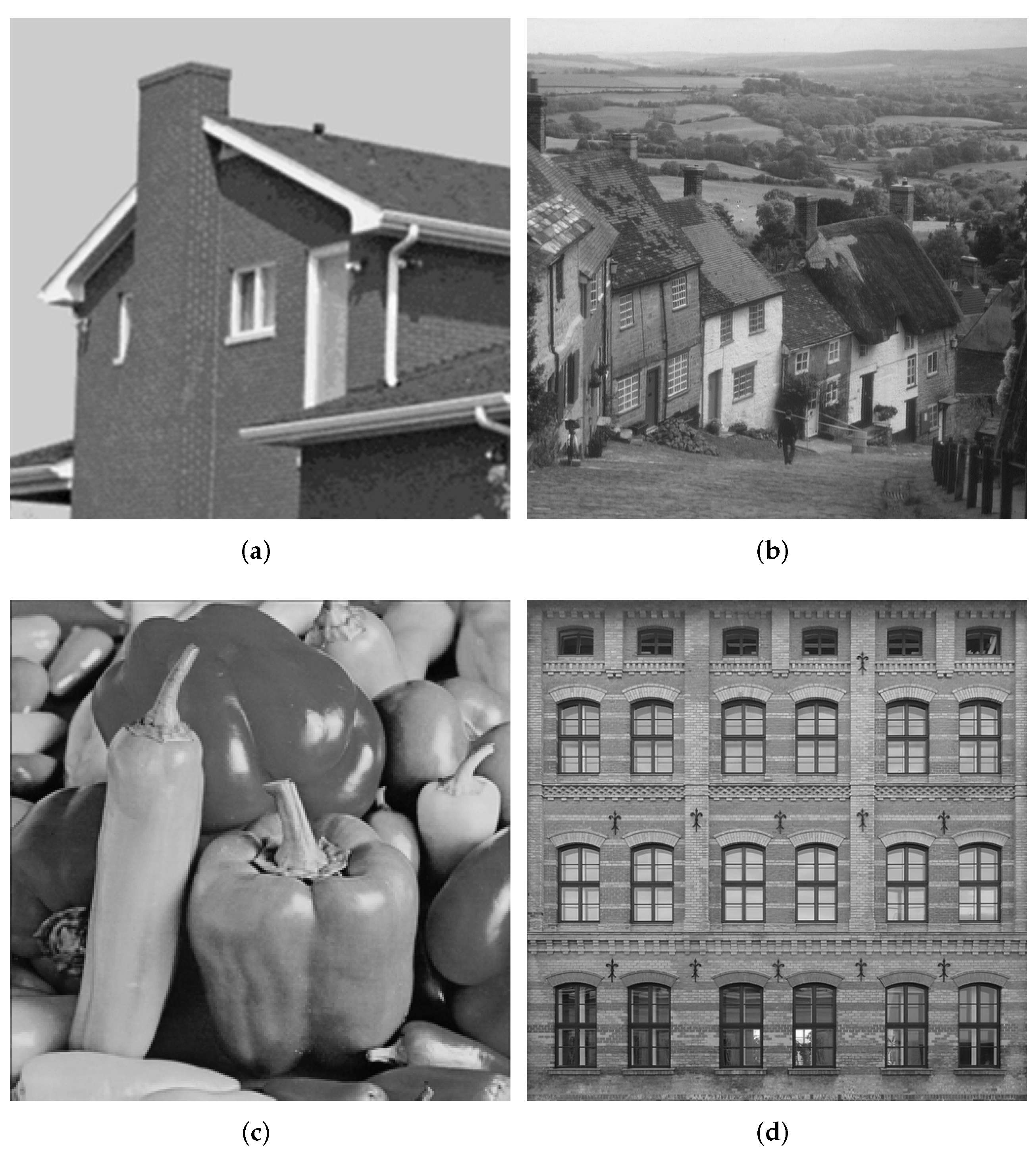

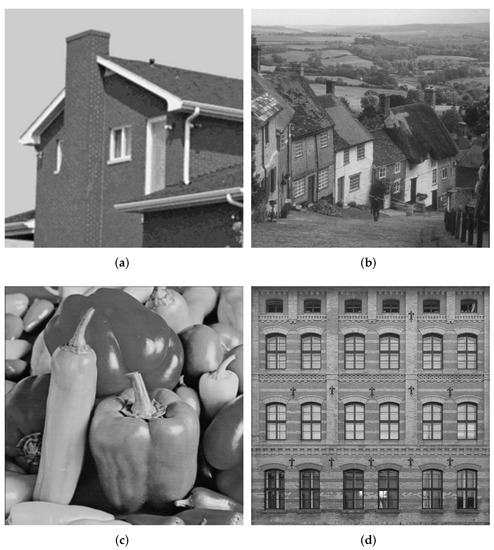

In this section, the performance of the proposed method is verified by numerical experiments. The method is compared with $\ell_1$TV [29], $\ell_0$TV [30] and OGSTV [32]. Here, $\ell_1$TV refers to the model with detection. All experiments were performed in MATLAB R2018b on 64-bit Windows 11, running on an Intel(R) Core(TM) i5-1035G1 CPU with 8 GB of memory. We consider four natural test images, House, Mountain, Peppers, and Building, which are shown in Figure 2.

Figure 2.

Test images. (a) House; (b) Mountain; (c) Peppers; (d) Building.

4.1. Experiment Setting

For natural image denoising, deblurring and low-rank image deblurring tests, we use the following method to generate simulation images.

(1) Natural image denoising. To generate noisy images, we add SP impulse noise at levels of 10, 30, 50, 70 and 90% to the original image.

(2) Natural image deblurring. In order to generate noisy and blurred images, we use the following MATLAB function to generate a blurring kernel of radius .

Then, SP impulse noise is added at levels of 10, 30, 50, 70 and 90%.

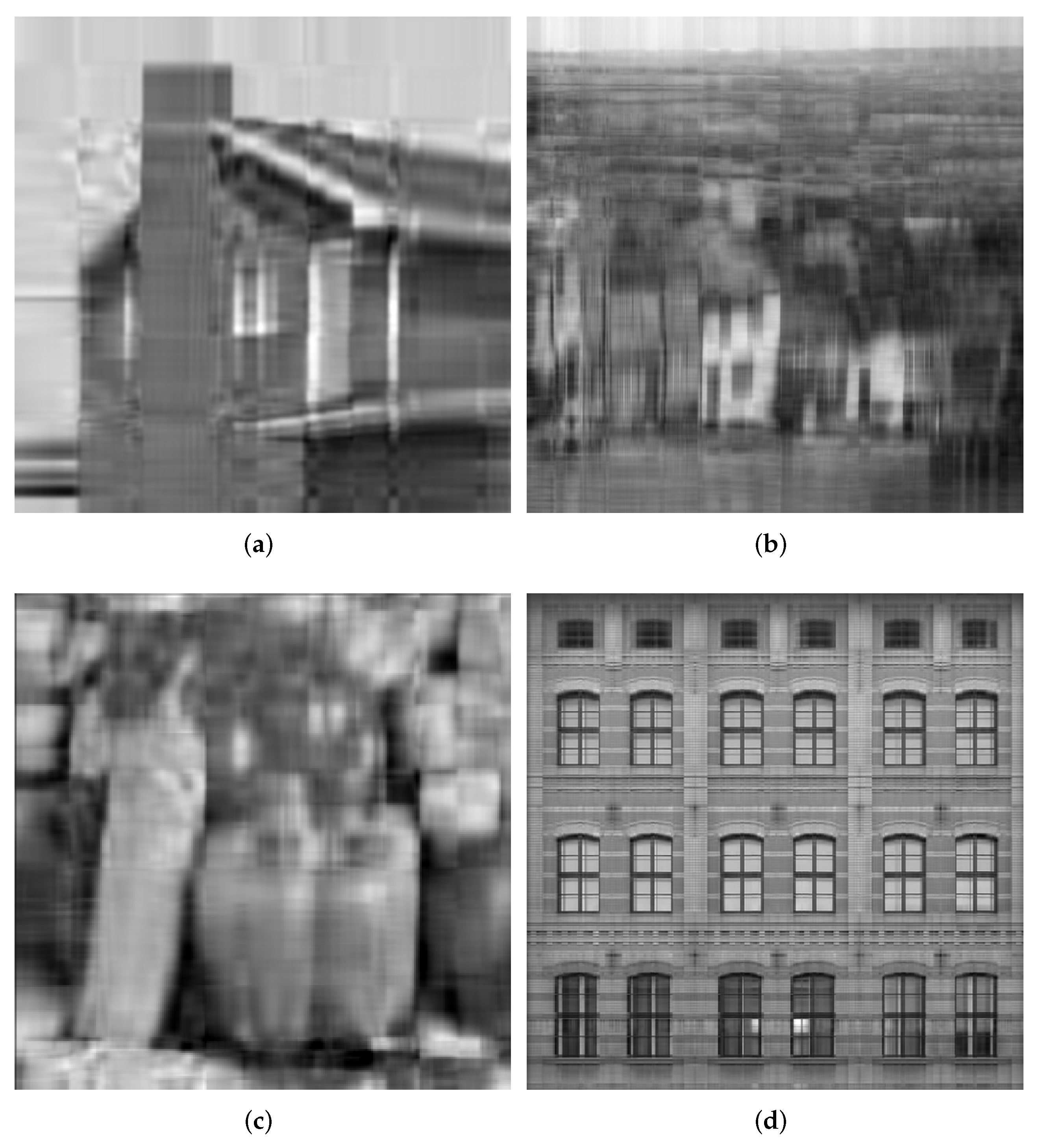

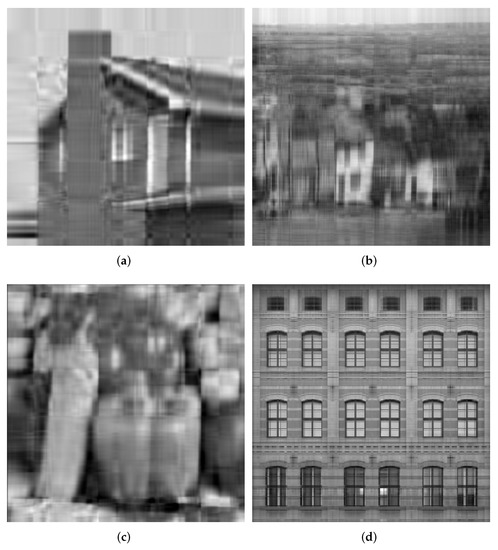

(3) Low-rank image deblurring. To obtain noisy and blurred low-rank images, we use the singular value decomposition (SVD) to reduce the rank of each test image to 10, as shown in Figure 3. Then, the procedure described in (2) is applied to the images in Figure 3.

Figure 3.

Low-rank images. (a) House; (b) Mountain; (c) Peppers; (d) Building.
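The rank reduction described in item (3) can be sketched with a truncated SVD, which keeps only the largest singular values. The sketch below is written in Python with NumPy rather than the MATLAB used for the experiments, and the function name `truncate_rank` is hypothetical. Whether the rank-reduced images were subsequently clipped or rescaled to the original dynamic range is not stated in the text, so no such step is included here.

```python
import numpy as np

def truncate_rank(img, rank=10):
    """Best rank-`rank` approximation of a 2-D image in the Frobenius norm."""
    U, s, Vt = np.linalg.svd(np.asarray(img, dtype=float), full_matrices=False)
    r = min(rank, s.size)
    # Keep the r leading singular triplets and discard the rest.
    return (U[:, :r] * s[:r]) @ Vt[:r, :]
```

By the Eckart–Young theorem, the approximation error in the Frobenius norm equals the root of the sum of the squared discarded singular values, which gives a simple way to check the result.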

In the numerical experiments, we set , , , and in the individual denoising and deblurring experiments. To obtain good results, we always adjust the regularization parameters and in each test, where and . For the regularization parameter in , we sweep over. For the regularization parameter in , we sweep over. We use the same stopping criterion as [30], defined as and and and . TV, TV and OGSTV also adopt a similar stopping criterion.

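To evaluate the effectiveness of these models in recovering images, we use the peak signal-to-noise ratio (PSNR) and the structural similarity (SSIM) index, defined as follows:

$$\mathrm{PSNR} = 10 \log_{10} \frac{n P^2}{\|u - \hat{u}\|_2^2}$$

and

$$\mathrm{SSIM} = \frac{(2 \mu_u \mu_{\hat{u}} + c_1)(2 \sigma_{u \hat{u}} + c_2)}{(\mu_u^2 + \mu_{\hat{u}}^2 + c_1)(\sigma_u^2 + \sigma_{\hat{u}}^2 + c_2)},$$

where P is the maximum peak value of the original image u, $\hat{u}$ is the restored image, n is the number of pixels, $c_1$ and $c_2$ are small constants, $\mu_u$ and $\mu_{\hat{u}}$ are the mean values of u and $\hat{u}$, respectively, $\sigma_u^2$ and $\sigma_{\hat{u}}^2$ are the variances of u and $\hat{u}$, and $\sigma_{u \hat{u}}$ is the covariance of u and $\hat{u}$.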
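The two quality measures can be computed as in the following sketch. It evaluates the SSIM expression once over the whole image, whereas common implementations average the same expression over local windows, so the values will generally differ from those of standard toolboxes. The constants $c_1 = (0.01 P)^2$ and $c_2 = (0.03 P)^2$ are the conventional defaults and are an assumption here, as are the function names.

```python
import numpy as np

def psnr(u, u_hat, peak=1.0):
    """Peak signal-to-noise ratio in dB; `peak` is the maximum value P of the clean image."""
    u, u_hat = np.asarray(u, dtype=float), np.asarray(u_hat, dtype=float)
    mse = np.mean((u - u_hat) ** 2)
    if mse == 0:
        return float("inf")
    return float(10.0 * np.log10(peak ** 2 / mse))

def global_ssim(u, u_hat, peak=1.0, k1=0.01, k2=0.03):
    """SSIM formula evaluated over the whole image as a single window."""
    u, u_hat = np.asarray(u, dtype=float), np.asarray(u_hat, dtype=float)
    c1, c2 = (k1 * peak) ** 2, (k2 * peak) ** 2
    mu_u, mu_v = u.mean(), u_hat.mean()
    var_u, var_v = u.var(), u_hat.var()
    cov = np.mean((u - mu_u) * (u_hat - mu_v))
    num = (2 * mu_u * mu_v + c1) * (2 * cov + c2)
    den = (mu_u ** 2 + mu_v ** 2 + c1) * (var_u + var_v + c2)
    return float(num / den)
```

As a sanity check, a restored image that differs from the original by a constant offset of 0.1 (with peak value 1) has a mean squared error of 0.01 and hence a PSNR of 20 dB.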

4.2. Natural Images Denoising

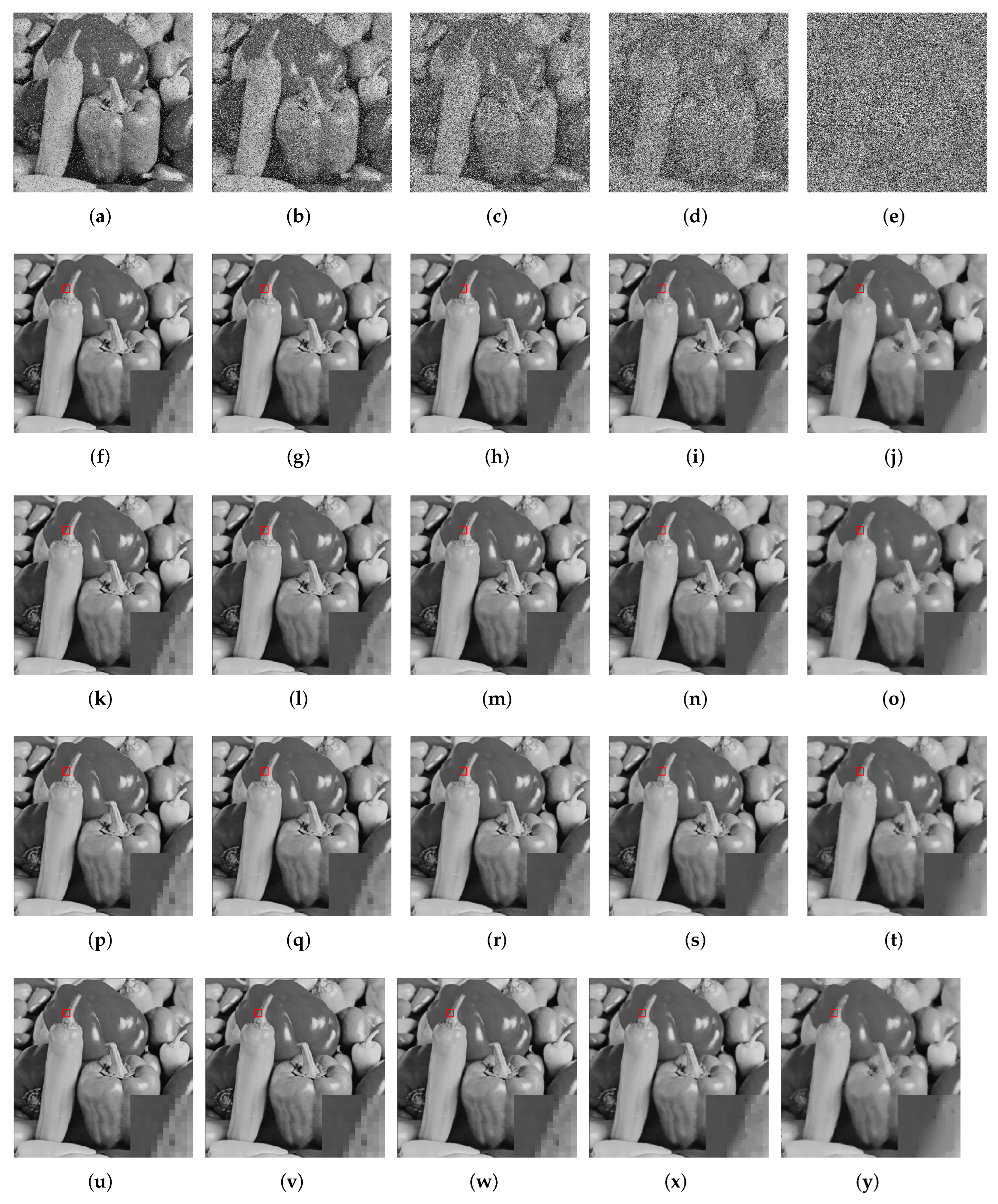

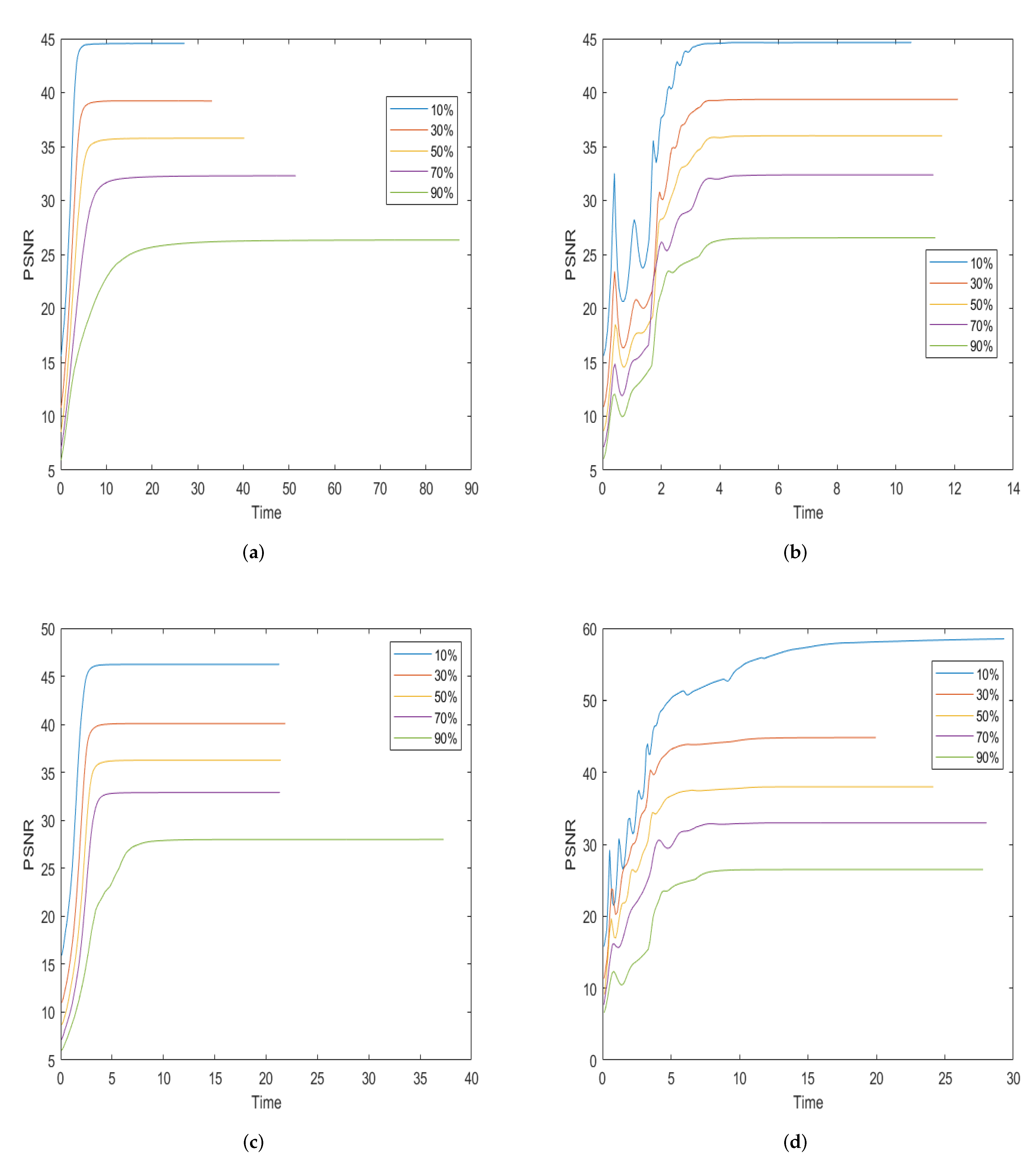

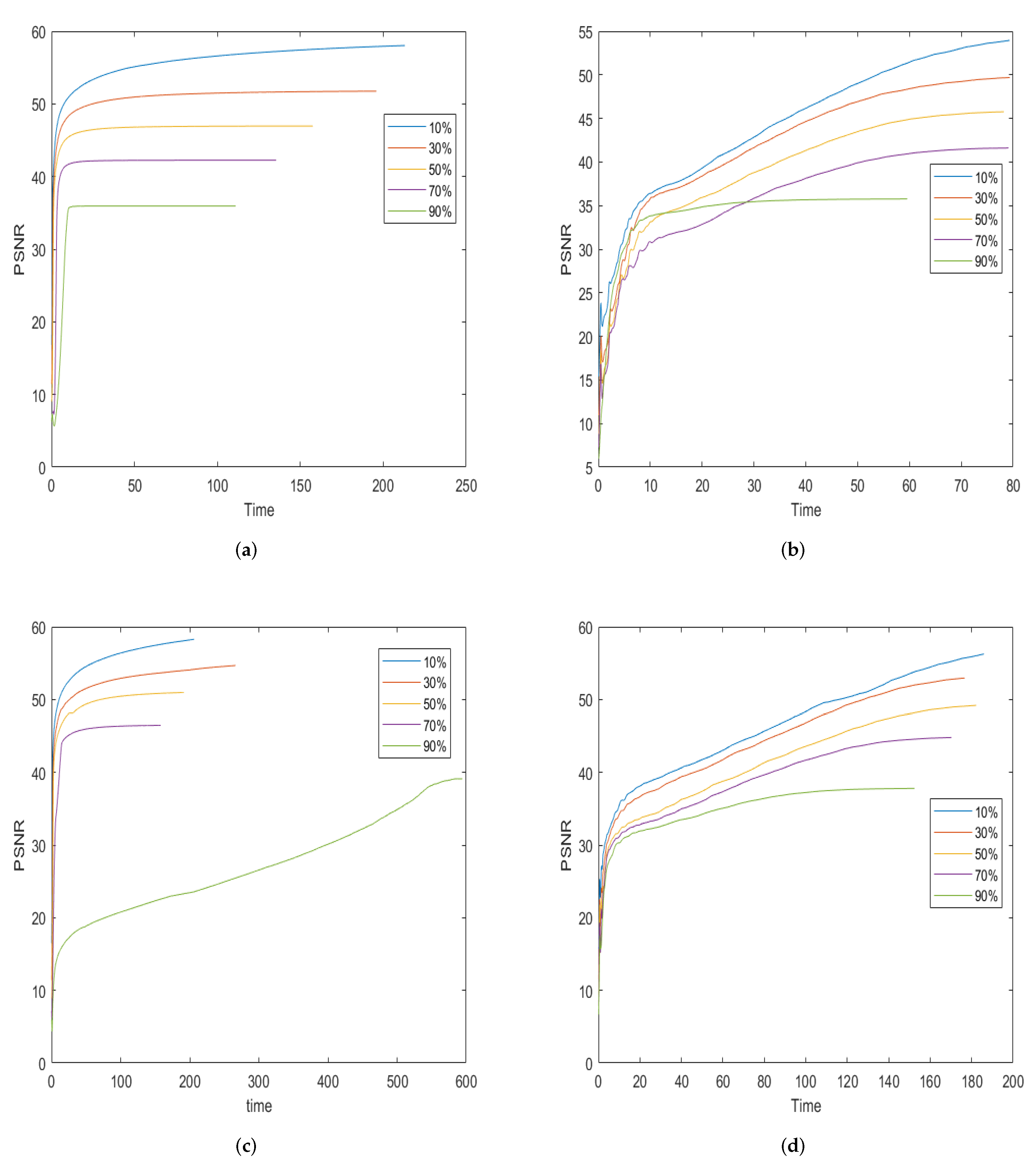

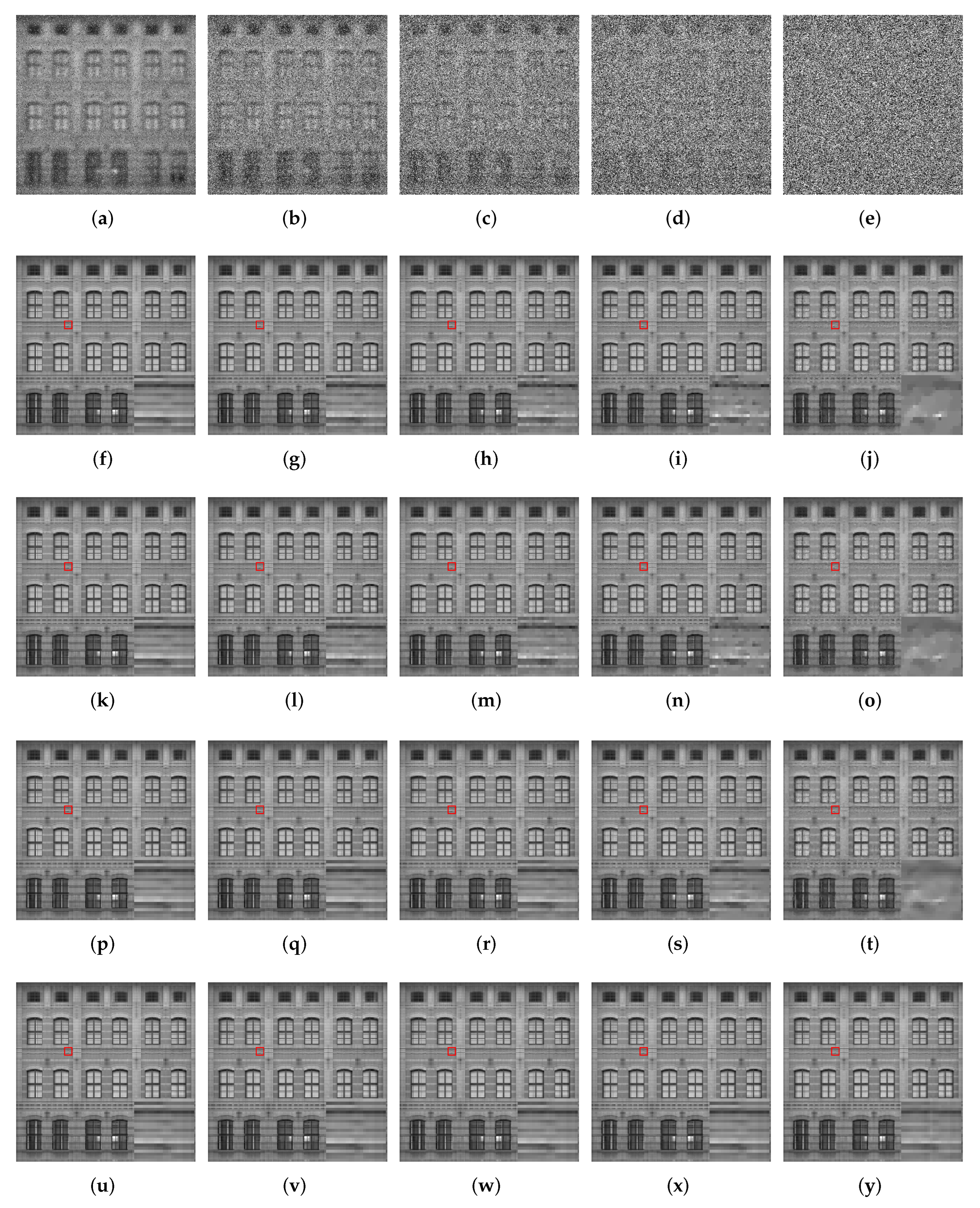

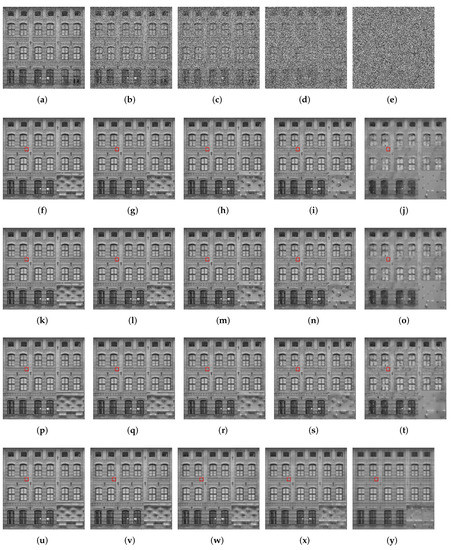

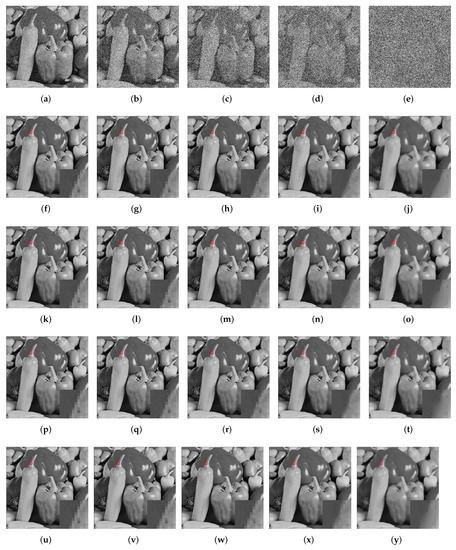

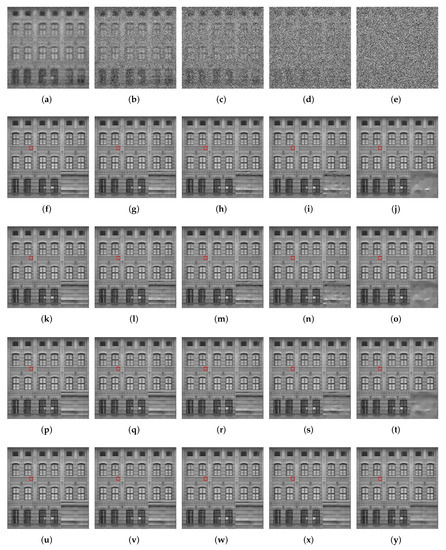

In this subsection, we compare the performance of our model with $\ell_1$TV, $\ell_0$TV, and OGSTV for pure denoising. Table 1 shows the results of the different recovery methods. As can be seen from the table, in most cases the proposed model outperforms $\ell_1$TV and $\ell_0$TV. This is because the combination of TV and the nuclear norm can better handle the texture and edge information in the image while removing noise, whereas the $\ell_1$TV and $\ell_0$TV methods may have difficulty with texture and edge information, resulting in artefacts and blurring. In particular, for the Building image, which has a low-rank structure, our proposed model also outperforms OGSTV in PSNR, and its PSNR exceeds those of the two TV baselines by 1–2 dB and 3–4 dB, mainly because we include the nuclear norm as a penalty term. However, for the House and Mountain images, the OGSTV model outperforms the proposed model. Figure 4 and Figure 5 show a visual comparison of the different methods for recovering the corrupted Building and Peppers images at different noise levels. It can be observed that the proposed model recovers the images better than the other models. Specifically, at high SP noise levels, the proposed model retains clean edges better, which is more apparent in the local close-ups. In addition, Figure 6 shows the variation of PSNR over time for the different methods when recovering the Peppers image at different noise levels. It shows that our proposed method takes approximately the same amount of time to denoise at different noise levels, while OGSTV and one of the TV baselines take longer when the noise level is very high.

Table 1.

The PSNR (dB), SSIM, and number of iterations (Iter) of different methods for images corrupted by SP noise. The bold data represents the best PSNR, SSIM, and the least number of iterations, respectively.

Figure 4.

Comparison of restored images from different methods for images degraded by SP noise. First row: corrupted images. Second row: images restored by $\ell_1$TV. Third row: images restored by $\ell_0$TV. Fourth row: images restored by OGSTV. Fifth row: images restored by the proposed method. Each of the panels (a)–(y) is labelled with the noise level and PSNR (dB) of the image.

Figure 5.

Comparison of restored images from different methods for images degraded by SP noise. First row: corrupted images. Second row: images restored by $\ell_1$TV. Third row: images restored by $\ell_0$TV. Fourth row: images restored by OGSTV. Fifth row: images restored by the proposed method. Each of the panels (a)–(y) is labelled with the noise level and PSNR (dB) of the image.

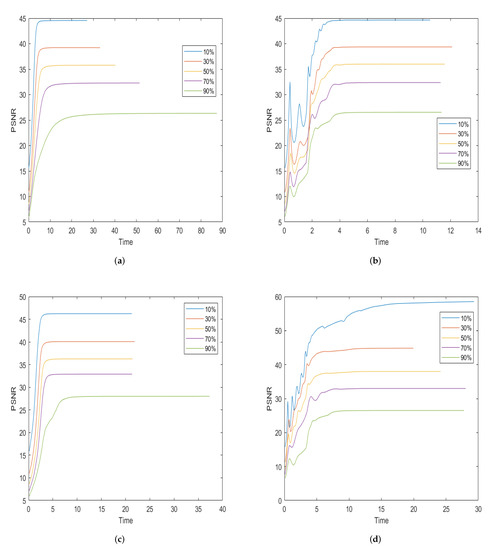

Figure 6.

PSNR versus CPU time (in seconds) for the Peppers test image with the different methods. (a) $\ell_1$TV; (b) $\ell_0$TV; (c) OGSTV; (d) Ours.

4.3. Natural Images Deblurring

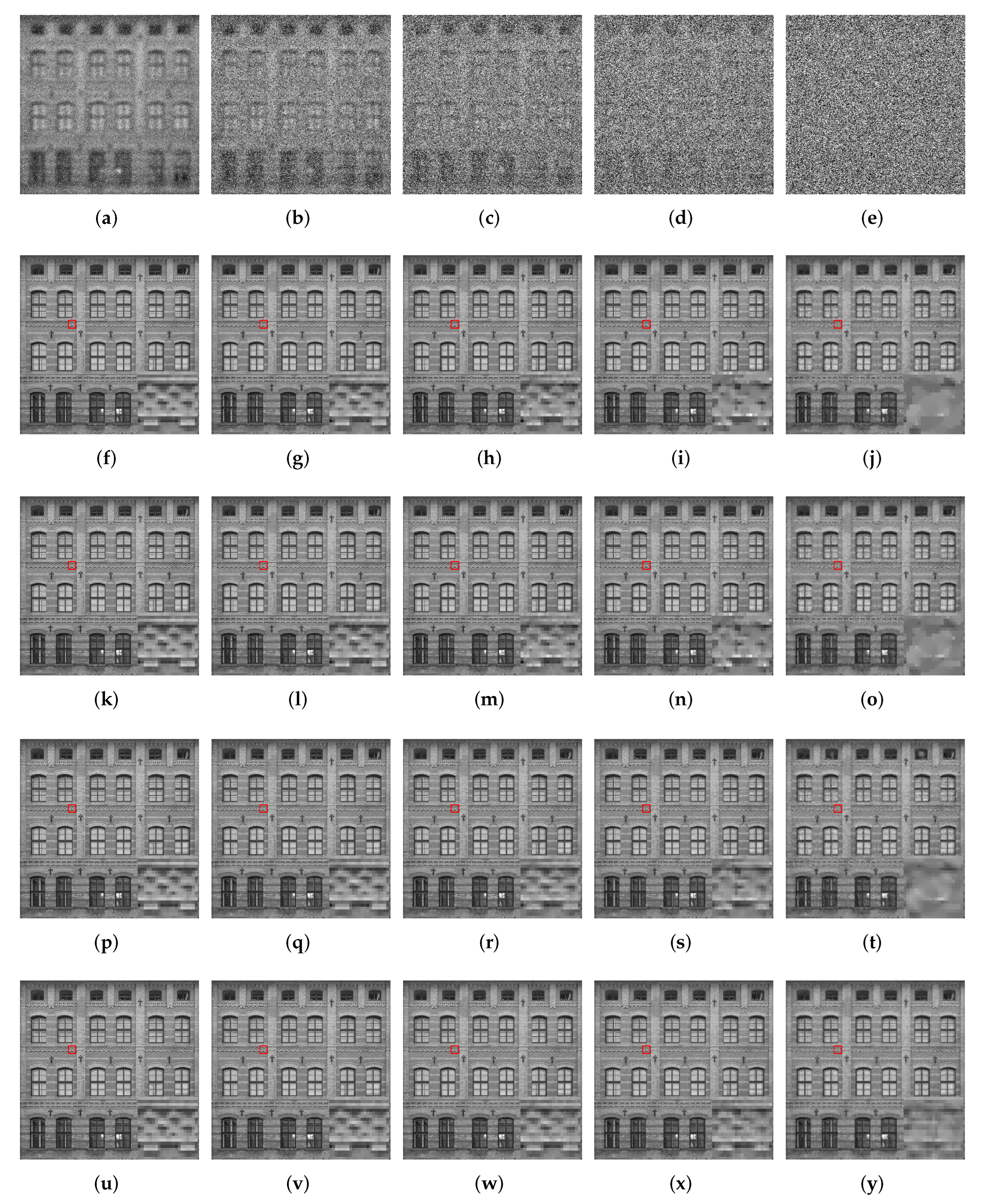

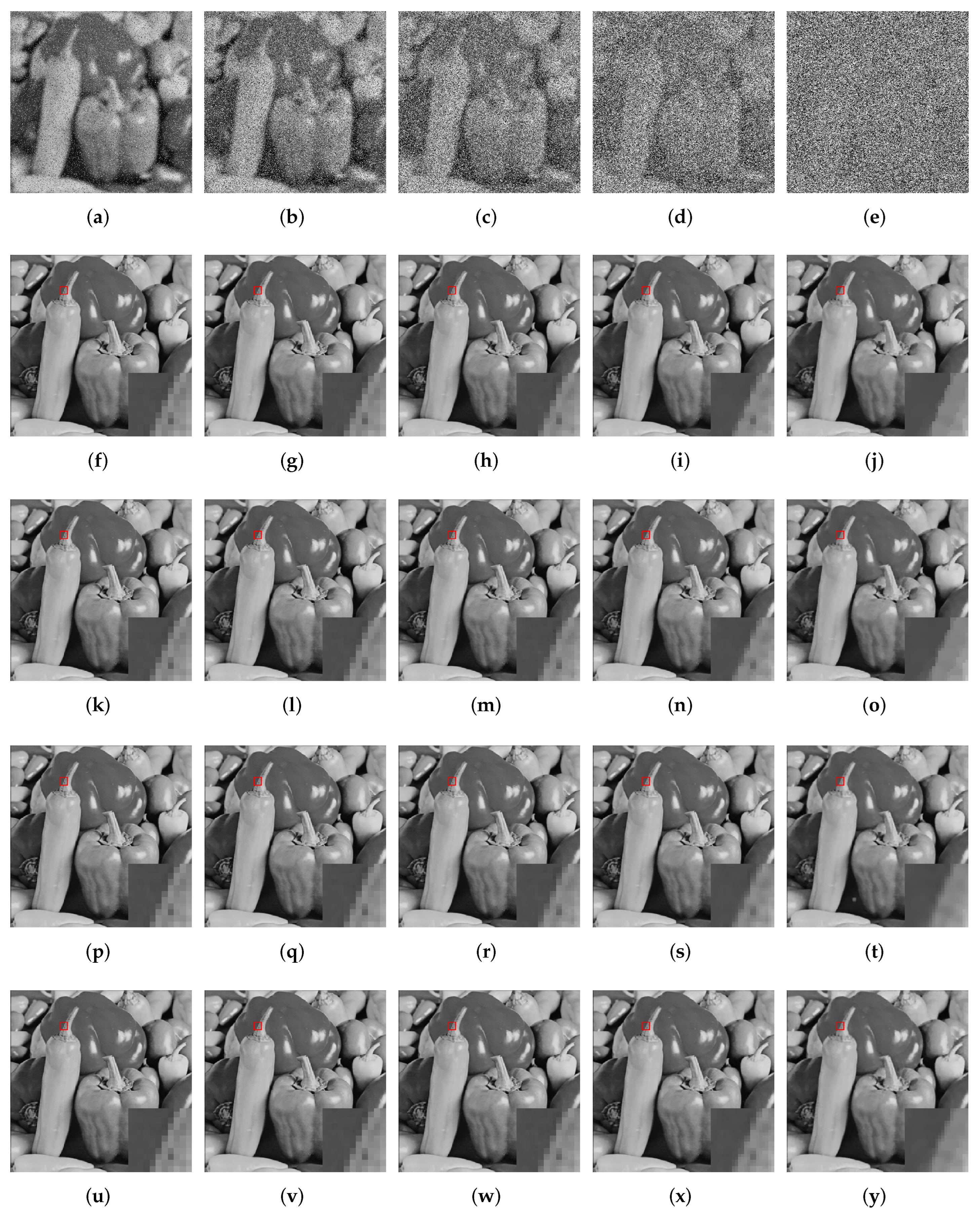

In this subsection, we compare the performance of our model with $\ell_1$TV, $\ell_0$TV, and OGSTV for deblurring with SP noise. Table 2 shows the recovery results of the different methods. In most situations, the proposed model outperforms $\ell_1$TV and $\ell_0$TV in terms of PSNR and SSIM. For the House and Mountain images, the OGSTV model outperforms the proposed model. This is attributed to OGSTV using external norm, which can comprehensively consider the structural information of the image, thereby achieving a better deblurring effect. However, for the Building image, which has a low-rank structure, the proposed model also outperforms OGSTV. This indicates that our method performs well in deblurring low-rank images or images with low-rank structures. Figure 7 and Figure 8 show a visual comparison of the different methods for recovering the Building and Peppers images under blurring and different noise levels. It can be observed that the proposed model is better than the other methods in terms of PSNR and visual quality, retaining clear edges and producing sharper images, especially at higher noise levels. In addition, Figure 9 shows the variation in PSNR over time for the different methods when recovering the House image at different noise levels. Figure 9 shows that one TV baseline requires the least time for image deblurring, while the time required by the other TV baseline and by our method decreases as the noise level increases. In contrast, the OGSTV method takes longer, especially when the noise level reaches 90%, because it requires both inner and outer iterations.

Table 2.

The PSNR (dB), SSIM, and number of iterations (Iter) of different methods for images corrupted by blurring with SP noise. The bold data represents the best PSNR, SSIM, and the least number of iterations, respectively.

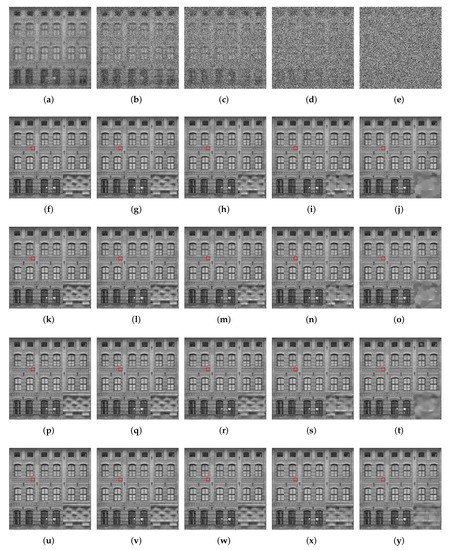

Figure 7.

Comparison of restored images from different methods for images degraded by SP noise and a blur kernel. First row: corrupted images. Second row: images restored by $\ell_1$TV. Third row: images restored by $\ell_0$TV. Fourth row: images restored by OGSTV. Fifth row: images restored by the proposed method. Each of the panels (a)–(y) is labelled with the noise level and PSNR (dB) of the image.

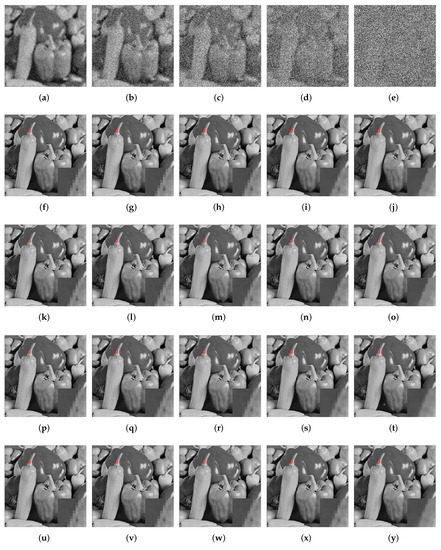

Figure 8.

Comparison of restored images from different methods for images degraded by SP noise and a blur kernel. First row: corrupted images. Second row: images restored by $\ell_1$TV. Third row: images restored by $\ell_0$TV. Fourth row: images restored by OGSTV. Fifth row: images restored by the proposed method. Each of the panels (a)–(y) is labelled with the noise level and PSNR (dB) of the image.

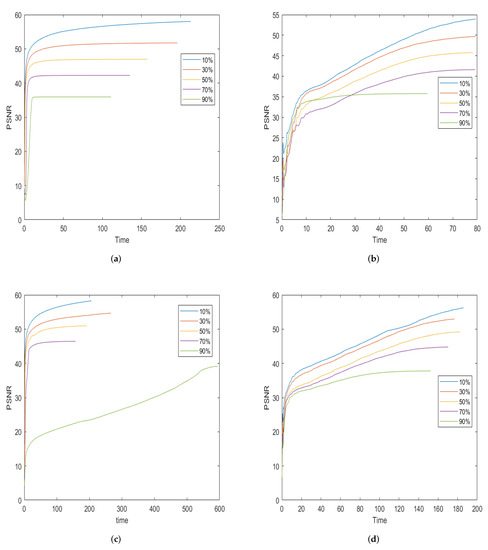

Figure 9.

PSNR versus CPU time (in seconds) for the House test image with the different methods. (a) $\ell_1$TV; (b) $\ell_0$TV; (c) OGSTV; (d) Ours.

4.4. Low-Rank Image Deblurring

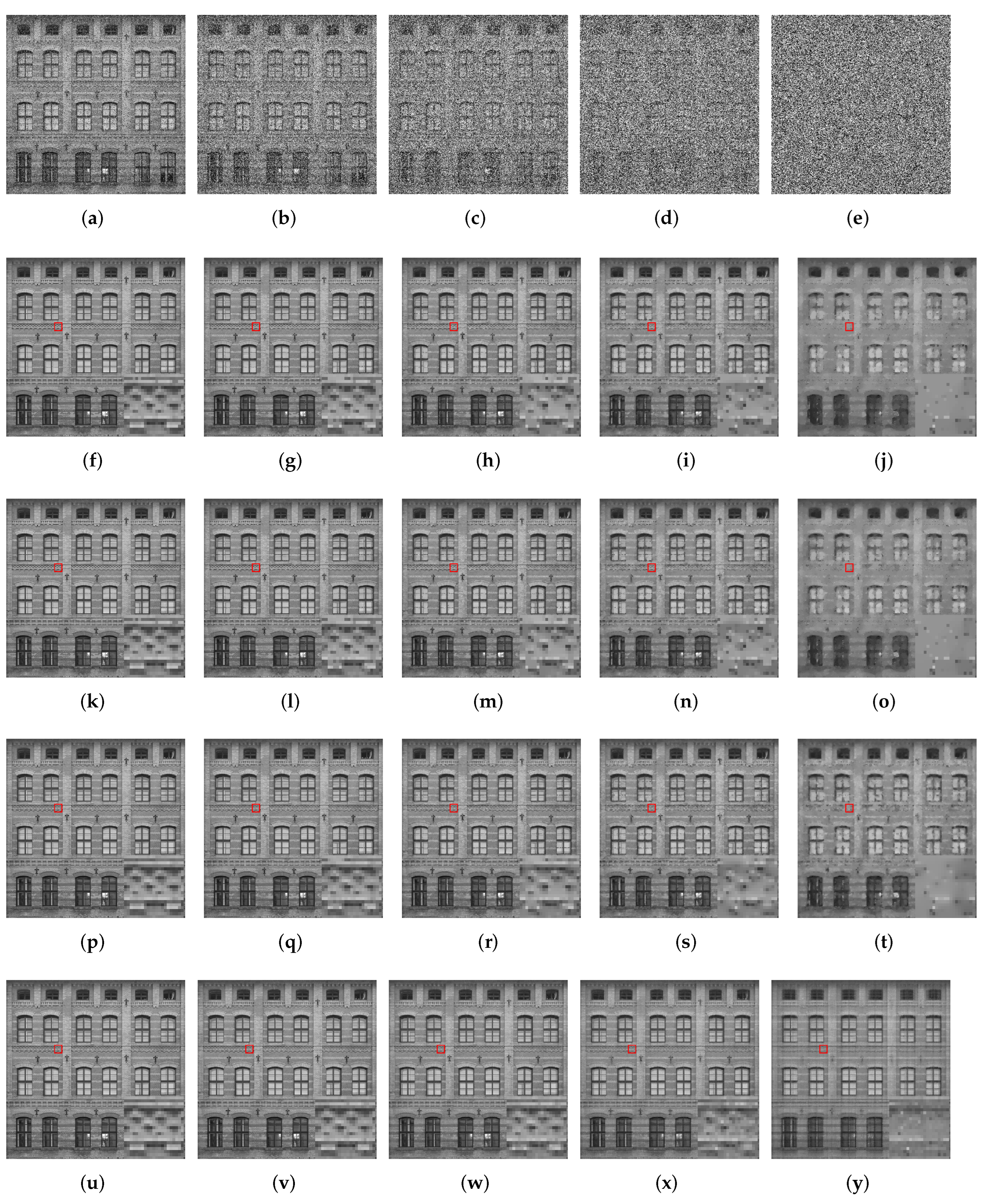

In this subsection, we compare the performance of our model with $\ell_1$TV, $\ell_0$TV and OGSTV for deblurring low-rank images. Table 3 presents the recovery results of the different methods. From Table 3, it is evident that our model outperforms the other three methods in most situations. Low-rank images usually contain the main structural information of an image, and the nuclear norm, acting as a low-rank constraint, can better recover global structural information, thereby improving the deblurring effect, which is consistent with the theoretical studies. Moreover, as the noise level increases, the difference in PSNR between our model and the other three methods becomes more pronounced, further demonstrating the stability of our approach on low-rank images. However, for the Peppers image, one of the TV baselines outperforms our model at noise levels of 10% and 30%. Overall, our method performs well in processing low-rank images. Figure 10 visually compares the different methods for recovering the low-rank Building image under blurring and various noise levels. As shown in Figure 10, our model achieves better visual quality in low-rank image recovery than the other methods.

Table 3.

The PSNR (dB), SSIM, and number of iterations (Iter) of different methods for images corrupted by blurring with SP noise. The bold data represents the best PSNR, SSIM, and the least number of iterations, respectively.

Figure 10.

Comparison of restored images from different methods for images degraded by SP noise and a blur kernel. First row: corrupted images. Second row: images restored by $\ell_1$TV. Third row: images restored by $\ell_0$TV. Fourth row: images restored by OGSTV. Fifth row: images restored by the proposed method. Each of the panels (a)–(y) is labelled with the noise level and PSNR (dB) of the image.

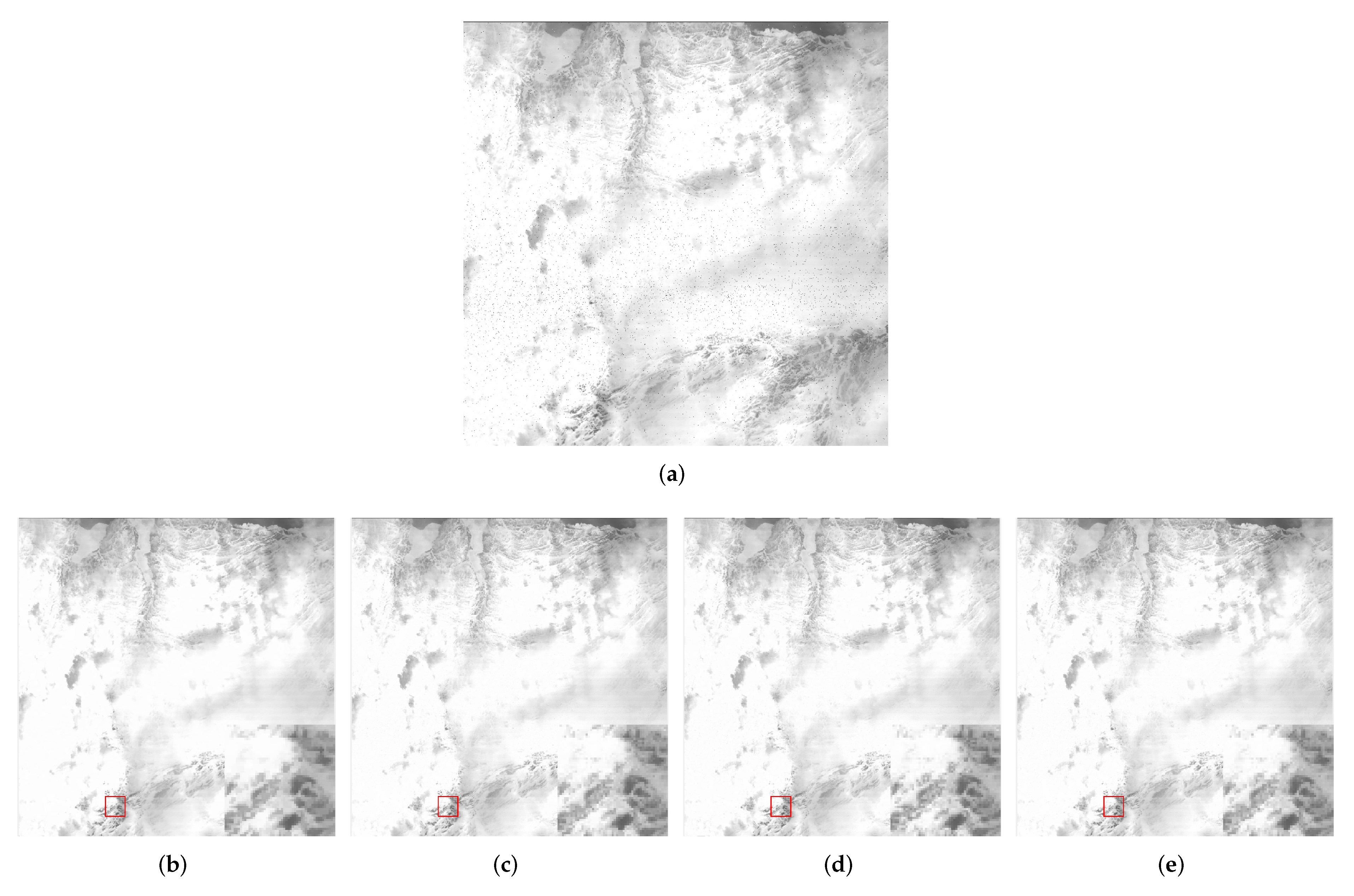

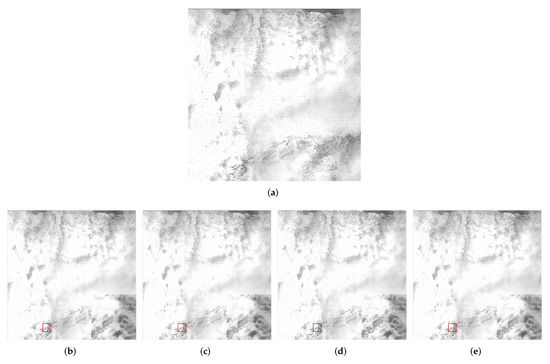

4.5. Real Image Denoising

In this section, we verify the effectiveness of our method on real images by selecting an image that has been corrupted by impulse noise, as shown in Figure 11. The figure illustrates the real image and the images restored by using four different methods. It can be seen that our method exhibits good performance on real images. The image is obtained from https://www.usgs.gov/landsat-missions/impulse-noise, accessed on 24 May 2023.

Figure 11.

Comparison of images restored by different methods for a real image with impulse noise. (a) Real image with impulse noise; (b) image restored by $\ell_1$TV; (c) image restored by $\ell_0$TV; (d) image restored by OGSTV; (e) image restored by the proposed method.

5. Conclusions

In this paper, we proposed a novel model that combines $\ell_0$TV and the nuclear norm to remove impulse noise. Although the resulting problem is non-convex and non-smooth, we solved it by means of a mathematical program with equilibrium constraints (MPEC) and proximal ADMM. The convergence of the proposed algorithm was proven under certain conditions. The experimental results demonstrated that our model surpasses other state-of-the-art methods, such as $\ell_1$TV, $\ell_0$TV, and OGSTV, in processing low-rank images, as shown by its higher PSNR and SSIM values. The solution process involves calculating the proximity operator of the nuclear norm, which requires the singular value decomposition of a matrix. As a result, computation may slow down for matrices of large dimension, so trade-offs must be made according to the specific application scenario. In future work, we will combine the weighted nuclear norm with data fidelity terms. The weighted nuclear norm assigns different weights to different image blocks, fully utilizing the correlation between features and the sparsity of the data, which should yield better image recovery performance.

Author Contributions

Writing—original draft, Y.W.; Writing—review & editing, S.D.; Supervision, Y.T. All authors have read and agreed to the published version of the manuscript.

Funding

This work was funded by the National Natural Science Foundations of China (12061045, 12031003, 12271117), and the Jiangxi Provincial Natural Science Foundation (20224ACB211004).

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

The data presented in this study are available on request from the corresponding author.

Conflicts of Interest

The authors declare no conflict of interest.

References

1. Lin, T.C. A new adaptive center weighted median filter for suppressing impulsive noise in images. Inf. Sci. 2007, 177, 1073–1087.

2. Zhang, P.X.; Li, F. A New Adaptive Weighted Mean Filter for Removing Salt-and-Pepper Noise. IEEE Signal Process. Lett. 2014, 21, 1280–1283.

3. Zhang, Z.; Han, D.; Dezert, J.; Yang, Y. A new adaptive switching median filter for impulse noise reduction with pre-detection based on evidential reasoning. Signal Process. 2018, 147, 173–189.

4. Zhang, W.H.; Jin, L.H.; Song, E.N.; Xu, X.Y. Removal of impulse noise in color images based on convolutional neural network. Appl. Soft Comput. 2019, 32, 105558.

5. Li, G.Y.; Xu, X.L.; Zhang, M.H.; Liu, Q.G. Densely connected network for impulse noise removal. Pattern Anal. Appl. 2020, 23, 1263–1275.

6. Zhang, K.; Zuo, W.M.; Chen, Y.J.; Meng, D.Y.; Zhang, L. Beyond a Gaussian Denoiser: Residual Learning of Deep CNN for Image Denoising. IEEE Trans. Image Process. 2017, 26, 3142–3155.

7. Zhang, K.; Zuo, W.M.; Zhang, L. FFDNet: Toward a Fast and Flexible Solution for CNN-Based Image Denoising. IEEE Trans. Image Process. 2018, 27, 4608–4622.

8. Guo, B.Y.; Song, K.C.; Dong, H.W.; Yan, Y.H.; Tu, Z.B.; Zhu, L. NERNet: Noise estimation and removal network for image denoising. J. Vis. Commun. Image Represent. 2020, 71, 102851.

9. Chan, T.F.; Esedoglu, S. Aspects of total variation regularized l1 function approximation. SIAM J. Appl. Math. 2005, 65, 1817–1837.

10. Yang, J.F.; Zhang, Y.; Yin, W.T. An efficient TVL1 algorithm for deblurring multichannel images corrupted by impulsive noise. SIAM J. Sci. Comput. 2009, 31, 2842–2865.

11. Guo, X.X.; Li, F.; Ng, M.K. A fast ℓ1-TV algorithm for image restoration. SIAM J. Sci. Comput. 2009, 31, 2322–2341.

12. Yuan, J.; Shi, J.; Tai, X.C. A convex and exact approach to discrete constrained TV-L1 image approximation. East Asian J. Appl. Math. 2011, 1, 172–186.

13. Micchelli, C.A.; Shen, L.X.; Xu, L.Y.S.; Zeng, X. Proximity algorithms for the L1/TV image denoising model. Adv. Comput. Math. 2013, 38, 401–426.

14. Liu, Q.G.; Xiong, B.; Yang, D.C.; Zhang, M.H. A generalized relative total variation method for image smoothing. Multimed. Tools Appl. 2016, 75, 7909–7930.

15. Shi, M.Z.; Han, T.T.; Liu, S.Q. Total variation image restoration using hyper-Laplacian prior with overlapping group sparsity. Signal Process. 2016, 126, 65–76.

16. Lanza, A.; Morigi, S.; Sgallari, F. A nonsmooth nonconvex sparsity-promoting variational approach for deblurring images corrupted by impulse noise. Comput. Vis. Med. Image Process. V 2016, 2, 87–94.

17. Gu, G.Y.; Jiang, S.H.; Yang, J.F. A TVSCAD approach for image deblurring with impulsive noise. Inverse Probl. 2017, 33, 125008.

18. Zhang, X.J.; Bai, M.R.; Ng, M.K. Nonconvex-TV based image restoration with impulse noise removal. SIAM J. Imaging Sci. 2017, 10, 1627–1667.

19. Zhang, B.X.; Zhu, G.P.; Zhu, Z.B. A TV-log nonconvex approach for image deblurring with impulsive noise. Signal Process. 2020, 174, 107631.

20. Allard, W. Total Variation Regularization for Image Denoising, I. Geometric Theory. SIAM J. Math. Anal. 2008, 39, 1150–1190.

21. Michel, V.; Gramfort, A.; Varoquaux, G.; Eger, E.; Thirion, B. Total variation regularization for fMRI-based prediction of behavior. IEEE Trans. Med. Imaging 2011, 30, 1328–1340.

22. Hu, Y.; Jacob, M. Higher degree total variation (HDTV) regularization for image recovery. IEEE Trans. Image Process. 2012, 21, 2559–2571.

23. Valkonen, T.; Bredies, K.; Knoll, F. Total generalized variation in diffusion tensor imaging. SIAM J. Imaging Sci. 2013, 6, 487–525.

24. Gao, Y.M.; Liu, F.; Yang, X.P. Total generalized variation restoration with non-quadratic fidelity. Multidimens. Syst. Signal Process. 2018, 29, 1459–1484.

25. Liu, X.W.; Tang, Y.C.; Yang, Y.X. Primal-dual algorithm to solve the constrained second-order total generalized variational model for image denoising. J. Electron. Imaging 2019, 28, 043017.

26. Liu, G.; Huang, T.Z.; Liu, J.; Lv, X.G. Total variation with overlapping group sparsity for image deblurring under impulse noise. PLoS ONE 2015, 10, e0122562.

27. Nikolova, M. Minimizers of cost-functions involving nonsmooth data-fidelity terms application to the processing of outliers. SIAM J. Numer. Anal. 2002, 40, 965–994.

28. Nikolova, M. A variational approach to remove outliers and impulse noise. J. Math. Imaging Vis. 2004, 20, 99–120.

29. Ma, L.Y.; Ng, M.K.; Yu, J.; Zeng, T.Y. Efficient box-constrained TV-type-l1 algorithms for restoring images with impulse noise. J. Comput. Math. 2013, 31, 249–270.

30. Yuan, G.Z.; Ghanem, B. ℓ0TV: A sparse optimization method for impulse noise image restoration. IEEE Trans. Pattern Anal. Mach. Intell. 2019, 41, 352–364.

31. Kang, M.M.; Kang, M.J.; Jung, M.Y. Sparse representation based image deblurring model under random-valued impulse noise. Multidimens. Syst. Signal Process. 2019, 30, 1063–1092.

32. Yin, M.M.; Adam, T.; Paramesran, R.; Hassan, M.F. An ℓ0-overlapping group sparse total variation for impulse noise image restoration. Signal Process. Image Commun. 2022, 102, 116620.

33. Ji, H.; Liu, C.; Shen, Z.; Xu, Y. Robust video denoising using low rank matrix completion. In Proceedings of the 2010 IEEE Computer Society Conference on Computer Vision and Pattern Recognition, San Francisco, CA, USA, 13–18 June 2010; pp. 1791–1798.

34. Gu, S.; Zhang, L.; Zuo, W.; Feng, X. Weighted Nuclear Norm Minimization with Application to Image Denoising. In Proceedings of the 2014 IEEE Conference on Computer Vision and Pattern Recognition, Columbus, OH, USA, 23–28 June 2014; pp. 2862–2869.

35. Chang, K.; Zhang, X.Y.; Ding, P.L.K.; Li, B.X. Data-adaptive low-rank modeling and external gradient prior for single image super-resolution. Signal Process. 2019, 161, 36–49.

36. Huang, W.Q.; Ke, Z.W.; Cui, Z.X.; Cheng, J.; Qiu, Z.; Jia, S.; Ying, L.; Zhu, Y.; Liang, D. Deep low-rank plus sparse network for dynamic MR imaging. Med. Image Anal. 2021, 73, 102190.

37. Wang, X.; Ren, Y.S.; Zhang, W.S. Depression disorder classification of fMRI data using sparse low-rank functional brain network and graph-based features. Comput. Math. Methods Med. 2017, 2017, 3609821.

38. Zhang, L.; Zuo, W. Image Restoration: From Sparse and Low-Rank Priors to Deep Priors. IEEE Signal Process. Mag. 2017, 34, 172–179.

39. Zhang, H.; Chen, H.; Yang, G.; Zhang, L. LR-Net: Low-Rank Spatial-Spectral Network for Hyperspectral Image Denoising. IEEE Trans. Image Process. 2021, 30, 8743–8758.

40. Wu, Y.M.; Sun, J.N.; Chen, W.G.; Yin, J.P. Improved Image Compressive Sensing Recovery with Low-Rank Prior and Deep Image Prior. Signal Process. 2023, 205, 108896.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).