1. Introduction

In actual outdoor surveillance videos, under low illumination at night or insufficient illumination in the daytime, relying on visible light alone easily leads to missed and false detections of pedestrians. Such monitoring cannot satisfy all-day requirements and thus cannot guarantee the security of people’s lives and property [1,2,3,4]. Meanwhile, in traffic safety and autonomous driving, video surveillance is also playing an increasingly important role under low illumination at night and insufficient illumination in the daytime [5,6]. With the advancement of technology, a variety of sensor devices have emerged, such as speed dome cameras, box cameras and high-definition sensors for use in the daytime, and thermal infrared, mid-wave infrared, and long-wave infrared sensors for use at night and in bad weather [1,2,3,7,8]. To satisfy all-day monitoring needs, different types of sensors must work together. Effectively utilizing the data acquired by different types of sensors, so as to improve the intelligent analysis of surveillance video, is therefore a very challenging task.

Nature contains data in many spectral bands, and each band carries different information; human eyes can perceive only part of the spectrum. In addition, owing to the limitations of imaging technology, there is currently no sensor device that can acquire data in all spectral bands [1,2,3,7]. For example, visible light imaging devices capture rich visual information about objects, such as texture, color, and shape, from the visible spectrum bands that human eyes can perceive, whereas thermal infrared imaging devices capture visual information such as brightness, shape and contour from thermal infrared spectrum bands that human eyes cannot perceive. In particular, thermal infrared images are formed from the thermal radiation of objects, so object information such as contour and shape can be extracted under low illumination at night and in shadows during the daytime [1,7,8,9,10,11,12,13]. To this end, this paper focuses on how to utilize the differences and complementarities between visible light images and thermal infrared images to obtain effective context information of pedestrians [14,15,16,17,18,19,20,21,22], thereby enhancing the feature expression of pedestrians under low illumination at night and in shadows during the daytime, and improving pedestrian detection accuracy.

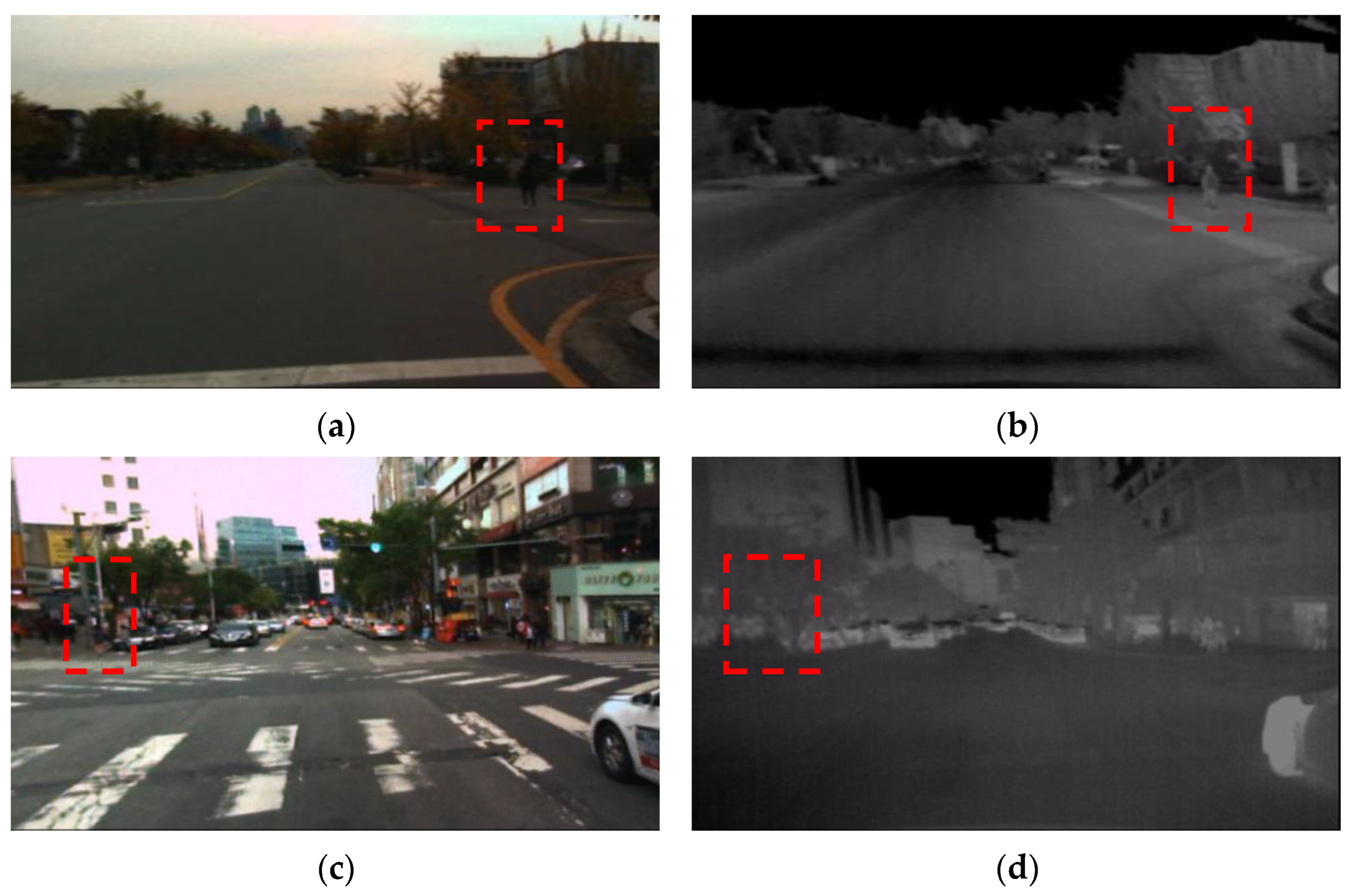

To better illustrate the physical properties of visible light images and thermal infrared images, a visible light image and the corresponding thermal infrared image from the KAIST dataset [13] are shown in Figure 1. From Figure 1, it can be seen that in the visible light image (a), the pedestrian marked by a red dotted box has no significant features and is thus difficult to distinguish even by human eyes, while the corresponding thermal infrared image (b) better provides pedestrian information such as brightness, contour and shape. In another visible light image (c), information such as color, texture, shape and contour is not clearly provided for the pedestrian marked by the red dotted box, while in the corresponding thermal infrared image (d), the pedestrian feature information is not significant, and thus it is difficult to distinguish the pedestrian even with human eyes. Therefore, it is necessary to study how to effectively utilize the physical properties of visible light images and thermal infrared images and fully exploit the complementarity between the two so as to improve pedestrian detection. In recent years, researchers have made many efforts in pixel-level fusion [10,23], feature-level fusion [9,13] and decision-level fusion [12] of visible light images and thermal infrared images. However, owing to the complexity and diversity of outdoor scenes and the changeable weather, existing methods struggle to meet complex and changeable application requirements.

For example, compared with the visible light image, the pixel-level fusion image obtained from the visible light image and the thermal infrared image loses color and part of the texture and background information of the scene; compared with the thermal infrared image, it loses part of the brightness information. It is therefore difficult to effectively improve pedestrian detection accuracy by using the pixel-level fusion image alone, and missed and false detections easily occur. In some cases, under the influence of factors such as illumination, temperature, and weather, the visible light image cannot provide pedestrian information such as color, texture and shape, and the thermal infrared image does not clearly provide pedestrian information such as shape, brightness, and contour. In such cases, feature-level fusion of the visible light image and the thermal infrared image cannot significantly enhance the feature expression of pedestrians. On the contrary, it introduces interference information, which easily results in missed and false detections of pedestrians. When the visible light image and the thermal infrared image are fused at the decision level, pedestrian detection must be performed separately in the visible light image and the corresponding thermal infrared image, and then a mapping relationship and a master–slave relationship between the visible light detection results and the thermal infrared detection results are established. However, in actual monitoring, the weather changes at any time, so the pedestrian information in the visible light image and in the thermal infrared image is not equivalent. Therefore, the mapping relationship and the master–slave relationship between them cannot be established to meet the initial conditions for decision-level fusion, and missed and false detections of pedestrians easily occur. With this in mind, this paper studies and analyzes the physical properties of visible light images and thermal infrared images, and uses the complementarity between the two images to enhance the visually significant features and feature expression of pedestrians, so as to obtain the context information required for pedestrian detection and thereby improve pedestrian detection accuracy.

In order to improve the detection accuracy of pedestrians under low illumination at night and in shadows during the daytime, this paper studies pedestrian detection based on the two-stage fusion of visible light images and thermal infrared images. This paper is distinguished from the existing references [11,13,22] mainly in that it makes full use of the complementarity and difference between visible light images and thermal infrared images to perform the two-stage fusion, including pixel-level fusion and feature-level fusion, according to the varying daytime conditions, so as to obtain effective pedestrian context information. The organization of this paper is as follows:

Section 1 includes the introduction and existing problems;

Section 2 introduces the existing pedestrian detection methods using pixel-level fusion, feature-level fusion and decision-level fusion of the visible light image and thermal infrared image;

Section 3 introduces the proposed method;

Section 4 introduces the experimental results; and

Section 5 summarizes the content of this paper.

3. The Proposed Method

In the proposed method, the visible light images and thermal infrared images are subjected to pixel-level fusion and feature-level fusion according to the varying daytime conditions. In the pixel-level fusion stage, the thermal infrared image is first brightness enhanced and then fused with the visible light image. The resulting pixel-level fusion image contains the information critical for accurate pedestrian detection, such as pedestrian context, shape, contour, and background in the scene. In the feature-level fusion stage, in the daytime, the pixel-level fusion image is fused with the visible light image so as to make full use of the color, texture and scene background information of the visible light image; under low illumination at night, the pixel-level fusion image is instead fused with the thermal infrared image so as to make full use of the significant information of thermal infrared images, such as pedestrian brightness, shape and contour.

3.1. Stage I: Pixel-Level Fusion of Visible Light Images and Thermal Infrared Images

Under shadows in the daytime, it is difficult to detect pedestrians in the visible light images, while the corresponding thermal infrared images have clear image information such as the brightness and shape of pedestrians. Under low illumination at night, visible light images retain some information such as color, texture and background of the scene, but do not have complete edges, textures and shapes of pedestrians, while the corresponding thermal infrared images have information such as the brightness, shape and contour of pedestrians. Moreover, owing to the influence of imaging sensing devices, Gaussian noise or salt–pepper noise is prone to occur in visible light images [24,25,26,27,28]. In view of all this, many studies have addressed the fusion, especially the pixel-level fusion, of visible light images and thermal infrared images by utilizing the complementarity between the two types of images. In the literature [29], visible light images and thermal infrared images were separately visually enhanced before pixel-level fusion, resulting in overly strong contrast in the enhanced visible light images; thus, it is difficult to effectively extract pedestrian features from the fusion results. To solve this problem, this paper proposes that only the brightness of thermal infrared images is enhanced before the pixel-level fusion of visible light images and thermal infrared images. By doing so, pedestrian features can be effectively extracted from the fusion result, which conforms to human visual perception, as specifically shown in the following Formula (1):

In Formula (1), the quantities involved are the pixel value at the current position, the minimum pixel value in the current thermal infrared image, and the maximum pixel value in the current thermal infrared image. The visible light image and the brightness-enhanced thermal infrared image are then fused at the pixel level through guided filtering to significantly enhance the visual appearance information of pedestrians. The strategies and methods for selecting parameters are explained in the literature [29,30,31,32,33,34,35,36,37].
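The brightness enhancement described above depends only on each pixel value and on the minimum and maximum pixel values of the thermal infrared image. A minimal sketch of one enhancement that uses exactly those quantities is a linear min-max stretch. This form is an assumption made for illustration and may differ from the paper's Formula (1). The function name `stretch_brightness` and the output range `out_max=255` are hypothetical, and the guided-filter fusion step is not shown. Plain Python lists are used to keep the sketch dependency-free.

```python
def stretch_brightness(thermal, out_max=255):
    """Linearly stretch a grayscale thermal image to the range [0, out_max].

    `thermal` is a list of rows of pixel values. The min-max form is an
    assumption for illustration, not necessarily the paper's Formula (1).
    """
    flat = [p for row in thermal for p in row]
    lo, hi = min(flat), max(flat)
    if hi == lo:
        # A constant image has no contrast to stretch; return an unchanged copy.
        return [list(row) for row in thermal]
    scale = out_max / (hi - lo)
    return [[round((p - lo) * scale) for p in row] for row in thermal]


# Toy 2x2 thermal image whose values occupy only a narrow band.
thermal = [[60, 80], [100, 110]]
enhanced = stretch_brightness(thermal)
```

After the stretch, the darkest pixel maps to 0 and the brightest to `out_max`, so the pedestrian's thermal signature spans the full intensity range before fusion.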

Figure 2 depicts, under shadows in the daytime, the visible light image (a), the corresponding thermal infrared image (b), the pixel-level fusion image of the visible light image and the thermal infrared image (c), and the pixel-level fusion image of the visible light image and the brightness-enhanced thermal infrared image as proposed in this paper (d), as well as the corresponding edge features of each of these images.

From Figure 2, it can be seen that under shadows in the daytime, as compared with the visible light image (a), the thermal infrared image (b) and the pixel-level fusion image of the thermal infrared image and visible light image (c), the pixel-level fusion image of the brightness-enhanced thermal infrared image and visible light image as proposed in this paper (d) has more significant pedestrian information and corresponding bottom-layer edge features. The results show that the pixel-level fusion image as proposed in this paper has clearer pedestrian information such as context, partial texture, shape and background of the scene, which are essential for achieving accurate pedestrian detection. By making full use of the above information, it is possible for pedestrian detectors to better distinguish pedestrians and backgrounds, thereby improving the accuracy of pedestrian detection.

The effectiveness of the pixel-level fusion method proposed in this paper is further demonstrated below by using image quality metrics, including information entropy, average gradient and edge strength [27,38]. The results are shown in Table 1.

It can be seen from Table 1 that the pixel-level fusion of the brightness-enhanced thermal infrared image and the visible light image yields a fusion image with higher information entropy, average gradient and edge strength, as compared with the visible light image, the thermal infrared image and the non-brightness-enhanced fusion image. The higher the information entropy, average gradient and edge strength, the richer the information contained in the image. In particular, more pedestrian context information indicates higher image quality and thus a clearer image. Therefore, although the pedestrian object under shadow in the daytime does not have complete bottom-layer visual information, the fusion image obtained by the proposed method contains more pedestrian context information and scene background information, so the pedestrian and the background can be better distinguished, and the pedestrian detection accuracy can be improved.
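Two of the image quality metrics named above can be sketched directly. The definitions below are the commonly used ones (Shannon entropy of the gray-level histogram, and the mean magnitude of forward differences); they are assumed here, since the text does not spell out its exact formulas. Edge strength is omitted because it depends on the choice of edge operator, which the text does not specify. The function names are illustrative.

```python
import math
from collections import Counter


def information_entropy(image):
    """Shannon entropy, in bits, of the gray-level histogram of `image`."""
    flat = [p for row in image for p in row]
    total = len(flat)
    return -sum((c / total) * math.log2(c / total) for c in Counter(flat).values())


def average_gradient(image):
    """Mean of sqrt((dx^2 + dy^2) / 2) over forward differences.

    Requires an image of at least 2x2 pixels.
    """
    rows, cols = len(image), len(image[0])
    acc = 0.0
    for i in range(rows - 1):
        for j in range(cols - 1):
            dx = image[i][j + 1] - image[i][j]  # horizontal difference
            dy = image[i + 1][j] - image[i][j]  # vertical difference
            acc += math.sqrt((dx * dx + dy * dy) / 2.0)
    return acc / ((rows - 1) * (cols - 1))


flat_patch = [[5, 5], [5, 5]]          # no detail at all
checker_patch = [[0, 255], [255, 0]]   # maximal local contrast
```

A constant patch scores zero on both metrics, while the checkerboard patch scores higher on both, which matches the reading that larger values indicate richer image detail.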

Figure 3 depicts, under low illumination at night, the visible light image (a), corresponding thermal infrared image (b), pixel-level fusion image of the visible light image and the corresponding thermal infrared image (c), and pixel-level fusion image of the visible light image and the brightness-enhanced thermal infrared image (d), as well as the corresponding edge features of each of these images.

As shown in Figure 3, the pixel-level fusion image of the visible light image and the brightness-enhanced thermal infrared image has more significant context, shape and contour information of the pedestrian and scene background information, as well as pedestrian edge features, all of which help improve the accuracy of pedestrian detection.

As indicated by the image quality metrics, including information entropy, average gradient and edge strength, under low illumination at night, the pixel-level fusion image obtained by the proposed method has greater detail and is thus clearer. The results are shown in Table 2.

It can be seen from Table 2 that, as compared with the visible light image, the thermal infrared image, and the pixel-level fusion image of the visible light image and the thermal infrared image, the pixel-level fusion image of the visible light image and the brightness-enhanced thermal infrared image has higher information entropy, average gradient and edge strength. Therefore, the pixel-level fusion image of the visible light image and the brightness-enhanced thermal infrared image contains more information, such as pedestrian context, shape, contour and scene background, all of which make pedestrians and backgrounds more distinguishable and thus help improve pedestrian detection accuracy.

In conclusion, the pixel-level fusion image of the visible light image and the brightness-enhanced thermal infrared image has rich information, including pedestrian context, shape, brightness, contour and scene background, both under shadows in the daytime and under low illumination at night; this information contributes to the improvement in the accuracy of pedestrian detection throughout the whole day.

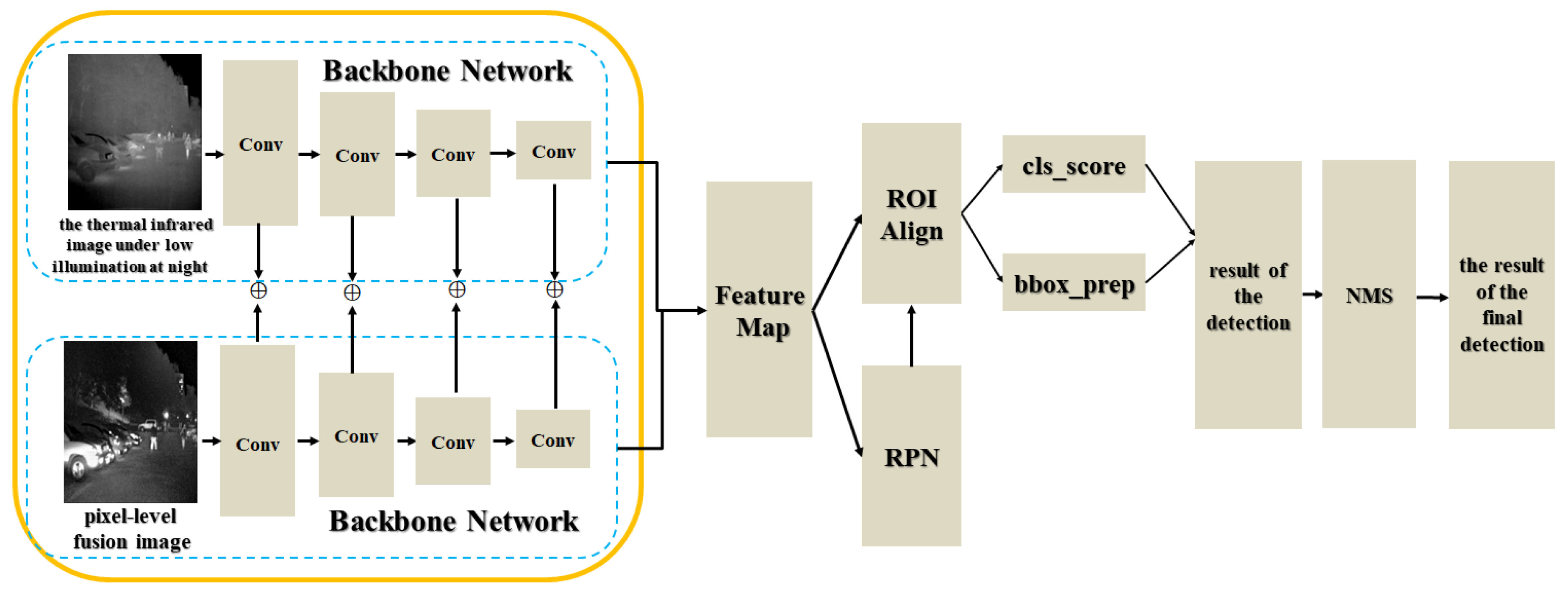

3.2. Stage II: Combination of Pixel-Level Fusion and Feature-Level Fusion for Pedestrian Detection

In order to meet the all-day requirements of the monitoring system, under shadows in the daytime, the pixel-level fusion image obtained in the first stage is fused at the feature level with the visible light image so as to compensate for its loss of color, texture and scene background information as compared with the visible light image. Under low illumination at night, the pixel-level fusion image obtained in the first stage is instead fused at the feature level with the thermal infrared image so as to compensate for its loss of some pedestrian brightness information as compared with the thermal infrared image. Therefore, on the basis of the pixel-level fusion image obtained in the first stage, this part addresses the selection of visible light images or thermal infrared images for feature-level fusion according to the varying daytime conditions, so as to improve the all-day accuracy of pedestrian detection.
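The selection rule in this stage can be sketched as a small dispatch function. Because the paper notes in its conclusions that the method cannot automatically discriminate between conditions, the condition label is assumed to be supplied by the caller. The function name `select_fusion_partner` and the labels `"day"` and `"night"` are hypothetical.

```python
def select_fusion_partner(condition, visible, thermal):
    """Return the image to fuse with the pixel-level fusion image at feature level.

    `condition` is a caller-supplied label (an assumption of this sketch):
    "day" covers daytime scenes including shadows, and "night" covers low
    illumination at night.
    """
    if condition == "day":
        # The visible image restores color, texture and scene background.
        return visible
    if condition == "night":
        # The thermal image restores pedestrian brightness and contour.
        return thermal
    raise ValueError(f"unknown condition: {condition!r}")


day_partner = select_fusion_partner("day", "visible_frame", "thermal_frame")
night_partner = select_fusion_partner("night", "visible_frame", "thermal_frame")
```

Raising an error on an unrecognized label, rather than falling back to one branch, keeps a mislabeled frame from silently using the wrong detection model.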

3.2.1. Feature-Level Pedestrian Detection Method Based on the Combination of the Pixel-Level Fusion Image and the Visible Light Image

In the daytime, the color, texture and scene background information in the visible light image are important factors for achieving accurate pedestrian detection [28,38,39]. Keeping this in mind, when visible light images and thermal infrared images are fused at the feature level by using existing feature-level fusion methods [2,9,13], the effect of increasing the ratio of visible light images to thermal infrared images on pedestrian detection performance is studied. Increasing this ratio raises the proportion of the color, texture and scene background information of the visible light image in the feature-level fusion. The pedestrian detection results are shown in Table 3.

It can be seen from Table 3 that in the daytime, increasing the ratio of visible light images to thermal infrared images has essentially no effect on the evaluation metrics of pedestrian detection, including average accuracy, indicating that the accuracy of pedestrian detection has not been effectively improved. For the specific meaning of the evaluation metrics $AP$, $AP_{0.5}$, $AP_{0.75}$, $AP_{S}$, $AP_{M}$ and $AP_{L}$, please refer to the introduction of the evaluation metrics in Section 4.2. The main reason for this result is that, although increasing the proportion of visible light images enhances the color and texture information of pedestrians in the visible light images, it does not enhance the context and scene background information of pedestrians under shadows and at a distance. On the other hand, in the daytime, the pixel-level fusion image of the visible light image and the thermal infrared image loses color as well as part of the texture and scene background information as compared with the visible light image, whereas color, texture and scene background information are absent from the thermal infrared image. In view of the above, we propose a feature-level fusion of the pixel-level fusion image with the visible light image so as to compensate for the missing color, part of the texture, and scene background information, thus realizing the accurate detection of pedestrians.

Based on the above analysis, in the daytime, the pixel-level fusion image of the visible light image and the thermal infrared image is fused with the visible light image at the feature level, so as to enhance the bottom-layer and middle-layer visual features required for accurate pedestrian detection, such as the context, texture and color of pedestrians and background information in the scene, as shown in Formula (2):

In Formula (2), the two operands are the feature map extracted from the nth frame of the visible light image and the feature map extracted from the nth frame of the pixel-level fusion image; the operator is pixel-by-pixel addition, and the output is the feature-level fusion result. By using this method, the feature-level fusion of the pixel-level fusion image and the visible light image can be obtained, which improves the detection accuracy of pedestrians in the daytime. The specific flowchart is shown in Figure 4.
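The pixel-by-pixel addition of two feature maps described above can be sketched as follows. Feature maps are represented here as nested lists indexed `[channel][row][col]`, which is an assumption made to keep the example dependency-free; in practice they would be tensors produced by the backbone network. The name `fuse_features` is illustrative.

```python
def fuse_features(feat_a, feat_b):
    """Element-wise sum of two feature maps given as [channel][row][col] lists."""
    if len(feat_a) != len(feat_b):
        raise ValueError("feature maps must have the same number of channels")
    fused = []
    for ch_a, ch_b in zip(feat_a, feat_b):
        same_rows = len(ch_a) == len(ch_b)
        same_cols = all(len(ra) == len(rb) for ra, rb in zip(ch_a, ch_b))
        if not (same_rows and same_cols):
            raise ValueError("feature maps must have the same spatial size")
        fused.append([[a + b for a, b in zip(ra, rb)] for ra, rb in zip(ch_a, ch_b)])
    return fused


# One-channel 2x2 toy feature maps for the visible and pixel-level fusion images.
feat_visible = [[[1, 2], [3, 4]]]
feat_pixel_fused = [[[10, 20], [30, 40]]]
feat_out = fuse_features(feat_visible, feat_pixel_fused)
```

Addition keeps the channel count unchanged, so the fused map can feed the same detection head as either input. It also requires both feature maps to have identical shapes, which the sketch checks explicitly.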

As shown in Figure 4, in order to perform the feature-level fusion of pixel-level fusion images and visible light images, the multi-stage and currently popular Cascade R-CNN network model [40] for object detection based on the feature pyramid [41] is adopted as the pedestrian detection network model, and ResNet-50 [35] is used as the backbone network. Stochastic gradient descent is used as the optimizer, with the learning rate set to 0.001, the weight decay set to 0.0005, and the momentum set to 0.9. The training data are expanded by data augmentation methods such as flipping, scaling, and rotation, so that the pedestrian features are enhanced and, ultimately, the accurate detection of pedestrians in outdoor surveillance videos is achieved.
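To make the role of the three optimizer settings concrete, the following sketch applies one stochastic gradient descent update with momentum and weight decay to a list of scalar weights. The update convention (weight decay added to the gradient, then a momentum buffer) follows the formulation used by common deep learning frameworks and is an assumption; the paper does not state which variant was used. The function name `sgd_step` is illustrative.

```python
LEARNING_RATE = 0.001   # values taken from the text
WEIGHT_DECAY = 0.0005
MOMENTUM = 0.9


def sgd_step(weights, grads, velocity):
    """One SGD update with momentum and L2 weight decay; returns new lists."""
    new_weights, new_velocity = [], []
    for w, g, v in zip(weights, grads, velocity):
        g = g + WEIGHT_DECAY * w   # weight decay folded into the gradient
        v = MOMENTUM * v + g       # momentum buffer accumulates past gradients
        new_velocity.append(v)
        new_weights.append(w - LEARNING_RATE * v)
    return new_weights, new_velocity


# Two consecutive steps with the same gradient on a single weight.
w1, v1 = sgd_step([1.0], [0.5], [0.0])
w2, v2 = sgd_step(w1, [0.5], v1)
```

With a constant gradient, the momentum buffer grows from step to step, so the second update moves the weight further than the first.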

3.2.2. Feature-Level Pedestrian Detection Method Based on the Combination of the Pixel-Level Fusion Image and the Thermal Infrared Image

Under low illumination at night, visible light images contain salt–pepper noise and Gaussian noise [24,27], which reduce the accuracy of pedestrian detection. In addition, visible light images do not have effective bottom-layer visual information for pedestrians, while thermal infrared images contain bottom-layer visual information such as the brightness, contour and shape of pedestrians. In view of this, in the feature-level fusion of visible light images and thermal infrared images, the effect of increasing the ratio of thermal infrared images to visible light images on pedestrian detection performance is studied. Increasing this ratio raises the proportion of the brightness, contour and shape information of pedestrians in thermal infrared images in the feature-level fusion. The pedestrian detection performance results are shown in Table 4.

It can be seen from Table 4 that increasing the ratio of the thermal infrared image to the visible light image has essentially no effect on the evaluation metrics of pedestrian detection, including average accuracy, indicating that the accuracy of pedestrian detection has not been effectively improved. The main reason is that, although increasing the proportion of thermal infrared images improves the brightness and contour information of pedestrians, it cannot improve the context, texture and scene background information, so the accuracy of pedestrian detection under low illumination at night cannot be effectively improved. On the other hand, under low illumination at night, the pixel-level fusion image of the visible light image and the thermal infrared image loses some pedestrian brightness information and edge contour information as compared with the thermal infrared image [42], while visible light images cannot compensate for this information loss, since they contain no effective bottom-layer visual features of pedestrians. Therefore, we propose a feature-level fusion of the pixel-level fusion image with the thermal infrared image to compensate for the bottom-layer visual information, such as partial brightness and edge contours of pedestrians, thereby improving the detection accuracy of pedestrians.

Based on the above analysis, under low illumination at night, the pixel-level fusion image and the thermal infrared image are fused at a feature level so that the pedestrian features can be enhanced, as shown in Formula (3):

In Formula (3), the two operands are the feature map extracted from the nth frame of the thermal infrared image and the feature map extracted from the nth frame of the pixel-level fusion image, and the output is the result of feature-level fusion. By using this formula, the feature-level fusion of the pixel-level fusion image and the thermal infrared image can be performed, which improves the accuracy of pedestrian detection. The detection flowchart is shown in Figure 5.

In Figure 5, during the feature-level fusion of the pixel-level fusion image with the thermal infrared image, the specific settings of the parameters, including the network model, optimization function, learning rate, weight decay and momentum, are analogous to the model parameter settings described above for Figure 4. By using the feature-level fusion of pixel-level fusion images with thermal infrared images, the pedestrian features can be enhanced, and thus the accuracy of pedestrian detection under low illumination at night can be improved.

5. Conclusions and Future

This paper proposed a method for pedestrian detection based on the pixel-level fusion of the visible light image and thermal infrared image, followed by feature-level fusion according to the varying daytime conditions. In the pixel-level fusion stage, the thermal infrared image was first brightness enhanced and then fused with the visible light image at the pixel level to obtain a pixel-level fusion image, which contains the context, contour, shape and scene background information required for accurate pedestrian detection. In the feature-level fusion stage, under shadows during the daytime, the pixel-level fusion image was fused with the visible light image at the feature level, which compensates for the information that the pixel-level fusion image loses relative to the visible light image, such as color and part of the texture and scene background. Under low illumination at night, the pixel-level fusion image was instead fused with the thermal infrared image at the feature level, which compensates for the part of the brightness information that the pixel-level fusion image loses relative to the thermal infrared image. The experimental results show that the proposed method achieves accurate pedestrian detection. The proposed method still has some aspects that require improvement. For example, it cannot automatically discriminate between the varying daytime conditions in order to select the corresponding detection model, and it does not consider the effect of misalignment between data collected by different sensor devices on the detection accuracy. Nonetheless, the proposed method offers a novel approach to detecting pedestrians, which can potentially be used in outdoor surveillance videos.