When it is challenging for the transmitter to acquire the jamming information such as the jammers’ strategies, the DQN algorithm has been used recently to solve the anti-jamming problem without being aware of the jamming strategies [28,29]. Based on the DQN algorithm, a two-dimensional anti-jamming communication scheme that combines the frequency domain and the spatial domain has been proposed in [28]. In [29], a frequency–power domain anti-jamming communication scheme for fixed jamming patterns is proposed based on the deep double-Q learning algorithm.

Inspired by the aforementioned methods, to deal with the smart jammers, we propose a decision-making network scheme based on the DQN algorithm. Based on the non-zero-sum game model, we design a DQN decision-making network for S and each jammer, and the anti-jamming decision is made through learning the environment and the historical information.

3.1. The DQN-Based Scheme for Multi-Domain Anti-Jamming Strategies

3.1.1. The Process of the Proposed Scheme

The proposed scheme based on the DQN algorithm includes an agent for S and an agent for each smart jammer. Each agent independently trains its DQN decision-making network and makes decisions based on local information, which means that S and the jammers do not share any information about each other’s strategies. Without loss of generality, we only describe the network of S here, since the agents at S and at each jammer are similar. Specifically, the state of the agent of S in the k-th time slot describes the local environment of S. The agent of S uses the ε-greedy policy to take an action based only on its own information: with probability ε it explores a randomly chosen action, and otherwise it exploits the action with the largest estimated Q-value. The ε-greedy policy thus balances exploring new actions against exploiting existing knowledge. The actions of S and each jammer are executed in parallel.
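The ε-greedy selection described above can be sketched as follows. This is a minimal illustration rather than the authors' implementation; the function name `epsilon_greedy` and the representation of Q-values as a plain list indexed by action are assumptions made for the example.

```python
import random

def epsilon_greedy(q_values, epsilon, rng=random):
    """Return an action index chosen by the epsilon-greedy rule.

    q_values: estimated Q-value of every action in the current state
              (hypothetical layout: one list entry per action).
    epsilon:  exploration probability in [0, 1].
    """
    if rng.random() < epsilon:
        # Explore: pick any action uniformly at random.
        return rng.randrange(len(q_values))
    # Exploit: pick the action with the largest estimated Q-value
    # (the first such action if there is a tie).
    return max(range(len(q_values)), key=lambda i: q_values[i])
```

With `epsilon = 0` the agent always exploits, and with `epsilon = 1` it always explores; in practice ε is often decreased over time, although the text does not state whether that is done here.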

To calculate the reward, D feeds the received SINR back to S by broadcasting. The agent of S obtains the SINR and the transmit power and calculates its reward, which is defined in Section 3.1.2. The SINR broadcast by D can also be heard by the jammers, so the agent of each jammer can calculate its own reward. It should be noted that the jammers cooperate with each other to degrade the transmission utility. Because of this cooperation, all jamming agents share a common reward.

When the agent of S moves to the next state, it obtains an experience consisting of the current state, the chosen action, the resulting reward and the next state. The agent of S stores its experiences in its own experience pool, and after the experience pool is full, a mini-batch is sampled from it to update the neural network. This technique is known as experience replay [30] and is used to reduce the data correlation. In the process of learning, the Q-function represents the long-term reward after an action is executed under a given state, and it is parameterized by the weight vector of the DQN. It is known that a double-network structure gives better and more stable training performance [30]. In the proposed scheme, the agent of S therefore has both a train DQN and a target DQN, each with its own weight vector. In the k-th time slot, the agent of S randomly selects a mini-batch of B experiences from the experience pool and uses the stochastic gradient algorithm to minimize the prediction error between the train DQN and the target DQN. As a loss function, the prediction error is given as

where the discount factor weights the future reward estimated by the target DQN.

Finally, by using the gradient descent optimizer to minimize the loss function, the gradients to update the weights of the train DQN are given as

where the weights of the target DQN are periodically updated with the weights of the train DQN. The structure of the proposed DQN algorithm is shown in Figure 2.
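The experience replay and the prediction error between the train DQN and the target DQN can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the names `ReplayPool` and `td_loss` are hypothetical, the two networks are stood in for by callables `q_train` and `q_target` that map a state to a list of Q-values, and the target uses the standard DQN form (reward plus discounted maximum target-network Q-value).

```python
import random
from collections import deque

class ReplayPool:
    """Fixed-size experience pool; the oldest experiences are overwritten."""

    def __init__(self, capacity):
        self.buffer = deque(maxlen=capacity)

    def store(self, state, action, reward, next_state):
        self.buffer.append((state, action, reward, next_state))

    def is_full(self):
        return len(self.buffer) == self.buffer.maxlen

    def sample(self, batch_size, rng=random):
        # Uniform sampling without replacement reduces correlation
        # between consecutive experiences.
        return rng.sample(list(self.buffer), batch_size)

def td_loss(batch, q_train, q_target, gamma):
    """Mean squared error between TD targets and train-network predictions.

    q_train / q_target: assumed callables, state -> list of Q-values.
    gamma: discount factor.
    """
    total = 0.0
    for state, action, reward, next_state in batch:
        # Target built from the (periodically synchronized) target network.
        target = reward + gamma * max(q_target(next_state))
        prediction = q_train(state)[action]
        total += (target - prediction) ** 2
    return total / len(batch)
```

In a full implementation the gradient of this loss with respect to the train-network weights would be taken by an automatic-differentiation library, and the target-network weights would be overwritten with the train-network weights at a fixed period.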

A similar DQN algorithm is also performed at each jammer. In the k-th time slot, the agent of each jammer obtains its state, which describes its local environment. Then, each jamming agent individually chooses an action according to this local state, and the agents execute their actions in parallel. The only difference is that all the jamming agents calculate a common reward by using the SINR fed back by D as well as the jamming powers shared among the jammers. The DQN algorithm of each jamming agent is the same as that shown in Figure 2, and we do not describe it again. The process of the proposed DQN-based scheme is shown in Algorithm 1.

| Algorithm 1 The pseudocode of the proposed DQN-based scheme |
|---|
| Without loss of generality, the DQNs of S and one jammer are illustrated in the following: |
| Set up DQNs for both S and the jammer, and set up an empty experience pool for each |
| Initialize the train DQNs of S and the jammer with random weights |
| Initialize the target DQNs of S and the jammer with their own weight vectors, respectively |
| Agent of S/jammer chooses an action randomly |
| Agent of S/jammer stores its experience into its experience pool, respectively, until full |
| Repeat |
| Agent of S/jammer observes its state, respectively, in the k-th time slot |
| Agent of S/jammer chooses an action, respectively |
| Agent of S calculates its reward according to the feedback of D |
| Agent of the jammer calculates its reward according to the feedback of D and the jamming powers shared among the jammers |
| Agent of S/jammer obtains the next state, respectively |
| Agent of S/jammer stores its experience into its experience pool, respectively |
| Agent of S/jammer samples a mini-batch from its experience pool, respectively |
| Agent of S/jammer updates the weights of its train DQN, respectively |
| Agent of S/jammer periodically updates the weights of its target DQN with those of its train DQN, respectively |
| Until convergence |

In Algorithm 1, the notation A/B means A or B. The actions, the rewards and the states of S and the jammers are given in detail in the following subsection.

3.1.2. The Definition of the Action, Reward and State

As described in Section 2, S needs to select a transmission channel and a transmission power according to the decision-making network’s output. The set of candidate selections is the Cartesian product (×) of the channel set and the power set. Therefore, the action of the agent of S needs to indicate both the channel and the power selection. An action of S in the k-th time slot represents one element of this product set. The size of the action space equals the number of channels multiplied by the number of power levels, so an action has that many possible choices. If the agent of S takes the i-th action, S selects the transmission channel and power according to the i-th element of the product set. The action of each jammer is defined in a similar way.
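The mapping between an action index and a channel and power pair can be sketched as follows. This is a minimal illustration; the function names and the example channel and power values are hypothetical, and the code uses 0-based indices, whereas the text may count elements from 1.

```python
from itertools import product

def build_action_space(channels, powers):
    """Enumerate the Cartesian product of channels and power levels."""
    return list(product(channels, powers))

def decode_action(action_space, i):
    """Return the (channel, power) pair selected by action index i."""
    return action_space[i]

# Hypothetical example: 3 channels and 2 power levels give 6 actions.
space = build_action_space([1, 2, 3], [0.5, 1.0])
channel, power = decode_action(space, 4)
```

The DQN then only needs one output per index, and the agent decodes the chosen index into a concrete channel and power before transmitting.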

To solve the anti-jamming game in Section 2.2 without requiring the opponents’ information, the DQN algorithm is designed to find the optimal transmission strategy, which maximizes the long-term reward of S. Therefore, we use the game utility of S as the reward of the agent, that is

where the reward depends on the SINR fed back from D in the k-th time slot, the transmission cost per unit power of S, and the transmission power chosen by the action of S. The reward of the jammers is defined similarly to that of S.
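The reward computation can be sketched as follows. The exact utility is defined by the game model in Section 2 and is not reproduced here, so the functional form below (SINR minus a linear power cost for S, and negative SINR minus the total jamming power cost for the jammers) is an assumption that is merely consistent with the quantities listed above. All function and parameter names are hypothetical.

```python
def transmitter_reward(sinr, power, cost_per_unit_power):
    """Assumed utility of S: received SINR minus the cost of transmit power."""
    return sinr - cost_per_unit_power * power

def jammer_common_reward(sinr, jamming_powers, cost_per_unit_power):
    """Assumed common utility of the jammers.

    The jammers gain when the SINR at D is low and pay for the total
    jamming power, which is why they share their powers with each other.
    """
    return -sinr - cost_per_unit_power * sum(jamming_powers)
```

Because the two utilities do not sum to a constant under this form, the sketch is compatible with the non-zero-sum game model used in the paper.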

Referring to [28], the state of S is composed of the previous SINRs at D and the previous actions of S, which is denoted as

where each SINR entry is the value fed back from D during the corresponding time slot and w is the number of previous SINRs and of previous actions kept in the state.
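A state built from the w most recent SINRs and actions can be sketched as follows. This is a minimal illustration; the class name `StateWindow`, the zero initial padding and the ordering of the entries (SINRs first, then actions) are assumptions, since the exact layout of the state vector is not reproduced here.

```python
from collections import deque

class StateWindow:
    """Sliding window over the last w SINR feedbacks and actions."""

    def __init__(self, w, initial_sinr=0.0, initial_action=0):
        # Pad with placeholder values until w real observations exist.
        self.sinrs = deque([initial_sinr] * w, maxlen=w)
        self.actions = deque([initial_action] * w, maxlen=w)

    def push(self, sinr, action):
        """Record the SINR fed back by D and the action just taken."""
        self.sinrs.append(sinr)
        self.actions.append(action)

    def as_state(self):
        """Return the state as a flat tuple of length 2 * w."""
        return tuple(self.sinrs) + tuple(self.actions)
```

A larger w gives the agent more history from which to infer the jammers' behavior, at the cost of a larger network input.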

Similarly, we use the game utility as the reward of the agent of each jammer, which can be calculated as

where the reward depends on the cost per unit power of the jammers and on the jamming power chosen by each jammer’s action. The jammers share their jamming powers to calculate this common reward. In the same way, the state of each jammer is defined as

3.2. Hierarchical Learning-Based Scheme for Mixed Strategies

When the information of the opponent is available, it has been proved that a mixed-strategy Nash equilibrium can be obtained for a game with a finite number of players and a finite strategy set [31]. The mixed strategy of S is given as

where each entry represents the probability that S selects the corresponding action, as defined in Section 3.1. Similarly, the mixed strategy of each jammer is defined as

where the i-th entry is the probability that the jammer chooses the i-th action from its action space. The N jammers cooperate with each other to attack the communication between S and D as much as possible, so we define the mixed strategies for the jamming attack as

which consists of the mixed strategies of the N jammers.

If a pair of mixed strategies constitutes a Nash equilibrium, the mathematical expression for the Nash equilibrium is as follows

However, it is impossible to derive the optimal mixed strategies of S when the information of the jammers is unavailable. For this case, we can use learning methods to find the mixed strategies of S to maximize the utility without being aware of the opponent’s action spaces.
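For reference, the Nash equilibrium condition mentioned above is conventionally written as below. The symbols are assumptions introduced for illustration, since the paper's own notation is not reproduced here: $\pi_S$ and $\pi_J$ denote the mixed strategies of S and of the cooperating jammers, $U_S$ and $U_J$ denote their expected utilities, and an asterisk marks an equilibrium strategy.

```latex
\begin{aligned}
U_S(\pi_S^{*}, \pi_J^{*}) &\ge U_S(\pi_S, \pi_J^{*}) \quad \forall\, \pi_S, \\
U_J(\pi_S^{*}, \pi_J^{*}) &\ge U_J(\pi_S^{*}, \pi_J) \quad \forall\, \pi_J .
\end{aligned}
```

In words, neither S nor the jammers can increase their expected utility by unilaterally deviating from the equilibrium strategy.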

A hierarchical learning method has been proposed in [22] to obtain the mixed strategies on the power control for a legitimate transmitter and a single jammer. Inspired by that work, we propose an HL-based scheme to obtain the mixed strategies of the players by learning a Q-function for each action and exploiting each participant’s own utility feedback.

An agent is designed at S to learn a Q-function for each action and then obtain its mixed strategy. For the jammers, a single agent could be designed to learn the joint strategy and instruct all jammers to cooperate in the electronic countermeasures. However, such a centralized learning algorithm results in a large action space and a large information overhead, which is challenging and complicated in a real wireless environment. Therefore, we set up a local agent for each jammer so that each jammer has its own strategy, and cooperation among the jammers is achieved through a common feedback.

In the proposed HL-based scheme, we first use the Q-learning algorithm to obtain the Q-function of the agent’s actions, which evaluates the importance of each action, and then obtain the mixed strategy from the acquired Q-function. The detailed procedure of the algorithm is as follows.

We create agents for S and each jammer. In order to learn the Q-functions of S and of each of the N jammers, every agent has a Q-function table that records the Q-values of all its possible actions. Meanwhile, the agent of S has a mixed strategy, and the agents of all jammers also have their own mixed strategies. Both the strategies and the Q-functions of S and the jammers are indexed by the time slot k. In the k-th time slot, S chooses its action according to its current strategy, i.e., the probability distribution over the actions of S. Each jammer also chooses an action according to its own current strategy. Then, D feeds back the received SINR by broadcasting, which allows S to calculate its reward in this time slot. Meanwhile, the jammers also obtain the SINR from the broadcast. They share their powers with each other so that they can obtain a common reward. By using the SINR and the shared information about jamming powers, each jammer can calculate this reward independently. The definition and calculation of the rewards are the same as in Section 3.1.

After this, the agent of

S updates its Q-function

to

by the following equation

where

is the learning rate.

Subsequently,

S updates its mixed strategy to

for the next time slot by the equation as follows

where

is a parameter to balance exploration and exploitation.

Similarly, the agents of jammers update their Q-function as follows

and the mixed strategies of jammers are updated by the equation as follows

where

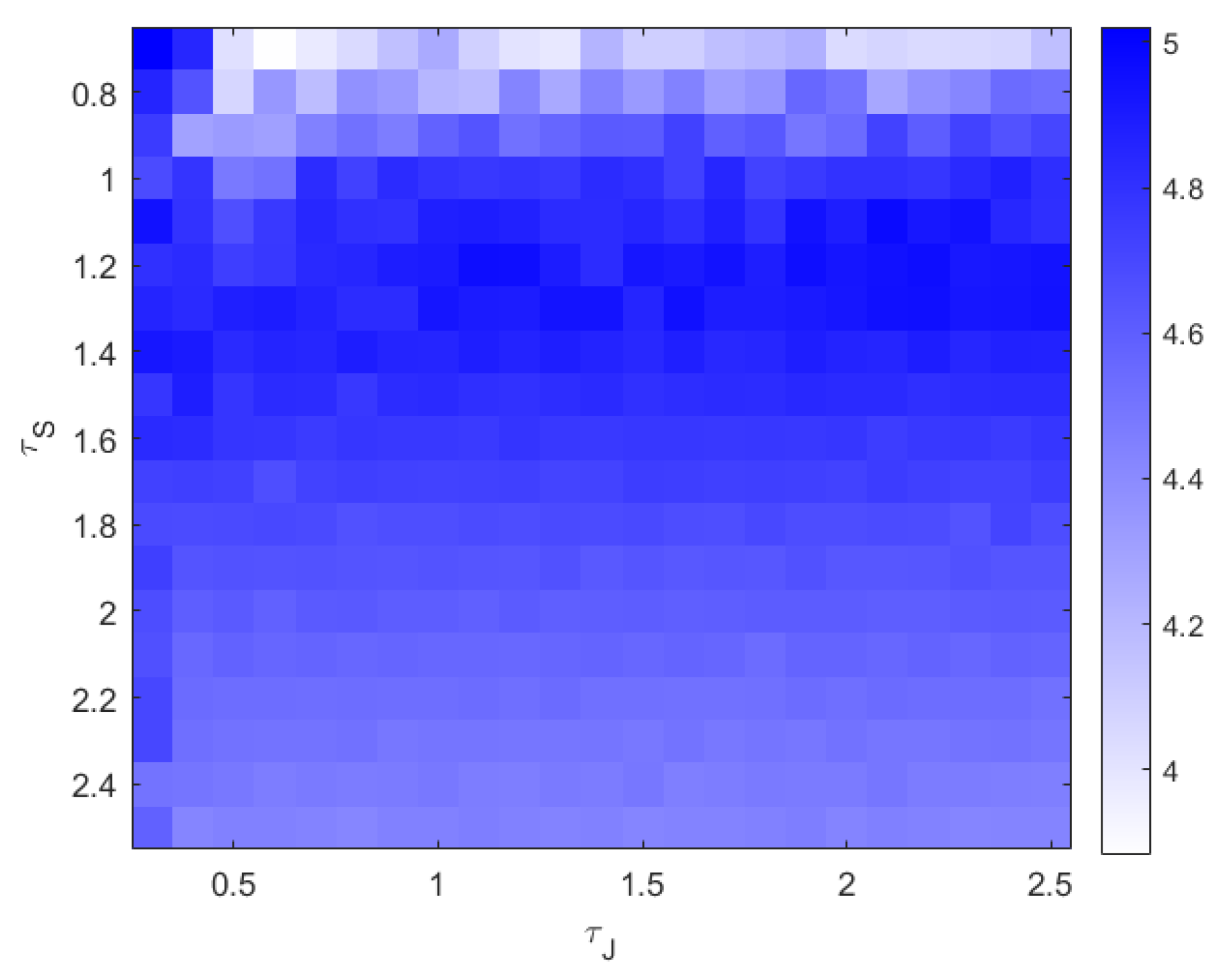

is a parameter to balance exploration and exploitation. The parameters

and

are important and can influence the performance of the HL-based scheme. Therefore, we will discuss the influence of them in detail in the simulation section.

The process of the proposed HL-based scheme is shown in Algorithm 2.

| Algorithm 2 The pseudocode of the HL-based scheme |
|---|
| Set up Q-functions for S and each of the N jammers |
| Set up mixed strategies for S and each jammer, respectively |
| Initialize the Q-values for all actions of S and each jammer |
| Initialize the mixed strategies so that every action can be chosen with equal probability |
| Repeat |
| S chooses an action by its mixed strategy in the k-th time slot |
| Each jammer chooses an action by its mixed strategy in the k-th time slot |
| S calculates its reward according to the feedback SINR of D |
| Each jammer calculates the common reward according to the feedback SINR of D as well as the jamming powers shared among the jammers |
| S and each jammer update their Q-functions via (16) and (18), respectively |
| S and each jammer update their mixed strategies via (17) and (19), respectively |
| Until convergence |
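One iteration of the loop in Algorithm 2 can be sketched for a single agent as follows. Equations (16) to (19) are not reproduced here, so this sketch makes two loudly stated assumptions: the Q-value of the chosen action is updated by a stateless exponential average of the received reward, and the mixed strategy is a Boltzmann (softmax) distribution over the Q-values, with the exploration and exploitation parameter playing the role of a temperature. All function names are hypothetical.

```python
import math
import random

def update_q(q_table, action, reward, alpha):
    """Assumed Q update: move the chosen action's value toward the reward.

    alpha is the learning rate; other actions keep their values.
    """
    q_table[action] = (1.0 - alpha) * q_table[action] + alpha * reward

def boltzmann_strategy(q_table, temperature):
    """Assumed strategy update: softmax of the Q-values.

    A large temperature gives a near-uniform strategy (exploration);
    a small one concentrates probability on the best action.
    """
    m = max(q_table)  # subtract the maximum for numerical stability
    weights = [math.exp((q - m) / temperature) for q in q_table]
    total = sum(weights)
    return [w / total for w in weights]

def sample_action(strategy, rng=random):
    """Draw an action index according to the mixed strategy."""
    return rng.choices(range(len(strategy)), weights=strategy, k=1)[0]
```

With all Q-values initialized to the same number, `boltzmann_strategy` returns the uniform distribution, which matches the equal-probability initialization in Algorithm 2. Each jammer would run the same three steps with the common jammer reward.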