Revisiting Hard Negative Mining in Contrastive Learning for Visual Understanding

Abstract

1. Introduction

- We define a metric for the penalty strength of negatives, which provides a quantitative analysis tool for hard negative mining (HNM).

- We find that the penalty strength of hard negatives and the difficulty of model optimization conflict with each other, so the design of a loss function needs to balance the two.

- Experiments on two visual understanding tasks with different data modalities, Image–Text Retrieval (ITR) and Temporal Action Localization (TAL), verify that T-PSC accelerates model training and improves the performance of current visual understanding models. T-PSC can be applied to existing ITR and TAL models in a plug-and-play manner without any changes to the models.

2. Related Work

2.1. Contrastive Learning

2.2. Image–Text Retrieval

2.3. Temporal Action Localization

3. Methodology

3.1. Preliminaries

3.1.1. Contrastive Learning

3.1.2. Hardness of Negatives

3.1.3. Triplet Loss

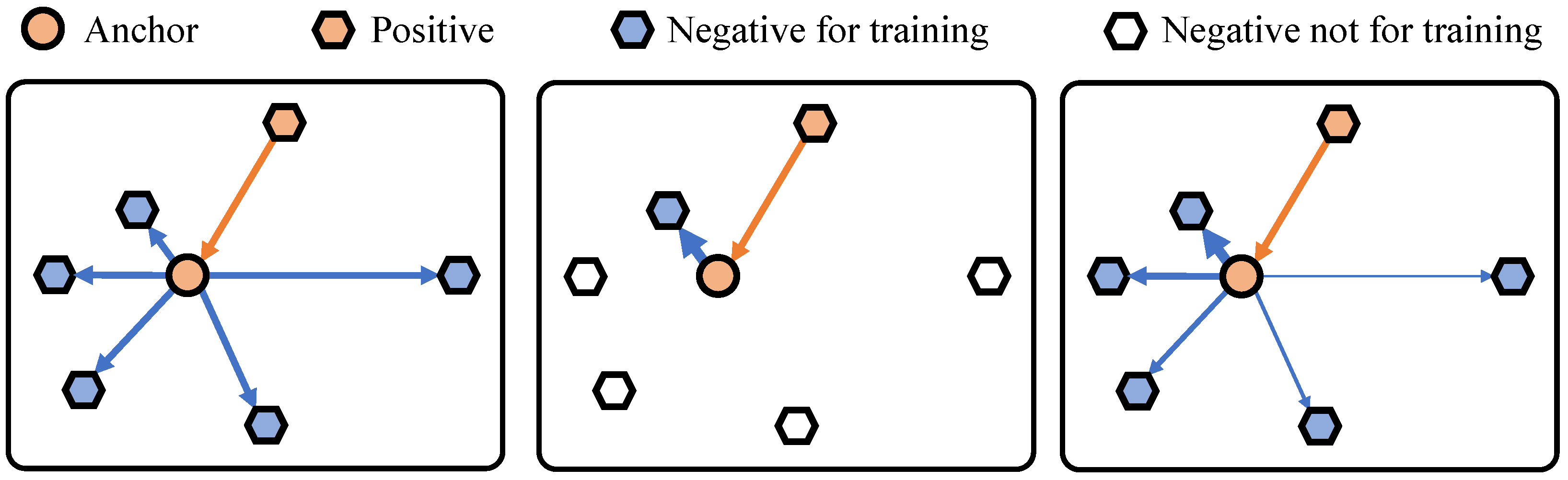

3.1.4. Hard Negative Mining

3.2. Metric for the Penalty Strength of Negatives

3.3. Model Optimization Difficulty

3.4. Penalty Strength Control

4. Experiments

4.1. Datasets and Experiment Settings

- Image–Text Retrieval (ITR). Our method is evaluated on two benchmarks, Flickr30K [60] and MS-COCO [61]. Flickr30K contains 31,000 images, each annotated with five sentences; we use 29,000 images for training, 1000 for validation, and 1000 for testing. MS-COCO contains 123,287 images, each annotated with five sentences; we use 113,287 images for training, 5000 for validation, and 5000 for testing. For the performance evaluation of ITR, we use Recall@K (R@K) and Average Recall@K (Avg.) as the evaluation metrics; the result tables report K = 1, 5, and 10. R@K is the percentage of queries for which the model returns the correct item in its top K results, and Avg. is the mean of the R@K values.

- Temporal Action Localization (TAL). Our method is evaluated on two benchmarks, THUMOS14 [62] and ActivityNet-1.3 [63]. THUMOS14 consists of 200 validation videos and 213 test videos for TAL. Without loss of generality, we train on the validation subset and evaluate the model performance on the test subset [64]. ActivityNet-1.3 contains 10,024 videos and 15,410 action instances for training, 4926 videos and 7654 action instances for validation, and 5044 videos for testing. Following the standard practice [65], we train our method on the training subset and test it on the validation subset.

- Experiment Settings. For T-PSC, the same hyperparameter settings are used for all the datasets in this paper.
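
The R@K and Avg. metrics described for ITR above can be illustrated with a minimal sketch. It assumes a query-by-candidate similarity matrix and, for each query, a set of correct candidate indices. The function names `recall_at_k` and `average_recall`, the toy data, and the rule that a query counts as a hit when any one of its correct items appears in the top K are assumptions made for illustration, not details taken from the paper's implementation.

```python
from typing import Sequence, Set


def recall_at_k(sim: Sequence[Sequence[float]],
                gt: Sequence[Set[int]],
                k: int) -> float:
    """Percentage of queries with at least one correct item in the top k.

    sim[q][c] is the similarity between query q and candidate c;
    gt[q] is the set of candidate indices that are correct for query q.
    """
    hits = 0
    for scores, correct in zip(sim, gt):
        # Rank candidate indices by descending similarity and keep the top k.
        ranked = sorted(range(len(scores)), key=lambda c: scores[c], reverse=True)
        if correct.intersection(ranked[:k]):
            hits += 1
    return 100.0 * hits / len(sim)


def average_recall(sim, gt, ks=(1, 5, 10)) -> float:
    """Mean of R@K over the given cut-offs (the 'Avg.' column)."""
    return sum(recall_at_k(sim, gt, k) for k in ks) / len(ks)


# Toy example (hypothetical data): 3 queries, 4 candidates.
sim = [
    [0.9, 0.1, 0.3, 0.2],  # correct candidate 0 is ranked 1st
    [0.8, 0.4, 0.6, 0.1],  # correct candidate 1 is ranked 3rd
    [0.2, 0.7, 0.6, 0.5],  # correct candidate 2 is ranked 2nd
]
gt = [{0}, {1}, {2}]

for k in (1, 2, 3):
    print(f"R@{k} = {recall_at_k(sim, gt, k):.1f}")
print(f"Avg. = {average_recall(sim, gt, ks=(1, 2, 3)):.1f}")
```

The same averaging can be checked against the reported numbers: the Image-to-Text values 56.3, 85.1, and 91.4 in the first row of the loss-comparison table average to 77.6, which matches that row's Avg. entry.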

4.2. Ablation Studies

4.2.1. Impact of Hyperparameters

4.2.2. Impact of Loss Functions

4.2.3. Comparisons with Existing Loss Functions

4.3. Comparisons with Existing ITR and TAL Models

4.3.1. Improvements to Existing ITR Models

4.3.2. Improvements to Existing TAL Models

4.4. Convergence Analysis

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

1. Schroff, F.; Kalenichenko, D.; Philbin, J. FaceNet: A Unified Embedding for Face Recognition and Clustering. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, 7–12 June 2015; pp. 815–823.

2. Khosla, P.; Teterwak, P.; Wang, C.; Sarna, A.; Tian, Y.; Isola, P.; Maschinot, A.; Liu, C.; Krishnan, D. Supervised contrastive learning. In Proceedings of the Advances in Neural Information Processing Systems, Virtual, 6–12 December 2020; Volume 33, pp. 18661–18673.

3. Luo, D.; Liu, C.; Zhou, Y.; Yang, D.; Ma, C.; Ye, Q.; Wang, W. Video cloze procedure for self-supervised spatio-temporal learning. In Proceedings of the AAAI Conference on Artificial Intelligence, New York, NY, USA, 7–12 February 2020; Volume 34, pp. 11701–11708.

4. He, K.; Fan, H.; Wu, Y.; Xie, S.; Girshick, R. Momentum contrast for unsupervised visual representation learning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Virtual, 14–19 June 2020; pp. 9729–9738.

5. Fang, Z.; Wang, J.; Wang, L.; Zhang, L.; Yang, Y.; Liu, Z. Seed: Self-supervised distillation for visual representation. arXiv 2021, arXiv:2101.04731.

6. Xiang, N.; Chen, L.; Liang, L.; Rao, X.; Gong, Z. Semantic-Enhanced Cross-Modal Fusion for Improved Unsupervised Image Captioning. Electronics 2023, 12, 3549.

7. Xu, T.; Liu, X.; Huang, Z.; Guo, D.; Hong, R.; Wang, M. Early-Learning Regularized Contrastive Learning for Cross-Modal Retrieval with Noisy Labels. In Proceedings of the 30th ACM International Conference on Multimedia, Seattle, WA, USA, 12–16 October 2022; pp. 629–637.

8. Qian, S.; Xue, D.; Fang, Q.; Xu, C. Integrating Multi-Label Contrastive Learning with Dual Adversarial Graph Neural Networks for Cross-Modal Retrieval. IEEE Trans. Pattern Anal. Mach. Intell. 2023, 45, 4794–4811.

9. Liu, Y.; Wu, J.; Qu, L.; Gan, T.; Yin, J.; Nie, L. Self-Supervised Correlation Learning for Cross-Modal Retrieval. IEEE Trans. Multimed. 2023, 25, 2851–2863.

10. Park, J.; Lee, J.; Kim, I.J.; Sohn, K. Probabilistic Representations for Video Contrastive Learning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), New Orleans, LA, USA, 19–24 June 2022; pp. 14711–14721.

11. Wang, X.; Zhao, K.; Zhang, R.; Ding, S.; Wang, Y.; Shen, W. ContrastMask: Contrastive Learning To Segment Every Thing. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), New Orleans, LA, USA, 19–24 June 2022; pp. 11604–11613.

12. Mohamed, M. Empowering deep learning based organizational decision making: A Survey. Sustain. Mach. Intell. J. 2023, 3.

13. Mohamed, M. Agricultural Sustainability in the Age of Deep Learning: Current Trends, Challenges, and Future Trajectories. Sustain. Mach. Intell. J. 2023, 4.

14. Sleem, A. Empowering Smart Farming with Machine Intelligence: An Approach for Plant Leaf Disease Recognition. Sustain. Mach. Intell. J. 2022, 1.

15. Yang, C.; An, Z.; Cai, L.; Xu, Y. Mutual Contrastive Learning for Visual Representation Learning. In Proceedings of the AAAI Conference on Artificial Intelligence, Virtual, 22 February–1 March 2022; Volume 36, pp. 3045–3053.

16. Lee, K.H.; Chen, X.; Hua, G.; Hu, H.; He, X. Stacked cross attention for image-text matching. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2018; pp. 201–216.

17. Li, K.; Zhang, Y.; Li, K.; Li, Y.; Fu, Y. Visual semantic reasoning for image-text matching. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Republic of Korea, 27 October–2 November 2019; pp. 4654–4662.

18. Liu, C.; Mao, Z.; Zhang, T.; Xie, H.; Wang, B.; Zhang, Y. Graph structured network for image-text matching. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Virtual, 14–19 June 2020; pp. 10921–10930.

19. Zhang, K.; Mao, Z.; Wang, Q.; Zhang, Y. Negative-Aware Attention Framework for Image-Text Matching. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), New Orleans, LA, USA, 19–24 June 2022; pp. 15661–15670.

20. Hermans, A.; Beyer, L.; Leibe, B. In defense of the triplet loss for person re-identification. arXiv 2017, arXiv:1703.07737.

21. Xuan, H.; Stylianou, A.; Liu, X.; Pless, R. Hard negative examples are hard, but useful. In Proceedings of the European Conference on Computer Vision (ECCV), Online, 23–28 August 2020; pp. 126–142.

22. Faghri, F.; Fleet, D.J.; Kiros, J.R.; Fidler, S. Vse++: Improving visual-semantic embeddings with hard negatives. arXiv 2017, arXiv:1707.05612.

23. Oh Song, H.; Xiang, Y.; Jegelka, S.; Savarese, S. Deep metric learning via lifted structured feature embedding. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 26 June–1 July 2016; pp. 4004–4012.

24. Hadsell, R.; Chopra, S.; LeCun, Y. Dimensionality Reduction by Learning an Invariant Mapping. In Proceedings of the 2006 IEEE Computer Society Conference on Computer Vision and Pattern Recognition (CVPR’06), New York, NY, USA, 17–22 June 2006; Volume 2, pp. 1735–1742.

25. Chen, T.; Kornblith, S.; Norouzi, M.; Hinton, G. A simple framework for contrastive learning of visual representations. In Proceedings of the International Conference on Machine Learning, PMLR, Virtual, 13–18 July 2020; pp. 1597–1607.

26. Chen, X.; Fan, H.; Girshick, R.; He, K. Improved baselines with momentum contrastive learning. arXiv 2020, arXiv:2003.04297.

27. Reiss, T.; Hoshen, Y. Mean-shifted contrastive loss for anomaly detection. In Proceedings of the AAAI Conference on Artificial Intelligence, Washington, DC, USA, 7–14 February 2023; Volume 37, pp. 2155–2162.

28. Bai, Y.; Wang, A.; Kortylewski, A.; Yuille, A. CoKe: Contrastive Learning for Robust Keypoint Detection. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision (WACV), Waikoloa, HI, USA, 3–7 January 2023; pp. 65–74.

29. Fan, R.; Poggi, M.; Mattoccia, S. Contrastive Learning for Depth Prediction. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) Workshops, Vancouver, BC, Canada, 18–22 June 2023; pp. 3225–3236.

30. Chen, H.; Ding, G.; Liu, X.; Lin, Z.; Liu, J.; Han, J. Imram: Iterative matching with recurrent attention memory for cross-modal image-text retrieval. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Virtual, 14–19 June 2020; pp. 12655–12663.

31. Lan, H.; Zhang, P. Learning and Integrating Multi-Level Matching Features for Image-Text Retrieval. IEEE Signal Process. Lett. 2021, 29, 374–378.

32. Li, S.; Lu, A.; Huang, Y.; Li, C.; Wang, L. Joint Token and Feature Alignment Framework for Text-Based Person Search. IEEE Signal Process. Lett. 2022, 29, 2238–2242.

33. Wang, H.; Zhang, Y.; Ji, Z.; Pang, Y.; Ma, L. Consensus-aware visual-semantic embedding for image-text matching. In Proceedings of the European Conference on Computer Vision (ECCV), Online, 23–28 August 2020; pp. 18–34.

34. Chen, J.; Hu, H.; Wu, H.; Jiang, Y.; Wang, C. Learning the Best Pooling Strategy for Visual Semantic Embedding. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Virtual, 19–25 June 2021; pp. 15789–15798.

35. Zhang, Q.; Lei, Z.; Zhang, Z.; Li, S.Z. Context-Aware Attention Network for Image-Text Retrieval. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Virtual, 14–19 June 2020; pp. 3536–3545.

36. Frome, A.; Corrado, G.S.; Shlens, J.; Bengio, S.; Dean, J.; Ranzato, M.; Mikolov, T. Devise: A deep visual-semantic embedding model. In Proceedings of the Advances in Neural Information Processing Systems (NIPS), Lake Tahoe, NV, USA, 5–10 December 2013; pp. 2121–2129.

37. Lin, T.; Zhao, X.; Shou, Z. Single shot temporal action detection. In Proceedings of the 25th ACM International Conference on Multimedia, New York, NY, USA, 23–27 October 2017; pp. 988–996.

38. Liu, W.; Anguelov, D.; Erhan, D.; Szegedy, C.; Reed, S.; Fu, C.Y.; Berg, A.C. SSD: Single Shot MultiBox Detector. In Proceedings of the 2016 European Conference on Computer Vision (ECCV), Amsterdam, The Netherlands, 11–14 October 2016; pp. 21–37.

39. Redmon, J.; Farhadi, A. YOLO9000: Better, faster, stronger. In Proceedings of the 2017 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 June 2017; pp. 7263–7271.

40. Long, F.; Yao, T.; Qiu, Z.; Tian, X.; Luo, J.; Mei, T. Gaussian temporal awareness networks for action localization. In Proceedings of the 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 16–20 June 2019; pp. 344–353.

41. Liu, Y.; Ma, L.; Zhang, Y.; Liu, W.; Chang, S.F. Multi-Granularity Generator for Temporal Action Proposal. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 16–20 June 2019; pp. 3604–3613.

42. Zhang, C.L.; Wu, J.; Li, Y. Actionformer: Localizing moments of actions with transformers. In Proceedings of the 2022 European Conference on Computer Vision (ECCV), Tel Aviv, Israel, 23–27 October 2022; pp. 492–510.

43. Shi, D.; Zhong, Y.; Cao, Q.; Ma, L.; Li, J.; Tao, D. TriDet: Temporal Action Detection with Relative Boundary Modeling. In Proceedings of the 2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Vancouver, BC, Canada, 18–22 June 2023; pp. 18857–18866.

44. Lin, T.; Zhao, X.; Su, H.; Wang, C.; Yang, M. Bsn: Boundary sensitive network for temporal action proposal generation. In Proceedings of the 2018 European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2018; pp. 3–19.

45. Lin, T.; Liu, X.; Li, X.; Ding, E.; Wen, S. BMN: Boundary-Matching Network for Temporal Action Proposal Generation. In Proceedings of the 2019 IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Republic of Korea, 27 October–2 November 2019; pp. 3888–3897.

46. Zeng, R.; Huang, W.; Tan, M.; Rong, Y.; Zhao, P.; Huang, J.; Gan, C. Graph Convolutional Networks for Temporal Action Localization. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Republic of Korea, 27 October–2 November 2019; pp. 7094–7103.

47. Zhu, Z.; Tang, W.; Wang, L.; Zheng, N.; Hua, G. Enriching Local and Global Contexts for Temporal Action Localization. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Virtual, 11–17 October 2021; pp. 13516–13525.

48. Chen, S.; Zhao, Y.; Jin, Q.; Wu, Q. Fine-grained video-text retrieval with hierarchical graph reasoning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Virtual, 14–19 June 2020; pp. 10638–10647.

49. Croitoru, I.; Bogolin, S.V.; Leordeanu, M.; Jin, H.; Zisserman, A.; Albanie, S.; Liu, Y. TeachText: CrossModal Generalized Distillation for Text-Video Retrieval. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Virtual, 11–17 October 2021; pp. 11583–11593.

50. Bueno-Benito, E.; Vecino, B.T.; Dimiccoli, M. Leveraging Triplet Loss for Unsupervised Action Segmentation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) Workshops, Vancouver, BC, Canada, 18–22 June 2023; pp. 4921–4929.

51. Tang, Z.; Huang, J. Harmonious Multi-Branch Network for Person Re-Identification with Harder Triplet Loss. ACM Trans. Multimed. Comput. Commun. Appl. 2022, 18, 1–21.

52. Tian, M.; Wu, X.; Jia, Y. Adaptive Latent Graph Representation Learning for Image-Text Matching. IEEE Trans. Image Process. 2023, 32, 471–482.

53. Zhang, K.; Mao, Z.; Liu, A.A.; Zhang, Y. Unified Adaptive Relevance Distinguishable Attention Network for Image-Text Matching. IEEE Trans. Multimed. 2023, 25, 1320–1332.

54. Liu, Y.; Liu, H.; Wang, H.; Liu, M. Regularizing Visual Semantic Embedding With Contrastive Learning for Image-Text Matching. IEEE Signal Process. Lett. 2022, 29, 1332–1336.

55. Zhang, H.; Mao, Z.; Zhang, K.; Zhang, Y. Show your faith: Cross-modal confidence-aware network for image-text matching. In Proceedings of the AAAI Conference on Artificial Intelligence (AAAI), Virtual, 22 February–1 March 2022; Volume 36, pp. 3262–3270.

56. Wang, Y.; Yang, H.; Qian, X.; Ma, L.; Lu, J.; Li, B.; Fan, X. Position focused attention network for image-text matching. In Proceedings of the 28th International Joint Conference on Artificial Intelligence (IJCAI), Macao, China, 10–16 August 2019; pp. 3792–3798.

57. Li, Z.; Guo, C.; Feng, Z.; Hwang, J.N.; Xue, X. Multi-View Visual Semantic Embedding. In Proceedings of the Thirty-First International Joint Conference on Artificial Intelligence (IJCAI), Vienna, Austria, 23–29 July 2022; pp. 1130–1136.

58. van den Oord, A.; Li, Y.; Vinyals, O. Representation learning with contrastive predictive coding. arXiv 2018, arXiv:1807.03748.

59. Radford, A.; Kim, J.W.; Hallacy, C.; Ramesh, A.; Goh, G.; Agarwal, S.; Sastry, G.; Askell, A.; Mishkin, P.; Clark, J.; et al. Learning Transferable Visual Models From Natural Language Supervision. In Proceedings of the 38th International Conference on Machine Learning, PMLR, Virtual, 6–7 August 2021; pp. 8748–8763.

60. Young, P.; Lai, A.; Hodosh, M.; Hockenmaier, J. From image descriptions to visual denotations: New similarity metrics for semantic inference over event descriptions. Trans. Assoc. Comput. Linguist. 2014, 2, 67–78.

61. Lin, T.Y.; Maire, M.; Belongie, S.; Hays, J.; Perona, P.; Ramanan, D.; Dollár, P.; Zitnick, C.L. Microsoft coco: Common objects in context. In Proceedings of the European Conference on Computer Vision (ECCV), Zurich, Switzerland, 6–12 September 2014; pp. 740–755.

62. Idrees, H.; Zamir, A.R.; Jiang, Y.G.; Gorban, A.; Laptev, I.; Sukthankar, R.; Shah, M. The THUMOS challenge on action recognition for videos “in the wild”. Comput. Vis. Image Underst. 2017, 155, 1–23.

63. Caba Heilbron, F.; Escorcia, V.; Ghanem, B.; Carlos Niebles, J. ActivityNet: A Large-Scale Video Benchmark for Human Activity Understanding. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, 7–12 June 2015; pp. 961–970.

64. Zeng, R.; Huang, W.; Tan, M.; Rong, Y.; Zhao, P.; Huang, J.; Gan, C. Graph Convolutional Module for Temporal Action Localization in Videos. IEEE Trans. Pattern Anal. Mach. Intell. 2022, 44, 6209–6223.

65. Liu, X.; Hu, Y.; Bai, S.; Ding, F.; Bai, X.; Torr, P.H. Multi-shot temporal event localization: A benchmark. In Proceedings of the 2021 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Virtual, 19–25 June 2021; pp. 12596–12606.

66. Wei, J.; Xu, X.; Yang, Y.; Ji, Y.; Wang, Z.; Shen, H.T. Universal Weighting Metric Learning for Cross-Modal Matching. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Virtual, 14–19 June 2020; pp. 13005–13014.

67. Wei, J.; Xu, X.; Wang, Z.; Wang, G. Meta Self-Paced Learning for Cross-Modal Matching. In Proceedings of the 29th ACM International Conference on Multimedia (ACM MM), Chengdu, China, 20–24 October 2021; pp. 3835–3843.

68. Chen, T.; Deng, J.; Luo, J. Adaptive Offline Quintuplet Loss for Image-Text Matching. In Proceedings of the European Conference on Computer Vision (ECCV), Online, 23–28 August 2020; pp. 549–565.

69. Huang, Z.; Niu, G.; Liu, X.; Ding, W.; Xiao, X.; Wu, H.; Peng, X. Learning with Noisy Correspondence for Cross-modal Matching. In Proceedings of the Advances in Neural Information Processing Systems, Virtual, 6–14 December 2021; Volume 34, pp. 29406–29419.

70. Liu, C.; Mao, Z.; Liu, A.A.; Zhang, T.; Wang, B.; Zhang, Y. Focus your attention: A bidirectional focal attention network for image-text matching. In Proceedings of the 27th ACM International Conference on Multimedia (ACM MM), Nice, France, 21–25 October 2019; pp. 3–11.

71. Diao, H.; Zhang, Y.; Ma, L.; Lu, H. Similarity Reasoning and Filtration for Image-Text Matching. In Proceedings of the AAAI Conference on Artificial Intelligence, Virtual, 4–6 November 2021; Volume 35, pp. 1218–1226.

72. Zhang, H.; Feng, C.; Yang, J.; Li, Z.; Guo, C. Boundary-Aware Proposal Generation Method for Temporal Action Localization. arXiv 2023, arXiv:2309.13810.

73. Xu, M.; Zhao, C.; Rojas, D.S.; Thabet, A.; Ghanem, B. G-TAD: Sub-Graph Localization for Temporal Action Detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Virtual, 14–19 June 2020.

74. Yang, L.; Peng, H.; Zhang, D.; Fu, J.; Han, J. Revisiting Anchor Mechanisms for Temporal Action Localization. IEEE Trans. Image Process. 2020, 29, 8535–8548.

75. Zhao, C.; Thabet, A.K.; Ghanem, B. Video Self-Stitching Graph Network for Temporal Action Localization. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Virtual, 11–17 October 2021; pp. 13658–13667.

76. Lin, C.; Xu, C.; Luo, D.; Wang, Y.; Tai, Y.; Wang, C.; Li, J.; Huang, F.; Fu, Y. Learning Salient Boundary Feature for Anchor-free Temporal Action Localization. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Virtual, 19–25 June 2021; pp. 3320–3329.

77. Liu, X.; Wang, Q.; Hu, Y.; Tang, X.; Zhang, S.; Bai, S.; Bai, X. End-to-End Temporal Action Detection with Transformer. IEEE Trans. Image Process. 2022, 31, 5427–5441.

| Eval Task | Image-to-Text (%) | | | | Text-to-Image (%) | | | |
|---|---|---|---|---|---|---|---|---|
| Loss | R@1 | R@5 | R@10 | Avg. | R@1 | R@5 | R@10 | Avg. |
| | 56.3 | 85.1 | 91.4 | 77.6 | 43.4 | 72.9 | 82.4 | 66.2 |
| | 64.0 | 88.8 | 94.6 | 82.5 | 47.0 | 76.0 | 83.9 | 69.0 |
| | 61.4 | 88.4 | 93.9 | 81.2 | 44.6 | 74.5 | 83.5 | 67.5 |
| | 66.5 | 90.0 | 94.0 | 83.5 | 48.4 | 76.9 | 84.6 | 70.0 |

| Eval Task | Image-to-Text (%) | | | | Text-to-Image (%) | | | |
|---|---|---|---|---|---|---|---|---|
| Model | R@1 | R@5 | R@10 | Avg. | R@1 | R@5 | R@10 | Avg. |
| BFAN [70] | 68.1 | 91.4 | 95.9 | 85.1 | 50.8 | 78.4 | 85.8 | 71.7 |
| + SSP [66] | 71.3 | 92.6 | 96.2 | 86.7 | 52.5 | 79.5 | 86.6 | 72.9 |
| + AOQ [68] | 73.2 | 94.5 | 97.0 | 88.2 | 54.0 | 80.3 | 87.7 | 74.0 |
| + Meta-SPN [67] | 72.5 | 93.2 | 96.7 | 87.5 | 53.3 | 80.2 | 87.2 | 73.6 |
| + T-PSC | 74.3 | 93.8 | 96.7 | 88.3 | 54.5 | 80.8 | 87.5 | 74.3 |
| SGRAF [71] | 77.8 | 94.1 | 97.4 | 89.8 | 58.5 | 83.0 | 88.8 | 76.8 |
| + NCR [69] | 77.3 | 94.0 | 97.5 | 89.6 | 59.6 | 84.4 | 89.9 | 78.0 |
| + T-PSC | 78.3 | 95.0 | 97.4 | 90.2 | 60.4 | 85.0 | 90.6 | 78.7 |

| Eval Task | Image-to-Text (%) | | | | Text-to-Image (%) | | | |
|---|---|---|---|---|---|---|---|---|
| Model | R@1 | R@5 | R@10 | Avg. | R@1 | R@5 | R@10 | Avg. |
| SCAN [16] | 67.4 | 90.3 | 95.8 | 84.5 | 48.6 | 77.7 | 85.2 | 70.5 |
| VSRN [17] | 71.3 | 90.6 | 96.0 | 86.0 | 54.7 | 81.8 | 88.2 | 74.9 |
| VSE++ [22] | 69.4 | 90.7 | 95.4 | 85.2 | 52.1 | 79.0 | 85.5 | 72.2 |
| + T-PSC | 70.7 | 90.8 | 95.6 | 85.7 | 52.9 | 79.8 | 86.7 | 73.1 |
| BFAN [70] | 68.1 | 91.4 | 95.9 | 85.1 | 50.8 | 78.4 | 85.8 | 71.7 |
| + T-PSC | 74.3 | 93.8 | 96.7 | 88.3 | 54.5 | 80.8 | 87.5 | 74.3 |
| SGRAF [71] | 77.8 | 94.1 | 97.4 | 89.8 | 58.5 | 83.0 | 88.8 | 76.8 |
| + T-PSC | 78.3 | 95.0 | 97.4 | 90.2 | 60.4 | 85.0 | 90.6 | 78.7 |

| Eval Task | Image-to-Text (%) | | | | Text-to-Image (%) | | | |
|---|---|---|---|---|---|---|---|---|
| Model | R@1 | R@5 | R@10 | Avg. | R@1 | R@5 | R@10 | Avg. |
| SCAN [16] | 72.7 | 94.8 | 98.4 | 88.6 | 58.8 | 88.4 | 94.8 | 80.7 |
| VSRN [17] | 76.2 | 94.8 | 98.2 | 89.7 | 62.8 | 89.7 | 95.1 | 82.5 |
| VSE++ [22] | 73.0 | 94.5 | 98.2 | 88.6 | 58.3 | 88.1 | 94.4 | 80.3 |
| + T-PSC | 74.0 | 94.6 | 98.4 | 89.0 | 58.7 | 88.6 | 94.4 | 80.6 |
| BFAN [70] | 74.9 | 95.2 | 98.3 | 89.5 | 59.4 | 88.4 | 94.5 | 80.8 |
| + T-PSC | 76.2 | 95.8 | 98.7 | 90.2 | 60.7 | 88.6 | 94.7 | 81.3 |
| SGRAF [71] | 79.6 | 96.2 | 98.5 | 91.4 | 63.2 | 90.7 | 96.1 | 83.3 |
| + T-PSC | 79.9 | 97.0 | 98.8 | 91.9 | 65.1 | 90.8 | 96.0 | 84.0 |

| Model | THUMOS14 (%) | | | | | |
|---|---|---|---|---|---|---|
| | 0.3 | 0.4 | 0.5 | 0.6 | 0.7 | Avg. |
| BMN [45] | 56.00 | 47.40 | 38.80 | 29.70 | 20.50 | 38.50 |
| G-TAD [73] | 54.50 | 47.60 | 40.30 | 30.80 | 23.40 | 39.30 |
| A2Net [74] | 58.60 | 54.10 | 45.50 | 32.50 | 17.20 | 41.60 |
| VSGN [75] | 66.70 | 60.40 | 52.40 | 41.00 | 30.40 | 50.20 |
| ContextLoc [47] | 68.30 | 63.80 | 54.30 | 41.80 | 26.20 | 50.90 |
| AFSD [76] | 67.30 | 62.40 | 55.50 | 43.70 | 31.10 | 52.00 |
| TadTR [77] | 74.80 | 69.10 | 60.10 | 46.60 | 32.80 | 56.70 |
| Actionformer [42] | 81.96 | 77.41 | 71.08 | 58.91 | 43.74 | 66.62 |
| + BAPG [72] | 82.09 | 77.66 | 71.45 | 59.51 | 44.35 | 67.01 |
| + T-PSC | 82.19 | 77.70 | 71.48 | 59.62 | 44.65 | 67.13 |
| TriDet [43] | 83.53 | 79.60 | 72.12 | 60.76 | 45.40 | 68.28 |
| + BAPG [72] | 83.60 | 79.72 | 72.49 | 61.49 | 46.35 | 68.73 |
| + T-PSC | 83.72 | 79.81 | 72.52 | 61.56 | 46.45 | 68.81 |

| Model | ActivityNet v1.3 (%) | | | | |
|---|---|---|---|---|---|
| | 0.5 | 0.6 | 0.7 | 0.8 | Avg. |
| BMN [45] | 50.11 | 44.21 | 37.61 | 28.83 | 33.91 |
| G-TAD [73] | 50.42 | 43.98 | 38.13 | 29.42 | 34.13 |
| Actionformer [42] | 54.67 | 48.25 | 41.85 | 32.91 | 36.56 |
| + BAPG [72] | 54.80 | 48.38 | 41.97 | 32.93 | 36.61 |
| + T-PSC | 54.89 | 48.40 | 42.01 | 32.95 | 36.65 |
| TriDet [43] | 54.71 | 48.54 | 42.34 | 32.93 | 36.76 |
| + BAPG [72] | 54.85 | 48.58 | 42.50 | 33.15 | 36.83 |
| + T-PSC | 54.95 | 48.62 | 42.58 | 33.26 | 36.89 |


© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Zhang, H.; Li, Z.; Yang, J.; Wang, X.; Guo, C.; Feng, C. Revisiting Hard Negative Mining in Contrastive Learning for Visual Understanding. Electronics 2023, 12, 4884. https://doi.org/10.3390/electronics12234884
