2. Related Work

Uskov et al. recently analyzed three New Jersey school districts and showed that current public-school enrollments help predict future enrollments. The approach was implemented for the three districts to project enrollments three years into the future. Stochastic forecasting is commonly used for large geographic domains such as provinces or countries; it has not been widely used for small units such as individual schools. At the elementary level, gender, age, father’s income, and literacy rate significantly affect student performance. Improving the productivity of students entering school is an active topic. A primary aim of dropout research is to observe students and take precautions as early as possible by determining the reasons for dropping out. The model uses educational data mining methods. The research plan is divided into six stages: data collection, data integration, data pre-processing (e.g., cleaning, normalization, conversion), feature selection, pattern extraction, and model optimization and evaluation. Comparison results were obtained and discussed [22].

According to Adebayo and Chaubey, educational data analysis has gained acceptance as a research area over the past decade because of its ability to monitor student performance and predict future development. Many machine learning methods, especially supervised learning algorithms, have been used to develop accurate models that predict student characteristics and simulate their behavior [23].

Uskov et al. examined and evaluated the effectiveness of two wrapper methods for supervised learning algorithms in predicting student performance on the final exam. Preliminary numerical experiments show that the technique significantly improves classification accuracy by building reliable prediction models from a small amount of labeled data and a large amount of unlabeled data [22]. It was stated that the primary purpose of educational institutions is to provide pupils with a productive education. One way to attain the highest quality is to improve on the traditional classroom teaching model by discovering predictive knowledge about the students participating in the course [23].

According to Zhang, the study investigates predictability in the market and sets up a decision support framework that traders can use to turn suggested insights into forecasts of a stock’s future price direction, with significant potential for trading that stock. Predicting the outcome of sports matches fascinates many people, from viewers to punters. It is also an interesting research problem because of its complexity. The result of a sports match depends on several variables, such as a team’s (or a player’s) morale, abilities, and current score. So even for sports experts, the exact results of sports matches are difficult to predict [24].

One study used a machine learning approach, Artificial Neural Networks (ANNs), to predict one week of match results, applied specifically to the 2013–2014 football matches in the Iran Pro League (IPL). Data from past matches in the last seven leagues were used to make better predictions for upcoming games. The results showed a remarkable ability of neural networks to predict football match results [25].

Adebayo and Chaubey said that markets are unstable, and strategies that produce strong predictions on one platform can lead more traders to take the same action. Ideally, if such “concept drifts” recur, the trader should store a model for each market situation (or concept) and later apply the stored models to the incoming data they fit [23].

According to Cortez et al. [26], the future is unpredictable, so the concepts that may arise are unknown. Maintaining a model with only the most recent price data is not always the best option, because markets recur and old data can become useful again later. Short training times allow the updated classifier to keep up with high-frequency stock data, but they can cause a loss of performance because of insufficient training time. The model therefore adopts an alternative strategy for learning from streaming concepts, tracking each concept in the stream and building a model for it.

Similarly, the design accounts for these changes in the market by building a large number of conventional base classifiers (SVMs, Decision Trees, and Neural Networks), each covering specific (sector) stocks and trained on a random subset of past data, and by selecting the best of these classifiers. When the market changes, the base classifiers of the ensemble are adjusted to maintain a high level of model efficiency. Policies improve already established algorithms. This study also discusses specific issues related to learning with existing data resources, especially class imbalance, news releases, feature creation based on time of day (such as technical and sentiment analysis), dimensionality reduction, and model output. It addresses standard transactional methods, identifying unfair practices used in online exams, and identifying outliers in results, worksheets, student performance forecasts, etc. Knowledge is hidden in educational datasets and can be extracted using data mining techniques [21].

A classification task was used to evaluate student performance; of the many methods available for classifying data, the decision tree approach was used here. Through this task, knowledge that describes students’ academic performance in the final exam can be extracted. This helps identify dropouts and students who need special attention early and allows teachers to provide relevant advice and counseling [21].

The method predicts student performance from essential features, such as the age of the student, the school in which they study, place of residence, number of households, previous performance scores, and activities, and these features are used to verify the effectiveness of the model [27].

The proposed method was compared with other well-known classifiers. Studies of existing student data show that this method is suitable for evaluating student performance. Research and analysis of educational data, especially student performance, is fundamental. Learning Data Mining (LDM) is a research area that explores educational data to identify interesting patterns and knowledge in educational institutions [21]. The study of Soofi and Awan [27] also focuses on computational education, specifically exploring the factors that theoretically affect student performance in higher education and finding a qualitative model that classifies students by performance based on relevant personal and social factors.

Iqbal et al. evaluated the effectiveness of various university admissions criteria in Pakistan using a case study of the Information Technology University (ITU) in Lahore. They focused on the applicants’ academic standing for ITU’s Bachelor of Science in Computer Science program. They found that some of the admissions requirements correlated strongly with students’ overall academic performance. The results of the admissions test and the Higher Secondary School Certificate (HSSC) were the best indicators of academic success. Their main finding was that, when determining admission to a university, a candidate’s performance on the entrance exam and the HSSC should be given considerable weight [28].

According to Pal and Pal, taking the above studies together, it can be said with certainty that the skills and experience of managers play a significant role in assessing the performance of proposed projects, which confirms previous research [29].

Results show that the proposed algorithm can predict student dropouts within 4–6 weeks after the course, is trustworthy, and can be used in early warning systems. In the intellectual analysis of educational data, the central problem in discovering knowledge from data is determining representative data sets and constructing classification models based on students’ individual demographic and social backgrounds. The results show that the accuracy of the classification models generated by the Random Forest algorithm and the J48 algorithm exceeds 71% [21].

The programmer generates a continuous software response algorithm to adjust and improve the pattern using these input signals. The model is further enhanced with each new data set provided in the program to clearly distinguish between “humans” and “non-humans” [30].

There is no denying that machine learning can make people work more innovatively and reliably. Machine learning also allows us to read, save, and handle very complex or tedious records by machine, such as paper invoices, including their processing and editing. Machine learning helps to train the model on the data set before deployment. Some machine learning models are continuous and online. This iterative online modeling process leads to improvements in the associations found between data elements. Those patterns and associations could easily have been overlooked by human observation because of their complexity and size. After a model has been trained, it can be used continuously to learn from the data [27].

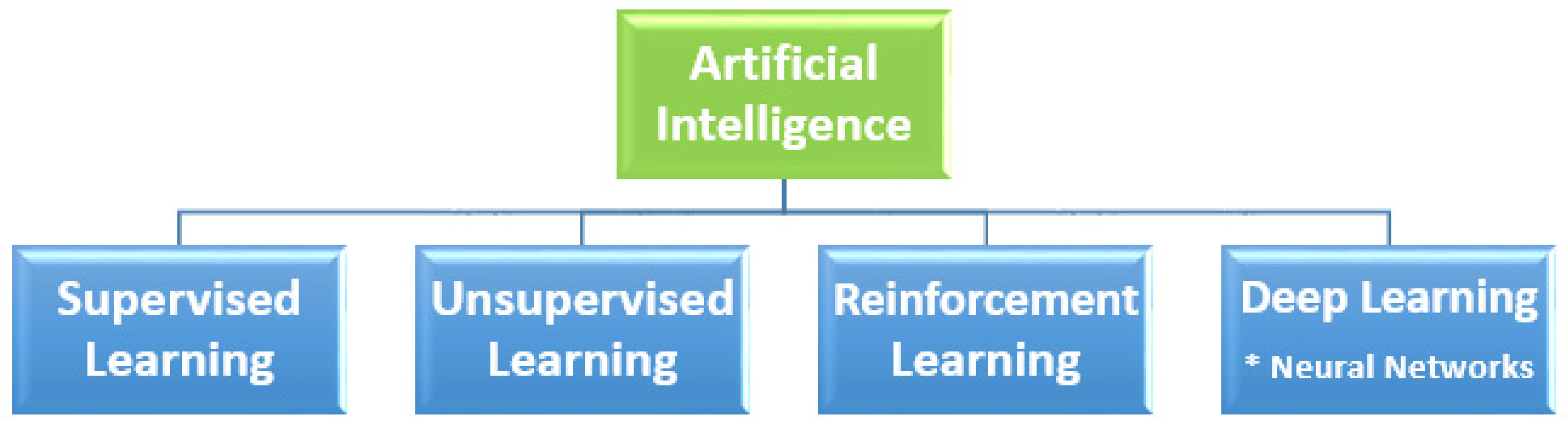

Machine learning techniques are important for building more accurate evaluation models. Different methods are used depending on the form and quality of the data and on the nature of the business problem. We discuss the types of machine learning. Supervised learning usually begins with a defined data set and an understanding of how it is analyzed. Supervised learning aims to identify patterns in the data that can be applied within the analytics framework [31].

The data used in Superby et al.’s research include features labeled to explain the meaning of the data. For example, one might develop a machine learning application that differentiates millions of species based on features such as images and written descriptions [12].

Unsupervised learning is used when the problem involves large amounts of unlabeled data; real-life applications such as Twitter, Instagram, and Snapchat all contain large amounts of unlabeled data. Understanding the meaning behind this data requires a method that groups the data by shared characteristics or clusters. Unsupervised learning follows an iterative process, analyzing data without human intervention [32].

This approach is used in email spam detection technology. Legitimate and spam messages have too many variables for an analyst to tag unsolicited bulk emails by hand. Instead, machine learning classifiers based on clustering are applied to identify spam emails [33].

Akinode and Bada investigated the impact of various pre-admission factors (WAEC grades, JAMB scores, etc.). Their study used the field survey method. A dataset of 560 students enrolled in various courses at a Federal Polytechnic in South-West Nigeria from 2017 to 2018 was used to validate the proposed methodology. Their research used machine learning methods to examine the impact of various factors on student enrollment. The analysis employed the decision tree algorithm (ID3) and support vector machine (SVM) techniques. The scikit-learn tool was used for pre-processing, processing, and experimenting. Results were obtained by comparing the ID3 decision tree algorithm with other ML algorithms such as Artificial Neural Networks, Logistic Regression, etc. The analysis reveals that the ID3 algorithm outperforms the other ML algorithms; the Decision Tree is the most accurate. This research is beneficial for enhancing student enrollment and for identifying below-target schools in time so that necessary action can be taken to improve their performance. Prediction and classification techniques were used to analyze students’ performance and dropout [34].
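As a rough illustration of how a decision tree learner such as ID3 chooses which feature to split on, the sketch below computes the information gain of two candidate features on a toy table. The records and the feature names (`exam_grade`, `entrance_score`, `admitted`) are hypothetical and are not taken from Akinode and Bada’s dataset; this is a minimal standard-library sketch, not the authors’ scikit-learn setup.

```python
from collections import Counter
from math import log2


def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    total = len(labels)
    return -sum((n / total) * log2(n / total) for n in Counter(labels).values())


def information_gain(rows, feature, target):
    """Entropy reduction obtained by splitting `rows` on `feature`."""
    base = entropy([r[target] for r in rows])
    groups = {}
    for r in rows:
        groups.setdefault(r[feature], []).append(r[target])
    # Weighted average entropy of the subsets produced by the split.
    remainder = sum(len(g) / len(rows) * entropy(g) for g in groups.values())
    return base - remainder


# Hypothetical toy records (assumption for illustration only).
students = [
    {"exam_grade": "high", "entrance_score": "high", "admitted": "yes"},
    {"exam_grade": "high", "entrance_score": "low", "admitted": "yes"},
    {"exam_grade": "low", "entrance_score": "high", "admitted": "no"},
    {"exam_grade": "low", "entrance_score": "low", "admitted": "no"},
]

gains = {
    f: information_gain(students, f, "admitted")
    for f in ("exam_grade", "entrance_score")
}
# ID3 would split first on the feature with the largest gain.
best_feature = max(gains, key=gains.get)
print(gains, best_feature)
```

In this toy table `exam_grade` separates the two classes perfectly, so it has the higher gain and would be chosen as the root split.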

Kim and Sunderman examined student achievement data from six states to see whether demographic differences existed between schools identified as requiring improvement and those that met the federal standards for adequate annual progress. Using school-level data from Virginia and California, they showed that using mean proficiency scores creates a selection bias and that requiring students in schools with high racial diversity to meet multiple performance goals contributes to these disparities. The authors suggested new approaches to creating accountability systems, including the use of multiple student achievement indicators, such as student growth in reading and math achievement tests and state accountability ratings of school performance [35].

Machine learning is a subfield of computer science that grew out of the study of pattern recognition and computational learning theory in the field of artificial intelligence [36].

According to the statistics, the most used data mining algorithms are ANN and Random Forest, while WEKA is gaining popularity as a tool for forecasting student achievement. Previous academic success and demographic traits are the most accurate predictors of a student’s potential. This study demonstrates that including extraneous features in a dataset reduces prediction accuracy and raises the model’s computing cost. This work paves the way for future researchers to employ a wide range of inputs and approaches to obtain highly accurate prediction results across a wide range of scenarios. The research also shows educational institutions how to use data mining techniques to increase their ability to foresee and improve student achievement through the quick provision of additional support services [37].

The authors used an ANN to assess candidates’ eligibility for admission to a university based on their O-level scores, CGPAs, departmental rankings, and other information. Positive results from performance analysis using the confusion matrix and the ROC AUC suggested effective prediction and provided an overall accuracy of 99% [38].
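For reference, the evaluation measures named above can be computed as in the following minimal sketch. The labels and scores are hypothetical, binary classes are assumed with 1 as the positive class, and the 0.5 decision threshold is an assumption; nothing here reproduces the cited study’s data or its reported accuracy.

```python
def confusion_matrix(y_true, y_pred):
    """Return (tp, fp, fn, tn) for binary labels where 1 is the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    return tp, fp, fn, tn


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    tp, fp, fn, tn = confusion_matrix(y_true, y_pred)
    return (tp + tn) / (tp + fp + fn + tn)


def roc_auc(y_true, scores):
    """AUC as the probability that a random positive outscores a random
    negative, counting ties as one half."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical admission labels and model scores (assumption for illustration).
y_true = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.4, 0.6, 0.3, 0.1]
y_pred = [1 if s >= 0.5 else 0 for s in scores]  # assumed threshold of 0.5

print(confusion_matrix(y_true, y_pred), accuracy(y_true, y_pred), roc_auc(y_true, scores))
```

Accuracy depends on the chosen threshold, whereas the AUC summarizes ranking quality across all thresholds, which is why the two are often reported together.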

The authors proposed a new machine learning-based approach to predict undergraduate students’ final exam grades using midterm exam results as the source data. The performance of the machine learning methods (random forests, nearest neighbor, support vector machines, logistic regression, Naive Bayes, and k-nearest neighbor) was calculated and compared in forecasting the students’ final exam marks. This research determines the most efficient machine learning algorithms and contributes to the early identification of students who are at high risk of failing [39,40,41,42].
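A nearest-neighbor classifier, one of the methods compared above, can be sketched in a few lines. The midterm scores, the pass/fail labels, and the choice of k = 3 are assumptions for illustration only and are not taken from the cited work.

```python
from collections import Counter


def knn_predict(train, query, k=3):
    """Majority label among the k training points closest to `query`.

    `train` is a list of (midterm_score, label) pairs.
    """
    neighbours = sorted(train, key=lambda item: abs(item[0] - query))[:k]
    votes = Counter(label for _, label in neighbours)
    return votes.most_common(1)[0][0]


# Hypothetical (midterm score, final outcome) pairs; not data from the study.
train = [
    (35, "fail"), (42, "fail"), (48, "fail"),
    (55, "pass"), (63, "pass"), (71, "pass"), (88, "pass"),
]

print(knn_predict(train, 40))
print(knn_predict(train, 75))
```

Using an odd k with two classes avoids tied votes; in practice k would be tuned on held-out data.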

Several classification methods, including decision trees, random forests, SVM classifiers, SGD classifiers, AdaBoost classifiers, and LR classifiers, were used to analyze the dataset. The results show that random forest outperforms the other methods (98%). Decision tree, Ada Boost, logistic regression, and SVM receive 90%, 89%, and 88%, respectively, whereas SGD, SVM, and SGD receive 84%. According to the research, technological factors have a significant influence on children’s academic achievement. Students who used social media daily performed worse than those who used it only occasionally on weekends. Additionally, the study assesses how various factors affect student results [43].