1. Introduction

In science, measurement is the process by which researchers obtain quantitative values for physical phenomena in order to understand them. As technology has moved from the electrical age into the electronic age, major changes have taken place in weaponry, communications, aerospace, and medical care. The signals to be tested have increasingly complex time-domain behavior and ever higher frequencies, and the requirements on measurement have risen accordingly. A trigger is the key to capturing a signal in a timely and accurate manner [1]. Triggering methods are divided into digital triggering and analog triggering. Compared with digital triggering, analog triggering has relatively large jitter when measuring high-speed signals because of the inconsistency of the noise source, and its accuracy is also limited by the performance of analog devices. As trigger accuracy requirements rise, digital triggers are increasingly becoming the first choice.

An oscilloscope is one of the most widely used time-domain measuring instruments, and the triggering function is key to its performance. It enables the oscilloscope to isolate specific signal events for detailed analysis and to display a repetitive waveform stably. Optical coherence tomography (OCT) is an imaging technique used in industrial and clinical applications [2]; it requires high trigger accuracy and variable sampling rates for data acquisition.

Most of the signals to be measured today are continuously changing analog quantities [3], but most of the information to be processed is digital. The analog signals must therefore be converted into digital ones, which requires analog-to-digital converters (ADCs). The interaction between the analog and digital worlds has long been a challenge [4]. ADCs act as a bridge between analog and digital signals [5], transforming input analog signals that vary continuously in both time and amplitude into equivalent digital signals that are discrete in time and quantized in amplitude [6,7]. After ADC conversion, the generated digital signal is further processed using digital signal processing (DSP) or a microcontroller unit (MCU) [8].

When data are acquired with an ADC, the time interval between samples is limited by the sampling rate of the ADC. To improve the accuracy of data acquisition without changing the sampling rate, the data are often processed by interpolation. An interpolation algorithm is a method for evaluating the function value at any intermediate value of the independent variable [9], and the main purpose of interpolation is to improve the resolution of the curve so that it is closer to the real waveform [10]. Among the interpolation methods, sinc interpolation is theoretically the best, and it does not distort the sampled signal [11,12]. However, sinc interpolation is resource intensive, so its interpolation factor is typically limited by controller resources. Linear interpolation, by contrast, uses a first-order linear equation to calculate each interpolated value from the two closest data points. It is relatively fast and consumes fewer resources, but it introduces large interpolation errors [13]. Therefore, linear interpolation alone is not suitable for high-accuracy interpolation.
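The trade-off between the two methods can be illustrated numerically. The following is a minimal sketch, not the implementation used in this work: the test tone (0.2 cycles per sample), the record length, and all function names are assumptions chosen for illustration, and the ideal infinite sinc sum is truncated to the available samples.

```python
import math

def sinc(x):
    """Normalized sinc: sin(pi*x) / (pi*x), with sinc(0) = 1."""
    return 1.0 if x == 0 else math.sin(math.pi * x) / (math.pi * x)

def sinc_interp(samples, t):
    """Band-limited reconstruction at fractional sample index t.
    The ideal sum is infinite; here it is truncated to the given samples."""
    return sum(s * sinc(t - n) for n, s in enumerate(samples))

def linear_interp(samples, t):
    """First-order interpolation between the two samples closest to t."""
    i = int(math.floor(t))
    frac = t - i
    return samples[i] + (samples[i + 1] - samples[i]) * frac

def rms(values):
    return math.sqrt(sum(v * v for v in values) / len(values))

# Hypothetical test tone: 0.2 cycles per sample (5 samples per period).
FREQ = 0.2
samples = [math.sin(2 * math.pi * FREQ * n) for n in range(400)]

# Evaluate halfway between samples, far from the record edges so that
# truncating the sinc sum has little effect.
midpoints = [n + 0.5 for n in range(150, 250)]
truth = [math.sin(2 * math.pi * FREQ * t) for t in midpoints]

rms_sinc = rms([sinc_interp(samples, t) - y for t, y in zip(midpoints, truth)])
rms_linear = rms([linear_interp(samples, t) - y for t, y in zip(midpoints, truth)])

print(f"RMS error, sinc:   {rms_sinc:.5f}")
print(f"RMS error, linear: {rms_linear:.5f}")
```

Each sinc-interpolated point in this sketch sums over all 400 samples, whereas each linearly interpolated point uses only two, which mirrors the resource difference described above.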

Many attempts have been made to improve the accuracy of data acquisition by applying interpolation algorithms to the acquired data. Vinzenz Bandi et al. designed an SS-OCT data acquisition system with two input channels sampling at 500 MHz and applied linear interpolation to the acquired data, so that the A-scan rate could reach 100 kHz [14]. A. Bossen et al. applied the non-uniform fast Fourier transform to OCT data in real time, operating at a sampling rate of 100 MHz with an A-scan rate of up to 50 kHz [15]. Baptiste Joly et al. applied cubic spline interpolation to data acquired by a time-of-flight (TOF) acquisition system, achieving a data acquisition resolution in the range of 200–300 ps [16]. Tao Han et al. proposed a spline interpolation for spectral resampling in swept-source OCT (SS-OCT), which improved the resolution of data acquisition and the signal-to-noise ratio after acquisition [17]. Y. Sun et al. used one-dimensional spline interpolation to reduce the trigger time interval of a virtual oscilloscope from 12.5 ns to 0.1 ns [18]. K. Park used interpolation to reduce the trigger time interval of an oscilloscope from 4 ns to 7.8 ps [19]. C.F. Ye et al. implemented an interpolation algorithm on an FPGA to reduce the trigger time interval of a time-of-flight mass spectrometer to 390 ps [20]. However, in current high-precision trigger resampling applications the trigger signal rate can reach the GHz level, so MHz-level sampling rates no longer meet application requirements. Trigger sampling accuracy requirements have reached the single-picosecond level, which places high demands on both the sampling rate and the interpolation.

In today's common high-precision trigger resampling applications, such as oscilloscopes and mass spectrometers, there are strict requirements not only for trigger accuracy but also for real-time data processing. With the advancement of very large-scale integration (VLSI) technology, FPGA chips have larger capacity, higher performance, and lower power consumption than before, making FPGAs the best choice for current control and algorithm implementation [21]. Compared with application-specific integrated circuits (ASICs), FPGAs have great advantages in speed, precision, and flexibility [22]. A traditional ASIC can only implement a specific function, whereas an FPGA is reconfigurable, which makes its design very flexible [23,24]. Designers can configure and adjust it according to the performance and efficiency required by users [25,26]. The design, implementation, and testing cycle on an FPGA may take only a few hours, while it may take months on an ASIC [27]. Compared with custom dedicated chips, FPGAs have a lower design cost [28]. In addition, compared with a central processing unit (CPU) or graphics processing unit (GPU), an FPGA can process data in real time.

Traditionally, trigger resampling accuracy has mostly been improved through interpolation. An interpolation method that is highly effective consumes a large amount of resources, whereas a method that consumes few resources introduces a relatively large interpolation error. To obtain a better interpolation result with lower resource consumption, this paper proposes an interpolation method that combines sinc interpolation and linear interpolation to improve the accuracy of trigger resampling. The method combines the small error of sinc interpolation with the simplicity of linear interpolation: sinc interpolation, with its relatively small error, is performed first, and linear interpolation is then performed on its output. We achieved better interpolation results with relatively small resource consumption.
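The two-stage idea can be sketched as follows, comparing it with purely linear interpolation onto the same fine grid. This is an illustrative sketch under stated assumptions rather than the FPGA design: the test tone (0.1 cycles per sample), the factors 8 and 16, the evaluation window, and the helper names `sinc_upsample` and `linear_upsample` are all hypothetical, and the sinc stage uses a direct truncated sum instead of a hardware filter.

```python
import math

def sinc(x):
    """Normalized sinc: sin(pi*x) / (pi*x), with sinc(0) = 1."""
    return 1.0 if x == 0 else math.sin(math.pi * x) / (math.pi * x)

def sinc_upsample(samples, factor, start, stop):
    """Stage 1: evaluate the truncated sinc sum on a grid with spacing
    1/factor between the integer sample indices start and stop."""
    out = []
    for k in range((stop - start) * factor + 1):
        t = start + k / factor
        out.append(sum(s * sinc(t - n) for n, s in enumerate(samples)))
    return out

def linear_upsample(values, factor):
    """Stage 2: insert factor - 1 straight-line points between neighbors."""
    out = []
    for a, b in zip(values, values[1:]):
        for k in range(factor):
            out.append(a + (b - a) * k / factor)
    out.append(values[-1])
    return out

# Hypothetical test tone: 0.1 cycles per sample.
FREQ = 0.1
samples = [math.sin(2 * math.pi * FREQ * n) for n in range(200)]
START, STOP = 90, 110  # interior window, away from the record edges

# Two-stage: 8x sinc followed by 16x linear (128x in total).
two_stage = linear_upsample(sinc_upsample(samples, 8, START, STOP), 16)

# Reference: 128x linear interpolation applied directly to the samples.
direct = linear_upsample(samples[START:STOP + 1], 128)

truth = [math.sin(2 * math.pi * FREQ * (START + k / 128))
         for k in range(len(two_stage))]
err_two_stage = max(abs(a - b) for a, b in zip(two_stage, truth))
err_direct = max(abs(a - b) for a, b in zip(direct, truth))

print(f"max error, 8x sinc + 16x linear: {err_two_stage:.5f}")
print(f"max error, 128x linear only:     {err_direct:.5f}")
```

Because the linear stage only has to bridge one eighth of the original sample interval, the curvature it ignores is much smaller than when it bridges a full interval, which is why the combined scheme loses little accuracy relative to a sinc-only design.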

In this paper, the overall algorithm architecture is given in Section 2 to describe the principle of the algorithm implementation. The resampling performance comparison under different sinc interpolation multipliers and different filter parameters is given in Section 3. The root mean square (RMS) [29] values of the trigger point and the ideal trigger point under different sinc interpolation multipliers and different filter parameters are given in Section 4. The performance and RMS data of resampling after sinc interpolation and linear interpolation processing are given in Section 5. The results of actual tests are given in Section 6. The conclusions are given in Section 7.

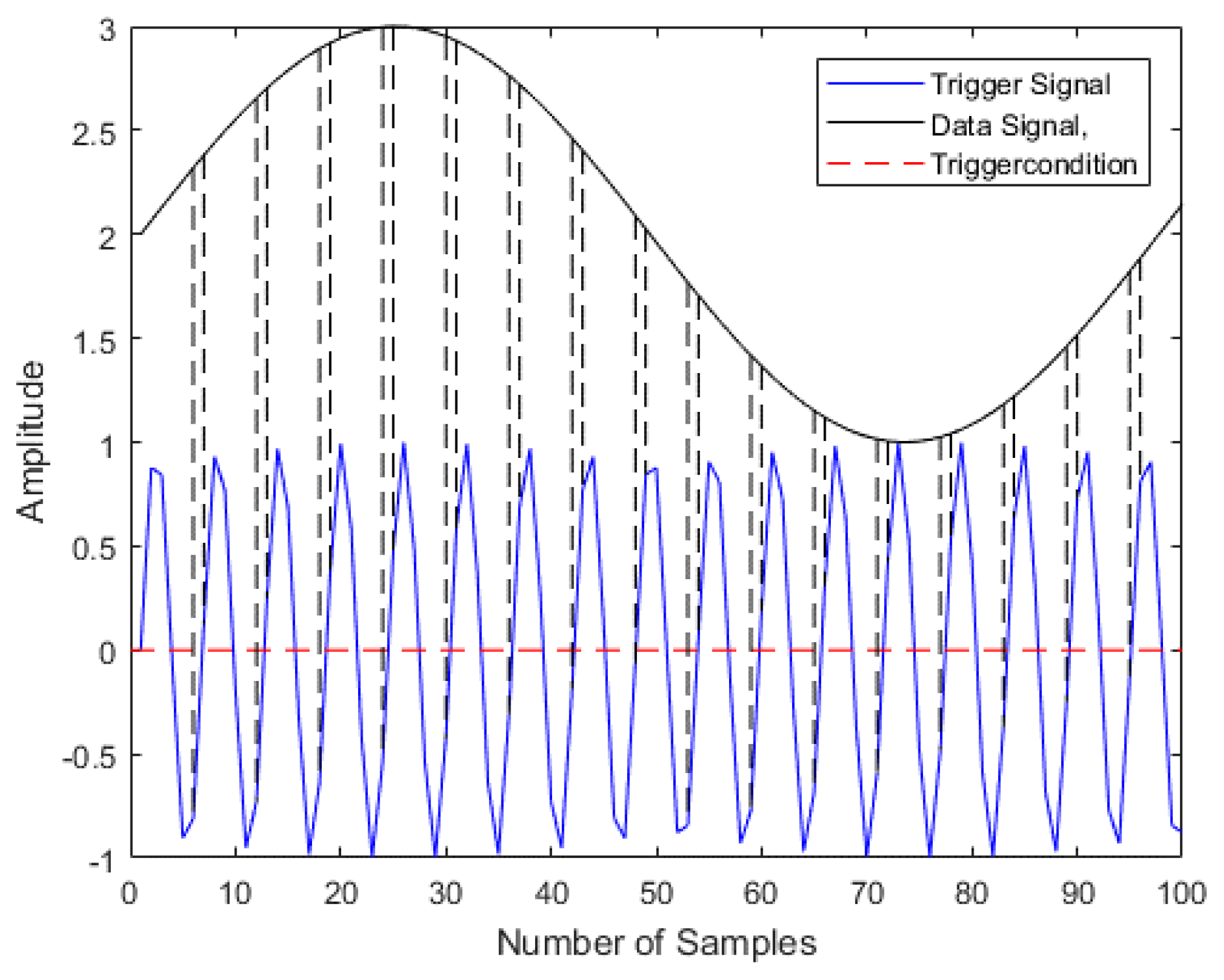

3. Performance Comparison under Different Parameters

In the sinc interpolation process, the interpolated data contain a mirrored (image) spectrum, and a suitable filter must be designed to eliminate it. In this design, the interp function in MATLAB is used to perform the sinc interpolation. The interp function completes the sinc interpolation according to the interpolation multiplier and the filter parameters. The results of sinc interpolation are compared in two steps. First, the performance for interpolation multipliers of 2/4/6/8/10 is compared with the filter parameters at their defaults, and an appropriate interpolation multiplier is selected. Then, appropriate filter parameters are selected for the chosen interpolation multiplier, which completes the selection of interpolation multiplier and filter parameters for sinc interpolation. This section focuses on the performance for different interpolation multipliers with default filter parameters, and Section 4 focuses on the performance for different filter parameters under the selected interpolation multiplier. The performance comparison is mainly illustrated with a 15 MHz signal resampled at 701 MHz.
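The origin of the mirrored spectrum and the role of the filter can be sketched as zero insertion followed by low-pass filtering. The sketch below is an assumption-laden illustration, not a reproduction of MATLAB's interp: it uses a Hann-windowed sinc low-pass filter, whereas interp designs its filter differently, and the parameter `half_len` (number of original samples used on each side) is only assumed to play a role similar to the filter parameter discussed here.

```python
import math

def lowpass_taps(factor, half_len):
    """Hann-windowed sinc with 2*half_len*factor + 1 taps. Its cutoff sits
    at the original Nyquist frequency, so it removes the spectral images
    created by zero insertion."""
    m = half_len * factor
    taps = []
    for k in range(-m, m + 1):
        x = k / factor
        ideal = 1.0 if k == 0 else math.sin(math.pi * x) / (math.pi * x)
        window = 0.5 * (1.0 + math.cos(math.pi * k / (m + 1)))
        taps.append(ideal * window)
    return taps

def zero_stuff(samples, factor):
    """Insert factor - 1 zeros after every sample (creates the images)."""
    out = []
    for s in samples:
        out.append(s)
        out.extend([0.0] * (factor - 1))
    return out

def interpolate(samples, factor, half_len):
    """Zero-stuff, then apply the centered low-pass filter."""
    stuffed = zero_stuff(samples, factor)
    taps = lowpass_taps(factor, half_len)
    m = half_len * factor
    out = []
    for i in range(len(stuffed)):
        acc = 0.0
        for k in range(-m, m + 1):
            j = i - k
            if 0 <= j < len(stuffed):
                acc += taps[k + m] * stuffed[j]
        out.append(acc)
    return out

# Hypothetical example mirroring the text: a 15 MHz tone sampled at 701 MHz.
FACTOR, HALF_LEN = 8, 6
F_NORM = 15.0 / 701.0  # cycles per original sample
samples = [math.sin(2 * math.pi * F_NORM * n) for n in range(64)]
fine = interpolate(samples, FACTOR, HALF_LEN)

# Compare with the true tone on the fine grid, away from the record edges.
lo, hi = HALF_LEN * FACTOR, (len(samples) - 1 - HALF_LEN) * FACTOR
max_err = max(abs(fine[i] - math.sin(2 * math.pi * F_NORM * i / FACTOR))
              for i in range(lo, hi))
print(f"max interior error: {max_err:.5f}")
```

A larger `half_len` gives a longer filter with a sharper transition, at the cost of more multiplications per output point, which is the kind of trade-off between performance and resource consumption weighed in this section.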

Table 1 shows the performance of resampling the 15 MHz signal at 701 MHz without applying the algorithm, Figure 6a shows the corresponding spectrum, and Figure 6b shows the time-domain waveform recovered after resampling.

Figure 7a compares the performance parameters for interpolation multipliers of 2/4/6/8/10 with the filter parameters in their default state. The specific performance parameters are given in Table 2, from which we can see that the performance gradually improves as the interpolation multiplier increases, and the overall performance is best when the interpolation multiplier is 8.

Figure 7b shows the performance curves under 8-times interpolation as the filter parameter is varied over 2/4/6/8/10; the corresponding performance parameters are given in Table 3. As the filter parameter increases, the overall performance gradually improves. In practice, considering the complexity of the implementation and the consumption of resources, the performance at a filter parameter of 6 already meets the needs of this design. Therefore, a filter parameter of 6 is selected for the sinc interpolation in this design.

5. The Performance of the Algorithm after Processing

To bring the trigger time accuracy down to the ps level, this project used a 3 GSps 12-bit ADC for data acquisition. After 8-times sinc interpolation, the trigger accuracy was reduced to about 42 ps, which does not meet the target, so linear interpolation was performed after the sinc interpolation. A further 16-times linear interpolation on this basis brought the trigger accuracy to about 2.6 ps.
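These figures follow from dividing the ADC sample period by the cumulative interpolation factor. A minimal arithmetic sketch is given below; the function name `trigger_step_ps` is hypothetical, and the calculation assumes that the trigger accuracy quoted here equals the time step of the interpolated grid.

```python
def trigger_step_ps(sample_rate_hz, sinc_factor=1, linear_factor=1):
    """Time step of the interpolated grid, in picoseconds."""
    return 1e12 / (sample_rate_hz * sinc_factor * linear_factor)

ADC_RATE_HZ = 3e9  # 3 GSps ADC, as stated in the text

raw_step = trigger_step_ps(ADC_RATE_HZ)          # about 333.3 ps
sinc_step = trigger_step_ps(ADC_RATE_HZ, 8)      # about 41.7 ps
final_step = trigger_step_ps(ADC_RATE_HZ, 8, 16) # about 2.6 ps

print(f"ADC only:             {raw_step:.1f} ps")
print(f"8x sinc:              {sinc_step:.1f} ps")
print(f"8x sinc + 16x linear: {final_step:.2f} ps")
```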

Figure 11a shows the RMS values of the trigger data after adding 16-times linear interpolation at different sinc interpolation multipliers. In Figure 11a, the RMS values after adding linear interpolation are clearly lower than those after sinc interpolation alone, which indicates that the data satisfying the trigger condition tend to zero.

Figure 11b shows the performance parameters for three resampling methods: direct resampling without interpolation, resampling after sinc interpolation, and resampling after sinc interpolation followed by linear interpolation. It can be seen from Figure 11b that, compared with direct resampling without interpolation, the other two methods greatly improve the performance parameters. Although the performance of resampling with added linear interpolation is slightly worse than that with sinc interpolation alone, the trigger accuracy is significantly improved. Considering both the test results and the resource consumption, the overall structure of this interpolation algorithm is 8-times sinc interpolation followed by 16-times linear interpolation.

The spectrum and recovered time-domain waveform obtained by resampling after combining 8-times sinc interpolation and 16-times linear interpolation are given in Figure 12a,b, respectively, and the performance after resampling is given in Table 4.

Table 5 shows the simulation test results under additional different frequency points.

6. Actual Test Results

The actual verification used a Xilinx xc7k480tffg1156-2 FPGA; two Ceyear 1435B signal generators, which can generate waveform signals from 9 kHz to 6 GHz; the AAD12S3000 data acquisition chip produced by AcelaMicro; and an independently designed ADAQ1004 data acquisition board.

Figure 13 shows the actual test environment.

Table 6 shows the consumption of main resources of this algorithm in FPGA.

Signal generator 1 generates the 701 MHz and 351 MHz trigger signals, signal generator 2 generates the 15 MHz data signal, acquisition card 3 performs data acquisition and processing, and computer 4 displays the waveform after resampling.

Figure 14 shows the recovered time-domain waveform of the 15 MHz signal resampled at 701 MHz in the actual test. The waveform is consistent with the results of the behavioral simulation, which verifies the correctness of the resampled time-domain waveform recovery in the actual test.

Table 7 shows the performance results of the actual test.

Figure 15a,b show the spectrum and recovered time-domain waveform, respectively, obtained by resampling the 15 MHz signal at 701 MHz in the actual test. The spectrum is consistent with the previous simulation, and the amplitude of all frequency components other than the main frequency is below −60 dB, which meets the overall design requirements. Because few other works report detailed data, a side-by-side comparison of detailed data was not carried out here.