In order to determine the feasibility of using a real-time operating system for SoC sub-blocks, several factors must be considered:

3.2. Operating System Selection

TurbOS is the operating system specifically designed for the Turbo9. It was derived from NitrOS-9 [21], which includes a number of innovative features for a small-footprint microprocessor, including pre-emptive multitasking, interprocess communication, and a module structure that allows code to be located anywhere in memory and reused by multiple processes. It is loosely based on Unix and incorporates a number of that operating system’s fundamental design choices [22]. A summary of the major system calls and their parameters is shown in Table 1.

Selecting an open-source operating system encourages others to study and improve it. An open-source model for sub-blocks is the opposite of the closed-source, black-box model of existing IP sub-block vendors. While the idea of an open-source framework for SoC applications has been explored before (Mantovani, Giri, et al. explored an open-source SoC platform with Open ESP [23]), there is no 16-bit open-source project specifically focused on SoC sub-blocks. TurbOS is an open-source project that encourages collaboration and innovation from a worldwide group of contributors. The source code for both the system-level software (kernel, support modules, etc.) and the application code is available on GitHub, which offers a rich set of services for collaborating on and extending the TurbOS project. The preferred workflow is for an interested contributor to create a fork of the TurbOS repository. The fork gives the contributor a copy of the source code repository in their own GitHub account, where they can experiment with changes that they can easily discard or continue to improve. Once their changes are committed to their fork, they can create a pull request, which proposes integrating the changes into the original (upstream) repository. Principal contributors can review the pull request and either accept it as is, reject it, or ask for revisions.

Issue tracking and documentation for the project are also hosted on GitHub. Collaborators can open issues for investigation by principal contributors. This level of openness and transparency is intended to foster a sense of purpose and community. It also places code changes within a peer review system and enforces code discipline. Since the source code lies in a single repository, the entire project can be built on a modern computer using cross-hosted assemblers and compilers [24,25].

3.2.1. The Kernel

The heart of TurbOS is the kernel. The kernel creates the environment in which processes reside and work. It provides a number of common operating system services, including:

Process creation, known as forking.

Process scheduling and prioritization.

Memory allocation and deallocation.

Module validation and management.

Interrupt handling.

Interprocess communication.

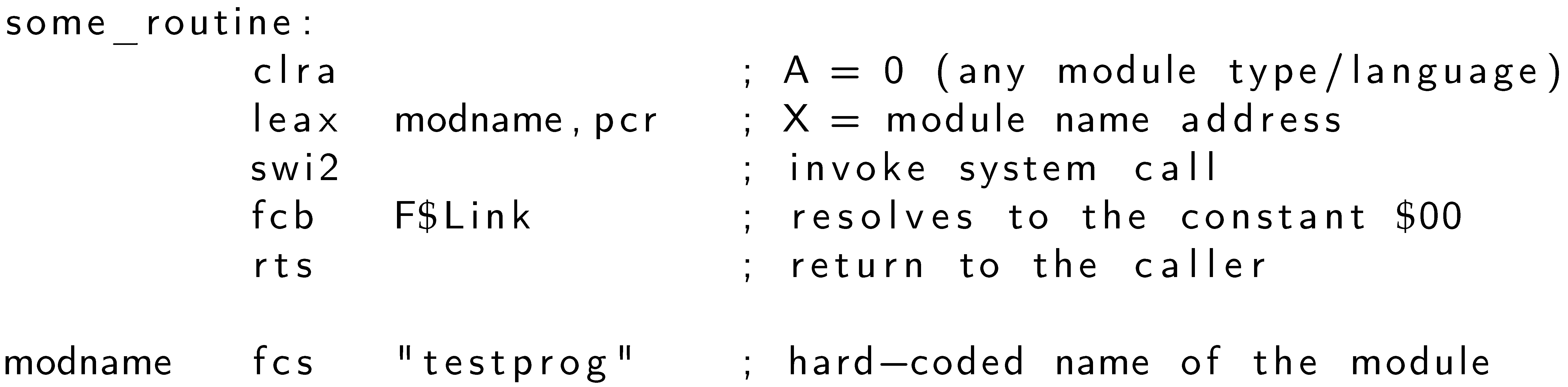

These services are available through system calls. System call names have the prefix F$, for example, F$Fork and F$Chain. System calls are made through the Turbo9’s swi2 instruction, a software interrupt instruction that suspends execution, pushes the registers onto the stack, and then redirects execution to a location in the kernel that performs the system call. The byte following the swi2 instruction is the system call code. The assembly language source in Listing 1 demonstrates how this is achieved in TurbOS: the F$Link system call code, byte $00, follows the swi2 instruction in the instruction stream.

Listing 1. TurbOS system call in Turbo9 assembly language.

System call parameters are passed directly in the Turbo9’s registers. Compared with a stack-based C calling convention, in which the caller places parameters on the stack and the callee references them there, this tight binding saves code space and increases execution speed.
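A minimal Python model of this dispatch path is sketched below, assuming that the trap’s saved return address points at the code byte that follows swi2. The trap handler reads that byte, steps the return address past it, looks the code up in a system call table, and hands the saved registers to the handler, so parameters and results travel in registers rather than on a stack. Only the F$Link code ($00) comes from the text; the register names, the memory contents, and the placeholder handler body (`f_link`, `swi2_trap`, `SYSCALL_TABLE`) are hypothetical, and this is not TurbOS’s kernel code.

```python
# Hypothetical model of swi2-based system call dispatch (not TurbOS source).
F_LINK = 0x00  # F$Link system call code, as given in the text


def f_link(regs: dict) -> None:
    """Placeholder F$Link handler that works only on the saved registers."""
    # A real handler would look up the module named by the parameters;
    # here it just copies X into Y to show a result returning in a register.
    regs["y"] = regs["x"]


SYSCALL_TABLE = {F_LINK: f_link}


def swi2_trap(memory: bytes, regs: dict) -> None:
    """Dispatch a system call; regs["pc"] is the saved return address."""
    code = memory[regs["pc"]]  # the byte following the swi2 instruction
    regs["pc"] += 1            # execution resumes after the code byte
    handler = SYSCALL_TABLE.get(code)
    if handler is None:
        raise ValueError(f"unknown system call code ${code:02X}")
    handler(regs)              # parameters and results live in regs


# Usage with a made-up memory image: the code byte sits at address 2.
memory = bytes([0x00, 0x00, F_LINK])
regs = {"pc": 2, "x": 0x1234, "y": 0}
swi2_trap(memory, regs)
print(hex(regs["pc"]), hex(regs["y"]))  # 0x3 0x1234
```

Skipping the code byte before dispatch is what lets the caller continue with the next instruction when the system call returns.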

3.2.2. The Module

The fundamental organizational structure of a block of executable code or data is the module. As indicated in Figure 2, a module is composed of three parts: a header, a body, and a footer. The header contains information about the module, such as its name and its type (for example, whether it contains object code or data). The body contains either executable code or data, and the footer is a 3-byte CRC (cyclic redundancy check) that the kernel uses to verify the integrity of the module. A module’s name uniquely identifies it in the system.
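The role of the 3-byte footer can be illustrated with a short Python sketch that appends a CRC-24 to a module’s header and body and later recomputes it to detect corruption. The text does not give TurbOS’s CRC parameters, so the polynomial (0x800063), the initial value, and storing the CRC uncomplemented are assumptions made for illustration; the header and body bytes are placeholders, and `build_module`/`verify_module` are hypothetical names.

```python
# Sketch of a module footer CRC; the CRC parameters below are assumptions.
CRC24_POLY = 0x800063   # assumed CRC-24 polynomial (x^24 term implied)
CRC24_INIT = 0xFFFFFF   # assumed initial register value


def crc24(data: bytes) -> int:
    """Bitwise, MSB-first CRC-24 over data."""
    crc = CRC24_INIT
    for byte in data:
        crc ^= byte << 16
        for _ in range(8):
            crc <<= 1
            if crc & 0x1000000:              # a 1 was shifted out of bit 23
                crc ^= 0x1000000 | CRC24_POLY
    return crc & 0xFFFFFF


def build_module(header: bytes, body: bytes) -> bytes:
    """Append a 3-byte CRC footer to header + body."""
    content = header + body
    return content + crc24(content).to_bytes(3, "big")


def verify_module(module: bytes) -> bool:
    """Recompute the CRC over header + body and compare it with the footer."""
    if len(module) < 3:
        return False
    content, footer = module[:-3], module[-3:]
    return crc24(content) == int.from_bytes(footer, "big")


module = build_module(b"HDR", b"\x12\x34")  # placeholder header and body
corrupted = bytearray(module)
corrupted[3] ^= 0x01                         # flip one bit in the body
print(verify_module(module), verify_module(bytes(corrupted)))  # True False
```

Because the assumed polynomial has a nonzero constant term, any single-bit error in the module changes the recomputed CRC and is detected.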

Modules reside in memory and are indexed in a module directory, which lists all of the modules in memory. To obtain access to a module, an application links to it with the F$Link system call. This increments the module’s link count, which indicates how many times the module is in use. Conversely, when the application finishes using a module, it calls F$Unlink, which decrements the module’s link count. When a module’s link count reaches 0, the module is removed from memory and its space is reclaimed.
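The link-count bookkeeping can be sketched in Python as a directory keyed by unique module name. This is a toy model, not TurbOS code: `ModuleDirectory`, its `add` method, and the load address are hypothetical, and it assumes a module enters the directory with a link count of 0 and is reclaimed only after it has been linked and then unlinked back to zero (the text does not say whether loading itself counts as a link).

```python
from dataclasses import dataclass


@dataclass
class ModuleEntry:
    name: str
    address: int          # hypothetical load address
    link_count: int = 0


class ModuleDirectory:
    """Toy module directory keyed by unique module name."""

    def __init__(self) -> None:
        self.entries: dict = {}

    def add(self, name: str, address: int) -> None:
        if name in self.entries:
            raise ValueError(f"module {name!r} is already in the directory")
        self.entries[name] = ModuleEntry(name, address)

    def link(self, name: str) -> int:
        """F$Link-like: find the module by name and increment its link count."""
        entry = self.entries[name]      # KeyError if no such module
        entry.link_count += 1
        return entry.address

    def unlink(self, name: str) -> None:
        """F$Unlink-like: decrement; reclaim the module when the count hits 0."""
        entry = self.entries[name]
        if entry.link_count == 0:
            raise ValueError(f"module {name!r} is not linked")
        entry.link_count -= 1
        if entry.link_count == 0:
            del self.entries[name]      # its memory would be reclaimed here


directory = ModuleDirectory()
directory.add("Demo", 0xC000)           # "Demo" and 0xC000 are made up
directory.link("Demo")
directory.link("Demo")
directory.unlink("Demo")
print("Demo" in directory.entries)      # True: one link remains
directory.unlink("Demo")
print("Demo" in directory.entries)      # False: count reached 0
```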

3.2.3. Programs and Processes

A program is an executable block of code that resides inside a module; a program may also be thought of as an app. A program’s purpose is to perform some useful work on the system, such as controlling inputs/outputs or performing calculations. Applications are a broad class of programs that communicate with the kernel through system calls.

On the other hand, a process (known as a task in other operating systems such as FreeRTOS) is a single instance of a program. The kernel manages the running time of one or more processes through priority-based round-robin scheduling. The scheduling algorithm incorporates an age for each process to ensure that even the lowest-priority processes receive some run time. The kernel keeps track of each process with a data structure known as the process descriptor (called a task control block in FreeRTOS), which is a 64-byte block of data.

Figure 3 illustrates four sub-block processes interacting with the TurbOS kernel.

The system call responsible for creating a new process is F$Fork. When a new process is forked, the kernel provides it with its own data area and stack, then places it in the active queue for scheduled execution. The process calling F$Fork becomes the parent, and the new process becomes the child. If the parent chooses to wait for the child process to finish, it calls the F$Wait system call, which blocks the parent process for the duration of the child process’ lifetime.

New processes can also be created through chaining. When a process calls F$Chain, it effectively becomes the new process. There is no parent/child relationship when chaining occurs. This is useful when attempting to keep system resource usage to a minimum.
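The difference between forking and chaining can be sketched with a toy Python process table. It is an illustration under stated assumptions, not TurbOS code: `ProcessTable`, `fork`, `chain`, and the program names are hypothetical; chaining is modeled as the caller keeping its process identity while its program is replaced; and the per-process data area and stack that F$Fork provides are not modeled.

```python
import itertools
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Process:
    pid: int
    program: str
    parent: Optional[int] = None
    children: List[int] = field(default_factory=list)


class ProcessTable:
    """Toy process table contrasting F$Fork-like and F$Chain-like calls."""

    def __init__(self) -> None:
        self._next_pid = itertools.count(1)
        self.procs: dict = {}
        self.active: List[int] = []   # stand-in for the active queue

    def fork(self, program: str, parent: Optional[int] = None) -> int:
        """Create a new process; the caller (if any) becomes its parent."""
        pid = next(self._next_pid)
        self.procs[pid] = Process(pid, program, parent)
        if parent is not None:
            self.procs[parent].children.append(pid)
        self.active.append(pid)       # ready for scheduled execution
        return pid

    def chain(self, pid: int, program: str) -> int:
        """The calling process becomes the new program in place:
        no new process entry and no new parent/child link."""
        self.procs[pid].program = program
        return pid


table = ProcessTable()
parent = table.fork("init")            # program names are made up
child = table.fork("worker", parent)
table.chain(child, "logger")
print(len(table.procs), table.procs[child].program)  # 2 logger
```

Chaining leaves the table size unchanged, which mirrors the text’s point that chaining helps keep system resource usage to a minimum.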

3.2.4. Process Scheduling

The kernel is responsible for determining when a process can run and obtain CPU time. The basic unit of time is called a tick and is usually 1 millisecond long. The amount of time a process may run on the CPU each time it is scheduled is called the time slice. A time slice is a configurable multiple of a tick and is nominally 10 ticks, or 10 milliseconds with a 1-millisecond tick.

The kernel uses a priority-based round-robin scheduling algorithm. Every process has an 8-bit priority and an 8-bit age. The highest value for priority and age is 255 and the lowest is 0. The default priority and age value for a new process is 128.

To manage access to the microprocessor (that is, to provide CPU time), the kernel defines a number of queues in which a process can reside at any given time.

3.2.5. The Active Queue

The active queue is a singly linked list of process descriptors that represents processes that are ready to use the CPU. When a process is created through the F$Fork system call, the kernel places it in the active queue. The active queue is sorted by process age to ensure that lower-priority processes receive some CPU time. As each process receives a slice of time on the CPU, the kernel increases its age. When the age reaches the maximum value of 255, the process’ priority value is copied into its age. The kernel then reinserts the process into the active queue based on its new age value.
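The description above leaves some details open, such as which end of the queue runs next and exactly which processes are aged on each slice. The Python sketch below fills those gaps with one possible interpretation and is not TurbOS’s code: the process at the head (highest age) gets the next slice; whenever a process is reinserted, every process still waiting ages by one, saturating at 255; and the reinserted process restarts from its priority, echoing the rule that the priority is copied back into the age. `ActiveQueue`, `Proc`, and `run_one_slice` are hypothetical names; the 8-bit range and default priority of 128 come from the text.

```python
from dataclasses import dataclass

AGE_MAX = 255           # ages are 8-bit values
DEFAULT_PRIORITY = 128  # default for a new process


@dataclass
class Proc:
    name: str
    priority: int = DEFAULT_PRIORITY
    age: int = 0

    def __post_init__(self) -> None:
        if not 0 <= self.priority <= 255:
            raise ValueError("priority must fit in 8 bits")


class ActiveQueue:
    """Illustrative aging scheduler; the head of the list runs next."""

    def __init__(self) -> None:
        self.procs: list = []  # kept sorted by age, highest first

    def insert(self, proc: Proc) -> None:
        # Assumed aging step: every process already waiting grows older,
        # saturating at AGE_MAX, so a process that keeps losing the CPU
        # eventually climbs to the head of the queue.
        for waiting in self.procs:
            waiting.age = min(waiting.age + 1, AGE_MAX)
        proc.age = proc.priority  # the inserted process restarts at its priority
        i = 0
        # Go behind every process of equal or greater age (round-robin on ties).
        while i < len(self.procs) and self.procs[i].age >= proc.age:
            i += 1
        self.procs.insert(i, proc)

    def run_one_slice(self) -> str:
        proc = self.procs.pop(0)  # highest age gets the next time slice
        # ... proc would run for one time slice here ...
        self.insert(proc)         # then it is reinserted by age
        return proc.name


queue = ActiveQueue()
queue.insert(Proc("A", priority=200))   # names and priorities are made up
queue.insert(Proc("B", priority=100))
order = [queue.run_one_slice() for _ in range(101)]
print(order.count("A"), order.count("B"))  # 100 1
```

Under these assumptions the lower-priority process B is not starved: its age must climb from 100 to the 200 that A restarts at, so it gets one slice in every 101. Processes with equal priority simply alternate.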

3.2.6. The Wait Queue

The wait queue is a singly linked list of process descriptors that represents processes that are waiting for one or more children to complete execution. A process enters this queue by calling the F$Wait system call.

3.2.7. The Sleep Queue

The sleep queue is a singly linked list of process descriptors that represents processes that do not currently need the CPU. A process can call the F$Sleep system call to yield the CPU to other processes for a given period of time.
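The movement of processes between the active, wait, and sleep queues can be sketched in Python as follows. This is a toy model with hypothetical names (`SchedulerQueues`, `f_wait`, `child_exited`, `f_sleep`, `clock_tick`); for brevity the active queue is an unordered list rather than the age-sorted list described earlier, only timed sleeps of at least one tick are modeled, and a waiting parent is assumed to return to the active queue when a child exits.

```python
class SchedulerQueues:
    """Toy model of the active, wait, and sleep queues (not TurbOS code)."""

    def __init__(self, processes):
        self.active = list(processes)
        self.wait_queue = []
        self.sleep_queue = {}            # process name -> ticks remaining

    def f_wait(self, parent):
        """Parent blocks until a child exits (modeled on F$Wait)."""
        self.active.remove(parent)
        self.wait_queue.append(parent)

    def child_exited(self, parent):
        """A child finished: a waiting parent becomes runnable again."""
        if parent in self.wait_queue:
            self.wait_queue.remove(parent)
            self.active.append(parent)

    def f_sleep(self, proc, ticks):
        """Give up the CPU for a number of ticks (modeled on F$Sleep)."""
        if ticks <= 0:
            raise ValueError("this sketch only models timed sleeps")
        self.active.remove(proc)
        self.sleep_queue[proc] = ticks

    def clock_tick(self):
        """Called once per tick: wake processes whose sleep has expired."""
        for proc in list(self.sleep_queue):
            self.sleep_queue[proc] -= 1
            if self.sleep_queue[proc] == 0:
                del self.sleep_queue[proc]
                self.active.append(proc)


queues = SchedulerQueues(["parent", "logger"])   # names are made up
queues.f_wait("parent")        # parent blocks until a child exits
queues.f_sleep("logger", 2)    # logger yields the CPU for two ticks
queues.clock_tick()
queues.child_exited("parent")
queues.clock_tick()
print(queues.active)  # ['parent', 'logger']
```

With a 1-millisecond tick, a two-tick sleep in this model corresponds to roughly 2 milliseconds away from the active queue.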

3.2.8. Memory Allocation and Management

Because memory is a constrained resource, the kernel manages it in both 256-byte and 64-byte chunks. Some kernel data structures are small enough to need only 64 bytes, while others require 256 bytes; hence, there are separate system calls for obtaining each size.

The kernel divides memory primarily into 256-byte pages. This page size fits the 64K address space of the Turbo9 conveniently: 64K holds exactly 256 pages of 256 bytes, so a page number fits in a single byte (the high byte of a 16-bit address).

The kernel is responsible for managing memory resources on behalf of processes, but also claims some memory for its own use. Starting at the very low end of the memory map, the first 256-byte page from $0000 to $00FF is the area known as the system globals. The kernel uses this area for its own housekeeping. The next three 256-byte pages between $0100 and $03FF contain allocation bitmaps, the module directory, the system call table, and the system stack.

The top of the memory map contains the kernel and other system modules, as well as any program modules necessary to perform work. The free memory exists in between, and is available for both the kernel and programs to draw from.

The kernel provides four system calls that allocate memory to processes and recover it from them. For allocations in 256-byte increments, there is F$SRqMem, which allocates memory on behalf of a process, and F$SRtMem, which returns allocated memory to the system’s free memory pool. The kernel manages the free memory pool using a 256-bit (32-byte) allocation bitmap located in the system globals area. Each bit in this bitmap represents one 256-byte page of memory, from the page starting at address 0 ($0000) to the page starting at address 65280 ($FF00). It is the responsibility of the process to return memory once it has finished using it; however, if the process terminates without deallocating memory, the memory is automatically returned to the system’s free memory pool.
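A Python sketch of such a page bitmap is shown below: 32 bytes, one bit per 256-byte page, with requests rounded up to whole pages and satisfied from a contiguous run of free pages. Several details are assumptions not stated in the text: the MSB-first bit order within each byte, the first-fit search from low addresses, and the number of pages reserved at the top of the map for the kernel and system modules. `PageAllocator`, `request`, and `release` are hypothetical names that only mimic the roles of F$SRqMem and F$SRtMem.

```python
PAGE_SIZE = 256
NUM_PAGES = 256            # 64K / 256 bytes


class PageAllocator:
    """Sketch of a 256-bit (32-byte) page allocation bitmap."""

    def __init__(self, reserved_pages):
        self.bitmap = bytearray(NUM_PAGES // 8)   # 32 bytes, 1 bit per page
        for page in reserved_pages:
            self._set(page, True)

    def _is_used(self, page):
        return bool(self.bitmap[page // 8] & (0x80 >> (page % 8)))

    def _set(self, page, used):
        mask = 0x80 >> (page % 8)                 # assumed MSB-first bit order
        if used:
            self.bitmap[page // 8] |= mask
        else:
            self.bitmap[page // 8] &= ~mask & 0xFF

    def request(self, nbytes):
        """F$SRqMem-like: allocate contiguous pages, return start address."""
        if nbytes <= 0:
            raise ValueError("request must be positive")
        npages = -(-nbytes // PAGE_SIZE)          # round up to whole pages
        run = 0
        for page in range(NUM_PAGES):             # assumed first-fit, low to high
            run = 0 if self._is_used(page) else run + 1
            if run == npages:
                first = page - npages + 1
                for p in range(first, page + 1):
                    self._set(p, True)
                return first * PAGE_SIZE
        raise MemoryError("no contiguous block large enough")

    def release(self, address, nbytes):
        """F$SRtMem-like: return pages to the free pool."""
        first = address // PAGE_SIZE
        for p in range(first, first + -(-nbytes // PAGE_SIZE)):
            self._set(p, False)


# Reserve pages $00-$03 (system area) and, hypothetically, $F0-$FF for
# the kernel and system modules at the top of the map.
pages = PageAllocator(list(range(0x00, 0x04)) + list(range(0xF0, 0x100)))
buf = pages.request(300)           # rounds up to two pages
print(hex(buf))                    # 0x400
pages.release(buf, 300)
```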

For finer-grained memory allocation, two additional system calls are available for 64-byte allocation and deallocation: F$All64 allocates memory on behalf of a process, and F$Ret64 returns allocated memory to the system’s free memory pool. These two calls rely on F$SRqMem and F$SRtMem, respectively, to request and return 256-byte pages, each of which is subdivided into four 64-byte blocks. TurbOS uses these fine-grained calls for certain system data structures that fit within 64 bytes, which uses memory more efficiently.
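The layering of 64-byte blocks on top of 256-byte pages can be sketched in Python as follows. The page source and page sink are passed in as callables, standing in for F$SRqMem and F$SRtMem; `Block64Allocator`, `all64`, and `ret64` are hypothetical names. The sketch assumes page addresses are multiples of 256 and that a page is handed back as soon as all four of its blocks are free, which is one reading of how F$Ret64 relies on F$SRtMem.

```python
BLOCK = 64
BLOCKS_PER_PAGE = 4        # one 256-byte page holds four 64-byte blocks


class Block64Allocator:
    """Sketch of F$All64/F$Ret64-style allocation on top of a page source."""

    def __init__(self, request_page, return_page):
        self._request_page = request_page   # callable returning a page address
        self._return_page = return_page     # callable taking a page address
        self.pages = {}                     # page address -> in-use flags

    def all64(self):
        for page, slots in self.pages.items():
            if not all(slots):
                i = slots.index(False)
                slots[i] = True
                return page + i * BLOCK
        page = self._request_page()         # every page is full: get a new one
        self.pages[page] = [True] + [False] * (BLOCKS_PER_PAGE - 1)
        return page

    def ret64(self, address):
        page = address - address % 256
        slots = self.pages[page]
        slots[(address % 256) // BLOCK] = False
        if not any(slots):                  # page is now empty: give it back
            del self.pages[page]
            self._return_page(page)


free_pages = [0x0500, 0x0400]   # made-up page addresses; pop() takes the last
returned = []
blocks64 = Block64Allocator(free_pages.pop, returned.append)
addrs = [blocks64.all64() for _ in range(5)]
print([hex(a) for a in addrs])  # ['0x400', '0x440', '0x480', '0x4c0', '0x500']
blocks64.ret64(0x500)
print([hex(p) for p in returned])  # ['0x500']
```

The fifth request does not fit in the first page, so the sketch fetches a second page, and freeing that page’s only block returns the whole page.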

3.2.9. Interrupt Handling

Interrupts are external triggers that suspend the microprocessor’s current execution and redirect it to a new execution area. Interrupts originate from hardware sources such as clock generators, communications circuits, and human interface devices such as buttons or keys. Interrupts may arrive at any time and must be serviced as soon as possible. Only after the interrupt is serviced does execution resume at the interrupted location.

A key factor in an RTOS’s responsiveness is the time between the generation of an interrupt and the moment it is handled. This span of time, known as the interrupt latency, must be kept as short as possible.

In TurbOS, interrupts are directed into the kernel, where they are checked against an interrupt polling table. A process that expects to service interrupts must call the F$IRQ system call with a pointer to a polling table entry data structure, which includes the address of the interrupt service routine and other information that the kernel uses to verify the party responsible for servicing that interrupt.
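A polling table of this kind can be sketched in Python: processes register entries (the role of F$IRQ), and on each interrupt the kernel walks the table and runs the first service routine whose device claims the interrupt. The entry fields here (`device`, `is_pending`, `service`, `priority`) are hypothetical stand-ins for the real polling table entry, which the text only describes as holding the service routine address and verification information; ordering entries by priority is also an assumption of this sketch.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class PollEntry:
    """Hypothetical polling-table entry; field names are illustrative."""
    device: str
    is_pending: Callable[[], bool]   # stands in for reading a status register
    service: Callable[[], None]      # the interrupt service routine
    priority: int = 0


class PollingTable:
    def __init__(self) -> None:
        self.entries: List[PollEntry] = []

    def f_irq(self, entry: PollEntry) -> None:
        """F$IRQ-like registration; higher-priority entries are polled first."""
        self.entries.append(entry)
        self.entries.sort(key=lambda e: e.priority, reverse=True)

    def dispatch(self) -> Optional[str]:
        """Called on an interrupt: run the first entry whose device claims it."""
        for entry in self.entries:
            if entry.is_pending():
                entry.service()
                return entry.device
        return None  # no registered device claimed the interrupt


state = {"uart": False, "timer": False}   # stands in for device status bits
log = []
table = PollingTable()
table.f_irq(PollEntry("uart", lambda: state["uart"], lambda: log.append("uart"), priority=1))
table.f_irq(PollEntry("timer", lambda: state["timer"], lambda: log.append("timer"), priority=5))
state["uart"] = True
print(table.dispatch(), log)  # uart ['uart']
```

Because the table is searched in order, a device polled near the end waits for every check ahead of it; in this sketch, giving latency-critical devices a higher priority moves them to the front and shortens their share of the interrupt latency.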

3.2.10. Interprocess Communication through Signaling

At times, multiple processes may need to exchange information. Interprocess communication facilities such as pipes, events, message queues, and signals provide this type of cross-communication in many operating systems.

TurbOS provides a fast and simple method of interprocess communication known as signaling. A signal is an 8-bit value that a process receives and acts upon. When a process receives a signal, a routine known as a signal handler is invoked, and the code that examines and acts upon the signal value executes inside that handler.

Once the signal handler routine is complete, execution resumes at the point in the process where it left off. Signals act in a similar fashion to interrupts, but are sourced from processes instead of hardware.

A number of signals are reserved for specific behavior. S$Kill is the kill signal; any process that receives it is immediately terminated. S$Wake is the wakeup signal. When a process receives this signal, the kernel puts the process into the active queue if it is not already there. The signal handler is not called, and is not even necessary, for a process receiving this signal.

A process can install a signal handler to process signals by using the F$Icpt system call. If a process does not install a signal handler, any signal it receives (except for S$Wake) immediately causes its termination; its parent then receives the signal value as the exit code.

The remaining signal values from 4 to 255 are known as user-defined signals, and can be used to pass information from one process to another. Cooperating applications can define their own behavior when receiving such a signal, and use the F$Send system call to send a signal to another process.
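The delivery rules above can be condensed into a short Python sketch. The numeric values 0 and 1 for S$Kill and S$Wake are assumptions (the text only states that user-defined signals start at 4); the other reserved values are not modeled; handlers run synchronously; and `Process`, `f_icpt`, and `f_send` are hypothetical names that mimic the roles of F$Icpt and F$Send rather than their real interfaces.

```python
from typing import Callable, List, Optional

S_KILL = 0             # assumed numeric value for S$Kill
S_WAKE = 1             # assumed numeric value for S$Wake
FIRST_USER_SIGNAL = 4  # from the text: 4..255 are user-defined


class Process:
    def __init__(self, name: str) -> None:
        self.name = name
        self.handler: Optional[Callable[[int], None]] = None
        self.alive = True
        self.exit_code: Optional[int] = None  # would be reported to the parent


def f_icpt(proc: Process, handler: Callable[[int], None]) -> None:
    """F$Icpt-like: install a signal handler."""
    proc.handler = handler


def f_send(target: Process, signal: int, active_queue: List[Process]) -> None:
    """F$Send-like delivery of an 8-bit signal."""
    if not 0 <= signal <= 255:
        raise ValueError("signals are 8-bit values")
    if not target.alive:
        return
    if signal == S_WAKE:
        # Wakeup: make sure the process is in the active queue; no handler runs.
        if target not in active_queue:
            active_queue.append(target)
    elif signal == S_KILL or target.handler is None:
        # S$Kill always terminates; any other signal terminates a process
        # that has no handler, and the signal value becomes its exit code.
        # (Recording S$Kill's value as the exit code is an assumption here.)
        target.alive = False
        target.exit_code = signal
    else:
        target.handler(signal)  # afterwards the process resumes where it left off


active: List[Process] = []
received = []
worker = Process("worker")                 # process names are made up
f_icpt(worker, received.append)
f_send(worker, FIRST_USER_SIGNAL, active)  # handled, worker keeps running
f_send(worker, S_WAKE, active)             # queued, handler not called
plain = Process("plain")                   # never installs a handler
f_send(plain, 7, active)
print(received, worker.alive, plain.alive, plain.exit_code)  # [4] True False 7
```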