Hybrid Uncertainty Calibration for Multimodal Sentiment Analysis

Abstract

1. Introduction

- •

- We propose a hybrid uncertainty calibration (HUC) method, which utilizes the labels of both modalities to impose uncertainty constraints on each modality separately, aiming to reduce the uncertainties in each modality and enhance the calibration ability of the model.

- •

- We propose an uncertain-aware late fusion (ULF) method to enhance the classification ability of the model.

- •

- We add common types of noise, such as Gaussian noise and salt-and-pepper noise, to the test set. Experimental results demonstrate that our proposed model exhibits greater generalization ability.

2. Related Work

2.1. Multimodal Sentiment Analysis

2.2. Multimodal Uncertainty Calibration

3. Methodology

3.1. Framework Overview

3.2. Unimodal Backbone Model

3.3. ENN Head

3.4. Hybrid Uncertainty Calibration

3.4.1. EDL Loss

3.4.2. HUC Loss

3.5. Uncertainty-Aware Late Fusion

| Algorithm 1 Algorithm of Uncertainty-aware Late Fusion (ULF) | |

| Input: The text sample and the image sample . | |

| Output: The classification output for sample i. | |

| 1: | Obtain the and for the text modality and image modality classifiers according to Equation (4); |

| 2: | Obtain the uncertainty estimates and for the text modality and image modality, respectively, according to Equation (7); |

| 3: | if == then |

| 4: | = |

| 5: | else if == “neutral” then |

| 6: | = |

| 7: | else if == “neutral” then |

| 8: | = |

| 9: | else if < then |

| 10: | = |

| 11: | else |

| 12: | = |

| 13: | end if |

| 14: | return |

4. Experiments

4.1. Experiment Setups

4.1.1. Datasets

4.1.2. Evaluation Metrics

4.1.3. Implementation Details

4.2. Comparison with Existing Methods

4.2.1. Comparative Methods

4.2.2. Results and Analysis

4.3. Ablation Study

4.4. Case Study

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Mercha, E.M.; Benbrahim, H. Machine learning and deep learning for sentiment analysis across languages: A survey. Neurocomputing 2023, 531, 195–216. [Google Scholar] [CrossRef]

- Zad, S.; Heidari, M.; Jones, J.H.; Uzuner, O. A survey on concept-level sentiment analysis techniques of textual data. In Proceedings of the 2021 IEEE World AI IoT Congress (AIIoT), Seattle, WA, USA, 10–13 May 2021; pp. 0285–0291. [Google Scholar]

- Das, R.; Singh, T.D. Multimodal Sentiment Analysis: A Survey of Methods, Trends, and Challenges. ACM Comput. Surv. 2023, 55, 1–38. [Google Scholar] [CrossRef]

- Gandhi, A.; Adhvaryu, K.; Poria, S.; Cambria, E.; Hussain, A. Multimodal sentiment analysis: A systematic review of history, datasets, multimodal fusion methods, applications, challenges and future directions. Inform. Fusion 2023, 91, 424–444. [Google Scholar] [CrossRef]

- Amrani, E.; Ben-Ari, R.; Rotman, D.; Bronstein, A. Noise Estimation Using Density Estimation for Self-Supervised Multimodal Learning. Proc. AAAI Conf. Artif. Intell. 2021, 35, 6644–6652. [Google Scholar] [CrossRef]

- Xu, N. Analyzing multimodal public sentiment based on hierarchical semantic attentional network. In Proceedings of the 2017 IEEE International Conference on Intelligence and Security Informatics (ISI), Beijing, China, 22–24 July 2017; pp. 152–154. [Google Scholar]

- Xu, N.; Mao, W.; Chen, G. A co-memory network for multimodal sentiment analysis. In Proceedings of the The 41st International ACM SIGIR Conference on Research & Development in Information Retrieval, Ann Arbor, MI, USA, 8–12 July 2018; pp. 929–932. [Google Scholar]

- Niu, T.; Zhu, S.; Pang, L.; El Saddik, A. Sentiment analysis on multi-view social data. In Proceedings of the MultiMedia Modeling: 22nd International Conference, MMM 2016, Miami, FL, USA, 4–6 January 2016; Proceedings, Part II 22. Springer: Berlin/Heidelberg, Germany, 2016; pp. 15–27. [Google Scholar]

- Xu, N.; Mao, W. Multisentinet: A deep semantic network for multimodal sentiment analysis. In Proceedings of the 2017 ACM on Conference on Information and Knowledge Management, Singapore, 6–10 November 2017; pp. 2399–2402. [Google Scholar]

- Cheema, G.S.; Hakimov, S.; Müller-Budack, E.; Ewerth, R. A fair and comprehensive comparison of multimodal tweet sentiment analysis methods. In Proceedings of the 2021 Workshop on Multi-Modal Pre-Training for Multimedia Understanding, Taipei, China, 16–19 November 2021; pp. 37–45. [Google Scholar]

- Zhang, K.; Geng, Y.; Zhao, J.; Liu, J.; Li, W. Sentiment Analysis of Social Media via Multimodal Feature Fusion. Symmetry 2020, 12, 2010. [Google Scholar] [CrossRef]

- Tomani, C.; Cremers, D.; Buettner, F. Parameterized temperature scaling for boosting the expressive power in post-hoc uncertainty calibration. In Proceedings of the European Conference on Computer Vision, Tel Aviv, Israel, 24–28 October 2022; Springer: Berlin/Heidelberg, Germany, 2022; pp. 555–569. [Google Scholar]

- Zhuang, D.; Bu, Y.; Wang, G.; Wang, S.; Zhao, J. SAUC: Sparsity-Aware Uncertainty Calibration for Spatiotemporal Prediction with Graph Neural Networks. In Proceedings of the Temporal Graph Learning Workshop@ NeurIPS 2023, New Orleans, LA, USA, 10–16 December 2023. [Google Scholar]

- Xu, J.; Huang, F.; Zhang, X.; Wang, S.; Li, C.; Li, Z.; He, Y. Visual-textual sentiment classification with bi-directional multi-level attention networks. Knowl.-Based Syst. 2019, 178, 61–73. [Google Scholar] [CrossRef]

- Cholet, S.; Paugam-Moisy, H.; Regis, S. Bidirectional Associative Memory for Multimodal Fusion: A Depression Evaluation Case Study. In Proceedings of the 2019 International Joint Conference on Neural Networks (IJCNN), Budapest, Hungary, 14–19 July 2019. [Google Scholar] [CrossRef]

- Kumar, A.; Srinivasan, K.; Cheng, W.H.; Zomaya, A.Y. Hybrid context enriched deep learning model for fine-grained sentiment analysis in textual and visual semiotic modality social data. Inf. Process. Manag. 2020, 57, 102141. [Google Scholar] [CrossRef]

- Jiang, T.; Wang, J.; Liu, Z.; Ling, Y. Fusion-extraction network for multimodal sentiment analysis. In Proceedings of the Advances in Knowledge Discovery and Data Mining: 24th Pacific-Asia Conference, PAKDD 2020, Singapore, 11–14 May 2020; Proceedings, Part II 24. Springer: Berlin/Heidelberg, Germany, 2020; pp. 785–797. [Google Scholar]

- Zhang, K.; Zhu, Y.; Zhang, W.; Zhu, Y. Cross-modal image sentiment analysis via deep correlation of textual semantic. Knowl.-Based Syst. 2021, 216, 106803. [Google Scholar] [CrossRef]

- Guo, W.; Zhang, Y.; Cai, X.; Meng, L.; Yang, J.; Yuan, X. LD-MAN: Layout-Driven Multimodal Attention Network for Online News Sentiment Recognition. IEEE Trans. Multimed. 2021, 23, 1785–1798. [Google Scholar] [CrossRef]

- Liao, W.; Zeng, B.; Liu, J.; Wei, P.; Fang, J. Image-text interaction graph neural network for image-text sentiment analysis. Appl. Intell. 2022, 52, 11184–11198. [Google Scholar] [CrossRef]

- Ye, J.; Zhou, J.; Tian, J.; Wang, R.; Zhou, J.; Gui, T.; Zhang, Q.; Huang, X. Sentiment-aware multimodal pre-training for multimodal sentiment analysis. Knowl.-Based Syst. 2022, 258, 110021. [Google Scholar] [CrossRef]

- Zeng, J.; Zhou, J.; Huang, C. Exploring Semantic Relations for Social Media Sentiment Analysis. IEEE/ACM Trans. Audio Speech Lang. Process. 2023, 31, 2382–2394. [Google Scholar] [CrossRef]

- Liu, Y.; Li, Z.; Zhou, K.; Zhang, L.; Li, L.; Tian, P.; Shen, S. Scanning, attention, and reasoning multimodal content for sentiment analysis. Knowl.-Based Syst. 2023, 268, 110467. [Google Scholar] [CrossRef]

- Gawlikowski, J.; Tassi, C.R.N.; Ali, M.; Lee, J.; Humt, M.; Feng, J.; Kruspe, A.; Triebel, R.; Jung, P.; Roscher, R.; et al. A survey of uncertainty in deep neural networks. Artif. Intell. Rev. 2023, 56, 1513–1589. [Google Scholar] [CrossRef]

- Minderer, M.; Djolonga, J.; Romijnders, R.; Hubis, F.; Zhai, X.; Houlsby, N.; Tran, D.; Lucic, M. Revisiting the calibration of modern neural networks. Adv. Neural Inf. Process. Syst. 2021, 34, 15682–15694. [Google Scholar]

- Pakdaman Naeini, M.; Cooper, G.; Hauskrecht, M. Obtaining Well Calibrated Probabilities Using Bayesian Binning. Proc. AAAI Conf. Artif. Intell. 2015, 29, 2901–2907. [Google Scholar] [CrossRef]

- Krishnan, R.; Tickoo, O. Improving model calibration with accuracy versus uncertainty optimization. Adv. Neural Inf. Process. Syst. 2020, 33, 18237–18248. [Google Scholar]

- Tomani, C.; Gruber, S.; Erdem, M.E.; Cremers, D.; Buettner, F. Post-hoc Uncertainty Calibration for Domain Drift Scenarios. In Proceedings of the 2021 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 20–25 June 2021. [Google Scholar] [CrossRef]

- Hubschneider, C.; Hutmacher, R.; Zollner, J.M. Calibrating Uncertainty Models for Steering Angle Estimation. In Proceedings of the 2019 IEEE Intelligent Transportation Systems Conference (ITSC), Auckland, New Zealand, 27–30 October 2019. [Google Scholar] [CrossRef]

- Zhang, H.M.Q.; Zhang, C.; Wu, B.; Fu, H.; Zhou, J.T.; Hu, Q. Calibrating Multimodal Learning. arXiv 2023, arXiv:2306.01265. [Google Scholar]

- Tellamekala, M.K.; Amiriparian, S.; Schuller, B.W.; André, E.; Giesbrecht, T.; Valstar, M. COLD Fusion: Calibrated and Ordinal Latent Distribution Fusion for Uncertainty-Aware Multimodal Emotion Recognition. IEEE Trans. Pattern Anal. Mach. Intell. 2023, 46, 805–822. [Google Scholar] [CrossRef] [PubMed]

- Kose, N.; Krishnan, R.; Dhamasia, A.; Tickoo, O.; Paulitsch, M. Reliable Multimodal Trajectory Prediction via Error Aligned Uncertainty Optimization. In Proceedings of the Computer Vision—ECCV 2022 Workshops, Tel Aviv, Israel, 24–28 October 2022; Springer Nature: Cham, Switzerland, 2023; pp. 443–458. [Google Scholar] [CrossRef]

- Folgado, D.; Barandas, M.; Famiglini, L.; Santos, R.; Cabitza, F.; Gamboa, H. Explainability meets uncertainty quantification: Insights from feature-based model fusion on multimodal time series. Inform. Fusion 2023, 100, 101955. [Google Scholar] [CrossRef]

- Wang, R.; Liu, X.; Hao, F.; Chen, X.; Li, X.; Wang, C.; Niu, D.; Li, M.; Wu, Y. Ada-CCFNet: Classification of multimodal direct immunofluorescence images for membranous nephropathy via adaptive weighted confidence calibration fusion network. Eng. Appl. Artif. Intel. 2023, 117, 105637. [Google Scholar] [CrossRef]

- Peng, X.; Wei, Y.; Deng, A.; Wang, D.; Hu, D. Balanced Multimodal Learning via On-the-fly Gradient Modulation. In Proceedings of the 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), New Orleans, LA, USA, 21–24 June 2022. [Google Scholar] [CrossRef]

- Devlin, J.; Chang, M.W.; Lee, K.; Toutanova, K. Bert: Pre-training of deep bidirectional transformers for language understanding. arXiv 2018, arXiv:1810.04805. [Google Scholar]

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778. [Google Scholar]

- Sensoy, M.; Kaplan, L.; Kandemir, M. Evidential deep learning to quantify classification uncertainty. Adv. Neural Inf. Process. Syst. 2018, 31, 1–31. [Google Scholar]

- Sentz, K.; Ferson, S. Combination of Evidence in Dempster-Shafer Theory; Sandia Nat. Lab.: Albuquerque, NM, USA, 2002. [Google Scholar] [CrossRef]

- Jøsang, A. Subjective Logic; Springer: Berlin/Heidelberg, Germany, 2016; Volume 3. [Google Scholar]

- Gal, Y. Uncertainty in Deep Learning. Ph.D. Thesis, Department of Engineering, University of Cambridge, Cambridge, UK, 2016. [Google Scholar]

- Guo, C.; Pleiss, G.; Sun, Y.; Weinberger, K.Q. On calibration of modern neural networks. In Proceedings of the International Conference on Machine Learning; PMLR: New York, NY, USA, 2017; pp. 1321–1330. [Google Scholar]

- Rere, L.R.; Fanany, M.I.; Arymurthy, A.M. Simulated Annealing Algorithm for Deep Learning. Procedia Comput. Sci. 2015, 72, 137–144. [Google Scholar] [CrossRef]

- Zhang, Q.; Wu, H.; Zhang, C.; Hu, Q.; Fu, H.; Zhou, J.T.; Peng, X. Provable Dynamic Fusion for Low-Quality Multimodal Data. arXiv 2023, arXiv:2306.02050. [Google Scholar]

- Kiela, D.; Bhooshan, S.; Firooz, H.; Perez, E.; Testuggine, D. Supervised multimodal bitransformers for classifying images and text. arXiv 2019, arXiv:1909.02950. [Google Scholar]

- Wang, H.; Li, X.; Ren, Z.; Wang, M.; Ma, C. Multimodal Sentiment Analysis Representations Learning via Contrastive Learning with Condense Attention Fusion. Sensors 2023, 23, 2679. [Google Scholar] [CrossRef]

- Laves, M.H.; Ihler, S.; Kortmann, K.P.; Ortmaier, T. Well-calibrated model uncertainty with temperature scaling for dropout variational inference. arXiv 2019, arXiv:1909.13550. [Google Scholar]

- Zhang, Y.; Jin, R.; Zhou, Z.H. Understanding bag-of-words model: A statistical framework. Int. J. Mach. Learn Cyb. 2010, 1, 43–52. [Google Scholar] [CrossRef]

- Han, Z.; Zhang, C.; Fu, H.; Zhou, J.T. Trusted multi-view classification. arXiv 2021, arXiv:2102.02051. [Google Scholar]

- Han, Z.; Zhang, C.; Fu, H.; Zhou, J.T. Trusted Multi-View Classification With Dynamic Evidential Fusion. IEEE Trans. Pattern Anal. Mach. Intell. 2023, 45, 2551–2566. [Google Scholar] [CrossRef]

- Bao, W.; Yu, Q.; Kong, Y. Evidential deep learning for open set action recognition. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Montreal, QC, Canada, 10–17 October 2021; pp. 13349–13358. [Google Scholar]

- Ma, H.; Han, Z.; Zhang, C.; Fu, H.; Zhou, J.T.; Hu, Q. Trustworthy multimodal regression with mixture of normal-inverse gamma distributions. Adv. Neural Inf. Process. Syst. 2021, 34, 6881–6893. [Google Scholar]

- Verma, V.; Qu, M.; Kawaguchi, K.; Lamb, A.; Bengio, Y.; Kannala, J.; Tang, J. Graphmix: Improved training of gnns for semi-supervised learning. In Proceedings of the AAAI Conference on Artificial Intelligence, Online, 2–9 February 2021; Volume 35, pp. 10024–10032. [Google Scholar]

- Hu, Z.; Tan, B.; Salakhutdinov, R.R.; Mitchell, T.M.; Xing, E.P. Learning data manipulation for augmentation and weighting. Adv. Neural Inf. Process. Syst. 2019, 32, 1–12. [Google Scholar]

- Xie, Z.; Wang, S.I.; Li, J.; Lévy, D.; Nie, A.; Jurafsky, D.; Ng, A.Y. Data noising as smoothing in neural network language models. arXiv 2017, arXiv:1703.02573. [Google Scholar]

| Dataset | Positive | Neutral | Negative | Total |

|---|---|---|---|---|

| MVSA-Single | 2683 | 470 | 1358 | 4511 |

| MVSA-Multiple | 11,318 | 4408 | 1298 | 17,024 |

| MVSA-Single-Small | 1398 | 470 | 724 | 2592 |

| Modality | Model | MVSA-Single | MVSA-Multiple | ||

|---|---|---|---|---|---|

| Acc (%) | F1 (%) | Acc (%) | F1 (%) | ||

| Unimodal | BoW | 51.00 | 48.13 | 66.00 | 59.88 |

| BERT | 71.11 | 69.70 | 67.59 | 66.24 | |

| ResNet | 66.08 | 64.32 | 67.88 | 61.30 | |

| Multimodal | MultiSentiNet | 69.84 | 69.84 * | 68.86 | 68.11 * |

| HSAN | 69.88 | 66.90 * | 67.96 | 67.76 * | |

| Co-MN-Hop6 | 70.51 | 70.01 * | 68.92 | 68.83 * | |

| CFF-ATT | 71.44 | 71.06 * | 69.62 | 69.35 * | |

| ConcatBow | 61.64 | 60.81 | 68.06 | 63.32 | |

| ConcatBert | 68.51 | 67.84 | 69.65 | 65.75 | |

| Late fusion | 74.28 | 73.16 | 69.29 | 65.81 | |

| Se-MLNN | 75.33 | 73.76 | 66.35 | 61.89 | |

| MLFC-SCSupCon | 76.44 | 75.61 | 70.53 | 67.97 | |

| Ours | 77.61 | 76.59 | 72.06 | 68.83 | |

| Modality | Model | MVSA-Single-Small | |

|---|---|---|---|

| Acc (%) | F1 (%) | ||

| Unimodal | BoW | 66.67 | 64.59 |

| BERT | 74.53 | 73.15 | |

| ResNet | 66.08 | 62.21 | |

| Multimodal | ConcatBow | 65.32 | 64.32 |

| ConcatBert | 66.15 | 65.02 | |

| Late fusion | 74.92 | 74.07 | |

| TMC | 75.98 | 75.21 | |

| ETMC | 76.11 | 75.34 | |

| QMF | 78.07 | 76.86 | |

| Ours | 79.38 | 78.06 | |

| ULF | MVSA-Single | MVSA-Multiple | MVSA-Single-Small | ||||

|---|---|---|---|---|---|---|---|

| Acc (%) | F1 (%) | Acc (%) | F1 (%) | Acc (%) | F1 (%) | ||

| ✗ | ✗ | 74.28 | 73.16 | 69.29 | 65.81 | 74.92 | 74.07 |

| ✗ | ✔ | 75.52 | 74.71 | 70.35 | 66.25 | 75.26 | 74.66 |

| ✔ | ✗ | 75.39 | 74.80 | 70.29 | 66.08 | 75.14 | 74.21 |

| ✔ | ✔ | 77.61 | 76.59 | 72.06 | 68.83 | 79.38 | 78.06 |

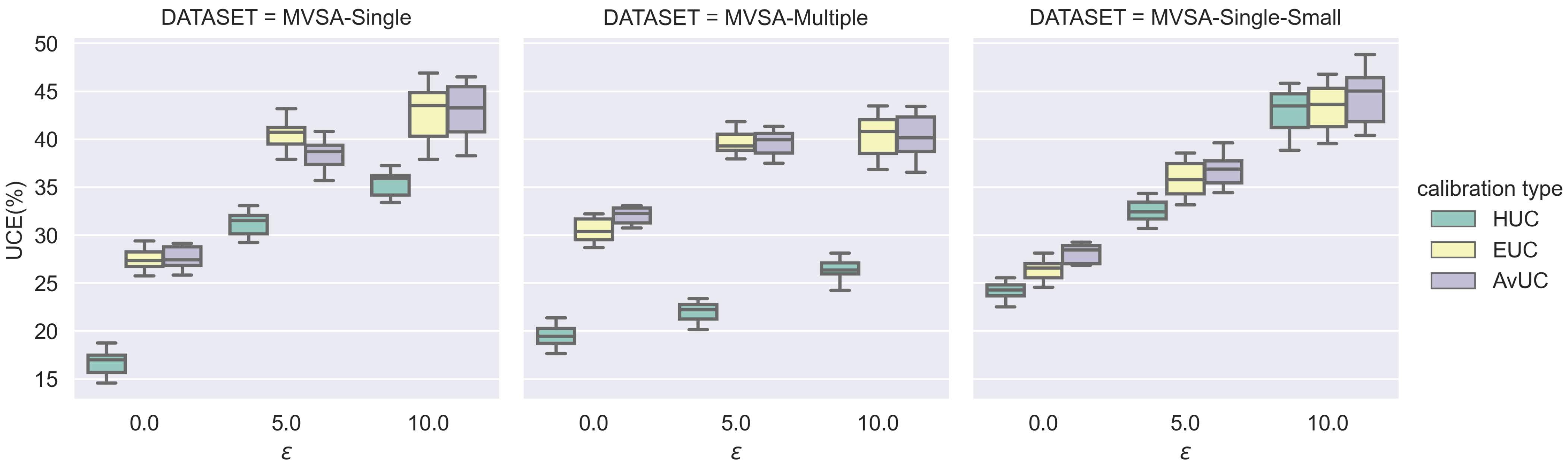

| Noise Type | Model | MVSA-Single | MVSA-Multiple | MVSA-Single-Small | ||||||

|---|---|---|---|---|---|---|---|---|---|---|

| = 0.0 | = 5.0 | = 10.0 | = 0.0 | = 5.0 | = 10.0 | = 0.0 | = 5.0 | = 10.0 | ||

| Gaussian | ULF-HUC (w/o ) | 27.03 | 42.57 | 45.38 | 31.55 | 38.87 | 41.72 | 26.46 | 35.56 | 46.47 |

| ULF-HUC (full) | 16.69 | 31.21 | 35.38 | 19.48 | 21.95 | 26.14 | 24.16 | 32.51 | 42.82 | |

| Salt-Pepper | ULF-HUC (w/o ) | 27.03 | 38.74 | 47.62 | 31.55 | 36.71 | 42.09 | 26.46 | 32.71 | 46.42 |

| ULF-HUC (full) | 16.69 | 30.01 | 35.32 | 19.48 | 21.70 | 25.19 | 24.16 | 30.00 | 41.23 | |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content. |

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Pan, Q.; Meng, Z. Hybrid Uncertainty Calibration for Multimodal Sentiment Analysis. Electronics 2024, 13, 662. https://doi.org/10.3390/electronics13030662

Pan Q, Meng Z. Hybrid Uncertainty Calibration for Multimodal Sentiment Analysis. Electronics. 2024; 13(3):662. https://doi.org/10.3390/electronics13030662

Chicago/Turabian StylePan, Qiuyu, and Zuqiang Meng. 2024. "Hybrid Uncertainty Calibration for Multimodal Sentiment Analysis" Electronics 13, no. 3: 662. https://doi.org/10.3390/electronics13030662

APA StylePan, Q., & Meng, Z. (2024). Hybrid Uncertainty Calibration for Multimodal Sentiment Analysis. Electronics, 13(3), 662. https://doi.org/10.3390/electronics13030662