A Multi-Channel Δ-BiLSTM Framework for Short-Term Bus Load Forecasting Based on VMD and LOWESS

Abstract

1. Introduction

- (1) Multi-scale/multi-phase coupling. Single-channel end-to-end models struggle to capture trends, seasonality, and pulses simultaneously, and typically trade one off against the others. Modeling every component after decomposition tends to dilute the effective signal and increases the risk of overfitting [18,19].

- (2) Mismatch between forecasting targets and actual scheduling requirements. Minimizing point-value prediction error alone does not adequately constrain the rate of change or ramp behavior, so errors concentrate in peak and abrupt-change periods [20].

- (3)
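The point made in item (2) can be illustrated with a toy example. The sketch below is not taken from the paper: the series, the two hypothetical forecasts, and the helper names `rmse` and `ramp_rmse` are all assumptions chosen for illustration. It shows two forecasts with identical point-value RMSE whose step-to-step (ramp) errors differ sharply.

```python
import numpy as np

def rmse(a, b):
    """Root-mean-square error between two equally long sequences."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    return float(np.sqrt(np.mean((a - b) ** 2)))

def ramp_rmse(y_true, y_pred):
    """RMSE between first differences, i.e. the error in step-to-step change."""
    return rmse(np.diff(y_true), np.diff(y_pred))

# Hypothetical load series with one ramp up and one ramp down.
y_true = np.array([10.0, 10.0, 14.0, 14.0, 10.0, 10.0])

# Forecast A: constant +1 offset, so every ramp is reproduced exactly.
pred_a = y_true + 1.0

# Forecast B: errors of the same magnitude but alternating sign,
# so the predicted ramps are distorted at every step.
pred_b = y_true + np.array([1.0, -1.0, 1.0, -1.0, 1.0, -1.0])

print("A: point RMSE =", rmse(y_true, pred_a), " ramp RMSE =", ramp_rmse(y_true, pred_a))
print("B: point RMSE =", rmse(y_true, pred_b), " ramp RMSE =", ramp_rmse(y_true, pred_b))
```

Both forecasts score the same on a point-value loss, yet only forecast A preserves the ramps. This is the kind of gap a difference-based (Δ) target is meant to address.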

2. Methodological Foundations

2.1. Variational Mode Decomposition

2.1.1. Sub-Sequence Analytic Signal and Frequency Mixing

2.1.2. Sub-Sequence Baseband Minimum Bandwidth Model

2.1.3. Sub-Sequence Smoothing Optimization

2.1.4. Multi-Channel Input and Leakage Prevention

2.2. Recurrent Neural Networks

2.2.1. LSTM Unit

2.2.2. BiLSTM Architecture

2.2.3. Δ-Target and Multi-Channel Sample Organization

2.3. Channel Value Metrics and Selection

2.3.1. Forward Correlation

2.3.2. Redundancy-Aware Channel Subset Selection

2.4. Lightweight Bayesian Optimization and Stable Training

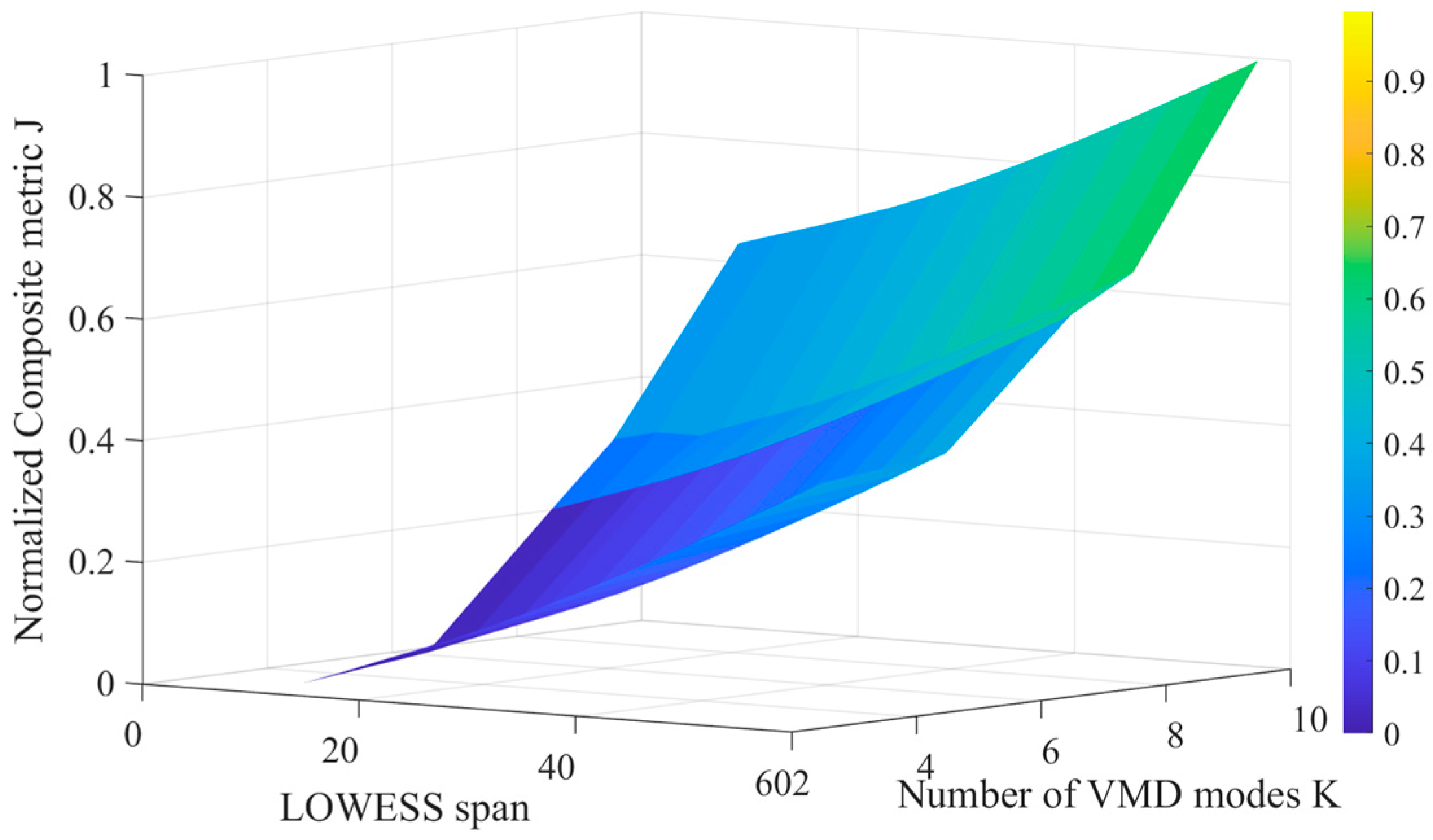

2.4.1. Objective Functions and Evaluation Protocol

2.4.2. Search Space and Lightweight Search Strategy

2.4.3. Stable Training and Reproducibility Protocols

3. Combined Forecasting Framework

3.1. Overall Procedure and End-to-End Mapping

3.2. Training and Validation Protocol

3.3. Complexity Analysis

4. Case Study Analysis

4.1. Time Series Decomposition and Residual Smoothing

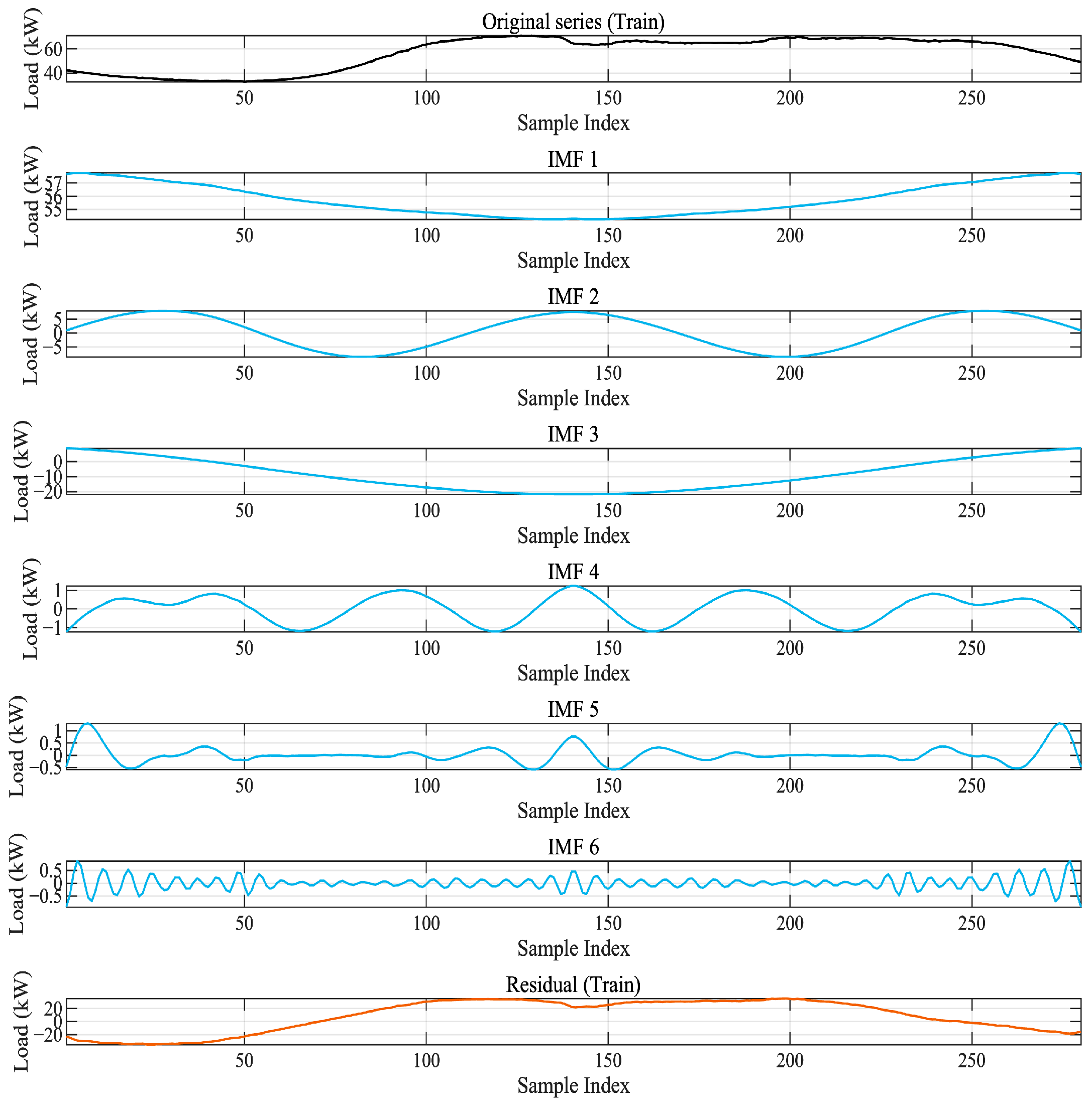

4.1.1. VMD Results and Multi-Scale Features

4.1.2. Channel Value Evaluation and Selection

- (i) Forward correlation

- (ii) Energy ratio

- (iii) Selection rule (Top-M)

4.1.3. Residual LOWESS and Spike Suppression

4.2. Experimental Setup and Evaluation

4.2.1. Δ Target Definition and Experimental Protocol

4.2.2. Single-Step Prediction Result Analysis

4.2.3. Multi-Step Forecasting Error Propagation

4.2.4. Time Window and Step Length Sensitivity Analysis

4.3. Results and Discussion

4.3.1. Model Comparison and Advantages

4.3.2. Robustness Analysis

4.3.3. Error Distribution Characteristics and Prediction Comparison of Multiple Algorithms

4.3.4. Segment-Wise Improvement Under Different Operating Conditions

4.3.5. Corresponding Effects of Contribution Points

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Eren, Y.; Küçükdemiral, I. A comprehensive review on deep learning approaches for short-term load forecasting. Renew. Sustain. Energy Rev. 2024, 189, 114031.
- Morais, L.B.S.; Aquila, G.; de Faria, V.A.D.; Lima, L.M.M.; Lima, J.W.M.; de Queiroz, A.R. Short-term load forecasting using neural networks and global climate models. Appl. Energy 2023, 348, 121439.
- Wang, C.; Zhao, H.; Liu, Y.; Fan, G. Minute-level ultra-short-term power load forecasting based on time-series data features. Appl. Energy 2024, 372, 123801.
- Chan, J.W.; Yeo, C.K. A Transformer-based approach to electricity load forecasting. Electr. J. 2024, 37, 107370.
- Hasan, M.; Mifta, Z.; Papiya, S.J.; Roy, P.; Dey, P.; Salsabil, N.A.; Chowdhury, N.-U.; Farrok, O. A State-of-the-Art Comparative Review of Load Forecasting Methods: Characteristics, Perspectives, and Applications. Energy Convers. Manag. X 2025, 26, 100922.
- Iqbal, M.S.; Adnan, M.; Mohamed, S.E.G.; Tariq, M. A hybrid deep learning framework for short-term load forecasting. Results Eng. 2024, 22, 103560.
- Fan, G.-F.; Han, Y.-Y.; Li, J.-W.; Peng, L.-L.; Yeh, Y.-H.; Hong, W.-C. A hybrid model for deep learning short-term power load forecasting. Expert Syst. Appl. 2024, 234, 122012.
- Zhong, B.; Yang, L.; Li, B.; Ji, M. Short-term power grid load forecasting based on VMD-SE-BiLSTM-Attention hybrid model. Int. J. Low-Carbon Technol. 2024, 19, 1951–1958.
- Wang, X.; Jiang, H.; Wu, Z.; Yang, Q. Adaptive variational autoencoding generative adversarial networks for rolling bearing fault diagnosis. Adv. Eng. Inform. 2023, 56, 102027.
- Wang, X.; Jiang, H.; Zeng, T.; Dong, Y. An adaptive fused domain cycling variational generative adversarial network for machine fault diagnosis under data scarcity. Inf. Fusion 2025, 56, 103616.
- Yan, J.; Cheng, Y.; Zhang, F.; Zhou, N.; Wang, H.; Jin, B.; Wang, M.; Zhang, W. Multimodal imitation learning for arc detection in complex railway environments. IEEE Trans. Instrum. Meas. 2025, 74, 1–13.
- Dong, Z.; Tian, Z.; Lv, S. Decomposition and weighted correction for short-term load forecasting. Appl. Soft Comput. 2025, 162, 111863.
- Han, M.; Lee, J.-S. IVMD-BiLSTM with differential mechanism for load forecasting. Appl. Soft Comput. 2024, 152, 110657.
- Zare, M.; Nouri, N.M. End-effects mitigation in empirical mode decomposition. Mech. Syst. Signal Process. 2023, 200, 110205.
- Yu, M.; Yuan, H.; Li, K.; Deng, L. Noise cancellation via TVF-EMD with adaptive thresholding. Algorithms 2023, 16, 296.
- Shalby, E.M.; Abdelaziz, A.Y.; Ahmed, E.S.; Rashad, B.A.-E. A guide to selecting wavelet decomposition level and functions. Sci. Rep. 2025, 15, 82025.
- Coroneo, L.; Iacone, F. Testing for equal predictive accuracy with strong dependence. Int. J. Forecast. 2025, 41, 1073–1092.
- Guenoukpati, A.; Agbessi, A.P.; Salami, A.A.; Bakpo, Y.A. Hybrid Long Short-Term Memory Wavelet Transform Models for Short-Term Electricity Load Forecasting. Energies 2024, 17, 4914.
- Wang, Y.; Li, H.; Jahanger, A.; Li, Q.; Wang, B.; Balsalobre-Lorente, D. A novel ensemble electricity load forecasting system based on a decomposition-selection-optimization strategy. Energy 2024, 312, 133524.
- Li, H.; Li, S.; Wu, Y.; Xiao, Y.; Pan, Z.; Liu, M. Short-term power load forecasting for integrated energy system based on a residual and attentive LSTM-TCN hybrid network. Front. Energy Res. 2024, 12, 1384142.
- Harvey, D.I.; Leybourne, S.J.; Zu, Y. Tests for equal forecast accuracy under heteroskedasticity. J. Appl. Econ. 2024, 39, 850–869.
- Harvey, D.I.; Leybourne, S.J.; Zu, Y. Testing for equal average forecast accuracy in possibly unstable environments. J. Bus. Econ. Stat. 2024, 43, 643–656.
- Liu, F.; Chen, L.; Zheng, Y.; Feng, Y. A Prediction Method with Data Leakage Suppression for Time Series. Electronics 2022, 11, 3701.
- Wu, Y.; Meng, X.; Zhang, J.; He, Y.; Romo, J.A.; Dong, Y.; Lu, D. Effective LSTMs with seasonal-trend decomposition (STL, LOESS) and adaptive learning. Expert Syst. Appl. 2024, 236, 121202.
- Zhuang, Q.; Gao, L.; Zhang, F.; Ren, X.; Qin, L.; Wang, Y. MIVNDN: Ultra-Short-Term Wind Power Prediction Method with MSDBO-ICEEMDAN-VMD-Nons-DCTransformer Net. Electronics 2024, 13, 4829.
- Li, K.; Huang, W.; Hu, G.; Li, J. Ultra-short-term power load forecasting based on CEEMDAN-SE + LSTM. Energy Build. 2023, 279, 112666.
- Sun, H.; Yu, Z.; Zhang, B. Research on short-term power load forecasting based on VMD + GRU/CNN-Attention. PLoS ONE 2024, 19, e0306566.
- Ouyang, T.; He, Y.; Li, H.; Sun, Z.; Baek, S. Modeling and forecasting short-term power load with copula model and deep belief network. IEEE Trans. Emerg. Top. Comput. Intell. 2019, 3, 127–136.
- Zelios, V.; Mastorocostas, P.; Kandilogiannakis, G.; Kesidis, A.; Tselenti, P.; Voulodimos, A. Short-Term Electric Load Forecasting Using Deep Learning: A Case Study in Greece with RNN, LSTM, and GRU Networks. Electronics 2025, 14, 2820.

| Component | Correlation | Energy Ratio | Selected |
|---|---|---|---|
| IMF1 | 0.3426509 | 0.78173379 | 1 |
| IMF2 | 0.5236128 | 0.00835674 | 1 |
| IMF3 | 0.3203291 | 0.04366188 | 1 |
| IMF4 | 0.0184756 | 0.00012883 | 0 |
| IMF5 | 0.2062651 | 3.0151668 | 1 |
| IMF6 | 0.0216455 | 1.5061263 | 1 |
| Residual | 0.198236 | 0.166074 | 1 |

| h | Hidden | lr | Dropout | L2 | Clip | Epochs | Patience | Batch | Seed |
|---|---|---|---|---|---|---|---|---|---|
| 1 | 64 | 0.001 | 0.2 | 1.00 × 10⁻⁵ | 1 | 60 | 8 | 64 | 123 |
| 2 | 64 | 0.001 | 0.2 | 1.00 × 10⁻⁵ | 1 | 60 | 8 | 64 | 123 |
| 3 | 64 | 0.001 | 0.2 | 1.00 × 10⁻⁵ | 1 | 60 | 8 | 64 | 123 |

| h | RMSE | MAE | R² | sMAPE |
|---|---|---|---|---|
| 1 | 0.509282 | 0.408892 | 0.998107 | 0.922703 |
| 2 | 1.661738 | 1.431842 | 0.979848 | 2.940043 |
| 3 | 1.525787 | 1.295397 | 0.98301 | 2.896958 |
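For readers who want to recompute the four columns above on their own forecasts, the following is a minimal sketch of the usual definitions. The source does not spell out its formulas here, so the sMAPE variant (symmetric denominator `|y| + |ŷ|`, scaled to percent) and the function name `forecast_metrics` are assumptions.

```python
import numpy as np

def forecast_metrics(y_true, y_pred):
    """Return RMSE, MAE, R^2 and sMAPE (percent) for one forecast horizon."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    err = y_pred - y_true

    rmse = float(np.sqrt(np.mean(err ** 2)))
    mae = float(np.mean(np.abs(err)))

    # Coefficient of determination: 1 - residual sum of squares / total sum of squares.
    ss_res = float(np.sum(err ** 2))
    ss_tot = float(np.sum((y_true - y_true.mean()) ** 2))
    r2 = 1.0 - ss_res / ss_tot

    # Assumed sMAPE variant; other papers divide the denominator by 2 or omit the 100.
    smape = float(100.0 * np.mean(2.0 * np.abs(err) / (np.abs(y_true) + np.abs(y_pred))))

    return {"RMSE": rmse, "MAE": mae, "R2": r2, "sMAPE": smape}

# Hypothetical values, for illustration only.
print(forecast_metrics([100.0, 110.0, 120.0], [102.0, 108.0, 120.0]))
```

Because RMSE and MAE are scale-dependent while R² and sMAPE are not, reporting all four, as the table does, helps separate absolute error from goodness of fit.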

| L | h_max | RMSE_weight | RMSE_h1 | RMSE_h2 | RMSE_h3 | RMSE_std |
|---|---|---|---|---|---|---|
| 28 | 1 | 0.853788 | 0.853788 | - | - | 0.0 |
| 28 | 2 | 1.537469 | 0.841834 | 1.885286 | - | 0.737832 |
| 28 | 3 | 2.085647 | 0.825713 | 1.705126 | 2.759306 | 0.968112 |
| 56 | 1 | 0.373426 | 0.373426 | - | - | 0.0 |
| 56 | 2 | 0.576676 | 0.396564 | 0.666732 | - | 0.191037 |
| 56 | 3 | 0.8369 | 0.408113 | 0.643933 | 1.108469 | 0.35634 |
| 112 | 1 | 0.394342 | 0.394342 | - | - | 0.0 |
| 112 | 2 | 0.812356 | 0.458781 | 0.909494 | - | 0.375977 |
| 112 | 3 | 1.354393 | 0.564388 | 0.997245 | 1.855827 | 0.657311 |
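The two summary columns in this table can be reproduced from the per-horizon RMSE values. The definitions below are inferred from the tabulated numbers rather than stated in the text: the weighted RMSE appears to be an average with weights proportional to the horizon index h, and the spread appears to be the sample standard deviation (`ddof=1`) across horizons. The function name `horizon_summary` is hypothetical.

```python
import numpy as np

def horizon_summary(rmse_by_h):
    """Summarize per-horizon RMSEs given in order h = 1, 2, ..., h_max.

    Returns (weighted_rmse, rmse_std), where
      weighted_rmse = sum(h * RMSE_h) / sum(h)   (inferred weighting, an assumption)
      rmse_std      = sample standard deviation across horizons (0.0 if h_max == 1)
    """
    r = np.asarray(rmse_by_h, dtype=float)
    h = np.arange(1, len(r) + 1)
    weighted = float(np.sum(h * r) / np.sum(h))
    spread = float(np.std(r, ddof=1)) if len(r) > 1 else 0.0
    return weighted, spread

# Row L = 28, h_max = 3 from the table above.
weighted, spread = horizon_summary([0.825713, 1.705126, 2.759306])
print(f"weighted RMSE = {weighted:.6f}, std = {spread:.6f}")

# Row L = 56, h_max = 2.
print(horizon_summary([0.396564, 0.666732]))
```

Under this reading, later horizons count more heavily in the weighted score, which is consistent with L = 56 ranking best for every h_max in the table.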

| h | Model | RMSE | MAE | sMAPE | R² |
|---|---|---|---|---|---|
| 1 | Δ-BiLSTM | 0.7389 | 0.6055 | 1.1648 | 0.9972 |
| 1 | SVM | 2.3686 | 2.1735 | 4.5011 | 0.9716 |
| 1 | RawLSTM | 2.3847 | 2.0314 | 4.0968 | 0.9712 |
| 1 | RNN | 2.9761 | 2.1992 | 4.2940 | 0.9552 |
| 1 | ARIMA | 2.1529 | 1.9474 | 4.1697 | 0.9766 |
| 2 | Δ-BiLSTM | 1.3095 | 1.0822 | 2.0845 | 0.9913 |
| 2 | SVM | 2.3284 | 2.1413 | 4.3742 | 0.9725 |
| 2 | RawLSTM | 2.1514 | 2.0558 | 4.2051 | 0.9765 |
| 2 | RNN | 3.0320 | 2.2967 | 4.5144 | 0.9534 |
| 2 | ARIMA | 2.1211 | 1.9634 | 4.0636 | 0.9772 |
| 3 | Δ-BiLSTM | 1.8737 | 1.5495 | 2.9898 | 0.9822 |
| 3 | SVM | 1.8227 | 1.6084 | 3.3730 | 0.9831 |
| 3 | RawLSTM | 2.0252 | 1.7485 | 3.3213 | 0.9792 |
| 3 | RNN | 3.2521 | 2.4447 | 4.8504 | 0.9463 |
| 3 | ARIMA | 2.1830 | 1.8968 | 4.2016 | 0.9758 |
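To make the comparison easier to read, the RMSE column can be turned into relative improvements of Δ-BiLSTM over each baseline. The sketch below only rearranges numbers copied from the table above. The helper `rel_improvement` and the dictionary `rmse_table` are illustrative names, not part of the paper.

```python
# RMSE values copied from the comparison table: horizon h -> model -> RMSE.
rmse_table = {
    1: {"Δ-BiLSTM": 0.7389, "SVM": 2.3686, "RawLSTM": 2.3847, "RNN": 2.9761, "ARIMA": 2.1529},
    2: {"Δ-BiLSTM": 1.3095, "SVM": 2.3284, "RawLSTM": 2.1514, "RNN": 3.0320, "ARIMA": 2.1211},
    3: {"Δ-BiLSTM": 1.8737, "SVM": 1.8227, "RawLSTM": 2.0252, "RNN": 3.2521, "ARIMA": 2.1830},
}

def rel_improvement(baseline, proposed):
    """Percentage reduction in error relative to the baseline (negative = worse)."""
    return 100.0 * (baseline - proposed) / baseline

for h, row in rmse_table.items():
    ours = row["Δ-BiLSTM"]
    for model, value in row.items():
        if model == "Δ-BiLSTM":
            continue
        print(f"h={h}  vs {model:8s}: {rel_improvement(value, ours):6.2f} % RMSE reduction")
```

Read this way, the RMSE advantage is largest at h = 1 and narrows with the horizon. At h = 3 the SVM baseline has a slightly lower RMSE (1.8227 versus 1.8737), although Δ-BiLSTM still has the lower MAE and sMAPE in the table.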

| h | Model | RMSE | MAE | sMAPE | R² |
|---|---|---|---|---|---|
| 1 | Δ-BiLSTM | 0.7389 | 0.6055 | 1.1648 | 0.9972 |
| 1 | Abl_w_o_Delta | 0.7977 | 0.6981 | 1.3982 | 0.9816 |
| 1 | Abl_w_o_ChSel | 0.7716 | 0.6717 | 1.3717 | 0.9828 |
| 1 | Abl_w_o_LOWESS | 0.8714 | 0.7716 | 1.4716 | 0.9713 |
| 1 | Abl_w_o_BiLSTM | 0.9554 | 0.7554 | 1.5554 | 0.9668 |
| 2 | Δ-BiLSTM | 1.3095 | 1.0822 | 2.0845 | 0.9913 |
| 2 | Abl_w_o_Delta | 1.4915 | 1.0917 | 2.1919 | 0.9727 |
| 2 | Abl_w_o_ChSel | 1.4728 | 1.0872 | 2.1629 | 0.9866 |
| 2 | Abl_w_o_LOWESS | 1.5766 | 1.1267 | 2.2767 | 0.9734 |
| 2 | Abl_w_o_BiLSTM | 1.6534 | 1.1536 | 2.3537 | 0.9675 |
| 3 | Δ-BiLSTM | 1.8737 | 1.5495 | 2.9898 | 0.9822 |
| 3 | Abl_w_o_Delta | 1.9427 | 1.6101 | 3.2102 | 0.9733 |
| 3 | Abl_w_o_ChSel | 1.9335 | 1.6047 | 3.2075 | 0.9793 |
| 3 | Abl_w_o_LOWESS | 1.9796 | 1.8236 | 3.6727 | 0.9705 |
| 3 | Abl_w_o_BiLSTM | 1.9866 | 1.8467 | 3.6947 | 0.9659 |


© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Guo, Y.; Wang, L.; Zhao, J. A Multi-Channel Δ-BiLSTM Framework for Short-Term Bus Load Forecasting Based on VMD and LOWESS. Electronics 2025, 14, 4772. https://doi.org/10.3390/electronics14234772