1. Introduction

The concept of a smart city was first proposed in the 1990s, referring to the use of Information and Communications Technologies (ICT) and modern infrastructure within cities [1]. Because its purpose is to improve citizens’ quality of life, the smart city is conceptualized as a scenario in which citizens are at the center of all applications and services [2]. In this context, many cities, such as Shenzhen (China), Busan (South Korea), Santander (Spain), Chicago (United States), and Milton Keynes (United Kingdom), are striving to become smarter by using ICT to optimize various aspects of city operation and management.

Smart healthcare plays an important role in building smart cities because it concerns a basic aspect of people’s livelihood. Although many countries have improved their healthcare systems and thereby increased average life expectancy, it remains very difficult to provide proper care for older people, because frequent job changes have greatly increased the average distance between family members (children and parents). Furthermore, falling has been regarded as one of the most significant issues faced by older people: a fall can be very dangerous and often results in severe health problems [3]. Therefore, fall detection in smart healthcare has attracted considerable attention from researchers and medical institutions worldwide.

At present, several methods are used for fall detection. From the perspective of the detection pathway, they usually fall into three types: wearable sensor based, site environment installation based, and video installation based [4]. The first type obtains the fall signal from sensors worn on the body; the second type determines whether an older person has fallen according to both the site environment and body posture; the third type uses video surveillance to continuously monitor actions and analyze the fall probability. The video surveillance based pathway is the most popular, because it does not excessively interfere with the daily life of older people. Furthermore, from the perspective of detection devices, these methods can usually be divided into two forms, i.e., sensors and smart devices [5]. However, body-worn sensors have limitations such as inconvenience and lack of synchronization. Thus, more and more researchers are inclined to use smart devices, which have become widely popular with the rapid development of the Internet of Things (IoT). Nowadays, the smartphone is a good choice for fall detection. Based on the above, combining video surveillance with smartphones appears to be a relatively good fall detection solution.

In fact, however, if a smartphone is used, it is unnecessary to analyze the fall probability via video surveillance, because continuous monitoring consumes considerable network traffic and easily leads to delay jitter [6]. Instead, we can analyze the data collected by the smartphone and make a timely judgment. Even so, previous fall detection strategies find it very difficult to achieve intelligent detection for the following three reasons: (i) data collection cannot reach the real-time level; (ii) the adopted detection methods are not sufficiently stable; and (iii) the computation overhead of the collection device is very high, which leads to unsatisfactory detection results [7,8,9]. Therefore, it is of considerable significance to study a novel smartphone-based fall detection method.

Nevertheless, the storage capacity of a smartphone is limited, which prevents it from storing much data. Its computation resources are also limited, which prevents it from computing efficiently. Under such conditions, the analysis cannot achieve high accuracy. To this end, other devices with large storage capacity are needed to store a large proportion of the smartphone’s data and to support high-performance computing. In this regard, the edge computing [10,11,12] framework can be considered a candidate solution, in which edge servers provide storage and computation resources.

With the above considerations, this paper proposes a Convolutional Neural Network (CNN)-based fall detection strategy with edge computing, called EdCNN. In particular, the global network view of Software-Defined Networking (SDN) is used to collect the data generated by smartphones. The contributions of this paper are as follows: (i) edge computing is exploited to offload some computation tasks to the edge server through a scheduling technique; (ii) CNNs are deployed on both the edge server and the smartphone to train on the related data and guide fall detection; and (iii) simulation experiments testing the accurate rate, transmission delay, and stability demonstrate the efficiency of EdCNN. To the best of our knowledge, this paper is the first to perform fall detection combining SDN, edge computing, and CNN.

The rest of this paper is structured as follows. Section 2 reviews the existing literature. Section 3 presents the system framework of EdCNN. Section 4 proposes a scheduling strategy. Section 5 introduces the CNN-based data training method. Section 6 shows the experimental results. Section 7 discusses and concludes this paper.

2. Literature Review

There have been a number of research papers on fall detection. For example, Igual et al. [13] reviewed 327 related studies, concentrating mainly on context-aware systems, including those based on cameras, floor sensors, microphones, and pressure sensors. They emphasized that no standardized context-aware technique had been widely accepted by the research community in this field, even though researchers had adopted many feature extraction and machine learning techniques. Ward et al. [14] made a comprehensive review of fall detection from the perspective of applications, dividing the techniques into manually operated devices, body-worn automatic alarm systems, and devices that detect changes that might increase the risk of falling. In addition, Refs. [15,16,17,18,19,20] also presented surveys addressing similar issues. Different from them, this paper reviews the latest representative research achievements since 2017.

In [21], a comprehensive bounding box and a dynamic state machine were combined in a new way to detect falls. The approach tracked and analyzed continuous streams of visual images to automatically predict a fall event before the fall state, in a single phase instead of the typical two phases. In [22], the authors regarded fall detection as an instance of action detection and proposed locating its temporal extent by exploiting deep networks. In the training stage, trimmed video clips of the four phases of a fall (standing, falling, fallen, and not moving) were converted into four categories of so-called dynamic images, which were used to train a model that predicts the label of each dynamic image. In the testing stage, a set of sub-videos was generated by sliding a window over an untrimmed video. In [23], human falls were detected using the built-in inertial measurement unit (IMU) sensors of a smartphone attached to the body, with the signals wirelessly transmitted to a remote PC for processing; a threshold-based fall detection algorithm was implemented, while a supervised machine learning algorithm was used to classify activities of daily living. In [24], a new dataset of movement traces, acquired through systematic emulation of a set of predefined activities of daily living and falls, was described to provide a reference for research.
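The threshold rule used in [23] is not detailed here. As a rough illustration of how threshold-based accelerometer detectors commonly work, the sketch below flags a fall when a free-fall phase (acceleration magnitude well below 1 g) is followed shortly by an impact (magnitude well above 1 g). The thresholds `low_g` and `high_g`, the window `max_gap`, and the assumed 100 Hz sampling rate are hypothetical placeholders, not values from [23].

```python
import math
from typing import Sequence, Tuple

G = 9.81  # standard gravity, m/s^2


def magnitude(sample: Tuple[float, float, float]) -> float:
    """Euclidean norm of one 3-axis accelerometer sample (m/s^2)."""
    x, y, z = sample
    return math.sqrt(x * x + y * y + z * z)


def detect_fall(samples: Sequence[Tuple[float, float, float]],
                low_g: float = 0.5,   # hypothetical free-fall threshold, in g
                high_g: float = 2.5,  # hypothetical impact threshold, in g
                max_gap: int = 50) -> bool:
    """Return True if free fall is followed by an impact within max_gap samples.

    max_gap = 50 corresponds to 0.5 s at an assumed 100 Hz sampling rate.
    """
    freefall_at = None
    for i, s in enumerate(samples):
        a = magnitude(s) / G
        if a < low_g:
            freefall_at = i  # remember the most recent free-fall sample
        elif a > high_g and freefall_at is not None and i - freefall_at <= max_gap:
            return True
    return False


# Hypothetical 100 Hz trace: standing still, about 0.2 s of free fall, one impact spike.
standing = [(0.0, 0.0, 9.81)] * 100
trace = standing + [(0.0, 0.0, 2.0)] * 20 + [(0.0, 0.0, 30.0)] + standing
print(detect_fall(trace))     # True
print(detect_fall(standing))  # False
```

Requiring the free-fall phase before the impact is what lets such a rule ignore isolated spikes, for example a single hard step, that exceed `high_g` on their own.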

In [25], the authors concentrated on the energy efficiency of a wearable sensor node and proposed the design of a tiny, lightweight, flexible, and energy-efficient wearable device, studying how different parameters (e.g., sampling rate, communication bus interface, transmission protocol, and transmission rate) affect its energy consumption. In [26], a framework that analyzes environmental sounds using acoustic local ternary patterns was proposed, which suppressed silent zones in sound signals and separated overlapping sounds. Specifically, acoustic features were extracted from the separated source components using acoustic local ternary patterns, and fall events were then detected by a support vector machine based classifier. In [27], an intelligent system that detects human falls using a physics-based myoskeletal simulation was proposed; it demonstrated that fall recordings are unnecessary for modeling falls, since the simulation engine can produce a variety of fall events customized to an individual’s physical characteristics. In [28], a fuzzy logic-based fall detection algorithm was developed to process the output signals of an accelerometer and a sound sensor, where a valid fall activity detected by the accelerometer, coupled with the sound pressure detected from the resulting impact, indicated the occurrence of a valid fall. In [29], a methodology for acquiring and preprocessing measurement data from infrared depth sensors was proposed, in which processing starts by extracting the silhouette from the depth image and estimating the coordinates of its center.
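The accelerometer-plus-sound fusion in [28] can be pictured as a single fuzzy rule: "IF impact is strong AND sound is loud THEN fall". The sketch below is a simplified illustration of that idea, not the algorithm of [28]; the ramp-shaped membership functions, their breakpoints, and the 0.5 decision cut are hypothetical choices.

```python
def ramp(x: float, lo: float, hi: float) -> float:
    """Membership that rises linearly from 0 at `lo` to 1 at `hi`."""
    if x <= lo:
        return 0.0
    if x >= hi:
        return 1.0
    return (x - lo) / (hi - lo)


def fall_degree(peak_accel_g: float, sound_db: float) -> float:
    """Degree to which 'strong impact AND loud sound' holds (fuzzy AND = min)."""
    strong_impact = ramp(peak_accel_g, 1.5, 3.0)  # hypothetical breakpoints, in g
    loud_sound = ramp(sound_db, 60.0, 80.0)       # hypothetical breakpoints, in dB
    return min(strong_impact, loud_sound)


def is_valid_fall(peak_accel_g: float, sound_db: float, cut: float = 0.5) -> bool:
    """Crisp decision: report a fall when the fuzzy degree reaches the hypothetical `cut`."""
    return fall_degree(peak_accel_g, sound_db) >= cut


print(is_valid_fall(3.5, 85.0))  # True: strong impact with a loud sound
print(is_valid_fall(3.5, 50.0))  # False: strong impact but no matching sound
```

Using `min` as the fuzzy AND means both sensors must agree before a fall is reported, which mirrors the idea in [28] that an accelerometer event alone is not treated as a valid fall.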

In [30], a hierarchical classifier based on Fisher discriminant analysis was developed to improve detection accuracy and reduce false alarms; it divided human activities into three categories: non-fall, backward fall, and forward fall. In [31], a computer vision based framework was proposed to detect falls from surveillance videos. It introduced a novel three-stream CNN as the event classifier, where silhouettes and their motion history images served as input to the first two streams, while dynamic images, whose temporal duration equaled that of the motion history images, were used as input to the third stream. In [32], the authors presented an automated analysis algorithm for remote detection of high-impact falls, based on a physical model of a fall and aiming at universality and robustness. In [33], a fall detection system based on 2D CNN inference and multiple cameras was devised. This approach analyzed images in fixed time windows and extracted features using an optical flow method, which captures the relative motion between two consecutive images. In [34], the authors presented the design of embedded software for wearable devices connected wirelessly to a remote monitoring system. In particular, the work proposed embedding a recurrent neural network architecture on a microcontroller unit. Furthermore, to assess the feasibility of such a resource-constrained deep learning approach, the work presented a few general formulas for determining memory occupation, computational load, and power consumption. In [35], a new fall detection system relying on different signals acquired from multiple wearable sensors was proposed. The system used the covariance of the raw signals and a nearest neighbor classifier, and it employed the covariance matrix as a straightforward means of fusing signals from multiple sensors to enhance classification performance. In [36], the authors combined ensemble stacked autoencoders with one-class classification based on the convex hull to propose a novel intelligent fall detection method using accelerometer data from a wrist-worn smartwatch. In this method, the former was adopted for unsupervised feature extraction, to overcome the disadvantages of manual feature extraction, while the latter was used for pattern recognition.
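The covariance-based fusion in [35] describes each signal window by the covariance matrix of its raw channels and then classifies it with a nearest neighbor rule. The sketch below shows that idea in plain Python. Comparing covariance matrices with the Frobenius distance is a simplifying assumption: the distance used in [35] is not stated here, and covariance-based classifiers often use a Riemannian metric instead. The windows and labels are hypothetical toy data.

```python
from typing import List, Sequence, Tuple

Matrix = List[List[float]]


def covariance(window: Sequence[Sequence[float]]) -> Matrix:
    """Sample covariance of a signal window.

    Rows are time steps and columns are channels; stacking the channels of
    several sensors side by side fuses them into a single C x C descriptor.
    """
    n, c = len(window), len(window[0])
    means = [sum(row[j] for row in window) / n for j in range(c)]
    cov = [[0.0] * c for _ in range(c)]
    for row in window:
        dev = [row[j] - means[j] for j in range(c)]
        for a in range(c):
            for b in range(c):
                cov[a][b] += dev[a] * dev[b]
    return [[v / (n - 1) for v in r] for r in cov]


def frobenius(a: Matrix, b: Matrix) -> float:
    """Frobenius distance (an assumed, simple choice of matrix distance)."""
    return sum((x - y) ** 2 for ra, rb in zip(a, b) for x, y in zip(ra, rb)) ** 0.5


def nearest_neighbor(query: Matrix, train: Sequence[Tuple[Matrix, str]]) -> str:
    """Return the label of the training covariance closest to `query`."""
    return min(train, key=lambda item: frobenius(query, item[0]))[1]


# Hypothetical two-channel windows (e.g. one axis from each of two sensors).
calm = [[0.0, 0.1], [0.1, 0.0], [0.0, 0.1], [0.1, 0.0]]
violent = [[0.0, 5.0], [5.0, 0.0], [0.0, 5.0], [5.0, 0.0]]
train = [(covariance(calm), "daily activity"), (covariance(violent), "fall")]
query = [[0.0, 4.0], [4.0, 0.0], [0.0, 4.5], [4.5, 0.0]]
label = nearest_neighbor(covariance(query), train)
print(label)  # fall
```

The off-diagonal entries carry the cross-sensor correlations, which is why a single covariance matrix can serve as the fusion step without hand-crafted features.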

In [37], the authors presented a dedicated system that uses RF signals to detect falls caused by complications in hemodialysis patients. In particular, they designed a residual feature extraction algorithm based on a hemodialysis patient safety process model, and treated fall detection for hemodialysis patients as a machine learning problem in which four classification models were built by learning the residual feature space. In [38], an innovative, highly efficient intelligent system based on a fog-cloud computing architecture was proposed to detect falls in a timely manner using deep learning techniques deployed on resource-constrained fog nodes. In [39], the authors devised a scalable system architecture that could monitor thousands of older adults, detect falls, and notify caregivers, and they evaluated the suitability of several machine learning models for the detection process. In [40], a centralized, unobtrusive, IoT-based, device-type-invariant fall detection and rescue system was proposed for monitoring a large population in real time; any type of device could be used for monitoring in this system. In [41], the authors introduced an effective, optimized fall detection system based on an AlexNet convolutional neural network optimized with killer heuristics, in which the feature search was performed by applying alpha-beta pruning.

From the literature reviewed above, newly emerging techniques have been used to assist fall detection. Even so, current research achievements still have limitations that need to be addressed. For example, data collection cannot reach the real-time level; the adopted detection methods are not sufficiently stable; and the computation overhead of the collection device is very high. Given this, this paper exploits emerging techniques (e.g., CNN and edge computing) and networking paradigms (e.g., SDN) to further study fall detection. In other words, the combination of SDN, edge computing, and CNN is the novel element that distinguishes this paper from traditional methods.

3. System Framework

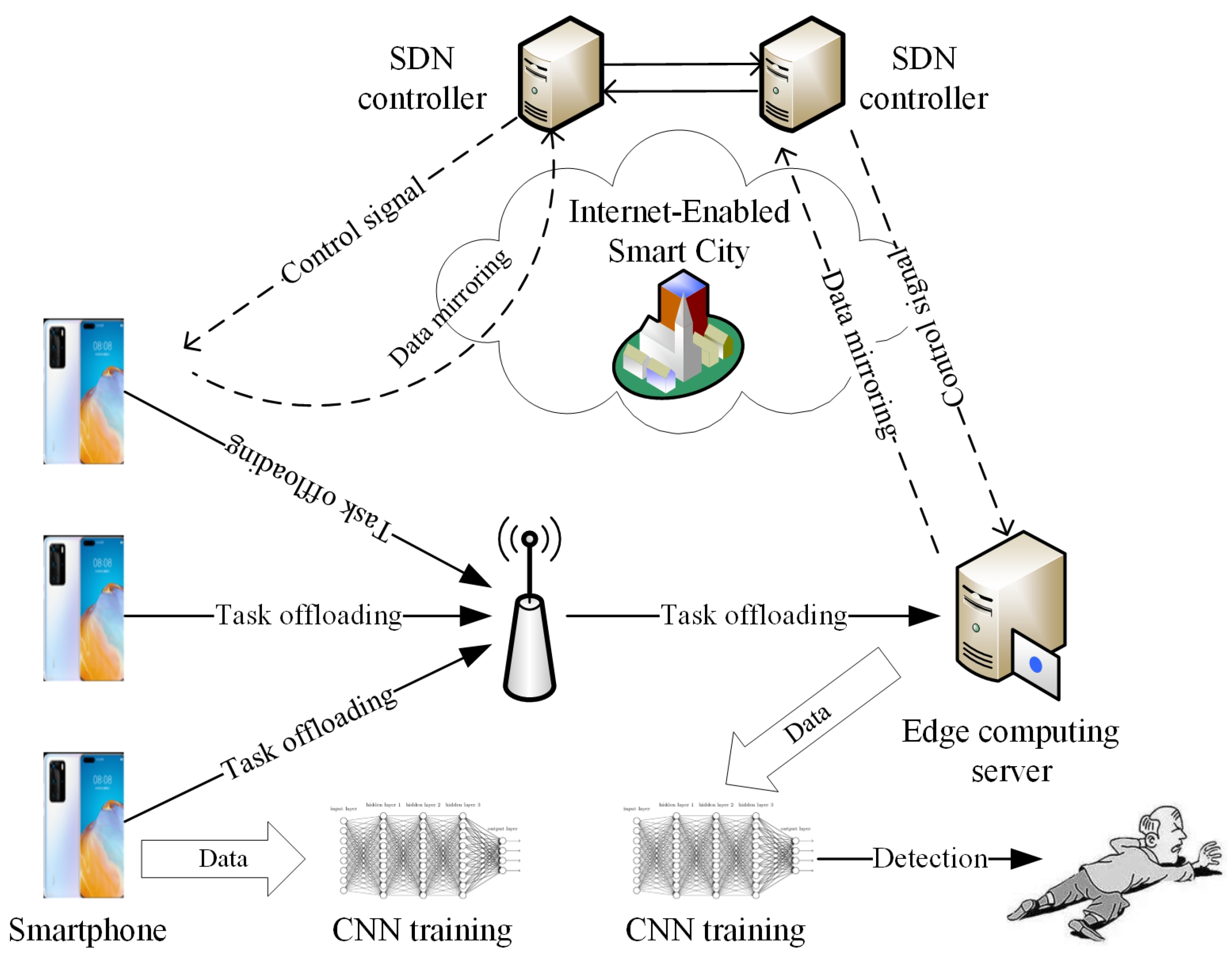

As mentioned above, the smartphone has limited storage and computation resources, which cannot support efficient fall detection. Based on the edge computing model, some computation tasks can be migrated to the edge server for computation. In addition, current data collection cannot reach the real-time level, so SDN is employed to monitor the global data status of the smartphones and the edge computing server via its centralized control ability. In summary, edge computing and SDN are adopted to help realize fast and efficient fall detection, which, to the best of our knowledge, is a new idea. A CNN is still required to train on the data in order to guide fall detection. Accordingly, the system framework of EdCNN is shown in Figure 1. The proposed EdCNN includes three main external roles, i.e., a number of smartphones, an edge computing server, and two SDN controllers, where both the smartphones and the edge computing server integrate a CNN used to train on the corresponding data. The functions and workflow of EdCNN are described as follows.

The smartphone collects data from older people conveniently and flexibly, and it also performs data computing via its CNN. The edge computing server accommodates a large amount of data and performs data computing via its CNN. The SDN controllers scan the global data status of the smartphones and the edge computing server. Regarding the workflow, first, some computation tasks are migrated to the edge computing server via the scheduling strategy (see Section 4 for details), while the remaining tasks are computed via the CNN on the smartphone. Then, the migrated tasks are computed via the CNN on the edge computing server. During this process, one SDN controller monitors the data status of the smartphones and the other monitors the data status of the edge computing server; together they support the scheduling strategy over the Internet-enabled smart city. Meanwhile, after both the smartphone and the edge computing server submit mirrored data to the SDN controllers, the controllers send control signals to adjust the amount of data to be migrated. Finally, the trained data are obtained to conduct fall detection.
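The migration decision described above (how many tasks the smartphone keeps and how many go to the edge server) can be illustrated with a toy partial-offloading model. The sketch below is a hypothetical illustration only: it is not the scheduling strategy of Section 4, which uses BSA. It assumes a batch of identical tasks, a smartphone and edge server working in parallel, and a weighted sum of delay and smartphone energy; every parameter value is a made-up placeholder. Because the decision here is a single integer, exhaustive search is used in place of BSA.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class OffloadParams:
    """Hypothetical system parameters; none are taken from this paper."""
    n_tasks: int = 20          # equally sized computation tasks per batch
    cycles: float = 3e8        # CPU cycles needed per task
    data_bits: float = 4e6     # bits uploaded per offloaded task
    f_local: float = 2e9       # smartphone CPU frequency (Hz)
    f_edge: float = 1e10       # edge server CPU frequency (Hz)
    uplink_bps: float = 5e7    # smartphone-to-edge uplink rate (bit/s)
    p_compute: float = 2.0     # smartphone power while computing (W)
    p_tx: float = 0.5          # smartphone power while transmitting (W)
    w_delay: float = 0.5       # weight on delay; (1 - w_delay) weights energy


def evaluate(k: int, p: OffloadParams) -> Tuple[float, float, float]:
    """Return (weighted cost, delay in s, smartphone energy in J) when k tasks are offloaded."""
    local_time = (p.n_tasks - k) * p.cycles / p.f_local
    upload_time = k * p.data_bits / p.uplink_bps
    # Offloaded tasks are uploaded, then run on the edge; both sides work in parallel.
    edge_time = upload_time + k * p.cycles / p.f_edge
    delay = max(local_time, edge_time)
    energy = local_time * p.p_compute + upload_time * p.p_tx
    cost = p.w_delay * delay + (1 - p.w_delay) * energy
    return cost, delay, energy


def best_offload(p: OffloadParams) -> int:
    """Exhaustively choose how many tasks (0..n_tasks) to migrate to the edge."""
    return min(range(p.n_tasks + 1), key=lambda k: evaluate(k, p)[0])


print(best_offload(OffloadParams(w_delay=1.0)))  # 12: delay only, balance both sides
print(best_offload(OffloadParams(w_delay=0.0)))  # 20: energy only, offload everything
```

The two extreme weights show the trade-off the scheduler has to balance: minimizing delay alone splits the work so that both sides finish at about the same time, while minimizing smartphone energy alone pushes all tasks to the edge.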

Two technical issues therefore need to be addressed in this paper: how to perform edge computing scheduling (i.e., how many tasks are migrated to the edge computing server), and how to train on the data (i.e., how CNN-based data training is implemented). The virtual data submission to the SDN controllers can be realized by OpenFlow switches [42,43], which have inherent mirroring and indirection functions. Therefore, the edge computing scheduling strategy and CNN-based data training are introduced in the following sections.

6. Experimental Results

First, we give two kinds of parameter settings, i.e., algorithm simulation parameters and CNN structure parameters. The former are shown in Table 1, and the latter in Table 2.

Then, we introduce the fall detection dataset, which covers 1000 older adults (600 males and 400 females) in four different scenarios (i.e., home, square, shopping mall, and street), as shown in Table 3.

In addition, the edge computing server is a Huawei 1288H V5 with an Intel C622 chipset. All experimental scenarios are simulated with the Roblox software, because it is unrealistic to equip each older adult with a smartphone or to collect real fall data from their smartphones.

Furthermore, two recent, systematic studies [39,41] that integrate cutting-edge techniques are used as baselines, called InfS and MeaM, respectively. Two kinds of experiments are conducted. First, classification performance is verified by evaluating recall ratio, precision ratio, and F1 value; then, three metrics, i.e., accurate rate, transmission delay, and stability, are used to evaluate the efficiency of each strategy.

6.1. Classification Verification

The experimental results on recall ratio, precision ratio, and F1 value are shown in Table 4, where the F1 value is defined as follows:

$$F_1 = \frac{2 \times R \times P}{R + P}$$

where $R$ and $P$ are the recall ratio and precision ratio, respectively.
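To make the F1 definition concrete, the sketch below computes the precision ratio, recall ratio, and F1 value from binary confusion counts (fall as the positive class). The counts are hypothetical and are not taken from Table 4.

```python
from typing import Tuple


def precision_recall_f1(tp: int, fp: int, fn: int) -> Tuple[float, float, float]:
    """Compute precision P, recall R, and F1 = 2RP / (R + P) from binary confusion counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * recall * precision / (recall + precision)
    return precision, recall, f1


# Hypothetical counts: 90 falls detected, 10 false alarms, 30 missed falls.
p, r, f1 = precision_recall_f1(tp=90, fp=10, fn=30)
print(f"P={p:.3f} R={r:.3f} F1={f1:.3f}")  # P=0.900 R=0.750 F1=0.818
```

F1 is the harmonic mean of R and P, so a single F1 value always lies between the R and P it is computed from. When the three metrics are instead averaged over several classes or runs, the averaged F1 generally differs from the F1 of the averaged R and P.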

EdCNN achieves the best recall ratio, precision ratio, and F1 value, followed by MeaM and InfS, because EdCNN comprehensively optimizes the CNN via the ReLU, Maxout, Softmax, and cross entropy functions. InfS relies only on a boosted decision function for machine learning, which cannot match the classification effect of a CNN, so it has the worst classification results. Although both EdCNN and MeaM adopt CNNs, MeaM does not perform systematic optimization, whereas EdCNN uses a relatively optimal CNN structure (see Table 2) determined through simulation experiments; thus, MeaM classifies worse than EdCNN.
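The four functions credited above for EdCNN’s classification results can be written out in a few lines each. The sketch below shows what each one computes in plain Python; it is not the EdCNN network, whose layer structure is given in Table 2 and is not reproduced here, and the two-class head and input values are hypothetical. A real model would use a deep learning framework’s built-in versions of these functions.

```python
import math
from typing import List, Sequence


def relu(xs: Sequence[float]) -> List[float]:
    """Rectified linear unit, applied element-wise (e.g. to a convolutional feature map)."""
    return [max(0.0, x) for x in xs]


def maxout(xs: Sequence[float], k: int) -> List[float]:
    """Maxout: keep the maximum of each consecutive group of k pre-activations."""
    if k <= 0 or len(xs) % k:
        raise ValueError("len(xs) must be a positive multiple of k")
    return [max(xs[i:i + k]) for i in range(0, len(xs), k)]


def softmax(logits: Sequence[float]) -> List[float]:
    """Turn logits into class probabilities (max subtracted for numerical stability)."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(probs: Sequence[float], label: int) -> float:
    """Negative log-likelihood of the true class; probability clipped to avoid log(0)."""
    return -math.log(max(probs[label], 1e-12))


# Hypothetical 2-class head (index 0 = fall, 1 = no fall) over four pre-activations.
logits = maxout([-1.0, 2.0, 0.5, 0.0], k=2)  # [2.0, 0.5]
probs = softmax(logits)
loss = cross_entropy(probs, label=0)
```

Softmax and cross entropy are usually paired because the gradient of their combination with respect to the logits reduces to `probs - one_hot(label)`, which keeps training numerically well behaved.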

6.2. Accurate Rate

The experimental results on accurate rate in the four scenarios are shown in Table 5. EdCNN always achieves the highest accurate rate, followed by MeaM and InfS, for two reasons. On the one hand, EdCNN has the best classification effect. On the other hand, EdCNN uses BSA to optimize the trade-off between running delay and energy consumption, which ensures that the obtained solution is relatively optimal. In addition, the accurate rate is highest in the home scenario, because this scenario has no external interference.

6.3. Transmission Delay

The experimental results on transmission delay in the four scenarios are shown in Table 6. Similarly, EdCNN has the smallest transmission delay, followed by InfS and MeaM. First, MeaM does not employ any supporting networking paradigm, whereas EdCNN and InfS use edge computing and cloud computing, respectively, to overcome the limited storage and computation resources. As a result, MeaM has the largest transmission delay. Second, compared with InfS, EdCNN has two distinct advantages. On the one hand, it places computation tasks on the edge server, which is close to the users’ smartphones, yielding a small communication delay. On the other hand, it uses the SDN controllers to collect data from smartphones, realizing virtual data transmission with relatively small delay. In summary, EdCNN incurs smaller transmission delays than InfS.

6.4. Stability

In this section, we test stability through Wilcoxon statistical testing [52] over 200 experiments, with the significance level set to 0.01. The testing results for two metrics (accurate rate and transmission delay) and three strategies (EdCNN, InfS, and MeaM) are shown in Table 7, where abnormal results denote simulation points at which the generally better strategy performs worse. All corresponding p-values are smaller than the designated significance level of 0.01, which indicates that EdCNN performs significantly better than InfS and MeaM. Overall, EdCNN is stable and its performance is acceptable.
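The section does not state which Wilcoxon variant is used. Because each of the 200 experiments produces a result for every strategy, the sketch below assumes the paired Wilcoxon signed-rank test and implements it with the usual normal approximation, which is reasonable for n = 200. Zero differences are dropped and tied magnitudes receive average ranks; the variance is not tie-corrected, which is a simplification. The per-run values are hypothetical. In practice, `scipy.stats.wilcoxon` provides a full implementation.

```python
import math
from typing import Sequence, Tuple


def wilcoxon_signed_rank(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Two-sided paired Wilcoxon signed-rank test (normal approximation).

    Returns (W_plus, p_value). Zero differences are dropped; tied |d| get
    average ranks; no tie correction is applied to the variance.
    """
    d = [a - b for a, b in zip(x, y) if a != b]
    n = len(d)
    if n == 0:
        return 0.0, 1.0
    order = sorted(range(n), key=lambda i: abs(d[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(d[order[j + 1]]) == abs(d[order[i]]):
            j += 1
        avg = (i + j) / 2 + 1  # average of the 1-based ranks i+1 .. j+1
        for t in range(i, j + 1):
            ranks[order[t]] = avg
        i = j + 1
    w_plus = sum(rk for rk, di in zip(ranks, d) if di > 0)
    mean = n * (n + 1) / 4
    sd = math.sqrt(n * (n + 1) * (2 * n + 1) / 24)
    z = (w_plus - mean) / sd
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided p from the standard normal
    return w_plus, p_value


# Hypothetical accurate rates of two strategies over 200 paired runs.
a = [0.990 + 0.001 * (i % 5) for i in range(200)]
b = [0.950 + 0.001 * (i % 7) for i in range(200)]
w_plus, p_value = wilcoxon_signed_rank(a, b)
print(p_value < 0.01)  # True
```

A p-value below 0.01 rejects the hypothesis that the paired differences are symmetric around zero, which is the sense in which one strategy is reported as significantly better than another.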

7. Conclusions

Remote monitoring of older adults and detection of dangers to their health have become essential elements of smart cities. To address three limitations faced by current fall detection strategies, i.e., (i) data collection cannot reach the real-time level, (ii) the adopted detection methods are not sufficiently stable, and (iii) the computation overhead of the collection device is very high, this paper proposes a novel fall detection strategy, EdCNN, which leverages CNN, SDN, and edge computing techniques.

The global network view of SDN is used to collect the data generated by smartphones. For edge computing scheduling, i.e., placing some computation tasks on the edge server, BSA is employed to solve the trade-off between running delay and energy consumption, yielding a relatively optimal scheduling solution. For data training, the CNN is comprehensively optimized via the ReLU, Maxout, Softmax, and cross entropy functions. These three components are designed to address the three limitations mentioned above: the SDN controllers enable real-time data collection, the enhanced CNN structure improves stability, and the edge computing framework reduces the computation overhead.

Finally, two kinds of simulation experiments are conducted. First, classification performance is verified by evaluating recall ratio, precision ratio, and F1 value, which reach 98.26%, 97.87%, and 97.49%, respectively. Then, overall performance is evaluated by testing accurate rate, transmission delay, and stability. In particular, in the home scenario, the accurate rate and transmission delay reach 99.01% and 19.261 ms, respectively. The experimental results show that EdCNN outperforms the two baselines.

However, as a novel method based on SDN, edge computing, and CNN, EdCNN also has some limitations. First, application types are not considered; that is, the data are not divided in a fine-grained way. Second, although CNN computing and edge computing decrease the communication delay, they introduce computation overhead. Finally, the experimental environment is limited to a simulation platform, without real data collected from people. In the future, we plan to enhance EdCNN in two respects. On the one hand, we will improve EdCNN with respect to the three limitations above. On the other hand, we will improve BSA to achieve faster convergence. In addition, we will study the relevance of CNN in different domains and security issues, as in [53,54].