Reverse Difference Network for Highlighting Small Objects in Aerial Images

Abstract

1. Introduction

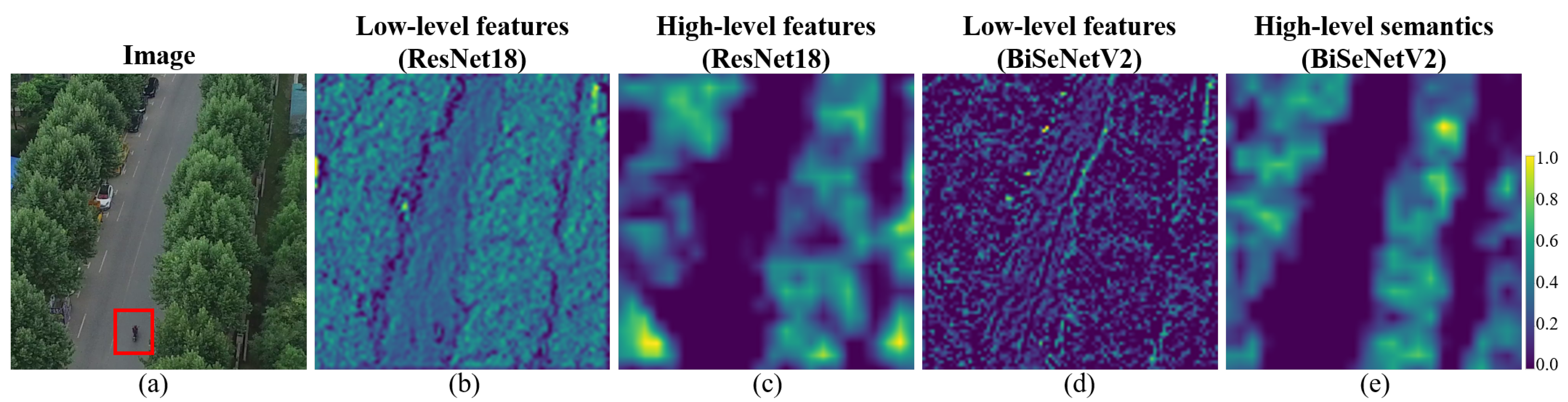

- A reverse difference mechanism (RDM) is proposed to highlight small objects. RDM aligns the low-level features with the high-level semantics and, guided by the high-level semantics, excludes large-object information from the low-level features. As a result, small objects are learned preferentially during training.

- Based on RDM, a new semantic segmentation framework called RDNet is proposed. RDNet significantly improves the segmentation accuracy of small objects, and its inference speed and computational complexity are acceptable for a resource-constrained GPU facility.

- Two components, the DS and the CS, are designed in RDNet. The DS obtains more semantics for the outputs of RDM by modeling both spatial and channel correlations. The CS produces high-level semantics by enlarging the receptive field, which ensures sufficient segmentation accuracy for large objects. Consequently, higher segmentation accuracy is achieved for both small and large objects.
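
The exclusion step described in the first contribution can be illustrated with a toy sketch. It is not the authors' formulation: the gate `max(0, sigmoid(low) - sigmoid(high))`, the function name `reverse_difference`, and the use of scalar response maps are all assumptions made for illustration, and the two inputs are assumed to be already aligned to the same resolution.

```python
import math


def sigmoid(x):
    """Logistic function mapping a real response to (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def reverse_difference(low, high):
    """Toy gated subtraction (hypothetical, not the paper's definition).

    low  -- 2-D list of low-level responses (all objects respond)
    high -- 2-D list of high-level responses of the same shape
            (assumed to respond mainly to large objects)
    Returns `low` with positions already explained by `high` suppressed.
    """
    out = []
    for low_row, high_row in zip(low, high):
        row = []
        for l, h in zip(low_row, high_row):
            # The gate is positive only where the low-level response
            # exceeds the high-level one, i.e. where `high` is silent.
            gate = max(0.0, sigmoid(l) - sigmoid(h))
            row.append(l * gate)
        out.append(row)
    return out


# Top row: a large object seen by both levels.
# Bottom-left: a small object seen only by the low level.
low = [[4.0, 4.0], [4.0, -4.0]]
high = [[4.0, 4.0], [-4.0, -4.0]]
highlighted = reverse_difference(low, high)
```

In this toy input, the top row (present in both maps) is suppressed to zero, while the bottom-left position (present only in the low-level map) keeps most of its response.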

2. Related Work

2.1. Traditional Methods

2.2. Small-Object-Oriented Methods

2.3. Semantic Segmentation Networks in Remote Sensing

3. RDNet

3.1. RDM

3.1.1. Cosine Alignment

Algorithm 1. Cosine alignment.

3.1.2. Neural Alignment

Algorithm 2. Neural alignment.

3.2. DS

3.3. CS

3.4. The Loss Function

4. Datasets

4.1. The UAVid Benchmark

4.2. The Aeroscapes Benchmark

5. Experiments

5.1. Evaluation Metrics

5.2. Implementation Details

5.3. Experimental Settings Based on Validation

5.3.1. The Backbone Selection

5.3.2. The Selection of Comparison Methods

5.4. Results on UAVid

5.4.1. Quantitative Results

5.4.2. Visual Results

5.5. Results on Aeroscapes

5.5.1. Quantitative Results

5.5.2. Visual Results

6. Discussion

6.1. Ablation Study

6.2. The Output of Each Module in RDNet

6.3. The Usage of Complex Backbones

7. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Han, W.; Chen, J.; Wang, L.; Feng, R.; Li, F.; Wu, L.; Tian, T.; Yan, J. Methods for Small, Weak Object Detection in Optical High-Resolution Remote Sensing Images: A survey of advances and challenges. IEEE Geosci. Remote Sens. Mag. 2021, 9, 8–34.

- Chen, Z.; Gong, Z.; Yang, S.; Ma, Q.; Kan, C. Impact of extreme weather events on urban human flow: A perspective from location-based service data. Comput. Environ. Urban Syst. 2020, 83, 101520.

- Castellano, G.; Castiello, C.; Mencar, C.; Vessio, G. Crowd Detection in Aerial Images Using Spatial Graphs and Fully-Convolutional Neural Networks. IEEE Access 2020, 8, 64534–64544.

- Lyu, Y.; Vosselman, G.; Xia, G.S.; Yilmaz, A.; Yang, M.Y. UAVid: A semantic segmentation dataset for UAV imagery. ISPRS J. Photogramm. Remote Sens. 2020, 165, 108–119.

- Nigam, I.; Huang, C.; Ramanan, D. Ensemble Knowledge Transfer for Semantic Segmentation. In Proceedings of the 2018 IEEE Winter Conference on Applications of Computer Vision (WACV), Lake Tahoe, NV, USA, 12–15 March 2018; pp. 1499–1508.

- Li, X.; Zhao, H.; Han, L.; Tong, Y.; Tan, S.; Yang, K. Gated Fully Fusion for Semantic Segmentation. Proc. AAAI Conf. Artif. Intell. 2020, 34, 11418–11425.

- Zhao, H.; Shi, J.; Qi, X.; Wang, X.; Jia, J. Pyramid Scene Parsing Network. In Proceedings of the 2017 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 6230–6239.

- Zhao, H.; Zhang, Y.; Liu, S.; Shi, J.; Loy, C.C.; Lin, D.; Jia, J. PSANet: Point-wise Spatial Attention Network for Scene Parsing. In Proceedings of the Computer Vision—ECCV, Munich, Germany, 8–14 September 2018; Ferrari, V., Hebert, M., Sminchisescu, C., Weiss, Y., Eds.; Springer International Publishing: Cham, Switzerland, 2018; pp. 270–286.

- Lin, T.; Dollár, P.; Girshick, R.; He, K.; Hariharan, B.; Belongie, S. Feature Pyramid Networks for Object Detection. In Proceedings of the 2017 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 936–944.

- Tian, Z.; Zhao, H.; Shu, M.; Yang, Z.; Li, R.; Jia, J. Prior Guided Feature Enrichment Network for Few-Shot Segmentation. IEEE Trans. Pattern Anal. Mach. Intell. 2020. early access.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the 2016 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 26 June–1 July 2016; pp. 770–778.

- Yu, C.; Wang, J.; Peng, C.; Gao, C.; Yu, G.; Sang, N. BiSeNet: Bilateral Segmentation Network for Real-Time Semantic Segmentation. In Proceedings of the Computer Vision—ECCV, Munich, Germany, 8–14 September 2018; Ferrari, V., Hebert, M., Sminchisescu, C., Weiss, Y., Eds.; Springer International Publishing: Cham, Switzerland, 2018; pp. 334–349.

- Yu, C.; Gao, C.; Wang, J.; Yu, G.; Shen, C.; Sang, N. BiSeNet V2: Bilateral Network with Guided Aggregation for Real-Time Semantic Segmentation. Int. J. Comput. Vis. 2021, 129, 3051–3068.

- Yang, M.Y.; Kumaar, S.; Lyu, Y.; Nex, F. Real-time Semantic Segmentation with Context Aggregation Network. ISPRS J. Photogramm. Remote Sens. 2021, 178, 124–134.

- Marmanis, D.; Schindler, K.; Wegner, J.; Galliani, S.; Datcu, M.; Stilla, U. Classification with an edge: Improving semantic image segmentation with boundary detection. ISPRS J. Photogramm. Remote Sens. 2018, 135, 158–172.

- Xie, S.; Tu, Z. Holistically-Nested Edge Detection. In Proceedings of the 2015 IEEE International Conference on Computer Vision (ICCV), Washington, DC, USA, 7–13 December 2015; pp. 1395–1403.

- Badrinarayanan, V.; Kendall, A.; Cipolla, R. SegNet: A Deep Convolutional Encoder-Decoder Architecture for Image Segmentation. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 39, 2481–2495.

- Shelhamer, E.; Long, J.; Darrell, T. Fully Convolutional Networks for Semantic Segmentation. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 39, 640–651.

- Huang, Z.; Wang, X.; Wei, Y.; Huang, L.; Shi, H.; Liu, W.; Huang, T.S. CCNet: Criss-Cross Attention for Semantic Segmentation. IEEE Trans. Pattern Anal. Mach. Intell. 2020. early access.

- Chen, L.; Papandreou, G.; Schroff, F.; Adam, H. Rethinking Atrous Convolution for Semantic Image Segmentation. arXiv 2017, arXiv:1706.05587.

- Chen, L.C.; Zhu, Y.; Papandreou, G.; Schroff, F.; Adam, H. Encoder-Decoder with Atrous Separable Convolution for Semantic Image Segmentation. In Proceedings of the Computer Vision—ECCV, Munich, Germany, 8–14 September 2018; Ferrari, V., Hebert, M., Sminchisescu, C., Weiss, Y., Eds.; Springer International Publishing: Cham, Switzerland, 2018; pp. 833–851.

- Ding, H.; Jiang, X.; Shuai, B.; Liu, A.Q.; Wang, G. Context Contrasted Feature and Gated Multi-scale Aggregation for Scene Segmentation. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018; pp. 2393–2402.

- Zhang, L.; Li, X.; Arnab, A.; Yang, K.; Tong, Y.; Torr, P.H.S. Dual Graph Convolutional Network for Semantic Segmentation. In Proceedings of the BMVC, Cardiff, UK, 9–12 September 2019; p. 254.

- Wang, X.; Girshick, R.; Gupta, A.; He, K. Non-local Neural Networks. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018; pp. 7794–7803.

- Huang, Z.; Wang, X.; Huang, L.; Huang, C.; Wei, Y.; Liu, W. CCNet: Criss-Cross Attention for Semantic Segmentation. In Proceedings of the 2019 IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Korea, 27 October–2 November 2019; pp. 603–612.

- Fu, J.; Liu, J.; Tian, H.; Li, Y.; Bao, Y.; Fang, Z.; Lu, H. Dual Attention Network for Scene Segmentation. In Proceedings of the 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 15–20 June 2019; pp. 3141–3149.

- Li, X.; Zhong, Z.; Wu, J.; Yang, Y.; Lin, Z.; Liu, H. Expectation-Maximization Attention Networks for Semantic Segmentation. In Proceedings of the 2019 IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Korea, 27 October–2 November 2019; pp. 9166–9175.

- Zhong, Z.; Lin, Z.Q.; Bidart, R.; Hu, X.; Daya, I.B.; Li, Z.; Zheng, W.; Li, J.; Wong, A. Squeeze-and-Attention Networks for Semantic Segmentation. In Proceedings of the 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; pp. 13062–13071.

- Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.; Unterthiner, T.; Dehghani, M.; Minderer, M.; Heigold, G.; Gelly, S.; et al. An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale. In Proceedings of the International Conference on Learning Representations, Vienna, Austria, 4 May 2021.

- Mehta, S.; Rastegari, M. MobileViT: Light-weight, General-purpose, and Mobile-friendly Vision Transformer. In Proceedings of the International Conference on Learning Representations, Virtual, 25–29 April 2022.

- Wang, L.; Li, R.; Wang, D.; Duan, C.; Wang, T.; Meng, X. Transformer Meets Convolution: A Bilateral Awareness Network for Semantic Segmentation of Very Fine Resolution Urban Scene Images. Remote Sens. 2021, 13, 3065.

- Zhang, Y.; Pang, B.; Lu, C. Semantic Segmentation by Early Region Proxy. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), New Orleans, LA, USA, 21–24 June 2022; pp. 1258–1268.

- Li, X.; You, A.; Zhu, Z.; Zhao, H.; Yang, M.; Yang, K.; Tong, Y. Semantic Flow for Fast and Accurate Scene Parsing. In Proceedings of the Computer Vision—ECCV, Glasgow, UK, 23–28 August 2020; Springer International Publishing: Cham, Switzerland, 2020; Volume abs/2002.10120.

- Sandler, M.; Howard, A.; Zhu, M.; Zhmoginov, A.; Chen, L.C. MobileNetV2: Inverted Residuals and Linear Bottlenecks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 18–23 June 2018.

- Howard, A.; Sandler, M.; Chen, B.; Wang, W.; Chen, L.C.; Tan, M.; Chu, G.; Vasudevan, V.; Zhu, Y.; Pang, R.; et al. Searching for MobileNetV3. In Proceedings of the 2019 IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Korea, 27 October–2 November 2019; pp. 1314–1324.

- Han, K.; Wang, Y.; Tian, Q.; Guo, J.; Xu, C.; Xu, C. GhostNet: More Features From Cheap Operations. In Proceedings of the 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; pp. 1577–1586.

- Chollet, F. Xception: Deep Learning with Depthwise Separable Convolutions. In Proceedings of the 2017 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 1800–1807.

- Tan, M.; Le, Q. EfficientNet: Rethinking Model Scaling for Convolutional Neural Networks. In Proceedings of the 36th International Conference on Machine Learning, Long Beach, CA, USA, 10–15 June 2019; Chaudhuri, K., Salakhutdinov, R., Eds.; Volume 97, pp. 6105–6114.

- Tan, M.; Le, Q. EfficientNetV2: Smaller Models and Faster Training. In Proceedings of the 38th International Conference on Machine Learning, Virtual, 18–24 July 2021; Meila, M., Zhang, T., Eds.; Volume 139, pp. 10096–10106.

- Fan, M.; Lai, S.; Huang, J.; Wei, X.; Chai, Z.; Luo, J.; Wei, X. Rethinking BiSeNet For Real-time Semantic Segmentation. In Proceedings of the 2021 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 20–25 June 2021; pp. 9711–9720.

- Li, R.; Zheng, S.; Zhang, C.; Duan, C.; Wang, L.; Atkinson, P.M. ABCNet: Attentive bilateral contextual network for efficient semantic segmentation of Fine-Resolution remotely sensed imagery. ISPRS J. Photogramm. Remote Sens. 2021, 181, 84–98.

- Maggiori, E.; Tarabalka, Y.; Charpiat, G.; Alliez, P. Fully convolutional neural networks for remote sensing image classification. In Proceedings of the 2016 IEEE International Geoscience and Remote Sensing Symposium (IGARSS), Beijing, China, 10–15 July 2016; pp. 5071–5074.

- Audebert, N.; Le Saux, B.; Lefèvre, S. Semantic Segmentation of Earth Observation Data Using Multimodal and Multi-scale Deep Networks. In Proceedings of the Computer Vision—ACCV 2016, Taipei, Taiwan, 20–24 November 2016; Lai, S.H., Lepetit, V., Nishino, K., Sato, Y., Eds.; Springer International Publishing: Cham, Switzerland, 2017; pp. 180–196.

- Yang, N.; Tang, H. GeoBoost: An Incremental Deep Learning Approach toward Global Mapping of Buildings from VHR Remote Sensing Images. Remote Sens. 2020, 12, 1794.

- Yang, N.; Tang, H. Semantic Segmentation of Satellite Images: A Deep Learning Approach Integrated with Geospatial Hash Codes. Remote Sens. 2021, 13, 2723.

- Chai, D.; Newsam, S.; Huang, J. Aerial image semantic segmentation using DCNN predicted distance maps. ISPRS J. Photogramm. Remote Sens. 2020, 161, 309–322.

- Ding, L.; Tang, H.; Bruzzone, L. LANet: Local Attention Embedding to Improve the Semantic Segmentation of Remote Sensing Images. IEEE Trans. Geosci. Remote Sens. 2021, 59, 426–435.

- Mou, L.; Hua, Y.; Zhu, X.X. Relation Matters: Relational Context-Aware Fully Convolutional Network for Semantic Segmentation of High-Resolution Aerial Images. IEEE Trans. Geosci. Remote Sens. 2020, 58, 7557–7569.

- Peng, C.; Zhang, K.; Ma, Y.; Ma, J. Cross Fusion Net: A Fast Semantic Segmentation Network for Small-Scale Semantic Information Capturing in Aerial Scenes. IEEE Trans. Geosci. Remote Sens. 2021. early access.

- Zheng, Z.; Zhong, Y.; Wang, J.; Ma, A. Foreground-Aware Relation Network for Geospatial Object Segmentation in High Spatial Resolution Remote Sensing Imagery. In Proceedings of the 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; pp. 4095–4104.

- Zhu, M.; Li, J.; Wang, N.; Gao, X. A Deep Collaborative Framework for Face Photo–Sketch Synthesis. IEEE Trans. Neural Networks Learn. Syst. 2019, 30, 3096–3108.

- Everingham, M.; Eslami, S.M.A.; Van Gool, L.; Williams, C.K.I.; Winn, J.; Zisserman, A. The Pascal Visual Object Classes Challenge: A Retrospective. Int. J. Comput. Vis. 2015, 111, 98–136.

- Cordts, M.; Omran, M.; Ramos, S.; Rehfeld, T.; Enzweiler, M.; Benenson, R.; Franke, U.; Roth, S.; Schiele, B. The Cityscapes Dataset for Semantic Urban Scene Understanding. In Proceedings of the 2016 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 26 June–1 July 2016; pp. 3213–3223.

- Zhou, B.; Zhao, H.; Puig, X.; Fidler, S.; Barriuso, A.; Torralba, A. Scene Parsing through ADE20K Dataset. In Proceedings of the 2017 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 5122–5130.

- Russakovsky, O.; Deng, J.; Su, H.; Krause, J.; Satheesh, S.; Ma, S.; Huang, Z.; Karpathy, A.; Khosla, A.; Bernstein, M.; et al. ImageNet Large Scale Visual Recognition Challenge. Int. J. Comput. Vis. 2015, 115, 211–252.

| | Clutter | Build. | Road | Tree | Veg. | Mov.c. | Stat.c. | Human |
|---|---|---|---|---|---|---|---|---|
| Area (pixels) | 10,521.5 | 365,338.1 | 50,399.9 | 90,888.4 | 35,338.9 | 2586.5 | 4012.4 | 555.0 |
| Group | large | large | large | large | large | medium | medium | small |

| | b.g. | Person | Bike | Car | Drone | Boat | Animal | Obstacle | Constr. | Veg. | Road | Sky |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Area (pixels) | 84,770.38 | 2076.04 | 206.53 | 10,048.31 | 1455.47 | 6154.82 | 18,984.38 | 2726.77 | 46,935.03 | 232,037.61 | 151,683.97 | 141,605.99 |
| Group | large | small | small | medium | small | medium | medium | small | large | large | large | large |

| Category | Methods (Backbone) | Characteristics | Pars. | FLOPs | FPS | Mem. | mIoU (%) | mIoU (Small, %) | mIoU (Medium, %) | mIoU (Large, %) |
|---|---|---|---|---|---|---|---|---|---|---|
| RDNets | RDNet(MobileNetV3 [35]) | RDM, DS, CS | 1.4M | 14.6G | 54.1 | 2.4G | 65.6 | 37.6 | 64.9 | 71.5 |
| RDNets | RDNet(GhostNet [36]) | RDM, DS, CS | 3.6M | 13.4G | 56.7 | 1.7G | 62.3 | 25.8 | 60.8 | 70.2 |
| RDNets | RDNet(STDC [40]) | RDM, DS, CS | 10.8M | 126.4G | 32.9 | 2.5G | 67.4 | 42.5 | 67.5 | 72.4 |
| RDNets | RDNet(EfficientNetV2 [39]) | RDM, DS, CS | 21.4M | 169.0G | 14.9 | 6.1G | 70.3 | 45.6 | 68.0 | 76.2 |
| RDNets | RDNet(Xception [37]) | RDM, DS, CS | 29.4M | 298.6G | 11.7 | 6.3G | 71.7 | 50.4 | 71.2 | 76.2 |
| RDNets | RDNet(MobileViT [30]) | RDM, DS, CS | 2.08M | 48.8G | 6.7 | 28.5G | 69.9 | 43.5 | 71.2 | 74.8 |
| RDNets | RDNet(ResNet18 [11]) | RDM, DS, CS | 13.8M | 130.6G | 32.8 | 1.9G | 72.3 | 50.6 | 72.5 | 76.5 |
| Small-object-oriented | BiSeNet(ResNet18) [12] | Bilateral network | 13.8M | 147.9G | 28.9 | 2.2G | 63.0 | 27.0 | 57.7 | 72.2 |
| Small-object-oriented | ABCNet(ResNet18) [41] | Self-attention, bilateral network | 13.9M | 144.1G | 29.6 | 2.1G | 67.8 | 37.8 | 66.9 | 74.2 |
| Small-object-oriented | SFNet(ResNet18) [33] | Multi-level feature fusion | 13.7M | 206.4G | 22.9 | 2.3G | 67.2 | 41.3 | 63.6 | 73.9 |
| Small-object-oriented | BiSeNetV2(None) [13] | Bilateral network | 19.4M | 194.4G | 22.4 | 2.8G | 69.3 | 41.9 | 69.1 | 74.9 |
| Small-object-oriented | GFFNet(ResNet18) [6] | Multi-level feature fusion | 20.4M | 446.1G | 10.2 | 3.0G | 67.3 | 38.9 | 66.4 | 73.3 |
| Traditional | DeepLabV3(ResNet101) [20] | Atrous convolutions, pyramids | 58.52M | 2195.68G | 2.2 | 16.4G | 63.3 | 9.5 | 60.1 | 75.3 |
| Traditional | DeepLabV3(ResNet18) [20] | Atrous convolutions, pyramids | 16.41M | 603.31G | 10.1 | 2.5G | 60.1 | 8.8 | 59.9 | 70.4 |
| Traditional | DANet(ResNet101) [26] | Self-attention | 68.5M | 2484.05G | 1.9 | 27.6G | 64.9 | 12.6 | 61.2 | 76.9 |
| Traditional | DANet(ResNet18) [26] | Self-attention | 13.2M | 489.2G | 7.2 | 13.1G | 61.2 | 12.3 | 60.7 | 71.1 |
| Traditional | MobileViT(-) [30] | Combination of CNNs and ViTs | 2.02M | 32.43G | 6.9 | 27.8G | 63.5 | 25.7 | 55.7 | 74.2 |

| Methods | mIoU (%) | mIoU (Small, %) | mIoU (Medium, %) | mIoU (Large, %) | Clutter (Large) IoU (%) | Build. (Large) IoU (%) | Road (Large) IoU (%) | Tree (Large) IoU (%) | Veg. (Large) IoU (%) | Mov.c. (Medium) IoU (%) | Stat.c. (Medium) IoU (%) | Human (Small) IoU (%) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| MSD(-) [4] | 57.0 | 19.7 | 47.5 | 68.24 | 57.0 | 79.8 | 74.0 | 74.5 | 55.9 | 62.9 | 32.1 | 19.7 |
| CAgNet(MobileNetV3) [14] | 63.5 | 19.9 | 58.1 | 74.4 | 66.0 | 86.6 | 62.1 | 79.3 | 78.1 | 47.8 | 68.3 | 19.9 |
| ABCNet(ResNet18) [41] | 63.8 | 13.9 | 59.1 | 75.6 | 67.4 | 86.4 | 81.2 | 79.9 | 63.1 | 69.8 | 48.4 | 13.9 |
| BANet(ResNet18) [31] | 64.6 | 21.0 | 61.1 | 74.7 | 66.6 | 85.4 | 80.7 | 78.9 | 62.1 | 69.3 | 52.8 | 21.0 |
| BiSeNet(ResNet18) [12] | 61.5 | 17.5 | 56.0 | 73.4 | 64.7 | 85.7 | 61.1 | 78.3 | 77.3 | 48.6 | 63.4 | 17.5 |
| GFFNet(ResNet18) [6] | 61.8 | 24.4 | 53.7 | 72.6 | 62.1 | 83.4 | 78.6 | 78.0 | 60.7 | 70.7 | 36.6 | 24.4 |
| SFNet(ResNet18) [33] | 65.3 | 27.0 | 60.6 | 74.8 | 65.7 | 86.2 | 80.9 | 79.4 | 62.0 | 71.0 | 50.2 | 27.0 |
| BiSeNetV2(-) [13] | 65.9 | 28.3 | 62.1 | 75.1 | 66.6 | 86.3 | 80.8 | 79.5 | 62.3 | 72.5 | 51.6 | 28.3 |
| RDNet(ResNet18) (ours) | 68.2 | 32.8 | 66.0 | 76.2 | 68.5 | 87.6 | 81.2 | 80.1 | 63.7 | 73.0 | 58.9 | 32.8 |
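
The group-wise scores in the table above appear to be plain averages of the per-class IoU values within each size group. The following sketch checks this for the RDNet(ResNet18) row on UAVid. The per-class values and the group assignments are copied from the tables in this article; the function name `group_miou` and the unweighted mean are assumptions for illustration, not a definition taken from the paper.

```python
# Per-class IoU (%) of RDNet(ResNet18) on UAVid, copied from the results table.
iou = {
    "Clutter": 68.5, "Build.": 87.6, "Road": 81.2, "Tree": 80.1,
    "Veg.": 63.7, "Mov.c.": 73.0, "Stat.c.": 58.9, "Human": 32.8,
}

# Size group of each class, copied from the class-area table.
group = {
    "Clutter": "large", "Build.": "large", "Road": "large", "Tree": "large",
    "Veg.": "large", "Mov.c.": "medium", "Stat.c.": "medium", "Human": "small",
}


def group_miou(iou, group):
    """Return the unweighted mean IoU overall and per size group."""
    buckets = {"all": list(iou.values())}
    for name, value in iou.items():
        buckets.setdefault(group[name], []).append(value)
    return {key: sum(vals) / len(vals) for key, vals in buckets.items()}


scores = group_miou(iou, group)
for key in ("all", "small", "medium", "large"):
    print(f"{key}: {scores[key]:.2f}")
```

Up to rounding to one decimal place, the computed means agree with the reported 68.2 (overall), 32.8 (small), 66.0 (medium), and 76.2 (large).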

| Methods | mIoU (%) | mIoU (Small, %) | mIoU (Medium, %) | mIoU (Large, %) | b.g. (Large) IoU (%) | Person (Small) IoU (%) | Bike (Small) IoU (%) | Car (Medium) IoU (%) | Drone (Small) IoU (%) | Boat (Medium) IoU (%) | Animal (Medium) IoU (%) | Obstacle (Small) IoU (%) | Constr. (Large) IoU (%) | Veg. (Large) IoU (%) | Road (Large) IoU (%) | Sky (Large) IoU (%) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| EKT-Ensemble(-) [5] | 57.1 | ≈30.5 | ≈52.7 | ≈83.8 | ≈76.0 | ≈47.0 | ≈15.0 | ≈69.0 | ≈46.0 | ≈51.0 | ≈38.0 | ≈14.0 | ≈70.0 | ≈93.0 | ≈86.0 | ≈94.0 |
| BiSeNet(ResNet18) [12] | 60.1 | 35.4 | 58.4 | 80.9 | 74.4 | 46.1 | 24.8 | 86.3 | 58.9 | 49.3 | 39.4 | 11.7 | 57.2 | 93.5 | 84.6 | 95.1 |
| ABCNet(ResNet18) [41] | 62.2 | 33.3 | 65.3 | 83.5 | 77.8 | 44.3 | 23.3 | 82.8 | 54.1 | 72.2 | 41.1 | 11.3 | 65.2 | 93.7 | 89.3 | 91.3 |
| GFFNet(ResNet18) [6] | 62.3 | 36.7 | 63.2 | 82.2 | 75.6 | 45.8 | 27.4 | 84.6 | 59.2 | 63.5 | 41.5 | 14.4 | 59.6 | 93.4 | 87.6 | 94.8 |
| SFNet(ResNet18) [33] | 63.7 | 37.7 | 65.0 | 83.7 | 76.8 | 49.4 | 25.8 | 84.4 | 57.3 | 66.0 | 44.4 | 18.2 | 65.4 | 93.7 | 88.9 | 93.9 |
| BiSeNetV2(-) [13] | 63.7 | 38.3 | 68.2 | 81.4 | 76.1 | 48.7 | 30.9 | 85.8 | 59.4 | 79.5 | 39.2 | 14.2 | 57.8 | 93.1 | 88.1 | 92.0 |
| RDNet(ResNet18) (ours) | 66.7 | 42.2 | 70.6 | 83.9 | 79.8 | 54.4 | 38.7 | 85.3 | 60.0 | 78.8 | 47.7 | 15.8 | 62.9 | 94.1 | 88.8 | 93.6 |

| Method | Pars. | FLOPs | mIoU (%) | mIoU (Small, %) | mIoU (Medium, %) | mIoU (Large, %) |
|---|---|---|---|---|---|---|
| RDNet - CS - DS (ResNet18 + RDM) | 12.6M | 124.4G | 63.3 | 40.9 | 68.2 | 78.2 |
| RDNet - CS | 12.8M | 129.9G | 64.0 | 42.0 | 69.7 | 78.2 |
| RDNet - DS | 13.6M | 125.1G | 64.6 | 40.6 | 68.2 | 81.6 |
| RDNet - | 13.6M | 127.7G | 60.5 | 31.9 | 64.1 | 81.2 |
| RDNet - | 13.8M | 130.5G | 62.7 | 37.5 | 66.0 | 81.0 |
| RDNet - | 13.7M | 130.4G | 63.8 | 38.6 | 68.2 | 81.3 |
| RDNet | 13.8M | 130.6G | 66.7 | 42.2 | 70.6 | 83.9 |

| Methods | Backbones | mIoU (%) | mIoU (Small, %) | mIoU (Medium, %) | mIoU (Large, %) |
|---|---|---|---|---|---|
| BiSeNet [12] | ResNet50 | 61.8 | 35.3 | 60.5 | 83.7 |
| ABCNet [41] | ResNet50 | 62.8 | 30.3 | 66.4 | 86.6 |
| GFFNet [6] | ResNet50 | 63.1 | 35.5 | 64.3 | 84.5 |
| SFNet [33] | ResNet50 | 64.5 | 35.9 | 66.6 | 86.0 |
| RDNet | ResNet50 | 67.4 | 43.3 | 67.6 | 86.7 |
| BiSeNet [12] | ResNet101 | 62.4 | 35.3 | 61.1 | 84.8 |
| ABCNet [41] | ResNet101 | 63.2 | 30.1 | 66.8 | 87.4 |
| GFFNet [6] | ResNet101 | 64.4 | 35.4 | 66.4 | 86.5 |
| SFNet [33] | ResNet101 | 65.1 | 36.1 | 66.5 | 86.8 |
| RDNet | ResNet101 | 68.5 | 44.0 | 69.7 | 87.4 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Ni, H.; Chanussot, J.; Niu, X.; Tang, H.; Guan, H. Reverse Difference Network for Highlighting Small Objects in Aerial Images. ISPRS Int. J. Geo-Inf. 2022, 11, 494. https://doi.org/10.3390/ijgi11090494
