1. Introduction

Map navigation systems are generally used to show the spatial distribution of items and the deployment of activities. They are frequently utilized in typical scenarios such as operational command [1], geological survey [2], catastrophe rescue [3], and public safety emergencies [4]. In recent years, the emphasis of research on this subject has shifted from the 3D depiction of geographic information [5] to the integration of innovative display and interaction technologies [6,7]. Specifically, natural interaction technology for the XR environment (XR being a collective term for virtual reality, augmented reality, and mixed reality) allows for accurate three-dimensional information display and adaptable natural interaction.

The immersive world generated by a head-mounted XR display expands the interaction interface compared to a flat-panel display. It increases the space and the methods available for presenting information and offers an excellent user experience for map navigation and dynamic planning [8]. Extending the layout of 2D maps to 3D immersive spaces might give recommendations for the design of XR systems [9,10]. Because visualization in XR enhances the effectiveness of multidimensional information display, it reduces the user’s reliance on interactive devices such as the keyboard, mouse, and touch screen. The conventional interactive methods are no longer appropriate for interactive operations such as selection, manipulation, and navigation. Therefore, it is necessary to establish an alternative way of coordinating the dynamic presentation of 3D data in virtual environments.

Human–computer interaction (HCI) researchers have long investigated effective selection, manipulation, and movement methods in virtual environments. Exploring all conceivable combinations of suitable virtual experiences, user preferences, platform capabilities, and physical space available for natural interactions is an endless journey [11]. On the one hand, consumer platform developers like to employ a range of hardware to engage in virtual worlds [12,13,14] because they are focused on interaction accuracy. On the other hand, using non-intrusive sensors to complete interactions has become a new trend due to the emphasis on user experience [15,16,17]. On this premise, an increasing number of studies indicate that the metaphor of the interaction must match that of the virtual activity [18,19,20].

From the perspective of interactive metaphors, this study seeks to create natural gestures and interactive feedback for our mapping system. While ensuring the accuracy of the interaction, we use psychological studies to inspire the design and validate its efficacy, in order to improve users’ subjective experience and make the interaction more immersive and natural. The remainder of the article is structured as follows. Section 2 outlines the primary aims of this paper as well as the methods utilized for this project. Section 3 introduces the first experiment (heuristic user gesture collection) and discusses the outcomes. Section 4 describes the map navigation technology we developed and presents the second experiment (map usability evaluation). Section 5 summarizes the article’s findings.

2. Aim and Concept

This study aims to enhance military commanders’ situational awareness, thereby enabling them to make prompt and adaptable decisions and take appropriate actions based on thorough immersion in the scenario information. To achieve this, the study necessitates the use of interaction methods that maintain immersion without causing disorientation among operators in the virtual space. Previous research primarily focuses on designing methods that boost immersion for users, which may cause operators to become overly fixated on their position, ultimately hindering the commanders’ ability to effectively comprehend the situational information of the entire battlefield in our scenario. In addition, operators in similar scenarios often face high levels of psychological and time pressures. Therefore, interaction methods are designed to be natural and easy to learn, while avoiding overly complicated interaction processes that may impose unnecessary cognitive load on commanders during decision-making. The developed system requires specific theoretical and technological methods for map navigation in an operating environment with intense time constraints. These methods include choosing the appropriate input devices, motion perspectives, and operation modes.

Regarding input devices, the tangible user interface [21] is an effective solution that enables users to sculpt and modify digital information via physical material, particularly in mixed reality scenarios. To ensure immersion, however, we use a fully enclosed VR display, which makes it challenging to keep the coordinates of the virtual scene consistently matched with the interactive hardware. In addition, since the 2010 introduction of the Leap Motion controller [22], successive controlled trials have demonstrated that non-invasive bare-handed interactive recognition can improve immersion and natural experience in virtual environments [23,24,25]. We ultimately decided to implement a non-invasive gesture interaction system.

Regarding motion perspectives, viewpoint control is frequently categorized into allocentric and egocentric spatial perspectives [26], both of which have been tried in various virtual world applications. The results demonstrate that navigation tasks favor egocentric spatial perspectives, whereas rotation operations tend towards allocentric spatial perspectives [27,28]. In this study, it is essential to maintain a sense of immersion and ensure that the operator can fully comprehend the situational information of the entire picture. Using egocentric spatial perspectives would result in a loss of orientation. Thus, allocentric spatial perspectives better accommodate our needs.

Regarding operation mode, it has been found that separating the gaze direction from the direction of travel yields a more precise result [29]. Optimizing the interaction with virtual objects during motion can create a more immersive experience for the whole body [30]. This type of navigation is more akin to manipulating a 3D scene than traversing it [31,32]. Consequently, in this study the map is shown as an electronic sand table, and the user interacts with the map from a third-person perspective.

In conclusion, taking into account the uniqueness of the system we designed, we concentrate on developing natural gesture navigation approaches with the following features: (1) the use of non-intrusive equipment; (2) the allocentric spatial perspective; and (3) the replacement of travel navigation with manipulation. Due to the requirement for a high level of immersion, we selected a closed VR HMD as the display device. Given this choice, we chose the Leap Motion controller as the gesture sensor to keep the system lightweight, portable, and low in cost.

The study consists of two phases. In the first phase, Experiment 1 constructs a straightforward VR map and collects user gesture data suitable for the navigation system through quantitative heuristic research (see Section 3). Based on the gathered experiment data, a more precise interaction design is implemented in the second phase. Using the Mapbox plug-in, we constructed a virtual map navigation system based on accurate geographic data. For this system, we provide the associated dynamic visualization scheme and interaction feedback mechanism, and we conducted a quantitative psychology experiment (Experiment 2) to evaluate the system’s efficacy from various perspectives (see Section 4).

The experiments utilized a unified hardware and software environment comprising a desktop computer with a 2080 Ti GPU, an HTC Vive Pro head-mounted display (2880 × 1600 resolution), and a head-mounted Leap Motion gesture recognition sensor. Unity is used to construct the experimental program and system prototype. The Mapbox SDK for Unity plug-in is used to implement the real-world map.

3. Experiment 1: Heuristic User Gesture Collection

3.1. Materials and Methods

Usually, interactive gestures are designed and evaluated by experts, but traveling gestures have explicit semantics, and people intuitively utilize gestures with related semantics in daily communication. To assure the naturalness of gesture interaction, this study investigates the needs and preferences of users in detail; collects gestures that are simple to comprehend, recall, and recognize; and makes interactive gestures as consistent and feasible as possible with users’ psychological expectations.

In this part, we conduct a heuristic experiment on natural gestures for the map pan, rotate, and scale commands in order to understand users’ performance in the scene and their interaction preferences for map navigation tasks. The heuristic experiment is a widely used research method for collecting natural gestures [33,34,35]. It involves presenting suggestive dynamic images to participants and asking them to perform a gesture that corresponds semantically with the image. A total of 10 males and 11 females between 21 and 26 years of age (Mean = 23.71, SD = 1.45) were invited to participate in the experiment. All subjects had normal or corrected-to-normal vision, with no achromatopsia or color blindness, and normal stereoscopic vision. There were 19 right-handed subjects and 2 left-handed subjects. Eight individuals had extensive experience with VR interaction. The experiment was created in Unity, as shown in Figure 1.

After putting on the device and entering the virtual environment, the subjects are briefed on the system’s fundamentals, including the system’s scope and the estimated limits of natural gesture recognition. The experimental application displays the motion state of simulated map navigation. The participants are required to propose and demonstrate two to three interactive gestures each for map movement, zooming, and rotation. In this procedure, the think-aloud method [36] is employed, in which individuals are asked to describe the expected response of the system to each gesture they make while executing interactive actions. The results are measured by the consistency rate, which counts how many users have made identical gestures in the same task.
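
As a minimal sketch of how such a consistency rate could be tallied, the Python snippet below counts, for one task, how many participants proposed each gesture and divides by the number of participants. The normalization by participant count, the function name `consistency_rates`, and the sample proposals are assumptions made for illustration; the source only states that identical gestures are counted per task.

```python
from collections import Counter


def consistency_rates(proposals):
    """Map each gesture label to the share of participants who proposed it.

    `proposals` holds one collection of gesture labels per participant for a
    single task. A participant counts at most once per gesture, even if the
    same label was proposed twice.
    """
    n_participants = len(proposals)
    if n_participants == 0:
        return {}
    counts = Counter(label for labels in proposals for label in set(labels))
    return {label: count / n_participants for label, count in counts.items()}


# Hypothetical pan-task proposals from four participants (not study data).
pan_proposals = [
    ["open hand slide", "grip drag"],
    ["open hand slide"],
    ["grip drag", "index finger slide"],
    ["open hand slide", "grip drag"],
]
rates = consistency_rates(pan_proposals)
```

Because each participant may propose two to three gestures, the rates for one task need not sum to one.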

The experiment is video-recorded and manually analyzed by the experimenter after completion. A gesture is counted as valid when the user lifts a hand above the abdomen, and a change in hand position of more than 10 cm is counted as an effective movement. We classify all gestures into three categories based on which hand is lifted: “dominant hand”, “recessive hand”, and “both hands”. Moreover, we categorize gestures into four types according to the shape of the hand: “open hand”, “grip”, “index finger”, and “pinch”. Furthermore, we add suffixes such as “slide”, “drag”, and “move relatively” to the gesture description based on the relative position and moving speed of the hand. “Slide” indicates a fast swinging motion; “drag” denotes a slow dragging motion; “move relatively” involves changes in relative position between the two hands. Additionally, we use special terms such as “palm direction navigation” to describe gestures that do not follow this rule; this term means opening the palm and controlling movement by palm orientation.
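
The coding scheme above can be sketched as a small record type. This is an illustrative data structure only, not the authors' analysis tooling: the class name `GestureRecord`, its fields, and the label format are hypothetical, while the category values and the 10 cm threshold are taken from the text.

```python
from dataclasses import dataclass

MIN_DISPLACEMENT_CM = 10.0  # from the text: only larger movements count

HANDS = ("dominant hand", "recessive hand", "both hands")
SHAPES = ("open hand", "grip", "index finger", "pinch")
MOTIONS = ("slide", "drag", "move relatively")


@dataclass(frozen=True)
class GestureRecord:
    """One manually coded gesture observation (hypothetical structure)."""

    hand: str
    shape: str
    motion: str
    displacement_cm: float

    def __post_init__(self):
        # Reject labels outside the three coding dimensions described above.
        if self.hand not in HANDS:
            raise ValueError(f"unknown hand category: {self.hand!r}")
        if self.shape not in SHAPES:
            raise ValueError(f"unknown hand shape: {self.shape!r}")
        if self.motion not in MOTIONS:
            raise ValueError(f"unknown motion suffix: {self.motion!r}")

    @property
    def is_effective(self):
        """True when the hand moved more than the 10 cm threshold."""
        return self.displacement_cm > MIN_DISPLACEMENT_CM

    @property
    def label(self):
        """Compose a description such as 'grip and drag with dominant hand'."""
        return f"{self.shape} and {self.motion} with {self.hand}"


example = GestureRecord("dominant hand", "grip", "drag", 18.0)
```

Special cases such as “palm direction navigation” fall outside the three dimensions and would need a separate free-text label in a sketch like this.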

3.2. Results

The gathered heuristic gestures are split into three categories (dominant hand, recessive hand, and both hands), as shown in Figure 2.

In Figure 2a, the two most frequent gestures for map pan are “open hand and slide with dominant hand” (13 cases) and “grip and drag with dominant hand” (10 cases). Most subjects chose dominant-hand operation, and a few chose two-handed operation. In Figure 2b, the two most frequently used gestures for map rotation are “grip and rotate relatively with both hands” (9 cases) and “open hand and turn wrist with dominant hand” (8 cases). No subjects chose non-dominant hand interaction in the rotation task. In Figure 2c, the two most commonly used gestures for map zoom are “open hand and move relatively with both hands” (11 cases) and “grip and move relatively with both hands” (10 cases).

Figure 2d summarizes the interacting hands across the three tasks. Across the map pan, rotate, and zoom tasks, the number of interactions with the dominant hand decreases gradually. In contrast, the number of interactions with both hands increases gradually, while almost no one chooses non-dominant hand interaction. The current results show that pan gestures have an apparent dominant-hand operation tendency, zoom gestures have an evident two-hand operation tendency, and rotate gestures have no clear tendency towards either.

3.3. Discussion

The initial results show that open-hand (five-finger stretching) activation is often employed in interactive activities, notably by users with no previous experience with gesture interaction, who prefer five-finger stretching to accomplish operations via sliding motions in mid-air. This demonstrates that users perceive it as natural and that it satisfies their psychological expectations for interaction. However, from a technological standpoint, five-finger stretching is a gesture that is frequently exhibited unintentionally, which can result in accidental activation.

Moreover, “grip and drag with dominant hand” for pan, “grip and rotate relatively with both hands” for rotation, and “open hand and move relatively” for zoom have a high and consistent level of recognition across the respondents. This is possibly because, on the one hand, the drag-and-drop action is similar to what the user does every day and matches what the user expects from the system. On the other hand, this set of gestures gives the user a strong sense of control over the system, and the user’s hands can provide tactile feedback to make the interaction more reliable.

Based on the conclusions of the heuristic experiment, the designers drew on their experience to filter the gestures that appeared in the investigation. First of all, since the interaction mode of the open hand gestures does not have apparent motion features for recognition, it is prone to accidental activation. Although this type of gesture scored the highest in the experiment, it is unsuitable for practical interaction control. In contrast, the Grip and Drag gesture, which ranked second in score, has higher usability. Secondly, considering that operators may need to use other functions (moving and plotting) during navigation interaction, the gestures selected for subsequent experiments must include at least one set of single-hand interaction gestures. Finally, gestures using the dominant hand and both hands have high scores in different tasks. However, mixing gestures with different logic in the same interaction scenario would increase learning costs and cognitive load. Therefore, we decided to design two sets of interaction gestures: one using both hands for interaction and one using the dominant hand for interaction.

4. Experiment 2: Dynamic Visualization and Usability Evaluation

4.1. Dynamic Visualization

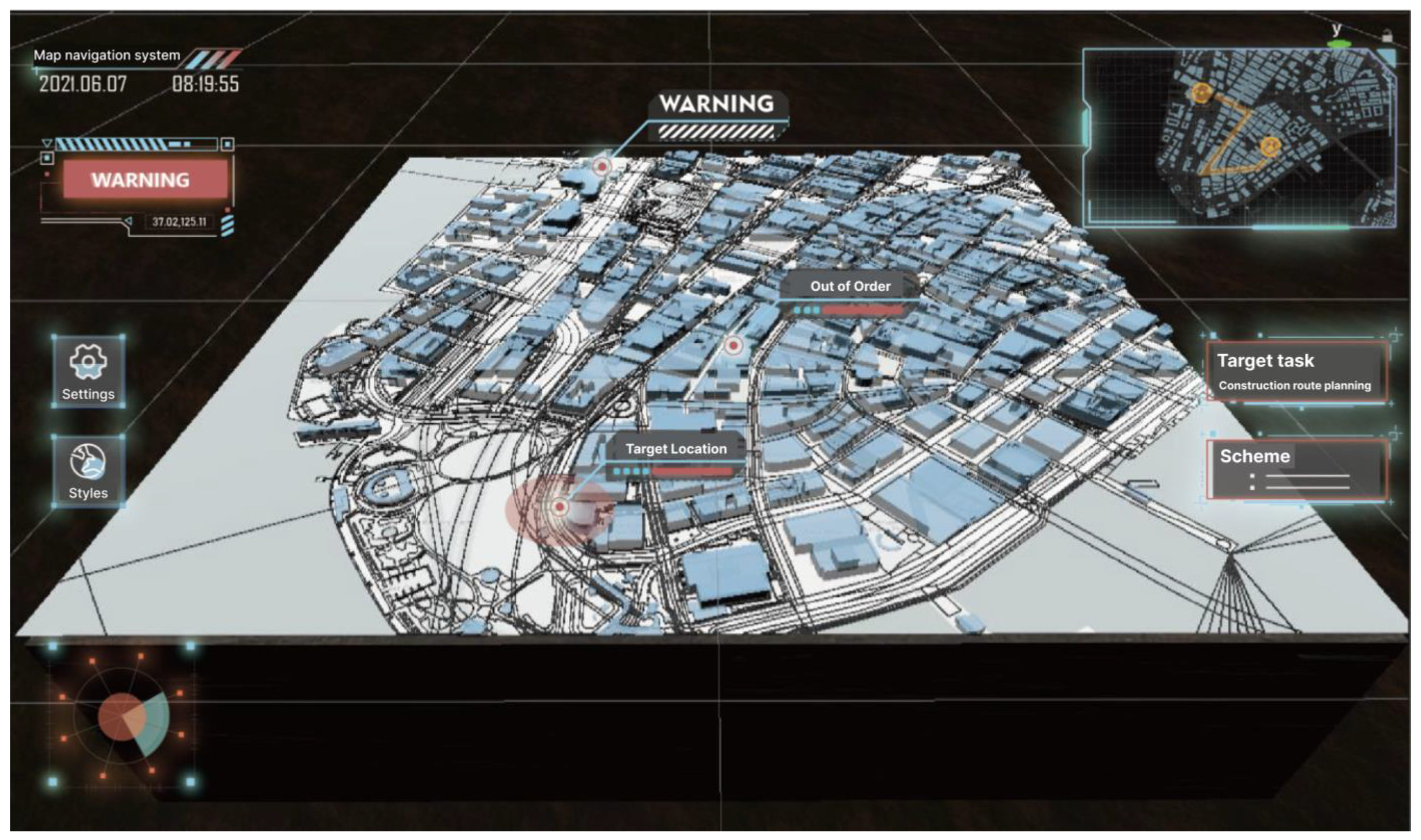

In this part, we focus on the 3D map’s information display and the visual feedback of gesture actions. The VR map navigation system was designed using the collected heuristic user gesture library. It can perform 3D visualization and real-time depiction of real-world geographic information in VR and edit the map using gestures, as shown in Figure 3.

Figure 3a,b shows the 3D visualization and real-time depiction of real-world geographic information in VR. In Figure 3c, the rotation gesture rotates the entire 3D map clockwise or counterclockwise. In Figure 3d, the zoom gesture adjusts the map’s scale and indicates the current zoom factor through the arrow effects of the virtual hand model. In Figure 3e–g, the gesture interface enables the map to switch between different visualization schemes quickly. In Figure 3h,i, users can also use gestures to simultaneously add markers and lines to the map for plotting. As illustrated in Figure 4, the Unity-created demo scene features portions of Shanghai as an example.

We developed two sets of natural gestures based on the data gathered in Experiment 1, as illustrated in Figure 5. The interactive gestures used in both groups are derived from the “state-of-the-art” (SOA) gestures (in this paper, SOA refers to the best-performing gestures in Experiment 1). In order to avoid unnecessary cognitive costs in continuous interaction, we regroup the SOA gestures based on their interactive features. Group 1 consists of dynamic movements that involve the interaction of both hands, whereas Group 2 consists of static gestures that involve the use of one hand to set the operating position in the scene. The dynamic gestures (group 1) must be activated by a particular trigger gesture. In contrast, the static gestures (group 2) require the relevant action to be performed in a specific location to execute the command. Note that the rotation gesture in group 2 is an exception, since it still requires minor rotation motions throughout gesture detection.
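
To make the idea of a two-handed dynamic gesture concrete, the sketch below derives a zoom factor and a rotation angle from how the vector between the two hands changes after the trigger. It is an assumed mapping for illustration, not the mapping implemented in the system: the function name `two_hand_transform`, the use of (x, z) positions on the horizontal plane, and the ratio-based scaling are all hypothetical.

```python
import math


def two_hand_transform(start_left, start_right, cur_left, cur_right):
    """Return (scale_factor, rotation_deg) implied by two-hand motion.

    Each argument is an (x, z) hand position on the horizontal plane.
    The start positions are those captured when the trigger gesture fired.
    """
    sx = start_right[0] - start_left[0]
    sz = start_right[1] - start_left[1]
    cx = cur_right[0] - cur_left[0]
    cz = cur_right[1] - cur_left[1]

    start_len = math.hypot(sx, sz)
    if start_len == 0.0:
        raise ValueError("hands must be apart when the gesture starts")

    # Zoom: ratio of the current hand distance to the starting distance.
    scale = math.hypot(cx, cz) / start_len

    # Rotation: change in heading of the left-to-right hand vector,
    # wrapped into the range [-180, 180) degrees.
    angle = math.degrees(math.atan2(cz, cx) - math.atan2(sz, sx))
    angle = (angle + 180.0) % 360.0 - 180.0
    return scale, angle


# Hands move apart symmetrically: the map would be scaled up, not rotated.
zoom_scale, zoom_angle = two_hand_transform(
    (-0.1, 0.0), (0.1, 0.0), (-0.2, 0.0), (0.2, 0.0)
)
```

A single relative measure like this is one way to let pan, rotate, and zoom share the same two-handed logic, which is the kind of consistency the grouping aims for.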

4.2. Materials and Methods

In this part, a simple comparative experiment is used to evaluate which design is more suitable for VR map navigation. The investigation comprises 4 sub-tasks that use the 2 groups of gestures to pan, rotate, zoom, and travel the map (travel means panning, rotating, and zooming simultaneously while exploring the 3D map freely). Each sub-task is repeated 8 times, with a different task goal for each repetition.

A total of 23 subjects were invited, including 10 males and 13 females, aged from 21 to 26 years old (Mean = 23.74, SD = 1.39). All had normal or corrected-to-normal vision. Furthermore, 21 participants were right-handed, whereas 2 were left-handed, and 10 individuals had extensive experience with VR interaction.

The experimental setting is depicted in Figure 6. Subjects must use the predetermined gestures to pan, rotate, and zoom the 3D map to the specified box. In the pan sub-task, the participants need to ensure that the yellow ball suspended above the map enters the central red wireframe region. In the other sub-tasks, the participants must ensure that the yellow wireframe is aligned with the map’s edge once the operation has been performed. Each trial has the same moving distance, and the moving direction is randomly set. This setting is achieved by initializing the starting position of each interaction.
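
One way to obtain trials with equal moving distance but random direction is to place the starting position on a circle around the target. The snippet below is a minimal sketch under that assumption; the function name `random_start_offset`, the planar (x, z) offset, and the distance value are hypothetical and not taken from the experimental program.

```python
import math
import random


def random_start_offset(distance, rng=random):
    """Return an (x, z) offset of fixed length in a uniformly random direction."""
    angle = rng.uniform(0.0, 2.0 * math.pi)
    return distance * math.cos(angle), distance * math.sin(angle)


# Eight repetitions of one sub-task, each starting the same distance away.
rng = random.Random(42)  # seeded only to make the sketch reproducible
starts = [random_start_offset(5.0, rng) for _ in range(8)]
```

Keeping the distance constant means that differences in completion time reflect the gesture set rather than the length of the required movement.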

The sub-tasks are presented in random order, and the subjects are permitted to practice each task before the formal experiment. They become familiar with the VR environment, gesture operation, and experimental requirements during the training process. The volunteers can give their opinions and suggestions regarding gestures and interactions throughout the experiment. The subjects can control the rest time between sub-tasks to reduce tiredness. During the experiment, subjects are instructed to perform the goal task as rapidly as possible. The software records the time cost of the different operations. Only the dominant hand is used for single-handed gestures, in order to ensure the validity of the experimental findings and eliminate differences between right-handed and left-handed people.

After completing the gesture interaction experiment, the participants completed a seven-point Likert scale for two groups of interactive gestures with four sub-tasks, as shown in Table 1. Minitab 17 was utilized to finish the analysis of the experimental data.

4.3. Results

To quantify performance, the experiment recorded the time each participant needed to complete the different tasks. The task completion times for the map pan, rotate, zoom, and travel tasks using the two groups of interface gestures were statistically assessed and are presented as box plots in Figure 7. Because of individual variance among participants, we removed outliers lying outside the interval [L − 1.5 IQR, U + 1.5 IQR], where L and U are the lower and upper quartiles and IQR is the interquartile range. We performed paired t-tests to analyze the time costs of the two groups of gestures in the pan, rotate, zoom, and travel tasks. The results indicate significant differences in time costs between the two groups in all four tasks, with pan (p < 1.9 × 10⁻¹⁵) and zoom (p < 3.8 × 10⁻²⁰) showing highly significant differences and rotate (p < 0.047) and travel (p < 0.029) also showing significance. The time costs of group 1 are consistently lower than those of group 2, indicating that the gestures in group 1 have higher overall performance across all tasks.
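
The analysis steps described above (IQR-based outlier removal followed by a paired t-test) can be sketched with the Python standard library. This is not the Minitab 17 procedure used in the study: the quartile method, the choice to drop a whole pair when either value is an outlier, and the sample times are assumptions for illustration. The sketch returns only the t statistic and degrees of freedom; the p-value would come from a t distribution in a statistics package.

```python
import math
import statistics


def iqr_bounds(values, k=1.5):
    """Return (lower, upper) fences: Q1 - k*IQR and Q3 + k*IQR."""
    q1, _, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr


def drop_outlier_pairs(times_a, times_b):
    """Keep only the pairs in which both values lie inside their own fences."""
    lo_a, hi_a = iqr_bounds(times_a)
    lo_b, hi_b = iqr_bounds(times_b)
    return [
        (a, b)
        for a, b in zip(times_a, times_b)
        if lo_a <= a <= hi_a and lo_b <= b <= hi_b
    ]


def paired_t(pairs):
    """Return (t statistic, degrees of freedom) for paired observations."""
    diffs = [a - b for a, b in pairs]
    n = len(diffs)
    mean_diff = statistics.fmean(diffs)
    std_err = statistics.stdev(diffs) / math.sqrt(n)
    return mean_diff / std_err, n - 1


# Hypothetical completion times in seconds for one task (not study data).
group2_times = [5.0, 6.0, 7.0, 8.0, 40.0]
group1_times = [3.0, 4.0, 6.0, 5.0, 6.5]
pairs = drop_outlier_pairs(group2_times, group1_times)
t_stat, dof = paired_t(pairs)
```

Dropping a participant's pair as a unit keeps the remaining observations matched, which the paired test requires.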

In comparison to other gestures, the gestures in group 1 exhibit a higher IQR of approximately 10 in the Travel task. While users demonstrate consistent performance when executing pan, rotate, and zoom operations individually, there are certain fluctuations in time costs when using these gestures for free travel operations. We suggest that this greater variability of the Travel-1 gesture group may be attributed to its greater difficulty in learning when compared to Travel-2. Nonetheless, given that both the upper and lower box boundaries of Travel-1 are lower than those of Travel-2, the gestures in group 1 still demonstrate superior performance, even though the participants have not yet fully mastered them.

Our analysis reveals that Rotate-2 and Travel-2 display considerably lower bounds when compared to the gestures of group 1. Further investigation through tracking has led to the discovery that the high-performing participants in Travel-2 do not overlap with the group of expert users. Interestingly, these participants all possessed varying degrees of experience with video games. Given that most of these participants had not previously attempted gesture interaction, we suggest that the prior knowledge acquired from mouse and keyboard interaction during gaming is somewhat transferable to the gesture interactions of group 2. The prior knowledge might have influenced their performance during the interaction task.

Due to the fact that some participants are novices in gesture interaction and have less adaptability than others, the time required to complete each assignment has a very high upper bound. Thus, we must use psychological scales to quantify participants’ psychological appraisal of usefulness.

The assessment results of the Likert scale are depicted in Figure 8. Almost all items on the scale indicate that the gestures of group 1 received higher evaluations, except for the “easy to learn” aspect. This finding is consistent with the earlier discussion of the Travel-1 gesture’s IQR, wherein it was suggested that group 2’s static gestures, which require single-handed operation, are easier to trigger than those in group 1. However, this ease of triggering also leads to occasional false activations, ultimately resulting in poor interaction performance. Furthermore, the Fatigue rating of Zoom-2 is lower than that of Zoom-1, while its Attractive rating is slightly higher than that of Zoom-1. However, Figure 7 illustrates that Zoom-2 requires considerably more time for successful execution than Zoom-1. We posit that while certain single-handed interaction gestures are less fatiguing on the arm muscles, they still fail to significantly enhance interaction performance.

4.4. Discussion

The study initially gathered a natural gesture library that may manifest in the scenario of “using non-intrusive equipment, the allocentric spatial perspective, and manipulation as the main factors” via a heuristic experiment. Following this, the gesture library underwent classification and filtering, based on software development experience, coupled with multiple factors such as light weight, recognition accuracy, accidental activation, and semantic ambiguity. To assess the comparative usability of different design schemes, we conducted a behavioral experiment and a Likert Scale survey on the two groups of selected and classified gestures.

The behavioral experiment and the Likert scale survey are largely consistent and indicate that the group 1 gestures demonstrate superior interaction performance, comfort, reliability, naturalness, and fluency in comparison to group 2. Although certain gestures in group 2 are marginally more learnable and less fatiguing than their group 1 counterparts, this can be attributed to the ease with which group 2 gestures can be triggered, which also results in occasional false activations that impede the efficient execution of interaction commands.

The exceptional performance of some participants in Rotate-2 and Travel-2 is noteworthy. Upon further investigation, we discovered that these participants are not necessarily experts but have a certain level of experience with video games. This highlights the notion that interaction gesture design need not exclusively be based on natural gestures in everyday life. Instead, designers of different software should take into account the target users’ accumulated interaction-related prior knowledge from their past experiences.

In conclusion, the use of gestures from group 1, as illustrated in Figure 5a,c,e, proves more efficient and reliable for controlling map navigation in this project. However, some participants performed exceptionally well with gestures from group 2. For future designs, personalized gesture interaction settings should be tailored according to the target users’ existing knowledge and experience, thereby ensuring optimal interaction performance.

5. Conclusions

This study explores the interactive performance and subjective evaluation of various natural motions and compares the usability of two sets of interaction design and dynamic visualization schemes. The findings indicate that grasp is a common and effective interaction gesture. Natural and effective navigation of the VR map is possible by grabbing, dragging, rotating, etc. Similarly, this result shows that designers can use their own ideas for designing and researching interactive gestures.

Although this study is based on a narrow application scenario, we present a standardized and explicit interaction design strategy. We described how to generate test cases in a specific system and how to implement psychological experiments to filter and evaluate the required natural interaction modes. This is an efficient approach that facilitates the building of systems with unique characteristics adapted to local conditions, thereby improving interaction efficiency.

Still, it is difficult to ensure that a scheme balances learning cost, interaction efficiency, and comfort at the same time. Designers should make good use of heuristic research methodologies during the design phase to investigate users’ thought processes and subjective preferences regarding gestures. In addition, the interactive performance of the VR interface and virtual controls is a crucial aspect of the evaluation of VR GIS that should be investigated further in subsequent research.