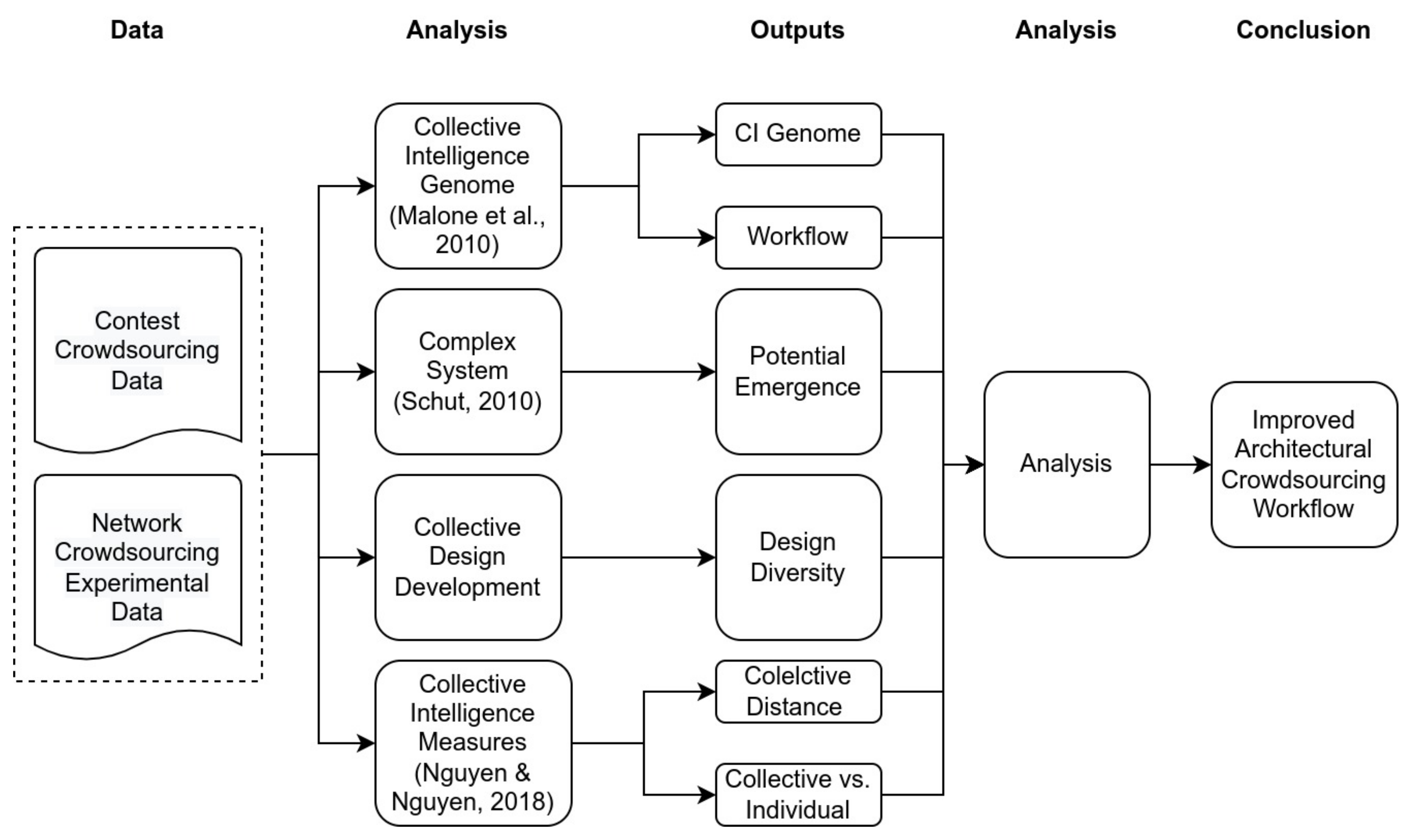

The research framework we used to compare Arcbazar and Architasker was similar to the one previously proposed by Salminen [4] (see Figure 3). Based on Salminen’s conclusions, in order to identify and compare the structure and the emergence of collective intelligence in the analysed systems, we used two analysis tools: the collective intelligence genome and the complex systems approach. We added a collective design development analysis, in which a participation index is calculated and the distance between collaborative and individual performance is measured. Finally, based on the results of the analysis, we suggested an improved crowdsourcing design process that could arguably facilitate a more collectively intelligent process.

2.3.1. Collective Intelligence Genome

The collective intelligence genome [6] is a simple classification system to differentiate between different collective intelligence systems. This system helps one to understand and compare the systems’ processes through, first, identification of different phases of production and, second, answering four design questions.

The first question concerns the goal, that is, what is being done. The possible answers are ‘Create’ or ‘Decide’. ‘Create’ means that something new, such as a text or a design, was generated. ‘Decide’ means that the phase aimed to select something, such as a contest winner. The second question is who performs the activity. This question also has two possible answers: ‘Crowd’ or ‘Hierarchy’. ‘Crowd’ here refers to a group of undefined participants, while ‘Hierarchy’ denotes the organisers of the process. The third question concerns the incentives, that is, the reasons why actors engage in the design process. This question has three possible answers: money, love, or glory. The fourth and final question is related to the structure of the production method, that is, how it is done. The possible answers are collection, contest, collaboration, voting, averaging, and consensus.
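The four questions can be read as a small record attached to each production phase. The following is a minimal sketch of such a record, not the notation used in the genome literature; the class names, the `Gene` structure, and the two example phases are hypothetical and serve only to illustrate how phases of two systems could be tabulated and compared.

```python
from dataclasses import dataclass
from enum import Enum


class What(Enum):
    CREATE = "Create"
    DECIDE = "Decide"


class Who(Enum):
    CROWD = "Crowd"
    HIERARCHY = "Hierarchy"


class Why(Enum):
    MONEY = "Money"
    LOVE = "Love"
    GLORY = "Glory"


class How(Enum):
    COLLECTION = "Collection"
    CONTEST = "Contest"
    COLLABORATION = "Collaboration"
    VOTING = "Voting"
    AVERAGING = "Averaging"
    CONSENSUS = "Consensus"


@dataclass(frozen=True)
class Gene:
    """Answers to the four design questions for one production phase."""
    phase: str
    what: What
    who: Who
    why: tuple  # one or more Why values
    how: How


# Hypothetical two-phase process, for illustration only.
genome = [
    Gene("Submit designs", What.CREATE, Who.CROWD, (Why.MONEY, Why.GLORY), How.CONTEST),
    Gene("Select winner", What.DECIDE, Who.HIERARCHY, (Why.LOVE,), How.VOTING),
]


def crowd_phases(genes):
    """Return the names of the phases that are performed by the crowd."""
    return [g.phase for g in genes if g.who is Who.CROWD]


print(crowd_phases(genome))
```

Encoding each phase this way makes it straightforward to list, for example, which phases of a system are delegated to the crowd and which remain with the organisers.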

Based on the collective intelligence genome, we created workflow diagrams that explain what input was provided and what information was generated at each step. These diagrams helped to identify the communication network that may produce collective intelligence.

2.3.2. Collective Intelligence Complex System

The second analysis method that we used to identify a possible emergence of collective intelligence was based on a complex system approach [62]. Such systems are adaptable and capable of self-organisation, and collective intelligence can emerge under certain conditions.

For the present analysis, we used Schut’s [62] complexity-based model to identify systems with potential emergence of collective intelligence. Schut’s model is based on the notion that collective intelligence is an emergent phenomenon on a system level that results from an interaction among agents. Schut’s model includes the identification of the following two sets of properties: (1) enabling properties and (2) defining characteristics.

Enabling properties, which include adaptivity, interaction, and system rules, are essential properties for the emergence of collective intelligence systems. Adaptivity means that the system is capable of adjusting its structure to a changing environment. Interaction is thought to occur when there is communication between agents in the system, which enables adequate response to different behaviours. System rules are logical conditions that restrict and adjust information, e.g., task instructions. Since crowdsourcing systems involve humans as complex agents, are based on communication, and have explicit rules, enabling properties can be observed on many crowdsourcing websites [4].

Furthermore, defining characteristics are properties that can be recognised in complex systems with collective intelligence. Among others, defining characteristics include local (user) aggregation, global (system) aggregation, randomness, emergence, redundancy, and robustness.

Local aggregation occurs on the individual level, for instance, when a crowd worker composes something creative, such as a review or design. Global aggregation is the ability of the system to adapt itself in response to its environment. In crowdsourcing systems, global aggregation is the sum of votes or a collection of reviews. Furthermore, randomness is a typical element of complex systems identified when there is some random behaviour. For example, in crowdsourcing systems, rated items can be displayed in random order.

Next, emergence refers to the process of local-level aggregations that result in a global level of adaptivity. Whenever emergence occurs, the whole is larger than the sum of its parts. Emergence is the most challenging property in crowdsourcing systems since crowdsourcing involves humans with different behaviour each time, which makes it difficult to predict emergence. Furthermore, redundancy refers to instances when the same information exists or emerges in several places, as when several workers perform a task in parallel. Finally, robustness is related to redundancy and means that, even though some parts of the process can fail, the system will continue to function. For instance, a crowdsourcing system needs to be able to cope with cheaters who perform tasks to get a reward and produce inadequate data.

While most of the parameters briefly reviewed above are observed on many crowdsourcing websites, a significant parameter to identify the emergence of collective intelligence is the local-global aggregation parameter [4]. Both crowdsourcing design systems we analysed are adaptive since they can receive different design challenges and act on them accordingly. They are also interactive and facilitate communication among agents. Finally, there are different rules and constraints, such as restricting votes or participation, in both systems.

In addition, due to human participation, with different individuals involved in each specific case, both systems are characterised by randomness. Similarly, both systems are redundant since all micro-tasks are performed multiple times by multiple people. Finally, the redundancy of the two systems makes them robust so that failures on individual tasks do not lead to system failure. Accordingly, our subsequent analysis focused on the local, global, and emergence parameters.

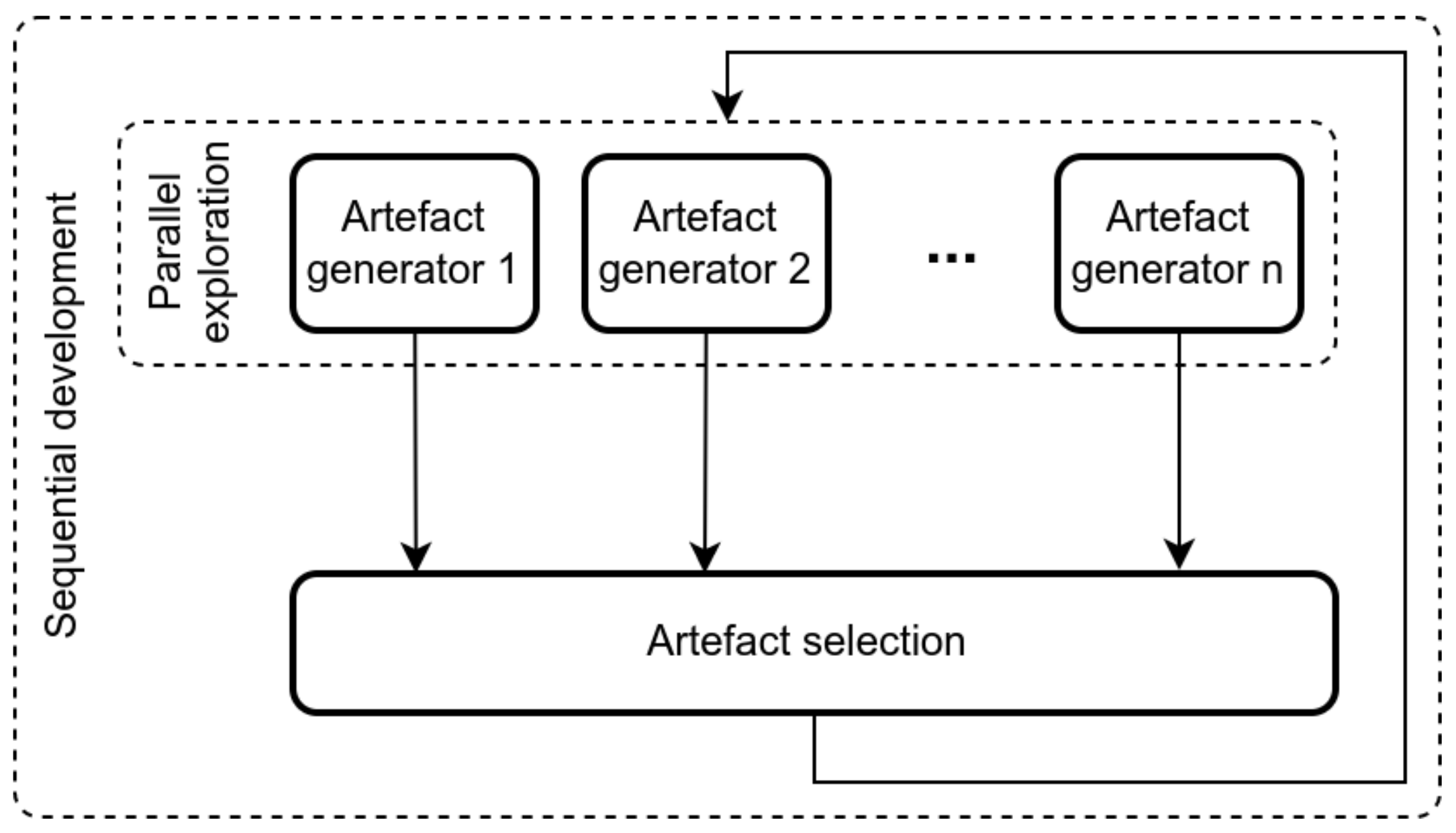

2.3.3. Collective Design Diversity

Next, we analysed the diversity of design outcomes and produced design development diagrams. Along with documenting the artefacts produced in each design iteration, these diagrams specified which artefacts were selected for further development, showed the dynamics of the design development, and identified how many designers were involved in creating the outcomes.

In order to quantify the level of participation in the resulting design artefact, i.e., the number of unique contributing agents whose designs became part of the final product, the participation index was used. This index allowed us to better understand the level of collaboration, compare collaborative processes, and trace the diversity of contributions over time. Overall, the participation index is a measure of contribution diversity that suggests the potential for the emergence of collective intelligence.

In the present study, the level of collaboration was defined with a simple participation index D for an iteration i (see Equation (1)), which is based on the number of contributors, i.e., unique participants whose product is actually in the design. Of note, the number of contributors depends on n, the total number of participants, and on i, the number of iterations, since it cannot be higher than either the number of participants or the number of iterations.
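The bookkeeping behind such an index can be sketched as follows. The count of unique contributors and the upper bound min(n, i) follow from the description above; the ratio returned by `participation_ratio` is only an assumed normalisation for illustration and should not be read as Equation (1). All function names and the sample data are hypothetical.

```python
def count_contributors(design_sources):
    """Number of unique participants whose work is present in the design."""
    return len(set(design_sources))


def max_contributors(n_participants, iteration):
    """Upper bound from the text: at most n participants and at most i iterations."""
    return min(n_participants, iteration)


def participation_ratio(design_sources, n_participants, iteration):
    """ASSUMED normalisation (not Equation (1)): contributors divided by the bound."""
    bound = max_contributors(n_participants, iteration)
    if bound <= 0:
        raise ValueError("need at least one participant and one iteration")
    contributors = count_contributors(design_sources)
    if contributors > bound:
        raise ValueError("more contributors than participants or iterations allow")
    return contributors / bound


# Hypothetical lineage of a design after four iterations with six participants:
# each entry names the author of one artefact that survives in the design.
sources = ["ana", "ben", "ana", "chen"]
print(count_contributors(sources))         # 3
print(max_contributors(6, 4))              # 4
print(participation_ratio(sources, 6, 4))  # 0.75
```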

In the network-based crowdsourcing system, we assumed that the designers would have different skill levels within our heterogeneous group of students and professional architects. Therefore, we hypothesised that some individuals among our study participants would possess better design skills and knowledge. Accordingly, we expected that these individuals’ artefacts would be selected more frequently during the design process and that the results would contain the contributions of the best few designers.

2.3.4. Collective Design Measurement

Measuring the emergence of collective intelligence requires a quantitative assessment of the quality of design artefacts produced by various participants during the design process. Since, as discussed previously, evaluations of architectural designs are subjective, in the present study, we asked three experts to provide a quantitative evaluation for each artefact. These three experts were professional architects with advanced (Master’s or Ph.D.) degrees in architecture and experience in educating architecture students. The experts were asked to carefully inspect and then rate the design quality of each artefact on a scale between 1 and 10. The three architects did not know the participants and were affiliated with different universities. The experts’ ratings were then normalised, and an average expert score was computed for each artefact.
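The scoring step can be illustrated with a short sketch. The normalisation method is not specified above, so the example assumes a per-expert min-max rescaling to the range 0 to 1 before averaging; this choice, the function names, and the ratings are hypothetical.

```python
def normalise(scores):
    """Min-max rescale one expert's ratings to [0, 1] (assumed normalisation)."""
    lowest, highest = min(scores.values()), max(scores.values())
    if highest == lowest:
        # Assumption: an expert who rates everything equally carries no ranking information.
        return {artefact: 0.0 for artefact in scores}
    return {a: (s - lowest) / (highest - lowest) for a, s in scores.items()}


def average_expert_score(ratings):
    """Average the normalised ratings of all experts for each artefact."""
    normalised = [normalise(scores) for scores in ratings.values()]
    artefacts = list(normalised[0])
    return {a: sum(n[a] for n in normalised) / len(normalised) for a in artefacts}


# Hypothetical ratings on the 1-10 scale given by three experts.
ratings = {
    "expert_1": {"A": 2, "B": 6, "C": 10},
    "expert_2": {"A": 4, "B": 6, "C": 8},
    "expert_3": {"A": 1, "B": 1, "C": 5},
}
expert_scores = average_expert_score(ratings)
print(expert_scores)
```

Rescaling per expert removes differences in how generously each expert uses the scale, so that a strict and a lenient expert contribute equally to the average.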

Following Nguyen and Nguyen [68], based on the experts’ evaluations, we computed the following two collective intelligence measures: (1) the distance of the collective prediction from the proper value and (2) the number of times that the collective prediction was better than individual predictions.

For the first measure, we computed the distance of the collective product from the maximal experts’ score. This was done by calculating the distance of the average quality of the selected artefacts from the highest-rated artefact in each iteration. Since there were several selected artefacts in each iteration, we computed their average distance from the highest-rated artefacts for each iteration. The smaller the distance was, the better the collective performance was.

Given a collective of individual artefacts, the best artefacts r, and the collective prediction, the distance between the maximal experts’ score and the collective product was defined in the present study as shown in Equation (2).
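The first measure, as described in prose above, can be sketched in a few lines. The example assumes that the distance is the plain difference between the highest average expert score in an iteration and the score of each selected artefact; the function names and the data are hypothetical.

```python
def iteration_distance(scores, selected):
    """Average distance of the selected artefacts from the highest-rated artefact."""
    best = max(scores.values())
    return sum(best - scores[artefact] for artefact in selected) / len(selected)


def collective_distance(iterations):
    """Mean of the per-iteration distances; smaller means better collective performance."""
    distances = [iteration_distance(scores, selected) for scores, selected in iterations]
    return sum(distances) / len(distances)


# Hypothetical average expert scores and the artefacts selected in each iteration.
iterations = [
    ({"a1": 0.4, "a2": 0.9, "a3": 0.7}, ["a2", "a3"]),
    ({"b1": 0.5, "b2": 0.8}, ["b1"]),
]
print(round(iteration_distance(*iterations[0]), 3))  # 0.1
print(round(collective_distance(iterations), 3))     # 0.2
```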

The second measure was based on the number of times when the collective performance was better than the performance of individual designers. We calculated the same average distance from the highest-rated artefacts for each participating designer. This was followed by comparing the average collective distance with the average distance of each designer. A smaller distance was assumed to indicate improved performance. If an individual distance was smaller than the collective distance, we interpreted it to mean that an individual performed better than the group. Finally, we also counted the number of designers whose individual performance was higher than the collective value.
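The comparison between individual designers and the collective can be sketched in the same spirit. The example assumes that every artefact has a single author and that a designer's distance is averaged over all artefacts they produced; the data layout, the names, and the collective distance of 0.25 are hypothetical.

```python
def designer_distances(iterations):
    """Average distance from the highest-rated artefact, per designer."""
    collected = {}
    for scores, authors in iterations:
        best = max(scores.values())
        for artefact, score in scores.items():
            collected.setdefault(authors[artefact], []).append(best - score)
    return {designer: sum(d) / len(d) for designer, d in collected.items()}


def designers_beating_collective(iterations, collective):
    """Designers whose average distance is smaller than the collective distance."""
    distances = designer_distances(iterations)
    return sorted(name for name, dist in distances.items() if dist < collective)


# Hypothetical scores and authorship for two iterations.
iterations = [
    ({"a1": 0.4, "a2": 0.9, "a3": 0.7}, {"a1": "ana", "a2": "ben", "a3": "chen"}),
    ({"b1": 0.5, "b2": 0.8}, {"b1": "ana", "b2": "ben"}),
]
better = designers_beating_collective(iterations, collective=0.25)
print(better)       # ['ben', 'chen']
print(len(better))  # 2
```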

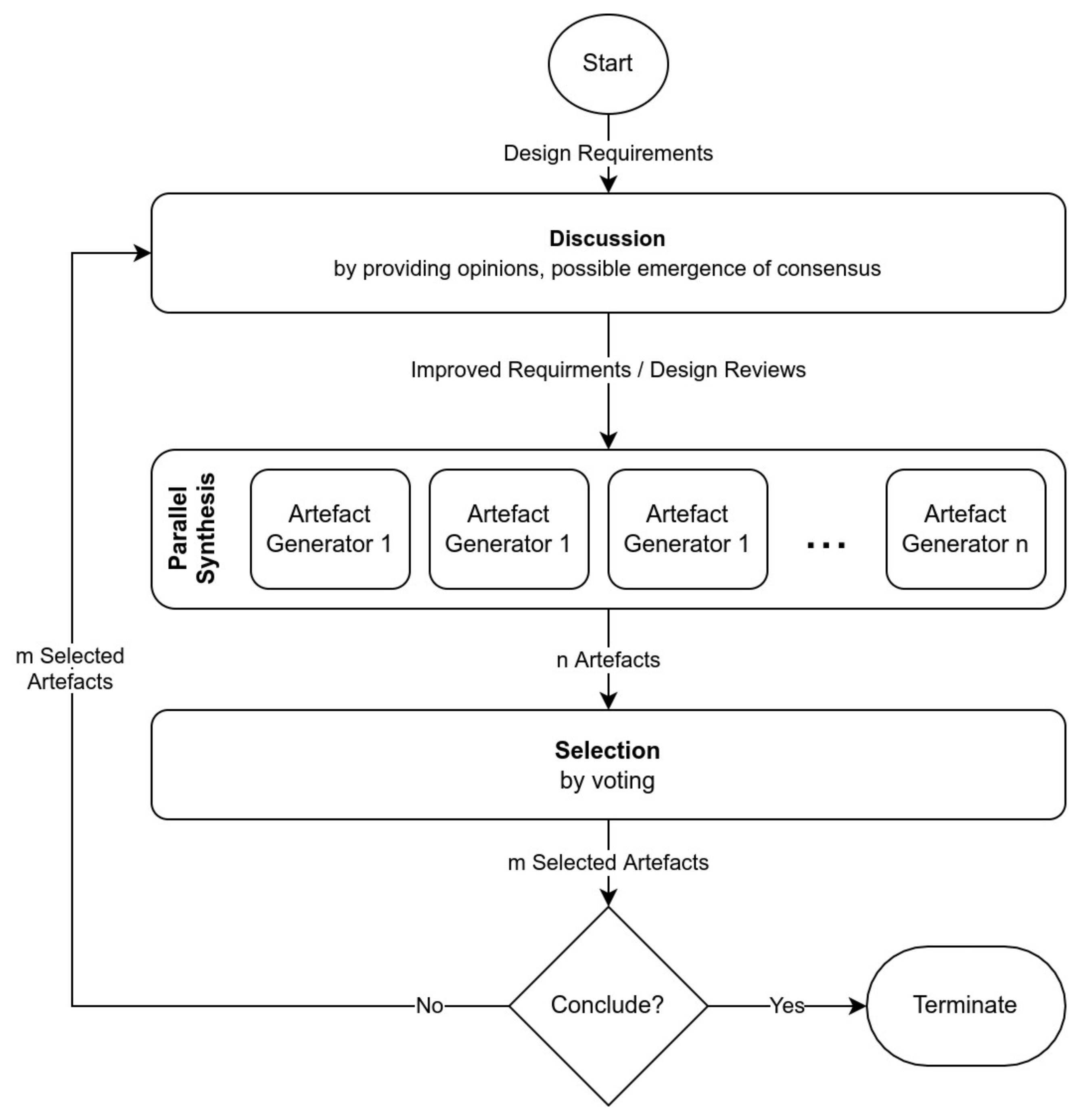

Finally, based on the analysis of both crowdsourcing systems, we proposed an improved design process that can facilitate the emergence of collective intelligence in the design process.