Abstract

The rapid development of digital image inpainting technology is causing serious hidden danger to the security of multimedia information. In this paper, a deep network called frequency attention-based dual-stream network (FADS-Net) is proposed for locating the inpainting region. FADS-Net is established by a dual-stream encoder and an attention-based blue-associative decoder. The dual-stream encoder includes two feature extraction streams, the raw input stream (RIS) and the frequency recalibration stream (FRS). RIS directly captures feature maps from the raw input, while FRS performs feature extraction after recalibrating the input via learning in the frequency domain. In addition, a module based on dense connection is designed to ensure efficient extraction and full fusion of dual-stream features. The attention-based associative decoder consists of a main decoder and two branch decoders. The main decoder performs up-sampling and fine-tuning of fused features by using attention mechanisms and skip connections, and ultimately generates the predicted mask for the inpainted image. Then, two branch decoders are utilized to further supervise the training of two feature streams, ensuring that they both work effectively. A joint loss function is designed to supervise the training of the entire network and two feature extraction streams for ensuring optimal forensic performance. Extensive experimental results demonstrate that the proposed FADS-Net achieves superior localization accuracy and robustness on multiple datasets compared to the state-of-the-art inpainting forensics methods.

Keywords:

inpainting forensics; deep convolutional neural network; learning in frequency domain; dual-stream feature extraction; attention mechanism MSC:

68M25; 68T07

1. Introduction

With the rapid development of digital image processing techniques, increasingly advanced image editing software provides more convenience and fun for modern human life. Nevertheless, a large number of forged digital images are generated by malicious use of these techniques, which has led to a serious security and trust crisis of digital multimedia. Therefore, image forensics has gradually attracted an increasing concern in the digital multimedia era, such as JPEG compression forensics [1,2], median filtering detection [3], copy-moving and splicing localization [4,5], universal image manipulation detection [6,7], and so on.

Image inpainting is an effective image editing technique which aims to repair damage or removed image regions based on known image information in a visually plausible manner, as shown in Figure 1. A variety of image inpainting methods have been constantly proposed in recent years, and these can be classified roughly into three categories: the diffusion-based approaches [8,9], the exemplar-based approaches [10,11], and the deep learning (DL)-based approaches [12,13]. Due to its effective and efficient editing ability, image inpainting has been widely applied in many image processing fields [11], such as image restoration, image coding and transmission, photo-editing and virtual restoration of digitized paintings, etc. However, the powerful image editing tool is also conveniently used to maliciously modify an image even by non-professional forgers with less visible traces, which poses a serious threat to multimedia information security.

Figure 1.

An illustrative example of image tampering by image inpainting: the real image (left), the inpainted image (middle), and the utilized mask (right).

The major forensic tasks for image inpainting are to locate the inpainted regions of an input image, so inpainting forensics require pixel-wise binary classification at the manipulation level, i.e., binary semantic segmentation. In fact, the goal of binary semantic segmentation is to classify pixels in an image into two categories: foreground and background. Specifically, for the inpainting forensics task, the pixels in the image are classified into inpainted pixels and uninpainted pixels. Generally, this is more difficult than the common manipulation detection, which only makes a decision regarding whether a certain manipulation took place or not.

There has been limited research on inpainting forensics until now. Some traditional forensic methods employ hand-crafted features to identify inpainted pixels. For instance, the features depending on image patch similarity were extracted for the detection of exemplar-based inpainting operation [14,15], and the features based on image Laplacian transform were designed to identify the diffusion-based inpainting operation [16,17]. However, the manipulation traces left by image inpainting on the image are so weak that it is hard to reveal by manually designed features. In addition, the emerging DL-based inpainting methods can not only achieve more realistic inpainting results than traditional methods, but also generate new objects, which brings greater challenges to inpainting forensics. Recently, deep convolutional neural networks (DCNNs) have made great success in many fields [18,19,20] via their powerful learning capabilities. Inspired by these works, some researchers have made some attempts to develop CNN-based forensics methods, such as median filtering forensics [3], camera model identification [21], copy-move and splicing localization [4], as well as JPEG compression forensics [2]. A few research efforts have been also devoted to deep learning-based forensics for image inpainting [22,23].

DL-based methods learn the discriminant features and make the decisions for target tasks in a data-driven way and thus bring about a significant performance advantage on large-scale datasets. In this paper, we propose a new end-to-end network for image inpainting forensics. The network is established by considering the following factors.

First, manipulation feature extraction is a key problem for the DL-based inpainting forensics. Although DCNN is employed to directly extract features from the inpainted image through end-to-end training [22,24], it tends to learn image content rather than manipulate features [25]. To this end, the preprocessing modules are constructed to enhance image manipulation traces in many deep forensic approaches [7,23,26]. However, most of these methods rely on prior knowledge and cannot be effectively applied to all inpainting methods.

In addition, down-sampling operations are inevitably used in a DCNN for the extraction of high-level features, causing detail information loss on image regions [27]. For dense prediction forensics, it is necessary to study the strategies for compensating spatial information.

Last, cross-entropy and weighting strategies are generally selected for most deep inpainting forensics [23,24,26]. In practice, the loss function is extremely important for training DL-based methods, and its selection should be closely related to the proposed network structure and mission objectives. Thus, the design of loss function needs to be carefully considered based on our methods.

Based on the above considerations, we propose a novel dual-stream network for image inpainting forensics, called frequency attention-based dual-stream network (FADS-Net) in this paper. The main contributions of this work are three-fold, as below:

- We develop the DCNN for image inpainting forensics following the encoder–decoder network structure [18,28] to directly regress the ground truth binary mask, representing the pixel-wise class labels (inpainting and uninpainting). In order to capture richer inpainting clues, the encoder is designed as a dual-stream structure that consists of the raw input stream (RIS), the frequency recalibration stream (FRS), and a fusion module. The RIS, like most forensics methods, takes the original inpainted image as the input, and image information adaptively recalibrated in the frequency domain is used as input to the FRS. Then, the extracted dual-streamed features are fused into more effective and comprehensive feature responses through a well-designed fusion module. Finally, the fused features will be gradually enlarged to the full resolution through the decoder, and the final prediction mask is generated.

- We design the comprehensive up-sampling module combining transpose convolution [29], skip connection [30], and attention mechanism [31]. Through transpose convolution, the fed features are refined to enhance the feature representations for the purpose of forensics while increasing the feature resolution. Information fusion can be effectively performed between coarse high-level features and fine low-level features by combination of skip connection and attention mechanism, which compensates for the spatial information loss caused by the down-sampling operations in the encoder.

- We design a new joint loss function based on the intersection-over-union (IoU) metric to train the proposed forensics network. The loss is obtained by combining a proposed IoU loss and the cross entropy (CE) loss, where the IoU loss can directly guide the FADS-Net to optimize the IoU performance metric. CE loss is used to supervise the training of the entire network and two feature extraction streams, respectively, which ensures stable training of the network and efficient operation of each feature extraction stream.

The structure of this paper is as follows. Section 2 briefly introduces the related work of inpainting forensics. In Section 3, a frequency attention-based dual-stream forensics network is proposed and details of the network are presented. A series of experiments are carried out to evaluate the proposed network in Section 4. Finally, Section 5 concludes this paper.

2. Related Works

A few research efforts have been devoted to developing forensic methods for image inpainting. They can be roughly divided into the following two categories.

2.1. Conventional Inpainting Forensics Methods

The conventional inpainting forensic methods rely on manually designed features to predict the inpainted pixels. Initially, for exemplar-based inpainting [10], a zero-connectivity length (ZCL) feature was designed to measure the similarity among image patches, and the inpainted patches were recognized by a fuzzy membership function of patch similarity [32]. A similar forensics method depending on patch similarity was presented in [33] for video inpainting. However, the similar patch searching process is very time-consuming, especially for a large image. In addition, a high false alarm rate may be provided by these methods for an image with uniform background.

The skipping patch matching was explored for inpainting forensics and copy-move detection in [34]. A two-stage patch searching method based on weight transformation was proposed in [14]. The two patch search methods accelerate the search of suspicious patches, but may cause accuracy loss. Furthermore, by multi-region relations based ZCL features, the inpainted image regions are identified in [14], achieving an improved false alarm rate. The work was further improved by exploiting the greatest ZCL feature and fragment splicing detection in [15]. Meanwhile, the suspicious patch search was sped up by the central pixel mapping method. The resulting problem is that a truly inpainted region is prone to be recognized as some isolated suspicious regions and they might be removed by fragment splicing detection.

The inpainted patch set was determined by the hybrid feature including Euclidean distance, the position distance, and the number of same pixels between two image patches in [35]. Unfortunately, the feature is very weak against the image post-processing operations and the forensics performance is highly image-dependent.

A few works were dedicated to improving the robustness of the inpainting forensics. For the compressed inpainted image, the forensics was performed by computing and segmenting the averaged sum of absolute difference images between the target image and a resaved JPEG compressed image at different quality factors [36]. However, the feature effectiveness is not clear if the image samples are modified using other manipulations. The method in [37] was developed based on high-dimensional feature mining in the discrete cosine transform domain to resist the compression attack. Many combinations of inpainting, compression, filtering, and resampling are recognized by extracting the marginal density and neighboring joint density features in [38]. The obtained classifiers only distinguish the considered specific forgery methods and do not locate the inpainted region.

To detect image tampering by sparsity-based image inpainting schemes [39,40], the forensics method based on canonical correlation analysis (CCA) was proposed in [41]. The method exhibits the advantage of robustness against some image post-processing operations, but has the same drawback as [38]. For diffusion-based inpainting technologies [8,9], a feature set based on the image Laplacian was constructed to identify the inpainted regions in [16]. The performance was further enhanced by weighted least squares filtering and the ensemble classifier in [17]. However, these methods fail to resist even quite weak attacks.

Principally, hand-crafted features for image inpainting are designed according to the observations in some images, which cannot be guaranteed to be valid in all cases. Moreover, the design of robust hand-crafted features is usually very difficult, since no obvious traces are left by image inpainting operations, particularly deep learning-based inpainting methods. In addition, the optimization of classifiers is carried out on a relatively small dataset or in a certain small parameter range and is dependent upon feature extraction, causing the restricted forensic performance.

2.2. Deep Learning-Based Inpainting Forensics Methods

The strategy of the method based on deep learning is different from the conventional one, which can automatically learn the inpainting features and makes decisions in a data-driven way. As the first attempt in [22], the fully convolutional network (FCN) was constructed to locate the tampered regions by exemplar-based inpainting method [10], and the weighted CE loss was designed to tackle the imbalance between the inpainted and normal pixels. The method is significantly superior to the conventional forensics methods in terms of detection accuracy and robustness, which can be further improved by skip connections [42]. A deep learning approach combining CNN and long short-term memory (LSTM) network was proposed in [43] to accomplish the spatially dense prediction for exemplar-based inpainting. As to the network design, attention is mainly concentrated on the improvement of the robustness and false alarm performance. The ResNet-based approach [44] merged the networks of object detection and semantic segmentation. The approach is developed to achieve the hybrid forensic purpose for exemplar-based inpainting, including manipulated localization, recognition, and semantic segmentation. The forensic approach for deep inpainting was first addressed in [23], and an FCN with a high-pass filter was designed to identify the inpainted pixels in an image.

Recently, some of the latest advances in deep learning have also been applied to the design of inpainting forensics methods. A deep forensic network was proposed in [26] which is automatically designed through the one-shot neural structure search algorithm [45] and includes a preprocessing module to enhance inpainting traces. A backbone network with multi-stream structure [46] was employed to establish a progressive network for image manipulation detection and localization [24], which could gradually fine-tune the prediction results from low resolution to high resolution.

DL-based methods can learn the discriminant features directly from the data, avoiding the difficulties of manually extracting features. Moreover, relying on end-to-end learning, DCNNs permit the optimization of the feature extraction and the final decision steps in a unique framework. With these characteristics, DL-based methods manifest a significant performance advantage on large-scale datasets. This motivates us to further investigate the deep learning-based methods for inpainting forensics.

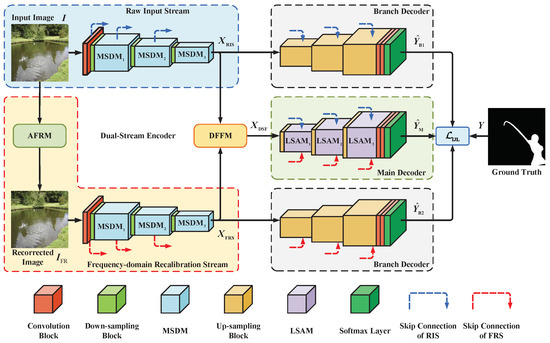

3. Frequency Attention-Based Dual-Stream Inpainting Forensics Network

In this section, a dual-stream inpainting forensics network based on attention in the frequency domain, abbreviated as FADS-Net in this paper, is presented. We build our forensic network based on the encoder–decoder network structure by considering the fact that such networks are widely used for dense prediction tasks and produce remarkable results [18,28]. The overall architecture of FADS-Net is illustrated in Figure 2. As shown in Figure 2, FADS-Net first encodes a given input image into feature maps by a dual-stream encoder and then sends features to an attention-based associative decoder to generate final predictions for the entire network and individual feature streams. The network is carefully designed by considering the network architecture, the main function modules, and the loss function, which are described in the following one by one.

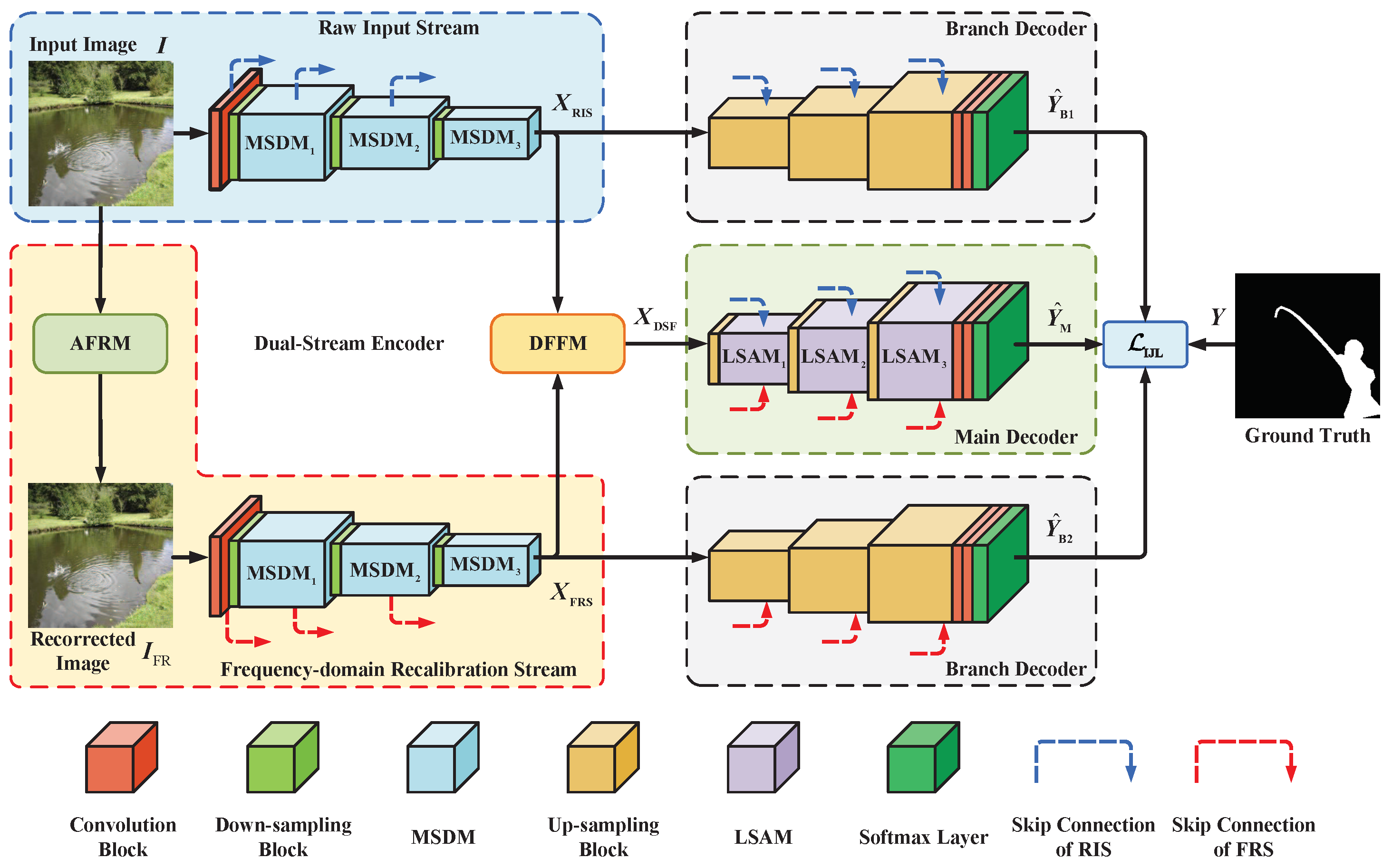

Figure 2.

The architecture of the proposed frequency attention-based dual-stream inpainting forensics network (FADS-Net). FADS-Net has the same structure as encoder–decoder networks. The two feature extraction streams extract features from the input image, and two features are fused using a dense feature fusion module (DFFM) to generate the final feature response of the encoder. Then through the attention-based decoder, the fed features are progressively refined and the spatial resolution is restored for the final dense predictions.

3.1. Dual-Stream Encoder with Attention in Frequency Domain

The encoder part of the proposed network contains two branches of feature extraction: raw input stream (RIS) and frequency recalibrated stream (FRS). As feature extraction sub-networks with the same backbone structure, they are able to capture the features of the inpainting trace by utilizing their respective input information. The RIS learns manipulation features directly, which represent the difference between the inpainted and uninpainted regions, through the original image , that is,

where composite function represents the feature extraction operation performed by RIS. Meanwhile, the image recalibrated by a frequency-domain preprocessing module, called the adaptive frequency recalibration module (AFRM), serves as the input of another branch, namely the FRS. The overall process can be written as

where represents the operation of the AFRM on the input image, and is a composite function that represents the operation of extracting features from performed by FRS. The application of AFRM can enhance the inpainting traces and suppress irrelevant information through the data-driven method. The network backbones of the above two streams are constructed based on the single-stream CNN for inpainting forensics proposed in our other recent work (under submission). The feature responses extracted from the two feature streams are fully fused through a dual-stream feature fusion module (DFFM) to obtain more abundant and more effective inpainting feature representation, as follows:

where represents the feature fusion operation performed in DFFM.

A more detailed description of the AFRM, the network backbone of two feature extraction streams, and DFFM will be provided in the following sections.

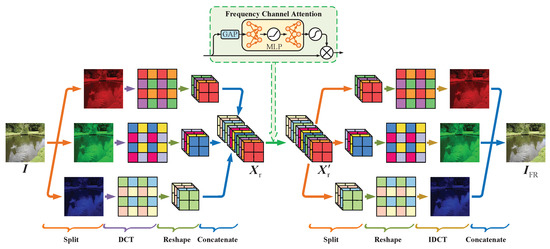

3.1.1. Adaptive Frequency Recalibration Module

Some researchers attempt to combine manually designed features with DCNN [3,23,26] for further mining of latent artifacts. However, these prior knowledge-based methods are insufficient to capture comprehensive inpainting traces. Moreover, faced with the challenge of increasingly advanced inpainting technologies to forensic science, frequency-related features may provide extra and abundant forensic clues [3,47], except for the visual inpainting traces revealed in RGB space.

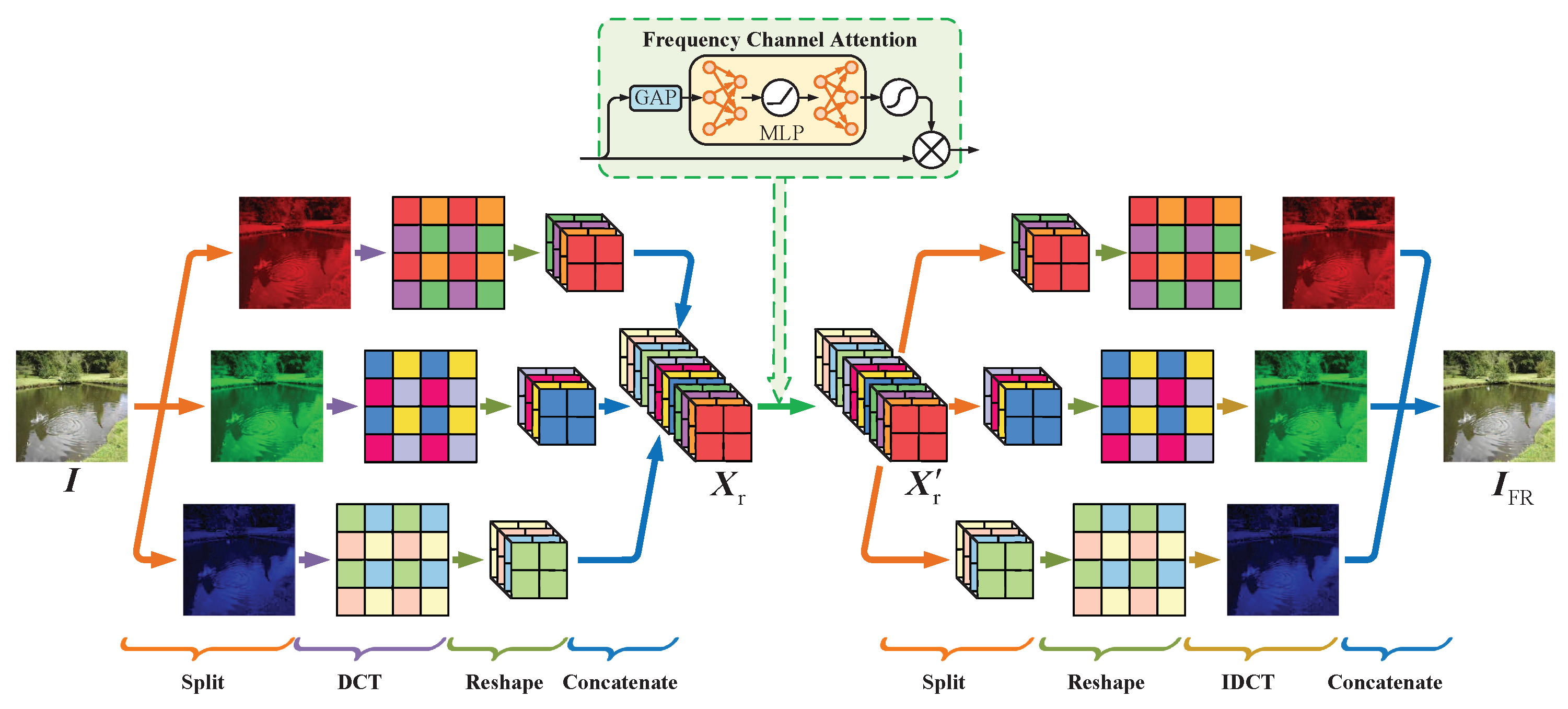

Based on the above considerations and inspired by [47,48], the adaptive frequency recalibration module is proposed to adaptively enhance frequency-related inpainting features through a data-driven approach. The process of this module is illustrated in Figure 3.

Figure 3.

The architecture of the adaptive frequency recalibration module.

First, we attempt to employ discrete cosine transform (DCT) to convert the input inpainted image from the spatial domain to frequency domain, where W and H denote the width and height of the image, respectively. Thanks to its positive decorrelation and excellent energy compaction, DCT has been applied extensively to the field of image processing. However, the complexity of DCT is relatively high, so the block-based DCT is usually selected to process images. Considering the computational cost, we apply 2-D DCT with a block of size (marked as DCT) to each channel of . Thus, the DCT result of the i-th channel can be expressed as

where , and indicates the DCT. Through applying DCT, there are a total of blocks with size generated on each channel. As a result of converting to the frequency domain, all elements in each block represent the DCT coefficients of the corresponding frequency components, which are regularly distributed at various locations in each block according to the frequency.

Then, the 2-D DCT resulting on each RGB channel needs to be reshaped into a 3-D shape for further processing. Specifically, the components with the same frequency in all blocks of each channel are grouped into the same channel, and still maintain the spatial relationships with each other. In this way, each of the R, G, and B components of the inpainted image forms a tensor with 64 channels, which correspond to different frequencies. The overall reshaped result is achieved through concatenating these generated tensors. The above process can be expressed as

where and denote the reshaping and concatenating operations, respectively.

At present, each channel of represents the different frequency information of an inpainted image. The inpainting manipulation fills the tampered region with known image information, which inevitably causes changes in energy in some frequency bands of the original image. To adaptively enhance the weak inpainting trace and suppress irrelevant information, we attempt to employ channel attention [49] to learn a frequency attention map of , which would be utilized to recalibrate information on different frequency bands of . To be specific, is first converted into a global descriptor through the global average pooling (GAP) , that is,

where represents the DCT coefficient located at on the k-th channel of , and is the k-th element of , which represents the global information of the k-th channel of . Then, the is input into a multi-layer perceptron (MLP) with the sigmoid function to derive . The process can be expressed as

where and denote the weight parameters of the hidden layer and output layer, respectively, of MLP, and denotes a rectified linear unit (ReLU) activation function. Finally, the recalibrated frequency information is achieved by

where ⊗ refers to element-wise multiplication.

By applying the channel attention mechanism, the dynamic weight is provided for each frequency channel of , so as to improve the effectiveness of frequency information for forensics. However, the forensics network infers the final pixel-wise prediction, which depends on the spatial information of the image. Therefore, the recalibrated result in the frequency domain is reconverted into an image in the spatial domain by inverse reshaping and inverse DCT , which is implemented as follows:

where denotes the concatenating operation, and is the recalibration result of the i-th channel of the input image through channel attention.

Although CNN has superior feature learning capabilities, it may be insufficient that only RIS is utilized to learn features of inpainting operation. This is because, in the spatial domain, the inpainting traces are hidden in the content information of an image, while CNN tends to learn the features of content information. According to the previous description, through DCT and reshaping, AFRM can first alleviate the coupling between image content and inpainting artifact, which have different frequency information in the frequency domain. Furthermore, channel attention is employed to adaptively enhance or suppress corresponding frequency information through data-driven approaches, so as to meet the requirement of forensic tasks. Therefore, the FRS can also extract extra abundant features from the images recorrected by AFRM, and these features extracted by FRS will be fused with the features obtained by RIS to ultimately improve the forensic performance. The comparative and ablation experiments have demonstrated the effectiveness of FRS with AFRM.

3.1.2. Sub-Network for High-Resolution Feature Extraction

In general, depending on the progressive down-sampling and deep structure, DCNN can learn abstract high-level features. However, the continuous down-sampling results in the serious loss of spatial details, which is harmful to the dense prediction task [50].

Thus, a network structure in Figure 2 proposed in our recent work is employed to establish the sub-networks of two feature extraction streams which can effectively extract high-resolution feature responses from their respective input information.

Thus, a single-stream network with the structure in Figure 2 proposed in our recent work is introduced as a sub-network to efficiently extract high-resolution feature responses. For readers to better understand our work, the network is re-described in the following.

At the beginning, a convolution block is employed to generate shallow feature maps from input information. Subsequently, the combination of down-sampling block and multi-stream dense modules (MSDMs) is repeatedly applied three times to further learn higher-level features. As a result, the spatial resolution of the final feature responses on each stream is adjusted to 1/8 of that of the input, which effectively reduces the loss of detail information on the inpainted regions.

As the basic unit of the network and the component of other modules, the convolution block implements the feature extraction through the convolution operations, followed by a batch normalization (BN) layer and ReLU activation layer.

By setting the stride to 2, the above convolution block is converted into a down-sampling block, so as to reduce the spatial resolution of input feature maps by half. Compared to ordinary prior-based pooling, this trainable down-sampling is more suitable for forensics tasks of learning inpainting trace rather than image content.

MSDM is the main unit and was designed in our recent work to efficiently learn feature representation after each down-sampling. It has three parallel information flows: local feature stream, global feature stream, and residual stream.

Through multiple densely connected dilated convolutional blocks, the local feature stream is employed to learn a set of local features from the input of MSDM. For the n-th dilated convolution block in the k-th MSDM, the local features extracted by it can be expressed as

where denotes the input feature of the k-th MSDM in the feature extractor, denotes the concatenating operation, and denotes the operation performed by the n-th convolutional block. Dense connection can not only strengthen information propagation and promote feature reuse, but also effectively improve model compactness [19,51,52].

Then, the global feature stream can aggregate the global context of input by global average pooling (GAP) to obtain the image-level features. For the k-th MSDM, the image-level features can be obtained as follows:

where and represent convolution and up-sampling operations, respectively, which are used to adjust the dimensions of feature maps so as to facilitate subsequent feature fusion performed by MSDM.

Finally, the input features and local and global features are fused by a convolutional block, and the derived results as residuals are combined with the inputs of MSDM, forming the residual stream. When N densely connected convolutional blocks are set on the local feature stream of MSDM, the final output of the k-th MSDM can be expressed as

where and ⊕ refer to feature fusion performed by the convolutional block and pixel-wise addition, respectively. The detailed description of MSDM is provided in our recent work.

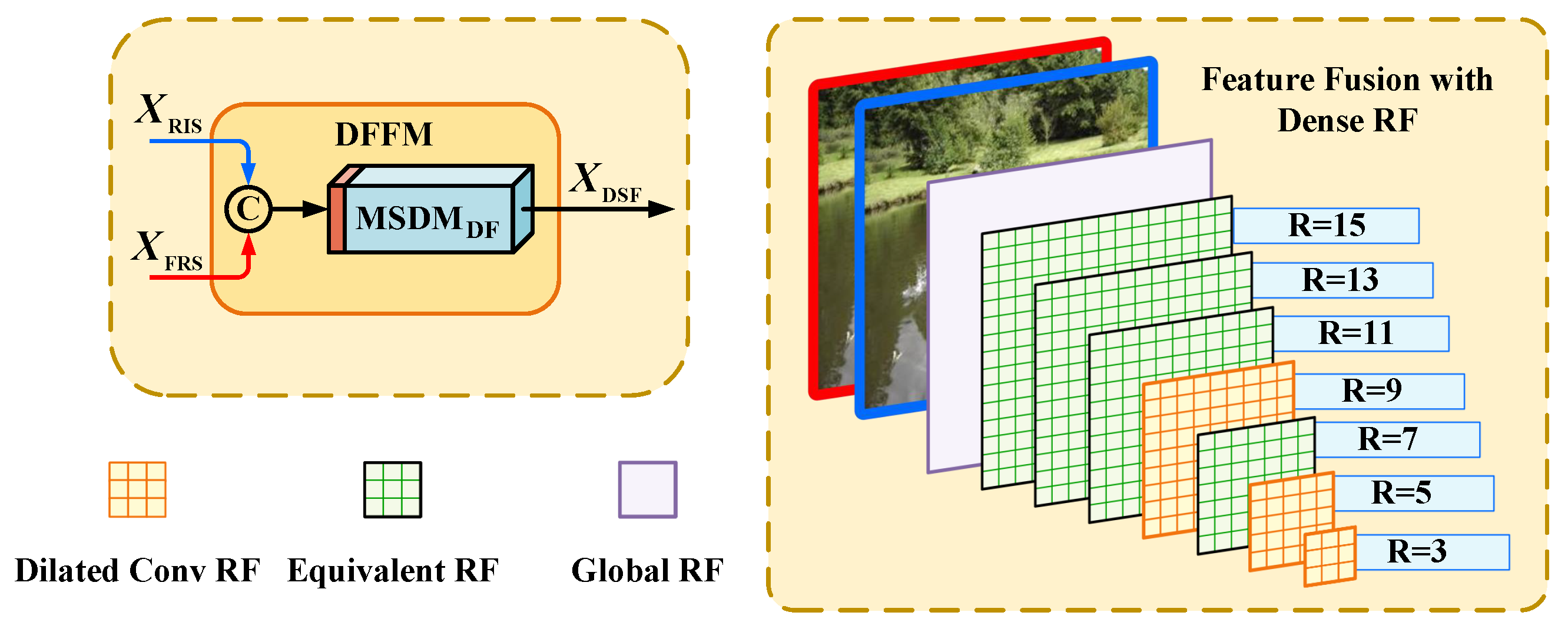

3.1.3. Dense-Scale Feature Fusion

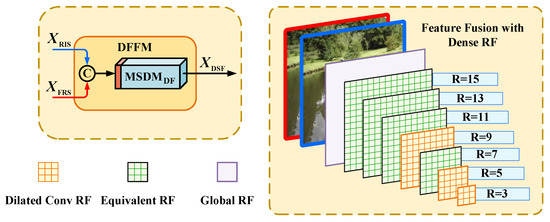

The final feature responses and extracted from the two feature streams contain abundant and valid forensic information. We utilize a dense-scale feature fusion module (DFFM) to promote their full fusion and finally obtain a fusion feature representation .

The DFFM is composed of a convolution block and a specially set MSDM, as illustrated in Figure 4. Firstly, and are concatenated along the channel dimension. Then, a convolution block is used to resize the channel depth of the concatenated feature maps by a factor of 0.5, which can reduce the computation cost of subsequent processing. Furthermore, we employ the specially set MSDM (marked as MSDM) to capture a set of dense-scale context information from the concatenated feature maps and then fuse them together, so as to promote the in-depth mining and interacting of feature maps on dense scales.

Figure 4.

The architecture of dense-scale feature fusion module (DFFM). An example of receptive field (RF) formed in the MSDM is shown on the right side of the figure.

Specifically, for a dilated convolution layer with kernel size S and dilation rate , its receptive field R can be obtained by

Obviously, we can flexibly adjust the receptive fields R to incorporate different contexts by setting dilated rate . In addition, N dilated convolution blocks with the dense connection can form a total of information propagation paths on local feature stream of MSDM, where represents the number of all combinations of i elements taken from N different elements. Furthermore, when there are k convolution blocks on a path, a larger equivalent receptive field would be obtained.

Hence, by adjusting the dilation rate of each dilated convolution block in MSDM, a set of receptive fields can be generated to capture contextual information with dense scales.

In this paper, we set the dilation rates in MSDM to 1, 2, 4 in order. According to the above design, the scale of the i-th local receptive field is Moreover, the purple plane represents the receptive field that aggregates the global context on the global feature stream, which can not only further enrich the feature information, but also effectively avoids the degradation of equivalent receptive fields caused by dilation rate settings [50]. Simulations indicate that the performance of the proposed network can be effectively improved by the proposed DFFM.

3.2. Attention-Based Associative Decoder

We propose an attention-based associative decoder, which consists of a main decoder and two branch decoders in parallel. The main decoder can gradually restore the spatial resolution of features and refine them, thereby yielding the final prediction for dense forensics. Moreover, two decoders with the same structure are applied on two individual feature streams, which is facilitated to further supervise their training and ensures that they both work effectively.

3.2.1. Main Decoder and Branch Decoder

The main decoder consists of up-sampling blocks, locality-sensitive attention modules (LSAMs), skip connection units, convolution blocks, and Softmax layer, as shown in Figure 2.

The up-sampling block resizes the resolution of the input feature maps by a factor of 2, which is constructed by replacing the convolutional layer with a transposed convolutional layer in the down-sampling block. Different from linear interpolation and inverse pooling, the up-sampling operation based on transposed convolution can learn feature representation with trainable parameters while increasing the feature resolution. This is obviously favorable for the forensic task.

A skip connection unit is usually directly arranged behind the up-sampling block, which can introduce the encoder features to compensate for the lost spatial information due to down-sampling via simple addition [30] or concatenation [18]. However, the non-selective information fusion ignores the different importance of each feature on the spatial and channel dimensions for the forensic task. To address this issue, we propose LSAM to learn an optimal attention map to recalibrate up-sampled feature maps , and then enable it to achieve better fusion with decoder features . The overall fusion process can be expressed as

where ⊗ and ⊕ stand for element-wise multiplication and addition, respectively, and stands for the feature maps fused by LSAM following the l-th up-sampling block. The specific design of LSAM will be addressed in the next section. Here, the additive skip connection is explored for a global residual learning [53], which can ensure training stability and accelerate network convergence.

The implementation details for the fusion stream decoder are given as follows. The combination of an up-sampling block, an LSAM, and a skip connection unit is repeated three times to gradually restore the initial spatial resolution of features. The kernel size of all the up-sampling blocks is set to as the convolution block. Through the first two up-sampling blocks, the channel number of input feature maps remains unchanged, but is resized by half through the third one. Two convolutional blocks with 8 and 2 kernels of size , respectively, are placed at the end of the main decoder to generate 2-channel logits. Through them, we can obtain the receptive field with fewer parameters to be trained according to Equation (15) than using one convolutional block directly. The logits are finally fed to the Softmax layer, yielding the confidence map for the inpainted image.

In addition, two simplified branch decoders without LSAM are added at the ends of two feature extraction streams to yield two prediction confidence maps and for joint supervision. This is facilitated to ensure the effectiveness of both streams and prevent forensic performance from over-dependence on one of them.

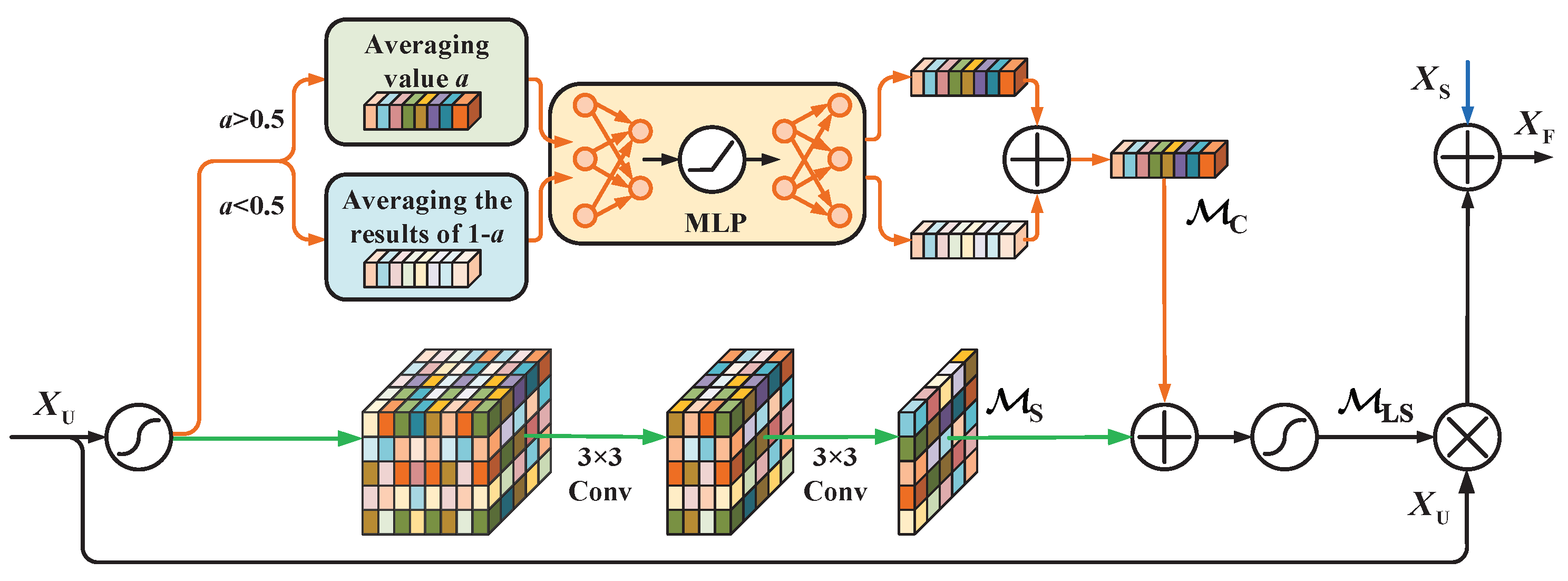

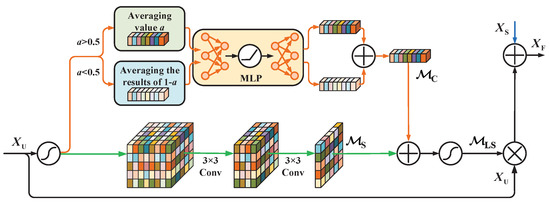

3.2.2. Locality-Sensitive Attention Module

The attention mechanism was proposed by learning the important property of the human visual system [54,55,56], which can improve feature representation power and exhibits a performance advantage in many vision tasks, such as object detection [20], semantic segmentation [57], and human pose estimation [58].

In the study, our goal is to take into account the individual importance of different features by using the attention mechanism while performing the additive skip connections. Therefore, we design a novel locality-sensitive attention module, i.e., LSAM, by considering the local importance of input feature maps for the forensics task. As shown in Figure 5, LSAM is composed of channel and spatial attention sub-modules. To achieve the channel attention maps, it is necessary to first compress the spatial dimension of input feature maps to capture the global descriptor. So far, the global maximum and average pool have been widely employed to implement this operation [49,59,60]. However, these two methods are not the best choice for inpainting forensics, since the pixels of the input image need to be classified into two classes, i.e., inpainting and uninpainting. The global descriptors captured by the above methods are not sufficient to represent the global information of inpainting features. Based on this consideration, as the input of LASM in the decoder, the up-sampled feature map in the channel attention module is first normalized by the sigmoid function , that is,

Figure 5.

The structure of the locality-sensitive attention module (LSAM). LSAM is composed of channel and spatial attention modules, where the upper network branch depicts the structure of channel attention module and the bottom network branch depicts the structure of spatial attention module.

In each channel of the normalized feature maps , the features with values above 0.5 may be thought to describe inpainting traces to some extent, and other features are more closely related with the uninpainting class. Therefore, important clues about the two object classes are obtained by carrying out two novel methods of aggregating global information such as Equations (18) and (19) on each channel of normalized features maps, resulting in two different spatial context descriptors and . The k-th elements and on the two descriptors are calculated as follows:

where refers to the element at on the k-th channel of , and are the total number of two classes of elements, and is the Sign function. , and represent the width, height, and channel depth of , respectively.

Furthermore, the two feature descriptors are used to fully capture channel-wise dependencies by a shared network. The shared network is composed of MLP with one hidden layer, and the hidden activation size is set to 1/16 of the channel depth of to reduce parameter overhead. The final channel attention for is produced by merging the outputs of MLP, that is,

The orange branch in Figure 5 depicts the structure of the channel attention module. Clearly, the derived attention is relatively sensitive to local changes of the input feature maps due to the adopted operations before MLP.

The structure of the spatial attention module is illustrated in Figure 5 (see the green branch). The spatial attention module learns which information to emphasize or suppress along the spatial dimensions. Feature normalization is first performed on the input feature maps , carried out in the channel attention module. This effectively alleviates the impact of feature scale difference on the spatial attention inference. Subsequently, the channel dimension of input feature maps is squeezed, resulting in feature map . The feature channel depth is in turn reduced by half and to 1 through two consecutive convolution layers. Here, the two fully trainable network layers allow us to learn the spatial attention map in a data-driven way, which is apparently superior to one derived by hand-selected processing methods [59] in feature diversity. Moreover, since the kernel size of the first convolution layer is larger than , the local information of can be considered during the attention inference. The above process can be expressed as

where and denote two consecutive convolution operations.

Last, the overall attention map for is obtained by normalizing the fusion results of and as

LSAM is constructed based on the characteristics of the forensics task, by which the feature fusion is accomplished better in the skip connection units. Simulations indicate that the performance of the proposed network can be effectively improved by the proposed LSAM.

3.3. IoU-Aware Joint Loss

In this study, we design the IoU-aware joint loss (IJL) composed of IoU loss and CE loss to train our network, which can perform joint supervision on the entire network and two feature extraction streams. The overall IoU-aware joint loss is as follows:

where represents the main loss that supervises the final prediction result yielded by the main decoder, and the branch loss is employed to measure the difference between the output of the i-th branch decoder and the ground truth label. The weight , is the hyper-parameter representing the importance of .

CE loss and its variants have been used for image inpainting forensics [22,23,24], but they are actually accuracy-oriented, which is different from the IoU metric used to evaluate the performance of dense prediction in inpainting forensics. Thus, we employ a loss based on IoU metrics and CE loss together to supervise the final output of the main decoder. As a common performance metric for image segmentation tasks, IoU can effectively measure the difference between predicted results and ground truth masks. According to the definition of IoU, its value is calculated as follows:

where , , and denote the numbers of true positive, false negative, and false positive pixels of the dense prediction, respectively. The above three items count the numbers of various pixels in the predicted results, but the output of the proposed network is the probability of each pixel being inpainted. Thus, for inpainting forensics, , , and are approximately derived by the ground truth mask and the confidence map output by the decoder in our recent work, and their corresponding approximate values , , and can be calculated as follows:

where and are the corresponding elements of and at , respectively. Then, through substituting Equations (25)–(27) to Equation (24), the approximate value of IoU can be obtained as

Finally, the IoU loss is defined as the negative logarithm of , that is,

Based on the above considerations, the loss for the main decoder is written as

where represents the weight of cross-entropy loss , and is the confidence maps output by the main decoder. The loss function combines the stability of cross entropy and the property that IoU loss is not affected by class imbalance, as well as directly guides the network to optimize the IoU performance metric.

In addition, CE loss is also employed to supervise the confidence maps and output by two branch decoders, that is,

In this way, it can ensure that two feature extraction streams all play their role effectively rather than relying on a certain branch for forensic performance. This results in the remarkable performance gain showed in our ablation experiments.

4. Experimental Results

In order to validate the proposed inpainting forensics method, we train and test the established network on image databases set up by representative inpainting technologies. Experiments are conducted on the datasets to compare our FADS-Net with the state-of-the-art forensic networks in terms of localization accuracy and robustness. An ablation study is also performed to verify the major components of our network.

4.1. Training and Testing Datasets

In the MIT Place dataset [61], we randomly select 79,200 color images of size to set up experimental datasets. These images are divided into four datasets on average, each of which is tampered with by one of the four inpainting methods, including diffusion-based inpainting [62], exemplar-based inpainting [10], and two state-of-the-art deep learning-based methods [12,13]. The region to be inpainted is produced by a random mask in shape and location. Circular, rectangular, and irregular masks are randomly generated and located at a given image. The mask size is indicated by the tampering ratio of the tampered pixels to all the pixels in an image. It is randomly set to one of 0.1%, 0.4%, 1.56%, or 6.25% for diffusion-based inpainting and 1.0%, 5.0%, or 10.0% for others. The parameter settings are applied considering the fact that diffusion-based inpainting is more appropriate for inpainting a smaller missing region. The inpainted images with the associated binary masks form four datasets, called, for convenience, diffusion, exemplar, ICT, and DeepfillV2 datasets, corresponding to the utilized inpainting. Several sample images are shown in Figure 6 with the masked regions in green.

Figure 6.

Examples for images in datasets. From left to right: original images, images with the missing regions (marked in green), inpainted images, and ground truth masks.

4.2. Training Details on Synthetic Datasets

The proposed FADS-Net with the input of size is implemented using PyTorch. It is trained and tested on a single Nvidia GeForce GTX 3080Ti GPU. For training, the ADAM optimizer with a batch size of 32 is adopted. The parameters and of ADAM take the default values of 0.9 and 0.999, respectively. The learning rate is initialized to 0.001 for all layers and remains unchanged during training. Training is carried out on the constructed synthetic datasets for 100 epochs to ensure convergence.

Moreover, data augmentation technologies are involved to prevent our model from overfitting and improve the forensic performance on robustness. Specifically, each training image is a JPEG compressed under quality factors (QFs) of 95, 85, 75, and additive white Gaussian noise (AWGN) with 30 dB signal-to-noise ratio (SNR). The processed images together with the original are further randomly flipped horizontally and vertically and rotated by 90 degrees before they are fed into the network.

For comparisons, the state-of-the-art deep learning-based forensics methods are chosen, including FCN with the high-pass pre-filtering module, called HP-FCN [23], IID-Net [26], PSCC-Net [24], and our early work named VGG-FNet [22]. These methods were retrained on our dataset. The training procedures and parameter settings introduced in their papers are strictly followed during training.

4.3. Forensic Performance on Inpainting Images without Any Distortions

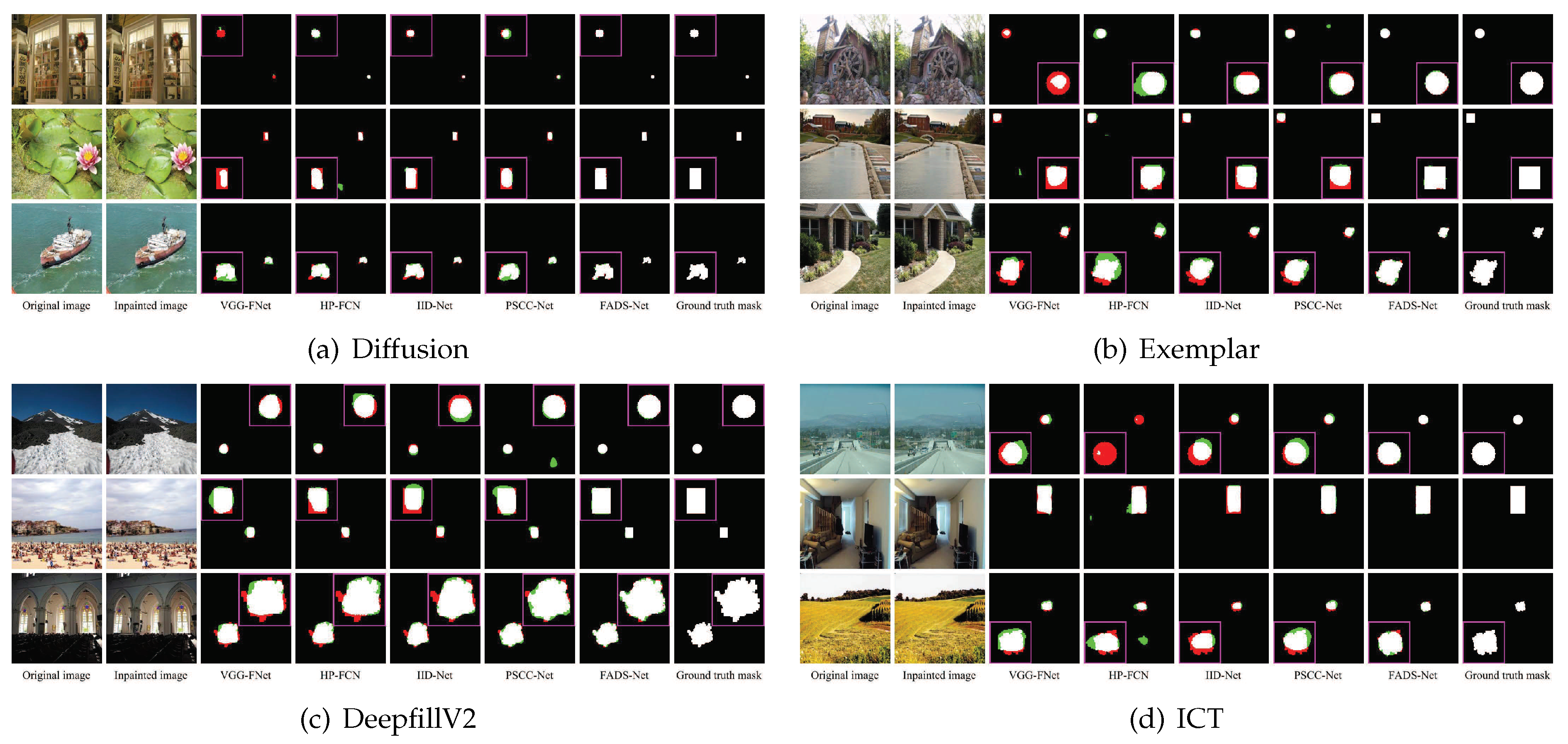

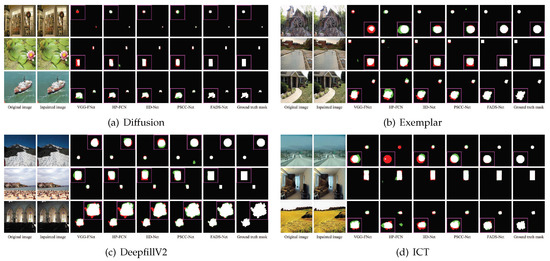

Firstly, we evaluate the forensics performance of FADS-Net by qualitative and quantitative methods on each testing dataset without postprocessing. Figure 7 visually displays the forensic results obtained on several sample images generated by four typical inpainting methods.

Figure 7.

Qualitative comparisons of different forensic methods on the images tampered by (a) diffusion-based inpainting [62], (b) exemplar-based inpainting [10], (c) DeepfillV2 [12], and (d) ICT [13]. The original uninpainted images, inpainted images, forensic results obtained by the methods in [22,23,24,26] and our method, as well as ground truth masks are shown in columns 1 to 8, where the pixels in white, black, green, and red indicate true positive, true negative, false positive, and false negative, respectively. For better visual comparison, the zoom-in versions of the predicted tampering regions marked by the pink rectangles are shown in a corner.

Figure 7a,b exhibit the forensic effects for two traditional inpainting methods, i.e., diffusion-based and exemplar-based inpainting [10,62], respectively. Apparently, our method (see the 7th column) achieves quite accurate predictions with little error, while the comparison methods (see columns 3 to 6) yield significant false positive and false negative regions, especially in the 3rd row of Figure 7b. The forensic results illustrate that VGG-FNet [22] (the third column) manifests more false negative errors, while HP-FCN [23] (the fourth column) presents worse false positive performance. For these given sample images, the predictions of IID-Net [26] and PSCC-Net [24] (the fifth and sixth columns) are only slightly better than the previous two methods, and worse than our results.

For two deep learning-based inpainting methods, i.e., ICT [13] and DeepfillV2 [12], the forensic results are shown in Figure 7c,d, respectively. It is noticeable that the performances of VGG-FNet [22], HP-FCN [23], and IID-Net [26] are similar, and there are prediction results with significant false positive or negative errors, e.g., the results of VGG-FNet, HP-FCN, and IID-Net in the third row of Figure 7c and first row of Figure 7d. PSCC-Net [24] reaches a better performance, but it is still inferior to our model.

In principle, the forensic effects of all the tested methods become much worse for the inpainted regions with lower tampering ratios (e.g., the first row of Figure 7a) or uniform regions (e.g., the third row of Figure 7d). Generally, the inpainting effects of small or uniform regions are more realistic, and fewer inpainting traces are left, thus causing difficulties in forensics. In addition, the edges of inpainting regions, especially irregular regions (e.g., the third rows of Figure 7a–d), are more prone to prediction errors. Impressively, our FADS-Net obtains forensic results (in the penultimate columns of Figure 7a–d) best fitting with the ground truth masks (in the last columns of Figure 7a–d) for inpainted regions with different shapes and scales.

Then, the forensic performance is measured by two objective metrics: IoU and F1-score. The average values of IoU and F1-score obtained by the tested methods are summarized in Table 1 on four testing datasets. The best results are marked in bold.

Table 1.

Average IoU (%) and F1 (%) values of forensic results on different forensic datasets with no postprocessing. The best results are marked in bold.

All compared methods achieve relatively low forensic performance on the forensic dataset for diffusion-based inpainting, mainly due to the image with a smaller inpainting ratio and weak inpainting traces left. For example, PSCC-Net achieves IoU of approximately 61.5% and F1-score of approximately 67.2%, which is close to the performance of VGG-FNet. IID-Net also has a similar performance to HP-FCN, both reaching slightly higher than IoU of 70.0% and F1 score of 75.0%. Surprisingly, FADS-Net presents IoU of approximately 88.07% and F1 score of approximately 91.56%, which is obviously superior to other methods. The performance gain of FADS-Net may be obtained by the design of mining inpainting traces and refining spatial information.

On the forensic dataset for exemplar-based inpainting, each tested method yields larger IoU and F1 than the previous inpainting dataset. For instance, the IoU of PSCC-Net increases from approximately 61.5% to 86.2%. Clearly, the phenomenon indicates that it is easier to locate a larger inpainted region. IID-Net also has a performance close to PSCC-Net and outperforms VGG-FNet and HP-FCN by approximately 10% in IoU and more than 8% in F1 score. Our FADS-Net is approximately 6.7% and 4.0% higher in IoU and F1 score, respectively, than the second-best IID-Net. This is consistent with the qualitative results given previously.

For the ICT dataset, the forensic performance of PSCC-Net presents approximately 85.7% in IoU and 91.8% in F1-score, and ours (91.34% in IoU and 95.27% in F1-score) explicit exceed its performance. Other compared methods reach IoU from 69% to 82%, and F1 score from 75% to 88%. On the DeepfillV2 dataset, except for VGG-FNet and our FADS-Net, the tested methods emerge with significant performance degradation. Similarly, FADS-Net once again exhibits the best performance and has quite a large margin in IoU, approximately 10.6%, compared with the second-best method.

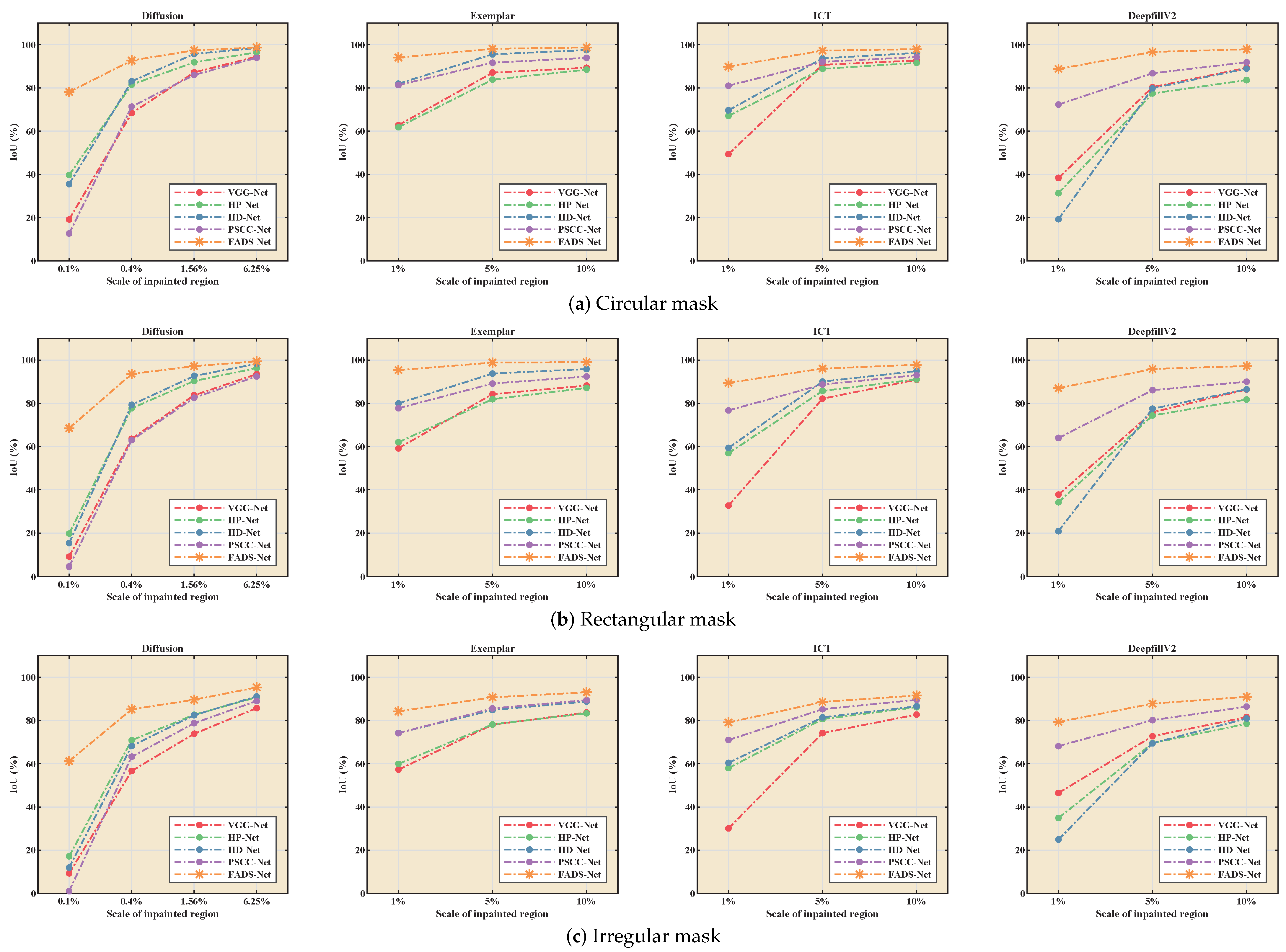

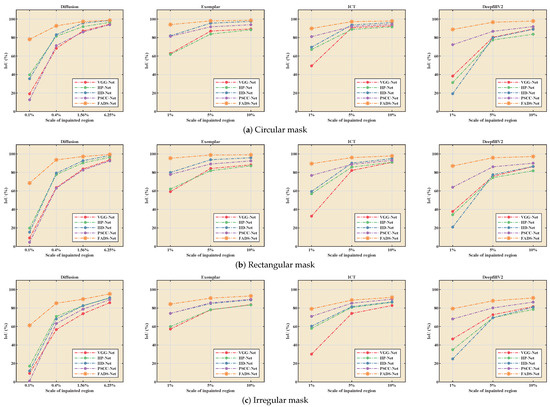

Figure 8a–c respectively show the influence of the inpainted regions with various shapes and scales on the forensics performance. Observing Figure 8a, as the scale of the inpainting region decreases, the forensic performance of all methods decreases dramatically. The effect is the most prominent on the dataset for diffusion-based inpainting, which again confirms the difficulty of forensics for the small inpainted region. In addition, through comparing the results of the diffusion dataset in Figure 8a–c, all the tested methods generally have the lower IoU for irregular masks and the larger one for circular or rectangular masks. This situation also almost obtains on the other three datasets. According to the above analysis, due to the AFRM, dual-stream feature fusion encoders, and attention-based feature fusion, the proposed method provides optimal and stable forensic performance for masks with various shapes and scales.

Figure 8.

Comparison of different forensic methods for inpainted regions (IRs) with different scales on Diffusion, Exemplar, ICT, and DeepfillV2 datasets, respectively.

4.4. Quantitative Evaluation under Typical Attacks

In practice, some postprocessing operations might be performed by forgers after inpainting to hide traces of tampering and evade forensic detection. Thus, we investigate the robustness of the proposed method against JPEG compression and AWGN. These manipulations are considered because they are often employed in many applications.

Specifically, forensics is performed on the distorted images by JPEG compression with QFs of 95, 85, and 75, and AWGN with signal-to-noise ratios (SNRs) of 50 dB, 45 dB, 40 dB, and 35 dB. The average values of IoU and F1-score obtained by the tested methods on the established datasets are reported in Table 2, Table 3, Table 4 and Table 5. Notice that none of these postprocessing operations with the above parameter settings are used to create our training datasets. The best results are marked in bold.

Table 2.

Average IoU (%) and F1 score (%) values of different methods on the dataset for diffusion-based inpainting [8] under JPEG compression (QF) and AWGN (SNR) attacks. The best results are marked in bold.

Table 3.

Average IoU (%) and F1 score (%) values of different methods on the dataset for exemplar-based inpainting [10] under JPEG compression (QF) and AWGN (SNR) attacks. The best results are marked in bold.

Table 4.

Average IoU (%) and F1 score (%) values of different methods on the ICT [13] dataset under JPEG compression (QF) and AWGN (SNR) attacks. The best results are marked in bold.

Table 5.

Average IoU (%) and F1 score (%) values of different methods on the DeepfillV2 [12] dataset under JPEG compression (QF) and AWGN (SNR) attacks. The best results are marked in bold.

From Table 2, all tested methods experience significant performance degradation as QF or SNR decrease. For instance, FADS-Net receives IoU of 86.03% and F1-score of 89.76% for the case of QF = 0.95, which are slightly lower than those obtained under no attacks, while only approximately IoU of 73% and F1-score of 77% are received under QF = 75. Notice that some forensic networks are relatively insensitive to JPEG compression since they obtain a little lower IoU and F1-score on the images with no distortions, e.g., VGG-FNet [22]. For AWGN with SNR from 50 dB to 35 dB, the IoU and F1-score of FADS-Net are reduced to 92.45% from 94.54% and 95.51% from 97.10%, respectively. The performance degradation is approximately 2%, and the compared methods are subjected to a similar slight performance drop. The above results reveal that all tested methods have more stable robustness against AWGN than JPEG compression. The main reason is that JPEG compression tries to remove the content-unrelated information while guaranteeing the image quality; thus, some inpainting traces are further masked during this process. Similar observations can be made from Table 3, Table 4 and Table 5 on the other three datasets. Impressively, our model outperforms other methods significantly despite different datasets and attack parameters. As an example, FADS-Net outperforms the second-best PSCC-Net [24] by nearly 10.0% in IoU and 8.0% in F1-score under QF = 75 in Table 4. This reveals that our forensic method is more effective for capturing inpainting traces.

4.5. Ablation Analysis

We perform ablation experiments to investigate the effect of two feature extraction streams (RIS and FRS), dense-scale feature fusion module (DFFM), Locality-Sensitive Attention Module (LSAM), and IoU-aware joint loss (IJL) through ablation experiments. For this purpose, we construct the following variants of our full model (FADS-Net).

- RISS-Net: The variant refers to a single-stream encoder network, which takes the original inpainted image as input. Because the encoder is changed to a single-stream structure, the DFFM is removed, but the LSAM module in the decoder is still retained. Moreover, the network training makes use of a hybrid loss that combines CE loss and IoU loss.

- FRSS-Net: The setting of this network is consistent with RIS, except that its single-stream encoder employs FRS.

- DSCF-Net: The network architecture is the same as that of our full model but it discards DFFM and uses simple concatenation to fuse features.

- DSAF-Net: This variant employs the full encoder and loss function of the full model, yet the decoder employs element-wise addition to recover spatial information instead of LSAM.

- FADS-Net (MCL): The network has the same structure as the full model but removes two branch decoders and uses CE loss to supervise the difference between the output of the main decoder and the ground truth label.

- FADS-Net (JCL): CE loss is applied to optimize the results of the main decoder and branch decoder of the full model during training.

- FADS-Net (MHL): The network utilizes a hybrid loss established by combining CE loss and IoU loss to train the full model removing two branch decoders.

All these variants are trained on the DeepfillV2 dataset with the same training options as those of the full model. The average quantitative results are listed in Table 6, where no extra distortions, JPEG compression with QF = 75, and AWGN with SNR = 35 dB are considered. The best results are marked in bold.

Table 6.

Average IoU (%) and F1 score (%) values of forensic results on the DeepfillV2 [12] dataset for different variants of FADS-Net without and with postprocessing. The best results are marked in bold.

As shown in Table 6, by removing two feature extraction streams, the network with a single-stream encoder only achieves IoU of near 89% and F1 scores of approximately 94% with no distortions averaged on the whole testing dataset, and obtains lower IoU and F1 score under attacks, particularly JPEG compression. The performance is much worse than that of our full model, but is still competitive or superior to that of the former state-of-the-art models for comparison in the considered cases. This shows that the high-resolution structure of encoder and efficient feature extraction module MSDM are beneficial to forensic performance. In addition, FRS has a significant performance improvement over RIS under JPEG compression, which indicates that learning in the frequency domain plays a key role in enhancing the inpainting trace.

The performance is further improved by two variants with dual-stream feature extraction. Although DFFM and LSAM were removed, DSCF-Net and DSAF-Net still exceed two single-stream variants by approximately 2.5% to 3.5% in both average scores of IoU and F1. These results imply that the dual-stream feature fusion can effectively improve the performance of inpainting forensics. By comparing the above two variants with the full model, we may get to know the contribution of DFFM and LSAM. For example, the full model outperforms DSCF-Net by approximately 1.1% in IoU score at SNR = 35 db and produces larger performance margin in the case of DSAF-Net with QF = 75.

Through analyzing the performance of the remaining three variants, the full model has the best performance despite whether or not the tested images undergo some attacks. From the results of the full model and variant FADS-Net (MCL), the use of IoU-aware joint loss increases the performance margin by approximately 2.0% in average IoU and 2.1% in average F1. In particular, the performance of the complete model is approximately 4.5% higher than that of the methods without IAL in the case of QF = 75. Thus, we can confirm that IoU-aware joint loss can drive networks to focus on the inpainted regions more than CEL and ensure that the two-stream feature extraction works effectively.

All the results of the above ablation experiments exhibit that all the components present performance improvement and contribute to the overall performance.

5. Conclusions

In this paper, a novel deep learning method for image inpainting forensics, called FADS-Net, has been presented. In order to locate the tampered regions by inpainting operation, FADS-Net is constructed by following the encoder–decoder network structure. The encoder is a dual-stream network composed of an adaptive frequency recalibration module, two feature extraction sub-networks, and a feature fusion module. The two feature streams can efficiently extract feature maps from the original input and the one recalibrated by this adaptive frequency recalibration module. Then, through the feature fusion module, these extracted features are fully fused to generate more comprehensive and effective feature representations. By introducing the attention mechanism, the decoder can restore more spatial information while improving the feature resolution. Last, we propose an IoU-aware joint loss to guide the training of FADS-Net, where the item of IoU loss takes the forensics performance as the optimization objective, and CE loss can ensure the stability of training and the validity of various parts.

FADS-Net has been extensively tested on various images and several typical inpainting methods and compared with the state-of-the-art forensics methods. Qualitative and quantitative experimental results show that the proposed network can locate the inpainting region more accurately and achieve superior performance in terms of IoU and F1-score. Moreover, our network shows excellent robustness against commonly used post-processing, including JPEG compression and AWGN.

Author Contributions

Conceptualization, H.W. and X.Z.; methodology, H.W. and X.Z.; software, H.W.; validation, H.W. and L.Z.; formal analysis, H.W.; investigation, H.W.; resources, H.W.; data curation, H.W.; writing—original draft preparation, H.W.; writing—review and editing, X.Z.; visualization, X.Z.; supervision, C.R. and S.M.; project administration, X.Z.; funding acquisition, X.Z. All authors have read and agreed to the published version of the manuscript.

Funding

This study was supported by the National Natural Science Foundation of China under Grant 61972282, and by the Opening Project of State Key Laboratory of Digital Publishing Technology under Grant Cndplab-2019-Z001.

Data Availability Statement

The data presented in this study are available on request from the corresponding author. The data are not publicly available due to privacy or ethical restrictions.

Conflicts of Interest

The authors declare no conflict of interest. The funders had no role in the design of the study; in the collection, analyses, or interpretation of data; in the writing of the manuscript; or in the decision to publish the results.

References

- Alipour, N.; Behrad, A. Semantic segmentation of JPEG blocks using a deep CNN for non-aligned JPEG forgery detection and localization. Multimedia Tools Appl. 2020, 79, 8249–8265. [Google Scholar] [CrossRef]

- Bakas, J.; Ramachandra, S.; Naskar, R. Double and triple compression-based forgery detection in JPEG images using deep convolutional neural network. J. Electron. Imaging 2020, 29, 023006. [Google Scholar] [CrossRef]

- Zhang, J.; Liao, Y.; Zhu, X.; Wang, H.; Ding, J. A deep learning approach in the discrete cosine transform domain to median filtering forensics. IEEE Signal Process. Lett. 2020, 27, 276–280. [Google Scholar] [CrossRef]

- Abhishek; Jindal, N. Copy move and splicing forgery detection using deep convolution neural network, and semantic segmentation. Multimedia Tools Appl. 2021, 80, 3571–3599. [Google Scholar] [CrossRef]

- Liu, B.; Pun, C.M. Exposing splicing forgery in realistic scenes using deep fusion network. Inf. Sci. 2020, 526, 133–150. [Google Scholar] [CrossRef]

- Mayer, O.; Stamm, M.C. Forensic similarity for digital images. IEEE Trans. Inf. Forensics Secur. 2020, 15, 1331–1346. [Google Scholar] [CrossRef]

- Mayer, O.; Bayar, B.; Stamm, M.C. Learning unified deep-features for multiple forensic tasks. In Proceedings of the 6th ACM Workshop on Information Hiding and Multimedia Security, Innsbruck, Austria, 20–22 June 2018; pp. 79–84. [Google Scholar]

- Bertalmio, M.; Sapiro, G.; Caselles, V.; Ballester, C. Image inpainting. In Proceedings of the 27th Internationl Conference on Computer Graphics and Interactive Techniques Conference, New Orleans, LA, USA, 23–28 July 2000; pp. 417–424. [Google Scholar]

- Oliveira, M.M.; Bowen, B.; McKenna, R.; Chang, Y.S. Fast digital image inpainting. In Proceedings of the International Conference on Visualization, Imaging and Image Processing (VIIP 2001), Marbella, Spain, 3–5 September 2001; pp. 106–107. [Google Scholar]

- Criminisi, A.; Perez, P.; Toyama, K. Region filling and object removal by exemplar-based image inpainting. IEEE Trans. Image Process. 2004, 13, 1200–1212. [Google Scholar] [CrossRef]

- Ružić, T.; Pižurica, A. Context-aware patch-Based image inpainting using Markov random field modeling. IEEE Trans. Image Process. 2015, 24, 444–456. [Google Scholar] [CrossRef]

- Yu, J.; Lin, Z.; Yang, J.; Shen, X.; Lu, X.; Huang, T. Free-form image inpainting with gated convolution. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Republic of Korea, 27 October–2 November 2019; pp. 4470–4479. [Google Scholar]

- Wan, Z.; Zhang, J.; Chen, D.; Liao, J. High-fidelity pluralistic image mcopletion with transformers. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Virtually, 11–17 October 2021; pp. 4672–4681. [Google Scholar]

- Chang, I.; Yu, J.C.; Chang, C.C. A forgery detection algorithm for exemplar-based inpainting images using multi-region relation. Image Vis. Comput. 2013, 31, 57–71. [Google Scholar] [CrossRef]

- Liang, Z.; Yang, G.; Ding, X.; Li, L. An efficient forgery detection algorithm for object removal by exemplar-based image inpainting. J. Vis. Commun. Image R. 2015, 30, 75–85. [Google Scholar] [CrossRef]

- Li, H.; Luo, W.; Huang, J. Localization of diffusion-based inpainting in digital images. IEEE Trans. Inf. Forensics Secur. 2017, 12, 3050–3064. [Google Scholar] [CrossRef]

- Zhang, Y.; Liu, T.; Cattani, C.; Cui, Q.; Liu, S. Diffusion-based image inpainting forensics via weighted least squares filtering enhancement. Multimedia Tools Appl. 2021, 80, 30725–30739. [Google Scholar] [CrossRef]

- Ronneberger, O.; Fischer, P.; Brox, T. U-net: Convolutional networks for biomedical image segmentation. In Proceedings of the Medical Image Computing and Computer-Assisted Intervention–MICCAI 2015, Munich, Germany, 5–9 October 2015; pp. 234–241. [Google Scholar]

- Zhu, X.; Li, S.; Gan, Y.; Zhang, Y.; Sun, B. Multi-stream fusion network with generalized smooth L1 loss for single image dehazing. IEEE Trans. Image Process. 2021, 30, 7620–7635. [Google Scholar] [CrossRef]

- Pang, J.; Chen, K.; Shi, J.; Feng, H.; Ouyang, W.; Lin, D. Libra R-CNN: Towards balanced learning for object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 16–20 June 2019; pp. 821–830. [Google Scholar]

- Rafi, A.M.; Tonmoy, T.I.; Kamal, U.; Wu, Q.M.J.; Hasan, M.K. RemNet: Remnant convolutional neural network for camera model identification. Neural Comput. Appl. 2021, 33, 3655–3670. [Google Scholar] [CrossRef]

- Zhu, X.; Qian, Y.; Zhao, X.; Sun, B.; Sun, Y. A deep learning approach to patch-based image inpainting forensics. Signal Process. Image Commun. 2018, 67, 90–99. [Google Scholar] [CrossRef]

- Li, H.; Huang, J. Localization of deep inpainting using high-pass fully convolutional network. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Republic of Korea, 27 October–2 November 2019; pp. 8301–8310. [Google Scholar]

- Liu, X.; Liu, Y.; Chen, J.; Liu, X. PSCC-Net: Progressive Spatio-Channel Correlation Network for Image Manipulation Detection and Localization. IEEE Trans. Circuits Syst. 2022, 32, 7505–7517. [Google Scholar] [CrossRef]

- Bayar, B.; Stamm, M.C. Constrained convolutional neural networks: A new approach towards general purpose image manipulation detection. IEEE Trans. Inf. Forensics Secur. 2018, 13, 2691–2706. [Google Scholar] [CrossRef]

- Wu, H.; Zhou, J. IID-Net: Image inpainting detection network via neural architecture search and attention. IEEE Trans. Circuits Syst. Video Technol. 2022, 32, 1172–1185. [Google Scholar] [CrossRef]

- Chen, L.C.; Papandreou, G.; Kokkinos, I.; Murphy, K.; Yuille, A.L. DeepLab: Semantic image segmentation with deep convolutional nets, atrous convolution, and fully connected CRFs. IEEE Trans. Pattern Anal. Mach. Intell. 2018, 40, 834–848. [Google Scholar] [CrossRef] [PubMed]

- Badrinarayanan, V.; Kendall, A.; Cipolla, R. Segnet: A deep convolutional encoder-decoder architecture for image segmentation. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 39, 2481–2495. [Google Scholar] [CrossRef] [PubMed]

- Dumoulin, V.; Visin, F. A guide to convolution arithmetic for deep learning. arXiv 2016, arXiv:1603.07285. [Google Scholar]

- Long, J.; Shelhamer, E.; Darrell, T. Fully convolutional networks for semantic segmentation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, 7–12 June 2015; pp. 3431–3440. [Google Scholar]

- Wang, F.; Jiang, M.; Qian, C.; Yang, S.; Li, C.; Zhang, H.; Wang, X.; Tang, X. Residual attention network for image classification. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 3156–3164. [Google Scholar]

- Wu, Q.; Sun, S.; Zhu, W.; Li, G.H.; Tu, D. Detection of digital doctoring in exemplar-based inpainted images. In Proceedings of the 2008 International Conference on Machine Learning and Cybernetics, Kunming, China, 12–15 July 2008; Volume 3, pp. 1222–1226. [Google Scholar]

- Das, S.; Shreyas, G.D.; Devan, L.D. Blind detection method for video inpainting forgery. Int. J. Comput. Appl. 2012, 60, 33–37. [Google Scholar] [CrossRef]

- Bacchuwar, K.S.; Ramakrishnan, K.R. A jump patch-block match algorithm for multiple forgery detection. In Proceedings of the 2013 International Mutli-Conference on Automation, Computing, Communication, Control and Compressed Sensing (iMac4s), Kottayam, India, 22–23 March 2013; pp. 723–728. [Google Scholar]

- Trung, D.T.; Beghdadi, A.; Larabi, M.C. Blind inpainting forgery detection. In Proceedings of the 2014 IEEE Global Conference on Signal and Information Processing (GlobalSIP), Atlanta, GA, USA, 3–5 December 2014; pp. 1019–1023. [Google Scholar]

- Zhao, Y.Q.; Liao, M.; Shih, F.Y.; Shi, Y.Q. Tampered region detection of inpainting JPEG images. Optik 2013, 124, 2487–2492. [Google Scholar] [CrossRef]

- Liu, Q.; Zhou, B.; Sung, A.H.; Qiao, M. Exposing inpainting forgery in JPEG images under recompression attacks. In Proceedings of the 2016 15th IEEE International Conference on Machine Learning and Applications (ICMLA), Anaheim, CA, USA, 18–20 December 2016; pp. 164–169. [Google Scholar]

- Zhang, D.; Liang, Z.; Yang, G.; Li, Q.; Li, L.; Sun, X. A robust forgery detection algorithm for object removal by exemplar-based image inpainting. Multimedia Tools Appl. 2018, 77, 11823–11842. [Google Scholar] [CrossRef]

- Xu, Z.; Sun, J. Image inpainting by patch propagation using patch sparsity. IEEE Trans. Image Process. 2010, 19, 1153–1165. [Google Scholar]

- Li, Z.; He, H.; Tai, H.M.; Yin, Z.; Chen, F. Color-direction patch-sparsity-based image inpainting using multidirection features. IEEE Trans. Image Process. 2014, 24, 1138–1152. [Google Scholar] [CrossRef]

- Jin, X.; Su, Y.; Zou, L.; Wang, Y.; Jing, P.; Wang, Z.J. Sparsity-based image inpainting detection via canonical correlation analysis with low-rank constraints. IEEE Access 2018, 6, 49967–49978. [Google Scholar] [CrossRef]

- Zhu, X.; Qian, Y.; Sun, B.; Ren, C.; Sun, Y.; Yao, S. Image inpainting forensics algorithm based on deep neural network. Acta Opt. Sin. 2018, 38, 1110005-1–1110005-9. [Google Scholar]

- Lu, M.; Liu, S. A detection approach using LSTM-CNN for object removal caused by exemplar-based image inpainting. Electronics 2020, 9, 858. [Google Scholar] [CrossRef]

- Wang, X.; Wang, H.; Niu, S. An intelligent forensics approach for detecting patch-based image inpainting. Math. Probl. Eng. 2020, 2020, 8892989. [Google Scholar] [CrossRef]

- Bender, G.; Kindermans, P.J.; Zoph, B.; Vasudevan, V.; Le, Q. Understanding and simplifying one-shot architecture search. In Proceedings of the 35th International Conference on Machine Learning, Stockholm, Sweden, 10–15 July 2018; Volume 80, pp. 550–559. [Google Scholar]

- Wang, J.; Sun, K.; Cheng, T.; Jiang, B.; Deng, C.; Zhao, Y.; Liu, D.; Mu, Y.; Tan, M.; Wang, X.; et al. Deep high-resolution representation learning for visual recognition. IEEE Trans. Pattern Anal. Mach. Intell. 2021, 43, 3349–3364. [Google Scholar] [CrossRef] [PubMed]

- Qian, Y.; Yin, G.; Sheng, L.; Chen, Z.; Shao, J. Thinking in frequency: Face forgery detection by mining frequency-aware clues. In Proceedings of the 16th European Conference, Glasgow, UK, 23–28 August 2020; pp. 86–103. [Google Scholar]

- Xu, K.; Qin, M.; Sun, F.; Wang, Y.; Chen, Y.K.; Ren, F. Learning in the frequency domain. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Virtually, 14–19 June 2020; pp. 1740–1749. [Google Scholar]

- Hu, J.; Shen, L.; Sun, G. Squeeze-and-excitation networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 18–22 June 2018; pp. 7132–7141. [Google Scholar]

- Chen, L.C.; Papandreou, G.; Schroff, F.; Adam, H. Rethinking atrous convolution for semantic image segmentation. arXiv 2017, arXiv:1706.05587. [Google Scholar]

- Huang, G.; Liu, Z.; Maaten, L.V.D.; Weinberger, K.Q. Densely connected convolutional networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 4700–4708. [Google Scholar]

- Yang, M.; Yu, K.; Zhang, C.; Li, Z.; Yang, K. DenseASPP for semantic segmentation in street scenes. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 18–22 June 2018; pp. 3684–3692. [Google Scholar]

- Wang, Z.; Chen, J.; Hoi, S.C.H. Deep learning for image super-resolution: A survey. IEEE Trans. Pattern Anal. Mach. Intell. 2021, 43, 3365–3387. [Google Scholar] [CrossRef]

- Itti, L.; Koch, C.; Niebur, E. A model of saliency-based visual attention for rapid scene analysis. IEEE Trans. Pattern Anal. Mach. Intell. 1998, 20, 1254–1259. [Google Scholar] [CrossRef]

- Rensink, R. The dynamic representation of scenes. Vis. Cognit. 2000, 7, 17–42. [Google Scholar] [CrossRef]

- Corbetta, M.; Shulman, G.L. Control of goal-directed and stimulus-driven attention in the brain. IEEE Trans. Pattern Anal. Mach. Intell. 2002, 3, 201–215. [Google Scholar] [CrossRef]

- Fu, J.; Liu, J.; Tian, H.; Li, Y.; Bao, Y.; Fang, Z.; Lu, H. Dual attention network for scene segmentation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 16–20 June 2019; pp. 3146–3154. [Google Scholar]

- Tian, Y.; Hu, W.; Jiang, H.; Wu, J. Densely connected attentional pyramid residual network for human pose estimation. Neurocomputing 2019, 347, 13–23. [Google Scholar] [CrossRef]

- Woo, S.; Park, J.; Lee, J.Y.; Kweon, I.S. CBAM: Convolutional block attention module. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2018; pp. 3–19. [Google Scholar]

- Li, W.; Zhu, X.; Gong, S. Harmonious Attention Network for Person Re-Identification. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 18–22 June 2018; pp. 2285–2294. [Google Scholar]

- Zhou, B.; Lapedriza, A.; Xiao, J.; Torralba, A.; Oliva, A. Learning deep features for scene recognition using places database. In Advances in Neural Information Processing Systems; NeurIPS: San Diego, CA, USA, 2014; Volume 27, pp. 487–495. [Google Scholar]

- G’MIC. GREYC’s Magic for Image Computing. 2021. Available online: http://gmic.eu (accessed on 25 February 2023).

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content. |

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).