Abstract

The rapid expansion of the multimedia environment in recent years has led to an explosive increase in demand for multimodal systems that can communicate with humans in various ways. Although the convergence of vision and language intelligence has achieved remarkable success over the last few years, a caveat remains: it is unknown whether such models truly understand the semantics of an image. More specifically, how they capture the relationships between objects in an image is still regarded as a black box. To test whether such relationships are well understood, this work focuses on the Graph-structured visual Question Answering (GQA) task, which evaluates image understanding by reasoning over both the image and a scene graph that describes the structural characteristics of the image in natural language. Unlike existing approaches that require an additional encoder for scene graphs, we propose a simple yet effective framework that uses pre-trained multimodal transformers for scene graph reasoning. Inspired by the fact that a scene graph can be regarded as a set of sentences, each describing two related objects and their relationship, we feed these sentences into the framework separately from the question. In addition, we propose a multi-task learning method that uses the evaluation of the grammatical validity of questions as an auxiliary task to better understand questions with complex structures. This auxiliary task uses the semantic role labels of a question to randomly shuffle its sentence structure. We have conducted extensive experiments to evaluate the effectiveness of the framework in terms of task performance, ablation studies, and generalization.

Keywords: multimodal deep learning; scene graph reasoning; multimodal transformer; multi-task learning

MSC: 68T50

1. Introduction

The rapid expansion of the multimedia environment in recent years has led to an explosive increase in demand for multimodal systems that can communicate with humans in various ways [1]. Speech recognition systems [2,3], commonly used in mobile phones and navigation systems, are the most familiar way to experience human–computer interaction today. This is an artificial intelligence technology for a single modality that deals only with the human voice. Multimodal technology, on the other hand, is a more difficult problem that addresses multiple modalities such as language, vision, speech, and motion [4,5,6,7,8,9,10]. Among all combinations, the fastest-growing topic is the convergence of vision and language intelligence [11,12]. One typical example is multimodal large language models [13], which add image processing capabilities to existing text comprehension capabilities. They can generate detailed, paragraph-length descriptions of images and converse with humans about an image. Another example can be found in the medical domain. Doctors can leverage large-language-model-based architectures to automatically generate reports from radiographs [14,15] or use system-generated textual interpretations of radiographs [16]. For such applications to succeed, two conditions must be satisfied. First, the system must be able to accurately understand visual information. Second, the system must be able to make inferences based on both language-oriented knowledge and visual information.

Visual Question Answering (VQA) is a typical artificial intelligence research topic for verifying whether a system has multimodal capabilities that satisfy these two conditions. More specifically, it is the task of answering natural language questions about images, taking both the image and the question as inputs [17]. Existing VQA models require two main components: obtaining a structured representation of the image and processing natural language questions related to that representation [18,19,20,21,22,23,24,25]. These models select answers based on either the image or its segmentation as input, but it is unknown whether they correctly capture the relationships between objects in the image.

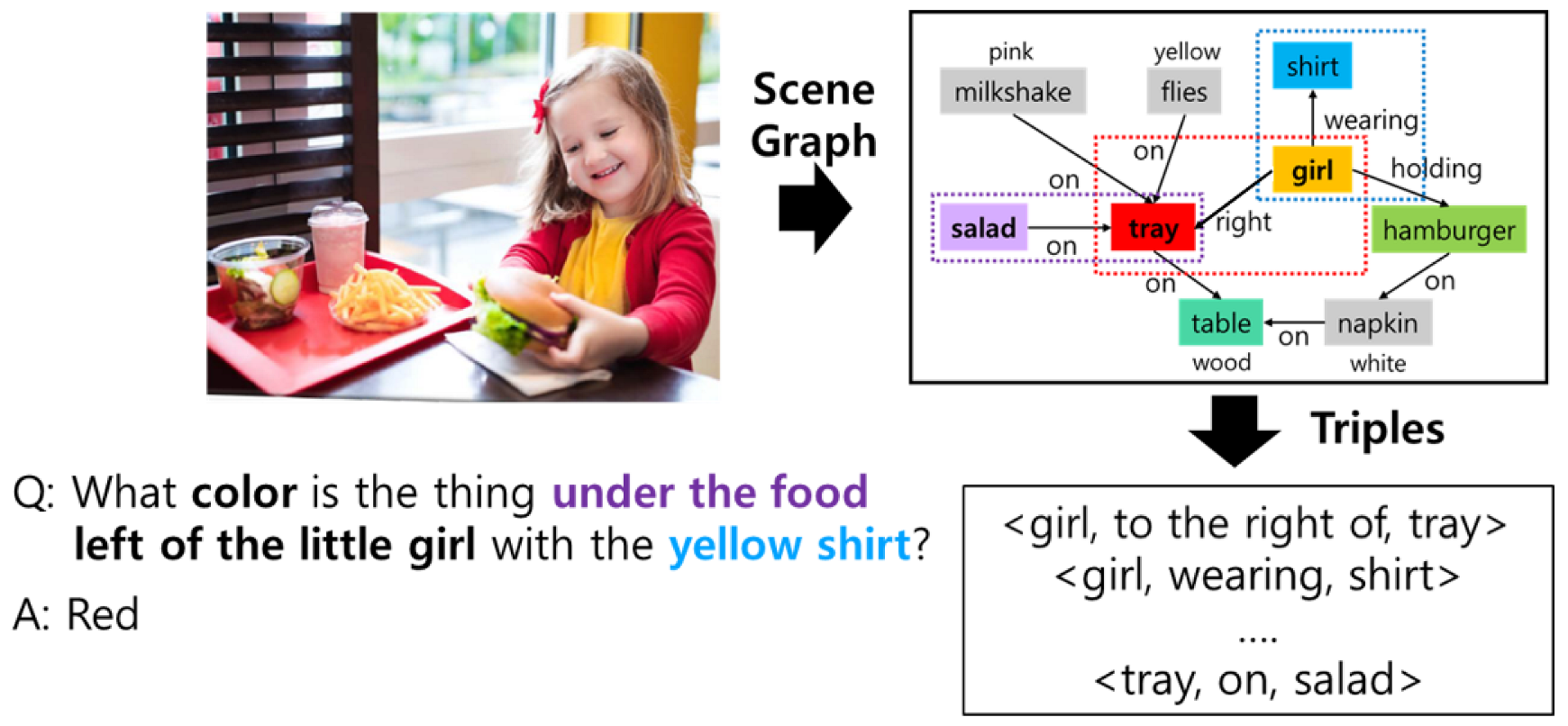

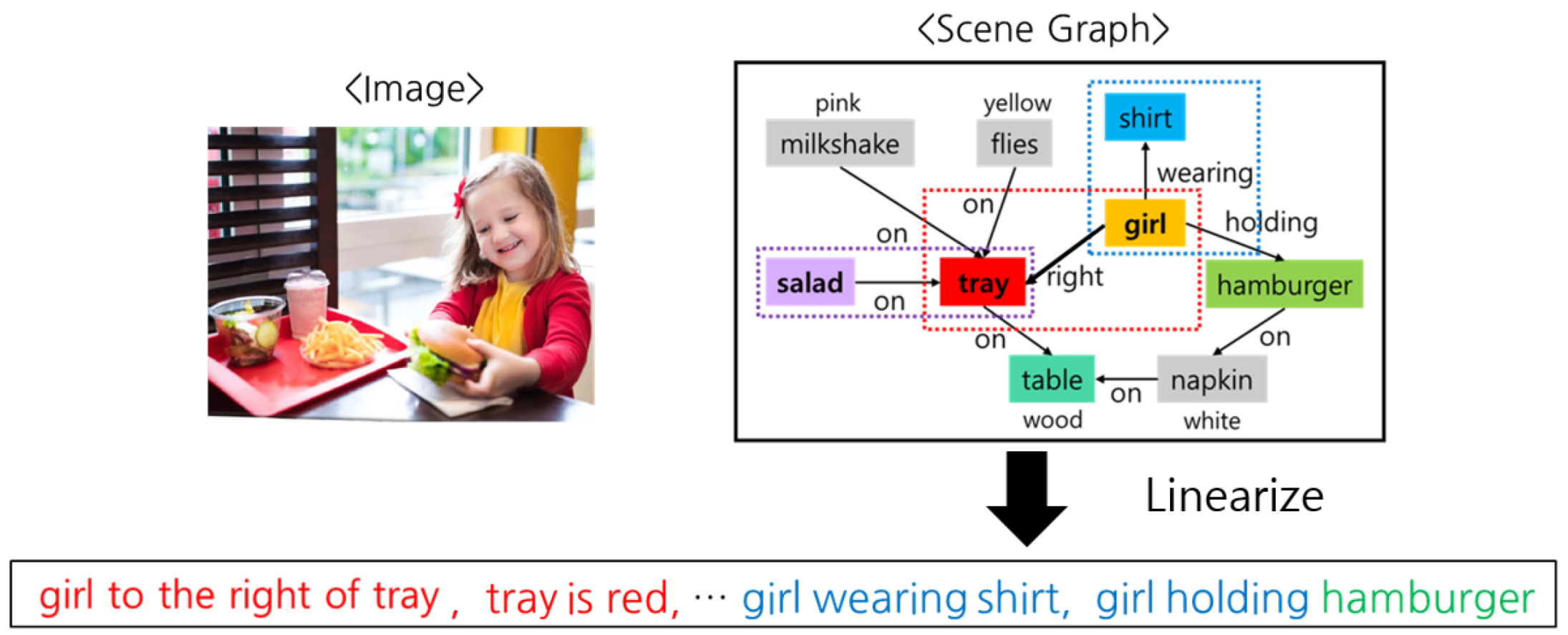

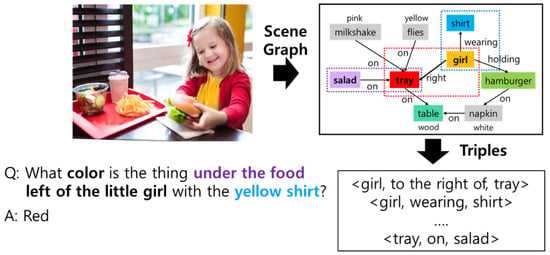

To test whether such relationships are well understood, we focus on the Graph-structured visual Question Answering (GQA) [26] task. Compared to the traditional VQA task, GQA has two major differences. One is a scene graph expressing the semantics of an image in natural language, and the other is a question with a complex structure composed of several noun phrases. As shown in Figure 1, a scene graph is a graph data structure that expresses the information in an image in natural language and includes three elements: objects in the image (e.g., tray, girl, salad), attributes of objects (e.g., table is wooden, napkin is white), and relations between two adjacent objects (e.g., to the right of, on, wearing). In other words, a scene graph can be regarded as a set of triples, each consisting of two adjacent objects and their relation, as illustrated in Figure 1. In this paper, we treat all attributes as objects. The scene graph serves as a powerful knowledge resource that lets the system understand images through linguistic intelligence, providing a new driving force for enhancing the semantic understanding of images.

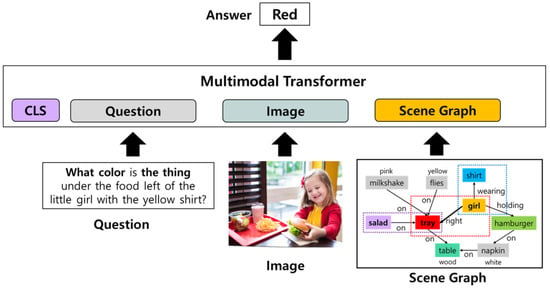

Figure 1.

An example of the GQA task. It consists of three parts: an image, its corresponding scene graph, and a question–answer pair.

Second, since GQA questions usually contain several noun phrases, the ability to understand the question is very important in evaluating the ability to understand visual information. These questions have a more complex structure than those of the typical VQA task. The question in Figure 1 asks about the color of the tray in the image. If the system understands the question precisely and predicts the correct answer, we can indirectly assess how well the system distinguishes the tray from the other objects in the image.

Existing approaches [18,19,20,21,22,23,24,25] have adopted as their backbones a family of multimodal transformers, which have shown tremendous progress in the vision–language domain. Their only difference from transformer-based language models, which have shown remarkable performance in natural language processing, is that they additionally take visual information as input. They are largely divided into two types according to how visual information is input. One is the one-stream framework, in which image and text features are integrated simultaneously through shared self-attention layers. In this paper, OSCAR [19] is used as the representative model of this type. Two-stream frameworks, on the other hand, perform multimodal fusion in stages. First, image features and text features are encoded into contextualized representations by separate self-attention layers. Then, both representations are fed into a unified fusion layer, in which multimodal fusion is achieved by attending to the knowledge of both modalities. In this paper, LXMERT [18] is used as the representative model of this type.

Even though multimodal transformers have shown strong performance in understanding visual and linguistic information, additional structural transformations have been required to process scene graphs alongside this information [27,28,29,30,31]. Specifically, Yang et al. [31] introduced a graph-convolutional-network-based architecture in which all the graphs are transformed into latent variables and fed directly into the model. Despite great advances in the downstream task, a caveat remains. If there are not enough data for learning the graph structure, a cold-start problem arises: graphs from domains that were not seen during training cannot be processed. This problem can occur in real environments where no human-labeled scene graphs exist. It is therefore necessary to develop a more effective scene graph processing method that mitigates this data dependence.

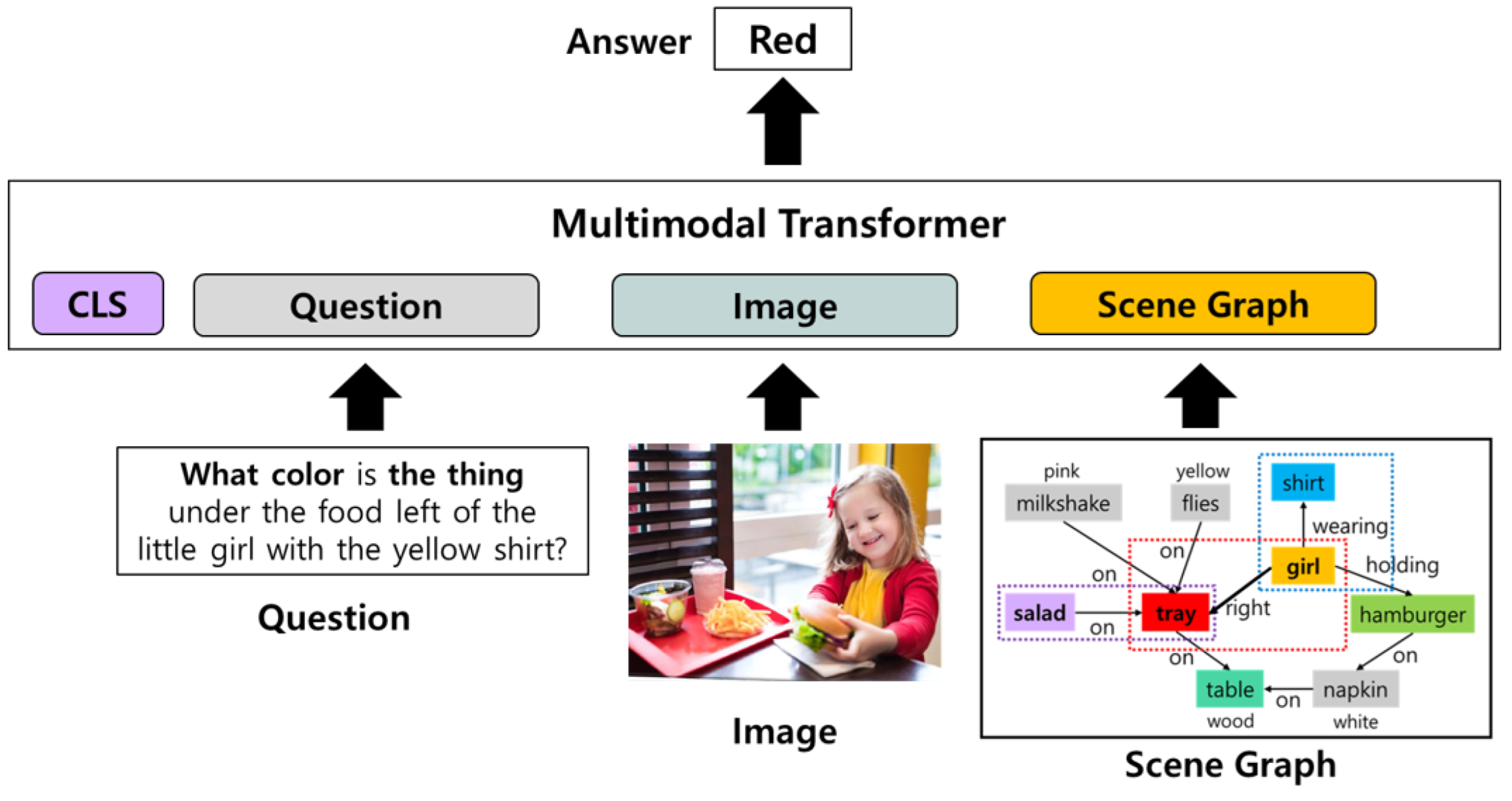

Therefore, we propose a simple yet effective scene graph reasoning framework using multimodal transformers, with multi-task learning to better capture the semantics of questions. As shown in Figure 2, we adopt multimodal transformers pre-trained on a large-scale vision–language dataset. Unlike typical multimodal transformers, the proposed framework uses a scene graph as an additional input. Unlike previous studies [27,28,29,30,31] that require a separate scene graph encoding module, the proposed framework handles scene graphs simply by linearizing them, relying on the prior knowledge of the pre-trained language model. A scene graph can be treated as natural language input, just like a question. It is a set of triples, each defined by two related objects in an image and their relation. A triple (e.g., girl to the right of tray) can be regarded as a sentence even though its grammatical structure is incomplete. A set of triples can therefore be regarded as a set of sentences, which allows the proposed framework, with its strong language understanding ability, to understand the semantics of images from text. In addition, we propose a multi-task learning method that uses the evaluation of the grammatical validity of questions as an auxiliary task to better understand questions with complex structures. This method uses the semantic role labels of a question to randomly shuffle its sentence structure. The framework then solves a binary classification problem that predicts whether the sentence structure of the question is valid. We have conducted extensive experiments to evaluate the effectiveness of the framework in terms of task performance, ablation studies, and generalization.

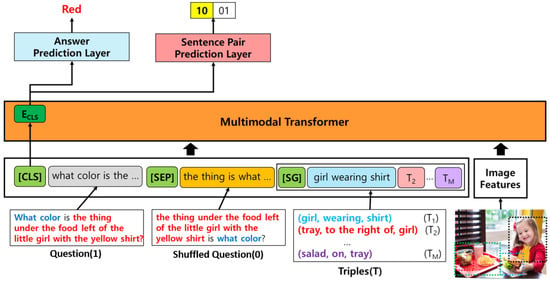

Figure 2.

An overview of the overall architecture.

The contributions of this paper can be summarized as follows:

- We propose a simple yet effective scene graph reasoning framework that uses only multimodal transformer structures, without any additional structure for understanding the scene graph. The proposed method shows a significant effect on the GQA task, which requires scene graph reasoning capability.

- We also propose a multi-task learning method that helps the model understand questions with complex structures composed of multiple phrases. The main task is the existing GQA answer classification problem, and the auxiliary task is a sentence pair classification problem that teaches the model the validity of the grammatical structure of sentences.

- We have conducted extensive experiments to evaluate the effectiveness in terms of task capabilities, ablation studies, and generalization.

2. Related Works

Over the past decade, a line of research has pre-trained large-scale vision–language models that show remarkable performance on vision–language tasks [18,19,20,21,22,23,24,25]. These models leverage transformer architectures, called multimodal transformers, to learn cross-modal representations from image–text pairs. More specifically, there are two basic steps for fusing the different modalities. First, to encode visual knowledge into the same semantic space as language, a pre-trained object detection model (e.g., Faster-RCNN [32]) is used to extract object-level visual features. Each object feature can be regarded as the counterpart of a token embedding in the text. Second, all the knowledge is fused in the same self-attention layers, blending textual and visual features. Based on the network architecture, multimodal transformers can be divided into two types. One type [12,19], called a one-stream multimodal transformer, contains only a single fusion stack that concatenates all the visual and text features into a single sequence. In this paper, we adopt OSCAR [19] as the representative one-stream transformer. The other type [18,20], called a two-stream multimodal transformer, consists of two stages. The first stage has a separate self-attention encoder for each modality, in which the features of each modality are fused internally; in other words, this stage processes the contextual knowledge within each modality. The second stage takes as input the contextualized representations of both modalities and attends to all the knowledge regardless of modality. In this paper, we adopt LXMERT [18] as the representative two-stream transformer.

While the quantitative performance of transformer-based vision–language understanding models continues to improve, their qualitative behavior remains largely a black box. Research on image semantics was conducted even before deep learning became popular. Image semantic analysis [33,34] means not only detecting the objects in an image but also identifying the characteristics of objects and the relationships between them. In particular, an artificial intelligence system capable of analyzing the spatial relationships between objects (e.g., up, down, around, inside) can be used in various applications such as image search and image–text mapping. For artificial intelligence systems to recognize visual relationships, data labeled with the relationships between objects are essential. Visual Genome [35] is a large-scale dataset built by manually annotating, in natural language, the relationships and characteristics of objects in static images and organizing them into a graph structure. Accordingly, graph convolutional networks [27,36], which specialize in graph data processing, have been used as the representative encoder architecture. Despite significant progress, the premise that a separate encoder is needed for scene graph understanding leaves room for improvement. Moreover, such encoders are of limited use in real environments, where human-constructed scene graphs are very rare.

Therefore, in the following section, we introduce a simple yet effective scene graph reasoning framework for visual question answering using pre-trained multimodal transformers, with multi-task learning to better capture the semantics of questions. Our work differs from previous studies [21,37] in two respects. First, our work focuses on developing a multimodal transformer specialized for visual question answering. More specifically, we propose a multi-task learning method in which pre-trained multimodal transformers (e.g., LXMERT, OSCAR) are fine-tuned on GQA data. In contrast, the aim of the two existing studies [21,37] is to develop pre-trained multimodal transformers with excellent vision–language understanding ability. That is, they propose new methods for building pre-trained multimodal transformers such as LXMERT or OSCAR, which we use as baselines in this work.

Second, and more fundamentally, our goal is to develop, within the multimodal transformer framework, a visual question answering method that reasons over the scene graph, which represents the semantics of an image in natural language. In line with the first point, the pre-trained multimodal transformers are fine-tuned to predict accurate answers for inputs composed of an image, its scene graph, and a question.

3. Proposed Architecture

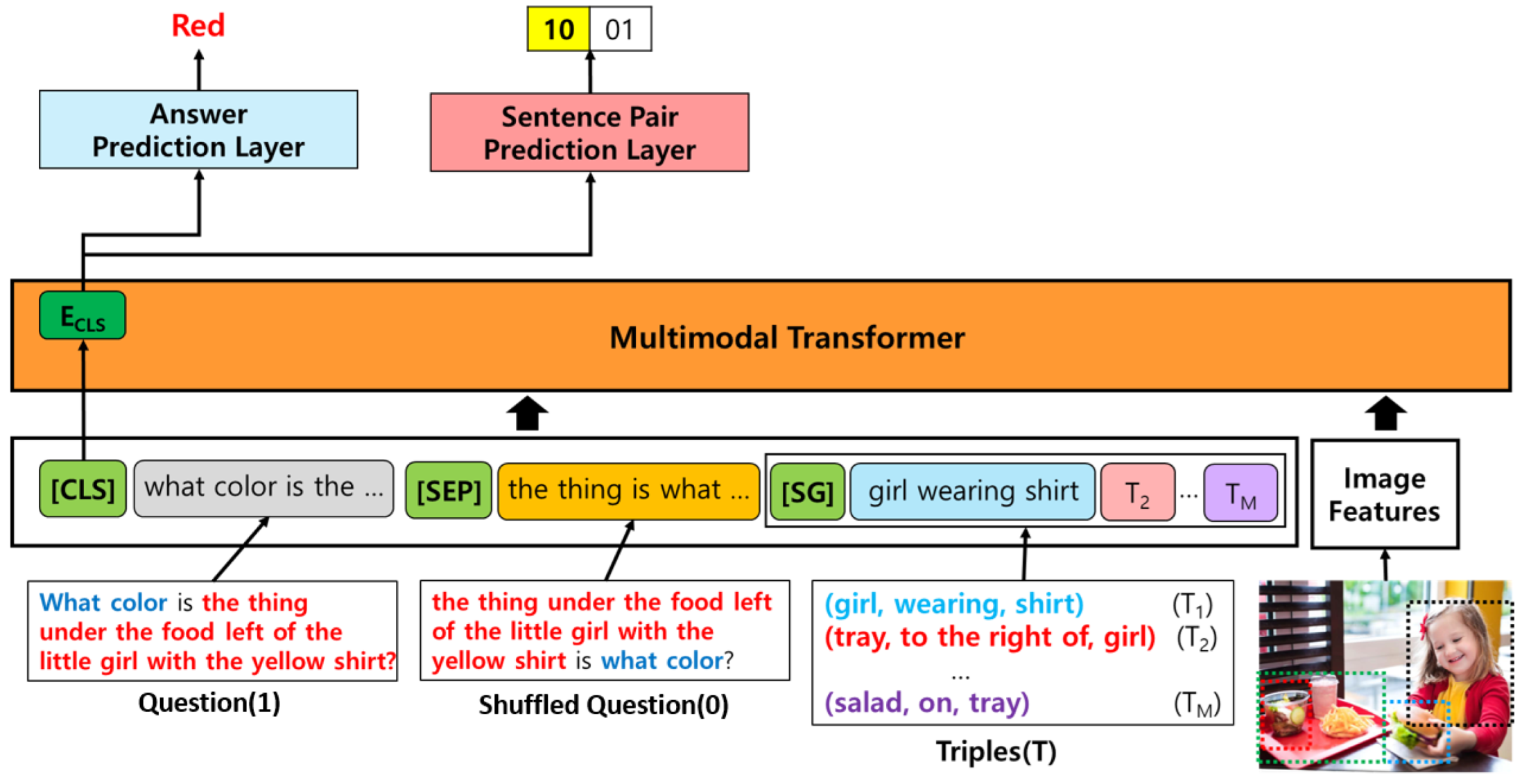

This section introduces a simple yet effective scene graph reasoning framework for visual question answering, with multi-task learning that improves the understanding of complex questions. As shown in Figure 3, the backbone of this model comes from the family of multimodal transformers; in this paper, we adopt two different types: LXMERT [18] and OSCAR [19]. Regardless of the transformer architecture, the model takes four pieces of information as input: the question, the shuffled question (a variant of the original question), the triples from the scene graph, and the corresponding image.

Figure 3.

An illustration of the overall architecture of the proposed model. It takes as input a question, a shuffled question, triples from a scene graph, and the original image. The textual inputs are concatenated with special tokens ([CLS], [SEP], [SG]). The backbone is a multimodal transformer; this paper adopts two different types: LXMERT [18] and OSCAR [19].

The proposed model adopts a multi-task learning paradigm to improve the understanding of questions with complicated structures. This work proposes a new auxiliary task, called sentence pair prediction, in which the model predicts whether the structure of the question is correct. We have observed that this task considerably improves the model's understanding capability, as reported in Section 4. In the following subsections, we describe each component in detail.

3.1. Input Representation

As shown in Figure 3, the model takes four types of visual and textual information as input: the original question (Question), the shuffled question (Shuffled Question), the triples (Triples), and the image (Image). All these components except the image features are combined into a single input sequence using special tokens: [CLS] (start token), [SEP] (separator between the original question and the shuffled question), and [SG] (separator between the shuffled question and the set of triples). The original question is tokenized with the pre-trained tokenizer, and the [CLS] token is added at the beginning.
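The textual part of the input can be sketched as a plain string before subword tokenization. This is a minimal illustration rather than the authors' code: the helper name `build_text_input` is hypothetical, and we assume single spaces around the special tokens and no trailing separator after the triples. The `first`/`second` arguments let the same helper produce either question order.

```python
def build_text_input(first: str, second: str, linearized_triples: str) -> str:
    """Join the textual inputs into one sequence (hypothetical helper).

    Normally `first` is the original question and `second` the shuffled one;
    swapping them yields the other ordering used by the auxiliary task.
    """
    # Assumption: single spaces around the special tokens, no trailing separator.
    return f"[CLS] {first} [SEP] {second} [SG] {linearized_triples}"


example = build_text_input(
    "Is the girl wearing a shirt?",
    "Is a shirt wearing the girl?",  # ARG0/ARG1 swapped, illustrative only
    "girl is wearing shirt",
)
print(example)
```

In a real pipeline, [SG] would most likely have to be registered as an additional special token with the pre-trained tokenizer, since it is not in BERT's original vocabulary; how this was handled is not stated in the text.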

3.1.1. Shuffled Question

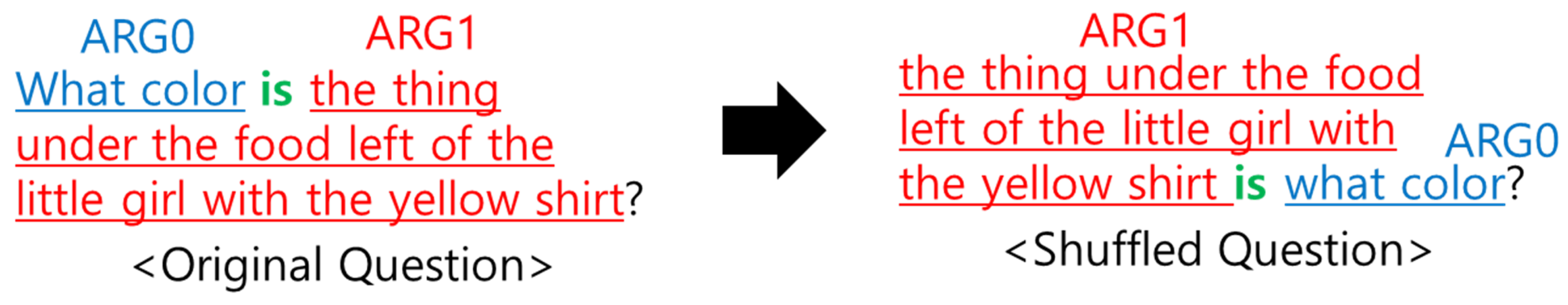

A shuffled question is a variant of the original question obtained by shuffling some of its words or phrases using semantic role labeling. Semantic role labeling is a standard natural language processing technique that assigns labels to words or phrases in a sentence indicating their semantic role, such as agent, goal, or result. Consider the sentence “Mary hit John with the stick.” With respect to the predicate hit, Mary is the agent of the action, John is the entity affected by the action, and the stick is the instrument of the action. In this case, Mary is labeled ARG0, which denotes the agent of the predicate; John is labeled ARG1, which denotes the patient (the thing hit); and the phrase “with the stick” is labeled ARG2, which here denotes the instrument. In this way, semantic role labeling interprets a sentence semantically by analyzing the roles of its words and phrases.

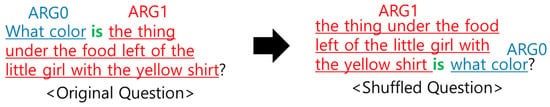

To perturb the order of the original question, this work considers only two labels: ARG0 and ARG1. After semantic role labeling is applied to a question, only the two phrases labeled ARG0 and ARG1 are exchanged, and everything else remains the same. A shuffled question is then constructed as in Figure 4. This work adopts a publicly available semantic role labeling model [38].
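The phrase exchange can be sketched over BIO-style role tags, a common output format of span-based SRL taggers for a single predicate. This is a minimal sketch under stated assumptions: the tags below are written by hand rather than produced by the SRL model [38], only the first ARG0 and ARG1 spans are used, the two spans are assumed not to overlap, and the function names are hypothetical.

```python
from typing import List, Optional, Tuple


def find_span(tags: List[str], label: str) -> Optional[Tuple[int, int]]:
    """Return the [start, end) token span of the first BIO chunk with `label`."""
    start = None
    for i, tag in enumerate(tags):
        if tag == f"B-{label}":
            if start is not None:  # a second chunk begins: close the first one
                return (start, i)
            start = i
        elif tag == f"I-{label}" and start is not None:
            continue  # still inside the current chunk
        elif start is not None:
            return (start, i)  # any other tag ends the chunk
    return (start, len(tags)) if start is not None else None


def swap_arg0_arg1(tokens: List[str], tags: List[str]) -> List[str]:
    """Exchange the ARG0 and ARG1 phrases; leave every other token in place."""
    arg0, arg1 = find_span(tags, "ARG0"), find_span(tags, "ARG1")
    if arg0 is None or arg1 is None:
        return list(tokens)  # nothing to swap
    (s1, e1), (s2, e2) = sorted([arg0, arg1])  # left span first, assumed disjoint
    return tokens[:s1] + tokens[s2:e2] + tokens[e1:s2] + tokens[s1:e1] + tokens[e2:]


tokens = ["Is", "the", "girl", "wearing", "a", "shirt", "?"]
tags = ["O", "B-ARG0", "I-ARG0", "B-V", "B-ARG1", "I-ARG1", "O"]  # hand-written
print(" ".join(swap_arg0_arg1(tokens, tags)))  # Is a shirt wearing the girl ?
```

Questions without both an ARG0 and an ARG1 span are returned unchanged in this sketch; what the original pipeline does in that case is not specified.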

Figure 4.

An example of a shuffled question using semantic role labeling.

3.1.2. Triples

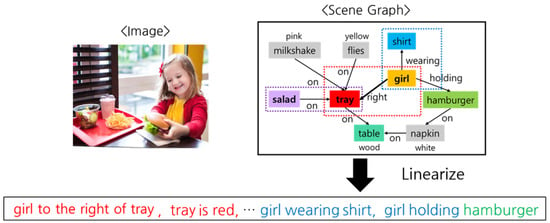

A scene graph is encoded as a set of triples by linearizing all of their tokens, as shown in Figure 5. A linearized triple such as (girl, wearing, shirt) reads like a sentence missing a simple verb (e.g., is). Therefore, in this paper, we heuristically add the verb is as a prefix to almost every relation (e.g., girl is wearing shirt). This makes it more natural for the model to process a sequence of linearized triples in the same way as a sequence of sentences.
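The linearization heuristic can be sketched as follows. The text says only that "is" is prepended to "almost every relation", so the exception rule here (skip relations that already begin with is/are/has/have, which also covers attribute triples such as "table is wooden") and the ". " separator between triples are assumptions, and the function names are hypothetical.

```python
from typing import Iterable, Tuple

Triple = Tuple[str, str, str]  # (subject, relation, object)

# Hypothetical rule: relations that already start with one of these verbs get no prefix.
VERB_STARTS = {"is", "are", "has", "have"}


def linearize_triple(triple: Triple) -> str:
    """Turn (subject, relation, object) into a short sentence-like string."""
    subject, relation, obj = triple
    words = relation.split()
    if not words or words[0] not in VERB_STARTS:
        relation = f"is {relation}".strip()  # heuristic copula prefix
    return f"{subject} {relation} {obj}"


def linearize_scene_graph(triples: Iterable[Triple], sep: str = ". ") -> str:
    # Assumption: each triple becomes one "sentence"; the separator is illustrative.
    return sep.join(linearize_triple(t) for t in triples)


graph = [("girl", "wearing", "shirt"), ("girl", "to the right of", "tray"), ("table", "is", "wooden")]
print(linearize_scene_graph(graph))
# girl is wearing shirt. girl is to the right of tray. table is wooden
```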

Figure 5.

An illustration of the scene graph encoding by linearizing the triples.

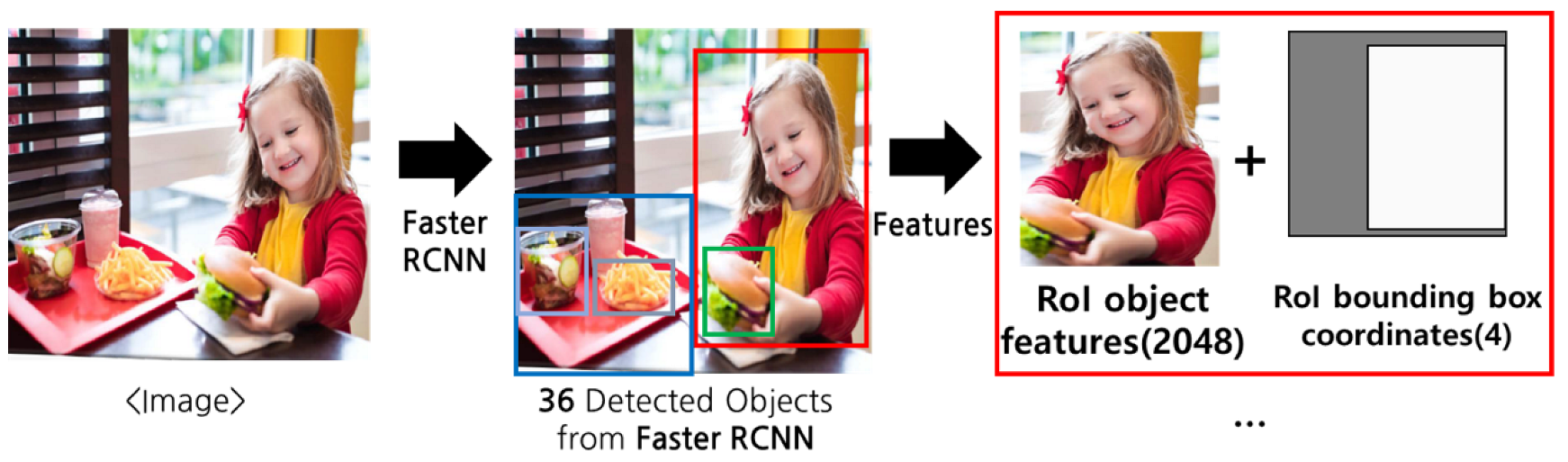

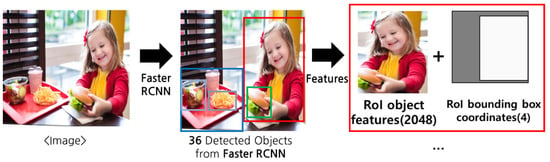

3.1.3. Image

Before being fed into the model, each image is passed through an object feature extractor, Faster-RCNN [32], a widely used pre-trained object detection model. As shown in Figure 6, the feature extractor detects 36 objects in each image. Each object has two types of visual features: a 2048-dimensional RoI object feature and a 4-dimensional RoI bounding box coordinate vector. Every object is then represented as a 2052-dimensional vector by concatenating these two features. In two-stream models, these representations are the input from which the visual encoder extracts unimodal features. In one-stream models, by contrast, the same encoding is performed before the features enter the model, and the features are then treated as token embeddings, so that all the information is fused within the language model.
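The object representation described above can be sketched with NumPy, assuming the 36 RoI features and boxes have already been produced by a frozen Faster-RCNN (the detector itself is not run here). Normalizing the (x1, y1, x2, y2) pixel boxes by image width and height is an assumption, and `object_representations` is a hypothetical name; the random inputs are placeholders only.

```python
import numpy as np

NUM_OBJECTS, ROI_DIM, BOX_DIM = 36, 2048, 4


def object_representations(roi_features: np.ndarray, boxes: np.ndarray,
                           img_w: float, img_h: float) -> np.ndarray:
    """Concatenate RoI features (36 x 2048) with box coordinates (36 x 4)."""
    if roi_features.shape != (NUM_OBJECTS, ROI_DIM):
        raise ValueError(f"unexpected RoI feature shape {roi_features.shape}")
    if boxes.shape != (NUM_OBJECTS, BOX_DIM):
        raise ValueError(f"unexpected box shape {boxes.shape}")
    # Assumption: boxes are (x1, y1, x2, y2) in pixels, scaled to [0, 1].
    scale = np.array([img_w, img_h, img_w, img_h], dtype=roi_features.dtype)
    normalized = boxes.astype(roi_features.dtype) / scale
    return np.concatenate([roi_features, normalized], axis=1)  # (36, 2052)


rng = np.random.default_rng(0)
feats = rng.standard_normal((NUM_OBJECTS, ROI_DIM)).astype(np.float32)
boxes = rng.uniform(0, 480, size=(NUM_OBJECTS, BOX_DIM)).astype(np.float32)
out = object_representations(feats, boxes, img_w=640, img_h=480)
print(out.shape)  # (36, 2052)
```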

Figure 6.

An illustration of the image feature representation.

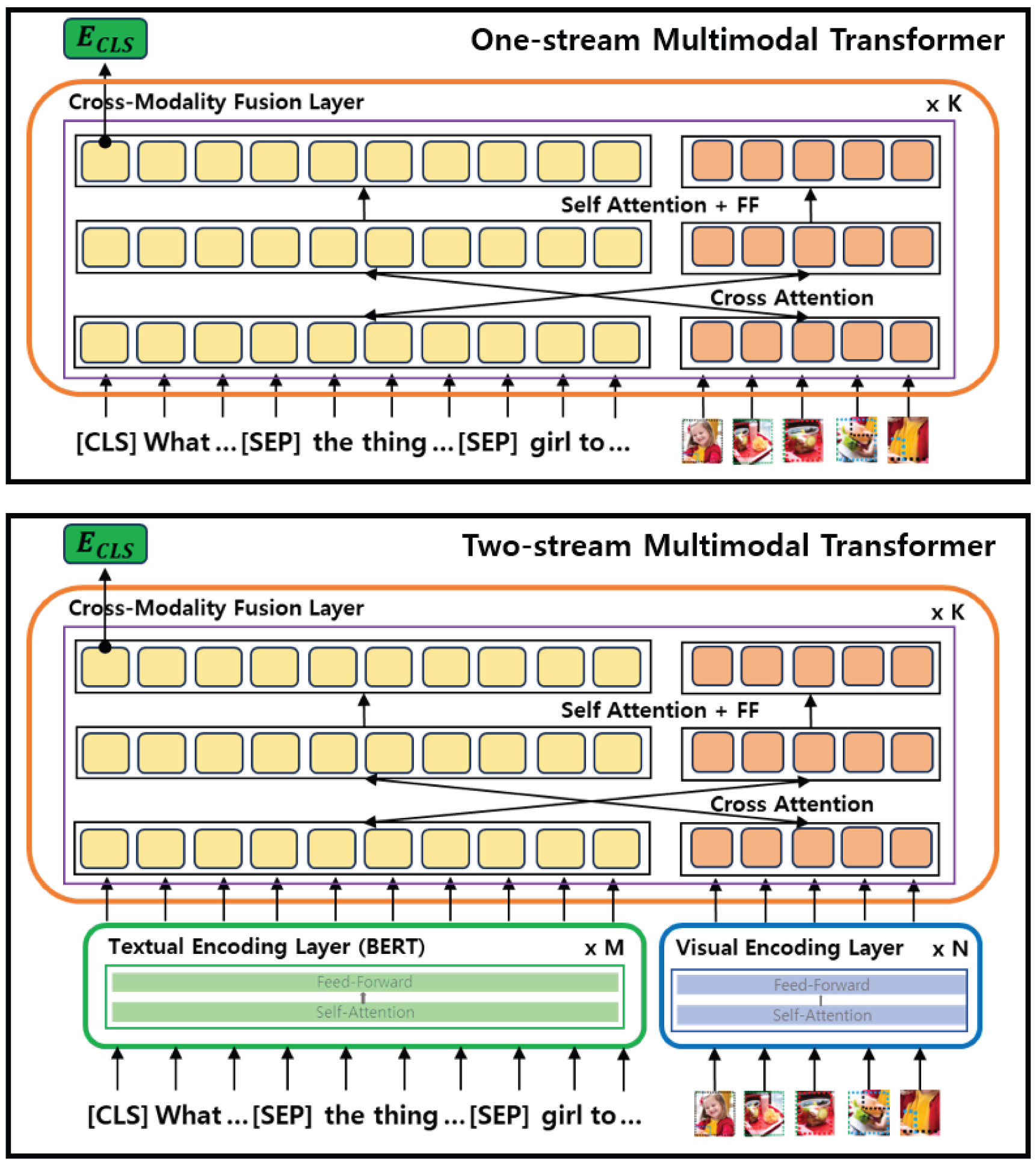

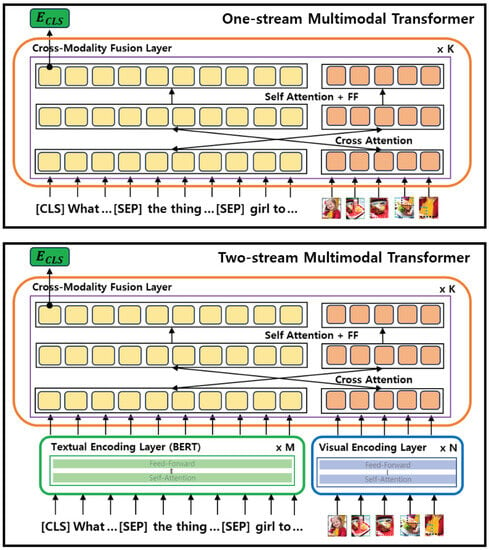

3.2. Multimodal Transformers

The main purpose of a multimodal transformer framework for vision and language is to provide a structure that fuses modalities so that the information from each modality can be understood comprehensively. Accordingly, the framework in this study uses all the information from images, questions, and scene graphs to infer answers. As described in Section 3.1, it takes as input the tokenized text composed of the question, the shuffled question, and the scene graph, together with the image features obtained from Faster-RCNN [32]. As shown in Figure 7, multimodal transformers can be divided into two structures according to their fusion method. The first is the one-stream multimodal transformer (top of Figure 7). It generally consists of K cross-modality fusion layers. Unlike a standard text-only transformer layer, each of these layers fuses information across modalities: because its input contains features from both modalities, attention lets every feature attend directly to the features of the other modality. The updated features then also reflect the context within each modality through self-attention and a feed-forward (ff) network. The framework repeats this operation K times. Finally, the output embedding of the [CLS] token is considered to represent the entire context of the image and text and is used by a classifier to generate the correct answer, as explained in detail in Section 3.3.

Figure 7.

An overview of multimodal transformers with respect to their structural differences. A one-stream multimodal transformer (Top) merges all the features in a single stack with self-attention. A two-stream multimodal transformer (Bottom), on the other hand, has separate modality-specific encoding layers followed by a single cross-modality fusion layer.

The two-stream multimodal transformer (bottom of Figure 7), on the other hand, contains an additional step for information fusion: a modality-specific encoding layer that creates contextual embeddings for text and images separately. The output embeddings from each encoding layer are used as inputs to the cross-modality fusion layer. That is, information within the same modality is fused first, and information across different modalities is fused second. This differs from the one-stream structure, which fuses information through attention without distinguishing between modalities. The text encoding layer is a stack of self-attention and feed-forward structures. It consists of M stacks; in this study, M is set to 12, the same as in BERT, a transformer encoder. This layer creates a contextual embedding for each token, representing the meaning of that token in the question and scene graph. Similarly, the visual encoding layer is a stack of self-attention and feed-forward layers. It consists of N stacks; in this study, N is set to 12. This layer fuses the information among the object features obtained from Faster-RCNN and produces the final embedding of each object in the image, which is used as the image input to the cross-modality fusion layer. Next, the cross-modality fusion layer fuses information across modalities through a cross-attention structure, similar to the one-stream models. The framework repeats this operation K times. Finally, the output embedding of the [CLS] token is considered to represent the entire context of the image and text and is used by a classifier to generate the correct answer, as explained in detail in Section 3.3.
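The difference between the two fusion strategies can be sketched with single-head attention in NumPy. This is a deliberately stripped-down illustration, not LXMERT or OSCAR: it omits learned projections, multiple heads, feed-forward sublayers, and layer normalization, and it assumes text and image features have already been projected to a shared dimension d. All function names are hypothetical.

```python
import numpy as np


def attention(queries: np.ndarray, keys: np.ndarray, values: np.ndarray) -> np.ndarray:
    """Single-head scaled dot-product attention without learned projections."""
    scores = queries @ keys.T / np.sqrt(queries.shape[-1])
    scores -= scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ values


def one_stream_layer(text: np.ndarray, image: np.ndarray) -> np.ndarray:
    """Self-attention over the concatenated sequence: every token sees both modalities."""
    x = np.concatenate([text, image], axis=0)
    return x + attention(x, x, x)


def two_stream_layer(text: np.ndarray, image: np.ndarray):
    """Modality-specific self-attention first, then cross-attention between modalities."""
    t = text + attention(text, text, text)      # text encoding step
    v = image + attention(image, image, image)  # visual encoding step
    t_fused = t + attention(t, v, v)            # text queries attend to image
    v_fused = v + attention(v, t, t)            # image queries attend to text
    return t_fused, v_fused


rng = np.random.default_rng(0)
text, image = rng.standard_normal((12, 64)), rng.standard_normal((36, 64))
print(one_stream_layer(text, image).shape)               # (48, 64)
print([a.shape for a in two_stream_layer(text, image)])  # [(12, 64), (36, 64)]
```

The one-stream layer returns a single fused sequence, whereas the two-stream layer keeps the modalities as separate sequences that exchange information only through cross-attention.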

3.3. Multi-Task Learning for Question Understanding

Motivated by previous findings [39,40,41] that training quality on the target task improves when models are optimized for multiple objective functions, we adopt a multi-task learning method that solves both tasks simultaneously. To support the main task (i.e., predicting the correct answer given the question and image), we design a new sentence pair prediction task as an auxiliary task and fine-tune the proposed model end to end on a weighted sum of the losses from both tasks. We observed that the questions are generally composed of multiple phrases, which can hinder the model from understanding their semantics; the sentence pair prediction task therefore asks the model to predict whether the structure of a question is correct. Below, we describe the loss function of each task in detail.

In the multimodal transformer, all the information is fused by the attention mechanism, so the final output embedding of the [CLS] token contains the whole context. It is therefore natural to place an answer prediction layer on top of the transformer that takes this embedding as input. Since the answers are drawn from a set of predefined labels, the loss function is the negative log-likelihood $L_1$ described in Equation (1):

$$L_1 = -\frac{1}{N}\sum_{i=1}^{N}\sum_{c=1}^{C} y_{i,c}\,\log \hat{y}_{i,c} \tag{1}$$

where $\hat{y}_{i,c}$ and $y_{i,c}$ refer to the predicted probability and the ground-truth label of answer category $c$ for the $i$-th example, respectively, $N$ is the number of examples, and $C$ is the number of answer categories.

Inspired by the work [42] showing that transformer-based encoders have difficulty discriminating the correct semantics of sentences with similar patterns, this work introduces a new auxiliary task that improves the model's ability to distinguish the meanings of different sentences with similar patterns. Specifically, we propose a sentence pair prediction task that classifies the order of the original and shuffled questions. There are two ways to arrange a question and its shuffled version. In the example of Figure 3, the input is ordered as original question (Q) [SEP] shuffled question (SQ), which is labeled 10. Likewise, the label 01 indicates the order shuffled question (SQ) [SEP] original question (Q). Since this task is a binary classification problem, the loss function is the binary cross-entropy $L_2$ described in Equation (2):

$$L_2 = -\frac{1}{N}\sum_{i=1}^{N}\left[\,y_i \log p_i + (1 - y_i)\log(1 - p_i)\,\right] \tag{2}$$

where $N$ is the number of examples, $y_i$ refers to the ground-truth label (0 or 1), and $p_i$ indicates the corresponding predicted probability.
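Constructing training pairs for the sentence pair prediction task can be sketched as below. The text defines the two orders and their labels (10 and 01) but not how an order is chosen, so the 50/50 random choice and the encoding of 10 as 1 and 01 as 0 are assumptions; `make_order_example` is a hypothetical name.

```python
import random
from typing import Tuple


def make_order_example(question: str, shuffled: str,
                       rng: random.Random) -> Tuple[str, str, int]:
    """Randomly order (Q, SQ) and return (first, second, label).

    label 1 encodes "10" (Q [SEP] SQ); label 0 encodes "01" (SQ [SEP] Q).
    """
    if rng.random() < 0.5:  # assumption: both orders equally likely
        return question, shuffled, 1
    return shuffled, question, 0


rng = random.Random(0)
for _ in range(3):
    print(make_order_example("Is the girl wearing a shirt?", "Is a shirt wearing the girl?", rng))
```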

The two losses are combined as in Equation (3):

$$L = L_1 + \lambda L_2 \tag{3}$$

where $\lambda$ is a hyper-parameter. In this work, $\lambda$ is set to 0.1 based on two considerations: the relative scale of the losses from each task and the optimization of the model for the GQA task.
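The combined objective can be checked numerically with a small NumPy sketch. It assumes mean reduction over the batch and the weighted-sum form $L_1 + \lambda L_2$ with $\lambda = 0.1$; in an actual training loop these values would come from the answer classifier's softmax and the order classifier's sigmoid, which are not modeled here. The function names are hypothetical.

```python
import numpy as np


def answer_nll(probs: np.ndarray, labels: np.ndarray) -> float:
    """Mean negative log-likelihood of the gold answers; probs has shape (N, C)."""
    n = probs.shape[0]
    return float(-np.mean(np.log(probs[np.arange(n), labels])))


def order_bce(p: np.ndarray, y: np.ndarray, eps: float = 1e-7) -> float:
    """Mean binary cross-entropy for the sentence pair (order) prediction."""
    p = np.clip(p, eps, 1.0 - eps)  # avoid log(0)
    return float(-np.mean(y * np.log(p) + (1 - y) * np.log(1 - p)))


def total_loss(probs, labels, p, y, lam: float = 0.1) -> float:
    """Weighted sum L1 + lam * L2 (assumed form of the combined objective)."""
    return answer_nll(probs, labels) + lam * order_bce(p, y)


probs = np.full((2, 4), 0.25)  # uniform over C = 4 answer categories
labels = np.array([0, 3])
p, y = np.array([0.5, 0.5]), np.array([1.0, 0.0])
print(total_loss(probs, labels, p, y))  # log(4) + 0.1 * log(2), about 1.4556
```

With uniform predictions, each term reduces to the log of the number of classes, which makes the effect of $\lambda$ easy to see: the auxiliary loss contributes only a tenth of its raw value.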

4. Experiment

This section describes the experimental setup, including the datasets, and analyzes the experimental results.

4.1. Experimental Setup

4.1.1. Dataset

In this paper, we adopt three datasets: GQA [26], VQA [17], and NLVR [43]. GQA is a visual question answering dataset in which each sample consists of a question–answer pair, an image, and the image's scene graph. Because scene graphs are not available for the test-dev split, we use the original validation set as the test set in this work and instead split the original training data into training and validation sets. More specifically, we first shuffle all the question–answer pairs in the training set with a random seed of 0. We then hold out 70K pairs as the validation set and use the rest as the training set.
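The split procedure can be sketched as follows. The text does not name the shuffling library or say which part of the shuffled list becomes the validation set, so using Python's `random.Random(0)` and taking the first 70K items as validation are assumptions; this sketch will not reproduce the authors' exact split. `split_train_val` is a hypothetical name.

```python
import random
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def split_train_val(pairs: Sequence[T], val_size: int = 70_000,
                    seed: int = 0) -> Tuple[List[T], List[T]]:
    """Shuffle with a fixed seed, hold out `val_size` items, return (train, val)."""
    items = list(pairs)
    random.Random(seed).shuffle(items)  # assumption: Python's RNG, seed 0
    return items[val_size:], items[:val_size]


train, val = split_train_val(range(100), val_size=10)
print(len(train), len(val))  # 90 10
```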

To evaluate the generalization of the proposed framework, we adopt VQA and NLVR. Like GQA, VQA is a visual question answering dataset, but it contains only images and question–answer pairs, without scene graphs. Its questions are considerably simpler than those in GQA. We use only its test set to evaluate the generalization of the proposed framework. NLVR is a binary classification task in which the framework must determine whether a descriptive sentence correctly matches the given photographs. It is more challenging than VQA because its images are web photographs, which are generally regarded as more semantically diverse and compositional than those of other datasets.

4.1.2. Baselines

- LXMERT [18]: a two-stream multimodal transformer pre-trained on large-scale image–text pair data to learn both intra-modality and cross-modality relationships.

- OSCAR [19]: a one-stream multimodal transformer pre-trained on large-scale image–text pair data with multi-task learning to learn the alignment of image–text pairs.

4.1.3. Implementation Details

All the experiments were conducted on Ubuntu 18.04 with two NVIDIA GeForce RTX 3090 GPUs. This work uses the publicly available pre-trained LXMERT (https://github.com/airsplay/lxmert accessed on 25 November 2021) and OSCAR (https://github.com/microsoft/Oscar accessed on 20 December 2021) models as backbones. LXMERT uses BERT (110M parameters) as its text encoder. For the image encoder, a pre-trained Faster-RCNN (https://cs.stanford.edu/people/dorarad/gqa/download.html accessed on 20 December 2021) is used. Unlike BERT, Faster-RCNN is frozen and used as is. OSCAR likewise uses Faster-RCNN to obtain object tags and features for images; it is also frozen and used without further training. The training details for both models are given in Table 1. All models are trained for 10 epochs, and accuracy on the validation set is used as the criterion for model selection.

Table 1.

Training details for fine-tuning LXMERT and OSCAR on the GQA dataset.

4.2. Experimental Result

We first investigate the effect of scene graphs for each type of multimodal transformer, LXMERT [18] and OSCAR [19]. As shown in Table 2 and Table 3, using scene graphs consistently yielded significant improvements in vision–language understanding regardless of the type of multimodal transformer. For the two-stream model (LXMERT), the model fine-tuned with scene graphs (LXMERT + SG) improved performance by 20.89 points. The same tendency appears for the one-stream model (OSCAR): as shown in Table 3, the model fine-tuned with scene graphs (OSCAR + SG) improved performance by 20.01 points.

Table 2.

LXMERT performance on the GQA dataset. w/o: without; SG: scene graph; TS: topological sorting; MTL: multi-task learning. LXMERT + SG denotes the model fine-tuned with scene graphs. LXMERT + SG + TS denotes the model fine-tuned with scene graphs whose triples are ordered by a topological sorting algorithm. LXMERT + SG + TS + MTL denotes the model additionally trained with multi-task learning.

Table 3.

OSCAR performance on the GQA dataset. w/o: without; SG: scene graph; TS: topological sorting; MTL: multi-task learning. OSCAR + SG denotes the model fine-tuned with scene graphs. OSCAR + SG + TS denotes the model fine-tuned with scene graphs whose triples are ordered by a topological sorting algorithm. OSCAR + SG + TS + MTL denotes the model additionally trained with multi-task learning.

Next, we examine the effect of the order in which the triples of the scene graph are passed to the model. This work adopts a topological sorting algorithm to reorder the input triples. For the two-stream model (Table 2), the model with topological sorting (LXMERT + SG + TS) showed a 0.61-point gain over the model with random ordering (LXMERT + SG). Likewise, for the one-stream model (Table 3), the model with topological sorting (OSCAR + SG + TS) showed a 0.4-point gain over the model with random ordering (OSCAR + SG).
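The text does not describe how the triples are turned into a graph or how cycles and ties are handled, so the following is only one plausible reading, sketched under stated assumptions: each triple is a directed edge from subject to object, nodes are ordered with Kahn's algorithm (ties broken by first appearance), nodes on cycles are ranked after all others, and triples are sorted by the rank of their subject and then their object. `topo_order_triples` is a hypothetical name.

```python
from collections import defaultdict, deque
from typing import Dict, List, Tuple

Triple = Tuple[str, str, str]  # (subject, relation, object)


def topo_order_triples(triples: List[Triple]) -> List[Triple]:
    """Order triples by a topological order of their nodes (Kahn's algorithm)."""
    nodes: List[str] = []  # unique nodes in first-appearance order
    seen = set()
    successors: Dict[str, List[str]] = defaultdict(list)
    in_degree: Dict[str, int] = defaultdict(int)
    for subject, _, obj in triples:
        for node in (subject, obj):
            if node not in seen:
                seen.add(node)
                nodes.append(node)
        successors[subject].append(obj)  # assumed edge direction: subject -> object
        in_degree[obj] += 1

    queue = deque(n for n in nodes if in_degree[n] == 0)
    rank: Dict[str, int] = {}
    while queue:
        node = queue.popleft()
        rank[node] = len(rank)
        for nxt in successors[node]:
            in_degree[nxt] -= 1
            if in_degree[nxt] == 0:
                queue.append(nxt)

    # Scene graphs may contain cycles; nodes on or behind a cycle never reach
    # in-degree 0, so (assumption) they are ranked after all other nodes.
    for node in nodes:
        if node not in rank:
            rank[node] = len(rank)

    # Subject rank first, object rank as a tie-breaker.
    return sorted(triples, key=lambda t: (rank[t[0]], rank[t[2]]))


triples = [("tray", "on", "table"), ("girl", "to the right of", "tray"), ("girl", "wearing", "shirt")]
for t in topo_order_triples(triples):
    print(t)
```

In this example, the girl has no incoming edges, so both of her triples come first, followed by the tray, which depends on the girl.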

Finally, we confirmed the effect of the auxiliary task designed to improve question comprehension. Multi-task learning was effective for both types of transformers, improving accuracy by 1.01 points for LXMERT and 0.7 points for OSCAR.
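The auxiliary task relies on negative examples built by randomly shuffling a question's structure along its semantic role labels. A minimal sketch of that data-generation step, assuming the question has already been segmented into labeled spans (hard-coded below; in practice they would come from an SRL tagger), shuffles the spans and pairs the original question (label 1) with a scrambled copy (label 0) for a binary grammaticality classifier. The function name, the span labels, the retry limit, and the whitespace joining are illustrative assumptions rather than the authors' procedure.

```python
import random

# (semantic role label, text); hypothetical, hard-coded SRL output
Span = tuple[str, str]


def make_grammaticality_examples(
    spans: list[Span], rng: random.Random, max_tries: int = 20
) -> list[tuple[str, int]]:
    """Return the original question (label 1) and, when possible, one
    copy whose role spans are randomly reordered (label 0)."""
    texts = [text for _, text in spans]
    original = " ".join(texts)
    examples = [(original, 1)]  # grammatical: the question as written
    for _ in range(max_tries):
        shuffled = texts[:]
        rng.shuffle(shuffled)
        candidate = " ".join(shuffled)
        # Keep only a permutation that actually changes the surface string.
        if candidate != original:
            examples.append((candidate, 0))  # scrambled negative example
            break
    return examples


if __name__ == "__main__":
    question = [("ARG1", "What"), ("O", "is"), ("ARG0", "the man"), ("V", "holding")]
    for text, label in make_grammaticality_examples(question, random.Random(0)):
        print(label, text)
```

A reordering is not guaranteed to be ungrammatical; this sketch simply treats any changed span order as a negative, and a single-span question yields only the positive example.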

5. Discussion

In this section, we analyze the proposed framework through an ablation study and a generalization study to aid model interpretation. The ablation experiments validate the effect of scene graphs on visual language understanding. The first result in Table 4 (LXMERT + Scene Graph) is the accuracy of the proposed model without multi-task learning. The second result (LXMERT w/o Scene Graph) is obtained by fine-tuning LXMERT only on GQA images and their corresponding question–answer pairs; in this case, scene graphs were used in neither training nor evaluation. The accuracy of the two models differs substantially, by 21.5 percentage points. This suggests that scene graphs carry rich semantic information about the images. More importantly, it suggests that reasoning over scene graphs expressed in natural language improves the model's understanding of images.

Table 4.

The analysis of the effect of scene graph on vision language understanding. w/o: without.

In addition, to strengthen the claim that scene graph reasoning aids multimodal understanding, we assessed answer prediction accuracy when the model is given only the scene graph of an image and the corresponding question, without the image itself. Since BERT is the text-understanding model embedded in LXMERT, this experiment trains BERT on questions and scene graphs. The resulting model (BERT + scene graph in Table 4) achieved 10.2 percentage points higher accuracy than the second result (LXMERT w/o Scene Graph), which was trained only on images and questions without scene graphs. This indicates that, even without direct image information, natural language descriptions of the structural characteristics of an image allow a certain level of visual understanding. Therefore, high-quality scene graphs can help the model comprehend images, which have lower expressive power than text.
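The reported differences can be cross-checked with a few lines of arithmetic. The sketch below assumes that the "LXMERT + Scene Graph" row of Table 4 is the same configuration as LXMERT + SG + TS in Table 2 (the proposed model without multi-task learning), and that each gain in Tables 2 and 3 is measured against the preceding configuration, so that stepwise gains add up. Only differences reported in the text are used; the names `GAINS` and `gain_over_baseline` are hypothetical.

```python
# Accuracy differences in percentage points, as reported in the text.
GAINS = {
    "LXMERT": {"SG": 20.89, "TS": 0.61, "MTL": 1.01},
    "OSCAR": {"SG": 20.01, "TS": 0.4, "MTL": 0.7},
}
# Table 4 margins, both measured against "LXMERT w/o Scene Graph".
LXMERT_SG_MARGIN = 21.5
BERT_SG_MARGIN = 10.2


def gain_over_baseline(model: str, steps: list[str]) -> float:
    """Sum stepwise gains, assuming each step is measured against the
    configuration before it; rounding removes float noise."""
    return round(sum(GAINS[model][s] for s in steps), 2)


if __name__ == "__main__":
    # Scene graph + topological sorting: matches the 21.5 margin in Table 4.
    print(gain_over_baseline("LXMERT", ["SG", "TS"]))  # 21.5
    # Full model (SG + TS + MTL) over the no-scene-graph baseline.
    for name in GAINS:
        print(name, gain_over_baseline(name, ["SG", "TS", "MTL"]))  # LXMERT 22.51, OSCAR 21.11
    # BERT + scene graph trails LXMERT + scene graph by this many points.
    print(round(LXMERT_SG_MARGIN - BERT_SG_MARGIN, 2))  # 11.3
```

Under these assumptions, the 21.5-point ablation margin is exactly the sum of the scene graph (20.89) and topological sorting (0.61) gains, and the text-only BERT model recovers roughly half of that margin without seeing the image.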

We also verified the generalization capability of the proposed method on other visual language tasks, namely Visual Question Answering (VQA) [17] and Natural Language for Visual Reasoning (NLVR) [43]. The proposed method is effective on these similar visual language understanding datasets regardless of the type of multimodal transformer. As shown in Table 5, the proposed LXMERT-based approach achieves 1.18 percentage points higher accuracy than the baseline on VQA and 0.59 points higher accuracy on NLVR. Likewise, as shown in Table 6, the proposed OSCAR-based method achieves 0.63 points higher accuracy than the baseline on VQA and 0.45 points higher accuracy on NLVR. The improvements are consistent but modest. A likely reason is that the evaluation data provide only images and text, without scene graphs; this makes the evaluation setting substantially different from the training setting and may limit the performance gain.

Table 5.

The performance of LXMERT on VQA and NLVR. w/o: without.

Table 6.

The performance of OSCAR on VQA and NLVR. w/o: without.

6. Conclusions

In this paper, we propose a simple yet effective scene graph reasoning framework based on multimodal transformers for visual question answering tasks, designed to better capture the visual relationships in static images. A scene graph expresses the semantics of an image in natural language as a set of triples, each consisting of two related objects and their relation. Our framework linearizes these triples so that the model takes them as input in the same way as a series of sentences. In addition, we propose a multi-task learning method that uses a sentence pair prediction task as an auxiliary task to better understand questions with complex structures. We observed that scene graph reasoning considerably enhances visual understanding. Regardless of the type of multimodal transformer, the scene graph increases answer prediction performance on the GQA task by about 20 percentage points. Our multi-task learning method with the sentence pair prediction task also yields a gain of roughly 0.7 to 1 percentage point for both types of multimodal transformers, suggesting that the method is model-agnostic. This work presupposes human-labeled scene graphs for the images, which is a strong data dependency. In future work, we therefore plan to develop an automatic scene graph generation algorithm to overcome this dependency. We then hope to develop a weakly supervised learning algorithm that combines this work with the automatic scene graph generation method.

Author Contributions

Conceptualization, Y.H. and S.K.; Methodology, Y.H. and S.K.; Project administration, S.K.; Supervision, S.K.; Writing—original draft, Y.H.; Writing—review and editing, S.K. All authors have read and agreed to the published version of the manuscript.

Funding

This work was supported by the National Research Foundation of Korea (NRF) grant funded by the Korean government (MSIT) (No. 2022R1A2C1005316).

Data Availability Statement

The GQA dataset is available at https://cs.stanford.edu/people/dorarad/gqa/download.html (accessed on 25 November 2021). The VQA dataset is available at https://visualqa.org/ (accessed on 2 June 2022). The NLVR dataset is available at https://lil.nlp.cornell.edu/nlvr/ (accessed on 10 March 2023).

Conflicts of Interest

The authors declare no conflict of interest.

References

1. Turk, M. Multimodal interaction: A review. Pattern Recognit. Lett. 2014, 36, 189–195.

2. Matveev, Y.; Matveev, A.; Frolova, O.; Lyakso, E.; Ruban, N. Automatic Speech Emotion Recognition of Younger School Age Children. Mathematics 2022, 10, 2373.

3. Zgank, A. Influence of Highly Inflected Word Forms and Acoustic Background on the Robustness of Automatic Speech Recognition for Human–Computer Interaction. Mathematics 2022, 10, 711.

4. Mokady, R.; Hertz, A.; Bermano, A.H. ClipCap: CLIP Prefix for Image Captioning. arXiv 2021, arXiv:2111.09734.

5. Wang, J.; Yang, Z.; Hu, X.; Li, L.; Lin, K.; Gan, Z.; Liu, Z.; Liu, C.; Wang, L. GIT: A generative image-to-text transformer for vision and language. arXiv 2022, arXiv:2205.14100.

6. Hu, X.; Gan, Z.; Wang, J.; Yang, Z.; Liu, Z.; Lu, Y.; Wang, L. Scaling up vision-language pre-training for image captioning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), New Orleans, LA, USA, 18–24 June 2022; pp. 17980–17989.

7. Aafaq, N.; Akhtar, N.; Liu, W.; Gilani, S.Z.; Mian, A. Spatio-temporal dynamics and semantic attribute enriched visual encoding for video captioning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 15–20 June 2019; pp. 12487–12496.

8. Li, L.; Lei, J.; Gan, Z.; Yu, L.; Chen, Y.C.; Pillai, R.; Cheng, Y.; Zhou, L.; Wang, X.E.; Wang, W.Y.; et al. VALUE: A multi-task benchmark for video-and-language understanding evaluation. arXiv 2021, arXiv:2106.04632.

9. Liu, S.; Ren, Z.; Yuan, J. SibNet: Sibling convolutional encoder for video captioning. In Proceedings of the 26th ACM International Conference on Multimedia, Seoul, Republic of Korea, 22–26 October 2018; pp. 1425–1434.

10. Alamri, H.; Cartillier, V.; Lopes, R.G.; Das, A.; Wang, J.; Essa, I.; Batra, D.; Parikh, D.; Cherian, A.; Marks, T.K.; et al. Audio Visual Scene-Aware Dialog (AVSD) Challenge at DSTC7. arXiv 2018, arXiv:1806.00525.

11. He, L.; Liu, S.; An, R.; Zhuo, Y.; Tao, J. An End-to-End Framework Based on Vision-Language Fusion for Remote Sensing Cross-Modal Text-Image Retrieval. Mathematics 2023, 11, 2279.

12. Zhang, P.; Li, X.; Hu, X.; Yang, J.; Zhang, L.; Wang, L.; Choi, Y.; Gao, J. VinVL: Revisiting visual representations in vision-language models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 20–25 June 2021; pp. 5579–5588.

13. OpenAI. GPT-4 Technical Report. arXiv 2023, arXiv:2303.08774.

14. Zhang, D.; Ren, A.; Liang, J.; Liu, Q.; Wang, H.; Ma, Y. Improving Medical X-ray Report Generation by Using Knowledge Graph. Appl. Sci. 2022, 12, 11111.

15. Ramesh, V.; Chi, N.A.; Rajpurkar, P. Improving Radiology Report Generation Systems by Removing Hallucinated References to Non-existent Priors. arXiv 2022, arXiv:2210.06340.

16. Sharma, D.; Purushotham, S.; Reddy, C.K. MedFuseNet: An attention-based multimodal deep learning model for visual question answering in the medical domain. Sci. Rep. 2021, 11, 19826.

17. Goyal, Y.; Khot, T.; Summers-Stay, D.; Batra, D.; Parikh, D. Making the V in VQA matter: Elevating the role of image understanding in visual question answering. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 6904–6913.

18. Tan, H.; Bansal, M. LXMERT: Learning Cross-Modality Encoder Representations from Transformers. In Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing and the 9th International Joint Conference on Natural Language Processing (EMNLP-IJCNLP), Hong Kong, China, 3–7 November 2019; pp. 5100–5111.

19. Li, X.; Yin, X.; Li, C.; Zhang, P.; Hu, X.; Zhang, L.; Wang, L.; Hu, H.; Dong, L.; Wei, F.; et al. Oscar: Object-semantics aligned pre-training for vision-language tasks. In Proceedings of the European Conference on Computer Vision (ECCV), Glasgow, UK, 23–28 August 2020; pp. 121–137.

20. Lu, J.; Batra, D.; Parikh, D.; Lee, S. ViLBERT: Pretraining task-agnostic visiolinguistic representations for vision-and-language tasks. In Proceedings of the 33rd International Conference on Neural Information Processing Systems, Vancouver, BC, Canada, 8–14 December 2019; Volume 32.

21. Li, L.H.; Yatskar, M.; Yin, D.; Hsieh, C.J.; Chang, K.W. VisualBERT: A simple and performant baseline for vision and language. arXiv 2019, arXiv:1908.03557.

22. Socher, R.; Ganjoo, M.; Manning, C.D.; Ng, A. Zero-shot learning through cross-modal transfer. arXiv 2013, arXiv:1301.3666.

23. Zhou, L.; Palangi, H.; Zhang, L.; Hu, H.; Corso, J.; Gao, J. Unified vision-language pre-training for image captioning and VQA. In Proceedings of the AAAI Conference on Artificial Intelligence, New York, NY, USA, 7–12 February 2020; Volume 34, pp. 13041–13049.

24. Chen, Y.C.; Li, L.; Yu, L.; Kholy, A.E.; Ahmed, F.; Gan, Z.; Cheng, Y.; Liu, J. UNITER: UNiversal Image-TExt Representation Learning. arXiv 2020, arXiv:1909.11740.

25. Li, G.; Duan, N.; Fang, Y.; Gong, M.; Jiang, D. Unicoder-VL: A universal encoder for vision and language by cross-modal pre-training. In Proceedings of the AAAI Conference on Artificial Intelligence, New York, NY, USA, 7–12 February 2020; Volume 34, pp. 11336–11344.

26. Hudson, D.A.; Manning, C.D. GQA: A new dataset for real-world visual reasoning and compositional question answering. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 15–20 June 2019; pp. 6700–6709.

27. Liang, W.; Jiang, Y.; Liu, Z. GraghVQA: Language-Guided Graph Neural Networks for Graph-based Visual Question Answering. In Proceedings of the Third Workshop on Multimodal Artificial Intelligence, Mexico City, Mexico, 1–5 June 2021; pp. 79–86.

28. Hudson, D.A.; Manning, C.D. Compositional Attention Networks for Machine Reasoning. arXiv 2018, arXiv:1803.03067.

29. Kim, E.S.; Kang, W.Y.; On, K.W.; Heo, Y.J.; Zhang, B.T. Hypergraph Attention Networks for Multimodal Learning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020.

30. Yao, T.; Pan, Y.; Li, Y.; Mei, T. Exploring Visual Relationship for Image Captioning. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2018; pp. 684–699.

31. Yang, X.; Tang, K.; Zhang, H.; Cai, J. Auto-Encoding Scene Graphs for Image Captioning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 15–20 June 2019; pp. 10685–10694.

32. Girshick, R. Fast R-CNN. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Santiago, Chile, 7–13 December 2015; pp. 1440–1448.

33. Shi, Z. Image Semantic Analysis and Understanding. In Proceedings of the International Conference on Intelligent Information Processing, Manchester, UK, 13–16 October 2010; Shi, Z., Vadera, S., Aamodt, A., Leake, D., Eds.; Springer: Berlin/Heidelberg, Germany, 2010; pp. 4–5.

34. Sun, G.; Wang, W.; Dai, J.; Gool, L.V. Mining Cross-Image Semantics for Weakly Supervised Semantic Segmentation. arXiv 2020, arXiv:2007.01947.

35. Krishna, R.; Zhu, Y.; Groth, O.; Johnson, J.; Hata, K.; Kravitz, J.; Chen, S.; Kalantidis, Y.; Li, L.J.; Shamma, D.A.; et al. Visual Genome: Connecting Language and Vision Using Crowdsourced Dense Image Annotations. arXiv 2016, arXiv:1602.07332.

36. Pham, K.; Kafle, K.; Lin, Z.; Ding, Z.; Cohen, S.; Tran, Q.; Shrivastava, A. Learning to Predict Visual Attributes in the Wild. arXiv 2021, arXiv:2106.09707.

37. Kamath, A.; Singh, M.; LeCun, Y.; Synnaeve, G.; Misra, I.; Carion, N. MDETR—Modulated Detection for End-to-End Multi-Modal Understanding. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, QC, Canada, 11–17 October 2021; pp. 1780–1790.

38. Gardner, M.; Grus, J.; Neumann, M.; Tafjord, O.; Dasigi, P.; Liu, N.F.; Peters, M.; Schmitz, M.; Zettlemoyer, L.S. AllenNLP: A Deep Semantic Natural Language Processing Platform. arXiv 2018, arXiv:1803.07640.

39. Caruana, R. Multitask learning. Mach. Learn. 1997, 28, 41–75.

40. Zhang, Y.; Yang, Q. A Survey on Multi-Task Learning. IEEE Trans. Knowl. Data Eng. 2022, 34, 5586–5609.

41. Zhang, Z.; Yu, W.; Yu, M.; Guo, Z.; Jiang, M. A Survey of Multi-task Learning in Natural Language Processing: Regarding Task Relatedness and Training Methods. In Proceedings of the 17th Conference of the European Chapter of the Association for Computational Linguistics, Dubrovnik, Croatia, 2–6 May 2023; pp. 943–956.

42. Clark, K.; Luong, M.T.; Le, Q.V.; Manning, C.D. ELECTRA: Pre-training Text Encoders as Discriminators Rather Than Generators. arXiv 2020, arXiv:2003.10555.

43. Suhr, A.; Zhou, S.; Zhang, A.; Zhang, I.; Bai, H.; Artzi, Y. A Corpus for Reasoning about Natural Language Grounded in Photographs. In Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics, Florence, Italy, 28 July–2 August 2019; pp. 6418–6428.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).