1. Introduction

Silver, denoted by the symbol Ag and derived from the Latin term ‘argentum’, is a metallic element with atomic number 47. Its distinctive properties make it a sought-after resource across diverse industries. Because of its remarkable electrical and thermal conductivity, silver plays a crucial role in the manufacture of electronic devices [1]. In medicine, silver nanoparticles have had a substantial influence on the development of treatments over the past few decades [2]. Silver's antimicrobial properties allow silver nanoparticles to be used as coatings for medical instruments and in treatments. Silver also plays a key role in solar energy capture: a standard solar panel requires around 20 g of silver to produce [3]. Solar panel production rises consistently year after year, driving an escalating demand for silver. In addition, as a precious metal, silver maintains a relatively high market value and demand [4].

Precious metals such as gold, silver, and platinum have correlations that influence their respective prices [

5]. The price correlation among these precious metals can be influenced by various factors, including politics, the value of the US dollar, market demand, and others [

6]. For example, in a gold price forecasting study conducted by Jabeur, Mefteh–Wali, and Viviani, supplementary variables including platinum prices, iron ore rates, and the dollar-to-euro exchange rate were incorporated [

7]. By incorporating these variables, the forecasting results were more accurate compared to using only one variable [

8]. Many investors choose to invest in precious metals (such as silver, gold, and platinum) as valuable assets [

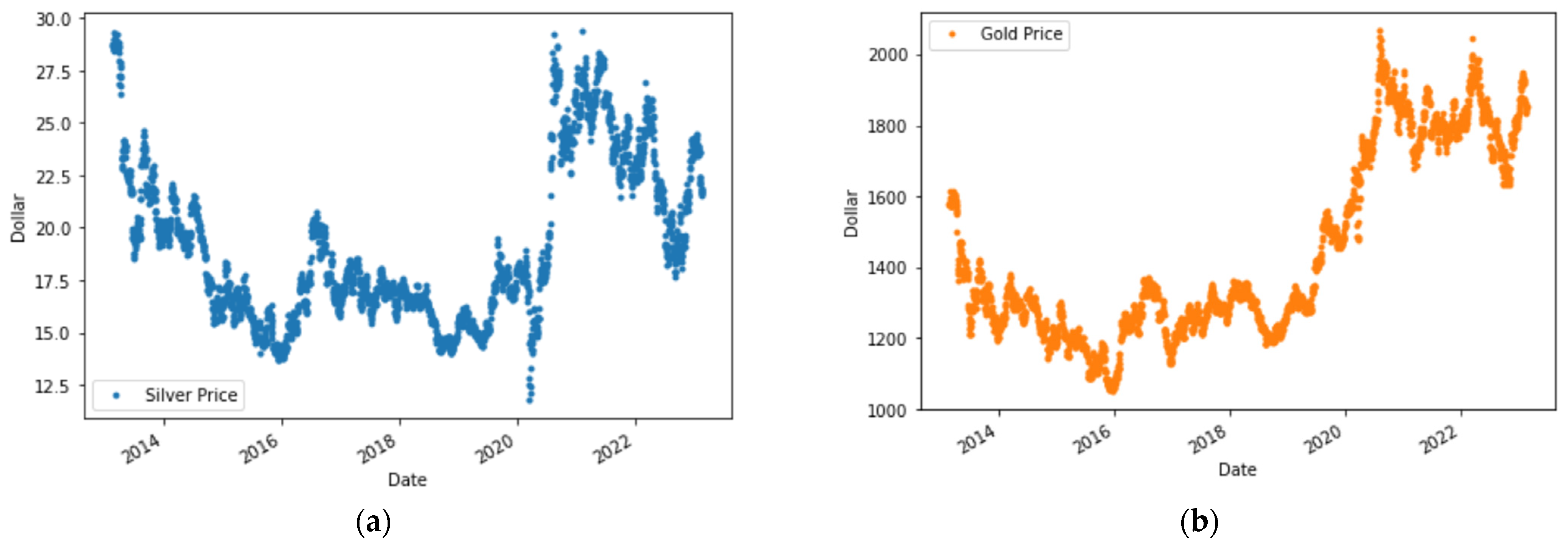

9]. The time series data for international silver prices, sourced from investing.com (accessed on 21 February 2023) [

10], reveals notable volatility in silver price trends. Considering this challenge, there emerges a necessity for a predictive model capable of forecasting silver prices—a tool that holds value for investors when making informed decisions.

Forecasting is the discipline of studying available data to predict the future [11]. The forecasting process typically uses time series data whose target variables align with the research objectives. Many traditional forecasting methods are available, such as statistical analysis, regression, and smoothing methods such as exponential smoothing [12]. However, these traditional methods are often considered less effective for complex problems, which has motivated the development of machine learning approaches to tackle them.
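As a point of reference for the traditional smoothing methods mentioned above, the following minimal Python sketch implements simple exponential smoothing. It assumes a series with no trend or seasonality. The level is updated as ℓ_t = α·y_t + (1 − α)·ℓ_{t−1}, and the final level serves as a flat forecast for every future period. The function name and the smoothing constant `alpha` are illustrative choices and are not taken from this study.

```python
def simple_exponential_smoothing(series, alpha):
    """Return the final smoothed level, used as a flat forecast.

    Assumes a non-empty series with no trend or seasonality.
    """
    if not series:
        raise ValueError("series must be non-empty")
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must lie in (0, 1]")
    level = series[0]  # initialize the level with the first observation
    for y in series[1:]:
        # Blend the new observation with the previous level.
        level = alpha * y + (1.0 - alpha) * level
    return level


# Hypothetical prices, for illustration only.
prices = [10.0, 20.0, 30.0]
print(simple_exponential_smoothing(prices, alpha=0.5))  # 22.5
```

A larger `alpha` weights recent observations more heavily. With `alpha=1.0`, the forecast is simply the last observed value.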

Machine learning refers to systems that learn from training data and automate the construction of analytical models to solve a wide range of problems [13]. It is commonly divided into three principal types: supervised learning (with labeled outputs), unsupervised learning (without labeled outputs), and reinforcement learning. Each category encompasses a growing range of methods. Supervised learning plays a significant role in forecasting, particularly for time series data, where the desired output (the next value in the series) is inherently available for past observations. Prominent supervised forecasting methods include gradient boosting, long short-term memory (LSTM) networks, CatBoost, and extreme gradient boosting, among others.
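To make the supervised framing concrete, the sketch below uses a sliding window to turn a price series into input/label pairs. Each row holds the previous `n_lags` values of the target and of each supplementary variable, and the label is the next target value. This is an illustrative assumption: the lag structure actually used in this study is not specified here. The function `make_lagged_dataset`, the variable names, and the window length are all hypothetical.

```python
def make_lagged_dataset(target, exog, n_lags):
    """Build supervised (X, y) pairs from a target series and extra series.

    target: list of target values (e.g. silver prices), oldest first.
    exog:   dict mapping a name to a list aligned with `target`
            (e.g. gold, platinum, exchange rate; names are hypothetical).
    n_lags: number of past time steps used as inputs.
    """
    n = len(target)
    for name, values in exog.items():
        if len(values) != n:
            raise ValueError(f"series {name!r} has length {len(values)}, expected {n}")
    X, y = [], []
    for t in range(n_lags, n):
        # Past values of the target only: target[t] itself is the label.
        row = list(target[t - n_lags:t])
        # Append the same window of each supplementary series, in a fixed order.
        for name in sorted(exog):
            row.extend(exog[name][t - n_lags:t])
        X.append(row)
        y.append(target[t])
    return X, y


X, y = make_lagged_dataset(
    target=[1.0, 2.0, 3.0, 4.0],
    exog={"gold": [10.0, 20.0, 30.0, 40.0]},
    n_lags=2,
)
print(X)  # [[1.0, 2.0, 10.0, 20.0], [2.0, 3.0, 20.0, 30.0]]
print(y)  # [3.0, 4.0]
```

Each row uses only values strictly before time t. This keeps the value being predicted out of the inputs.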

Extreme gradient boosting, commonly known as XGBoost, is one of the most widely used machine learning techniques and is applied extensively to tasks such as forecasting and classification. XGBoost is an enhancement of decision tree and gradient boosting methods, the latter originally introduced by Friedman [14]. XGBoost offers several advantages, including relatively high computational speed, parallel computing capability, and high scalability [15]. Building and training an XGBoost model involves several considerations. Notably, XGBoost has hyperparameters that must be tuned carefully to achieve optimal model performance [16]. Researchers must therefore explore combinations of hyperparameter values, a process known as hyperparameter tuning, to obtain the XGBoost model best suited to a specific problem. In machine learning, the mean absolute error (MAE) and root mean square error (RMSE) are prevalent metrics for evaluating model performance [17].
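The following pure-Python sketch illustrates the plain gradient boosting idea that XGBoost extends. Each new regression stump is fitted to the residuals of the current ensemble, and its contribution is scaled by a learning rate. This is not XGBoost: it has no regularized objective, no second-order approximation, and no parallelized split finding. The names `fit_stump` and `gradient_boost` are hypothetical. The `n_estimators` and `learning_rate` arguments play roles similar to the XGBoost hyperparameters of the same names, and the fitted model is scored with MAE and RMSE.

```python
import math


def fit_stump(x, residuals):
    """Fit a one-split regression stump on a single feature by least squares."""
    best = None
    # Every distinct value except the largest is a valid threshold, so both
    # sides of the split are non-empty.
    for threshold in sorted(set(x))[:-1]:
        left = [r for xi, r in zip(x, residuals) if xi <= threshold]
        right = [r for xi, r in zip(x, residuals) if xi > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        sse = sum((r - left_mean) ** 2 for r in left) + sum((r - right_mean) ** 2 for r in right)
        if best is None or sse < best[0]:
            best = (sse, threshold, left_mean, right_mean)
    if best is None:
        # All feature values are equal: no split is possible, predict the mean.
        mean = sum(residuals) / len(residuals)
        return lambda xi: mean
    _, threshold, left_mean, right_mean = best
    return lambda xi: left_mean if xi <= threshold else right_mean


def gradient_boost(x, y, n_estimators=100, learning_rate=0.1):
    """Plain gradient boosting with squared loss (not XGBoost)."""
    base = sum(y) / len(y)  # start from the mean prediction
    pred = [base] * len(y)
    stumps = []
    for _ in range(n_estimators):
        # For squared loss, the negative gradient is just the residual.
        residuals = [yi - pi for yi, pi in zip(y, pred)]
        stump = fit_stump(x, residuals)
        stumps.append(stump)
        # Shrink each stump's contribution by the learning rate.
        pred = [pi + learning_rate * stump(xi) for pi, xi in zip(pred, x)]
    return lambda xi: base + learning_rate * sum(s(xi) for s in stumps)


def mae(y_true, y_pred):
    # MAE = (1/n) * sum |y_i - yhat_i|
    return sum(abs(a - b) for a, b in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true, y_pred):
    # RMSE = sqrt((1/n) * sum (y_i - yhat_i)^2)
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(y_true, y_pred)) / len(y_true))


# Toy single-feature data (hypothetical), for illustration only.
x = [1, 2, 3, 4, 5, 6]
y = [1.0, 1.0, 1.0, 5.0, 5.0, 5.0]
model = gradient_boost(x, y, n_estimators=100, learning_rate=0.1)
preds = [model(v) for v in x]
print(mae(y, preds), rmse(y, preds))  # both close to 0
```

A smaller `learning_rate` needs more `n_estimators` to reach the same fit. This interaction is one reason such hyperparameters are usually tuned jointly rather than in isolation.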

Several previous studies have applied machine learning to forecasting. For example, Nasiri and Ebadzadeh [18] forecast time series data using a multi-functional recurrent fuzzy neural network (MFRFNN) and found that their proposed method outperformed the second-best method on the Lorenz time series. Luo et al. [19] used ensemble learning to estimate aboveground biomass, comparing several methods, and found that CatBoost performed best. Several previous studies have also used XGBoost for forecasting. Li et al. employed XGBoost to forecast solar radiation and found that it outperformed previous research, achieving the lowest RMSE [20]. Fang et al. forecast COVID-19 cases in the USA by comparing the ARIMA and XGBoost methods and found that XGBoost performed better on the metrics used in their study [14]. Jabeur et al. predicted gold prices using XGBoost and compared it with other machine learning methods, including CatBoost, random forest, LightGBM, neural networks, and linear regression. They found that XGBoost performed best [7]. However, Jabeur et al. did not perform hyperparameter tuning, as their focus was on comparing the performance of different machine learning methods.

Based on this background, this study aims to forecast silver prices using XGBoost, following an approach similar to that of Jabeur et al. [7] but adding hyperparameter tuning through grid search. The novelty of this research lies in a tuning step performed before the grid search. Typically, the candidate values for each hyperparameter in a grid search are chosen arbitrarily. This research instead proposes tuning each hyperparameter individually first, plotting the mean absolute percentage error (MAPE) and RMSE to determine which values to select. The study incorporates gold and platinum prices, as well as the euro-to-dollar exchange rate, as additional variables. To identify the best XGBoost model, model performance was evaluated using MAPE and RMSE. In addition, the models are compared using two further evaluation metrics, MAE and the scatter index (SI), to obtain a more comprehensive conclusion.
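The two-stage procedure described above can be sketched as follows, under several assumptions:

- `evaluate` is a hypothetical callable that trains a model with the given hyperparameters and returns its (MAPE, RMSE) on validation data.
- Candidates are ranked lexicographically by that pair, which automates what the study does by inspecting plots.
- `keep`, the number of values per hyperparameter that survive the first stage, is an illustrative choice.

MAPE is computed as (100/n)·Σ|(y_i − ŷ_i)/y_i|. SI is taken as RMSE divided by the mean of the observed values, a common definition of the scatter index that may differ from the one used in the study.

```python
import itertools
import math


def mape(y_true, y_pred):
    # MAPE = (100/n) * sum |(y_i - yhat_i) / y_i|; assumes no zero observations.
    return 100.0 * sum(abs((a - b) / a) for a, b in zip(y_true, y_pred)) / len(y_true)


def scatter_index(y_true, y_pred):
    # Assumed definition: SI = RMSE / mean(observed values).
    n = len(y_true)
    rmse = math.sqrt(sum((a - b) ** 2 for a, b in zip(y_true, y_pred)) / n)
    return rmse / (sum(y_true) / n)


def sweep_one_at_a_time(evaluate, defaults, candidates, keep=2):
    """Stage 1: vary one hyperparameter at a time, others held at defaults.

    Keeps the `keep` best values per hyperparameter, ranked by the
    (MAPE, RMSE) tuple returned by `evaluate` (lexicographic order).
    """
    shortlist = {}
    for name, values in candidates.items():
        scored = [(evaluate({**defaults, name: v}), v) for v in values]
        scored.sort(key=lambda pair: pair[0])  # stable: ties keep input order
        shortlist[name] = [v for _, v in scored[:keep]]
    return shortlist


def grid_search(evaluate, grid):
    """Stage 2: exhaustive search over every combination in `grid`."""
    names = sorted(grid)
    best_params, best_score = None, (math.inf, math.inf)
    for combo in itertools.product(*(grid[name] for name in names)):
        params = dict(zip(names, combo))
        score = evaluate(params)
        if score < best_score:
            best_params, best_score = params, score
    return best_params, best_score


def toy_evaluate(params):
    # Hypothetical stand-in for "train XGBoost, forecast the validation set,
    # return (MAPE, RMSE)". A synthetic error surface, for illustration only.
    err = (params["max_depth"] - 4) ** 2 + 100 * (params["learning_rate"] - 0.1) ** 2
    return (err, err)


defaults = {"max_depth": 6, "learning_rate": 0.3}
candidates = {"max_depth": [2, 3, 4, 5, 6, 8], "learning_rate": [0.01, 0.05, 0.1, 0.2, 0.3]}
shortlist = sweep_one_at_a_time(toy_evaluate, defaults, candidates)
best_params, best_score = grid_search(toy_evaluate, shortlist)
print(shortlist)
print(best_params)  # {'learning_rate': 0.1, 'max_depth': 4}
```

`toy_evaluate` only exercises the search logic. In practice it would wrap model training and validation. The design point is that stage 1 shrinks each hyperparameter's candidate list before stage 2, so the exhaustive grid stays small.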