Feature Selection to Optimize Credit Banking Risk Evaluation Decisions for the Example of Home Equity Loans

Abstract

1. Introduction

- Credit risk represents 60% of the total risk of a financial institution.

- Regulatory requirements, such as compliance with Basel III, imply an increase in the entity’s reserves based on a higher percentage of the calculated expected losses, with a consequent reduction in the profits available for distribution to shareholders.

- With the introduction of International Financial Reporting Standard 9 (IFRS 9) in January 2018, financial companies must calculate their expected losses due to default over the 12 months following the recognition of these financial instruments and record them as losses in their income statement, with a consequent reduction in profit.

- Linear Methods: The authors of [5,6,7,8,9] applied this type of methodology to the problem, but most of them did not obtain good results. For example, Yu et al. [5] compared the efficacy of 10 methods on three databases: loans from an English financial company (England Credit), extracted from [10]; credit cards from a German bank (German Credit); and credit cards in the Japanese market (Japanese Credit). In their results, the linear method ranked among the last, outperforming only back-propagation neural networks. Subsequent studies (e.g., [11]) have reported better percentages for this approach than for other, more complex methods, although limitations related to data size have become evident; in contrast, Xiao et al. [12] considered logistic regression to be the best method when the volume of information can be managed.

- Decision Trees: The authors of [9,13,14,15,16] used this method in studies comparing different methodologies. In none of these works, however, was it the best-performing method. For example, in [9] this approach lags far behind the other methods in predictive precision. A predictive improvement can be observed in [17], where 11 methods are compared using the German Credit and Australian Credit databases.

- Neural networks are commonly used to solve this problem in the literature, with some successful results. One example is the work of Malhotra and Malhotra [18], who compared neural networks with discriminant analysis and obtained good performance on the German Credit database. In general, however, many studies have used neural networks with poor or insignificant results relative to the complexity of the models (e.g., [19,20,21]).

- Support Vector Machines achieve good classification results, as can be seen in [22], where they obtain the best accuracy compared with four other methods. However, as Pérez-Martin and Vaca [23] concluded from a Monte Carlo simulation study, this higher accuracy comes at the cost of lower efficiency. Other authors, such as Xiao et al. [12], Bellotti and Crook [24] and Wang et al. [25], have attempted to create or use other kernel parametrizations, but the efficiency problem remains.

2. Materials and Methods

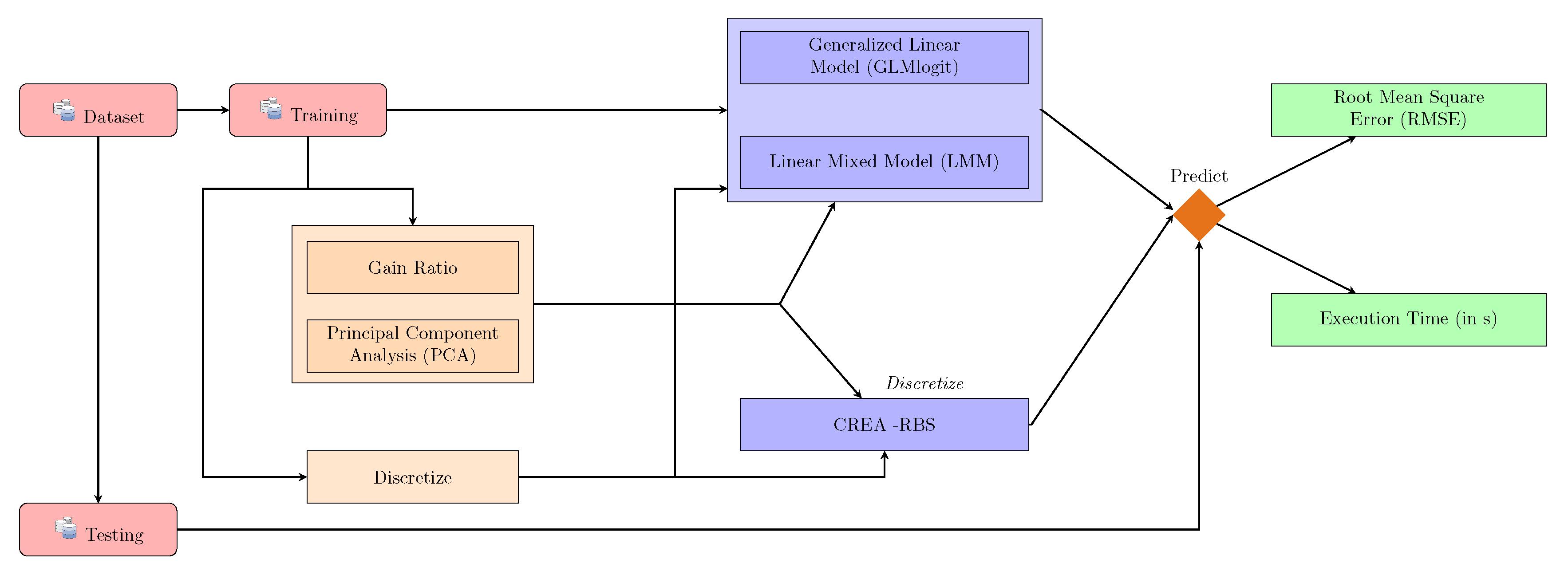

- Construction of training and testing datasets: the details of the procedure are in Section 2.1.

- Execution of the attribute selection and discretization process: the entire process is explained in Section 2.2.

- Training of classification models: three algorithms are selected for this study (GLMlogit, LMM and CREA-RBS), and the methods are explained in Section 2.3.

- Collection of results: at the end of the process, two indicators are obtained to evaluate the effect of the feature selection methods on the credit scoring models. These are the root mean square error (RMSE) and the execution time.
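
The following minimal Python sketch shows how the two indicators could be collected for one model run. It assumes that RMSE is computed between the observed 0/1 class (0 = payer, 1 = defaulter) and the model output. It also assumes that execution time is the wall-clock time of fitting plus prediction. `evaluate` and `fit_predict` are hypothetical names and are not taken from the study's own code.

```python
import math
import time
from typing import Callable, Sequence, Tuple


def rmse(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Root mean square error between observed classes (0 = payer, 1 = defaulter)
    and model outputs (predicted classes or probabilities of default)."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("inputs must be non-empty and of equal length")
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def evaluate(fit_predict: Callable[[], Sequence[float]],
             y_test: Sequence[float]) -> Tuple[float, float]:
    """Time a hypothetical fit-and-predict callable; return (RMSE, seconds)."""
    start = time.perf_counter()
    predictions = fit_predict()
    elapsed = time.perf_counter() - start
    return rmse(y_test, predictions), elapsed


# Toy usage: a baseline that always predicts the observed default share (0.4).
y_test = [0, 0, 1, 0, 1]
score, seconds = evaluate(lambda: [0.4] * len(y_test), y_test)
print(f"RMSE = {score:.4f}")  # RMSE = 0.4899
```

Because both indicators come from the same call, every combination of dataset, feature selection method and model is measured in the same way.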

2.1. Datasets

- The German Credit dataset: This database was chosen because it is one of the most widely used in the credit scoring literature ([34], UCI Machine Learning Repository (http://archive.ics.uci.edu/ml/datasets.html)).

- The IEF semi-synthetic dataset: This dataset is derived from the IEF dataset of the Spanish Institute of Fiscal Studies (Instituto de Estudios Fiscales, IEF) and was obtained from its statistical repository ([32,35,36,37,38,39]). The subset was generated and transformed to meet four conditions:
  - to homogenize variables from different years;
  - to remove missing data;
  - to select or transform the variables of interest for this research;
  - to reduce the size of the data for computational reasons.

  The variables used in the research were chosen on the basis of the articles and works consulted on the variables that financial institutions take into account when deciding whether to grant a mortgage loan ([7,18,40,41,42,43,44,45,46,47,48,49,50,51]). These sources agree unanimously on the choice of explanatory variables, and the variables are also relevant within the time window of the study. The factors taken into account for the selection of the explanatory variables are the following [32]:

- (a) Social and personal factors: variables related to the client’s social and personal environment, such as sex, age, marital status, number of dependents, population of the habitual residence, habitual residence and other dwellings.

- (b) Economic factors: variables related to the availability of income, such as salaries, the economic sector in which the client works and the size of the loan requested.

2.1.1. German Credit Dataset

- social attributes, such as marital status, age, sex; and

- economic attributes, such as business or employment and being a property owner.

2.1.2. IEF Semi-Synthetic Dataset

- social attributes, such as marital status, age, province and number of family members;

- economic attributes, such as business or employment, family income, properties and amount borrowed;

- statistical attributes based on adjustments such as corrected income—that is, the total income dichotomized by the minimum inter-professional salary (SMI) for further data processing; and

- synthetic variables, such as the response variable, which was simulated using a linear regression model with several attributes of the dataset and a normally distributed perturbation vector. The response variable was then recategorized as binary (pay and default cases). After dividing by 100, the simulated value can be interpreted as a probability of default.
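
The mechanics of these synthetic attributes can be illustrated with a short Python sketch. It simulates a linear response with a normal perturbation, reads it as a probability of default after dividing by 100, and dichotomizes it. The exact specification is not reproduced here, so several values are hypothetical placeholders:

- the coefficients and intercept;
- the noise level;
- the SMI threshold;
- the cut-off of 50 used to recategorize the response.

```python
import random
from typing import List, Sequence, Tuple


def corrected_income(total_income: float, smi: float) -> int:
    """Dichotomize total income by the SMI threshold: 1 if at or above it, else 0."""
    return 1 if total_income >= smi else 0


def simulate_response(rows: Sequence[Sequence[float]], beta: Sequence[float],
                      intercept: float, sigma: float, cutoff: float = 50.0,
                      seed: int = 0) -> Tuple[List[float], List[int]]:
    """Simulate y = intercept + x.beta + e, with e ~ N(0, sigma^2).

    Returns (y / 100 clipped to [0, 1], read as a probability of default;
             binary label: 1 = default if y > cutoff, 0 = pay).
    """
    rng = random.Random(seed)  # seeded so the simulation is reproducible
    scores, labels = [], []
    for x in rows:
        y = intercept + sum(b * v for b, v in zip(beta, x)) + rng.gauss(0.0, sigma)
        scores.append(min(max(y / 100.0, 0.0), 1.0))
        labels.append(1 if y > cutoff else 0)
    return scores, labels


# Hypothetical attributes per client: (corrected income, loan amount in thousands of euros).
smi = 9000.0  # placeholder threshold, not an official SMI figure
clients = [(5000.0, 150.0), (30000.0, 90.0), (12000.0, 200.0)]
rows = [(corrected_income(income, smi), amount) for income, amount in clients]
scores, labels = simulate_response(rows, beta=[-30.0, 0.3], intercept=30.0, sigma=5.0)
```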

2.2. Methods and Algorithms

2.2.1. Gain Ratio
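
In its usual C4.5 form, the gain ratio divides the information gain of an attribute by the attribute's split information. The gain is based on Shannon entropy [52], and the division penalizes attributes with many categories:

GR(A) = [H(Y) − H(Y | A)] / H(A)

The Python sketch below assumes discretized (categorical) attributes and a binary class. The feature names are hypothetical, and this is a textbook formulation rather than the exact implementation used in the study.

```python
import math
from collections import Counter
from typing import Dict, Hashable, List, Sequence


def entropy(values: Sequence[Hashable]) -> float:
    """Shannon entropy (in bits) of the empirical distribution of `values`."""
    n = len(values)
    return -sum((c / n) * math.log2(c / n) for c in Counter(values).values())


def gain_ratio(feature: Sequence[Hashable], labels: Sequence[Hashable]) -> float:
    """Information gain of `feature` about `labels`, divided by the split information H(feature)."""
    n = len(labels)
    groups: Dict[Hashable, List[Hashable]] = {}
    for value, label in zip(feature, labels):
        groups.setdefault(value, []).append(label)
    conditional = sum(len(g) / n * entropy(g) for g in groups.values())  # H(Y | A)
    info_gain = entropy(labels) - conditional
    split_info = entropy(feature)
    return info_gain / split_info if split_info > 0 else 0.0


# Rank two hypothetical discretized attributes against the pay (0) / default (1) class.
labels = [0, 0, 1, 1]
features = {"informative": ["a", "a", "b", "b"], "noise": ["a", "b", "a", "b"]}
ranking = sorted(features, key=lambda f: gain_ratio(features[f], labels), reverse=True)
```

Feature selection then keeps the top-ranked attributes; how many to keep is a separate choice that this sketch does not make.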

2.2.2. Principal Component Analysis (PCA)
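
Principal component analysis [53] projects the centered attributes onto the eigenvectors of their covariance matrix, ordered by decreasing eigenvalue. A minimal NumPy sketch follows. It assumes numeric input and works on the unstandardized covariance matrix; standardizing first is a common alternative when attributes have very different scales. The random data are synthetic and purely illustrative.

```python
import numpy as np


def pca(X, n_components: int):
    """Project X (rows = clients, columns = numeric attributes) onto its first
    `n_components` principal components; return (scores, explained-variance ratios)."""
    X = np.asarray(X, dtype=float)
    Xc = X - X.mean(axis=0)                   # center each attribute
    cov = np.cov(Xc, rowvar=False)            # covariance matrix of the attributes
    eigvals, eigvecs = np.linalg.eigh(cov)    # eigh returns ascending eigenvalues
    order = np.argsort(eigvals)[::-1]         # reorder by decreasing variance
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    scores = Xc @ eigvecs[:, :n_components]
    return scores, eigvals[:n_components] / eigvals.sum()


# Synthetic data: the third attribute is almost a multiple of the first.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3))
X[:, 2] = 2.0 * X[:, 0] + 0.1 * rng.normal(size=200)
scores, ratios = pca(X, n_components=2)
```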

2.2.3. Discretization Process
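
The discretization criteria listed in the tables can be sketched in Python as follows. The loan amount is split into quartiles, and variables such as duration_in_month use fixed cut points. The tables do not say whether interval boundaries are open or closed, so this sketch assumes bins closed on the left. It also assumes that quartile cut points are estimated on the training data with the default method of Python's `statistics.quantiles`, which may differ from the quantile rule actually used.

```python
import bisect
import statistics
from typing import List, Sequence

QUARTILE_NAMES = ["A-1stQ", "A-2ndQ", "A-3thQ", "A-4thQ"]  # category names from the tables


def quartile_cuts(train_values: Sequence[float]) -> List[float]:
    """Q1, median and Q3 of the training data (statistics' default 'exclusive' method)."""
    return statistics.quantiles(train_values, n=4)


def discretize(values: Sequence[float], upper_edges: Sequence[float],
               names: Sequence[str]) -> List[str]:
    """Map each value to names[k], where k is the number of edges <= value,
    so bins are closed on the left: [edge_(k-1), edge_k)."""
    return [names[bisect.bisect_right(upper_edges, v)] for v in values]


amounts = [1000, 2000, 3000, 4000, 5000, 6000, 7000, 8000]
amount_labels = discretize(amounts, quartile_cuts(amounts), QUARTILE_NAMES)
duration_labels = discretize([6, 12, 20, 36], [12, 18, 24],
                             ["duration-1", "duration-2", "duration-3", "duration-4"])
```

Computing the cut points once on the training set and reusing them on the testing set keeps both sets on the same categories.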

2.3. Credit Scoring Methods

2.3.1. Generalized Linear Model (GLMlogit)
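
GLMlogit models the probability of default as the logistic function of a linear combination of the attributes. The sketch below fits such a model by plain batch gradient ascent on the log-likelihood, purely to illustrate the model form. Standard GLM software, for example R's `glm` with `family = binomial`, estimates the same model by iteratively reweighted least squares. The learning rate and number of epochs here are arbitrary assumptions.

```python
import math
from typing import List, Sequence


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def fit_logit(X: Sequence[Sequence[float]], y: Sequence[int],
              lr: float = 0.1, epochs: int = 2000) -> List[float]:
    """Fit P(default | x) = sigmoid(w0 + w.x) by batch gradient ascent on the log-likelihood."""
    n, p = len(X), len(X[0])
    w = [0.0] * (p + 1)  # w[0] is the intercept
    for _ in range(epochs):
        grad = [0.0] * (p + 1)
        for xi, yi in zip(X, y):
            err = yi - sigmoid(w[0] + sum(wj * xj for wj, xj in zip(w[1:], xi)))
            grad[0] += err
            for j, xj in enumerate(xi, start=1):
                grad[j] += err * xj
        w = [wj + lr * g / n for wj, g in zip(w, grad)]
    return w


def predict_proba(w: Sequence[float], X: Sequence[Sequence[float]]) -> List[float]:
    """Predicted probabilities of default for each row of X."""
    return [sigmoid(w[0] + sum(wj * xj for wj, xj in zip(w[1:], xi))) for xi in X]


X = [[0.0], [1.0], [2.0], [3.0]]  # a single hypothetical attribute
y = [0, 0, 1, 1]                  # 0 = pay, 1 = default
w = fit_logit(X, y)
probs = predict_proba(w, X)
```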

2.3.2. Linear Mixed Model (LMM)

2.3.3. Reduction Based on Significance (RBS)

- Region 2: This group of regions contains rules with high support and high confidence with respect to the facts. Rabasa Dolado [58] called these direct rules because these combinations are very reliable.

- Region 1: This region groups rules with high support and low confidence.

- Region 3: These rules have very low support, so practically any conclusion could be reached from them.

- Region 0: The rules inside this region are discarded because they have medium support and medium confidence with respect to the facts and may arise from randomness.
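
The role of support and confidence in assigning rules to regions can be illustrated with a small Python sketch. The fixed thresholds below (`low_sup`, `high_sup`, `low_conf`, `high_conf`) are hypothetical. The RBS algorithm [57,58] delimits the regions through significance domains rather than fixed cut-offs, so this sketch only mimics the qualitative description of Regions 0 to 3.

```python
from dataclasses import dataclass
from typing import Dict, Sequence, Tuple


@dataclass(frozen=True)
class Rule:
    antecedent: Tuple[Tuple[str, str], ...]  # (attribute, category) conditions
    consequent: int                           # predicted class: 0 = pay, 1 = default


def support_confidence(rule: Rule, records: Sequence[Dict[str, str]],
                       labels: Sequence[int]) -> Tuple[float, float]:
    """Support = share of records matching the antecedent;
    confidence = share of those matches whose class equals the consequent."""
    matched = [lab for rec, lab in zip(records, labels)
               if all(rec.get(attr) == cat for attr, cat in rule.antecedent)]
    support = len(matched) / len(records)
    if not matched:
        return support, 0.0
    return support, sum(lab == rule.consequent for lab in matched) / len(matched)


def region(support: float, confidence: float, low_sup: float = 0.01,
           high_sup: float = 0.1, low_conf: float = 0.6, high_conf: float = 0.9) -> int:
    """Assign a rule to Region 0-3 using hypothetical fixed thresholds."""
    if support < low_sup:
        return 3  # very low support: almost any conclusion could be reached
    if support >= high_sup and confidence >= high_conf:
        return 2  # direct rules: high support and high confidence
    if support >= high_sup and confidence < low_conf:
        return 1  # high support, low confidence
    return 0      # medium support/confidence: discarded as possibly random


records = [{"ptvh": "ptvh-100"} for _ in range(8)] + [{"ptvh": "ptvh-0"} for _ in range(2)]
labels = [0] * 8 + [1, 0]
owner_rule = Rule((("ptvh", "ptvh-100"),), 0)
tenant_rule = Rule((("ptvh", "ptvh-0"),), 1)
```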

2.4. Model Evaluation

3. Results

- The first group consists of the linear methods (GLMlogit and LMM) trained on the complete dataset. This group has the highest execution times and the second-lowest RMSE. LMM offers the best trade-off within this group: it saves more than 30% of the execution time while losing less than 2% in RMSE compared with GLMlogit.

- The second group consists of the linear methods trained on the datasets reduced by the two proposed feature selection methods. This group has the second-lowest execution times and the worst RMSE. In general, GLMlogit behaves well, obtaining a good RMSE with shorter execution times. Of the two feature selection methods, the gain ratio is more effective than PCA in view of the results.

- The last group consists of the RBS models, with or without variable selection. This group stands out for its low execution times, whatever the volume of data, and for the best precision results. In this case, however, removing information through feature selection makes the model lose precision.
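
The trade-off described for the first group can be checked with simple arithmetic on the RMSE and execution-time tables. The sketch assumes the comparison refers to the raw IEF dataset. On German Credit, LMM is in fact slower than GLMlogit (0.179 versus 0.042). The code computes the relative time saving and the relative RMSE increase of LMM over GLMlogit.

```python
# Raw IEF rows of the RMSE and execution-time tables.
rmse_raw = {"GLMlogit": 0.3635, "LMM": 0.3660}
time_raw = {"GLMlogit": 533.18, "LMM": 347.77}

time_saving = 1 - time_raw["LMM"] / time_raw["GLMlogit"]  # relative reduction in time
rmse_loss = rmse_raw["LMM"] / rmse_raw["GLMlogit"] - 1    # relative increase in RMSE
print(f"time saved: {time_saving:.1%}, RMSE increase: {rmse_loss:.1%}")
# time saved: 34.8%, RMSE increase: 0.7%
```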

4. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- [1] Yu, L.; Yao, X.; Wang, S.; Lai, K. Credit risk evaluation using a weighted least squares SVM classifier with design of experiment for parameter selection. Expert Syst. Appl. 2011, 38, 15392–15399.

- [2] Pérez-Martin, A.; Pérez-Torregrosa, A.; Vaca, M. Big data techniques to measure credit banking risk in home equity loans. J. Bus. Res. 2018, 89, 448–454.

- [3] Simon, H. Models of Man: Social and Rational; Mathematical Essays on Rational Human Behavior in a Social Setting; Wiley: Hoboken, NJ, USA, 1957.

- [4] Durand, D. Risk Elements in Consumer Instalment Financing; National Bureau of Economic Research: Cambridge, MA, USA, 1941.

- [5] Yu, L.; Wang, S.; Lai, K.K. An intelligent-agent-based fuzzy group decision making model for financial multicriteria decision support: The case of credit scoring. Eur. J. Oper. Res. 2009, 195, 942–959.

- [6] Loterman, G.; Brown, I.; Martens, D.; Mues, C.; Baesens, B. Benchmarking regression algorithms for loss given default modeling. Int. J. Forecast. 2012, 28, 161–170.

- [7] Yu, L. Credit Risk Evaluation with a Least Squares Fuzzy Support Vector Machines Classifier. Discret. Dyn. Nat. Soc. 2014, 2014, 564213.

- [8] Baesens, B.; Van Gestel, T.; Viaene, S.; Stepanova, M.; Suykens, J.; Vanthienen, J. Benchmarking state-of-the-art classification algorithms for credit scoring. J. Oper. Res. Soc. 2003, 54, 627–635.

- [9] Sinha, A.P.; May, J.H. Evaluating and Tuning Predictive Data Mining Models Using Receiver Operating Characteristic Curves. J. Manag. Inf. Syst. 2004, 21, 249–280.

- [10] Thomas, L.; Edelman, D.; Crook, J. Credit Scoring and Its Applications; Society for Industrial and Applied Mathematics: Philadelphia, PA, USA, 2002.

- [11] Alaraj, M.; Abbod, M.; Al-Hnaity, B. Evaluation of Consumer Credit in Jordanian Banks: A Credit Scoring Approach. In Proceedings of the 2015 17th UKSIM-AMSS International Conference on Modelling and Simulation (UKSIM ’15), Cambridge, MA, USA, 25–27 March 2015; IEEE Computer Society: Washington, DC, USA, 2015; pp. 125–130.

- [12] Xiao, W.; Zhao, Q.; Fei, Q. A comparative study of data mining methods in consumer loans credit scoring management. J. Syst. Sci. Syst. Eng. 2006, 15, 419–435.

- [13] Ong, C.S.; Huang, J.J.; Tzeng, G.H. Building credit scoring models using genetic programming. Expert Syst. Appl. 2005, 29, 41–47.

- [14] Chen, W.; Ma, C.; Ma, L. Mining the customer credit using hybrid support vector machine technique. Expert Syst. Appl. 2009, 36, 7611–7616.

- [15] Zhou, L.; Lai, K.K.; Yu, L. Least squares support vector machines ensemble models for credit scoring. Expert Syst. Appl. 2010, 37, 127–133.

- [16] Tsai, C.F. Combining cluster analysis with classifier ensembles to predict financial distress. Inf. Fusion 2014, 16, 46–58.

- [17] Li, J.; Wei, L.; Li, G.; Xu, W. An evolution strategy-based multiple kernels multi-criteria programming approach: The case of credit decision making. Decis. Support Syst. 2011, 51, 292–298.

- [18] Malhotra, R.; Malhotra, D. Evaluating consumer loans using neural networks. Omega 2003, 31, 83–96.

- [19] Twala, B. Multiple classifier application to credit risk assessment. Expert Syst. Appl. 2010, 37, 3326–3336.

- [20] Finlay, S. Multiple classifier architectures and their application to credit risk assessment. Eur. J. Oper. Res. 2011, 210, 368–378.

- [21] Yao, P.; Lu, Y. Neighborhood rough set and SVM based hybrid credit scoring classifier. Expert Syst. Appl. 2011, 38, 11300–11304.

- [22] Purohit, S.; Kulkarni, A. Credit evaluation model of loan proposals for Indian Banks. In Proceedings of the 2011 World Congress on Information and Communication Technologies, Mumbai, India, 11–14 December 2011; pp. 868–873.

- [23] Pérez-Martin, A.; Vaca, M. Compare Techniques In Large Datasets To Measure Credit Banking Risk In Home Equity Loans. Int. J. Comput. Methods Exp. Meas. 2017, 5, 771–779.

- [24] Bellotti, T.; Crook, J. Support vector machines for credit scoring and discovery of significant features. Expert Syst. Appl. 2009, 36, 3302–3308.

- [25] Wang, G.; Hao, J.; Ma, J.; Jiang, H. A comparative assessment of ensemble learning for credit scoring. Expert Syst. Appl. 2011, 38, 223–230.

- [26] Liu, Y.; Schumann, M. Data mining feature selection for credit scoring models. J. Oper. Res. Soc. 2005, 56, 1099–1108.

- [27] Howley, T.; Madden, M.G.; O’Connell, M.L.; Ryder, A.G. The effect of principal component analysis on machine learning accuracy with high-dimensional spectral data. Knowl. Based Syst. 2006, 19, 363–370.

- [28] Latimore, D. Artificial Intelligence in Banking; Oliver Wyman: Boston, MA, USA, 2018.

- [29] Martinez-Murcia, F.; Górriz, J.; Ramírez, J.; Illán, I.; Ortiz, A. Automatic detection of Parkinsonism using significance measures and component analysis in DaTSCAN imaging. Neurocomputing 2014, 126, 58–70.

- [30] Zhou, L.; Lai, K.K.; Yu, L. Credit scoring using support vector machines with direct search for parameters selection. Soft Comput. 2009, 13, 149–155.

- [31] Wang, X.; Xu, M.; Pusatli, Ö.T. A Survey of Applying Machine Learning Techniques for Credit Rating: Existing Models and Open Issues. In Neural Information Processing; Springer: Berlin/Heidelberg, Germany, 2015; pp. 122–132.

- [32] Vaca, M. Evaluación de Estimadores Basados en Modelos Para el Cálculo del Riesgo de Crédito Bancario en Entidades Financieras. Ph.D. Thesis, Universidad Miguel Hernández, Elche, Spain, 2017.

- [33] R Core Team. R: A Language and Environment for Statistical Computing; R Foundation for Statistical Computing: Vienna, Austria, 2015.

- [34] Dua, D.; Graff, C. UCI Machine Learning Repository; School of Information and Computer Sciences, University of California: Irvine, CA, USA, 2017.

- [35] Picos Sánchez, F.; Pérez López, C.; Gallego Vieco, C.; Huete Vázquez, S. La Muestra de Declarantes del IRPF de 2008: Descripción General y Principales Magnitudes; Documentos de trabajo 14/2011; Instituto de Estudios Fiscales: Madrid, Spain, 2011.

- [36] Pérez López, C.; Burgos Prieto, M.; Huete Vázquez, S.; Gallego Vieco, C. La Muestra de Declarantes del IRPF de 2009: Descripción General y Principales Magnitudes; Documentos de trabajo 11/2012; Instituto de Estudios Fiscales: Madrid, Spain, 2012.

- [37] Pérez López, C.; Burgos Prieto, M.; Huete Vázquez, S.; Pradell Huete, E. La Muestra de Declarantes del IRPF de 2010: Descripción General y Principales Magnitudes; Documentos de trabajo 22/2013; Instituto de Estudios Fiscales: Madrid, Spain, 2013.

- [38] Pérez López, C.; Villanueva García, J.; Burgos Prieto, M.; Pradell Huete, E.; Moreno Pastor, A. La Muestra de Declarantes del IRPF de 2011: Descripción General y Principales Magnitudes; Documentos de trabajo 17/2014; Instituto de Estudios Fiscales: Madrid, Spain, 2014.

- [39] Pérez López, C.; Villanueva García, J.; Burgos Prieto, M.; Bermejo Rubio, E.; Khalifi Chairi El Kammel, L. La Muestra de Declarantes del IRPF de 2012: Descripción General y Principales Magnitudes; Documentos de trabajo 18/2015; Instituto de Estudios Fiscales: Madrid, Spain, 2015.

- [40] Mylonakis, J.; Diacogiannis, G. Evaluating the Likelihood of Using Linear Discriminant Analysis as a Commercial Bank Card Owners Credit Scoring Model. Int. Bus. Res. 2010, 3.

- [41] Hand, D.; Henley, W. Statistical Classification Methods in Consumer Credit Scoring: A Review. J. R. Stat. Soc. Ser. A Stat. Soc. 1997, 160, 523–541.

- [42] Boj, E.; Claramunt, M.M.; Esteve, A.; Fortiana, J. Credit Scoring basado en distancias: Coeficientes de influencia de los predictores. In Investigaciones en Seguros y Gestión de riesgos: RIESGO 2009; Estudios, F.M., Ed.; Cuadernos de la Fundación MAPFRE: Madrid, Spain, 2009; pp. 15–22.

- [43] Ochoa P, J.C.; Galeano M, W.; Agudelo V, L.G. Construcción de un modelo de scoring para el otorgamiento de crédito en una entidad financiera. Perfil de Coyuntura Económica 2010, 16, 191–222.

- [44] Cabrera Cruz, A. Diseño de Credit Scoring Para Evaluar el Riesgo Crediticio en una Entidad de Ahorro y crédito popular. Ph.D. Thesis, Universidad Tecnológica de la Mixteca, Oaxaca, Mexico, 2014.

- [45] Moreno Valencia, S. El Modelo Logit Mixto Para la Construcción de un Scoring de Crédito. Ph.D. Thesis, Universidad Nacional de Colombia, Bogotá, Colombia, 2014.

- [46] Salinas Flores, J. Patrones de morosidad para un producto crediticio usando la técnica de árbol de clasificación CART. Rev. Fac. Ing. Ind. UNMSM 2005, 8, 29–36.

- [47] Gomes Goncalves, O. Estudio Comparativo de Técnicas de Calificación Creditícia. Ph.D. Thesis, Universidad Simón Bolívar, Barranquilla, Colombia, 2009.

- [48] Lee, T.; Chen, I. A two-stage hybrid credit scoring model using artificial neural networks and multivariate adaptive regression splines. Expert Syst. Appl. 2005, 28, 743–752.

- [49] Lee, T.; Chiu, C.; Lu, C.; Chen, I. Credit Scoring Using the Hybrid Neural Discriminant Technique. Expert Syst. Appl. 2002, 23, 245–254.

- [50] Steenackers, A.; Goovaerts, M. A credit scoring model for personal loans. Insur. Math. Econ. 1989, 8, 31–34.

- [51] Quintana, M.J.M.; Gallego, A.G.; Pascual, M.E.V. Aplicación del análisis discriminante y regresión logística en el estudio de la morosidad en las entidades financieras: Comparación de resultados. Pecvnia Rev. Fac. Cienc. Econ. Empres. Univ. Leon 2005, 1, 175–199.

- [52] Shannon, C.E. Prediction and entropy of printed English. Bell Syst. Tech. J. 1951, 30, 50–64.

- [53] Dunteman, G.H. Principal Components Analysis (Quantitative Applications in the Social Sciences) Issue 69; Quantitative Applications in the Social Sciences; Sage Publications, Inc.: Thousand Oaks, CA, USA, 1989.

- [54] Kotsiantis, S.; Kanellopoulos, D. Discretization techniques: A recent survey. GESTS Int. Trans. Comput. Sci. Eng. 2006, 32, 47–58.

- [55] Zaidi, N.; Du, Y.; Webb, G. On the Effectiveness of Discretizing Quantitative Attributes in Linear Classifiers. arXiv 2017, arXiv:1701.07114.

- [56] Fisher, R.A. XV.—The Correlation between Relatives on the Supposition of Mendelian Inheritance. Trans. R. Soc. Edinb. 1919, 52, 399–433.

- [57] Almiñana, M.; Escudero, L.F.; Pérez-Martín, A.; Rabasa, A.; Santamaría, L. A classification rule reduction algorithm based on significance domains. TOP 2014, 22, 397–418.

- [58] Rabasa Dolado, A. Método Para la Reducción de Sistemas de Reglas de Clasificación por Dominios de Significancia. Ph.D. Thesis, Universidad Miguel Hernández, Elche, Spain, 2009.

| Class | Training | Testing | Total Cases |
|---|---|---|---|
| Payers | 485 | 215 | 700 |
| Defaulters | 215 | 85 | 300 |
| Total | 700 | 300 | 1000 |

| Class | Training | Testing | Total Cases |
|---|---|---|---|
| Payers | 2,131,601 | 811,375 | 2,942,976 |
| Defaulters | 1,461,779 | 696,505 | 2,158,284 |
| Total | 3,593,380 | 1,507,880 | 5,101,260 |
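
Both split tables correspond to roughly 70% training and 30% testing. German Credit has 700/300 cases, and the IEF dataset is split about 70.4%/29.6%. The splitting procedure is not detailed in this section, so the Python sketch below of a seeded random 70/30 split is an assumption. The helper `random_split` is hypothetical, and it does not stratify by class.

```python
import random
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def random_split(rows: Sequence[T], train_share: float = 0.7,
                 seed: int = 0) -> Tuple[List[T], List[T]]:
    """Shuffle row indices with a fixed seed and cut them into training/testing sets."""
    indices = list(range(len(rows)))
    random.Random(seed).shuffle(indices)
    cut = round(train_share * len(rows))
    return [rows[i] for i in indices[:cut]], [rows[i] for i in indices[cut:]]


train, test = random_split(list(range(1000)))  # 1000 cases, as in German Credit
print(len(train), len(test))  # 700 300
```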

| Original Feature | Discretized Criteria | Category Name |
|---|---|---|
| Percentage of ownership of the residence (ptvh) | Equal to 0% | ptvh-0 |
| | Equal to 50% | ptvh-50 |
| | Equal to 100% | ptvh-100 |
| | Between 0% and 50% | ptvh- <50 |
| | Between 50% and 100% | ptvh- >50 |
| Percentage of ownership of the residence of spouse (pctvh) | Equal to 0% | pctvh-0 |
| | Equal to 50% | pctvh-50 |
| | Equal to 100% | pctvh-100 |
| | Between 0% and 50% | pctvh- <50 |
| | Between 50% and 100% | pctvh- >50 |
| Average ownership percentage (Pmedowner) | Equal to 0% | PMO-0 |
| | Equal to 50% | PMO-50 |
| | Equal to 100% | PMO-100 |
| | Between 0% and 50% | PMO- <50 |
| | Between 50% and 100% | PMO- >50 |
| Property tax deduction (PTD) | Does not have | PTD-0 |
| | Has deduction | PTD-Dist0 |
| Property income (PI) | Does not have | PI-0 |
| | Has property income | PI-Dist0 |
| Family Income (FI) | Negative | FI- <0 |
| | Equal to 0 € | FI-0 |
| | Between 0 and 3701 € (First Quartile) | FI-1st Quartile |
| | Between 3701 € and 10,730 € | FI- <Media |
| | >10,730 € | FI- + Media |
| Amount (A) | First Quartile | A-1stQ |
| | Second Quartile | A-2ndQ |
| | Third Quartile | A-3thQ |
| | Fourth Quartile | A-4thQ |

| Original Feature | Discretized Criteria | Category Name |
|---|---|---|
| credit_amount | First Quartile | A-1stQ |
| | Second Quartile | A-2ndQ |
| | Third Quartile | A-3thQ |
| | Fourth Quartile | A-4thQ |
| duration_in_month | Under 12 | duration-1 |
| | Between 12 and 18 | duration-2 |
| | Between 18 and 24 | duration-3 |
| | Over 24 | duration-4 |
| age | Under 28 | Age- <28 |
| | Between 28 and 32 | Age-28–32 |
| | Between 32 and 36 | Age-32–36 |
| | Between 36 and 41 | Age-36–41 |
| | Between 41 and 49 | Age-41–49 |
| | Over 50 | Age-50+ |

| Dataset | Preprocessing | GLM | LMM | RBS |
|---|---|---|---|---|
| IEF Dataset | Raw | 0.3635 | 0.3660 | - |
| | Discretize | 0.3527 | 0.3572 | 0 |
| | Gain Ratio | 0.3680 | 0.3960 | 0.3184 |
| | PCA | 0.3966 | 0.4181 | 0.1809 |
| German Credit | Raw | 0.3955 | 0.4037 | - |
| | Discretize | 0.4048 | 0.4120 | 0 |
| | Gain Ratio | 0.4045 | 0.4082 | 0.4303 |
| | PCA | 0.4275 | 0.4414 | 0.4082 |

| Dataset | Preprocessing | GLM | LMM | RBS |
|---|---|---|---|---|
| IEF Dataset | Raw | 533.18 | 347.77 | - |
| | Discretize | 693.17 | 521.96 | 15.02 |
| | Gain Ratio | 17.687 | 32.092 | 0.4980 |
| | PCA | 23.184 | 53.952 | 0.928 |
| German Credit | Raw | 0.042 | 0.179 | - |
| | Discretize | 0.042 | 0.107 | 0.085 |
| | Gain Ratio | 0.015 | 0.095 | 0.041 |
| | PCA | 0.011 | 0.089 | 0.039 |


© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Pérez-Martín, A.; Pérez-Torregrosa, A.; Rabasa, A.; Vaca, M. Feature Selection to Optimize Credit Banking Risk Evaluation Decisions for the Example of Home Equity Loans. Mathematics 2020, 8, 1971. https://doi.org/10.3390/math8111971
