1. Introduction

In recent decades, owing to the development of AI technology, intelligent manufacturing has rapidly evolved through automation, digitization, and intelligence. However, many challenges remain. These include the collection of in-line manufacturing data in industry, the time-consuming pre-processing of data, the selection of sensors, features, and AI models, and the tuning of hyperparameters.

Tool Condition Monitoring (TCM) systems use advanced sensors and computational intelligence to predict and avoid adverse conditions for cutting tools and machinery [1,2,3,4,5,6,7,8,9,10,11,12,13]. Sensors are an indispensable part of intelligent manufacturing. First, the sensor signals are acquired; second, the desired data are obtained through a series of data processing techniques; finally, the processed data are fed into an AI model for classification or clustering. Selecting suitable sensors is therefore crucial. Many types of sensors are available, including accelerometers, microphones, thermocouples, proximity sensors, acoustic emission sensors, pressure sensors, gas sensors, and position sensors. Using an excessive number of sensors can increase cost and degrade system performance, whereas using a few important sensors on the machine tool increases the contribution of each sensor's features and reduces the cost of sensor installation, model computation, and research. It also improves the robustness and performance of the system. However, this is a chicken-and-egg conundrum: the suitability of a sensor cannot be clearly judged before it is installed. Apart from relying on domain knowledge, sensors are usually selected by sensitivity analysis after the relevant data have been acquired, and the same applies to the selection of time-domain or frequency-domain features of the sensor signals. Over the past few decades, statistical methods such as the Pearson and Spearman correlation coefficients and ANOVA (analysis of variance) have been commonly used. More recently, algorithms in the tree family, namely the decision tree, isolation forest, gradient boosting decision tree, and light gradient boosting machine, have been used to compute the feature contribution of data. However, finding the importance of each feature in a neural network model, commonly regarded as a black box, remains a considerable problem. Fortunately, a technique known as SHapley Additive exPlanations (SHAP) has been proposed to overcome this challenge [14].

SHAP has a solid theoretical foundation and can explain tree-based and neural network models alike, whether the learning model is shallow or deep. Therefore, in this study, we compared two traditional analysis methods with SHAP to determine the importance of the features of five different sensors. Once the AI models are explained, the sensors that reduce the above costs and improve system performance can be identified. The traditional Shapley value was used for feature selection as early as 2005 [15] and for sensor selection in 2009 [16], but it has not been applied to the field of wear prediction. After SHAP was introduced, some scholars, such as Wang [16], applied it to elucidate the contribution of each feature in a model, but not in the intelligent manufacturing domain.
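To make the idea behind Shapley-based attribution concrete, the following is a minimal sketch of the exact Shapley value for a small set of sensors. The sensor names and the `toy_score` function are hypothetical and chosen only for illustration; they are not the data or model of this study, and SHAP itself relies on efficient approximations rather than this brute-force enumeration.

```python
from itertools import combinations
from math import factorial


def shapley_values(value_fn, features):
    """Exact Shapley values for a set function value_fn(frozenset) -> float."""
    n = len(features)
    phi = {}
    for f in features:
        others = [g for g in features if g != f]
        total = 0.0
        for k in range(n):  # size of the coalition that f joins
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                s = frozenset(subset)
                # Marginal contribution of f when added to coalition s
                total += weight * (value_fn(s | {f}) - value_fn(s))
        phi[f] = total
    return phi


def toy_score(subset):
    """Hypothetical model score obtained with a given subset of sensors."""
    score = 0.0
    if "table_accel" in subset:
        score += 0.5
    if "spindle_accel" in subset:
        score += 0.3
    if "table_accel" in subset and "spindle_accel" in subset:
        score -= 0.2  # assumed redundancy between the two accelerometers
    if "microphone" in subset:
        score += 0.1
    return score


SENSORS = ["table_accel", "spindle_accel", "microphone"]
PHI = shapley_values(toy_score, SENSORS)
for name, value in PHI.items():
    print(f"{name}: {value:.3f}")
```

In this toy case the attributions sum to the score of the full sensor set, and the assumed redundancy penalty is shared equally between the two accelerometers.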

The sensor systems used for monitoring tool wear include dynamometers, accelerometers, acoustic emission sensors, current and power sensors, image sensors, and other sensors. For the processed sensor data, the deep learning algorithms most frequently cited in recent years are the deep multilayer perceptron (DMLP), long short-term memory (LSTM), convolutional neural network (CNN), and deep reinforcement learning (DRL) [17].

In 2020, Weili [18] designed a nonlinear regression model to predict tool wear based on a new input vector. The method was validated on both the NASA Ames milling data set and the 2010 PHM Data Challenge data set. The results show the outstanding performance of the hybrid information model in tool wear prediction, especially when the experiments are conducted under various operating conditions.

However, Serin used a genetic algorithm for feature selection, which is a type of optimization algorithm that itself requires various parameters to be tuned, and Weili used empirical rules for feature extraction. Furthermore, neither Serin nor Weili focused on the hyperparameter tuning of the model. AI modeling is subject to the garbage in, garbage out principle: both feature selection and the model hyperparameters affect the final model performance. In this study, we propose solutions for both issues.

A further problem is that, when machine learning or deep learning is applied to a new problem domain, we must first decide what kind of framework to use. Deep learning is very sensitive to many hyperparameters, such as the choice of optimizer, and depending on the algorithm, as many as 20 to 50 parameters may need to be set to train a model with good performance.

Moreover, in addition to the abovementioned feature selection problem, hyperparameter tuning is another significant topic. AutoML seeks to automatically compose and parametrize machine learning algorithms to maximize a given metric, such as predictive accuracy [19,20,21,22,23,24]. The available algorithms are typically related either to preprocessing (feature selection, transformation, imputation, etc.) or to the core functionality (classification, regression, ranking, etc.).

In the past, experienced AI modeling researchers relied on empirical rules, gradually adjusting the hyperparameters to improve model robustness and reduce the occurrence of underfitting and overfitting. Later, optimization methods such as Simulated Annealing, Particle Swarm Optimization, and the Genetic Algorithm were gradually adopted in the field of Hyperparameter Optimization (HPO). In 2011, scholars such as James Bergstra [25] successfully applied Bayesian optimization with tree-structured Parzen estimators and achieved promising performance, followed by Frank Hutter [26] in 2011 and Kevin Swersky [27] in 2013 for searching kernel functions.

In this study, a new cloud-based TCM system is established using an LSTM model for non-indirect milling cutter monitoring; the service ultimately recommends an optimized set of hyperparameters and shows the resulting improvement in model performance. In the proposed cloud service, users tune hyperparameters through an interactive, visualized user interface in a web browser, and the AI model optimization process is carried out fully automatically by integrating container management with Kubernetes on high-speed computers.

2. Experimental Setup

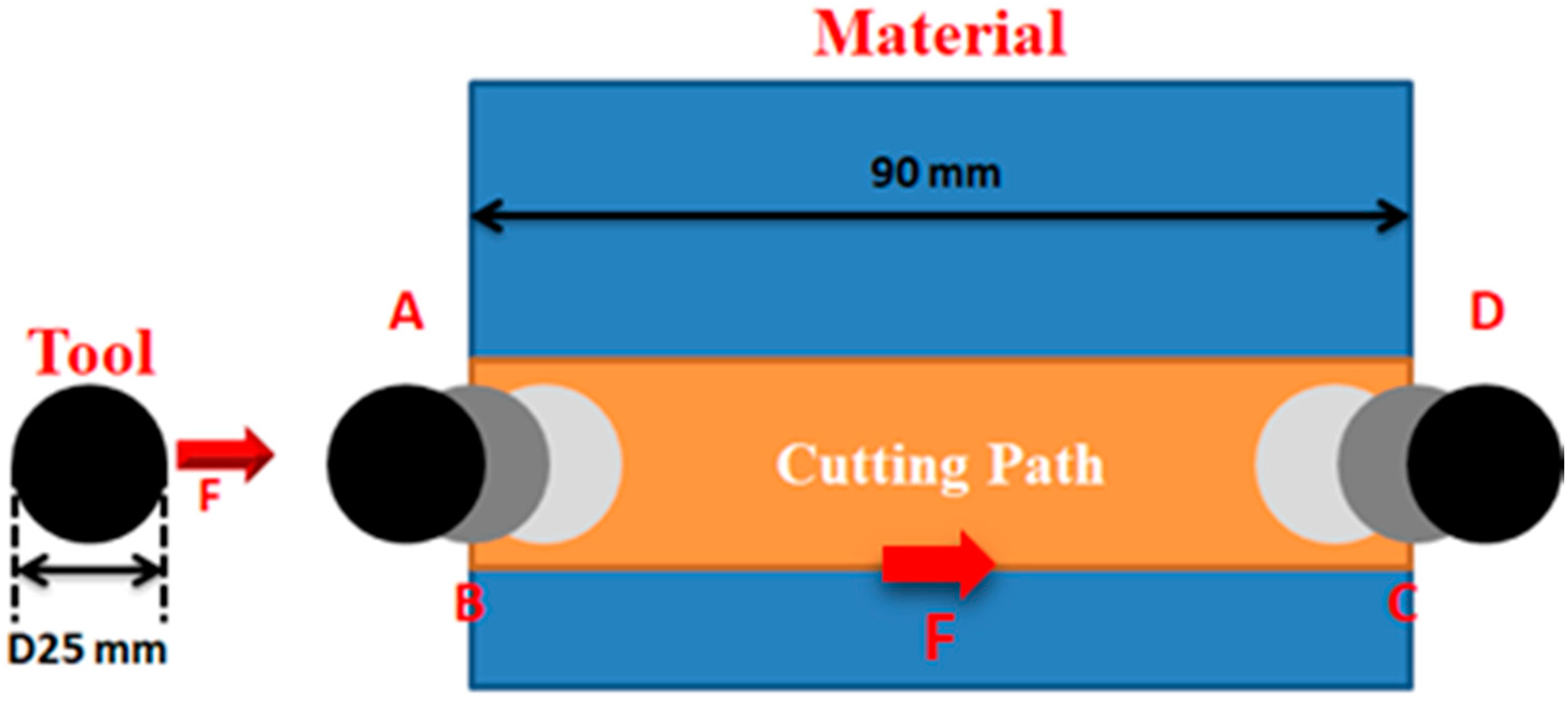

In this study, an experiment was conducted to analyze the contribution of five different sensors in an intelligent milling machine. As illustrated in Figure 1, the experimental setup consisted of two accelerometers, one condenser microphone (not visible in the figure), and one thermocouple installed on the rotating table of a Hardinge VMC 1000 II milling machine; simultaneously, the spindle load was acquired by an NI data acquisition module. Since a hydrostatic worktable was installed on the milling machine, the temperature of the oil in the worktable was also considered.

The experiment was performed using a Hardinge computer numerical control (CNC) milling machine. The workpiece material was SUS 304 stainless steel, and a JSK four-tooth carbide end mill was used for straight-line slot milling. The workpiece was machined until each cutter was rendered unusable, and the tool wear along with the signals of the five sensors was recorded. The suitability of the sensors was examined based on the assumption that a tool condition monitoring system would be developed using this intelligent machine tool.

To increase the variance of the system, four commonly used cutting parameters were used in the manufacturing processes. Table 1 lists the experimental conditions for each tool until the cutter was rendered unusable, namely spindle speed, feed rate, cutting depth, and milling times. Moreover, each cut was photographed with a charge-coupled device (CCD) camera, and the actual wear was calculated from the images. We obtained 22, 30, 35, and 75 data points from the respective cutting conditions. The specifications and installation locations of the sensors are shown in Table 2, and the milling path is shown in Figure 2.

In full groove milling, the signal is unstable when the tool first enters the workpiece. Based on the feed rate and path calculation, point A is near the tool contact point and point D is the exit point; a 10 s signal window is set to capture the stable signal during milling, as shown in Figure 3. For each sensor, the overall effective feature index and that of each direction are obtained using the wavelet transform, RMS, SE, kurtosis, skewness, and inverse spectrum.
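As an illustration of the windowing and time-domain features described above, the following minimal sketch cuts a 10 s window from a signal and computes its RMS, skewness, and kurtosis. The sampling rate, window start, and synthetic sine signal are assumptions made for this example and do not reflect the settings of the experiment.

```python
import math


def window(signal, fs, start_s, length_s=10.0):
    """Return the samples in [start_s, start_s + length_s) for sampling rate fs (Hz)."""
    i0 = int(start_s * fs)
    return signal[i0:i0 + int(length_s * fs)]


def time_domain_features(x):
    """RMS, skewness, and (non-excess) kurtosis of a list of samples."""
    n = len(x)
    mean = sum(x) / n
    m2 = sum((v - mean) ** 2 for v in x) / n  # second central moment
    m3 = sum((v - mean) ** 3 for v in x) / n  # third central moment
    m4 = sum((v - mean) ** 4 for v in x) / n  # fourth central moment
    return {
        "rms": math.sqrt(sum(v * v for v in x) / n),
        "skewness": m3 / m2 ** 1.5,
        "kurtosis": m4 / m2 ** 2,
    }


# Synthetic 12 s signal: a 50 Hz sine sampled at 1 kHz (assumed values)
FS = 1000
SIGNAL = [math.sin(2.0 * math.pi * 50.0 * i / FS) for i in range(12 * FS)]
STABLE = window(SIGNAL, FS, start_s=1.0)  # skip the first second, keep 10 s
FEATURES = time_domain_features(STABLE)
print(FEATURES)
```

For a pure sine wave the RMS is about 0.707 and the kurtosis is 1.5, which gives a quick sanity check before applying the same functions to measured signals.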

4. Hyperparameters Optimization with Tree-Structured Parzen Estimator (TPE)

In this section, we introduce our proposed online cloud service system for automated HPO, which integrates Microsoft's NNI module and the TPE resources.

4.1. Methodology

The methodology comprises TPE and interactive cloud service integration. In Bayesian optimization (BO), a posterior probabilistic description of the objective function is obtained, so that the expected mean and variance at each point of the hyperparameter space can be computed using Gaussian process regression. The mean represents the expected final effect at that point (exploitation): the larger the mean, the larger the expected final value of the model. The variance indicates the uncertainty of the effect at that point (exploration): the larger the variance, the more worthwhile it is to explore that point.
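One common way to combine the posterior mean and variance into a single score is the expected improvement (EI) acquisition function. The text does not state which acquisition function is used, so EI is shown here only as an assumed, illustrative choice for a maximization problem; the parameter `xi` is a hypothetical exploration margin.

```python
import math


def expected_improvement(mu, sigma, best, xi=0.0):
    """Expected improvement over `best` for a Gaussian posterior N(mu, sigma^2)."""
    if sigma <= 0.0:
        # No uncertainty: the improvement is deterministic
        return max(mu - best - xi, 0.0)
    z = (mu - best - xi) / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    # First term rewards a high mean (exploitation),
    # second term rewards a high variance (exploration)
    return (mu - best - xi) * cdf + sigma * pdf


# Same mean, different uncertainty: the more uncertain point scores higher
print(expected_improvement(mu=0.0, sigma=1.0, best=0.0))
print(expected_improvement(mu=0.0, sigma=2.0, best=0.0))
```

The point with the highest score is evaluated next, so a candidate can be selected either because its mean is high or because its outcome is still very uncertain.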

Building on the BO principle described above, the TPE algorithm, as implemented in Hyperopt, combines Gaussian mixture models with the concept of a tree-structured Parzen estimator, which improves performance in high-dimensional spaces compared with the BO algorithm.
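The core idea of TPE can be sketched for a single continuous hyperparameter as follows: past trials are split into a "good" and a "bad" group by loss, a Parzen (kernel density) estimate is fitted to each group, and the next candidate is the one that maximizes the ratio of the two densities. This is a simplified illustration under assumed settings (fixed bandwidth, quantile `gamma`, and a hypothetical quadratic `toy_loss`), not the Hyperopt or NNI implementation.

```python
import math
import random


def parzen_density(x, centers, bandwidth):
    """Gaussian Parzen-window (kernel density) estimate at x."""
    norm = bandwidth * math.sqrt(2.0 * math.pi)
    total = sum(math.exp(-0.5 * ((x - c) / bandwidth) ** 2) for c in centers)
    return total / (len(centers) * norm)


def tpe_suggest(history, rng, gamma=0.25, bandwidth=0.1, n_candidates=50):
    """history: list of (x, loss) pairs. Returns the next x to evaluate."""
    if len(history) < 2:
        raise ValueError("need at least two observations")
    ranked = sorted(history, key=lambda pair: pair[1])
    n_good = min(len(ranked) - 1, max(1, math.ceil(gamma * len(ranked))))
    good = [x for x, _ in ranked[:n_good]]  # lowest losses
    bad = [x for x, _ in ranked[n_good:]]   # remaining trials

    # Draw candidates around the good points, then keep the one with the
    # largest density ratio l(x) / g(x)
    candidates = [rng.gauss(rng.choice(good), bandwidth) for _ in range(n_candidates)]

    def ratio(x):
        return parzen_density(x, good, bandwidth) / (parzen_density(x, bad, bandwidth) + 1e-12)

    return max(candidates, key=ratio)


def toy_loss(x):
    """Hypothetical objective with its minimum at x = 0.37."""
    return (x - 0.37) ** 2


def run_tpe(n_iterations=30, seed=0):
    rng = random.Random(seed)
    # Initial design: a coarse grid on [0, 1]
    history = [(i / 10.0, toy_loss(i / 10.0)) for i in range(11)]
    for _ in range(n_iterations):
        x = tpe_suggest(history, rng)
        history.append((x, toy_loss(x)))
    return min(history, key=lambda pair: pair[1])


BEST_X, BEST_LOSS = run_tpe()
print(BEST_X, BEST_LOSS)
```

Because the densities are modeled over the hyperparameters rather than over the objective, this scheme extends naturally to categorical and conditional hyperparameters, which is where the tree structure comes in.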

4.2. Cloud Service of Automated HPO

Owing to their convenience, grid search and random search are currently the most widely used hyperparameter optimization strategies, and they can be applied to different kinds of hyperparameters: the number of hidden layers, number of neurons, type of activation function, learning rate, and batch size. In this study, we first use the milling data of Condition 2, with the sensor signals as input and the wear calculated from the CCD images as the output curve. First, the LSTM model is trained for hyperparameter tuning and the model architecture is uploaded to the cloud. During the tuning process, Neural Network Intelligence (NNI) [8] provides a friendly visual web interface for monitoring, and the hyperparameter combinations can be ranked according to the model performance metrics of interest to the user or by an algorithm developed by the user. The optimized model can be downloaded, and the interface is shown in Figure 7.
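For comparison with the model-based search used in this study, grid search and random search over the kinds of hyperparameters listed above can be sketched as follows. The candidate values in `SPACE` are hypothetical and only illustrate how quickly a full grid grows.

```python
import itertools
import random

# Hypothetical candidate values, for illustration only
SPACE = {
    "hidden_layers": [1, 2, 3],
    "neurons": [32, 64, 128],
    "activation": ["relu", "tanh"],
    "learning_rate": [1e-4, 1e-3, 1e-2],
    "batch_size": [16, 32, 64],
}


def grid_search(space):
    """Yield every combination in the Cartesian product of the value lists."""
    keys = list(space)
    for values in itertools.product(*(space[k] for k in keys)):
        yield dict(zip(keys, values))


def random_search(space, n_trials, seed=0):
    """Yield n_trials combinations, each value drawn uniformly at random."""
    rng = random.Random(seed)
    for _ in range(n_trials):
        yield {k: rng.choice(v) for k, v in space.items()}


GRID = list(grid_search(SPACE))
RANDOM = list(random_search(SPACE, n_trials=20))
print(len(GRID), "grid trials versus", len(RANDOM), "random trials")
```

Even this small space requires 162 training runs for a full grid, which is one motivation for budget-limited strategies such as random search or TPE.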

The Light Gradient Boosting Machine (LightGBM) algorithm [27,28,29] performs automatic feature filtering. Based on the histogram algorithm, the training data are traversed, the number of discrete values of each feature is counted, and the corresponding regression curves of the features in the data are ranked according to their mean values.

A Tree-structured Parzen Estimator (TPE) [30] is a sequential model-based optimization method: building on the previous model, it sets the target hyperparameters to be optimized, establishes a hyperparameter search space, and then uses loss minimization as the optimization criterion. Hyperparameters are key factors in the iterative learning of machine learning models, and because their settings have a great impact on training, choosing them is one of the most troublesome challenges. The purpose of the hyperparameter search is to maintain model performance while accelerating model training.

Table 5 shows the three hyperparameters that were optimized: optimizer, learning rate, and batch size. The search ranges follow the default ranges of the Keras hyperparameters, and the different combinations are determined by the TPE search strategy.
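A search space for these three hyperparameters could be written in NNI's JSON search space format roughly as follows. The candidate values and ranges below are placeholders chosen for illustration; the actual ranges are those of Table 5.

```python
import json

# Placeholder values; the ranges actually used are given in Table 5
SEARCH_SPACE = {
    "optimizer": {"_type": "choice", "_value": ["SGD", "Adam", "RMSprop"]},
    "learning_rate": {"_type": "loguniform", "_value": [1e-4, 1e-1]},
    "batch_size": {"_type": "choice", "_value": [16, 32, 64, 128]},
}


def check_search_space(space):
    """Check that every entry has exactly the `_type` and `_value` keys, then serialize."""
    for name, spec in space.items():
        if set(spec) != {"_type", "_value"}:
            raise ValueError(f"malformed entry for {name!r}")
    return json.dumps(space, indent=2)


SEARCH_SPACE_JSON = check_search_space(SEARCH_SPACE)
print(SEARCH_SPACE_JSON)
```

In a typical NNI experiment, a file with this content is referenced from the experiment configuration together with the TPE tuner, and the trial script obtains each sampled combination through `nni.get_next_parameter()` and reports its metric with `nni.report_final_result()`.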

Because the hyperparameter settings so strongly influence model training, an initial set of hyperparameters is given first, and p(y|x) is modeled directly based on the Gaussian process method to speed up the training of the model.

4.3. Results

In Figure 8, after the 100 different combinations of hyperparameters were optimized by TPE, the three regression model indicators of MSE, MAPE, and MAE were ranked. The MSEs of the top three hyperparameter combinations were observed to be in the range of to , which were not significantly different from each other; however, they were found to be better than the results of the manually adjusted model by about 17%.

The first three combinations of hyperparameters with the lowest loss were selected, where MSE was in the range of to , MAPE was in the range of 19 to 22, and MAE was in the range of to . The difference between the error indices of the first three combinations of hyperparameters is not significant, but all of them are about 17% better than the results of manual model adjustment.
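The three error indicators and the relative improvement over a manually tuned baseline can be computed as in the following minimal sketch. The trial records and numeric values are hypothetical and serve only to show the calculation and the ranking step.

```python
def mse(y_true, y_pred):
    """Mean squared error."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def mape(y_true, y_pred):
    """Mean absolute percentage error, in percent (requires nonzero true values)."""
    return 100.0 * sum(abs((t - p) / t) for t, p in zip(y_true, y_pred)) / len(y_true)


def rank_trials(trials, metric="mse"):
    """Sort trial records (dicts) from lowest to highest value of the chosen metric."""
    return sorted(trials, key=lambda trial: trial[metric])


def relative_improvement(baseline, tuned):
    """Percentage by which `tuned` is lower than `baseline`."""
    return 100.0 * (baseline - tuned) / baseline


# Hypothetical trial records
TRIALS = [
    {"id": "a", "mse": 0.012},
    {"id": "b", "mse": 0.009},
    {"id": "c", "mse": 0.010},
]
TOP = rank_trials(TRIALS)
print([trial["id"] for trial in TOP])
print(relative_improvement(baseline=1.0, tuned=0.83))
```

Ranking on a single metric is the simplest choice; when MSE, MAPE, and MAE disagree, the ranking metric should match the quantity that matters most for the application.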

To verify whether the model is suitable for practical application, the model was built and optimized using the first set of tool data, marked with the * symbol in Table 6. The hyperparameter-optimized model was then used for inference on three other sets of data with different working conditions, and good performance was obtained.

Figure 9 shows the prediction results obtained with HPO. Although the predicted values do not coincide with the true values, highly similar trends are observed. This shows that the prediction results are a valuable reference and can be provided to the actual operators of the equipment.

5. Discussion and Conclusions

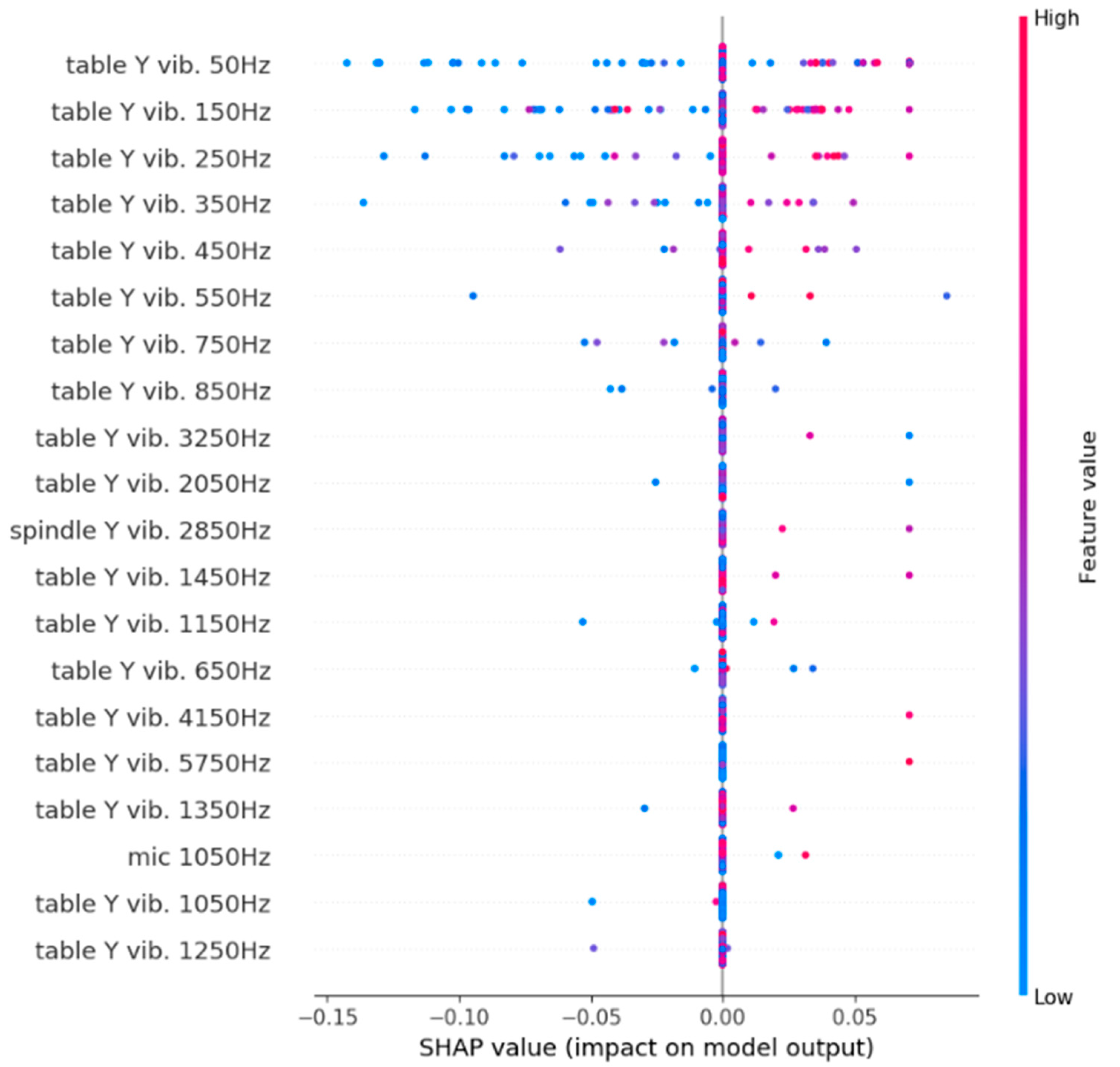

In the sensor and feature selection system, the Pearson and Spearman correlation coefficients indicate that the accelerometer on the spindle contributed more to the system than the other signals, whereas the contributions of oil temperature and spindle load were negligible. In contrast, according to the SHAP values and the subsequent chain of analysis, the table accelerometer was more significant than the spindle accelerometer and microphone. However, the SHAP values also revealed that the FFT signals in some frequency bands from the table accelerometer had a considerably negative impact on the system. Although the traditional approach and SHAP cannot be compared intuitively in this case, SHAP addresses the long-standing black-box issue and, because it explains a non-linear AI model, yields a different explanation from the linear methods.
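The difference between linear and non-linear views of feature relevance can be illustrated with a small, synthetic example. The sketch below computes the Pearson and Spearman coefficients for a monotonic but non-linear relationship and for a symmetric, non-monotonic one; the data are artificial and unrelated to the measurements of this study.

```python
import math


def pearson(x, y):
    """Pearson linear correlation coefficient."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def ranks(values):
    """Ranks starting at 1, with tied values receiving their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            result[order[k]] = average
        i = j + 1
    return result


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


X = [float(i) for i in range(1, 11)]
CUBIC = [v ** 3 for v in X]             # monotonic, non-linear
PARABOLA = [(v - 5.5) ** 2 for v in X]  # symmetric, non-monotonic

print(pearson(X, CUBIC), spearman(X, CUBIC))
print(pearson(X, PARABOLA), spearman(X, PARABOLA))
```

In the second case both coefficients are close to zero even though the output is fully determined by the input, which is the kind of dependence that a model-based attribution can still expose.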

Moreover, in the HPO part, to build a cloud-based tool condition monitoring system, we used cloud-based techniques together with automated hyperparameter optimization methods and investigated the effect of hyperparameter optimization on LSTM models that predict tool life. In this study, data from different full groove milling conditions were subjected to the same data pre-processing. We trained the model with the second set of conditions, and hyperparameter optimization reduced the MSE by 17%. To verify the real performance of the model after hyperparameter optimization, inference validation was performed with other working conditions and good performance was obtained. All the details regarding this development are on the cloud and will be applied to the production line in the future to reduce the time spent on hyperparameter tuning.

In summary, the proposed sensor and feature selection system addresses the black-box issue of AI and selects sensors and features using AI models, while the proposed cloud service extends the HPO concept to the intelligent manufacturing area and establishes a cloud service system. In addition, the usability of these methodologies in the research field of tool wear was also verified.

The two newly proposed solutions in this study are the first to be implemented in the field of intelligent manufacturing. The sensor and feature selection system applying SHAP can display the contribution of each sensor or feature to a machine learning model and help engineers install sensors in suitable positions while avoiding redundant sensors. In addition, the newly proposed cloud-based HPO service provides an interactive and visualized interface for automatic hyperparameter tuning and was validated by a newly proposed cloud-based TCM system.