1. Introduction

The optimization process aims to find the optimal decision variables for a system from all possible values by minimizing its cost function [1]. Optimization problems are essential in many scientific fields, such as image processing [2], industrial manufacturing [3], wireless sensor networks [4], scheduling problems [5], path planning [6], feature selection [7], and data clustering [8]. Real-world engineering problems are characterized by non-linearity, a heavy computational burden, and a wide solution space. Because they rely on gradient information, traditional optimization methods suffer from poor flexibility, high computational complexity, and entrapment in local optima. To overcome these shortcomings, many new optimization methods have been proposed, especially meta-heuristic (MH) algorithms. MH algorithms perform well on optimization problems because they are highly flexible, require no gradient information, and have a strong ability to escape local optima [9].

In general, MH algorithms are classified into four main categories: swarm intelligence (SI) algorithms, evolutionary algorithms (EA), physics-based (PB) algorithms, and human-based (HB) algorithms [10], as shown in Table 1. Despite their different sources of inspiration, all four categories share two important search phases, namely exploration (diversification) and exploitation (intensification) [11,12]. The exploration phase searches the solution space widely and efficiently and reflects the capability to escape from local optima, while the exploitation phase reflects the capability to refine solution quality locally by searching for the best solution around the one already obtained. In summary, a good MH algorithm balances these two phases to achieve good performance.

Generally, the optimization process of an MH algorithm begins with a set of randomly generated candidate solutions. These solutions are updated according to an inherent set of optimization rules and are evaluated iteratively by a specific fitness function; this is the essence of an optimization algorithm. The Aquila Optimizer (AO) [37], one of the SI algorithms, was first proposed in 2021. It simulates four Aquila hunting strategies: high soar with a vertical stoop, contour flight with a short glide attack, low flight with a slow descent attack, and walk and grab prey. The first two strategies reflect the exploration ability, while the latter two reflect the exploitation ability. AO has the advantages of fast convergence speed, high search efficiency, and a simple structure. However, the algorithm becomes insufficient as the complexity of real-world engineering problems increases. The performance of AO depends on the diversity of the population and on the optimization update rules. Moreover, the algorithm has inadequate exploitation and exploration capabilities, a weak ability to jump out of local optima, and poor convergence accuracy.

In [38], Ewees, A. et al. improved the AO algorithm by using the search strategy of the WOA to boost the search process of the AO. They mainly used the WOA operator to replace the exploitation phase of the AO. The proposed method addressed the limitations of the exploitation phase and increased the diversity of solutions in the search stage. Their work attempted to avoid the weakness of using a single search strategy; comparative trials showed that the suggested approach achieved promising outcomes compared to other methods.

Zhang, Y. et al. [39] proposed a new hybrid of AOA and AO, named AOAAO. This algorithm mainly uses the operators of AOA to replace the exploitation strategies of AO. In addition, inspired by the HHO algorithm, an energy parameter, E, is applied to balance the exploration and exploitation procedures of AOAAO, with a piecewise linear map used to decrease the randomness of the energy parameter. AOAAO is more efficient than AO in optimization, with faster convergence speed and higher convergence accuracy. Experimental results confirmed that the suggested strategy outperforms the alternative algorithms.

Wang, S. et al. [40] proposed the Improved Hybrid Aquila Optimizer and Harris Hawks Algorithm (IHAOHHO) in 2021. This algorithm combines the exploration strategies of AO with the exploitation strategies of HHO. Additionally, a nonlinear escaping energy parameter is introduced to balance the exploration and exploitation phases. Finally, it uses a random opposition-based learning (ROBL) strategy to enhance performance. IHAOHHO has the advantages of high search accuracy, a strong ability to escape local optima, and good stability.

The hybrid variants of the AO mentioned above all improve on the performance of the original AO. However, they remain insufficient for specific complex optimization problems, especially multimodal and high-dimensional problems. In order to enhance the optimization capability in both low and high dimensions, we propose an improved AO algorithm, IAO, which uses a search control factor (SCF) and mutations. The AO has two main drawbacks:

First, the optimization rules of AO limit the search capability. The second strategy of AO limits the exploration capability, as the effect of the Levy flight function leads to local optima, while the third strategy limits the exploitation capability because its adjustment parameters are fixed constants, resulting in low convergence accuracy.

Second, the population diversity of AO decreases as the number of iterations increases [38]. As the candidate solutions are updated according to the specific optimization rules of AO, the randomness of the solutions decreases through the search process, resulting in inferior population diversity.

Therefore, in order to improve the optimization rules of AO, we introduce a search control factor (SCF) to improve these two search methods and allow the Aquila to search widely in the search space and around the best result. The random opposition-based learning (ROBL) and Gaussian mutation (GM) strategies are applied to increase the population diversity of the algorithm. The ROBL strategy is employed to further enhance the exploitation capability, while the GM strategy is utilized to further improve the exploration phase. In addition, 23 standard benchmark functions are used to evaluate the robustness and effectiveness of the IAO algorithm. The IAO is compared with AO, IHAOHHO, and several well-known MH algorithms, including PSO, SCA, WOA, GWO, HBA, SMA, and SNS. Finally, CEC2019 test functions and four real-world engineering design problems are used to further evaluate the performance of IAO. The experimental results show that the proposed IAO achieves the best performance among all of these algorithms.

The main contribution of this paper is to improve the performance of the AO algorithm. To improve the exploration and exploitation phases, we define an SCF that changes the second and third predation strategies of the Aquila so that it fully searches the solution space and the area around the optimal Aquila, respectively. We apply the ROBL strategy to further enhance the ability to exploit the search space and use the GM strategy to further enhance the ability to escape from local optima. The remainder of this paper is organized as follows: Section 2 introduces the AO algorithm; Section 3 details the proposed algorithm; comparative trials on benchmark functions and real-world engineering experiments are provided in Section 4; finally, Section 5 concludes the study.

2. Aquila Optimizer (AO)

The AO algorithm is a typical SI algorithm proposed by Abualigah, L. et al. [37] in 2021. The algorithm performs optimization by simulating four predator–prey behaviors of the Aquila through four strategies: selecting the search space by high soar with a vertical stoop; exploring within a divergent search space by contour flight with a short glide attack; exploiting within a convergent search space via low flight with a slow descent attack; and swooping, walking, and grabbing prey. A brief description of these strategies is provided below.

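In the first strategy, the Aquila searches the solution space through high soar and uses a vertical stoop to determine the hunting area; the mathematical model is defined as

$$X_1(t+1) = X_{best}(t) \times \left(1 - \frac{t}{T}\right) + \left(X_M(t) - X_{best}(t) \times rand\right) \tag{1}$$

$$X_M(t) = \frac{1}{N}\sum_{i=1}^{N} X_i(t), \quad \forall j = 1, 2, \ldots, Dim \tag{2}$$

where $X_1(t+1)$ is the solution generated by the first strategy, $X_{best}(t)$ represents the best position in the current iteration, $X_M(t)$ represents the average value of positions, which is shown in Equation (2), $rand$ is a random number between 0 and 1, Dim is the dimension of the solution space, N is the size of the Aquila population, and t and T are the current iteration and the maximum number of iterations, respectively.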
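To make the first strategy concrete, the following is a minimal Python sketch of the expanded exploration step and the mean position. It assumes the update has the form used in the original AO formulation [37], namely X_1(t+1) = X_best(t) × (1 − t/T) + (X_M(t) − X_best(t) × rand); the function names and the choice of a single scalar random draw shared by all dimensions are assumptions made for illustration only.

```python
import random

def mean_position(population):
    """Per-dimension mean of all Aquila positions (the X_M term)."""
    n = len(population)
    dim = len(population[0])
    return [sum(ind[j] for ind in population) / n for j in range(dim)]

def expanded_exploration(x_best, x_mean, t, T, rng=random):
    """High soar with a vertical stoop, assuming the standard AO form."""
    rand = rng.random()  # assumption: one scalar draw shared by all dimensions
    return [xb * (1 - t / T) + (xm - xb * rand) for xb, xm in zip(x_best, x_mean)]

# Tiny illustrative population of three 2-D solutions.
population = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
x_mean = mean_position(population)
x_new = expanded_exploration(population[0], x_mean, t=1, T=100, rng=random.Random(0))
print(x_mean, x_new)
```

Early in the run (small t) the factor 1 − t/T is close to 1, so the candidate stays near the best position plus an offset toward the population mean; the offset is what spreads the search.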

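In the second strategy, the Aquila uses spiral flight above the prey and then attacks through a short glide. The model is described as

$$X_2(t+1) = X_{best}(t) \times Levy(D) + X_R(t) + (y - x) \times rand \tag{3}$$

where $rand$ is a random number within (0, 1), $X_R(t)$ is a random solution selected from the Aquila population, D is the number of dimensions, and $Levy(D)$ is the Levy flight function, which is given by

$$Levy(D) = s \times \frac{u \times \sigma}{|v|^{1/\beta}}, \quad \sigma = \frac{\Gamma(1+\beta) \times \sin\left(\frac{\pi\beta}{2}\right)}{\Gamma\left(\frac{1+\beta}{2}\right) \times \beta \times 2^{\frac{\beta-1}{2}}} \tag{4}$$

where s is a constant equal to 0.01, $\beta$ is another constant equal to 1.5, u and $v$ are random numbers within (0, 1), and y and x indicate the spiral flight trajectory in the search, which are calculated as follows:

$$y = r \times \cos(\theta), \quad x = r \times \sin(\theta), \quad r = r_1 + U \times D_1, \quad \theta = -\omega \times D_1 + \theta_1, \quad \theta_1 = \frac{3\pi}{2} \tag{5}$$

where $D_1$ is an integer from 1 to the dimension length (D), $r_1$ is an integer between 1 and 20 indicating the number of search cycles, and $U$ and $\omega$ are small fixed values (0.00565 and 0.005, respectively, in the original AO [37]).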
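The Levy flight and spiral terms of the second strategy can be sketched in Python as follows. This is a hedged illustration that assumes the formulas of the original AO [37]: Levy(D) = s × u × σ / |v|^(1/β), y = r cos θ, x = r sin θ, r = r_1 + U × D_1, θ = −ω × D_1 + 3π/2. The values U = 0.00565 and ω = 0.005 are taken from the original AO and are not stated in this section, u and v are drawn uniformly from (0, 1) as the text describes, and all function names are hypothetical.

```python
import math
import random

def levy(dim, rng=random, s=0.01, beta=1.5):
    """Levy flight step for each dimension (s = 0.01, beta = 1.5 as in the text)."""
    sigma = (math.gamma(1 + beta) * math.sin(math.pi * beta / 2)
             / (math.gamma((1 + beta) / 2) * beta * 2 ** ((beta - 1) / 2)))
    steps = []
    for _ in range(dim):
        u = rng.random()
        v = rng.random() or 1e-12  # guard against a zero denominator
        steps.append(s * u * sigma / abs(v) ** (1 / beta))
    return steps

def spiral(dim, rng=random, U=0.00565, omega=0.005):
    """Spiral coordinates x and y; U and omega are assumed AO defaults."""
    r1 = rng.randint(1, 20)          # number of search cycles
    theta1 = 3 * math.pi / 2
    xs, ys = [], []
    for d1 in range(1, dim + 1):     # D_1 runs over 1..D
        r = r1 + U * d1
        theta = -omega * d1 + theta1
        ys.append(r * math.cos(theta))
        xs.append(r * math.sin(theta))
    return xs, ys

def narrowed_exploration(x_best, x_rand, rng=random):
    """Contour flight with short glide attack, assuming the standard AO form."""
    dim = len(x_best)
    lv = levy(dim, rng)
    xs, ys = spiral(dim, rng)
    rand = rng.random()
    return [x_best[j] * lv[j] + x_rand[j] + (ys[j] - xs[j]) * rand for j in range(dim)]

rng = random.Random(1)
steps = levy(4, rng)
xs, ys = spiral(3, rng)
x2 = narrowed_exploration([1.0, 2.0, 3.0], [0.5, 0.5, 0.5], rng)
print(steps, x2)
```

Because |v| can be very small, the Levy step occasionally becomes large; those rare long jumps are what the later sections identify as a weakness of this strategy.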

In the third strategy, once the hunting area is selected, the Aquila descends vertically and searches the solution space through low flight, then attacks the prey. The mathematical expression is

$$X_3(t+1) = \left(X_{best}(t) - X_M(t)\right) \times \alpha - rand + \left((UB - LB) \times rand + LB\right) \times \delta \tag{6}$$

where $\alpha$ and $\delta$ are exploitation adjustment parameters fixed at 0.1, both $rand$ terms are random numbers between 0 and 1, and $UB$ and $LB$ are the upper and lower bounds of the solution space, respectively.
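The third strategy can be illustrated with the short Python sketch below. It assumes the original AO form X_3(t+1) = (X_best(t) − X_M(t)) × α − rand + ((UB − LB) × rand + LB) × δ with α = δ = 0.1; drawing independent random numbers for each dimension is an assumption, and the function name is hypothetical.

```python
import random

def expanded_exploitation(x_best, x_mean, lb, ub, alpha=0.1, delta=0.1, rng=random):
    """Low flight with slow descent attack, assuming the standard AO form."""
    new = []
    for j in range(len(x_best)):
        drift = (x_best[j] - x_mean[j]) * alpha                   # pull from mean toward best
        sample = ((ub[j] - lb[j]) * rng.random() + lb[j]) * delta  # scaled random point in bounds
        new.append(drift - rng.random() + sample)
    return new

x3 = expanded_exploitation(
    x_best=[1.0, 1.0], x_mean=[1.0, 1.0],
    lb=[0.0, 0.0], ub=[0.0, 0.0], rng=random.Random(2),
)
print(x3)
```

With α and δ fixed, the step size of this update does not shrink as the run progresses, which is the limitation the SCF is later introduced to address.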

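In the final strategy, the Aquila chases the prey along a stochastic escape route and attacks the prey on the ground. The mathematical expression of this behavior is

$$X_4(t+1) = QF \times X_{best}(t) - \left(G_1 \times X(t) \times rand\right) - G_2 \times Levy(D) + rand \times G_1 \tag{7}$$

$$QF(t) = t^{\frac{2 \times rand - 1}{(1-T)^2}}, \quad G_1 = 2 \times rand - 1, \quad G_2 = 2 \times \left(1 - \frac{t}{T}\right)$$

where $QF$ is a quality function parameter which is applied to tune the search strategies, rand is a random value within (0, 1), $G_1$ is a random number between −1 and 1 indicating the behavior of tracking the prey while it escapes, and $G_2$ represents the flight slope when hunting prey, which decreases from 2 to 0.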
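A minimal Python sketch of the fourth strategy is shown below. It assumes the original AO definitions QF(t) = t^((2 × rand − 1)/(1 − T)²), G_1 = 2 × rand − 1, and G_2 = 2 × (1 − t/T); the Levy steps are passed in as a plain list so that the block stays self-contained, and reusing one rand draw for both rand terms is an assumption. All names are hypothetical.

```python
import random

def quality_function(t, T, rng=random):
    """Quality function QF(t); requires T > 1 and t >= 1."""
    return t ** ((2 * rng.random() - 1) / (1 - T) ** 2)

def narrowed_exploitation(x, x_best, levy_steps, t, T, rng=random):
    """Walk and grab prey, assuming the standard AO form."""
    qf = quality_function(t, T, rng)
    g1 = 2 * rng.random() - 1   # prey-tracking motion in [-1, 1)
    g2 = 2 * (1 - t / T)        # flight slope, decreasing from 2 to 0
    rand = rng.random()         # assumption: same draw for both rand terms
    return [qf * x_best[j] - g1 * x[j] * rand - g2 * levy_steps[j] + rand * g1
            for j in range(len(x))]

x_cur, x_best = [0.5, -0.5], [0.1, 0.2]
# At t == T the slope G_2 is zero, so the Levy term has no effect.
a = narrowed_exploitation(x_cur, x_best, [0.0, 0.0], 50, 50, random.Random(3))
b = narrowed_exploitation(x_cur, x_best, [9.0, 9.0], 50, 50, random.Random(3))
print(a, b)
```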

3. The Proposed Improved Aquila Optimizer (IAO) Algorithm

Among the four strategies of the original AO algorithm, the Levy flight function in the second strategy makes the Aquila's search of the solution space insufficient, so the algorithm tends to fall into local optima. Meanwhile, the third strategy leads to a weak local exploitation ability because its parameters are constant. To reduce the search pace of the Aquila as iterations proceed, we create an SCF whose absolute value (abs) decreases with the number of iterations, and use it to improve the second and third strategies of the original AO. Furthermore, the ROBL and GM strategies are added to further improve the exploitation and exploration phases, respectively. The IAO is discussed in additional detail below.

3.1. Search Control Factor (SCF)

The search control factor is used to control the basic search step size and direction of the Aquila. As the iterations progress, the movement of the Aquila gradually decreases in order to increase the search accuracy. Therefore, the absolute value of the SCF decreases with the iteration number t, as described by Equation (8). In that equation, one parameter is a constant >0 (default = 2), an iteration-dependent term controls the Aquila's flight speed through the number of iterations, r is a random number between 0 and 1, and the direction control factor, described by Equation (9), controls the Aquila's flight direction.
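The properties listed above (a positive constant with default 2, a magnitude that shrinks with the iteration count, and a random direction) can be illustrated with the Python sketch below. The specific functional forms are assumptions chosen for illustration and are not the paper's Equations (8) and (9): the sketch uses an exponential decay exp(−t/T) for the speed term and a sign of +1 or −1, chosen by comparing r with 0.5, for the direction control factor.

```python
import math
import random

def search_control_factor(t, T, c=2.0, rng=random):
    """Illustrative SCF: signed step scale whose magnitude decays with t.

    Assumptions (not from the source): exponential decay and a +/-1
    direction factor selected by a uniform random number r.
    """
    r = rng.random()
    direction = 1.0 if r < 0.5 else -1.0   # hypothetical direction control factor
    speed = math.exp(-t / T)                # hypothetical iteration-dependent term
    return c * speed * direction

magnitudes = [abs(search_control_factor(t, 100, rng=random.Random(t))) for t in (0, 50, 100)]
print(magnitudes)
```

Any monotonically decreasing speed term would give the same qualitative behavior: large signed steps early in the run and small ones near the end.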

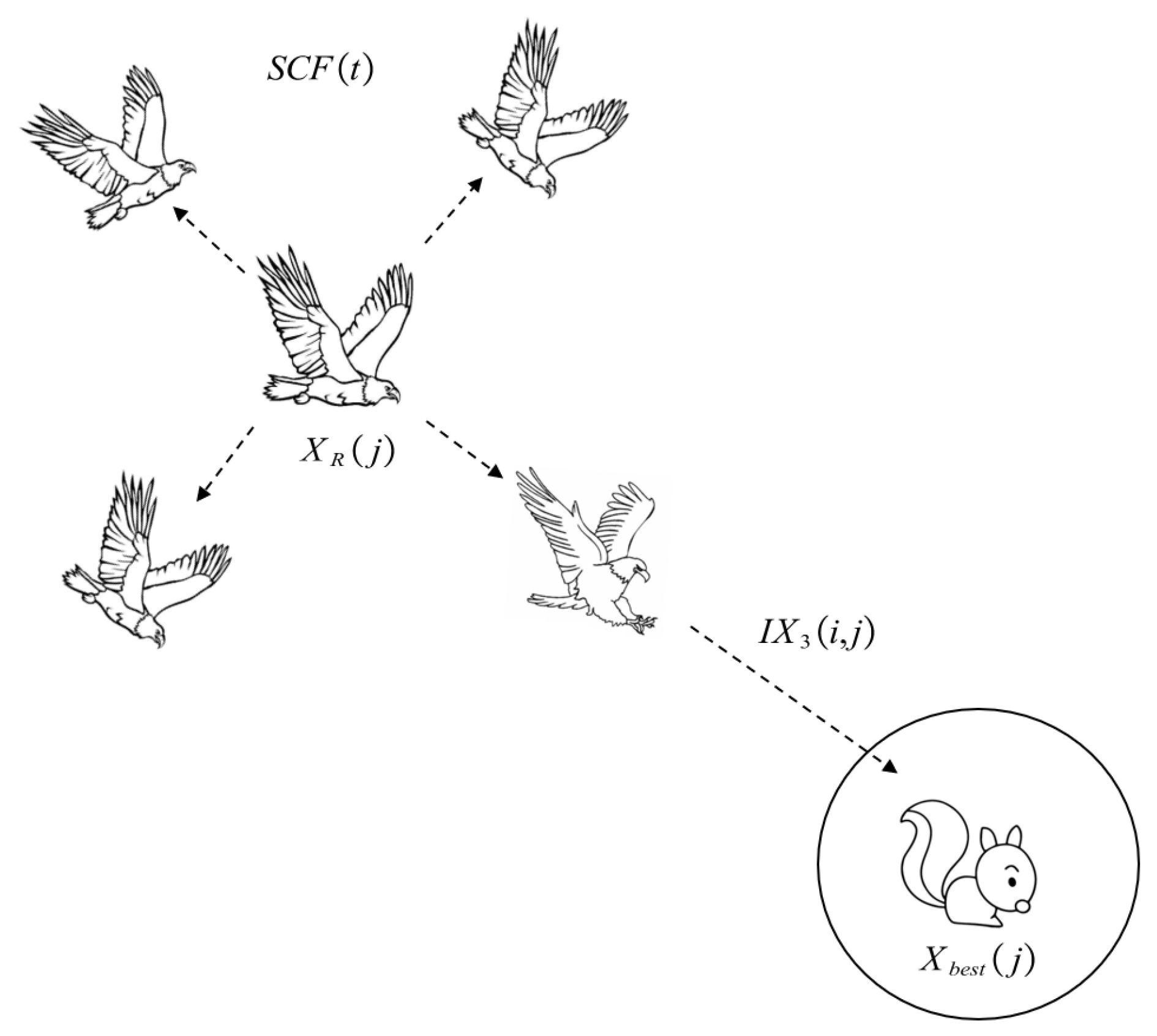

3.1.1. Improved Narrowed Exploration ($X_2$): Full Search with Short Glide Attack

In the second improved method, the Aquila searches the target solution space sufficiently through different directions and speeds, then attacks the prey. The improved method is called full search with short glide attack, and is shown in Figure 1. The mathematical expression of the improved strategy is given in Equation (10), where $SCF$ is the search control factor introduced above, $X_2(t+1)$ is the solution at iteration t + 1, $X_R(t)$ is a random solution selected from the Aquila population, $rand$ is a random number within (0, 1), $X_{best}(t)$ is the best solution obtained up to iteration t, and y and x are the same as in the original AO algorithm and describe the spiral flight in the search, as calculated in Equation (5).

3.1.2. Improved Expanded Exploitation ($X_3$): Search around Prey and Attack

In the third improved method, the Aquila searches thoroughly around the prey and then attacks it. Figure 2 shows this strategy, called Aquila search around prey and attack. This method aims to improve the exploitation capability, and is mathematically expressed by Equation (11), where $SCF$ is the search control factor, the random coefficients (including rand) are random numbers between 0 and 1, $X_3(t+1)$ is the solution at iteration t + 1, $X_{best,j}(t)$ represents the jth dimension of the best position in the current iteration, j is a random integer between 1 and the dimension length, $X_{R,j}(t)$ is the jth dimension of a random solution selected from the Aquila population, and $UB_j$ and $LB_j$ represent the jth dimension of the upper and lower bounds of the solution space, respectively.

3.2. Random Opposition-Based Learning (ROBL)

On the basis of opposition-based learning (OBL) [41], Long, W. [42] developed a powerful optimization tool called random opposition-based learning (ROBL). The main idea of ROBL is to consider the fitness of a solution and of its corresponding random opposite solution at the same time in order to obtain a better candidate solution. ROBL contributes substantially to improving the exploitation ability, and is defined by

$$\hat{x}_j = LB_j + UB_j - rand \times x_j, \quad j = 1, 2, \ldots, Dim \tag{12}$$

where $rand$ is a random number between 0 and 1, $\hat{x}_j$ is the opposite solution, and $UB_j$ and $LB_j$ are the upper and lower bounds of the solution space, respectively.
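The ROBL idea of comparing a solution with its random opposite can be sketched in Python as follows. The sketch assumes the usual ROBL form, opposite_j = LB_j + UB_j − rand × x_j, followed by a greedy comparison of the two fitness values; clipping the opposite solution to the bounds and the sphere test function are illustrative assumptions, and the function names are hypothetical.

```python
import random

def sphere(x):
    """Simple test objective (sum of squares), used only for illustration."""
    return sum(v * v for v in x)

def random_opposite(x, lb, ub, rng=random):
    """Random opposite solution, assuming the standard ROBL form."""
    opp = [lb[j] + ub[j] - rng.random() * x[j] for j in range(len(x))]
    # Assumption: keep the opposite solution inside the search bounds.
    return [min(max(v, lb[j]), ub[j]) for j, v in enumerate(opp)]

def robl_step(x, lb, ub, fitness, rng=random):
    """Keep whichever of x and its random opposite has the lower fitness."""
    candidate = random_opposite(x, lb, ub, rng)
    return candidate if fitness(candidate) < fitness(x) else x

x = [4.0, -3.0]
lb, ub = [-5.0, -5.0], [5.0, 5.0]
improved = robl_step(x, lb, ub, sphere, rng=random.Random(0))
print(improved, sphere(improved))
```

Because the better of the two solutions is always kept, the step can never make the current solution worse for a minimization problem.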

3.3. Gaussian Mutation (GM)

Gaussian mutation (GM) is another commonly employed optimization tool, and performs well in the exploration phase. Here, we use GM to prevent the IAO from falling into local optima; it is mathematically expressed by Equation (13), which relates the current and mutated positions of a search agent through a random number drawn from a Gaussian distribution with mean 0 and standard deviation 1, whose probability density is

$$f(x) = \frac{1}{\sqrt{2\pi}\,\sigma} \exp\left(-\frac{(x - \mu)^2}{2\sigma^2}\right), \quad \mu = 0, \ \sigma = 1$$
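As an illustration of Gaussian mutation, the Python sketch below perturbs each coordinate of a position with a standard normal random number. The multiplicative form x' = x × (1 + G), with G drawn from N(0, 1), is an assumption chosen for this example and is not necessarily the paper's Equation (13); an additive form x' = x + G would be an equally plausible reading. The function name is hypothetical.

```python
import random

def gaussian_mutation(x, rng=random):
    """Mutate each coordinate with an N(0, 1) factor (assumed multiplicative form)."""
    return [xj * (1 + rng.gauss(0.0, 1.0)) for xj in x]

rng = random.Random(0)
current = [1.5, -2.0, 0.25]
mutated = gaussian_mutation(current, rng)
print(current, mutated)
```

In a full optimizer the mutated position would typically be kept only if it improves the fitness, in the same greedy way as the ROBL step.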

3.4. Computational Complexity of IAO

In this section, we discuss the computational complexity of the proposed IAO algorithm. The computation consists of three phases: initialization, fitness calculation, and updating of the Aquila positions. The computational complexity of the initialization phase is O(N), where N is the population size of the Aquila. Let T be the total number of iterations; the computational complexity of the fitness calculation phase is then O(T × N). Let Dim be the dimension of the problem; the computational complexity of updating the Aquila positions is then O(T × N × Dim + T × N × 2). Consequently, the total computational complexity of IAO is O(N × (T × (Dim + 2) + 1)).
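As a quick check of the arithmetic, the stated total follows from adding the initialization and position-update terms. The worked simplification below also shows that including the O(T × N) fitness term changes only a constant, so the order is O(T × N × Dim) either way.

```latex
\[
\begin{aligned}
N + \bigl(T N \,\mathrm{Dim} + 2 T N\bigr) &= N\bigl(T(\mathrm{Dim} + 2) + 1\bigr), \\
N + T N + \bigl(T N \,\mathrm{Dim} + 2 T N\bigr) &= N\bigl(T(\mathrm{Dim} + 3) + 1\bigr), \\
\text{both expressions} &\in O(T \times N \times \mathrm{Dim}).
\end{aligned}
\]
```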

3.5. Pseudo-Code of IAO

In summary, IAO uses SCF to improve the second and third hunting methods of the original AO. Additionally, ROBL is applied to further enhance the exploitation capability, while GM is utilized to further improve the exploration phase. The aim is to increase the solution diversity of the algorithm. The pseudocode and details of the IAO are shown in Algorithm 1 and Figure 3, respectively.

Algorithm 1 Improved Aquila Optimizer

    Initialization phase:
    Initialize the population X and the parameters of the IAO
    while (the end condition is not met) do
        Calculate the fitness function values and determine X_best(t), the best obtained solution
        for (i = 1, 2, …, N) do
            Update the mean value of the current solution X_M(t) and x, y, and the other strategy parameters
            if t ≤ (2/3) × T then
                if rand ≤ 0.5 then
                    Step 1: Expanded exploration (X_1)
                    Update the current solution using Equation (1)
                else
                    Step 2: Narrowed exploration (X_2)
                    Update the current solution using Equation (10)
                end if
            else
                if rand ≤ 0.5 then
                    Step 3: Expanded exploitation (X_3)
                    Update the current solution using Equation (11)
                else
                    Step 4: Narrowed exploitation (X_4)
                    Update the current solution using Equation (7)
                end if
            end if
            if the updated solution has a better fitness than the current solution then
                Replace the current solution with the updated solution
            end if
            Step 5: Random opposition-based learning update
            Update the current solution using Equation (12)
            if the opposite solution has a better fitness than the current solution then
                Replace the current solution with the opposite solution
            end if
            Step 6: Gaussian mutation update
            Update the current solution using Equation (13)
            if the mutated solution has a better fitness than the current solution then
                Replace the current solution with the mutated solution
            end if
        end for
    end while
    return the best solution (X_best)
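The control flow of Algorithm 1 can be sketched in Python as shown below. This is a skeleton only, written under several assumptions: the phase switch at t ≤ (2/3) × T and the strategy switch at rand ≤ 0.5 follow the original AO, new candidates are accepted greedily, and the four update rules are supplied as callables. The `toy_update` function is a placeholder that stands in for Equations (1), (10), (11), and (7); it is not one of the IAO equations. The ROBL and GM steps would follow the greedy acceptance in the same pattern and are omitted for brevity. All names are hypothetical.

```python
import random

def sphere(x):
    """Illustrative objective: sum of squares, minimized at the origin."""
    return sum(v * v for v in x)

def iao_skeleton(fitness, strategies, lb, ub, n=10, T=50, seed=0):
    """Control flow only; `strategies` maps 1..4 to update callables."""
    rng = random.Random(seed)
    dim = len(lb)
    pop = [[rng.uniform(lb[j], ub[j]) for j in range(dim)] for _ in range(n)]
    best = min(pop, key=fitness)[:]
    for t in range(1, T + 1):
        for i in range(n):
            explore = t <= (2 / 3) * T      # assumed AO phase switch
            first = rng.random() <= 0.5     # assumed AO strategy switch
            step = (1 if first else 2) if explore else (3 if first else 4)
            cand = strategies[step](pop[i], best, pop, t, T, rng)
            cand = [min(max(v, lb[j]), ub[j]) for j, v in enumerate(cand)]
            if fitness(cand) < fitness(pop[i]):   # assumed greedy acceptance
                pop[i] = cand
            if fitness(pop[i]) < fitness(best):
                best = pop[i][:]
    return best

def toy_update(x, best, pop, t, T, rng):
    """Placeholder move toward the best solution; NOT an IAO equation."""
    scale = 1 - t / T
    return [b + (xj - b) * rng.random() * scale for xj, b in zip(x, best)]

strategies = {k: toy_update for k in (1, 2, 3, 4)}
best = iao_skeleton(sphere, strategies, lb=[-10.0, -10.0], ub=[10.0, 10.0])
print(best, sphere(best))
```

Passing the strategies as callables keeps the loop independent of the exact update equations, so each rule can be swapped in or tested separately.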

5. Conclusions

This paper proposes an Improved Aquila Optimizer (IAO) algorithm. A search control factor (SCF) is proposed to improve the second and third search strategies of the original Aquila Optimizer (AO) algorithm. Then, the GM and ROBL methods are integrated to further improve the exploration and exploitation abilities of the original AO. To evaluate the performance of the IAO, we tested the algorithm on 23 benchmark functions and the CEC2019 test functions. The results show that the IAO outperforms the other advanced MH algorithms considered. In addition, experimental results on four real-world engineering design problems confirm that the IAO is highly practical for solving real problems.

In future studies, we will consider various methods to balance the exploration and exploitation phases. Different mutation strategies can also be considered to increase the solution diversity. In addition, the proposed algorithm could be applied to more fields, including deep learning, material scheduling problems, parameter estimation, wireless sensor networks, path planning, signal denoising, image segmentation, and model optimization.