1. Introduction

The demographic changes and the sharp rise in dependency rates in developed countries, together with the growing demand for professional care, pose new requirements in all aspects of society [1]. For these reasons, in this paper we present an assistive patrol robot. Several proposals address this topic, such as [2] or [3], which describe assistive service robots that aim to support the independent living of elderly people in their own homes. However, we focus our research on navigation in complex environments, such as nursing homes or hospitals, where the structure is highly repetitive, with many similar corridors and halls, which makes localization very difficult.

2. Assistive Robotic Platform

As the basis of our work, we have used an updated version of our low-cost autonomous assistance robot “LOLA,” which can be seen in Figure 1. It is a differential wheeled robot equipped with two motors and their corresponding encoders, all controlled by an open-source Arduino board. The complete platform measures approximately 800 mm. The sensing part is composed of an Intel RealSense D435 camera and a LIDAR. The on-board computer runs Ubuntu 18.04.5 LTS and includes an Nvidia Quadro RTX 5000 GPU.

3. Functionalities Integration

Because the platform is mobile, it can navigate through the environment. In parallel, it executes other functionalities that generate information of interest about the objects detected along the route. All of them are controlled from a single user interface, which is managed from a touch screen. The following subsections explain them in more detail.

3.1. Navigation

We propose a method based on a particle filter, in which the information used to estimate the robot’s position in each iteration is obtained only from detections of the objects in the surroundings of the platform. These detections are computed using YOLOv3 [4]. For this purpose, we have retrained YOLOv3, which is based on CNNs, in a fine-tuning process for some classes of interest, such as doors, windows, or persons.
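The idea of a particle filter driven only by object detections can be illustrated with a minimal sketch. It is not the authors' implementation: the landmark map `LANDMARKS`, the detection format `(label, range, bearing)`, the Gaussian measurement model, and all noise parameters are assumptions made for illustration, since the paper does not specify them.

```python
import math
import random

# Hypothetical landmark map: class label -> known (x, y) positions in metres.
LANDMARKS = {"door": [(2.0, 0.0), (6.0, 0.0)], "window": [(4.0, 3.0)]}


def predict(particles, d, dtheta, noise=0.05, rng=random):
    """Move every particle (x, y, theta) by the odometry increment plus noise."""
    moved = []
    for x, y, th in particles:
        th2 = th + dtheta + rng.gauss(0.0, noise)
        step = d + rng.gauss(0.0, noise)
        moved.append((x + step * math.cos(th2), y + step * math.sin(th2), th2))
    return moved


def likelihood(particle, detections, sigma_r=0.5, sigma_b=0.3):
    """Score a particle by how well the mapped landmarks explain each detection.

    Each detection is (label, measured_range, measured_bearing); this format
    is an assumption, e.g. a detector box combined with a depth reading.
    """
    x, y, th = particle
    weight = 1.0
    for label, r_meas, b_meas in detections:
        best = 1e-9  # floor so one unexplained detection does not zero the weight
        for lx, ly in LANDMARKS.get(label, []):
            r = math.hypot(lx - x, ly - y)
            b = math.atan2(ly - y, lx - x) - th
            # Wrap the bearing error to [-pi, pi].
            db = math.atan2(math.sin(b - b_meas), math.cos(b - b_meas))
            p = math.exp(-0.5 * ((r - r_meas) / sigma_r) ** 2)
            p *= math.exp(-0.5 * (db / sigma_b) ** 2)
            best = max(best, p)
        weight *= best
    return weight


def resample(particles, weights, rng=random):
    """Draw a new particle set with probability proportional to the weights."""
    return rng.choices(particles, weights=weights, k=len(particles))


def estimate(particles):
    """Mean position and circular mean heading of the particle set."""
    n = len(particles)
    x = sum(p[0] for p in particles) / n
    y = sum(p[1] for p in particles) / n
    th = math.atan2(sum(math.sin(p[2]) for p in particles),
                    sum(math.cos(p[2]) for p in particles))
    return x, y, th


def pf_step(particles, d, dtheta, detections, rng=random):
    """One iteration: predict, weight by detections, resample."""
    particles = predict(particles, d, dtheta, rng=rng)
    weights = [likelihood(p, detections) for p in particles]
    return resample(particles, weights, rng=rng)
```

In repetitive corridors a single detection is ambiguous, because several doors explain it equally well; taking the best-matching landmark per detection keeps all of those hypotheses alive until further detections separate them.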

The map of the environment and the intermediate and end points that make up our routes are known in advance and loaded beforehand. In each iteration, the patrolling policy uses the position estimated by the particle filter to decide the next movement that the platform should perform to reach the next node of the route. The LIDAR information is also considered during navigation in order to prevent the platform from colliding with elements of the environment.
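As a rough illustration of such a policy, the sketch below picks the next motion command from the estimated pose, the list of route nodes, and a LIDAR scan. The command names, the tolerances, and the representation of the scan as a list of ranges in front of the robot are hypothetical choices, not details taken from the paper.

```python
import math


def next_command(pose, route, idx, scan,
                 reach_tol=0.3, stop_dist=0.4, turn_tol=0.2):
    """Return (command, idx) for one iteration of a simple patrolling policy.

    pose  : (x, y, theta) estimated by the localization filter
    route : list of (x, y) nodes, visited in order
    idx   : index of the node currently being approached
    scan  : LIDAR ranges in metres in front of the robot (assumed layout)
    """
    if idx >= len(route):
        return "stop", idx  # route finished

    x, y, th = pose
    gx, gy = route[idx]

    # Advance to the following node once the current one has been reached.
    if math.hypot(gx - x, gy - y) < reach_tol:
        idx += 1
        if idx >= len(route):
            return "stop", idx
        gx, gy = route[idx]

    # Collision check: halt if any obstacle is closer than stop_dist.
    if scan and min(scan) < stop_dist:
        return "stop", idx

    # Steer towards the node; wrap the heading error to [-pi, pi].
    err = math.atan2(gy - y, gx - x) - th
    err = math.atan2(math.sin(err), math.cos(err))
    if err > turn_tol:
        return "turn_left", idx
    if err < -turn_tol:
        return "turn_right", idx
    return "forward", idx
```

For example, `next_command((0.0, 0.0, 0.0), [(1.0, 0.0), (1.0, 1.0)], 0, [2.0])` yields `("forward", 0)`, while the same call with `scan=[0.2]` yields `("stop", 0)`.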

3.2. People Registration

This utility aims to generate a record of people during the robot’s navigation. Facial recognition on the images captured by the platform’s camera makes it possible to identify the people registered in a database. In addition, the identity information is complemented with the time and the location of the detection on the map. Our face recognition module is based on the open-source face recognition package [5], which was built using dlib’s state-of-the-art face recognition.
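The registration step can be sketched as follows, under stated assumptions. Face encodings are treated as plain lists of floats; in practice they would come from the face recognition package (for instance from its `face_encodings` function), and the 0.6 threshold mirrors that package's default tolerance. The `Sighting` record, the `identify` and `register` helpers, and the database layout are hypothetical names introduced only for this example.

```python
import math
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Sighting:
    """One entry of the people record: who, when, and where on the map."""
    name: str
    timestamp: datetime
    position: tuple  # (x, y) of the detection in map coordinates


def identify(encoding, database, tolerance=0.6):
    """Return the closest registered name within `tolerance`, else None.

    `database` maps a person's name to one reference encoding.
    """
    best_name, best_dist = None, tolerance
    for name, known in database.items():
        dist = math.dist(encoding, known)  # Euclidean distance
        if dist <= best_dist:
            best_name, best_dist = name, dist
    return best_name


def register(log, encoding, database, position, when=None):
    """Append a sighting to `log` if the face matches a registered person."""
    name = identify(encoding, database)
    if name is not None:
        log.append(Sighting(name, when or datetime.now(), tuple(position)))
    return name
```

Unknown faces are simply skipped here; whether the platform also records unidentified people is not stated in the paper.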

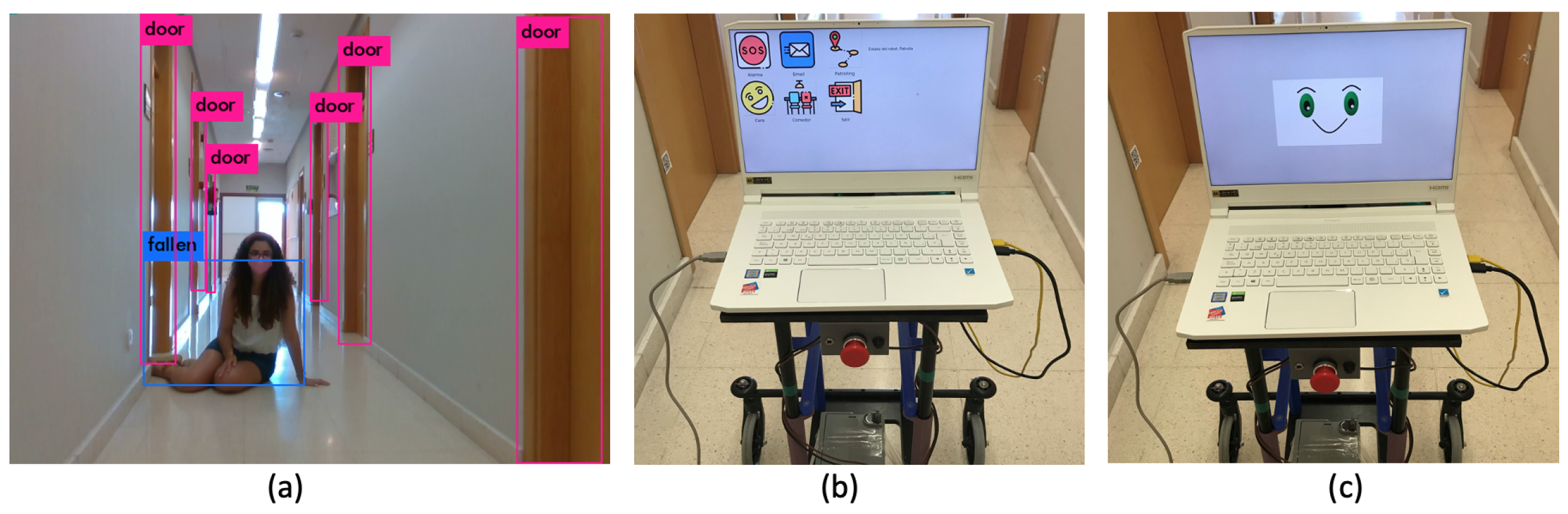

3.3. Fallen People Detection

This functionality aims at the automatic detection of fallen people based on computer vision. A retrained YOLOv3, in which one of the classes is a fallen person, has been used. If a fallen person is detected, the platform asks whether the person needs help. If so, or if there is no answer, the platform raises the alarm by sending an email to the configured person and generating an audible sound. If no help is needed, the platform continues patrolling.
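The decision logic described above is small enough to write down directly. The sketch below is one possible encoding of it; the `Action` names and the injected `ask`, `send_email`, and `play_sound` callables are hypothetical placeholders for the platform's real dialogue, email, and audio components.

```python
from enum import Enum


class Action(Enum):
    CONTINUE_PATROL = "continue_patrol"
    RAISE_ALARM = "raise_alarm"


def fall_response(fallen_detected, answer):
    """Map a detection and the person's reply to the platform's next action.

    answer: True if help is requested, False if help is declined,
            None if the person did not answer.
    """
    if not fallen_detected or answer is False:
        return Action.CONTINUE_PATROL
    # Help requested, or no answer at all: both cases raise the alarm.
    return Action.RAISE_ALARM


def handle_fall(fallen_detected, ask, send_email, play_sound):
    """Ask the person only when a fall is detected, then act on the reply."""
    answer = ask() if fallen_detected else None
    action = fall_response(fallen_detected, answer)
    if action is Action.RAISE_ALARM:
        send_email()
        play_sound()
    return action
```

Treating silence the same as a request for help is the conservative choice stated in the text: the alarm is skipped only when the person explicitly declines.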

3.4. Audio Generation with AWS

The platform can also convert text to speech using Amazon Web Services [6], playing audio both online and offline. The system stores the conversions that have already been performed in order to reduce the number of requests to the server.
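A minimal sketch of such a cache is given below. It stores each synthesized phrase on disk under a hash of its text, so a repeated phrase is served locally without contacting the server. The `SpeechCache` class and the injected `synthesize` callable (text in, audio bytes out) are assumptions for illustration; in a deployment that callable might wrap a cloud text-to-speech request, but the paper does not describe the actual client code.

```python
import hashlib
from pathlib import Path


class SpeechCache:
    """Disk cache for text-to-speech results, keyed by the phrase text."""

    def __init__(self, cache_dir, synthesize):
        self.cache_dir = Path(cache_dir)
        self.cache_dir.mkdir(parents=True, exist_ok=True)
        self.synthesize = synthesize  # hypothetical: callable(text) -> bytes

    def audio_path(self, text):
        """Return the path of the audio file for `text`, creating it if needed."""
        key = hashlib.sha256(text.encode("utf-8")).hexdigest()
        path = self.cache_dir / f"{key}.mp3"
        if not path.exists():
            # Cache miss: this is the only place the remote service is called.
            path.write_bytes(self.synthesize(text))
        return path
```

Because cached files persist between runs, phrases that were converted while online remain playable when the platform is offline.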

3.5. Single and Friendly Interface

All of the platform’s behavior is controlled from a single user interface, which is displayed on and managed from the touch screen. It is made up of buttons that control the different functionalities. When the interface is not being used, it shows a standby face. An example is shown in Figure 3b,c.

4. Results

For the generation of the results, we have made a complete route with a platform prototype in our testing environment, which is the third floor of the Polytechnic School of the University of Alcalá.

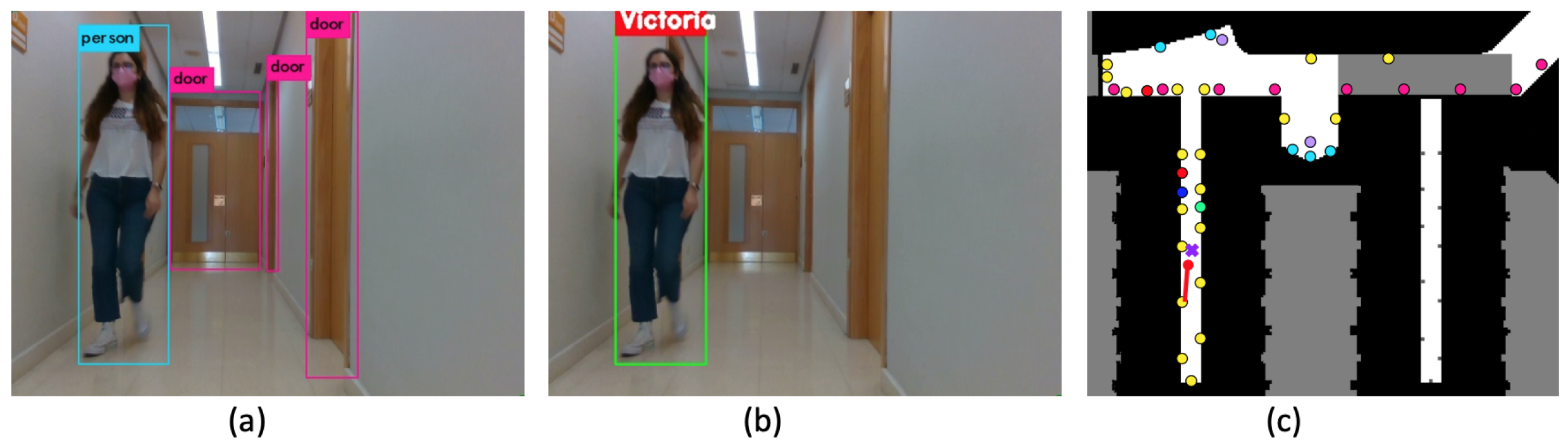

Two examples of object detection by YOLOv3 are shown in Figure 2a and Figure 3a. An example of people registration is shown in Figure 2b, where facial recognition has been applied to the category ‘person’ at the output of the YOLOv3 detector. Then, the detected person’s position is registered on the map as a purple cross.

Figure 2c shows the identification of all the types of objects that are present in our environment. Each type is represented by a different colour. These detections help the particle filter to locate the platform from the image captured by the camera.

5. Conclusions

In this paper, we propose a new navigation algorithm and its integration with other functionalities in an assistive platform for complex indoor environments, where the structure makes localization very difficult. Our platform offers facial recognition and the detection of fallen people. The navigation is based on a particle filter, which locates the robot using the object detections that YOLOv3 produces from the camera images; our patrolling policy then uses this estimate to decide the next movement. Simultaneously, the LIDAR continuously checks the surroundings of the platform to avoid collisions. All these functionalities are controlled through a friendly interface based on a touch screen and a voice service.