Journal Description

Software is an international, peer-reviewed, open access journal on all aspects of software engineering, published quarterly online by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 28.9 days after submission; acceptance to publication takes 4.2 days (median values for papers published in this journal in the first half of 2025).

- Recognition of Reviewers: APC discount vouchers, optional signed peer review, and reviewer names published annually in the journal.

- Software is a companion journal of Electronics.

Latest Articles

Building Shared Alignment for Agile at Scale: A Tool-Supported Method for Cross-Stakeholder Process Synthesis

Software 2025, 4(4), 31; https://doi.org/10.3390/software4040031 - 3 Dec 2025

Abstract

Organizations increasingly rely on Agile software development to navigate the complexities of digital transformation. Agile emphasizes flexibility, empowerment, and emergent design, yet large-scale initiatives often extend beyond single teams to include multiple subsidiaries, business units, and regulatory stakeholders. In such contexts, team-level mechanisms such as retrospectives, backlog refinement, and planning events may prove insufficient to achieve alignment across diverse perspectives, organizational boundaries, and compliance requirements. To address this limitation, this paper introduces a complementary framework and a supporting software tool that enable systematic cross-stakeholder alignment. Rather than replacing Agile practices, the framework enhances them by capturing heterogeneous stakeholder views, surfacing tacit knowledge, and systematically reconciling differences into a shared alignment artifact. The methodology combines individual Functional Resonance Analysis Method (FRAM)-based process modeling, iterative harmonization, and an evidence-supported selection mechanism driven by quantifiable performance indicators, all operationalized through a prototype tool. The approach was evaluated in a real industrial case study within the regulated gaming sector, involving practitioners from both a parent company and a subsidiary. The results show that the methodology effectively revealed misalignments among stakeholders’ respective views of the development process, supported structured negotiation to reconcile these differences, and produced a consolidated process model that improved transparency and alignment across organizational boundaries. The study demonstrates the practical viability of the methodology and its value as a complementary mechanism that strengthens Agile ways of working in complex, multi-stakeholder environments.

Open Access Article

Software Quality Assurance and AI: A Systems-Theoretic Approach to Reliability, Safety, and Security

by

Joseph R. Laracy, Ziyuan Meng, Vassilka D. Kirova, Cyril S. Ku and Thomas J. Marlowe

Software 2025, 4(4), 30; https://doi.org/10.3390/software4040030 - 13 Nov 2025

Abstract

The integration of modern artificial intelligence into software systems presents transformative opportunities and novel challenges for software quality assurance (SQA). While AI enables powerful enhancements in testing, monitoring, and defect prediction, it also introduces non-determinism, continuous learning, and opaque behavior that challenge traditional quality and reliability paradigms. This paper proposes a framework for addressing these issues, drawing on concepts from systems theory. We argue that AI-enabled software systems should be understood both as dynamical systems, i.e., stateful adaptive systems whose behavior depends on prior inputs, feedback, and environmental interaction, and as components embedded within broader socio-technical ecosystems. From this perspective, quality assurance becomes a matter of maintaining stability by enforcing constraints and of designing robust feedback and control mechanisms that account for interactions across the full ecosystem of stakeholders, infrastructure, and operational environments. This paper outlines how the systems-theoretic perspective can inform the development of modern SQA processes. This ecosystem-aware approach repositions software quality as an ongoing, systemic responsibility, which is especially important in mission-critical AI applications.

Open Access Article

RCEGen: A Generative Approach for Automated Root Cause Analysis Using Large Language Models (LLMs)

by

Rubel Hassan Mollik, Arup Datta, Anamul Haque Mollah and Wajdi Aljedaani

Software 2025, 4(4), 29; https://doi.org/10.3390/software4040029 - 7 Nov 2025

Abstract

Root cause analysis (RCA) identifies the faults and vulnerabilities underlying software failures, informing better design and maintenance decisions. Earlier approaches typically framed RCA as a classification task, predicting coarse categories of root causes. With recent advances in large language models (LLMs), RCA can instead be treated as a generative task that produces natural language explanations of faults. We introduce RCEGen, a framework that leverages state-of-the-art open-source LLMs to generate root cause explanations (RCEs) directly from bug reports. Using 298 reports, we evaluated five LLMs, with human developers and LLM judges rating the generated explanations on three key aspects: correctness, clarity, and reasoning depth. Qwen2.5-Coder-Instruct achieved the strongest performance (correctness ≈ 0.89, clarity ≈ 0.88, reasoning ≈ 0.65, overall ≈ 0.79), and the generated RCEs exhibited high semantic fidelity (CodeBERTScore ≈ 0.98) to developer-written references despite low lexical overlap. The results demonstrate that LLMs can identify root causes from bug report titles and descriptions with high accuracy, particularly when reports contain error logs and reproduction steps.

Open Access Article

Software Engineering Aspects of Federated Learning Libraries: A Comparative Survey

by

Hiba Alsghaier and Tian Zhao

Software 2025, 4(4), 28; https://doi.org/10.3390/software4040028 - 5 Nov 2025

Abstract

Federated Learning (FL) has emerged as a pivotal paradigm for privacy-preserving machine learning. While numerous FL libraries have been developed to operationalize this paradigm, their rapid proliferation has created a significant challenge for practitioners and researchers: selecting the right tool requires a deep understanding of their often undocumented software architectures and extensibility, aspects that are largely overlooked by existing algorithm-focused surveys. This paper addresses this gap by conducting the first comprehensive survey of FL libraries from a software engineering perspective. We systematically analyze ten popular open-source FL libraries, dissecting their architectural designs, support for core and advanced FL features, and most importantly, their extension mechanisms for customization. Our analysis produces a novel taxonomy of FL concepts grounded in software implementation, a practical decision framework for library selection, and an in-depth discussion of architectural limitations and pathways for future development. The findings provide developers with actionable guidance for selecting and extending FL tools and offer researchers a clear roadmap for advancing FL infrastructure.

Open Access Article

The Evolution of Software Usability in Developer Communities: An Empirical Study on Stack Overflow

by

Hans Djalali, Wajdi Aljedaani and Stephanie Ludi

Software 2025, 4(4), 27; https://doi.org/10.3390/software4040027 - 31 Oct 2025

Abstract

This study investigates how software developers discuss usability on Stack Overflow through an analysis of posts from 2008 to 2024. Although usability is widely recognized as important to software success, there is limited research on developer engagement with usability topics. Using mixed methods that combine quantitative metric analysis and qualitative content review, we examine temporal trends, comparative engagement patterns across eight non-functional requirements, and usability issues specific to particular programming contexts. Our findings show a significant decrease in usability posts since 2010, in contrast to other non-functional requirements such as performance and security. Despite this decline, usability posts exhibit high resolution efficiency, achieving the highest answer and acceptance rates among all topics, which suggests that the community is highly effective at resolving these specialized questions. We identify distinctive platform-specific usability concerns: web development prioritizes responsive layouts and form design; desktop applications emphasize keyboard navigation and complex controls; and mobile development focuses on touch interactions and screen constraints. These patterns indicate a transformation in how usability knowledge is shared, reflecting the maturation of the field, its integration into frameworks, and the migration of discussion to specialized communities. This first longitudinal analysis of usability discussions on Stack Overflow provides insights into developer engagement with usability and highlights opportunities for integrating usability guidance into technical contexts.

(This article belongs to the Topic Software Engineering and Applications)

Open Access Article

Using Genetic Algorithms for Research Software Structure Optimization

by

Henning Schnoor, Wilhelm Hasselbring and Reiner Jung

Software 2025, 4(4), 26; https://doi.org/10.3390/software4040026 - 28 Oct 2025

Abstract

Our goal is to generate restructuring recommendations for research software systems based on software architecture descriptions obtained via reverse engineering. We reconstructed these software architectures using static and dynamic analysis methods: we combined static and dynamic analysis of call relationships and dataflow into a hierarchy of six analysis methods. To generate optimal restructuring recommendations, we use genetic algorithms that optimize the module structure, with coupling and cohesion metrics serving as fitness functions. We applied these methods to Earth System Models to test their efficacy. In general, our results confirm the applicability of genetic algorithms for optimizing the module structure of research software. Our experiments show that the choice of analysis method has a significant impact on the optimization results. In particular, pure dynamic analysis produces significantly better modularizations than optimizations based on the other analysis methods we used for reverse engineering. Furthermore, guided, interactive optimization with a domain expert’s feedback improves the modularization recommendations considerably; for instance, cohesion improves by 57% with guided optimization.
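As an illustration of the optimization step only, the sketch below evolves assignments of components to modules with a small genetic algorithm. The abstract does not name the coupling and cohesion metrics the authors use, so this sketch assumes a Bunch-style Modularization Quality (MQ) score as the fitness function; the dependency graph, component names, and GA parameters (population size, mutation rate, tournament selection) are all hypothetical.

```python
import random
from collections import defaultdict

# Hypothetical dependency graph: (caller, callee) pairs between components.
EDGES = [
    ("io_read", "io_parse"), ("io_parse", "io_write"), ("io_write", "io_read"),
    ("phys_step", "phys_flux"), ("phys_flux", "phys_grid"), ("phys_grid", "phys_step"),
    ("io_write", "phys_grid"),
]
NODES = sorted({n for edge in EDGES for n in edge})


def mq(assignment):
    """Bunch-style Modularization Quality: sum over modules of 2*mu / (2*mu + eps),
    where mu counts intra-module edges (cohesion) and eps counts edges crossing
    the module boundary (coupling)."""
    intra, inter = defaultdict(int), defaultdict(int)
    for a, b in EDGES:
        ma, mb = assignment[a], assignment[b]
        if ma == mb:
            intra[ma] += 1
        else:
            inter[ma] += 1
            inter[mb] += 1
    return sum(2 * intra[m] / (2 * intra[m] + inter[m])
               for m in set(assignment.values()) if intra[m] > 0)


def evolve(max_modules=3, pop_size=30, generations=60, seed=0):
    """Simple elitist GA over module assignments, using mq() as the fitness function."""
    rng = random.Random(seed)

    def random_individual():
        return {n: rng.randrange(max_modules) for n in NODES}

    def crossover(p1, p2):
        # Uniform crossover: each component inherits its module from one parent.
        return {n: (p1 if rng.random() < 0.5 else p2)[n] for n in NODES}

    def mutate(ind, rate=0.1):
        # Reassign each component to a random module with probability `rate`.
        return {n: rng.randrange(max_modules) if rng.random() < rate else m
                for n, m in ind.items()}

    def tournament(pop):
        a, b = rng.sample(pop, 2)
        return a if mq(a) >= mq(b) else b

    population = [random_individual() for _ in range(pop_size)]
    for _ in range(generations):
        best = max(population, key=mq)  # elitism: the best individual survives
        children = [mutate(crossover(tournament(population), tournament(population)))
                    for _ in range(pop_size - 1)]
        population = [best] + children
    return max(population, key=mq)


best = evolve()
print(round(mq(best), 3), best)
```

MQ rewards modules with many internal edges relative to the edges crossing their boundaries, so putting every component into one module scores only 1.0, while splitting this toy graph into its `io_` and `phys_` groups scores about 1.71. Real inputs would come from the reverse-engineered call and dataflow graphs, which are not modelled here.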

Open Access Article

From Intention to Adoption: Managerial Misalignment in Cybersecurity Training Investments for Software Development Organizations

by

Hannes Salin and Vasileios Gkougkaras

Software 2025, 4(4), 25; https://doi.org/10.3390/software4040025 - 13 Oct 2025

Abstract

To ensure adequate skill development, but also competitive advantage as a software engineering organization, initiatives in cybersecurity training is one of several important investment decisions to make for management. This study builds upon three case organizations in Sweden and Greece, where managers’ and

[...] Read more.

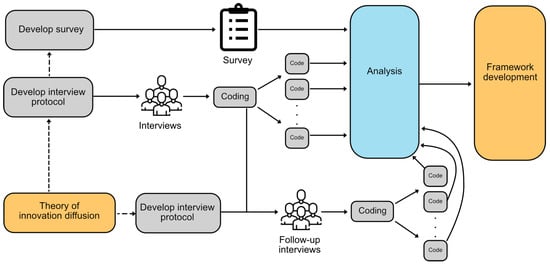

For a software engineering organization, investing in cybersecurity training is one of several important management decisions, needed both to ensure adequate skill development and to maintain a competitive advantage. This study builds on three case organizations in Sweden and Greece, analyzing managers’ and software developers’ perceptions of trialability and observability effects, grounded in the theory of innovation diffusion. Using interviews and a developer-centric survey, both quantitative and qualitative data are collected and combined to support the development of a pre-investment framework for management. The analysis includes thematic analysis, cosine similarity comparison, and, to some extent, sentiment polarity scoring. Based on the empirical findings, a pre-investment framework consisting of seven concrete steps is proposed.
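The cosine similarity comparison mentioned in the abstract can be sketched as follows, assuming free-text answers are turned into simple bag-of-words count vectors. The study's actual preprocessing and text representation are not described here, and the two answers below are invented.

```python
import math
import re
from collections import Counter


def bag_of_words(text):
    """Lower-cased word counts from a deliberately simple regex tokenizer."""
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine_similarity(a, b):
    """cos(theta) = (A . B) / (|A| |B|) over the two texts' word-count vectors."""
    va, vb = bag_of_words(a), bag_of_words(b)
    dot = sum(va[w] * vb[w] for w in va.keys() & vb.keys())
    norm_a = math.sqrt(sum(c * c for c in va.values()))
    norm_b = math.sqrt(sum(c * c for c in vb.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # an empty response shares nothing with anything
    return dot / (norm_a * norm_b)


# Hypothetical survey answers, invented for illustration.
manager = "We want a short trial so teams can try the training first"
developer = "Let us try the training in a short trial before we commit"
print(round(cosine_similarity(manager, developer), 2))
```

A score of 1.0 means the two answers use words in the same proportions and 0.0 means they share no words; richer representations (for example, embeddings) would plug into the same formula.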

(This article belongs to the Topic Software Engineering and Applications)

Open Access Hypothesis

The Agile PMO Paradox: Embracing DevOps in the UAE

by

Ibrahim Peerzada

Software 2025, 4(4), 24; https://doi.org/10.3390/software4040024 - 24 Sep 2025

Abstract

This study investigates how Development and Operations (DevOps) practices impact Project Management Office (PMO) governance within the technology sector of the United Arab Emirates (UAE). It addresses the need for agile-aligned governance frameworks by exploring how DevOps principles affect traditional PMO structures. A quantitative cross-sectional survey was conducted, collecting data from 321 DevOps and PMO professionals in UAE organizations. The analysis, using Partial Least Squares Structural Equation Modelling (PLS-SEM), revealed a moderate positive correlation between effective PMO governance and specific DevOps practices, such as microservices, Minimum Viable Experience (MVE) culture, continuous value streams, automated configuration, and continuous delivery. The study’s novel theoretical contribution is the integration of the Dynamic Capabilities Framework (DCF) with the Agile DevOps Reference Model (ADRM) to examine this alignment, bridging strategic agility and operational execution. This research offers actionable insights for UAE organizations and policymakers seeking to enhance governance and digital maturity.

(This article belongs to the Topic Software Engineering and Applications)

Open Access Article

Parallel Towers of Hanoi via Generalized Nets: Simulated with OnlineGN

by

Angel Dimitriev, Krassimir Atanassov and Nora Angelova

Software 2025, 4(4), 23; https://doi.org/10.3390/software4040023 - 23 Sep 2025

Abstract

This paper introduces a variant of the classic Towers of Hanoi (TH) puzzle in which parallel moves, i.e., simultaneous transfers of multiple discs, are permitted. The problem is formalized with Generalized Nets (GN), an extension of Petri nets (PN), whose tokens and transitions encode the ordering and movement of the n discs among three rods under the usual constraints. The resulting GN model, implemented on the OnlineGN platform, provides a clear, precise, and systematic representation that highlights the role of parallelism and supports interactive experimentation. This framework enables the exploration of strategies that reduce the number of parallel steps (PS) and, more broadly, illustrates how parallelism captured by GNs can shorten the sequential depth of selected problems with exponential-time solutions.
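The abstract does not define the parallel-move rules, so the sketch below covers only the classic sequential baseline that a parallel variant would be compared against: the standard recursive solution, whose 2^n − 1 moves are the exponential sequential depth in question. It is ordinary recursion, not a Generalized Net or OnlineGN model, and the rod names are arbitrary.

```python
def hanoi(n, source="A", target="C", spare="B"):
    """Classic minimal solution: returns (disc, from_rod, to_rod) moves, 2**n - 1 of them."""
    if n == 0:
        return []
    return (hanoi(n - 1, source, spare, target)    # park n-1 discs on the spare rod
            + [(n, source, target)]                 # move the largest disc
            + hanoi(n - 1, spare, target, source))  # bring the n-1 discs back on top


def is_legal(n, moves):
    """Replay moves on three rods and check that no disc ever lands on a smaller one."""
    rods = {"A": list(range(n, 0, -1)), "B": [], "C": []}
    for disc, src, dst in moves:
        if not rods[src] or rods[src][-1] != disc:
            return False  # the named disc is not on top of the source rod
        if rods[dst] and rods[dst][-1] < disc:
            return False  # larger disc placed on a smaller one
        rods[dst].append(rods[src].pop())
    return rods["C"] == list(range(n, 0, -1))


print(hanoi(2))  # [(1, 'A', 'B'), (2, 'A', 'C'), (1, 'B', 'C')]
```

Each move here touches a single disc, so the number of steps equals the number of moves; allowing several non-conflicting moves per step is what lets a parallel formulation reduce the step count.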

Open Access Article

Automatic Complexity Analysis of UML Class Diagrams Using Visual Question Answering (VQA) Techniques

by

Nimra Shehzadi, Javed Ferzund, Rubia Fatima and Adnan Riaz

Software 2025, 4(4), 22; https://doi.org/10.3390/software4040022 - 23 Sep 2025

Abstract

Context: Modern software systems have become increasingly complex, making it difficult to interpret raw requirements and effectively utilize traditional tools for software design and analysis. Unified Modeling Language (UML) class diagrams are widely used to visualize and understand system architecture, but analyzing them manually, especially for large-scale systems, poses significant challenges. Objectives: This study aims to automate the analysis of UML class diagrams by assessing their complexity using a machine learning approach. The goal is to support software developers in identifying potential design issues early in the development process and to improve overall software quality. Methodology: To achieve this, this research introduces a Visual Question Answering (VQA)-based framework that integrates both computer vision and natural language processing. Vision Transformers (ViTs) are employed to extract global visual features from UML class diagrams, while the BERT language model processes natural language queries. By combining these two models, the system can accurately respond to questions related to software complexity, such as class coupling and inheritance depth. Results: The proposed method demonstrated strong performance in experimental trials. The ViT model achieved an accuracy of 0.8800, with both the F1 score and recall reaching 0.8985. These metrics highlight the effectiveness of the approach in automatically evaluating UML class diagrams. Conclusions: The findings confirm that advanced machine learning techniques can be successfully applied to automate software design analysis. This approach can help developers detect design flaws early and enhance software maintainability. Future work will explore advanced fusion strategies, novel data augmentation techniques, and lightweight model adaptations suitable for environments with limited computational resources.

(This article belongs to the Topic Applications of NLP, AI, and ML in Software Engineering)

Open Access Article

MetaFFI-Multilingual Indirect Interoperability System

by

Tsvi Cherny-Shahar and Amiram Yehudai

Software 2025, 4(3), 21; https://doi.org/10.3390/software4030021 - 26 Aug 2025

Abstract

The development of software applications using multiple programming languages has increased in recent years, as it allows the selection of the most suitable language and runtime for each component of the system and the integration of third-party libraries. However, this practice involves complexity and error proneness, due to the absence of an adequate system for the interoperability of multiple programming languages. Developers are compelled to resort to workarounds, such as library reimplementation or language-specific wrappers, which are often dependent on C as the common denominator for interoperability. These challenges render the use of multiple programming languages a burdensome and demanding task that necessitates highly skilled developers for implementation, debugging, and maintenance, and raise doubts about the benefits of interoperability. To overcome these challenges, we propose MetaFFI, introducing a fully in-process, plugin-oriented, runtime-independent architecture based on a minimal C abstraction layer. It provides deep binding without relying on a shared object model, virtual machine bytecode, or manual glue code. This architecture is scalable (O(n) integration for n languages) and supports true polymorphic function and object invocation across languages. MetaFFI is based on leveraging FFI and embedding mechanisms, which minimize restrictions on language selection while still enabling full-duplex binding and deep integration. This is achieved by exploiting the less restrictive shallow binding mechanisms (e.g., Foreign Function Interface) to offer deep binding features (e.g., object creation, methods, fields). MetaFFI provides a runtime-independent framework to load and xcall (Cross-Call) foreign entities (e.g., getters, functions, objects). MetaFFI uses Common Data Types (CDTs) to pass parameters and return values, including objects and complex types, and even cross-language callbacks and dynamic calling conventions for optimization. 
The indirect interoperability approach of MetaFFI has the significant advantage of requiring only

(This article belongs to the Topic Software Engineering and Applications)

Open Access Article

Enabling Progressive Server-Side Rendering for Traditional Web Template Engines with Java Virtual Threads

by

Bernardo Pereira and Fernando Miguel Carvalho

Software 2025, 4(3), 20; https://doi.org/10.3390/software4030020 - 13 Aug 2025

Abstract

Modern web applications increasingly demand rendering techniques that optimize performance, responsiveness, and scalability. Progressive Server-Side Rendering (PSSR) bridges the gap between Server-Side Rendering and Client-Side Rendering by progressively streaming HTML content, improving perceived load times. Still, traditional HTML template engines often rely on blocking interfaces that hinder their use in asynchronous, non-blocking contexts required for PSSR. This paper analyzes how Java virtual threads, introduced in Java 21, enable non-blocking execution of blocking I/O operations, allowing the reuse of traditional template engines for PSSR without complex asynchronous programming models. We benchmark multiple engines across Spring WebFlux, Spring MVC, and Quarkus using reactive, suspendable, and virtual thread-based approaches. Results show that virtual threads allow blocking engines to scale comparably to those designed for non-blocking I/O, achieving high throughput and responsiveness under load. This demonstrates that virtual threads provide a compelling path to simplify the implementation of PSSR with familiar HTML templates, significantly lowering the barrier to entry while maintaining performance.

(This article belongs to the Topic Software Engineering and Applications)

Open Access Article

Research and Development of Test Automation Maturity Model Building and Assessment Methods for E2E Testing

by

Daiju Kato, Ayane Mogi, Hiroshi Ishikawa and Yasufumi Takama

Software 2025, 4(3), 19; https://doi.org/10.3390/software4030019 - 5 Aug 2025

Abstract

Background: While several test-automation maturity models (e.g., CMMI, TMMi, TAIM) exist, none explicitly integrate ISO 9001-based quality management systems (QMS), leaving a gap for organizations that must align E2E test automation with formal quality assurance. Objective: This study proposes a test-automation maturity model (TAMM) that bridges E2E automation capability with ISO 9001/ISO 9004 self-assessment principles, and evaluates its reliability and practical impact in industry. Methods: TAMM comprises eight maturity dimensions, 39 requirements, and 429 checklist items. Three independent assessors applied the checklist to three software teams; inter-rater reliability was ensured via consensus review (Cohen’s κ = 0.75). Short-term remediation actions based on the checklist were implemented over six months, after which the teams were re-assessed. Synergy with the organization’s ISO 9001 QMS was analyzed using ISO 9004 self-check scores. Results: Within six months of remediation, the mean TAMM score rose from 2.75 to 2.85, and inter-rater reliability reached Cohen’s κ = 0.75. Conclusions: The proposed TAMM delivers measurable, short-term maturity gains and complements ISO 9001-based QMS without introducing conflicting processes. Practitioners can use the checklist to identify actionable gaps, prioritize remediation, and quantify progress, while researchers may extend TAMM to other domains or automate scoring via repository mining.
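Cohen’s κ corrects raw agreement for the agreement expected by chance: κ = (p_o − p_e) / (1 − p_e), where p_o is the observed agreement and p_e the chance agreement implied by each rater's label frequencies. The minimal sketch below computes it for two raters; how the study combined three assessors into a single κ is not stated in the abstract, and the ratings are hypothetical.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labelling the same items:
    kappa = (p_o - p_e) / (1 - p_e)."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty list of items")
    n = len(rater_a)
    # Observed agreement: share of items on which the two raters chose the same label.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement, from each rater's marginal label frequencies.
    count_a, count_b = Counter(rater_a), Counter(rater_b)
    p_e = sum(count_a[label] * count_b[label] for label in count_a) / (n * n)
    if p_e == 1:
        return 1.0  # both raters used a single, identical label everywhere
    return (p_o - p_e) / (1 - p_e)


# Hypothetical "met"/"not met" ratings of six checklist items by two assessors.
assessor_1 = ["met", "met", "not met", "met", "not met", "met"]
assessor_2 = ["met", "not met", "not met", "met", "not met", "met"]
print(round(cohens_kappa(assessor_1, assessor_2), 3))  # 0.667
```

Here the assessors agree on 5 of 6 items (p_o ≈ 0.833), but their label frequencies alone would produce p_e = 0.5 agreement by chance, so κ comes out lower than the raw agreement.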

(This article belongs to the Special Issue Software Reliability, Security and Quality Assurance)

Open Access Article

Intersectional Software Engineering as a Field

by

Alicia Julia Wilson Takaoka, Claudia Maria Cutrupi and Letizia Jaccheri

Software 2025, 4(3), 18; https://doi.org/10.3390/software4030018 - 30 Jul 2025

Abstract

Intersectionality is a concept used to explain the power dynamics and inequalities that some groups experience owing to the interconnection of social differences such as gender, sexual identity, poverty status, race, geographic location, disability, and education. The relation between software engineering, feminism, and intersectionality has been addressed by some studies, but it has not previously been codified. In this paper, we employ the commonly used ABC Framework for empirical software engineering to show the contributions of intersectional software engineering (ISE) as a field of software engineering. In addition, we highlight the power dynamic unique to ISE studies and define gender-forward intersectionality as a way to use gender as a starting point to identify and examine inequalities and discrimination. We show that ISE is a field of study in software engineering that uses gender-forward intersectionality to produce knowledge about power dynamics in software engineering in its specific domains and environments. Employing empirical software engineering research strategies, we explain the importance of recognizing and evaluating ISE through four dimensions of dynamics: people, processes, products, and policies. Beginning with a set of 10 seminal papers that enable us to define the initial concepts and the query for the systematic mapping study, we conduct a systematic mapping study that yields a dataset of 140 primary papers, of which 15 are chosen as example papers. We apply the principles of ISE to these example papers to show how the field functions. Finally, we conclude the paper by advocating the recognition of ISE as a specialized field of study in software engineering.

(This article belongs to the Special Issue Women’s Special Issue Series: Software)

Open Access Article

Investigating Reproducibility Challenges in LLM Bugfixing on the HumanEvalFix Benchmark

by

Balázs Szalontai, Balázs Márton, Balázs Pintér and Tibor Gregorics

Software 2025, 4(3), 17; https://doi.org/10.3390/software4030017 - 14 Jul 2025

Abstract

Benchmark results for large language models often show inconsistencies across different studies. This paper investigates the challenges of reproducing these results for automatic bugfixing with LLMs on the HumanEvalFix benchmark. To determine the cause of the differing results in the literature, we attempted to reproduce a subset of them by evaluating 12 models from the DeepSeekCoder, CodeGemma, CodeLlama, and WizardCoder model families, in different sizes and tunings. A total of 35 unique results were reported for these models across studies, of which we successfully reproduced 12. We identified several relevant factors that influenced the results. Base models can be confused with their instruction-tuned variants, making their reported results better than expected. Incorrect prompt templates or generation lengths, as well as 4-bit quantization, can decrease benchmark performance. Using sampling instead of greedy decoding can increase the variance, especially with higher temperature values. We found that precision and 8-bit quantization have less influence on benchmark results.
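The decoding effect described in this abstract can be illustrated with a toy next-token distribution: greedy decoding always returns the same token, whereas sampling from a temperature-scaled softmax does not, and the spread grows with temperature. The logits are invented, and this is a generic sketch rather than the paper's evaluation setup.

```python
import math
import random


def softmax_with_temperature(logits, temperature):
    """Convert raw token scores to probabilities; higher temperature flattens them."""
    scaled = [x / temperature for x in logits]
    top = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(s - top) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def greedy(logits):
    """Greedy decoding: always return the index of the highest-scoring token."""
    return max(range(len(logits)), key=lambda i: logits[i])


def sample(logits, temperature, rng):
    """Sampling: draw a token index from the temperature-scaled distribution."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(range(len(logits)), weights=probs, k=1)[0]


# Made-up scores for three candidate next tokens.
logits = [2.0, 1.5, 0.3]
rng = random.Random(42)
for t in (0.5, 1.0, 2.0):
    picks = [sample(logits, t, rng) for _ in range(1000)]
    print(t, greedy(logits), [picks.count(i) for i in range(3)])
```

Over a full generated patch, these per-token differences compound, which is one plausible reason why sampled runs of the same model can pass different numbers of benchmark tests.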

(This article belongs to the Topic Applications of NLP, AI, and ML in Software Engineering)

Open Access Editorial

New Editor-in-Chief of Software

by

Mirko Viroli

Software 2025, 4(3), 16; https://doi.org/10.3390/software4030016 - 10 Jul 2025

Abstract

I would like to introduce myself as the new Editor-in-Chief of Software [...]

Full article

Open Access Article

Analysing Concurrent Queues Using CSP: Examining Java’s ConcurrentLinkedQueue

by

Kevin Chalmers and Jan Bækgaard Pedersen

Software 2025, 4(3), 15; https://doi.org/10.3390/software4030015 - 7 Jul 2025

Abstract


In this paper, we examine the OpenJDK library implementation of ConcurrentLinkedQueue. We use model checking to verify that it behaves according to the algorithm it is based on: Michael and Scott's fast and practical non-blocking concurrent queue algorithm. In addition, we develop a simple concurrent queue specification in CSP and verify that Michael and Scott's algorithm satisfies it. We conclude that both the algorithm and the implementation are correct and that both conform to our simpler concurrent queue specification, which can be used in place of either in future verification tasks. The complete code is available on GitHub.

Full article


Open Access Review

Machine Learning Techniques for Requirements Engineering: A Comprehensive Literature Review

by

António Miguel Rosado da Cruz and Estrela Ferreira Cruz

Software 2025, 4(3), 14; https://doi.org/10.3390/software4030014 - 28 Jun 2025

Abstract


Software requirements engineering is one of the most critical and time-consuming phases of the software-development process. Poor communication with stakeholders and the use of natural language to express requirements lead to misunderstood, misidentified, or ambiguous requirements, which can jeopardize all subsequent steps in the software-development process and compromise the quality of the final software product. Natural Language Processing (NLP) is a long-established area of research, but it is currently being strongly and positively reshaped by recent advances in Machine Learning (ML), namely the emergence of Deep Learning and, more recently, transformer models such as BERT and GPT. Software requirements engineering is also being strongly affected by the broader evolution of ML and other areas of Artificial Intelligence (AI). In this article, we conduct a systematic review of how AI, ML, and NLP are being used in the various stages of requirements engineering, including requirements elicitation, specification, classification, prioritization, management, and traceability. Furthermore, we identify which algorithms are most used in each of these stages, uncover challenges and open problems, and suggest future research directions.

Full article

(This article belongs to the Topic Applications of NLP, AI, and ML in Software Engineering)


Open Access Article

Characterizing Agile Software Development: Insights from a Data-Driven Approach Using Large-Scale Public Repositories

by

Carlos Moreno Martínez, Jesús Gallego Carracedo and Jaime Sánchez Gallego

Software 2025, 4(2), 13; https://doi.org/10.3390/software4020013 - 24 May 2025

Abstract


This study investigates the prevalence and impact of Agile practices by leveraging metadata from thousands of public GitHub repositories through a novel data-driven methodology. To facilitate this analysis, we developed the AgileScore index, a metric designed to identify and evaluate patterns, characteristics, performance, and community engagement in Agile-oriented projects. This approach enables comprehensive, large-scale comparisons between Agile methodologies and traditional development practices within digital environments. Our findings reveal significant annual growth of 16% in the adoption of Agile practices and validate the AgileScore index as a systematic tool for assessing Agile methodologies across diverse development contexts. Furthermore, this study introduces innovative analytical tools for researchers in software project management, software engineering, and related fields, providing a foundation for future work in areas such as cost estimation and hybrid project management. These insights contribute to a deeper understanding of Agile's role in fostering collaboration and adaptability in dynamic digital ecosystems.

Full article


Open Access Article

AI Testing for Intelligent Chatbots—A Case Study

by

Jerry Gao, Radhika Agarwal and Prerna Garsole

Software 2025, 4(2), 12; https://doi.org/10.3390/software4020012 - 15 May 2025

Cited by 2

Abstract


The decision tree test method models conversational flow as a flowchart structure, with predetermined questions and answers that guide the user through specific tasks. Inspired by the principles of the decision tree test method in software engineering, this paper discusses intelligent AI test modeling chat systems, including basic concepts, quality validation, test generation and augmentation, testing scopes, approaches, and needs. The paper's novelty lies in an intelligent AI test modeling chatbot system, built on an innovative three-dimensional AI test model for AI-powered functions in intelligent mobile apps, that supports model-based AI function testing, test data generation, and analysis of test coverage adequacy. A case study is provided using Wysa, a mental health and emotional intelligence chatbot system that helps track and analyze mood and performs sentiment analysis.

Full article


Topics

Topic in

Applied Sciences, ASI, Blockchains, Computers, MAKE, Software

Recent Advances in AI-Enhanced Software Engineering and Web Services

Topic Editors: Hai Wang, Zhe Hou

Deadline: 31 May 2026

Topic in

Algorithms, Applied Sciences, Electronics, MAKE, AI, Software

Applications of NLP, AI, and ML in Software Engineering

Topic Editors: Affan Yasin, Javed Ali Khan, Lijie Wen

Deadline: 30 August 2026

Special Issues

Special Issue in

Software

Software Reliability, Security and Quality Assurance

Guest Editors: Tadashi Dohi, Junjun Zheng, Xiao-Yi Zhang

Deadline: 25 December 2025

Special Issue in

Software

Women’s Special Issue Series: Software

Guest Editors: Tingting Bi, Xing Hu, Letizia Jaccheri

Deadline: 31 December 2025