Abstract

Ecological remote sensing is being transformed by three-dimensional (3D), multispectral measurements of forest canopies by unmanned aerial vehicles (UAV) and computer vision structure from motion (SFM) algorithms. Yet applications of this technology have out-paced understanding of the relationship between collection method and data quality. Here, UAV-SFM remote sensing was used to produce 3D multispectral point clouds of Temperate Deciduous forests at different levels of UAV altitude, image overlap, weather, and image processing. Error in canopy height estimates was explained by the alignment of the canopy height model to the digital terrain model (R2 = 0.81) due to differences in lighting and image overlap. Accounting for this, no significant differences were observed in height error at different levels of lighting, altitude, and side overlap. Overall, accurate estimates of canopy height compared to field measurements (R2 = 0.86, RMSE = 3.6 m) and LIDAR (R2 = 0.99, RMSE = 3.0 m) were obtained under optimal conditions of clear lighting and high image overlap (>80%). Variation in point cloud quality appeared related to the behavior of SFM ‘image features’. Future research should consider the role of image features as the fundamental unit of SFM remote sensing, akin to the pixel of optical imaging and the laser pulse of LIDAR.

1. Introduction

Forests cover roughly 30% of global land area and hold 70%–90% of terrestrial aboveground and belowground biomass, a key sink of global carbon []. Accurate understanding of the spatial extent, condition, quality, and dynamics of forests is vital for understanding their role in the biosphere []. Such information cannot be obtained through field work alone, but within the last four decades it has become obtainable through remote sensing technologies that map the extent and dynamics of structural, spectral, and even taxonomic traits of forests at spatial scales ranging from the single leaf to the entire planet [,,,,,,]. Even so, no single remote sensing instrument can simultaneously capture structural and spectral traits and dynamics of forests at high spatial resolution, owing to technical or practical constraints [] or other factors, including frequent cloud cover in the humid tropics [,].

A solution lies in the rapid advancement of two consumer-grade technologies: unmanned aerial vehicles (UAV) and structure from motion image processing algorithms (SFM). Consumer-grade UAVs have reached a degree of technical maturity at which, when equipped with digital cameras or other sensors, they enable rapid and on-demand ‘personal remote sensing’ of landscapes at high spatial and temporal resolution [,,,,,,,]. At the same time, automated computer vision SFM algorithms enable the creation of LIDAR-like (Light Detection and Ranging) three-dimensional (3D) point clouds from images alone, with color spectral information from the images associated with each point []. The combination of these two technologies represents a transformative shift in the capabilities of forest remote sensing. UAV-SFM remote sensing can capture information on the 3D structural and spectral traits of forest canopies at high spatial resolution and frequencies not possible or practical with existing forms of airborne or satellite remote sensing [,,].

Use of SFM and UAVs for remote sensing has increased rapidly thanks to the relative ease with which these technologies can be deployed for research applications. Personal remote sensing systems have enabled accurate mapping of canopy height and biomass density as well as the discrimination of individual tree structural, spectral, and phenological traits [,,,]. Similar systems have also been used for mapping stream channel geomorphology [,], vineyard and orchard plant structure [,,], the topography of bare substrates [,,,,,], and lichen and moss extent and coverage [].

With this rapid advance in UAV-SFM applications comes an increasing complexity in the methods used to carry out the research. UAV-SFM research spans a range of applications, algorithms, data collection methods (including UAV, manned aircraft, and ground-based strategies), and flight configurations. Across the studies cited above, UAV-SFM research was conducted at a range of flight altitudes (30–225 m above ground level, AGL) and parameters of photographic overlap (from 40% to >90% forward and side overlap). These studies also used different SFM applications (both free, open-source and commercial, closed-source) applying different processing and post-processing approaches. While these studies arrive at the similar conclusion that accurate 3D reconstructions of landscapes (including vegetation and topography) can be produced with SFM remote sensing, the diversity of methods with which the research was carried out highlights a significant challenge and research opportunity for this burgeoning remote sensing field. In particular, little is known about the relationship between observations of vegetation structural and spectral metrics and the conditions under which observations are obtained. Given the myriad potential combinations of system, sensor, and flight configurations, it is unclear what the optimal methods might be for accurately measuring forest structure using these techniques.

Similar challenges arise in the use of LIDAR for remote sensing of forest canopies, where data collection strategies and data quality vary widely with sensor, aircraft, flight configuration, and processing []. Lack of understanding of how such differences influence canopy metrics (e.g., canopy height, biomass density) could potentially limit future applications, in particular when multiple datasets are combined to assess change. Dandois and Ellis [] summarized several uncertainties about the relationship between UAV-SFM data and data collection conditions. For example, it is not clear how changes in UAV flying altitude, photographic overlap, and resolution as well as wind, cloud cover, and light will influence the quality of SFM point clouds or even what the relevant measures of quality might be for such a system. It is also unclear how such changes in SFM point cloud quality will influence vegetation measurements, in particular metrics of canopy structure like height and biomass.

This research aims to address these uncertainties by characterizing how UAV-SFM point cloud quality traits and metrics (geometric positioning accuracy, point cloud density and canopy penetration, estimates of canopy structure, and point cloud color radiometric quality) vary as a function of different observation conditions. The ‘Ecosynth’ UAV-SFM remote sensing tools and approach [,] are used to characterize forest canopy structure across three Temperate Deciduous forest sites in Maryland, USA. At a single site, a replicated set of image acquisitions was carried out under crossed treatments of lighting, flight altitude, and image overlap. Variation in traits and metrics was compared within and across treatment levels and to other factors (wind, post-processing, algorithm, computation). Forest canopy metrics derived from Ecosynth products were compared to field-based measurements of canopy height and also to a contemporaneous high-resolution (≈ 10 points·m−2) discrete-return LIDAR dataset. The results of this study should inform best practices for future UAV-SFM research.

2. Methods

2.1. Data Collection

2.1.1. Study Area and Field Data

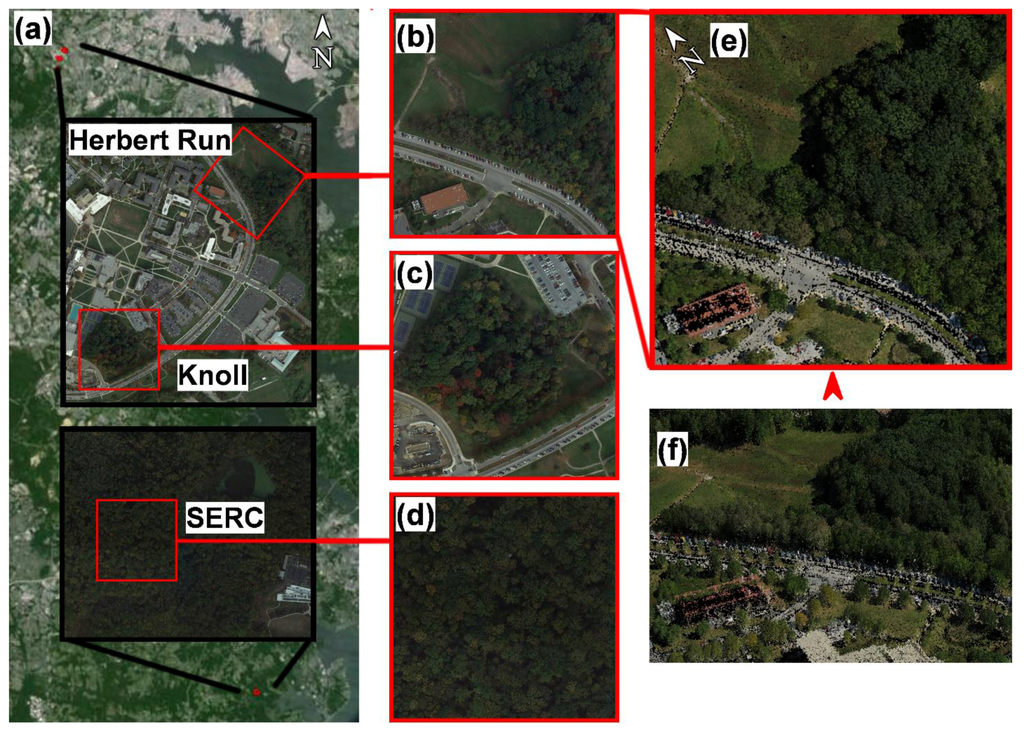

Research was carried out at three 6.25 ha (250 m × 250 m) Temperate Deciduous forest research study sites in Maryland, USA (Figure 1): two sites (Herbert Run and Knoll) are located on the campus of the University of Maryland Baltimore County (UMBC: 39°15′18″N 76°42′32″W) and one at the Smithsonian Environmental Research Center in Edgewater, Maryland (SERC: 38°53′10″N 76°33′51″W), the same study sites as in Dandois and Ellis []. Sites were divided into 25 m × 25 m plots, and canopy height and aboveground biomass density were estimated on a per-plot basis. Per-plot average maximum canopy height was estimated as the average height, obtained by laser rangefinder, of the five largest trees per plot by DBH (diameter at breast height) []. Per-plot aboveground biomass density (AGB; Mg·ha−1) at Herbert Run was estimated by allometric modeling of the DBH of all stems greater than 1 cm DBH within each plot []. The mean and range of average maximum canopy height across all plots at each site were: Herbert Run 20 m, 9–36 m; Knoll 22 m, 4–36 m; SERC 36 m, 27–44 m. Mean AGB at Herbert Run was 204 Mg·ha−1 with a standard deviation of 156 Mg·ha−1 across 49 plots. Landcover maps used to separate the analysis of point cloud traits within sites were based on aerial photo interpretation from prior work []. Site descriptions and tree data for Herbert Run and Knoll are available online []. A site description for SERC is available at [] and online [].

Figure 1.

Maps showing location of 3 study sites in Maryland, USA, with local area insets (a). Maps of each 250 m × 250 m study area for Herbert Run (b), Knoll (c), and SERC (d). Overhead view of Ecosynth 3D point cloud for Herbert Run site from 2013-08-26 (e) and oblique view of the same point cloud from approximately the point of view of the red arrow (f). In all panels, the red squares are 250 m × 250 m in size. Imagery in (a–d) from Google Earth, image date 2014-10-23.

2.1.2. UAV Image Acquisition under a Controlled Experimental Design

UAV image data were collected following Ecosynth procedures of Dandois and Ellis []. Image data were collected with a Canon ELPH 520 HS digital camera attached to a hobbyist, commercial multirotor UAV consisting of Mikrokopter frames (HiSystems GmbH, []) and Arducopter flight electronics (3D Robotics, Inc., []) in ‘octo’ and ‘hexa’ configurations of 8 and 6 propellers [] and following []. The larger ‘octocopter’ configuration was used for flights >4 km in distance; the smaller hexacopter was used for all other flights. With either UAV, images were collected at nadir view at roughly 2 frames per second at 10 megapixels (MP) resolution and ‘Infinity’ focus (≈4 mm focal length, no optical zoom) using fixed white balance and exposure settings calibrated to an 18% gray card with an unobstructed view of the sky. UAVs were programmed to fly at 6 m s−1 and all flights were carried out below 120 m (394 feet) above the surface using automated waypoint control, take-off, and landing modes. Image ground sampling distance (GSD) at 80 m above the canopy surface was approximately 3.4 cm.

Image datasets were collected following a crossed factorial experimental design based on combinations of light, altitude above the canopy, and photographic side overlap. Two levels of lighting, uniformly clear and uniformly cloudy (diffuse) were controlled for by choice of day for flights. Four levels of flight altitude above the canopy (20 m, 40 m, 60 m, 80 m) and four levels of photographic side overlap (20%, 40%, 60%, 80%) were controlled for by pre-programming of automated UAV waypoint flight paths based on a designated flight altitude above the launch location and by the spacing between parallel flight tracks, respectively. Based on the camera settings used in this study GSD was 0.8 cm, 1.7 cm, 2.5 cm, and 3.4 cm at flight altitudes of 20 m, 40 m, 60 m, and 80 m, respectively. A full table of all flight parameters can be found in Table S1. Five replicates were planned for each treatment which were flown from 2013-06-21 to 2013-10-21 between 09:00–16:00 each day. All treatments for lighting, altitude, and overlap with five replicates were flown at Herbert Run (n = 82) and one treatment each at fixed settings (clear lighting, 80 m altitude, 80% side overlap) were flown at the Knoll and SERC sites. Average wind speeds during flights at Herbert Run, as computed from a nearby eddy covariance station at 90 m above mean sea level [], ranged from 0.6–5.9 m s−1 and were converted to values of Beaufort wind force scale (1–4) for categorical comparison across datasets [].
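The flight parameters above can be illustrated with a minimal sketch. It assumes that, for a fixed camera at nadir view, GSD scales linearly with altitude from the reported reference value (3.4 cm at 80 m), and that wind speeds are binned with the standard Beaufort thresholds in m s−1; the function and constant names are hypothetical and are not part of the Ecosynth tools. Under these assumptions the computed GSD values agree with the reported ones to within about 0.05 cm.

```python
from bisect import bisect_right

REF_ALTITUDE_M = 80.0  # reference altitude above the canopy (from the text)
REF_GSD_CM = 3.4       # GSD reported at the reference altitude (from the text)


def gsd_cm(altitude_m):
    """Assumed linear scaling of GSD with altitude for a fixed nadir camera."""
    return REF_GSD_CM * altitude_m / REF_ALTITUDE_M


# Lower bounds (m/s) of Beaufort forces 1..12; speeds below 0.3 m/s are force 0.
BEAUFORT_LOWER_BOUNDS = [0.3, 1.6, 3.4, 5.5, 8.0, 10.8,
                         13.9, 17.2, 20.8, 24.5, 28.5, 32.7]


def beaufort_force(wind_speed_ms):
    """Convert a mean wind speed in m/s to a Beaufort force number (0-12)."""
    return bisect_right(BEAUFORT_LOWER_BOUNDS, wind_speed_ms)


if __name__ == "__main__":
    for altitude in (20, 40, 60, 80):
        print(altitude, "m ->", round(gsd_cm(altitude), 2), "cm")
    # Extremes of the wind speed range reported for Herbert Run
    print(beaufort_force(0.6), beaufort_force(5.9))
```

The reported wind speed range of 0.6–5.9 m s−1 maps to Beaufort forces 1 and 4 in this sketch, matching the range of categories given in the text.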

A single set of five image replicates collected under the same conditions (clear lighting, 80 m altitude, 80% side overlap, collected 2013-08-26, mean 1219 images per replicate) at Herbert Run were also processed under different variations of image processing and computation to evaluate the effects of these variables on point cloud traits and metrics. Image resolution of these replicates was down-sampled from the original 10 MP (3.4 cm GSD) to lower resolutions (7.5 MP, 5 MP, 2.5 MP, 1 MP, 0.3 MP; n = 25) and correspondingly increased GSD (3.9 cm, 4.7 cm, 6.7 cm, 10.6 cm, 19.3 cm, respectively). The same replicates, at original 10 MP resolution, were also incrementally sampled from every single image to every 10th image, corresponding to decreasing levels of forward overlap: 96%, 92%, 88%, 84%, 80%, 76%, 72%, 68%, 64%, and 60%, n = 50.
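The two sub-sampling schemes can be sketched as follows. The forward overlap series follows directly from the text: if consecutive images overlap by 96%, keeping every k-th image multiplies the non-overlapping 4% by k. The GSD scaling with the square root of the pixel-count ratio is an assumption that only approximates the reported values (within a few percent); the function names are hypothetical.

```python
import math

BASE_FORWARD_OVERLAP_PCT = 96  # overlap when every image is used (from the text)


def forward_overlap_pct(step):
    """Forward overlap (%) when only every `step`-th image is retained.

    The spacing between retained images grows linearly with `step`, so the
    non-overlapping share of each frame (100 - 96 = 4%) is multiplied by it.
    """
    return 100 - (100 - BASE_FORWARD_OVERLAP_PCT) * step


def downsampled_gsd_cm(megapixels, native_mp=10.0, native_gsd_cm=3.4):
    """Approximate GSD after down-sampling, assuming the linear pixel size
    grows with the square root of the pixel-count ratio."""
    return native_gsd_cm * math.sqrt(native_mp / megapixels)


if __name__ == "__main__":
    print([forward_overlap_pct(k) for k in range(1, 11)])
    for mp in (7.5, 5, 2.5, 1, 0.3):
        print(mp, "MP ->", round(downsampled_gsd_cm(mp), 1), "cm")
```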

2.1.3. Airborne LIDAR

Discrete-return LIDAR data were acquired over all three sites on 2013-10-25 by the contractor Watershed Sciences, Inc. LIDAR was collected with a nominal point density of 10.1 points·m−2 (0.028 m and 0.017 m horizontal and vertical accuracy, contractor reported). While some seasonal change was already underway at the time of acquisition, the data represents the only comparable leaf-on LIDAR for the UMBC campus that can be used for analysis of Ecosynth point cloud canopy quality measures. The contractor-provided 1 m × 1 m gridded bare earth filtered product was used as a digital terrain model (DTM) for extracting heights from point clouds. The points corresponding only to the LIDAR first return were extracted for each 6.25 ha study site to serve as a ‘gold-standard’ measure of the overall surface structure [].

2.2. Data Processing

After manually removing photos of take-off and landing, all UAV image replicates were processed into 3D-RGB point cloud datasets using the commercial Agisoft Photoscan SFM software package (64-bit, v0.91, build 1703; settings: ‘Align Photos’, ‘high’ accuracy, ‘generic’ pair pre-selection, maximum 40,000 point features per image) following Dandois and Ellis []. A single set of photos from one flight (clear lighting, 80 m altitude, 80% side overlap, collected 2013-08-26) was also processed in a previous version of Photoscan (v0.84) from prior research [], the latest version available at the time of writing (v1.0.4), and in an enhanced version of the popular free and open source Bundler SFM algorithm []. Dubbed Ecosynther (v1.0, []) this algorithm was built on the Bundler source code (v0.4) and includes several other algorithms intended to speed up performance of SFM reconstruction and produce a dense 3D point cloud model by making use of graphical processing unit (GPU) computation [,,]. Similar processing pipelines that combine the Bundler source code with dense point cloud reconstruction techniques [] have been used in other SFM remote sensing applications, including for 3D reconstruction of vineyards [] and bare substrates [,,,,,]. Ecosynther outputs both a ‘sparse’ and a ‘dense’ version of the SFM point cloud and the quality traits and metrics of both were evaluated.

Briefly, Photoscan, Bundler, Ecosynther and SFM algorithms in general produce 3D point clouds by first identifying ‘image features’ in each image, matching image features across multiple images to produce correspondences, and then iteratively using those correspondences in a photogrammetric sparse bundle adjustment to simultaneously solve for the 3D location of images in space and the 3D geometry of the objects observed within those images [,]. At this stage the 3D points in the point cloud correspond to a location identified and matched as an image feature across multiple images from different views along with color spectral information assigned to each point from the images. Image features play a fundamental role in many applications including image matching, motion tracking, and even image and object identification and classification [,,]. Popular open-source SFM packages like Bundler [] make use of image feature algorithms like SIFT (Scale Invariant Feature Transform) [], and the image feature descriptor in Photoscan is said to be ‘SIFT-like’ [].
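The feature-matching step can be illustrated with a minimal sketch. It assumes descriptors are plain numeric vectors and that correspondences are accepted with a nearest-neighbor distance ratio test, a common heuristic for SIFT-type descriptors; the matching strategy used inside Photoscan is not documented here, so this is an illustration only, and the function name and ratio threshold are hypothetical.

```python
import math


def match_features(desc_a, desc_b, ratio=0.8):
    """Match descriptors from image A to image B with a distance ratio test.

    A match (i, j) is kept only when the nearest descriptor in B is clearly
    closer than the second nearest, which rejects ambiguous correspondences.
    """
    matches = []
    for i, da in enumerate(desc_a):
        # Distances from descriptor i in A to every descriptor in B, sorted
        dists = sorted((math.dist(da, db), j) for j, db in enumerate(desc_b))
        if len(dists) < 2:
            continue  # the ratio test needs at least two candidates
        (best, j_best), (second, _) = dists[0], dists[1]
        if best < ratio * second:
            matches.append((i, j_best))
    return matches


if __name__ == "__main__":
    # Toy 2D "descriptors"; real SIFT descriptors have 128 dimensions
    image_a = [(0.0, 0.0), (10.0, 10.0)]
    image_b = [(0.1, 0.0), (10.0, 10.1), (5.0, 5.0)]
    print(match_features(image_a, image_b))
```

Matches that survive a test of this kind are the correspondences passed to bundle adjustment, so each retained match can become a 3D point in the sparse cloud.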

Point cloud reconstructions were run on multiple computers (with different configurations of OS, RAM, and CPU resources) to accommodate the large amount of computation required to process all replicates (>4500 compute hours; 157 datasets). One set of replicates (collected 2013-08-26, n = 5, mean 1219 images per replicate) was therefore also run on each computer to evaluate what effect, if any, the choice of computer would have on point cloud traits and metrics. Point clouds were then processed following Ecosynth data processing procedures, which included filtering to remove stray noise points and georeferencing into the WGS84 UTM Zone 18N projected coordinate system by optimized ‘spline’ fitting of the SFM camera point ‘path’ to the UAV GPS telemetry path [,]. Two measurements of UAV elevation data were provided by the flight controller: relative elevation in meters above the launch location from the built-in barometric pressure sensor and absolute elevation in meters above sea level based on a combination of pressure sensor and GPS altitude (3D Robotics, Inc., []). By measuring the vertical height of the UAV using a handheld laser rangefinder (TruPulse 360B) while it was flown vertically at fixed heights (e.g., 10 m, 20 m, ...100 m), we found that the relative height value had less error than the absolute height value (RMSE 6.6 m & 10.7 m, respectively; Figure S1), similar to prior studies []. The relative height values plus the altitude in meters above sea level of the launch location were then used to estimate UAV altitude during flight. UAV horizontal location data were still obtained from the UAV GPS, and the combination of UAV horizontal GPS data and pressure sensor vertical data is subsequently referred to as the UAV GPS data for simplicity. All post-processed UAV-SFM point clouds are referred to as the ‘Ecosynth’ point clouds, referring to the overall processing pipeline applied to them.
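The altitude estimate and the RMSE comparison described above can be sketched as follows. The function names and the numeric values in the usage example are hypothetical and are not measurements from this study.

```python
import math


def rmse(estimates, references):
    """Root mean square error between paired estimates and reference values."""
    if len(estimates) != len(references):
        raise ValueError("inputs must have the same length")
    squared = [(e - r) ** 2 for e, r in zip(estimates, references)]
    return math.sqrt(sum(squared) / len(squared))


def altitude_amsl(relative_height_m, launch_elevation_m):
    """UAV altitude above sea level from barometric height above launch."""
    return launch_elevation_m + relative_height_m


if __name__ == "__main__":
    # Hypothetical rangefinder heights and barometric readings (meters)
    rangefinder = [10.0, 20.0, 30.0]
    barometric = [11.0, 19.0, 32.0]
    print(round(rmse(barometric, rangefinder), 2))
    print(altitude_amsl(80.0, 55.0))  # hypothetical launch elevation of 55 m
```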

2.3. Data Analysis

Ecosynth data quality was measured based on three main categories of empirical traits and applied forest canopy metrics that were extracted from georeferenced point clouds: positioning accuracy, canopy sampling, and canopy structure. Analysis of the effects of acquisition parameters on point cloud quality traits and metrics was only carried out on point clouds collected over Herbert Run with 82 replicates for testing lighting, altitude, wind, and side overlap, 50 replicates for testing forward overlap, and 25 replicates for testing resolution. Aspects of point cloud radiometric quality were also evaluated to assess the degree to which they play a role in point cloud quality traits and metrics.

2.3.1. Measurements of Position Accuracy

Point cloud positioning accuracy was quantified based on relative and absolute reference. Relative positioning accuracy was measured as meters root mean square error (RMSE) of the horizontal and vertical distance between each SFM camera point and the closest segment of two GPS points along the UAV GPS track (‘Path Error’: Path-XY, Path-Z), providing an estimate of the ‘goodness-of-fit’ of the 3D reconstruction and georeferencing. Absolute positioning accuracy was quantified by measuring the amount of horizontal and vertical displacement in meters RMSE required to rigidly align the Ecosynth point cloud to the LIDAR first return point cloud using a Python implementation (Python v2.7.5; VTK v6.1.0) of the Iterative Closest Point algorithm (ICP; ICP-XY, ICP-Z) [] and following Habib et al. []. ICP fitting to LIDAR was only used to measure absolute positioning accuracy of point clouds and no other metrics were computed based on these ‘fitted’ point clouds. Absolute positioning accuracy of each point cloud, without ICP correction, was also measured as the difference between the average elevation of all points within a 3 m × 3 m area around the UAV launch location (i.e., a flat and open space) and the average elevation over the same area from the LIDAR DTM; this measure is referred to as the ‘Launch Location Elevation Difference’ (LLED). The absolute value of the launch location elevation difference (mean absolute deviation, LLED-MAD) was also computed to provide a metric comparable to the ICP-Z RMSE value. To summarize, the metrics of positioning accuracy are: Path-XY, Path-Z, ICP-XY, ICP-Z, and LLED-MAD. Errors in height estimates were highly correlated with vertical co-registration of the point clouds to the LIDAR DTM and so point clouds were vertically registered to the LIDAR DTM based on the LLED offset value (see Section 3.2 in Results).

Other studies have georeferenced SFM datasets through the use of ground control points (GCPs) that are visible in images and for which the real world coordinates are measured with high accuracy instruments like differential GPS, RTK-GPS (real-time kinematic GPS), and total station surveying equipment with sub-meter accuracy [,,,,,,]. GCPs were not used in the current study because a technique was sought for rapid assessment of positioning accuracy [] across a large number of datasets.
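The Path Error metric can be illustrated with a minimal sketch. It assumes that the closest GPS track segment is chosen by 3D distance and that the residual to the closest point on that segment is then split into horizontal and vertical components; the function names are hypothetical and this is not the Ecosynth implementation.

```python
import math


def closest_point_on_segment(p, a, b):
    """Closest point to p on the 3D segment from a to b."""
    ab = [bi - ai for ai, bi in zip(a, b)]
    ap = [pi - ai for ai, pi in zip(a, p)]
    denom = sum(c * c for c in ab)
    if denom == 0:
        return list(a)  # degenerate segment (repeated GPS fix)
    t = sum(x * y for x, y in zip(ap, ab)) / denom
    t = max(0.0, min(1.0, t))  # clamp the projection onto the segment
    return [ai + t * c for ai, c in zip(a, ab)]


def path_error(camera_points, gps_track):
    """Return (Path-XY, Path-Z) RMSE in the units of the input coordinates."""
    sq_xy, sq_z = [], []
    segments = list(zip(gps_track, gps_track[1:]))
    for p in camera_points:
        candidates = (closest_point_on_segment(p, a, b) for a, b in segments)
        q = min(candidates, key=lambda c: math.dist(p, c))
        sq_xy.append((p[0] - q[0]) ** 2 + (p[1] - q[1]) ** 2)
        sq_z.append((p[2] - q[2]) ** 2)
    n = len(camera_points)
    return math.sqrt(sum(sq_xy) / n), math.sqrt(sum(sq_z) / n)


if __name__ == "__main__":
    track = [(0.0, 0.0, 100.0), (10.0, 0.0, 100.0)]    # toy GPS track
    cameras = [(5.0, 3.0, 100.4), (2.0, -3.0, 99.6)]   # toy SFM camera points
    print(path_error(cameras, track))
```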

2.3.2. Measurements of Canopy Structure

Gridded canopy height models (CHMs) at 1 m × 1 m pixel resolution were produced for all Ecosynth point cloud datasets and for the first-return of LIDAR points based on the highest point elevation within each grid cell after subtraction of LIDAR DTM values from each point cloud elevation []. Within each 25 m × 25 m field plot, the top-of-canopy height (TCH) [] was calculated from CHMs based on the average of all pixel values within the plot. Measures of data quality from canopy height are defined as the RMSE between Ecosynth, field, and LIDAR measurements at the scale of 25 m × 25 m (0.0625 hectare) field plots (‘Ecosynth TCH to field RMSE’ and ‘Ecosynth to LIDAR TCH RMSE’). Aboveground biomass density (AGB, Mg·ha−1) was modeled for each field plot at Herbert Run using Ecosynth TCH estimates following Dandois and Ellis [].
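The CHM and TCH calculations can be sketched as follows, assuming the point cloud is a list of (x, y, z) tuples in projected coordinates and the DTM is available as a lookup function. Empty cells are skipped when averaging, which is an assumption; the function names are hypothetical.

```python
import math


def canopy_height_model(points, dtm_elevation, cell_size=1.0):
    """Highest height above ground per grid cell.

    points: iterable of (x, y, z); dtm_elevation: callable (x, y) -> ground z.
    Returns a dict mapping (col, row) cell indices to canopy height.
    """
    chm = {}
    for x, y, z in points:
        cell = (math.floor(x / cell_size), math.floor(y / cell_size))
        height = z - dtm_elevation(x, y)  # subtract terrain before gridding
        if height > chm.get(cell, -math.inf):
            chm[cell] = height
    return chm


def top_of_canopy_height(chm, x_min, y_min, plot_size=25.0, cell_size=1.0):
    """Mean CHM value over the cells whose lower-left corner is in the plot."""
    heights = [
        h for (col, row), h in chm.items()
        if x_min <= col * cell_size < x_min + plot_size
        and y_min <= row * cell_size < y_min + plot_size
    ]
    return sum(heights) / len(heights) if heights else float("nan")


if __name__ == "__main__":
    cloud = [(0.2, 0.3, 110.0), (0.7, 0.1, 115.0), (1.5, 0.5, 108.0)]
    flat_dtm = lambda x, y: 100.0  # hypothetical flat terrain at 100 m
    chm = canopy_height_model(cloud, flat_dtm)
    print(chm, top_of_canopy_height(chm, 0.0, 0.0))
```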

2.3.3. Measures of Canopy Sampling

Two measures of data quality were defined that characterize the way in which the canopy is sampled or ‘seen’ by Ecosynth SFM point clouds: point cloud density (PD, points·m−2) and canopy penetration (CP, the coefficient of variation (CV) of point cloud heights), both of which are calculated first within a raster grid of 1 m × 1 m cells and then averaged across forested areas only.
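A minimal sketch of the two canopy sampling measures follows. It assumes point heights are already expressed above ground, that forested cells are supplied as a set of grid indices, that the CV uses the population standard deviation, and that cells with fewer than two points are excluded from CP; none of these details are stated in the text, and the function name is hypothetical.

```python
import math
from collections import defaultdict
from statistics import mean, pstdev


def canopy_sampling(points, forest_cells, cell_size=1.0):
    """Return (PD in points per square meter, CP in % CV) over forested cells.

    points: iterable of (x, y, height_above_ground)
    forest_cells: set of (col, row) grid indices classified as forest
    """
    heights_by_cell = defaultdict(list)
    for x, y, h in points:
        cell = (math.floor(x / cell_size), math.floor(y / cell_size))
        if cell in forest_cells:
            heights_by_cell[cell].append(h)

    area = cell_size ** 2
    # Forest cells with no points count as zero density
    density = mean(len(heights_by_cell.get(c, [])) / area for c in forest_cells)

    cvs = [
        100.0 * pstdev(hs) / mean(hs)
        for hs in heights_by_cell.values()
        if len(hs) >= 2 and mean(hs) > 0
    ]
    penetration = mean(cvs) if cvs else float("nan")
    return density, penetration


if __name__ == "__main__":
    cloud = [(0.1, 0.1, 20.0), (0.5, 0.5, 30.0), (1.5, 0.5, 25.0)]
    print(canopy_sampling(cloud, {(0, 0), (1, 0)}))
```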

2.3.4. Radiometric Quality of Ecosynth Point Clouds

The radiometric quality of Ecosynth point clouds was measured as the standard deviation of the color of points inside 1 m × 1 m bins averaged by landcover, providing an estimate of the amount of noise in Ecosynth point colors within a fixed area []. Radiometric quality was evaluated on red, green, and blue (RGB) values and grayscale intensity (Gray = 0.299 × R + 0.587 × G + 0.114 × B) [] of point color.
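The grayscale conversion and the per-bin color noise measure can be sketched as follows. The sketch averages over all occupied bins rather than by landcover class, uses the population standard deviation, and skips bins with fewer than two points; these are simplifying assumptions, and the function names are hypothetical.

```python
import math
from collections import defaultdict
from statistics import mean, pstdev


def grayscale(r, g, b):
    """Grayscale intensity using the weights given in the text."""
    return 0.299 * r + 0.587 * g + 0.114 * b


def gray_noise(points, cell_size=1.0):
    """Mean per-bin standard deviation of point grayscale intensity.

    points: iterable of (x, y, r, g, b) with colors on a common scale.
    """
    gray_by_cell = defaultdict(list)
    for x, y, r, g, b in points:
        cell = (math.floor(x / cell_size), math.floor(y / cell_size))
        gray_by_cell[cell].append(grayscale(r, g, b))
    spreads = [pstdev(v) for v in gray_by_cell.values() if len(v) >= 2]
    return mean(spreads) if spreads else float("nan")


if __name__ == "__main__":
    cloud = [(0.1, 0.1, 100, 100, 100), (0.9, 0.9, 120, 120, 120)]
    print(round(gray_noise(cloud), 3))
```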

3. Results

3.1. Point Cloud Positioning Quality

The response of Ecosynth quality traits and metrics across all replicates at different levels of lighting, altitude, and overlap is summarized in Table 1. Relative vertical positioning error (Path-Z) was unaffected by changes in lighting, altitude, and side photographic overlap, but showed a small increase with decreasing forward overlap (R2 = 0.88, RMSE 0.36–0.41 m). Relative horizontal positioning error (Path-XY) was unaffected by changes in lighting but increased with decreasing forward overlap (R2 = 0.65) and increasing flight altitude (R2 = 0.98). The latter may be explained by the fact that UAV track width increased with increasing flight altitude and so Path-XY error may not accurately reflect actual camera positioning error relative to the intended flight path at narrow track widths. Measures of absolute positioning accuracy (ICP-XY and ICP-Z error, LLED-MAD) were unaffected by changes in flight altitude or photographic side overlap, but showed nearly half the error on clear days compared to cloudy days (1.9 m vs. 2.3 m, 2.0 m vs. 3.8 m, 2.2 m vs. 3.8 m, respectively). ICP-Z and LLED-MAD error also increased with decreasing forward overlap; however, the difference was small (R2 = 0.41 & 0.47; 1.9–2.2 m & 2.1–2.5 m RMSE, respectively).

Table 1.

Ecosynth quality traits and metrics across treatments of lighting, altitude above the canopy, and image side and forward overlap at Herbert Run (n = 82). Values are mean and standard deviation for all replicates within each level. Significant differences in mean values are indicated with p-value for lighting or correlation for altitude and overlap. ‘NS’ indicates no significant trend at the α = 0.05 level. All results are for Ecosynth point clouds produced in Photoscan v0.91.

| Metric | Lighting: clear | Lighting: cloudy | Lighting: p < | Altitude above canopy: 20 m | 40 m | 60 m | 80 m | Altitude: R2 |
|---|---|---|---|---|---|---|---|---|
| N | 43 | 39 | | 9 | 15 | 17 | 41 | |
| Path-XY error, RMSE (m) | 1.2 (0.6) | 1.4 (1.3) | NS | 0.61 (0.25) | 1.0 (0.5) | 1.2 (0.7) | 1.6 (1.2) | 0.98 |
| Path-Z error, RMSE (m) | 0.44 (0.13) | 0.44 (0.12) | NS | 0.4 (0.1) | 0.5 (0.1) | 0.4 (0.1) | 0.5 (0.1) | NS |
| ICP-XY error, RMSE (m) | 1.8 (0.8) | 2.3 (1.2) | 0.05 | 2.2 (1.1) | 1.8 (0.7) | 2.2 (1.2) | 2.0 (1.2) | NS |
| ICP-Z error, RMSE (m) | 2.0 (1.0) | 3.8 (1.7) | 0.00001 | 3.4 (0.9) | 2.7 (1.2) | 2.4 (1.5) | 3.0 (1.9) | NS |
| LLED, MAD (m) | 2.2 (1.3) | 3.8 (1.9) | 0.00001 | 3.2 (0.9) | 3.4 (1.2) | 2.4 (1.6) | 3.0 (2.1) | NS |
| Ecosynth TCH to field RMSE (m) | 4.2 (0.6) | 4.3 (0.6) | NS | 5.3 (0.6) | 4.2 (0.3) | 4.2 (0.3) | 4.0 (0.4) | NS |
| Ecosynth to LIDAR TCH RMSE (m) | 2.5 (0.6) | 2.5 (0.7) | NS | 2.2 (0.4) | 2.5 (0.7) | 2.3 (0.6) | 2.6 (0.7) | NS |
| Point density (points·m−2) | 33 (14) | 43 (27) | 0.05 | 80 (24) | 53 (13) | 38 (7) | 23 (9) | 0.97 |
| Canopy penetration (% CV) | 18 (3) | 16 (2) | 0.01 | 17 (2) | 16 (2) | 17 (2) | 17 (2) | NS |
| Average computation time (hours) | 44 (38) | 49 (46) | NS | 104 (14) | 70 (59) | 53 (33) | 23 (16) | NS |

| Metric | Image side overlap: 20% | 40% | 60% | 80% | Side overlap: R2 | Image forward overlap a: 96% | 60% | Forward overlap: R2 |
|---|---|---|---|---|---|---|---|---|
| N | 10 | 10 | 29 | 33 | | 5 | 5 | |
| Path-XY error, RMSE (m) | 1.9 (1.3) | 2.2 (1.7) | 1.2 (0.6) | 0.9 (0.4) | NS | 1.3 (0.2) | 1.7 (0.2) | 0.65 |
| Path-Z error, RMSE (m) | 0.5 (0.1) | 0.4 (0.1) | 0.5 (0.1) | 0.4 (0.1) | NS | 0.36 (0.1) | 0.41 (0.1) | 0.88 |
| ICP-XY error, RMSE (m) | 2.1 (1.3) | 2.8 (1.5) | 1.7 (0.8) | 2.1 (1.0) | NS | 1.7 (0.3) | 1.9 (0.3) | NS |
| ICP-Z error, RMSE (m) | 2.3 (1.5) | 3.3 (2.0) | 2.6 (1.2) | 3.1 (1.8) | NS | 1.9 (0.8) | 2.2 (1.1) | 0.41 |
| LLED, MAD (m) | 2.0 (1.5) | 3.4 (2.0) | 3.0 (1.4) | 3.2 (2.0) | NS | 2.1 (1.2) | 2.5 (1.5) | 0.47 |
| Ecosynth TCH to field RMSE (m) | 4.1 (0.2) | 4.5 (0.5) | 4.1 (0.3) | 4.4 (0.8) | NS | 3.6 (0.1) | 7.0 (0.3) | 1.0 |
| Ecosynth to LIDAR TCH RMSE (m) | 2.6 (0.7) | 2.2 (0.6) | 2.5 (0.6) | 2.6 (0.7) | NS | 3.4 (0.6) | 2.7 (0.1) | NS |
| Point density (points·m−2) | 14 (0.5) | 18 (0.7) | 34 (10) | 54 (23) | 0.93 | 36 (1) | 0.8 (0.1) | 0.67 |
| Canopy penetration (% CV) | 15 (3) | 17 (3) | 17 (3) | 18 (2) | 0.93 | 18 (0.02) | 0.02 (0.01) | 0.91 |
| Average computation time (hours) | 8 (0.7) | 10 (0.5) | 26 (3) | 87 (38) | 0.93 | 45 (1.5) | 0.5 (0.01) | 0.91 |

a Image forward overlap was tested at decreasing increments of ≈ 4% (see Section 2.1.2), but only the largest and smallest levels are shown here to highlight the observed pattern.

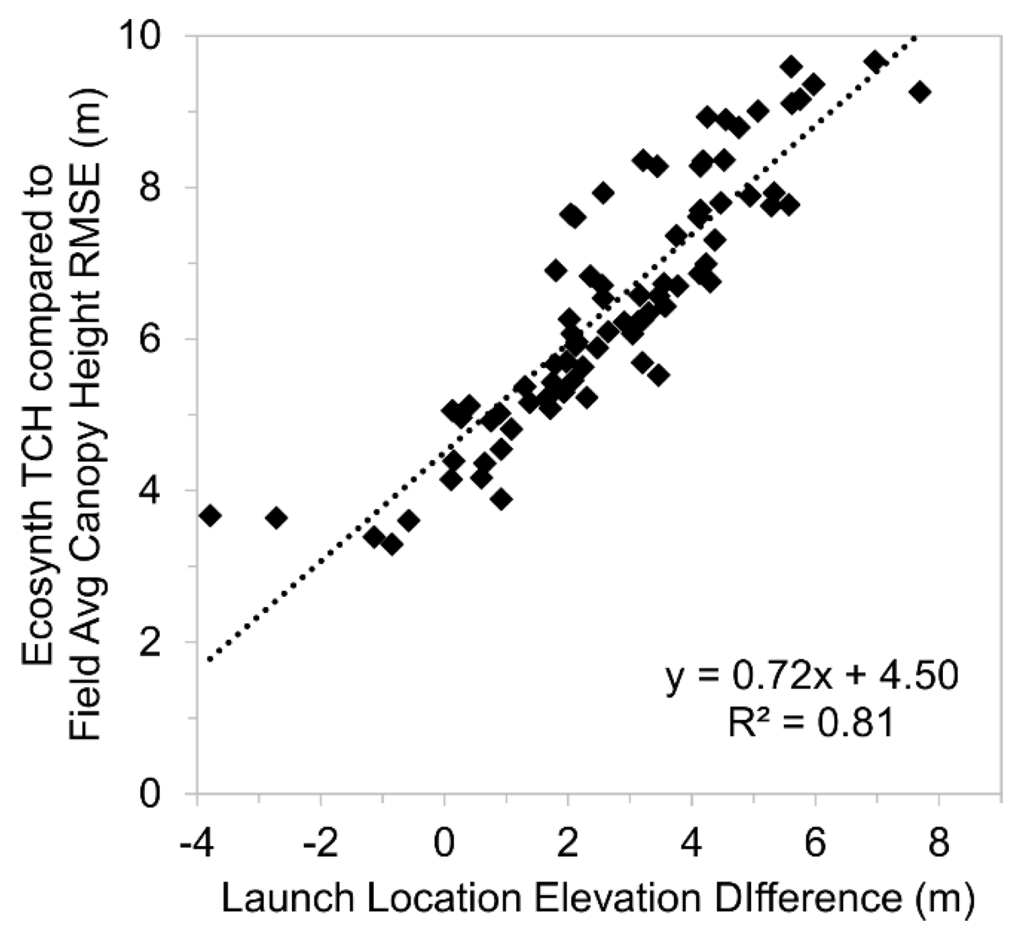

3.2. Canopy Structure and Canopy Sampling

Across all replicates at Herbert Run (n = 82) Ecosynth TCH error (RMSE) relative to field measurements was highly correlated with the vertical positioning of the Ecosynth point cloud relative to the LIDAR dataset used for the DTM (LLED, R2 = 0.81, Figure 2; ICP-Z error, R2 = 0.69, Figure S2). The absolute value of LLED (LLED-MAD) was highly correlated with ICP-Z (R2 = 0.85, Figure S2), suggesting that these two values may serve as comparable diagnostics for characterizing point cloud quality, with LLED being relatively easy to compute across a range of applications and sites: e.g., when a LIDAR DTM is unavailable and heights are calculated from other DTM sources including from GPS [] or satellite topography []. Given this, all point clouds were corrected for this offset by adding the value of LLED to each point cloud point elevation value prior to any further analysis. After this correction, there were no significant differences in the error between Ecosynth TCH and field or LIDAR measurements at different levels of cloud cover, altitude, and side photographic overlap (Table 1). Across all replicates at different levels of lighting, altitude, and side overlap, Ecosynth TCH showed an average of 4.3 m RMSE relative to field measurements and 2.5 m RMSE relative to LIDAR TCH.
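The LLED offset and its application can be sketched as follows. The sign convention (DTM mean minus point cloud mean, so that adding the offset aligns the cloud with the DTM) is an assumption chosen to be consistent with the correction described above, as is sampling the DTM at the point locations inside the 3 m × 3 m window. The function names are hypothetical.

```python
from statistics import mean


def launch_location_elevation_difference(points, launch_xy, dtm_elevation,
                                         half_width=1.5):
    """LLED over a square window (default 3 m x 3 m) centered on the launch site.

    Assumed sign convention: DTM mean minus point cloud mean.
    points: iterable of (x, y, z); dtm_elevation: callable (x, y) -> ground z.
    """
    lx, ly = launch_xy
    window = [
        (x, y, z) for x, y, z in points
        if abs(x - lx) <= half_width and abs(y - ly) <= half_width
    ]
    if not window:
        raise ValueError("no points near the launch location")
    cloud_mean = mean(z for _, _, z in window)
    dtm_mean = mean(dtm_elevation(x, y) for x, y, _ in window)
    return dtm_mean - cloud_mean


def apply_vertical_offset(points, offset):
    """Add a constant vertical offset to every point elevation."""
    return [(x, y, z + offset) for x, y, z in points]


if __name__ == "__main__":
    cloud = [(0.0, 0.0, 52.0), (1.0, 1.0, 53.0), (10.0, 10.0, 70.0)]
    flat_dtm = lambda x, y: 50.0  # hypothetical flat terrain at 50 m
    lled = launch_location_elevation_difference(cloud, (0.0, 0.0), flat_dtm)
    print(lled, apply_vertical_offset(cloud, lled))
```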

Figure 2.

Relationship between the error in Ecosynth top-of-canopy height (TCH) estimates of field canopy height and the displacement of the Ecosynth point cloud relative to the digital terrain model (DTM) used for extracting heights, as measured by the value Launch Location Elevation Difference (LLED) in meters (n = 82).

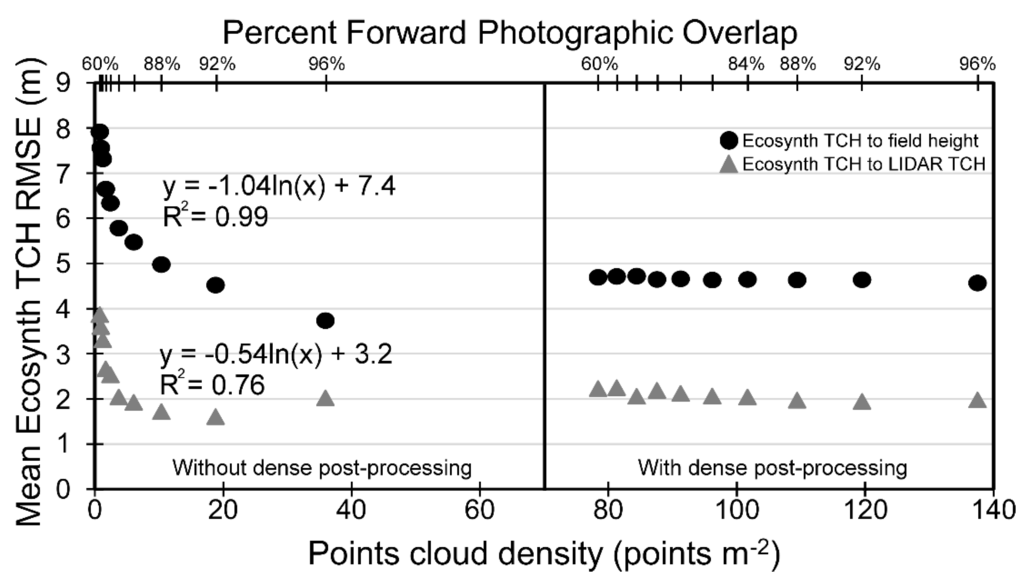

Error in Ecosynth TCH relative to field measurements doubled with decreased forward photographic overlap (R2 = 1.0, 3.6 m to 7.0 m, Table 1). This effect appeared to be explained by rapidly decreasing point cloud density (PD) as a function of forward overlap. RMSE of Ecosynth TCH was negatively correlated with the logarithm of PD for both field and LIDAR estimates (R2 = 0.99 & 0.76, respectively; Figure 3). While all replicates were processed in v0.91 of Photoscan, at the time of writing a new version of Photoscan was available (v1.0.4) offering a new option for applying a dense point cloud post-processing to the point cloud after the initial photo alignment and sparse bundle adjustment. This stage produces a dense point cloud based on dense matching algorithms similar to those commonly employed in other open-source SFM image processing pipelines [,,,,,,,]. Dense processing on point clouds with reduced overlap significantly increased PD (≈80–140 points·m−2) and removed TCH error associated with changing forward overlap relative to field and LIDAR measurements (Figure 3).
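The relationship between TCH error and the logarithm of PD can be examined with an ordinary least-squares fit. The sketch below computes the slope and R2 of RMSE against ln(PD); the function name is hypothetical and the values in the usage example are synthetic, not data from this study.

```python
import math


def log_linear_fit(point_density, tch_rmse):
    """Slope and R2 of a least-squares fit of RMSE against ln(point density)."""
    x = [math.log(d) for d in point_density]
    y = list(tch_rmse)
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    r_squared = sxy ** 2 / (sxx * syy)
    return slope, r_squared


if __name__ == "__main__":
    # Synthetic example: error falls by a fixed amount per tenfold PD increase
    density = [1.0, 10.0, 100.0]
    error = [7.0, 5.0, 3.0]
    print(log_linear_fit(density, error))
```

A negative slope with R2 near 1 in such a fit corresponds to the pattern described above, in which error grows as point density drops.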

Point cloud density in forested areas was highest under conditions of the highest amount of photographic forward and side overlap (mean 54 points·m−2 with 80% side overlap) or the lowest flight altitude (mean 80 points·m−2 at 20 m above the canopy, Table 1). By comparison, average LIDAR first return PD in forested areas was 10.8 points·m−2. While average PD was 30% higher on cloudy days versus clear days (43 vs. 33 points·m−2), this difference appears to be driven by two datasets collected at low altitude (20 m above the canopy) where average forest PD was roughly 100 points·m−2. Median PD values were not significantly different on cloudy versus clear days (36 vs. 32 points m−2; Mann-Whitney U = 960, p > 0.25).

Figure 3.

Point cloud density (PD) and Ecosynth TCH error relative to field and LIDAR TCH for a single replicate sampled from every image to every 10th image, decreasing forward photographic overlap. Top axis is forward overlap: 60%, 64%, 68%, 72%, 76%, 80%, 84%, 88%, 92%, and 96%. Left plots show error without dense post-processing, plots at right show error of the same point clouds with dense post-processing.

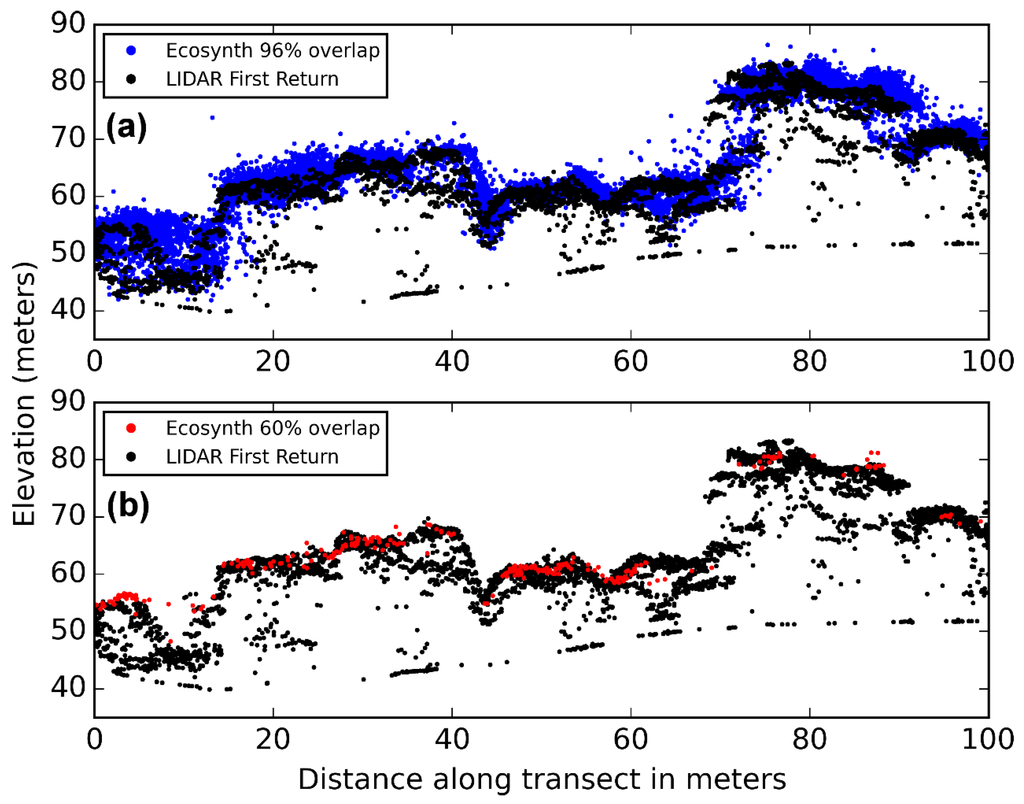

Average canopy penetration (CP) was significantly lower on cloudy days than on clear days (16% vs. 18%, p < 0.01, Table 1), meaning that Ecosynth point clouds ‘see’ up to 1 m deeper into the canopy on clear days. CP increased with increasing side overlap (15% to 18%, R2 = 0.93) and decreased with decreasing forward overlap (18% to 2%, R2 = 0.91), but was unaffected by changes in flight altitude. Average CP across all Ecosynth replicates was 17%; by comparison, average forest area CP for the LIDAR first return point cloud was 29%. This difference was significantly different from zero (one-sample t-test: t = 40.85, p < 0.05) and equates to LIDAR observing roughly 2.5–3.5 m deeper into the canopy than Ecosynth at canopy heights of 20–30 m. Across all replicates (n = 82) there was no significant relationship between PD and CP (R2 = 2 × 10−5, p = 0.9697). However, for the set of replicates where forward overlap was incrementally reduced, there was a strong relationship between CP and the logarithm of PD (R2 = 0.97), suggesting a strong underlying control on point cloud quality based on forward overlap. Differences in the effect of forward overlap on PD and CP are visualized in Figure 4 for a 100 m × 5 m swath of forest at Herbert Run viewed in cross-section for high overlap (96%, 4a) and low overlap (60%, 4b) point clouds relative to the LIDAR first return point cloud over the same area.

Figure 4.

Cross-sections of a 100 m × 5 m swath of forest at the Herbert Run site showing Ecosynth point clouds produced from high forward overlap images (96%, (a)) and low forward overlap images (60%, (b)), relative to the LIDAR first return point cloud over the same area.

3.3. Influence of Wind on Point Cloud Quality

The only statistically significant trend between wind speed (Beaufort wind force scale) and point cloud metrics at the α = 0.05 level was with Path-Z error (R2 = 0.99, p = 0.006); however, the magnitude of the difference was minimal (RMSE 0.4–0.5 m; Table S2).

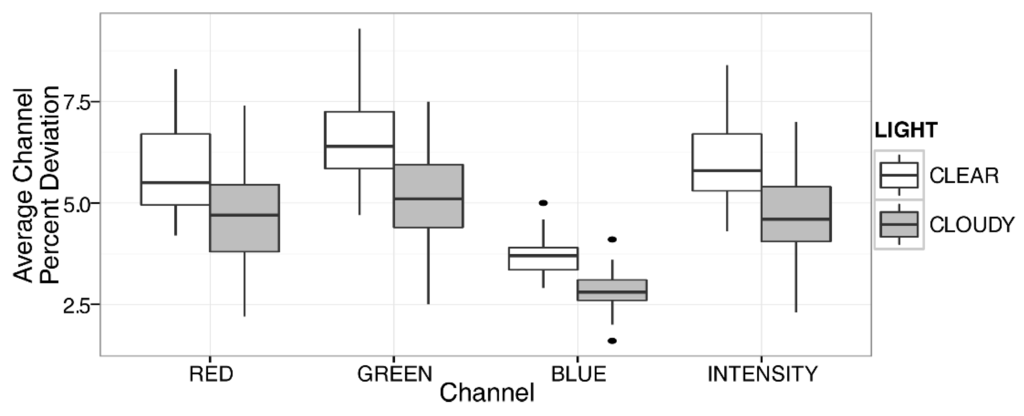

3.4. Radiometric Quality of Ecosynth Point Clouds

Average radiometric variation ranged from 2.0% to 5.9% and was correlated with mean rugosity [], the standard deviation of surface height (in meters) within landcovers (R2 = 0.74, Figure S3). Color-spectral variation and rugosity were lowest in relatively homogeneous turf areas (2.0%–3.1%) and highest in forest areas (3.3%–5.9%). Color variation also measures contrast, and forest areas were observed with lower contrast on cloudy days compared to clear days (p < 0.0001, Figure 5).
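
As a rough illustration of a radiometric variation metric of this kind, the sketch below computes the per-channel standard deviation of point colors within square horizontal bins. Several details are assumptions made for the example rather than the method used in this study: the function name `color_variation_by_bin` is hypothetical, bins are taken as 1 m × 1 m cells in the XY plane, colors are assumed to be 8-bit, and variation is expressed as a percentage of the 0–255 range.

```python
import math
from collections import defaultdict


def color_variation_by_bin(points, bin_size=1.0):
    """Per-bin, per-channel standard deviation of point colors.

    points: iterable of (x, y, z, r, g, b) with 8-bit color values.
    Returns {(ix, iy): (sd_r, sd_g, sd_b)}, each as a percent of the
    0-255 range (an assumed normalization).
    """
    bins = defaultdict(list)
    for x, y, _z, r, g, b in points:
        key = (math.floor(x / bin_size), math.floor(y / bin_size))
        bins[key].append((r, g, b))

    result = {}
    for key, colors in bins.items():
        if len(colors) < 2:
            continue  # a sample standard deviation needs at least two points
        sds = []
        for channel in zip(*colors):
            mean = sum(channel) / len(channel)
            var = sum((c - mean) ** 2 for c in channel) / (len(channel) - 1)
            sds.append(100.0 * math.sqrt(var) / 255.0)
        result[key] = tuple(sds)
    return result
```

Averaging the per-bin values over a landcover class would then give a single variation figure per channel for that class.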

Figure 5.

Average variation or contrast of point cloud point color values per channel within forest areas under clear and cloudy lighting conditions. All per channel differences in average variation under different lighting were significantly different based on analysis of variance (p < 0.0001).

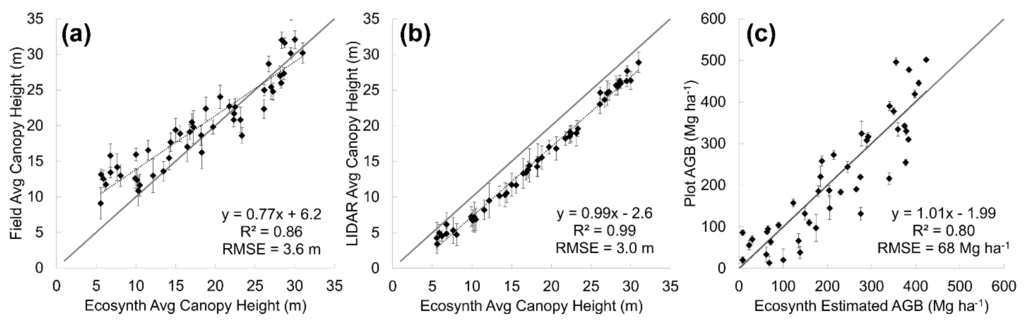

3.5. Optimal Conditions for Ecosynth UAV-SFM Remote Sensing of Forest Structure

Given these results, optimal UAV-SFM remote sensing scanning conditions were identified as those days on which data were collected under clear skies and with maximum photographic overlap (>60% side photographic overlap). An altitude of 80 m was chosen because it provides the greatest overall area for data collection based on increased camera field of view (Table S1). Under these optimal scanning conditions (clear skies, 80 m altitude above the canopy, 80% side photographic overlap; n = 7, Table S1), Ecosynth TCH estimated field measured canopy height with 3.6 m RMSE at Herbert Run and SERC (Table 2, Figure 6a). At all sites, Ecosynth TCH estimates had relatively low error compared to LIDAR TCH (1.6–3.0 m RMSE) and generally high correlation values (R2 = 0.89–0.99; Table 2, Figure 6b). The correlation between Ecosynth TCH and field heights was low at SERC due to low variation in field heights []. Error in tree height estimates was high at Knoll sites for both Ecosynth and LIDAR (7.9 m and 6.4 m, respectively). Estimates of aboveground biomass (AGB) derived from TCH height estimates via allometric modeling at Herbert Run were also highly correlated with field estimates of AGB (R2 = 0.80) but had relatively high error (68 Mg ha−1, or roughly 33% of site mean AGB; Figure 6c). LIDAR TCH was similarly well correlated with field measured average canopy height (R2 = 0.88) but had higher error than Ecosynth TCH (RMSE = 5.5 m; Figure S4). Across all 82 replicates at Herbert Run there was a weak but significant relationship between solar angle and Ecosynth TCH error (R2 = 0.26, p < 0.00003, Figure S5), whether flights were carried out on clear or cloudy days.
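
The two summary statistics used throughout this section, RMSE and R2, can be computed from paired plot-level heights as sketched below. This is an illustrative sketch only: the function names are hypothetical, R2 is taken here as the squared Pearson correlation (equivalent to the R2 of a simple linear model), and the example heights are invented for demonstration and are not plot data from this study.

```python
import math


def rmse(estimates, references):
    """Root mean square error between paired estimates and references."""
    n = len(estimates)
    return math.sqrt(sum((e - r) ** 2 for e, r in zip(estimates, references)) / n)


def r_squared(estimates, references):
    """Squared Pearson correlation between paired estimates and references."""
    n = len(estimates)
    mean_e = sum(estimates) / n
    mean_r = sum(references) / n
    see = sum((e - mean_e) ** 2 for e in estimates)
    srr = sum((r - mean_r) ** 2 for r in references)
    ser = sum((e - mean_e) * (r - mean_r) for e, r in zip(estimates, references))
    return (ser * ser) / (see * srr)


# Hypothetical plot heights in meters (NOT data from this study).
tch_estimates = [20.0, 25.0, 30.0]
field_heights = [23.0, 28.0, 33.0]
print(rmse(tch_estimates, field_heights), r_squared(tch_estimates, field_heights))
```

The example shows why both statistics are reported together: a constant 3 m offset leaves the correlation perfect while still producing a 3 m RMSE.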

Table 2.

Ecosynth point cloud quality traits and metrics at Herbert Run, Knoll and SERC under optimal parameters: clear skies, 80 m altitude, 80% side overlap processed with Photoscan v0.91.

| Point Cloud Traits and Metrics (Average) | Herbert Run | Knoll | SERC |
|---|---|---|---|
| N | 7 | 1 | 1 |
| Path-XY Error RMSE (m) | 1.1 | 0.7 | 1.0 |
| Path-Z Error RMSE (m) | 0.5 | 0.6 | 0.4 |
| ICP-XY Error RMSE (m) | 1.7 | 0.5 | 1.8 |
| ICP-Z Error RMSE (m) | 1.6 | 4.0 | 1.8 |
| Launch Location Elevation Difference (m) | 1.2 | 3.1 | 2.1 |
| Ecosynth TCH to Field Height RMSE (m) | 3.6 | 5.2 | 3.6 |
| Ecosynth TCH to LIDAR TCH RMSE (m) | 3.0 | 1.6 | 3.2 |
| Ecosynth TCH to Field Height R2 | 0.86 | 0.79 | 0.19 |
| Ecosynth TCH to LIDAR TCH R2 | 0.99 | 0.99 | 0.89 |
| Average Forest Point Density (points m−2) | 35 | 33 | 39 |
| Average Forest Canopy Penetration (% CV) | 20 | 24 | 11 |
| Computation Time (hours) | 45 | 50 | 15 |

Figure 6.

Linear models of Ecosynth average TCH across optimal replicates (n = 7) to field (a) and LIDAR TCH (b), average Ecosynth TCH estimated above ground biomass density (AGB Mg·ha−1) relative to field estimated AGB (c). Solid line is one to one line, error bars are standard deviation, dotted lines are linear model.

3.6. Influence of Computation on Ecosynth Point Cloud Quality

Across all replicates, computation time was highly correlated with roughly the square of the number of images (R2 = 0.96, Figure S6), requiring 0.5–164 hours of computation. Computation time decreased with better computation resources (faster CPU and more RAM), but otherwise point clouds produced from the same photos on different computers had identical quality traits and metrics (Table S3). Point cloud quality traits and metrics were also similar when image GSD was increased from 3.4 cm to 4.7 cm (10 to 5 MP); however, at GSD greater than 5 cm (≤2.5 MP) reconstructions failed, as represented by very large error values sometimes exceeding 100 m RMSE (Table S4).
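
A scaling relationship like the one above, where computation time grows with roughly the square of the number of images, can be estimated by fitting a power law in log-log space. The sketch below is a generic illustration under that assumption: `fit_power_law` is a hypothetical helper, and the image counts and times are invented values, not the data behind Figure S6.

```python
import math


def fit_power_law(n_images, hours):
    """Fit hours = coefficient * n_images ** exponent by least squares
    on log-transformed values. Returns (coefficient, exponent)."""
    xs = [math.log(n) for n in n_images]
    ys = [math.log(h) for h in hours]
    count = len(xs)
    mean_x = sum(xs) / count
    mean_y = sum(ys) / count
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    exponent = sxy / sxx
    coefficient = math.exp(mean_y - exponent * mean_x)
    return coefficient, exponent


# Hypothetical values (NOT from this study): time quadruples as images double.
coefficient, exponent = fit_power_law([100, 200, 400], [1.0, 4.0, 16.0])
print(f"hours ~ {coefficient:.2e} * N^{exponent:.2f}")
```

An exponent near 2 from such a fit would be consistent with the quadratic scaling reported here.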

Point cloud quality traits and metrics were relatively constant across different versions of Photoscan, with noticeable differences primarily in point cloud density and in computation time, potentially reflecting changes in the algorithm designed to enhance performance (Table 3). Applying dense post-processing, however, did change point cloud quality traits and metrics relative to the point cloud produced from the same photos without such processing. With dense post-processing, Ecosynth TCH error relative to field measurements increased by 0.5 m while error relative to LIDAR TCH decreased by 0.9 m and, while PD was 4 times greater, as expected, CP actually decreased by roughly 40% (Table 3). Both the sparse and dense point clouds produced in the free and open source Ecosynther SFM algorithm had point cloud quality traits and metrics similar to those from Photoscan (v1.0.4 sparse vs. dense; Table 3). Measures of point cloud positioning were comparable between Ecosynther and Photoscan, as were measures of error in TCH estimates relative to field measurements and LIDAR TCH. Point cloud density of Ecosynther dense point clouds was 8 times greater than Ecosynther sparse point clouds (7 to 59 points·m−2) and roughly 40% that of Photoscan dense point clouds. Forest canopy penetration of Ecosynther point clouds was lower than that typically observed for Photoscan point clouds (≈18%) and was improved by dense processing (9% to 13%); however, this was the opposite of the effect observed with dense processing on Photoscan point clouds. As was the case with Photoscan, dense processing on Ecosynther point clouds resulted in increased error in TCH estimates relative to field measurements (3.8 m to 5.3 m RMSE) and decreased error relative to LIDAR TCH (2.9 m to 2.0 m).
In total, producing a dense SFM point cloud required 56 hours of computation for Photoscan (v1.0.4) and 66 hours for Ecosynther; however, Ecosynther was run on a computer with fewer system resources (CPU, RAM), which is generally expected to reduce computation speed (Table S3).

Table 3.

Comparison of Ecosynth point cloud quality traits and metrics for a single replicate processed under different structure from motion (SFM) algorithms.

| SFM Algorithm | Photoscan v0.84 a | Photoscan v0.91 a | Photoscan v1.0.4 a Sparse | Photoscan v1.0.4 a Dense | Ecosynther v1.0 b Sparse | Ecosynther v1.0 b Dense |
|---|---|---|---|---|---|---|
| Path-XY Error RMSE (m) | 1.1 | 1.1 | 1.1 | 1.1 | 1.1 | 1.1 |
| Path-Z Error RMSE (m) | 0.3 | 0.3 | 0.3 | 0.3 | 0.7 | 0.7 |
| ICP-XY Error RMSE (m) | 1.6 | 1.6 | 1.6 | 1.6 | 1.9 | 1.9 |
| ICP-Z Error RMSE (m) | 1.0 | 0.9 | 0.9 | 0.9 | 0.8 | 0.8 |
| Launch Location Elevation Difference (m) | 0.9 | 0.9 | 0.9 | 0.9 | 0.6 | 0.6 |
| Ecosynth TCH to Field Height RMSE (m) | 3.8 | 3.9 | 3.9 | 4.6 | 3.8 | 5.3 |
| Ecosynth TCH to LIDAR TCH RMSE (m) | 3.4 | 3.0 | 2.9 | 2.0 | 2.9 | 2.0 |
| Forest Point Cloud Density (points m−2) | 88 | 36 | 34 | 138 | 7 | 59 |
| Forest Canopy Penetration (% CV) | 18 | 18 | 18 | 11 | 9 | 13 |
| Computation Time (hours) | 30 | 45 | 16 | +40 c | 61 | +5 c |

4. Discussion

By looking at variation in Ecosynth point cloud quality traits and metrics across a large number of flights carried out under different conditions (n = 82), this research is able to suggest optimal parameters for collecting UAV-SFM remote sensing measurements of forest structure. Ecosynth TCH estimates relative to field and LIDAR were highly influenced by errors in point cloud positioning and in point cloud density, both of which were improved by flying on clear days and with high photographic overlap. Optimal conditions of clear skies, 80% side photographic overlap, and 80 m altitude above the canopy resulted in estimates of canopy height that were highly correlated with both field and LIDAR estimates of canopy height (R2 = 0.86 and 0.99, respectively). While it is important to note that the optimal levels arrived at in this study are constrained in part by the type of equipment used (e.g., a higher resolution camera will produce point clouds with greater PD), the examination of the relationship between error and different flight configurations provides useful insights into the functioning of UAV-SFM remote sensing and suggests useful areas for future applications and research.

4.1. The Importance of Accurate DTM Alignment

Accurate co-registration of canopy surface models and DTMs from different data sources represents a major challenge to accurately estimating canopy structure [,,,] and remains a significant challenge for using Ecosynth UAV-SFM remote sensing to estimate canopy height [,]. The metric Launch Location Elevation Difference (LLED) was used to measure co-registration accuracy and was found to be highly correlated with overall error in canopy height measurements (R2 = 0.81, Figure 2). Such a measure is easily computed relative to any DTM that would be used for estimating canopy heights from Ecosynth point clouds, including old LIDAR DTMs that no longer accurately portray canopy height [,], satellite remote sensing based DTMs [], or even sub-meter precision GPS when no LIDAR is present, a situation not uncommon in many parts of the world []. Even so, users still need to be aware of potential errors introduced in height measurements based on the quality of the DTM [].

4.2. The Importance of Image Overlap

Similar to prior research in the use of LIDAR for measuring forests [,,] this study found a strong relationship between canopy height metrics, 3D point cloud density (PD), and canopy penetration (CP). Both PD and CP were strongly related to forward photographic overlap (Figure 3, Figure 4). The relationship between photographic overlap and CP is related to the number of possible views and view-angles on a given point in space. Prior studies that used high-overlap, multiple-view stereo photogrammetric cameras for mapping canopy structure reveal similar results, with a high overlap (90%) ‘multi-view’ photogrammetric model penetrating to the forest floor in canopy gaps while the traditional-overlap (60%) stereo photogrammetric model penetrated roughly half the depth into gaps [,].

PD may be affected by forward photographic overlap as a function of the view-angle on the same point in space as viewed from multiple images. Prior research found that point matching stability begins to decrease rapidly after view-angle exceeds 20° []. A decreased number of feature matches could then lead to the observed decrease in PD, resulting in reduced sampling of the canopy and potentially increased error in canopy height estimates relative to field measurements []. Based on the camera and UAV settings used here, view-angles exceeded 20° at a flight altitude of 80 m above the canopy when forward overlap was less than 72%. Even so, dense post-processing of point clouds increased PD, resulting in relatively constant height error regardless of forward overlap (Figure 3). These results suggest that the dense post-processing step can be used to reduce error in height estimates associated with low point cloud density and low forward photographic overlap.

Not surprisingly, point cloud density was also strongly related to flight altitude, which directly reflects the decrease in GSD (finer resolution) with decreasing altitude (R2 = 0.97, Table 1; Table S1). Similar improvements in GSD, and therefore PD, might be achieved simply by flying at the same altitude with a higher resolution camera. Even so, care should be taken in flight planning to maximize forward overlap based on UAV speed, camera speed and field of view, and flight altitude. A camera with a higher resolution but narrower field of view would result in a finer GSD but decreased overlap for the same altitude and track spacing settings. Depending on the software and post-processing settings, PD could vary by as much as 4–8 times for the same dataset with sometimes half or double the amount of CP, which resulted in large differences in canopy height estimates (Table 3). As of 2015-08-26 the latest version of Photoscan was 1.1.6, which may also produce point clouds with different levels of point cloud quality. While this work did not focus on the potential range of settings that might be available in SFM software packages, future research should carefully consider how these parameters (e.g., quality settings and thresholds, number of photos to include, etc.) will impact canopy height estimates.
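
The dependence of forward overlap and GSD on UAV speed, camera interval, field of view, and altitude can be sketched with simple pinhole geometry. The sketch assumes a nadir-pointing camera over a flat surface at the stated distance below the aircraft (for forests, the top of the canopy). All function and parameter names are hypothetical and the example numbers are not the flight settings used in this study.

```python
import math


def ground_footprint(altitude_m, fov_deg):
    """Ground distance covered by one image along one axis (meters)."""
    return 2.0 * altitude_m * math.tan(math.radians(fov_deg) / 2.0)


def forward_overlap(altitude_m, along_track_fov_deg, speed_m_s, interval_s):
    """Fraction of each image shared with the next image along the track."""
    footprint = ground_footprint(altitude_m, along_track_fov_deg)
    baseline = speed_m_s * interval_s  # distance flown between exposures
    return max(0.0, 1.0 - baseline / footprint)


def ground_sample_distance(altitude_m, fov_deg, pixels_across):
    """Approximate ground size of one pixel (meters per pixel)."""
    return ground_footprint(altitude_m, fov_deg) / pixels_across


# Hypothetical settings: 50 m above the surface, 90 degree field of view,
# 5 m/s ground speed, one photo every 2 s, 4000 pixels along the track.
print(forward_overlap(50.0, 90.0, 5.0, 2.0))
print(ground_sample_distance(50.0, 90.0, 4000))
```

Under these assumptions, narrowing the field of view at a fixed altitude shrinks the footprint, which yields a finer GSD but lower overlap for the same speed and camera interval.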

4.3. The Importance of Lighting, Contrast, and Radiometric Quality

Ecosynth point cloud radiometric color quality was evaluated based on the standard deviation of point colors within 1 m bins, which can also be interpreted as a measure of color contrast []. Image contrast was reduced on cloudy days due to the lack of direct sunlight (Figure 5). Reduced image contrast can have a strong influence on the stability of image features [], resulting in increasing error in point cloud point position, as was observed in this study. Even so, after accounting for this error via LLED correction, estimates of canopy height were not significantly different on cloudy versus clear days. This suggests that UAV-SFM remote sensing is a viable option for mapping canopy structure even under cloudy conditions when it is otherwise not possible using satellite or airborne remote sensing [,]. While direct lighting increases contrast, it will also lead to an increase in the amount of shadows, as will flying on sunny days in the morning and afternoon with low solar angles. We found a weak but significant relationship between Ecosynth TCH error and solar angle (R2 = 0.26, Figure S5) whether on clear or cloudy days. Low solar angles on sunny days will produce larger shadows, potentially leading to an under-sampling of the canopy surface. However, the same solar angles on cloudy days should produce minimal shadows but also less light overall, potentially leading to reduced contrast and increased error (Table 1, Figure 5). That error was somewhat higher at low solar angles on both clear and cloudy days suggests that there are potentially multiple mechanisms linking light, SFM behavior, and point cloud quality that warrant further investigation. For example, it is unknown how image features and feature matching will respond to differences in shadow, solar angle, and light intensity.
In addition, techniques such as high-dynamic-range (HDR) or ‘bracketed’ digital imaging should be explored for increasing image dynamic range and reducing the appearance of shadows [].

4.4. The Importance of Wind Speed

This study showed no strong effect of wind speed on point cloud quality traits and metrics. At average wind speeds up to 7.9 m·s−1 the UAV was able to carry out missions without running out of battery power. However, high wind speeds should be avoided as they will cause the UAV to use more power during flight and generally reduce UAV stability. Increased wind speed may also increase error in 3D point cloud quality by introducing error into the SFM-bundle adjustment step that assumes that the only thing moving is the camera and not the image features (leaves fluttering or branches swaying). Increased wind speed, and movement of branches, may also lead to decreased point cloud density due to feature matches being rejected for lack of consistency. Similarly, increased wind might lead to an underestimation in the TCH estimate error due to the fact that small outer branches at the top of the canopy are more exposed to the wind, potentially increasing the likelihood of feature rejection. However, further work is needed to test these hypotheses. The multirotor UAVs used here are generally more stable under varying wind conditions compared to fixed-wing UAVs, and these results may not be applicable when the latter platform is used for SFM forest remote sensing.

4.5. Factors Influencing Tree Height Estimates

Ecosynth TCH underestimated field measured average maximum canopy height by 3.6 m RMSE, as did LIDAR by 5.5 m RMSE. However, Ecosynth and LIDAR TCH estimates of height were highly correlated (R2 = 0.99), with Ecosynth consistently overestimating LIDAR TCH by roughly 3 m. These results may be explained in part by error in field measurements and also the way in which height metrics are obtained from CHMs. Field-based estimates of tree height have been found to have significant error (1 m to >6 m) owing to the challenges in observing the top of trees from the ground below [,,]. The top-of-canopy height (TCH) measure represents the average height over the entire outer surface of the canopy as observed by the remote sensing instrument [], whether Ecosynth or LIDAR. It is not surprising then that TCH underestimated field estimated height in this study since field measures are from the tallest observed point for the largest trees in a plot and most of the canopy surface observed by LIDAR or Ecosynth is below these tallest points, whether for a single crown or multiple crowns. Indeed, as more of the outer canopy surface was sampled in dense point clouds produced by Photoscan and Ecosynther, Ecosynth TCH error increased relative to field observations and decreased relative to LIDAR TCH, further emphasizing potential discrepancies between characterizing canopy height as the average of a surface versus the average of several fixed points. Differences in canopy height error may be explained by differences in canopy penetration, a finding similar to that observed by others [,,]. Here, LIDAR point clouds penetrated roughly 2.5–3.5 m further into the canopy than those from Ecosynth, which is comparable to the 3.0 m RMSE over-prediction of TCH by Ecosynth relative to LIDAR and the difference in RMSE relative to field measurements (3.6 m and 5.5 m, respectively). Differences in TCH may also be explained by co-registration errors.
Vertical co-registration error was accounted for by applying the offset LLED value in point clouds prior to computing TCH (Section 3.2) but corrections to horizontal co-registration were not addressed. Average absolute horizontal accuracy of point clouds was roughly 2 m RMSE. Prior research using simulated LIDAR data found that increasing horizontal co-registration error did increase error in LIDAR height estimates, but the magnitude of this difference was small (<1 m height error at 5 m planimetric error) []. The use of GCPs would improve registration overall, as would the use of higher accuracy UAV-GPS, for example a new system of relatively inexpensive (US$1000) and lightweight (32 g) Piksi RTK-GPS modules [].
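
To make the distinction between TCH and field-measured maximum heights concrete, the sketch below computes a top-of-canopy height as the mean of a gridded canopy height model after a vertical offset is applied to the point cloud. It is a simplified illustration and not the Ecosynth processing code: the function name, the 1 m cell size, the use of the highest point per cell as the canopy surface, and the sign convention for the LLED offset are all assumptions, and `terrain_elevation` stands in for a DTM lookup.

```python
import math


def top_of_canopy_height(points, terrain_elevation, lled_offset=0.0, cell_size=1.0):
    """Mean canopy surface height over gridded cells (meters).

    points: iterable of (x, y, z) point cloud coordinates.
    terrain_elevation: callable (x, y) -> DTM elevation (hypothetical lookup).
    lled_offset: vertical offset subtracted from z before heights are
        computed (assumed sign convention).
    """
    cell_max = {}
    for x, y, z in points:
        height = (z - lled_offset) - terrain_elevation(x, y)
        key = (math.floor(x / cell_size), math.floor(y / cell_size))
        # Keep the highest point in each cell as the outer canopy surface.
        if key not in cell_max or height > cell_max[key]:
            cell_max[key] = height
    return sum(cell_max.values()) / len(cell_max)


# Hypothetical points over flat terrain at 100 m elevation (NOT study data).
example_points = [(0.5, 0.5, 121.0), (0.6, 0.4, 115.0), (1.5, 0.5, 126.0)]
print(top_of_canopy_height(example_points, lambda x, y: 100.0, lled_offset=1.0))
```

In this example the tallest single point is 25 m above the terrain while the surface average is lower, which mirrors why a surface-averaged TCH tends to fall below field measurements taken at the tops of the largest trees.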

4.6. Future Research: The Path forward for UAV-SFM Remote Sensing

4.6.1. Optimizing Data Collection with Computation Time

While this research was able to arrive at an optimal data collection configuration, it remains unclear if other levels of overlap and altitude, combined with different levels of post-processing and image preparation, would result in improved forest canopy metrics or data collections. For example, this research shows that high photographic overlap is desirable for reducing error in canopy structure measurements, yet high overlap requires more images, resulting in rapidly increasing computation time, O(N²). It is unclear then if there exists a combination of reduced image resolution and forward overlap, plus different levels of SFM computation, that could produce point clouds of similar or better quality but with reduced computation requirements. Flight configurations that minimize the number of images while still providing a large amount of photographic overlap will provide an optimal trade-off between point cloud quality and computation time. In addition, computation time can be reduced by supplying SFM with more information prior to photo alignment: for example, using the ‘reference’ mode for ‘Align Photos’ in Photoscan along with estimates of camera location from UAV GPS will produce results more quickly than ‘generic’ mode operating without such data. The latter ‘generic’ mode was used here to facilitate scripted automation of point cloud post-processing following Ecosynth georeferencing techniques [].
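
The trade-off between overlap and computation can be sketched numerically: the number of images along a flight line grows as overlap increases, and computation time grows with roughly the square of the image count. The helper names and example values below are hypothetical, and the quadratic scaling is taken from the relationship reported in this study rather than from any property of a specific SFM package.

```python
import math


def images_per_line(line_length_m, footprint_m, forward_overlap):
    """Approximate number of images needed to cover one flight line."""
    spacing = footprint_m * (1.0 - forward_overlap)  # distance between exposures
    # Small tolerance guards against floating point noise before rounding up.
    return math.ceil(line_length_m / spacing - 1e-9) + 1


def relative_compute_time(n_images, n_reference):
    """Computation time relative to a reference image count, assuming
    time scales with the square of the number of images."""
    return (n_images / n_reference) ** 2


# Hypothetical 1000 m flight line with a 100 m along-track image footprint.
low = images_per_line(1000.0, 100.0, 0.50)
high = images_per_line(1000.0, 100.0, 0.75)
print(low, high, relative_compute_time(high, low))
```

Under these assumptions, raising forward overlap from 50% to 75% roughly doubles the image count and so roughly quadruples the expected computation time.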

4.6.2. The Role of the Camera Sensor; Multi and Hyperspectral Structure from Motion

Further research should also examine the role that the camera sensor plays in UAV-SFM reconstructions of forest canopies. Here, the same camera with the same calibration settings was used for all data collection, but it is unclear if different camera settings or even image post-processing could improve or change results. Varying camera exposure settings or even capturing multiple exposure settings over the same area [] may produce images with improved contrast, leading to potentially improved image matching. In addition, radiometric corrections like histogram equalization [] or image block homogenization [] may be useful to reduce the influence of variable scene lighting when it is not possible to collect images under constant lighting over the duration of entire flight []. It is also possible to carry out near-infrared remote sensing with UAVs using modified off-the-shelf digital cameras [,,] or custom light-weight multi-spectral cameras [,,] and future work should consider the potential of using such sensors for SFM mapping of canopy NDVI (Normalized Difference Vegetation Index) at high spatial resolution and in 3D, providing links between canopy structure, optical properties, and biophysical parameters. Future research should also consider the influence of different spectral bands on reconstructions of canopy structure from SFM, for example this and prior studies used RGB imagery for modeling canopy height [,], while other studies achieved comparable results using RG-NIR imagery [,], and it is not clear what, if any, effect the NIR information has on estimates of canopy structure compared to RGB alone.
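
For reference, NDVI is computed from near-infrared and red reflectance as (NIR − Red) / (NIR + Red). The sketch below applies that standard formula to individual points of a point cloud. The per-point data layout and function names are assumptions for illustration; no NIR point clouds were produced in this study.

```python
import math


def ndvi(nir, red):
    """Normalized Difference Vegetation Index for one NIR/red pair.

    Returns NaN when both values are zero, where the index is undefined.
    """
    total = nir + red
    if total == 0:
        return math.nan
    return (nir - red) / total


def point_cloud_ndvi(points):
    """points: iterable of (x, y, z, nir, red); returns (x, y, z, ndvi) tuples."""
    return [(x, y, z, ndvi(nir, red)) for x, y, z, nir, red in points]


# Hypothetical reflectance values (NOT data from this study).
print(point_cloud_ndvi([(0.0, 0.0, 20.0, 0.5, 0.1)]))
```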

4.6.3. Computer Vision Image Features: The New Pixel

Future research should more closely examine the role of image features in SFM remote sensing, an element of the ‘sensor system’ which may be as fundamental as the image pixel in optical image remote sensing or the laser spot in LIDAR remote sensing. The behavior of image features and feature matching may help to shed light on the relationship between image contrast and measures of canopy structure observed here, among other advances. Image features play a fundamental role in SFM reconstruction and many areas of computer vision and remote sensing. Briefly, image features represent a numerical descriptor of an entire image or part of an image that can be used to identify or track that same area in other images or identify how similar that area is to a reference library of other features which might include semantic or classification data about feature content. The reader is referred to several core texts about features for more information [,,,]. Recent studies have also shown the value of image features for remote sensing and ecological applications. Beijbom et al. [] used image features to automatically classify coral communities. Image features have been used for automatic detection and classification of leaves and flowers [,]. Recent research has also extended the use of image features and classification for ‘geographic image retrieval’ from high resolution remote sensing images []. Such questions could not be explored here because the closed source nature of Photoscan prohibits access to the image features. However, free and open source algorithms like Ecosynther, which had comparable point cloud traits and metrics, would allow access to image features. Ultimately, access to computer vision image features in a UAV-SFM remote sensing point cloud offers the potential for a rich new area of data collection and information extraction: point cloud classification based on image features and image content.
Merging research on image features with UAV-SFM point clouds could lead to a transformative new remote sensing fusion of 3D, color/spectral, and semantic information. This new data fusion may provide a comprehensive perspective on landscape and forest structure, composition, and quality not practical or possible with any other form of remote sensing.
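
The idea of a feature as a numerical descriptor that can be tracked across images can be illustrated with a toy matcher. The sketch below pairs descriptors from two images by Euclidean distance and discards ambiguous matches with a nearest-neighbor ratio test, a heuristic widely used in the feature matching literature. It is offered only as an illustration: it is not known to be what Photoscan or Ecosynther does internally, the function name and the 0.8 threshold are assumptions, and the two-dimensional descriptors are toy values (real descriptors typically have many more dimensions).

```python
import math


def match_features(descriptors_a, descriptors_b, ratio=0.8):
    """Match each descriptor in image A to its nearest neighbor in image B.

    A match is kept only if the nearest neighbor is clearly closer than the
    second nearest (distance ratio test). Returns a list of (index_a, index_b).
    """
    matches = []
    for i, da in enumerate(descriptors_a):
        distances = sorted((math.dist(da, db), j) for j, db in enumerate(descriptors_b))
        if len(distances) < 2:
            continue  # the ratio test needs at least two candidates
        (best, j), (second, _) = distances[0], distances[1]
        if best < ratio * second:
            matches.append((i, j))
    return matches


# Toy 2D descriptors (hypothetical values for illustration only).
image_a = [(0.0, 0.0), (10.0, 10.0), (5.0, 5.0)]
image_b = [(0.1, 0.0), (10.0, 9.9), (4.0, 5.0), (6.0, 5.0)]
print(match_features(image_a, image_b))
```

In this toy case the third descriptor in image A is equally close to two candidates in image B and is rejected, which is the kind of feature rejection that low contrast or moving foliage could make more frequent.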

5. Conclusions

The measurement of vegetation structural and spectral traits by automated SFM algorithms and consumer UAVs is a rapidly growing part of the field of remote sensing. UAV-SFM remote sensing will fill an increasingly important role in earth observation, providing a scale of measurement that bridges ground observations with those from aircraft and satellite systems []. In this study, a comprehensive examination of the effects of varying the conditions of UAV-SFM remote sensing on 3D point cloud traits and canopy metrics revealed important insights into the range of data quality possible using these techniques. While it is to be expected that the values obtained in this study may vary as a function of the hardware used (e.g., greater point cloud density with higher resolution sensors), many of the results are generalizable. Maximizing photographic overlap, especially forward overlap, is crucial for minimizing canopy height error and maximizing overall sampling of the forest canopy. Yet high overlap results in more photos and increased computation time, irrespective of computation equipment, highlighting important trade-offs between data quality and the ability to rapidly produce high quality results. There are also many facets that were not explored in the current work: how does using different camera sensors or spectral bands (e.g., NIR) influence data quality? How does the type of platform (fixed-wing vs. multirotor) or forest type (Temperate Deciduous, Evergreen Needleleaf, Tropical) influence the ability of UAV-SFM remote sensing to accurately characterize canopy metrics? Indeed, there may be as many combinations of unique data collection settings as research questions. To that end, this research provides a framework for describing UAV-SFM datasets across studies and sites based on several fundamental point cloud quality traits and metrics.
This work also shed light on the important role that image features play in SFM reconstruction, the underlying mechanisms for which should be given more careful consideration in future work, for example in the relationship between image feature matching and both flight parameters and image contrast. Indeed, it is with image features that UAV-SFM remote sensing can provide an entirely new avenue for remote sensing of forest properties. Beyond just replicating existing remote sensing tasks (e.g., measuring canopy height), access to image features of canopies opens the door for automated mapping and identification of canopy objects like leaves, fruits, and flowers. If UAVs provide field scientists with a bird’s-eye view of the landscape, computer vision will provide the ‘ecologist’s-eye’ view of the elements within that landscape.

Supplementary Files

Supplementary File 1

Acknowledgements

This material is based upon work supported by the US National Science Foundation under Grant DBI 1147089 awarded March 1, 2012 to Erle Ellis and Marc Olano. Jonathan Dandois was supported by NSF IGERT grant 0549469, PI Claire Welty and hosted by CUERE (Center for Urban Environmental Research and Education). The authors thank the Ecosynth team: Dana Boswell Nadwodny, Stephen Zidek and Lindsay Digman for their help with UAV data collection as well as Yu Wang, Yingying Zhu, Will Bierbower, and Terrence Seneschal for their help with Ecosynther and Ecosynth processing. Graphical abstract UAV image credit: J. Leighton Reid. Data and information on Ecosynth are available online at http://ecosynth.org/.

Author Contributions

Dandois conceived of and carried out the research, analyzed the data and wrote the manuscript. Olano and Ellis contributed to the development of research ideas and to reviewing the manuscript. Olano oversaw development of the Ecosynther application. Dandois and Ellis conceived of the Ecosynth concept.

Conflicts of Interest

The authors declare no conflict of interest.

References

- Lindquist, E.J.; D’annunzio, R.; Gerrand, A.; Macdicken, K.; Achard, F.; Beuchle, R.; Brink, A.; Eva, H.D.; Mayaux, P.; San-Miguel-Ayanz, J.; Stibig, H.-J. Global Forest Land-Use Change 1990–2005; FAO & JRC: Rome, Italy, 2012. [Google Scholar]

- Houghton, R.A.; Hall, F.; Goetz, S.J. Importance of biomass in the global carbon cycle. J Geophys. Res. 2009, 114, 1–13. [Google Scholar] [CrossRef]

- Asner, G.P.; Martin, R.E. Airborne spectranomics: Mapping canopy chemical and taxonomic diversity in tropical forests. Front. Ecol. Environ. 2009, 7, 269–276. [Google Scholar] [CrossRef]

- Defries, R.S.; Hansen, M.C.; Townshend, J.R.G.; Janetos, A.C.; Loveland, T.R. A new global 1-km dataset of percentage tree cover derived from remote sensing. Glob. Change Biol. 2000, 6, 247–254. [Google Scholar] [CrossRef]

- Hansen, M.C.; Roy, D.P.; Lindquist, E.; Adusei, B.; Justice, C.O.; Altstatt, A. A method for integrating MODIS and Landsat data for systematic monitoring of forest cover and change in the Congo Basin. Remote Sens. Environ. 2008, 112, 2495–2513. [Google Scholar] [CrossRef]

- Lefsky, M.A.; Cohen, W.B.; Parker, G.G.; Harding, D.J. LIDAR remote sensing for ecosystem studies. Bioscience 2002, 52, 20–30. [Google Scholar] [CrossRef]

- Parker, G.G.; Russ, M.E. The canopy surface and stand development: Assessing forest canopy structure and complexity with near-surface altimetry. For. Ecol. Manage. 2004, 189, 307–315.

- Richardson, A.D.; Braswell, B.; Hollinger, D.Y.; Jenkins, J.C.; Ollinger, S.V. Near-surface remote sensing of spatial and temporal variation in canopy phenology. Ecol. Appl. 2009, 19, 1417–1428.

- Zhang, X.; Friedl, M.; Schaaf, C.; Strahler, A.; Hodges, J.; Gao, F.; Reed, B.; Huete, A. Monitoring vegetation phenology using MODIS. Remote Sens. Environ. 2003, 84, 471–475.

- Lefsky, M.; Cohen, W. Selection of remotely sensed data. In Remote Sensing of Forest Environments; Wulder, M., Franklin, S., Eds.; Kluwer Academic Publishers: Boston, MA, USA, 2003; pp. 13–46.

- Beuchle, R.; Eva, H.D.; Stibig, H.-J.; Bodart, C.; Brink, A.; Mayaux, P.; Johansson, D.; Achard, F.; Belward, A. A satellite data set for tropical forest area change assessment. Int. J. Remote Sens. 2011, 32, 7009–7031.

- Anderson, K.; Gaston, K.J. Lightweight unmanned aerial vehicles will revolutionize spatial ecology. Front. Ecol. Environ. 2013, 11, 138–146.

- Turner, W. Sensing biodiversity. Science 2014, 346, 301–302.

- Dandois, J.P.; Ellis, E.C. High spatial resolution three-dimensional mapping of vegetation spectral dynamics using computer vision. Remote Sens. Environ. 2013, 136, 259–276.

- Rango, A.; Laliberte, A.; Herrick, J.E.; Winters, C.; Havstad, K.; Steele, C.; Browning, D. Unmanned aerial vehicle-based remote sensing for rangeland assessment, monitoring, and management. J. Appl. Remote Sens. 2009, 3.

- Turner, D.; Lucieer, A.; Watson, C. An automated technique for generating georectified mosaics from ultra-high resolution unmanned aerial vehicle (UAV) imagery, based on structure from motion (SFM) point clouds. Remote Sens. 2012, 4, 1392–1410.

- Getzin, S.; Wiegand, K.; Schöning, I. Assessing biodiversity in forests using very high-resolution images and unmanned aerial vehicles. Methods Ecol. Evol. 2012, 3, 397–404.

- Koh, L.P.; Wich, S.A. Dawn of drone ecology: Low-cost autonomous aerial vehicles for conservation. Trop. Conserv. Sci. 2012, 5, 121–132.

- Hunt, J.; Raymond, E.; Hively, W.D.; Fujikawa, S.; Linden, D.; Daughtry, C.S.; McCarty, G. Acquisition of NIR-green-blue digital photographs from unmanned aircraft for crop monitoring. Remote Sens. 2010, 2, 290–305.

- Snavely, N.; Seitz, S.; Szeliski, R. Modeling the world from internet photo collections. Int. J. Comput. Vis. 2008, 80, 189–210.

- Lisein, J.; Pierrot-Deseilligny, M.; Bonnet, S.; Lejeune, P. A photogrammetric workflow for the creation of a forest canopy height model from small unmanned aerial system imagery. Forests 2013, 4, 922–944.

- Dandois, J.P.; Ellis, E.C. Remote sensing of vegetation structure using computer vision. Remote Sens. 2010, 2, 1157–1176.

- Puliti, S.; Ørka, H.; Gobakken, T.; Næsset, E. Inventory of small forest areas using an unmanned aerial system. Remote Sens. 2015, 7, 9632–9654.

- Castillo, C.; Pérez, R.; James, M.R.; Quinton, J.N.; Taguas, E.V.; Gómez, J.A. Comparing the accuracy of several field methods for measuring gully erosion. Soil Sci. Soc. Am. J. 2012, 76, 1319–1332.

- Javernick, L.; Brasington, J.; Caruso, B. Modeling the topography of shallow braided rivers using structure-from-motion photogrammetry. Geomorphology 2014, 213, 166–182.

- Dey, D.; Mummert, L.; Sukthankar, R. Classification of plant structures from uncalibrated image sequences. In Proceedings of the 2012 IEEE Workshop on Applications of Computer Vision WACV, Breckenridge, CO, USA, 9–11 January 2012; pp. 329–336.

- Mathews, A.; Jensen, J. Visualizing and quantifying vineyard canopy LAI using an unmanned aerial vehicle (UAV) collected high density structure from motion point cloud. Remote Sens. 2013, 5, 2164–2183.

- Torres-Sánchez, J.; López-Granados, F.; Serrano, N.; Arquero, O.; Peña, J.M. High-throughput 3-D monitoring of agricultural-tree plantations with unmanned aerial vehicle (UAV) technology. PLoS ONE 2015, 10, e0130479.

- de Matías, J.; Sanjosé, J.J.D.; López-Nicolás, G.; Sagüés, C.; Guerrero, J.J. Photogrammetric methodology for the production of geomorphologic maps: Application to the Veleta rock glacier (Sierra Nevada, Granada, Spain). Remote Sens. 2009, 1, 829–841.

- Harwin, S.; Lucieer, A. Assessing the accuracy of georeferenced point clouds produced via multi-view stereopsis from unmanned aerial vehicle (UAV) imagery. Remote Sens. 2012, 4, 1573–1599.

- James, M.; Applegarth, L.J.; Pinkerton, H. Lava channel roofing, overflows, breaches and switching: Insights from the 2008–2009 eruption of Mt. Etna. Bull. Volcanol. 2012, 74, 107–117.

- James, M.; Robson, S. Straightforward reconstruction of 3D surfaces and topography with a camera: Accuracy and geoscience application. J. Geophys. Res. Earth Surf. 2012, 117, 1–23.

- Rosnell, T.; Honkavaara, E. Point cloud generation from aerial image data acquired by a quadrocopter type micro unmanned aerial vehicle and a digital still camera. Sensors 2012, 12, 453–480.

- Westoby, M.J.; Brasington, J.; Glasser, N.F.; Hambrey, M.J.; Reynolds, J.M. ‘Structure-from-motion’ photogrammetry: A low-cost, effective tool for geoscience applications. Geomorphology 2012, 179, 300–314.

- Næsset, E. Effects of different sensors, flying altitudes, and pulse repetition frequencies on forest canopy metrics and biophysical stand properties derived from small-footprint airborne laser data. Remote Sens. Environ. 2009, 113, 148–159.

- Jenkins, J.C.; Chojnacky, D.C.; Heath, L.S.; Birdsey, R.A. National-scale biomass estimators for United States tree species. For. Sci. 2003, 49, 12–35.

- Dandois, J.P.; Boswell, D.; Anderson, E.; Bofto, A.; Baker, M.; Ellis, E.C. Forest census and map data for two temperate deciduous forest edge woodlot patches in Baltimore MD, USA. Ecology 2015, 96, 1734.

- McMahon, S.M.; Parker, G.G.; Miller, D.R. Evidence for a recent increase in forest growth. Proc. Natl. Acad. Sci. 2010, 107, 3611–3615.

- ForestGeo. Available online: http://www.forestgeo.si.edu (accessed on 25 July 2015).

- Mikrokopter. Available online: http://www.mikrokopter.de (accessed on 9 September 2015).

- Arducopter. Available online: http://copter.ardupilot.com (accessed on 9 September 2015).

- Ecosynth Wiki. Available online: http://wiki.ecosynth.org (accessed on 9 September 2015).

- Runyan, C. Methodology for Installation of Eddy Covariance Meteorological Sensors and Data Processing; UMBC/CUERE Technical Memo 2009/002: Baltimore, MD, USA, 2009.

- Beaufort Wind Force Scale. Available online: http://www.spc.noaa.gov/faq/tornado/beaufort.html (accessed on 20 May 2014).

- Habib, A.; Bang, K.I.; Kersting, A.P.; Lee, D.-C. Error budget of LIDAR systems and quality control of the derived data. Photogramm. Eng. Remote Sens. 2009, 75, 1093–1108.

- Ecosynther v1.0. Available online: https://bitbucket.org/ecosynth/ecosynther (accessed on 9 September 2015).

- Furukawa, Y.; Ponce, J. Accurate, dense, and robust multiview stereopsis. IEEE Trans. Pattern Anal. Mach. Intell. 2010, 32, 1362–1376.

- Wang, Y.; Olano, M. A framework for GPS 3D model reconstruction using structure-from-motion. In Proceedings of ACM SIGGRAPH ’11, Vancouver, BC, Canada, 7–11 August 2011.

- Wu, C. SIFTGPU: A GPU implementation of scale invariant feature transform (SIFT). Available online: http://www.cs.unc.edu/~ccwu/siftgpu/ (accessed on 27 July 2015).

- Szeliski, R. Computer Vision: Algorithms and Applications; Texts in Computer Science; Springer-Verlag: London, UK, 2011.

- Li, J.; Allinson, N.M. A comprehensive review of current local features for computer vision. Neurocomputing 2008, 71, 1771–1787.

- Mikolajczyk, K.; Schmid, C. A performance evaluation of local descriptors. IEEE Trans. Pattern Anal. Mach. Intell. 2005, 27, 1615–1630.

- Lowe, D.G. Distinctive image features from scale-invariant keypoints. Int. J. Comput. Vis. 2004, 60, 91–110.

- Ecosynth Aerial. Available online: http://code.ecosynth.org/EcosynthAerial (accessed on 9 September 2015).

- Besl, P.J.; McKay, H.D. A method for registration of 3-D shapes. IEEE Trans. Pattern Anal. Mach. Intell. 1992, 14, 239–256.

- Asner, G.P.; Mascaro, J. Mapping tropical forest carbon: Calibrating plot estimates to a simple LIDAR metric. Remote Sens. Environ. 2014, 140, 614–624.

- Baltsavias, E.; Pateraki, M.; Zhang, L. Radiometric and geometric evaluation of IKONOS GEO images and their use for 3D building modelling. In Proceedings of the Joint ISPRS Workshop “High Resolution Mapping from Space 2001”, Hanover, Germany, 19–21 September 2001; pp. 19–21.

- Yang, Y.; Newsam, S. Geographic image retrieval using local invariant features. IEEE Trans. Geosci. Remote Sens. 2013, 51, 818–832.

- Zahawi, R.A.; Dandois, J.P.; Holl, K.D.; Nadwodny, D.; Reid, J.L.; Ellis, E.C. Using lightweight unmanned aerial vehicles to monitor tropical forest recovery. Biol. Conserv. 2015, 186, 287–295.

- Ni, W.; Ranson, K.J.; Zhang, Z.; Sun, G. Features of point clouds synthesized from multi-view ALOS/PRISM data and comparisons with LIDAR data in forested areas. Remote Sens. Environ. 2014, 149, 47–57.

- Ogunjemiyo, S.; Parker, G.; Roberts, D. Reflections in bumpy terrain: Implications of canopy surface variations for the radiation balance of vegetation. IEEE Geosci. Remote Sens. Lett. 2005, 2, 90–93.

- Sithole, G.; Vosselman, G. Experimental comparison of filter algorithms for bare-earth extraction from airborne laser scanning point clouds. ISPRS J. Photogramm. Remote Sens. 2004, 59, 85–101.

- St. Onge, B.; Hu, Y.; Vega, C. Mapping the height and above-ground biomass of a mixed forest using LIDAR and stereo IKONOS images. Int. J. Remote Sens. 2008, 29, 1277–1294.

- Tinkham, W.T.; Huang, H.; Smith, A.M.S.; Shrestha, R.; Falkowski, M.J.; Hudak, A.T.; Link, T.E.; Glenn, N.F.; Marks, D.G. A comparison of two open source LIDAR surface classification algorithms. Remote Sens. 2011, 3, 638–649.

- Wasser, L.; Day, R.; Chasmer, L.; Taylor, A. Influence of vegetation structure on LIDAR-derived canopy height and fractional cover in forested riparian buffers during leaf-off and leaf-on conditions. PLoS ONE 2013, 8, e54776.

- Chasmer, L.; Hopkinson, C.; Smith, B.; Treitz, P. Examining the influence of changing laser pulse repetition frequencies on conifer forest canopy returns. Photogramm. Eng. Remote Sens. 2006, 72, 1359–1367.

- Hirschmugl, M.; Ofner, M.; Raggam, J.; Schardt, M. Single tree detection in very high resolution remote sensing data. Remote Sens. Environ. 2007, 110, 533–544.

- Ofner, M.; Hirschmugl, M.; Raggam, H.; Schardt, M. 3D stereo mapping by means of UltracamD data. In Proceedings of the Workshop on 3D Remote Sensing in Forestry, Vienna, Austria, 14–15 February 2006.

- Cox, S.E.; Booth, D.T. Shadow attenuation with high dynamic range images. Environ. Monit. Assess. 2009, 158, 231–241.

- Bragg, D. An improved tree height measurement technique tested on mature southern pines. South. J. Appl. For. 2008, 32, 38–43.

- Goetz, S.; Dubayah, R. Advances in remote sensing technology and implications for measuring and monitoring forest carbon stocks and change. Carbon Manag. 2011, 2, 231–244.

- Larjavaara, M.; Muller-Landau, H.C. Measuring tree height: A quantitative comparison of two common field methods in a moist tropical forest. Methods Ecol. Evol. 2013, 4, 793–801.

- Frazer, G.W.; Magnussen, S.; Wulder, M.A.; Niemann, K.O. Simulated impact of sample plot size and co-registration error on the accuracy and uncertainty of LIDAR-derived estimates of forest stand biomass. Remote Sens. Environ. 2011, 115, 636–649.

- Swiftnav Piksi RTK-GPS. Available online: http://www.swiftnav.com/piksi.html (accessed on 9 September 2015).

- Richards, J.A. Remote Sensing Digital Image Analysis; Springer: Berlin, Germany, 2006; p. 437.

- Honkavaara, E.; Saari, H.; Kaivosoja, J.; Pölönen, I.; Hakala, T.; Litkey, P.; Mäkynen, J.; Pesonen, L. Processing and assessment of spectrometric, stereoscopic imagery collected using a lightweight UAV spectral camera for precision agriculture. Remote Sens. 2013, 5, 5006–5039.

- Berni, J.; Zarco-Tejada, P.J.; Suárez, L.; Fereres, E. Thermal and narrowband multispectral remote sensing for vegetation monitoring from an unmanned aerial vehicle. IEEE Trans. Geosci. Remote Sens. 2009, 47, 722–738.

- Zarco-Tejada, P.J.; González-Dugo, V.; Berni, J.A.J. Fluorescence, temperature and narrow-band indices acquired from a UAV platform for water stress detection using a micro-hyperspectral imager and a thermal camera. Remote Sens. Environ. 2012, 117, 322–337.

- Beijbom, O.; Edmunds, P.J.; Kline, D.I.; Mitchell, B.G.; Kriegman, D. Automated annotation of coral reef survey images. In Proceedings of Computer Vision and Pattern Recognition (CVPR), Providence, RI, USA, 16–21 June 2012; pp. 1170–1177.

- Nilsback, M.-E. An Automatic Visual Flora-Segmentation and Classification of Flower Images. Ph.D. Thesis, University of Oxford, Oxford, UK, 2009.