Journal Description

Current Oncology is an international, peer-reviewed, open access journal that, since 1994, has served as a multidisciplinary medium for clinical oncologists to report and review progress in the management of cancer. It is published monthly online by MDPI (from Volume 28, Issue 1, 2021). The Canadian Association of Medical Oncologists (CAMO), Canadian Association of Psychosocial Oncology (CAPO), Canadian Association of General Practitioners in Oncology (CAGPO), Cell Therapy Transplant Canada (CTTC) and others are affiliated with Current Oncology, and their members receive discounts on the article processing charges.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, SCIE (Web of Science), PubMed, MEDLINE, PMC, Embase, and other databases.

- Journal Rank: JCR - Q2 (Oncology) / CiteScore - Q1 (Oncology)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 22.8 days after submission; the time from acceptance to publication is 2.6 days (median values for papers published in this journal in the second half of 2025).

- Recognition of Reviewers: APC discount vouchers, optional signed peer review, and reviewer names published annually in the journal.

- Journal Clusters of Oncology: Cancers, Current Oncology, Onco and Targets.

Impact Factor: 3.4 (2024); 5-Year Impact Factor: 3.3 (2024)

Latest Articles

Readiness to Consider and Adopt Genetic and Genomic Tests in Canada—An Update to the State of Readiness Report

Curr. Oncol. 2026, 33(6), 334; https://doi.org/10.3390/curroncol33060334 - 4 Jun 2026

Abstract


(1) Background: Genomic medicine, i.e., the use of laboratory-based biomarkers that measure the expression, function and regulation of genes and gene products to aid healthcare decision making, is a rapidly emerging technology. Readiness to consider and adopt new testing programs effectively and avoid critical challenges requires health systems to harbor a number of key conditions that address infrastructural, as well as operational and other, needs. This assessment re-examines Canada’s state of readiness since a previously published 2023 assessment. (2) Methods: A mixed-methods approach combining a review of the literature and key informant interviews with a purposive sample of experts was used. Health system readiness was assessed using a previously published set of conditions. (3) Results: This updated analysis of Canada’s state of readiness for genetic and genomic testing reveals that Canada is only partially ready for a future of genomic medicine, although some progress has been made since 2023. The most established conditions were the use of appropriate service models and the integration of innovation and healthcare delivery functions. The findings suggest that Canada’s major healthcare regions are moving closer to a state of readiness for the consideration and adoption of new testing required for genomic medicine, although using different approaches and at different rates. These findings should be seen as generalizable to other regions internationally: health systems need to have functions that promote responsiveness and resilience, i.e., are able to recognize valuable innovation, quickly shift priorities, and create the conditions necessary to enable it.


Open Access Case Report

Time Is Key: Early Diagnosis of Post-Transplant Lymphoproliferative Disorder Presenting as Primary CNS Diffuse Large B-Cell Lymphoma

by

Asli Altunbas, Aarti Desai, Andrea Muniz, Hussien Al Asi, Rajvi Chaudhary, Laxmi Raj Bangari, Surbhi Dadwal, Jose Ruiz, Juan Leoni, Julie Hammack, Harry Powers, James Foran and Rohan Goswami

Curr. Oncol. 2026, 33(6), 333; https://doi.org/10.3390/curroncol33060333 - 4 Jun 2026

Abstract


Post-transplant lymphoproliferative disorder (PTLD) involving the central nervous system (CNS) is a rare but serious, life-threatening complication seen in recipients of solid organ transplants. Primary CNS involvement accounts for 5–15% of all PTLD diagnoses, and heart transplant recipients represent 3–5% of those reported cases. Diagnosis is often delayed due to the highly variable presentation, with some cases remaining undiagnosed for years. Multidisciplinary collaboration is crucial for early diagnosis and management. A 53-year-old woman presented with altered mental status. MRI revealed nodular ventriculitis and bilateral periventricular hyperdense infiltrates. CSF studies demonstrated lymphocytic pleocytosis, elevated protein, and EBV-PCR-positive results. A stereotactic brain needle biopsy confirmed the presence of EBV-positive diffuse large B-cell lymphoma, consistent with primary CNS PTLD, 14 months after her heart transplant. Despite appropriate management, the patient experienced progressive neurological decline and ultimately suffered a fatal intracerebral hemorrhage. This case demonstrates the importance of early diagnosis and the variable presentation of post-heart-transplant PTLD. It also highlights the importance of surveillance regardless of EBV status and of close monitoring of disease progression, given potentially life-threatening complications such as fatal hemorrhage. Primary CNS PTLD remains a challenging disease and is being increasingly recognized with improved transplant recipient survival and prolonged exposure to chronic immunosuppression.


(This article belongs to the Section Neuro-Oncology)


Open Access Article

Regional Variations in Guideline Concordance for Women with Triple-Negative and HER2+ Breast Cancer in Nova Scotia

by

Andrea Mayo, Hanna Stewart, Tongtong Li, Cameron Penny, Rachel Hemsworth, Ashley Drohan, Katerina Neumann, Boris Gala-Lopez, Richard T. Spence and Gregory Knapp

Curr. Oncol. 2026, 33(6), 332; https://doi.org/10.3390/curroncol33060332 - 2 Jun 2026

Abstract


Background: Systemic neoadjuvant therapy (NAT) is recommended for triple-negative (TNBC) and HER2+ breast cancers when tumor size at diagnosis is ≥2 cm. This study aimed to determine the proportion of patients in Nova Scotia with non-metastatic, incident, ≥2 cm TNBC and HER2+ breast cancer who received guideline-concordant NAT, stratified by administrative zone. Methods: This retrospective analysis utilized data from the Nova Scotia Breast Screening Program. Adult patients (18–80 years) with an incident, non-metastatic, ≥T2 breast cancer diagnosis between 2021 and 2023 were considered theoretically eligible for NAT, defined by a wait time of ≥4 months between core biopsy and surgery. Guideline-concordant care was confirmed through chart review and compared across health system administrative zones and over time. Results: Of the 291 women theoretically eligible for NAT, 67.0% received it. Significant differences in NAT receipt were observed across administrative zones (Central 73.1%, Eastern 72.9%, Northern 57.4%, Western 54.2%, p = 0.030). Conclusions: This study identifies meaningful regional disparities in NAT receipt for TNBC and HER2+ breast cancer in Nova Scotia. Targeted strategies to improve guideline concordance are warranted and may lead to better patient outcomes.


(This article belongs to the Section Breast Cancer)

Open Access Case Report

Rhabdomyosarcoma Confined to the Bone Marrow: A Case Report and Literature Review

by

Mohammad Hassan Hodroj, Chloe Batrouni, Alexandre da Silva Faco Junior, Mohammad Amin Salehi and Ramy Saleh

Curr. Oncol. 2026, 33(6), 331; https://doi.org/10.3390/curroncol33060331 - 2 Jun 2026

Abstract

Rhabdomyosarcoma (RMS) confined to the bone marrow represents an exceptionally rare and aggressive presentation that can mimic primary hematological malignancies, often leading to diagnostic delays and therapeutic challenges. We report the case of a 34-year-old woman who presented with clinical and laboratory findings

[...] Read more.

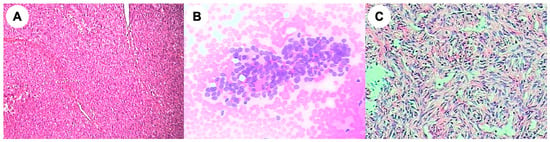

Rhabdomyosarcoma (RMS) confined to the bone marrow represents an exceptionally rare and aggressive presentation that can mimic primary hematological malignancies, often leading to diagnostic delays and therapeutic challenges. We report the case of a 34-year-old woman who presented with clinical and laboratory findings highly suggestive of a hematological disorder, including cytopenias and diffuse bone marrow involvement. Initial evaluation raised suspicion for leukemia; however, comprehensive diagnostic work-up, including immunohistochemistry and molecular studies, ultimately confirmed the diagnosis of PAX3/FOXO1 gene-rearranged alveolar RMS isolated in the bone marrow, with no identifiable primary soft tissue mass. The patient was treated with an intensive multi-agent chemotherapy regimen, resulting in a marked hematological recovery and a significant radiological improvement after a limited number of cycles. We further reviewed the limited literature on bone-marrow-confined RMS, highlighting the proposed pathophysiological mechanisms, diagnostic pitfalls, and reported treatment strategies. Given the absence of standardized management guidelines for this rare entity, therapeutic approaches are often extrapolated from conventional RMS protocols or regimens used for high-grade sarcomas. Our experience supports the potential efficacy of intensive chemotherapy in achieving meaningful clinical responses. This case report emphasizes the challenges in the diagnosis of RMS confined to the bone marrow due to its atypical presentation. It also highlights the poor prognosis and aggressiveness of this entity compared to conventional RMS.


(This article belongs to the Section Bone and Soft Tissue Oncology)


Open Access Guidelines

Consensus Statement from the Society of Gynecologic Oncology of Canada on Folate Receptor α Testing in Ovarian Cancer

by

Kim Ma, Basile Tessier-Cloutier, Alon D. Altman, Mark S. Carey, Josee-Lyne Ethier, Susie Lau, Cheng-Han Lee, Laura Hopkins, Katharina Kieser, Aalok Kumar, Shuk On Annie Leung, Julie M. V. Nguyen, Helen MacKay, Jacob McGee, Lina Salman, Shannon Salvador, Cidalia Sluce, Luiza Tatar, Alicia A. Tone, Anna Tinker, Elizabeth Tremblay, Ana C. Veneziani, Danielle Vicus, Stephen Welch, Sharon Windsor Harker and Melica Nourmoussavi Brodeur

Curr. Oncol. 2026, 33(6), 330; https://doi.org/10.3390/curroncol33060330 - 1 Jun 2026

Abstract


Background: Folate receptor alpha (FRα), commonly expressed in epithelial ovarian cancers, is a clinically actionable biomarker following approval of the antibody–drug conjugate mirvetuximab soravtansine (MIRV). Pivotal trials showed that high FRα expression predicts MIRV benefit, creating the need for standardized testing to ensure timely, equitable access. Methods: To address the need for guidance on FRα testing, the Society of Gynecologic Oncology of Canada convened a multidisciplinary Expert Panel to review the evidence and integrate Canadian clinical, pathology, laboratory, and patient perspectives. Consensus recommendations were developed through structured evidence review, expert discussion, iterative revision, and patient partner input. Results: The panel issued recommendations on the clinical role and timing of FRα testing, tissue requirements, assay selection and validation, interpretation and reporting standards, laboratory quality assurance, reimbursement, and equitable access. It is recommended that FRα testing be available to all patients with epithelial ovarian cancer, with results available no later than platinum-resistant disease, using a validated assay, preferably the Ventana FOLR1 RxDx assay or an appropriately validated laboratory-developed test. Standardized synoptic reporting, participation in external quality assurance programs, and clear patient communication were deemed essential. Conclusions: These recommendations aim to promote integrated, equitable, standardized FRα testing across Canada and support timely identification of patients eligible for FRα-directed therapy, clinical trial enrollment, and future biomarker-driven treatment strategies.


(This article belongs to the Section Gynecologic Oncology)


Open Access Article

Stage-Specific Healthcare Costs in Cervical Cancer and Cervical Intraepithelial Neoplasia: A Population-Based Analysis Informing Value-Based Oncology and Equitable Prevention

by

Tian-Shyug Lee and Yu-Chiao Wang

Curr. Oncol. 2026, 33(6), 329; https://doi.org/10.3390/curroncol33060329 - 1 Jun 2026

Abstract


Persistent challenges in cervical cancer (CC) control highlight the need for stage-specific cost estimates to refine prevention strategies. Structural integration of National Health Insurance (NHI) administrative claims, the Taiwan Cancer Registry (TCR), and the National Cause of Death Registry (NCDR) provided the empirical basis for this population-based research. The final analytical sample encompassed 6055 women with cervical intraepithelial neoplasia (CIN) identified in 2016 as well as 9318 patients diagnosed with stage I to IV invasive CC during the 2008 to 2015 period. Reimbursed direct medical costs were estimated for CIN within 6 months after diagnosis and for CC over 5 years after diagnosis. Across CIN grades, no consistent cost gradient was observed, although inpatient utilization was highest in CIN3. Among women with CC, healthcare utilization and expenditures were concentrated in the first year after diagnosis, accounting for 52–65% of the total 5-year costs. After age adjustment, the mean first-year costs increased from NT$256,095 (US$8413) in stage I to NT$474,724 (US$15,595) in stage IV, while 5-year survival declined from 85.3% to 19.5%. These findings show that cervical disease imposes substantial direct medical costs on Taiwan’s healthcare system and provide updated evidence to inform human papillomavirus (HPV) vaccination and CC screening policy.


(This article belongs to the Special Issue Health Economics in Oncology: Addressing Financial Toxicity, Value-Based Care, and Equity)


Open Access Article

Radiotherapy for Adrenal Metastases from Hepatocellular Carcinoma: A 20-Year Bi-Institutional Experience

by

Jeongshim Lee and Myungsoo Kim

Curr. Oncol. 2026, 33(6), 328; https://doi.org/10.3390/curroncol33060328 - 1 Jun 2026

Abstract


Background: To evaluate tumor response, local control (LC), survival, and toxicity after radiotherapy for adrenal metastases from hepatocellular carcinoma (HCC), we conducted a retrospective, bi-institutional study spanning two decades. Methods: Twenty patients received radiotherapy for adrenal metastases (2005–2025). Techniques included three-dimensional conformal radiotherapy, intensity-modulated radiotherapy, stereotactic body radiotherapy, and MR-guided radiotherapy. Tumor response was assessed using Response Evaluation Criteria in Solid Tumors version 1.1. LC, overall survival (OS), and progression-free survival (PFS) were estimated using Kaplan–Meier; the association between biologically effective dose (BED10) and local recurrence was assessed using Fisher’s exact test. Results: The objective response rate was 55.0%, and the disease control rate was 95.0%. The 6- and 12-month adrenal LC rates were 94.4% and 87.2%; local recurrence occurred in three patients (15.0%). No local recurrence was observed at BED10 ≥ 75 Gy (0/8) versus 25.0% (3/12) at BED10 < 75 Gy. However, this difference did not reach statistical significance (p = 0.242, Fisher’s exact test) and should be interpreted as exploratory and hypothesis-generating given the limited sample size. Median OS was 13.4 months, and median PFS was 6.3 months. Systemic progression was the predominant failure pattern. No grade ≥3 radiotherapy-related toxicity was observed. Conclusions: Radiotherapy achieved favorable LC with acceptable toxicity across a 20-year bi-institutional experience, supporting its role as an effective local treatment modality for adrenal metastases from HCC.
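The Fisher's exact comparison reported in this abstract (0/8 local recurrences at BED10 ≥ 75 Gy versus 3/12 at BED10 < 75 Gy) can be reproduced from the counts alone. The following is a minimal sketch, not the authors' analysis code: the function name `fisher_exact_two_sided` is hypothetical, and it assumes the common two-sided convention of summing the probabilities of all tables no more likely than the observed one.

```python
from math import comb


def fisher_exact_two_sided(a: int, b: int, c: int, d: int) -> float:
    """Two-sided Fisher's exact p-value for the 2x2 table [[a, b], [c, d]].

    Hypothetical helper for illustration. Rows are groups, columns are
    event / no event. The p-value sums the hypergeometric probabilities
    of every table that is no more likely than the observed one.
    """
    row1, row2 = a + b, c + d
    events = a + c
    denom = comb(row1 + row2, events)

    def prob(k: int) -> float:
        # Probability that k of the events fall in the first row.
        return comb(row1, k) * comb(row2, events - k) / denom

    p_observed = prob(a)
    k_min = max(0, events - row2)
    k_max = min(row1, events)
    total = 0.0
    for k in range(k_min, k_max + 1):
        p = prob(k)
        # Small relative tolerance guards against floating-point ties.
        if p <= p_observed * (1 + 1e-9):
            total += p
    return total


# Counts taken from the abstract: recurrence / no recurrence per dose group.
p_value = fisher_exact_two_sided(0, 8, 3, 9)
print(f"p = {p_value:.3f}")
```

With only three recurrences among 20 patients, very few tables are possible, which is consistent with the abstract's caution that the comparison is exploratory.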


(This article belongs to the Section Gastrointestinal Oncology)


Open Access Article

Impact of Elevated C-Reactive Protein on Survival Outcomes of Patients with Small Renal Masses: A Retrospective Multicenter Analysis

by

Margaret F. Meagher, Natalie Birouty, Giacomo Musso, Dattatraya Patil, Kazutaka Saito, Yosuke Yasuda, Dhruv Puri, Benjamin Baker, Kit Yuen, Jacob L. Roberts, Aaron Ahdoot, Omer Baker, Mai Dabbas, Julian Cortes, Yasuhisa Fujii, Viraj Master, Michael Liss and Ithaar H. Derweesh

Curr. Oncol. 2026, 33(6), 327; https://doi.org/10.3390/curroncol33060327 - 1 Jun 2026

Abstract


Objective: This study aimed to investigate the impact of elevated C-reactive protein (CRP) on survival outcomes in small renal masses (SRM). Methods: This was a multi-institutional retrospective analysis of SRM (≤3 cm) managed surgically. The cohort was divided into elevated CRP (≥0.5 mg/dL) vs. non-elevated CRP (<0.5 mg/dL) groups. The primary outcome was all-cause mortality (ACM). The secondary outcomes were non-cancer (NCM) and cancer-specific mortality (CSM). Cox regression analysis was used to elucidate predictive factors for mortality outcomes. Kaplan–Meier analysis (KMA) was performed to analyze 10-year overall (OS), cancer-specific (CSS) and non-cancer-specific survival (NCS). Results: A total of 1001 patients were analyzed (309 non-elevated CRP/692 elevated CRP; median follow-up 70 months). Elevated CRP was an independent predictor for ACM (HR = 2.60, p < 0.001), NCM (HR = 2.90, p = 0.002), and CSM (HR = 1.20, p = 0.011). KMA comparing elevated vs. non-elevated groups revealed greater 10-year OS (p < 0.001) and NCS (p < 0.001) for non-elevated CRP, but no significant difference in 10-year CSS (p = 0.295). A total of 83 deaths were observed in elevated CRP (71 NCM/12 CSM, all clear-cell histology). The sensitivity/specificity of elevated CRP was 0.87/0.33, 0.75/0.81, and 0.90/0.33 for ACM, CSM, and NCM, respectively. By utilizing CRP for a decision-making algorithm prioritizing biopsy in CRP elevation and offering surveillance in benign or indolent histology, surgery may be avoided in 218 patients, in whom there were 38 fatalities, all NCM. Conclusions: Elevated CRP was an independent predictor of survival outcomes in SRM ≤ 3 cm. From a competing mortality standpoint, patients with elevated CRP had significantly worsened NCM compared to CSM. In such patients, upfront oncologic risk stratification through biopsy may be considered, and indolent/low-grade neoplasms should be strongly considered for non-surgical management.


(This article belongs to the Special Issue Advances in Novel Biomarkers for Kidney Cancer)


Open Access Article

Temporal Patterns of Breast Cancer-Related Sickness Absence and Social Insurance Expenditures Among Women in Poland, 2020–2024: A Nationwide Registry-Based Study

by

Piotr Artur Winciunas, Justyna Grudziąż-Sękowska, Agnieszka Mazurek, Kuba Sękowski, Wojciech S. Zgliczyński and Mateusz Jankowski

Curr. Oncol. 2026, 33(6), 326; https://doi.org/10.3390/curroncol33060326 - 1 Jun 2026

Abstract


Background/Objectives: Sickness absence due to breast cancer generates significant indirect costs. This nationwide registry-based study aimed to evaluate temporal patterns and the population-level burden of sickness absence, rehabilitation benefit utilization, and social insurance expenditures related to breast cancer among women in Poland between 2020 and 2024. Methods: This nationwide analysis is based on the Social Insurance Institution registry. Data on females unable to work due to breast cancer (ICD-10: C50) were analyzed. Short-term sickness absence was analyzed. Results: The number of sick leave days due to breast cancer varied from 1,241,223 in 2021 to 1,444,863 in 2024. The number of sick leave certificates increased every year, from 55,732 in 2020 to 79,979 in 2024. The most dynamic growth between 2020 and 2024 occurred in the 45–49 age group, where sick leave days increased by 33.4% and certificates by 70.6%. There were significant regional differences. The number of sickness absence days per certificate due to breast cancer decreased over time, from 23.5 days in 2020 to 18.1 days in 2024. The total number of medical certificates for rehabilitation benefits due to breast cancer varied from 4811 in 2021 to 5281 in 2024. Social insurance expenditures on sickness absence varied from 112,064.9 thousand PLN (approximately 25.24 million EUR) in 2020 to 207,687.9 thousand PLN (approximately 48.3 million EUR) in 2024, and expenditures on rehabilitation benefits varied from 56,812.8 thousand PLN (12.8 million EUR) in 2020 to 89,692.6 thousand PLN (20.83 million EUR) in 2024. Conclusions: This population-based registry study revealed a high economic burden of sickness absence due to breast cancer in Poland.
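The 2024 days-per-certificate figure in this abstract follows directly from the two totals it reports. The short sketch below is an illustrative check rather than the authors' method. The variable names are assumptions, and the overall growth in certificates is a derived value that the abstract does not itself state.

```python
# Totals quoted in the abstract.
sick_leave_days_2024 = 1_444_863
certificates_2020 = 55_732
certificates_2024 = 79_979

# Average length of a sick leave certificate in 2024.
days_per_certificate_2024 = sick_leave_days_2024 / certificates_2024

# Derived value (not stated in the abstract): relative growth in the
# number of certificates between 2020 and 2024, in percent.
certificate_growth_pct = 100 * (certificates_2024 - certificates_2020) / certificates_2020

print(f"Days per certificate, 2024: {days_per_certificate_2024:.1f}")
print(f"Certificate growth, 2020 to 2024: {certificate_growth_pct:.1f}%")
```

The first value rounds to the 18.1 days reported for 2024. The 2020 total of sick leave days is not given in the abstract, so the 23.5-day figure cannot be rechecked the same way.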


(This article belongs to the Section Breast Cancer)


Open Access Article

Real-World Analysis of Metastatic Renal Cell Carcinoma Patients Treated with Pembrolizumab Plus Axitinib: Evidence from the Campania Oncology Network

by

Marilena Di Napoli, Elisabetta Coppola, Carmine D’Aniello, Sarah Scagliarini, Carlo Buonerba, Andrea Muto, Luigi Formisano, Francesco Sabbatino, Davide Bosso, Sabrina Rossetti, Lorenzo Lobianco, Rosa Tambaro, Fabrizio Di Costanzo, Pasquale Rescigno, Carmela Pisano, Sabrina Chiara Cecere, Anna Passarelli, Jole Ventriglia, Gabriele Calvanese, Maria Rosaria Lamia, Erica Perri, Roberto Contieri, Dario Franzese, Maria Adelina Simeoni, Caterina Mariarosaria Giorgio, Florinda Feroce, Salvatore Stilo, Giovanni Pacifico, Giuseppina Canciello and Sandro Pignata

Curr. Oncol. 2026, 33(6), 325; https://doi.org/10.3390/curroncol33060325 - 30 May 2026

Abstract


Background: Immune checkpoint inhibitor–tyrosine kinase inhibitor combinations represent a standard first-line option for metastatic renal cell carcinoma (mRCC). However, patients enrolled in pivotal trials often differ from those treated in routine practice. We report real-world outcomes of pembrolizumab plus axitinib within the Campania Oncology Network. Methods: We conducted a multicenter retrospective study including consecutive treatment-naïve mRCC patients who received first-line pembrolizumab plus axitinib between January 2021 and November 2023 across eight regional centers. The primary endpoints were progression-free survival (PFS) and overall survival (OS); secondary endpoints included objective response rate (ORR) and safety. Results: A total of 117 patients were included. IMDC risk was favorable in 19.6%, intermediate/poor in 65%, and unknown in 15.4%. Median age was 59 years, and 53.8% had ECOG performance status ≥ 1. Clear-cell histology accounted for 87.2% of cases; brain metastases were present in 36%. After a median follow-up of 12.8 months, median PFS was 15.1 months (95% CI 9.6–NR), and median OS was not reached. ORR was 27.3%, with a disease control rate of 79.5%; patients with non-clear-cell histology showed an ORR of 41.7%. Disease progression was the main cause of treatment discontinuation (49%), while adverse events (AEs) led to discontinuation in 6% of cases. Grade ≥3 AEs occurred in 14% of patients. Most toxicities were grade 1–2, including diarrhea (23.9%), asthenia (18%), hypothyroidism (12.8%), and hypertension (9.4%). Grade 1–2 AEs were significantly more frequent in females compared with males (57.6% vs. 35.7%, p = 0.05). Conclusions: In this consecutive regional cohort, pembrolizumab plus axitinib showed clinically relevant disease control, although ORR was lower than in pivotal trials. Performance status emerged as a key prognostic factor. Real-world data from oncology networks may support personalized first-line treatment decisions in mRCC.


(This article belongs to the Section Genitourinary Oncology)


Open Access Article

Implementation Benchmark of Tumor-Agnostic Eligibility Signals Across Routine Comprehensive Genomic Profiling Platforms in Japan: A Nationwide C-CAT Analysis

by

Shinya Kajiura, Naohiko Nakamura and Ryuji Hayashi

Curr. Oncol. 2026, 33(6), 324; https://doi.org/10.3390/curroncol33060324 - 30 May 2026

Abstract


Routine precision oncology requires realistic benchmarks for tumor-agnostic eligibility signals observed in heterogeneous comprehensive genomic profiling (CGP) pathways. We performed a retrospective descriptive analysis of anonymized aggregated nationwide Center for Cancer Genomics and Advanced Therapeutics (C-CAT) data in Japan, including 97,343 CGP-tested cases summarized across five routine CGP platforms and categorized into 12 prespecified organ groups for analysis. The primary strict approved set endpoint was the case-level union of MSI-H, TMB-H, NTRK fusion/rearrangement, RET fusion/rearrangement, and ERBB2 amplification; the expanded practical set endpoint additionally included ALK fusion/rearrangement and BRAF V600E. The primary strict approved set endpoint was observed in 14,005 cases (14.4%), and the expanded practical set endpoint in 15,911 cases (16.3%), adding 1906 cases and increasing the observed rate by 2.0 percentage points. Signals varied across organ groups and platform/specimen contexts. TMB-H and ERBB2 amplification numerically dominated the primary set signal, whereas NTRK and RET fusion/rearrangement remained rare. These observed frequencies should be interpreted as case-level implementation signals surfaced through routine CGP rather than assay superiority evidence, biological prevalence estimates, or treatment-benefit data. This nationwide, platform-aware benchmark supports practical interpretation of tumor-agnostic eligibility signals in routine CGP practice in Japan.
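The rates quoted in this abstract can be rechecked from the case counts it reports. The sketch below is only an arithmetic illustration under that assumption. The variable names are hypothetical and it does not reproduce the C-CAT analysis itself.

```python
# Case counts quoted in the abstract.
total_cases = 97_343
strict_set_cases = 14_005     # primary strict approved set endpoint
expanded_set_cases = 15_911   # expanded practical set endpoint

# Observed rates as percentages of all CGP-tested cases.
strict_rate_pct = 100 * strict_set_cases / total_cases
expanded_rate_pct = 100 * expanded_set_cases / total_cases

# Cases added by also counting ALK fusion/rearrangement and BRAF V600E,
# and the resulting change in the observed rate in percentage points.
added_cases = expanded_set_cases - strict_set_cases
added_points = expanded_rate_pct - strict_rate_pct

print(f"Strict set:   {strict_rate_pct:.1f}%")
print(f"Expanded set: {expanded_rate_pct:.1f}%")
print(f"Added cases:  {added_cases} (+{added_points:.1f} percentage points)")
```

The difference is expressed in percentage points rather than as a relative increase, which matches how the abstract reports it.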


(This article belongs to the Section Oncology Biomarkers)


Open Access Case Report

Pancreatic Metastasis from Intracranial Solitary Fibrous Tumor/Hemangiopericytoma Mimicking a Pancreatic Neuroendocrine Tumor: A Case Report and Focused Literature Review

by

Xiang Kong, Fan Tong, Yaru Liu, Haochen Tang, Huizi Sha and Juan Du

Curr. Oncol. 2026, 33(6), 323; https://doi.org/10.3390/curroncol33060323 - 29 May 2026

Abstract


Solitary fibrous tumor/hemangiopericytoma (SFT/HPC) of the central nervous system is a rare mesenchymal neoplasm with a propensity for late recurrence and distant metastasis. Pancreatic metastasis from intracranial SFT/HPC is exceptionally uncommon and may mimic primary pancreatic neoplasms, particularly pancreatic neuroendocrine tumor (PanNET). We report a 52-year-old man with a documented history of recurrent intracranial SFT/HPC, historically diagnosed as hemangiopericytoma, who developed a hypervascular pancreatic tail lesion 11 years after the initial intracranial tumor diagnosis. Contrast-enhanced imaging and endoscopic ultrasound-guided fine-needle aspiration initially suggested a primary pancreatic neoplasm, including solid pseudopapillary neoplasm or PanNET, and a definitive preoperative diagnosis could not be established. Following laparoscopic resection, histopathological examination revealed a spindle-cell tumor with a rich vascular pattern. Immunohistochemistry documented STAT6 positivity, together with vimentin and Bcl-2 positivity, supporting the diagnosis of pancreatic metastasis from SFT/HPC. The patient later developed unresectable recurrent pancreatic disease and underwent stereotactic radiotherapy, showing radiological disease control at follow-up. This case highlights that pancreatic metastasis from intracranial SFT/HPC, although extremely rare, may occur after a prolonged latency period and mimic a hypervascular primary pancreatic neoplasm. In patients with a history of intracranial SFT/HPC, late metastatic disease should be considered, and definitive diagnosis relies on histopathological examination and targeted immunohistochemistry.


(This article belongs to the Section Gastrointestinal Oncology)


Open Access Article

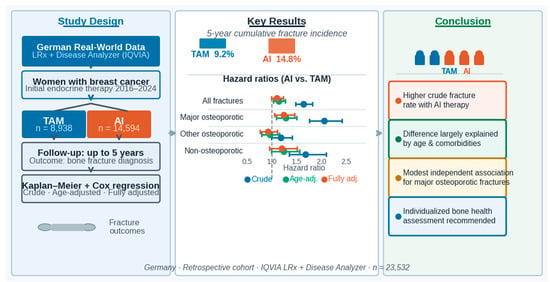

Association Between Endocrine Therapy and Fracture Risk in Women with Breast Cancer in Germany—A Retrospective Cohort Study

by

Karel Kostev, Maximilian Peters, Henning Sievert and Matthias Kalder

Curr. Oncol. 2026, 33(6), 322; https://doi.org/10.3390/curroncol33060322 - 29 May 2026

Abstract

Background: Aromatase inhibitors (AIs) are widely used in hormone receptor-positive breast cancer but may adversely affect bone health. Evidence on their independent association with fracture risk compared with tamoxifen (TAM) remains inconsistent. Methods: In this retrospective cohort study, women with an initial prescription of TAM or AIs between 2016 and 2024 were identified in the IQVIA Longitudinal Prescription (LRx) database. Their prescription histories were combined with records from the IQVIA Disease Analyzer (DA) database using a validated co-therapy-based approach. Patients were then followed for up to five years. Kaplan–Meier analyses estimated cumulative fracture incidence, and Cox regression models assessed associations in unadjusted, age-adjusted, and fully adjusted analyses. Results: The study included 8938 TAM users and 14,594 AI users. Five-year cumulative incidence of all fractures was higher in the AI group than in the TAM group (14.8% vs. 9.2%). In fully adjusted primary analyses, AI therapy was not significantly associated with overall fracture risk (HR 1.10, 95% CI 0.99–1.23). A modest association persisted for major osteoporotic fractures (HR 1.24, 95% CI 1.05–1.46). Secondary exploratory analyses (age-stratified and fracture-type-specific models) showed patterns consistent with the primary results but were not powered or corrected for confirmatory inference. Conclusions: Aromatase inhibitor therapy was associated with a higher fracture incidence than tamoxifen, but much of this difference was explained by age and comorbidities. Both treatment-related effects and underlying patient characteristics contribute to fracture risk, underscoring the importance of individualized bone health assessment and targeted preventive strategies in women receiving endocrine therapy.

(This article belongs to the Section Breast Cancer)

Open Access Article

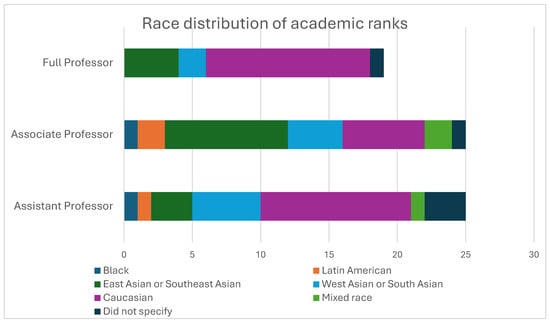

Effect of Race and Ethnicity on Academic Achievements in Cancer Physicians and Scientists

by

Doreen A. Ezeife, Amanda Khan, Mark Melika-Abusefien, Edouarda Taguedong, Md Mahsin and Shaun K. Loewen

Curr. Oncol. 2026, 33(6), 321; https://doi.org/10.3390/curroncol33060321 - 29 May 2026

Abstract

Background: Diversity in academia promotes research that can reduce health disparities and addresses equity issues for marginalized populations. This study aims to examine the effect of visible minority status on academic achievements in cancer physicians and scientists. Methods: Faculty at the tertiary cancer center in Calgary, Alberta, Canada, completed a survey in 2023 to evaluate demographics, academic rank, leadership positions, number of trainees mentored, number of publications, and amount of grant funding. Chi-square tests and regression analyses examined the impact of race and ethnicity on these academic achievements. Results: The survey was completed by 74 faculty members (47% male, 43% female, 9% gender fluid or providing no answer) with a response rate of 26%. Seven percent were Black or Latin American, 18% East Asian or Southeast Asian, 19% West or South Asian, 39% Caucasian, 6% mixed race, and 11% not providing an answer. Visible minorities were underrepresented in the full professor rank (19%) compared to non-visible minorities (38%) and were overrepresented in assistant/associate professors (28% and 53%, respectively), with 41% of non-visible minorities having the title of assistant professor and 21% as associate professor (p = 0.02). Visible minorities were less likely to have both parents college-educated (OR 0.30, 95% CI 0.09–0.92, p = 0.042) and also less likely to have been raised in a home with household income above $100,000 (OR 0.26, 95% CI 0.07–0.90, p = 0.040). Discussion: Visible minorities are underrepresented in the full professor academic rank. Larger studies are needed to evaluate whether race and ethnicity significantly impact achievements in oncology academics.

(This article belongs to the Special Issue Equity-Oriented Cancer Treatment and Care)

Open Access Article

Metastatic Tumour Burden as Prognostic Factor in Nivolumab-Treated Metastatic Renal Cell Carcinoma: An Exploratory Study

by

Mario Uccello, Abigail L. Gee, James A. Bennett, Helen L. Hazell, Manuel Ruiz-Echarri Rueda and Mark J. Beresford

Curr. Oncol. 2026, 33(6), 320; https://doi.org/10.3390/curroncol33060320 - 28 May 2026

Abstract

Introduction: Prognostic assessment remains fundamental in metastatic renal cell carcinoma (mRCC). Established prognostic models, such as the International Metastatic Renal Cell Carcinoma Database Consortium (IMDC) and Meet-URO classifications, are widely used but do not directly quantify the metastatic tumour burden. We evaluated the prognostic value of metastatic tumour burden in nivolumab-treated mRCC and developed an exploratory composite prognostic score integrating the tumour burden with systemic inflammation and performance status. Patients and Methods: We retrospectively analysed consecutive patients with mRCC initiating nivolumab as a second- or third-line therapy at a single UK centre between March 2017 and November 2024. Their baseline clinical, laboratory and radiological data were collected. The metastatic tumour burden was defined as involvement of ≥2 metastatic organ sites, excluding lymph nodal, soft tissue, renal, pancreatic and thyroid metastases, while including bone metastases. The Bath score combined the metastatic tumour burden, systemic immune-inflammation index (SII) and Karnofsky performance status (KPS). Overall survival (OS) was analysed using Kaplan–Meier methods and a Cox regression, and the prognostic performance was compared using Harrell’s concordance index (C-index). Results: Fifty-one patients were included. After a median follow-up of 46.1 months, the median OS was 30.4 months. In the univariate analyses, a KPS < 80%, high SII, elevated platelet count and ≥2 metastatic organ sites were significantly associated with inferior OS. A KPS < 80% was the only variable retaining statistical significance in the multivariate analysis. The Bath score was significantly associated with OS (log-rank p < 0.001) and showed a numerically higher C-index than the IMDC and Meet-URO in this cohort (0.779 [optimism corrected, 0.760] vs. 0.641 and 0.706, respectively), with separation of risk groups. 
Conclusions: In this real-world cohort of nivolumab-treated mRCC, the metastatic tumour burden, systemic inflammation, and performance status were associated with survival. The Bath score should be regarded as exploratory and hypothesis-generating and requires validation in larger contemporary cohorts.

(This article belongs to the Special Issue Advances in Novel Biomarkers for Kidney Cancer)

Open Access Article

Targeting Phosphatidylserine in Advanced Gastric and Gastroesophageal Junction Adenocarcinomas: A Phase 2 Trial of Bavituximab Plus Pembrolizumab with Biomarker-Correlated Outcomes

by

Panagiotis Ntellas, Haeseong Park, Kerry Culm, Jeeyun Lee, Hagop Youssoufian, Colleen Mockbee, Mark Uhlik, Laura Benjamin and Ian Chau

Curr. Oncol. 2026, 33(6), 319; https://doi.org/10.3390/curroncol33060319 - 28 May 2026

Abstract

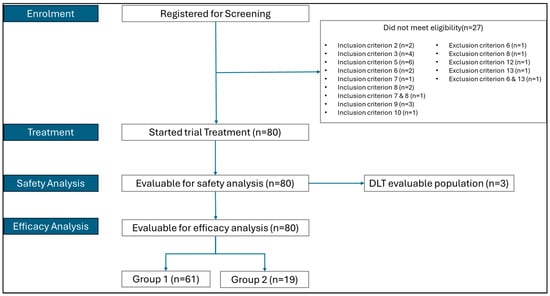

Advanced gastric and gastroesophageal junction (gastric/GOJ) adenocarcinomas have poor outcomes, and the benefit of immune checkpoint inhibitors (CPIs) remains limited. Bavituximab is a phosphatidylserine-targeting monoclonal antibody that modulates the immune and vascular tumour microenvironment. This Phase 2, open-label, multinational study evaluated bavituximab plus

[...] Read more.

Advanced gastric and gastroesophageal junction (gastric/GOJ) adenocarcinomas have poor outcomes, and the benefit of immune checkpoint inhibitors (CPIs) remains limited. Bavituximab is a phosphatidylserine-targeting monoclonal antibody that modulates the immune and vascular tumour microenvironment. This Phase 2, open-label, multinational study evaluated bavituximab plus pembrolizumab in previously treated advanced gastric/GOJ adenocarcinoma. Patients were stratified into CPI-naïve (n = 61) and CPI-relapse (n = 19) cohorts. Bavituximab (3 mg/kg weekly) was combined with pembrolizumab (200 mg every 3 weeks) until disease progression or unacceptable toxicity. Primary endpoints included the safety and objective response rate (ORR) per RECIST 1.1. Secondary endpoints included the disease control rate (DCR), progression-free survival, overall survival, and exploratory biomarker analyses using the Xerna TME Panel and the neutrophil-to-lymphocyte ratio (NLR). No dose-limiting toxicities were observed. The most common treatment-emergent adverse events were fatigue (32.5%), constipation (31.3%), and decreased appetite (31.3%). The ORR was 13.1% in CPI-naïve and 5.3% in CPI-relapse patients; the median duration of response was 12.5 months in the CPI-naïve cohort. The DCR was 39.3% and 52.6%, respectively. Higher ORRs were observed in patients with immune-high (B+) subtypes (21.9%), NLR < 4 (17.9%) and B+/NLR < 4 (33%). Bavituximab plus pembrolizumab was well tolerated but showed modest activity, with greater benefit in biomarker-defined subgroups, supporting the biomarker-driven development of tumour microenvironment-targeting combinations in advanced gastric/GOJ adenocarcinomas. NCT04099641.

(This article belongs to the Section Gastrointestinal Oncology)

Open Access Case Report

Pregnancy Outcomes After in Utero Exposure to Immune Checkpoint Inhibitors

by

Morgan Bou Zerdan, Bruna Kfoury, Eliane Aoun, Sarah Diane Hmaidan, Roni Nitecki Wilke, Jeffrey A. How, Terri L. Woodard, Pamela T. Soliman and Laurie J. McKenzie

Curr. Oncol. 2026, 33(6), 318; https://doi.org/10.3390/curroncol33060318 - 28 May 2026

Abstract

Importance: Immune checkpoint inhibitors (ICIs) have transformed the management of cancers affecting reproductive-age patients, yet their impact on pregnancy outcomes remains incompletely understood. We describe two cases of maternal and fetal outcomes associated with ICI exposure during pregnancy and present a comprehensive literature

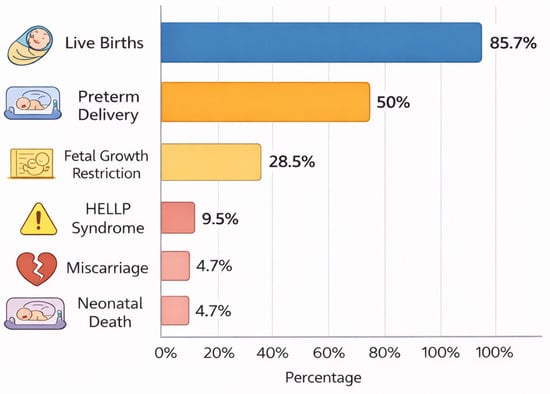

[...] Read more.

Importance: Immune checkpoint inhibitors (ICIs) have transformed the management of cancers affecting reproductive-age patients, yet their impact on pregnancy outcomes remains incompletely understood. We describe two cases of maternal and fetal outcomes associated with ICI exposure during pregnancy and present a comprehensive literature review. Methods: A retrospective chart review was conducted at MD Anderson Cancer Center (1 January 2015 to 31 December 2024) to identify patients exposed to ICIs during pregnancy. Clinical data including cancer type, treatment timing, pregnancy course, and maternal and neonatal outcomes were collected. A narrative literature review was also performed using PubMed to identify reported cases of ICI exposure during pregnancy. Observations: Two patients were identified at our institution, both treated with ICIs for advanced melanoma. One patient received pembrolizumab during early pregnancy, with the final dose administered five days after conception, and subsequently gave birth to a healthy term infant without complications. The second patient conceived while receiving adjuvant nivolumab and experienced a miscarriage at 13 weeks of gestation. Neither patient experienced immune-related toxicity during pregnancy, and both remained without evidence of disease at follow-up. The literature review identified 21 reported pregnancies with ICI exposure and variable outcomes. Most resulted in live births (85.7%), though preterm delivery occurred in approximately 50% of cases, often due to maternal or fetal indications. Additional reported outcomes included miscarriage, neonatal death, fetal growth restriction, preeclampsia, and rare immune-related neonatal effects. Congenital anomalies were reported in a small number of cases. Conclusions and Relevance: These findings suggest that, while many pregnancies exposed to ICIs result in live births, there may be an increased risk of adverse maternal and fetal outcomes. 
However, causality cannot be established due to the limited quality and quantity of available data. These findings underscore the importance of effective contraception during ICI therapy and careful multidisciplinary counseling when exposure occurs during pregnancy.

(This article belongs to the Section Gynecologic Oncology)

Open Access Article

Endometrial Cancer Recurrence Risk Following Robotic Hysterectomy

by

Sean Zhu, Ericka Wiebe, Haley Frerichs, Sunita Ghosh, Jasmine Gill, Zainab Al Habsi, Ananya Beruar and Sophia Pin

Curr. Oncol. 2026, 33(6), 317; https://doi.org/10.3390/curroncol33060317 - 28 May 2026

Abstract

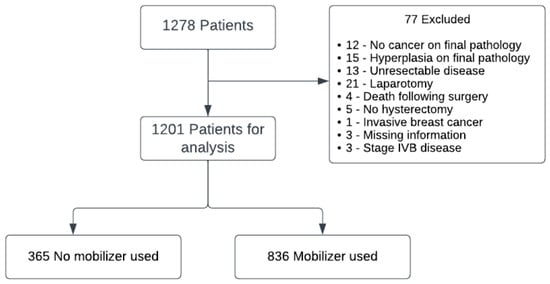

Background: Minimally invasive surgery is widely used in endometrial cancer management, but recurrence remains a concern. Methods: A retrospective review of 1201 patients treated from 2012 to 2019 with robotic laparoscopy was conducted. Recurrence-free survival and hazard ratios were calculated using multivariate Cox

[...] Read more.

Background: Minimally invasive surgery is widely used in endometrial cancer management, but recurrence remains a concern. Methods: A retrospective review of 1201 patients treated from 2012 to 2019 with robotic laparoscopy was conducted. Recurrence-free survival and hazard ratios were calculated using multivariate Cox models. Results: Of 1278 patients, 155 cases of recurrent disease were identified. Age (HR 1.01, p = 0.045), LVSI (HR 3.54, p < 0.001), stage and high-grade histology (HR 3.39, p < 0.001) were associated with increased risk of recurrence. Hazard ratios for stages IB, II and III/IV were 1.92 (p = 0.007), 2.67 (p = 0.001), and 3.23 (p < 0.001), respectively. The use of a uterine manipulator was an independent risk factor on multivariate analysis (HR 2.12, p < 0.001). Adjuvant chemotherapy (HR 0.67, p = 0.239) and radiotherapy (HR 0.64, p = 0.056) showed trends toward reduced risk that were not statistically significant. Chemoradiotherapy was associated with reduced recurrence (HR 0.44, p = 0.002). Conclusions: Traditional prognostic factors remain important for patients with endometrial cancer who have undergone robotic laparoscopic hysterectomy. Uterine manipulator use should be carefully reconsidered in endometrial cancer surgery.

(This article belongs to the Special Issue Optimizing Surgical Management for Gynecologic Cancers)

Open Access Article

Prognostic Factors and Survival in Surgically Treated Stage III Non-Small Cell Lung Cancer: A Real-World Single-Center Retrospective Cohort Study

by

Bekir Elma, Hilal Zehra Kumbasar, Ilkay Dogan, Ahmet Ulusan, Maruf Sanli and Ahmet Ferudun Isik

Curr. Oncol. 2026, 33(6), 316; https://doi.org/10.3390/curroncol33060316 - 28 May 2026

Abstract

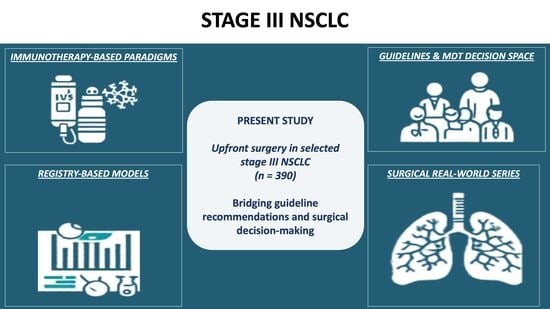

Background: Stage III non-small cell lung cancer (NSCLC) represents a clinically and biologically heterogeneous disease group, posing challenges in treatment standardization. Objective: This study aimed to evaluate survival outcomes and prognostic factors in patients with pathological stage III NSCLC undergoing upfront surgery in

[...] Read more.

Background: Stage III non-small cell lung cancer (NSCLC) represents a clinically and biologically heterogeneous disease group, posing challenges in treatment standardization. Objective: This study aimed to evaluate survival outcomes and prognostic factors in patients with pathological stage III NSCLC undergoing upfront surgery in a real-world setting. Methods: Patients treated between January 2009 and December 2023 were retrospectively analyzed. All patients underwent anatomical resection with systematic mediastinal lymph node dissection and achieved complete (R0) resection. Overall survival (OS) and recurrence-free survival (RFS) were estimated using the Kaplan–Meier method, and prognostic factors were evaluated using Cox proportional hazards regression. Nomograms were developed for individualized survival prediction. Results: A total of 390 patients were included, with a median follow-up of 35 months. The median OS was 47 months, and the median RFS was 30 months. Multivariable analysis identified age, PET-detected mediastinal nodal involvement, tumor location, postoperative complications, pathological nodal status, adjuvant chemotherapy, and adjuvant radiotherapy as independent predictors of OS (p = 0.001, p = 0.029, p = 0.006, p = 0.002, p = 0.009, and p = 0.004, respectively), while adjuvant chemotherapy was associated with improved survival (p = 0.010). For RFS, pathological N2b disease, stage IIIB, tumor location, and adenocarcinoma histology were independent predictors (p = 0.001, p = 0.016, p = 0.029, and p = 0.027, respectively). The OS nomogram demonstrated moderate discriminative ability, whereas the RFS model showed limited predictive performance. Conclusions: These findings highlight the substantial heterogeneity of stage III NSCLC and emphasize the importance of individualized, multidisciplinary treatment strategies beyond conventional staging systems.

(This article belongs to the Section Thoracic Oncology)

Open Access Article

Limited Immune-Mediated Efficacy of Anti-PD-L1/VEGF in EGFR-TKI-Naïve Egfr-Mutant Lung Cancer with Non-Inflamed Tumor Microenvironment

by

Atsuko Hirabae, Tadahiro Kuribayashi, Shuta Tomida, Sachi Okawa, Takamasa Nakasuka, Kazuya Nishii, Jun Nishimura, Go Makimoto, Kiichiro Ninomiya, Hisao Higo, Kammei Rai, Eiki Ichihara, Katsuyuki Hotta, Masamichi Sugimoto, Yosuke Togashi, Yoshinobu Maeda, Katsuyuki Kiura and Kadoaki Ohashi

Curr. Oncol. 2026, 33(6), 315; https://doi.org/10.3390/curroncol33060315 - 27 May 2026

Abstract

Immune checkpoint inhibitors (ICIs) show limited efficacy in epidermal growth factor receptor (EGFR)-mutant lung adenocarcinoma due to its non-inflamed tumor microenvironment (TME). While quadruple therapy combining chemotherapy, anti-programmed death-ligand 1 (PD-L1), and anti-vascular endothelial growth factor (VEGF) antibodies has shown inconsistent results in EGFR-tyrosine kinase inhibitor (TKI)-pretreated patients, whether anti-VEGF therapy can modulate the intrinsic non-inflamed TME remains unknown. We employed an EGFR-TKI-naïve syngeneic Egfr-mutant mouse model and evaluated effects of anti-VEGF, anti-PD-L1, carboplatin, and paclitaxel as monotherapies and combinations, with TME analysis via immunohistochemistry (IHC), flow cytometry, and RNA sequencing. Anti-PD-L1 showed no antitumor effect, and adding anti-VEGF failed to convert the TME to an inflamed status. Although paclitaxel—but not carboplatin—combined with low-dose anti-VEGF inhibited tumor growth, adding anti-PD-L1 provided no benefit, indicating the anti-VEGF-A antibody evaluated here has a limited role in sensitizing tumors to anti-PD-L1 regardless of chemotherapy. CD8+ T-cell depletion did not attenuate the effect of paclitaxel plus low-dose anti-VEGF, and IHC and RNA sequencing revealed increased natural killer cell infiltration, suggesting a CD8+ T-cell-independent, innate immune mechanism. These findings provide preclinical evidence that the evaluated anti-VEGF has limited immunomodulatory activity in EGFR-TKI-naïve Egfr-mutant tumors with a non-inflamed TME, and suggest immunotherapeutic strategies beyond CD8+ T-cell-mediated immunity warrant investigation.

(This article belongs to the Section Thoracic Oncology)

News

1 June 2026

MDPI INSIGHTS: The CEO’s Letter #35 – 30 Years of Open Science, Open Access Policies, Spain Summit, MMCS 2026 & Antibiotics 2026

Topics

Topic in

Cancers, Diagnostics, Medicina, Current Oncology

Prostate Cancer: Symptoms, Diagnosis & Treatment—3rd Edition

Topic Editors: Ana Faustino, Lúcio Lara Santos, Paula Oliveira

Deadline: 30 June 2026

Topic in

Cancers, Current Oncology, JCM, Medicina, Onco

Cancer Biology and Radiation Therapy: 2nd Edition

Topic Editors: Chang Ming Charlie Ma, Ka Yu Tse, Ming-Yii Huang, Mukund Seshadri

Deadline: 25 July 2026

Topic in

Cancers, Diagnostics, Gastrointestinal Disorders, JCM, Current Oncology

Metastatic Colorectal Cancer: From Laboratory to Clinical Studies, 2nd Edition

Topic Editors: Ioannis Ntanasis-Stathopoulos, Diamantis I. Tsilimigras

Deadline: 20 August 2026

Topic in

Cancers, Current Oncology, Diseases, Onco

Genomic Signature of Ocular Tumors

Topic Editors: Bita Esmaeli, Natalie Wolkow

Deadline: 20 October 2026

Conferences

Special Issues

Special Issue in

Current Oncology

Targeted Molecular Therapeutics for Urologic Cancers: Advances, Emerging Strategies, and the Road Ahead

Guest Editor: Carolina D'elia

Deadline: 15 June 2026

Special Issue in

Current Oncology

The Impact of Tumor Microenvironment on Therapeutic Resistance

Guest Editor: Ziqing Chen

Deadline: 15 June 2026

Special Issue in

Current Oncology

Recent Advances in Surgical Strategies for Managing Metastatic Colorectal Cancer

Guest Editors: Fabrizio D'Acapito, Marco Vaira

Deadline: 15 June 2026

Special Issue in

Current Oncology

Surgery and Beyond: The Evolving Landscape of Glioma Management

Guest Editor: Andrea Landi

Deadline: 15 June 2026

Topical Collections

Topical Collection in

Current Oncology

New Insights into Prostate Cancer Diagnosis and Treatment

Collection Editor: Sazan Rasul

Topical Collection in

Current Oncology

New Insights into Breast Cancer Diagnosis and Treatment

Collection Editors: Filippo Pesapane, Matteo Suter

Topical Collection in

Current Oncology

Editorial Board Members’ Collection Series: Contemporary Perioperative Concepts in Cancer Surgery

Collection Editors: Vijaya Gottumukkala, Jörg Kleeff