Journal Description

Sensors is an international, peer-reviewed, open access journal on the science and technology of sensors. Sensors is published semimonthly online by MDPI. The Polish Society of Applied Electromagnetics (PTZE), Japan Society of Photogrammetry and Remote Sensing (JSPRS), Spanish Society of Biomedical Engineering (SEIB) and International Society for the Measurement of Physical Behaviour (ISMPB) are affiliated with Sensors and their members receive a discount on the article processing charges.

- Open Access — free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, SCIE (Web of Science), PubMed, MEDLINE, PMC, Ei Compendex, Inspec, Astrophysics Data System, and other databases.

- Journal Rank: JCR - Q2 (Instruments & Instrumentation) / CiteScore - Q1 (Instrumentation)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 17 days after submission; acceptance to publication is undertaken in 2.8 days (median values for papers published in this journal in the second half of 2023).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

- Testimonials: See what our editors and authors say about Sensors.

- Companion journals for Sensors include: Chips, Automation, JCP and Targets.

Impact Factor: 3.9 (2022); 5-Year Impact Factor: 4.1 (2022)

Latest Articles

Leveraging Temporal Information to Improve Machine Learning-Based Calibration Techniques for Low-Cost Air Quality Sensors

Sensors 2024, 24(9), 2930; https://doi.org/10.3390/s24092930 (registering DOI) - 04 May 2024

Abstract

Low-cost ambient sensors have been identified as a promising technology for monitoring air pollution at a high spatio-temporal resolution. However, the pollutant data captured by these cost-effective sensors are less accurate than their conventional counterparts and require careful calibration to improve their accuracy and reliability. In this paper, we propose to leverage temporal information, such as the duration of time a sensor has been deployed and the time of day the reading was taken, in order to improve the calibration of low-cost sensors. This information is readily available and has so far not been utilized in the reported literature for the calibration of cost-effective ambient gas pollutant sensors. We make use of three data sets collected by research groups around the world, who gathered the data from field-deployed low-cost CO and NO2 sensors co-located with accurate reference sensors. Our investigation shows that using the temporal information as a co-variate can significantly improve the accuracy of common machine learning-based calibration techniques, such as Random Forest and Long Short-Term Memory.

(This article belongs to the Section Sensor Networks)

Open Access Article

Biomechanical Posture Analysis in Healthy Adults with Machine Learning: Applicability and Reliability

by

Federico Roggio, Sarah Di Grande, Salvatore Cavalieri, Deborah Falla and Giuseppe Musumeci

Sensors 2024, 24(9), 2929; https://doi.org/10.3390/s24092929 (registering DOI) - 04 May 2024

Abstract

Posture analysis is important in musculoskeletal disorder prevention but relies on subjective assessment. This study investigates the applicability and reliability of a machine learning (ML) pose estimation model for the human posture assessment, while also exploring the underlying structure of the data through principal component and cluster analyses. A cohort of 200 healthy individuals with a mean age of 24.4 ± 4.2 years was photographed from the frontal, dorsal, and lateral views. We used Student’s t-test and Cohen’s effect size (d) to identify gender-specific postural differences and used the Intraclass Correlation Coefficient (ICC) to assess the reliability of this method. Our findings demonstrate distinct sex differences in shoulder adduction angle (men: 16.1° ± 1.9°, women: 14.1° ± 1.5°, d = 1.14) and hip adduction angle (men: 9.9° ± 2.2°, women: 6.7° ± 1.5°, d = 1.67), with no significant differences in horizontal inclinations. ICC analysis, with the highest value of 0.95, confirms the reliability of the approach. Principal component and clustering analyses revealed potential new patterns in postural analysis such as significant differences in shoulder–hip distance, highlighting the potential of unsupervised ML for objective posture analysis, offering a promising non-invasive method for rapid, reliable screening in physical therapy, ergonomics, and sports.
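The effect sizes quoted in this abstract can be approximated from the reported means and standard deviations. The sketch below computes Cohen's d with a pooled standard deviation; the function name `cohens_d` and the equal-group-size default are assumptions for illustration, since the abstract does not give the number of men and women. Under that assumption the results land close to, but not exactly on, the reported values (d = 1.14 and d = 1.67), which is expected given rounding and unknown group sizes.

```python
import math


def cohens_d(mean_a, sd_a, mean_b, sd_b, n_a=None, n_b=None):
    """Cohen's d from summary statistics, using a pooled standard deviation.

    If group sizes are omitted, equal group sizes are assumed (an assumption,
    not something stated in the abstract).
    """
    if n_a is None or n_b is None:
        # Equal group sizes: pooled variance is the plain average of variances.
        pooled_var = (sd_a ** 2 + sd_b ** 2) / 2.0
    else:
        # Unequal group sizes: weight each variance by its degrees of freedom.
        pooled_var = ((n_a - 1) * sd_a ** 2 + (n_b - 1) * sd_b ** 2) / (n_a + n_b - 2)
    return (mean_a - mean_b) / math.sqrt(pooled_var)


# Summary statistics taken from the abstract (men vs. women, in degrees).
shoulder_d = cohens_d(16.1, 1.9, 14.1, 1.5)
hip_d = cohens_d(9.9, 2.2, 6.7, 1.5)

print(f"shoulder adduction d ~ {shoulder_d:.2f}")
print(f"hip adduction d ~ {hip_d:.2f}")
```

Passing the actual group sizes as `n_a` and `n_b`, if they are known from the full article, switches the function to the weighted pooled variance.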

(This article belongs to the Special Issue Sensors and Artificial Intelligence in Gait and Posture Analysis)

Open Access Article

Analyzing the Thermal Characteristics of Three Lining Materials for Plantar Orthotics

by

Esther Querol-Martínez, Artur Crespo-Martínez, Álvaro Gómez-Carrión, Juan Francisco Morán-Cortés, Alfonso Martínez-Nova and Raquel Sánchez-Rodríguez

Sensors 2024, 24(9), 2928; https://doi.org/10.3390/s24092928 (registering DOI) - 04 May 2024

Abstract

Introduction: The choice of materials for covering plantar orthoses or wearable insoles is often based on their hardness, breathability, and moisture absorption capacity, although more due to professional preference than clear scientific criteria. An analysis of the thermal response to the use of these materials would provide information about their behavior; hence, the objective of this study was to assess the temperature of three lining materials with different characteristics. Materials and Methods: The temperature of three materials for covering plantar orthoses was analyzed in a sample of 36 subjects (15 men and 21 women, aged 24.6 ± 8.2 years, mass 67.1 ± 13.6 kg, and height 1.7 ± 0.09 m). Temperature was measured before and after 3 h of use in clinical activities, using a polyethylene foam copolymer (PE), ethylene vinyl acetate (EVA), and PE-EVA copolymer foam insole with the use of a FLIR E60BX thermal camera. Results: In the PE copolymer (material 1), temperature increases between 1.07 and 1.85 °C were found after activity, with these differences being statistically significant in all regions of interest (p < 0.001), except for the first toe (0.36 °C, p = 0.170). In the EVA foam (material 2) and the expansive foam of the PE-EVA copolymer (material 3), the temperatures were also significantly higher in all analyzed areas (p < 0.001), ranging between 1.49 and 2.73 °C for EVA and 0.58 and 2.16 °C for PE-EVA. The PE copolymer experienced lower overall overheating, and the area of the fifth metatarsal head underwent the greatest temperature increase, regardless of the material analyzed. Conclusions: PE foam lining materials, with lower density or an open-cell structure, would be preferred for controlling temperature rise in the lining/footbed interface and providing better thermal comfort for users. The area of the first toe was found to be the least overheated, while the fifth metatarsal head increased the most in temperature. 
This should be considered in the design of new wearables to avoid excessive temperatures due to the lining materials.

(This article belongs to the Special Issue Wearable Sensors for Continuous Health Monitoring and Analysis)

Open Access Article

Consensus-Based Information Filtering in Distributed LiDAR Sensor Network for Tracking Mobile Robots

by

Isabella Luppi, Neel Pratik Bhatt and Ehsan Hashemi

Sensors 2024, 24(9), 2927; https://doi.org/10.3390/s24092927 (registering DOI) - 04 May 2024

Abstract

A distributed state observer is designed for state estimation and tracking of mobile robots amidst dynamic environments and occlusions within distributed LiDAR sensor networks. The proposed novel framework enhances three-dimensional bounding box detection and tracking utilizing a consensus-based information filter and a region of interest for state estimation of mobile robots. The framework enables the identification of the input to the dynamic process using remote sensing, enhancing the state prediction accuracy for low-visibility and occlusion scenarios in dynamic scenes. Experimental evaluations in indoor settings confirm the effectiveness of the framework in terms of accuracy and computational efficiency. These results highlight the benefit of integrating stationary LiDAR sensors’ state estimates into a switching consensus information filter to enhance the reliability of tracking and to reduce estimation error in the sense of mean square and covariance.
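As background for the information-filter idea mentioned in this abstract, the sketch below shows the additive fusion step that information filters rely on: each sensor contributes its inverse variance (information) and its information-weighted estimate, and the contributions are summed. This is not the authors' switching consensus filter; it is a scalar, centralized illustration that assumes independent sensor errors, and the function name `fuse_information` and the example numbers are hypothetical.

```python
def fuse_information(estimates):
    """Fuse independent scalar estimates given as (mean, variance) pairs.

    In information form, each estimate contributes 1/variance to the total
    information and mean/variance to the information vector; both simply add.
    """
    total_information = 0.0
    information_vector = 0.0
    for mean, variance in estimates:
        if variance <= 0:
            raise ValueError("variance must be positive")
        total_information += 1.0 / variance
        information_vector += mean / variance
    fused_variance = 1.0 / total_information
    fused_mean = information_vector * fused_variance
    return fused_mean, fused_variance


# Hypothetical position estimates (metres) of one robot from two LiDAR nodes.
fused_mean, fused_var = fuse_information([(2.0, 0.04), (2.3, 0.16)])
print(f"fused estimate: {fused_mean:.3f} m, variance {fused_var:.3f}")
```

The fused variance is never larger than the smallest input variance, which is the basic reason that combining several stationary sensors can reduce estimation error. A distributed consensus scheme would reach a comparable sum through repeated neighbor-to-neighbor exchanges rather than one central summation.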

(This article belongs to the Special Issue Applications of Intelligent Robots: Sensing, Interaction, Navigation and Control Systems)

Open Access Article

Distinguishing the Uterine Artery, the Ureter, and Nerves in Laparoscopic Surgical Images Using Ensembles of Binary Semantic Segmentation Networks

by

Norbert Serban, David Kupas, Andras Hajdu, Peter Török and Balazs Harangi

Sensors 2024, 24(9), 2926; https://doi.org/10.3390/s24092926 (registering DOI) - 04 May 2024

Abstract

Performing a minimally invasive surgery comes with a significant advantage regarding rehabilitating the patient after the operation. But it also causes difficulties, mainly for the surgeon or expert who performs the surgical intervention, since only visual information is available and they cannot use their tactile senses during keyhole surgeries. This is the case with laparoscopic hysterectomy since some organs are also difficult to distinguish based on visual information, making laparoscope-based hysterectomy challenging. In this paper, we propose a solution based on semantic segmentation, which can create pixel-accurate predictions of surgical images and differentiate the uterine arteries, ureters, and nerves. We trained three binary semantic segmentation models based on the U-Net architecture with the EfficientNet-b3 encoder; then, we developed two ensemble techniques that enhanced the segmentation performance. Our pixel-wise ensemble examines the segmentation map of the binary networks on the lowest level of pixels. The other algorithm developed is a region-based ensemble technique that takes this examination to a higher level and makes the ensemble based on every connected component detected by the binary segmentation networks. We also introduced and trained a classic multi-class semantic segmentation model as a reference and compared it to the ensemble-based approaches. We used 586 manually annotated images from 38 surgical videos for this research and published this dataset.

(This article belongs to the Section Sensing and Imaging)

Open Access Article

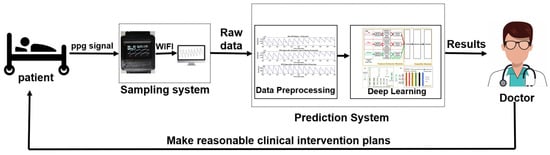

Rehabilitation Assessment System for Stroke Patients Based on Fusion-Type Optoelectronic Plethysmography Device and Multi-Modality Fusion Model: Design and Validation

by

Liangwen Yan, Ze Long, Jie Qian, Jianhua Lin, Sheng Quan Xie and Bo Sheng

Sensors 2024, 24(9), 2925; https://doi.org/10.3390/s24092925 - 03 May 2024

Abstract

This study aimed to propose a portable and intelligent rehabilitation evaluation system for digital stroke-patient rehabilitation assessment. Specifically, the study designed and developed a fusion device capable of emitting red, green, and infrared lights simultaneously for photoplethysmography (PPG) acquisition. Leveraging the different penetration depths and tissue reflection characteristics of these light wavelengths, the device can provide richer and more comprehensive physiological information. Furthermore, a Multi-Channel Convolutional Neural Network–Long Short-Term Memory–Attention (MCNN-LSTM-Attention) evaluation model was developed. This model, constructed based on multiple convolutional channels, facilitates the feature extraction and fusion of collected multi-modality data. Additionally, it incorporated an attention mechanism module capable of dynamically adjusting the importance weights of input information, thereby enhancing the accuracy of rehabilitation assessment. To validate the effectiveness of the proposed system, sixteen volunteers were recruited for clinical data collection and validation, comprising eight stroke patients and eight healthy subjects. Experimental results demonstrated the system’s promising performance metrics (accuracy: 0.9125, precision: 0.8980, recall: 0.8970, F1 score: 0.8949, and loss function: 0.1261). This rehabilitation evaluation system holds the potential for stroke diagnosis and identification, laying a solid foundation for wearable-based stroke risk assessment and stroke rehabilitation assistance.

(This article belongs to the Special Issue Multi-Sensor Fusion in Medical Imaging, Diagnosis and Therapy)

Open Access Article

Anthropomorphic Tendon-Based Hands Controlled by Agonist–Antagonist Corticospinal Neural Network

by

Francisco García-Córdova, Antonio Guerrero-González and Fernando Hidalgo-Castelo

Sensors 2024, 24(9), 2924; https://doi.org/10.3390/s24092924 - 03 May 2024

Abstract

This article presents a study on the neurobiological control of voluntary movements for anthropomorphic robotic systems. A corticospinal neural network model has been developed to control joint trajectories in multi-fingered robotic hands. The proposed neural network simulates cortical and spinal areas, as well as the connectivity between them, during the execution of voluntary movements similar to those performed by humans or monkeys. Furthermore, this neural connection allows for the interpretation of functional roles in the motor areas of the brain. The proposed neural control system is tested on the fingers of a robotic hand, which is driven by agonist–antagonist tendons and actuators designed to accurately emulate complex muscular functionality. The experimental results show that the corticospinal controller produces key properties of biological movement control, such as bell-shaped asymmetric velocity profiles and the ability to compensate for disturbances. Movements are dynamically compensated for through sensory feedback. Based on the experimental results, it is concluded that the proposed biologically inspired adaptive neural control system is robust, reliable, and adaptable to robotic platforms with diverse biomechanics and degrees of freedom. The corticospinal network successfully integrates biological concepts with engineering control theory for the generation of functional movement. This research significantly contributes to improving our understanding of neuromotor control in both animals and humans, thus paving the way towards a new frontier in the field of neurobiological control of anthropomorphic robotic systems.

(This article belongs to the Special Issue Tactile Sensors for Robotics Applications)

Open Access Article

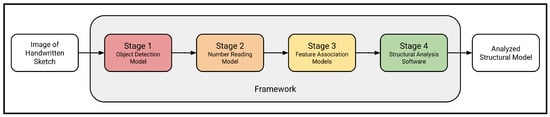

A Computer Vision Framework for Structural Analysis of Hand-Drawn Engineering Sketches

by

Isaac Joffe, Yuchen Qian, Mohammad Talebi-Kalaleh and Qipei Mei

Sensors 2024, 24(9), 2923; https://doi.org/10.3390/s24092923 - 03 May 2024

Abstract

Structural engineers are often required to draw two-dimensional engineering sketches for quick structural analysis, either by hand calculation or using analysis software. However, calculation by hand is slow and error-prone, and the manual conversion of a hand-drawn sketch into a virtual model is tedious and time-consuming. This paper presents a complete and autonomous framework for converting a hand-drawn engineering sketch into an analyzed structural model using a camera and computer vision. In this framework, a computer vision object detection stage initially extracts information about the raw features in the image of the beam diagram. Next, a computer vision number-reading model transcribes any handwritten numerals appearing in the image. Then, feature association models are applied to characterize the relationships among the detected features in order to build a comprehensive structural model. Finally, the structural model generated is analyzed using OpenSees. In the system presented, the object detection model achieves a mean average precision of 99.1%, the number-reading model achieves an accuracy of 99.0%, and the models in the feature association stage achieve accuracies ranging from 95.1% to 99.5%. Overall, the tool analyzes 45.0% of images entirely correctly and the remaining 55.0% of images partially correctly. The proposed framework holds promise for other types of structural sketches, such as trusses and frames. Moreover, it can be a valuable tool for structural engineers that is capable of improving the efficiency, safety, and sustainability of future construction projects.

(This article belongs to the Topic Applied Computer Vision and Pattern Recognition: 2nd Volume)

Open Access Review

MEMS Technology in Cardiology: Advancements and Applications in Heart Failure Management Focusing on the CardioMEMS Device

by

Francesco Ciotola, Stylianos Pyxaras, Harald Rittger and Veronica Buia

Sensors 2024, 24(9), 2922; https://doi.org/10.3390/s24092922 - 03 May 2024

Abstract

Heart failure (HF) is a complex clinical syndrome associated with significant morbidity, mortality, and healthcare costs. It is characterized by various structural and/or functional abnormalities of the heart, resulting in elevated intracardiac pressure and/or inadequate cardiac output at rest and/or during exercise. These dysfunctions can originate from a variety of conditions, including coronary artery disease, hypertension, cardiomyopathies, heart valve disorders, arrhythmias, and other lifestyle or systemic factors. Identifying the underlying cause is crucial for detecting reversible or treatable forms of HF. Recent epidemiological studies indicate that there has not been an increase in the incidence of the disease. Instead, patients seem to experience a chronic trajectory marked by frequent hospitalizations and stagnant mortality rates. Managing these patients requires a multidisciplinary approach that focuses on preventing disease progression, controlling symptoms, and preventing acute decompensations. In the outpatient setting, patient self-care plays a vital role in achieving these goals. This involves implementing necessary lifestyle changes and promptly recognizing symptoms/signs such as dyspnea, lower limb edema, or unexpected weight gain over a few days, to alert the healthcare team for evaluation of medication adjustments. Traditional methods of HF monitoring, such as symptom assessment and periodic clinic visits, may not capture subtle changes in hemodynamics. Sensor-based technologies offer a promising solution for remote monitoring of HF patients, enabling early detection of fluid overload and optimization of medical therapy. In this review, we provide an overview of the CardioMEMS device, a novel sensor-based system for pulmonary artery pressure monitoring in HF patients. We discuss the technical aspects, clinical evidence, and future directions of CardioMEMS in HF management.

(This article belongs to the Special Issue Application of MEMS/NEMS-Based Sensing Technology)

Open Access Article

AI Concepts for System of Systems Dynamic Interoperability

by

Jacob Nilsson, Saleha Javed, Kim Albertsson, Jerker Delsing, Marcus Liwicki and Fredrik Sandin

Sensors 2024, 24(9), 2921; https://doi.org/10.3390/s24092921 - 03 May 2024

Abstract

Interoperability is a central problem in digitization and system of systems (SoS) engineering, which concerns the capacity of systems to exchange information and cooperate. The task to dynamically establish interoperability between heterogeneous cyber-physical systems (CPS) at run-time is a challenging problem. Different aspects of the interoperability problem have been studied in fields such as SoS, neural translation, and agent-based systems, but there are no unifying solutions beyond domain-specific standardization efforts. The problem is complicated by the uncertain and variable relations between physical processes and human-centric symbols, which result from, e.g., latent physical degrees of freedom, maintenance, re-configurations, and software updates. Therefore, we surveyed the literature for concepts and methods needed to automatically establish SoS with purposeful CPS communication, focusing on machine learning and connecting approaches that are not integrated in the present literature. Here, we summarize recent developments relevant to the dynamic interoperability problem, such as representation learning for ontology alignment and inference on heterogeneous linked data; neural networks for transcoding of text and code; concept learning-based reasoning; and emergent communication. We find that there has been a recent interest in deep learning approaches to establishing communication under different assumptions about the environment, language, and nature of the communicating entities. Furthermore, we present examples of architectures and discuss open problems associated with AI-enabled solutions in relation to SoS interoperability requirements. Although these developments open new avenues for research, there are still no examples that bridge the concepts necessary to establish dynamic interoperability in complex SoS, and realistic testbeds are needed.

(This article belongs to the Section Sensor Networks)

Open Access Article

Development of a Six-Degree-of-Freedom Analog 3D Tactile Probe Based on Non-Contact 2D Sensors

by

José Antonio Albajez, Jesús Velázquez, Marta Torralba, Lucía C. Díaz-Pérez, José Antonio Yagüe-Fabra and Juan José Aguilar

Sensors 2024, 24(9), 2920; https://doi.org/10.3390/s24092920 - 03 May 2024

Abstract

In this paper, a six-degree-of-freedom analog tactile probe with a new, simple, and robust mechanical design is presented. Its design is based on the use of one elastomeric ring that supports the stylus carrier and allows its movement inside a cubic measuring range of ±3 mm. The position of the probe tip is determined by three low-cost, noncontact, 2D PSD (position-sensitive detector) sensors, facilitating a wider application of this probe to different measuring systems compared to commercial ones. However, several software corrections, regarding the size and orientation of the three LED light beams, must be carried out when using these 2D sensors for this application due to the lack of additional focusing or collimating lenses and the very wide measuring range. The development process, simulation results, correction models, experimental tests, and calibration of this probe are presented. The results demonstrate high repeatability along the X-, Y-, and Z-axes (2.0 µm, 2.0 µm, and 2.1 µm, respectively) and overall accuracies of 6.7 µm, 7.0 µm, and 8.0 µm, respectively, which could be minimized by more complex correction models.

(This article belongs to the Topic Measurement Strategies and Standardization in Manufacturing)

Open Access Article

Smart Textile Impact Sensor for e-Helmet to Measure Head Injury

by

Manob Jyoti Saikia and Arar Salim Alkhader

Sensors 2024, 24(9), 2919; https://doi.org/10.3390/s24092919 - 03 May 2024

Abstract

Concussions, a prevalent public health concern in the United States, often result from mild traumatic brain injuries (mTBI), notably in sports such as American football. There is limited exploration of smart-textile-based sensors for measuring the head impacts associated with concussions in sports and recreational activities. In this paper, we describe the development and construction of a smart textile impact sensor (STIS) and validate STIS functionality under high magnitude impacts. This STIS can be inserted into helmet cushioning to determine head impact force. The designed 2 × 2 STIS matrix is composed of a number of material layered structures, with a sensing surface made of semiconducting polymer composite (SPC). The SPC dimension was modified in the design iteration to increase sensor range, responsiveness, and linearity. This was to be applicable in high impact situations. A microcontroller board with a biasing circuit was used to interface the STIS and read the sensor’s response. A pendulum test setup was constructed to evaluate various STISs with impact forces. A camera and Tracker software were used to monitor the pendulum swing. The impact forces were calculated by measuring the pendulum bob’s velocity and acceleration. The performance of the various STISs was measured in terms of voltage due to impact force, with forces varying from 180 to 722 N. Through data analysis, the threshold impact forces in the linear range were determined. Through an analysis of linear regression, the sensors’ sensitivity was assessed. Also, a simplified model was developed to measure the force distribution in the 2 × 2 STIS areas from the measured voltages. The results showed that improving the SPC thickness could obtain improved sensor behavior. 
However, for impacts that exceeded the threshold, the suggested sensor did not respond by reflecting the actual impact forces, but it gave helpful information about the impact distribution on the sensor regardless of the accurate expected linear response. Results showed that the proposed STIS performs satisfactorily within a range and has the potential to be used in the development of an e-helmet with a large STIS matrix that could cover the whole head within the e-helmet. This work also encourages future research, especially on the structure of the sensor that could withstand impacts which in turn could improve the overall range and performance and would accurately measure the impact in concussion-causing impact ranges.

(This article belongs to the Section Wearables)

Open Access Article

Intra and Inter-Device Reliabilities of the Instrumented Timed-Up and Go Test Using Smartphones in Young Adult Population

by

Thâmela Thaís Santos dos Santos, Amélia Pasqual Marques, Luis Carlos Pereira Monteiro, Enzo Gabriel da Rocha Santos, Gustavo Henrique Lima Pinto, Anderson Belgamo, Anselmo de Athayde Costa e Silva, André dos Santos Cabral, Szymon Kuliś, Jan Gajewski, Givago Silva Souza, Tacyla Jesus da Silva, Wesley Thyago Alves da Costa, Railson Cruz Salomão and Bianca Callegari

Sensors 2024, 24(9), 2918; https://doi.org/10.3390/s24092918 - 03 May 2024

Abstract

The Timed-Up and Go (TUG) test is widely utilized by healthcare professionals for assessing fall risk and mobility due to its practicality. Currently, test results are based solely on execution time, but integrating technological devices into the test can provide additional information to enhance result accuracy. This study aimed to assess the reliability of smartphone-based instrumented TUG (iTUG) parameters. We conducted evaluations of intra- and inter-device reliabilities, hypothesizing that iTUG parameters would be replicable across all experiments. A total of 30 individuals participated in Experiment A to assess intra-device reliability, while Experiment B involved 15 individuals to evaluate inter-device reliability. The smartphone was securely attached to participants’ bodies at the lumbar spine level between the L3 and L5 vertebrae. In Experiment A, subjects performed the TUG test three times using the same device, with a 5 min interval between each trial. Experiment B required participants to perform three trials using different devices, with the same time interval between trials. Comparing stopwatch and smartphone measurements in Experiment A, no significant differences in test duration were found between the two devices. A perfect correlation and Bland–Altman analysis indicated good agreement between devices. Intra-device reliability analysis in Experiment A revealed significant reliability in nine out of eleven variables, with four variables showing excellent reliability and five showing moderate to high reliability. In Experiment B, inter-device reliability was observed among different smartphone devices, with nine out of eleven variables demonstrating significant reliability. Notable differences were found in angular velocity peak at the first and second turns between specific devices, emphasizing the importance of considering device variations in inertial measurements. Hence, smartphone inertial sensors present a valid, applicable, and feasible alternative for TUG assessment.
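For readers unfamiliar with the Bland–Altman analysis mentioned in this abstract, the following is a minimal sketch of how agreement between two sets of paired measurements (for example, stopwatch and smartphone durations) is commonly summarized. The function name `bland_altman` and the sample values are hypothetical and are not taken from the study; the 1.96 multiplier is the conventional choice for 95% limits of agreement.

```python
import statistics


def bland_altman(method_a, method_b):
    """Return (bias, lower_limit, upper_limit) for paired measurements.

    bias is the mean of the pairwise differences; the limits of agreement
    are bias +/- 1.96 times the sample standard deviation of the differences.
    """
    if len(method_a) != len(method_b):
        raise ValueError("measurements must be paired")
    diffs = [a - b for a, b in zip(method_a, method_b)]
    bias = statistics.mean(diffs)
    sd = statistics.stdev(diffs)  # sample standard deviation (n - 1)
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


# Hypothetical durations in seconds, for illustration only.
stopwatch = [10.0, 12.0, 11.0]
smartphone = [9.0, 12.0, 12.0]
bias, lower, upper = bland_altman(stopwatch, smartphone)
print(f"bias={bias:.2f} s, limits of agreement=({lower:.2f}, {upper:.2f}) s")
```

A bias close to zero with narrow limits suggests the two methods can be used interchangeably; what counts as narrow depends on the clinical context.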

(This article belongs to the Special Issue Human Movement Monitoring Using Wearable Sensor Technology)

Open Access Essay

Wireless Power Transfer Efficiency Optimization Tracking Method Based on Full Current Mode Impedance Matching

by

Yuanzhong Xu, Yuxuan Zhang and Tiezhou Wu

Sensors 2024, 24(9), 2917; https://doi.org/10.3390/s24092917 - 02 May 2024

Abstract

Wireless power transfer (WPT) technology is a contactless wireless energy transfer method with wide-ranging applications in fields such as smart homes, the Internet of Things (IoT), and electric vehicles. Achieving optimal efficiency in wireless power transfer systems has been a key research focus. In this paper, we propose a tracking method based on full current mode impedance matching for optimizing wireless power transfer efficiency. This method enables efficiency tracking in WPT systems and seamless switching between continuous conduction mode and discontinuous mode, expanding the detection capabilities of the wireless power transfer system. MATLAB was used to simulate the proposed method and validate its feasibility and effectiveness. Based on the simulation results, the proposed method ensures optimal efficiency tracking in wireless power transfer systems while extending detection capabilities, offering practical value and potential for widespread applications.

(This article belongs to the Section Electronic Sensors)

Open Access Communication

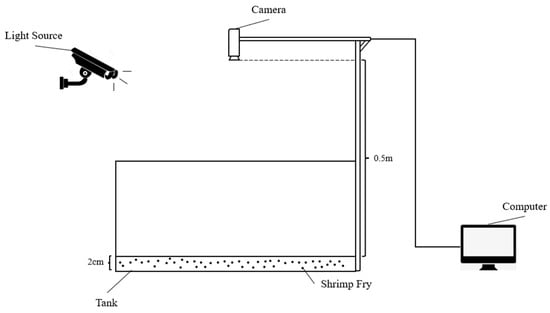

Automatic Shrimp Fry Counting Method Using Multi-Scale Attention Fusion

by

Xiaohong Peng, Tianyu Zhou, Ying Zhang and Xiaopeng Zhao

Sensors 2024, 24(9), 2916; https://doi.org/10.3390/s24092916 - 02 May 2024

Abstract

Shrimp fry counting is an important task for biomass estimation in aquaculture. Accurate counting of the number of shrimp fry in tanks can not only assess the production of mature shrimp but also assess the density of shrimp fry in the tanks, which is very helpful for the subsequent growth status, transportation management, and yield assessment. However, traditional manual counting methods are often inefficient and prone to counting errors; a more efficient and accurate method for shrimp fry counting is urgently needed. In this paper, we first collected and labeled the images of shrimp fry in breeding tanks according to the constructed experimental environment and generated corresponding density maps using the Gaussian kernel function. Then, we proposed a multi-scale attention fusion-based shrimp fry counting network called the SFCNet. Experiments showed that our proposed SFCNet model reached the optimal performance in terms of shrimp fry counting compared to CNN-based baseline counting models, with MAEs and RMSEs of 3.96 and 4.682, respectively. This approach was able to effectively calculate the number of shrimp fry and provided a better solution for accurately calculating the number of shrimp fry.
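The density-map idea in this abstract can be illustrated with a minimal sketch: each annotated point is replaced by a normalized Gaussian kernel, so the map sums to the object count, and counting models are then scored with MAE and RMSE. This is not the SFCNet implementation; the function names, the `sigma` value, and the sample points are assumptions chosen for illustration only.

```python
import math


def density_map(points, height, width, sigma=2.0):
    """Build a density map by summing one normalized 2-D Gaussian per point.

    Each kernel is normalized over the image grid, so every annotated
    point contributes exactly 1 to the sum of the map.
    """
    grid = [[0.0] * width for _ in range(height)]
    for py, px in points:
        kernel = [
            [math.exp(-((y - py) ** 2 + (x - px) ** 2) / (2 * sigma ** 2))
             for x in range(width)]
            for y in range(height)
        ]
        total = sum(map(sum, kernel))
        for y in range(height):
            for x in range(width):
                grid[y][x] += kernel[y][x] / total
    return grid


def count_from_density(grid):
    """The estimated count is the sum over the density map."""
    return sum(map(sum, grid))


def mae(predicted, actual):
    """Mean absolute error between predicted and true counts."""
    return sum(abs(p - a) for p, a in zip(predicted, actual)) / len(actual)


def rmse(predicted, actual):
    """Root mean squared error between predicted and true counts."""
    return math.sqrt(
        sum((p - a) ** 2 for p, a in zip(predicted, actual)) / len(actual)
    )


# Hypothetical annotations as (row, column) pairs on a 20 x 20 image.
annotations = [(5, 5), (10, 12), (3, 17)]
dmap = density_map(annotations, 20, 20)
print(round(count_from_density(dmap), 6))
```

A counting network is typically trained to regress such a map from the image, and its predicted count is obtained by summing its output in the same way.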

(This article belongs to the Special Issue Sensor and AI Technologies in Intelligent Agriculture: 2nd Edition)

Open Access Article

Assessment of Surgeons’ Stress Levels with Digital Sensors during Robot-Assisted Surgery: An Experimental Study

by

Kristóf Takács, Eszter Lukács, Renáta Levendovics, Damján Pekli, Attila Szíjártó and Tamás Haidegger

Sensors 2024, 24(9), 2915; https://doi.org/10.3390/s24092915 - 02 May 2024

Abstract

Robot-Assisted Minimally Invasive Surgery (RAMIS) marks a paradigm shift in surgical procedures, enhancing precision and ergonomics. Concurrently it introduces complex stress dynamics and ergonomic challenges regarding the human–robot interface and interaction. This study explores the stress-related aspects of RAMIS, using the da Vinci XI Surgical System and the Sea Spikes model as a standard skill training phantom to establish a link between technological advancement and human factors in RAMIS environments. By employing different physiological and kinematic sensors for heart rate variability, hand movement tracking, and posture analysis, this research aims to develop a framework for quantifying the stress and ergonomic loads applied to surgeons. Preliminary findings reveal significant correlations between stress levels and several of the skill-related metrics measured by external sensors or the SURG-TLX questionnaire. Furthermore, early analysis of this preliminary dataset suggests the potential benefits of applying machine learning for surgeon skill classification and stress analysis. This paper presents the initial findings, identified correlations, and the lessons learned from the clinical setup, aiming to lay down the cornerstones for wider studies in the fields of clinical situation awareness and attention computing.

(This article belongs to the Special Issue Enhancing Rehabilitation and Assistance through Human–Robot Interaction: Current Trends and Future Directions)

Open Access Article

Surface Defect Detection of Aluminum Profiles Based on Multiscale and Self-Attention Mechanisms

by

Yichuan Shao, Shuo Fan, Qian Zhao, Le Zhang and Haijing Sun

Sensors 2024, 24(9), 2914; https://doi.org/10.3390/s24092914 - 02 May 2024

Abstract

To address the various challenges in aluminum surface defect detection, such as multiscale intricacies, sensitivity to lighting variations, occlusion, and noise, this study proposes the AluDef-ClassNet model. Firstly, a Gaussian difference pyramid is utilized to capture multiscale image features. Secondly, a self-attention mechanism is introduced to enhance feature representation. Additionally, an improved residual network structure incorporating dilated convolutions is adopted to increase the receptive field, thereby enhancing the network’s ability to learn from extensive information. A small-scale dataset of high-quality aluminum surface defect images is acquired using a CCD camera. To better tackle the challenges in surface defect detection, advanced deep learning techniques and data augmentation strategies are employed. To address the difficulty of data labeling, a transfer learning approach based on fine-tuning is utilized, leveraging prior knowledge to enhance the efficiency and accuracy of model training. In dataset testing, our model achieved a classification accuracy of 98.01%, demonstrating significant advantages over other classification models.

(This article belongs to the Special Issue Multi-Modal Image Processing Methods, Systems, and Applications)

Open Access Article

Multiple-Junction-Based Traffic-Aware Routing Protocol Using ACO Algorithm in Urban Vehicular Networks

by

Seung-Won Lee, Kyung-Soo Heo, Min-A Kim, Do-Kyoung Kim and Hoon Choi

Sensors 2024, 24(9), 2913; https://doi.org/10.3390/s24092913 - 02 May 2024

Abstract

The burgeoning interest in intelligent transportation systems (ITS) and the widespread adoption of in-vehicle amenities like infotainment have spurred a heightened fascination with vehicular ad-hoc networks (VANETs). Multi-hop routing protocols are pivotal in actualizing these in-vehicle services, such as infotainment, wirelessly. This study presents a novel protocol called multiple junction-based traffic-aware routing (MJTAR) for VANET vehicles operating in urban environments. MJTAR represents an advancement over the improved greedy traffic-aware routing (GyTAR) protocol. MJTAR introduces a distributed mechanism capable of recognizing vehicle traffic and computing curve metric distances based on two-hop junctions. Additionally, it employs a technique to dynamically select the most optimal multiple junctions between source and destination using the ant colony optimization (ACO) algorithm. We implemented the proposed protocol using the network simulator 3 (NS-3) and simulation of urban mobility (SUMO) simulators and conducted performance evaluations by comparing it with GSR and GyTAR. Our evaluation demonstrates that the proposed protocol surpasses GSR and GyTAR by over 20% in terms of packet delivery ratio, with the end-to-end delay reduced to less than 1.3 s on average.

(This article belongs to the Special Issue Advanced Vehicular Ad Hoc Networks (Volume II))

Open Access Article

Estimation of Shoulder Joint Rotation Angle Using Tablet Device and Pose Estimation Artificial Intelligence Model

by

Shunsaku Takigami, Atsuyuki Inui, Yutaka Mifune, Hanako Nishimoto, Kohei Yamaura, Tatsuo Kato, Takahiro Furukawa, Shuya Tanaka, Masaya Kusunose, Yutaka Ehara and Ryosuke Kuroda

Sensors 2024, 24(9), 2912; https://doi.org/10.3390/s24092912 - 02 May 2024

Abstract

Traditionally, angle measurements have been performed using a goniometer, but the complex motion of shoulder movement has made these measurements intricate. The angle of rotation of the shoulder is particularly difficult to measure from an upright position because of the complicated base and moving axes. In this study, we attempted to estimate the shoulder joint internal/external rotation angle using the combination of pose estimation artificial intelligence (AI) and a machine learning model. Videos of the right shoulder of 10 healthy volunteers (10 males, mean age 37.7 years, mean height 168.3 cm, mean weight 72.7 kg, mean BMI 25.6) were recorded and processed into 10,608 images. Parameters were created using the coordinates measured from the posture estimation AI, and these were used to train the machine learning model. The measured values from the smartphone’s angle device were used as the true values to create a machine learning model. When measuring the parameters at each angle, we compared the performance of the machine learning model using both linear regression and Light GBM. When the pose estimation AI was trained using linear regression, a correlation coefficient of 0.971 was achieved, with a mean absolute error (MAE) of 5.778. When trained with Light GBM, the correlation coefficient was 0.999 and the MAE was 0.945. This method enables the estimation of internal and external rotation angles from a direct-facing position. This approach is considered to be valuable for analyzing motor movements during sports and rehabilitation.

(This article belongs to the Special Issue Artificial-Intelligence-Enhanced Wearable Sensing Technologies for Biomechanical and Physiological Monitoring and Analysis)

Open Access Article

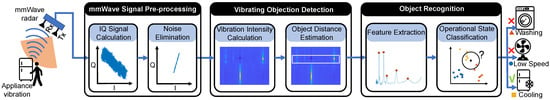

HomeOSD: Appliance Operating-Status Detection Using mmWave Radar

by

Yinhe Sheng, Jiao Li, Yongyu Ma and Jin Zhang

Sensors 2024, 24(9), 2911; https://doi.org/10.3390/s24092911 - 02 May 2024

Abstract

Within the context of a smart home, detecting the operating status of appliances in the environment plays a pivotal role in estimating power consumption, issuing overuse reminders, and identifying faults. The traditional contact-based approaches require equipment updates such as incorporating smart sockets or high-precision electric meters. Non-contact approaches involve the use of technologies like laser and Ultra-Wideband (UWB) radar. The former can only monitor one appliance at a time, and the latter is unable to detect appliances with extremely tiny vibrations and tends to be susceptible to interference from human activities. To address these challenges, we introduce HomeOSD, an advanced appliance status-detection system that uses mmWave radar. This innovative solution simultaneously tracks multiple appliances without human activity interference by measuring their extremely tiny vibrations. To reduce interference from other moving objects, like people, we introduce a Vibration-Intensity Metric based on periodic signal characteristics. We present the Adaptive Weighted Minimum Distance Classifier (AWMDC) to counteract appliance vibration fluctuations. Finally, we develop a system using a common mmWave radar and carry out real-world experiments to evaluate HomeOSD’s performance. The detection accuracy is 95.58%, and the promising results demonstrate the feasibility and reliability of our proposed system.

(This article belongs to the Special Issue Sensors for Smart Environments)

Topics

Topic in

Materials, Nanomaterials, Photonics, Polymers, Applied Sciences, Sensors

Optical and Optoelectronic Properties of Materials and Their Applications

Topic Editors: Zhiping Luo, Gibin George, Navadeep Shrivastava

Deadline: 20 May 2024

Topic in

Remote Sensing, Sensors, Smart Cities, Vehicles, Geomatics

Information Sensing Technology for Intelligent/Driverless Vehicle, 2nd Volume

Topic Editors: Yan Huang, Yi Ren, Penghui Huang, Jun Wan, Zhanye Chen, Shiyang Tang

Deadline: 31 May 2024

Topic in

Applied Sciences, Electricity, Electronics, Energies, Sensors

Power System Protection

Topic Editors: Seyed Morteza Alizadeh, Akhtar Kalam

Deadline: 20 June 2024

Topic in

Applied Sciences, Energies, Machines, Sensors, Vehicles

Vehicle Dynamics and Control

Topic Editors: Peter Gaspar, Junnian Wang

Deadline: 30 June 2024

Special Issues

Special Issue in

Sensors

Sensing for Robotics and Automation

Guest Editors: Igor Korobiichuk, Michał Nowicki

Deadline: 10 May 2024

Special Issue in

Sensors

Meta-User Interfaces for Ambient Environments

Guest Editors: Marco Romano, Phillip C-Y. Sheu, Giuliana Vitiello

Deadline: 20 May 2024

Special Issue in

Sensors

Selected Papers from 20th World Conference on Non-Destructive Testing (WCNDT 2022)

Guest Editor: Seunghee Park

Deadline: 31 May 2024

Special Issue in

Sensors

Novel Sensors and Algorithms for Outdoor Mobile Robot

Guest Editors: Levente Tamás, Andras Majdik

Deadline: 20 June 2024

Topical Collections

Topical Collection in

Sensors

Robotic and Sensor Technologies in Environmental Exploration and Monitoring

Collection Editors: Jacopo Aguzzi, Corrado Costa, Sergio Stefanni, Valerio Funari

Topical Collection in

Sensors

Microfluidic Sensors

Collection Editors: Sabina Merlo, Klaus Stefan Drese