1. Introduction

Uncertainty management has been applied widely in practical engineering, such as sensor data fusion and pattern recognition [1,2,3,4]. It helps integrate information obtained inside and outside a system so that correct judgments can be made. Bayesian probability theory, Dempster–Shafer evidence theory (D-S evidence theory), fuzzy structures and some other methods [5,6,7] have been used to enhance the ability to process uncertain data [8,9,10]. Among them, the Dempster–Shafer theory performs particularly well in uncertain information modeling and fusion in practical applications, such as multi-sensor target recognition [11], industrial alarm system design [12], web news extraction [13], classification with uncertainty [14,15,16], clustering [17,18,19] and so on [20,21]. Research on the Brain–Computer Interface has also extended the application of D-S evidence theory to the medical field [22].

The advantage of the D-S evidence theory is that it requires neither learning nor prior information [15,23]. Because evidence comes from different sources, however, the evidence obtained in applications may be highly conflicting, which leads to counter-intuitive results after data fusion [24,25]. Many methods have been put forward to improve the performance of information fusion since Zadeh pointed out the shortcomings of the Dempster combination rule [26]. To analyze the rationality of the data fusion method, Liu characterized the feasibility of the classical Dempster rule with a two-dimensional measure consisting of the conflict coefficient and the distance between the betting commitments of two basic probability assignments (BPAs) [27]. Deng et al. proposed a generalized basic probability assignment (GBPA) generation method in the generalized evidence theory with a new combination rule [28]. If the belief value of the empty set equals 0, the GBPA degenerates into the classic BPA. An et al. introduced a fuzzy reasoning mechanism into a similarity measurement model [29]. Many works proposed different methods to modify the combination rule [30,31,32]. The classical method of Yager [33] assumes that the frame of discernment (FOD) is closed and allocates the conflicting part of the belief value to the universal set of the FOD.
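The counter-intuitive behavior under high conflict, and the way Yager's rule reallocates the conflicting mass, can be illustrated with a minimal sketch. It uses the textbook forms of the Dempster and Yager rules rather than anything specific to this paper; the function names, the three-element frame and the numerical values (chosen in the style of Zadeh's counterexample) are illustrative assumptions.

```python
from itertools import product

def conjunctive(m1, m2):
    """Return (unnormalized joint masses, conflict coefficient K).

    BPAs are dictionaries mapping frozenset focal elements to masses.
    """
    joint, conflict = {}, 0.0
    for (a, x), (b, y) in product(m1.items(), m2.items()):
        common = a & b
        if common:
            joint[common] = joint.get(common, 0.0) + x * y
        else:
            conflict += x * y  # product mass landing on the empty set
    return joint, conflict

def dempster(m1, m2):
    """Textbook Dempster rule: discard the conflict and renormalize."""
    joint, k = conjunctive(m1, m2)
    if 1.0 - k < 1e-12:
        raise ValueError("total conflict: Dempster's rule is undefined")
    return {s: v / (1.0 - k) for s, v in joint.items()}

def yager(m1, m2, frame):
    """Textbook Yager rule: move the conflict to the universal set."""
    joint, k = conjunctive(m1, m2)
    joint[frame] = joint.get(frame, 0.0) + k
    return joint

# Illustrative, highly conflicting evidence (assumed values).
FRAME = frozenset("abc")
m1 = {frozenset("a"): 0.99, frozenset("b"): 0.01}
m2 = {frozenset("c"): 0.99, frozenset("b"): 0.01}

_, k_zadeh = conjunctive(m1, m2)
fused_d = dempster(m1, m2)
fused_y = yager(m1, m2, FRAME)
print(k_zadeh, fused_d, fused_y)
```

With these values the conflict coefficient is 0.9999. Dempster's rule assigns essentially all belief to the proposition that both sources considered nearly impossible, whereas Yager's rule leaves almost all of the mass on the universal set.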

A promising perspective on addressing conflicting data fusion is to use an uncertainty measure to manage the conflict in evidence [34,35,36]. Deng studied information entropy in the evidence theory and proposed Deng entropy, which has been applied widely [37]. Xiao proposed an information fusion method based on belief entropy and applied it to sensor data fusion in an uncertain environment [38]. A series of improved failure mode and effects analysis methods based on Deng entropy was proposed in [39,40]. The generation of a mass function with high reliability plays a key role in uncertain information processing with the D-S evidence theory [41]. There are also many methods of preprocessing different sources of evidence in D-S evidence theory, such as different concepts of evidence reliability [42,43], consistent strength [44], evidence reasoning [45,46] and the uncertainty measure of negation evidence [47]. It seems that these preprocessing methods continue the idea of calculating the arithmetic average of conflicting evidence with different strategies [48].
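As a point of reference for the uncertainty measures mentioned above, the following sketch computes Deng entropy in its commonly cited form, $E_d(m) = -\sum_{A} m(A)\log_2\frac{m(A)}{2^{|A|}-1}$. The function name and the two example BPAs are assumptions made for illustration; they are not taken from this paper.

```python
import math

def deng_entropy(bpa):
    """Deng entropy of a BPA given as {frozenset: mass}.

    Each focal element A contributes -m(A) * log2(m(A) / (2**|A| - 1)).
    """
    total = 0.0
    for focal, mass in bpa.items():
        if mass > 0:
            total -= mass * math.log2(mass / (2 ** len(focal) - 1))
    return total

# A Bayesian BPA (singletons only): the measure reduces to Shannon entropy.
bayesian = {frozenset("a"): 0.5, frozenset("b"): 0.5}

# A vacuous BPA on a three-element frame: all mass on the universal set.
vacuous = {frozenset("abc"): 1.0}

e_bayesian = deng_entropy(bayesian)
e_vacuous = deng_entropy(vacuous)
print(e_bayesian, e_vacuous)
```

For singleton focal elements the denominator is 1, so the measure coincides with Shannon entropy. Mass on larger subsets raises the value, which is why the vacuous BPA scores higher than the Bayesian one.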

There are many discussions on the use of the belief function in D-S evidence theory, such as the concept and redefinition of the conditional belief function [49,50] and the applicability of the belief function in specific situations [51,52]. The belief function is the result of assigning a probability to a set of evidence rather than to a single proposition, and it is a probability assertion about the evidence based on knowledge [53]. If there is no evidence or initialization is required, the belief function should be assigned to all subsets of the FOD according to certain rules. In this case, the base belief function can be a solution [54]. The question is whether these pre-allocated belief functions always have a beneficial effect on evidence fusion and how to avoid counter-intuitive combination results in some cases.

Most of the existing methods of improving D-S evidence theory have some limitations. First, the complexity caused by the exponential growth of the power set is not sufficiently considered. If the FOD is very large, not only does the computation become much harder, but the correlation among elements in the FOD is also weakened or even lost. The conflict coefficient considers the products of masses whose focal elements have an empty intersection between different sources of evidence, so the interaction among elements in the FOD should also be considered in the combination process. Second, there is a lack of substantive analysis of the original evidence. If the data are not processed according to their own characteristics, a large deviation may remain even if a more reasonable result is obtained in some cases. To address these open issues, this paper proposes a new evidence reliability coefficient.

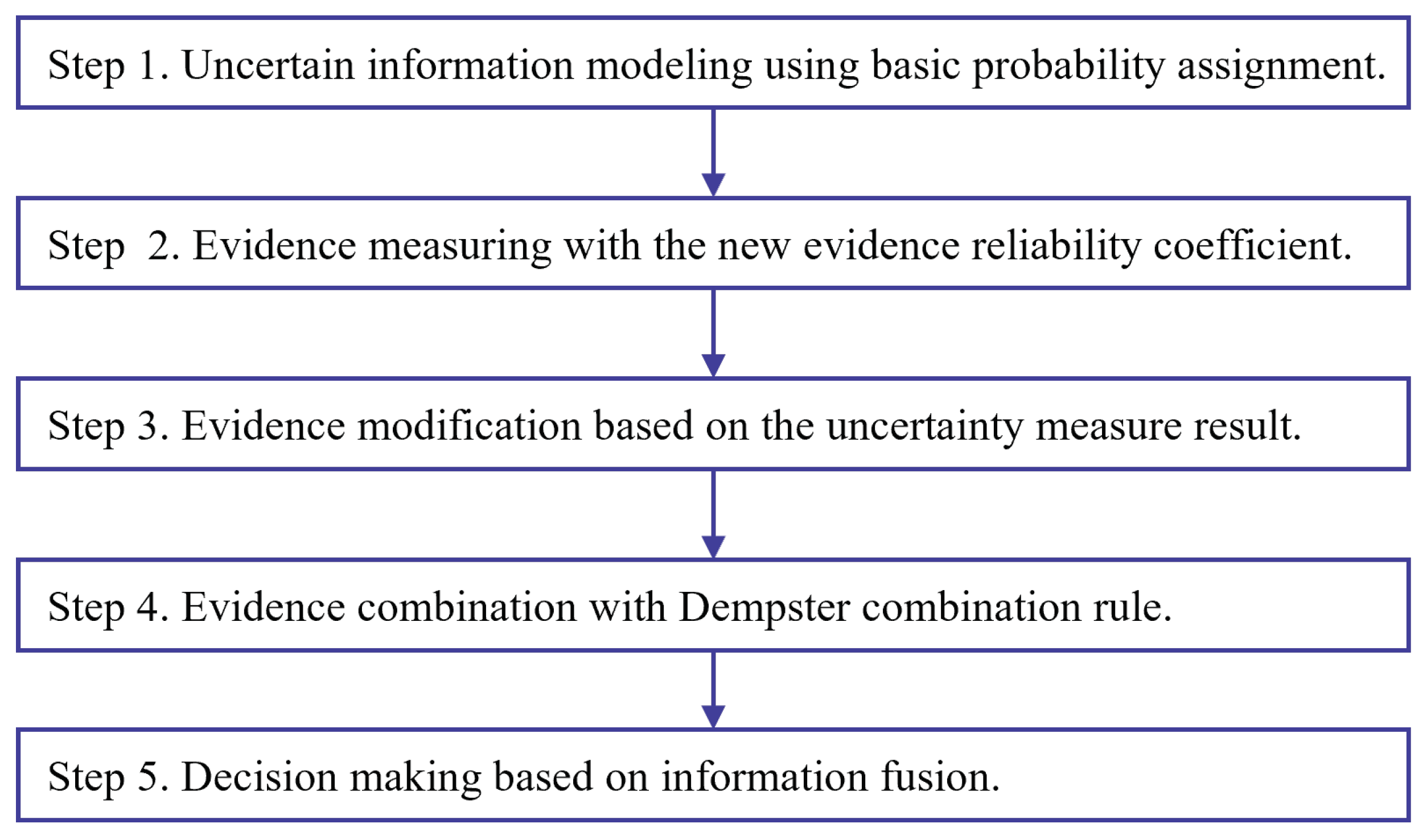

The motivation of this study is to develop a new conflict data management method that keeps the original mathematical characteristics of the Dempster combination rule without adding more computational burden than most of the aforementioned evidence preprocessing methods. The new evidence reliability coefficient is defined through the differences between evidence sources, from the perspective of using the Dempster combination rule. Inconsistencies between modified BPAs are eliminated. All elements in the FOD are assigned a belief value because of their inner connection.

The rest of this article is arranged as follows. Section 2 briefly introduces the Dempster–Shafer evidence theory and some existing conflict management methods. In Section 3, a new evidence reliability coefficient is proposed.

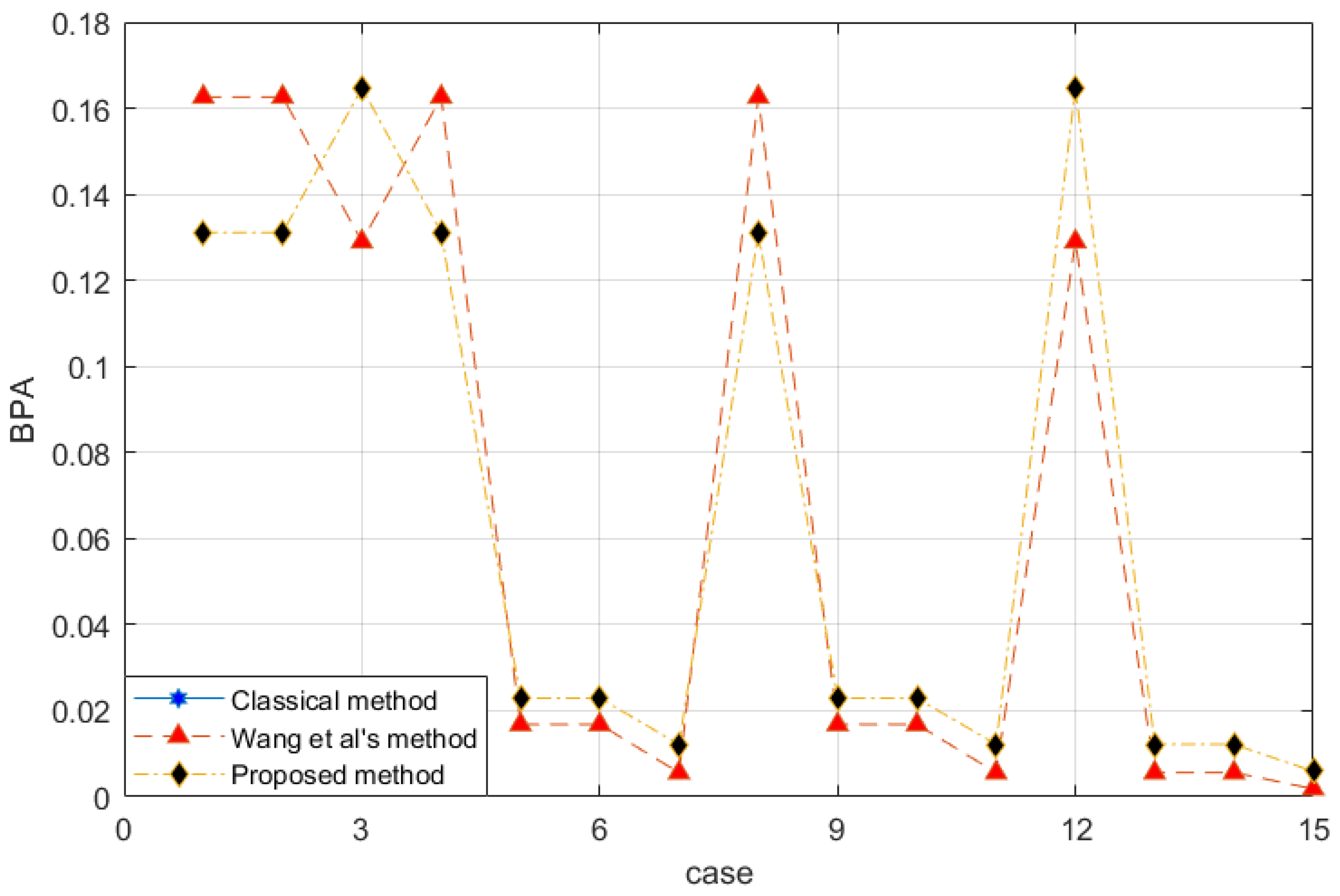

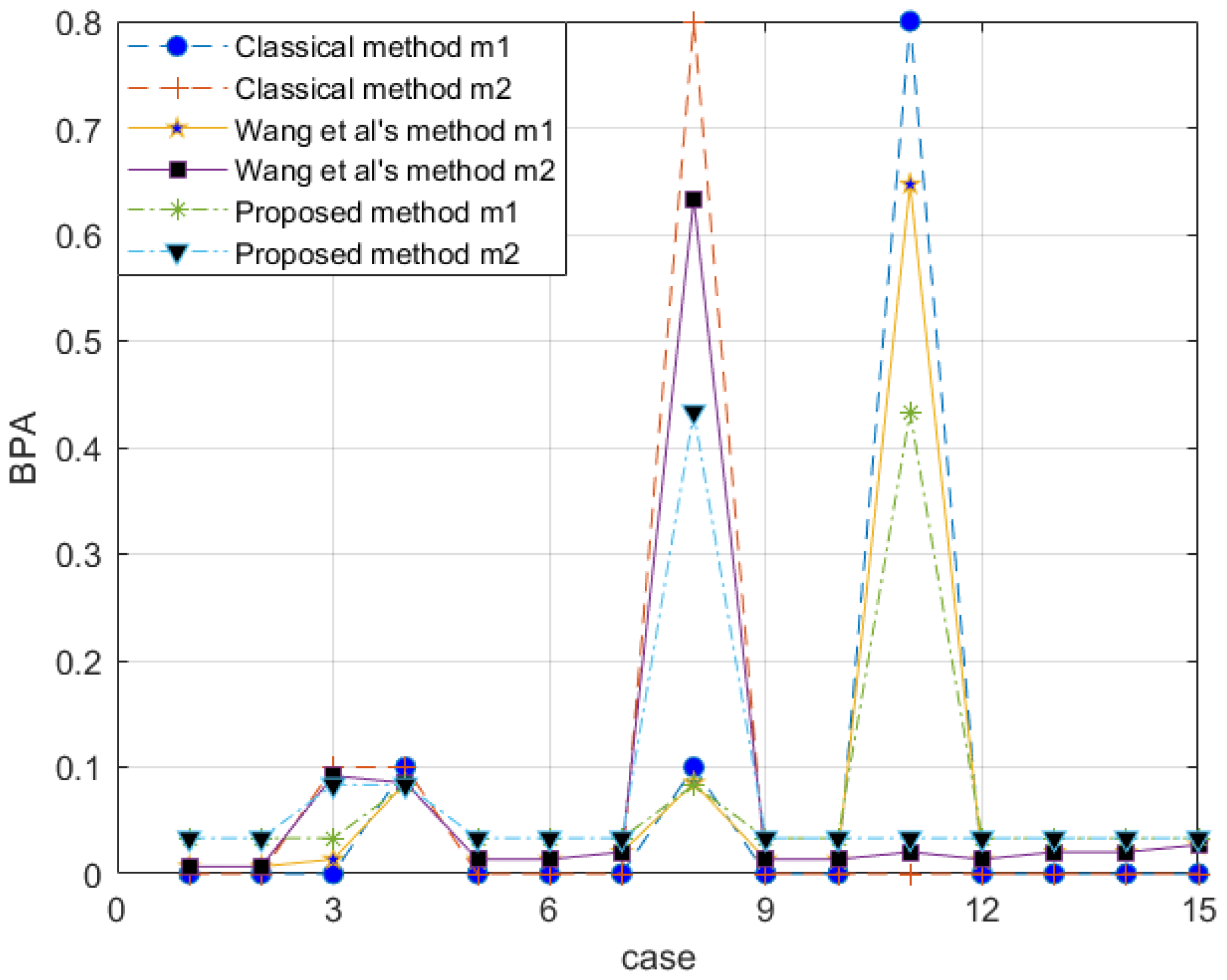

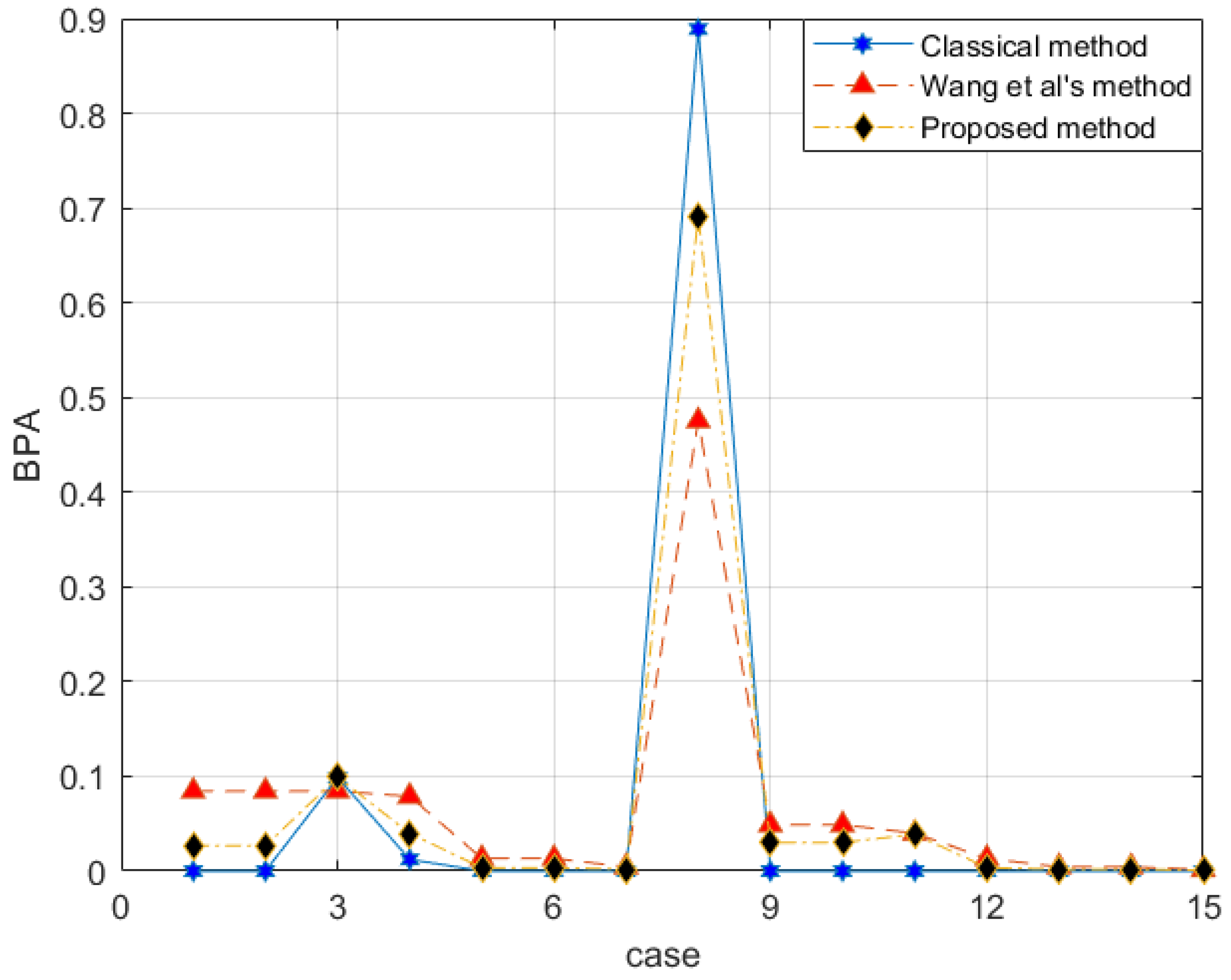

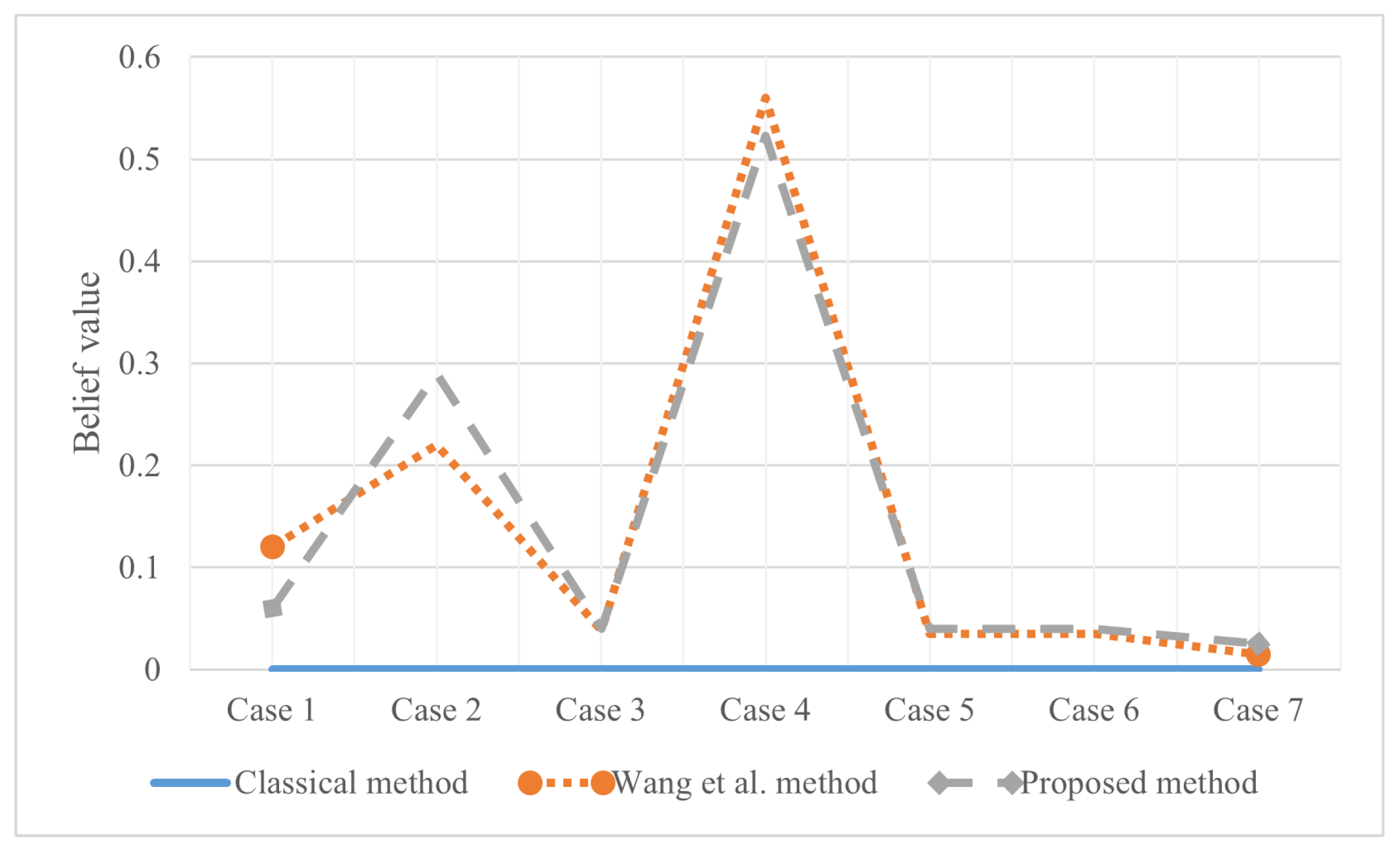

Section 4 presents two experiments that apply the proposed method. Section 5 discusses the work and its open issues. Section 6 concludes the work.

5. Discussion and Open Issues

If the Dempster–Shafer evidence theory is used to deal with conflicting data directly, the combination results are likely to be counter-intuitive because of data defects. The classical Dempster combination rule is commutative and associative, and destroying this combination structure often leads to different results. Appropriate modifications to the data model can improve the performance of the combination rule. The method proposed in this paper defines the one-factor belief function on the basis of a closed FOD. This makes the mass function of every subset non-zero, avoids the combination defects of the classical D-S evidence theory and, to a certain extent, balances the preference for single-factor sets in the combination process. Small subsets always have more intersections than large subsets. The proposed factor, a two-dimensional evaluation form, can give a more intuitive reference to the outside world.

The conflict coefficient in the classical combination rule cannot adequately reflect the difference between pieces of evidence. The new evidence reliability coefficient is defined based on the maximum betting commitment distance between pieces of evidence: the larger the distance, the lower the reliability. For evidence with high reliability, the proposed method can better maintain the characteristics of the original data. In addition, when the mass function of a single subset or of the complete set is not zero, the classical D-S evidence theory can perform well, so the new method can be skipped in this case to reduce the computational complexity. The defined one-factor belief function is only applicable to the closed world. Likewise, the maximum betting commitment distance is defined under closed-world conditions. If sensors are used to collect data, the FOD may gradually become larger during use, and the relative effect of the single-factor belief function becomes small.

When the FOD is small, the combination has a greater fault tolerance, which reduces the accuracy of the system. With a larger FOD and more evidence, the single-factor belief function has less influence on the original data, which improves the accuracy of the probability. The classical D-S evidence theory itself suffers from exponential growth in computation. The new method requires traversing every subset of the FOD, but this only increases the computation by a small multiple, so the two remain of the same order of complexity.
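The maximum betting commitment distance that underlies the reliability coefficient can be sketched as follows. The code uses the standard pignistic transformation and the distance in the form usually attributed to Liu, $\max_{A \subseteq \Theta} |BetP_{m_1}(A) - BetP_{m_2}(A)|$, on a closed frame. The mapping from this distance to the paper's reliability coefficient is not reproduced here, and the function names and example values are assumptions.

```python
from itertools import chain, combinations

def pignistic(bpa):
    """Pignistic probability of each element: BetP(x) = sum of m(A)/|A| over A containing x.

    Assumes a closed world, i.e. no mass on the empty set.
    """
    betp = {}
    for focal, mass in bpa.items():
        for x in focal:
            betp[x] = betp.get(x, 0.0) + mass / len(focal)
    return betp

def dif_betp(m1, m2, frame):
    """Maximum |BetP1(A) - BetP2(A)| over all non-empty subsets A of the frame.

    Brute-force enumeration of the power set, intended for small frames only.
    """
    p1, p2 = pignistic(m1), pignistic(m2)
    elements = sorted(frame)
    subsets = chain.from_iterable(
        combinations(elements, r) for r in range(1, len(elements) + 1)
    )
    return max(
        abs(sum(p1.get(x, 0.0) - p2.get(x, 0.0) for x in s)) for s in subsets
    )

# Illustrative, highly conflicting evidence (assumed values).
frame = frozenset("abc")
m1 = {frozenset("a"): 0.99, frozenset("b"): 0.01}
m2 = {frozenset("c"): 0.99, frozenset("b"): 0.01}
distance = dif_betp(m1, m2, frame)

# A BPA with a non-singleton focal element, to show how mass is shared.
m3 = {frozenset("a"): 0.6, frozenset("ab"): 0.4}
betp3 = pignistic(m3)
print(distance, betp3)
```

The enumeration visits every non-empty subset of the FOD, which mirrors the traversal cost discussed above. A large distance, as in this example, corresponds to the low-reliability case described in the text.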

There are two ways to interpret belief functions [72]. One is to treat BPAs as generalized probabilities, and the other is to treat them as a way to express evidence. From the first point of view, the influence of the single-factor belief function on non-single-factor subsets is considered. If the number of intersections determined by the cardinality of a subset is small, the combination probability of this term is directly weakened. In other words, non-single-factor subsets bear more conflict but cannot express it. Specifically, for two evidence sources, if one source assigns a non-zero BPA to the complete set, that set can match any focal element. The fusion process is greatly limited, and the BPA of the complete set in the other source must also be non-zero. It is therefore reasonable to allocate part of the probability back to these subsets. The single-factor belief function is weighted according to the reliability coefficient in order to avoid excessive damage to the original data structure. The proposed method not only makes the results of the classical Dempster combination rule more reasonable but also mitigates some otherwise irreconcilable contradictions between pieces of evidence, for example, the combination of zero and non-zero belief values for the same case. Even when the integrity of the evidence source (such as a sensor) is ensured, a BPA with very low reliability may still be obtained. In that situation, the uncertainty can be attributed to the environment rather than to the source. Of course, outliers always appear, and they have a great influence on the proposed method. If there are enough sources or they arrive in real time, a minimum degree of trust can be specified to exclude evidence with low reliability. This not only reduces the amount of calculation but also reduces the contradictions in the system and makes the combination results more credible.

There are some open issues in the proposed measure that need to be addressed in future research. First, the proposed reliability coefficient is based on the betting commitment evidence distance in the Dempster–Shafer evidence theory. However, the pignistic transformation of a BPA used in this distance may have some undesirable properties, as shown in [73]. Taking monotonicity as an example, the pignistic transformation of a BPA can indicate an increase in information when the real situation is the opposite. Second, the mathematical characteristics of the proposed reliability coefficient should be taken into consideration if it is regarded as more than a simple factor for evidence modification. These properties include probabilistic consistency, set consistency, range, subadditivity, additivity and monotonicity. It should be noted that the properties of a measure in the Dempster–Shafer evidence theory are a major problem as well as an open issue that attracts a lot of attention [64,65,74,75]. Third, problems may arise when the new measure is applied to other data sets. One example is the dependence among pieces of evidence. If the independence of the evidence sources is not guaranteed, a new evidence combination rule may be needed. Another concern is an incomplete FOD, where different FODs appear during information fusion. In that case, the generalized evidence theory [28,30] may be a choice and a direction for further work.