1. Introduction

To obtain a complete scene point cloud map, it is necessary to measure the point cloud from different positions or perspectives several times, and each measurement result is in different coordinate systems. Therefore, the coordinate system transformation should be carried out for the point clouds measured at different positions, and the same point in the point cloud measured at different positions should be transformed to the same position [

1,

2]. This process is called point cloud registration. For the map reconstruction of point clouds in static scenes, the coordinate transformation between point clouds can be considered as rigid transformation. Point cloud registration can be generally divided into two processes: rough registration and fine registration. Rough registration can provide initial values of optimization for fine registration. While using the point cloud reconstruction device with motion estimation functions such as inertial measurement unit or rotating table, the relative motion between point clouds can be obtained directly, so it is easy to coarse registration of point cloud. Otherwise, the transformation matrix between two point clouds should be calculated.

To reduce the influence of measurement errors on registration accuracy, a novel point cloud registration method is proposed in this paper. Firstly, the transfinite and interference points in two point clouds are filtered by preprocess. The matching relations of points between two point clouds are found by iterative closest point method. To improve the registration accuracy and speed, the point pairs with smaller Euclidean distances are used for registration, and the weights of each point pair are assigned according to the depth values. Finally, the rotation and translation matrices are calculated and the registration errors are obtained. The depth camera is used to collect environmental point clouds, and the experimental results show that the proposed method can effectively improve the registration accuracy and speed.

The major contributions of this study are as follows:

- (1)

during the iterative registration process, only the point pairs with smaller Euclidean distances are used, which improves the accuracy of registration;

- (2)

by incorporating the measurement error into the registration process, the weights are assigned to each point pair according to the measurement depth error and the convergence speed of registration error is improved.

The rest of this paper is organized as follows.

Section 2 discusses the related work. The notations, assumptions and problem definition are given in

Section 3.

Section 4 presents the novel registration method for point clouds. We provide the experimental results and analysis in

Section 5, and conclude the paper and discuss the future work in

Section 6.

2. Related Work

On rough registration based on two-dimensional features, Lin et al. [

3] proposed an efficient three-dimensional registration method based on a two-dimensional local feature matching, which is demonstrated that the method is robust to a larger difference of viewing angle. Many three-dimensional feature points can also be used for registration, such as Fast Point Feature Histograms (FPFH) features [

4] and local three-dimensional feature. Yang et al. [

5] proposed a registration method to provide marker-free and multi-view registration based on the semantic feature points extracted. This method mainly took advantage of the limited position and attitude of the sensor (only moving on the horizontal plane and rotating in gravity direction), and it was suitable for large-scale outdoor scene such as Terrestrial Laser Scanning (TLS). However, it needed to establish point cloud grids during the point cloud segmentation, which would increase the amount of calculation.

At present, the point cloud fine registration method used widely is the Iterative Closest Point (ICP) algorithm which was proposed by Besl et al. [

6]. The basic principle is to iteratively calculate the nearest points between each point in the registration points and the fixed reference point, and the two nearest points are as matching points. The ICP algorithm can be used for registration of broken line points, curve points, surface points, dense points and triangular points. It is widely used in point cloud registration because it does not need the matching relationship between point sets and also has a faster calculation speed. However, the ICP algorithm also has the following shortcomings: the point clouds to be registered should have a large overlap area; easy to fall into local optimum; high requirements for initial values. For this reason, many variant ICP algorithms based on measurement scene and sensor type were proposed [

7]. Chen et al. [

8] presented an approach that works on range data directly and registers successive views with enough overlapping area to get an accurate transformation between views. It was performed by minimizing a functional that did not require point-to-point matches. Segal et al. [

9] proposed a generalization of the ICP algorithm which takes into account the locally planar structure of both scans in a probabilistic model.

Another type of method for fine registration is based on probability distribution. For example, the registration method based on normal distribution transformation is not to establish the matching relationship between point clouds, but to divide the point clouds into blocks, and then use the probability distribution of these point clouds to represent the point clouds. The matching problem is transformed into the expectation maximization problem, which is used to deal with sparse point clouds from laser radar. Agamennoni et al. [

10] presented a point cloud registration method based on probability distribution. Unlike the “point to point” matching registration, the method was a weighted matching registration. The transformation matrix was estimated by expectation maximization, and the accuracy of registration is significantly improved.

We proposed a point-based rigid registration method which provided different weights for different locations and directions of the measured points based on the measurement error distribution properties of laser tracker [

11]. The experimental results showed that the proposed method clearly performs better for the same fiducial point number and distribution than the traditional method.

After rough and fine registrations, two point clouds are transformed into the same coordinate. Due to the accumulated error, it is necessary to do global registration and optimization for registered point clouds. In the present achievements, the influence of measurement errors on registration is seldom considered in the field of visual measurements. Because the point cloud registration is the basis of constructing a complete three-dimensional point cloud map or other subsequent processing, it is very important to consider the measurement error of point cloud in the registration process.

3. Problem Definition

The goal of this paper is to improve the ICP algorithm by using point pairs with smaller Euclidean distances for registration. At the same time, the measurement error is considered in the registration process, and the weights are assigned to each point pair according to the measurement error. The key of this paper is to establish the measurement error model

where

represents the coordinate value of point and

contains the intrinsic parameters of depth camera. Based on the error

e, the weight function affecting registration is constructed

The amount of point pairs with smaller Euclidean distances for registration is notated as

Q. The rotary and translation matrices can be calculated by

where

and

are the source and target point clouds.

To focus more on verifying the feasibility of the proposed method, this study only considers the point cloud pre-processing and registration process, and makes the following assumptions:

- (1)

the source and target point clouds are obtained by depth camera from two different static postures;

- (2)

there is a large overlap area between the source and target point clouds.

The object of this paper is to determine the functions , , and under assumed conditions, and verify the method by experiments.

4. Method

4.1. Principle of Point Cloud Registration

In three-dimensional reconstruction, it is necessary to change the position and attitude of the camera for multiple scene acquisition, and then get the whole three-dimensional point cloud of the target scene by point cloud registration and fusion. Therefore, each point cloud is measured in different local coordinate systems. The main goal of this paper is to improve the registration accuracy of two point clouds.

The origin of the local coordinate system is located at the center of the camera, which is notated as

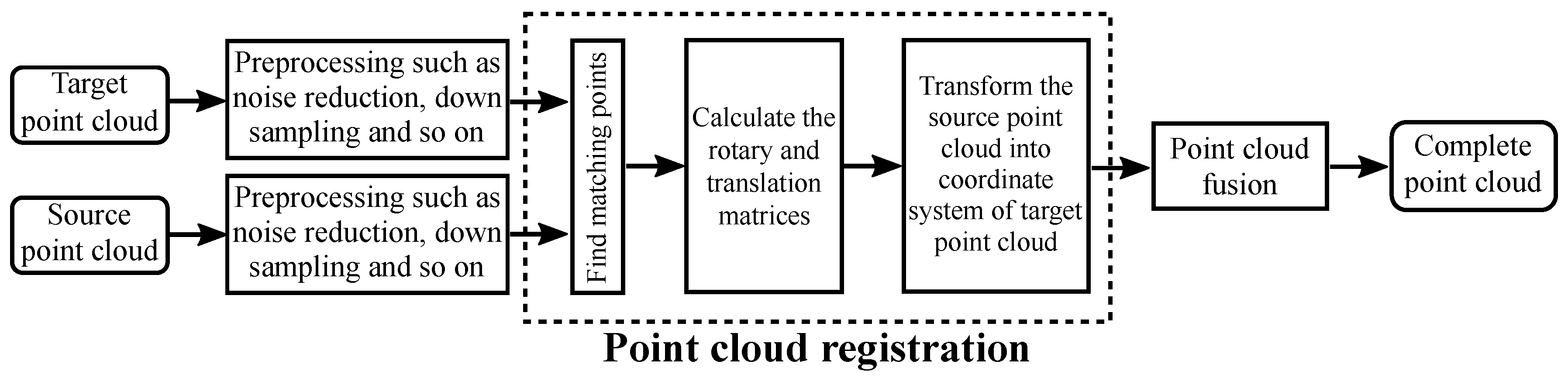

. Point cloud registration is to transform point clouds in different coordinate systems into the same coordinate system, and ensure that the same point in different point clouds can coincide as much as possible after registration. The flow chart of point clouds registration is shown in

Figure 1.

The goal of point cloud registration is to transform local point clouds into the global coordinate system.

where

represents the source point cloud in local coordinate system with

N points to be registered,

is the registered point cloud in global coordinate system. The target point cloud to be registered can be either a registered point cloud or a point cloud fused by the previous measurements in global coordinate system.

The target point cloud is notated as

and the source point cloud is notated as

. The key to registration is to calculate the transformation matrix

, and minimize the error function

where

is one point of target point cloud in global coordinate system which is matched to

.

For point cloud registration in static scenes, it can be considered that different point clouds satisfy the rigid transformation. Therefore, the Formula (

5) can be written as

where

and

are the rotation and translation matrices of rigid body transformation.

There are two main issues in solving Formula (

6): (1) finding the point

in target point cloud

which matches the point

; (2) optimization solution.

Assuming that a matching relationship has been found, it can be solved as follows:

- (1)

calculate the centers of target point cloud and point cloud to be registered

- (2)

calculate the covariance matrix

- (3)

construct symmetric matrix

where

- (4)

perform eigenvalue decomposition of matrix

, and the quaternion

corresponding to the rotation matrix

is obtained by taking the eigenvector corresponding to the maximum eigenvalue.

The translation matrix is

4.2. Depth Measurement Error Model

To improve the 3D point cloud modeling of complex indoor environment, a novel point cloud registration method based on Intel

RealSense

depth camera is proposed in this paper, which can reduce the influence of measuring errors. The Intel

RealSense

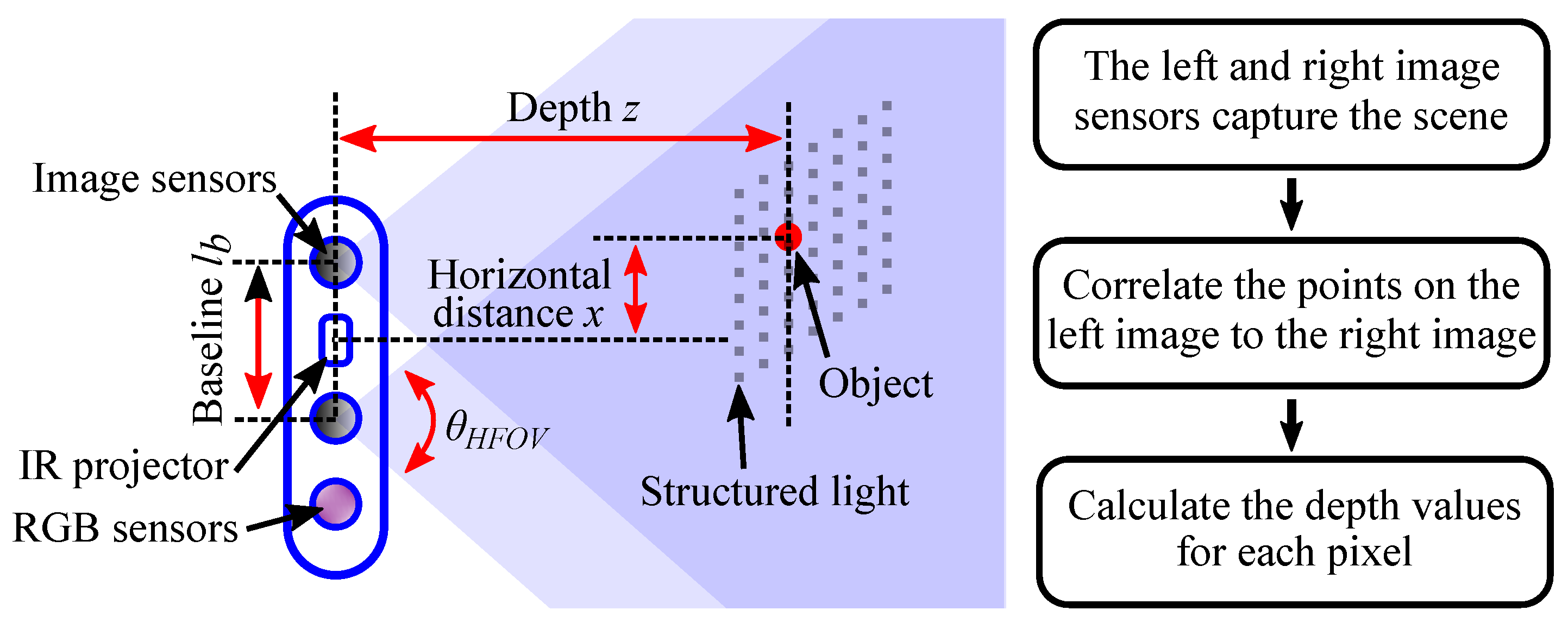

depth camera adopts active infrared (IR) stereo vision technology, which is shown in

Figure 2. The depth perception based on stereo vision is implemented by two image sensors and an infrared projector. The infrared projector projects non-visible structured IR pattern to improve depth accuracy in scenes. The depth calculation process in presented in the right of

Figure 2. The depth image processor obtains the scene data by the two image sensors, and the depth values for each pixel can be calculated by correlating the points on the left image to the right image.

In fact, there will be measurement error in depth camera. If the measurement error is not considered, the original error will be accumulated and enlarged when the depth map or point cloud is applied, resulting in poor results.

To analyse and calculate the depth error, the symbols for camera parameters are defined as follows: the depth between the origin of camera and the object is

z, the size of subpixel is

, the focal length is

, and the baseline of two cameras is

. The horizontal resolution is

, and the horizontal field of view is

. The RMS error represents the depth noise for a localized plane fit to the depth values and is defined as [

12]

where the focal length is

It can be concluded that the depth error is proportional to the square of the depth value.

As described in

Section 4.1, in general ICP algorithms, the weights of different pairs of points are equal when the registration error is minimized. In fact, as the depth increases, the measurement error will increase and the credibility of measurement result will also change. To quantify the impact of errors on measurement results, the scalar

of relative error is defined and simplified as

When the point cloud registration error is minimized, the weights of different point pairs are defined as

w 4.3. Depth-Based Weighted ICP Registration

Section 4.1 assumes that the matching relations of two point clouds to be registered are known, and the transformation matrix can be calculated. However, for two point clouds with large amount of points, it is difficult to find point pairs between them. The classic ICP algorithm is to calculate rotation and translation matrices of two point clouds by finding closest point pairs iteratively.

During iterative closest points, all the points in source point cloud are used to match points in target point cloud. Then the distances between point

in source point cloud and all points

in target point cloud are calculated. The point corresponding to minimum distance (nearest neighbor) is taken as the matching point of

.

Using Formula (

16), a new target point cloud

that matches all the points in the source cloud can be obtained. In fact, the two point clouds obtained in different positions and attitudes cannot completely coincide. For more accurate registration, we choose

point pairs with smaller Euclidean distances as the object point clouds to calculate the transformation matrix and registration error. The

Q is generally the number of overlap points of two point clouds. The number is difficult to be calculated accurately, so it can use an approximate value.

Based on new target and source point clouds

and

, the transformation matrix

can be calculated by Formulas (

7)–(

11).

The coordinate transformation for source point cloud is performed by Formula (

4), and the registration error is calculated by Formula (

6). If the registration error changes less than the pre-set threshold

or the number of iterations is greater than the pre-set threshold

, the algorithm terminates; otherwise the iteration will continue.

The above is the procedure of general ICP algorithm, and the weights of different point pairs are equal while minimizing the registration error. To reduce the influence of measurement errors on point cloud registration results, the depth error should be incorporated into the registration process by weighting. Therefore, different weights can be assigned to different point pairs according to the depth errors, and the registration accuracy can be improved during the least square optimization process. The error function can be rewritten as follows:

By continuously minimizing the objective error function , the optimal rotation and translation matrices can be obtained to improve the registration accuracy of point clouds.

5. Experiments and Results

To improve the accuracy of point cloud registration in complex indoor scenes, this paper proposed a novel point cloud registration method by reducing the number of point pairs and incorporating the measurement errors into the registration process. The

Section 4 presents the detailed depth error model and registration method. This section will verify the effectiveness of the proposed method through multiple scene registration experiments and result analysis.

5.1. Depth Camera

In this experiment, the point cloud data is obtained by Intel

RealSense

D415 depth camera. As shown in

Section 4.2, the depth camera adopts active infrared stereo vision technology to measure the depth of the environment. It consists of two image sensors, an IR projector, and a RGB sensor, which is shown in

Figure 3. The key specifications of depth camera is presented in

Table 1.

To verify the effectiveness of proposed method, the parameters of the depth camera are determined according to the experimental requirements:

mm,

,

,

. Therefore, the depth error

e and scalar

are calculated as follows:

The depth RMS error and relative error are shown in

Figure 4. It can be seen that the absolute RMS error of the measured value is a quadratic function of the measured depth

z, and the relative error has a linear relationship with the measured depth

z.

5.2. Registration Results

To verify the effectiveness of the proposed method, we complete the experiments of point cloud acquisition and registration in four different indoor scenes. In each scene, four different methods of registration are implemented: (A) original ICP algorithm; (B) ICP algorithm with weighting; (C) ICP algorithm with

Q point pairs, (D) ICP algorithm weighted by

Q point pairs, where

Q is determined as 0.8 times the number of target point clouds. The experimental results are shown in

Figure 5. To show the scene represented by point cloud, the RGB images of target and source point clouds are also presented. The registration RMS error in Method D is calculated by the square root of

, where

is calculated by Formula (

19).

According to the registration results, the registration errors of the four methods in four different scenes are all convergent. Method A and Method B use all point pairs to calculate the rotation and translation matrices, but the weights of each pair of points between two methods are different. In Method A, the weights of all point pairs are equal, and in Method B, the greater the measurement error, the smaller the weight. It can be seen that Method B has smaller errors and higher registration accuracy which considers the effect of measurement errors than Method A. Similarly, when Q point pairs are used for registration, such as Method C and Method D, the registration results are also better when the measurement errors are taken into account. The results show that the measurement errors have an impact on the speed and accuracy of registration. When different numbers of point pairs are used for registration, the accuracy of registration is quite different. The registration results of Method C and Method D are obviously better than those of Method A and Method B. Therefore, the ICP algorithm weighted by Q point pairs are proposed in this paper, which can effectively improve the convergence speed of registration error and reduce the registration error.

6. Conclusions

Aiming at the influence of the number of registration points and measurement errors on the registration results, an improved ICP registration method is proposed in this paper. During the iterative registration process, only the point pairs with smaller Euclidean distances are used, which improves the accuracy of registration. To reduce the influence of measurement errors on registration result, the depth measurement error model based on Intel

RealSense

depth camera is analyzed, and an ICP registration method with error weights is constructed to improve the convergence speed of registration errors. The registration experiments in different indoor scenes demonstrate the effectiveness of the proposed improved ICP algorithm. The effects of measurement error and Euclidean distance of point pairs on registration result are studied in this paper. In future work, point cloud registration based on 4D modeling will be considered, which will make full use of the environmental features, and may improve the registration accuracy and speed [

13,

14,

15].

Author Contributions

Conceptualization, S.L. and P.W.; Methodology, S.L., X.G. and D.-X.L.; Software, D.G. and P.W.; Validation, S.L., J.X. and D.-X.L.; Writing, S.L., P.W. and D.-X.L.

Funding

This research was funded by National Key R&D Program of China(2016YFE0206200). National Natural Science Foundation of China (NSFC) (U1613205, 51675291); State Key Laboratory of China (SKLT2018C04).

Conflicts of Interest

The authors declare no conflict of interest.

References

- Pulli, K. Multiview registration for large data sets. In Proceedings of the Second International Conference on 3-D Digital Imaging and Modeling, YOW, Gloucester, ON, Canada, 4–8 October 1999; pp. 160–168. [Google Scholar] [Green Version]

- Matabosch, C.; Salvi, J. Overview of 3D registration techniques including loop minimization for the complete acquisition of large manufactured parts and complex environments. In Proceedings of the Eighth International Conference on Quality Control by Artificial Vision, Le Creusot, France, 23–25 May 2007; pp. 393–400. [Google Scholar]

- Lin, C.-C.; Tai, Y.C.; Lee, J.J.; Chen, Y.S. A novel point cloud registration using 2d image features. EURASIP J. Adv. Signal Process. 2017, 1, 5. [Google Scholar] [CrossRef]

- Rusu, R.B.; Blodow, N.; Beetz, M. Fast point feature histograms (FPFH) for 3D registration. In Proceedings of the 2009 IEEE International Conference on Robotics and Automation, Kobe, Japan, 12–17 May 2009; pp. 3212–3217. [Google Scholar]

- Yang, B.; Dong, Z.; Liang, F.; Liu, Y. Automatic registration of large-scale urban scene point clouds based on semantic feature points. ISPRS J. Photogramm. 2016, 113, 43–58. [Google Scholar] [CrossRef]

- Besl, P.J. A method for registration 3-d shapes. IEEE Trans. Pattern Anal. Mach. Intell. 1992, 14, 193–200. [Google Scholar] [CrossRef]

- Pomerleau, F.; Colas, F.; Siegwart, R.; Magnenat, S. Comparing icp variants on real-world data sets. Auton. Robot. 2013, 34, 133–148. [Google Scholar] [CrossRef]

- Chen, Y.; Medioni, G. Object modeling by registration of multiple range images. Image Vis Comput. 2002, 10, 145–155. [Google Scholar] [CrossRef]

- Segal, A.; Haehnel, D.; Thrun, S. Generalized-icp. In Proceedings of the Robotics: Science and Systems, Seattle, DC, USA, 28 June–1 July 2009. [Google Scholar]

- Agamennoni, G.; Fontana, S.; Siegwart, R.Y.; Sorrenti, D.G. Point Clouds Registration with Probabilistic Data Association. In Proceedings of the 2016 IEEE/RSJ International Conference on Intelligent Robots and Systems, Daejeon, Korea, 9–14 October 2016; pp. 4092–4098. [Google Scholar]

- Wan, A.; Xu, J.; Miao, D. An Accurate Point-Based Rigid Registration Method for Laser Tracker Relocation. IEEE Trans. Instrum. Meas. 2017, 66, 254–262. [Google Scholar] [CrossRef]

- Grunnet-Jepsen, A.; Sweetser, J.N.; Woodfill, J. Best-Known-Methods for Tuning Intel® RealSenseTM D400 Depth Cameras for Best Performance. 2018. Available online: https://www3.intel.com/content/www/xr/en/support/articles/000027833/emerging-technologies/intel-realsense-technology.html (accessed on 4 June 2018).

- Schindler, G.; Dellaert, F. 4D cities: Analyzing, Visualizing, and Interacting with Historical Urban Photo Collections. 2012. Available online: https://smartech.gatech.edu/handle/1853/48719 (accessed on 5 April 2012).

- Ioannides, M.; Hadjiprocopi, A.; Doulamis, N. Online 4D reconstruction using multi-images available under Open Access. In Proceedings of the ISPRS Annals of the Photogrammetry, Remote Sensing and Saptial Information Sciences, Cape Town, South Africa, 11–13 December 2013; pp. 169–174. [Google Scholar]

- Rodriguez-Gonzalvez, P.; Munoz-Nieto, A.L.; del Pozo, S. 4D Reconstruction and visualization of Cultural Heritage: Analyzing our legacy through time. In Proceedings of the International Archives of Photogrammetry, Remote Sensing and Spatial Information Sciences, Nafplio, Greece, 1–3 March 2017; p. 609. [Google Scholar]

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).