Abstract

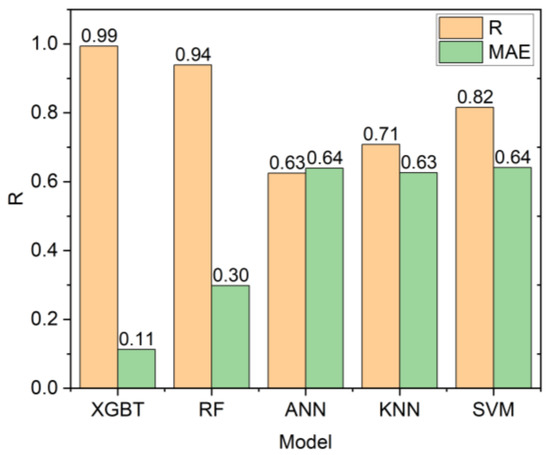

The accurate estimation of rock strength is an essential task in almost all rock-based projects, such as tunnelling and excavation. Numerous indirect techniques for estimating unconfined compressive strength (UCS) have been proposed, largely because the laboratory tests needed to measure UCS directly are complex and time-consuming. This study applied two advanced machine learning techniques, extreme gradient boosting trees (XGBT) and random forest (RF), to predict UCS from non-destructive tests and petrographic studies. Before these models were applied, feature selection was conducted using Pearson’s chi-square test. This test selected the following inputs for the XGBT and RF models: dry density and ultrasonic velocity from the non-destructive tests, and mica, quartz, and plagioclase contents from the petrographic results. In addition to the XGBT and RF models, several empirical equations and two single decision trees (DTs) were developed to predict UCS. The results showed that the XGBT model outperforms RF for UCS prediction in terms of both accuracy and error: XGBT achieved a linear correlation (R) of 0.994 and a mean absolute error (MAE) of 0.113. The XGBT model also outperformed the single DTs and the empirical equations. Both XGBT and RF outperformed k-nearest neighbors (KNN, R = 0.708), artificial neural network (ANN, R = 0.625), and support vector machine (SVM, R = 0.816) models. These findings suggest that XGBT and RF can be employed efficiently to predict UCS.

1. Introduction

In geotechnical engineering structures such as tunnels and dams, accurate measurement of the unconfined compressive strength (UCS) of rocks is of vital significance, because UCS provides a useful evaluation of rock bearing capacity. An inaccurate UCS estimate can be dangerous, because it can compromise the ultimate bearing capacity assumed in design. The unconfined compression test is typically used in the laboratory to determine rock strength, following standard procedures such as those suggested by the International Society for Rock Mechanics (ISRM). Nevertheless, determining UCS directly in the laboratory poses several difficulties. For example, preparing the required rock core specimens is usually challenging, particularly for highly fractured rocks and rocks with notable foliation and lamination [1,2]. Determining UCS directly at the initial stages of design is also costly and time-consuming [3]. Therefore, indirect methods of predicting rock strength have been developed, including conventional regression models and machine learning techniques.

To date, many researchers have sought to develop alternative techniques for UCS assessment. Many of these techniques take the form of simple regressions between UCS and straightforward rock index tests, such as the Schmidt hammer, ultrasonic velocity (Vp), Brazilian tensile strength, point load index, and slake durability index tests [4,5,6]. The successful use of multiple regression analysis for rock strength prediction has also been reported in the literature [7]. However, some studies found these correlations inadequate for producing highly reliable UCS values [8,9], and it is typically recommended that such equations be used only for particular rock types [9]. Moreover, analytical prediction techniques cannot adapt to changes in the data: if data that differ from the initial dataset are added, the equations must be re-derived [10,11]. More recently, researchers and practitioners of geotechnical engineering have increasingly used machine learning (ML) techniques, including decision trees (DTs), the artificial neural network (ANN), the support vector machine (SVM), and the adaptive neuro-fuzzy inference system (ANFIS), in problems of this domain [12,13,14,15]. This trend reflects the fact that ML techniques are well suited to solving engineering problems, especially when the relationships between the predictors and the target variable are hidden [11,16,17,18,19,20,21,22,23,24,25,26]. From an economic perspective, ML techniques are also beneficial, because they reduce the cost of the laboratory tests needed to determine UCS. These ML techniques have also been applied widely to other science and engineering problems [21,23,27,28,29,30,31,32,33,34,35,36,37,38,39,40,41,42,43,44,45,46,47,48,49,50,51,52,53,54,55,56,57,58,59].

In this work, the UCS of the Main Range granite in Malaysia is predicted using two state-of-the-art tree-based techniques, random forest (RF) and extreme gradient boosting trees (XGBT). These two models are among the most robust ML models, and their predictive potential has been demonstrated in several fields, e.g., [60,61]. To date, however, these approaches have not been used to predict the unconfined compressive strength of rock materials. These models are also less susceptible to overfitting, a major problem for ML approaches trained on limited data, as is the case in this study. The model inputs are selected from non-destructive rock index tests and petrographic investigations, as both categories are important in predicting rock strength. A comparative analysis is also conducted among the RF and XGBT models, simple regression, and single DTs.

2. Rock Strength Research Significance

In the past, rock strength has been the output of many empirical, semi-empirical, and intelligent models. In these studies, both destructive parameters, such as the point load index and Brazilian tensile strength (BTS), and non-destructive parameters, such as P-wave velocity and the Schmidt hammer rebound number, have been used as model inputs. However, some of these tests, such as BTS, are still time-consuming and require sample preparation. On the other hand, rock minerals have theoretical relationships with the strength-related parameters of rock material, so they can also be used as input parameters to predict rock strength. These minerals and their percentages can be easily identified through petrographic analysis of thin sections of the same rock. Only a few studies have considered rock minerals as input variables, and there is a need to combine these parameters with non-destructive parameters as inputs for estimating rock strength. This can support the development of predictive models with simpler input parameters and, consequently, greater applicability in real-world projects.

3. Earlier Related Studies

Several previous studies have employed ML techniques for UCS prediction. In 1999, Meulenkamp and Grima [62] predicted UCS using a backpropagation ANN. They applied this technique to 194 rock samples of various types, including dolomite, sandstone, and limestone, using density, porosity, grain size, rock type, and Equotip hardness reading as inputs. Their findings showed that the ANN outperformed traditional statistical techniques in terms of generalization capability. Sonmez et al. [63] applied a fuzzy inference system (FIS) to 164 samples of Ankara agglomerate to predict UCS, and their results indicated that the fuzzy logic technique yields highly reliable UCS predictions. Gokceoglu and Zorlu [64] applied both regression and fuzzy models to 82 samples of problematic rocks for UCS prediction, using ultrasonic velocity, point load index, tensile strength, and block punch index as inputs; the fuzzy model performed better than multiple and simple regression methods. Dehghan, Sattari, Chelgani, and Aliabadi [8] applied feed-forward neural network and regression models to a dataset of 30 travertine samples, with Schmidt hammer rebound number, ultrasonic (P-wave) velocity, porosity, and point load index as inputs. That study suggested that ANN is a more powerful model than regression analysis for UCS prediction. Mishra and Basu [65] employed simple and multiple regression techniques as well as FIS, and suggested that FIS and multiple regression are more efficient for predicting UCS than simple regression. For sedimentary rock samples, the efficiency of ANN was reported by Cevik et al. [66], who applied this model to 56 samples using clay content, rock origin, and two- and four-cycle slake durability indices as inputs for UCS prediction. Yesiloglu-Gultekin, Gokceoglu, and Sezer [18] reported the advantage of ANFIS over multiple regression and ANN, using 75 rock samples from a granite mine with P-wave velocity, tensile strength, block punch index, and point load index as inputs. They also concluded that the ANFIS model of UCS performed best when both P-wave velocity and tensile strength were used as inputs.

Singh et al. [67] established a number of relationships between various strength measures and index properties of schistose rocks. They also applied an ANN to their dataset and concluded that the ANN was more accurate than the established correlations. Gokceoglu [68] employed a fuzzy triangular chart to predict UCS from petrographic composition, creating fifteen membership functions for fifteen samples, and demonstrated that the fuzzy inference system could reliably predict UCS values. Zorlu et al. [69] examined the relationships between UCS and the petrographic properties of sandstone and evaluated the accuracy of multiple regression and ANN in predicting sandstone UCS; their results showed the superiority of ANN over multiple regression. Yagiz et al. [70] developed nonlinear regression and ANN models to predict the UCS of carbonate rocks using a dataset of 54 samples, and reported that the ANN model outperformed the nonlinear regression model in terms of accuracy. Table 1 summarizes some recent studies on the application of ML techniques for predicting UCS.

Table 1.

Some studies on UCS prediction using ML techniques.

4. Collection of Case Studies and Data

The rock samples were collected from a water transfer tunnel running from Pahang state to Selangor state, and the data were taken from the study published by Armaghani et al. [73]. The tunnel supplies additional water to meet the needs of Kuala Lumpur and Selangor. It passes through the Main Range, which runs between the states of Pahang and Selangor; this mountain chain forms the spine of Peninsular Malaysia, with elevations ranging from 100 to 1400 m. Granite, locally called Main Range granite, is the predominant rock type, and the strength of the intact granite is typically between 150 and 200 MPa. Table 2 shows the specifications of the tunnel.

Table 2.

Tunnel specifications.

Thirty-five kilometers of the tunnel were excavated using a tunnel boring machine (TBM), and the rest was excavated using conventional drill-and-blast methods. To achieve the best excavation performance, the tunnelling team planned three TBM segments and four conventional drill-and-blast segments.

The research team collected core samples from the boreholes and sent them to a laboratory to determine the key material and engineering properties of the granite. The end surfaces of the cut cores were lapped to achieve the required finish, and many granite specimens were prepared for the laboratory tests. To avoid unwanted variations in properties and premature failure, the specimens were carefully inspected for existing fractures and small-scale discontinuities. The non-destructive physical tests measured Vp and dry density (DD), and uniaxial compression tests were conducted to determine the UCS of the granite. All specimen preparation and testing followed the methods suggested by the ISRM [74]. Finally, a database of 45 samples (with all input and output parameters) was prepared for data analysis.

Petrographic investigations of the granite samples were also conducted using a polarizing petrological microscope. To this end, thin sections of the samples were prepared to determine the proportions of the various minerals. The specimens are non-porphyritic and holocrystalline and consist essentially of interlocking coarse-grained crystals of quartz (Qtz), plagioclase (Plg), biotite (Bi), and alkali feldspar (Kpr), a composition typical of plutonic igneous rock. Note that mica is represented by three minerals: sericite, muscovite, and biotite. For more information on the study area and the data used for modeling, see Armaghani, Mohamad, Momeni, and Narayanasamy [73].

5. Analysis of Data

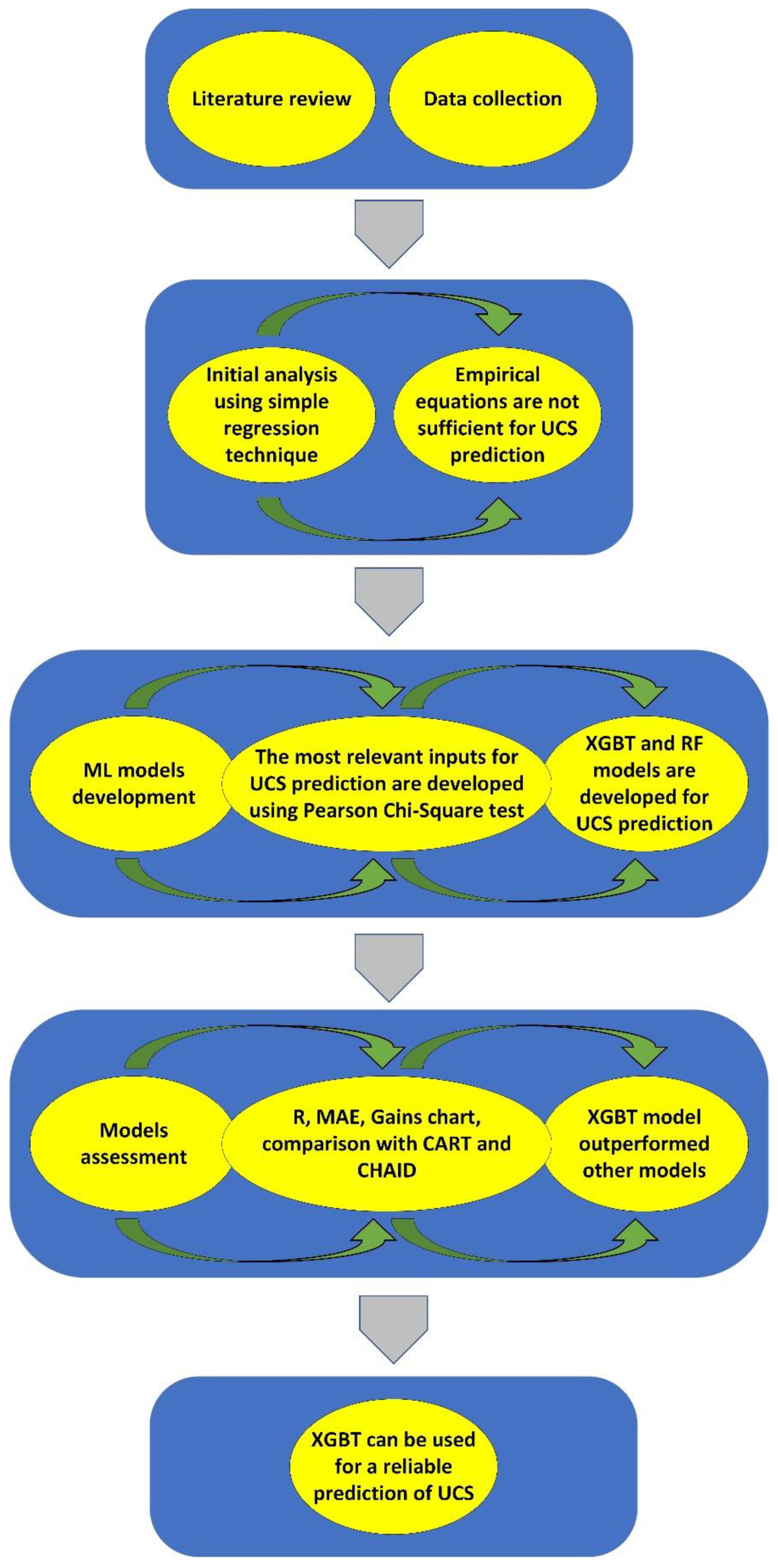

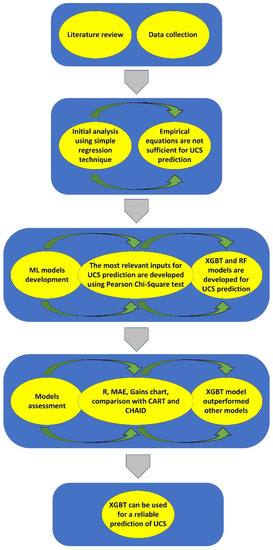

In this study, various statistical and simulation techniques were employed to analyze the results of the laboratory tests. The following sections explain how these techniques were applied to predict the UCS of the granite samples; the UCS values obtained from the laboratory tests were then compared with the predicted values. The process of this study is presented in Figure 1. Two ML algorithms, XGBT and RF, were employed to predict UCS. Before model development, input selection was performed using Pearson’s chi-square test, and the inputs it selected were used to develop the XGBT and RF models. The models were then evaluated using several performance criteria, including R, MAE, and gains charts. Additionally, the results of the XGBT and RF models were compared with those of single decision trees, namely CART and CHAID.

Figure 1.

Process of this study.

5.1. Regression Techniques

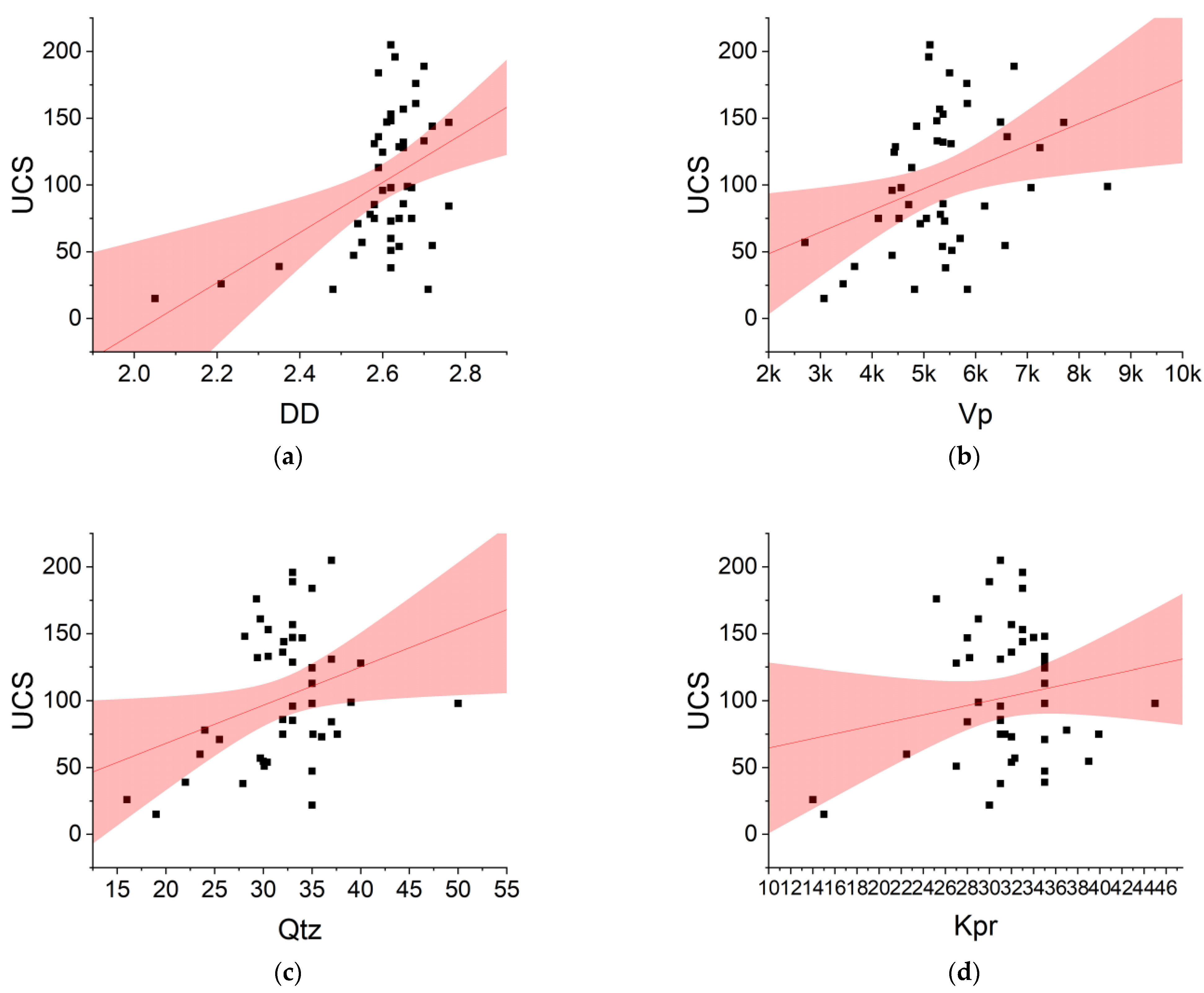

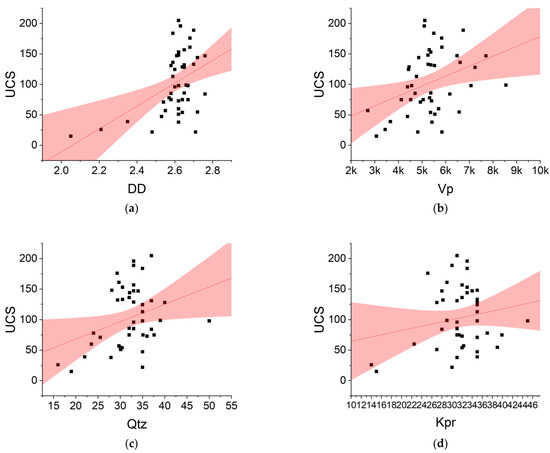

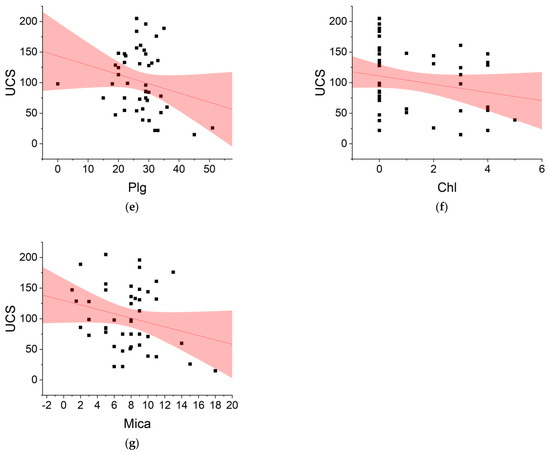

This study developed several empirical equations using simple linear regression, which takes the general form Y = AX + B, where A is the regression coefficient (slope) and B is the value of Y when the input X equals zero. These equations and their coefficients of determination (R²) are shown in Figure 2. Among all the variables used in this study, DD was the most strongly correlated with UCS, followed by Vp: the highest R² belongs to Figure 2a (DD), followed by Figure 2b (Vp), and the lowest to Figure 2d (Kpr). However, even the best of these values (R² = 0.2136) shows that empirical regression models based on a single predictor are insufficient for predicting UCS. According to Yilmaz and Yuksek [7], the main conceptual shortcoming of all regression approaches is that they reveal only associations, not the precise causal process.

Figure 2.

Simple empirical regression models for predicting UCS. (a) UCS = 187.66 DD − 386.02; R² = 0.2136; (b) UCS = 0.0163 Vp + 15.989; R² = 0.1301; (c) UCS = 2.8535 Qtz + 11.013; R² = 0.0998; (d) UCS = 1.7699 Kpr + 46.883; R² = 0.0342; (e) UCS = −1.5152 Plg + 143.7; R² = 0.0539; (f) UCS = −6.718 Chl + 110.84; R² = 0.0469; (g) UCS = −3.563 Mica + 129.63; R² = 0.0611.
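The single-predictor equations in Figure 2 are ordinary least-squares fits. The following minimal, stdlib-only Python sketch (assuming Python 3.8+ for `statistics.fmean`) shows how the slope A, the intercept B, and R² are obtained; the function name `fit_simple_regression` and the (DD, UCS) pairs are hypothetical illustrations, not the study's data.

```python
from statistics import fmean


def fit_simple_regression(x, y):
    """Ordinary least-squares fit of y = a*x + b; returns (a, b, r2)."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    x_bar, y_bar = fmean(x), fmean(y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    a = sxy / sxx                     # slope: the regression coefficient A
    b = y_bar - a * x_bar             # intercept: B, the value of Y when X = 0
    ss_res = sum((yi - (a * xi + b)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - y_bar) ** 2 for yi in y)
    r2 = 1.0 - ss_res / ss_tot        # coefficient of determination
    return a, b, r2


# Hypothetical (dry density, UCS) pairs for illustration only; not the study's data.
dd = [2.55, 2.58, 2.60, 2.62, 2.65]
ucs = [95.0, 110.0, 100.0, 125.0, 118.0]
a, b, r2 = fit_simple_regression(dd, ucs)
print(f"UCS = {a:.2f} DD + {b:.2f}; R^2 = {r2:.3f}")
```

A low R² from such a fit is exactly what Figure 2 reports: one predictor explains only a small share of the variance in UCS.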

Because they make few assumptions about the form of the relationship between inputs and output, ML approaches may circumvent these difficulties. Several ML approaches are capable of addressing UCS prediction. Among them, DTs and their ensemble variants have received less attention from academics and practitioners. This work therefore uses two powerful ensemble DT methods, RF and XGBT, to predict the UCS of Malaysian Main Range granite.

5.2. Decision Tree Models

Various decision tree (DT) models are available, each with its own advantages and disadvantages. A DT uses a tree-like model of decisions and their possible consequences, such as chance event outcomes, resource costs, and utility; it can represent an algorithm made up entirely of conditional control statements. Well-known DTs include the classification and regression tree (CART), chi-square automatic interaction detection (CHAID), and the quick, unbiased, efficient statistical tree (QUEST). However, these are all single DTs, and their common weakness is a tendency to overfit when the training set is small.

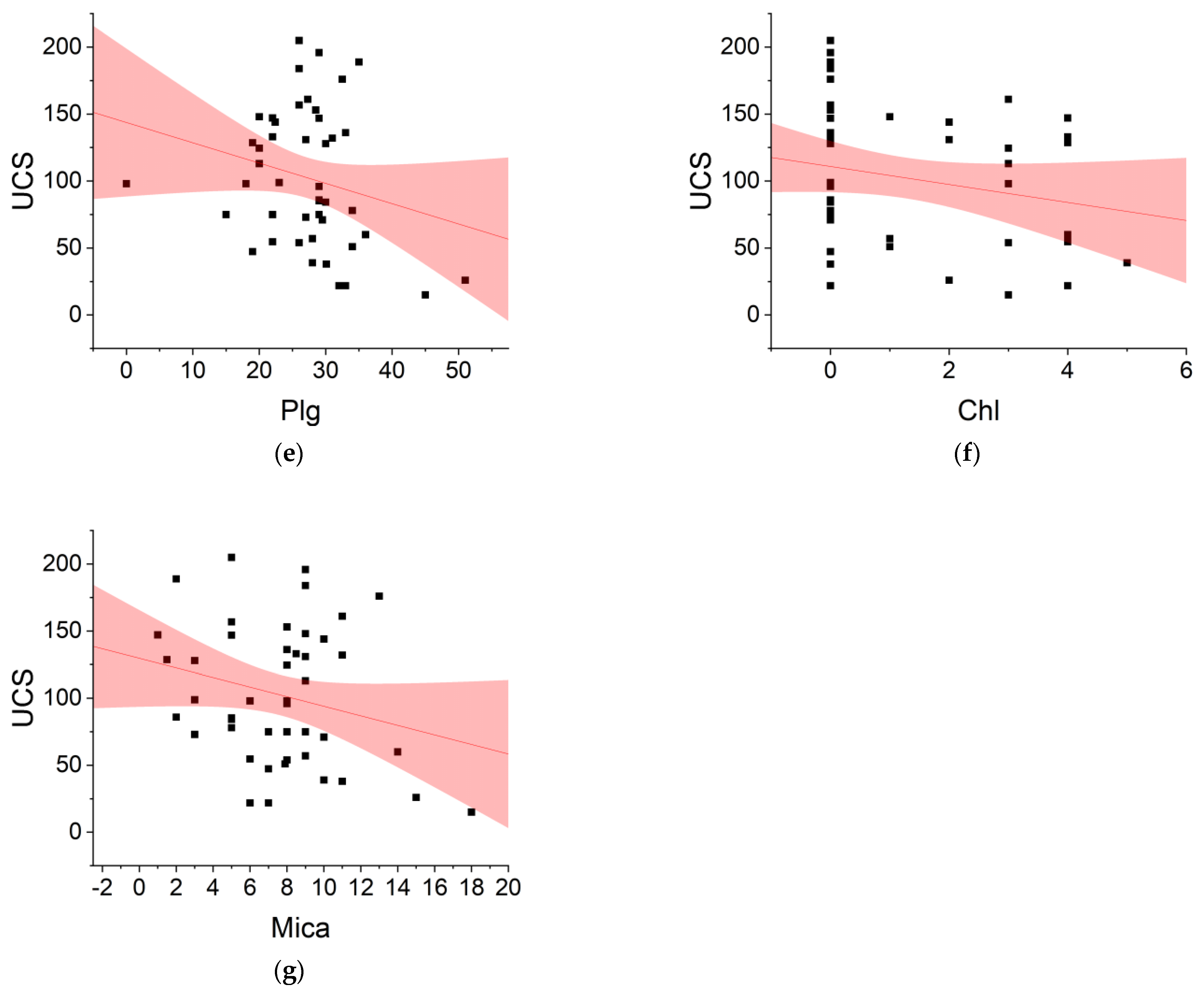

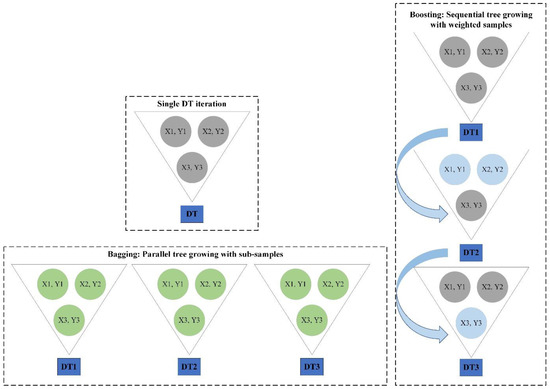

To remedy this issue, ensemble DT methods such as random forest (RF) [75] and extreme gradient boosting tree (XGBT) [76] can be employed. RF follows the bagging principle, whereas XGBT follows the boosting principle. A schematic diagram of bagging and boosting and their application in DTs is presented in Figure 3.

Figure 3.

Application of bagging and boosting in DTs.

As noted above, RF is a bagging method, and bagging reduces variance. Single DTs are very sensitive to even small variations in the training data. By using bagging, RF produces a stable model with lower variance. Bagging is an ensemble method that builds many models, each trained on a bootstrap sample (drawn with replacement) of the training data, and combines their predictions using an aggregation rule such as the mean, the majority vote, or a weighted average.
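The bagging procedure can be illustrated with a minimal, stdlib-only Python sketch. For brevity it is plain bagging of one-split regression trees (stumps) on a single feature, not a full random forest, which additionally grows deeper trees and samples a random subset of features at each split. The helper names `fit_stump` and `bagging_predict` and the number of models are hypothetical choices.

```python
import random
from statistics import fmean


def fit_stump(x, y):
    """Fit a one-split regression tree on one feature by minimising the sum of squared errors."""
    best = (float("inf"), None, fmean(y), fmean(y))  # (sse, threshold, left mean, right mean)
    xs = sorted(set(x))
    for lo, hi in zip(xs, xs[1:]):
        t = (lo + hi) / 2  # candidate threshold midway between distinct values
        left = [yi for xi, yi in zip(x, y) if xi <= t]
        right = [yi for xi, yi in zip(x, y) if xi > t]
        ml, mr = fmean(left), fmean(right)
        sse = sum((v - ml) ** 2 for v in left) + sum((v - mr) ** 2 for v in right)
        if sse < best[0]:
            best = (sse, t, ml, mr)
    _, t, ml, mr = best
    # With no valid split (all x equal), the stump predicts the overall mean.
    return lambda q: ml if t is None or q <= t else mr


def bagging_predict(x, y, query, n_models=25, seed=0):
    """Bagging: fit each stump on a bootstrap sample, then average their predictions."""
    rng = random.Random(seed)
    n = len(x)
    predictions = []
    for _ in range(n_models):
        idx = [rng.randrange(n) for _ in range(n)]  # draw n rows with replacement
        stump = fit_stump([x[i] for i in idx], [y[i] for i in idx])
        predictions.append(stump(query))
    return fmean(predictions)  # regression aggregation rule: the mean
```

Each stump alone can change noticeably when a few rows change; averaging over bootstrap replicates smooths out that variability, which is the variance reduction described above.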

Gradient boosting (GB) is one of the most efficient machine learning techniques for classification and regression problems. GB builds a strong predictive model by combining weak learners, such as DTs, sequentially: each new learner is fitted to the residual errors (the negative gradients of the loss) of the current ensemble. When the weak learner is a DT, the resulting model is called a gradient boosted tree (GBT). GBT uses a conventional criterion, such as the mean squared error (MSE), to build the regression trees and determine the best split: for each candidate node split, it computes the MSE and selects the split with the lowest MSE.
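The sequential fitting and MSE-based split selection described above can be sketched as follows. This is a minimal, stdlib-only illustration of squared-error gradient boosting with one-split trees on a single feature; the function names, the learning rate of 0.1, and the 50 rounds are hypothetical choices, not settings from the study. Because the loss is ½(y − F)², its negative gradient is simply the residual y − F.

```python
from statistics import fmean


def best_mse_split(x, r):
    """Return (threshold, left mean, right mean) of the split with the lowest MSE, or None."""
    best_mse, best = float("inf"), None
    xs = sorted(set(x))
    for lo, hi in zip(xs, xs[1:]):
        t = (lo + hi) / 2
        left = [ri for xi, ri in zip(x, r) if xi <= t]
        right = [ri for xi, ri in zip(x, r) if xi > t]
        ml, mr = fmean(left), fmean(right)
        sse = sum((v - ml) ** 2 for v in left) + sum((v - mr) ** 2 for v in right)
        mse = sse / len(r)
        if mse < best_mse:
            best_mse, best = mse, (t, ml, mr)
    return best


def gradient_boost(x, y, n_rounds=50, learning_rate=0.1):
    """Squared-error gradient boosting with one-split trees; returns a predict function."""
    base = fmean(y)  # F0: constant initial model
    stumps = []
    pred = [base] * len(y)
    for _ in range(n_rounds):
        # Negative gradient of 0.5 * (y - F)^2 with respect to F is the residual y - F.
        residuals = [yi - pi for yi, pi in zip(y, pred)]
        split = best_mse_split(x, residuals)
        if split is None:  # x has a single distinct value: nothing to split
            break
        stumps.append(split)
        t, ml, mr = split
        pred = [pi + learning_rate * (ml if xi <= t else mr) for xi, pi in zip(x, pred)]

    def predict(q):
        return base + learning_rate * sum(ml if q <= t else mr for t, ml, mr in stumps)

    return predict
```

The learning rate shrinks each tree's contribution so that many small corrections accumulate, rather than letting one tree fit the residuals completely.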

Extreme gradient boosting tree (XGBT) is a variant of GBT that uses more precise estimates to determine the best tree structure. XGBT combines several efficient techniques that make it particularly strong on structured (tabular) data. Unlike conventional GBT, XGBT builds trees by using a similarity score (SS) and gain to determine the best node splits. The SS for each leaf is calculated using Equation (1); the gain of a candidate split is then estimated using Equation (2), and the split with the greatest gain is selected as the best split for the tree.

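$$SS = \frac{\left(\sum_{i} R_i\right)^2}{\sum_{i} \left[PP_i \left(1 - PP_i\right)\right] + \lambda} \qquad (1)$$

$$\text{Gain} = LL_s + RL_s - RO_s \qquad (2)$$

where $SS$ is the similarity score, $R_i$ is the $i$-th residual, $PP_i$ is the previous probability for sample $i$, and $\lambda$ is a regularization parameter; $LL_s$, $RL_s$, and $RO_s$ are the similarity scores of the left leaf, the right leaf, and the root, respectively.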
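The split selection based on Equations (1) and (2) can be sketched in Python as below. This is an illustrative, stdlib-only sketch and not XGBoost's implementation: the function names are hypothetical, only one feature is searched, and XGBoost's additional pruning parameter (gamma) is omitted. The PP term corresponds to the log-loss (classification) objective; for squared-error regression, as in UCS prediction, each sample contributes 1 to the denominator, so it reduces to the number of residuals plus λ.

```python
def similarity_score(residuals, lam=0.0, prev_probs=None):
    """Equation (1): (sum of residuals)^2 / (sum of per-sample weights + lambda).

    prev_probs given -> log-loss form, weight_i = PP_i * (1 - PP_i)
    prev_probs None  -> squared-error (regression) form, weight_i = 1
    """
    g = sum(residuals)
    if prev_probs is None:
        h = len(residuals)
    else:
        h = sum(p * (1.0 - p) for p in prev_probs)
    return g * g / (h + lam)


def split_gain(left, right, lam=0.0):
    """Equation (2): Gain = LLs + RLs - ROs, using the regression form of the score."""
    root = left + right
    return (similarity_score(left, lam) + similarity_score(right, lam)
            - similarity_score(root, lam))


def best_split(x, residuals, lam=0.0):
    """Return (gain, threshold) of the candidate split on one feature with the greatest gain."""
    pairs = sorted(zip(x, residuals))
    best = (float("-inf"), None)
    for i in range(1, len(pairs)):
        if pairs[i - 1][0] == pairs[i][0]:
            continue  # no threshold can separate equal feature values
        t = (pairs[i - 1][0] + pairs[i][0]) / 2
        left = [r for _, r in pairs[:i]]
        right = [r for _, r in pairs[i:]]
        gain = split_gain(left, right, lam)
        if gain > best[0]:
            best = (gain, t)
    return best
```

For example, with residuals −10.5, 6.5, 7.5, and −7.5 and λ = 0, splitting off the first residual gives 110.25 + 14.08 − 4 ≈ 120.33, the largest gain of the three candidate splits. Raising λ shrinks every similarity score and therefore the gain, which discourages splits supported by few samples.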

6. Results and Discussion

This research applied two advanced decision tree techniques, RF and XGBT, to predict UCS from the 45 samples collected from the case study sites. Because the data were unbalanced, the input and target fields were normalized before model development: a z-score transformation was applied to the inputs and a Box–Cox transformation to the target variable.
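Both transformations take only a few lines of Python. This stdlib-only sketch makes two assumptions the paper does not report: the sample standard deviation is used for the z-score, and the Box–Cox parameter λ is passed in explicitly. In practice λ is often estimated by maximum likelihood, for example with `scipy.stats.boxcox` when its `lmbda` argument is left as `None`.

```python
import math
from statistics import fmean, stdev


def z_score(values):
    """Standardise to zero mean and unit sample standard deviation (assumed convention)."""
    mu, sd = fmean(values), stdev(values)
    return [(v - mu) / sd for v in values]


def box_cox(values, lam):
    """Box-Cox transform with a given lambda; inputs must be strictly positive."""
    if any(v <= 0 for v in values):
        raise ValueError("Box-Cox requires strictly positive values")
    if lam == 0:
        return [math.log(v) for v in values]  # limiting case as lambda -> 0
    return [(v ** lam - 1.0) / lam for v in values]


# Inputs (e.g. DD, Vp) would go through z_score; the UCS target through box_cox.
```

Because UCS is always positive, the Box–Cox requirement of strictly positive values is satisfied for the target variable.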

To reduce data dimensionality and identify the most relevant predictors of UCS, Pearson’s chi-square test was employed. The candidate inputs were DD, Vp, Qtz, Kpr, Plg, chlorite (Chl), and Mica. The test selected five predictors (DD, Vp, Qtz, Plg, and Mica), which were used to develop the RF and XGBT models.
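Pearson’s chi-square test compares the observed counts in a contingency table with the counts expected if the two variables were independent. The paper does not state how the continuous inputs and UCS were discretized, so the sketch below assumes a hypothetical two-bin split of each variable at a chosen cut point (for example, its median); the helper names are hypothetical as well. The statistic would then be converted to a p-value with a chi-square distribution of the returned degrees of freedom (for instance via `scipy.stats.chi2.sf`) to rank the candidate inputs.

```python
def chi_square_statistic(table):
    """Pearson's chi-square statistic and degrees of freedom for an r x c table of counts."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n  # count expected under independence
            stat += (observed - expected) ** 2 / expected
    dof = (len(table) - 1) * (len(table[0]) - 1)
    return stat, dof


def contingency_table(feature, target, feature_cut, target_cut):
    """Hypothetical helper: cross-tabulate two continuous variables, each split at one cut point."""
    table = [[0, 0], [0, 0]]
    for f, t in zip(feature, target):
        table[int(f > feature_cut)][int(t > target_cut)] += 1
    return table
```

A larger statistic (smaller p-value) indicates a stronger association between an input and UCS, so such inputs would be retained.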

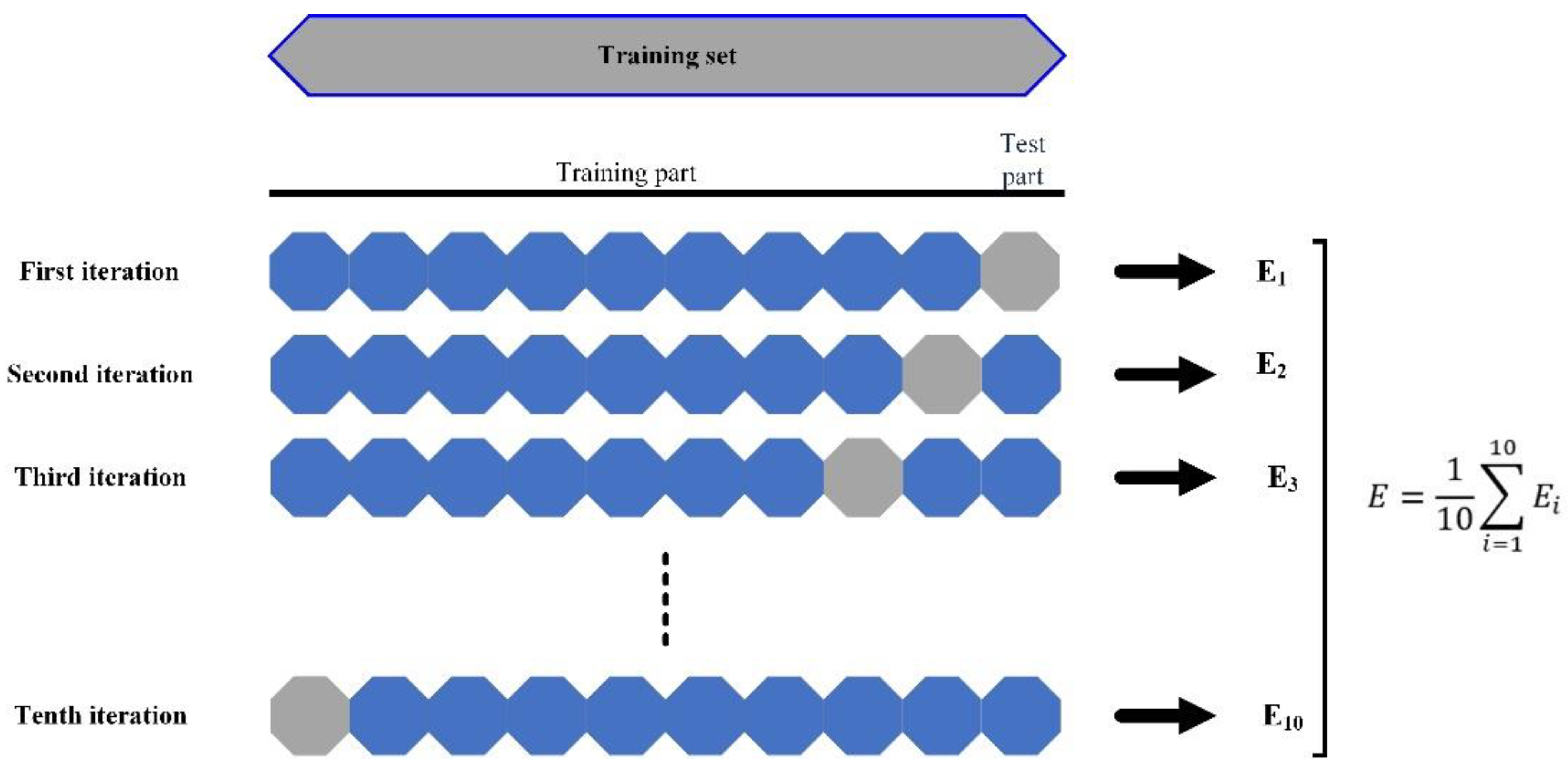

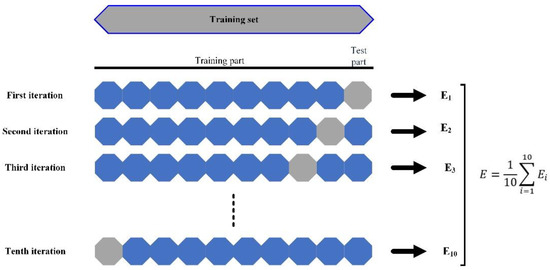

The RF and XGBT models were developed using 10-fold cross-validation as the validation scheme. As a rule of thumb, cross-validation works better than the holdout technique when there are few samples, because every sample is used for both training and validation. Cross-validation helps guard against overfitting, especially when the number of samples is limited. The technique divides the dataset into a fixed number of parts (folds); each fold is held out once for validation while the model is trained on the remaining folds, and the errors over all folds are averaged. The cross-validation procedure is shown in Figure 4.
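The fold construction can be sketched in Python. This stdlib-only sketch shuffles the indices with a fixed seed (an assumption, since the paper does not say whether the data were shuffled) and spreads any remainder so that, for 45 samples and 10 folds, five folds hold 5 samples and five hold 4. The `fit_and_score` callback is a hypothetical placeholder for training a model on the training indices and returning its validation error.

```python
import random


def k_fold_indices(n_samples, k=10, seed=0):
    """Shuffle sample indices and split them into k folds of near-equal size."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    base, extra = divmod(n_samples, k)
    folds, start = [], 0
    for f in range(k):
        size = base + (1 if f < extra else 0)  # first `extra` folds get one more sample
        folds.append(idx[start:start + size])
        start += size
    return folds


def cross_validate(n_samples, fit_and_score, k=10, seed=0):
    """Average a validation error over k folds; each fold is held out exactly once."""
    folds = k_fold_indices(n_samples, k, seed)
    errors = []
    for i, val_idx in enumerate(folds):
        train_idx = [j for m, fold in enumerate(folds) if m != i for j in fold]
        errors.append(fit_and_score(train_idx, val_idx))
    return sum(errors) / k
```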

Figure 4.

A ten-fold cross-validation procedure.

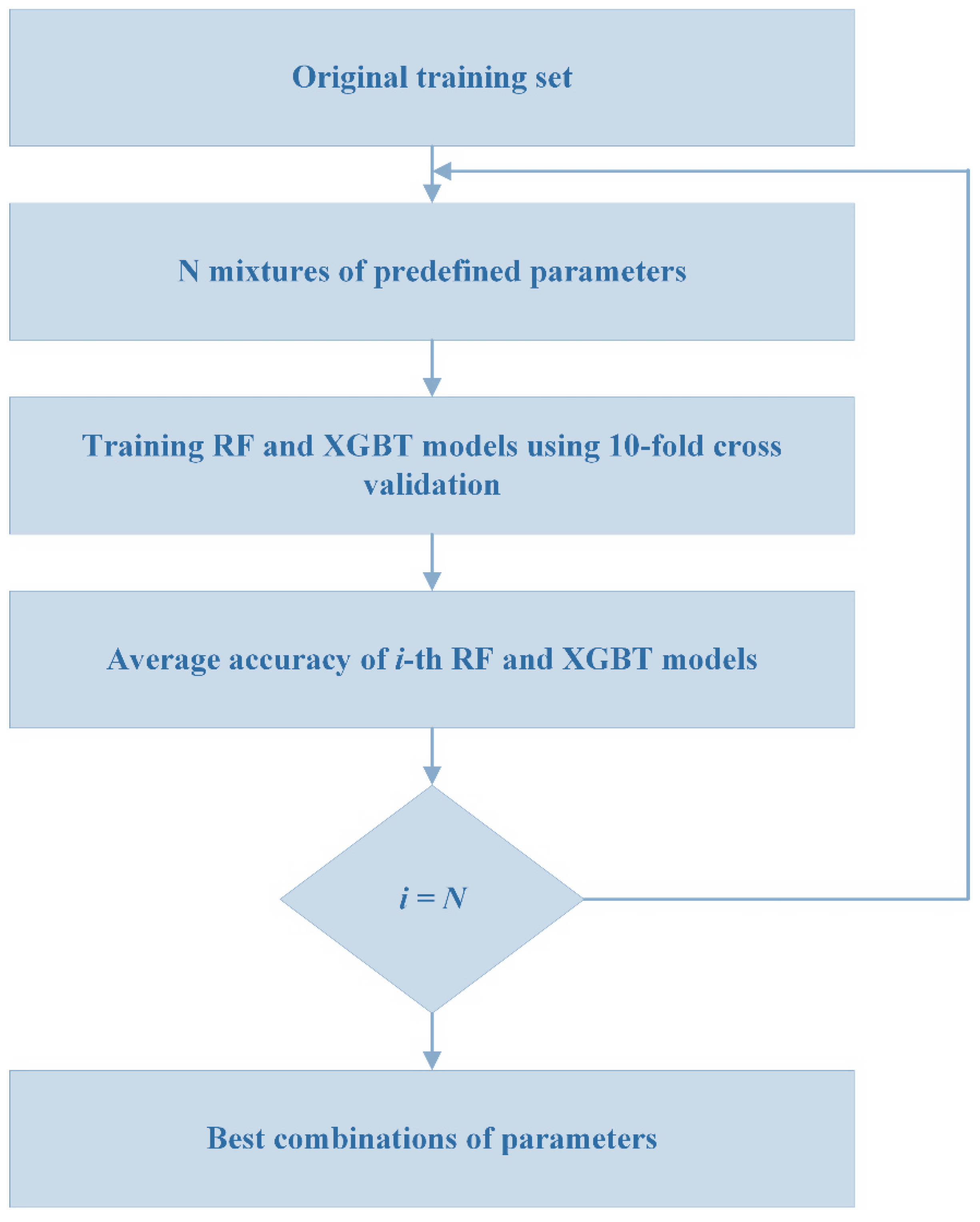

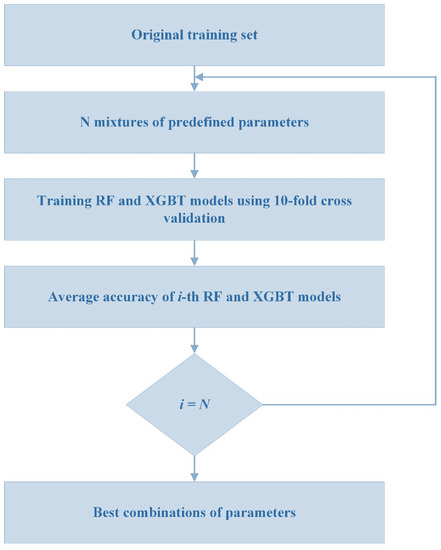

The RF and XGBT models have several hyperparameters. To find the optimal value of each, a grid search was used. This technique systematically builds and evaluates a model for every combination of parameter values specified in a grid. Figure 5 shows the grid search process combined with 10-fold cross-validation.
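A grid search is an exhaustive loop over the Cartesian product of candidate values. The sketch below is a hypothetical, stdlib-only helper; `cv_score` is a placeholder assumed to return the mean 10-fold cross-validation error for one parameter combination, and the grid and toy scoring function in the usage lines are illustrative, not the grid used in the study.

```python
from itertools import product


def grid_search(param_grid, cv_score):
    """Evaluate every combination in param_grid; return (params, score) with the lowest CV error."""
    names = list(param_grid)
    best_params, best_score = None, float("inf")
    for values in product(*(param_grid[name] for name in names)):
        params = dict(zip(names, values))
        score = cv_score(params)  # e.g. mean validation error over 10 folds
        if score < best_score:
            best_params, best_score = params, score
    return best_params, best_score


# Hypothetical usage: a toy function stands in for training and cross-validating a model.
grid = {"max_depth": [3, 6, 9], "reg_lambda": [0.0, 1.0]}
best, err = grid_search(grid, lambda p: abs(p["max_depth"] - 6) + p["reg_lambda"])
print(best, err)
```

The cost grows multiplicatively with the number of values per parameter, which is manageable here because the dataset has only 45 samples.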

Figure 5.

Process of grid search in this study.

For RF, the following optimized parameters were used: (1) number of trees = 10; and (2) minimum leaf node size = 1. The algorithm also used bootstrap sampling and out-of-bag samples to estimate generalization accuracy. For XGBT, (1) the booster type was set to “gbtree”; (2) the number of boosting rounds was set to 10; (3) lambda was set to 1.0; (4) alpha was set to 0.0; (5) maximum depth was set to 6; and (6) minimum child weight was set to 1.0.
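If the models were implemented with the `xgboost` and scikit-learn Python packages (an assumption, since the paper does not name its software), the reported settings would map onto their keyword arguments roughly as below. Note that scikit-learn reads a float `min_samples_leaf` as a fraction of the training samples, so the reported minimum leaf size of 1.0 should be passed as the integer 1.

```python
# Reported hyperparameters expressed with the keyword names of
# xgboost.XGBRegressor and sklearn.ensemble.RandomForestRegressor.
xgbt_params = {
    "booster": "gbtree",      # tree-based booster
    "n_estimators": 10,       # number of boosting rounds
    "reg_lambda": 1.0,        # L2 regularisation (lambda, as in the similarity score)
    "reg_alpha": 0.0,         # L1 regularisation (alpha)
    "max_depth": 6,           # maximum tree depth
    "min_child_weight": 1.0,  # minimum sum of instance weight (hessian) in a child
}

rf_params = {
    "n_estimators": 10,       # number of trees
    "min_samples_leaf": 1,    # int: a float would be read as a fraction of samples
    "bootstrap": True,        # each tree is trained on a bootstrap sample
    "oob_score": True,        # estimate generalisation accuracy on out-of-bag samples
}

# With both libraries installed, the models would be built as:
#   from xgboost import XGBRegressor
#   from sklearn.ensemble import RandomForestRegressor
#   xgbt = XGBRegressor(**xgbt_params)
#   rf = RandomForestRegressor(**rf_params)
```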

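Two standard metrics, the linear correlation coefficient (R) and the mean absolute error (MAE), were used to evaluate model performance. They are calculated as follows:

$$R = \frac{\sum_{i=1}^{n} (e_i - \bar{e})(m_i - \bar{m})}{\sqrt{\sum_{i=1}^{n} (e_i - \bar{e})^2 \sum_{i=1}^{n} (m_i - \bar{m})^2}} \qquad (3)$$

$$\mathrm{MAE} = \frac{1}{n} \sum_{i=1}^{n} \lvert e_i - m_i \rvert \qquad (4)$$

where $e_i$ and $m_i$ denote the $i$-th actual and predicted values, respectively; $\bar{e}$ and $\bar{m}$ denote the mean actual and predicted values, respectively; and $n$ is the number of samples in the dataset.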
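Both metrics are straightforward to compute. The stdlib-only sketch below implements the Pearson linear correlation coefficient and the mean absolute error, with `actual` and `predicted` playing the roles of e_i and m_i; the function names are hypothetical.

```python
import math


def pearson_r(actual, predicted):
    """Linear correlation coefficient R between actual and predicted values."""
    n = len(actual)
    e_bar = sum(actual) / n
    m_bar = sum(predicted) / n
    cov = sum((e - e_bar) * (m - m_bar) for e, m in zip(actual, predicted))
    var_e = sum((e - e_bar) ** 2 for e in actual)
    var_m = sum((m - m_bar) ** 2 for m in predicted)
    return cov / math.sqrt(var_e * var_m)


def mae(actual, predicted):
    """Mean absolute error between actual and predicted values."""
    return sum(abs(e - m) for e, m in zip(actual, predicted)) / len(actual)
```

The two metrics are complementary: R = 1 only says the predictions are perfectly linearly related to the actual values (predicting 2, 4, 6 for 1, 2, 3 still gives R = 1), whereas MAE measures how far off they are in the units of the target.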

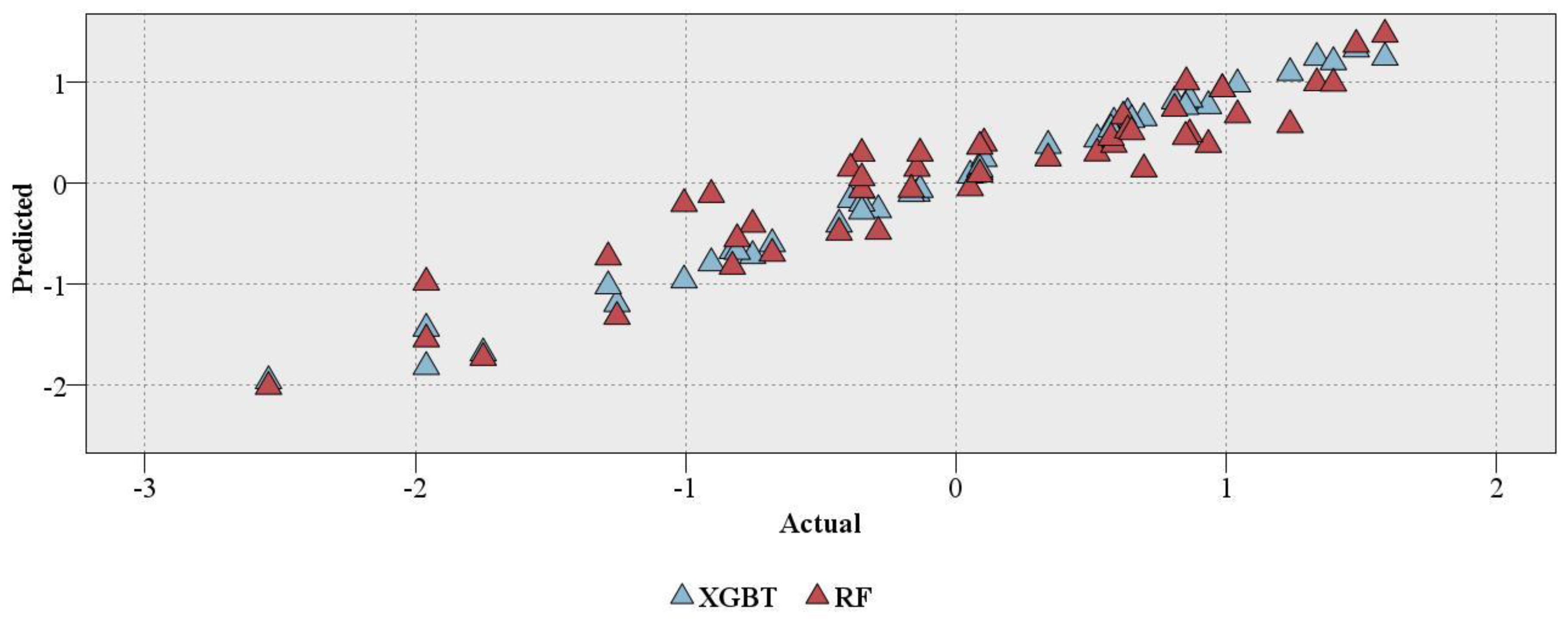

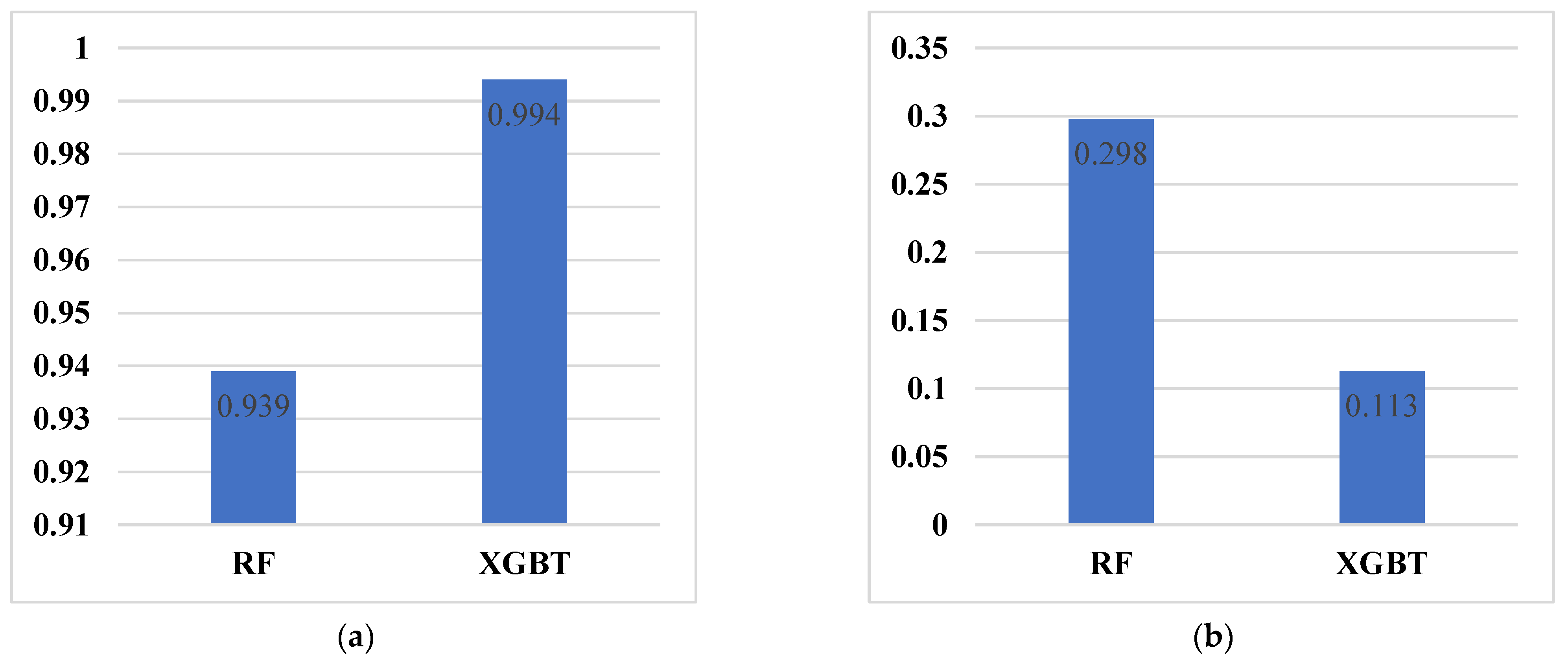

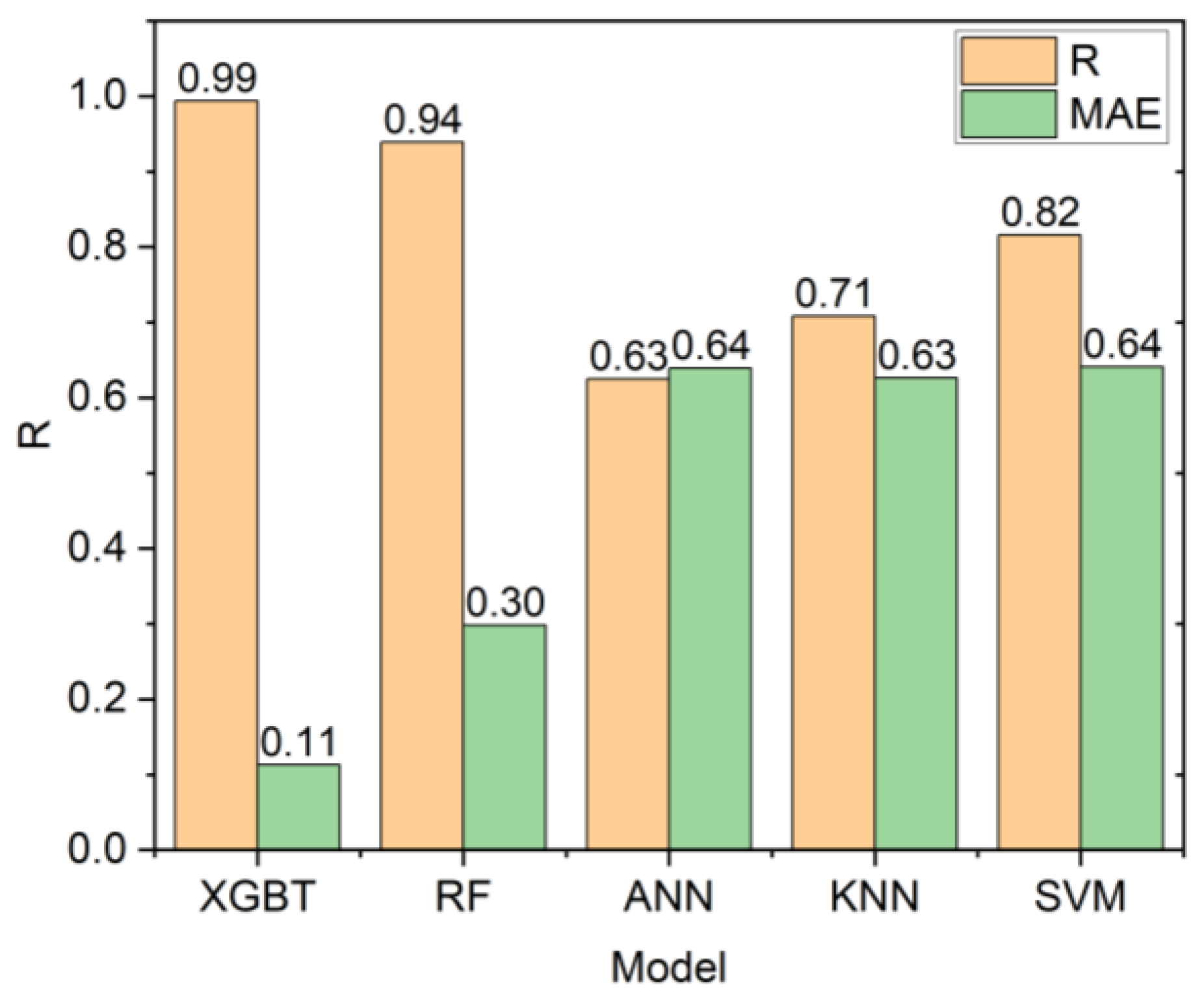

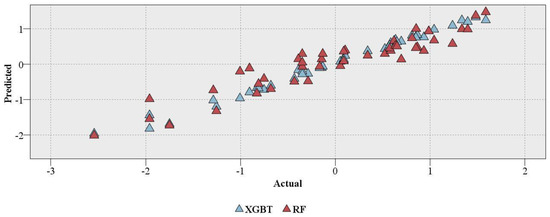

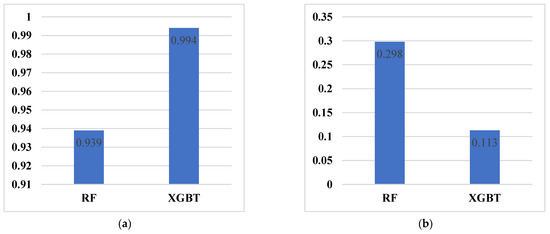

The RF and XGBT models were evaluated with the statistical criteria described above (Figure 7), with further assessment of the minimum, maximum, and mean errors (Table 3). XGBT predicted UCS more accurately than RF: the R values of the XGBT and RF models were 0.994 and 0.939, and their MAE values were 0.113 and 0.298, respectively. A plot of the actual versus predicted values of the XGBT and RF models is shown in Figure 6.

Figure 6.

Real vs. predicted values of XGBT and RF.

Table 3.

Statistical error evaluation functions of the models.

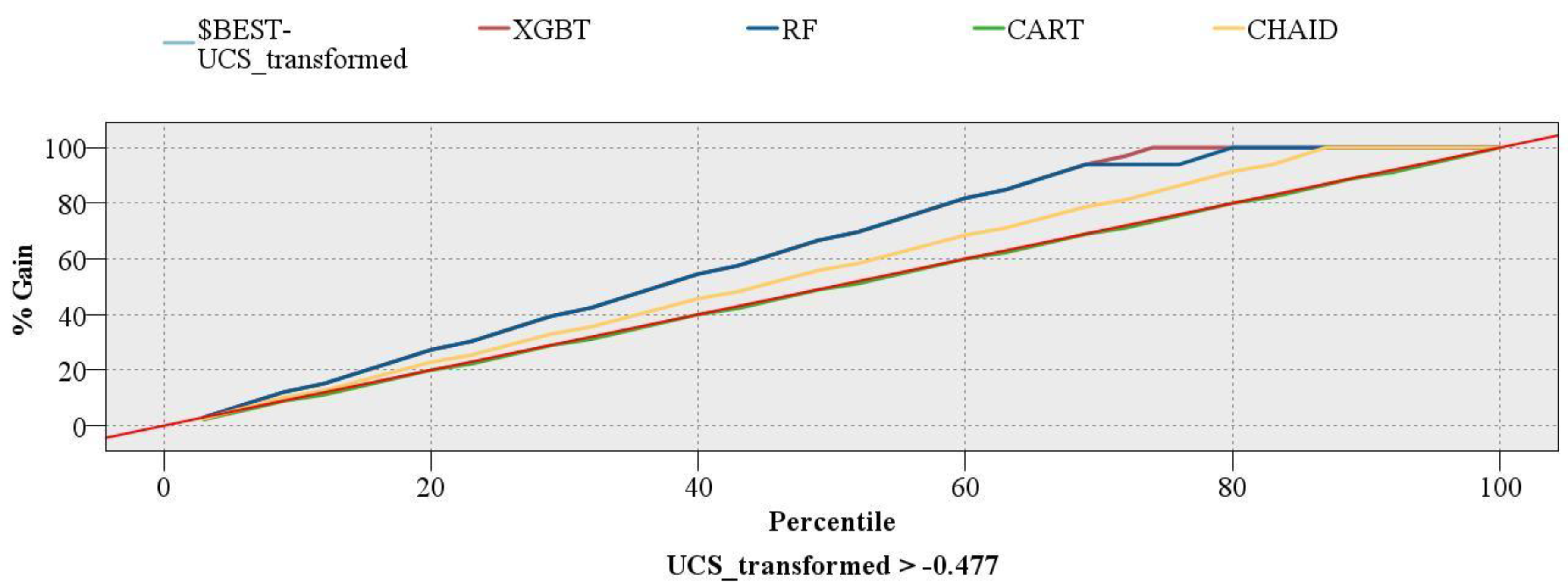

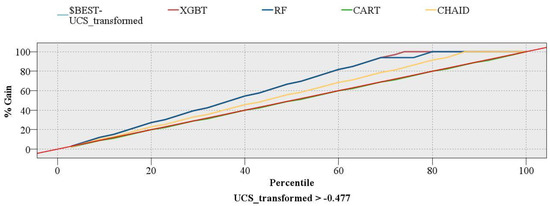

Two single DT models that can be used for regression, CART and CHAID, were also developed for comparison with XGBT and RF. The R values of the CART and CHAID models were 0 and 0.65, and their MAE values were 0.811 and 0.593, respectively (Figure 7). A gains chart provides a more in-depth comparison between these models (Figure 8). In machine learning, the gain is the proportion of all hits that occur in each quantile, and it is estimated using Equation (5). Here, “hits” measure how successfully a model predicts values greater than the median UCS (transformed UCS > −0.477).

$$\text{Gains} = \frac{a}{b} \times 100\% \qquad (5)$$

where $a$ is the number of hits in a quantile and $b$ is the total number of hits.

Figure 7.

The performance of the RF and XGBT models. (a) R; (b) MAE.

Figure 8.

Gains chart of the models developed in this study.

In Figure 8, the red diagonal line shows the baseline (random selection), and the pale blue line represents the perfect model; in general, the higher a model's curve, the better the model. The lowest curve belongs to CART, which follows the diagonal from the lower left to the upper right, meaning that it performs no better than the baseline. The chart shows that the RF and XGBT models perform better than the two single DT models. More importantly, the XGBT curve closely follows the perfect model, which shows the superiority of this model over RF and the other models.
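The gains of Equation (5) and the cumulative curve drawn in a gains chart can be sketched as follows. Ranking samples by predicted value (highest first), counting a hit as a sample whose actual value exceeds the threshold, and the number of quantiles are assumptions about how the chart was built; the function names are hypothetical. In the study, the threshold would be the transformed-UCS cut-off of −0.477.

```python
from itertools import accumulate


def gains_by_quantile(actual, predicted, threshold, n_quantiles=10):
    """Equation (5) per quantile: 100 * (hits in quantile) / (total hits).

    Samples are ranked by predicted value (highest first); a hit is a sample
    whose actual value exceeds `threshold`.
    """
    ranked = sorted(zip(predicted, actual), key=lambda pair: pair[0], reverse=True)
    hits = [1 if a > threshold else 0 for _, a in ranked]
    total_hits = sum(hits)  # b in Equation (5)
    if total_hits == 0:
        raise ValueError("no sample exceeds the threshold")
    n = len(hits)
    gains = []
    for q in range(n_quantiles):
        in_quantile = hits[q * n // n_quantiles:(q + 1) * n // n_quantiles]
        gains.append(100.0 * sum(in_quantile) / total_hits)  # a / b * 100
    return gains


def cumulative_gains(gains):
    """Running total of per-quantile gains, i.e. the curve drawn in a gains chart."""
    return list(accumulate(gains))
```

A perfect model places every hit in the top quantiles, so its cumulative curve reaches 100% as early as possible; a model that ranks samples no better than chance produces a curve close to the diagonal baseline, as CART does in Figure 8.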

There may be several reasons for the better performance of XGBT over RF. First, XGBT computes a similarity score for each candidate node, which allows the tree to be pruned directly and simplifies selecting the right node and computing its gain. Second, XGBT is generally regarded as more reliable than RF when working with imbalanced datasets. Finally, a key distinction between the two is that XGBT reduces the model's cost by optimizing in function space, adding trees that directly lower the loss, whereas RF relies more on the choice of hyperparameters to improve the model.

The XGBT and RF models were also compared with other common ML techniques, including k-nearest neighbors (KNN), ANN, and SVM. The results of this comparison are shown in Figure 9: both XGBT and RF outperformed the KNN, ANN, and SVM models.

Figure 9.

Models’ comparison.

When the accuracy of the XGBT model (R = 0.994) was compared with prior research, the ANFIS model of Armaghani, Mohamad, Momeni, and Narayanasamy [73], which used the same sample size and four inputs, was found to perform somewhat better. However, the accuracy attained in this investigation was better than that of the previous studies listed in Table 1.

7. Limitations and Future Works

Like most ML studies, this study has several limitations. First, the models were developed for a single rock type, granite. Second, the dataset contains only 45 samples. The proposed models can be expected to achieve similar accuracy only if the same input parameters are used in future applications, and even with the same inputs, predictions for values outside the range of the training data may be erroneous. Future studies with more data samples (including more petrographic tests) should be conducted to develop a more generalized ML model. Furthermore, additional rock index tests, such as point load tests, should be performed and used as model inputs to characterize rock strength behavior in greater detail and with higher accuracy.

8. Conclusions

This study aimed to predict UCS using two advanced ensemble tree-based models, RF and XGBT. These models were applied to 45 samples of rock material collected from the Main Range in Malaysia. Before these two models were developed, several simple regression equations were derived, and they proved insufficient for UCS prediction. Among the candidate inputs, Pearson’s chi-square test selected DD, Vp, Qtz, Plg, and Mica as inputs for the XGBT and RF models. The results showed that the XGBT model outperformed RF in terms of both accuracy and error, with a linear correlation of 0.994 compared with 0.939 for RF. These models were also compared with two single DTs, CART (linear correlation = 0) and CHAID (linear correlation = 0.65); as expected, both ensemble models outperformed the single DTs. Finally, the XGBT and RF models were compared with ANN, KNN, and SVM models, and again both ensemble models outperformed the ANN (linear correlation = 0.625), KNN (linear correlation = 0.708), and SVM (linear correlation = 0.816) models.

The sample size of this study was small. Future studies can apply these advanced ensemble DT techniques to larger datasets to achieve higher accuracy. Nevertheless, depending on the specific conditions of a project, the predictive methods presented here may be used to estimate UCS. Furthermore, XGBT and RF offer a practical way to reduce uncertainty during the design of rock engineering projects, which is important for their performance. These approaches may also be used to predict many other rock and mining engineering parameters, not only UCS. XGBT has been shown to be a practical, accurate, and effective algorithm: its toolkit includes both tree learning techniques and linear model solvers, and its ability to perform computations in parallel makes it fast.

Author Contributions

Conceptualization, Y.W., M.H. and A.S.A.R.; methodology, Y.W., M.H. and A.S.A.R.; formal analysis, Y.W., M.H. and A.S.A.R.; writing—original draft preparation, Y.W., M.H., A.S.A.R., D.V.U. and B.N.L.; writing—review and editing, Y.W., M.H., A.S.A.R., D.V.U. and B.N.L.; supervision, M.H. and A.S.A.R. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

The data will be available from the corresponding author upon reasonable request.

Acknowledgments

The authors appreciate the contribution of Rasha Fadhel Obaid in editing and improving the quality of some parts of this paper.

Conflicts of Interest

The authors declare no conflict of interest.

References

- Singh, R.; Kainthola, A.; Singh, T. Estimation of elastic constant of rocks using an ANFIS approach. Appl. Soft Comput. 2012, 12, 40–45.

- Monjezi, M.; Khoshalan, H.A.; Razifard, M. A neuro-genetic network for predicting uniaxial compressive strength of rocks. Geotech. Geol. Eng. 2012, 30, 1053–1062.

- Kahraman, S. The determination of uniaxial compressive strength from point load strength for pyroclastic rocks. Eng. Geol. 2014, 170, 33–42.

- Tandon, R.S.; Gupta, V. Estimation of strength characteristics of different Himalayan rocks from Schmidt hammer rebound, point load index, and compressional wave velocity. Bull. Eng. Geol. Environ. 2015, 74, 521–533.

- Azarafza, M.; Hajialilue Bonab, M.; Derakhshani, R. A Deep Learning Method for the Prediction of the Index Mechanical Properties and Strength Parameters of Marlstone. Materials 2022, 15, 6899.

- Luo, J.a.; He, J. Constitutive model and fracture failure of sandstone damage under high temperature–cyclic stress. Materials 2022, 15, 4903.

- Yilmaz, I.; Yuksek, G. Prediction of the strength and elasticity modulus of gypsum using multiple regression, ANN, and ANFIS models. Int. J. Rock Mech. Min. Sci. 2009, 46, 803–810.

- Dehghan, S.; Sattari, G.; Chelgani, S.C.; Aliabadi, M. Prediction of uniaxial compressive strength and modulus of elasticity for Travertine samples using regression and artificial neural networks. Min. Sci. Technol. 2010, 20, 41–46.

- Beiki, M.; Majdi, A.; Givshad, A.D. Application of genetic programming to predict the uniaxial compressive strength and elastic modulus of carbonate rocks. Int. J. Rock Mech. Min. Sci. 2013, 63, 159–169.

- O’Rourke, J. Rock index properties for geoengineering in underground development. Min. Eng. 1989, 41, 106–109.

- Rezaei, M.; Majdi, A.; Monjezi, M. An intelligent approach to predict unconfined compressive strength of rock surrounding access tunnels in longwall coal mining. Neural Comput. Appl. 2014, 24, 233–241.

- Moradi, M.; Basiri, S.; Kananian, A.; Kabiri, K. Fuzzy logic modeling for hydrothermal gold mineralization mapping using geochemical, geological, ASTER imageries and other geo-data, a case study in Central Alborz, Iran. Earth Sci. Inform. 2015, 8, 197–205.

- Su, C.; Xu, S.-J.; Zhu, K.-Y.; Zhang, X.-C. Rock classification in petrographic thin section images based on concatenated convolutional neural networks. Earth Sci. Inform. 2020, 13, 1477–1484.

- Saporetti, C.M.; Goliatt, L.; Pereira, E. Neural network boosted with differential evolution for lithology identification based on well logs information. Earth Sci. Inform. 2021, 14, 133–140.

- Di, Y.; Wang, E.; Li, Z.; Liu, X.; Li, B. Method for EMR and AE interference signal identification in coal rock mining based on recurrent neural networks. Earth Sci. Inform. 2021, 14, 1521–1536.

- Atici, U. Prediction of the strength of mineral admixture concrete using multivariable regression analysis and an artificial neural network. Expert Syst. Appl. 2011, 38, 9609–9618.

- Asadi, M.; Bagheripour, M.H.; Eftekhari, M. Development of optimal fuzzy models for predicting the strength of intact rocks. Comput. Geosci. 2013, 54, 107–112.

- Yesiloglu-Gultekin, N.; Gokceoglu, C.; Sezer, E.A. Prediction of uniaxial compressive strength of granitic rocks by various nonlinear tools and comparison of their performances. Int. J. Rock Mech. Min. Sci. 2013, 62, 113–122.

- Marto, A.; Hajihassani, M.; Jahed Armaghani, D.; Tonnizam Mohamad, E.; Makhtar, A.M. A novel approach for blast-induced flyrock prediction based on imperialist competitive algorithm and artificial neural network. Sci. World J. 2014, 2014, 643715.

- Momeni, E.; Nazir, R.; Armaghani, D.J.; Maizir, H. Prediction of pile bearing capacity using a hybrid genetic algorithm-based ANN. Measurement 2014, 57, 122–131.

- Chen, L.; Asteris, P.G.; Tsoukalas, M.Z.; Armaghani, D.J.; Ulrikh, D.V.; Yari, M. Forecast of Airblast Vibrations Induced by Blasting Using Support Vector Regression Optimized by the Grasshopper Optimization (SVR-GO) Technique. Appl. Sci. 2022, 12, 9805.

- Le, T.-T.; Skentou, A.D.; Mamou, A.; Asteris, P.G. Correlating the Unconfined Compressive Strength of Rock with the Compressional Wave Velocity Effective Porosity and Schmidt Hammer Rebound Number Using Artificial Neural Networks. Rock Mech. Rock Eng. 2022, 55, 6805–6840.

- Koopialipoor, M.; Asteris, P.G.; Mohammed, A.S.; Alexakis, D.E.; Mamou, A.; Armaghani, D.J. Introducing stacking machine learning approaches for the prediction of rock deformation. Transp. Geotech. 2022, 34, 100756. [Google Scholar] [CrossRef]

- Asteris, P.G.; Lourenço, P.B.; Roussis, P.C.; Adami, C.E.; Armaghani, D.J.; Cavaleri, L.; Chalioris, C.E.; Hajihassani, M.; Lemonis, M.E.; Mohammed, A.S. Revealing the nature of metakaolin-based concrete materials using artificial intelligence techniques. Constr. Build. Mater. 2022, 322, 126500. [Google Scholar] [CrossRef]

- Kardani, N.; Bardhan, A.; Samui, P.; Nazem, M.; Asteris, P.G.; Zhou, A. Predicting the thermal conductivity of soils using integrated approach of ANN and PSO with adaptive and time-varying acceleration coefficients. Int. J. Therm. Sci. 2022, 173, 107427. [Google Scholar] [CrossRef]

- Hasanipanah, M.; Monjezi, M.; Shahnazar, A.; Armaghani, D.J.; Farazmand, A. Feasibility of indirect determination of blast induced ground vibration based on support vector machine. Measurement 2015, 75, 289–297. [Google Scholar] [CrossRef]

- Zhu, W.; Rad, H.N.; Hasanipanah, M. A chaos recurrent ANFIS optimized by PSO to predict ground vibration generated in rock blasting. Appl. Soft Comput. 2021, 108, 107434. [Google Scholar] [CrossRef]

- Asteris, P.G.; Mamou, A.; Hajihassani, M.; Hasanipanah, M.; Koopialipoor, M.; Le, T.-T.; Kardani, N.; Armaghani, D.J. Soft computing based closed form equations correlating L and N-type Schmidt hammer rebound numbers of rocks. Transp. Geotech. 2021, 29, 100588. [Google Scholar] [CrossRef]

- Fattahi, H.; Hasanipanah, M. Prediction of blast-induced ground vibration in a mine using relevance vector regression optimized by metaheuristic algorithms. Nat. Resour. Res. 2021, 30, 1849–1863. [Google Scholar] [CrossRef]

- Zeng, F.; Amar, M.N.; Mohammed, A.S.; Motahari, M.R.; Hasanipanah, M. Improving the performance of LSSVM model in predicting the safety factor for circular failure slope through optimization algorithms. Eng. Comput. 2021, 38, 1755–1766.

- Aghaabbasi, M.; Shekari, Z.A.; Shah, M.Z.; Olakunle, O.; Armaghani, D.J.; Moeinaddini, M. Predicting the use frequency of ride-sourcing by off-campus university students through random forest and Bayesian network techniques. Transp. Res. Part A Policy Pract. 2020, 136, 262–281.

- Yu, Z.; Shi, X.; Miao, X.; Zhou, J.; Khandelwal, M.; Chen, X.; Qiu, Y. Intelligent modeling of blast-induced rock movement prediction using dimensional analysis and optimized artificial neural network technique. Int. J. Rock Mech. Min. Sci. 2021, 143, 104794.

- Ke, B.; Khandelwal, M.; Asteris, P.G.; Skentou, A.D.; Mamou, A.; Armaghani, D.J. Rock-Burst Occurrence Prediction Based on Optimized Naïve Bayes Models. IEEE Access 2021, 9, 91347–91360.

- Qiu, Y.; Zhou, J.; Khandelwal, M.; Yang, H.; Yang, P.; Li, C. Performance evaluation of hybrid WOA-XGBoost, GWO-XGBoost and BO-XGBoost models to predict blast-induced ground vibration. Eng. Comput. 2021, 38, 4145–4162.

- He, Z.; Armaghani, D.J.; Masoumnezhad, M.; Khandelwal, M.; Zhou, J.; Murlidhar, B.R. A Combination of Expert-Based System and Advanced Decision-Tree Algorithms to Predict Air-Overpressure Resulting from Quarry Blasting. Nat. Resour. Res. 2021, 30, 1889–1903.

- Bayat, P.; Monjezi, M.; Mehrdanesh, A.; Khandelwal, M. Blasting pattern optimization using gene expression programming and grasshopper optimization algorithm to minimise blast-induced ground vibrations. Eng. Comput. 2021, 38, 3341–3350.

- Bayat, P.; Monjezi, M.; Rezakhah, M.; Armaghani, D.J. Artificial neural network and firefly algorithm for estimation and minimization of ground vibration induced by blasting in a mine. Nat. Resour. Res. 2020, 29, 4121–4132.

- Zhou, J.; Li, X.; Mitri, H.S. Comparative performance of six supervised learning methods for the development of models of hard rock pillar stability prediction. Nat. Hazards 2015, 79, 291–316.

- Le, T.-T.; Asteris, P.G.; Lemonis, M.E. Prediction of axial load capacity of rectangular concrete-filled steel tube columns using machine learning techniques. Eng. Comput. 2021, 38, 3283–3316.

- Harandizadeh, H.; Armaghani, D.J.; Asteris, P.G.; Gandomi, A.H. TBM performance prediction developing a hybrid ANFIS-PNN predictive model optimized by imperialism competitive algorithm. Neural Comput. Appl. 2021, 33, 16149–16179.

- Gavriilaki, E.; Asteris, P.G.; Touloumenidou, T.; Koravou, E.-E.; Koutra, M.; Papayanni, P.G.; Karali, V.; Papalexandri, A.; Varelas, C.; Chatzopoulou, F. Genetic justification of severe COVID-19 using a rigorous algorithm. Clin. Immunol. 2021, 226, 108726.

- Shan, F.; He, X.; Armaghani, D.J.; Zhang, P.; Sheng, D. Success and challenges in predicting TBM penetration rate using recurrent neural networks. Tunn. Undergr. Space Technol. 2022, 130, 104728.

- Indraratna, B.; Armaghani, D.J.; Correia, A.G.; Hunt, H.; Ngo, T. Prediction of resilient modulus of ballast under cyclic loading using machine learning techniques. Transp. Geotech. 2023, 38, 100895.

- Tan, W.Y.; Lai, S.H.; Teo, F.Y.; Armaghani, D.J.; Pavitra, K.; El-Shafie, A. Three Steps towards Better Forecasting for Streamflow Deep Learning. Appl. Sci. 2022, 12, 12567.

- Ghanizadeh, A.R.; Ghanizadeh, A.; Asteris, P.G.; Fakharian, P.; Armaghani, D.J. Developing Bearing Capacity Model for Geogrid-Reinforced Stone Columns Improved Soft Clay utilizing MARS-EBS Hybrid Method. Transp. Geotech. 2022, 38, 100906.

- Skentou, A.D.; Bardhan, A.; Mamou, A.; Lemonis, M.E.; Kumar, G.; Samui, P.; Armaghani, D.J.; Asteris, P.G. Closed-Form Equation for Estimating Unconfined Compressive Strength of Granite from Three Non-destructive Tests Using Soft Computing Models. Rock Mech. Rock Eng. 2022, 56, 487–514.

- Ghanizadeh, A.R.; Delaram, A.; Fakharian, P.; Armaghani, D.J. Developing Predictive Models of Collapse Settlement and Coefficient of Stress Release of Sandy-Gravel Soil via Evolutionary Polynomial Regression. Appl. Sci. 2022, 12, 9986.

- Li, C.; Zhou, J.; Tao, M.; Du, K.; Wang, S.; Armaghani, D.J.; Mohamad, E.T. Developing hybrid ELM-ALO, ELM-LSO and ELM-SOA models for predicting advance rate of TBM. Transp. Geotech. 2022, 36, 100819.

- Parsajoo, M.; Armaghani, D.J.; Mohammed, A.S.; Khari, M.; Jahandari, S. Tensile strength prediction of rock material using non-destructive tests: A comparative intelligent study. Transp. Geotech. 2021, 31, 100652.

- Barkhordari, M.S.; Armaghani, D.J.; Mohammed, A.S.; Ulrikh, D.V. Data-Driven Compressive Strength Prediction of Fly Ash Concrete Using Ensemble Learner Algorithms. Buildings 2022, 12, 132.

- Liu, Z.; Armaghani, D.J.; Fakharian, P.; Li, D.; Ulrikh, D.V.; Orekhova, N.N.; Khedher, K.M. Rock Strength Estimation Using Several Tree-Based ML Techniques. CMES-Comp. Model. Eng. Sci. 2022, 133, 799–824.

- Pham, B.T.; Nguyen, M.D.; Nguyen-Thoi, T.; Ho, L.S.; Koopialipoor, M.; Quoc, N.K.; Armaghani, D.J.; Van Le, H. A novel approach for classification of soils based on laboratory tests using Adaboost, Tree and ANN modeling. Transp. Geotech. 2021, 27, 100508.

- Asteris, P.G.; Mamou, A.; Ferentinou, M.; Tran, T.-T.; Zhou, J. Predicting clay compressibility using a novel Manta ray foraging optimization-based extreme learning machine model. Transp. Geotech. 2022, 37, 100861.

- Rezazadeh Eidgahee, D.; Jahangir, H.; Solatifar, N.; Fakharian, P.; Rezaeemanesh, M. Data-driven estimation models of asphalt mixtures dynamic modulus using ANN, GP and combinatorial GMDH approaches. Neural Comput. Appl. 2022, 34, 17289–17314.

- Naderpour, H.; Rafiean, A.H.; Fakharian, P. Compressive strength prediction of environmentally friendly concrete using artificial neural networks. J. Build. Eng. 2018, 16, 213–219.

- Yang, H.; Song, K.; Zhou, J. Automated Recognition Model of Geomechanical Information Based on Operational Data of Tunneling Boring Machines. Rock Mech. Rock Eng. 2022, 55, 1499–1516.

- Yang, H.; Wang, Z.; Song, K. A new hybrid grey wolf optimizer-feature weighted-multiple kernel-support vector regression technique to predict TBM performance. Eng. Comput. 2020, 38, 2469–2485.

- Ikram, R.M.A.; Dai, H.-L.; Ewees, A.A.; Shiri, J.; Kisi, O.; Zounemat-Kermani, M. Application of improved version of multi verse optimizer algorithm for modeling solar radiation. Energy Rep. 2022, 8, 12063–12080.

- Ikram, R.M.A.; Ewees, A.A.; Parmar, K.S.; Yaseen, Z.M.; Shahid, S.; Kisi, O. The viability of extended marine predators algorithm-based artificial neural networks for streamflow prediction. Appl. Soft Comput. 2022, 131, 109739.

- Nguyen, H.; Bui, X.-N.; Bui, H.-B.; Cuong, D.T. Developing an XGBoost model to predict blast-induced peak particle velocity in an open-pit mine: A case study. Acta Geophys. 2019, 67, 477–490.

- Ahmad, I.; Basheri, M.; Iqbal, M.J.; Rahim, A. Performance comparison of support vector machine, random forest, and extreme learning machine for intrusion detection. IEEE Access 2018, 6, 33789–33795.

- Meulenkamp, F.; Grima, M.A. Application of neural networks for the prediction of the unconfined compressive strength (UCS) from Equotip hardness. Int. J. Rock Mech. Min. Sci. 1999, 36, 29–39.

- Sonmez, H.; Tuncay, E.; Gokceoglu, C. Models to predict the uniaxial compressive strength and the modulus of elasticity for Ankara Agglomerate. Int. J. Rock Mech. Min. Sci. 2004, 41, 717–729.

- Gokceoglu, C.; Zorlu, K. A fuzzy model to predict the uniaxial compressive strength and the modulus of elasticity of a problematic rock. Eng. Appl. Artif. Intell. 2004, 17, 61–72.

- Mishra, D.; Basu, A. Estimation of uniaxial compressive strength of rock materials by index tests using regression analysis and fuzzy inference system. Eng. Geol. 2013, 160, 54–68.

- Cevik, A.; Sezer, E.A.; Cabalar, A.F.; Gokceoglu, C. Modeling of the uniaxial compressive strength of some clay-bearing rocks using neural network. Appl. Soft Comput. 2011, 11, 2587–2594.

- Singh, V.; Singh, D.; Singh, T. Prediction of strength properties of some schistose rocks from petrographic properties using artificial neural networks. Int. J. Rock Mech. Min. Sci. 2001, 38, 269–284.

- Gokceoglu, C. A fuzzy triangular chart to predict the uniaxial compressive strength of the Ankara agglomerates from their petrographic composition. Eng. Geol. 2002, 66, 39–51.

- Zorlu, K.; Gokceoglu, C.; Ocakoglu, F.; Nefeslioglu, H.; Acikalin, S. Prediction of uniaxial compressive strength of sandstones using petrography-based models. Eng. Geol. 2008, 96, 141–158.

- Yagiz, S.; Sezer, E.; Gokceoglu, C. Artificial neural networks and nonlinear regression techniques to assess the influence of slake durability cycles on the prediction of uniaxial compressive strength and modulus of elasticity for carbonate rocks. Int. J. Numer. Anal. Methods Geomech. 2012, 36, 1636–1650.

- Ceryan, N.; Okkan, U.; Kesimal, A. Prediction of unconfined compressive strength of carbonate rocks using artificial neural networks. Environ. Earth Sci. 2013, 68, 807–819.

- Rabbani, E.; Sharif, F.; Koolivand Salooki, M.; Moradzadeh, A. Application of neural network technique for prediction of uniaxial compressive strength using reservoir formation properties. Int. J. Rock Mech. Min. Sci. 2012, 56, 100–111.

- Armaghani, D.J.; Mohamad, E.T.; Momeni, E.; Narayanasamy, M.S. An adaptive neuro-fuzzy inference system for predicting unconfined compressive strength and Young’s modulus: A study on Main Range granite. Bull. Eng. Geol. Environ. 2015, 74, 1301–1319.

- Ulusay, R.; Hudson, J.A. ISRM (2007) The Complete ISRM Suggested Methods for Rock Characterization, Testing and Monitoring: 1974–2006; Comm. Test Methods Int. Soc. Rock Mech.; ISRM Turkish Natl Group: Ankara, Turkey, 2007; p. 628.

- Breiman, L. Random forests. Mach. Learn. 2001, 45, 5–32.

- Chen, T.; Guestrin, C. XGBoost: A scalable tree boosting system. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, San Francisco, CA, USA, 13–17 August 2016; pp. 785–794.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).