1. Introduction

High-reliability, long-life materials have received increasing attention and are widely used in the fields of nuclear power, aerospace, and electronic communications. Deterioration is a serious concern because it drastically reduces the service life and reliability of these materials. Because the deterioration process is uncertain and time-varying, it is complex and difficult to predict.

In recent years, the deterioration of materials has been studied extensively [1]. There are generally two types of deterioration: gradual (progressive) deterioration and shock (sudden) deterioration. Gradual deterioration mainly refers to the deterioration of system parameters and performance under storage and routine use [2,3,4]. Since this deterioration continues over time, it is usually represented by a random process. Shock deterioration usually occurs in extreme cases (e.g., earthquakes, blasts and other sudden hazards) [5,6], and a point process is usually used to model it. In general, the two kinds of deterioration appear simultaneously in most engineering systems. Several methods have been presented for modeling deterioration [7,8]. Since deterioration may directly affect the reliability of assessment results and the accuracy of life prediction, deterioration models play an important role in the analysis and design of systems, especially in reliability analysis [9,10], residual service life prediction [11] and life-cycle analysis [12,13].
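As an illustration of how the two kinds of deterioration can be combined in one path, the following is a minimal sketch rather than a model taken from the cited works. It assumes a stationary gamma process for the gradual part and a compound Poisson process with exponentially distributed shock sizes for the shock part; the function name and all parameters are hypothetical.

```python
import random


def simulate_deterioration(t_end, dt, shape_rate, scale,
                           shock_rate, shock_mean, seed=0):
    """Return (times, levels) for one cumulative deterioration path.

    Gradual part: stationary gamma process (assumed choice of random process).
    Shock part: compound Poisson process with exponential shock sizes
    (assumed choice of point process).
    """
    rng = random.Random(seed)
    n_steps = int(round(t_end / dt))
    times, levels = [0.0], [0.0]
    next_shock = rng.expovariate(shock_rate)  # arrival time of the first shock
    level = 0.0
    for k in range(1, n_steps + 1):
        t = k * dt
        # Gradual increment over dt: Gamma(shape = shape_rate * dt, scale)
        level += rng.gammavariate(shape_rate * dt, scale)
        # Add every shock that arrived up to time t
        while next_shock <= t:
            level += rng.expovariate(1.0 / shock_mean)
            next_shock += rng.expovariate(shock_rate)
        times.append(t)
        levels.append(level)
    return times, levels


times, levels = simulate_deterioration(
    t_end=10.0, dt=0.1, shape_rate=2.0, scale=0.5,
    shock_rate=0.3, shock_mean=1.0)
```

Both increments are non-negative, so the simulated deterioration level never decreases, which matches the usual assumption of irreversible damage.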

Because deterioration is so important, engineers focus on the accuracy of deterioration models. Generally speaking, a simulation model must be validated against experimental data [14,15], and a validated deterioration model can be regarded as a credible representation of the actual deterioration phenomenon. Moreover, information gained from validation also aids in simulating the deterioration process and refining the model. Therefore, a validation approach is necessary to measure the difference between the simulated deterioration model and the experimental observations, which is caused by uncertainty and model simplification. Four validation methods are prevalent in the existing literature [16,17,18,19]: classical hypothesis testing, the Bayes factor, the frequentist’s metric and the area metric. Classical hypothesis testing judges the correctness of the model by identifying statements for which there is compelling evidence of truth. The Bayes factor method also addresses the correctness of the model [18]. Neither classical hypothesis testing nor the Bayes factor can quantify the difference between the simulation model and the experimental observations [17]. The frequentist’s metric uses the means of the simulation results and of the experimental observations to quantitatively evaluate the difference between the simulation model and the actual model. The area metric [19] is obtained by calculating the area between the cumulative distribution function (CDF) based on the simulation model and the CDF based on experimental data; the size of this area represents the difference between the simulation model and the actual model. There is a considerable amount of literature on the validation of time-dependent models. Yang et al. [20] proposed a validation metric for degradation models with dynamic performance, but it cannot evaluate the accuracy of the degradation model quantitatively. To quantitatively evaluate dynamic models, Zhan et al. [21] developed a Bayesian dynamic model validation method using probabilistic principal component analysis. Xi et al. [22] proposed a validation metric using U-pooling techniques for general dynamic system responses. Wang et al. [23] presented an area metric based on the Karhunen–Loève expansion for validating dynamic models. Atkinson et al. [24] proposed a dynamic model validation metric based on wavelet threshold signals, which addresses the problem that experimental data are often polluted by noise. Lee et al. [25] give a detailed introduction to the above methods. In these methods, dimensionality reduction is mainly achieved by constructing a decomposition (e.g., principal component analysis) that represents the entire random process. The validation metric is then obtained by comparing the data of the simulation model with those of the experimental observations.
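To make the CDF-based area metric described above concrete, the sketch below computes the area between two empirical CDFs at a single observation moment. It is a minimal illustration under stated assumptions, not the implementation of any cited method: the function names are hypothetical, both CDFs are taken as empirical step functions, and `mean_difference` is only a simplified stand-in for a mean-based comparison.

```python
from bisect import bisect_right


def ecdf(sorted_sample, x):
    """Empirical CDF of a sorted sample evaluated at x."""
    return bisect_right(sorted_sample, x) / len(sorted_sample)


def area_metric(sim, exp):
    """Area between the empirical CDFs of simulated and experimental data."""
    sim_s, exp_s = sorted(sim), sorted(exp)
    grid = sorted(set(sim_s) | set(exp_s))
    area = 0.0
    for left, right in zip(grid, grid[1:]):
        # Both step functions are constant on [left, right)
        gap = abs(ecdf(sim_s, left) - ecdf(exp_s, left))
        area += gap * (right - left)
    return area


def mean_difference(sim, exp):
    """Absolute difference of sample means (simplified mean-based comparison)."""
    return abs(sum(sim) / len(sim) - sum(exp) / len(exp))


simulated = [1.0, 2.0, 3.0]
observed = [2.0, 3.0, 4.0]
d_area = area_metric(simulated, observed)
d_mean = mean_difference(simulated, observed)
```

The area carries the units of the measured quantity, so its magnitude depends on the scale of the data.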

In the above studies on model validation, the credibility of validation metrics is significantly reduced when there are too few experimental samples. However, in engineering, obtaining many experimental samples is expensive and time-consuming. This means that the credibility and cost of validation experiments are often conflicting goals. Hence, designing the validation experiment is a key step in the validation of deterioration models. Design of experiments (DoE) [26,27] has been used to improve experimental performance in engineering and has been studied extensively. DoE methods are classified into two categories: classical DoE (such as central composite design, full and fractional factorial design, Box–Behnken design, orthogonal array experiments and optimal design) and modern DoE (such as quasi-random design, random design, projection-based design, miscellaneous design, uniform design and hybrid design). Different from traditional experiments, a validation experiment is a new type of experiment that aims to determine the prediction accuracy and reliability of the simulation model used to describe the actual model. A well-designed validation experiment can maximize the information gained from experiments, increase their credibility, and reduce their cost. There have been some studies on experimental design in the past decades. For example, Huan and Marzouk [28] developed an experimental design method for model calibration by using information-theoretic metrics and gradient-based stochastic optimization techniques. Jiang and Mahadevan [29] proposed a validation experiment design method for computer simulation models based on Bayesian cross-entropy. The above experimental design methods are aimed at static models and are difficult to apply to the validation of time-dependent prediction models. Ao et al. [30] proposed a validation experiment design optimization method for a life prediction model to obtain the optimal stress levels and the number of experiments at each stress level. However, for the validation experiment of deterioration models, the number of experimental samples and the observation moments of the samples are crucial factors in experimental design. Moreover, as an important evaluation index for validation experiments, the credibility of experiments should not be ignored. Hence, how to design the validation experiment of deterioration models under a given credibility requirement is an open problem.

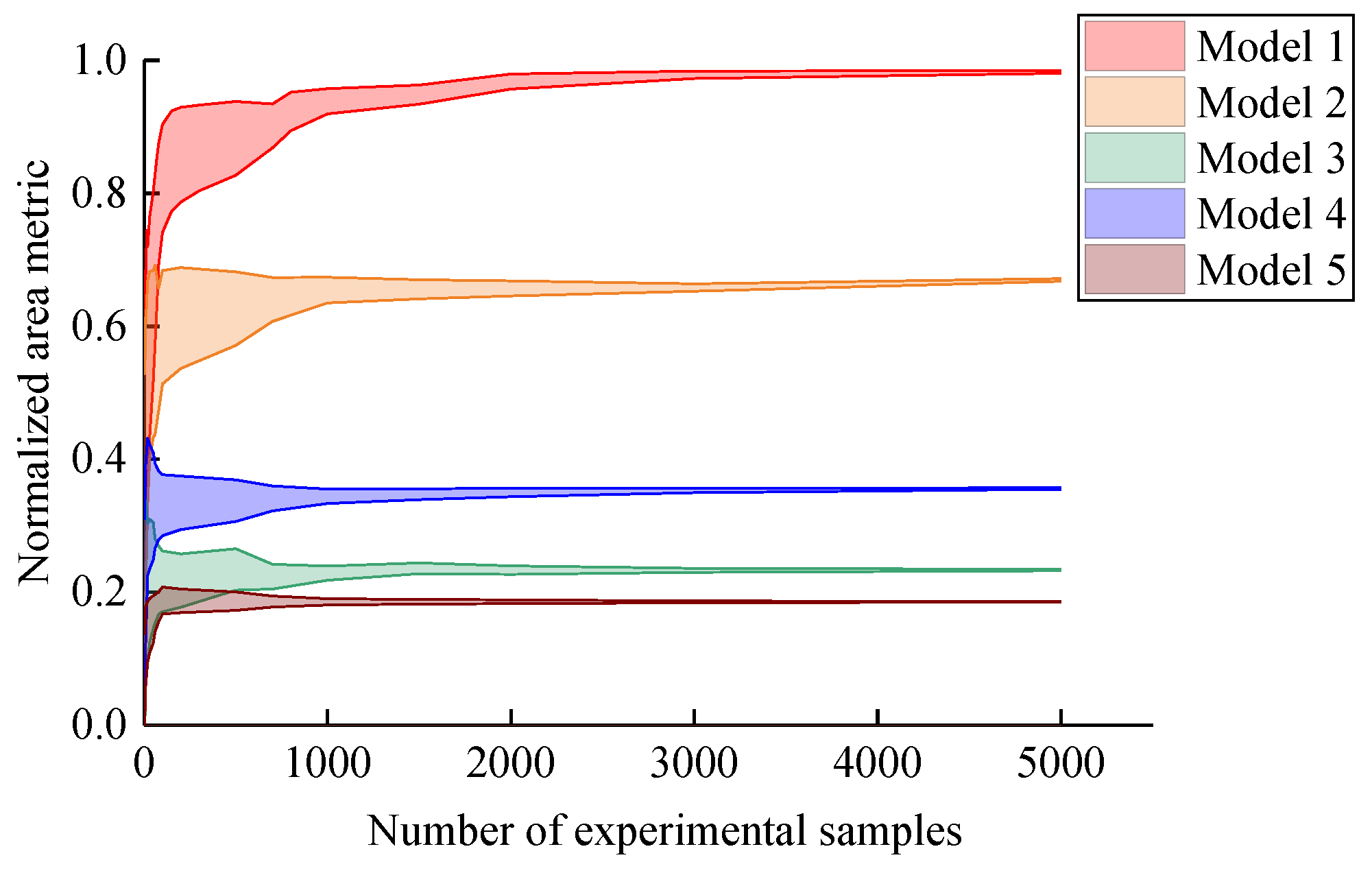

This paper mainly included two main contributions: (i) A normalized area metric for deterioration models is proposed. Different from the traditional area metric, the metric is based on the probability density functions (PDFs), which is dimensionless and intuitive. In particular, kernel density estimation (KDE) is used to obtain a smooth PDF from discrete experimental data. (ii) An optimization method of the validation experiment for the deterioration model is proposed. The experimental design fully considers design variables, including the number of experimental samples, the number of observation and observation moments. The credibility of the validation experiment is constraint, and the total cost is optimization objective. This paper is structured as follows: In

Section 2, the validation metric of the deterioration model is described. In

Section 3, the validation experiment design of the deterioration model is developed. Finally, in

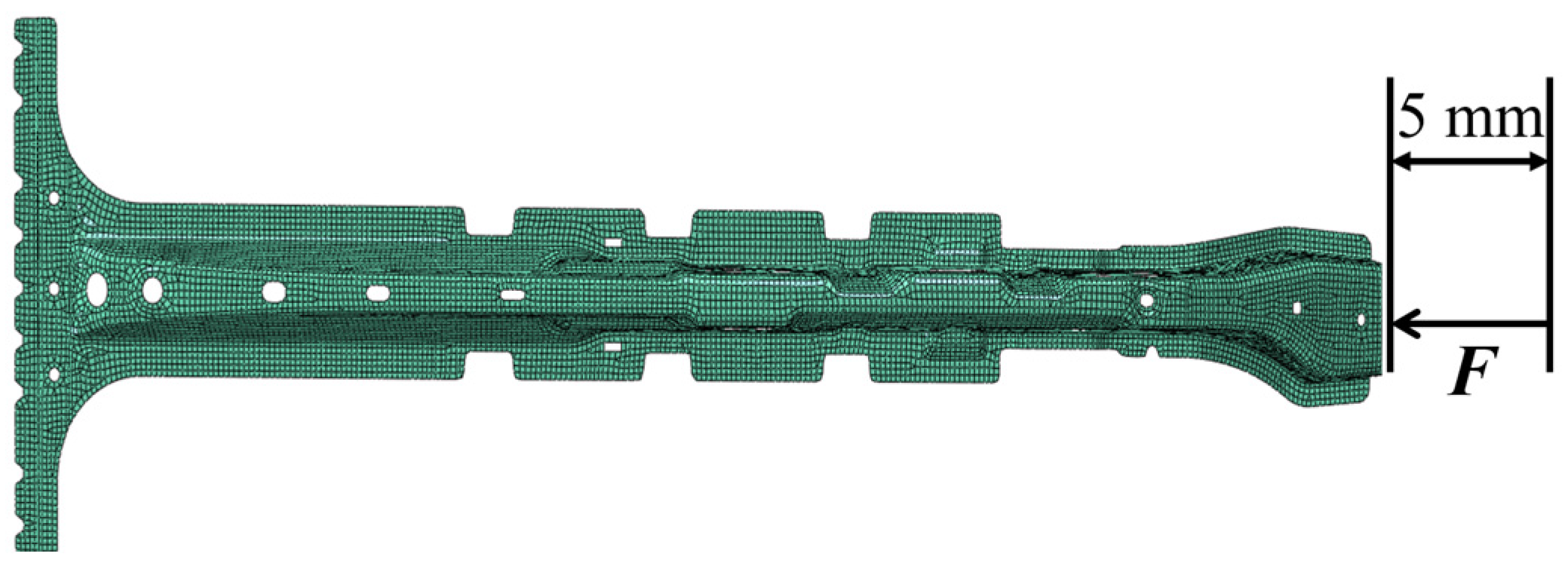

Section 4, two examples of deterioration models are used to prove the correctness and validity of the experiment design method.
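The idea of smoothing discrete data with KDE and comparing PDFs can be sketched as follows. This is only an illustration under stated assumptions and should not be read as the metric defined in Section 2: the Gaussian kernel, the normal-reference bandwidth rule, and the normalization (half the integrated absolute difference of two PDFs, which lies between 0 and 1) are all assumed choices, and the function names are hypothetical.

```python
import math
import statistics


def gaussian_kde(sample, bandwidth=None):
    """Return a smooth PDF estimated from a sample with a Gaussian kernel."""
    n = len(sample)
    if bandwidth is None:
        # Normal-reference rule of thumb (assumed bandwidth choice)
        bandwidth = 1.06 * statistics.stdev(sample) * n ** (-0.2)
    norm = 1.0 / (n * bandwidth * math.sqrt(2.0 * math.pi))

    def pdf(x):
        return norm * sum(
            math.exp(-0.5 * ((x - xi) / bandwidth) ** 2) for xi in sample)

    return pdf


def pdf_area_difference(pdf_a, pdf_b, lo, hi, n_grid=2000):
    """Half the area between two PDFs on [lo, hi], by the trapezoidal rule.

    The result is dimensionless: 0 for identical PDFs and close to 1 for
    PDFs that do not overlap (assumed normalization).
    """
    h = (hi - lo) / n_grid
    vals = [abs(pdf_a(lo + i * h) - pdf_b(lo + i * h))
            for i in range(n_grid + 1)]
    return 0.5 * h * (sum(vals) - 0.5 * (vals[0] + vals[-1]))


sim_pdf = gaussian_kde([0.0, 1.0, 2.0])
exp_pdf = gaussian_kde([50.0, 51.0, 52.0])
d = pdf_area_difference(sim_pdf, exp_pdf, lo=-10.0, hi=62.0, n_grid=4000)
```

Because each PDF integrates to one, this difference does not depend on the units of the measured quantity, which is the property that makes a PDF-based comparison dimensionless.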