Optimization Design of Laser Arrays Based on Absorption Spectroscopy Imaging for Detecting Temperature and Concentration Fields

Abstract

1. Introduction

2. Methods

2.1. Linear Tomography Flame Imaging Measurement Model

2.2. Optical Path Optimization Model

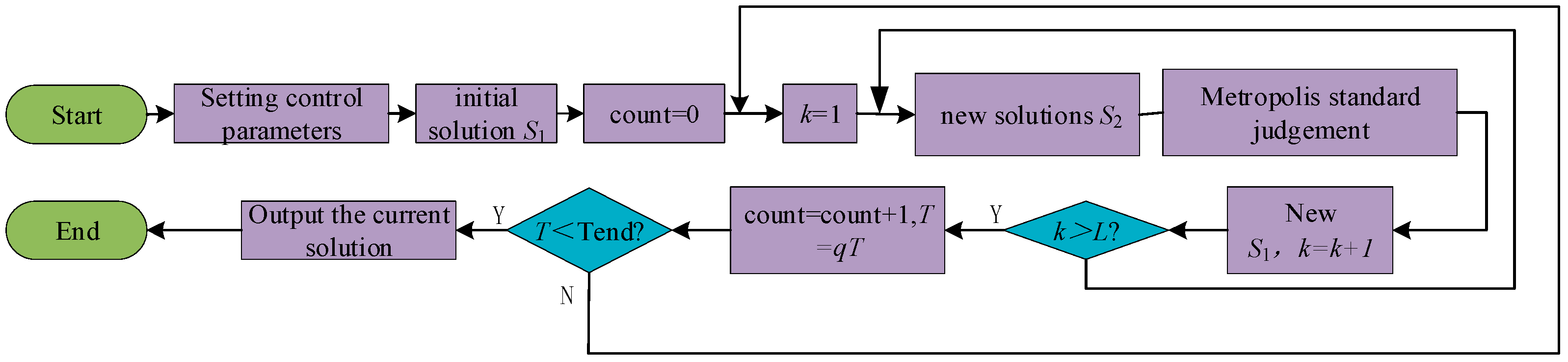

2.2.1. Simulated Annealing Algorithm

- (1) Heating step: This phase intensifies the thermal motion of the particles and drives the system away from equilibrium. At a sufficiently high temperature, the solid melts into a liquid, which eliminates the inhomogeneities of the original system.

- (2) Isothermal step: At a constant temperature, the state of the system evolves toward lower free energy and reaches equilibrium when the free energy is at its minimum.

- (3) Cooling step: This phase slows the thermal motion of the particles, lowering the energy of the system and yielding a crystalline structure.
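The three steps above map onto a short loop: start hot, sample a chain of candidates at each temperature, then cool. The following is a minimal sketch rather than the authors' implementation. It assumes geometric cooling and the Metropolis acceptance rule, and its default parameters follow the simulated annealing parameter table (initial temperature 100, closing temperature 1, cooling rate 0.9, chain length 10). The `sphere` objective, the `step` size, and all function names are hypothetical stand-ins; in the paper's setting the objective would be the reconstruction error of a candidate optical path arrangement.

```python
import math
import random


def simulated_annealing(objective, x0, t_initial=100.0, t_final=1.0,
                        cooling_rate=0.9, chain_length=10, step=0.1, seed=0):
    """Minimize `objective` starting from the list of coordinates `x0`."""
    rng = random.Random(seed)
    current = list(x0)
    current_val = objective(current)
    best, best_val = list(current), current_val

    temperature = t_initial                # heating step: start from a high temperature
    while temperature > t_final:
        for _ in range(chain_length):      # isothermal step: sample at fixed temperature
            # Perturb every coordinate by a small uniform random amount (assumed move).
            candidate = [xi + rng.uniform(-step, step) for xi in current]
            candidate_val = objective(candidate)
            delta = candidate_val - current_val
            # Metropolis rule: always accept improvements, sometimes accept worse moves.
            if delta <= 0 or rng.random() < math.exp(-delta / temperature):
                current, current_val = candidate, candidate_val
                if current_val < best_val:
                    best, best_val = list(current), current_val
        temperature *= cooling_rate        # cooling step: geometric temperature decay
    return best, best_val


def sphere(x):
    """Hypothetical stand-in objective (sum of squares)."""
    return sum(xi * xi for xi in x)


best, best_val = simulated_annealing(sphere, [2.0, -1.5])
print(best, best_val)
```

Because worse candidates are accepted with probability exp(-delta / T), the search can leave local minima early on and becomes more selective as the temperature falls.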

2.2.2. Harris’s Hawk Optimization Algorithm

- (1) Exploration phase

- (2) Transition from exploration to exploitation

- (3) Exploitation phase

- (1) Initialization: Define the initial parameters, such as the population size, the number of iterations, and the objective function. Randomly generate the variables and calculate the objective value of the initial solution. Then iterate the algorithm, generating new random variables to update the global optimal solution and storing the data from each iteration for subsequent comparison.

- (2) Exploration: Update the random light path coordinate positions according to the algorithm's rules and perturb these coordinates according to the scale of the computation.

- (3) Exploitation: Perform hard and soft sieges sequentially, updating the coordinate positions and target values in each iteration according to the computational formulas.

- (4) Progressive fast swooping siege strategy: Update the target value and the corresponding coordinates according to the formula. At the end of each iteration, update the global best solution and record the best solution of each generation. Repeat for the specified number of iterations to obtain the optimal optical path and the highest reconstruction accuracy.
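The four steps above can be sketched in a compact form. This is a simplified illustration based on the standard Harris hawks update rules (Heidari et al., 2019), not the authors' code. The `sphere` objective, the bounds, the population size, and every function name here are hypothetical stand-ins; in the paper's setting each hawk would encode optical path coordinates and the objective would be the reconstruction error.

```python
import math
import random


def levy_step(dim, rng, beta=1.5):
    """Levy-flight step used by the progressive rapid dives."""
    sigma = (math.gamma(1 + beta) * math.sin(math.pi * beta / 2)
             / (math.gamma((1 + beta) / 2) * beta * 2 ** ((beta - 1) / 2))) ** (1 / beta)
    return [0.01 * rng.gauss(0, sigma) / abs(rng.gauss(0, 1)) ** (1 / beta)
            for _ in range(dim)]


def hho(objective, lower, upper, dim, n_hawks=20, n_iter=100, seed=0):
    rng = random.Random(seed)

    def clip(x):
        return [min(max(xi, lower), upper) for xi in x]

    # (1) Initialization: random population, best solution ("rabbit") unknown.
    hawks = [[rng.uniform(lower, upper) for _ in range(dim)] for _ in range(n_hawks)]
    rabbit, rabbit_val, history = None, float("inf"), []

    def update_best():
        nonlocal rabbit, rabbit_val
        for h in hawks:
            val = objective(h)
            if val < rabbit_val:
                rabbit, rabbit_val = list(h), val

    for t in range(n_iter):
        update_best()
        mean = [sum(h[d] for h in hawks) / n_hawks for d in range(dim)]
        for i, x in enumerate(hawks):
            energy = 2 * (2 * rng.random() - 1) * (1 - t / n_iter)  # escaping energy
            if abs(energy) >= 1:
                # (2) Exploration: perch relative to a random hawk or to the rabbit.
                if rng.random() >= 0.5:
                    other = hawks[rng.randrange(n_hawks)]
                    r1, r2 = rng.random(), rng.random()
                    new = [other[d] - r1 * abs(other[d] - 2 * r2 * x[d]) for d in range(dim)]
                else:
                    r3, r4 = rng.random(), rng.random()
                    new = [(rabbit[d] - mean[d]) - r3 * (lower + r4 * (upper - lower))
                           for d in range(dim)]
            else:
                jump = 2 * (1 - rng.random())
                soft = abs(energy) >= 0.5
                if rng.random() >= 0.5:
                    # (3) Exploitation: soft or hard besiege.
                    if soft:
                        new = [(rabbit[d] - x[d]) - energy * abs(jump * rabbit[d] - x[d])
                               for d in range(dim)]
                    else:
                        new = [rabbit[d] - energy * abs(rabbit[d] - x[d]) for d in range(dim)]
                else:
                    # (4) Progressive rapid dives: keep a dive only if it improves.
                    ref = x if soft else mean
                    y = clip([rabbit[d] - energy * abs(jump * rabbit[d] - ref[d])
                              for d in range(dim)])
                    levy = levy_step(dim, rng)
                    z = clip([y[d] + rng.random() * levy[d] for d in range(dim)])
                    current_val, new = objective(x), x
                    if objective(y) < current_val:
                        new = y
                    elif objective(z) < current_val:
                        new = z
            hawks[i] = clip(new)
        history.append(rabbit_val)  # best value known at each generation
    update_best()                   # account for the final positions
    return rabbit, rabbit_val, history


def sphere(x):
    """Hypothetical stand-in objective (sum of squares)."""
    return sum(xi * xi for xi in x)


best, best_val, history = hho(sphere, lower=-5.0, upper=5.0, dim=4)
print(best_val)
```

The escaping energy shrinks linearly with the iteration count, so early iterations favor exploration and later ones favor the siege moves around the current best solution.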

3. Results and Discussion

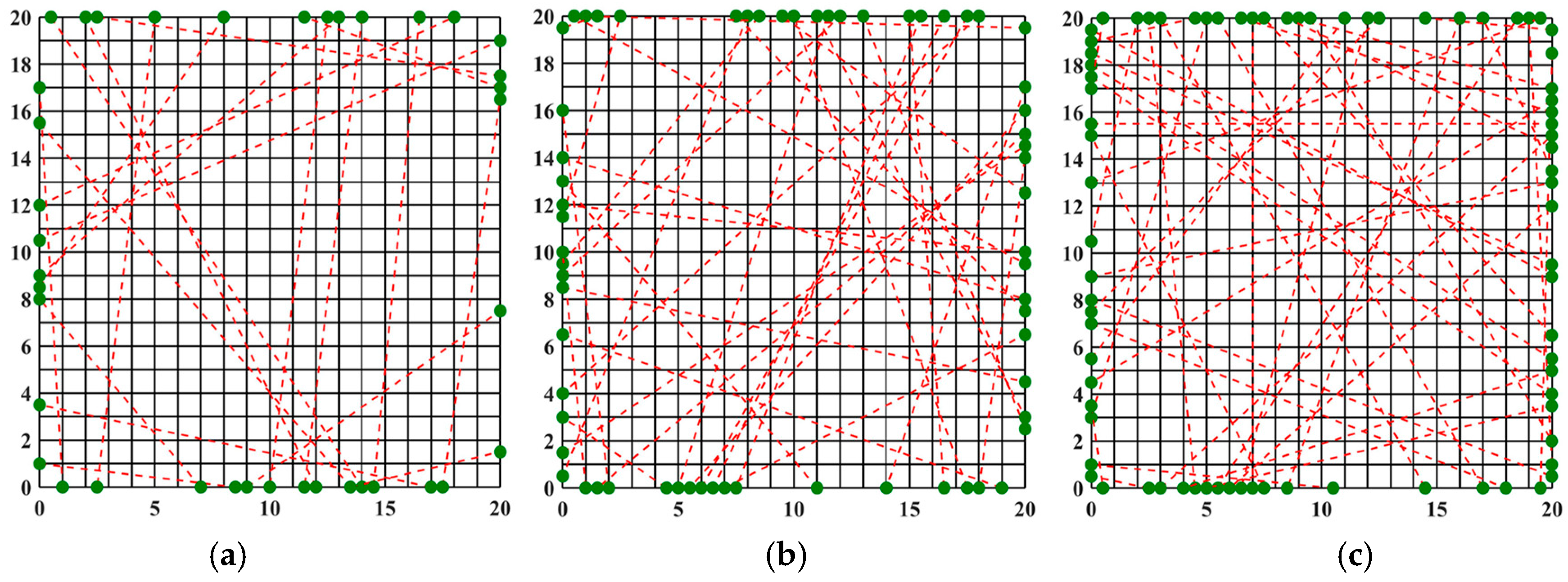

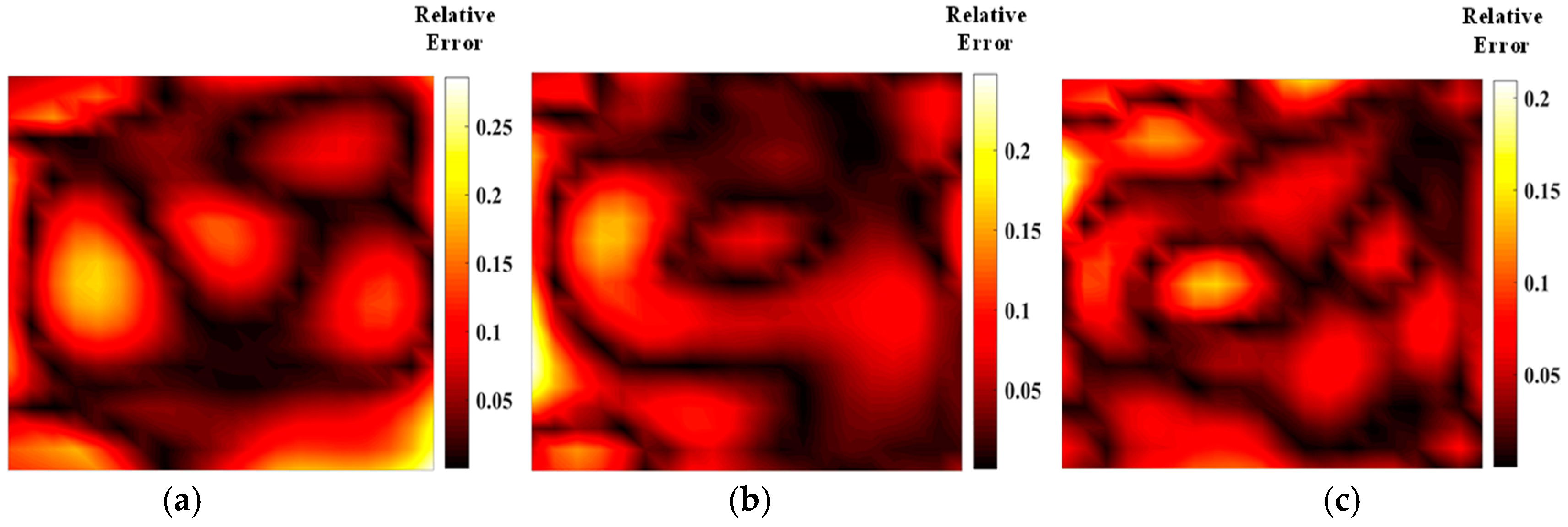

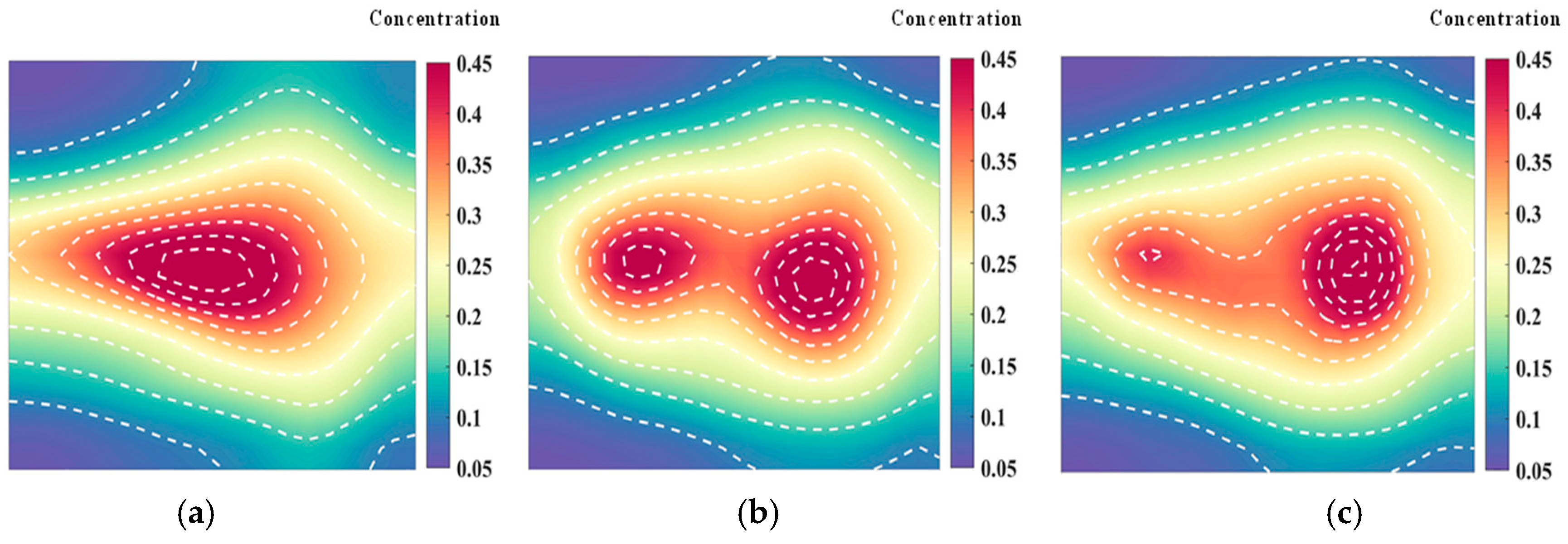

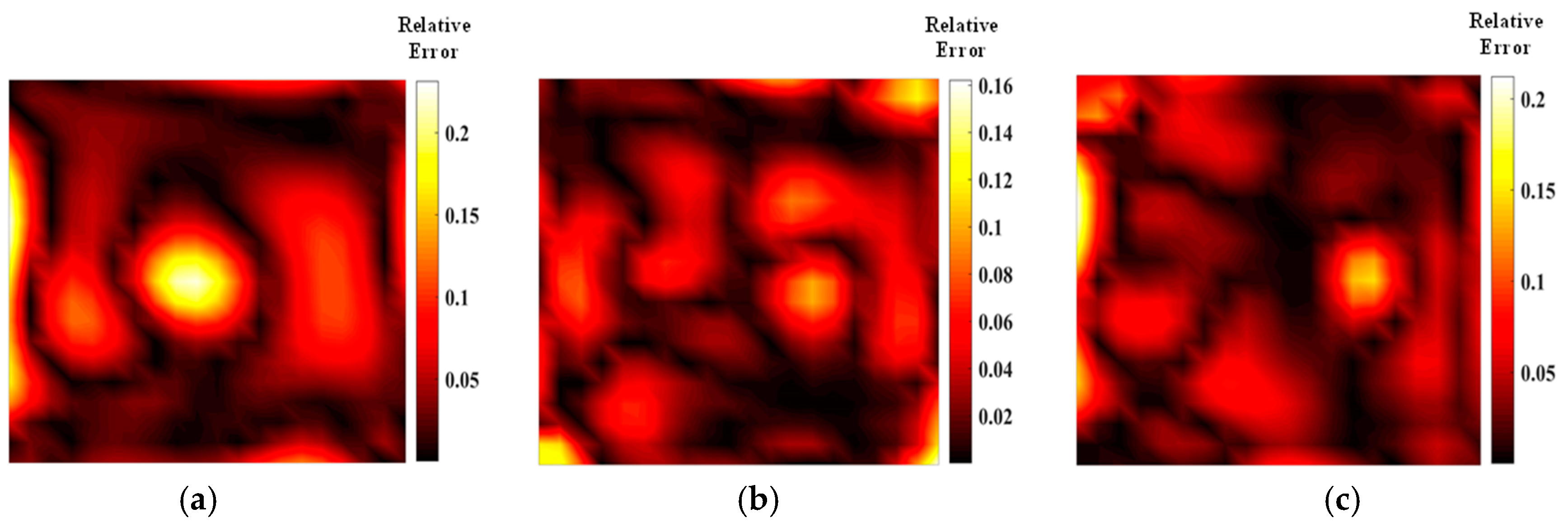

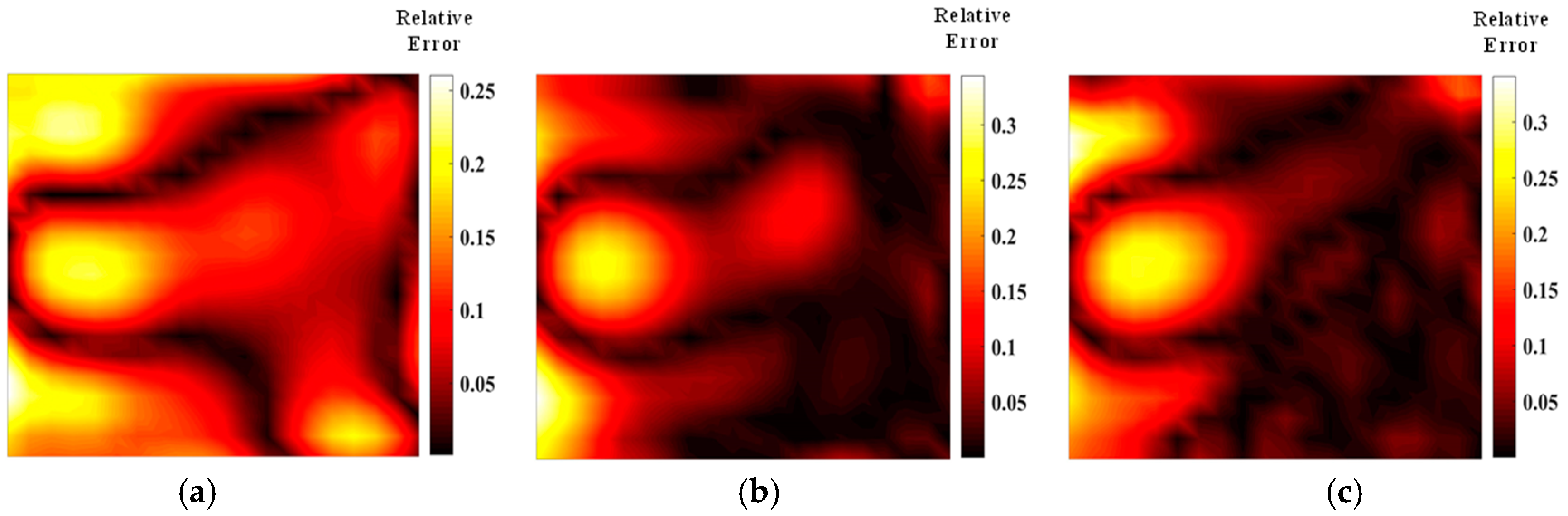

3.1. Optimization of the Optical Path for Symmetric Measurements

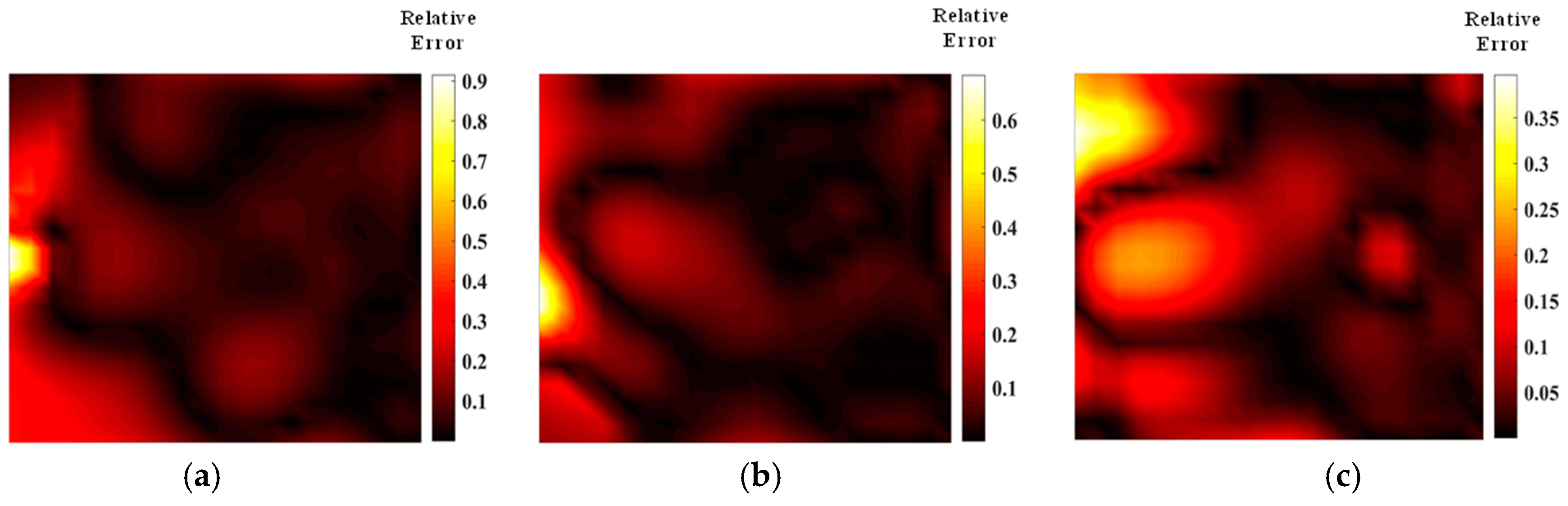

3.2. Optimization of the Optical Path for Asymmetric Measurements

4. Conclusions

- (1) The detection model based on the TDLAS technique effectively reconstructs both the temperature field and the concentration field of an asymmetric flame. With 40 parallel light paths, the relative error of the temperature field reconstruction is 5.1% and the relative error of the concentration field reconstruction is 8.3%. The model provides high imaging accuracy and quality, which validates its correctness.

- (2) Both the simulated annealing algorithm and the Harris's Hawk optimization (HHO) algorithm were used to optimize the light path arrangement for configurations of 20, 32, and 40 beams. The results indicate that the optimized optical path arrangements significantly outperform conventional regular arrangements. Moreover, the HHO algorithm surpasses the simulated annealing algorithm in both optimization results and computational efficiency. Compared with the parallel optical path, the optical path optimized by the HHO algorithm reduces the temperature field error by 25.5% and the concentration field error by 26.5%.

- (3) The optimization results for the asymmetric measurement optical path show that measurements on the dominant side of the optical path generation are reliable. However, the concentration measurement error is notably larger than the temperature measurement error.

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Initial Temperature | Closing Temperature | Cooling Rate q | Chain Length L |
|---|---|---|---|
| 100 | 1 | 0.9 | 10 |
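As a rough check on what these parameters imply, the snippet below counts the temperature levels and candidate evaluations in one annealing run. It is a sketch under two assumptions that the table does not state: the temperature decays geometrically (T is multiplied by q after each level) and the run stops once T falls to or below the closing temperature. The variable names are illustrative.

```python
import math

# Values taken from the parameter table above.
T_INITIAL, T_FINAL, COOLING_RATE, CHAIN_LENGTH = 100.0, 1.0, 0.9, 10

# Count temperature levels by direct iteration (assumed geometric cooling).
levels = 0
temperature = T_INITIAL
while temperature > T_FINAL:
    levels += 1
    temperature *= COOLING_RATE

# Closed form: smallest n such that T_INITIAL * COOLING_RATE**n <= T_FINAL.
closed_form = math.ceil(math.log(T_FINAL / T_INITIAL) / math.log(COOLING_RATE))

# Each level samples CHAIN_LENGTH candidates.
evaluations = levels * CHAIN_LENGTH
print(levels, closed_form, evaluations)  # 44 44 440
```

Under these assumptions a run visits 44 temperature levels and evaluates 440 candidate solutions.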

| Error Level | 20 Beams | 32 Beams | 40 Beams |
|---|---|---|---|
| Mean relative error of temperature | 0.069 | 0.050 | 0.044 |
| Relative error of mean concentration | 0.105 | 0.080 | 0.082 |

| Mean Relative Error | SA (20) | HHO (20) | SA (32) | HHO (32) | SA (40) | HHO (40) |
|---|---|---|---|---|---|---|
| Temperature | 0.069 | 0.049 | 0.051 | 0.031 | 0.044 | 0.038 |
| Concentration | 0.105 | 0.112 | 0.080 | 0.064 | 0.081 | 0.061 |

| Algorithm | SA (20) | HHO (20) | SA (32) | HHO (32) | SA (40) | HHO (40) |
|---|---|---|---|---|---|---|
| Runtime (h) | 4.393 | 4.270 | 4.590 | 3.967 | 4.672 | 4.546 |
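To put the runtimes above in relative terms, the following short calculation gives the fraction of SA runtime that HHO saves at each beam count. It uses only the values in the runtime table; the dictionary layout and variable names are illustrative.

```python
# Runtimes in hours, copied from the runtime table: beams -> (SA, HHO).
runtime_h = {
    20: (4.393, 4.270),
    32: (4.590, 3.967),
    40: (4.672, 4.546),
}

# Relative saving of HHO with respect to SA for each beam count.
saving = {beams: (sa - hho) / sa for beams, (sa, hho) in runtime_h.items()}

for beams, frac in saving.items():
    print(f"{beams} beams: HHO is {frac:.1%} faster than SA")
```

By this measure HHO is faster in all three configurations, with the largest saving (about 13.6%) at 32 beams and savings below 3% at 20 and 40 beams.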

| Average Error Level | 20 Beams | 32 Beams | 40 Beams |
|---|---|---|---|
| SA | 0.103 | 0.069 | 0.071 |
| HHO | 0.099 | 0.068 | 0.069 |

| Average Error Level | 20 Beams | 32 Beams | 40 Beams |
|---|---|---|---|
| SA | 0.248 | 0.171 | 0.134 |
| HHO | 0.190 | 0.117 | 0.124 |


© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Fan, L.; Dong, F.; Duan, J.; Sun, Y.; Wang, F.; Liu, J.; Tang, Z.; Sun, L. Optimization Design of Laser Arrays Based on Absorption Spectroscopy Imaging for Detecting Temperature and Concentration Fields. Materials 2024, 17, 3569. https://doi.org/10.3390/ma17143569
