Sentiment analysis (also known as opinion mining) is one of the most dynamically growing areas of natural language processing (NLP). It goes back to the early 2000s, when a handful of fundamental studies like Pang et al. [1], Turney [2], Turney and Littman [3], and Dave et al. [4] laid foundations for its subsequent explosive growth, fueled primarily by the availability of large quantities of opinionated online texts in English, mostly user-rated reviews of movies, products and services [5]. Drawing mainly on Bing Liu’s excellent “in-depth introduction” [6] and his recent exhaustive textbook [7], as well as on selected comprehensive reviews [5,8,9], we briefly summarize here its most salient aspects.

1.1. Terminology

The first thing that needs clarification is terminology. Although the name of the field has by now largely converged towards two nearly synonymous alternatives, sentiment analysis and opinion mining (or their concatenation), the fact remains that it has to deal with a host of closely interrelated, but not completely identical, manifestations of human subjectivity in a textual form, such as opinions, sentiments, evaluations, appraisals, attitudes, and emotions. These can be directed towards various entities, such as products, services, organizations, individuals, issues, events, topics, or even their attributes. In this respect, we might say that subjective texts express personal feelings, views or beliefs, while objective ones simply state (in principle) verifiable external facts.

Sometimes, all the diverse forms of subjectivity are lumped together and designated indiscriminately as “opinions” or “sentiments”, although conferring such a wide meaning to these two terms might easily breed confusion. We therefore side with those who propose to use both only for the forms of subjectivity that express or imply positive or negative feelings or attitudes towards something or somebody, and we follow Liu [6,7] in calling such documents and sentences opinionated. It may not be immediately obvious, but there are emotions (e.g., moods, such as melancholy) that are not directed at any particular object, at least not on the surface, while the directedness of others, e.g., that of surprise, does not easily fit into the categories of positive and negative (although the context may occasionally impart to it either hue). To avoid a long detour into a potentially controversial issue of how many basic emotions there are, we restrict ourselves to merely echoing the observation of Tsytsarau and Palpanas [10] that most authors in NLP seem to prefer the scheme with six basic emotions (anger, disgust, fear, joy, sadness, surprise) originally proposed by Ekman et al. [11].

From the preceding terminological clarifications, it might seem that opinionated texts should always be a subset of subjective ones, but the reality is more complex: opinions and sentiments may be implied indirectly, contextually, without any explicit indicators of subjectivity in the text. One example could be the sentence, “I bought this car and after two weeks it stopped working.” Here, a negative opinion is conveyed by an objective fact, whose undesirability is clear only to those who share implicit general expectations regarding cars. (A complementary example of a subjective sentence that is nevertheless not opinionated could be “I think he went home after lunch.” [7]) Moreover, as Pang and Lee [8] pointed out, the distinction between what is objective and what is subjective can be rather subtle: is “long battery life” or “a lot of gas” really objective? And how about the difference between “the battery lasts 12 h” and “the battery only lasts 12 h”? Consequently, and despite its deceptively simple appearance, sentiment analysis turns out to be a very difficult task. It is from this perspective that we can appreciate the depth of its pithy informal definition as “a field of study that aims to extract opinions and sentiments from natural language text using computational methods” [7].

1.2. Challenges

Apparently, the most straightforward way to identify opinionated texts is through the presence of sentiment words, also called opinion words. These consist mainly of adjectives and adverbs (good, bad, well, poorly, etc.), but also some nouns, verbs, phrases and idioms (disaster, to fail, to cost someone an arm and a leg, etc.). However, as Liu [6] notes, sentiment lexicons (lists of sentiment words along with their positive or negative polarities) cannot be a panacea. One reason is that certain sentiment words may acquire opposite orientations in different contexts. For example, Turney [2] observes that the word “unpredictable” could be positive regarding a movie plot but negative regarding a car’s steering capabilities, while Pang and Lee [8] mention the phrase “Go read the book,” which might convey a positive sentiment in a book review but a negative one in a movie review. Moreover, as Fahrni and Klenner [12] point out, this polarity flip can happen even within one domain: compare, for instance, the polarity of the word “cold” in “cold beer” with that in “cold pizza”.

Another reason limiting the utility of sentiment lexicons is that some sentences containing sentiment words may not express any sentiment at all. Typical cases are questions and conditionals, for example, “Can you tell me which Sony camera is good?” or “If I can find a good camera in the shop, I will buy it”. This, however, is not universal; for example, the question “Does anyone know how to repair this terrible printer?” clearly carries a negative attitude toward the printer [7]. Then there are sarcastic sentences, which actually mean the opposite of what they appear to say on the surface, as well as seemingly objective sentences without any sentiment words that nevertheless carry an evaluative attitude, such as the one about the new car that broke down after two weeks.

Pang and Lee [13] also mention a few other issues affecting sentiment analysis, especially when both fine-grained ratings (e.g., stars) and evaluative texts (reviews) are assigned to opinion targets. These problems include:

- (a) Individual reviewer inconsistency, e.g., when the same reviewer associates their own very similarly worded reviews with different star ratings;
- (b) Lack of inter-reviewer calibration, e.g., high praise by an understated author can be interpreted as a lukewarm or neutral reaction by others;
- (c) Ratings not entirely supported by the review text, as when the review mentions only some partial aspects or details, while the rating captures the overall impression of the target, including aspects not explicitly mentioned in the text.

As a result, there is considerable uncertainty inherent in the very nature of sentiment analysis, which even the human mind cannot entirely overcome. Human interrater agreement studies can therefore be taken as providing upper bounds on the accuracy that can be reasonably expected of automated sentiment analysis methods. It turns out that human interrater agreement can be surprisingly low, especially for fine-grained sentiments, as Hazarika et al. [14] observed. In their study of the consistency of human ratings of Apple iTunes applications on a scale from one to four stars, the exact interrater agreement among three human raters was below 41%. To account for subtle variations in human interpretation, they proposed to consider human ratings to be in agreement if they varied by at most one level of rating. This “adjacent interrater agreement” then approached 89.5%, which showed that, after all, the three raters could still be considered fairly consistent. In a similar context, Batista et al. [15] report that the exact interrater agreement between two human judges distinguishing three levels of sentiment (positive, neutral, negative) varied between 79% and 90%. It is important to be aware of these limits when forming expectations or evaluating the performance of automated methods.
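The difference between exact and adjacent agreement can be illustrated with a minimal Python sketch. The ratings below are invented toy data, and averaging the agreement over all pairs of raters is our own assumption; it is not necessarily the procedure used by Hazarika et al. [14].

```python
from itertools import combinations


def agreement(ratings_a, ratings_b, tolerance=0):
    """Share of items on which two raters differ by at most `tolerance` levels."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("rating lists must be non-empty and of equal length")
    hits = sum(abs(a - b) <= tolerance for a, b in zip(ratings_a, ratings_b))
    return hits / len(ratings_a)


def mean_pairwise_agreement(raters, tolerance=0):
    """Average the two-rater agreement over all pairs of raters (an assumption)."""
    pairs = list(combinations(raters, 2))
    return sum(agreement(a, b, tolerance) for a, b in pairs) / len(pairs)


# Hypothetical star ratings (1 to 4) given by three raters to five items.
rater_1 = [1, 2, 3, 4, 2]
rater_2 = [1, 3, 3, 2, 2]
rater_3 = [2, 2, 4, 4, 1]
raters = [rater_1, rater_2, rater_3]

exact = mean_pairwise_agreement(raters, tolerance=0)
adjacent = mean_pairwise_agreement(raters, tolerance=1)
print(f"exact: {exact:.3f}")        # exact: 0.333
print(f"adjacent: {adjacent:.3f}")  # adjacent: 0.867
```

Even on this toy data, allowing a tolerance of one rating level raises the measured agreement substantially, which mirrors the effect described above.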

1.3. Approaches

In general, sentiment analysis can be carried out on the level of documents, sentences, or entities mentioned in the text and their aspects. Document-level analysis is properly applicable only to documents which evaluate a single entity, such as product or movie reviews. Because we deal precisely with such documents in this article, from now on we will concentrate primarily on document-level sentiment analysis.

Tsytsarau and Palpanas [10] broadly categorized document-level sentiment analysis approaches into four types: dictionary-based, statistical, semantic, and machine learning-based. In principle, each of these can be conceived either as a classification task, labelling documents as “positive”, “negative”, “neutral”, etc., or as a regression task, trying to predict some sort of numeric or ordinal sentiment score for each document, e.g., on a scale from one to five stars.

Of these, the most straightforward is the dictionary approach, which exploits pre-built sentiment lexicons and dictionaries, such as General Inquirer [16], WordNet-Affect [17], SentiWordNet [18], or Emotion lexicon [19]. In the dictionary approach, the polarity of a text is usually computed as a weighted average of the lexicon-provided polarities of its sentiment words, accounting also for their modifiers (negation, intensification, etc.). The weights used in this calculation may be static or computed dynamically, reflecting, e.g., the distance of a given sentiment word from the closest topic word [10]. It is also possible to evaluate the polarity of a text based on the polarity of its longer n-grams, as in [20]. In general, however, relying on universal polarity values from lexicons was shown to be unreliable, since sentiment words can change their polarity in different contexts, and sometimes even within the same context. This motivated the development of more advanced forms of sentiment analysis.
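To make the dictionary approach more tangible, the following Python sketch scores a text by averaging lexicon polarities after applying simple modifier rules. The tiny lexicon, the modifier lists, the two-token look-back window and the uniform (static) weights are all hypothetical choices made for illustration; they are not taken from any of the lexicons cited above.

```python
import re

# Hypothetical toy resources; real lexicons are far larger.
LEXICON = {"good": 1.0, "excellent": 1.0, "bad": -1.0, "poor": -1.0, "boring": -0.5}
NEGATORS = {"not", "never", "no"}
INTENSIFIERS = {"very": 1.5, "slightly": 0.5}


def lexicon_polarity(text, window=2):
    """Average the modified polarities of all sentiment words found in `text`."""
    tokens = re.findall(r"[a-z']+", text.lower())
    scores = []
    for i, token in enumerate(tokens):
        if token not in LEXICON:
            continue
        score = LEXICON[token]
        # Apply modifiers found in the `window` tokens preceding the sentiment word.
        for previous in tokens[max(0, i - window):i]:
            if previous in NEGATORS:
                score = -score
            elif previous in INTENSIFIERS:
                score *= INTENSIFIERS[previous]
        scores.append(score)
    # Uniform weights: a plain mean; texts without sentiment words score 0.0.
    return sum(scores) / len(scores) if scores else 0.0


print(lexicon_polarity("The plot was very good."))                      # 1.5
print(lexicon_polarity("Not good at all."))                             # -1.0
print(lexicon_polarity("The acting was good but the script was bad."))  # 0.0
```

Because the polarities come from a fixed table, such a scorer would assign “cold” the same value in “cold beer” and “cold pizza”, which is exactly the weakness noted above.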

The statistical approach aims to overcome such problems by constructing corpus-dependent sentiment lexicons. It is based on the observation that similar opinion words frequently appear together in a corpus. Conversely, if two words frequently appear together within the same context, they are likely to share the same polarity. Therefore, the polarity of an unknown word can be determined by calculating the relative frequency of its co-occurrence with another word, which invariably preserves its polarity (a prototypical example of such a “reference” word is “good”). This fact motivated Peter Turney [2,3] to exploit the pointwise mutual information (PMI) criterion originally proposed by Church and Hanks [21], and derive from it the sentiment polarity of a word as the difference between its PMI values relative to two opposing lists of words: positive words, such as “good, excellent”, and negative words, such as “bad, poor”.
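The PMI difference described above can be sketched in a few lines of Python. We assume here that co-occurrence means appearing in the same (very short) document and that a small smoothing constant is added to every count to avoid division by zero; the toy corpus, the function name and both of these choices are ours and serve only as an illustration. Since PMI(w, pos) − PMI(w, neg) = log [p(w, pos) p(neg) / (p(w, neg) p(pos))], the probability of the word itself and the corpus size cancel out, so raw counts suffice.

```python
import math


def semantic_orientation(word, documents,
                         positive=("good", "excellent"),
                         negative=("bad", "poor"),
                         smoothing=0.01):
    """PMI(word, positive seeds) minus PMI(word, negative seeds), in bits."""
    word = word.lower()
    docs = [set(d.lower().split()) for d in documents]
    pos, neg = set(positive), set(negative)
    n_pos = sum(1 for d in docs if d & pos)                 # docs with a positive seed
    n_neg = sum(1 for d in docs if d & neg)                 # docs with a negative seed
    co_pos = sum(1 for d in docs if word in d and d & pos)  # word together with positive seed
    co_neg = sum(1 for d in docs if word in d and d & neg)  # word together with negative seed
    return math.log2(((co_pos + smoothing) * (n_neg + smoothing))
                     / ((co_neg + smoothing) * (n_pos + smoothing)))


# Hypothetical miniature corpus.
corpus = [
    "good reliable phone",
    "excellent reliable battery",
    "bad flimsy case",
    "poor flimsy hinge",
    "good screen",
    "bad sound",
]

print(semantic_orientation("reliable", corpus) > 0)  # True
print(semantic_orientation("flimsy", corpus) < 0)    # True
```

A positive value suggests that the word keeps company mostly with the positive seeds in this corpus, a negative value the opposite; on realistic corpora the counts would of course come from much larger collections.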

The semantic approach is akin to the statistical one in that it directly calculates sentiment polarity values for words (thus bypassing the need for external sentiment lexicons) except that it computes the similarity between words differently. Its defining principle is that semantically close words should receive similar sentiment polarity values. As with statistical methods, two sets of seed words with positive and negative sentiments are used as a starting point for bootstrapping the construction of a dictionary. This initial set is then iteratively expanded with their synonyms and antonyms, drawn from a suitable semantic dictionary such as WordNet [22,23]. The sentiment polarity for an unknown word can be determined by the relative count of its positive and negative synonyms, or the word may simply be discarded. Alternatively, Kamps et al. [24] used the relative shortest path distance of the “synonym” relation. In any case, it is important to account for the fact that the synonym’s relevance decreases with its distance from the original word, so the same principle should be applied to assigning the polarity value to the original word.
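The bootstrapping idea can be sketched as a breadth-first expansion over synonym and antonym links, with the polarity attenuated at every step away from the seeds. The miniature synonym and antonym tables below stand in for a resource such as WordNet, and the decay factor of 0.5 and the depth limit are arbitrary assumptions made for illustration only.

```python
from collections import deque

# Hypothetical miniature thesaurus standing in for a semantic dictionary.
SYNONYMS = {
    "good": ["fine", "nice"],
    "fine": ["good", "decent"],
    "nice": ["good", "pleasant"],
    "bad": ["poor", "awful"],
    "poor": ["bad"],
}
ANTONYMS = {
    "good": ["bad"],
    "bad": ["good"],
    "pleasant": ["unpleasant"],
}


def expand_seeds(seeds, synonyms, antonyms, decay=0.5, max_depth=3):
    """Propagate seed polarities along synonym (+) and antonym (-) links."""
    polarity = dict(seeds)
    queue = deque((word, 0) for word in seeds)
    while queue:
        word, depth = queue.popleft()
        if depth >= max_depth:
            continue
        links = ((synonyms.get(word, []), 1.0), (antonyms.get(word, []), -1.0))
        for neighbours, sign in links:
            for neighbour in neighbours:
                if neighbour not in polarity:  # keep the value from the shortest path
                    polarity[neighbour] = sign * decay * polarity[word]
                    queue.append((neighbour, depth + 1))
    return polarity


lexicon = expand_seeds({"good": 1.0, "bad": -1.0}, SYNONYMS, ANTONYMS)
print(lexicon["fine"])        # 0.5
print(lexicon["decent"])      # 0.25
print(lexicon["unpleasant"])  # -0.125
```

Because the search is breadth-first, each word receives its value along a shortest path from a seed, so more distant words end up with weaker polarities, in line with the remark about decreasing relevance.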

The above three approaches were especially prominent in the early days of sentiment analysis; later they were mostly eclipsed by classical machine learning or incorporated into it as special forms of data pre-processing, feature construction and feature selection. Some of them could even be considered forms of machine learning in their own right. Thus, for example, Peter Turney [2] called his early work (considered to be of “statistical” nature by Tsytsarau and Palpanas [10]) “unsupervised classification”, a somewhat paradoxical but still legitimate term employed also by Liu [7].

In classical machine learning (ML), a model is learned in a supervised or unsupervised way from a training corpus, and then used to classify new, previously unseen texts. Its key success factors are the choice of the learning algorithm, the quality and quantity of the training data, and the quality of feature engineering and feature selection, which may require considerable domain expertise.

In many applications, binary features (recording just the presence or absence of a certain feature in a given text) perform remarkably well. In others, capturing relative frequency of each feature might yield better results. Moreover, as Osherenko and André [25] observed, in most cases the features may be reduced to a small subset of the most affective words without any significant degradation of the model’s performance. The same authors also noted that (at least for their corpus) word frequencies contained approximately the same amount of information as sentiment annotations in their sentiment lexicons.
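The two feature encodings mentioned above differ only in what is stored per vocabulary word, as the following minimal Python sketch shows. The function name, the toy vocabulary and the choice to normalize frequencies by the total number of tokens in the text are our own assumptions.

```python
from collections import Counter


def featurize(tokens, vocabulary, binary=True):
    """Encode a tokenized text as one feature value per vocabulary word."""
    counts = Counter(tokens)
    if binary:
        # Presence or absence of each vocabulary word.
        return [1.0 if counts[word] else 0.0 for word in vocabulary]
    # Relative frequency: occurrences divided by the length of the text.
    total = len(tokens)
    return [counts[word] / total if total else 0.0 for word in vocabulary]


vocabulary = ["good", "bad", "boring"]  # hypothetical small set of affective words
tokens = "good good plot bad ending".split()

print(featurize(tokens, vocabulary, binary=True))   # [1.0, 1.0, 0.0]
print(featurize(tokens, vocabulary, binary=False))  # [0.4, 0.2, 0.0]
```

Restricting `vocabulary` to a short list of affective words corresponds to the feature reduction reported by Osherenko and André [25].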

Since sentiment classification is a type of text classification, in principle, any supervised ML method suitable for the latter can also be applied to the former. In practice, Naïve Bayes (NB) and Support Vector Machines (SVM) have been (and still are) very popular in English [26,27], especially for sentiment analysis of short messages such as those posted on Twitter [28,29]. From the recent work exploiting deep learning techniques we might mention the study of COVID-19 vaccination-related sentiments by Reshi et al. [30], or various attempts to combine deep learning with other techniques such as ensemble learning [31], dual graphs [32], word dependencies [33] or topic modeling and clustering [34,35]. Given the growing demand for trustworthiness, explainability and accountability of AI applications, the use of explainable AI techniques in sentiment analysis is also growing [36,37]. Of course, many more algorithms and their variations than we could possibly list here have been tried by various researchers; for a detailed overview we recommend the latest book by Liu [7] or one of the recent comprehensive reviews, such as [38,39].
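As a concrete instance of the supervised route, the sketch below implements a bare-bones multinomial Naïve Bayes classifier with add-one (Laplace) smoothing in plain Python. The four training documents are invented, words unseen during training are simply ignored at prediction time, and the function names are hypothetical; it is meant only to illustrate the principle, not to reproduce any of the cited systems.

```python
import math
from collections import Counter, defaultdict


def train_nb(labelled_docs, alpha=1.0):
    """Estimate log-priors and smoothed log-likelihoods from (tokens, label) pairs."""
    class_docs = Counter()
    word_counts = defaultdict(Counter)
    vocabulary = set()
    for tokens, label in labelled_docs:
        class_docs[label] += 1
        word_counts[label].update(tokens)
        vocabulary.update(tokens)
    n_docs = sum(class_docs.values())
    model = {}
    for label in class_docs:
        denominator = sum(word_counts[label].values()) + alpha * len(vocabulary)
        model[label] = {
            "prior": math.log(class_docs[label] / n_docs),
            "loglik": {w: math.log((word_counts[label][w] + alpha) / denominator)
                       for w in vocabulary},
        }
    return model


def predict_nb(model, tokens):
    """Return the label with the highest posterior log-score."""
    def score(label):
        params = model[label]
        # Words outside the training vocabulary are skipped.
        return params["prior"] + sum(params["loglik"][t] for t in tokens
                                     if t in params["loglik"])
    return max(model, key=score)


# Hypothetical toy training set.
TRAIN = [
    ("great fun film".split(), "pos"),
    ("great acting".split(), "pos"),
    ("dull boring film".split(), "neg"),
    ("boring plot".split(), "neg"),
]
model = train_nb(TRAIN)

print(predict_nb(model, "great film".split()))   # pos
print(predict_nb(model, "boring film".split()))  # neg
```

In practice one would normally rely on an established library implementation and a far larger training corpus; the point here is merely how little machinery the basic method requires.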

While NLP in general, and sentiment analysis in particular, receive a lot of research attention and funding in English (and possibly in a few other major languages), smaller languages are largely left behind. Their uneven progress has been vividly documented in a series of white papers produced by the European META alliance (http://www.meta-net.eu/whitepapers/overview (accessed on 17 October 2022)). Their Key Results section (http://www.meta-net.eu/whitepapers/key-results-and-cross-language-comparison (accessed on 17 October 2022)) shows English to be the only language with “good support” across all the four major NLP dimensions (Text Analysis, Speech Processing, Machine Translation, Resources), with French and Spanish coming next and enjoying consistently “moderate support” across them. Dutch, German and Italian enjoy fragmentary support in Machine Translation and a moderate one in the remaining three categories, while most of the remaining European languages enjoy only fragmentary or no support.

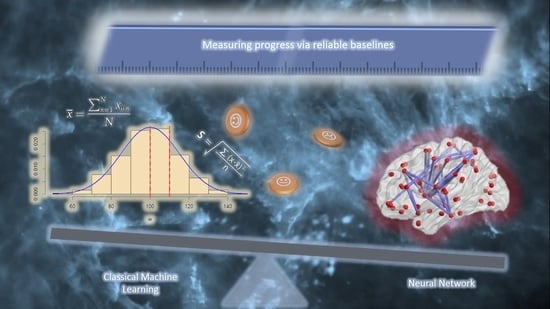

This explains why the latest deep learning techniques and trends, already prominent in English, such as Bidirectional Encoder Representations from Transformers (BERT) [40] and its derivatives, e.g., RoBERTa (A Robustly Optimized BERT Pretraining Approach) [41], have only recently started to appear in smaller languages such as Czech and Slovak [42,43,44,45]. Although they promise to revolutionize the whole field of NLP, to objectively measure the progress and the advantages that they bring, reliable baselines must first be established on the basis of methods that historically preceded them. That is precisely the goal and the main contribution of our present work. Moreover, related contemporary research in NLP shows that these new deep learning techniques will not simply render classical machine learning obsolete, but rather turn it into a valuable auxiliary tool to be used whenever their results or their operation need to be investigated from the perspective of robustness, adequacy, quality, accountability or explanation. Examples of techniques that can thus utilize classical machine learning include, for instance, “diagnostic classifiers” [46], “probing tasks” [47], and “structural probes” [48,49]. It will therefore continue to pay off for researchers of artificial intelligence to remain well-versed in classical machine learning techniques too.

The rest of this article is structured as follows: In Section 2 we review the related work in the Czech and Slovak languages. In Section 3 we summarize the relevant experiments and results by Habernal et al. [50], some of which we then choose for replication. Section 4 describes our replication experiments and their results, while Section 5 discusses their implications and adds some relevant methodological advice toward increased replicability and reliability of sentiment analysis results in general. Finally, Section 6 concludes our work with a short summary and an outline of future work.