1. Introduction

Blockchain technology continues to evolve in response to the success and growing popularity of digital currencies such as Bitcoin. It is a distributed ledger technology that has attracted widespread interest by addressing reliance on centralized parties, high costs, inefficiencies, and data storage challenges. Blockchain provides a tamper-resistant ledger and transparent, secure transactions. It can change how we share information and shape the future of the digital economy and society, which has promoted its adoption in many fields, such as the Internet of Things (IoT) [1], circular economy [2], social crowdfunding [3], insurance systems [4], and smart manufacturing [5].

Within the blockchain network, data integrity and validation are maintained through a process called mining, which is performed by miners using consensus mechanisms such as Proof of Work (PoW) [6] and Proof of Stake (PoS) [7]. In PoW, the consensus mechanism requires solving a mathematical problem by generating a hash that satisfies a set of requirements, which consumes extensive computing resources. This problem is tied to the specific transactions that must be added to the blockchain’s ledger. In recent years, many researchers have studied the problem of resource allocation for the execution of the consensus mechanism [8,9,10]. They primarily focused on selecting miners and determining the optimal resources to allocate to each participating miner. Despite their importance, these studies focus solely on wired blockchain networks and do not consider more challenging settings, such as mobile blockchains operating on mobile devices. Mobile blockchains, also known as wireless blockchain networks, have garnered significant attention in recent years, with many authors demonstrating their effectiveness and potential to drive substantial changes in various domains [11]. However, mobile devices usually have limited resources (energy, storage, computation, etc.) and cannot support validation and verification tasks on their own. With the emergence of mobile edge computing (MEC), mobile blockchains can offload mining and verification tasks from mobile devices to nearby edge servers that possess sufficient resources, thereby improving communication efficiency. Because MEC deploys edge servers with sufficient computing and storage capacity near mobile devices, it can overcome both the high latency of traditional cloud computing and the limited computational power of mobile devices [12]. MEC servers offer a distributed platform with integrated networking, computing, storage, and application processing capabilities.

Several studies on MEC-enabled wireless blockchain networks have been undertaken to enhance data security and transactional throughput. Most of these studies apply game theory to resource allocation problems or present deep learning-based allocation [13,14]. Other works use auctions to allocate virtual machines to end users. All these works assign tasks related to the PoW protocol, such as mining and transaction verification, which require significant computing resources and demand that all nodes participate in these tasks. In contrast, we are particularly interested in blockchain systems built upon the PoS protocol, which is less energy-intensive and resource-demanding than PoW. Indeed, in PoW, miners compete to find a block hash, and the first to do so gains the right to create a new block. PoS, which Ethereum 2.0 uses, instead selects validators randomly among those who have deposited a stake. PoS introduces new considerations for resource allocation that both the validator and the MEC provider must take into account. First, when leasing MEC resources, the validator must accurately calculate the utility of this allocation in terms of rewards, considering their chances of being selected as validators and the allocation costs they must incur. Second, the MEC provider must maximize its income while accounting for client mobility and properly balancing the load on its resources. It must also provide the security mechanisms the validator needs to outsource their validation and verification scripts.

Although several studies have explored resource allocation for mobile blockchain networks, most of them focus on PoW and do not reflect the specific characteristics and constraints of PoS. Existing work typically assumes stable computation resources and therefore overlooks the dynamic and heterogeneous resource availability of mobile devices, which directly affects validators’ ability to operate PoS nodes reliably. Moreover, prior studies generally optimize resource allocation solely from the user perspective and do not incorporate the dual interests of both validators and MEC providers, such as balancing cost, reliability, and profit. Finally, current models rarely address the strict latency requirements imposed by PoS block creation times (e.g., Ethereum’s 12-second slot), which are crucial for ensuring timely attestation and block proposal. These limitations highlight the absence of a comprehensive framework that can address mobile resource variability, multi-stakeholder objectives, and PoS-specific timing constraints, underscoring the need for the proposed MEC-Chain framework.

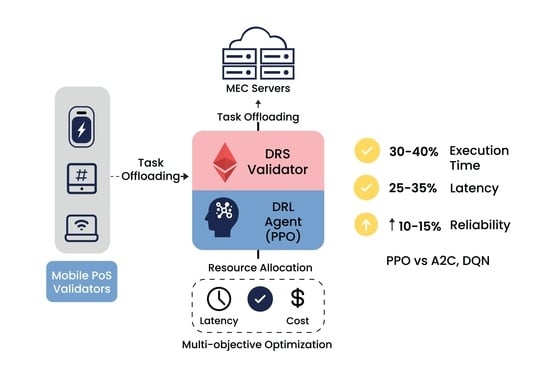

The main contributions of this paper are summarized as follows:

We propose MEC-Chain, a framework that integrates MEC with mobile PoS validation and supports both full and partial delegation modes, ensuring flexible, low-latency, and reliable validator operation in dynamic mobile environments.

We formulate a PoS-aware resource allocation problem that jointly considers transmission latency, server reliability, and the minimum computing resources required to maintain continuous validator availability.

We design a deep reinforcement learning agent based on Proximal Policy Optimization (PPO) to learn optimal offloading and resource allocation strategies under varying network and mobility conditions.

We implement and evaluate MEC-Chain through extensive simulations, demonstrating that PPO outperforms A2C and DQN in execution time, transmission latency, and server reliability.

The rest of the paper is organized as follows. Section 2 provides the background necessary for this study. Related works are presented in Section 3. Section 4 presents the MEC-Chain framework. Section 5 details the design of the DRL-based resource allocation algorithm. Section 6 describes and discusses the preliminary evaluation results, and Section 7 gives concluding remarks and future work.

2. Background

This section elucidates the fundamental concepts essential for comprehensively understanding our approach.

2.1. Proof of Stake Consensus Mechanism

Ethereum, one of the leading blockchain platforms, has transitioned to a Proof of Stake (PoS) consensus mechanism as part of its Ethereum 2.0 upgrade [15]. This shift aims to enhance the network’s scalability, security, and sustainability. In Ethereum’s PoS, validators are chosen to propose and validate new blocks based on the amount of cryptocurrency they lock up as collateral, known as staking. With a fixed 12 s slot time, Ethereum PoS makes block creation regular and slightly faster than under PoW, which benefits transaction throughput. The transition aligns with Ethereum’s commitment to sustainability by reducing energy consumption compared to the previous PoW model. Stakers, motivated by the prospect of earning rewards, play a pivotal role in maintaining the network’s integrity. Ethereum’s PoS introduces a dynamic and participatory approach to consensus, fostering a decentralized and secure ecosystem as the platform evolves.
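As a rough illustration of stake-based selection, the following sketch draws a proposer with probability proportional to locked stake. It is a generic stake-weighted lottery under assumed names (`select_proposer`, the `stakes` mapping) and is not Ethereum's actual selection procedure, which the text above does not detail.

```python
import random

def select_proposer(stakes, rng):
    """Pick one validator with probability proportional to its stake.

    `stakes` maps a (hypothetical) validator id to its locked stake.
    """
    validators = list(stakes)
    weights = [stakes[v] for v in validators]
    return rng.choices(validators, weights=weights, k=1)[0]

# Illustrative stakes only; the values are not taken from the paper.
stakes = {"v1": 32, "v2": 32, "v3": 64}
rng = random.Random(0)
counts = {v: 0 for v in stakes}
for _ in range(10_000):
    counts[select_proposer(stakes, rng)] += 1
```

Over many draws, a validator holding twice the stake is selected roughly twice as often, which is the property the validator's utility calculation relies on.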

2.2. Mobile Blockchain Network

The concept of a mobile blockchain refers to the integration of blockchain technology with mobile devices, extending the capabilities and benefits of decentralized ledgers to the mobile ecosystem [16]. The use of a mobile blockchain for Ethereum validators is a significant advancement [17]. The idea is simple and convenient: because mobile devices are portable, validators can remain active and validate transactions regardless of their location. This is consistent with the availability and connectivity criteria, as validators remain linked and active. On the other hand, running a validation node on a mobile device may pose technical and security problems related to PoS consensus procedures. These activities often require a robust, dependable, and high-performance computing infrastructure, which may not be available on a mobile device. Furthermore, because they are frequently used in public contexts, mobile devices may be more exposed to malicious actors than desktop or laptop computers.

2.3. Mobile Edge Computing and Resource Allocation

Mobile Edge Computing (MEC) represents a paradigm shift in how computing resources are utilized in mobile networks [18]. MEC brings computational capabilities closer to the network’s edge, reducing latency and enhancing the overall user experience. Efficient resource allocation is a cornerstone of MEC, where computing resources are dynamically distributed based on their proximity to end users and the specific requirements of applications. This allocation strategy optimizes available resources, ensuring that processing tasks are performed closer to the data source and reducing the need for data to traverse long distances to centralized cloud servers. The challenge lies in designing algorithms and frameworks that can adaptively allocate resources based on varying workloads and user demands, striking a balance between efficiency and responsiveness in the dynamic landscape of mobile edge computing.

3. Related Work

Some studies have explored the challenge of compute offloading for mobile blockchain networks, aiming to address the computation-intensive proof-of-work (PoW) and promote trust between resource requesters and providers.

Liu et al. [19] proposed a wireless blockchain framework with mobile edge computing (MEC) capabilities, in which computationally intensive mining tasks can be offloaded to nearby edge computing nodes and the cryptographic hashes of blocks can be saved on the MEC server. Jiao et al. [20] proposed an auction-based resource allocation system to maximize social welfare. The authors enhanced their initial work [21] by proposing two bidding schemes: constant-demand and multi-demand. In the former, each miner bids for a fixed quantity of resources, while in the latter, miners can submit their preferred demands and bids. For the constant-demand scheme, the authors introduce an auction mechanism that achieves optimal social welfare. For the multi-demand scheme, they design an approximate algorithm that guarantees truthfulness, individual rationality, and computational efficiency. Zuo et al. [13] designed a mobile blockchain-enabled edge computing network based on a nonce hash computing ordering (HCO) mechanism. First, the authors formulated each user’s nonce hash computing demand as a non-cooperative game that maximizes personal revenue. Then, they analyzed the existence of a Nash equilibrium in this game and designed an alternating optimization algorithm to obtain the optimal nonce selection strategies for all users. Nevertheless, none of the approaches in [19,20,21] takes into account the transmission delay between the miners and the MEC server. A large transmission delay may occur if too many miners simultaneously offload tasks to the MEC server.

Qiu et al. [14] proposed a model for the optimal bid-rigging mechanism in a mobile blockchain edge computing resource auction. To increase the revenue of the MEC service provider, the authors proposed a method for determining the optimal reserve price using the simulated annealing algorithm. This algorithm calculates the optimal reserve price based on bidders’ willingness to pay and the number of bid-rigging participants. The suggested method enables edge service providers (ESPs) to discourage user cheating in resource optimization auctions, reduce revenue losses, and address user data privacy concerns. Zhang et al. [22] investigated both the regular task offloading problem and the mining task offloading problem in a blockchain-enabled beyond-5G network. The former problem is formulated as a double auction market, and the latter as a Stackelberg game. Wang et al. [23] proposed a new differential evolution algorithm to jointly optimize the mining decision and resource allocation for an MEC-enabled wireless blockchain network. Ding et al. [24] proposed a NOMA-based MEC wireless blockchain system that optimizes task offloading, user clustering, computing resources, and transmission power to reduce overall energy consumption. They decompose the non-convex problem into subproblems solved sequentially and show through simulations that their joint optimization approach effectively lowers system energy use. Another recent effort is SharpEdge [25], a QoS-driven task-scheduling scheme for mobile edge computing that leverages blockchain to enable secure, efficient, and trustworthy peer offloading between edge servers from different infrastructure providers. In SharpEdge, edge servers publish tasks with associated rewards and select reliable executors through a reputation mechanism built on historical performance. After execution, the results and executor performance are immutably recorded on the blockchain. To meet MEC’s low-latency requirements, the authors design a concurrent consensus mechanism based on sharding, which significantly improves scheduling efficiency. Fang et al. [26] present a blockchain-assisted MEC offloading scheme in which user terminals offload sensitive tasks to a base station that executes them while blockchain consensus ensures security. They formulate a joint optimization problem to minimize user energy under delay constraints and solve it using a collective reinforcement learning approach, with simulations confirming its effectiveness. However, this work focuses on generic task offloading and minimizing user energy consumption, without addressing PoS validation or mobile validator behavior.

Overall, most of the above works tackle task offloading from a user perspective and concentrate on PoW mining tasks. Since PoW relies on computational work to select the miner who adds a new block, resource allocation should concentrate on optimizing computational tasks. However, in PoS, given the shorter block creation times, minimizing latency while allocating resources is crucial. Unlike existing works, we focus on PoS consensus and aim to propose an approach that considers the viewpoints of both mobile blockchain users and MEC providers. The user seeks a reliable, secure, and cost-effective resource. As for the providers, they aim to maximize their profit by accommodating requests from validators.

4. The MEC-Chain Framework

The proposed framework for a MEC-enabled mobile blockchain network under the PoS consensus is depicted in Figure 1. In this framework, we make certain assumptions to streamline the deployment and functionality of edge nodes. Firstly, we assume that the edge node, while not pervasive, maintains a satisfactory level of stability. Furthermore, it is configured to operate as a node within the Proof-of-Stake (PoS) consensus mechanism. This presupposes a reliable and stable environment for the edge node’s consistent performance. Our assumptions include deploying edge servers equipped with Docker images that encompass all the required software components to function as an Ethereum node. The Docker image is designed to generate a container, facilitating the efficient configuration of Ethereum nodes on edge servers. These assumptions collectively contribute to the foundation of our approach to mobile edge computing and resource allocation.

The ultimate goal of this MEC-Chain framework is to enable validators to run their nodes via mobile devices, offering several advantages. In general, validators have three options for executing their nodes—individual staking, service staking, and group staking—each with its advantages and disadvantages. If the requirements of 32 ETH are met, the main challenge is to provide suitable infrastructure. Whatever the validator’s decision, it must preserve validator revenues and node security, while also ensuring network stability and security.

To guarantee these objectives, the framework consists of two basic layers: validators and edge server layers. The validators layer comprises a set of mobile devices, including smartphones, tablets, and PCs. Each mobile device is considered a node in the blockchain network. Mobile devices can be full nodes or lightweight nodes. The task characteristics are almost similar and are described as validator node execution. The edge server layer comprises servers located in different regions, which deploy several edge computing nodes according to their advantage strategy. Each provider offers its quality of service in terms of availability, price, and trust. Providers compete with each other for a group of users with similar needs in the area.

Edge computing servers provide their customers with a user-friendly interface, enabling validators to easily manage their validation nodes. Furthermore, to simplify the interaction between validators and Edge servers, we propose a smart contract with two main functions. The first is the save() function, which initiates an allocation process by saving public information (server and validator identifiers, allocation duration, price, and allocated resources), as well as the validator’s private key, which is essential for consensus participation. It is essential to note that this key is solely used for validation purposes and does not grant access to the funds. To ensure secure handling of validator credentials, we assume that private keys are never stored or transmitted in plaintext. Before invoking the save() function, the validator encrypts the key locally, and the MEC server is only authorized to use the encrypted material for validation tasks under strict access controls. This prevents sensitive credentials from being exposed during the allocation process. The second function is the finish-allocation() function, allowing the validator to free up its computational resources. Together, these functions ensure the efficient management of the validation node’s parameters and the computational resources utilized by the validator.

In the context of MEC-Chain, the two smart contract functions (save() and finish-allocation()) require a secure execution environment. For this reason, the design of the contract must explicitly account for common blockchain vulnerabilities. In particular, reentrancy can be mitigated by structuring these functions according to the checks–effects–interactions pattern or by applying a nonReentrant modifier when external calls are involved. To prevent front-running during the submission of allocation parameters or sensitive validator metadata, a commit–reveal scheme may be incorporated when appropriate. Furthermore, access to save() and finish-allocation() must be restricted to authenticated validator addresses to avoid unauthorized invocation. These security considerations are essential for ensuring that the MEC-Chain smart contract can be implemented without exposing validator credentials or compromising the integrity of the allocation process.
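The commit-reveal idea mentioned above can be sketched off-chain as follows. This is a minimal Python model under stated assumptions, not the MEC-Chain contract itself: the function names, the parameter encoding, and the use of SHA-256 are all hypothetical choices made for illustration.

```python
import hashlib
import secrets

def commit(params: bytes, salt: bytes) -> str:
    """Commitment published first: a hash that hides the parameters."""
    return hashlib.sha256(salt + params).hexdigest()

def reveal_ok(commitment: str, params: bytes, salt: bytes) -> bool:
    """Reveal phase: check that the disclosed values match the commitment."""
    return secrets.compare_digest(commitment, commit(params, salt))

# Hypothetical allocation parameters; the encoding is an assumption.
salt = secrets.token_bytes(32)
params = b"server=s3;days=30;price=12"
commitment = commit(params, salt)
```

Because only the hash is visible during the commit phase, an observer cannot copy or outbid the allocation parameters before they are revealed.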

4.1. The MEC-Chain Process

The proposed framework follows a process that begins when users decide to run their tasks on an edge server and specify their requirements, and ends when the task is fully executed (see Figure 2).

This process includes five steps:

Step 1—Determining the resource requirements: In this step, the validators evaluate the resources required to operate their nodes, such as computing power, storage space, bandwidth, allocation period, and core number. The validator’s location is also taken into account to reduce latency times. It is important to note that the basic requirements of the validator are generally constant. However, in a context of equitable resource distribution, each validator can keep a history recording the activity of its node and the resources required as a function of network throughput. This method enables the estimation of the ideal resources required to run the node reliably and efficiently. Finally, validators send their requests to the system once these specific requirements have been defined.

Step 2—Resource allocation: When a validator submits a request to the system, this request, including task-specific characteristics, is captured by the resource allocation agent. The latter receives requests from validators, extracts information about the task, summarizes it in a vector adapted to the decision engine, and then transmits it to the decision optimization engine. The decision engine receives a matrix describing the environment, including server status, available resources, and validator requests. It updates this data to form a complete state, at which point the optimization engine makes the allocation decision and implements it by physically deploying the decision via the peripheral network. A more detailed explanation of this decision algorithm is presented in Section 5.

Step 3—Validator’s Node Configuration: Once the system has assigned the allocation to each validator, validators can log in to their session. At this stage, the validator makes several choices, such as the operating system, consensus client type, and execution client type. The server then builds a Docker image from these choices and runs it to launch the container, making the server ready to run the validator node. Once the configuration is complete, the server imports the validator’s validation key from the smart contract, preparing the node for operation. This process simplifies the management of PoS validators by automating the creation and configuration of containers.

Step 4—Exchanging requests and responses: During this step, the validator must choose between two modes, full delegation or partial delegation, taking into account various external factors, such as battery charge. By opting for full delegation, the validator delegates all its work to the server. The server, acting as a validator, decides which tasks will be performed and how. The user interface (UI) then becomes a dashboard displaying statistics, the results of the node’s tasks, and the status of the network and nodes. In this mode, tasks are automated by scripts (see Figure 3) that follow a predefined series of instructions and use the signature key stored in the smart contract to authorize the execution of validation tasks.

In the partial delegation mode, the validator is the decision-maker (see Figure 4). The validator has full rights to manage the node, and the Ethereum blockchain network notifies the validator’s node of all network details and information, as well as its tasks (attesting, block creation, and transaction validation). This information is then transmitted to the validator via their interface. During this stage, the validator makes decisions concerning these tasks and sends a response as a query containing the parameters required to execute the corresponding instructions. For example, once a task has been included in a block, the validator checks that it complies with Ethereum’s security and confidentiality policies. If these policies are not respected, the validator rejects the task and sends a rejection message to the sending node. Otherwise, the validator accepts it and sends a creation request to the issuing node.

In summary, whether validators choose full or partial delegation, the MEC server must guarantee that the validator node operates reliably and responds within the timing constraints imposed by PoS. Although PoS does not require intensive computation like PoW, the validator still depends on stable processing capacity, low transmission latency, and high server availability to avoid missed attestations or delayed participation in consensus. Consequently, the resource management problem in MEC-Chain focuses not on heavy mining tasks but on ensuring continuous validator responsiveness under mobile conditions. These PoS-specific constraints motivate the need for a dedicated resource-allocation model, which we formalize in the next subsection.

4.2. Resource Allocation System Model

Mobile devices have different allocation periods and resources requested, depending on the validator’s needs. Similarly, edge servers are characterized by their associated processing capacities and qualities.

The edge network is made up of $N$ edge servers, each of which is denoted by $s_j$, where $j \in \{1, \dots, N\}$.

Each server $s_j$ is defined by:

$\langle RAM_j^{s}, Disk_j^{s}, Core_j^{s} \rangle$: the available capacity of RAM, disk, and the number of cores.

$Price_j^{s}$: the server’s proposed price (per day).

$B_j$: the server’s proposed bandwidth to receive data and return task results after execution, measured in Mbit/s.

$Loc_j^{s}$: the server location $(x_j, y_j)$.

$Rel_j$: the reliability level, which corresponds to the percentage of tasks correctly executed by the server in the last period.

The request of validator $i$ is characterized by:

$\langle RAM_i^{v}, Disk_i^{v}, Core_i^{v} \rangle$: the required capacity of RAM, disk, and the number of cores.

$Price_i^{v}$: the price suggested by the validator.

$D_i$: the allocation period (number of days).

$M_i$: the list of validator moves, each expressed in terms of a point $(x_a, y_a)$ and a point $(x_b, y_b)$. To find a point close to all validator movements, we calculate the center point $Loc_i^{v}$ as a function of $M_i$.
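The exact center-point formula is not recoverable from the text, so the sketch below assumes the simplest choice, the arithmetic mean (centroid) of the recorded positions. The function name and the sample coordinates are hypothetical.

```python
def center_point(points):
    """Centroid of a list of (x, y) positions (assumed definition of the center)."""
    if not points:
        raise ValueError("at least one position is required")
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    return (mean_x, mean_y)

# Hypothetical validator positions on a plane.
moves = [(0.0, 0.0), (4.0, 0.0), (4.0, 2.0), (0.0, 2.0)]
center = center_point(moves)
```

Other definitions, such as a geometric median, would be less sensitive to a single distant position; the centroid is used here only because it is the simplest.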

Servers and validators communicate over a link characterized by its bandwidth, which governs connection management, data transmission, and quality of service (QoS). To evaluate the performance of the communication link between validators and servers, we use the transmission time $T_{ij}$, which is deduced from the transmission rate $r_{ij}$ (per second). The rate depends on distance, signal quality, and bandwidth $B$, and is calculated using Shannon’s theorem [27]:

$$r_{ij} = B \log_2\left(1 + \frac{P\, h_{ij}}{N_0}\right)$$

Here, $N_0$ is a constant value for the noise, $B$ is the bandwidth value, $P$ represents the signal power, and $h_{ij}$ is the channel gain, estimated using the signal power propagation model:

$$h_{ij} = \left(\frac{c}{4 \pi f d_{ij}}\right)^{2} |\beta|^{2}$$

where $d_{ij}$ denotes the distance between validator $i$ and server $j$, $f$ is the carrier frequency, $c$ is the speed of light, and $\beta$ represents the small-scale fading coefficient (e.g., Rayleigh or Rician distributed). In this work, for tractability, we assume the average fading case $\mathbb{E}[|\beta|^{2}] = 1$, which is equivalent to neglecting instantaneous fading and considering only large-scale path loss. This simplification enables us to focus on resource allocation strategies.

As a result, the transmission time $T_{ij}$ between the validator and the server is calculated as follows:

$$T_{ij} = \frac{S}{r_{ij}}$$

where $S$ is the request size in Mbit.
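The chain from distance to transmission time can be sketched numerically as below. The free-space form of the channel gain and all numeric values (carrier frequency, power, noise, bandwidth) are assumptions chosen for illustration, not parameters reported in the paper.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # m/s

def channel_gain(distance_m, carrier_hz, fading_power=1.0):
    """Large-scale path loss; fading_power=1.0 is the average fading case."""
    path = SPEED_OF_LIGHT / (4.0 * math.pi * carrier_hz * distance_m)
    return fading_power * path ** 2

def shannon_rate(bandwidth, tx_power_w, gain, noise_w):
    """Shannon capacity B * log2(1 + SNR), in the same rate unit as `bandwidth`."""
    return bandwidth * math.log2(1.0 + tx_power_w * gain / noise_w)

def transmission_time(size_mbit, rate_mbit_s):
    """Time to send a request of `size_mbit` at the given rate."""
    return size_mbit / rate_mbit_s

# Hypothetical link: 2.4 GHz carrier, 0.2 W transmit power, 1e-13 W noise.
gain_near = channel_gain(100.0, 2.4e9)
gain_far = channel_gain(1000.0, 2.4e9)
rate = shannon_rate(20.0, 0.2, gain_far, 1e-13)
t = transmission_time(1.0, rate)
```

With this model, a tenfold increase in distance reduces the gain a hundredfold, which lowers the rate and lengthens the transmission time; this is why the allocation favors servers near the validator's center point.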

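Decision variable: The allocation of a task to a server is represented by the binary decision variable $x_{ij} \in \{0, 1\}$ for each validator $i$ and server $j$, where $x_{ij} = 1$ if the task of validator $i$ is assigned to server $j$ and $x_{ij} = 0$ otherwise.

In our context, the task is to run an Ethereum validation node for a validator, which can only be run on a single server. This constraint is defined by Equation (6), indicating that a task can only be assigned to one server at a time:

$$\sum_{j=1}^{N} x_{ij} \le 1 \quad \forall i \tag{6}$$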

The validation node must be properly configured to ensure optimal functionality. To accomplish this, each request must be routed to a server with sufficient capacity. For a validator $i$ assigned to server $j$, this condition can be expressed as follows:

$$C_i^{v} \le C_j^{s}$$

where $C_i^{v}$ represents the capacity requested by the validator and $C_j^{s}$ represents the server’s available capacity, for each resource type (RAM, disk, and cores).

In practice, the validator provides a price for the amount of resources per day, while the edge provider gives a total price for all the resources. We therefore calculate the percentage of the available resources that the validator requests and derive the provider’s price for the validator’s request, $Cost_{ij}$:

$$Cost_{ij} = U_{ij} \cdot Price_j^{s}$$

At the same time, $Cost_{ij}$ must be lower than the price proposed by the validator, $Price_i^{v}$:

$$Cost_{ij} \le Price_i^{v}$$

where $U_{ij}$ represents the resource usage percentage requested by the validator and $Price_j^{s}$ is the provider’s total price.

Another important condition is the validator’s mission completion time $T_i$, which is bounded by the block time. Ethereum’s block time is 12 s, denoted $T_{block}$, and generally encompasses the local execution time. In our case, the completion time includes the time required for the request from the server to the validator, $T^{req}_{ji}$, the response time from the validator to the server, $T^{resp}_{ij}$, and the execution time $T^{exec}_{j}$. This value must remain below the block time:

$$T_i = T^{req}_{ji} + T^{resp}_{ij} + T^{exec}_{j} \le T_{block}$$
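The constraints of this subsection can be combined into a single feasibility check, sketched below. The class and function names are hypothetical, and averaging the per-resource fractions to obtain the usage percentage is an assumption, since the text does not state how that percentage is computed.

```python
from dataclasses import dataclass

BLOCK_TIME_S = 12.0  # Ethereum block time used as the deadline

@dataclass
class Server:
    ram: float
    disk: float
    cores: int
    price_per_day: float

@dataclass
class Request:
    ram: float
    disk: float
    cores: int
    price_per_day: float

def usage_fraction(req, srv):
    # Assumption: mean of the per-resource requested/available ratios.
    return (req.ram / srv.ram + req.disk / srv.disk + req.cores / srv.cores) / 3

def one_server_per_validator(x):
    """Each row of the 0/1 assignment matrix selects at most one server."""
    return all(sum(row) <= 1 for row in x)

def is_feasible(req, srv, t_request, t_response, t_exec):
    fits = req.ram <= srv.ram and req.disk <= srv.disk and req.cores <= srv.cores
    if not fits:
        return False
    cost = usage_fraction(req, srv) * srv.price_per_day
    affordable = cost <= req.price_per_day
    on_time = t_request + t_response + t_exec <= BLOCK_TIME_S
    return affordable and on_time

srv = Server(ram=32, disk=1000, cores=8, price_per_day=10.0)
req = Request(ram=16, disk=500, cores=4, price_per_day=6.0)
```

A learning agent can use such a check to mask or penalize infeasible actions rather than discovering the constraints purely by trial and error.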

We present a resolution to the resource allocation problem in what follows.

5. DRL-Based Resource Allocation Algorithm

Our objective is to develop an advanced optimization algorithm that efficiently allocates resources across various validators. For the validators, this entails minimizing latency, enhancing the provider’s reliability, and reducing costs. For edge server providers, the focus is on maximizing profit and achieving optimal resource allocation.

Mathematically, this problem is formalized as a constrained multi-objective optimization problem in which we aim to maximize several objective functions: ensuring the quality of service provided to the validators so that they meet necessary standards, coordinating between requests and available resources to address as many requests as possible, and guaranteeing the provider’s profit, all while adhering to a set of constraints, such as resource availability, server capacity, and block creation time.

To address the complex and dynamic nature of this resource allocation problem, we propose a solution based on deep reinforcement learning (DRL), specifically using the Proximal Policy Optimization (PPO) algorithm. DRL is particularly well-suited for this problem because it excels in environments where decisions must be made sequentially and under uncertainty. Unlike traditional optimization methods, which may struggle with the problem’s high dimensionality and non-stationary characteristics, DRL can learn from the environment and adapt its strategies over time. DRL is fundamentally based on Markovian formalization, particularly through the use of Markov Decision Processes (MDPs).
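For readers unfamiliar with PPO, its central ingredient is the clipped surrogate objective, sketched below for a single sample. The clipping range `eps=0.2` is a commonly used default and an assumption here; the hyperparameters used in this work are not stated in this section.

```python
def clipped_surrogate(ratio, advantage, eps=0.2):
    """PPO clipped objective for one sample.

    ratio     : new-policy probability divided by old-policy probability
    advantage : estimated advantage of the action taken
    """
    clipped_ratio = max(1.0 - eps, min(ratio, 1.0 + eps))
    return min(ratio * advantage, clipped_ratio * advantage)

# A large policy change on a good action earns no extra credit beyond 1 + eps.
gain_capped = clipped_surrogate(1.5, 1.0)
# Reducing the probability of a bad action is credited only down to 1 - eps.
loss_kept = clipped_surrogate(0.5, -1.0)
```

Limiting the credit for large ratio changes keeps each policy update close to the previous policy, which helps training stay stable when the environment changes over time.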

5.1. Markov Formalization

In this section, we formulate our problem in the context of Markov Decision Process (MDP) theory by defining a set of states, actions, and rewards that allows us to efficiently model the interactions between the resource allocation agent and the environment.

State: the state space S represents the state of the allocation system at each instant st, where st = , , … . In our model, the state space is structured as a dictionary consisting of two parts: the first part contains the task queue, which is a list of requests waiting to be assigned to a server. This part is identified by the key “Request” and is presented in the form of a matrix, where each row corresponds to a specific request according to its “request status”:

If the request status is 0, then it has not been processed. It takes the form of a vector representing the characteristics of the requested resources (), which include disk (), core (), RAM (), nearest center (), proposed price (), and request status.

If the request status is 1, it has already been processed and assigned to a server; thus, it is a vector of zeros.

If the request status is −1, the agent has made a wrong decision for this request, and the request is represented as a vector of −1 values.

The second part represents the state of the servers, identified by the key “Servers”, and presented in the form of a matrix, where each row corresponds to the state of a particular server.

Each server is characterized by its available resources, including disk, core, RAM, location, reliability level, and price of available resources.

Action: the action space is a discrete space ranging from 0 to J, where J represents the number of available servers. Each action a ∈ A indicates the server to which the request is allocated.
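The state and action encoding described above can be sketched as follows. This is only an illustration: the field names, the helper functions `encode_request`, `build_state`, and `is_valid_action`, and the zero-based server indexing are assumptions made for the example, not the authors' implementation.

```python
# Illustrative sketch only: all names below are hypothetical.
REQUEST_FIELDS = ["disk", "core", "ram", "nearest_center", "proposed_price", "status"]
SERVER_FIELDS = ["disk", "core", "ram", "location", "reliability", "price"]


def encode_request(request):
    """Return one row of the "Request" matrix according to the request status."""
    width = len(REQUEST_FIELDS)
    if request["status"] == 1:    # already processed and assigned: vector of zeros
        return [0] * width
    if request["status"] == -1:   # wrong decision by the agent: vector of -1
        return [-1] * width
    return [request[name] for name in REQUEST_FIELDS]  # status 0: pending request


def build_state(requests, servers):
    """Assemble the two-part state dictionary with keys "Request" and "Servers"."""
    return {
        "Request": [encode_request(r) for r in requests],
        "Servers": [[s[name] for name in SERVER_FIELDS] for s in servers],
    }


def is_valid_action(action, state):
    """An action is read here as a zero-based index into the "Servers" rows (assumption)."""
    return 0 <= action < len(state["Servers"])
```

Keeping processed and wrongly handled requests in the matrix as constant rows keeps the observation shape fixed, which is convenient for a neural network policy.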

Reward: a reward is allocated at each time step t, following a feasible action A(t); that is, each allocation yields a reward. This reward must be consistent with the needs of both the validators and the edge server providers. To this end, we have divided the reward into three parts:

Provider reliability reward: The reward can be estimated using the reliability level to encourage the agent to choose a server with a higher confidence value.

Latency minimization reward: Efficiency in our problem boils down to minimizing transmission time and the cost of server access fees.

Equation (12) encourages the agent to find a server closer to the validator.

Profit maximization reward: The agent will seek to maximize the difference between the price proposed by the validator and the cost of server access fees, which guarantees savings compared to the validator’s budget.

where:

Once the various reward values have been calculated, the agent receives the following reward vector:

To promote optimal decision-making by the agent, we introduced a condition emphasizing the significance of strategic choices. Specifically, when the reliability level is above the threshold of 0.5 and the transmission time is below 1 s, the rewards for reliability and latency are amplified by a factor of 10. Conversely, to discourage poor decisions, we established a critical condition for operational success: if the available resources fall short of the required demand, the agent incurs penalties. This reduces the rewards for provider reliability, latency minimization, and profit maximization by 10 points each. This framework encourages desired behaviors while penalizing actions that could compromise system performance.
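The amplification and penalty rules can be illustrated with a short sketch. The thresholds (0.5 and 1 s), the factor of 10, and the 10-point penalty come from the text. The base reward terms are assumptions chosen for the example (reliability level as is, a latency term that decreases with transmission time, and the price difference as profit), since the exact equations are not reproduced here. The function name `shaped_reward` is hypothetical.

```python
def shaped_reward(reliability, transmission_time, proposed_price, server_price,
                  available, required):
    """Return the reward vector [reliability, latency, profit] for one allocation."""
    # Base terms: assumed forms, not the paper's exact equations.
    r_reliability = reliability
    r_latency = 1.0 / (1.0 + transmission_time)  # larger when transmission is faster
    r_profit = proposed_price - server_price     # savings against the validator's budget

    # Bonus: reliable server (> 0.5) and fast transmission (< 1 s).
    if reliability > 0.5 and transmission_time < 1.0:
        r_reliability *= 10.0
        r_latency *= 10.0

    # Penalty: any required resource exceeds what the server has available.
    # Applying it independently of the bonus is an assumption of this sketch.
    if any(available[key] < required[key] for key in required):
        r_reliability -= 10.0
        r_latency -= 10.0
        r_profit -= 10.0

    return [r_reliability, r_latency, r_profit]
```

With these assumed base terms, a reliable and nearby server that has enough resources receives a clearly larger reward vector than an overloaded one, which is the behavior the shaping rules are meant to encourage.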

5.2. Proximal Policy Optimization as a Resource Allocation Method

The proposed DRL is based on the Proximal Policy Optimization (PPO) algorithm to solve the optimization problem (see Algorithm 1). PPO was chosen for its ability to efficiently handle complex and varied reward functions. It strikes a balance between exploration and exploitation, ensuring that the algorithm explores a wide range of possible solutions while still converging towards optimal policies. This is crucial in our multi-objective scenario, where different objectives, such as minimizing latency, enhancing reliability, reducing costs, and maximizing provider profit, must be balanced under stringent constraints like resource availability, server capacity, and block creation time.
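For readers unfamiliar with PPO, the standard clipped surrogate objective that gives the algorithm its stability can be sketched for a single sample as follows. The function name and the clip range of 0.2 (a common default) are assumptions for illustration and are not taken from this paper's hyperparameters.

```python
def clipped_surrogate(ratio, advantage, clip_range=0.2):
    """PPO clipped surrogate for one sample.

    ratio: probability of the action under the new policy divided by its
           probability under the old policy.
    advantage: estimated advantage of the action.
    """
    clipped_ratio = max(1.0 - clip_range, min(ratio, 1.0 + clip_range))
    # Taking the minimum removes the incentive to move the policy far from the old one.
    return min(ratio * advantage, clipped_ratio * advantage)
```

During training, the average of this quantity over a batch is maximized. Once the ratio leaves the interval [1 − clip_range, 1 + clip_range] in the direction favored by the advantage, the objective stops increasing, which limits the size of each policy update.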

Algorithm 1 Resource Allocation with PPO

Input: resource_list, request_list
Output: allocation_list
for num_step in range(len(request_list)) do
    State ← get_state(num_step)
    action ← AgentPPO(State)
    if server is available then
        reward ← Calculate_reward(request, server)
        alloc ← allocate(server, request)
        allocation_list.append(alloc)
    end if
    num_step ← num_step + 1
end for
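A minimal runnable sketch of the allocation loop in Algorithm 1 is given below. The trained PPO agent is replaced by a hypothetical first-fit placeholder (`first_fit_agent`) so that the example runs on its own. The availability check on disk, core, and RAM and the helper names are also assumptions. The reward computation is omitted because it only matters during training.

```python
RESOURCE_KEYS = ("disk", "core", "ram")


def is_available(server, request):
    """True if the server still has enough of every resource for the request."""
    return all(server[key] >= request[key] for key in RESOURCE_KEYS)


def first_fit_agent(state):
    """Placeholder for AgentPPO: index of the first server that fits, else 0."""
    for index, server in enumerate(state["Servers"]):
        if is_available(server, state["Request"]):
            return index
    return 0


def allocate_requests(resource_list, request_list, agent=first_fit_agent):
    """Loop of Algorithm 1; returns (request index, server index) pairs."""
    servers = [dict(server) for server in resource_list]  # leave the inputs untouched
    allocation_list = []
    for num_step, request in enumerate(request_list):
        state = {"Request": request, "Servers": servers}
        action = agent(state)
        server = servers[action]
        if is_available(server, request):
            for key in RESOURCE_KEYS:
                server[key] -= request[key]  # reserve the resources on the chosen server
            allocation_list.append((num_step, action))
    return allocation_list
```

Any callable that maps a state to a server index can be passed as `agent`, so a trained policy could be substituted for the placeholder without changing the loop.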

6. Experiments and Results

The main focus of the experiments is to assess the performance of the proposed DRL-based resource allocation algorithm. This algorithm was implemented using the Stable-Baselines3 library [28], chosen for its robustness, flexibility, and comprehensive documentation. This library provides optimized implementations of numerous reinforcement learning algorithms. We implemented the resource allocation algorithm and trained and tested the agent on a laptop with 6 cores, 16 GB RAM, and a 512 GB SSD under Windows 11.

To assess the performance of the proposed DRL-based resource allocation algorithm, we rely on a simulation that models real-world scenarios based on the validators’ needs and edge server characteristics. The simulation models a homogeneous environment where edge resources are geographically distributed, with validators in the same area requesting resources based on their operational needs.

Table 1 and Table 2 outline the configuration parameters for edge servers and requests. The simulation, conducted in Python v. 3.10, uses a random distribution according to the specified configuration.

6.1. PPO-Based Approach Training Assessment

This section explores the evaluation of our algorithm during the learning process, followed by a comparison with the performance of two other reinforcement learning algorithms: Advantage Actor-Critic (A2C) [29] and Deep Q-Network (DQN) [30]. A2C was chosen for its balance of computational efficiency and performance in policy gradient methods, while DQN was selected for its strong track record in handling discrete action spaces using value-based methods. Table 3 presents the hyperparameters used for all three algorithms (our PPO-based algorithm, A2C, and DQN).

The curves in Figure 5 illustrate the performance evolution, in terms of rewards, of three reinforcement learning algorithms during the training phase: our algorithm based on PPO, A2C, and DQN. The curves in Figure 5a,b show the rewards over 16,000 episodes for the PPO and A2C agents. The blue line represents the raw reward per episode, while the orange line represents the moving average of rewards over 5 episodes. Initially, the PPO and A2C agents start with negative rewards, meaning their initial attempts to solve the resource allocation problem are unsuccessful. Over time, as the agents learn by trial and error, the rewards increase sharply. Around episode 6000, the curve in Figure 5b shows that the A2C agent’s performance stabilizes, reaching a maximum value of approximately 850. Performance improvement stops once the agent reaches its allocation limit, unlike the PPO agent, which continues to improve slowly until episode 16,000.

The constant reward increase suggests an improved allocation quality for each request.
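The smoothed curve mentioned above can be reproduced from the raw per-episode rewards with a simple moving average. The sketch below assumes a sliding window of 5 episodes. Whether the original plots use a sliding or a block average is not stated, so this is one plausible reading, and the function name is hypothetical.

```python
def moving_average(rewards, window=5):
    """Sliding-window mean of per-episode rewards (one value per full window)."""
    if window <= 0:
        raise ValueError("window must be positive")
    averages = []
    running_sum = 0.0
    for i, reward in enumerate(rewards):
        running_sum += reward
        if i >= window:
            running_sum -= rewards[i - window]  # drop the value leaving the window
        if i >= window - 1:
            averages.append(running_sum / window)
    return averages
```

Smoothing of this kind makes the overall trend visible in training curves where individual episodes vary widely.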

Furthermore, comparing Figure 5a,c reveals that the PPO curve shows faster reward improvement than the DQN curve, which requires more than 60,000 episodes. The DQN agent also shows more pronounced fluctuations, whereas the PPO agent’s rewards evolve much more stably.

In summary, as demonstrated in this experiment, our PPO-based algorithm outperformed both A2C and DQN during the training phase.

6.2. PPO-Based Approach Test Assessment

In this experiment, we compare the results of using our agent with two other algorithms, A2C and DQN, to test the effectiveness of our approach in terms of total execution time, cost savings, total transmission time, and reliability.

We have created a new simulation with the same parameters mentioned in Table 1 and Table 2 for use in the test.

Figure 6 presents the experimental results comparing the performance of the three algorithms: our approach based on PPO, A2C, and DQN. Each algorithm was evaluated over 500 iterations, with each iteration using a new set of requests and a corresponding set of available resources.

Total execution time: The first graph shows the evolution of the total execution time as a function of the number of iterations for the three reinforcement learning algorithms. Our PPO-based approach is distinguished by systematically shorter execution times, demonstrating superior efficiency. A2C shows intermediate execution times, stabilizing around 0.5 s, slightly higher than PPO’s, while DQN has the longest execution times of the three. This may be due to the nature of the DQN algorithm, which derives its policy from estimated Q-values for every action rather than from a directly parameterized policy, and which can be more computationally expensive in this setting than the policy-gradient methods PPO and A2C. However, it should be noted that from iteration 400 onward, the execution time of both PPO and A2C starts to increase and fluctuate.

Cost savings: When we analyze the savings achieved through price negotiation, we find that the three algorithms, PPO, A2C, and DQN, generate nearly equal average savings of between $1000 and $1600.

Transmission time: The third graph shows that the PPO algorithm has the shortest total transmission time, stabilizing at around 30 s. DQN has a higher transmission time, hovering around 40 s, while A2C has the longest transmission time, stabilizing at around 60 s.

Reliability: The last graph shows that the PPO algorithm has a more stable reliability rate, fluctuating between 75 and 80; the A2C and DQN algorithms, on the other hand, exhibit more variable reliability rates, fluctuating between 50 and 75.
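The per-iteration quantities compared above (cost savings, total transmission time, and reliability) can be computed from a list of completed allocations, as in the sketch below. The record fields, the function name, and the aggregation choices (sums for savings and transmission time, a mean for reliability) are assumptions made for illustration. The evaluation code itself is not shown in this section.

```python
def summarize_iteration(allocations):
    """Aggregate test metrics over the allocations made in one iteration.

    Each allocation is a dict with hypothetical keys: "proposed_price",
    "server_price", "transmission_time", and "reliability".
    """
    if not allocations:
        return {"cost_savings": 0.0, "total_transmission_time": 0.0,
                "mean_reliability": 0.0}
    savings = sum(a["proposed_price"] - a["server_price"] for a in allocations)
    transmission = sum(a["transmission_time"] for a in allocations)
    reliability = sum(a["reliability"] for a in allocations) / len(allocations)
    return {"cost_savings": float(savings),
            "total_transmission_time": float(transmission),
            "mean_reliability": reliability}
```

Computing the same summary for each of the three agents on identical request sets keeps the comparison between them consistent.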

7. Conclusions

The shift to PoS has provided mobile blockchain with new opportunities, but it has also raised issues due to the constrained resources of mobile devices. Validators depend on the availability and seamless functioning of nodes, which are impacted by these limitations. This study proposed a novel framework, MEC-Chain, that uses the Proof of Stake (PoS) consensus mechanism to integrate Mobile Edge Computing (MEC) into a mobile blockchain network. The proposed framework begins with the formulation of user needs and ends with task completion, helping to optimize task execution on edge servers. One unique feature of this design is its flexibility to adapt to the specific needs of validators, which is facilitated by both full and partial delegation modes. Additionally, the architecture includes a resource allocation agent that uses deep reinforcement learning (DRL), more precisely the Proximal Policy Optimization (PPO) algorithm, to balance the interests of mobile blockchain users and MEC providers. We used multi-objective reinforcement learning constrained to a single strategy. To evaluate the effectiveness of our approach, we carried out simulations. The results demonstrate the ability of our PPO-based approach to perform high-quality resource allocations. A comparison with the A2C and DQN algorithms highlighted PPO’s superiority in server reliability and transmission time, but not in cost savings, where the three algorithms performed almost equally; this may be a limitation of our PPO model.

To further optimize our results, we plan to adopt a multi-strategy approach that enables the agent to learn and combine different allocation strategies in response to changing system conditions. For example, a Pareto-based strategy could help identify efficient solutions that balance key objectives such as cost and latency. This study also has two main limitations: the wireless channel model relies on average fading and does not capture fast variations or interference, and the evaluation remains simulation-based, as MEC-Chain has not yet been tested in a real MEC–PoS deployment. To address these limitations, future work will focus on integrating more realistic wireless models and evaluating MEC-Chain in real-world environments to assess its usability, adaptability, and potential for further optimization.