1. Introduction

Accurate crop distribution information from regional-to-global scales is essential for estimating crop yield [1], modelling greenhouse gas (GHG) emissions from agriculture [2] and making effective agrarian management policies [3]. Moreover, agricultural ecosystems are often managed intensively and modified frequently [4], which might alter land cover/use patterns rapidly and, thus, influence ecological processes and biogeochemical cycles [5]. These spatial and temporal characteristics pose a great challenge for traditional approaches (e.g., ground surveys) to monitoring agricultural systems. Remote sensing using sensors onboard satellite and aircraft platforms, however, has been shown to be an effective means of crop monitoring at regional-to-global scales, and has the advantages of being consistent, timely and cost-efficient (e.g., [2,5]).

Coarse and medium spatial resolution multispectral data, such as Landsat, SPOT and MODIS (Moderate Resolution Imaging Spectroradiometer), have been used widely for crop classification and mapping [3,6,7]. However, the accuracy of crop maps generated from these images is inevitably compromised by their limited spatial resolution [8], especially over highly fragmented and heterogeneous agricultural areas. As stated by [9], a spatial resolution of less than 10 m is required for precision agriculture. More recently, remotely sensed imagery from fine spatial resolution (FSR) (<10 m) satellite systems (e.g., RapidEye, IKONOS, and WorldView) as well as airborne systems (e.g., Uninhabited Aerial Vehicle Synthetic Aperture Radar (UAVSAR)) has become available commercially, providing new opportunities for crop classification and mapping in very fine detail [9,10]. However, high intra-class variance and low inter-class separability over croplands in FSR images may exist because of differences in climatic conditions, topographic properties, soil composition, farming practices and so on [11]. Moreover, FSR imagery has fewer multispectral bands (around four) in comparison to medium resolution data (e.g., MODIS and Landsat), which leads to subtle differences in spectral/polarimetric properties amongst crop types (i.e., crop types are difficult to discriminate). Therefore, developing advanced classification methods for accurate crop mapping and monitoring is of prime concern, especially with a view to exploiting the deep hierarchical features present in FSR imagery.

During the past few decades, a vast array of methods has been developed for remote sensing image classification [12,13,14]. These methods can be categorised into pixel-based and object-based methods according to the basic unit of analysis (either per-pixel or per-object) [15]. Pixel-based classification methods that rely purely upon spectral (or polarimetric) signatures have been used widely for crop classification using various types of imagery (including the newly-launched Sentinel-2 imagery [16,17,18]). However, these methods often produce limited classification accuracy due to the large intra-class variance stated above. Severe salt-and-pepper effects may also occur owing to the noise in FSR imagery. Although some post-classification algorithms (e.g., spatial filters) might alleviate the noise to some extent, they may also erase small objects of interest comprising just a few pixels. Compared with pixel-wise algorithms, object-based image analysis (OBIA) built upon segmented homogeneous objects [15] is preferable for crop classification using FSR remotely sensed images (e.g., [19,20]), in which objects instead of pixels are adopted as the basic unit of analysis. This allows spatial information (e.g., texture, shape) with respect to the objects to be incorporated into the classification process, thus reducing the salt-and-pepper noise [15].
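As a simplified illustration of why the object is a more noise-robust unit than the pixel, the sketch below relabels every pixel with the most frequent label inside its segment. This is not the OBIA workflow of the cited studies (which classify objects from object-level features); the function name `object_majority_labels`, the toy label grid and the two-segment layout are all hypothetical.

```python
from collections import Counter


def object_majority_labels(pixel_labels, segment_ids):
    """Give every pixel the most frequent pixel label of its segment.

    pixel_labels and segment_ids are equally shaped 2-D lists.
    """
    votes = {}
    for label_row, seg_row in zip(pixel_labels, segment_ids):
        for label, seg in zip(label_row, seg_row):
            votes.setdefault(seg, Counter())[label] += 1
    # One winning label per segment (ties resolved by first occurrence).
    winner = {seg: counts.most_common(1)[0][0] for seg, counts in votes.items()}
    return [[winner[seg] for seg in seg_row] for seg_row in segment_ids]


# Toy pixel-wise map with two isolated "salt-and-pepper" errors.
pixel_labels = [
    ["alfalfa", "alfalfa", "hay", "hay"],
    ["alfalfa", "hay", "hay", "hay"],
    ["alfalfa", "alfalfa", "hay", "alfalfa"],
]
# Hypothetical segmentation: left half is object 0, right half is object 1.
segment_ids = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
smoothed = object_majority_labels(pixel_labels, segment_ids)
for row in smoothed:
    print(row)
```

In this toy case the two isolated labels are absorbed by their parent objects while the boundary between the objects is left untouched, which a moving-window spatial filter would not guarantee.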

Under the framework of OBIA, machine learning algorithms (e.g., the support vector machine (SVM), the multilayer perceptron (MLP) and the random forest (RF)) have been used for crop classification and mapping thanks to their ability to deal with multi-modal and noisy data [21]. The SVM, as a typical non-parametric machine learning classifier, was often found to outperform other machine learning algorithms in image classification due to its high generalisation ability [22]. The object-based SVM (OSVM) has, thus, been popular for complex crop classification tasks [2,23]. In the OBIA classification process, there are generally two kinds of information that can be obtained from a spatially segmented region: within-object information (such as spectra, polarization, texture) and between-object information (such as configuration and topological relationships between adjacent objects) [24]. The OSVM classifier can extract within-object features (low-level information) from FSR images for classification. However, it is essentially a single-layer classifier (linear SVM) or two-layer classifier (kernel SVM) [25], which might overlook the high-level between-object information that may be critical to crop identification. For example, crop swath direction conveys important information for the identification of crop pattern [26]. In this context, a series of object-based spatial contextual descriptive indicators were developed based on spatial metrics, graphs and ontologies [27,28] to derive high-level semantic information from FSR images. However, it is often very difficult to characterise the spatial contexts as a set of “rules” in view of the structurally complicated and diverse agricultural landscapes [2], even if these complex spatial patterns might be interpreted by human experts [29].

Recent developments in artificial intelligence and pattern recognition demonstrated that high-level feature representations can be extracted with multi-layer neural networks in an “end-to-end” manner without using human-crafted “rules” [30]. These breakthrough deep learning algorithms achieved unprecedented success in a wide range of challenging domains, such as speech recognition, visual object recognition and target detection [30,31]. As a representative deep learning method, convolutional neural networks (CNNs) have drawn a lot of academic and industrial interest, and made huge improvements in the field of image analysis, such as text recognition [32], speech detection [31] and image denoising [33]. CNNs, with great capability in high-level feature characterization, were also applied to various remote sensing applications, such as object detection [34], image segmentation [35] and scene classification [36]. In addition, CNN-based approaches have been developed to solve the complex problem of remote sensing classification, where all pixels in an image are labelled into several categories. For example, Stoian, et al. [37] presented a fully-realized CNN model for land cover classification using multi-temporal high spatial resolution imagery. Chen, et al. [25] proposed a three-dimensional (3D) CNN for hyperspectral image classification by using both spectral and spatial features. Recently, Zhang, et al. [38] provided a novel approach by combining CNNs with rough sets for classification of FSR images. The above-mentioned work has achieved promising classification results, demonstrating the advantages of CNNs with respect to spatial feature representation. However, these pixel-based CNNs classify images by applying contextual patches as inputs, which often blurs the boundaries between adjacent ground objects, leading to over-smooth classification results [38]. To overcome this problem, a new object-based CNN (OCNN) framework was presented which combined the OBIA and CNN techniques, such that the segmented objects can be identified while retaining precise boundary information [24]. The OCNN method was applied to the complex land use classification task and produced encouraging classification results. However, with a fixed-size input window (receptive field), large uncertainties may be introduced into the OCNN classification process, especially for those objects with areas far smaller or larger than the input window [24]. Moreover, while the CNN model can explore the high-level features hidden in remotely sensed images, low-level features (e.g., within-object spectra) observed by shallow models may be overlooked [38].
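The mismatch between a fixed input window and the size of an object can be made concrete with a small diagnostic: the fraction of window pixels that actually belong to the target object. The sketch below is a hypothetical helper and not part of the OCNN in [24]; the function name `window_object_fraction`, the 6 × 6 toy segmentation and the window sizes are assumptions chosen only for illustration.

```python
def window_object_fraction(segment_ids, object_id, centre, window):
    """Fraction of an odd-sized square window (clipped to the image)
    whose pixels belong to object_id."""
    rows, cols = len(segment_ids), len(segment_ids[0])
    half = window // 2
    r0, r1 = max(0, centre[0] - half), min(rows, centre[0] + half + 1)
    c0, c1 = max(0, centre[1] - half), min(cols, centre[1] + half + 1)
    inside = sum(
        1
        for r in range(r0, r1)
        for c in range(c0, c1)
        if segment_ids[r][c] == object_id
    )
    return inside / ((r1 - r0) * (c1 - c0))


# Toy segmentation: object 1 is a 2 x 2 patch surrounded by object 0.
grid = [[0] * 6 for _ in range(6)]
for r in (2, 3):
    for c in (2, 3):
        grid[r][c] = 1

small_window = window_object_fraction(grid, 1, (2, 2), 3)  # 4 of 9 pixels
large_window = window_object_fraction(grid, 1, (2, 2), 5)  # 4 of 25 pixels
print(small_window, large_window)
```

For the small object in this toy grid, most of the larger window is filled by the neighbouring object, which is one way to picture how within-object information can be diluted when the window is far larger than the object.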

Any single classifier is unlikely to achieve promising results if the scenes of remotely sensed imagery are complex [38,39]. Combining multiple classification methods with complementary behaviours is a promising way to improve complex land cover classification [40] and crop classification [39], by better exploring the minute differences that may exist between the classes. Within the remote sensing community, there are generally three types of ensemble-based systems, namely “consensus classification”, “multiple classifier systems” and “decision fusion” [41]. Because it relies on multiple types of datasets, the utility of consensus classification is constrained by the limited availability of such data. Multiple classifier systems, which manipulate training samples to generate subsets randomly (boosting and bagging) [42], require extremely large sample sizes and incur high computational cost. In contrast, decision fusion, which combines the outputs of individual classifiers with a certain fusion rule to take advantage of their complementary characteristics, is a generally effective ensemble strategy [40]. For example, different classifiers may produce accurate results over different areas within a classification map and, hence, produce complementary results [38]. However, the above-mentioned ensemble methods are typically based on pixel-wise classifiers with shallow structures and, thus, are not well suited to the complex FSR image classification problem.

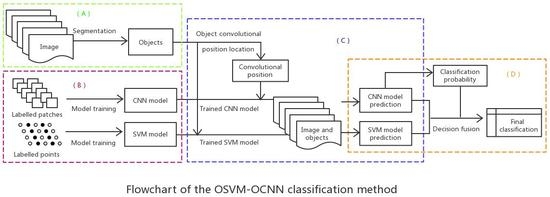

In this research, a novel OSVM-OCNN approach was proposed by combining the OSVM (an object-based SVM model with a shallow architecture) and the OCNN (an object-based CNN model with a deep architecture) through a rule-based decision fusion strategy. Image segmentation was first used to partition the agricultural landscape into basic crop patches (objects), to which the SVM and CNN models were then each applied to allocate a label to every object. The outputs of the two models were subsequently combined through a rule-based fusion strategy according to the prediction probability output by the CNN. Such a decision fusion strategy allows CNN predictions with low confidence to be rectified using SVM predictions at the object level. The major contributions of this research can be summarised as: (1) the shallow-architecture SVM and the deep-architecture CNN were found, for the first time, to be complementary to each other in terms of crop classification at the object level; (2) a straightforward rule-based decision fusion strategy was developed to effectively fuse the results of the OSVM and OCNN. We investigated the effectiveness of the proposed approach over two study sites with heterogeneous agricultural landscapes in California, USA, using FSR UAVSAR and RapidEye imagery.
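The fusion principle stated above (trust the OCNN when its prediction probability is high, otherwise fall back on the OSVM) can be sketched in a few lines. This is a minimal sketch under stated assumptions, not the authors' implementation: the function name `fuse_object_labels`, the threshold value 0.7, the use of `>=` at the threshold and the example labels and probabilities are all hypothetical.

```python
def fuse_object_labels(ocnn_labels, ocnn_probs, osvm_labels, threshold):
    """Per object, keep the OCNN label if its prediction probability
    reaches the threshold; otherwise use the OSVM label."""
    if not (len(ocnn_labels) == len(ocnn_probs) == len(osvm_labels)):
        raise ValueError("all inputs must describe the same objects")
    return [
        cnn if prob >= threshold else svm
        for cnn, prob, svm in zip(ocnn_labels, ocnn_probs, osvm_labels)
    ]


# Three hypothetical segmented objects.
fused = fuse_object_labels(
    ocnn_labels=["alfalfa", "hay", "other"],
    ocnn_probs=[0.95, 0.40, 0.80],
    osvm_labels=["hay", "alfalfa", "other"],
    threshold=0.7,  # assumed value, for illustration only
)
print(fused)
```

Only the second object, where the OCNN probability falls below the assumed threshold, takes its label from the OSVM; the rule therefore needs a single tunable parameter.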

The remainder of this paper is organised as follows. Section 2 elaborates the proposed methods in detail. Section 3 provides the study area, datasets, model structure and experimental results. A thorough discussion of the observed results is made in Section 4, and the conclusions of this research are drawn in Section 5.

4. Discussion

Accurate classification of FSR remotely sensed images is considered a major challenge within the remote sensing community [57]. Combining different classifiers is an effective means of solving the complex FSR image classification problem, provided that the individual classifiers are as distinct as possible, so as to produce different decision boundaries [38]. However, traditional classifier fusion methods that integrate classifiers at the pixel level are unsuitable for processing FSR imagery, given the potential for large amounts of noise (see the salt-and-pepper noise in the PSVM classifications, Figure 6 and Figure 7).

In this research, a novel method (OSVM-OCNN) was proposed for the first time by fusing the outputs of the object-based SVM (OSVM) and CNN (OCNN) at the object level for crop classification from FSR images. The OSVM determines the decision boundaries among classes based completely on the low-level within-object information (e.g., spectral, polarimetric, texture; [24]). In such a manner, the OSVM can identify the objects with salient spectral properties (i.e., light regions in Figure 3b), but has difficulty handling those objects with similar within-object information (e.g., the misclassifications between two types of forage crops (alfalfa and hay), Figure 6b). This is due mainly to the unavailability of high-level between-object information. In fact, a large crop parcel is normally segmented into several small objects due to heavy spectral and spatial variation. If only the within-object information is utilised, some of the segmented objects might be misclassified. However, if the between-object (contextual) information is also taken into account, sufficiently representative information can be obtained for the objects, thus markedly increasing the chance of identifying them correctly. The OCNN extracts hierarchical features from images via an input window using multiple convolution and pooling operations [24]; thus, both low-level and high-level features are incorporated into the classification process. However, with a fixed input window, the OCNN is incapable of accurately extracting key within-object information of particular objects (e.g., small-sized and linearly shaped objects) due to the mismatch between the observational scale of the OCNN and the scale of the objects themselves. For example, as shown in Figure 3c, the OCNN’s prediction probability of some small-sized objects (usually with distinctive within-object information) tends to be relatively low. In fact, as a state-of-the-art deep learning classifier, the OCNN is especially strong in representing spatial contextual (i.e., between-object) information, whereas the OSVM is superior in extracting within-object information. As a consequence, the shallow-structured OSVM and the deep-structured OCNN have intrinsically complementary characteristics in terms of remotely sensed image classification, as illustrated by Figure 8a. It should be noted that the incorporation of both within- and between-object information is normally necessary to identify and classify complex landscapes. This explains why the proposed hybrid OSVM-OCNN method consistently and significantly outperformed its sub-modules (the OSVM and OCNN) as well as traditional pixel-wise classifiers (the PSVM and PCNN) over both study sites (Table 5, Figure 6 and Figure 7).

Searching for the optimal parameter combination of decision fusion rules is a tedious and time-consuming process [41]. In the proposed OSVM-OCNN, a novel decision fusion strategy was developed to integrate the two sub-models, primarily based on the prediction probability of the OCNN in consideration of its superiority in image classification. That is, the OCNN is regarded as the base classifier, and it is given credit as long as the key information of the target object is acquired (i.e., high prediction probability); otherwise, the prediction of the OSVM is trusted. The combination of the two classifiers (OSVM and OCNN), therefore, represents a new rule-based decision fusion strategy that incorporates this key principle. Such a fusion strategy, which directly captures the complementarity between the two sub-modules even with different types of data (optical and SAR images), is straightforward and efficient in comparison to previous methods (in which two or more parameters are usually employed, e.g., [38,58]), since only one parameter ( ) is required. Beyond the fusion rule, some other parameters need to be finely tuned, including those used in the sub-modules and in image segmentation. The control parameters of the SVM and CNN can be tuned relatively easily according to previous research. In contrast, the parameters of segmentation algorithms are usually hard to determine. In the MRS image segmentation algorithm, the scale parameter is considered the most important, as it directly controls the relative size of the segmented objects. In practice, it is almost impossible to select an optimal scale value that can accurately segment all of the ground patches with the boundaries being retained completely. Instead, a relatively small value is usually preferred (e.g., [24]). Taking the UAVSAR experiment as an example, the impact of segmentation on the overall accuracy of the proposed method was illustrated (Figure 9). It can be seen from the figure that the OSVM-OCNN consistently outperformed the two sub-modules, regardless of how the scale parameter was tuned. The scale parameter selected in this research (i.e., scale = 25), which achieves a small amount of over-segmentation, is suitable for crop classification. If the value is too small (e.g., scale = 20 in Figure 9), one crop patch may be partitioned into many very small objects; and if it is too large (e.g., scale = 30 in Figure 9), one segmented object may contain many crop patches. Both cases exert a negative impact on the classification results (Figure 9). Therefore, the segmentation parameters selected in this research by trial and error are relatively optimal. Algorithms that automatically determine segmentation parameters (e.g., [59]) could be integrated into the proposed method in future research.

The proposed hybrid OSVM-OCNN approach achieved promising crop classification results for FSR images. In fact, the proposed method, which makes full use of both within-object and between-object feature representations, has wide potential applicability for a range of complex classification tasks (e.g., mangroves, [60]; land use, [38]). The proposed classification method, therefore, provides a general solution to the complex FSR image-based classification problem. It should be mentioned that the effectiveness of the OCNN, a sub-model of the OSVM-OCNN, is constrained by a so-called optimal (fixed-size) input window, as stated previously. A variable-sized input window that adjusts dynamically according to the size of objects therefore deserves to be introduced to the OCNN. This will be investigated in detail in future research.