1. Introduction

Freshwater is a finite resource that is required for the daily production of container crops used for food, ecosystem services, urban development, and other purposes. The United Nations Educational, Scientific and Cultural Organization (UNESCO) has indicated that the combined expansion of manufacturing, agriculture, and urban populations has placed excessive strain on the existing freshwater supply and has called for more sustainable water management [1]. One opportunity to reduce water consumption lies in the development of intelligent irrigation systems that can optimize water use in real time [2]. Crop producers routinely provide an excess of water to container-grown plants to mitigate plant stress and subsequent economic loss, resulting in inefficient use of agrichemicals, energy, and freshwater. Site-specific irrigation systems minimize these losses by using sensors to allocate water to plants as needed, improving crop production while minimizing operating costs [3]. Sensor-based irrigation is not a new concept [1,4,5,6]. Kim et al. [5] developed software for an in-field wireless sensor network (WSN) to implement site-specific irrigation management in greenhouse containers. Coates et al. [7] developed site-specific applications using soil water status data to control irrigation valves.

In 2017, the U.S. nursery industry had sales of $5.9 billion, and ornamental production accounted for 2.2 percent of all U.S. farms [8]. Growing plants in containers is the primary (73%) production method [9], and the majority (81%) of nursery production acreage is irrigated [10]. The largest production cost for nurseries is labor, which amounts to 39% of total costs [11], and labor shortages are linked to reduced production [12]. Adoption of appropriate technologies may offset increasing labor costs and labor shortages. Small unmanned aircraft systems (sUAS) have been suggested as an important tool in nursery production to help automate certain processes such as water resource management [13].

sUASs allow farmers to quickly survey large plots of land using aerial imagery. sUAS imagery has been used to detect diseases and weeds [

14,

15], predict cotton yield [

16], measure the degree of stink bug aggregation [

17], and identify water stress in ornamental plants [

18]. Several thermal and spectral indices have been correlated to biophysical plant parameters based on sUAS imagery [

19,

20]. Analyses of sUAS imagery have been shown to be sensitive to time of day, cloud cover, light intensity, image pixel size, soil water buffering capacity, and atmospheric conditions at the canopy level [

21,

22]. Still, multispectral data collected with sUAS were shown to be more accurate than data collected using manned aircraft [

23]. A variety of methodologies, including thermal and spectral imagery, have been used to assess water stress in conventional sustainable agriculture using sUAS [

3]. Stagakis et al. [

24] indicated that the high spatial and spectral resolution provided by sUAS-based imagery could be used to detect deficient irrigation strategies. Zovkoa et al. [

25] reported difficulty measuring three levels of water stress of grape grown in soil; however, they were able to discern irrigated vs. non-irrigated plots via hyperspectral image analysis (409–988 nm and 950–2509 nm) when employing a support vector machine (SVM). de Castro et al. [

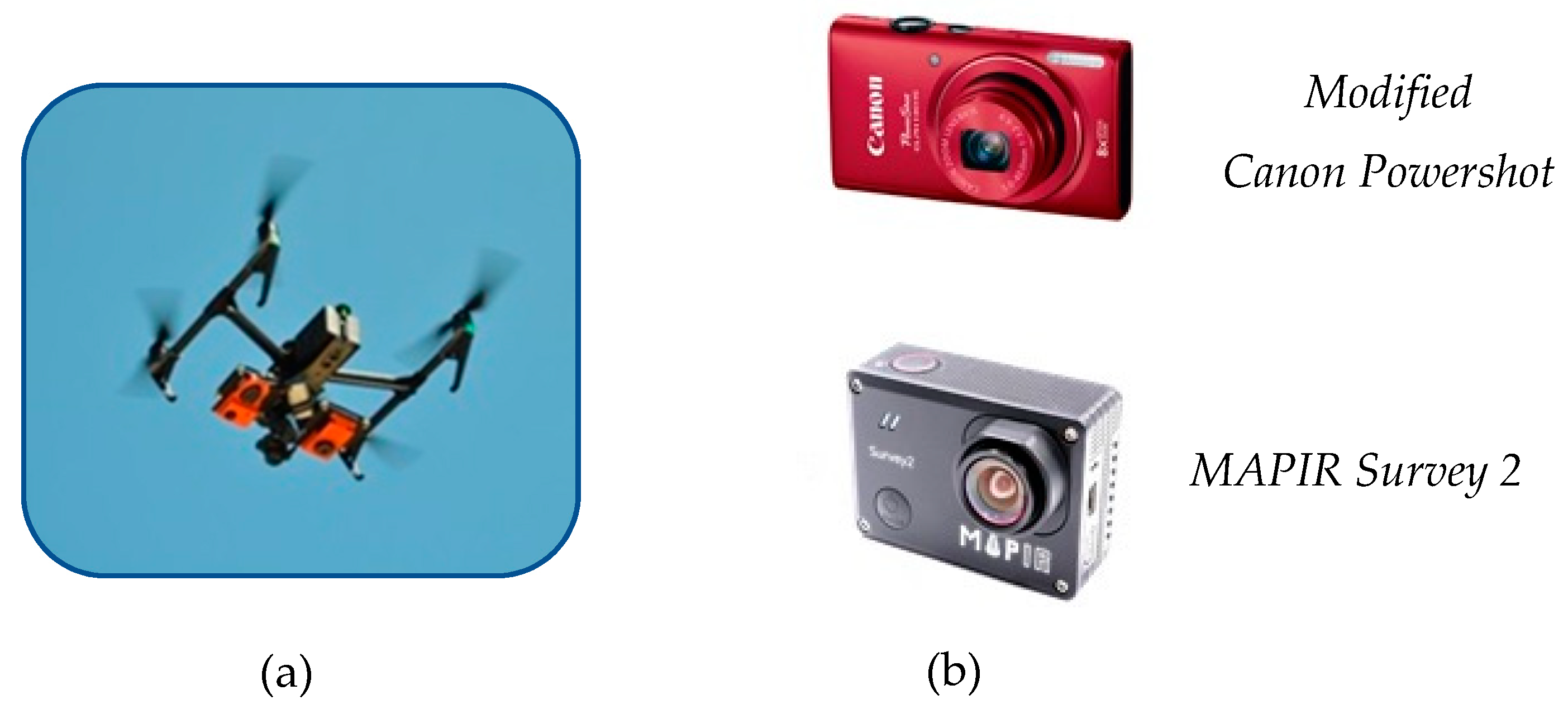

18] successfully identified water-stressed and non-stressed containerized ornamental plants using two multispectral cameras aboard an sUAS, although the spectral separation was higher when information from the sensors was combined. Data being produced by de Castro and Zovkoa could be utilized as a roadmap for real-time, sustainable water management of specialty or container-grown crops using sUAS. Fulcher et al. [

26] indicated that the adoption of sUAS to monitor crop water status will be useful in addressing the challenge of sustainable water use in container nurseries. Unlike conventional crops produced in soil systems, containerized soilless-based systems have low water buffering capacity, resulting in rapid physiological changes that may not be observed at the ground level visually, but can be monitored by reflected wavelengths captured by sUAS. To reduce size and cost, sUAS can collect and wirelessly transmit high-resolution image data to cloud providers that can perform analyses on offsite servers. Thus, the convergence of technologies—such as sUAS, Internet of Things (IoT), spectral imagery, and cloud-based computing—can be used to build intelligent irrigation systems that monitor crop status and optimize water allocation in real time.
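As an illustration of what a spectral index is, the sketch below computes the normalized difference vegetation index (NDVI) from near-infrared and red reflectance. NDVI is used here only as a widely known example; the text above does not say which indices the cited studies used, and the function name, the `eps` guard, and the sample reflectance values are assumptions made for illustration.

```python
import numpy as np

def ndvi(nir, red, eps=1e-9):
    """Normalized difference vegetation index, computed per pixel.

    nir, red: reflectance values (scalars or arrays of the same shape).
    eps: small constant (an assumption) that avoids division by zero
         for pixels where both bands are zero.
    """
    nir = np.asarray(nir, dtype=float)
    red = np.asarray(red, dtype=float)
    return (nir - red) / (nir + red + eps)

# Hypothetical 2 x 2 reflectance patches, not data from any cited study.
nir_band = np.array([[0.80, 0.75], [0.40, 0.35]])
red_band = np.array([[0.10, 0.12], [0.30, 0.33]])
index_map = ndvi(nir_band, red_band)  # higher values suggest denser, healthier canopy
```

Because the index is a simple per-pixel transformation of the input bands, it is the kind of feature a trained network could in principle approximate on its own.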

In this study, images were analyzed with IBM Watson Visual Recognition, a cloud-hosted artificial intelligence service that allows users to train custom image classifiers using deep convolutional neural networks (CNNs). Unlike linear algorithms, CNNs model complex nonlinear relationships between the independent variables (pixels comprising the image) and the dependent variable (plant health) by transforming data through layers of increasingly abstract representation (Figure 1). The first layer is an array of pixel values from the original image; nodes in subsequent layers represent local features such as color, texture, and shape; deeper layers encode semantic information such as leaf or branch morphology. Individual nodes become optimized to represent different features of the image through an iterative learning process that rewards nodes that amplify aspects of the image that are useful for classification and suppresses those that do not [27]. The convolutional relationship from one layer to the next allows CNNs to model complex relationships between input variables, making them particularly useful for analyzing image data that cannot be understood by examining pixels in isolation. Given a set of images of stressed and non-stressed plants, for example, individual nodes in the network may become optimized to represent spectral indices that are sensitive to water stress. Those nodes can affect the outcome directly, or they can feed forward into higher-order features such as the specific location and pattern of discoloration within the plant. Spectral indices may combine with other plant features such as the unique structure of sagging branches or the distinct texture created by the shadows from drooping leaves. All of these features culminate in a single output node that returns a value from zero to one representing the confidence that a given image belongs to the desired class (i.e., water stress).
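The layered transformation described above can be sketched as a toy forward pass in NumPy. This is an illustration under stated assumptions, not the architecture behind IBM Watson Visual Recognition, which is not described here: the number and size of kernels, the random (untrained) weights, and the function names `conv2d_valid` and `tiny_cnn_confidence` are all hypothetical.

```python
import numpy as np

def conv2d_valid(image, kernel):
    """Slide one kernel over a 2-D image (stride 1, no padding).

    As in most deep learning libraries, this is cross-correlation:
    the kernel is not flipped before it is applied.
    """
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.empty((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

def relu(x):
    """Keep positive responses and set negative ones to zero."""
    return np.maximum(x, 0.0)

def sigmoid(z):
    """Squash any real number into the open interval (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))

def tiny_cnn_confidence(image, kernels, weights, bias):
    """Pixels -> local feature maps -> pooled features -> one confidence value."""
    # Each kernel produces a feature map; averaging gives one number per feature.
    features = np.array([relu(conv2d_valid(image, k)).mean() for k in kernels])
    # A single output node combines the features into a confidence score.
    return float(sigmoid(features @ weights + bias))

# Hypothetical inputs: a random 8 x 8 single-band image and untrained weights.
rng = np.random.default_rng(0)
image = rng.random((8, 8))
kernels = [rng.standard_normal((3, 3)) for _ in range(4)]
weights = rng.standard_normal(4)
confidence = tiny_cnn_confidence(image, kernels, weights, bias=0.0)
```

In a trained network, the kernels and output weights would be adjusted iteratively so that the confidence approaches one for stressed plants and zero for non-stressed plants.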

Although their layered structure allows CNNs to model complex nonlinear relationships that simpler algorithms might miss, CNNs are also prone to overfitting. This occurs when CNNs learn patterns that are specific to the training set and do not generalize to the overall population. In one case study, for example, a model trained to predict a patient’s age based on MRI images was found to have learned the shape of the head rather than the content of the scan itself [28]. The challenge of overfitting is compounded by CNNs’ inherent ‘black box’ quality. Since information is passed through so many transformations, it is difficult to identify which input variables have the largest influence on the final outcome. While CNNs often must be trained with large datasets to overcome their tendency to overfit, transfer learning techniques allow fully trained networks to be repurposed for new classification tasks with much smaller datasets. A growing set of tools is also making it possible to inspect models to determine feature importance directly. Saliency heat maps, for example, can highlight regions of the image that are used for classification [29,30]. Overfitting can be tested with a cross-validation scheme in which models are trained with one set of images and then used to classify a new, previously unseen set of images. Performance metrics are based on how well the model’s classification of unseen data matches a ground truth standard. A final limitation of CNNs is the significant amount of time and resources required to train them. To circumvent this, the computation may be outsourced to cloud computing providers that train models on large servers and offer a suite of tools for hyperparameter tuning, transfer learning, and cross-validation [31].
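The cross-validation idea above can be sketched in plain Python. The example assumes a k-fold scheme and accuracy as the performance metric; the text does not specify which scheme or metric was used, so `k_fold_indices`, `accuracy`, and the choice of five folds are illustrative assumptions.

```python
import random

def k_fold_indices(n_items, k, seed=0):
    """Yield (train, test) index lists so every item is tested exactly once."""
    indices = list(range(n_items))
    random.Random(seed).shuffle(indices)
    # Deal the shuffled indices into k folds of near-equal size.
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        test = folds[i]
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, test

def accuracy(predicted, truth):
    """Fraction of predictions that match the ground-truth labels."""
    return sum(p == t for p, t in zip(predicted, truth)) / len(truth)

# Usage: the model is trained on `train` images only, then scored on `test`.
for train, test in k_fold_indices(n_items=10, k=5):
    assert not set(train) & set(test)  # test images are never seen in training
```

A large gap between accuracy on the training images and accuracy on the held-out images is the usual sign that a model has overfit.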

Despite their inherent limitations, CNNs have become popular for image recognition tasks ranging from Facebook photo-tagging to self-driving cars [32,33]. In agriculture, CNNs have been used to predict wheat yield based on soil parameters, diagnose diseases with simple images of leaves, and detect nitrogen stress using hyperspectral imagery [34,35]. CNNs’ ability to learn complex nonlinear features makes them particularly useful for analyzing image data in which individual pixels form larger features such as shape or texture. Extensive research has demonstrated that CNNs perform image classification tasks with higher accuracy than traditional machine vision algorithms [36].

In our study, a small set of aerial images was used to train custom image classification models to detect water stress in ornamental shrubs. The objective was to evaluate the ability of IBM Watson’s Visual Recognition service to detect early indicators of plant stress. These experiments provide a strong rationale for the deployment of cloud-based artificial intelligence frameworks that use larger datasets to monitor crop status and maximize sustainable water use.

4. Discussions

Unlike traditional machine vision models that require users to manually select features, CNNs have layers of neurons that allow them to automatically learn relevant features from data. CNNs improve with each training example by iteratively rewarding neurons that amplify aspects of the image that are important for discrimination and suppressing those that do not. For example, in traditional techniques, the background must be manually segmented prior to analysis. By contrast, CNNs can automatically ‘learn’ to ignore the background because it is not relevant to the classification task. Similarly, rather than relying on manually defined spectral indices thought to be correlated with plant health, networks can infer relevant transformations of the input color channels from data. Low-level features inferred by the network feed into higher-order features such as the specific location or pattern of discoloration within the plant. Information from spectral indices may combine with other features such as the unique structure of sagging branches or the distinct texture created by the shadows from wilted leaves. Thus, CNNs can learn multiple features of the training images and are not limited by a priori hypotheses.

Models tested in this study demonstrated significant variation in their ability to identify water stress in different species. Models trained on Buddleia achieved near-perfect separation while those trained on Cornus approximated random classification. Such variation is consistent with previous literature showing differences in morphological and physiological responses to water stress across genera, species, and even cultivars. In Michigan, Warsaw et al. [37] tracked daily water use and water use efficiency of 24 temperate ornamental taxa between 2006 and 2008. Daily water use varied from 12 to 24 mm per container and daily water use efficiency (increase in growth index per total liters applied) varied from 0.16 to 0.31. Of the similar taxa used, Buddleia davidii ‘Guinevere’ (24 mm per container) had the greatest water use followed by Spirea japonica ‘Flaming Mound’ (18 mm per container), Hydrangea paniculata ‘Unique’ (14 mm per container), and Cornus sericea ‘Farrow’ (12 mm per container) with estimated crop coefficients (KC) of 6.8, 5.0, 3.6, and 3.4, respectively. Taxa that tolerate low water availability, such as Cornus, may simply not have been showing symptoms of water stress when they were photographed. Models that achieved moderate performance were likely provided with too few examples to distinguish patterns relevant to the classification task from those specific to the training data, causing them to generalize poorly to new data during the testing phase. Such overfitting bias can be overcome by training models with a larger and more diverse set of training images. Varying the location, weather, and growing period in which images are taken, for example, can force models to learn features that generalize to all conditions. Future studies can also use images of plants with multiple degrees of water stress to train regression models that return a value along a numeric scale rather than a stressed or not-stressed binary.
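One common way to put numbers on "near-perfect separation" and "random classification" is the area under the ROC curve (AUC), which equals the probability that a randomly chosen stressed image receives a higher confidence score than a randomly chosen non-stressed image. The sketch below assumes this metric for illustration; this section does not state which metric was used, and the scores are invented, not results from the study.

```python
def roc_auc(scores_stressed, scores_unstressed):
    """AUC as the fraction of (stressed, unstressed) pairs ranked correctly.

    Ties count as half a correct ranking. A value of 1.0 means perfect
    separation and 0.5 means the scores carry no more information than chance.
    """
    wins = 0.0
    for s in scores_stressed:
        for u in scores_unstressed:
            if s > u:
                wins += 1.0
            elif s == u:
                wins += 0.5
    return wins / (len(scores_stressed) * len(scores_unstressed))

# Hypothetical classifier confidences, for illustration only.
well_separated = roc_auc([0.9, 0.8, 0.7], [0.3, 0.2, 0.1])
uninformative = roc_auc([0.5, 0.5], [0.5, 0.5])
```

Because the metric depends only on how scores are ranked, it can be compared across species-specific models even when their confidence values are calibrated differently.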

While CNNs’ complicated nature prevents us from knowing what features are driving the model, insight can be gained from the conditions in which classifiers succeed or fail. For example, classifiers trained by pooling images of all species had significantly lower performance than classifiers trained with images of just one species despite having a considerably larger training set. This suggests that symptoms of water stress differ from one species to the next. Subsequent studies can identify what features are driving the model by iteratively removing them from the image. For example, one experiment could train models with individual R, G, or near-infrared channels to determine if certain spectral indices are more sensitive to water stress than others. Another experiment could crop each image to a rectangle circumscribing the plant in order to see if plant shape or other peripheral features aid the classifier. Features that significantly reduce performance when removed may represent biologically relevant phenotypes that are worthy of further study.
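The two ablation experiments proposed above can be prepared with simple array operations. The sketch assumes images stored as height x width x channel NumPy arrays and a boolean plant mask that is already available; the helper names `keep_channels` and `crop_to_mask`, the channel order, and the source of the mask are all hypothetical.

```python
import numpy as np

def keep_channels(image, channels):
    """Return a copy of the image with every channel zeroed except those listed."""
    out = np.zeros_like(image)
    out[..., channels] = image[..., channels]
    return out

def crop_to_mask(image, mask):
    """Crop the image to the smallest rectangle containing all True mask pixels."""
    rows = np.where(np.any(mask, axis=1))[0]
    cols = np.where(np.any(mask, axis=0))[0]
    return image[rows[0]:rows[-1] + 1, cols[0]:cols[-1] + 1]

# Hypothetical 4 x 4 image with three channels (for example R, G, near-infrared).
image = np.arange(4 * 4 * 3).reshape(4, 4, 3)
nir_only = keep_channels(image, [2])   # ablate the R and G channels

plant_mask = np.zeros((4, 4), dtype=bool)
plant_mask[1:3, 1:4] = True            # assumed location of the plant
cropped = crop_to_mask(image, plant_mask)
```

Training one classifier per ablated dataset and comparing performance on held-out images would then indicate which channels or image regions the model depends on.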