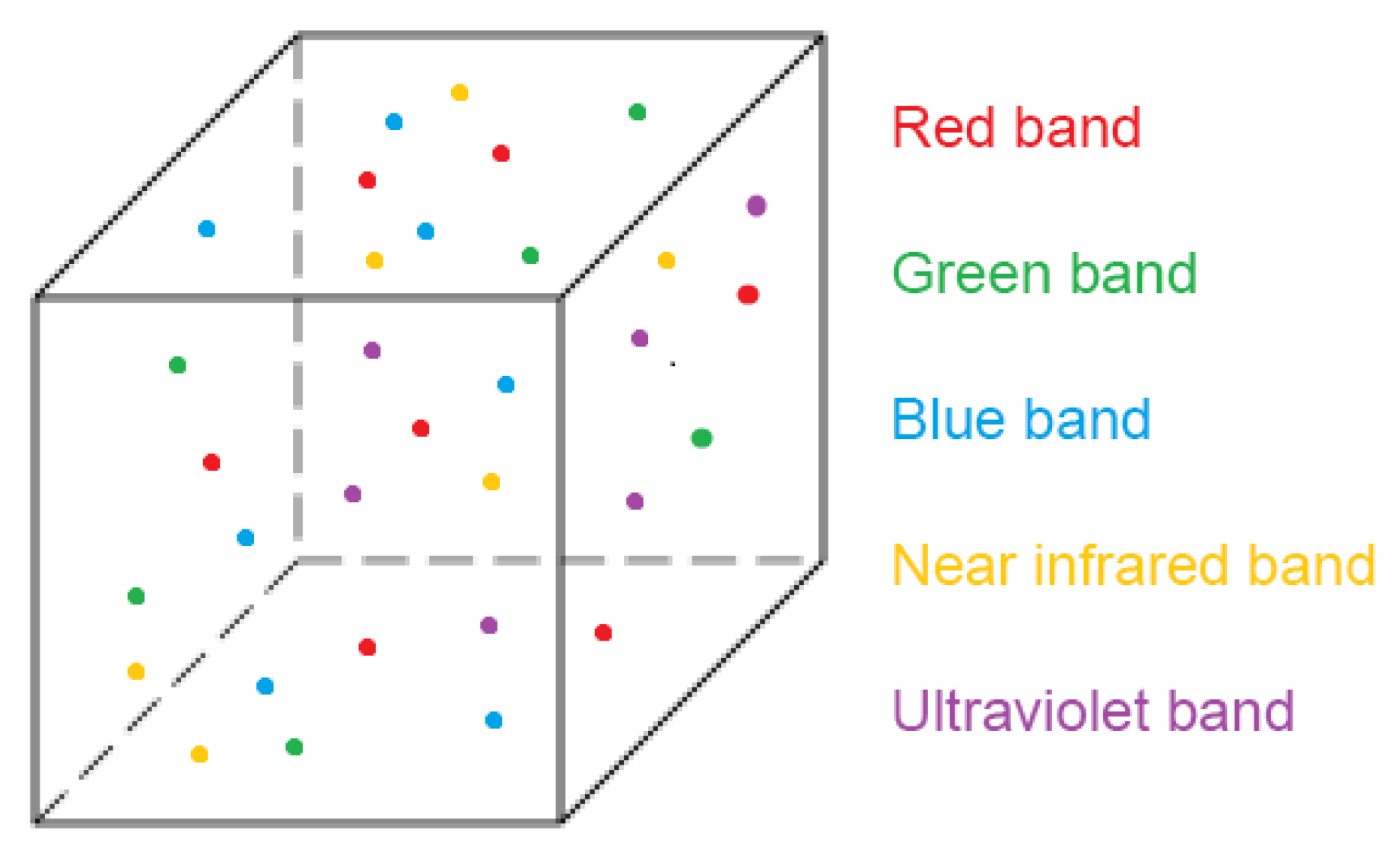

The outputs of photogrammetry are point clouds generated from homologous entities identified in photographs taken with digital image sensors. The information accompanying the point geometry depends on the type of sensor and on the part of the electromagnetic spectrum to which it is sensitive, and is recorded as a digital value that usually corresponds to the colour of the point. With visible spectrum (conventional) cameras, colour is obtained as red, green and blue components (bands). If multispectral, thermographic, ultraviolet or other cameras are used, the recorded information corresponds to the part of the spectrum to which each sensor is sensitive [25]. Previous research has addressed the fusion of infrared thermal data with visible spectrum images; the product of this infrared-visible fusion is a thermal infrared point cloud with a higher resolution than could be obtained from the thermal sensor alone [26].

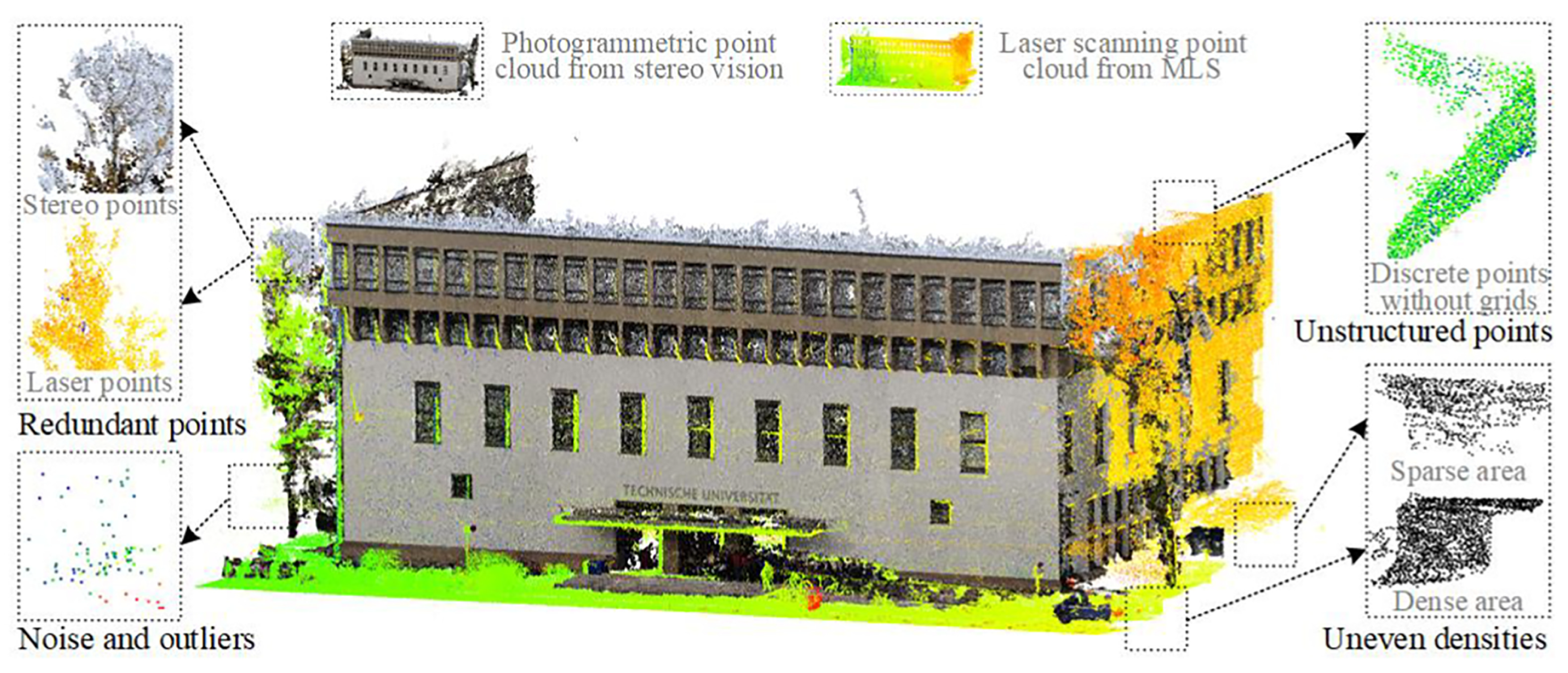

In addition to the problems of data structure, heterogeneity and variable density described above, multisensor point clouds come from different data sources and express different information. Techniques are therefore needed to fuse both the geometric and the spectral information, in order to facilitate processing and to draw conclusions from the different information the clouds contain.

2.1. Data Collection

To demonstrate the potential of voxels for processing multisensor point clouds, a building element from a site containing a historic enclosure wall was recorded with several sensors.

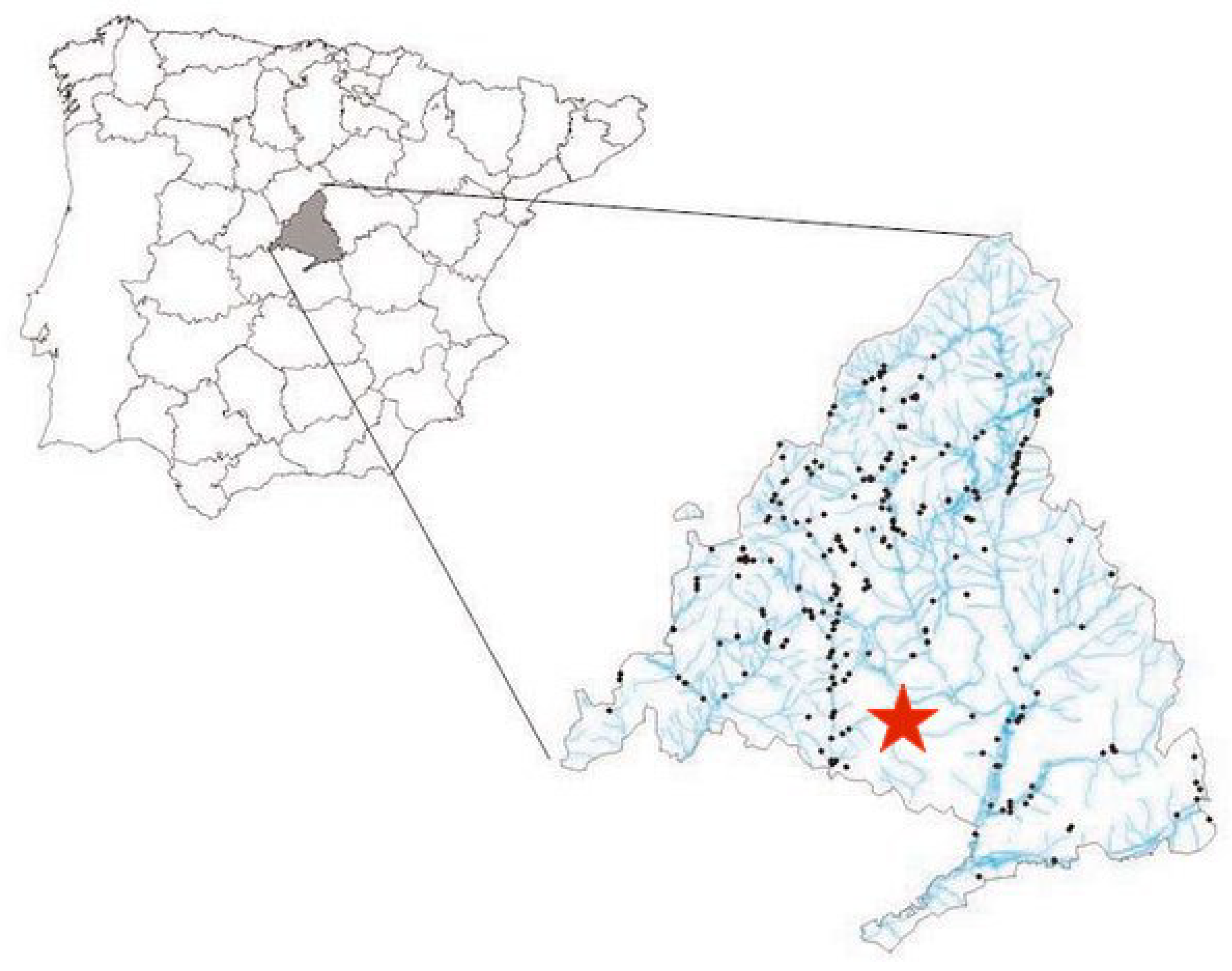

The element is located in the town of Humanes de Madrid (Madrid, Spain), with geographic coordinates 40.250177°N, 3.828382°W. In

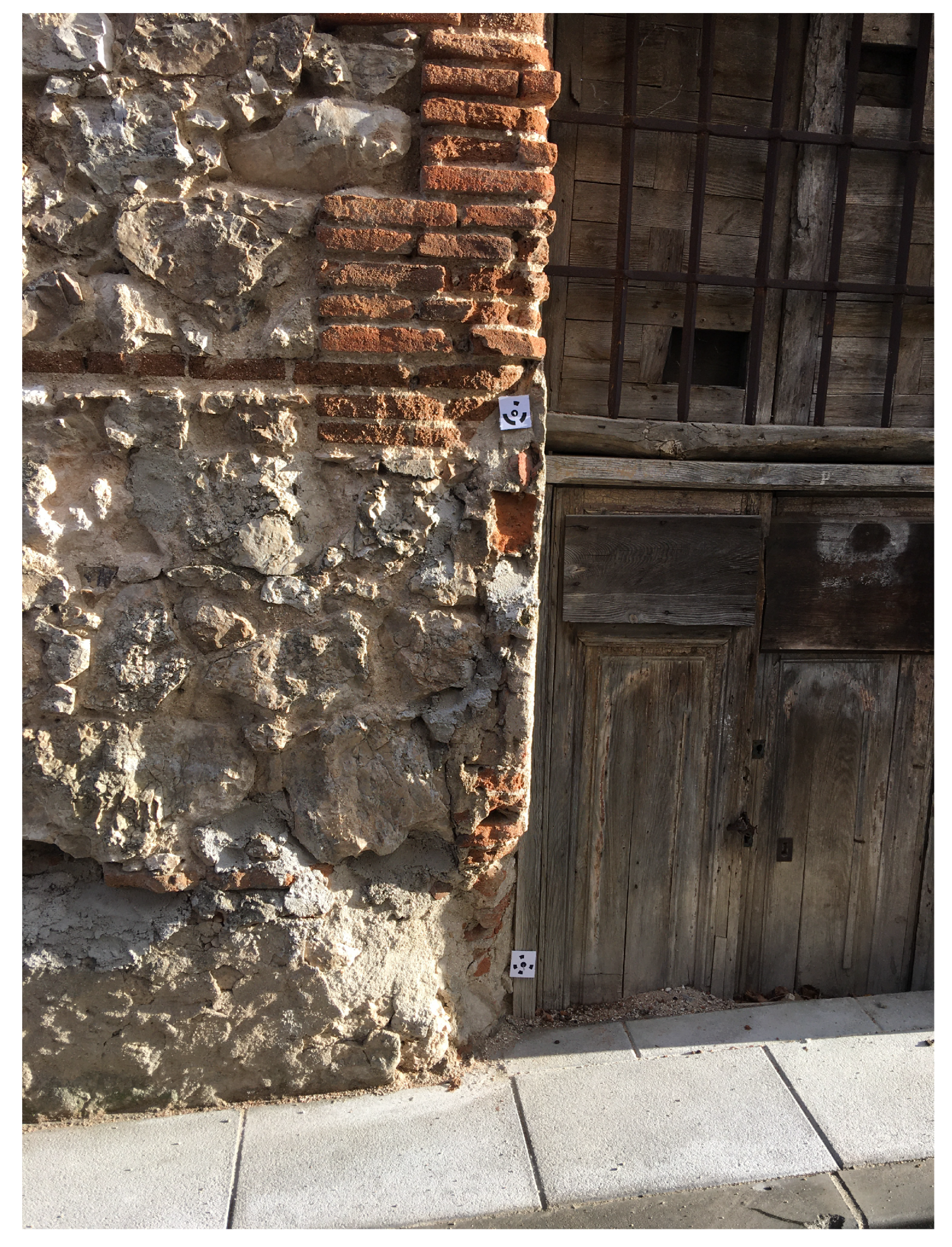

Figure 2 its geographic location in Spain is shown, as well as a photograph of the wall itself (

Figure 3). The dimensions of the chosen wall are approximately 20 × 3 m.

This site and architectural element were selected because walls are the main elements studied in ancient history research. Fusion of point clouds by voxelization does not depend on the geometry (shape) of the element: it can be carried out element by element or for the whole building or environment under study. The point cloud selected as the starting point for defining the voxelized structure determines the limits of the study area in each case.

The data were collected photogrammetrically with several sensors. A series of photogrammetric acquisition missions was designed to obtain different point clouds of the same object, so that each point cloud, while representing the geometry of this historic architectural element, adds the intrinsic spectral information of the sensor, or of the filter, used in that capture. The acquisition sessions were carried out sequentially, and special care was taken in their design to ensure that weather and environmental conditions did not change significantly. When the photographs were taken, no part of the wall was directly illuminated by the sun, which would have caused discrepancies in the acquired values, and other variables such as humidity and wind remained constant throughout the acquisition. Two different cameras, fitted with different filters depending on the desired spectral information, were used in this work:

Sony NEX-7 digital camera with a 19 mm fixed focal length lens. This sensor records the spectral components corresponding to the red (590 nm), green (520 nm) and blue (460 nm) bands. Table 2 lists the parameters of this sensor.

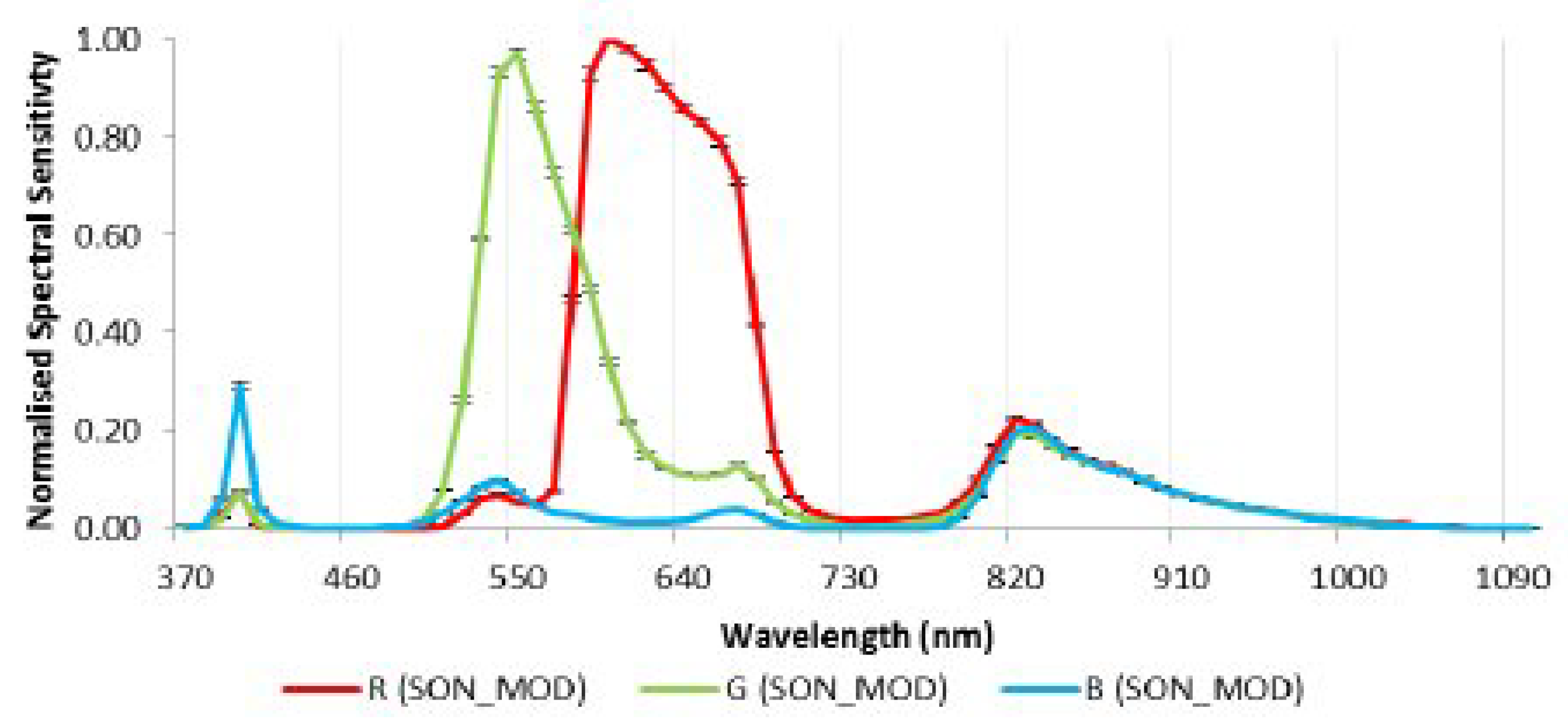

Modified Sony NEX-5N digital camera (Table 3), with a 16 mm fixed focal length lens, used with a near-infrared filter and with an ultraviolet filter.

Figure 4 shows the spectral response of the unmodified Sony NEX sensor. When its internal infrared-cut filter is removed, the sensor becomes sensitive to other parts of the electromagnetic spectrum, such as the near infrared (820 nm) and the ultraviolet (390 nm). Figure 5 shows the spectral response of the modified Sony sensor [27]. Combined with different filters, the modified sensor can acquire spectral information that cannot be obtained with the unmodified sensor.

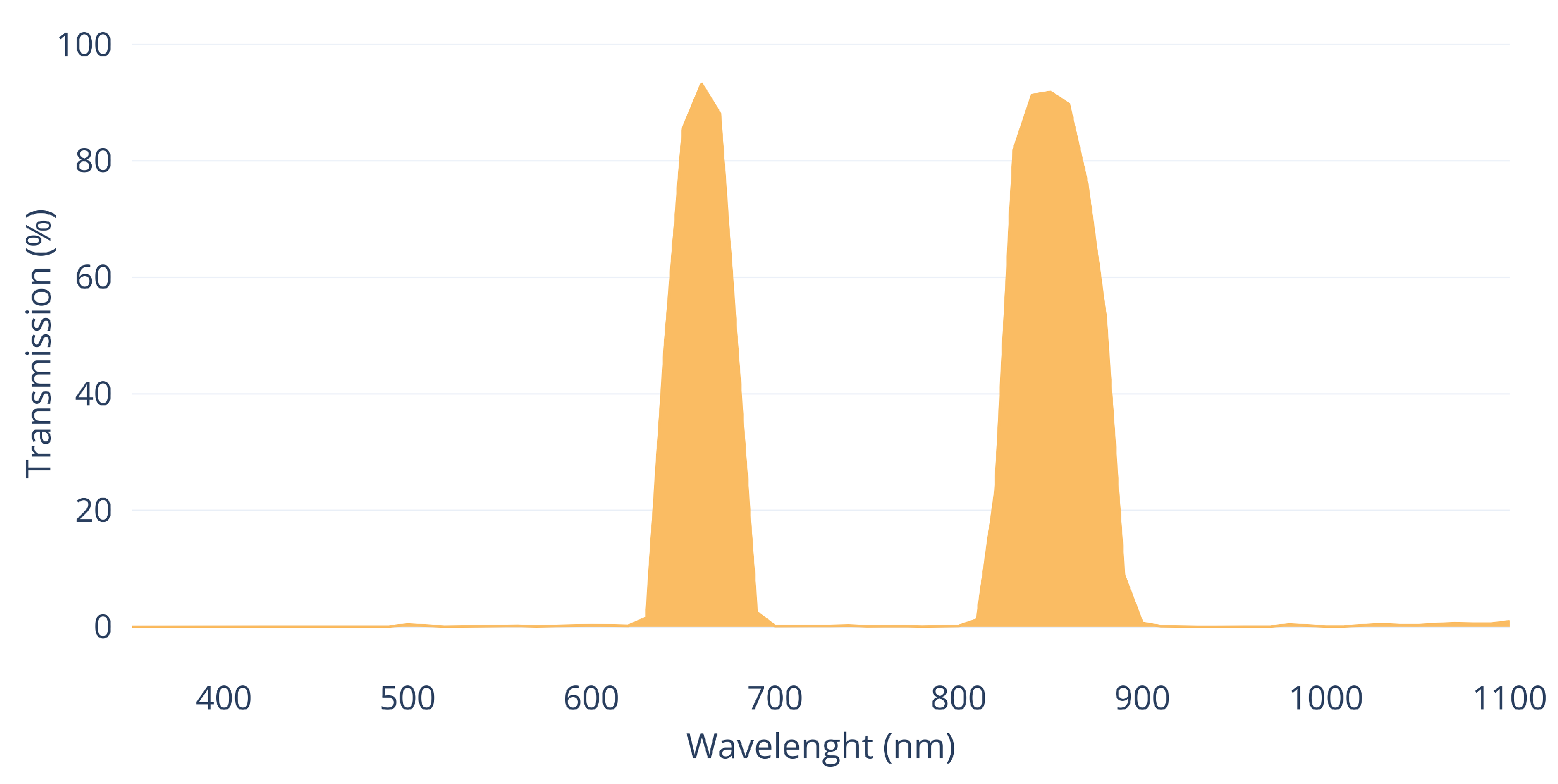

Figure 6 and Figure 7 show the transmission curves of the filters used during the capture process.

In this work, we have used consumer photographic cameras, one of them modified, to demonstrate that multispectral point clouds can be obtained with low-cost sensors.

Using only two photogrammetric sensors in this work does not imply that fusion by voxelization is limited to point clouds obtained with this technique. As mentioned in the introduction, there are several tools and methods for obtaining point clouds, and this research field is advancing rapidly. Apart from the geometric data, each sensor provides different information inherent to its characteristics or to the methodology used in data acquisition. The proposed method is independent of the number of sensors used and of their technology. In this case, five point clouds are fused by voxelization and the advantages and limitations of the approach are analysed.

Image capture for subsequent photogrammetric processing was planned so that the individual images were taken at a distance of approximately 3 m from the wall; a greater distance was not possible because of the narrowness of the street in which this historic site is located. The overlap between consecutive images was always greater than 90%, and two passes, each with a different camera orientation, were made with each sensor and filter combination.

Figure 8, Figure 9 and Figure 10 show examples of the images obtained with each sensor and filter combination. Figure 8 shows an image taken with the unmodified Sony NEX-7 visible spectrum camera without an additional filter. Figure 9 corresponds to the modified Sony NEX-5N camera fitted with a Midopt DB 660/850 Dual Bandpass filter. Finally, Figure 10 shows a detail of an image taken with the modified camera fitted with a ZB2 ultraviolet filter.

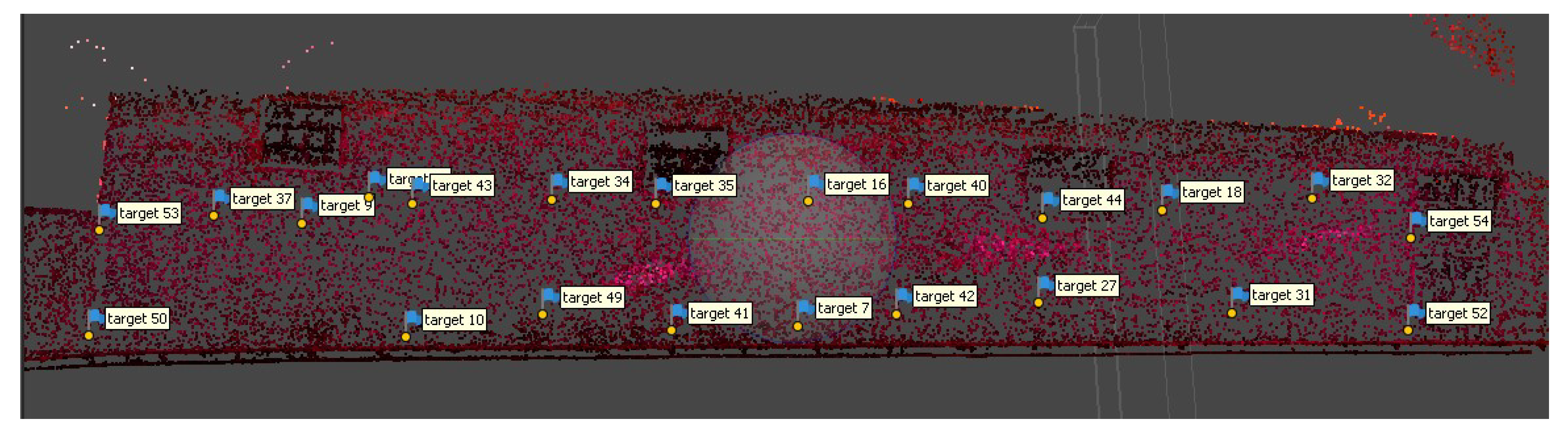

Before the photographs were taken, a series of precision targets was marked on the wall and the distances between them were measured with a fibreglass measuring tape to centimetre precision. These marks define a set of control points and a common geometric reference system for all the image sets (Figure 11).

The set of photographs in the visible spectrum consists of 398 images, the near-infrared set consists of 340 images, and the ultraviolet group of images comprises 309 photographs.

The photographs from the different sensors were processed with the photogrammetric software Agisoft Metashape version 1.6.1 build 10009 (64-bit).

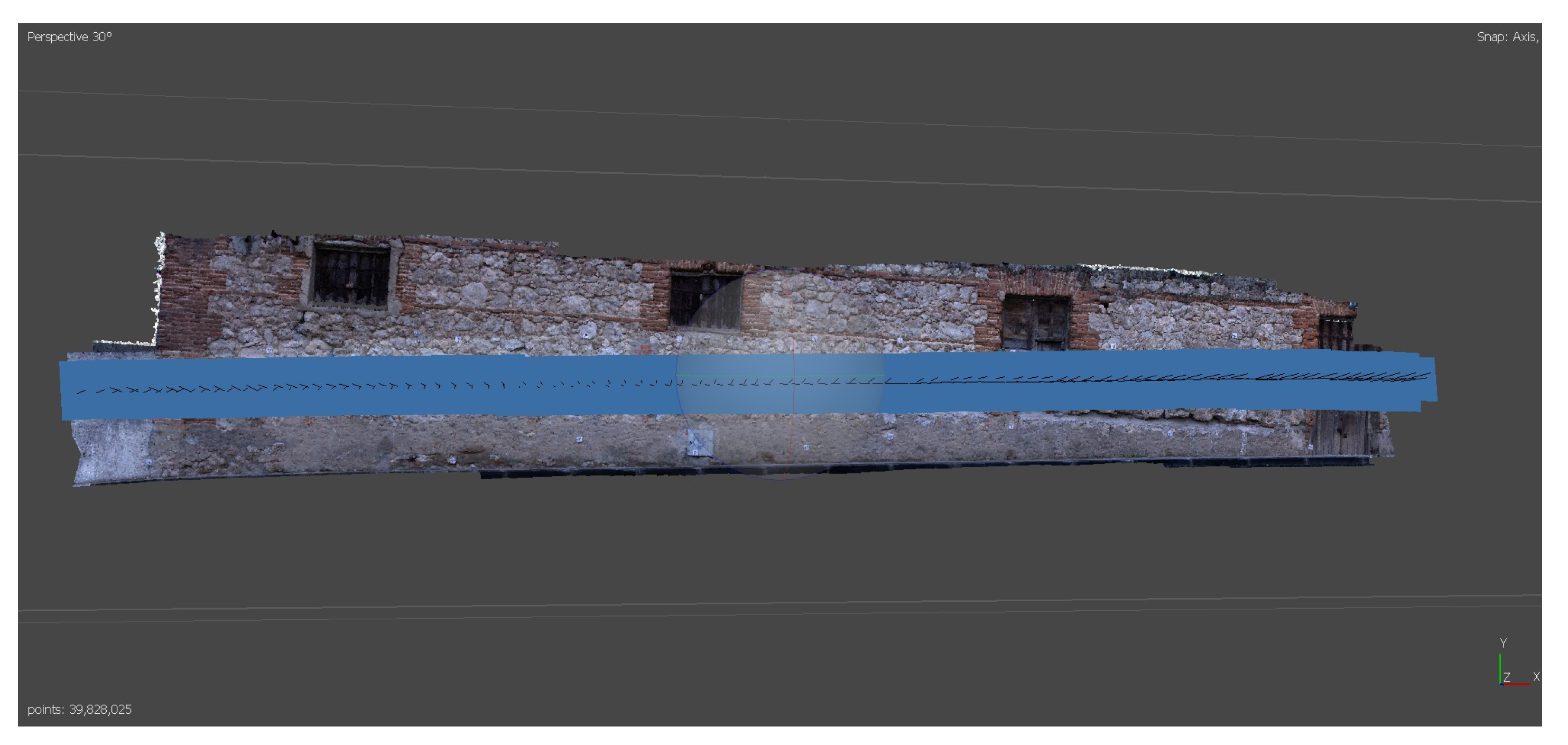

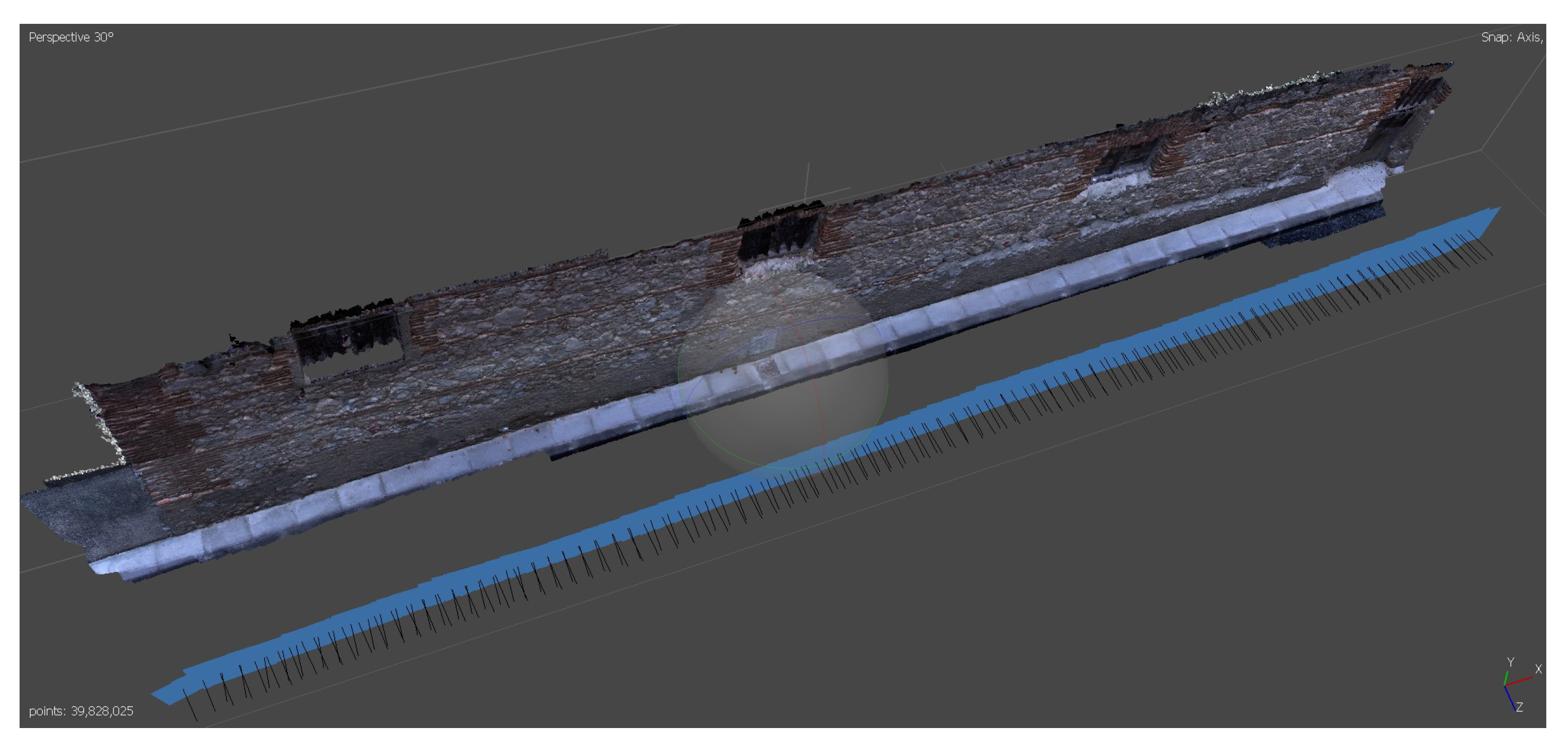

Figure 12 and Figure 13 show the distribution of camera shots for the visible spectrum point cloud.

The precision targets were identified in all the photographs (Figure 14), defining a local reference system in which all the point clouds were integrated. All the point clouds therefore share a common reference system. In this particular case the targets were not georeferenced, since the aim was to demonstrate the feasibility of fusing point clouds by voxelization. Nevertheless, we consider that point clouds should be georeferenced whenever the targets cannot be marked permanently, which is common for ancient historic buildings. Georeferencing greatly facilitates the integration of any new data acquisition that may have to be carried out.

The photogrammetric processing of the visible spectrum images produced a point cloud whose RGB colour property corresponds to the spectral information in the red, green and blue bands. The near-infrared and ultraviolet point clouds have the same colour field structure as the visible spectrum point cloud, but only the field carrying the desired spectral information was used: the “red” field for the near-infrared cloud and the “blue” field for the ultraviolet cloud.

As the final output of this processing phase, five point clouds were obtained, corresponding to different spectral bands: red, green, blue, near infrared and ultraviolet. The visible spectrum point cloud was split into three clouds that share exactly the same point positions, since they come from the same cloud, but each holds the information of a single band, like the near-infrared and ultraviolet point clouds.
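The band selection just described can be sketched as follows. This is a minimal illustration, not the authors' processing code: it assumes each point has already been read into a plain `(x, y, z, r, g, b)` tuple, and the names `extract_band` and `build_single_band_clouds` are hypothetical.

```python
from typing import Dict, List, Tuple

# Assumed point layout: (x, y, z, r, g, b), colours as 8-bit integers.
Point = Tuple[float, float, float, int, int, int]
BandPoint = Tuple[float, float, float, int]  # (x, y, z, single band value)

FIELD_INDEX = {"red": 3, "green": 4, "blue": 5}


def extract_band(cloud: List[Point], field: str) -> List[BandPoint]:
    """Keep the XYZ geometry of every point plus one colour field."""
    i = FIELD_INDEX[field]
    return [(p[0], p[1], p[2], p[i]) for p in cloud]


def build_single_band_clouds(
    visible: List[Point], nir: List[Point], uv: List[Point]
) -> Dict[str, List[BandPoint]]:
    """Five single-band clouds: R, G and B share the visible geometry;
    NIR is read from the "red" field and UV from the "blue" field."""
    return {
        "red": extract_band(visible, "red"),
        "green": extract_band(visible, "green"),
        "blue": extract_band(visible, "blue"),
        "nir": extract_band(nir, "red"),
        "uv": extract_band(uv, "blue"),
    }


# Tiny hypothetical clouds, only to show the shape of the output.
visible = [(0.0, 0.0, 0.0, 120, 80, 60), (0.01, 0.0, 0.0, 110, 90, 70)]
nir = [(0.0, 0.001, 0.0, 200, 150, 40)]
uv = [(0.002, 0.0, 0.0, 30, 35, 90)]
clouds = build_single_band_clouds(visible, nir, uv)
print(clouds["green"])  # [(0.0, 0.0, 0.0, 80), (0.01, 0.0, 0.0, 90)]
print(clouds["nir"])    # [(0.0, 0.001, 0.0, 200)]
```

Keeping the three visible bands as separate clouds, even though their geometry is identical, lets every band go through the same per-cloud fusion step.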

2.2. Fusion of Point Clouds by Voxelization

We now have several point clouds (five in this study) in a common reference system, but carrying different information. To merge these point clouds and subsequently analyse the information provided by each of them in an integrated way, we propose the concept of the multispectral voxel. A multispectral voxel is defined not only by its size and position but also by the characteristics of the points it contains (Figure 15).
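One possible in-memory representation of a multispectral voxel is sketched below. The class `MultispectralVoxel` and its fields are hypothetical; the point is only that, besides its grid index, centroid and size, the voxel keeps the values contributed by each spectral band.

```python
from dataclasses import dataclass, field
from statistics import fmean
from typing import Dict, List, Tuple


@dataclass
class MultispectralVoxel:
    """Hypothetical container: a voxel described by its geometry and by
    the spectral values of the points that fall inside it."""
    index: Tuple[int, int, int]           # (i, j, k) position in the grid
    centroid: Tuple[float, float, float]  # XYZ of the voxel centre
    size: float                           # edge length, same units as XYZ
    bands: Dict[str, List[float]] = field(default_factory=dict)

    def add_value(self, band: str, value: float) -> None:
        """Record the value contributed by one point of the given band."""
        self.bands.setdefault(band, []).append(value)

    def band_mean(self, band: str) -> float:
        """Mean value of a band inside this voxel."""
        return fmean(self.bands[band])


v = MultispectralVoxel(index=(3, 0, 7), centroid=(0.035, 0.005, 0.075), size=0.01)
v.add_value("red", 120)
v.add_value("red", 110)
v.add_value("nir", 200)
print(v.band_mean("red"), sorted(v.bands))  # 115.0 ['nir', 'red']
```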

When a point cloud is voxelized, one of the parameters that defines the voxel structure is the size of the elemental voxel. This parameter determines the resolution at which phenomena can be studied with the resulting data structure (finite elements [8], structural studies [28,29], dynamic phenomena [30], etc.). For example, if a study or simulation requires a resolution of 5 cm, this is the voxel size to set in the voxelization process. The voxel size also determines how much the number of elements is reduced compared with the number of points in the original clouds.

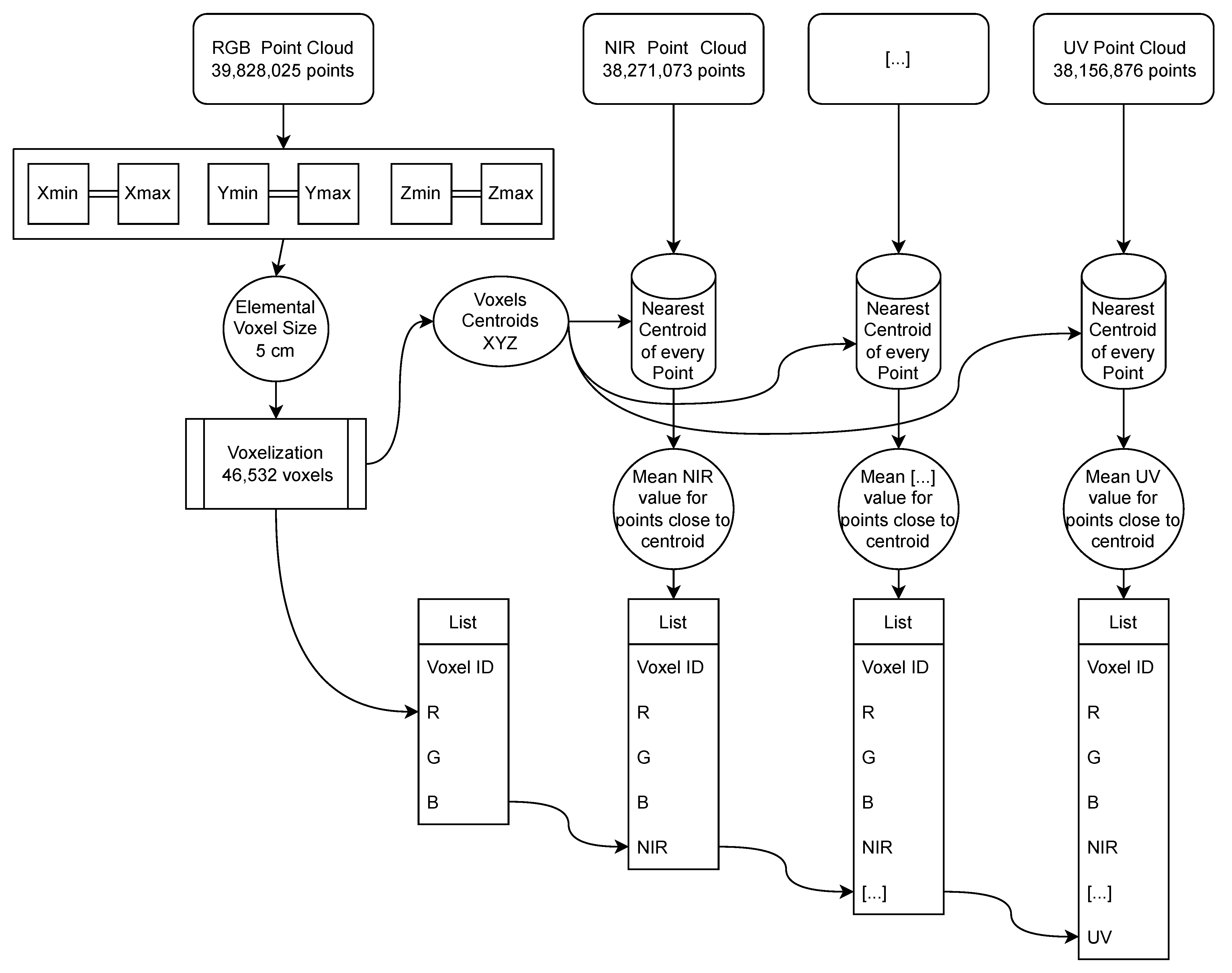

The voxelization process divides the space occupied by the point cloud into discrete cubic elements of a single uniform size (other geometric figures, such as spheres or cylinders, can also be used). First, the bounding box is determined from the limits of the point cloud along each of the three axes X, Y and Z; in this first step, one of the point clouds representing the study object is taken as the reference. The number of voxels in each direction is obtained by dividing the dimensions of the bounding box by the selected voxel size [31]. This voxelization process is included in the Open3D open-source library [32].
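The first step, bounding box and number of voxels per axis, reduces to a few lines. This sketch uses only the Python standard library and rounds the quotient up so that the whole box is covered, which is an assumption about how the division is handled; Open3D itself provides `open3d.geometry.VoxelGrid.create_from_point_cloud(pcd, voxel_size)` for the complete operation. The function names and the example box (a hypothetical 0.42 m depth added to the approximate 20 × 3 m wall) are illustrative.

```python
import math
from typing import Iterable, Tuple

Vec3 = Tuple[float, float, float]


def bounding_box(points: Iterable[Vec3]) -> Tuple[Vec3, Vec3]:
    """Axis-aligned bounding box (min corner, max corner) of the reference cloud."""
    xs, ys, zs = zip(*points)
    return (min(xs), min(ys), min(zs)), (max(xs), max(ys), max(zs))


def grid_shape(min_b: Vec3, max_b: Vec3, voxel_size: float) -> Tuple[int, int, int]:
    """Voxels along X, Y and Z: box extent divided by the voxel size,
    rounded up so the whole box is covered (at least one voxel per axis)."""
    return tuple(
        max(1, math.ceil((hi - lo) / voxel_size)) for lo, hi in zip(min_b, max_b)
    )


# Hypothetical reference cloud reduced to three points spanning the box.
reference = [(0.0, 0.0, 0.0), (19.97, 0.42, 3.04), (5.0, 0.2, 1.0)]
lo, hi = bounding_box(reference)
shape = grid_shape(lo, hi, voxel_size=0.05)
print(shape, math.prod(shape))  # (400, 9, 61) 219600
```

For a thin object such as a wall, most of the cells in the full box are empty, which is why voxel implementations typically store only the occupied voxels.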

Once the voxelized data structure has been defined, the data can be fused. Each voxel is defined by its centroid, with XYZ coordinates, and by its boundary. For each point in a cloud, the nearest centroid is located and the point is assigned to the voxel represented by that centroid. The nearest centroid can be found with closest-pair or k-d tree (KDTree) search algorithms [33].
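On a regular axis-aligned grid, the nearest centroid to a point is the centroid of the cell that contains it, so the search can also be replaced by a floor operation on the offset from the grid origin; a k-d tree over the centroids gives the same assignment. The sketch below, under that assumption, computes the index directly and checks it against an exhaustive nearest-centroid search. Function names are hypothetical, and clamping points on the upper faces of the bounding box into the last voxel is a design choice not stated in the text.

```python
import math
from typing import Dict, List, Sequence, Tuple

Vec3 = Tuple[float, float, float]
Index = Tuple[int, int, int]


def voxel_index(p: Vec3, origin: Vec3, size: float, shape: Index) -> Index:
    """Voxel whose centroid is nearest to p: floor of the offset from the
    grid origin, clamped so points on the upper faces land in the last voxel."""
    return tuple(
        min(max(math.floor((c - o) / size), 0), n - 1)
        for c, o, n in zip(p, origin, shape)
    )


def centroid(idx: Index, origin: Vec3, size: float) -> Vec3:
    """XYZ centre of voxel idx."""
    return tuple(o + (i + 0.5) * size for i, o in zip(idx, origin))


def nearest_centroid_bruteforce(p: Vec3, origin: Vec3, size: float, shape: Index) -> Index:
    """Reference exhaustive search (the work a KDTree query avoids)."""
    cells = (
        (i, j, k)
        for i in range(shape[0]) for j in range(shape[1]) for k in range(shape[2])
    )
    return min(cells, key=lambda idx: math.dist(p, centroid(idx, origin, size)))


def assign_points(cloud: Sequence[Sequence[float]], origin: Vec3, size: float,
                  shape: Index) -> Dict[Index, List[int]]:
    """Map each occupied voxel to the positions of the points it contains."""
    buckets: Dict[Index, List[int]] = {}
    for n, p in enumerate(cloud):
        idx = voxel_index(tuple(p[:3]), origin, size, shape)
        buckets.setdefault(idx, []).append(n)
    return buckets


origin, size, shape = (0.0, 0.0, 0.0), 0.1, (5, 5, 5)
for p in [(0.23, 0.05, 0.41), (0.01, 0.49, 0.12), (0.37, 0.26, 0.08)]:
    assert voxel_index(p, origin, size, shape) == nearest_centroid_bruteforce(p, origin, size, shape)
print(assign_points([(0.23, 0.05, 0.41, 120), (0.24, 0.06, 0.42, 130)], origin, size, shape))
# {(2, 0, 4): [0, 1]}
```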

Once the whole cloud has been traversed, the points contained in each voxel are known. For each spectral band, the value that the points transmit to the voxel containing them is the mean of their values in that band. In other words, if a voxel contains several points from the same point cloud, the voxel's value for that cloud's spectral band is the mean of the spectral values of those points. Other statistics can be calculated in addition to the mean, such as the maximum and minimum values, variance, skewness and kurtosis, which describe the dispersion and variability of the spectral information transmitted by the contained points. The process is then repeated for each point cloud to be fused.
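The per-voxel aggregation for one band could look like the following sketch. It uses population (biased) moments and reports excess kurtosis (0 for a normal distribution); the text does not specify which estimators were used, so these are assumptions, as are the function names. Voxels with a single point, or with identical values, have zero variance, so skewness and kurtosis are returned as NaN.

```python
import math
from collections import defaultdict
from typing import Dict, Hashable, List, Sequence


def band_statistics(values: Sequence[float]) -> Dict[str, float]:
    """Mean, extremes and moment-based statistics of one band in one voxel.
    Population moments; kurtosis is reported as excess kurtosis."""
    n = len(values)
    mu = sum(values) / n
    m2 = sum((v - mu) ** 2 for v in values) / n
    m3 = sum((v - mu) ** 3 for v in values) / n
    m4 = sum((v - mu) ** 4 for v in values) / n
    if m2 > 0:
        skew, kurt = m3 / m2 ** 1.5, m4 / m2 ** 2 - 3.0
    else:  # one point, or all values equal: shape statistics undefined
        skew = kurt = math.nan
    return {"mean": mu, "min": min(values), "max": max(values),
            "variance": m2, "skewness": skew, "kurtosis": kurt}


def fuse_band(voxel_of_point: Sequence[Hashable], values: Sequence[float],
              band: str, voxels: Dict) -> Dict:
    """Group one cloud's values by voxel and store their statistics under
    `band`; call once per point cloud to fuse all of them."""
    grouped: Dict[Hashable, List[float]] = defaultdict(list)
    for idx, val in zip(voxel_of_point, values):
        grouped[idx].append(val)
    for idx, vals in grouped.items():
        voxels.setdefault(idx, {})[band] = band_statistics(vals)
    return voxels


voxels: Dict = {}
fuse_band([(0, 0, 0), (0, 0, 0), (1, 0, 0)], [100.0, 120.0, 90.0], "red", voxels)
fuse_band([(0, 0, 0)], [200.0], "nir", voxels)
print(voxels[(0, 0, 0)]["red"]["mean"], voxels[(0, 0, 0)]["red"]["variance"])  # 110.0 100.0
```

A sample (n - 1) variance or bias-corrected skewness and kurtosis could be substituted without changing the structure of the fusion.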

Figure 16 shows the methodology designed for fusing point clouds from heterogeneous sensors by voxelization. The final output is a set of voxels integrating the multispectral information of the points they contain. Because point density varies between clouds, some voxels may not contain points from every cloud. A voxel is considered complete when it contains at least one point from each of the point clouds analysed. Incomplete voxels must be identified so that they can be handled correctly in subsequent processes.
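Flagging incomplete voxels is a set-difference test per voxel. In this hypothetical sketch, `voxels` maps a grid index to a dictionary keyed by band name (for example the output of a per-band aggregation step), and `split_complete` returns the complete voxels together with the bands missing from each incomplete one.

```python
from typing import Dict, Iterable, List, Mapping, Tuple

Index = Tuple[int, int, int]


def split_complete(
    voxels: Mapping[Index, Mapping[str, object]], bands: Iterable[str]
) -> Tuple[List[Index], Dict[Index, List[str]]]:
    """Return complete voxels (at least one point from every cloud) and,
    for each incomplete voxel, the sorted list of bands it is missing."""
    required = set(bands)
    complete: List[Index] = []
    incomplete: Dict[Index, List[str]] = {}
    for idx, per_band in voxels.items():
        missing = required.difference(per_band)  # iterating a dict yields its keys
        if missing:
            incomplete[idx] = sorted(missing)
        else:
            complete.append(idx)
    return sorted(complete), incomplete


bands = ["red", "green", "blue", "nir", "uv"]
voxels = {
    (0, 0, 0): {b: 1.0 for b in bands},                  # seen by all five clouds
    (1, 0, 0): {"red": 1.0, "green": 1.0, "blue": 1.0},  # no NIR or UV points
}
complete, incomplete = split_complete(voxels, bands)
print(complete, incomplete)  # [(0, 0, 0)] {(1, 0, 0): ['nir', 'uv']}
```

Returning the missing bands, rather than a plain flag, leaves the later choice (discard, interpolate from neighbours, or keep with a mask) to the analysis step.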

The voxelization was based on the point cloud from the visible spectrum photographs taken with the unmodified camera, because in this particular case it is the largest point cloud. This does not mean that the other point clouds cannot be used as the starting point for defining the voxelized structure. This original visible (RGB) point cloud has over 39 million points. Table 4 shows the number of voxels obtained for different voxel sizes, compared with the number of points in the original cloud. Voxel sizes in multiples such as ×2, ×5 and ×10 were chosen. As can be seen, halving the voxel size does not simply double the number of voxels, and doubling it does not halve it.
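The reason the voxel count does not scale linearly with voxel size can be illustrated on synthetic data. For a thin, surface-like object such as a wall face, the number of occupied voxels grows roughly with the inverse square of the voxel size, as long as the voxels remain larger than the point spacing. The sketch below samples a hypothetical planar 0.4 × 0.2 m patch on a 2 mm grid and counts occupied voxels for three sizes; it does not reproduce the data of Table 4.

```python
import math
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def occupied_voxels(points: Sequence[Vec3], size: float) -> int:
    """Number of distinct voxels (floor-indexed from the origin) holding points."""
    return len({tuple(math.floor(c / size) for c in p) for p in points})


def synthetic_wall(width_pts: int = 200, height_pts: int = 100,
                   spacing: float = 0.002) -> List[Vec3]:
    """Hypothetical planar wall face sampled on a regular grid in the XZ plane.
    Points sit at cell centres, so for the sizes below none lies on a voxel boundary."""
    return [((i + 0.5) * spacing, 0.0, (k + 0.5) * spacing)
            for i in range(width_pts) for k in range(height_pts)]


wall = synthetic_wall()
for size in (0.04, 0.02, 0.01):
    print(size, occupied_voxels(wall, size))
# 0.04 50
# 0.02 200
# 0.01 800
```

Each halving of the voxel size multiplies the count by four here; with a real cloud the growth slows once the voxel size approaches the point spacing, because the count can never exceed the number of points.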

The set of elements to be analysed in subsequent processes is drastically reduced. It is no longer necessary to store millions of points: after voxelization, the geometry of the construction element is contained in a much smaller set of voxels. In this case study, the original RGB cloud has 39,828,025 points; with a voxel size of 0.5 cm the number of voxels is 11.60% of that figure, and with a voxel size of 1 cm it is 2.99%.
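The percentages can be turned back into approximate voxel counts with simple arithmetic. Because the percentages are rounded to two decimal places, the results below are approximations; Table 4 holds the actual counts. The names `ORIGINAL_POINTS`, `REPORTED_PERCENT` and `approx_voxels` are illustrative.

```python
ORIGINAL_POINTS = 39_828_025                # points in the RGB cloud (from the text)
REPORTED_PERCENT = {0.5: 11.60, 1.0: 2.99}  # voxel size (cm) -> % of original points


def approx_voxels(n_points: int, percent: float) -> int:
    """Voxel count implied by a rounded percentage of the original points."""
    return round(n_points * percent / 100)


for size_cm, pct in REPORTED_PERCENT.items():
    n = approx_voxels(ORIGINAL_POINTS, pct)
    print(f"{size_cm} cm: about {n / 1e6:.2f} million voxels")
print(f"ratio 0.5 cm / 1 cm: {REPORTED_PERCENT[0.5] / REPORTED_PERCENT[1.0]:.2f}")
# 0.5 cm: about 4.62 million voxels
# 1.0 cm: about 1.19 million voxels
# ratio 0.5 cm / 1 cm: 3.88
```

A ratio close to 4 is consistent with a largely planar object, where halving the voxel size roughly quadruples the number of occupied voxels.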