1. Introduction

Change detection is an important task in the remote sensing field, which aims to reveal surface changes in multi-temporal remote sensing data [1]. Forests are important natural resources that play a major role in maintaining the Earth’s ecological environment. As a sub-task of change detection, forest change detection has been widely used in land and resource inventory, deforestation control, and forest management.

Early forest change detection was generally performed using optical images, which have distinctive color characteristics, with certain spectral bands being sensitive to specific changes [2,3]. Currently, optical images are the main data source in the change detection field [4]. However, the quality of optical images is strongly affected by clouds and fog. Moreover, because multi-phase images are captured by a sensor at different times, the same objects may show spectral changes between acquisitions [5]. With the development of synthetic aperture radar (SAR) technology, numerous studies have been carried out on SAR image-based forest change detection in recent years [6].

Traditional forest change detection algorithms mainly include algebraic algorithms (e.g., vegetation index difference [7] and change vector analysis [8]), data transformation methods (e.g., principal component analysis [7] and canonical correlation analysis [9]), and classification-based methods [10]. To eliminate the differences in sensor data as much as possible, these algorithms first require geometric and radiometric corrections of the multi-phase images; the change map is then constructed using algebraic operations or transformations on the corrected images. Even so, traditional algorithms use only the initial (low-level) features of an image and usually achieve low accuracy in forest change detection.
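As an illustration of the algebraic family described above, the following minimal sketch computes a vegetation index difference between two dates and thresholds it. The use of NDVI as the vegetation index, the function names, the toy reflectance values, and the threshold of 0.2 are all assumptions made for this example; they are not taken from the cited methods.

```python
import numpy as np


def ndvi(red, nir, eps=1e-6):
    """Normalized difference vegetation index, computed per pixel."""
    red = np.asarray(red, dtype=np.float64)
    nir = np.asarray(nir, dtype=np.float64)
    return (nir - red) / (nir + red + eps)


def ndvi_difference_change_map(red_t1, nir_t1, red_t2, nir_t2, threshold=0.2):
    """Flag pixels whose NDVI dropped by more than `threshold` between dates.

    The threshold is a hypothetical value; in practice it is tuned per scene.
    """
    diff = ndvi(red_t2, nir_t2) - ndvi(red_t1, nir_t1)
    return diff < -threshold


# Toy 2x2 scene (already co-registered and radiometrically corrected).
red_t1 = np.array([[0.05, 0.05], [0.05, 0.05]])
nir_t1 = np.array([[0.50, 0.50], [0.50, 0.50]])
red_t2 = np.array([[0.05, 0.20], [0.05, 0.05]])
nir_t2 = np.array([[0.50, 0.25], [0.48, 0.50]])

change = ndvi_difference_change_map(red_t1, nir_t1, red_t2, nir_t2)
print(change)
```

Only the pixel whose near-infrared reflectance fell sharply is flagged, which also shows why such methods depend on prior geometric and radiometric correction: any residual misalignment or illumination difference enters the difference directly.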

With the rapid development of deep learning and computer vision in recent years, deep learning algorithms have been used in image classification [11], target detection [12], and semantic segmentation [13], demonstrating excellent performance. Change detection can be regarded as a special semantic segmentation task, and change detection models typically adopt the encoder-decoder structure of semantic segmentation models. In recent years, a large number of deep learning-based change detection algorithms have been proposed. These algorithms significantly outperform traditional algorithms and are thus a favored approach in the field of change detection. Because change detection models are typically based on multiple image inputs, accurately extracting change features has become the focus of several studies. Considering the mainstream multi-temporal change detection algorithms, the deep learning-based algorithms can be roughly divided into two main categories according to the feature extraction stage. The first category performs early fusion (EF), combining the bitemporal images into one model input and thereby transforming the change detection task into a semantic segmentation task. The second category adopts Siamese networks, which use two identical encoders to extract features from the bitemporal images separately; the features extracted by the two Siamese branches are then combined at the same feature-map scale. Change detection models using Siamese networks were first proposed in 2018 and have since been commonly used to design change detection models [14].
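The difference between the two categories can be sketched at the level of tensor shapes. The example below is only an illustration: the encoder is reduced to a single 1x1 convolution with ReLU, and the band count, image size, and feature width are hypothetical values rather than settings from any cited model.

```python
import numpy as np

rng = np.random.default_rng(0)


def pointwise_conv(x, weight):
    """Toy encoder: 1x1 convolution + ReLU.

    x has shape (C_in, H, W); weight has shape (C_out, C_in).
    """
    return np.maximum(np.einsum("oc,chw->ohw", weight, x), 0.0)


# Hypothetical sizes: 4 spectral bands, 8x8 pixels, 16 feature channels.
C, H, W, F = 4, 8, 8, 16
img_t1 = rng.random((C, H, W))
img_t2 = rng.random((C, H, W))

# Early fusion: stack both dates along the channel axis, so a single
# encoder sees 2*C input channels.
ef_weight = rng.standard_normal((F, 2 * C))
ef_features = pointwise_conv(np.concatenate([img_t1, img_t2], axis=0), ef_weight)

# Siamese: the same weights encode each date separately, and the two
# feature maps are merged afterwards at the same scale.
shared_weight = rng.standard_normal((F, C))
feat_t1 = pointwise_conv(img_t1, shared_weight)
feat_t2 = pointwise_conv(img_t2, shared_weight)
siamese_features = np.concatenate([feat_t1, feat_t2], axis=0)

print(ef_features.shape, siamese_features.shape)
```

In the early-fusion path the two dates are mixed in the very first layer, whereas the Siamese path keeps per-date features separate until the merge step, which is where the comparison between dates is made.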

Recent research has demonstrated that Siamese neural network-based change detection models are effective at identifying differences between multiple images, and these models have therefore improved significantly in recent years. F. Rahman et al. designed a Siamese network model based on two VGG16 encoders with shared weights and obtained high change detection accuracy [15]. Y. Zhan et al. used a weighted contrastive loss to train a Siamese network, where variation features were extracted directly from input image pairs, resulting in an improved F1-score [16]. H. Chen et al. designed a self-attention mechanism to capture spatiotemporal correlations at different feature scales and employed it to improve the F1-score [17]. In addition, J. Chen et al. designed a dual attentive fully-convolutional Siamese network (DASNet) based on a dual attention mechanism to reduce noise in change detection results. DASNet performed well in capturing long-range dependencies, producing change maps with little noise and high F1-scores [18]. S. Fang et al. designed SNUNet based on the nested U-Net using a deep supervision method, employed a Siamese network structure to extract accurate change maps, and proposed an integrated channel attention module at the end of the decoder for multi-scale information aggregation. SNUNet achieved state-of-the-art results on the CDD public dataset [19]. Following the success of the transformer model in computer vision tasks, it has also been introduced to the change detection field to improve detection accuracy, obtaining state-of-the-art results on a public change detection dataset [20].

Many of the advanced methods are based on the Siamese neural network. However, most of them use high- or ultra-high-resolution remote sensing images as data sources, which are expensive and unsuitable for detection tasks with continuous and rapid changes. Forest change detection requires timely and accurate detection of forest changes, which is crucial for the rapid response of government departments. Consequently, most recent research on forest change detection has focused on low- and medium-resolution remote sensing data. M. G. Hethcoat et al. used machine learning-based models to detect low-intensity selective logging in the Amazon region based on Landsat8 data [21]. T. A. Schroeder et al. detected forest fire and deforestation using supervised classification of the Landsat8 time series [22], whereas W. B. Cohen et al. used an unsupervised classification post-difference approach to detect deforestation in the Pacific Northwest on Landsat8 data [23]. SAR-based detection has become the most common method for obtaining accurate forest change detection results with reduced interference from clouds and fog. M. G. Hethcoat et al. used the random forest algorithm to analyze deforestation based on Sentinel-1 time series data [24]. J. Reiche et al. combined dense Sentinel-1 time series with Landsat and ALOS-2 PALSAR-2 data to perform near real-time tropical forest monitoring [25]. With the development of change detection technology, deep learning-based models have also begun to be applied to forest change detection tasks. R. V. Maretto et al. improved the traditional U-Net model and applied it to forest change detection based on Landsat-8 OLI data, demonstrating the effectiveness of the improved U-Net model in achieving high forest change detection accuracy [26]. F. Zhao et al. extracted deforestation areas using the U-Net model and Sentinel-1 time series, processing the VV and VH data and providing evidence of the efficiency of SAR data as a data source [5].

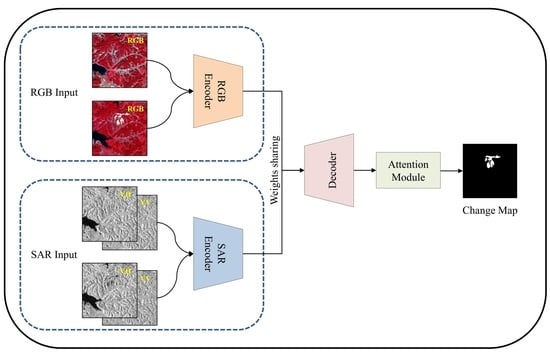

Although these methods can be used to effectively identify forest change, the application of advanced change detection algorithms to this task has not been thoroughly explored. In addition, most of the major change detection algorithms have been based on high-resolution optical images, while the combination of low- and medium-resolution optical and SAR data has rarely been considered. Based on a review of the characteristics of the major change detection algorithms and of forest change detection tasks, this study proposes a double-Siamese nested U-Net (DSNUNet) model with an encoder-decoder structure to improve forest change detection accuracy. The encoder includes two sets of Siamese branches, which extract features from optical and SAR images, respectively. The decoder aggregates the optical and SAR features and restores the scale features. DSNUNet is derived from the change detection algorithm named SNUNet-CD. In the proposed model, different feature channel combinations are used to extract effective features from optical and SAR images, as well as to compensate for the differences between these image data. Moreover, to overcome the imbalance between positive and negative samples in the change detection task, a combination of focal loss and dice loss is used as the loss function of the proposed model. The proposed model was validated using Sentinel-1 and Sentinel-2 data. The results demonstrated the effectiveness of the proposed method in forest change detection in terms of precision, recall, and F1-Score compared to the state-of-the-art methods.
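A combination of focal loss and dice loss can be sketched as follows for a binary change map. This is a generic formulation under stated assumptions, not necessarily the exact loss used for DSNUNet: the values of `alpha`, `gamma`, `smooth`, and `dice_weight`, the equal-weight sum, and all function names are hypothetical choices for illustration.

```python
import numpy as np


def focal_loss(prob, target, alpha=0.25, gamma=2.0, eps=1e-7):
    """Binary focal loss: down-weights pixels that are already well classified."""
    prob = np.clip(np.asarray(prob, dtype=np.float64), eps, 1.0 - eps)
    target = np.asarray(target)
    p_t = np.where(target == 1, prob, 1.0 - prob)
    alpha_t = np.where(target == 1, alpha, 1.0 - alpha)
    return float(np.mean(-alpha_t * (1.0 - p_t) ** gamma * np.log(p_t)))


def dice_loss(prob, target, smooth=1.0):
    """Dice loss: one minus the (smoothed) overlap ratio of prediction and label."""
    prob = np.asarray(prob, dtype=np.float64)
    target = np.asarray(target, dtype=np.float64)
    intersection = np.sum(prob * target)
    dice = (2.0 * intersection + smooth) / (np.sum(prob) + np.sum(target) + smooth)
    return float(1.0 - dice)


def combined_loss(prob, target, dice_weight=1.0):
    """Sum of focal and dice terms; the weighting is an assumption."""
    return focal_loss(prob, target) + dice_weight * dice_loss(prob, target)


# Toy example with one changed pixel among four (class imbalance).
target = np.array([[0, 0, 0, 1]])
good_pred = np.array([[0.05, 0.05, 0.05, 0.95]])
bad_pred = np.array([[0.60, 0.60, 0.60, 0.20]])

print(combined_loss(good_pred, target), combined_loss(bad_pred, target))
```

The focal term addresses imbalance at the pixel level, while the dice term measures overlap over the whole map and is therefore insensitive to the large number of unchanged pixels.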

The main contributions of this paper are as follows:

(1) A Siamese network model named DSNUNet was designed to achieve accurate forest change detection by combining optical and SAR images. The DSNUNet model uses optical and SAR image data as inputs directly and outputs the final change map, thus improving the forest change detection performance;

(2) Two sets of Siamese branches with different widths were designed for feature extraction to achieve more effective use of the multi-sensor data. The features of optical and SAR images were balanced using different channel combinations. DSNUNet can also be generalized as a change detection framework for any combination of two kinds of images with information differences.
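The idea of two Siamese branch sets with different widths can be illustrated with a shape-level sketch. The example uses initial widths of 32 (optical) and 8 (SAR), the combination reported as optimal in the Discussion; everything else is an assumption for illustration, including the band counts, the single 1x1 convolution standing in for each encoder, and the concatenation used as the merge step.

```python
import numpy as np

rng = np.random.default_rng(0)


def encode(x, weight):
    """Toy encoder stage: 1x1 convolution + ReLU on a (C, H, W) array."""
    return np.maximum(np.einsum("oc,chw->ohw", weight, x), 0.0)


H, W = 8, 8
OPT_BANDS, SAR_BANDS = 4, 2    # hypothetical band counts (SAR: VV and VH)
OPT_WIDTH, SAR_WIDTH = 32, 8   # initial channel widths of the two branch sets

opt_t1, opt_t2 = rng.random((OPT_BANDS, H, W)), rng.random((OPT_BANDS, H, W))
sar_t1, sar_t2 = rng.random((SAR_BANDS, H, W)), rng.random((SAR_BANDS, H, W))

# One weight set per sensor, shared across the two dates (Siamese).
w_opt = rng.standard_normal((OPT_WIDTH, OPT_BANDS))
w_sar = rng.standard_normal((SAR_WIDTH, SAR_BANDS))

features = [
    encode(opt_t1, w_opt),
    encode(opt_t2, w_opt),
    encode(sar_t1, w_sar),
    encode(sar_t2, w_sar),
]

# Merge all four feature maps along the channel axis for the decoder.
fused = np.concatenate(features, axis=0)
print(fused.shape)  # (80, 8, 8): 2 * (32 + 8) channels
```

Giving the SAR branch fewer channels than the optical branch is one way to balance two sources that carry different amounts of information, since the share of each sensor in the fused features is set by its branch width.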

The rest of this paper is organized as follows. Section 2 introduces training data sources and data preprocessing and describes the proposed DSNUNet model. Section 3 presents multiple sets of comparative experiments. Section 4 analyzes the experimental results and provides future research directions. Finally, Section 5 summarizes the paper.

4. Discussion

In the first experiment, most change detection models produced less accurate boundaries and more pseudo-changes in forest change detection. The SNUNet and DSNUNet models both provided good detection results; however, DSNUNet, which additionally uses SAR images, produced predictions closer to the observed data. BIT is a transformer-based change detection model that requires a longer training time and a larger training dataset than CNN-based models.

The introduction of SAR image data can provide more accurate forest variation characteristics. The F1-Score, Recall, and Precision values of DSNUNet were 2.23, 3.62, and 0.63% higher, respectively, than those obtained using the optical image-based SNUNet (Table 3). However, the information complexities of optical and SAR image data are different. Although an effective fusion of multi-source remote sensing data can improve the change detection performance, simply combining optical and SAR images as multiple input channels yielded only a slight improvement in most models. The F1-Score, Recall, and Precision values of DSNUNet were higher than those obtained using the optical and SAR image-based SNUNet by 1.65, 0.74, and 2.62%, respectively (Table 4). This is because these models merge the different information prematurely, at the input stage, so that the value of the SAR data is not fully used. Therefore, DSNUNet uses two sets of Siamese branches to extract features from optical and SAR images, which can effectively explore the spatial and semantic information of the different data and improve detection performance.

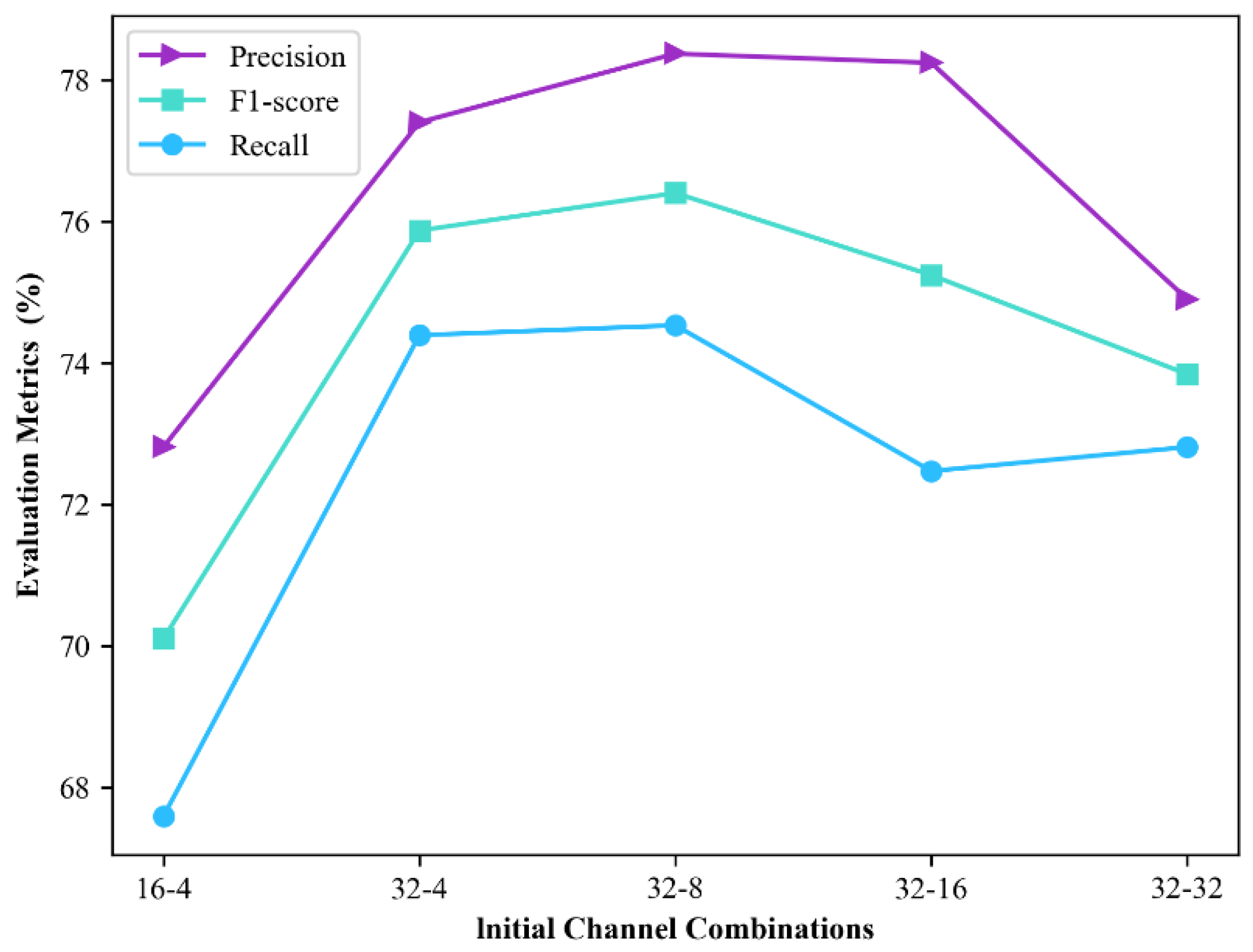

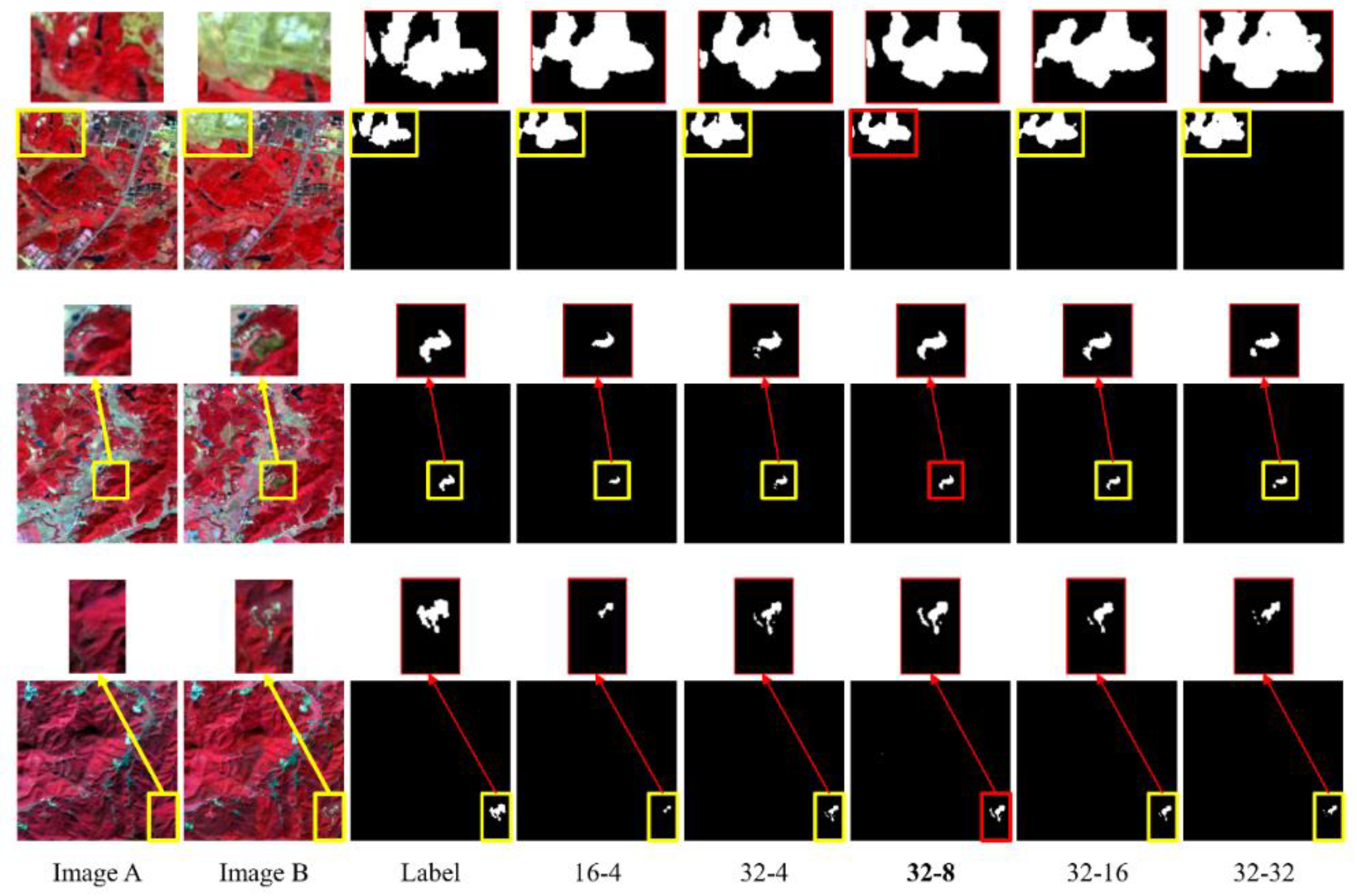

In the second experiment, the proposed model performed best with 32 and 8 initial channels in the optical and SAR image branches, respectively. DSNUNet using the 32-8 combination achieved Precision, Recall, and F1-Score values of 78.37, 74.53, and 76.40%, respectively. As shown in Figure 9, using too many initial channels in the SAR image branch can cause information redundancy, while selecting a moderate number of initial channels helps to obtain a compromise between the number of parameters and model performance.

Figure 12 shows the variation in loss during the training of DSNUNet with different initial channel combinations. As the number of epochs increases, the loss value gradually declines and then stabilizes, which shows that the DSNUNet model fits the forest change data well.
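The Precision, Recall, and F1-Score values reported for the 32-8 combination are related through the standard definition of F1 as the harmonic mean of precision and recall. The short check below reproduces the reported F1-Score from the other two values; the function name is chosen for this example only.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (both on the same scale)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Values reported for DSNUNet with the 32-8 channel combination (in %).
f1 = f1_score(78.37, 74.53)
print(f"{f1:.2f}")  # 76.40
```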

DSNUNet has a stronger tolerance to clouds in images, since SAR can provide images with high resolutions even under cloudy conditions. As shown in Figure 13, DSNUNet suppressed the pseudo-variation caused by cloud layers more effectively than the other models. These cloud-covered images were not involved in the training, indicating that the characteristics of SAR images improved the change detection performance of the model.

The following aspects could be addressed in future studies. First, the proposed model’s structure could be improved. Although DSNUNet uses two sets of branches to obtain different feature combinations, only simple concatenation is used to merge information in the decoding stage. Moreover, the number of feature channels can change dramatically during the decoding process, which may cause information loss. Second, the feature extraction backbone of DSNUNet is relatively simple, so a more complex backbone could be used in the future to improve model performance. Third, an attempt could be made to classify different forest change types, including the dominant tree species that have changed and the source of change (deforestation or fire). Finally, increases in forest area could be extracted to facilitate statistical analyses by the relevant departments.