1. Introduction

With continuing advances in remote sensing technology, the ways of obtaining information through multi-source satellites, unmanned aerial vehicles, and other sensors have steadily increased, and the multi-sensor space–air–ground integrated system has gradually matured. Because different sensors acquire data with different methods and principles, the resulting Earth observation data each have their own information advantages, and edge intelligence greatly improves multi-source data transmission and processing speed [1]. To integrate multi-source remote sensing data, exploit their complementary advantages, and improve data availability, information fusion of remote sensing images is usually required. Multi-source image registration refers to matching images of the same scene obtained by different image sensors and establishing the spatial geometric transformation between them. Its accuracy and efficiency directly affect subsequent tasks, such as remote sensing interpretation in land-use surveillance [2]. Although remote sensing data are commonly coarse-registered using a spatial reference, slight translation or rotation differences between the images remain. Fine registration is therefore particularly important for the precise alignment of objects.

However, due to the large differences in the operating mechanisms and conditions of imaging sensors, multi-source remote sensing images often show significant variations in the geometric and radiometric features. These obvious differences between multi-source remote sensing images are reflected in the registration process as the same landform or the target in the image presents distinct image features, especially internal texture features. Therefore, algorithms such as SIFT proposed for homologous registration often fail [

3]. Traditional multi-source image registration methods can be divided into two categories: region-based and feature-based methods. Region-based methods often use similarity measures and optimization methods to accurately estimate transformation parameters. A typical solution is to evaluate similarity with MI [

4], which is generally limited by high computational complexity. Another approach is to improve processing efficiency through the domain transfer techniques such as fast Fourier Transform (FFT) [

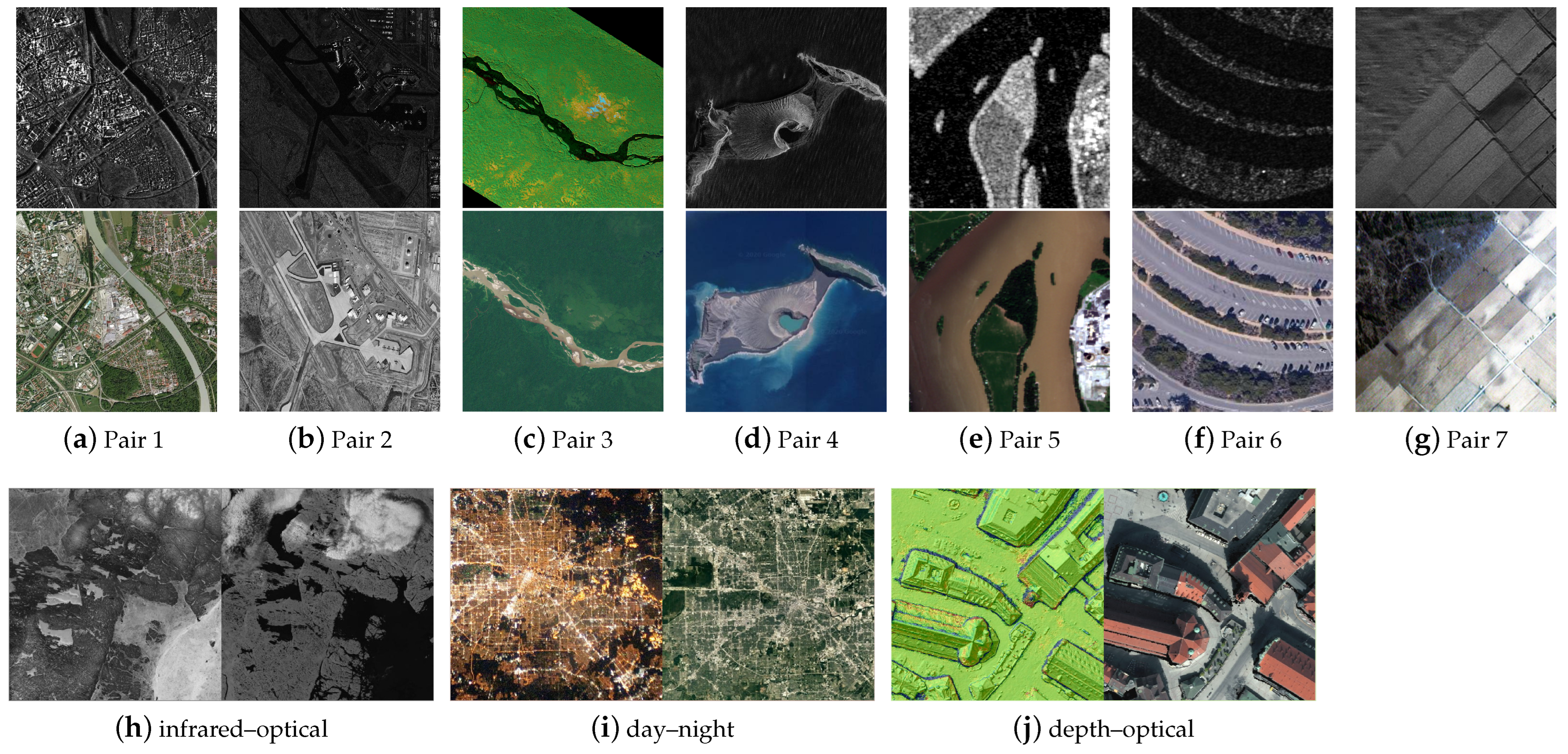

5]. However, facing large-scale remote sensing images, region-based methods are sensitive to severe noise caused by imaging sensors and atmosphere, making it difficult to be applied widely. Therefore, feature-based methods are more commonly used in the remote sensing field. These methods usually detect significant features between images (such as points, lines, and regions) and then identify correspondence by describing the detected features. The PSO-SIFT algorithm optimizes the calculation of image gradients based on SIFT and exploits the intrinsic information of feature points to increase the number of accurate matching points for the problem of multi-source image matching [

6]. The applicatory multi-source data include optical, SAR, infrared, and LiDAR images, and the SAR and optical images are the most widely used and representative ones. Therefore, many studies focus on SAR–optical registration. OS-SIFT is mainly focused on SAR images and optical images matching and uses the multiscale exponential weighted average ratio and the multiscale Sobel operator to calculate the SAR image and optical image gradients to improve the robustness [

7]. The multiscale histogram of local main orientation (MS-HLMO) [

8] method develops a basic feature map for local feature extraction and a matching strategy based on Gaussian scale space. The development of deep learning technology has accelerated the research of computer vision, and more and more learning-based methods have emerged in the registration field [

9]. A common strategy is to generate deep and advanced image representations, such as using the network to train feature detectors [

10], descriptors [

11], or similarity measures, instead of traditional registration steps. There are also many methods based on Siamese networks or pseudo-Siamese networks [

12], which obtain the deep feature representation by training the network [

13] and then calculate the similarity of the output feature map to achieve template matching. As the basis of the deep learning method, large datasets are critical to its performance. However, there are few available multi-source remote sensing datasets and the acquisition cost is high; labeled examples are even rarer, resulting in the difficulty of wide application to multi-source data, which greatly limits the development of learning-based methods.

The early feature-based methods described above rely mainly on gradient information, and their application to multi-source image registration is often limited by their sensitivity to illumination and contrast differences. In recent years, since the concept of phase congruency (PC) [14] was introduced into image registration, a growing number of registration methods based on it have shown superior performance. The histogram of oriented phase congruency (HOPC) feature descriptor achieves matching between multi-modal images by constructing descriptors from phase congruency intensity and orientation information [15]. Li et al. proposed the radiation-variation insensitive feature transform (RIFT) algorithm, which detects significant corner and edge feature points on the phase congruency map and constructs the maximum index map (MIM) descriptor from the Log-Gabor convolution image sequences [16], making it insensitive to radiation changes between multi-modal images. Yao et al. proposed the histogram of absolute phase consistency gradients (HAPCG) algorithm, which extends the phase consistency model, establishes absolute phase consistency directional gradients, and builds the HAPCG descriptors [17], achieving robust matching between images from different sources with large illumination and contrast differences. Fan et al. proposed the 3MRS method, which uses a 2D phase consistency model to construct a new template feature from Log-Gabor convolutional image sequences and uses 3D phase correlation as the similarity metric [18]. In general, although these phase-congruency-based registration methods perform well in multi-source image matching, two problems remain for remote sensing image registration: (1) the interference of noise and texture with feature extraction cannot be avoided; (2) the computation of phase congruency involves Log-Gabor transforms at multiple scales and orientations, which is computationally heavy and lengthens the feature detection time.

The key to automatic registration of multi-source images lies in how to extract and match feature points between heterogeneous images. Since edges are relatively stable features in multi-source images, which can maintain stability when the imaging conditions or mode changes [

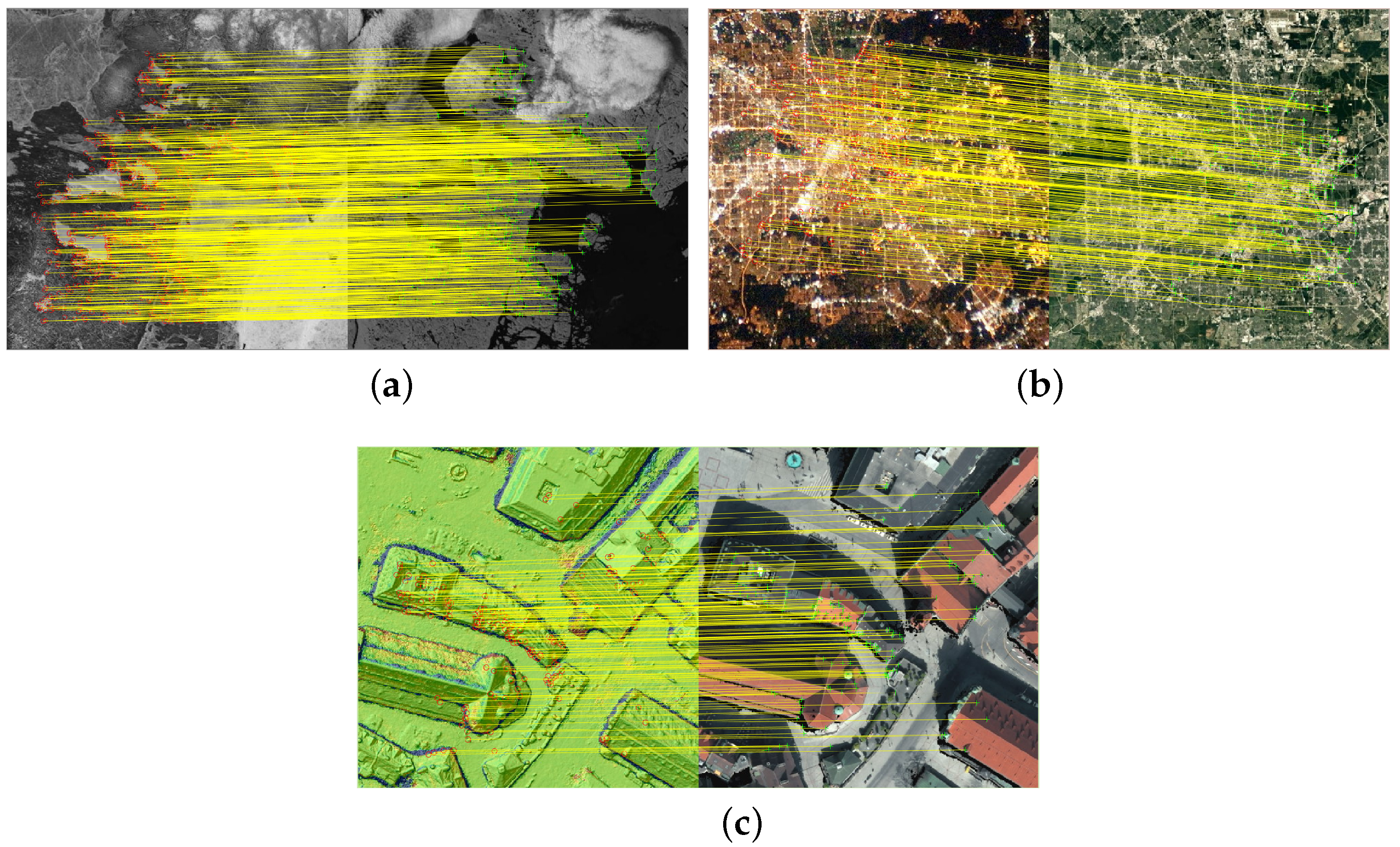

19], feature extraction based on edge contour information can greatly enhance the registration performance. As shown in

Figure 1, for the same area, terrain selected by the red box exhibits significant differences in multi-source images, while the edge marked with the green box tends to be more consistent. Therefore, a multi-source remote sensing image registration method based on homogeneous features is proposed in this paper, namely, edge consistency radiation-variation insensitive feature transform (EC-RIFT). Firstly, in order to suppress speckle noise without destroying edge integrity, a non-local mean filter is performed on SAR images, and edge enhancement is applied to visible images with abundant texture to help extract coherent features. Secondly, image edge information is extracted based on phase congruency, and the orthogonal Log-Gabor filter (OLG) is chosen instead of the global filter algorithm for feature dimension reduction, speeding up the feature detection. Finally, the EC-RIFT algorithm constructs descriptors based on the sector neighborhood of edge feature points, which is more sensitive to nearby points and improves the robustness of the descriptors, thus improving the registration accuracy. The main contributions of our paper are as follows:

(1) To capture the similarity of geometric structures and morphological features, phase congruency is used to extract image edges, and the differences between multi-source remote sensing images are suppressed by eliminating noise and weakening texture.

(2) To reduce computing costs, phase congruency models are constructed using Log-Gabor filtering in orthogonal directions.

(3) To increase the dependability of descriptor features with richer description information, sector descriptors are built based on edge consistency features.

The rest of the paper is organized as follows: Section 2 introduces the proposed method in detail. Section 3 evaluates the proposed method by comparing experimental results with other representative methods. Section 4 discusses several important aspects related to the proposed method. Finally, the paper is concluded in Section 5.

2. Materials and Methods

This section begins with a brief review of RIFT. First, the PC is calculated from the Log-Gabor convolution results at each orientation, and features are extracted on the maximum moment map with the FAST detector [20]. Then, the amplitudes of the convolution results at all scales in the same orientation are summed, and the MIM is built from the summed amplitudes. A convolution sequence ring structure is applied to generate multiple MIMs for each feature in the reference image. Based on the MIM, the descriptors are constructed with a feature coding technique similar to that of the SIFT algorithm [3]. Finally, the nearest neighbor matching strategy is applied to the descriptors to obtain the initial correspondences, and the outliers are removed by the fast sample consensus (FSC) algorithm [21].

The EC-RIFT algorithm process can be divided into five steps: image preprocessing, feature point detection, descriptor construction, feature matching, and outlier removal. The main process of the proposed framework is shown in Figure 2. The blue boxes indicate the focus of the research in this paper. The following subsections provide a detailed description. Moreover, the important symbols in the paper are explained in Table 1.

2.1. Multi-Source Image Preprocessing

The interference of noise and texture with feature extraction is less pronounced in infrared or LiDAR images than in SAR images. Conventional denoising filters, such as median filters, are effective for infrared images. This section therefore focuses on the design of filters for SAR and optical images.

2.1.1. Non-Local Mean Filtering

SAR images are inherently corrupted by speckle noise, which complicates subsequent tasks such as feature detection, so the speckle noise must be suppressed first. The non-local mean filtering algorithm (NLM) regards pixels with the same neighborhood pattern as similar and uses the information within a fixed-size window around each pixel to represent it. This avoids the loss of image structure information during noise reduction, so the filter performs well in maintaining image edge and structure information [22]. The NLM filtering is calculated as (1):

$$\hat{u}(x)=\sum_{y} w(x,y)\,v(y) \tag{1}$$

where $\hat{u}$ and $v$ represent the output and input images of the NLM filter, respectively, $x$ and $y$ represent the indices of pixels, and the weight $w(x,y)$ represents the similarity between pixels $x$ and $y$, which is determined by the distance $\|V(x)-V(y)\|^2$ between the rectangular regions $V(x)$ and $V(y)$, as shown in (2)–(4):

$$w(x,y)=\frac{1}{Z(x)}\exp\left(-\frac{\|V(x)-V(y)\|^2}{h^2}\right) \tag{2}$$

$$Z(x)=\sum_{y}\exp\left(-\frac{\|V(x)-V(y)\|^2}{h^2}\right) \tag{3}$$

$$\|V(x)-V(y)\|^2=\frac{1}{d^2}\sum_{z\in\Omega}\left|v(x+z)-v(y+z)\right|^2 \tag{4}$$

where $Z(x)$ is the normalization parameter, $h$ is the smoothing parameter that regulates the degree of the Gaussian function's decay, $\Omega$ denotes the window region, $d$ represents the window side, and $z$ is a point within $\Omega$.
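The filtering step described above can be illustrated with a short NumPy sketch. This is a naive, unoptimized illustration and not the authors' implementation: the function name `nlm_filter`, the parameters `patch`, `search`, and `h`, and their default values are assumptions made for the example, and the mean squared patch difference is used as the region distance.

```python
import numpy as np

def nlm_filter(v, patch=3, search=7, h=0.3):
    """Naive non-local means for a 2D float image (slow; illustration only)."""
    pr, sr = patch // 2, search // 2
    padded = np.pad(v.astype(float), pr + sr, mode="reflect")
    out = np.empty(v.shape, dtype=float)
    for i in range(v.shape[0]):
        for j in range(v.shape[1]):
            ci, cj = i + pr + sr, j + pr + sr  # current pixel in padded coordinates
            ref = padded[ci - pr:ci + pr + 1, cj - pr:cj + pr + 1]
            num = 0.0  # running sum of weight * input value
            z = 0.0    # running sum of weights (normalization term)
            for di in range(-sr, sr + 1):
                for dj in range(-sr, sr + 1):
                    ni, nj = ci + di, cj + dj
                    cand = padded[ni - pr:ni + pr + 1, nj - pr:nj + pr + 1]
                    dist2 = np.mean((ref - cand) ** 2)  # mean squared patch difference
                    w = np.exp(-dist2 / h ** 2)         # similar patches get weights near 1
                    num += w * padded[ni, nj]
                    z += w
            out[i, j] = num / z
    return out
```

Because the weights depend on patch similarity rather than spatial proximity alone, pixels on the far side of an edge contribute very little, which is why the edge survives the averaging. A larger `h` smooths more aggressively at the cost of blurring fine structure.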

2.1.2. Co-Occurrence Filtering

To take full advantage of the edge information of the image, the edges need to be enhanced while the texture is weakened. The co-occurrence filter (CoF) is an edge-preserving filter in which pixel values that frequently occur together in the image are weighted higher in the co-occurrence matrix, so the image texture can be smoothed regardless of the grayscale difference [23]. Pixel values that rarely appear together are weighted lower in the co-occurrence matrix, which better maintains the boundaries. The CoF is defined according to (5):

$$J_p=\frac{\sum_{q\in N(p)} G_{\sigma_s}(p,q)\,M(I_p,I_q)\,I_q}{\sum_{q\in N(p)} G_{\sigma_s}(p,q)\,M(I_p,I_q)} \tag{5}$$

where $J$ and $I$ represent the output and input images of the CoF filter, respectively, $p$ and $q$ are pixel indices, $N(p)$ is the neighborhood of $p$, $G$ denotes the Gaussian filter, $\sigma_s$ is the standard deviation of $G$, and $M$ is calculated from the co-occurrence matrix as (6):

$$M(I_p,I_q)=\frac{C(I_p,I_q)}{h(I_p)\,h(I_q)} \tag{6}$$

where $h$ denotes the histogram of pixel values, indicating the occurrence frequency of the values at $p$ and $q$. The co-occurrence matrix $C$ is computed as (7):

$$C(a,b)=\sum_{p,q}\exp\left(-\frac{d(p,q)^2}{2\sigma^2}\right)[I_p=a]\,[I_q=b] \tag{7}$$

In Equation (7), $d$ denotes the Euclidean distance, $\sigma$ is a fixed value, and $[\cdot]$ equals 1 when the enclosed condition holds and 0 otherwise.
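A compact NumPy sketch can make the role of the co-occurrence matrix more concrete. It is a simplified illustration under stated assumptions, not the authors' code: the function names, the quantization into `levels` gray levels, the window `radius`, and the use of a single `sigma` for both the spatial Gaussian and the co-occurrence weighting are all choices made for the example, and the input is assumed to lie in [0, 1].

```python
import numpy as np

def _shift_slices(n, d):
    """Index ranges of p and of q = p + d that keep both inside [0, n)."""
    return slice(max(0, -d), n - max(0, d)), slice(max(0, d), n - max(0, -d))

def cooccurrence_filter(img, levels=8, radius=2, sigma=1.5):
    """Simplified co-occurrence filter for a 2D float image with values in [0, 1]."""
    rows, cols = img.shape
    lab = np.minimum((img * levels).astype(int), levels - 1)  # quantized gray levels
    offsets = [(di, dj) for di in range(-radius, radius + 1)
               for dj in range(-radius, radius + 1)]

    # Co-occurrence matrix: Gaussian-weighted counts of gray-level pairs.
    C = np.zeros((levels, levels))
    for di, dj in offsets:
        g = np.exp(-(di * di + dj * dj) / (2 * sigma ** 2))
        pr, qr = _shift_slices(rows, di)
        pc, qc = _shift_slices(cols, dj)
        np.add.at(C, (lab[pr, pc].ravel(), lab[qr, qc].ravel()), g)

    # Normalize by how often each level occurs (guarding against empty levels).
    hist = np.bincount(lab.ravel(), minlength=levels).astype(float)
    M = C / np.maximum(np.outer(hist, hist), 1.0)

    # Weighted average: spatial Gaussian times co-occurrence weight.
    num = np.zeros(img.shape)
    den = np.zeros(img.shape)
    for di, dj in offsets:
        g = np.exp(-(di * di + dj * dj) / (2 * sigma ** 2))
        pr, qr = _shift_slices(rows, di)
        pc, qc = _shift_slices(cols, dj)
        w = g * M[lab[pr, pc], lab[qr, qc]]
        num[pr, pc] += w * img[qr, qc]
        den[pr, pc] += w
    return num / den
```

Gray levels that alternate inside a textured region co-occur often and are averaged together, whereas levels that meet only along a boundary co-occur rarely and receive small weights, so the boundary is largely preserved.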

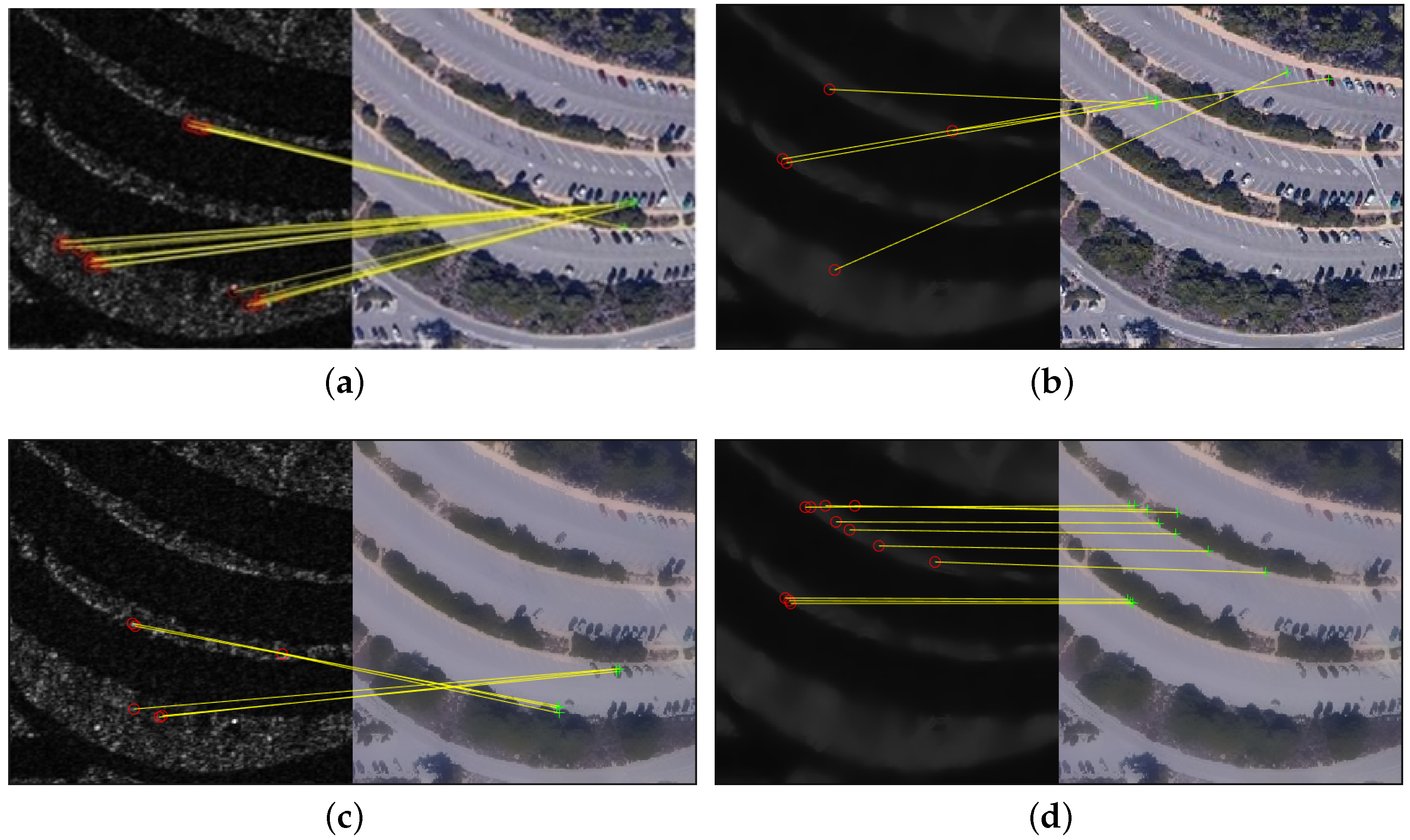

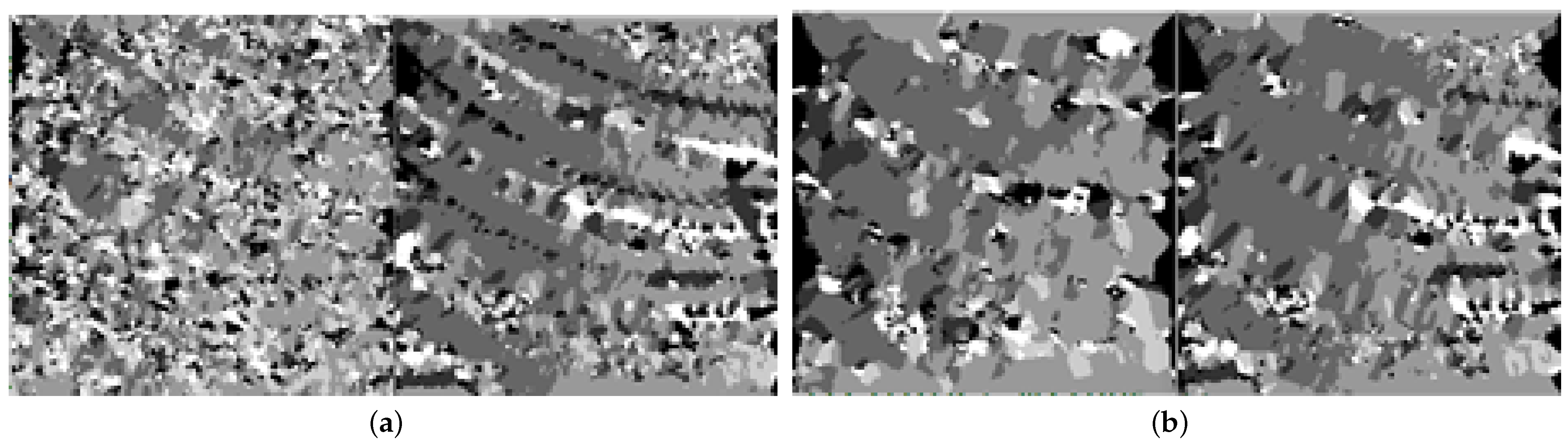

A comparison of the images with and without preprocessing is shown in Figure 3. The original images exhibit significant geometric and radiometric differences, which make high-precision matching more difficult. The speckle noise in the SAR image in Figure 3a is clearly filtered out in Figure 3b, and the textures in the optical image in Figure 3c are suppressed in Figure 3d while the edges remain complete. The edge information remaining in the two images is more consistent.

2.2. Edge Feature Detection

Edge information largely reflects the structural features of objects and is more stable across heterogeneous images, so feature detection based on complete edges provides a more reliable foundation for registration. The phase congruency model can overcome the effects of contrast and brightness changes and extracts more complete feature edges than gradients. The Log-Gabor filter maintains a zero direct current (DC) component in the even-symmetric filter, and its bandwidth can be chosen arbitrarily, which makes it well suited to calculating phase congruency. However, feature extraction based on it is time-consuming because of the high dimensionality: with the numbers of scales and orientations set to four and six, respectively, 4 × 6 = 24 filter responses must be computed for each image. Therefore, in this paper, a 2D orthogonal Log-Gabor filter (OLG) is used instead of the global filter to achieve feature dimension reduction [24].

The geometric structure of images can be well preserved by phase congruency. Oppenheim first identified the ability of phase to extract significant information from images [25], and Morrone and Owens later proposed a model of phase congruency [26]. Because the degree of phase congruency is independent of the overall signal amplitude, it is more robust and gives more detailed edge information than the gradient operator when employed for edge detection. Kovesi simplified the calculation of phase congruency with Fourier phase components and extended the model to 2D [14]. Kovesi employed Log-Gabor filters in the computation, which were shown to be more consistent with the human visual system.

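The intensity value of phase congruency is calculated as Equation (8):

$$PC(x,y)=\frac{\sum_{s}\sum_{o} w_{o}(x,y)\,\lfloor A_{so}(x,y)\,\Delta\Phi_{so}(x,y)-T\rfloor}{\sum_{s}\sum_{o} A_{so}(x,y)+\xi} \tag{8}$$

where $s$ and $o$ respectively denote the filter scale and orientation, $w_o$ denotes the weight, $T$ is the noise threshold, $\lfloor\cdot\rfloor$ sets negative values to zero, and $\xi$ is a minimal constant preventing the denominator from being 0. $\Delta\Phi_{so}$ is the phase deviation, and $A_{so}$ and $\phi_{so}$ denote the amplitude and phase of the PC, respectively, which can be derived from Equations (9) and (10):

$$A_{so}(x,y)=\sqrt{E_{so}(x,y)^2+O_{so}(x,y)^2} \tag{9}$$

$$\phi_{so}(x,y)=\arctan\left(\frac{O_{so}(x,y)}{E_{so}(x,y)}\right) \tag{10}$$

$E_{so}$ and $O_{so}$ represent the results of convolving the image $I$ with the even-symmetric and odd-symmetric filters, respectively. The calculation formula is shown in (11):

$$[E_{so}(x,y),\,O_{so}(x,y)]=[I(x,y)*L^{even}(x,y,s,o),\;I(x,y)*L^{odd}(x,y,s,o)] \tag{11}$$

where $L^{even}$ and $L^{odd}$ are the even-symmetric and odd-symmetric parts of $L$. $L$ is a 2D Log-Gabor filtering function, defined as (12):

$$L(\rho,\theta,s,o)=\exp\left(-\frac{(\rho-\rho_s)^2}{2\sigma_\rho^2}\right)\exp\left(-\frac{(\theta-\theta_{so})^2}{2\sigma_\theta^2}\right) \tag{12}$$

where $(\rho,\theta)$ are log-polar coordinates, $\sigma_\rho$ and $\sigma_\theta$ represent the bandwidths, and $\rho_s$ and $\theta_{so}$ are the center frequencies of Log-Gabor filtering.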

Edges extracted with phase congruency are largely unaffected by local illumination variations in the image, and they retain rich information, especially at edges with low contrast.
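The computation can be sketched with a frequency-domain Log-Gabor bank in NumPy. This is a simplified illustration, not the authors' implementation: it uses the basic energy-over-amplitude definition of phase congruency without the weighting and noise compensation terms, and the function names, the filter parameters (`min_wavelength`, `mult`, `sigma_f`), and the angular spread are assumptions chosen for the example.

```python
import numpy as np

def log_gabor_bank(shape, n_scales=4, n_orients=6,
                   min_wavelength=3.0, mult=1.6, sigma_f=0.75):
    """Frequency-domain Log-Gabor filters, shape (n_scales, n_orients, H, W)."""
    rows, cols = shape
    fy = np.fft.fftfreq(rows)[:, None]
    fx = np.fft.fftfreq(cols)[None, :]
    radius = np.hypot(fx, fy)
    radius[0, 0] = 1.0                       # avoid log(0); DC is zeroed below
    theta = np.arctan2(-fy, fx)
    sigma_theta = np.pi / n_orients / 1.2    # assumed angular spread
    bank = np.empty((n_scales, n_orients, rows, cols))
    for s in range(n_scales):
        f0 = 1.0 / (min_wavelength * mult ** s)          # center frequency of scale s
        radial = np.exp(-np.log(radius / f0) ** 2 / (2 * np.log(sigma_f) ** 2))
        radial[0, 0] = 0.0                               # zero DC component
        for o in range(n_orients):
            angle = o * np.pi / n_orients
            dtheta = np.abs(np.arctan2(np.sin(theta - angle), np.cos(theta - angle)))
            bank[s, o] = radial * np.exp(-dtheta ** 2 / (2 * sigma_theta ** 2))
    return bank

def phase_congruency(img, bank, eps=1e-4):
    """Return (pc, amp): a simplified PC map and the per-filter amplitudes."""
    spectrum = np.fft.fft2(img)
    resp = np.fft.ifft2(spectrum[None, None] * bank)     # inverse FFT over the last two axes
    even, odd = resp.real, resp.imag                     # even- and odd-symmetric responses
    amp = np.hypot(even, odd)
    # Local energy per orientation: magnitude of the responses summed over scales.
    energy = np.hypot(even.sum(axis=0), odd.sum(axis=0))
    pc = energy.sum(axis=0) / (amp.sum(axis=(0, 1)) + eps)
    return pc, amp
```

Where the responses at all scales share the same phase, as at a step edge, the summed energy approaches the summed amplitude and the ratio approaches 1 regardless of the edge contrast. The default of four scales and six orientations mirrors the setting stated in the text and yields 24 filtered images per input, which is the cost the OLG is meant to reduce.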

A 2D OLG is formed from two Log-Gabor filters with mutually orthogonal orientations, which can be expressed by (13). The proposed OLG reduces the amount of computation while avoiding feature redundancy, and thus improves the running speed while preserving the integrity of the features.

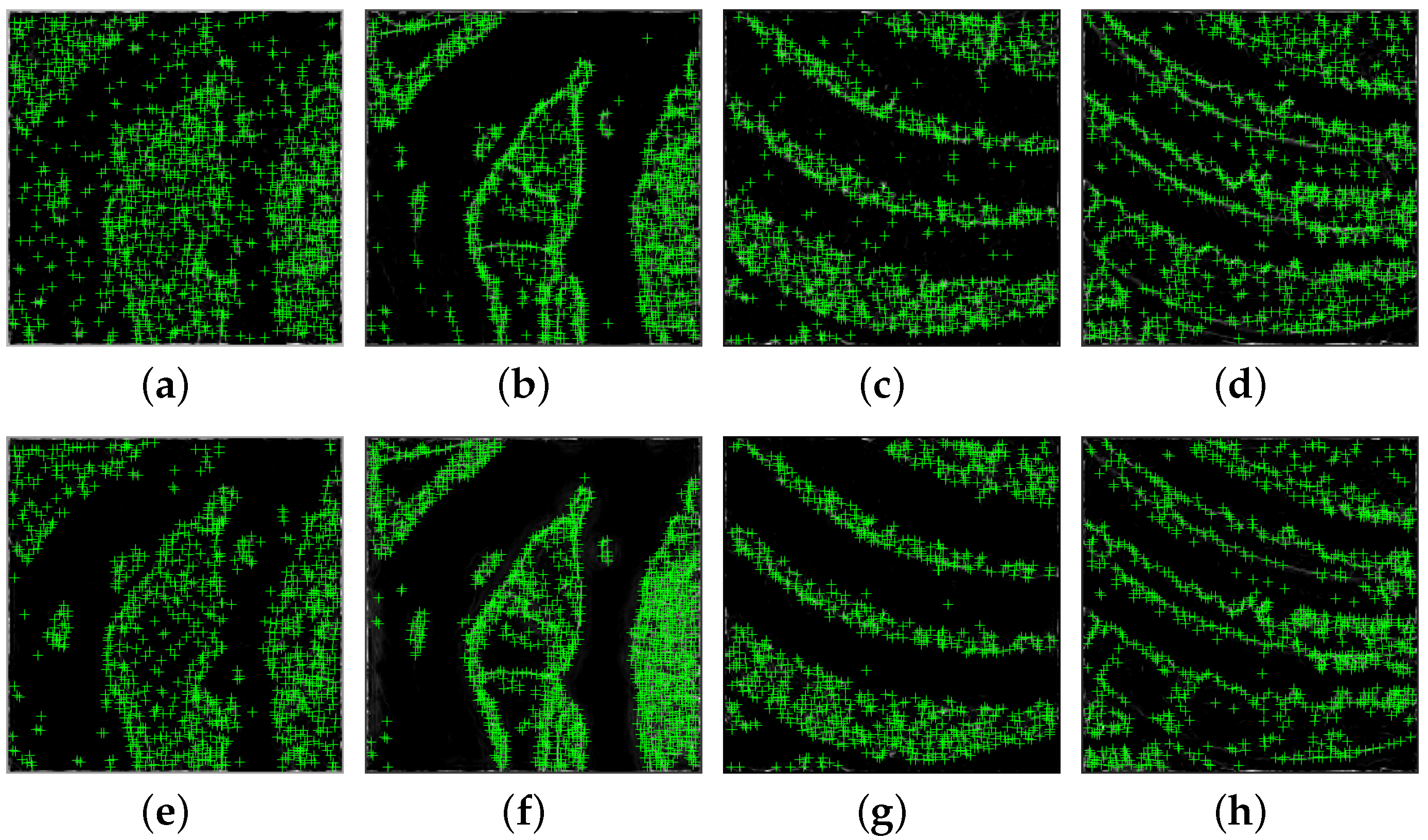

To validate the effect of the OLG algorithm on removing redundant features, the FAST algorithm is applied to the phase congruency representations produced by each of the two strategies, and the results are shown in Figure 4. Subfigures (a)–(d) show the features detected with the LG algorithm, and (e)–(h) show those detected with the OLG algorithm. To illustrate the effectiveness of the proposed algorithm, image pairs SAR1/OPT1 and SAR2/OPT2 of different landforms are selected for comparison. It can be observed that the OLG algorithm depicts the image edges better, and some of the isolated points are removed compared with the LG results. The feature points obtained by the orthogonal method tend to be more recognizable, which makes correct matches more likely in the subsequent processing.

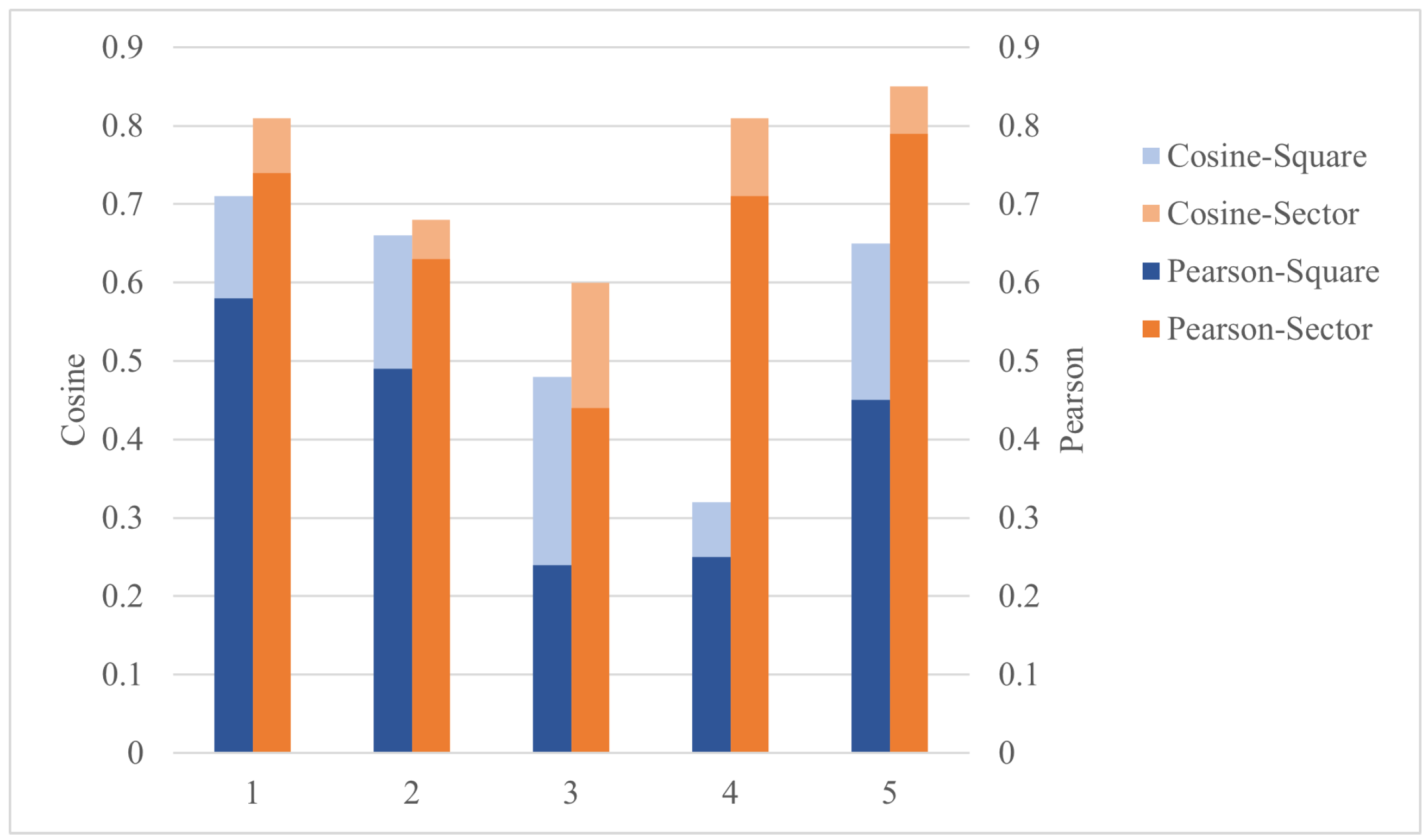

2.3. Sector Descriptor Construction

Following the RIFT algorithm, the descriptors of EC-RIFT are constructed on the MIM. The amplitudes at all scales are first accumulated for each orientation, and the MIM is then formed by recording, at each pixel, the index of the orientation whose accumulated amplitude is the largest.
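The MIM construction reduces to a sum followed by an arg-max, as the following NumPy sketch shows. The function name `max_index_map` and the array layout `(n_scales, n_orients, H, W)` are assumptions made for the example, and the orientation index is 0-based here.

```python
import numpy as np

def max_index_map(amp):
    """amp: Log-Gabor amplitudes with shape (n_scales, n_orients, H, W)."""
    per_orient = amp.sum(axis=0)       # accumulate over scales -> (n_orients, H, W)
    return per_orient.argmax(axis=0)   # index of the strongest orientation per pixel
```

Because only the index of the strongest orientation is kept, the map depends on which orientation dominates rather than on how strong the response is, which is consistent with the insensitivity to radiation changes described for RIFT.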

To enhance the robustness and uniqueness of the descriptors, a GLOH-like approach is chosen to construct the descriptors in this paper [27]. For the features obtained based on edge consistency, the statistical histogram in log-polar coordinates makes the descriptors more sensitive to near points than to distant points. As shown in Figure 5, the pixels in the neighborhood of each keypoint are divided by log-polar concentric circles with radii set to 0.25 r and 0.75 r, where r is the radius of the neighborhood. If the divided sectors are too small, each contains insufficient feature information, whereas a larger number of sectors increases the descriptor dimension and the computational burden. The pixels are divided into eight equal parts in the angular direction, each spanning π/4, so that 16 sectors and 1 circle are formed, for a total of 17 image sub-blocks. The points on the same ring are close to each other, so the feature points are equally discriminated. Each block computes a histogram of six directions, resulting in a descriptor vector of 17 × 6 = 102 dimensions. To simplify the calculation, if a descriptor component exceeds the threshold value, the component is set to the threshold, which is empirically set to 0.2 in this paper. Finally, the descriptor vector is normalized.
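The sector layout can be sketched in NumPy as follows. This is an illustrative sketch rather than the authors' code: the function name `sector_descriptor`, the default radius, and the SIFT-style order of operations (normalize, clip at the threshold, normalize again) are assumptions, and the keypoint is assumed to lie at least `r` pixels from the image border.

```python
import numpy as np

def sector_descriptor(mim, center, r=24, n_orients=6, clip=0.2):
    """102-D descriptor from an integer MIM (values 0..n_orients-1) around `center`."""
    cy, cx = center
    ys, xs = np.mgrid[cy - r:cy + r + 1, cx - r:cx + r + 1]
    dy, dx = ys - cy, xs - cx
    rho = np.hypot(dx, dy)
    inside = rho <= r                                       # circular neighborhood
    ang = np.mod(np.arctan2(dy, dx), 2 * np.pi)
    sector = np.minimum((ang / (np.pi / 4)).astype(int), 7)  # eight sectors of pi/4
    # Block 0: central circle; blocks 1-8: middle ring; blocks 9-16: outer ring.
    block = np.where(rho <= 0.25 * r, 0,
                     np.where(rho <= 0.75 * r, 1 + sector, 9 + sector))
    patch = mim[cy - r:cy + r + 1, cx - r:cx + r + 1]
    hist = np.zeros((17, n_orients))
    np.add.at(hist, (block[inside], patch[inside]), 1.0)    # per-block orientation histogram
    desc = hist.ravel()
    desc /= max(np.linalg.norm(desc), 1e-12)
    desc = np.minimum(desc, clip)                           # limit dominant components
    desc /= max(np.linalg.norm(desc), 1e-12)
    return desc
```

The central circle and the middle ring cover small areas close to the keypoint, so nearby pixels are described at a finer spatial resolution than distant ones, which matches the stated intent of the log-polar layout.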

2.4. Feature Matching and Outlier Removal

An effective algorithm for matching feature points is the nearest neighbor distance ratio (NNDR) method proposed by Lowe. For each feature, it compares the distance to the nearest neighbor with the distance to the second nearest neighbor and filters out features with low discrimination. A feature point is more likely to be correctly matched if its most similar descriptor is close and its second most similar descriptor is much farther away.
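The ratio test can be written in a few lines of NumPy. The sketch below uses brute-force Euclidean distances; the function name `nndr_match` and the ratio value of 0.8 are assumptions for illustration, since the text does not state the threshold used.

```python
import numpy as np

def nndr_match(desc_a, desc_b, ratio=0.8):
    """Return an array of (index_in_a, index_in_b) pairs that pass the ratio test."""
    # Pairwise Euclidean distances between all descriptors, shape (len_a, len_b).
    d = np.linalg.norm(desc_a[:, None, :] - desc_b[None, :, :], axis=2)
    order = np.argsort(d, axis=1)[:, :2]          # nearest and second nearest in b
    rows = np.arange(len(desc_a))
    best, second = d[rows, order[:, 0]], d[rows, order[:, 1]]
    keep = best < ratio * second                  # reject ambiguous matches
    return np.column_stack([rows[keep], order[keep, 0]])
```

A descriptor that is almost equally close to two candidates fails the test and is discarded, which removes ambiguous matches before outlier rejection.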

After feature matching, some mismatched point pairs remain, so the EC-RIFT algorithm uses the FSC algorithm to remove the outliers and estimate the parameters of the affine transformation model.
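The idea of consensus-based outlier removal with an affine model can be illustrated as follows. Note that this sketch uses plain RANSAC as a stand-in and does not reproduce the FSC algorithm itself; the function names, the iteration count, and the pixel tolerance `tol` are assumptions for the example.

```python
import numpy as np

def fit_affine(src, dst):
    """Least-squares 2x3 affine matrix A such that dst ~= [src, 1] @ A.T."""
    X = np.hstack([src, np.ones((len(src), 1))])
    sol, *_ = np.linalg.lstsq(X, dst, rcond=None)   # solution has shape (3, 2)
    return sol.T

def ransac_affine(src, dst, n_iter=500, tol=3.0, seed=0):
    """Return (A, inlier_mask) for matched points src -> dst, each of shape (N, 2)."""
    rng = np.random.default_rng(seed)
    X = np.hstack([src, np.ones((len(src), 1))])
    best = np.zeros(len(src), dtype=bool)
    for _ in range(n_iter):
        idx = rng.choice(len(src), size=3, replace=False)   # minimal sample for an affine model
        A = fit_affine(src[idx], dst[idx])
        err = np.linalg.norm(X @ A.T - dst, axis=1)         # reprojection error in pixels
        inliers = err < tol
        if inliers.sum() > best.sum():
            best = inliers
    # Refit on the largest consensus set found.
    return fit_affine(src[best], dst[best]), best
```

Three point pairs determine the six affine parameters, so each iteration fits a candidate model from a minimal sample and counts how many matches agree with it. The final least-squares fit over the largest consensus set gives the transformation used to align the images.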