Abstract

Background: Policy implementation measurement lacks an equity focus, which limits understanding of how policies addressing health inequities, such as Universal School Meals (USM), can elicit intended outcomes. We report findings from an equity-focused measurement development study, which had two aims: (1) identify key constructs related to the equitable implementation of school health policies and (2) establish face and content validity of measures assessing key implementation determinants, processes, and outcomes. Methods: To address Aim 1, study participants (i.e., school health policy experts) completed a survey to rate the importance of constructs that the research team identified from the implementation science and health equity literature. To accomplish Aim 2, the research team developed survey instruments to assess the key constructs identified in Aim 1 and conducted cognitive testing of these survey instruments among multiple user groups. The research team iteratively analyzed the data; feedback was categorized as “easy” or “moderate/difficult” to facilitate decision-making. Results: The Aim 1 survey had 122 responses from school health policy experts, including school staff (n = 76), researchers (n = 22), trainees (n = 3), leaders of non-profit organizations (n = 6), and others (n = 15). For Aim 2, cognitive testing feedback from 23 participants was predominantly classified as “easy” revisions (69%) versus “moderate/difficult” revisions (31%). Primary feedback themes comprised (1) comprehension and wording, (2) perceived lack of control over implementation, and (3) unclear descriptions of equity in questions. Conclusions: Through adaptation and careful dissemination, these tools can be shared with implementation researchers and practitioners so they may equitably assess policy implementation in their respective settings.

1. Introduction

Within high-income countries, such as the United States, inequities persist in the prevalence of diseases such as overweight/obesity, type 2 diabetes, and cancer [1,2]. These inequities can be attributed to the growing divide in social and environmental conditions and an increasing wealth gap, which limits opportunities for low-income and marginalized racial and ethnic populations to engage in health-enhancing behaviors [3,4,5]. Public schools serving students from early grades to adolescence are a critical setting to address health inequities at an early age. Policies that promote opportunities for preventive health behavior such as healthy eating, physical activity, and mental health have significant potential to close gaps in access to resources (e.g., affordable groceries and walkable neighborhoods) across populations [6,7,8,9]. For example, policies that ensure the provision of free healthy meals in schools serving low-income populations can significantly mitigate food insecurity, enhance dietary quality, and improve education-related outcomes in children [10,11]. Unfortunately, policy evaluation traditionally focuses only on behavioral outcomes and lacks rigorous understanding of how these policies are implemented. The Ottawa Charter for Health Promotion was developed to align targets for health promotion across the globe, taking into consideration the specific needs of low-, middle-, and high-income countries, but to date no measurement tools or metrics exist that seek to address these goals [12]. Thus, a gap in the measurement literature needs to be addressed to align efforts nationally and globally to improve population health.

Policy implementation science is a growing component of dissemination and implementation science, which offers rigorous methodologies to understand how and why policies are implemented, with a key focus on health equity from the beginning [13,14,15]. A global systematic review of existing quantitative school policy implementation measures [16] revealed several limitations. First, most of the 87 measures focused on a limited set of implementation determinants and outcomes, while many relevant constructs were under assessed. For example, fidelity/compliance to policies was the most commonly measured outcome; the most common determinants measured were policy actor/relationships, resources (i.e., financial and space), and leadership for implementation [16]. Second, most tools focused on only a few key constructs outside of fidelity, indicating a missed opportunity to gather contextual information about how or why implementation fidelity was low/high. These findings reflect earlier work that focused on health policies in healthcare, community settings, and other aspects of public health [17]. Finally, none of the 87 measures found focused explicitly on health equity frameworks or theories, and validation methods focused primarily on internal and/or concurrent validity of items. This highlights a lack of consideration for the lived experience of practitioners (i.e., teachers and staff) or policy recipients (i.e., community members and students) [17]. The lack of methodological approaches grounded in practitioner input from the beginning of development limits external validity and may impede rigorous understanding of how policies are implemented “on the ground”.

A major gap in implementation science is equitable implementation [18,19], which refers to the study of methods to promote the adoption and integration of evidence-based practices, interventions, and policies into routine healthcare and public health settings to improve our impact on population health. This work should build on the intellectual contributions of health equity scholars who have led the field for decades to avoid the duplication of efforts and health equity tourism [20,21,22]. This study builds on existing frameworks from health equity and implementation science, marking a step to bridge the gap between these areas for the sake of advancing policy and practice. Scholars in implementation science indicate the need for measures to be more widely available [13]. Open dissemination of measures avoids “reinventing the wheel” with single-use measures, allows for replication across studies, and facilitates the validation and refinement of measures over time. This ultimately will enhance the understanding of how policy implementation can be adapted and improved to yield optimal impact [13,23]. Finally, given a lack of meaningful engagement of policy recipients (e.g., children/students and parents/caregivers), a critical need is engaging these groups in measurement development [24,25,26]. Accordingly, the goal of this paper is to report the results of a measurement development study grounded in health equity and implementation science (Open Science Framework Registration doi: 10.17605/OSF.IO/736ZU; Temple University IRB #3000) conducted from 2022 to 2023 to bridge the gap between policy and practice [25]. This study had two primary aims:

- Identify key constructs related to the equitable implementation of school health policies through a collaborative approach.

- Create measurement tools for key implementation determinants, processes, and outcomes and establish face and content validity through review of the health equity literature and rigorous expert engagement.

2. Materials and Methods

This manuscript reports findings from an iterative measurement development study with two distinct phases. A description of the study protocol is published elsewhere and provides extensive background literature and rationale for the methods used to address these two aims [27]. In the sections below, we summarize these methods and provide further details of participant recruitment and data analysis [27,28,29,30,31,32]. We sought to develop survey instrument tools suitable for school administrators, teachers/staff, parents/caregivers, and students, which would be tested by individuals from each group. For an initial policy target, we chose to develop these tools for school nutrition policies (e.g., school meals and wellness), given the interests and expertise of the research team. A separate adaptation guide was developed [33], funded by the National Cancer Institute Consortium of Cancer Implementation Science (CCIS), which allows researchers and practitioners to adapt these tools to (1) other primary prevention of cancer behaviors (i.e., physical activity and tobacco) and (2) other settings outside schools (i.e., healthcare settings, community organizations, and workplaces).

2.1. Aim 1 Methods

To identify relevant constructs for assessment, the research team conducted a narrative literature review to identify key frameworks in the implementation science and health equity fields. The goal was to assemble a core set of frameworks that could comprise constructs for the implementation determinants, processes, and outcomes of school health policy. Research team members (GMM, CWB, CS, LT) collated articles describing the development of frameworks in the implementation science, health policy, and health equity literature, with a focus on frameworks that included an accompanying measure, or for which measures of framework constructs had been developed. The team then conducted an initial assessment of each framework using a worksheet where each team member recorded details on the following items: article citation; setting/context; key constructs and relevance to school-based policy; levels of conceptualization; most salient framework type (i.e., determinants, processes, outcomes); associated measurement/evaluation tool? (if yes, state location); and priority for use (see Supplementary Materials File S1). The team met on a weekly basis to discuss frameworks and their suitability for inclusion. Once the set was finalized, we collaboratively organized framework constructs by determinants, processes, and outcomes, as shown in Table 1 below. Determinant frameworks were those guiding an understanding of key factors supporting/hindering implementation; process frameworks supported an understanding of “how” implementation takes place and the practices adopted; and outcome frameworks explicated the primary indicators of implementation success [34]. A full description of these frameworks and their constructs can be found in Supplementary Materials File S2. The team synthesized similar constructs that appeared across multiple frameworks.

Table 1.

Guiding implementation science and health equity theoretical frameworks.

Once the set of constructs was established, the team developed a Qualtrics survey where subject matter experts could rate the perceived importance of each construct to addressing access to school nutrition policy from their perspective. The primary objective was to gather feedback from a variety of experts, including practitioners (i.e., teachers, food service providers, school administrators), policymakers, representatives from relevant non-profit organizations (e.g., anti-hunger advocacy, school wellness), and researchers. A total of 44 constructs across the 6 frameworks were included in the survey. To enhance readability and avoid overuse of research terminology (i.e., “jargon”) the team adapted each construct to create a participant-facing item name and description, followed by an example item to assess this construct. For example, the CFIR construct Innovation Evidence-Base (Innovation Characteristics Domain) was called “Perception of Policy Evidence Base”, followed by an example question of “To what extent do you believe the evidence used to support this policy is credible?” to give some context behind the construct. The survey began with demographic questions followed by construct rating. For each construct, participants chose from six options: 1 = not important at all, 2 = not very important, 3 = neutral, 4 = somewhat important, 5 = very important, 6 = not applicable.

After the rating exercise, participants had the option to complete three open-ended questions:

- Please use this space to identify issues that you think are missing from this list. What else would be an important factor to consider in school policy implementation?

- Please provide any other suggestions, ideas, or comments regarding this project (you may also copy URLs to any relevant web-based materials in the space below).

- Please provide any additional experiences or feedback that are important to you that were not addressed in the sections above.

Finally, participants were asked if they would like to be contacted to take part in cognitive testing of the measurement tools in Aim 2. The full survey can be found in Supplementary Materials File S3.

2.2. Aim 1 Recruitment

Participant recruitment occurred via non-random purposive and snowball sampling in two phases. An email with a link to the Qualtrics survey was disseminated to organizations and partners to ensure reach to those in the K-12 school/community, research, and policy/advocacy settings nationwide. These organizations were as follows: (1) the Nutrition and Obesity Policy Research and Evaluation Network (NOPREN), funded by Healthy Eating Research (a program of the Robert Wood Johnson Foundation) and comprising researchers/policy advocacy experts in school nutrition and wellness, (2) the Urban School Food Alliance (funding this study; comprising 18 school districts across the United States), and (3) the School District of Philadelphia (to gain local-level feedback). Email solicitations were sent in September 2022, with additional follow-up emails to distribution lists in October and December. Afterwards, flyers were distributed to various groups to facilitate reach to as many practitioners as possible. A recruitment plan spreadsheet was drafted to track outreach efforts. As an incentive, each participant with complete responses was given the option to be entered into a draw to win 1 of 20 USD 25 gift cards. We anticipated a sample size of 100 people and estimated that participants would have a 20% chance of winning a gift card for participation (see email in Supplementary Materials File S4).

2.3. Aim 1 Analysis

Prior to analysis, the team used the “bot detection” feature in Qualtrics to identify potentially fake/autogenerated responses and removed all incomplete responses. Demographic and construct rating data were cleaned and analyzed to generate descriptive statistics (means, proportions, and frequencies) for each characteristic and construct, both for the whole sample and stratified by expert classification (e.g., school staff, researchers, policymakers) to identify differences between groups. Open descriptive analysis was conducted on free-response data to ascertain potential additional constructs/items to include in cognitive testing. These were cross-referenced with existing constructs in the construct bank to examine overlap. The analysis produced a mean score for each construct (ranging from 1 to 5; all “not applicable” responses removed), arranged by theoretical framework. Following analysis, the team met several times to “triage” which constructs to include in the cognitive testing phase and focused on the highest-scoring items as priority constructs for tool development in Aim 2.
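The construct scoring described above can be illustrated with a minimal sketch. It assumes ratings are stored as integers 1 to 6, with 6 denoting “not applicable”, and that variability is summarized with the sample standard deviation; the function names, group labels, and example responses are hypothetical and are not study data.

```python
from statistics import mean, stdev

NOT_APPLICABLE = 6  # assumed coding: option 6 = not applicable, excluded from scoring


def summarize(ratings):
    """Return (mean, sample SD, n) for 1-5 ratings after dropping N/A responses."""
    valid = [r for r in ratings if r != NOT_APPLICABLE]
    if not valid:
        return None  # no usable ratings for this construct
    sd = stdev(valid) if len(valid) > 1 else 0.0
    return round(mean(valid), 2), round(sd, 2), len(valid)


def summarize_by_group(responses):
    """Stratify (group, rating) pairs for one construct by expert classification."""
    groups = {}
    for group, rating in responses:
        groups.setdefault(group, []).append(rating)
    return {group: summarize(ratings) for group, ratings in groups.items()}


# Hypothetical responses for a single construct (illustration only).
responses = [
    ("practitioner", 5),
    ("practitioner", 4),
    ("practitioner", 6),  # "not applicable", dropped before averaging
    ("researcher", 5),
    ("researcher", 3),
]

overall = summarize([rating for _, rating in responses])
by_group = summarize_by_group(responses)
print(overall)
print(by_group)
```

Dropping the “not applicable” code before averaging keeps each mean within the 1 to 5 range; treating it as a numeric 6 would inflate scores for constructs that many respondents considered irrelevant.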

2.4. Aim 2 Methods

Upon analyzing findings from Aim 1, the research team met several times to discuss which constructs to prioritize in the cognitive testing phase. Given the availability of existing measurement tools on common implementation determinants and outcomes [40,41] (i.e., IOF, CFIR), greater priority was given to constructs previously not measured in policy implementation, thus prioritizing constructs from the HEMF, GTE, and R4P. For each framework, backwards citation searches were conducted on the published article to extensively search the literature for any previously developed measurement tools that we could adapt for the present study and limit unnecessary duplication of questions/items. This resulted in a spreadsheet documenting the frameworks and constructs, adapted construct definition (if applicable), sub constructs, existing items, potential inclusion and purpose, item source, availability of psychometric data, and source bibliometric information. Review of this worksheet helped identify gaps in measurement and places where the research team needed to develop new questions. Items were coded to denote which participant group(s) would complete them; codes were reviewed by the research team to reduce burden where questions were less applicable for a certain participant group (i.e., school administrators, teachers, food service staff, parents/caregivers, students). This resulted in a separate Word document for each framework with questions and target participant groups listed under each construct, to ensure that questions were guided by theory and the existing literature.

After several rounds of review, the research team developed the first round of surveys (called Version 1) for each target group: school food service staff, school administrators, teachers/staff, caregivers, and students. These documents were created by converging all questions into one document, and then organizing, formatting, and deciding on an initial response system for blocks of questions. Surveys were checked for brevity and readability to ensure parsimony and appropriate language for the target age group. To facilitate flow of completion, each survey began with questions addressing implementation determinants (i.e., from HEMF and CFIR) as Section 1, then Section 2 focused on implementation processes (i.e., R4P, GTE, FSD), and Section 3 ended with a focus on implementation outcomes (i.e., IOF). For students and caregivers, only 2 sections were included, which were 1 and 3; Section 2 was replaced with implementation outcome questions. Based on most resources and existing tools found, the team opted for a 5-point Likert scale (i.e., completely disagree, disagree, neutral, agree, completely agree) for most items, and remained faithful to other scoring scales if they differed from this format (i.e., 3-item or different scoring procedure). Before recruiting a sample for cognitive interviewing, the research team participated in the Adolescent Health Network hosted by Penn State PRO Wellness funded through a Patient-Centered Outcomes Research Institute (PCORI) at the Pennsylvania State University [42]. This network facilitated a panel session with 10 adolescents (US high school/last 4 years of secondary school ages) from across the state of Pennsylvania who provided informal feedback on the student-facing survey through a video conferencing platform. This was incredibly valuable and allowed the team to refine wording and items before conducting interviews so that participants’ time could be used more effectively.

2.5. Cognitive Interviewing

As described in the protocol [27], a PhD-level team member experienced in cognitive interviewing and qualitative methods trained the research team in conducting qualitative interviews. Training included a didactic presentation, review of the cognitive interviewing methods literature, and example procedures from previous studies [28]. The study team developed a detailed protocol that included a communication guide for recruiting and enrolling participants, conducting the interviews, and performing data analysis (see Supplementary Materials File S5). Interviews were conducted via Zoom by the study PI and two master’s-level research assistants. All interviews followed a semi-structured interview guide that asked participants to go through the survey section by section and item by item, providing detailed feedback on each set of instructions and each item. The guide included various prompt options that interviewers could use to probe for additional detail as needed.

Participants were randomly assigned to 1 of 2 conditions: (1) pre-review of the survey prior to the interview or (2) testing the survey during the interview. This was carried out to avoid potential bias during a live interview for some participants and to see whether pre-review allowed for more in-depth responses. The interview guide questions and flow (i.e., review of items in order) did not vary by condition. For condition 1, one of the team members emailed the survey and completion instructions to the participant approximately 48 h prior to the testing interview. Participants completed the survey via a shared online file (Google Drive), annotated the document with comments (e.g., highlighted confusing terms, redundant items), and returned it to the study team prior to the start of the interview. For condition 2, the interviewer shared the survey electronically with the participant just minutes prior to the interview so that the participant would not have time to review the survey. The interviewer instructed the participant to complete the survey at the beginning of the interview session. The interviewer then followed the interview guide to elicit feedback on the survey. The participant returned the survey to the study team at the conclusion of the interview.

2.6. Aim 2 Recruitment

All partners that supported recruitment for Aim 1 were contacted for Aim 2 with explicit language emphasizing that only practitioners (i.e., food service, teachers/staff, administration) and recipients (i.e., students, caregivers) would be eligible for cognitive interviews. Researchers and policy experts were excluded from Aim 2 to ensure that development was grounded in the needs of those who would be asked to complete such surveys. A flyer was distributed in February 2023, followed by additional nudges in March and April (see Supplementary Materials File S6). Participants were provided a USD 25 electronic gift card, which was emailed following the conclusion of the cognitive testing interview. Although the lead author is semi-fluent in Spanish, the team decided it would be more appropriate to conduct all interviews in English and develop separate adaptation guides in Spanish following finalization of English tools, grounded in recommendations from global implementation science experts [43].

2.7. Aim 2 Analysis

Analysis of cognitive interview data comprised several steps. First, guided by the work of LaPietra and colleagues [28], the research team developed a deductive coding matrix for each participant group with each question, response provided, classification of feedback (easy versus moderate/difficult), and a potential action to be taken by the research team in the next iteration of surveys (i.e., version 2, 3, 4, etc.). Following such guidance, “easy” feedback was classified as comments that had a straightforward solution, such as correction of an error, deletion, or modification of a word, change to phrasing, or request for examples of item descriptions. For moderate or difficult categories, the team coded feedback related to issues such as comprehension of the topic, appropriateness of the question for a certain group, and related issues that required team discussion and decision-making before revisions could be made to the surveys. All feedback inputted into the Google document, transcripts, and researcher notes was entered into the coding matrix (see Supplementary Materials File S7).
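To make the structure of the coding matrix concrete, the sketch below represents each row as a record and tallies the share of feedback in each classification. It is an illustration under stated assumptions: the field names, the `FeedbackEntry` class, and the example rows are hypothetical and do not reproduce the study's matrix or data.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class FeedbackEntry:
    """One row of a (hypothetical) coding matrix."""
    participant_group: str  # e.g., "student", "caregiver"
    question: str           # survey item the comment refers to
    comment: str            # feedback provided by the participant
    classification: str     # "easy" or "moderate/difficult"
    action: str             # proposed change for the next survey version


def classification_shares(entries):
    """Return the percentage of entries in each classification, rounded to whole numbers."""
    counts = Counter(entry.classification for entry in entries)
    total = sum(counts.values())
    return {label: round(100 * n / total) for label, n in counts.items()}


# Hypothetical rows (illustration only).
entries = [
    FeedbackEntry("student", "Q3", "unfamiliar word", "easy", "replace word"),
    FeedbackEntry("student", "Q7", "typo in item", "easy", "correct error"),
    FeedbackEntry("caregiver", "Q2", "wants an example", "easy", "add example"),
    FeedbackEntry("teacher", "Q9", "item not relevant to role", "moderate/difficult", "team discussion"),
]

shares = classification_shares(entries)
print(shares)
```

Keeping the proposed action alongside each comment lets the same table serve both as a record of decisions and as the basis for summary counts by participant group.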

The research team met on a weekly basis to review feedback, discuss both the easy and moderate/difficult feedback from participants, and decide how to modify a question based on feedback. Due to the iterative nature of data collection, incremental changes were made in between “rounds” of interviews so that the initial participants reviewed earlier versions of the survey, and later respondents reviewed surveys after the team had made small changes. This step was followed by thematic analysis [44] of interview transcripts to identify overarching themes from feedback with a focus on pragmatic issues with the measurement tools to help inform changes to the survey, following a similar approach to previously published research [39]. Once surveys were finalized, the team used the Psychometric and Pragmatic Evidence Rating Scale (PAPERS) standardized scale [45] to rate the measures on five pragmatic properties: brevity, cost, training, interpretation, and readability. The PAPERS has been used in prior work such as systematic reviews of policy implementation measurement tools [16,17]; its use in the current study facilitates comparison of the resulting measures against existing tools within the field of policy implementation science.
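A total pragmatic score can be sketched as the sum of the five property ratings. The example assumes each property is rated on the PAPERS scale from -1 (poor) to 4 (excellent), giving a maximum of 20; the function name and the example ratings are hypothetical and are not the ratings assigned in this study.

```python
PRAGMATIC_PROPERTIES = ("brevity", "cost", "training", "interpretation", "readability")
MIN_RATING, MAX_RATING = -1, 4  # assumed PAPERS rating range per property


def papers_pragmatic_total(ratings):
    """Sum the five pragmatic property ratings after checking completeness and range."""
    missing = [p for p in PRAGMATIC_PROPERTIES if p not in ratings]
    if missing:
        raise ValueError(f"missing ratings for: {', '.join(missing)}")
    for prop in PRAGMATIC_PROPERTIES:
        if not MIN_RATING <= ratings[prop] <= MAX_RATING:
            raise ValueError(f"{prop} rating out of range: {ratings[prop]}")
    return sum(ratings[prop] for prop in PRAGMATIC_PROPERTIES)


# Hypothetical ratings for one survey (illustration only).
example = {"brevity": 3, "cost": 4, "training": 2, "interpretation": 3, "readability": 3}
total = papers_pragmatic_total(example)
print(f"{total}/{MAX_RATING * len(PRAGMATIC_PROPERTIES)}")
```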

3. Validity, Reliability, and Generalizability

The validity of the data analysis methods in Aims 1 and 2 was established by following a measurement development methodology and participant engagement techniques, consistent with existing guidance in the field [27,28,29,30,31,32]. Participant triangulation was used to ensure that themes from the cognitive interviews were consistent across different populations (i.e., practitioners and recipients) and representative of marginalized voices. To establish reliability and generalizability, the research team relied heavily on the coding matrix as an audit trail [46,47] to facilitate analysis of key trends in data. In addition to the matrix, the team regularly conducted peer debriefing through weekly meetings and made notes on the matrix that all team members could add to in between meetings, to ensure all members of the team had an equal voice. Finally, the team looked for negative cases in the themes to ensure adequate interpretation of the findings.

4. Results

4.1. Aim 1 Results

A total of 122 participants completed the survey for Aim 1, most of whom were practitioners in the K-12 setting. All participant demographics including role, race/ethnicity, and education level can be found in Table 2.

Table 2.

Aim 1 participant demographic information.

Construct rating data (Table 3) revealed that among the entire sample (N = 122), the top-rated constructs were Unanticipated Events from the CFIR (4.63 ± 0.75), Socioeconomic, Cultural, and Political Context from the HEMF (4.61 ± 0.89), Fidelity/Compliance from the IOF (4.53 ± 0.85), Material Circumstances from the HEMF (4.53 ± 0.83), and Meet Basic Food Needs with Dignity from the FSD framework (4.51 ± 0.81). These were largely consistent with the top-rated constructs of school practitioners (n = 79). However, when the sample was split into practitioners versus researchers/policy advocacy groups/other experts, the highest rated constructs in the latter group were as follows: Socioeconomic, Cultural, and Political Context (4.86 ± 0.52), Material Circumstances (4.81 ± 0.55), Cost (4.79 ± 0.65), Acceptability (4.76 ± 0.66), and Feasibility (4.76 ± 0.48) from the IOF.

Table 3.

Construct rating data reported for the whole sample, school practitioners, and remaining participants.

The lowest ranked constructs according to practitioners were as follows: Build on Community Capacity from GTE (3.72 ± 1.31), Social Location from the HEMF (3.73 ± 1.37), Relative Priority from the CFIR (3.74 ± 1.37), and Psychosocial Stressors from the HEMF (3.81 ± 1.53). Of these, only Social Location was ranked among the lowest by researchers/policy/other (4.36 ± 1.01), with the lowest score assigned to Innovation Evidence-Base from the CFIR (4.26 ± 1.01). Across all constructs, higher ratings were provided by researchers/policy/other than practitioners, indicating a potential difference between these two groups. Participants who provided responses to open-ended questions provided some additional items/constructs to consider, such as the overall quality of school meals, student input, community buy-in, supply chain constraints, and equipment availability for implementation. Aside from food quality, these suggestions all aligned with constructs in the survey, but were valuable for the research team to prioritize when developing specific survey items.

4.2. Aim 2 Results

A total of 42 individuals responded to the opportunity to interview; 27 provided accurate information and interview schedules, and 23 completed the interview. Of the four who did not show for or complete an interview, three were food service staff or managers and one was a teacher/staff member. Table 4 shows the demographic information for all participants, in addition to the demographics of the schools/districts they work in or are a part of.

Table 4.

Cognitive Interview Participant Demographic Information.

Across all cognitive interviews, we recorded 315 total comments from participants. Table 5 below shows the distribution of feedback type, split by “easy” and “moderate/difficult” for each participant type, followed by the totals and average. Most feedback was classed as easy and comprised predominantly clarification requests, simplification of questions, or removing unnecessary words.

Table 5.

Summary of feedback type by participant group.

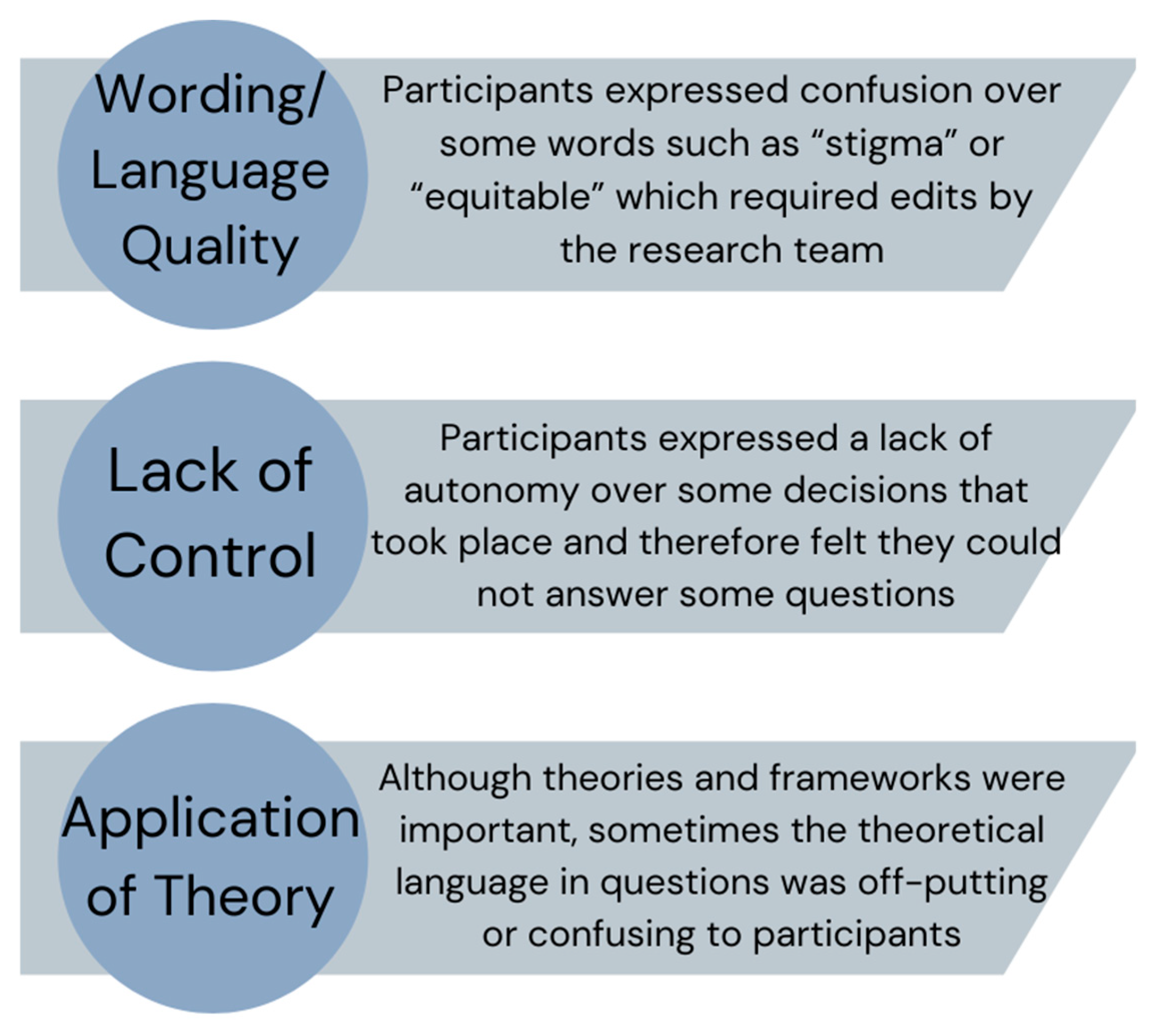

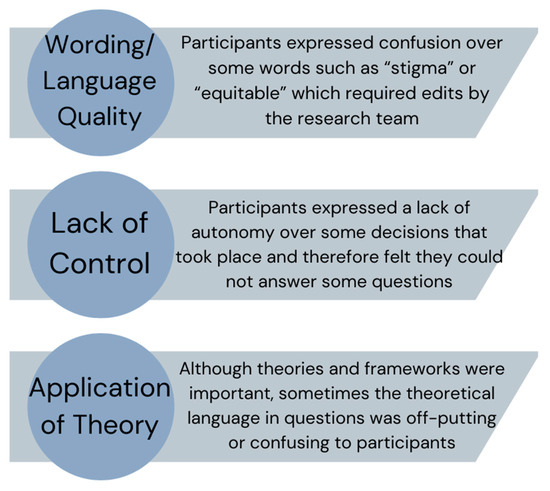

In addition to the comments provided directly on the documents and the main actions taken from interviews, we coded overarching themes from the interviews to facilitate overall refinement of the surveys and guide future research. Three main themes arose from the cognitive interviews: (1) wording/comprehension issues, (2) control over policy implementation, and (3) grounded in theory but not translated well. These are described in Figure 1 and the text below, along with key quotes from participants to illustrate the issues requiring attention.

Figure 1.

Summary of themes from cognitive interviews.

4.3. Wording/Comprehension Issues

Much of the “easy”-coded feedback pertained to words that were too long or “sophisticated” for people to understand. As a result, the researchers often had to explain the whole question to respondents, signaling that these questions needed to be shortened or reworded. One school food service manager commented “…like equitable. What do you mean?” Another example came from a student in the seventh grade, “I don’t exactly know what stigmatized is. So I had to Google a definition for that one”. This was in response to a question from the FSD framework around addressing stigmatization of those from disadvantaged backgrounds. These key words were used several times in the surveys, so the research team had to find ways to adapt, especially for those with a lower reading level (i.e., students). To help increase comprehension, respondents often provided alternate wording during the interview. One example came from the IOF and a question related to policy complexity, where a district food service director commented, “The difficulty with [question] Number 5 was, ‘it is/was difficult for me to learn the requirements of the policy’… the difficulty to interpret and apply…or you could even say, ‘operationalize’”. Another came from a student,

“I made one comment about changing the wording because it says, ‘Are there any opportunities for you to learn about school meals programs and be involved?’ The answer is probably yes, but I, personally don’t know of any… So, I said maybe word it, ‘Do you know of any opportunities for this about the school meals programs to be involved?’” (Student, 12th grade)

4.4. Control over Policy Implementation

There was an overarching sense that participants did not feel in control of the implementation of school meals. Specifically, one district food service director stated, “I think it’s gonna be difficult for a food service worker who doesn’t know anything about free school meals to understand the implementation”. This was echoed by a school-level food service manager who said “That [question] wouldn’t necessarily again be relevant on my level of management for an individual school”. After the first round of testing, we decided to split the food service surveys into school level and district level, reducing the length of the survey for school-level food service participants. Even after this separation, one district food service director, in response to the interviewer asking if any questions were confusing, said “Some of [the questions] are aspirational, and not necessarily what we’re doing now”, which made them harder to answer.

Furthermore, teachers, students, and caregivers reported an overall lack of input in school meals; thus, many questions felt irrelevant to them. For example, some questions in the caregiver survey asked about the level of parent input, to which one parent commented “It doesn’t seem like there’s any like avenue to voice any opinions or concerns, not that I have any”. The team handled this latter kind of feedback somewhat differently: although many participants did not feel they could answer, we felt it important to keep these questions in the final surveys and replaced the “neutral” option with an “N/A” option. This change was met with approval from subsequent participants who responded to the surveys.

4.5. Grounded in Theory but Not Translated Well

Some questions that were grounded in the health equity frameworks, although making empirical sense, did not translate well into a survey and required significant rewording. One question for teachers, grounded in the HEMF, expanded on the Socioeconomic, Cultural and Political Context and asked how non-English speakers are prioritized in school meal policies. One teacher commented, “So, I’m not sure how linguistic preferences influence a meal because I feel like a person’s language depends more on their national background or their racial and ethnic background.” In some cases, participants, especially students, did not see the urgency of gaining community member input. For example, one high school (US 12th grade) student commented, “I think that the student opinion is more important than the community and family member voices. So, I wrote that I didn’t think that they were particularly involved. But I also don’t think that’s inherently a bad thing.” Another example came from a school food service director who was completing a question asking what demographic characteristics (i.e., race/ethnicity, income level) make students less likely to participate in school meals:

“I’m not sure that I would know what characteristics make a student less likely to participate. I would think a lot of what makes students less likely to participate in school meals is they’re unsure how to go about going to the line and putting in their [ID] number.”

This insight came from a participant in a predominantly white school district, and therefore provided helpful feedback from their perspective, adding to insights from participants from more racially diverse settings.

4.6. Pragmatic Properties of Surveys

Once the team finalized all surveys (Supplementary Materials File S8), we scored them according to the PAPERS pragmatic scale to understand how feasible they are to implement in practice. Overall, the measures scored high for their pragmatic properties. The student survey scored 17/20 and all other surveys scored 16/20, indicating overall good to excellent practicality. All measures are freely available and have detailed instructions for the administration, scoring, and interpretation of scores. All surveys except the caregiver survey scored a 4 (excellent) on readability. All surveys were written between a fifth- and eighth-grade reading level, indicating their accessibility to most target audiences. Table 6 below shows the scoring results for each measure.

Table 6.

Pragmatic properties of surveys by participant type.
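As a rough illustration of how a total out of 20 can arise, the sketch below sums five criterion ratings, each capped at 4 (excellent). The criterion names, the lower bound of -1, and the example ratings are assumptions made for illustration only; they are not the values reported in Table 6.

```python
# Minimal sketch of tallying a pragmatic score out of 20.
# ASSUMPTIONS (not taken from the article): five criteria with these
# names, each rated from -1 to 4. The article reports only totals out
# of 20 and that a rating of 4 means "excellent".
CRITERIA = ("cost", "readability", "training", "interpretation", "length")
MIN_RATING = -1
MAX_RATING = 4


def pragmatic_total(ratings):
    """Return the summed pragmatic score for one survey."""
    missing = set(CRITERIA) - set(ratings)
    if missing:
        raise ValueError(f"missing criteria: {sorted(missing)}")
    for name in CRITERIA:
        if not MIN_RATING <= ratings[name] <= MAX_RATING:
            raise ValueError(f"rating out of range for {name}")
    return sum(ratings[name] for name in CRITERIA)


# Hypothetical ratings, chosen only so the arithmetic is easy to follow.
example_ratings = {
    "cost": 4,
    "readability": 4,
    "training": 3,
    "interpretation": 3,
    "length": 3,
}

total = pragmatic_total(example_ratings)
maximum = len(CRITERIA) * MAX_RATING
print(f"{total}/{maximum}")
```

Checking that every criterion is present and within range before summing keeps an incomplete rating sheet from being reported as a low score.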

5. Discussion

The aims of this study were to identify important constructs related to the equitable implementation of school health policies, create measurement tools for key implementation determinants, processes, and outcomes, and establish face and content validity. This paper reported findings from a 2-year project to develop equity-informed implementation measurement tools, with the goal of advancing the field of policy implementation science. A key innovation and strength of this study is a primary focus on policy practitioners (i.e., school/district practitioners) and policy recipients (i.e., students and parents/caregivers), demonstrating a commitment to equity in the measurement process by prioritizing reach in the study design [48].

Findings from Aim 1 provided important insights from a diverse group of key informants such as teachers/school staff, food service providers, researchers, trainees, and policy advocacy experts. When analyzing ratings, we found that scores were consistently higher among researchers than among practitioners (i.e., those working in the school setting), meaning that researchers tended to rate each construct as more important. Given the novel nature of this study and a lack of prior literature to contextualize the findings, the team hypothesizes that this trend reflects researchers’/policy experts’ greater involvement in evaluation than practitioners [49], which may have introduced a response bias toward higher ratings of importance. This finding warrants consideration, and the research team will continue to analyze the data both as a whole and split into researchers and practitioners to examine disaggregated findings and allow for important nuance in policy implementation evaluation.
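The disaggregated comparison described above could be sketched as follows. This is a minimal illustration, not the team's analysis: the records, the group and construct labels, and the 1 to 5 rating scale are all hypothetical.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical records: (respondent group, construct, importance rating).
# The 1-5 scale and all values are assumptions for illustration only.
responses = [
    ("researcher", "reach", 5),
    ("researcher", "reach", 4),
    ("practitioner", "reach", 4),
    ("practitioner", "reach", 3),
    ("researcher", "fidelity", 5),
    ("practitioner", "fidelity", 3),
]


def mean_by_construct(records):
    """Mean rating per construct across the whole sample."""
    grouped = defaultdict(list)
    for _group, construct, rating in records:
        grouped[construct].append(rating)
    return {construct: mean(vals) for construct, vals in grouped.items()}


def mean_by_construct_and_group(records):
    """Mean rating per (construct, group) pair, i.e., disaggregated."""
    grouped = defaultdict(list)
    for group, construct, rating in records:
        grouped[(construct, group)].append(rating)
    return {key: mean(vals) for key, vals in grouped.items()}


overall = mean_by_construct(responses)
by_group = mean_by_construct_and_group(responses)
for (construct, group), value in sorted(by_group.items()):
    print(construct, group, value, "overall:", overall[construct])
```

Reporting the pooled and group-level means side by side makes it easier to see whether a construct's overall rating is driven by one respondent group.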

Although cognitive interviewing has been utilized as a technique for developing measurement tools for decades [28,30,32,50], it has only recently been utilized to develop measurement tools within implementation science and few examples of such application exist [32,51]. One notable study developed a measurement tool for community-based organizations’ capacity to engage with academics, which was co-created with community members and followed a similar approach of survey completion and cognitive testing [32]. Similar to our study, the authors received feedback from participants related to language/clarity issues and made iterative changes over time to increase comprehension and reduce “jargon” in the questions asked. Other examples of measurement development have focused on capacity for implementation tools [51,52] but have utilized online survey tools for gaining participant feedback, with a primary focus of assessing psychometric properties of items and constructs. Although these studies offer valuable tools for understanding the capacity for implementation, they do not provide in-depth feedback from participants as to why items were scored a certain way during testing, what was confusing to them as a reader, and how the measurement tools can be improved. Our work addresses this gap by taking a two-step approach to measurement development and testing.

Furthermore, despite prior published research utilizing cognitive interviewing methods, there are currently no published applications (a) for developing tools combining health equity and implementation science frameworks or (b) with policy recipients (i.e., students and parents) as key informants in the measurement development process. This highlights our work as a much-needed innovation in the measurement development field. Focusing on the “end-users” in addition to policy implementors required additional time to recruit these participants and to design more appropriate interviewing techniques for students, but we see this as a necessary investment to ensure children’s and parents’ voices are heard in the measurement development process by focusing on reaching these populations from the beginning [48].

One potential limitation of this study is that all interviews took place over an online video conference as opposed to in-person. It is recommended that cognitive interviews be conducted in-person to pick up on body language cues and other nonverbal indicators [31], but given that Aim 1 recruitment was nationwide, the research team believed it was important not to limit participants in Aim 2 to one geographic area, and instead gave all participants the default option of a video conference call. Thus, although some important cues from participants may have been missed, the cognitive interview protocol was adapted to include both in-person and online options for both interview conditions (i.e., survey review before interview or during). During training, the research team practiced responding to nonverbal cues and developed prompts for these situations to try to elicit more feedback. Additionally, using snowball recruitment procedures has limitations in that the sample may not be representative of the target population.

Another limitation of this study is that although the team conducted 23 interviews with a variety of implementer and recipient groups, the sample size for each group was relatively small and we struggled to recruit school/district administrators. Although the surveys for administration, teachers, and food service providers were very similar, and thus many questions were the same, the team would have liked more input from school leadership. There is no “gold standard” for participant size; our team reached saturation of feedback in the thematic analysis and our sample size was similar to other qualitative-heavy measurement development studies. This study is the first step in developing rigorous and valid measurement tools, and we are planning to conduct more rigorous psychometric testing in future studies with larger, more representative samples. Regarding usability, researchers and practitioners may choose to pare down the number of items on the food service, teacher, and admin surveys to the most pertinent constructs to improve their brevity. The team is working to improve the readability of the caregiver and student surveys to make these more accessible to participants with lower literacy and will conduct further testing of these with target participant groups.

6. Conclusions

Overall, this study achieved its objectives and resulted in a series of robust policy implementation measurement tools that can be used to advance the understanding of whether and how health policies are implemented in the school setting. This is a timely and novel study that bridges the gap between policy and practice by centering health equity and implementation science. Regarding the next steps for this work, the surveys developed are already being integrated into a five-year implementation mapping study with a Pennsylvania school district to develop and test equity-informed implementation strategies that aim to increase the reach of universal school meals [53]. Ongoing refinement of these tools will occur based on participant feedback and analysis of the psychometric data resulting from use in a larger study. The published adaptation guide [33] will facilitate application to other policy settings and to other high-, middle-, and low-income countries. We encourage research teams to adapt and refine these tools to meet their evaluation goals and enhance usability for their specific research context.

Beyond use for research, we hope that schools and school districts conducting their own evaluation of policies, such as school meal policies, can find these measures useful and use them to make data-informed decisions about implementation. We envision this as the first step in a series of advancements in policy implementation science. Future studies are warranted to (1) examine the psychometric properties of these measures and (2) assess the feasibility and acceptability of applying these tools to other health policies, such as those addressing physical activity, tobacco, and mental health, with a key focus on health equity. If implementation of policies that aim to advance health equity can be measured beyond fundamental issues of fidelity/compliance, the likelihood of sustaining policy outcomes can be improved, with a meaningful impact on the health of marginalized populations [19].

7. Contributions to the Literature

There are many policy implementation measurement tools developed internally by research teams, requiring a large volume of work, but seldom used by other researchers.

This novel study resulted in a series of open access survey tools to help researchers and practitioners better assess school policy implementation grounded in the work of health equity experts.

This is the first study to meaningfully utilize both policy practitioner (i.e., teacher and administrator) and recipient (i.e., student and parent/caregiver) feedback in the development and refinement of survey tools for policy implementation.

Supplementary Materials

The following supporting information can be downloaded at: https://www.mdpi.com/article/10.3390/nu16193357/s1. Supplementary Materials File S1: Framework selection worksheet (XLS spreadsheet); Supplementary Materials File S2: Chosen health equity and implementation science frameworks, constructs, and definitions (Word document); Supplementary Materials File S3: Qualtrics survey for Aim 1 (PDF document); Supplementary Materials File S4: Aim 1 recruitment file (Word document); Supplementary Materials File S5: Cognitive interviewing guides; Version 1—feedback during interview and Version 2—feedback before and during interview (Word document); Supplementary Materials File S6: Aim 2 recruitment flyer (PDF document); Supplementary Materials File S7: Aim 2 example cognitive testing coding matrix (XLS spreadsheet); Supplementary Materials File S8: Finalized surveys (PDF document).

Author Contributions

G.M.M. conceptualized the study and obtained extramural funding. G.M.M. and C.W.-B. developed the surveys and protocols for the study. G.M.M., C.W.-B., C.R.S. and L.T. developed the framework list and co-created the survey. G.M.M. and C.R.S. led the writing of the article. G.M.M. and R.I. led the data collection and analysis approaches. All authors contributed to the study design and data interpretation, edited the final manuscript, and approve of its submission. All authors have read and agreed to the published version of the manuscript.

Funding

This study was funded by the Urban School Food Alliance. The corresponding author is also supported by a National Institutes of Health, National Heart, Lung, and Blood Institute career development award (K01 HL166957). The findings and conclusions in this paper are those of the authors and do not necessarily represent the official positions of the National Institutes of Health.

Institutional Review Board Statement

This study was reviewed and approved by the Temple University IRB, protocol #30000.

Informed Consent Statement

Consent was obtained from each participant for publication.

Data Availability Statement

Some data presented (i.e., coding matrix for Aim 2) in this study are available in the additional files. Remaining data are available on request from the corresponding author due to confidentiality concerns.

Acknowledgments

The authors wish to acknowledge the contributions of Maggie McGinty, a paid research assistant, who helped to conduct cognitive interviews.

Conflicts of Interest

The authors declare that they have no conflicts of interest.

Protocol Registration

Open Science Framework Registration: DOI 10.17605/OSF.IO/P2D3T.

References

- Coughlin, S.S. Social determinants of breast cancer risk, stage, and survival. Breast Cancer Res. Treat. 2019, 177, 537–548.

- Jenssen, B.P.; Kelly, M.K.; Powell, M.; Bouchelle, Z.; Mayne, S.L.; Fiks, A.G. COVID-19 and Changes in Child Obesity. Pediatrics 2021, 147, e2021050123.

- Global Burden of Disease, C.; Adolescent Health, C.; Kassebaum, N.; Kyu, H.H.; Zoeckler, L.; Olsen, H.E.; Thomas, K.; Pinho, C.; Bhutta, Z.A.; Dandona, L.; et al. Child and Adolescent Health From 1990 to 2015: Findings From the Global Burden of Diseases, Injuries, and Risk Factors 2015 Study. JAMA Pediatr 2017, 171, 573–592.

- Kwan, B.M.; Brownson, R.C.; Glasgow, R.E.; Morrato, E.H.; Luke, D.A. Designing for Dissemination and Sustainability to Promote Equitable Impacts on Health. Annu. Rev. Public Health 2022, 43, 331–353.

- Kumanyika, S.K. A Framework for Increasing Equity Impact in Obesity Prevention. Am. J. Public Health 2019, 109, 1350–1357.

- LaVeist, T.A.; Pérez-Stable, E.J.; Richard, P.; Anderson, A.; Isaac, L.A.; Santiago, R.; Okoh, C.; Breen, N.; Farhat, T.; Assenov, A.; et al. The Economic Burden of Racial, Ethnic, and Educational Health Inequities in the US. JAMA 2023, 329, 1682–1692.

- Au, L.E.; Ritchie, L.D.; Gurzo, K.; Nhan, L.A.; Woodward-Lopez, G.; Kao, J.; Guenther, P.M.; Tsai, M.; Gosliner, W. Post-Healthy, Hunger-Free Kids Act Adherence to Select School Nutrition Standards by Region and Poverty Level: The Healthy Communities Study. J. Nutr. Educ. Behav. 2020, 52, 249–258.

- Hecht, A.A.; Pollack Porter, K.M.; Turner, L. Impact of The Community Eligibility Provision of the Healthy, Hunger-Free Kids Act on Student Nutrition, Behavior, and Academic Outcomes: 2011–2019. Am. J. Public Health 2020, 110, 1405–1410.

- Ingram, M.; Leih, R.; Adkins, A.; Sonmez, E.; Yetman, E. Health Disparities, Transportation Equity and Complete Streets: A Case Study of a Policy Development Process through the Lens of Critical Race Theory. J. Urban Health 2020, 97, 876–886.

- Guerra, L.A.; Rajan, S.; Roberts, K.J. The Implementation of Mental Health Policies and Practices in Schools: An Examination of School and State Factors. J. Sch. Health 2019, 89, 328–338.

- Cohen, J.F.W.; Hecht, A.A.; McLoughlin, G.M.; Turner, L.; Schwartz, M.B. Universal School Meals and Associations with Student Participation, Attendance, Academic Performance, Diet Quality, Food Security, and Body Mass Index: A Systematic Review. Nutrients 2021, 13, 911.

- World Health Organization. Ottawa Charter for Public Health. Available online: https://www.who.int/publications/i/item/WH-1987 (accessed on 1 September 2024).

- Chriqui, J.F.; Asada, Y.; Smith, N.R.; Kroll-Desrosiers, A.; Lemon, S.C. Advancing the science of policy implementation: A call to action for the implementation science field. Transl. Behav. Med. 2023, 13, ibad034.

- Emmons, K.M.; Chambers, D.A. Policy Implementation Science—An Unexplored Strategy to Address Social Determinants of Health. Ethn. Dis. 2021, 31, 133–138.

- Nilsen, P.; Stahl, C.; Roback, K.; Cairney, P. Never the twain shall meet?--a comparison of implementation science and policy implementation research. Implement. Sci. 2013, 8, 63.

- McLoughlin, G.M.; Allen, P.; Walsh-Bailey, C.; Brownson, R.C. A systematic review of school health policy measurement tools: Implementation determinants and outcomes. Implement. Sci. Commun. 2021, 2, 67.

- Allen, P.; Pilar, M.; Walsh-Bailey, C.; Hooley, C.; Mazzucca, S.; Lewis, C.C.; Mettert, K.D.; Dorsey, C.N.; Purtle, J.; Kepper, M.M.; et al. Quantitative measures of health policy implementation determinants and outcomes: A systematic review. Implement. Sci. 2020, 15, 47.

- McLoughlin, G.M.; Martinez, O. Dissemination and Implementation Science to Advance Health Equity: An Imperative for Systemic Change. CommonHealth 2022, 3, 75–86.

- McLoughlin, G.M.; Kumanyika, S.; Su, Y.; Brownson, R.C.; Fisher, J.O.; Emmons, K.M. Mending the gap: Measurement needs to address policy implementation through a health equity lens. Transl. Behav. Med. 2024, 14, 207–214.

- Lett, E.; Adekunle, D.; McMurray, P.; Asabor, E.N.; Irie, W.; Simon, M.A.; Hardeman, R.; McLemore, M.R. Health Equity Tourism: Ravaging the Justice Landscape. J. Med. Syst. 2022, 46, 17.

- Kumanyika, S. Overcoming Inequities in Obesity: What Don’t We Know That We Need to Know? Health Educ. Behav. 2019, 46, 721–727.

- Hogan, V.; Rowley, D.L.; White, S.B.; Faustin, Y. Dimensionality and R4P: A Health Equity Framework for Research Planning and Evaluation in African American Populations. Matern. Child. Health J. 2018, 22, 147–153.

- Emmons, K.M.; Chambers, D.; Abazeed, A. Embracing policy implementation science to ensure translation of evidence to cancer control policy. Transl. Behav. Med. 2021, 11, 1972–1979.

- Nesrallah, S.; Klepp, K.-I.; Budin-Ljøsne, I.; Luszczynska, A.; Brinsden, H.; Rutter, H.; Bergstrøm, E.; Singh, S.; Debelian, M.; Bouillon, C.; et al. Youth engagement in research and policy: The CO-CREATE framework to optimize power balance and mitigate risks of conflicts of interest. Obes. Rev. 2023, 24, e13549.

- Mandoh, M.; Redfern, J.; Mihrshahi, S.; Cheng, H.L.; Phongsavan, P.; Partridge, S.R. Shifting From Tokenism to Meaningful Adolescent Participation in Research for Obesity Prevention: A Systematic Scoping Review. Front. Public Health 2021, 9, 789535.

- Linton, L.S.; Edwards, C.C.; Woodruff, S.I.; Millstein, R.A.; Moder, C. Youth advocacy as a tool for environmental and policy changes that support physical activity and nutrition: An evaluation study in San Diego County. Prev. Chronic Dis. 2014, 11, E46.

- McLoughlin, G.M.; Walsh-Bailey, C.; Singleton, C.R.; Turner, L. Investigating implementation of school health policies through a health equity lens: A measures development study protocol. Front. Public Health 2022, 10, 984130.

- LaPietra, E.; Brown Urban, J.; Linver, M.R. Using Cognitive Interviewing to Test Youth Survey and Interview Items in Evaluation: A Case Example. J. Multidiscip. Eval. 2020, 16, 74–96.

- Shafer, K.; Lohse, B. How to Conduct a Cognitive Interview: A Nutrition Education Example. 2006. Available online: http://www.csrees.usda.gov/nea/food/pdfs/cog_interview.pdf (accessed on 30 January 2023).

- Jia, M.; Gu, Y.; Chen, Y.; Tu, J.; Liu, Y.; Lu, H.; Huang, S.; Li, J.; Zhou, H. A methodological study on the combination of qualitative and quantitative methods in cognitive interviewing for cross-cultural adaptation. Nurs. Open 2022, 9, 705–713.

- Meadows, K. Cognitive Interviewing Methodologies. Clin. Nurs. Res. 2021, 30, 375–379.

- Teal, R.; Enga, Z.; Diehl, S.J.; Rohweder, C.L.; Kim, M.; Dave, G.; Durr, A.; Wynn, M.; Isler, M.R.; Corbie-Smith, G.; et al. Applying Cognitive Interviewing to Inform Measurement of Partnership Readiness: A New Approach to Strengthening Community–Academic Research. Prog. Community Health Partnersh. Res. Educ. Action 2015, 9, 513–519.

- McLoughlin, G.M. EQUIMAP—EQUity-focused Implementation Measurement and Assessment of Policies. Available online: https://www.gabriellamcloughlin.com/evaluation-tools.html (accessed on 11 April 2023).

- Nilsen, P. Making sense of implementation theories, models and frameworks. Implement. Sci. 2015, 10, 53.

- Dover, D.C.; Belon, A.P. The health equity measurement framework: A comprehensive model to measure social inequities in health. Int. J. Equity Health 2019, 18, 36.

- Damschroder, L.J.; Reardon, C.M.; Widerquist, M.A.O.; Lowery, J. The updated Consolidated Framework for Implementation Research based on user feedback. Implement. Sci. 2022, 17, 75.

- Damschroder, L.J.; Aron, D.C.; Keith, R.E.; Kirsh, S.R.; Alexander, J.A.; Lowery, J.C. Fostering implementation of health services research findings into practice: A consolidated framework for advancing implementation science. Implement. Sci. 2009, 4, 50.

- Freedman, D.A.; Clark, J.K.; Lounsbury, D.W.; Boswell, L.; Burns, M.; Jackson, M.B.; Mikelbank, K.; Donley, G.; Worley-Bell, L.Q.; Mitchell, J.; et al. Food system dynamics structuring nutrition equity in racialized urban neighborhoods. Am. J. Clin. Nutr. 2022, 115, 1027–1038.

- Proctor, E.; Silmere, H.; Raghavan, R.; Hovmand, P.; Aarons, G.; Bunger, A.; Griffey, R.; Hensley, M. Outcomes for Implementation Research: Conceptual Distinctions, Measurement Challenges, and Research Agenda. Adm. Policy Ment. Health Ment. Health Serv. Res. 2011, 38, 65–76.

- Fernandez, M.E.; Walker, T.J.; Weiner, B.J.; Calo, W.A.; Liang, S.; Risendal, B.; Friedman, D.B.; Tu, S.P.; Williams, R.S.; Jacobs, S.; et al. Developing measures to assess constructs from the Inner Setting domain of the Consolidated Framework for Implementation Research. Implement. Sci. 2018, 13, 52.

- Weiner, B.J.; Lewis, C.C.; Stanick, C.; Powell, B.J.; Dorsey, C.N.; Clary, A.S.; Boynton, M.H.; Halko, H. Psychometric assessment of three newly developed implementation outcome measures. Implement. Sci. 2017, 12, 108.

- Penn State PRO Wellness. Adolescent Health Network. Pennsylvania State University. Available online: https://prowellness.childrens.pennstatehealth.org/school/programs/adolescent-health-network/ (accessed on 1 August 2022).

- Malone, S.; Rivera, J.; Puerto-Torres, M.; Prewitt, K.; Sakaan, F.; Counts, L.; Al Zebin, Z.; Arias, A.V.; Bhattacharyya, P.; Gunasekera, S.; et al. A new measure for multi-professional medical team communication: Design and methodology for multilingual measurement development. Front. Pediatr. 2023, 11, 1127633.

- Braun, V.; Clarke, V. Using thematic analysis in psychology. Qual. Res. Psychol. 2006, 3, 77–101.

- Stanick, C.F.; Halko, H.M.; Nolen, E.A.; Powell, B.J.; Dorsey, C.N.; Mettert, K.D.; Weiner, B.J.; Barwick, M.; Wolfenden, L.; Damschroder, L.J.; et al. Pragmatic measures for implementation research: Development of the Psychometric and Pragmatic Evidence Rating Scale (PAPERS). Transl. Behav. Med. 2021, 11, 11–20.

- Leung, L. Validity, reliability, and generalizability in qualitative research. J. Fam. Med. Prim. Care 2015, 4, 324–327.

- Whittemore, R.; Chase, S.K.; Mandle, C.L. Validity in Qualitative Research. Qual. Health Res. 2001, 11, 522–537.

- Baumann, A.A.; Cabassa, L.J. Reframing implementation science to address inequities in healthcare delivery. BMC Health Serv. Res. 2020, 20, 190.

- Carrington, S.J.; Uljarević, M.; Roberts, A.; White, L.J.; Morgan, L.; Wimpory, D.; Ramsden, C.; Leekam, S.R. Knowledge acquisition and research evidence in autism: Researcher and practitioner perspectives and engagement. Res. Dev. Disabil. 2016, 51–52, 126–134.

- Willis, G.B. Cognitive Interviewing: A “How To” Guide 1999. Available online: https://www.hkr.se/contentassets/9ed7b1b3997e4bf4baa8d4eceed5cd87/gordonwillis.pdf (accessed on 30 January 2023).

- McClam, M.; Workman, L.; Dias, E.M.; Walker, T.J.; Brandt, H.M.; Craig, D.W.; Gibson, R.; Lamont, A.; Weiner, B.J.; Wandersman, A.; et al. Using cognitive interviews to improve a measure of organizational readiness for implementation. BMC Health Serv. Res. 2023, 23, 93.

- Stamatakis, K.A.; Baker, E.A.; McVay, A.; Keedy, H. Development of a measurement tool to assess local public health implementation climate and capacity for equity-oriented practice: Application to obesity prevention in a local public health system. PLoS ONE 2020, 15, e0237380.

- Mohsen, A. 5-Year Temple Study Aims to Boost Philly Students’ Participation in Free Breakfast and Lunch. Billy Penn. Available online: https://billypenn.com/2023/08/31/philadelphia-school-lunch-temple-study-boost-participation/ (accessed on 30 September 2023).

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).