2.1. Statistical Field Theories

The following mainly concerns fully-discrete one-dimensional spatial systems. These are given as a shift space $X$: a set of indexed bi-infinite sequences, or strings, of symbols taken from a finite alphabet $\mathcal{A}$. Before diving into details, let us first take a moment to compare shift spaces to the analogous setup from statistical mechanics for analyzing ordered systems.

A shift space can be thought of as a topological ensemble (a set of strings), in contrast with a statistical ensemble, which is a distribution over a set of strings. This is an abstraction of discrete-spin models in one dimension, e.g., the standard Ising model. Rather than specify interactions on the spin lattice and analyze the resulting statistical field theory, we wish to analyze any pattern present in a given (topological) ensemble $X$.

A key distinction between a shift space as a topological ensemble and a spin-lattice ensemble in a statistical field theory is that all elements of the former are related to one another through the shift operator $\sigma$ (formally described below). In fact, for the irreducible sofic shifts we consider, $(X, \sigma)$ is an ergodic dynamical system. So, every member $x$ of the ensemble $X$ can eventually be sampled through $\sigma$’s action. Thus, we can equivalently consider either (i) $X$ as an ensemble of points and $\sigma$ as a deterministic mapping between those points or (ii) $X$ as a single infinite lattice on which $\sigma$ moves indices. The difference is that of active versus passive transformation.

Spontaneous symmetry breaking in statistical field theories is monitored through an order parameter [25], such as the total magnetization of an Ising model. In the symmetric “ordered” phase, the order parameter has a nonzero value and, after a symmetry-breaking phase transition, the order parameter vanishes. For the Ising model, below the transition (that is, below the critical temperature), spins tend to align, giving nonzero magnetization. At zero temperature the model reaches its ground state with all spins aligned. This is a fully symmetric state with maximal magnetization, corresponding to strings of the form $\ldots\uparrow\uparrow\uparrow\ldots$ or $\ldots\downarrow\downarrow\downarrow\ldots$. Above the critical temperature, this symmetry is fully broken, with zero magnetization.

While effective as an approach to thermal spin lattices, such as the Ising and related lattice models, abstractly quantifying “order” with a single scalar quantity—the order parameter—is far from ideal.

First, for the Ising model, there are only two configurations with maximal magnetization, as given above. Second, these configurations are maximally symmetric, with $\sigma^p(x) = x$ for every integer $p$. Consider, though, configurations of the form $\ldots\uparrow\downarrow\uparrow\downarrow\ldots$. These configurations are still symmetric, with $\sigma^2(x) = x$, although they have vanishing order parameter. There are many such symmetric configurations with zero magnetization: e.g., those of the form $\ldots\uparrow^{n}\downarrow^{n}\uparrow^{n}\downarrow^{n}\ldots$, with $\sigma^{2n}(x) = x$. Beyond these, there are zero-order-parameter configurations that are neither completely symmetric nor completely random. Third, these symmetric sequences with zero order parameter are not the ground state, and they are not stable under thermal perturbations. Thus, though singled out by the choice of total magnetization as the order parameter, they are edge cases that will almost never be seen. Finally and more generally, order parameters in statistical mechanics are not determined from first physical principles. They must be posited initially and then proved appropriate.

Similarly, correlation functions and structure factors are additional, commonly employed scalar quantities that capture one or another notion of order. Conceptually, a system considered highly ordered will surely be highly correlated. Patterns that emerge on the macroscopic scale correspond to collective behaviors on the microscale that certainly exhibit nonzero correlations. However, as with order parameters, there can be many degeneracies between specific patterns and correlation values. In short, order is something beyond correlation. A diverging correlation length in an Ising model at the critical temperature does not signify the presence of intricate patterns and organization, such as spiral wave patterns in lattice models of excitable media [26]. To remedy these failings, we seek a definition of pattern that is not scalar.

This is not to banish all scalar quantities. Many, in given settings, can be insightful [27]. We will show that the algebraic presentations for topological patterns have a natural extension to patterns in statistical field theories. Moreover, scalar quantities of interest, like correlation functions, can be computed in closed form from the stochastic presentations. We also return later, briefly, to discuss generalized order parameters in light of the algebraic theory.

2.2. Symbolic Dynamics

We now detail shift spaces and how they quantify topological patterns as generalized symmetries. Consider a finite alphabet $\mathcal{A}$ of $n$ symbols and (indexed) bi-infinite symbol sequences or strings $x = \ldots x_{-1} x_0 x_1 \ldots$, with $x_i \in \mathcal{A}$. The set $\mathcal{A}^{\mathbb{Z}}$ of all possible bi-infinite sequences is known as the full-$n$ shift. A particular sequence is described as a point $x$ in $\mathcal{A}^{\mathbb{Z}}$. For now, we need not specify whether sequence indices are time coordinates or space coordinates. In either case, translations are generated via the shift operator $\sigma: \mathcal{A}^{\mathbb{Z}} \to \mathcal{A}^{\mathbb{Z}}$ that maps a point $x$ to another point $\sigma(x)$ whose $i$-th coordinate is $\sigma(x)_i = x_{i+1}$ for all $i$. (That is, $\sigma$ shifts every element of $x$ one place to the left.) Our interest is in patterns as predictive regularity, and the predictions are made over translations generated by $\sigma$. Thus, we want to capture patterns in closed, $\sigma$-invariant subsets of $\mathcal{A}^{\mathbb{Z}}$. These subsets are called shift spaces (or subshifts, or simply shifts).

Often one can concisely specify a shift space as the set of all strings that do not contain any word from a collection of forbidden words. A word is a finite block of symbols, and a point $x$ is said to contain or admit a word $w$ if there are indices $i$ and $j \geq i$ such that $x_{[i,j]} = w$; explicitly, $x_i x_{i+1} \cdots x_j = w$. Again, a word is a finite sequence of symbols; a string, bi-infinite.

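For a collection $\mathcal{F}$ of forbidden words, define $X_{\mathcal{F}}$ to be the subset of strings in $\mathcal{A}^{\mathbb{Z}}$ that do not contain any words $w \in \mathcal{F}$. A shift space $X$ is a subset of the full shift $\mathcal{A}^{\mathbb{Z}}$ such that $X = X_{\mathcal{F}}$ for some collection $\mathcal{F}$ of forbidden words [28]. The language $\mathcal{L}(X)$ of a shift space $X$ is the collection of all words that occur in some point in $X$.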
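As a concrete illustration of $X_{\mathcal{F}}$, the following Python sketch keeps the length-$n$ words that contain no forbidden word. The helper names are our own, not from the source. Note that a finite-window check yields only locally admissible words: for a general $\mathcal{F}$ this can be a strict superset of the length-$n$ words in $\mathcal{L}(X_{\mathcal{F}})$, since some of them may not extend to a bi-infinite point.

```python
from itertools import product

def contains_forbidden(word, forbidden):
    """True if some word in `forbidden` occurs as a contiguous block of `word`."""
    return any(f in word for f in forbidden)

def locally_admissible_words(alphabet, n, forbidden):
    """All length-n words over `alphabet` that contain no word from `forbidden`.

    This is a finite-window check: for a general forbidden set, some of these
    words may not extend to a bi-infinite point of X_F, so the result can be a
    superset of the length-n words in the language L(X_F).
    """
    words = ("".join(w) for w in product(alphabet, repeat=n))
    return [w for w in words if not contains_forbidden(w, forbidden)]

# Hypothetical example: forbidding the single word "11" (the golden mean shift).
golden_mean_4 = locally_admissible_words("01", 4, {"11"})
print(len(golden_mean_4))  # 8
```

For $\mathcal{F} = \{11\}$ every locally admissible word extends by padding with 0s on both sides, so in this particular case the 8 words of length 4 are exactly the length-4 words of the language.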

If $\mathcal{F}$ is a finite set, the resulting shift space is called a subshift of finite type [29] or an intrinsic or topological Markov chain [30]. A wider class of finitely definable shift spaces are the sofic shifts. These are the closure of subshifts of finite type under continuous local mappings, namely $k$-block factor maps [31]. Note, though, that there are many equivalent definitions of sofic shifts, several of which are given below as needed. A sofic shift $X$ is irreducible if, for every ordered pair of words $u, v \in \mathcal{L}(X)$, there is a word $w$ such that $uwv \in \mathcal{L}(X)$. For reasons elaborated on shortly, we define general discrete one-dimensional patterns as irreducible sofic shifts.

2.3. Sofic Shifts as Topological Patterns

To recap, we seek a mathematical specification of patterns in strings that captures a range of organizations spanning fully-symmetric sequences to arbitrary (“random”) sequences. Moreover, we wish to identify, from first principles, an associated algebra that generalizes the group algebra of symmetries. For physical consistency, we started with shift spaces since they are shift-invariant subspaces of strings. To fulfill the algebraic requirement, we now further restrict to sofic shifts, as they are shift spaces defined in terms of a finite semigroup [31].

Recall that a group is a set of elements closed under an associative binary operation, with an identity element and an inverse for every element. Groups are therefore too restrictive: they express only exact symmetry. In contrast, semigroups require neither invertibility nor an identity element. This relaxation is key to defining generalized patterns, as we laid out above. It permits exact symmetries but also allows expressing noisy and approximate symmetries.

The elements of a sofic shift’s semigroup are words, and the binary operation is word concatenation. The set of all words over $\mathcal{A}$, together with their concatenation products, forms the free semigroup $\mathcal{A}^+$; for instance, the product of the words $u$ and $v$ in the free semigroup is the word $uv$. A sofic shift is defined in terms of a finite semigroup $G$ with an absorbing element $e$, whose product is $eg = ge = e$ for all $g \in G$. The absorbing element $e$ together with the elements of the alphabet $\mathcal{A}$ generates $G$ via single-symbol concatenations. $G$’s production rules are such that, for any pair of allowed words $u, v$, if their concatenation $uv$ contains a forbidden word $w \in \mathcal{F}$, then their product in $G$ gives the absorbing element: $uv = e$.

A semigroup of word concatenations is also associated with a simple presentation in the form of a semiautomaton finite-state machine [32]: the triple $(\mathcal{Q}, \mathcal{A}, \{\delta_a\}_{a \in \mathcal{A}})$, where $\mathcal{Q}$ is a set of internal states, $\mathcal{A}$ is the symbol alphabet, and $\{\delta_a\}$ is a set of mappings from $\mathcal{Q}$ into $\mathcal{Q}$. To be explicit, we consider deterministic and fully-specified semiautomata for which each $\delta_a$ is a function (each input has one and only one output) with domain over the full set $\mathcal{Q}$ of internal states. Semiautomata can be usefully depicted as an edge-labeled directed graph. The vertices represent the internal states in $\mathcal{Q}$, and for every pair $q_i, q_j \in \mathcal{Q}$ such that $\delta_a(q_i) = q_j$ there is an edge labeled $a$ that leads from $q_i$ to $q_j$. For a deterministic and fully-specified semiautomaton, there is an edge labeled with each symbol in $\mathcal{A}$ emanating from every state in $\mathcal{Q}$.

A fully-specified semiautomaton directly determines a subshift’s algebra from the free semigroup as follows. For every state

and any element

of the the free semigroup

there is a map

from

to another state

[

32]. A deterministic and fully-specified semiautomaton is a

presentation of a sofic shift if we include an absorbing “forbidden” state

. That is, the mappings associated with all elements of the free semigroup containing a forbidden word in

lead to the forbidden state. Additionally, all mappings from the forbidden state return the forbidden state. That is,

for all

and

, and

for all

. See

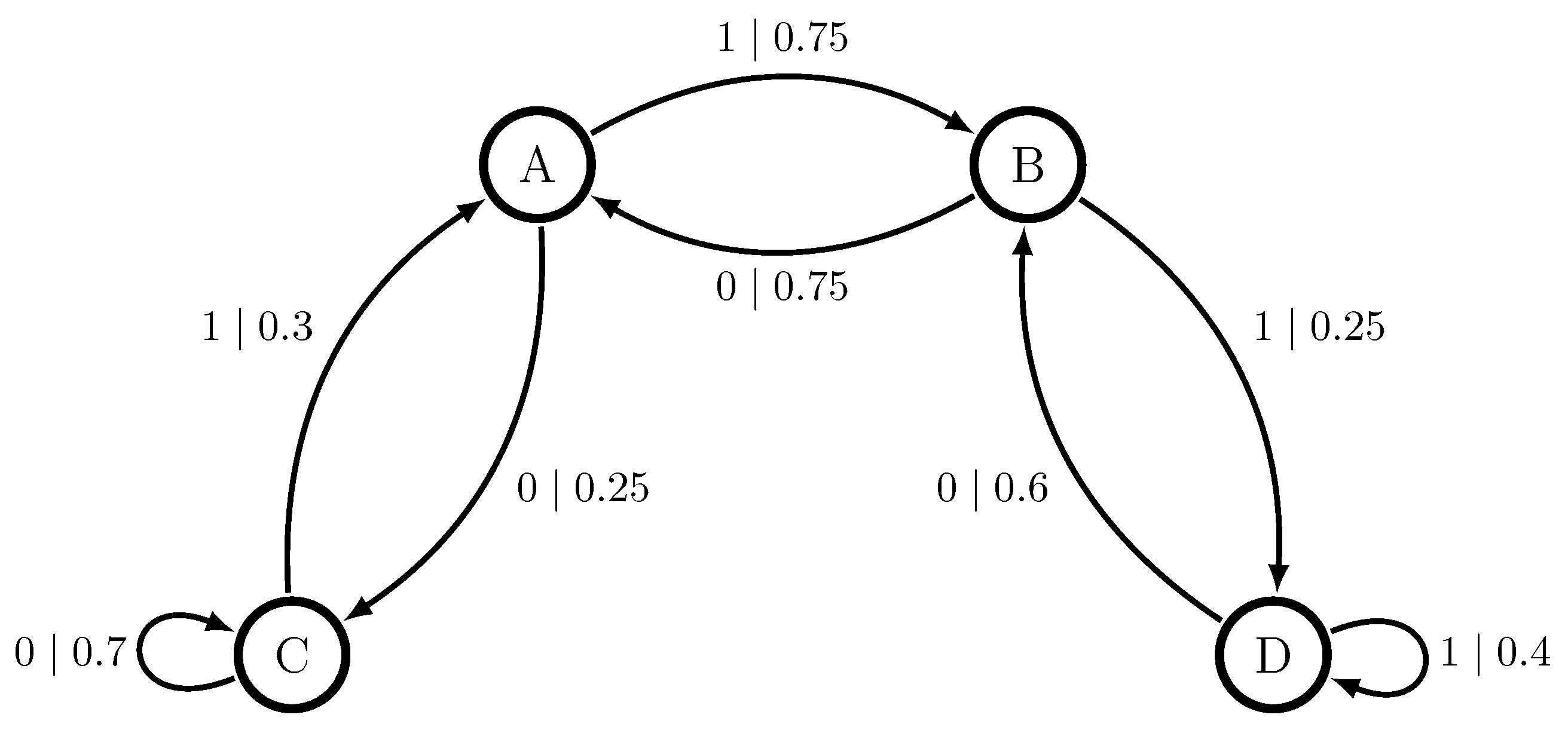

Figure 1 for presentations of example shifts.

Since sofic shifts are defined by a finite semigroup, every sofic shift can be presented by a semiautomaton with a finite set $\mathcal{Q}$ of states. Recall from above that the idea of compression is related to our intuition of pattern. A pattern, a predictive regularity, allows for a compressed representation of a system’s behaviors. Note that sofic shifts and their presentations provide a finite representation of an ensemble of infinite strings through their finite semigroup.

Here, we distinguish between three types of sofic-shift semiautomaton presentation.

Appendix A gives example constructions of these three types of presentation.

The most straightforward presentation assigns a state $q_g$ to each element $g$ of a semigroup $G$ of $X$ and fills in the state transitions $\delta_a(q_g) = q_{ga}$ using $G$’s production rules [33]. While straightforward to construct if $G$ is known, this semiautomaton presentation is not necessarily minimal in terms of the state-set size $|\mathcal{Q}|$. To specify a particular pattern as a sofic shift, it is crucial to have a minimal and unique presentation associated with the given sofic shift. This also allows extracting unambiguous quantitative measures of the ensemble of strings, such as measures of correlation, from the finite presentation.

An important presentation that is minimal and constructible without knowing any $G$ is $X$’s future cover [34], defined below. The future-cover semiautomaton of every irreducible sofic shift $X$ has a unique recurrent (terminal) strongly connected component [35,36]. This irreducible component is our mathematical representation of patterns as generalized symmetries. We refer to it as the canonical machine presentation $\mathcal{M}_X$ of $X$. The future cover and its recurrent component $\mathcal{M}_X$ provide a unique, minimal mathematical representation of $X$.

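The future set (sometimes follower set) $F_X(w)$ of a word $w \in \mathcal{L}(X)$ is the collection of all words $u$ such that $wu \in \mathcal{L}(X)$. Define the future equivalence relation $\sim_F$ on $\mathcal{L}(X)$ as:

$$ w \sim_F w' \iff F_X(w) = F_X(w') \tag{1} $$

for all $w, w' \in \mathcal{L}(X)$.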

An important characterization of sofic shifts that we use shortly is:

Theorem 1. ([28], Theorem 3.2.10) A shift space is sofic if and only if it has a finite number of future sets. Therefore, sofic shifts have finitely many future-equivalence classes, denoted $[w]$, and these equivalence classes plus the absorbing forbidden state are the internal states of the future-cover semiautomaton.

The mappings that give the state transitions are defined from the allowed concatenations that do not contain a forbidden word in $\mathcal{F}$. That is, each state $q_i = [w]$ is an equivalence class and, for each symbol $a \in \mathcal{A}$ with $wa \in \mathcal{L}(X)$, the concatenation $wa$ belongs to the equivalence class assigned as state $q_j = [wa]$, giving $\delta_a(q_i) = q_j$. Note that this is independent of the choice of $w \in [w]$ and that in some cases $q_j$ may be equal to $q_i$. This gives a self-edge transition in the semiautomaton: $\delta_a(q_i) = q_i$. If $wa \notin \mathcal{L}(X)$, then $\delta_a$ maps $q_i$ to the forbidden state $q_\emptyset$.
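Theorem 1 suggests a brute-force way to approximate the future cover: group words by their future sets. Since future sets are infinite, the hypothetical Python helpers below truncate them at a finite horizon, which can merge classes that differ only on longer continuations but can never split a true class. Membership in $\mathcal{L}(X)$ is decided with an assumed two-state presentation of the Even Shift, reading each word from every start state.

```python
from itertools import product

# Hypothetical two-state Even Shift presentation; missing keys lead to the forbidden state.
DELTA = {("A", "0"): "A", ("A", "1"): "B", ("B", "1"): "A"}
STATES = ("A", "B")

def reachable(word):
    """States reached by reading `word` from every start state (forbidden paths dropped)."""
    current = set(STATES)
    for a in word:
        current = {DELTA[(q, a)] for q in current if (q, a) in DELTA}
    return frozenset(current)

def allowed(word):
    return bool(reachable(word))

def truncated_future_set(word, horizon):
    """Allowed continuations u of `word` with 1 <= len(u) <= horizon."""
    return frozenset(
        u
        for n in range(1, horizon + 1)
        for u in map("".join, product("01", repeat=n))
        if allowed(word + u)
    )

def future_classes(max_len, horizon):
    """Group allowed words of length 1..max_len by their truncated future sets."""
    classes = {}
    for n in range(1, max_len + 1):
        for w in map("".join, product("01", repeat=n)):
            if allowed(w):
                classes.setdefault(truncated_future_set(w, horizon), []).append(w)
    return classes

classes = future_classes(max_len=4, horizon=3)
print(len(classes))  # 3
```

With horizon 3, the allowed words up to length 4 fall into three classes: the all-1 words (a transient state of the future cover) and the two recurrent classes, reached after an even or an odd number of 1s following the last 0.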

This natural transition structure follows from future equivalence, and it leads to a very important property of the future-cover semiautomaton: it is unifilar [37], as the property is called in information theory, equivalently right-resolving in symbolic dynamics ([28], Corollary 3.3.19) or deterministic in automata theory [38]. A fully-specified semiautomaton is unifilar if, for every internal state $q_i$ and every word $w$ (element of the free semigroup), the map $\delta_w$ leads to one and only one internal state $q_j = \delta_w(q_i)$. (It may be that $j = i$.)
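A unifilarity check is easy to state for a presentation given as a list of labeled edges: each (state, symbol) pair may have only one target. The Python sketch below checks single symbols; the condition for all words then follows by induction on word length. Edge lists, state names, and the second (nonunifilar) example are hypothetical, and transitions into the forbidden state are simply omitted from the lists.

```python
from collections import defaultdict

def is_unifilar(edges):
    """edges: iterable of (source, symbol, target) triples; forbidden transitions omitted.

    Unifilar means each (source, symbol) pair has a single target. Checking single
    symbols suffices: by induction every word then leads to at most one state.
    """
    targets = defaultdict(set)
    for src, sym, dst in edges:
        targets[(src, sym)].add(dst)
    return all(len(ts) == 1 for ts in targets.values())

even_shift = [("A", "0", "A"), ("A", "1", "B"), ("B", "1", "A")]
# Hypothetical nonunifilar graph: state A has two edges labeled 0.
two_zero_edges = [("A", "0", "A"), ("A", "0", "B"), ("B", "1", "A")]
print(is_unifilar(even_shift), is_unifilar(two_zero_edges))  # True False
```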

Unifilarity is the defining property of a predictive semiautomaton. Since the goal is to formalize patterns as predictive regularities, this is an important point to stress. By way of contrast, first note that any presentation of a sofic shift $X$, whether unifilar or not, is a generator of $X$. Every string in $X$ can be generated by following the symbol-labeled transitions of the presentation, and no forbidden words can be generated. Thus, being generative can be thought of as the baseline property of any model of a sofic shift. Prediction is an additional capability beyond generation that arises from a presentation being unifilar.

Unifilarity establishes a presentation as predictive in the following way. For topological patterns, the task of prediction is to establish what may happen in the future, given what has happened in the past. Specifically, given a word $w$, what words are allowed to follow in the shift space? That is, what is $w$’s future set? Due to the implied determinism, the word $w$ leading to an internal state of a unifilar presentation (from a given starting state) uniquely specifies that state. Notably, this is not guaranteed for an arbitrary generator of a shift. Furthermore, in a unifilar presentation every subsequent word again leads to a unique internal state. It is straightforward to see that the future cover is a predictive presentation: by definition, its internal states are future-separated, meaning that each internal state has its own distinct set of words that may follow.

To establish that sofic shifts and their canonical machine presentations express patterns as generalized symmetries, it is helpful to first describe how they capture exact translation symmetries of symbolic sequences.

2.4. Exact Symmetries

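A string $x$ has a discrete translation symmetry if $\sigma^p(x) = x$ for some $p \in \mathbb{Z}^+$, where the minimal such $p$ is the symmetry’s period. The symmetry group is the set $\{\sigma^0, \sigma^1, \ldots, \sigma^{p-1}\}$ with $\sigma^p = \sigma^0$, where $\sigma^0$ acts as the identity on $x$. Since here $p$ is finite, the action of $\sigma$ on $x$ produces a compact (indeed finite) shift-invariant subspace $X_x = \{x, \sigma(x), \ldots, \sigma^{p-1}(x)\}$ of $\mathcal{A}^{\mathbb{Z}}$. Therefore, translation-symmetric strings define shift spaces $X_x$.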
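For a periodic string $x = \ldots bbb \ldots$ stored as a single tile $b$, the period can be found by testing cyclic rotations of the tile. This Python sketch assumes $x$ is given by one tile; the function name is ours.

```python
def minimal_period(tile):
    """Smallest p >= 1 with sigma^p(x) = x for the periodic string x = ...tile tile tile...

    x_{i+p} = x_i for all i holds exactly when rotating the tile by p symbols
    leaves it unchanged, so only one tile needs to be inspected.
    """
    if not tile:
        raise ValueError("tile must be nonempty")
    for p in range(1, len(tile) + 1):
        if tile[p:] + tile[:p] == tile:
            return p

print(minimal_period("000111"), minimal_period("010101"))  # 6 2
```

For instance, the tile 010101 has period 2, since rotating it by two symbols leaves it unchanged, while 000111 has no shorter period than its own length.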

We now show that the shift space of translation-symmetric strings is sofic. The action of the symmetry group used to define $x$ is determined by the shift operator $\sigma$, while the action of sofic semigroups is word concatenation.

To connect these, consider windows $x_{[i,j]} = x_i x_{i+1} \cdots x_j$ that return the word $w$ from coordinates $i$ through $j$ in $x$. For $i \leq j < k$, if $u = x_{[i,j]}$ and $v = x_{[j+1,k]}$, then $uv = x_{[i,k]}$ gives the concatenated window. Recall that $\sigma$ shifts indices in $x$ so that $\sigma(x)_i = x_{i+1}$. If we have a word $w \in \mathcal{L}(X_x)$, there are indices $i \leq j$ such that $w = x_{[i,j]}$. Then the allowed concatenations are determined by the shift operator $\sigma$, since we can write $w\,x_{j+1} = x_{[i,j+1]}$ with $x_{j+1} = \sigma^{j+1}(x)_0$. All such concatenations determine $X_x$’s semigroup $G$. For translation-symmetric strings with $\sigma^p(x) = x$, we have that $x_{[i,j]} = x_{[i+p,j+p]}$. So, intuitively, there is only a finite number of elements in $G$, because the allowed single-symbol concatenations close up after a finite number of unit shifts. Therefore, $X_x$ for translation-symmetric strings is sofic.

We now show this explicitly by constructing the canonical machine presentation with a finite number of states.

Proposition 1. Translation-symmetric strings $x$, with $\sigma^p(x) = x$ for some $p \in \mathbb{Z}^+$, together with their shifts $\sigma^k(x)$ for all $k \in \mathbb{Z}$, form an irreducible sofic shift space.

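Proof. First, note that $\sigma^p(x) = x$ implies $x$ can be written as a tiling $x = \ldots bbb \ldots$, where $b$ is a word of length $p$. Pick any $x_i$ as the first symbol in the word $b = b_0 b_1 \cdots b_{p-1}$. Then $b_0 = x_i$, $b_1 = \sigma(x)_i$, $\ldots$, $b_{p-1} = \sigma^{p-1}(x)_i$. Applying $\sigma$ one more time gives $\sigma^p(x)_i = x_i = b_0$, arriving at the next tile $b$.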

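Second, using this observation we create $\mathcal{M}_{X_x}$ using $p + 1$ internal states: one state for each symbol in the tile $b$ (there are $p$ of these) and one absorbing state $q_\emptyset$. Let $q_0 = [b]$ be the future-set equivalence class of the word $b$ ($b_{p-1}$ is the last symbol in $b$). Due to $x$’s exact symmetry, there is one and only one symbol $a \in \mathcal{A}$ such that $ba \in \mathcal{L}(X_x)$; namely, $a = b_0$. Therefore, let $\delta_{b_0}(q_0) = q_1 = [b b_0]$, and let all other $\delta_a(q_0)$, for $a \neq b_0$, map to $q_\emptyset$. Now, $b_1$ is the only symbol $a$ such that $b b_0 a \in \mathcal{L}(X_x)$. Thus, $\delta_{b_1}(q_1) = q_2$, with $q_2 = [b b_0 b_1]$, and all other $\delta_a(q_1)$, for $a \neq b_1$, map to $q_\emptyset$. Repeat this argument for all generators in $b$ until we arrive at $\delta_{b_{p-1}}(q_{p-1}) = [bb]$.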

Third, as with $q_0 = [b]$, the only symbol that can follow $bb$ is $b_0$, and repeating the full argument shows that each $b_k$ follows sequentially. Therefore, the future set of $bb$ equals that of $b$, and so $[bb] = [b] = q_0$. Thus, the only allowed transition from $q_{p-1}$ returns to the original state $q_0$. This completely specifies $\mathcal{M}_{X_x}$ with a finite number ($p + 1$) of states. Given Theorem 1 above, one concludes that the shift space for a translation-symmetric sequence is sofic. □
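The construction in the proof can be transcribed almost line by line. The Python sketch below builds $\mathcal{M}_{X_x}$ from a tile $b$, with state $q_k$ allowing only the symbol $b_k$, and then checks that from each state exactly one $p$-length path is allowed and that it returns to its starting state. It assumes the tile length equals the minimal period $p$ (otherwise distinct states would share a future set); the function names and the string label for $q_\emptyset$ are our own.

```python
from itertools import product

FORBIDDEN = "q_forbidden"  # our label for the absorbing state

def cyclic_machine(tile, alphabet):
    """Transition map of the proof's machine: q_k allows only tile[k], leading to q_{k+1 mod p}.

    Assumes len(tile) is the minimal period p of x = ...tile tile tile...
    """
    p = len(tile)
    delta = {(FORBIDDEN, a): FORBIDDEN for a in alphabet}
    for k in range(p):
        for a in alphabet:
            delta[(k, a)] = (k + 1) % p if a == tile[k] else FORBIDDEN
    return delta

def run(delta, state, word):
    for a in word:
        state = delta[(state, a)]
    return state

def allowed_paths(delta, state, length, alphabet):
    """Map each allowed word of the given length from `state` to its end state."""
    paths = {}
    for w in map("".join, product(alphabet, repeat=length)):
        end = run(delta, state, w)
        if end != FORBIDDEN:
            paths[w] = end
    return paths

tile = "000111"
machine = cyclic_machine(tile, "01")
for k in range(len(tile)):
    # Exactly one allowed p-length path from q_k: the rotated tile, returning to q_k.
    assert allowed_paths(machine, k, len(tile), "01") == {tile[k:] + tile[:k]: k}
```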

Figure 1a gives an example of $\mathcal{M}_{X_x}$ for the set of translation-symmetric strings $x = \ldots 000111000111 \ldots$ with $p = 6$. For visual clarity it omits the self-loops on state $q_\emptyset$.

Recall that $\mathcal{M}_{X_x}$ is the irreducible component of the future cover, so for a given shift there may be additional states beyond those just described. However, these are equivalence classes for words of length less than $p$, since we started with $q_0 = [b]$. Since $p$ is finite, there are only finitely many additional equivalence classes. These correspond to transient (nonrecurrent) states of the future-cover semiautomaton.

In Figure 1a’s example, knowing that the most recent symbol is 0 does not fully specify which state $\mathcal{M}_{X_x}$ is in, since three distinct states correspond to observing the symbol 0. The additional transient states of the future cover specify how to synchronize to $\mathcal{M}_{X_x}$’s states from the generators $a \in \mathcal{A}$ (single-symbol words).

Having constructed the canonical machine presentation $\mathcal{M}_{X_x}$, we can further relate $X_x$’s semigroup action to $x$’s translation symmetry group. For each state $q_k$ there is one and only one transition that does not lead to the absorbing forbidden state $q_\emptyset$. That is, only one generator, $b_k$, can be concatenated to the words $w \in q_k$. Similarly, if we consider a word $w$ as the window $x_{[i,j-1]}$, then a unit shift by $\sigma$ reveals one and only one new symbol, at index $j$ in $x$. Therefore, ignoring the absorbing state and transitions to it, the graph of $\mathcal{M}_{X_x}$ is a cyclic graph with period $p$: every $p$-length path from $q_k$ returns to $q_k$, for all $k = 0, \ldots, p-1$. Thus, the permutation symmetries of this (edge-labeled) graph correspond to elements of $x$’s translation symmetry group.

In Figure 1a’s example, one labeled state corresponds to the start of the tile, but we could equivalently choose a different state as the start of tile 000111: the choice of starting phase is arbitrary. Furthermore, the internal states have a functional meaning. They are the elements of the quotient group of the translation symmetry, acting as counters that track the symmetry’s phase.

It must be emphasized that sofic shifts and their semigroups do not formally generalize such exact symmetries in the obvious way. That is, the semigroup of a sofic shift $X_x$ for exact-symmetry strings does not simply become a symmetry group: $G$’s absorbing semigroup element $e$ is still required for an exact-symmetry sofic shift $X_x$. From our construction of the translation-symmetric $\mathcal{M}_{X_x}$, we see that, for every internal state $q_k$, there is one and only one transition that does not lead to $q_\emptyset$. This makes it clear that exact symmetries are a highly restrictive form of pattern. By representing exact symmetries using sofic shifts and their machine presentations, though, it is now straightforward to generalize by relaxing the restrictions that impose exact symmetries.

2.5. Generalized Symmetries

Ignoring $q_\emptyset$, a sofic shift $X$ whose machine presentation $\mathcal{M}_X$ is not cyclic then represents a pattern as a generalized symmetry. We can again consider $X$ either as an ensemble of strings or as one infinite sequence that possesses a generalized symmetry described by $X$’s semigroup, which is well represented by the machine presentation $\mathcal{M}_X$. By removing the restriction of a cyclic graph in $\mathcal{M}_X$, which imposed the perfect regularity of $X_x$, we can now capture a much wider class of patterned strings with approximate or partial regularities.

Consider the extreme case of the full shift $\mathcal{A}^{\mathbb{Z}}$, which has no regularity. There are no forbidden products $uv = e$ in $G$ for $u, v \in \mathcal{A}^+$, and so there are no restrictions on its words: $\mathcal{F} = \emptyset$. The algebra of the full shift is the free semigroup. Its machine presentation is a single state with all $\delta_a$, $a \in \mathcal{A}$, mapping that one state back to itself; i.e., all transitions are self-transitions. Since all words can be concatenated to each other, they all belong to a single future-equivalence class.

We interpret $\mathcal{A}^{\mathbb{Z}}$’s complete lack of regularity to be a null pattern. Analogously, the opposite extreme of total regularity, with strings of the form $\ldots aaa \ldots$ for $a \in \mathcal{A}$, is also a null pattern, with a single-state (again, ignoring $q_\emptyset$) machine presentation that has a single self-transition. The null pattern, in these cases, has zero memory: the logarithm of the number of internal states, $\log |\mathcal{Q}|$, vanishes. While both extremes at first seem to be polar opposites, recall our goal is that “pattern” represents a predictive regularity, and this is lacking in both cases. The full shift is completely “random” and thus unpredictable. In contrast, for trivially translation-symmetric strings $\ldots aaa \ldots$, the future is always the same. There is nothing to predict.

Between the complete regularity of exact symmetries and lack of predictive regularity in null patterns, we identify several categories of partial predictive regularity.

First, note that for translation-symmetric strings $x_{i+p} = x_i$ for all $i$.

Second, there are string classes for which $x_{i+p} = x_i$ for only some $i$. We call these partial symmetries. A particular case of partial symmetries is stochastic symmetries. For simplicity, consider binary sequences with $\mathcal{A} = \{0, 1\}$, and let $*$ denote a “wildcard” that can be either 0 or 1 [39]. Sofic shifts with stochastic (partial) symmetries are fully translation symmetric after making wildcard substitutions. For example, we can specify a sofic shift whose sequences periodically repeat a block made of fixed 0s and wildcards; in such strings, the “fixed” 0s form the scaffolding of the partial symmetry. Note that the canonical machine presentations $\mathcal{M}_X$ for such stochastic symmetries are also cyclic graphs, as shown in Figure 1b.
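Membership in a stochastic symmetry can be checked against a wildcard template. The Python helper and the three-symbol template `0**` below are hypothetical (the template is not the one in Figure 1b), and the phase is fixed so that $x_0$ aligns with the template’s first symbol; a full check against $X$ would also try every phase.

```python
def matches_template(x, template, wildcard="*"):
    """True if x agrees with the periodically repeated template, wildcards matching anything.

    The phase is fixed so that x[0] aligns with template[0] (an assumption of this sketch).
    """
    p = len(template)
    return all(template[i % p] in (wildcard, s) for i, s in enumerate(x))

# Hypothetical template: a fixed 0 every third site, wildcards elsewhere.
print(matches_template("010011001", "0**"), matches_template("0101", "0**"))  # True False
```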

Third, recall that if the canonical presentation $\mathcal{M}_X$ is a cyclic graph, every $p$-length path from $q_k$ returns to $q_k$ for all $k$. Similar to how we generalized from exact to partial symmetries, we define hidden symmetries, for which only some states $q_k$ return to themselves on all $p$-length paths in $\mathcal{M}_X$. We exclude the case $p = 1$, so that self-loops do not count as a hidden symmetry. Figure 1c shows an example, with one state of $\mathcal{M}_X$ marked as the symmetric state. Its canonical machine presentation specifies a sofic shift consisting of arbitrary arrangements of two blocks, each of length $p$. The exact-symmetry shift in Figure 1a is the special case of a symmetric tiling that repeats a single block.
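The definition of hidden symmetries translates into a direct enumeration: a state $q$ is symmetric for a given $p \geq 2$ if every allowed $p$-length path from $q$ ends at $q$. This brute-force Python sketch uses a dictionary of transitions in which a missing key means a transition to the forbidden state. The two machines in the example, a four-state cyclic machine for the tile 0011 and a two-state Even Shift presentation, are our own illustrative choices, not the machine of Figure 1c.

```python
from itertools import product

def symmetric_states(delta, states, alphabet, p):
    """States q whose allowed length-p paths all end back at q (missing keys = forbidden).

    Following the text, p = 1 is excluded so that self-loops do not count.
    """
    if p < 2:
        raise ValueError("hidden symmetries require p >= 2")
    result = set()
    for q in states:
        ends = set()
        for word in product(alphabet, repeat=p):
            s = q
            for a in word:
                s = delta.get((s, a))
                if s is None:  # the path hit the forbidden state
                    break
            if s is not None:
                ends.add(s)
        if ends == {q}:
            result.add(q)
    return result

# Hypothetical examples: a cyclic machine for tile 0011 and the two-state Even Shift.
cyclic = {(0, "0"): 1, (1, "0"): 2, (2, "1"): 3, (3, "1"): 0}
even = {("A", "0"): "A", ("A", "1"): "B", ("B", "1"): "A"}
print(symmetric_states(cyclic, range(4), "01", 4))  # {0, 1, 2, 3}
print(symmetric_states(even, "AB", "01", 2))        # set()
```

In a cyclic machine every state qualifies, whereas the Even Shift presentation has no symmetric state for any $p \geq 2$, matching the discussion of Figure 1d.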

Finally, Figure 1d gives an example of a general nonnull pattern that is not an exact, partial, or hidden symmetry. This is the well-known Even Shift [31,33]: the set of binary strings in which only blocks of 1s of even length, bounded by 0s, are allowed. The constraint applies to blocks of arbitrary length, despite being specified by only two internal states. While there are no states in the presentation $\mathcal{M}_X$ that always return to themselves after $p > 1$ transitions, there is still predictive regularity. In particular, if a 1 is seen after a 0, it is guaranteed that the next symbol will be a 1. This is specified by the state reached on that 1 having only one allowed transition, on a 1, back to the other state.
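The predictive regularity just described can be verified mechanically. Assuming a two-state Even Shift presentation (the state names A and B are our labels, and missing transitions go to the forbidden state), the Python sketch below enumerates allowed words ending in 01 and confirms that only a 1 may follow.

```python
from itertools import product

# Hypothetical labels for the two-state Even Shift presentation; missing keys are forbidden.
DELTA = {("A", "0"): "A", ("A", "1"): "B", ("B", "1"): "A"}

def allowed(word):
    """A word is in the language if it labels a path starting from some state."""
    current = {"A", "B"}
    for a in word:
        current = {DELTA[(q, a)] for q in current if (q, a) in DELTA}
    return bool(current)

def check_one_follows_01(max_len):
    """After an allowed word ending in 01, only the symbol 1 may follow."""
    for n in range(2, max_len + 1):
        for w in map("".join, product("01", repeat=n)):
            if allowed(w) and w.endswith("01"):
                if not (allowed(w + "1") and not allowed(w + "0")):
                    return False
    return True

print(check_one_follows_01(8))  # True
```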

Appendix A.2 discusses this example in more detail, along with its semigroup and three semiautomaton presentations.

Before moving to probabilistic patterns represented by sofic measures, we note that Krohn–Rhodes theory [40,41] was the first to connect finite automata with a semigroup algebra. Moreover, it showed that finite semigroups and their corresponding automata naturally decompose into simpler components, including finite simple groups. This is yet another perspective showing that finite automata and their semigroup algebra capture patterns as generalized symmetries. Further exploration of the connection between Krohn–Rhodes theory and the perspective developed here is left for future work. One important difference to note is that their approach did not address statistical or noisy patterns, as the following now does.

2.6. Statistical Patterns Supported on Sofic Shifts

The exposition on sofic shift patterns did not invoke probabilities over symbols, words, or strings. Shift spaces are not concerned with the probability of a word occurring, only whether a word can possibly occur or not. This is why we referred to sofic shifts as topological patterns. We just saw that exact symmetries are given as sofic shifts and so are topological patterns. Recall that our key motivation for showing this was to argue for sofic shifts as a mathematical formalism that captures a notion of (topological) pattern that greatly expands exact symmetry to generalized symmetry. However, as we now describe, we can generalize further to formalize statistical patterns that are supported on sofic shifts. Doing so provides a direct link with the more familiar statistical measures of order and organization used in statistical mechanics, such as correlation functions.

Our goal is to build a probability space on top of a shift space. The key property of this probability space is that words are assigned positive probability if and only if they are allowed in the shift space. This is accomplished by using cylinder sets to generate the sigma algebra on a shift space $X$ [42]. For a shift space $X$ and a length-$n$ word $w$, the cylinder set is defined as:

$$ C_i(w) = \{ x \in X : x_i x_{i+1} \cdots x_{i+n-1} = w \}. $$

Naturally then, a probability measure $\mu$ is assigned such that the probability of a word $w$ occurring is given as:

$$ \Pr(w) = \mu\big(C_i(w)\big). $$

By definition, $\Pr(w) = 0$ if $w \notin \mathcal{L}(X)$, as the associated cylinder sets are empty for forbidden words.

Such a probability measure defines a stationary stochastic process over the shift space $X$ if it is shift-invariant, so that the probability of a word is independent of the index $i$ in $C_i(w)$, and each word satisfies prefix and suffix marginalization:

$$ \Pr(w) = \sum_{a \in \mathcal{A}} \Pr(aw) $$

and:

$$ \Pr(w) = \sum_{a \in \mathcal{A}} \Pr(wa). $$

These consistency conditions are what the Kolmogorov extension theorem requires in order to guarantee that the finite-dimensional word distributions consistently define a stochastic process [43]. We only consider shift-invariant measures and so, without loss of generality, we simply write $C(w)$ for the cylinder sets.

Crucially, the semigroup algebra and canonical machine presentation machinery for topological patterns have natural generalizations to stochastic patterns, as we now describe.

Following Ref. [44] in the context of shift spaces, a (free) stochastic semigroup is a function $F$ defined on the free semigroup $S$, with identity element $\lambda$ (the empty symbol), that satisfies the following properties ([42], Definition 4.29): $F(\lambda) = 1$; $F(w) \geq 0$ for each $w \in S$; and $\sum_{a \in \mathcal{A}} F(wa) = \sum_{a \in \mathcal{A}} F(aw) = F(w)$ for each $w \in S$.

For a sofic shift $X$, we define a stochastic semigroup on $S$ using the semigroup $G$ defined above, with absorbing element $e$ corresponding to forbidden words. We simply set $F(e) = 0$. Then, a shift-invariant measure $\mu$ satisfying Kolmogorov extension on a sofic shift $X$ with semigroup $G$ forms a stochastic semigroup as follows. For all elements of the free semigroup, i.e., for every word $w \in S$, define $F$ such that $F(w) = \mu(C(w))$, with $F(w) > 0$ if and only if $w \in \mathcal{L}(X)$ (equivalently, $w \neq e$ in the semigroup $G$), and otherwise $F(w) = 0$. Such a measure $\mu$ is called a sofic measure [42].

In this way, sofic measures allow for statistical structure on top of a sofic shift $X$, while maintaining an algebraic structure related to $X$’s semigroup algebra. More importantly, there is a canonical machine presentation associated with sofic measures, analogous to the canonical machine presentation of sofic shifts. As in the topological case for sofic shifts, the canonical machine presentation of sofic measures provides the mathematical formulation of statistical patterns.

Recall that the future-cover semiautomaton, the canonical topological machine presentation, is defined from Equation (1)’s future equivalence relation. The canonical stochastic machine presentation is defined through a stochastic generalization of future equivalence, called predictive or causal equivalence, defined on semi-infinite words. Each index $i$ partitions a sequence $x$ into a semi-infinite past $\overleftarrow{x}_i = \ldots x_{i-2} x_{i-1}$ and a semi-infinite future $\overrightarrow{x}_i = x_i x_{i+1} \ldots$. In the topological case, two pasts are considered future-equivalent if they have the same future, that is, the same set of futures that may follow. In the stochastic case, two pasts are predictively or causally equivalent if they have the same distribution over futures conditioned on the past:

$$ \overleftarrow{x} \sim_{\epsilon} \overleftarrow{x}' \iff \Pr\big(\overrightarrow{X} \mid \overleftarrow{X} = \overleftarrow{x}\big) = \Pr\big(\overrightarrow{X} \mid \overleftarrow{X} = \overleftarrow{x}'\big). \tag{2} $$

Just as the future equivalence relation $\sim_F$ defines the unique minimal unifilar semiautomaton presenting a sofic shift, the causal equivalence relation $\sim_\epsilon$ defines the unique minimal unifilar hidden Markov chain (HMC) that presents a sofic measure and its stationary stochastic process [22].

Speaking simply, a hidden Markov chain is a semiautomaton whose deterministic symbol-labeled transitions are replaced by symbol-labeled transition probabilities $T^{(a)}_{ij}$, where $T^{(a)}_{ij}$ is the probability of transitioning from state $s_i$ to state $s_j$ on the symbol $a$.

Paralleling the topological setting, the canonical stochastic machine presentation is a hidden Markov chain whose internal states $\mathcal{S}$, the predictive or causal states, are the equivalence classes of Equation (2)’s causal equivalence relation. The symbol-labeled transitions are then defined through the one-step conditional distributions $\Pr(X_0 \mid \overleftarrow{X}_0 = \overleftarrow{x})$. For a given causal state $s_i \in \mathcal{S}$, we write $\Pr(X_0 \mid s_i)$, since by definition each past $\overleftarrow{x}$ in the equivalence class $s_i$ has the same predictive distribution.

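The transition probability $T^{(a)}_{ij}$ is then given as $T^{(a)}_{ij} = \Pr(X_0 = a \mid s_i)$, with $s_j = [\overleftarrow{x} a]$, where $\overleftarrow{x} \in s_i$, $\overleftarrow{x} a$ is the new past given by concatenating the observed symbol $a$ onto the current past $\overleftarrow{x}$, and $[\cdot]$ denotes a causal-equivalence class; all other entries of $T^{(a)}$ are zero. This follows from unifilarity [22]: in the stochastic setting, for each internal state $s_i$ and symbol $a$ there is at most one internal state $s_j$ such that $T^{(a)}_{ij} > 0$. It then follows that $[\overleftarrow{x} a]$ is the same for all $\overleftarrow{x} \in s_i$.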
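The recipe for $T^{(a)}_{ij}$ can be written out for a small unifilar machine. In the Python sketch below, each (state, symbol) pair stores $\Pr(a \mid s_i)$ and the unique successor $s_j$. The probabilities are those of the Even Process, a standard example supported on the Even Shift, and are chosen for illustration only; exact fractions keep the checks free of rounding.

```python
from fractions import Fraction as Fr

# Illustrative Even Process epsilon-machine. Each entry maps
# (causal state i, symbol a) -> (Pr(a | s_i), unique successor j).
SPEC = {(0, "0"): (Fr(1, 2), 0), (0, "1"): (Fr(1, 2), 1), (1, "1"): (Fr(1), 0)}

def labeled_matrices(spec, n_states, alphabet):
    """Build T^(a) with T^(a)[i][j] = Pr(a | s_i) if j is the successor of i on a, else 0."""
    T = {a: [[Fr(0)] * n_states for _ in range(n_states)] for a in alphabet}
    for (i, a), (prob, j) in spec.items():
        T[a][i][j] = prob
    return T

T = labeled_matrices(SPEC, 2, "01")
# Unifilarity: every row of every T^(a) has at most one nonzero entry.
assert all(sum(1 for x in row if x) <= 1 for M in T.values() for row in M)
# Summing over symbols gives a stochastic matrix: each row of T^(0) + T^(1) sums to 1.
assert all(sum(T["0"][i][j] + T["1"][i][j] for j in range(2)) == 1 for i in range(2))
```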

Historically, the canonical stochastic machine presentation is known as the ϵ-machine [22] of the associated stochastic process. As described shortly, the ϵ-machine is a generator of its associated statistical field theory: it generates all words with their corresponding probabilities. Similar to its topological counterpart, unifilarity additionally elevates the ϵ-machine to a predictive presentation. Prediction in the stochastic setting means identifying the predictive distribution associated with a given past. Additionally, by definition, an ϵ-machine’s causal states carry unique predictive distributions.
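Because the ϵ-machine is a generator, sampling from it only requires following its labeled transitions. This Python sketch assumes the same illustrative Even Process machine, written as a list of (symbol, probability, next state) triples per state, and uses the standard-library `random.choices`; the seed and function name are arbitrary choices of ours.

```python
import random

# Illustrative Even Process machine: state -> list of (symbol, probability, next state).
MACHINE = {0: [("0", 0.5, 0), ("1", 0.5, 1)], 1: [("1", 1.0, 0)]}

def sample(machine, length, state=0, seed=None):
    """Generate `length` symbols by repeatedly choosing a labeled transition from `state`."""
    rng = random.Random(seed)
    symbols = []
    for _ in range(length):
        options = machine[state]
        symbol, _prob, state = rng.choices(options, weights=[p for _, p, _ in options])[0]
        symbols.append(symbol)
    return "".join(symbols)

s = sample(MACHINE, 1000, seed=7)
# Blocks of 1s bounded by 0s on both sides must have even length.
assert all(len(block) % 2 == 0 for block in s.split("0")[1:-1])
```

Any sample started in state 0 contains only even blocks of 1s between 0s, a quick sanity check that generated words stay in the supporting sofic shift.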

Summing over all symbol-labeled transitions produces a Markov transition operator over the internal causal states: $T = \sum_{a \in \mathcal{A}} T^{(a)}$. This operator evolves probability distributions over the causal states, regardless of the symbols involved, and specifies an order-1 Markov process over the states [22]. The left eigenvector of $T$ with unit eigenvalue, normalized so that its entries sum to 1, provides the stationary distribution over causal states: $\pi T = \pi$. For ergodic systems, as we consider here, $\pi$ is unique.
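For small machines, the stationary distribution can be computed exactly by solving $\pi T = \pi$ together with $\sum_i \pi_i = 1$. The Python sketch below does this by Gauss–Jordan elimination over fractions; it assumes $T$ is irreducible so that the solution is unique, and uses the illustrative Even Process matrix $T = T^{(0)} + T^{(1)}$.

```python
from fractions import Fraction as Fr

def stationary_distribution(T):
    """Solve pi T = pi with sum(pi) = 1 by Gauss-Jordan elimination over fractions.

    Assumes T is the transition matrix of an irreducible chain, so pi is unique.
    Equation j (for j < n-1) reads sum_i pi_i (T[i][j] - [i == j]) = 0; the last
    balance equation is redundant and is replaced by the normalization.
    """
    n = len(T)
    rows = [[Fr(T[i][j]) - (1 if i == j else 0) for i in range(n)] + [Fr(0)]
            for j in range(n - 1)]
    rows.append([Fr(1)] * n + [Fr(1)])
    for col in range(n):
        pivot = next(r for r in range(col, n) if rows[r][col] != 0)
        rows[col], rows[pivot] = rows[pivot], rows[col]
        pv = rows[col][col]
        rows[col] = [x / pv for x in rows[col]]
        for r in range(n):
            if r != col and rows[r][col] != 0:
                factor = rows[r][col]
                rows[r] = [x - factor * y for x, y in zip(rows[r], rows[col])]
    return [rows[i][n] for i in range(n)]

# Illustrative Even Process: T = T^(0) + T^(1).
T = [[Fr(1, 2), Fr(1, 2)], [Fr(1), Fr(0)]]
print(stationary_distribution(T))  # [Fraction(2, 3), Fraction(1, 3)]
```

Dropping one balance equation in favor of the normalization is valid because, for an irreducible chain, the balance equations have rank $n - 1$.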

We emphasize that the causal states identify a hidden, internal Markov process underlying the non-Markovian process over the symbols in $\mathcal{A}$, specified by a sofic measure on a sofic shift. This inverts the abstract definition of sofic measures, given as factor maps on Markov processes [42]. There, a shift-invariant measure obeying Kolmogorov extension on a subshift of finite type produces a Markov chain of finite order, and sofic shifts are abstractly defined as factor maps of subshifts of finite type. Hidden Markov chains are then given as the pushforward of the Markov measure along the factor map. Here, in contrast, we start with a statistical field theory supported on a sofic shift. The causal equivalence relation then identifies the underlying causal states and the Markov process defined over them.

With our given assumption of a stationary ergodic process over symbols, we can use the stationary distribution $\pi$ and the symbol-labeled transition operators $T^{(a)}$ to directly extract the word probabilities $\Pr(w)$ from the ϵ-machine presentation. First, single-symbol probabilities are given as:

$$ \Pr(a) = \sum_{s_i \in \mathcal{S}} \pi_i \sum_{s_j \in \mathcal{S}} T^{(a)}_{ij}, $$

where $\sum_j T^{(a)}_{ij}$ gives the probability of observing the symbol $a$, conditioned on being in causal state $s_i$, and this is summed over all states in $\mathcal{S}$ weighted by their stationary probabilities $\pi_i$. We write this compactly as:

$$ \Pr(a) = \langle \pi | T^{(a)} | \mathbf{1} \rangle, $$

where $\langle \pi |$ indicates $\pi$ as a row vector and $| \mathbf{1} \rangle$ is the column vector of all 1s.

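The probability of a word $w = a_1 a_2 \cdots a_n$ is then:

$$ \Pr(w) = \langle \pi | T^{(a_1)} T^{(a_2)} \cdots T^{(a_n)} | \mathbf{1} \rangle. $$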
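The word-probability formula amounts to propagating a row vector through a product of matrices. The Python sketch below evaluates $\langle \pi | T^{(a_1)} \cdots T^{(a_n)} | \mathbf{1} \rangle$ with exact fractions for the illustrative Even Process machine, whose stationary distribution is $\pi = (2/3, 1/3)$; the matrices and names are assumptions made for this example.

```python
from fractions import Fraction as Fr

# Illustrative Even Process matrices T^(a) and their stationary distribution pi.
T = {
    "0": [[Fr(1, 2), Fr(0)], [Fr(0), Fr(0)]],
    "1": [[Fr(0), Fr(1, 2)], [Fr(1), Fr(0)]],
}
PI = [Fr(2, 3), Fr(1, 3)]

def word_probability(word, T=T, pi=PI):
    """Pr(w) = <pi| T^(a_1) ... T^(a_n) |1>: propagate the row vector, then sum its entries."""
    row = list(pi)
    for a in word:
        M = T[a]
        row = [sum(row[i] * M[i][j] for i in range(len(row))) for j in range(len(M[0]))]
    return sum(row)

print(word_probability("0"), word_probability("11"), word_probability("010"))  # 1/3 1/2 0
```

Forbidden words such as 010 receive probability zero, and summing $\Pr(wa)$ or $\Pr(aw)$ over $a$ recovers $\Pr(w)$, in line with the marginalization conditions required for Kolmogorov extension.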

Recall that in the topological case, the semigroup algebra of the canonical machine presentation is given through composition of the mappings $\delta_a$. Now, we see the same semigroup algebraic structure in the products of the symbol-labeled transition matrices $T^{(a)}$. In fact, the topological structure, the set of mappings $\{\delta_a\}_{a \in \mathcal{A}}$, is recovered from the statistical structure, the set of transitions $\{T^{(a)}\}_{a \in \mathcal{A}}$, by setting all nonzero elements of each $T^{(a)}$ to unity and then applying future equivalence.
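The first step of that recovery, setting nonzero entries to unity, is sketched below in Python for a unifilar machine; the second step, merging future-equivalent states, is not shown. The matrices are the illustrative Even Process ones, and the forbidden-state label is our own.

```python
from fractions import Fraction as Fr

FORBIDDEN = "q_forbidden"  # our label for the absorbing state

def support_maps(T):
    """Set nonzero entries of each T^(a) to 1 and read each row as a map delta_a.

    Assumes a unifilar machine (at most one nonzero entry per row). Merging
    future-equivalent states, the second step in the text, is not performed here.
    """
    maps = {}
    for a, M in T.items():
        delta = {}
        for i, row in enumerate(M):
            successors = [j for j, x in enumerate(row) if x != 0]
            if len(successors) > 1:
                raise ValueError("machine is not unifilar")
            delta[i] = successors[0] if successors else FORBIDDEN
        maps[a] = delta
    return maps

T = {
    "0": [[Fr(1, 2), Fr(0)], [Fr(0), Fr(0)]],
    "1": [[Fr(0), Fr(1, 2)], [Fr(1), Fr(0)]],
}
print(support_maps(T))  # {'0': {0: 0, 1: 'q_forbidden'}, '1': {0: 1, 1: 0}}
```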

It must be emphasized that this last step, applying future equivalence, is essential. There may be distinct ϵ-machines (presentations of statistical patterns) that are supported on the same sofic shift (topological pattern). Said another way, statistical patterns signify distinct structure supported on topological patterns. They are not merely “adding probabilities onto” topological patterns. Formally, the symbol-labeled transition matrices $T^{(a)}$ can represent a different semigroup than that represented by their topological counterparts $\delta_a$ on which they are supported.

Appendix B illustrates this distinction.

Let us briefly turn to the quantitative benefits of having these presentations. Using the word probabilities as just described, in addition to the underlying pattern of the sofic shift that supports the ensemble, a wide range of statistical properties of the sequence ensembles, such as correlation functions, power spectral densities, and informational properties [45,46], can be determined directly from the ϵ-machine. For example, the Shannon entropy rate of an ensemble measures its degree of randomness and can be calculated as:

$$ h_\mu = \lim_{\ell \to \infty} \frac{H[X_{0:\ell}]}{\ell}, $$

where $X_{0:\ell} = X_0 X_1 \cdots X_{\ell-1}$, for $\ell \in \mathbb{Z}^+$, is the random variable for the subsequence of symbols $x_0 x_1 \cdots x_{\ell-1}$. Moreover, the Shannon information in the causal states measures a process’s historical memory [47]. This is the amount of memory about the past that must be stored in order to optimally predict the future of the process.
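For a unifilar presentation such as the ϵ-machine, the limit defining $h_\mu$ reduces to the average uncertainty in the next symbol given the causal state, and the Shannon information in the causal states is $H[\mathcal{S}] = -\sum_i \pi_i \log_2 \pi_i$ (often called the statistical complexity $C_\mu$). The Python sketch below evaluates both quantities, in bits, for the illustrative Even Process machine; the numerical inputs are assumptions chosen for this example.

```python
import math

# Illustrative Even Process: Pr(a | s_i) per causal state, and stationary distribution pi.
EMIT = [{"0": 0.5, "1": 0.5}, {"1": 1.0}]
PI = [2 / 3, 1 / 3]

def entropy_rate(emit, pi):
    """h_mu = -sum_i pi_i sum_a Pr(a|s_i) log2 Pr(a|s_i), in bits per symbol (unifilar case)."""
    return -sum(p_i * sum(q * math.log2(q) for q in row.values() if q > 0)
                for p_i, row in zip(pi, emit))

def state_information(pi):
    """Shannon information in the causal states, H[S] = -sum_i pi_i log2 pi_i, in bits."""
    return -sum(p * math.log2(p) for p in pi if p > 0)

h_mu, c_mu = entropy_rate(EMIT, PI), state_information(PI)
```

Here only the causal state reached after an even number of 1s contributes randomness, so $h_\mu = 2/3$ bit per symbol, while $H[\mathcal{S}] = \log_2 3 - 2/3 \approx 0.918$ bits.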