1. Introduction

Lung carcinoma remains one of the most common malignancies worldwide; in 2020, 2,206,771 new cases were identified. In Western countries, the incidence is decreasing as the number of smokers gradually declines, whereas in developing countries the opposite trend is seen [1,2]. In 2018, lung carcinoma caused 1,761,000 deaths globally, which represents 18.4% of cancer deaths worldwide [1,3].

At present, unfortunately, there are no markers that would allow the early identification of this tumor in the preclinical or early clinical stage. Diagnosis relies predominantly on imaging methods, such as lung X-ray, computed tomography (CT), or the more efficient low-dose helical computed tomography (LDCT) [4]; the latter, however, is prone to false positive results as it is known to also detect non-malignant abnormalities [5]. Sputum cytology is a useful tool for the detection of tumors in the major bronchi; it is, however, unsuitable for the detection of smaller adenocarcinomas (<2 cm in diameter) in minor bronchi, bronchioles, and alveoli. Bronchoscopy with lung biopsy and histological evaluation follows to confirm the diagnosis [6]. Still, a majority of tumors are diagnosed only in an advanced stage, which leads to a 5-year survival of only 11.2% in men and 13.9% in women [7]. Timely detection of tumors is, therefore, of utmost importance.

Early diagnosis of selected tumors is the subject of research by the Czech Center for Signal Animals, which specializes in the training of signal dogs. These dogs are trained for early detection of selected tumor types from the patient’s blood samples. The Center deals with tumors that are difficult to diagnose with medical instruments in the early stages (e.g., lung cancer and ovarian cancer) [8]. The approach relies on the dog’s sense of smell being several orders of magnitude more sensitive than diagnostic instruments [9]; thanks to this, dogs are capable of identifying volatile organic compounds produced by tumor metabolism [10]. The specific compounds have not yet been identified, although they comprise four to five thousand different molecules [8]. The dogs are trained to identify the samples from people with cancer by performing a certain activity (e.g., the dog sits down or lies down in front of the respective sample). This method is, however, considered by some as insufficiently proven and insufficiently objectivized [11].

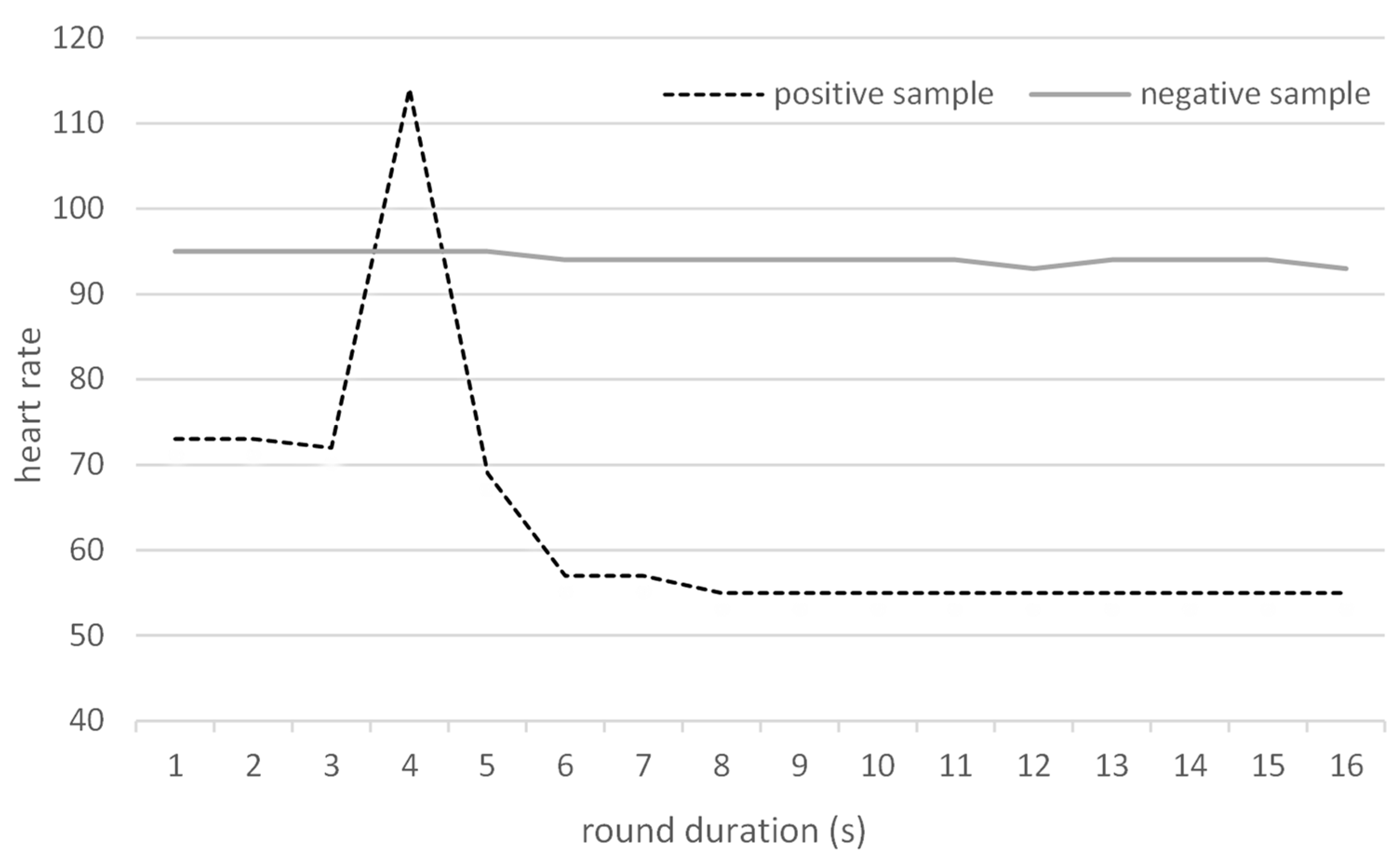

Hence, in this study, we hypothesized that objectivization of the process through monitoring heart rate (HR) changes of trained sniffer dogs during sniffing experiments could improve the detection performance. The hypothesis was based on the assumption that if the dog “suspects” a sample but is not sure enough to tag the sample as positive, its HR would go up, as it does when finding a clearly positive sample and expecting a reward. The same phenomenon was observed in avalanche dogs whose heart rate increased when detecting a human being [12].

We expected that detection based on HR increase could theoretically lead to an increased false positive rate. However, this type of error is less serious than the opposite (failure to detect a positive sample), as it would only lead to a more thorough examination rather than to missing an already existing tumor; the expected tradeoff of improved sensitivity at the expense of slightly reduced specificity therefore appeared acceptable. The hypotheses of this research were as follows: (i) trained dogs can effectively recognize the difference between the negative and positive samples, and (ii) monitoring dogs’ HR during detection can be a useful auxiliary method for improving the sensitivity and negative predictive value compared to the standard performance, in which the dog just indicates samples from cancer patients. This study is the first one attempting to objectivize the detection process using heart rate.

2. Methods

This pilot study included two sniffer dogs (an Australian cattle dog and a German Shepherd) selected from the pool of dogs trained in the Czech Center for Signal Animals as the two individuals that best tolerated wearing the HR monitor on their bodies. The dogs were trained using intermittent reinforcement as described in detail in our previous publication [8]. In addition to the standard conditions used for training, a chest strap was fastened around the chest of each dog, which allowed continuous HR monitoring using the smartwatch SUUNTO Ambit3 (Vantaa, Finland) and the supplier-produced software (version 2.26.1).

The study was designed as a double-blinded study, with neither the trainer nor the owner of the dog knowing the status of individual samples. Blood samples from individuals ≥18 years of age with histologically confirmed lung carcinoma (regardless of the stage, sex, or age) were considered positive, and negative samples were acquired from individuals without a confirmed diagnosis of lung cancer. No other inclusion/exclusion criteria were applied. All participants whose blood samples were used for training or experiments signed informed consent approved by the local Ethics Committee.

The samples were prepared using blood serum from 5 mL of whole blood taken from the participants. The blood was centrifuged at 4000 RCF and 4 °C for 10 min, and the resulting supernatant (serum) was pipetted into a storage vial and stored in a freezer at −12 °C. Before use, the serum was thawed and, after shaking to thoroughly mix the contents of the storage vial, one drop was placed on the bottom of another vial. A pad of cotton wool was inserted into the new vial above the serum (but not in direct contact) as an odor adsorbent and the vial was kept closed for 24 h [8]. After that, the “scented” pads were placed separately into closed vials without blood serum and stored until the experiments.

The experiments were performed as follows. For each round (i.e., each release of the dog for detection), the administrator prepared a set of four samples prior to the experiments: three negative samples and one “unknown” sample taken from either the pool of negative samples or the pool of positive samples. During the experiments, the dog owner/trainer placed the four vials into a stainless steel tray with four holes lying on the ground; as the owner/trainer was blinded to the content of the vials, they were placed at random positions within the tray. Subsequently, the dog was released to examine the vials with pads. Each round was video recorded, and the initial and highest heart rates during the round (which took approx. 10–20 s in each case) were written down, together with whether or not the dog indicated a sample as positive. None of the persons present during the experiments were aware of the positivity/negativity of the samples. The two dogs alternated in their rounds after 10 min (i.e., approximately after 10 samples, including sample placement) to allow sufficient time for regeneration and rest. None of the samples used in the training were used for experiments; in addition, none of the samples used during the experiments were presented twice to the same dog.

After the experimental part of the study was completed, the results were unblinded and evaluated based on (a) dogs’ indication and (b) heart rate increase during the experiment. Any HR increase above the initial value (base HR) was considered an indication of the presence of a positive sample in the set. Although the HR kept gradually changing over the course of the experiments, preliminary testing showed us that dogs’ heart rates increased only when the dogs were excited, whatever the reason. From this perspective, changes in the actual base heart rate at the beginning of the individual round played no role in the evaluation, as only the increase in HR during the round was considered. The experimental setting was designed in such a way that there was no potential source of excitement other than the samples. Basic test parameters were subsequently calculated, namely, sensitivity (SEN), specificity (SPE), and positive (PPV) and negative (NPV) predictive values. The results were, in addition, evaluated in two ways: (i) considering each experiment separately (i.e., each set of 4 samples) as one round with a positive/negative result, and (ii) considering each sample separately (i.e., considering each round as four independent samples; for example, an experiment with 4 negative samples where the dog indicated none of the samples was considered as 4 correctly identified negative samples). The reasons for this approach are discussed below in Section 4.
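The by-round evaluation based on heart rate can be sketched in a few lines of Python. This is only an illustrative sketch under stated assumptions, not the software used in the study: the `Round` record layout and the function names are hypothetical, and the rule "any increase above the base HR counts as an indication" is taken directly from the description above.

```python
from dataclasses import dataclass
from math import nan


@dataclass
class Round:
    """One release of the dog over a set of four vials (hypothetical layout)."""
    has_positive: bool  # ground truth, known only after unblinding
    base_hr: int        # HR at the start of the round, in bpm
    peak_hr: int        # highest HR recorded during the round, in bpm


def hr_indicates_positive(r: Round) -> bool:
    # Rule from the text: any HR increase above the base value is an indication.
    return r.peak_hr > r.base_hr


def ratio(numerator: int, denominator: int) -> float:
    # Return NaN instead of raising when a parameter is undefined.
    return numerator / denominator if denominator else nan


def diagnostic_parameters(rounds: list[Round]) -> dict[str, float]:
    """Compute SEN, SPE, PPV and NPV per round from the HR rule."""
    tp = fp = tn = fn = 0
    for r in rounds:
        flagged = hr_indicates_positive(r)
        if r.has_positive and flagged:
            tp += 1
        elif r.has_positive:
            fn += 1
        elif flagged:
            fp += 1
        else:
            tn += 1
    return {
        "SEN": ratio(tp, tp + fn),  # share of positive rounds that were flagged
        "SPE": ratio(tn, tn + fp),  # share of negative rounds left unflagged
        "PPV": ratio(tp, tp + fp),  # share of flagged rounds that were positive
        "NPV": ratio(tn, tn + fn),  # share of unflagged rounds that were negative
    }
```

The same function can be reused for the dogs' indications by replacing `hr_indicates_positive` with a flag recording whether the dog indicated any sample in the round.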

4. Discussion

The presented study aimed to investigate the success of the trained sniffer dogs in detecting lung carcinoma and to compare the results of dogs’ indications to those obtained by heart rate measurements.

Although heart rate measurement counts among basic physical examinations, information about the normal heart rate of dogs is scarce. The high physiological and psychological variability of dog breeds makes the use of an arbitrary HR impossible [13], with physiological values generally ranging from 70 to 120 beats per minute (bpm) [14]. Moreover, although HR correlates with body weight in many mammals, this does not consistently apply to dogs [15,16].

Recently, the use of human heart rate monitors for the measurement of dogs’ heart rates [12,17,18,19] has become preferred to the gold standard, i.e., electrocardiography (ECG) [20,21]. Such monitors consist of a chest strap with electrodes and a built-in signal transmitter. The application of a conductive gel on the electrodes before fastening the chest strap on the dog helps improve the reliability of the function of the belt. The data from the strap are forwarded to a smartwatch and can be analyzed on a computer. The advantages of such monitors compared to ECG include dog-friendliness (no fur shaving is necessary), easy mobility, simple application, and lower costs. Studies comparing these two methods revealed a good agreement of the results of HR monitors with ECG in dogs [12,18,19,22].

However, a downside of the HR monitor in our study was its unreliable function in some cases. In all, 115 experiments with HR measurement were performed, of which HR was properly recorded only in 84 experiments. This was mostly associated with the fact that the HR monitors are constructed to fit and work with human skin, and the fur interfered with their function in 33% and 20% of the measurements with individual dogs, when, despite the application of the conductive gel, the monitors lost contact with the skin (Table 6). In some studies on monitoring dogs’ heart rates for other purposes, such as medical ones, researchers shaved the hair from the dogs’ chests to improve the contact between the electrodes and the skin [12,18]; in our research, however, we opted for a more dog-friendly approach as we were still able to acquire a sufficient number of valid measurements, and shaving could possibly stress the dogs, interfering with their performance. Moreover, Bidoli et al. reported that the fur itself does not influence the effectiveness of the chest strap as, curiously, a higher frequency of invalid measurements was found in short-haired than long-haired dogs [19].

In addition to the fur, we hypothesize that the dog’s size is another important factor: the percentage of valid experiments was higher in the larger German Shepherd than in the somewhat smaller Australian cattle dog (80% vs. 67%), which could be caused by differences in the fit of the strap constructed for the much larger human chest. This is, however, in contrast with results by Bidoli et al., who stated that higher chest circumference was associated with a higher frequency of invalid results [19]. Some researchers also use gauze for additional fastening of the chest strap to the dog’s chest, which can further improve performance [12,18]. We have, however, rejected this option as well in order to prevent the potential stress that could affect the results.

In our study, 62 valid rounds with positive samples (i.e., three negative and one positive sample in the round) and 22 rounds with solely negative samples (four per round) were performed. This setup led to the underestimation of specificity and negative predictive values (note that in the used setting, where only one sample is unknown and all others are negative, three samples in each round are “disregarded”; Table 2). For this reason, we have performed an additional calculation considering each sample separately (Table 3). This allowed a finer analysis and correction of some issues that could not be addressed during the “by rounds” calculation. For example, if the dog indicates an incorrect sample in a round containing a positive sample, it is considered a false negative when calculated per rounds, but in the finer calculation based on individual samples, it is counted as one false negative (the positive sample was not identified), one false positive (a negative sample was indicated as positive), and two true negatives (two negative samples that were correctly ignored). This calculation, however, could not be used in the case of heart rate as it is not possible to determine which sample causes the heart rate to increase (Table 4). This duality of calculations can be considered both a limitation of the study and its strength as it facilitates a comprehensive evaluation from both perspectives. To eliminate this duality, it would be necessary to evaluate only one sample per round instead of a set of four; this, however, would require a completely different strategy of training (a new approach from the beginning of training; dogs already trained for runs of four samples cannot be retrained this way) and might be difficult to implement as it is assumed that the dogs need to have a negative sample in the set of tested samples to be better able to recognize the positive sample. Moreover, the approach with multiple samples is a standard setting commonly used in studies with sniffer dogs [23,24].
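The per-sample bookkeeping described above can be illustrated with a short Python sketch. It is a simplified illustration rather than the authors' procedure: the function name and arguments are hypothetical, and it assumes that the dog indicates at most one vial per round.

```python
from collections import Counter
from typing import Optional


def per_sample_outcomes(
    positive_pos: Optional[int],
    indicated_pos: Optional[int],
    n_samples: int = 4,
) -> Counter:
    """Split one round into per-sample outcomes (TP, FP, TN, FN).

    positive_pos:  tray position of the positive sample, or None if the
                   round contains only negative samples.
    indicated_pos: tray position indicated by the dog, or None if the dog
                   indicated nothing (assumption: at most one indication).
    """
    counts: Counter = Counter()
    for pos in range(n_samples):
        is_positive = pos == positive_pos
        is_indicated = pos == indicated_pos
        if is_positive and is_indicated:
            counts["TP"] += 1
        elif is_positive:
            counts["FN"] += 1
        elif is_indicated:
            counts["FP"] += 1
        else:
            counts["TN"] += 1
    return counts


if __name__ == "__main__":
    # Dog indicates a wrong vial in a round that contains a positive sample.
    print(per_sample_outcomes(positive_pos=2, indicated_pos=0))
    # Round with four negative samples and no indication.
    print(per_sample_outcomes(positive_pos=None, indicated_pos=None))
```

Summing these counters over all rounds gives the per-sample contingency table. No equivalent exists for heart rate, because an HR increase cannot be attributed to a tray position.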

We should also mention that some negative samples were repeatedly indicated as positive by both dogs. Such samples are being recorded and the patients will be subject to more thorough follow-ups as it is possible that the dogs have detected forming tumors at a very early stage preceding the clinical diagnosis.

Another peculiarity needs to be mentioned: when evaluated according to the dog’s indications and rounds, the dogs indicated another sample in the set on two out of three occasions in which they failed to recognize a positive sample. This may suggest that the dogs were aware of the presence of a positive sample in the set but failed to identify the correct one. This was also associated with another observation. From our experience (including training), we know that dogs are more likely to err in freshly scented samples than in older ones; all positive samples that the dogs failed to recognize were less than 2 weeks old. This may also have implications for practice: letting the samples “mature”, or repeating the identification with a “matured” sample after several weeks, might further improve the results.

In this type of screening, sensitivity and negative predictive value are probably the most important parameters (false positives playing a key role in calculations of specificity and PPV are not as problematic, as they would only lead to a more thorough examination and follow-up of patients, which is far less serious than false negativity, i.e., failure to identify a patient with cancer). From this perspective, a sensitivity of 95% based on the dogs’ indications is a very good result, slightly better (though not significantly, p = 0.763) than results based on the heart rate. Negative predictive values of 85.7% (dog’s indications) and 78.3% (heart rate) in the calculations per rounds are not so favorable; we must, however, take into account the aforementioned fact that in every run, there were three or four negative samples, so calculations per rounds underestimate the actual NPV. Once individual samples were considered, the NPV grew to an excellent value of almost 99%. Of course, we have to take into account that NPV is greatly affected by prevalence, and that by considering all samples individually, we have altered that prevalence. Still, this high number accompanied by the sensitivity of 95% indicates a good potential of this method for future use in clinical practice.
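The dependence of NPV on prevalence can be made concrete with Bayes' rule. The sketch below is an illustration only: the function name is hypothetical, the specificity of 0.80 is an assumed placeholder rather than a study result, and sensitivity and specificity are held fixed although in the study they differ between the two calculations. The two prevalence values follow from the 62 positive and 22 negative valid rounds reported above (one positive sample per positive round, four samples per round).

```python
def npv_from_prevalence(sensitivity: float, specificity: float,
                        prevalence: float) -> float:
    """Negative predictive value via Bayes' rule.

    NPV = SPE * (1 - prev) / (SPE * (1 - prev) + (1 - SEN) * prev)
    """
    true_negative_mass = specificity * (1.0 - prevalence)
    false_negative_mass = (1.0 - sensitivity) * prevalence
    return true_negative_mass / (true_negative_mass + false_negative_mass)


if __name__ == "__main__":
    SEN = 0.95          # sensitivity reported for the dogs' indications
    SPE_ASSUMED = 0.80  # hypothetical value, for illustration only

    prev_by_rounds = 62 / (62 + 22)         # positive rounds among valid rounds
    prev_by_samples = 62 / ((62 + 22) * 4)  # positive samples among all samples

    print(npv_from_prevalence(SEN, SPE_ASSUMED, prev_by_rounds))
    print(npv_from_prevalence(SEN, SPE_ASSUMED, prev_by_samples))
```

With any fixed sensitivity and specificity, the lower per-sample prevalence yields a higher NPV, which is the effect described in the text.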

The confidence intervals of some parameters (specificity and NPV in evaluation by rounds) are relatively wide. This is caused by the relatively low number of experiments with false negative/positive results in this experimental setup.
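How strongly the interval width depends on the number of experiments can be sketched as follows. The text does not state which method was used to compute the confidence intervals; the Wilson score interval is used here purely as an assumption, and the counts in the example are hypothetical, not study results.

```python
from math import sqrt


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 for ~95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1.0 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half_width = z * sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return center - half_width, center + half_width


if __name__ == "__main__":
    # Same observed proportion, ten times as many experiments (hypothetical counts).
    for successes, n in [(18, 22), (180, 220)]:
        low, high = wilson_interval(successes, n)
        print(f"{successes}/{n}: width = {high - low:.3f}")
```

For the same observed proportion, the interval narrows as the number of experiments grows, which is why parameters derived from the small group of negative rounds carry wide intervals.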

Our results of evaluation based on dogs’ indications are similar to those of other studies. Examples include studies by Ehmann et al., McCulloch et al., Elliker et al., Cornu et al., Kitiyakara et al., and Guerrero Flores et al. (see Table 7) [25,26,27,28,29,30].

The hypothesis that the HR would increase if the dog finds a positive sample was confirmed (Figure 2). However, the assumption that it would increase even when the dog is uncertain about the sample and, hence, the overall sensitivity would improve, was not proven true. In effect, as the use of HR instead of the indication by dogs did not improve the results, and considering the complications associated with the chest strap resulting in a high frequency of invalid experiments, we cannot recommend the use of HR monitoring as a parameter superior to the indications by trained sniffer dogs.