Abstract

Background/Objectives: Breast cancer continues to be one of the primary causes of death among women worldwide, emphasizing the necessity for accurate and efficient diagnostic approaches. This work focuses on developing an automated diagnostic framework based on convolutional neural networks (CNNs) capable of handling multiple imaging modalities. Methods: The proposed CNN model was trained and evaluated on several benchmark datasets, including mammography (DDSM, MIAS, INbreast), ultrasound, magnetic resonance imaging (MRI), and histopathology (BreaKHis). Standardized preprocessing procedures were applied across all datasets, and the outcomes were compared with leading state-of-the-art techniques. Results: The model attained strong classification performance, with accuracy scores of 99.24% (DDSM), 98.97% (MIAS), 99.43% (INbreast), 98.00% (Ultrasound), 98.43% (MRI), and 86.42% (BreaKHis). These findings indicate superior results compared to many existing approaches, confirming the robustness of the method. Conclusions: This study introduces a reliable and scalable diagnostic system that can support radiologists in early breast cancer detection. Its high accuracy, efficiency, and adaptability across different imaging modalities make it a promising tool for integration into clinical practice.

Keywords:

computer-aided diagnosis; deep learning; CNN; imaging modalities; mammography; magnetic resonance imaging; ultrasound; histopathology

Key Contribution:

The proposed work presents an adaptable CNN model capable of effectively processing various imaging modalities. To mitigate overfitting, the model is carefully designed with a minimal number of parameters while still learning discriminative features. Its effectiveness and robustness were comprehensively evaluated across multiple datasets, demonstrating strong generalization performance.

1. Introduction

Breast cancer is the second leading cause of cancer-related mortality worldwide, following lung cancer [1]. Without early detection, it represents a major source of female mortality [2]. Conversely, timely diagnosis significantly improves survival rates, as breast cancer is among the most treatable cancers when detected early [3], reducing both mortality and patient distress [4]. Various imaging modalities, including digital mammography, ultrasound, MRI, and histopathology, are widely used for the early detection and diagnosis of breast cancer [5].

Digital mammography remains the primary screening tool, producing high-resolution images to identify calcifications and masses, yet its performance declines in dense breast tissue. Ultrasound is widely used to distinguish solid from cystic lesions and to guide biopsies, while MRI is particularly valuable for high-risk patients and ambiguous cases. Despite their utility, these modalities suffer from variability in interpretation and intrinsic limits of sensitivity and specificity, often leading to false positives or negatives [6]. These constraints highlight the need for advanced diagnostic approaches to improve accuracy and reliability.

Artificial intelligence (AI) has emerged as a transformative solution in medical imaging, capable of analyzing large datasets such as mammograms [7] and MRIs with performance comparable to or exceeding human experts [8,9]. By detecting subtle patterns beyond human perception [9], AI has proven effective in diverse applications, including skin leishmaniasis [10] and breast cancer detection [11].

However, most computer-aided diagnosis (CAD) systems remain modality-specific, limiting adaptability and requiring multiple frameworks to handle mammography, ultrasound, MRI, or histopathology. To overcome these challenges, we propose a unified convolutional neural network (CNN) framework capable of analyzing multiple modalities within a single model. This modality-agnostic approach leverages shared imaging patterns, streamlining deployment, reducing costs, and improving flexibility, while maintaining robust diagnostic performance across imaging types.

Deep learning, particularly deep convolutional neural networks (DCNNs), has demonstrated remarkable success in medical image analysis by automatically extracting complex features [12], surpassing traditional machine learning methods that rely on manual feature engineering [13].

The objective of this study is to develop a unified, multimodal CNN framework that minimizes overfitting through optimized architecture design, enhances diagnostic accuracy, and eliminates manual feature extraction. By integrating multiple imaging modalities with deep learning, the proposed system aims to advance breast cancer diagnosis, enable earlier detection, and ultimately improve patient outcomes.

2. Related Works

Machine learning (ML) and deep learning (DL) have been extensively applied to breast cancer diagnosis, primarily for binary or multi-class classification, evaluated using metrics such as accuracy, precision, recall, and F1 score. CNN-based CAD systems offer faster, more reliable detection across modalities including ultrasound, MRI, X-ray, and mammography [14], with AI in digital mammography and tomosynthesis matching or exceeding conventional CADe/CADx performance [15]. DL also enables analysis of genetic and histopathological data for early detection, supporting timely diagnosis and improved outcomes [16]. AI-assisted mammography improves detection and reduces radiologist workload despite challenges such as false positives and variable subgroup performance [17], and AI-powered CAD has notably enhanced mammography accuracy, showing strong potential for future breast cancer screening [18].

Mammogram Images: DCNNs with preprocessing and augmentation effectively classify mammograms into benign, malignant, and normal while handling class imbalance [19]. Castro-Tapia et al. [20] used segments from the INbreast and MIAS datasets to analyze and compare breast lesion classification architectures such as AlexNet, GoogleNet, VGG19, and ResNet50. In line with previous studies, they evaluated 14 malignant and benign microcalcification and mass classifiers from prior research. CNNs yielded exceptional results. GoogleNet emerged as the most accurate model in their CAD system for breast cancer diagnosis, achieving an F1 score of 91.92%, an AUC of 99.29%, a precision rate of 92.15%, an accuracy rate of 91.92%, a specificity rate of 97.66%, and a sensitivity rate of 91.70% on a balanced dataset. Chougrad et al. [21] developed a CNN-based breast cancer screening method to enhance mammographic image classification in the DDSM dataset.

Rahman et al. [22] employed pre-trained convolutional neural network (CNN) architectures, specifically ResNet50 and InceptionV3, to classify mammographic lesions as benign or malignant. Because the available data were limited, data augmentation, preprocessing, and transfer learning were applied, with some approaches also incorporating encoder mechanisms. The ResNet50 model achieved an accuracy of 85.7%, while InceptionV3 recorded an accuracy of 79.6%.

Sun et al. [23] integrated features derived from multiple perspectives (MLO and CC) within the convolutional neural network framework. This model introduced a penalty term and utilized features across various scales, resulting in an accuracy of 82.02%. In [24], Jafari et al. extracted features from several pre-trained CNN models to identify breast cancer. The most relevant features were selected using mutual information and classified using neural networks (NN), k-nearest neighbors (kNN), random forests (RF), and support vector machines. This novel approach achieved an accuracy of 92% on the RSNA dataset, 94.5% on MIAS, and 96% on DDSM.

Muduli et al. [25] developed a CNN with five learnable layers (four convolutional and one fully connected) for breast cancer classification. The model automates feature extraction with fewer parameters and was rigorously tested on multiple mammography and ultrasound datasets (MIAS, DDSM, INbreast, BUS-1, BUS-2). It outperformed several methods, achieving 96.55%, 90.68%, and 91.28% accuracy on the MIAS, DDSM, and INbreast datasets, and 100% and 89.73% accuracy on the BUS-1 and BUS-2 datasets.

A hybrid mammography computer-aided diagnosis (CADx) system was developed by Rouhi and Jafari in [26], integrating region-based, contour-based, and clustering segmentation methodologies. The system employs spatial frequency components (SFC), enhanced region growing (RG), or convolutional neural networks (CNN) for preliminary segmentation and utilizes genetic algorithm-artificial neural networks (GA-ANN) or genetic algorithm-multiple artificial neural networks (GA-MA-ANN) for the optimization of level set parameters. Neoplasms were categorized using classifiers, including artificial neural networks (ANN), random forests, and support vector machines (SVM), achieving high levels of sensitivity, specificity, accuracy, and area under the curve (AUC) across various datasets (MIAS, DDSM, INbreast). The multilayer perceptron (MLP) classifier achieved accuracies of 90.94%, 88.61%, and 89.23% for the aforementioned datasets, respectively.

A novel feature extraction technique based on the Dual Contourlet Transform (Dual-CT) was introduced by Dong et al. in [27] for breast cancer diagnosis. In conjunction with an enhanced k-nearest neighbor (kNN) classifier, the methodology involved extracting regions of interest (ROI) from the MIAS database, followed by decomposition using Dual-CT, contourlet, and wavelet transforms, and extracting texture features. This approach achieved classification accuracies of 94.14% and 95.76%, surpassing conventional techniques. The enhanced kNN classifier demonstrated accuracies of 95.76%, 86.54%, and 89.30% for the MIAS, DDSM, and INbreast datasets, respectively.

Aguerchi et al. [11] conducted a study that employed transfer learning methodologies through the application of pre-trained convolutional neural network (CNN) architectures, specifically VGG16, ResNet50, and InceptionV3, to classify mammography images as either benign or malignant. The models underwent fine-tuning on the Digital Database for Screening Mammography (DDSM) dataset, utilizing pre-trained weights derived from ImageNet. Among the architectures evaluated, ResNet50 demonstrated superior performance, achieving an accuracy of 88%, precision of 85%, recall of 90%, and a ROC AUC of 0.92, outpacing both VGG16 and InceptionV3 in all comprehensive metrics. These findings highlight the effectiveness of transfer learning in enhancing breast cancer detection by improving diagnostic accuracy and reliability.

A sophisticated deep ensemble transfer learning model, combined with a neural network classifier, was proposed by Arora et al. in [28] for the automated extraction of features and classification of mammographic images. This approach involved the pre-processing of images, the extraction of robust features using the ensemble model, and the optimization of these features into a cohesive vector for classification. The neural network classifier successfully distinguished between benign and malignant tumors, achieving an accuracy of 88% and an AUC of 0.88, thereby demonstrating the promising capabilities of this robust computer-aided diagnosis (CADx) system for breast cancer classification.

Aguerchi et al. [7] developed a highly accurate convolutional neural network (CNN) model for breast cancer detection through mammographic imaging. The proposed methodology is based on the Particle Swarm Optimization (PSO) algorithm, which helps identify optimal hyperparameters and structural configurations for the CNN model. The CNN model utilizing PSO achieved impressive success rates of 98.23% and 97.98% on the DDSM and MIAS datasets, respectively.

Histopathological images: Mansour [29] introduced a computer-assisted system for breast cancer detection, employing an adaptive learning-based Gaussian Mixture Model (GMM) alongside feature extraction using AlexNet-DNN, complemented by principal component analysis (PCA) and linear discriminant analysis (LDA). The proposed approach achieved a performance score of 96.70% for the AlexNet-FC7 model using the BreaKHis dataset.

Agarwal et al. [30] evaluated the efficacy of CNNs in conjunction with four widely recognized CNN-based architectures (VGG16, VGG19, MobileNet, and ResNet50) for the classification of breast cancer using histopathological images from the BreaKHis dataset. Among the evaluated classifiers, VGG16 exhibited superior performance, achieving an accuracy of 94.67%, precision of 92.60%, F1-score of 85.21%, and recall of 80.52%. These results substantiate its effectiveness in distinguishing malignant from non-malignant tumors.

Spanhol et al. [31] published a dataset containing 7,909 breast cancer histology images, classified into benign and malignant categories. The primary objective of this dataset is to facilitate the automatic classification of these images into two groups, providing medical professionals with a useful computer-aided diagnosis tool. Their analysis achieved an accuracy of 80% to 85%, indicating room for improvement. The researchers employed machine learning algorithms such as KNN, SVM, quadratic discriminant analysis, and random forest for feature analysis.

Zhou et al. [4] utilized a three-dimensional DCNN to detect and localize breast cancer in dynamic contrast-enhanced MRI datasets. Despite being a relatively under-trained model, the 3D-CNN achieved an accuracy of 83.7%, showcasing the potential of CNNs for MRI-based breast cancer detection.

Yurttakal et al. [32] developed a multilayer convolutional neural network (CNN) that used pixel information and online data augmentation to classify lesions in MRI images as malignant or benign. Their model achieved an accuracy of 98.33%, which underscores the potential of pixel-based feature extraction and data augmentation to improve model efficacy.

In the field of ultrasound imaging, Ragab et al. [33] aimed to identify and classify breast cancer using an innovative ensemble deep learning-based clinical decision support system. To accurately identify tumor-affected regions, the researchers developed an optimal multilevel thresholding technique for image segmentation. In addition, they established a feature extraction ensemble comprising three distinct deep learning models, combined with an effective machine learning classifier for breast cancer diagnosis.

Eroğlu [34] designed a hybrid CNN system that utilizes ultrasonography images for breast cancer diagnosis. This system extracts features from AlexNet, MobileNetV2, and ResNet50, concatenates them, and applies the mRMR (minimum Redundancy Maximum Relevance) feature selection method to identify the most significant features. The classification was performed using SVM and k-NN classifiers, leading to an accuracy rate of 95.6%.

One study [35] utilized two breast ultrasound datasets from different platforms, with breast ultrasound images as the primary dataset. The BUSI dataset contains 780 images, including 133 normal, 210 malignant, and 437 benign specimens. Another dataset (referred to as dataset B) includes 163 images comprising 110 normal and 53 malignant specimens. To improve the dataset, generative adversarial networks (GANs) were used to augment the data. The study explored the classification of breast ultrasound images using deep learning (DL), comparing CNN-AlexNet and transfer learning approaches, both with and without data augmentation. Over 60 training epochs with a learning rate of 0.0001, their model achieved an accuracy of 94% for the BUSI dataset, 92% for dataset B, and 99% when data augmentation was applied.

3. Material and Methods

3.1. Datasets Used

Deep learning models in breast cancer research depend on diverse and high-quality datasets. In this study, we utilized publicly available breast cancer datasets from multiple imaging modalities. For mammography, we used the MIAS, DDSM, and INbreast datasets. For ultrasound, we used a public breast ultrasound dataset. For magnetic resonance imaging (MRI), we used a publicly available breast MRI dataset. Lastly, for histopathology images, we employed the BreaKHis dataset. Our proposed method was tested on each of these imaging modalities to evaluate its capacity to distinguish benign from malignant findings.

DDSM: The Digital Database for Screening Mammography (DDSM) [36] is a crucial resource for breast cancer research. It includes mammography images from screening programs, classified as benign, malignant, and normal. Patient metadata, including age, breast density, and pathology findings, enhances the dataset for developing and validating breast cancer identification algorithms. The richness and high resolution of this dataset make it a reliable baseline for testing diagnostic models in clinical settings.

MIAS: The Mammographic Image Analysis Society (MIAS) [36] database is a publicly available dataset of mammographic images with detailed annotations. MIAS is highly useful for automated breast cancer detection as it contains well-defined annotations and includes both normal and abnormal cases, facilitating the development of algorithms for early breast cancer detection.

INbreast: The high-resolution, full-field digital mammography database INbreast [36] contains annotated mammograms of various types. Benign or malignant abnormalities are classified according to craniocaudal (CC) and mediolateral oblique (MLO) views. The extensive annotations in this collection help to locate and characterize lesions. Due to its high image quality and substantial metadata, INbreast is widely used for machine learning research in breast cancer diagnosis.

Breast Ultrasound Images Dataset: [35] Available on Kaggle, this dataset contains 971 breast ultrasound images originally categorized into three classes: normal, benign, and malignant, with corresponding labels (0, 1, and 2). For the purposes of this study, the “normal” images were excluded, and only benign and malignant images were used for classification. The dataset thus contains two classes (benign = 0, malignant = 1), as reflected in all tables and analyses in the manuscript. This preprocessing ensures a focused evaluation of the model for distinguishing between benign and malignant findings.

MRI Datasets: Magnetic Resonance Imaging (MRI) [37] datasets provide complex 3D breast tissue models essential for breast cancer research. Contrast-enhanced MRI images are more effective than standard mammography at detecting small abnormalities in dense breast tissues. These datasets are critical for lesion characterization, tumor detection, and staging investigations, enhancing the diagnostic precision of machine learning models in complex clinical scenarios.

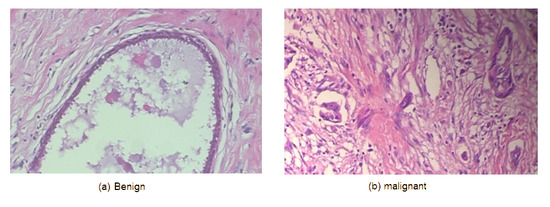

BreaKHis: The Breast Cancer Histopathological Database (BreaKHis) [38] contains 7909 microscopic breast tissue images from 82 individuals. The images, captured at magnifications of 40×, 100×, 200×, and 400×, are classified as benign or malignant. This dataset is essential for histopathological image processing research, supporting the development of cellular-level breast cancer subtype classification algorithms. The focus on pathology enhances the diagnostic accuracy and robustness of machine learning models.

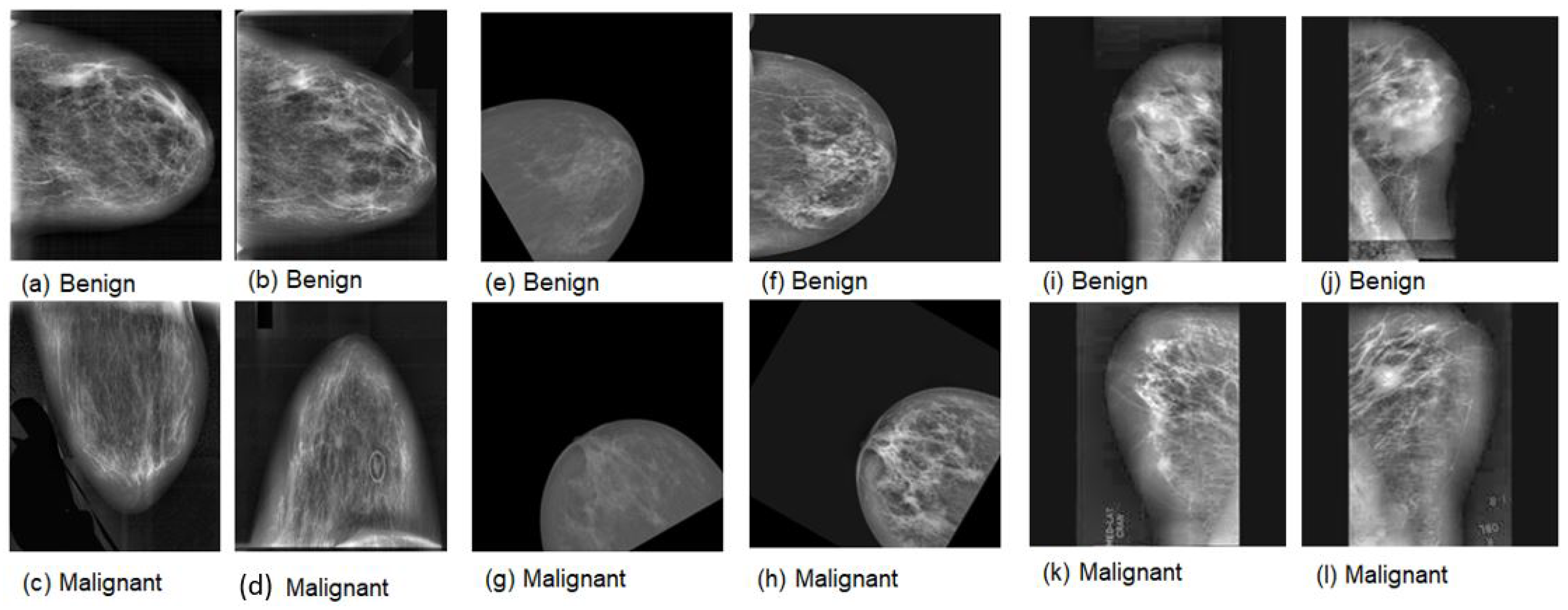

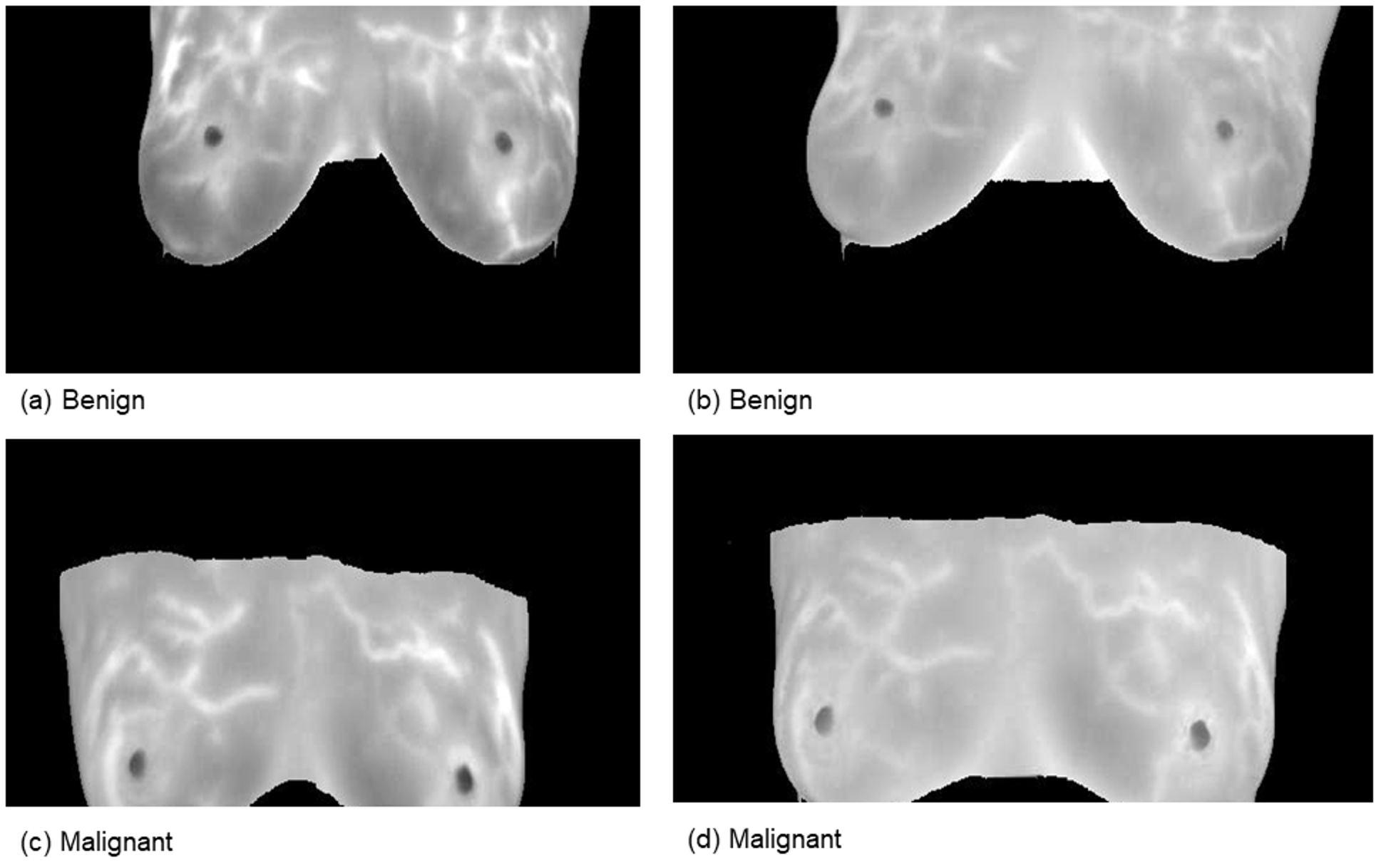

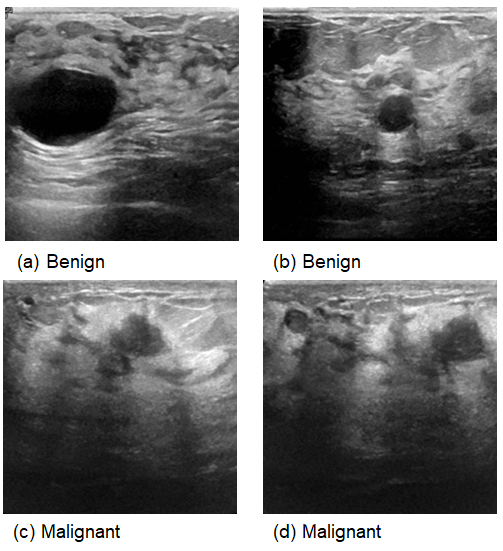

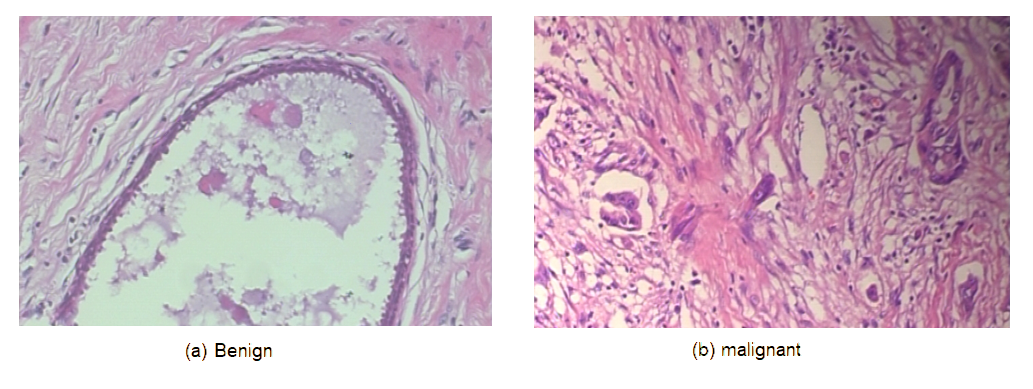

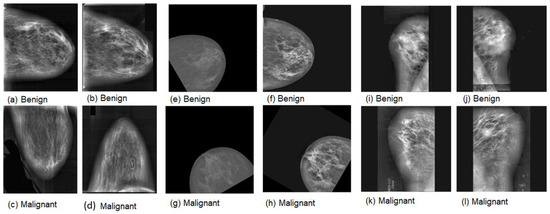

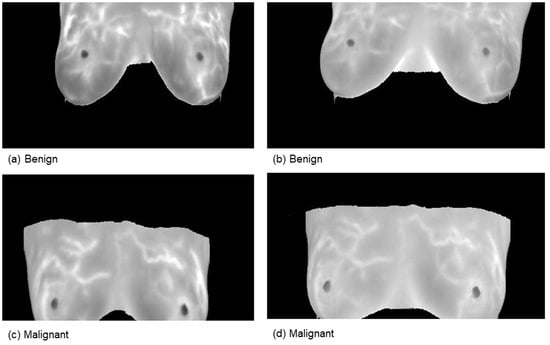

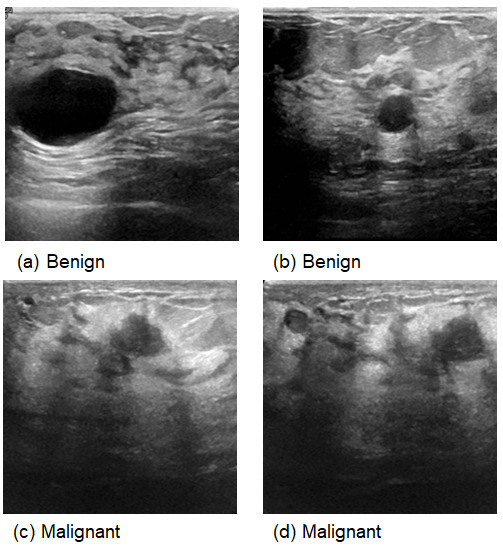

Using datasets from multiple imaging modalities (mammography, ultrasound, MRI, and histopathology), shown in Figures 1–4 and summarized in Table 1, ensures robust model validation and enhances the system’s capacity to accurately classify benign and malignant findings. By leveraging these diverse datasets, we aim to develop a more generalizable and effective breast cancer classification system.

Figure 1.

Samples of mammography images. (a–d) DDSM; (e–h) INbreast; (i–l) MIAS.

Figure 2.

Samples from the magnetic resonance imaging (MRI) dataset.

Figure 3.

Samples from Ultrasound images.

Figure 4.

Samples from the BreaKHis dataset.

Table 1.

Specification of breast cancer datasets (benign versus malignant).

3.2. Proposed Methodology

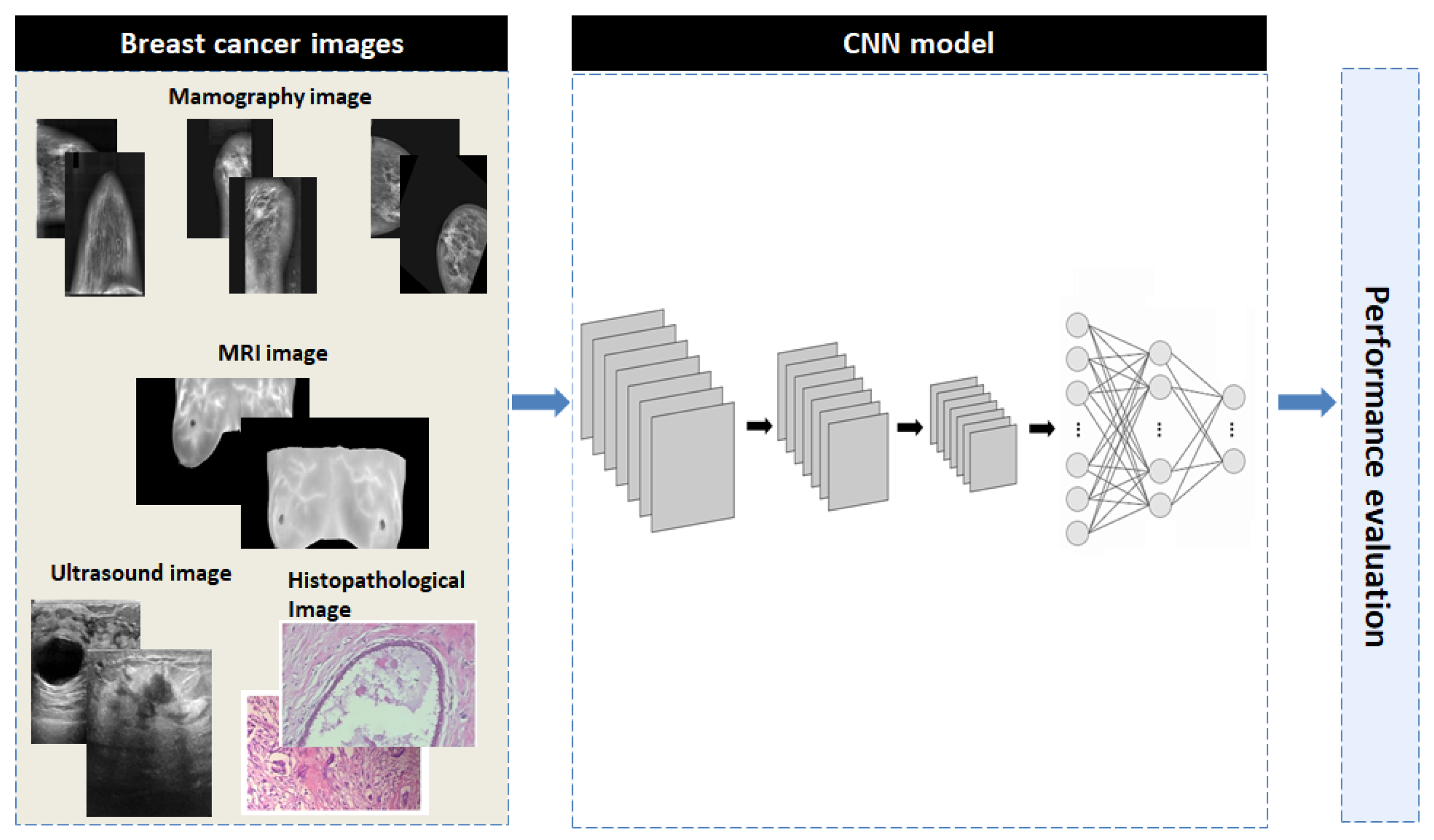

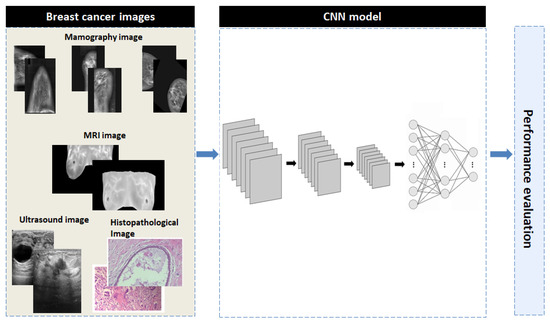

The proposed methodology consists of three phases: image preprocessing, training of a convolutional neural network (CNN) model on multiple imaging datasets, and performance evaluation. Figure 5 provides a concise overview of the proposed methodology.

Figure 5.

The overall block diagram of the proposed CNN model.

- Preprocessing: All images were resized to 128 × 128 pixels and normalized by dividing pixel values by 255.

- Data Augmentation: Random rotations, horizontal flips, and zoom were applied during training.

- Dataset Splits: 5-fold cross-validation was used for all datasets with fixed random seeds ensuring reproducibility.
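The resizing, normalization, and flip steps listed above can be sketched in a few lines. This is a minimal, dependency-free illustration rather than the pipeline used in the study: the nearest-neighbour interpolation, the helper names (`resize_nearest`, `normalize`, `random_horizontal_flip`, `preprocess`), the flip probability, and the toy input are all assumptions, and rotation, zoom, and CLAHE are omitted for brevity.

```python
import random

def resize_nearest(image, size=128):
    """Resize a 2-D grayscale image (list of rows) using nearest-neighbour sampling."""
    src_h, src_w = len(image), len(image[0])
    return [
        [image[r * src_h // size][c * src_w // size] for c in range(size)]
        for r in range(size)
    ]

def normalize(image):
    """Scale 8-bit pixel values to [0, 1] by dividing by 255."""
    return [[px / 255.0 for px in row] for row in image]

def random_horizontal_flip(image, rng, p=0.5):
    """Mirror the image left-to-right with probability p (training only)."""
    if rng.random() < p:
        return [row[::-1] for row in image]
    return image

def preprocess(image, rng=None):
    """Resize and normalize; apply the random flip only when an rng is supplied."""
    out = normalize(resize_nearest(image))
    if rng is not None:
        out = random_horizontal_flip(out, rng)
    return out

# Hypothetical 200 x 256 grayscale input with values in 0..255.
rng = random.Random(42)  # fixed seed for reproducibility
toy = [[(r * 4 + c) % 256 for c in range(256)] for r in range(200)]
processed = preprocess(toy, rng)
print(len(processed), len(processed[0]))
```

Passing `rng=None` disables augmentation, which is the usual choice for validation and test folds.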

3.2.1. Image Preprocessing

In this study, we prepare the data for deep learning models using image preprocessing techniques. Contrast Limited Adaptive Histogram Equalization (CLAHE) was applied to enhance image contrast, highlighting critical features such as masses and calcifications. To ensure consistency for the neural network, all images were resized to 128 × 128 pixels. Since mammography scans are monochromatic, the images were adjusted to facilitate the data collection process. These preprocessing techniques improve the model’s ability to detect and classify breast cancer.

3.2.2. Convolutional Neural Networks

Convolutional Neural Networks (CNNs) have emerged as a highly effective deep learning architecture for medical image processing, including breast cancer prediction and diagnosis. CNNs can successfully analyze imaging modalities such as mammograms, ultrasound, MRI scans, and histopathology, which are crucial for the early detection of breast cancer.

CNNs improve predictive accuracy by integrating multiple imaging datasets, offering a powerful tool for comprehensive breast cancer investigation. This deep learning-based approach not only enhances diagnostic performance but also facilitates personalized treatment planning, significantly impacting clinical decision-making and patient outcomes.

3.3. Proposed CNN Model

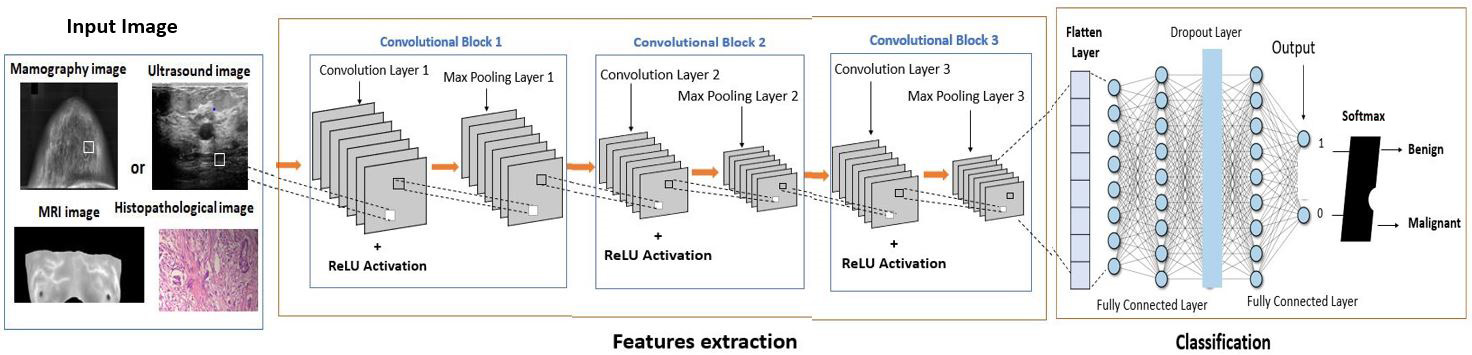

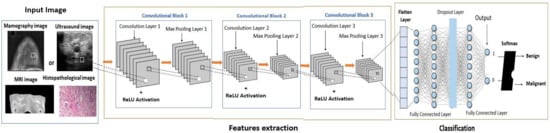

The proposed CNN architecture consists of three convolutional layers and a single fully connected layer, chosen to balance model complexity, computational efficiency, and classification performance. Convolutional and max-pooling layers extract hierarchical features, capturing progressively more complex patterns. The fully connected layer integrates high-level features, and the final activation function performs binary classification between benign and malignant cases. This streamlined design minimizes parameters and computational load while maintaining robust feature extraction and accurate classification, addressing both efficiency and performance considerations.

3.3.1. Architecture of Proposed CNN Model

The proposed CNN architecture (shown in Figure 6) consists of three convolutional layers, max-pooling layers, and a fully connected binary classification layer. The model begins with a Conv2D layer containing 32 filters, a kernel size of (3, 3), and ReLU activation, designed to process grayscale input. Subsequent convolutional layers extract more complex features with 64 and 128 filters, while max-pooling layers reduce spatial dimensions and computational costs. After flattening, a dense layer with 128 neurons and ReLU activation encodes high-level representations, followed by a dropout layer with a rate of 0.5 to prevent overfitting. Finally, a single output neuron with sigmoid activation classifies the input as either benign or malignant. This architectural framework prioritizes simplicity and efficiency, enabling rapid learning with high accuracy and minimal computational load.
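To make the layer dimensions concrete, the following sketch traces feature-map shapes and trainable parameter counts for a network of this form. Several details are not stated in the text and are assumed here: a 128 × 128 × 1 input, unpadded ("valid") stride-1 convolutions, and a 2 × 2 max-pooling step after each convolution. The function names are illustrative only, and the resulting numbers hold only under these assumptions.

```python
def conv_out(size, kernel=3):
    """Spatial size after an unpadded ('valid'), stride-1 convolution."""
    return size - kernel + 1

def conv_params(in_ch, out_ch, kernel=3):
    """Kernel weights plus one bias per filter."""
    return (kernel * kernel * in_ch + 1) * out_ch

def summarize(input_size=128, in_ch=1, filters=(32, 64, 128), dense_units=128):
    """Return (layer name, output shape, parameter count) rows and the total."""
    size, ch, total, rows = input_size, in_ch, 0, []
    for f in filters:
        size = conv_out(size)
        params = conv_params(ch, f)
        rows.append((f"conv3x3-{f}", (size, size, f), params))
        total += params
        size //= 2  # assumed 2x2 max-pooling with stride 2
        rows.append(("maxpool2x2", (size, size, f), 0))
        ch = f
    flat = size * size * ch
    params = (flat + 1) * dense_units  # dense weights + biases
    rows.append((f"dense-{dense_units}", (dense_units,), params))
    total += params
    params = dense_units + 1  # single sigmoid output neuron
    rows.append(("sigmoid-1", (1,), params))
    total += params
    return rows, total

rows, total = summarize()
for name, shape, params in rows:
    print(f"{name:12s} {str(shape):16s} {params:>9,d}")
print("total trainable parameters:", f"{total:,d}")
```

Under these assumptions, the flattened 14 × 14 × 128 feature map feeding the 128-unit dense layer accounts for most of the parameters, so the pooling configuration largely determines model size. Dropout adds no trainable parameters.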

Figure 6.

The complete architecture of the proposed CNN model.

3.3.2. Learning Method

The proposed convolutional neural network (CNN) is trained with the Adam optimizer, which adapts the learning rate per parameter, and a binary cross-entropy loss suited to the two-class task. The model was trained for 80 epochs with a batch size of 32, using a data generator to increase variability and improve generalization. A detailed training history was recorded for each epoch to monitor performance and detect potential overfitting. The model’s classification performance was evaluated using four key metrics: accuracy, precision, recall, and F1 score. The metrics are defined as follows:

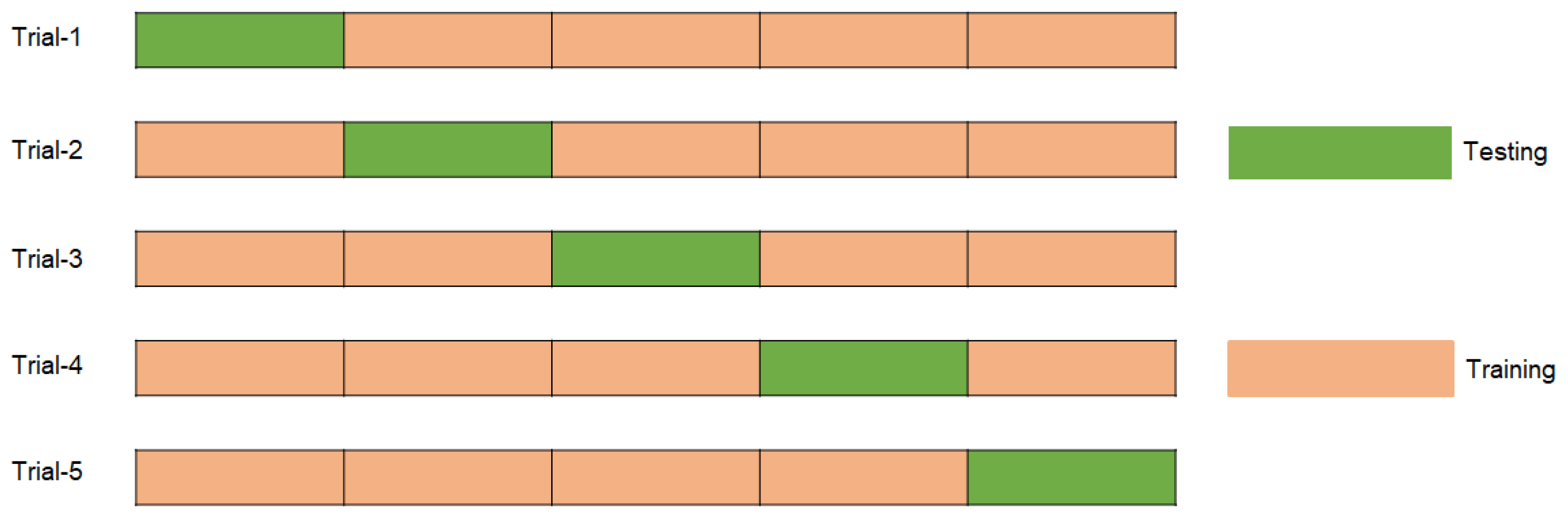

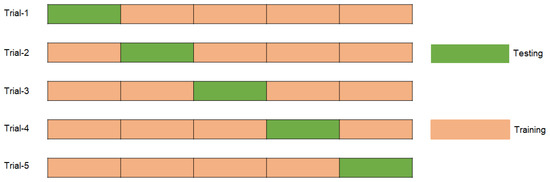

FP, FN, TN, and TP represent false positives, false negatives, true negatives, and true positives, respectively. Together, these metrics give a comprehensive picture of the model’s classification performance on both classes. The model was evaluated using stratified 5-fold cross-validation (CV), where the dataset was divided into five subsets while maintaining the original class distribution of benign and malignant cases. In each iteration, one subset was reserved for testing, and the remaining subsets were used for training. To further improve robustness and mitigate potential effects of class imbalance, the entire CV process was repeated three times, ensuring consistent and balanced evaluation across all folds. Figure 7 illustrates the sample distribution of the 5-fold cross-validation for each iteration.
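As a small worked illustration of the four metrics, the sketch below computes them from confusion-matrix counts using their standard definitions. The function name and the example counts are hypothetical and are not taken from the study’s results.

```python
def classification_metrics(tp, fp, tn, fn):
    """Compute accuracy, precision, recall, and F1 from confusion-matrix counts."""
    accuracy = (tp + tn) / (tp + fp + tn + fn)
    # Guard against empty denominators when a class is never predicted or present.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)
    else:
        f1 = 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

# Hypothetical counts: 90 malignant cases caught, 10 missed, 5 false alarms.
metrics = classification_metrics(tp=90, fp=5, tn=95, fn=10)
for name, value in metrics.items():
    print(f"{name}: {100 * value:.2f}%")
```

In this example, recall (90.00%) is lower than precision (94.74%) because missed malignant cases (FN) outnumber false alarms (FP).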

Figure 7.

Distribution of samples for each trial by using 5-fold cross validation.
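The repeated stratified 5-fold scheme can be sketched as follows. This is a plain-Python illustration under assumed details (round-robin assignment within each class, a different seed per repetition, and a toy label list); the function names are hypothetical. In practice, an off-the-shelf implementation such as scikit-learn’s `RepeatedStratifiedKFold` could be used instead.

```python
import random
from collections import defaultdict

def stratified_kfold(labels, k=5, seed=0):
    """Yield (train_idx, test_idx) pairs that preserve class proportions."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, y in enumerate(labels):
        by_class[y].append(idx)
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        # Deal each class's samples round-robin so every fold gets its share.
        for pos, idx in enumerate(indices):
            folds[pos % k].append(idx)
    for i in range(k):
        test = sorted(folds[i])
        train = sorted(idx for j, fold in enumerate(folds) if j != i for idx in fold)
        yield train, test

def repeated_stratified_kfold(labels, k=5, repeats=3, base_seed=42):
    """Repeat the k-fold split with a different fixed seed per repetition."""
    for r in range(repeats):
        for fold, (train, test) in enumerate(stratified_kfold(labels, k, base_seed + r)):
            yield r, fold, train, test

# Toy labels: 60 benign (0) and 40 malignant (1) samples.
labels = [0] * 60 + [1] * 40
splits = list(repeated_stratified_kfold(labels))
print(len(splits), "train/test splits")
```

Each of the 15 splits holds out 20 samples with the same 60/40 class ratio as the full toy set.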

The proposed CNN strikes a balance between performance and efficiency by using three convolutional layers for feature extraction and a single dense layer to limit model complexity and overfitting. ReLU activation, a 0.5 dropout rate, and L2 regularization with a coefficient of 0.0001 are incorporated to enhance learning and generalization. The model is trained with the Adam optimizer at a learning rate of 0.0001, a batch size of 32, and 80 epochs. Binary cross-entropy is paired with a sigmoid output so that the network produces a probability for the malignant class. Model performance is evaluated using accuracy, precision, recall, and F1 score, while 5-fold cross-validation enhances reliability and generalizability. These methodological choices result in a robust and efficient medical imaging model.
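The loss terms named above can be illustrated numerically. The sketch below evaluates a sigmoid output, the binary cross-entropy averaged over a batch, and an L2 penalty of the assumed form λ·Σw² with λ = 0.0001. The function names, the clipping constant `eps`, and the example logits and weights are assumptions made for illustration, not values from the study.

```python
import math

def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def binary_cross_entropy(y_true, y_prob, eps=1e-7):
    """Mean of -[y*log(p) + (1-y)*log(1-p)], with p clipped away from 0 and 1."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1.0 - eps)
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total / len(y_true)

def l2_penalty(weights, lam=1e-4):
    """Assumed L2 term: lam times the sum of squared weights."""
    return lam * sum(w * w for w in weights)

# Hypothetical logits for a batch of four images (1 = malignant, 0 = benign).
y_true = [1, 0, 1, 0]
logits = [2.0, -1.5, 0.3, -3.0]
probs = [sigmoid(z) for z in logits]
loss = binary_cross_entropy(y_true, probs) + l2_penalty([0.5, -0.2, 0.1])
print(f"regularized loss: {loss:.4f}")
```

A confident correct prediction (for example, a logit of -3.0 for a benign image) contributes little to the loss, whereas an uncertain one (a logit of 0.3 for a malignant image) contributes much more.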

4. Experiments and Results

4.1. Experimental Setting

The experiments were conducted on Kaggle Notebooks with dual NVIDIA Tesla T4 GPUs (16 GB each), 32 GB RAM, and 100 GB storage, providing enhanced performance for large-scale deep learning tasks. The models were implemented in Python 3.10 within this flexible and scalable environment.

4.2. Results and Analysis

4.2.1. Results of Proposed Method for Mammography Datasets

Results of Proposed Method for MIAS Dataset

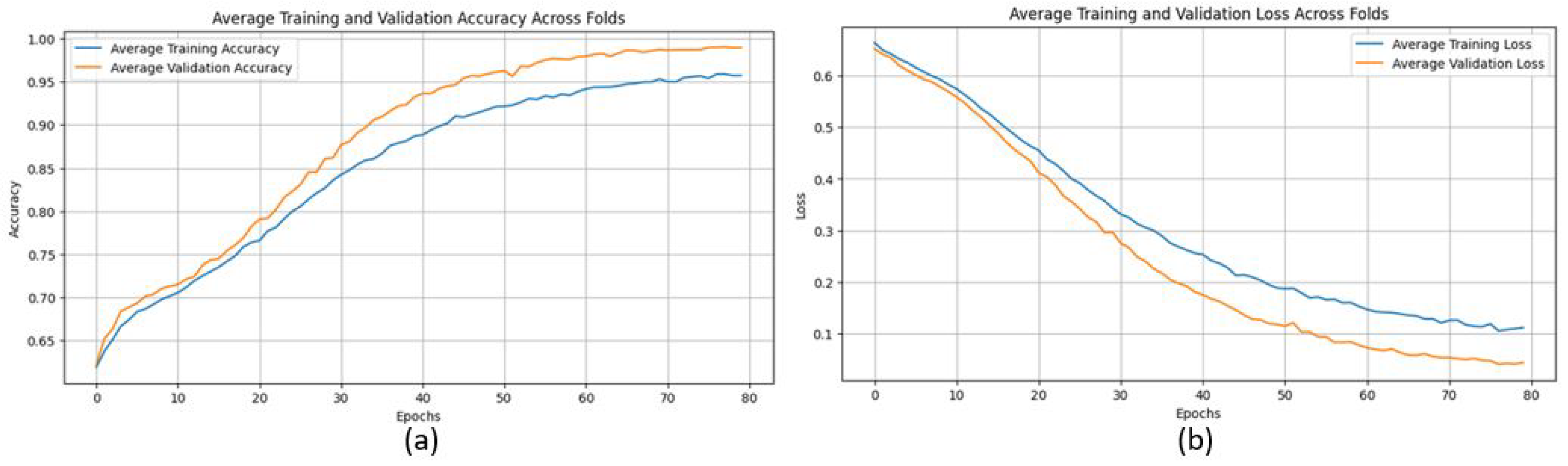

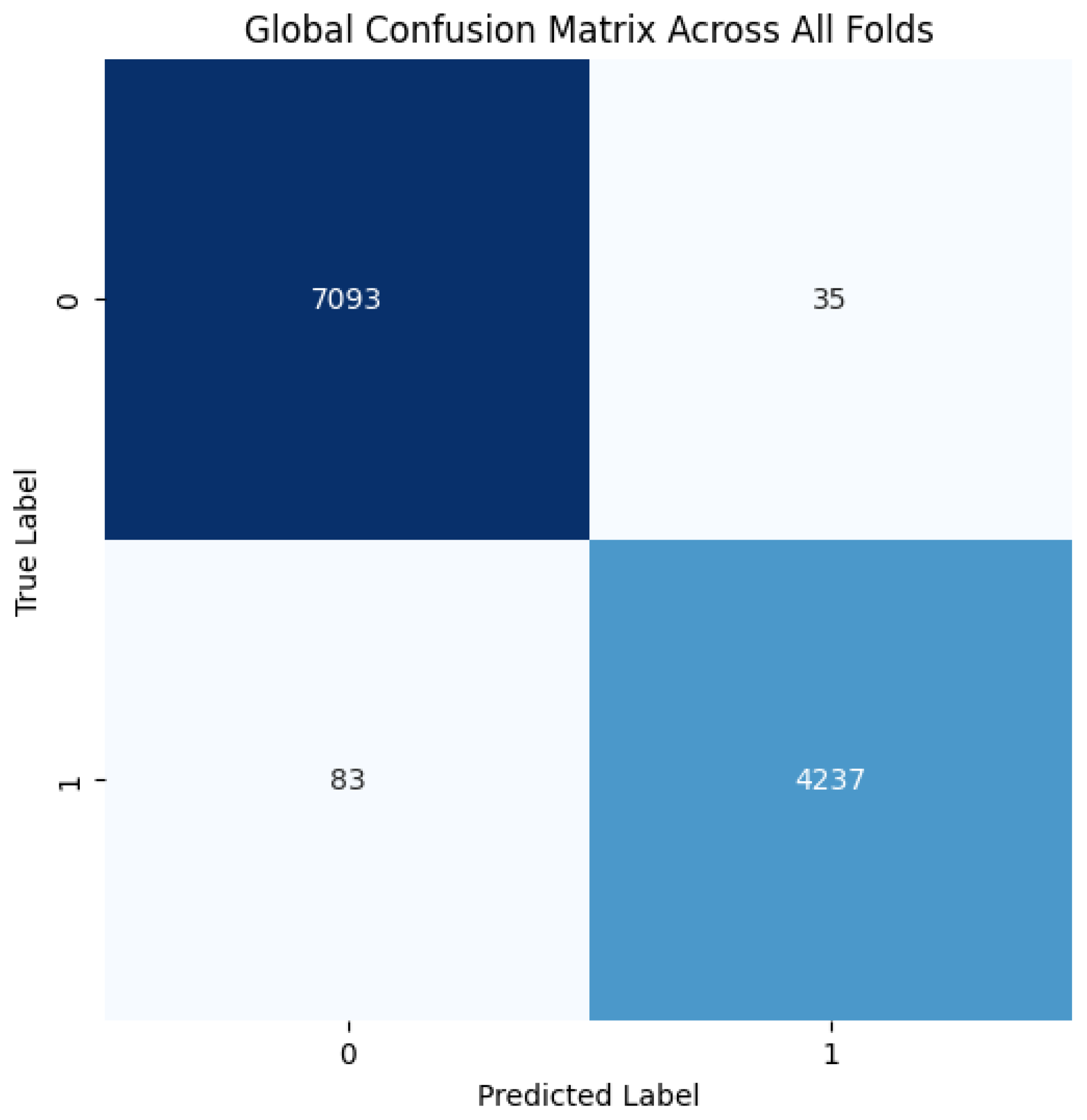

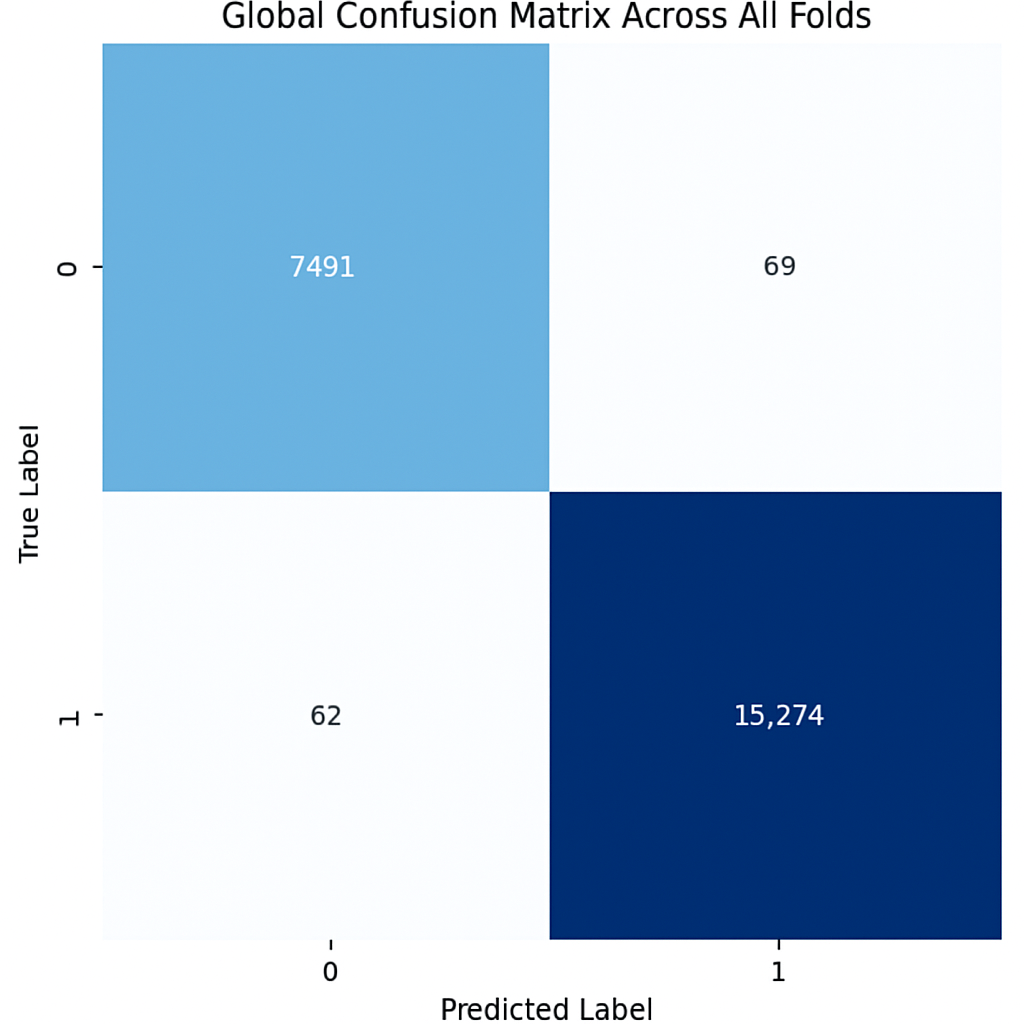

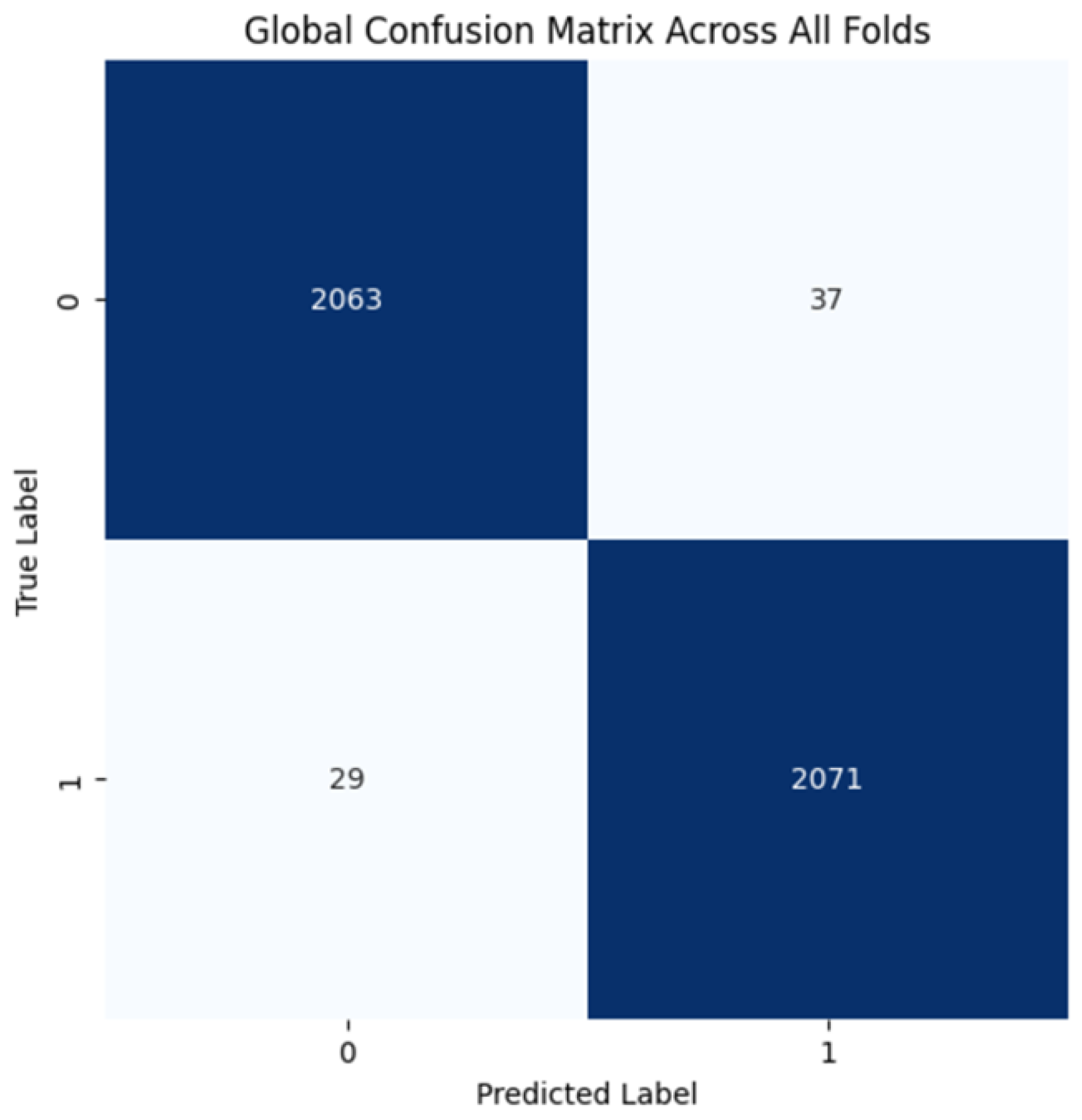

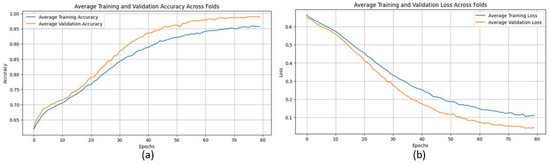

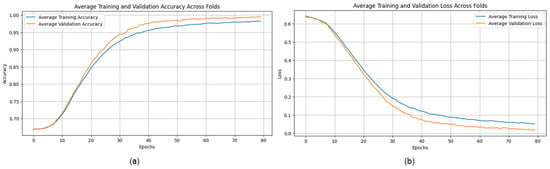

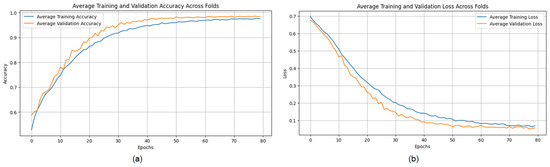

The proposed CNN model yielded impressive results on the MIAS dataset, achieving an average accuracy of 98.97%, precision of 99.20%, recall of 98.11%, and an F1 score of 98.64% across three runs using 5-fold cross-validation. The model effectively classifies mammographic images, with consistent precision and recall metrics, underscoring its reliability in identifying both positive and negative instances. The low variability across folds indicates the model’s stability and generalization. Small fluctuations in metrics, such as a slight decrease in recall in some folds, suggest potential areas for optimization to enhance sensitivity. The detailed performance metrics are summarized in Table 2. Training curves showing the average accuracy and loss across folds are illustrated in Figure 8, while the confusion matrix aggregated across all folds is presented in Figure 9. Overall, these results highlight the model’s strong potential for breast cancer classification and diagnostics.

Table 2.

Accuracy, Precision, Recall, and F1 Score (%) of the proposed CNN model for the MIAS dataset, using 5-fold cross-validation repeated three times.

Figure 8.

(a) Average Training accuracy Across Folds of the proposed CNN model versus number of epochs with MIAS dataset; (b) Average Training Loss Across Folds of the proposed CNN model versus number of epochs with MIAS dataset.

Figure 9.

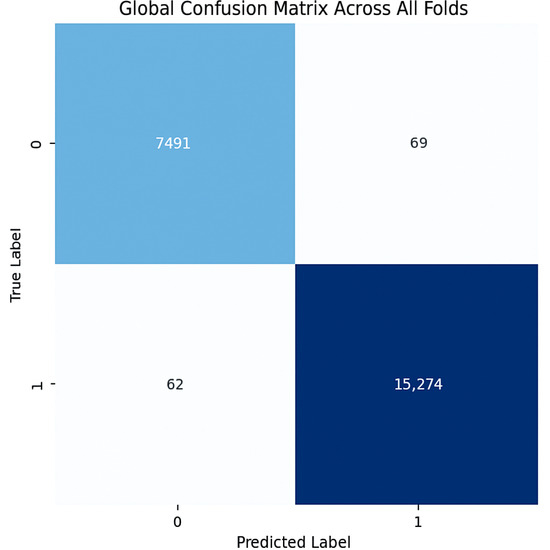

Confusion matrix across all folds for MIAS dataset.

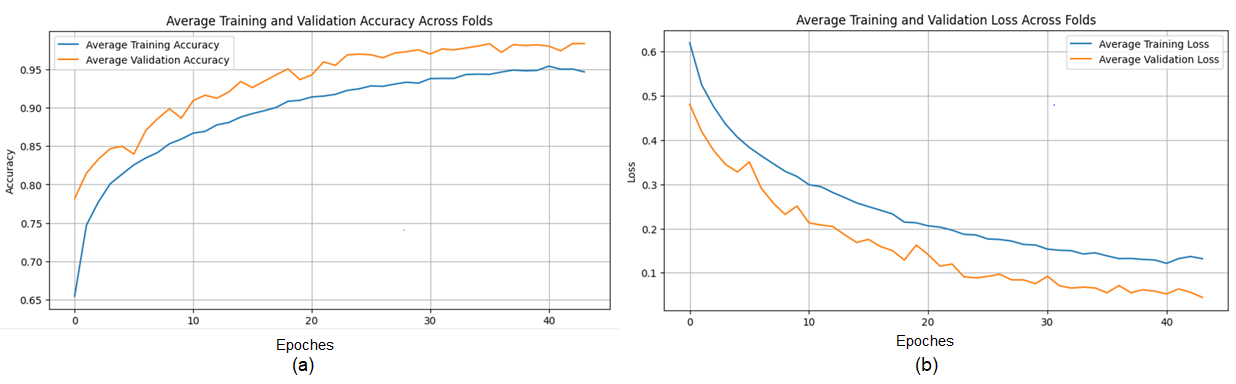

Results of the Proposed Method for DDSM Dataset

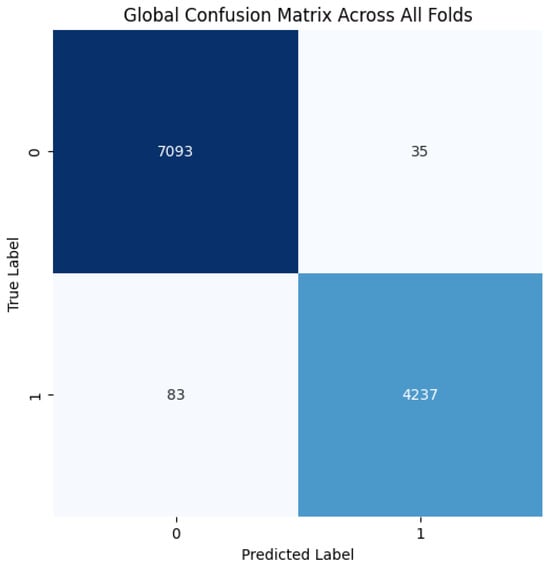

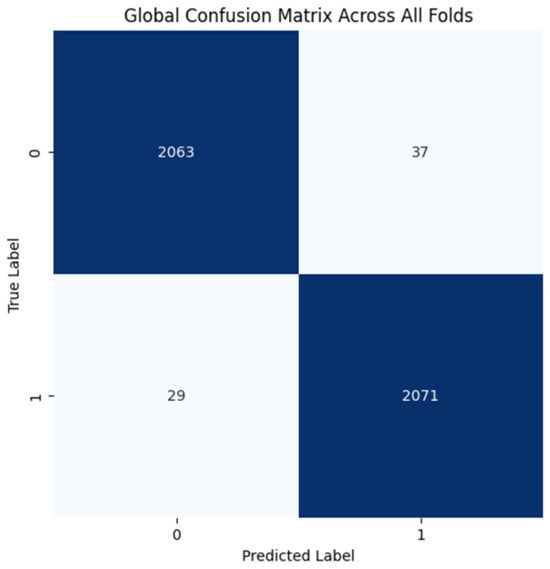

The proposed CNN model demonstrates strong robustness and performance on the DDSM dataset, achieving an overall average accuracy of 99.24%, precision of 99.20%, recall of 99.43%, and an F1 score of 99.31% across three runs using 5-fold cross-validation. These results highlight the model’s ability to effectively classify mammographic images, with consistent precision and recall metrics, reflecting its reliability in identifying both benign and malignant instances. The minimal variation across folds underscores the model’s stability and generalization capabilities. Slight discrepancies in recall across individual folds suggest potential areas for optimization, particularly to enhance sensitivity. The detailed performance metrics are summarized in Table 3. Training curves showing the average accuracy and loss across folds are illustrated in Figure 10, while the confusion matrix aggregated across all folds is presented in Figure 11. Overall, these findings affirm the model’s strong potential for breast cancer classification and emphasize its diagnostic value.

Table 3.

Accuracy, Precision, Recall, and F1 Score (%) of the proposed CNN model for the DDSM dataset, using 5-fold cross-validation repeated three times.

Figure 10.

(a) Average Training accuracy Across Folds of the proposed CNN model versus number of epochs with DDSM dataset; (b) Average Training Loss Across Folds of the proposed CNN model versus number of epochs with DDSM dataset.

Figure 11.

Confusion matrix across all folds for DDSM dataset.

Results of Proposed Method for INbreast Dataset

The evaluation of the proposed CNN model on the INbreast dataset highlights its effectiveness and outstanding performance, achieving an average accuracy of 99.43%, precision of 99.55%, recall of 99.60%, and an F1 score of 99.57% across three runs using 5-fold cross-validation. These results emphasize the model’s strong ability to accurately classify breast cancer images, with consistent precision and recall metrics confirming its reliability in identifying both malignant and benign instances. The minimal performance variation across the different folds further highlights the model’s stability and generalization capability. Small fluctuations in metrics, such as a slight decrease in recall in certain folds, suggest potential areas for improvement to boost sensitivity. The detailed performance metrics are summarized in Table 4. Training curves showing the average accuracy and loss across folds are illustrated in Figure 12, while the confusion matrix aggregated across all folds is presented in Figure 13. Overall, these results validate the model’s efficacy in breast cancer classification and enhance its potential as a reliable diagnostic tool.

Table 4.

Accuracy, Precision, Recall, and F1 Score (%) of the proposed CNN model for the INbreast dataset, using 5-fold cross-validation repeated three times.

Figure 12.

(a) Average Training accuracy Across Folds of the proposed CNN model versus number of epochs with INbreast dataset; (b) Average Training Loss Across Folds of the proposed CNN model versus number of epochs with INbreast dataset.

Figure 13.

Confusion matrix across all folds for INbreast dataset.

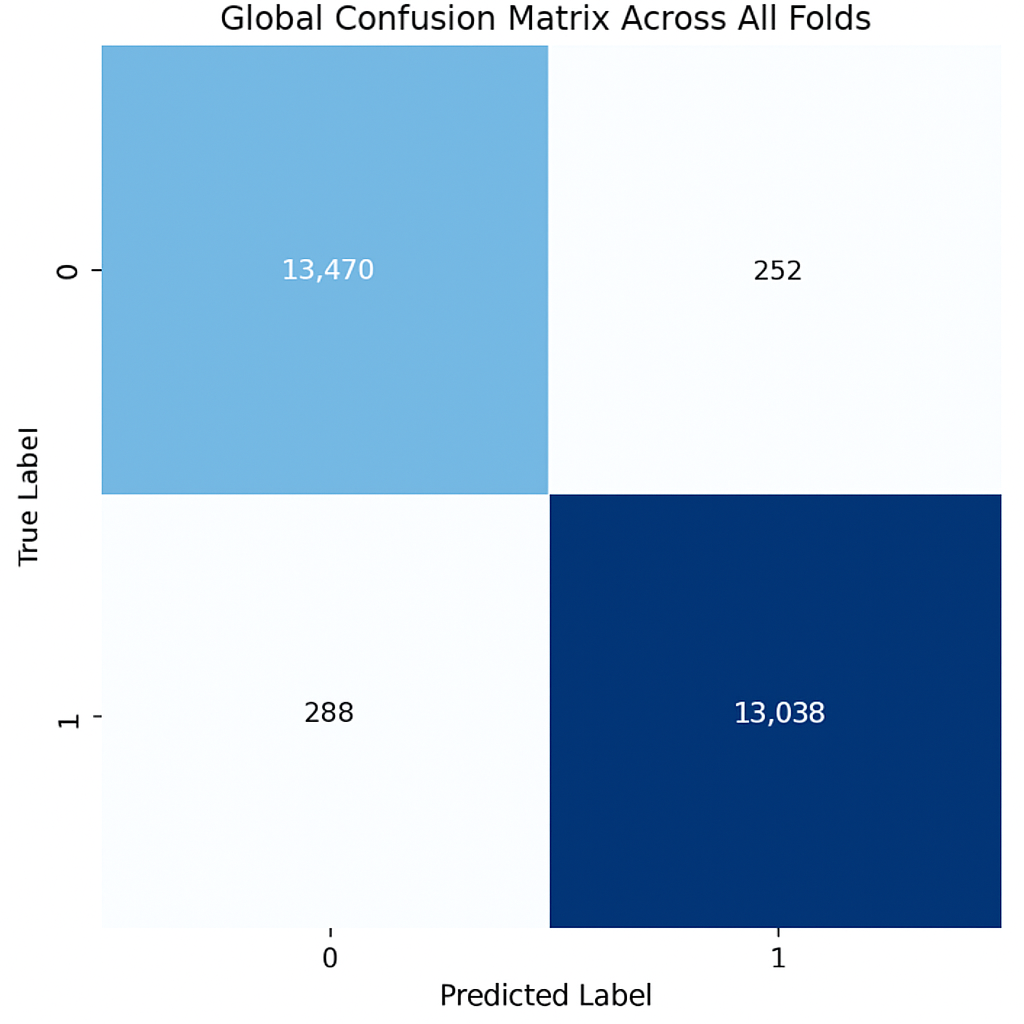

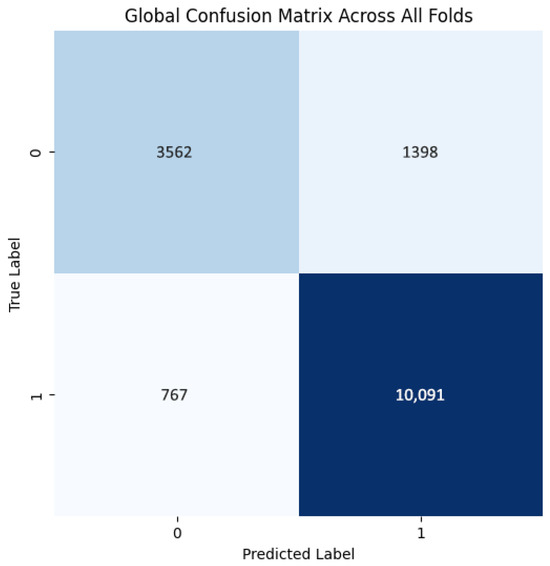

4.2.2. Results of Proposed Method for Ultrasound Datasets

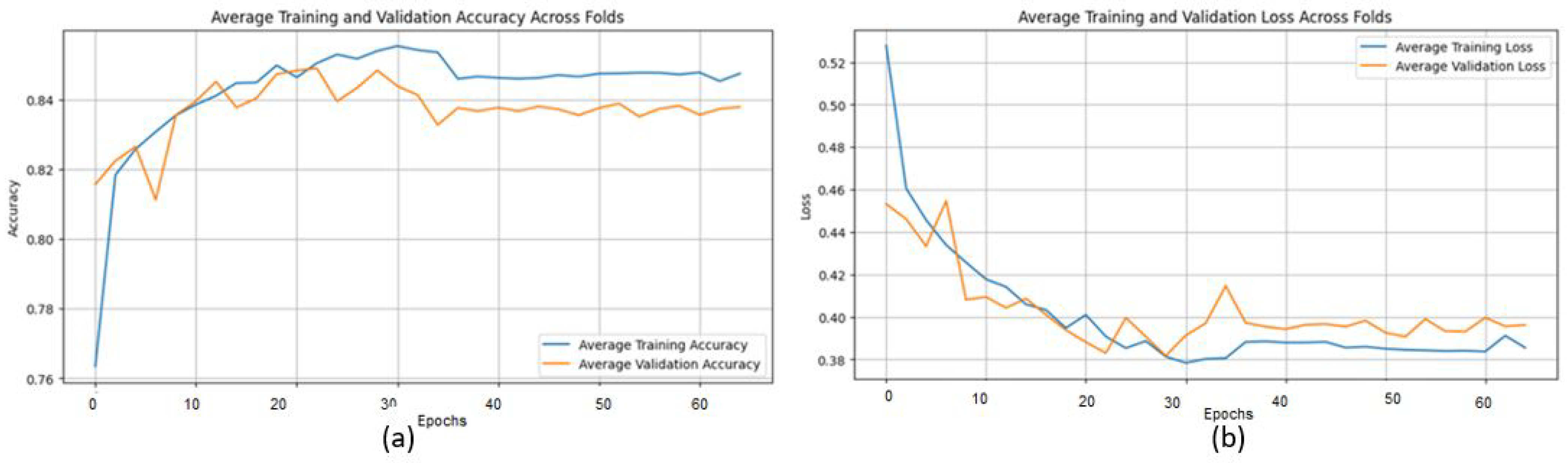

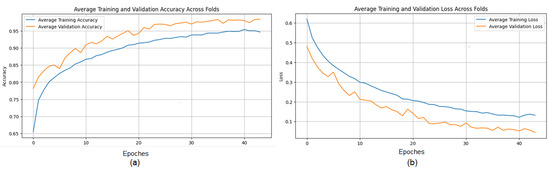

The proposed CNN model performs well on the Ultrasound dataset, achieving an average accuracy of 98.00%, precision of 98.11%, recall of 97.84%, and an F1 score of 97.97% across three runs of 5-fold cross-validation (Table 5). Precision and recall are closely matched, showing that the model distinguishes benign from malignant cases reliably, and the limited variation across folds indicates stable training and good generalization. However, slight differences in recall in certain folds suggest the need for further tuning to enhance sensitivity. Overall, the results support the model’s potential for breast cancer classification on ultrasound images.

Table 5.

Accuracy, Precision, Recall, and F1 Score (%) of the proposed CNN model for the Ultrasound dataset, using 5-fold cross-validation repeated three times.

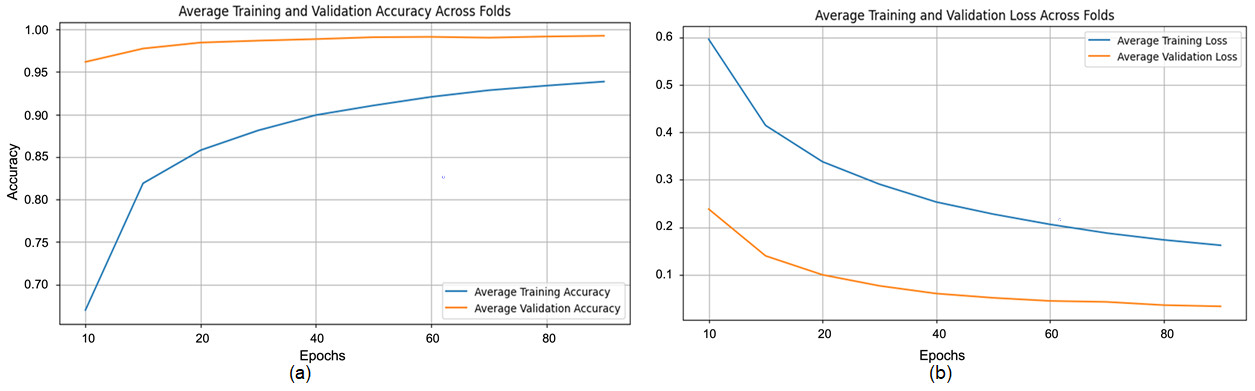

The training process shows consistent convergence across folds, as illustrated by the average training accuracy and loss curves (Figure 14). The confusion matrix confirms the model’s effectiveness in correctly classifying most cases, with only a small number of misclassifications observed (Figure 15).
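The averaged training curves referred to above can be obtained by taking the per-epoch mean over folds. The snippet below is a small sketch under the assumption that every fold is trained for the same number of epochs; the accuracy histories are hypothetical and the function name `average_curves` is introduced only for illustration.

```python
def average_curves(per_fold_curves):
    """Average per-epoch values across folds; all folds must share the same epoch count."""
    n_epochs = len(per_fold_curves[0])
    if any(len(curve) != n_epochs for curve in per_fold_curves):
        raise ValueError("all folds must be trained for the same number of epochs")
    return [sum(values) / len(values) for values in zip(*per_fold_curves)]


# Hypothetical training-accuracy histories for three folds over four epochs.
histories = [
    [0.70, 0.85, 0.93, 0.97],
    [0.72, 0.86, 0.94, 0.98],
    [0.68, 0.84, 0.92, 0.96],
]
mean_curve = average_curves(histories)
print([round(value, 2) for value in mean_curve])
```

The same function applies unchanged to loss histories; plotting `mean_curve` against the epoch index gives curves of the kind shown in the figures.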

Figure 14.

(a) Average training accuracy across folds of the proposed CNN model versus number of epochs on the Ultrasound dataset; (b) average training loss across folds of the proposed CNN model versus number of epochs on the Ultrasound dataset.

Figure 15.

Confusion matrix across all folds for Ultrasound dataset.

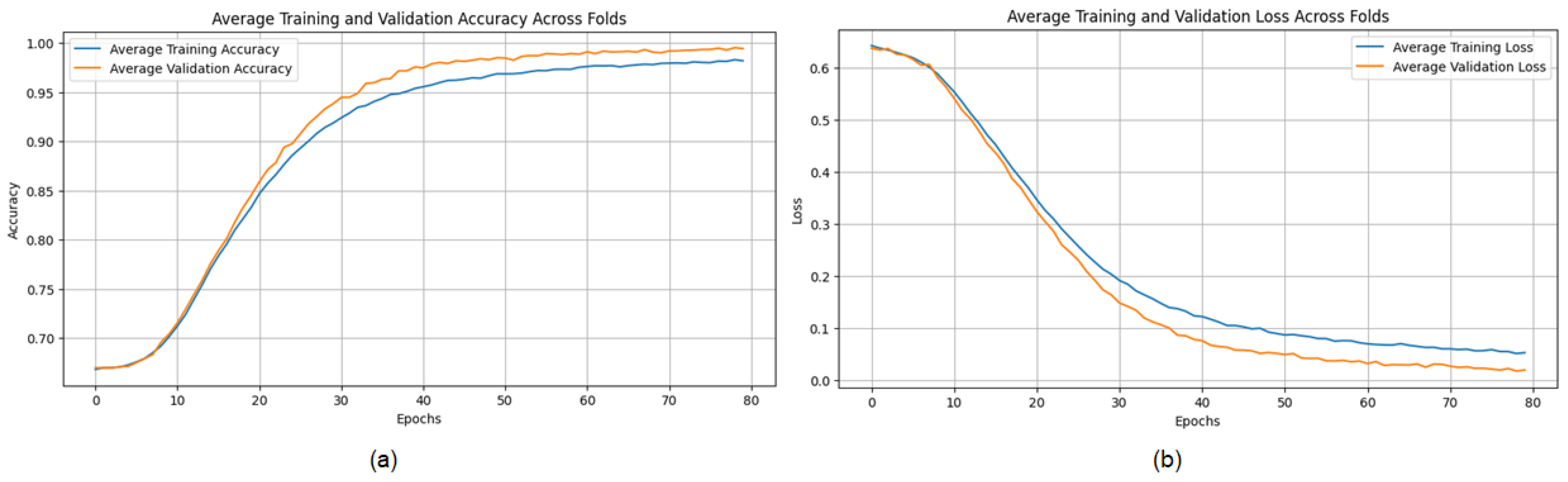

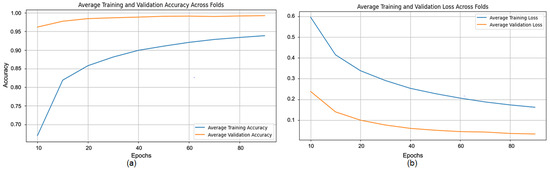

4.2.3. Results of the Proposed Method on Magnetic Resonance Imaging (MRI) Datasets

On the MRI dataset, the proposed CNN achieves an average accuracy of 98.43%, precision of 98.27%, recall of 98.63%, and an F1 score of 98.45% across three runs of 5-fold cross-validation (Table 6). Precision and recall are closely matched, indicating that the model differentiates benign from malignant cases reliably, and the minimal variability across folds points to stable training. However, slight differences in recall across specific folds suggest opportunities to further enhance sensitivity. Overall, the results support the model’s capability for breast cancer classification on MRI and its potential as a reliable diagnostic tool.

Table 6.

Accuracy, Precision, Recall, and F1 Score (%) of the proposed CNN model for the MRI dataset, using 5-fold cross-validation repeated three times.

The training process shows consistent convergence across folds, as indicated by the average training accuracy and loss curves (Figure 16). The confusion matrix further demonstrates the model’s ability to correctly classify most cases, with minimal misclassifications observed across folds (Figure 17).

Figure 16.

(a) Average training accuracy across folds of the proposed CNN model versus number of epochs on the MRI dataset; (b) average training loss across folds of the proposed CNN model versus number of epochs on the MRI dataset.

Figure 17.

Confusion matrix across all folds for MRI dataset.

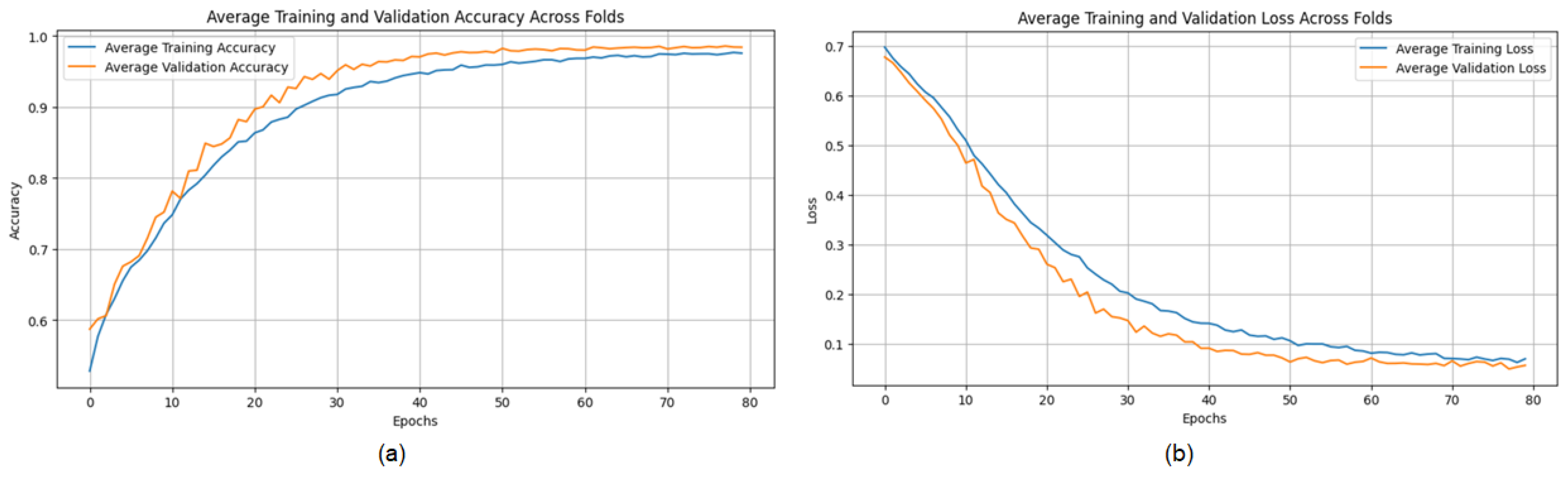

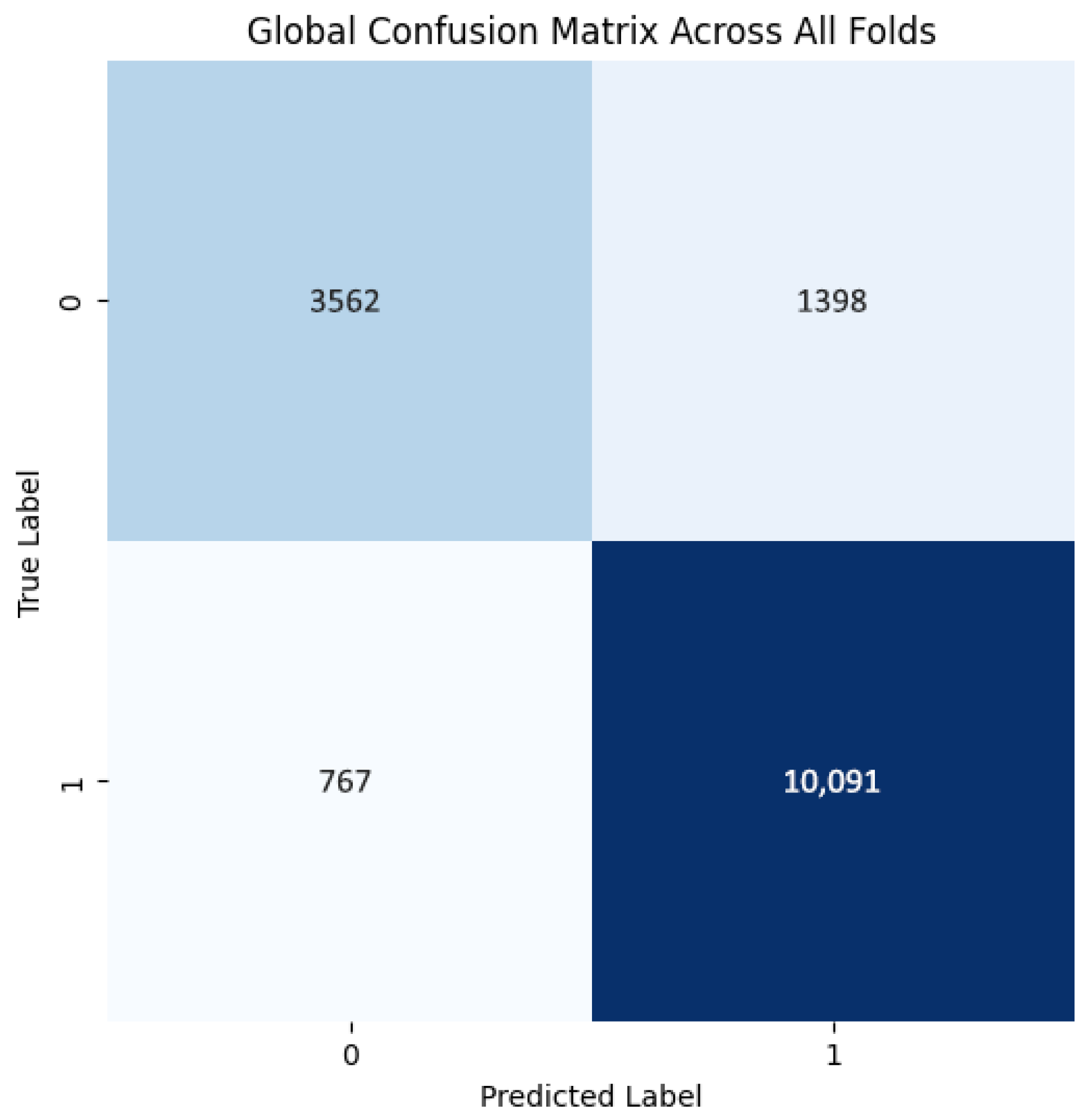

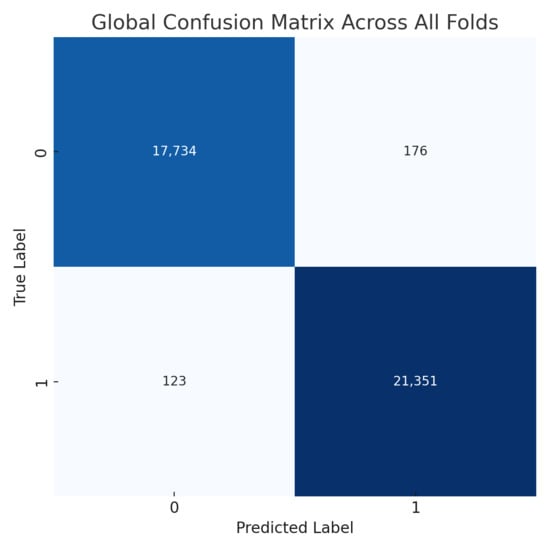

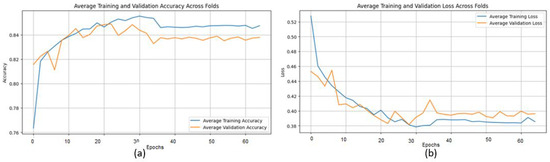

4.2.4. Results of the Proposed Method on Histopathological Datasets

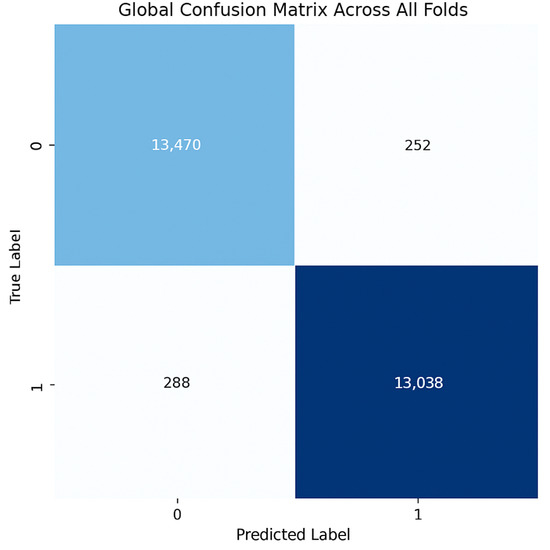

On the BreaKHis dataset, the model performs consistently across all folds, achieving an average accuracy of 0.863, precision of 0.879, recall of 0.929, and an F1 score of 0.903 (Table 7). Recall is higher than precision, meaning that the model detects most positive cases at the cost of somewhat more false positives, a trade-off that is generally acceptable in medical diagnostics. Slight variations in accuracy across folds and minor changes in precision (e.g., 0.868 in Fold 1, Run 1) suggest opportunities to further reduce false positives and improve consistency. Overall, the model performs solidly on this dataset, with room for fine-tuning to improve precision and uniformity.
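As a quick consistency check, the F1 score can be recomputed from the reported average precision $P$ and recall $R$. This assumes the standard harmonic-mean definition; because each metric is averaged over folds, the recomputed value need only agree approximately with the reported one:

$$F_1 = \frac{2PR}{P + R} = \frac{2 \times 0.879 \times 0.929}{0.879 + 0.929} = \frac{1.633}{1.808} \approx 0.903$$

The result matches the reported F1 score of 0.903.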

Table 7.

Accuracy, Precision, Recall, and F1 Score (%) of the proposed CNN model for the BreaKHis dataset, using 5-fold cross-validation repeated three times.

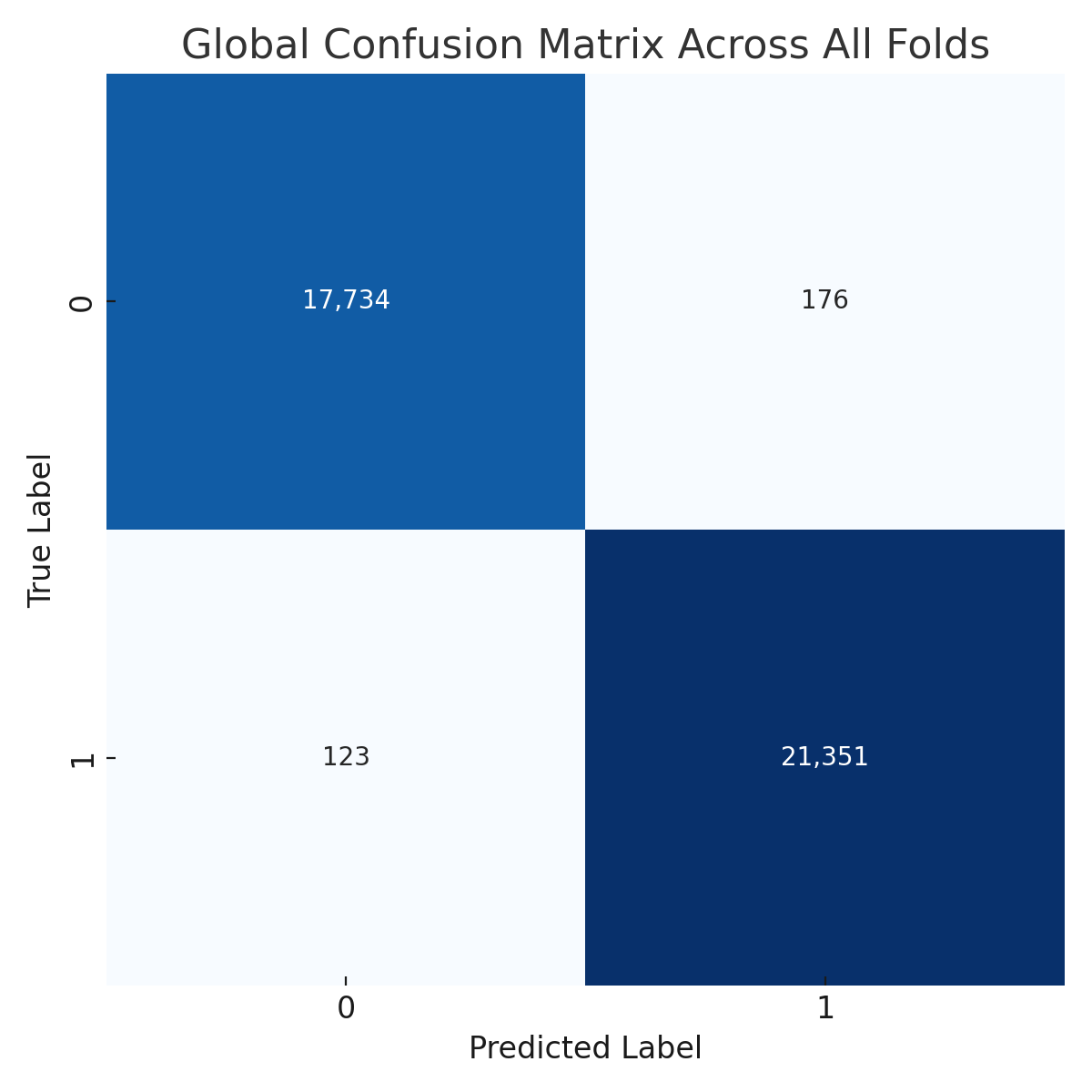

The training curves show stable convergence across folds, as illustrated by the average training accuracy and loss (Figure 18). The confusion matrix further confirms the model’s ability to classify cases correctly, with only a few misclassifications observed across folds (Figure 19). These figures complement the metrics reported in Table 7.

Figure 18.

(a) Average training accuracy across folds of the proposed CNN model versus number of epochs on the BreaKHis dataset; (b) average training loss across folds of the proposed CNN model versus number of epochs on the BreaKHis dataset.

Figure 19.

Confusion matrix across all folds for the BreaKHis histopathological dataset.

4.3. Comparative Analysis with Existing CAD Models

4.3.1. Comparative Analysis with Existing CAD Models on Mammography Datasets

Table 8 shows that the proposed CNN model outperforms existing methods in classification accuracy on the DDSM, MIAS, and INbreast datasets. The model achieves 99.2% accuracy on DDSM, 98.97% on MIAS, and 99.43% on INbreast. In comparison, Chougrad et al.’s ensemble, which includes VGG16, ResNet50, and Inception v3, achieved accuracies of 97.35% on DDSM and 98.23% on MIAS [21]. Similarly, Muduli et al.’s DeepCNN reached 90.68% on DDSM, 96.55% on MIAS, and 91.28% on INbreast [25], while Rouhi and Jafari’s MLP recorded 88.61% on DDSM, 90.94% on MIAS, and 89.23% on INbreast [26]. Jafari et al.’s feature-selection technique achieved 96% accuracy on DDSM and 94.5% on MIAS [24], and Rahman et al. reported accuracies of 85.7% for ResNet50 and 79.6% for Inception v3 on DDSM [22]. Lastly, Dong et al.’s enhanced kNN achieved 86.54% on DDSM, 95.76% on MIAS, and 89.30% on INbreast [27]. These comparisons indicate that the proposed CNN model is robust across mammography datasets and improves on existing computer-aided diagnosis (CAD) systems for breast cancer, which could help increase early detection accuracy in clinical environments.
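The size of each improvement can be read off directly from the accuracies quoted above. The short sketch below tabulates the margin, in percentage points, between the proposed model and a few of the cited methods; the dictionary layout and the name `margins` are illustrative choices, and only figures stated in the paragraph are used.

```python
# Accuracies (%) as quoted in the text; None marks a dataset a study did not report.
reported = {
    "Chougrad et al. [21]": {"DDSM": 97.35, "MIAS": 98.23, "INbreast": None},
    "Muduli et al. [25]": {"DDSM": 90.68, "MIAS": 96.55, "INbreast": 91.28},
    "Rouhi and Jafari [26]": {"DDSM": 88.61, "MIAS": 90.94, "INbreast": 89.23},
    "Dong et al. [27]": {"DDSM": 86.54, "MIAS": 95.76, "INbreast": 89.30},
}
proposed = {"DDSM": 99.2, "MIAS": 98.97, "INbreast": 99.43}

# Margin of the proposed model over each method, in percentage points.
margins = {
    method: {
        dataset: round(proposed[dataset] - accuracy, 2)
        for dataset, accuracy in scores.items()
        if accuracy is not None
    }
    for method, scores in reported.items()
}

for method, per_dataset in margins.items():
    print(method, per_dataset)
```

The margins range from under one point (MIAS, against Chougrad et al.) to more than ten points (DDSM, against Rouhi and Jafari and Dong et al.), so the size of the gain depends strongly on the baseline and dataset.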

Table 8.

Comparative performance analysis of existing CAD models utilizing mammography datasets.

4.3.2. Comparative Analysis with Existing CAD Models on Ultrasound Datasets

The results presented in Table 9 show that the proposed CNN model achieves an accuracy of 98.00% on the Breast Ultrasound Dataset, outperforming Ragab et al.’s ensemble of SqueezeNet, VGG-16, and VGG-19 models optimized through Cat Swarm Optimization and Multilayer Perceptron, which achieved 97.09% [33], as well as the hybrid CNN-based system of Eroğlu et al., which reached 95.6% [34]. These findings show that the proposed CNN balances simplicity and accuracy, surpassing more complex ensemble and hybrid approaches. Its higher accuracy reflects its ability to extract meaningful features from ultrasound images, making it a promising solution for breast cancer screening. However, as the evaluation was conducted on a single dataset, further validation across a wider range of datasets is recommended to assess its generalizability and robustness.

Table 9.

Comparative performance analysis of existing CAD models using Ultrasound Datasets.

4.3.3. Comparative Analysis with Existing CAD Models on Magnetic Resonance Imaging (MRI) Datasets

As shown in Table 10, the proposed CNN model achieves an accuracy of 98.43% on the MRI dataset, outperforming Zhou et al.’s 3D deep CNN, which achieved 83.7% [4], and slightly surpassing Yurttakal et al.’s multilayer CNN, which reached 98.33% [32]. The modest improvement over Yurttakal et al.’s method suggests that the proposed approach extracts features from MRI data effectively, while the much larger margin over Zhou et al.’s 3D deep CNN suggests that essential imaging features can be captured without the added complexity of 3D structures. These results underscore the potential of the proposed CNN as a reliable tool for MRI data interpretation in clinical settings. However, additional validation on different MRI datasets is necessary to confirm the model’s generalizability and robustness.

Table 10.

Comparative performance analysis of existing CAD models using MRI Datasets.

4.3.4. Comparative Analysis with Existing CAD Models on Histopathological Datasets

On the BreaKHis dataset, classification performance varies notably across methods, as shown in Table 11. Mansour [29] reported the highest accuracy, 96.70%, with an AlexNet-based model, demonstrating its effectiveness for breast cancer categorization. Using CNN-based models (VGG16, VGG19, MobileNet, and ResNet 50), Agarwal et al. [30] reached an accuracy of 94.67%, which is effective but does not surpass the AlexNet-based result. Spanhol et al. [31] achieved 85% accuracy using classical classifiers (KNN, SVM, RF), indicating that deep learning methods generally outperform traditional image classification techniques. Our CNN-based model achieved 86.42% accuracy, comparable to Spanhol et al. [31]; while effective, it lags behind the top-performing deep learning models. Modifying the CNN architecture or exploring hybrid approaches may reduce this performance gap and further enhance accuracy.

Table 11.

Comparative performance analysis of existing CAD models using Histopathological Datasets.

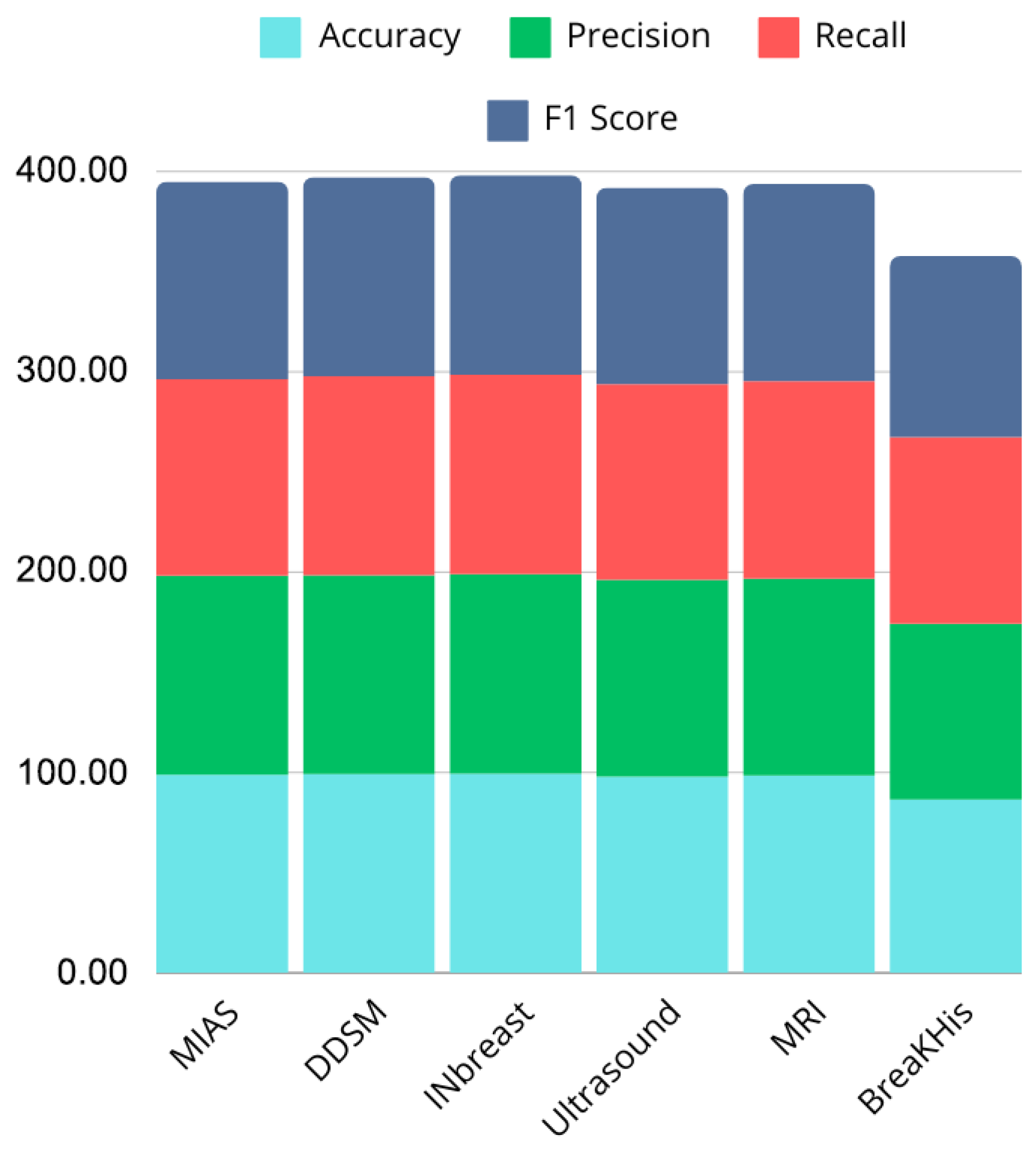

4.4. General Discussion

The proposed CNN model demonstrates strong performance across multiple imaging modalities, achieving accuracy rates of 99.2% on DDSM, 98.97% on MIAS, 99.43% on INbreast, 98.00% on ultrasound, and 98.43% on MRI. In contrast, performance on the BreaKHis histopathology dataset was lower, with an accuracy of 86.42%, indicating potential limitations when dealing with higher intra-class variability or complex tissue structures. While the mammography results surpass several state-of-the-art approaches, such as those reported by Chougrad et al. [21] and Muduli et al. [25], the BreaKHis result falls short of that reported by Mansour [29], which highlights the challenges of generalizing across heterogeneous datasets.

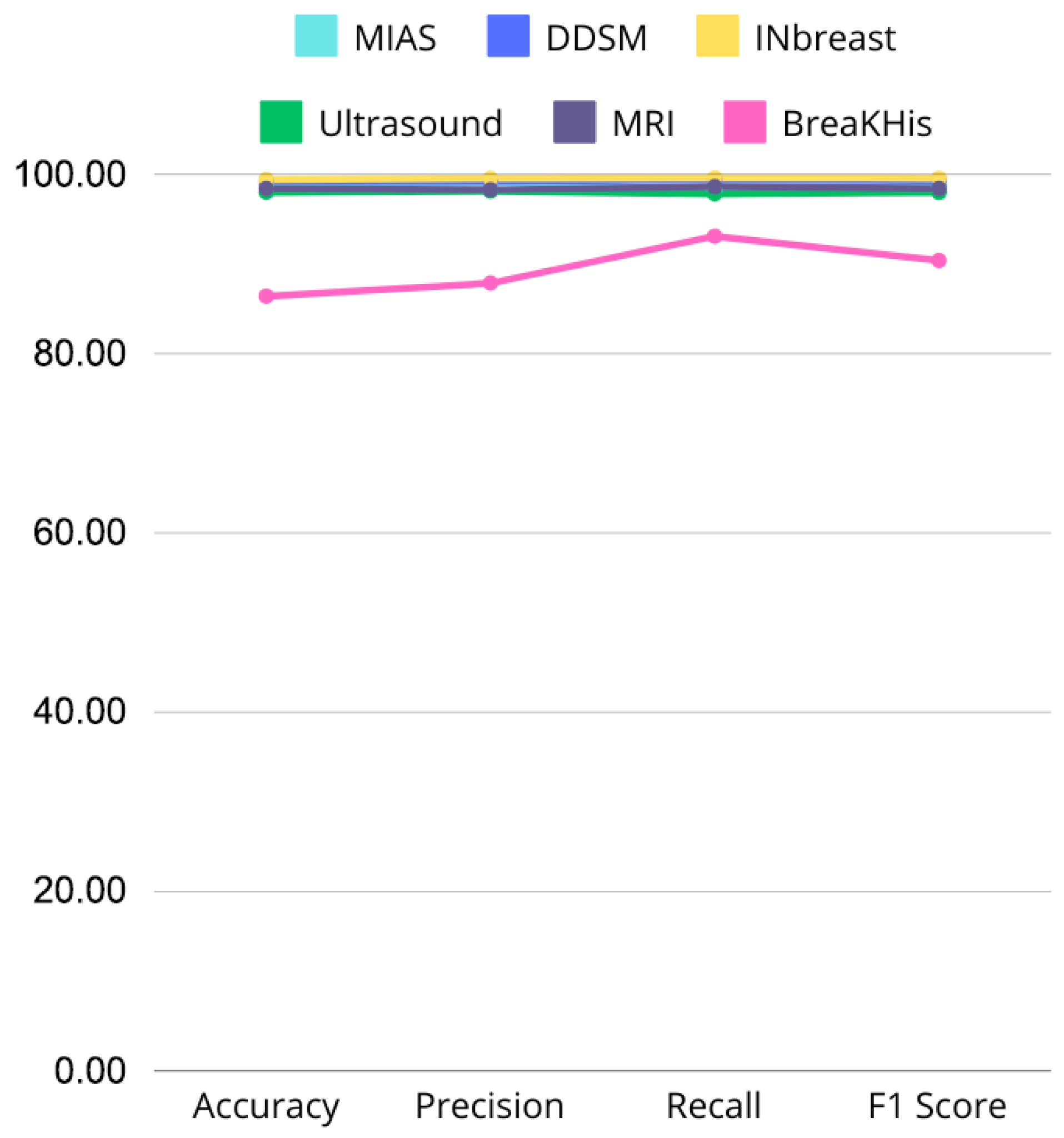

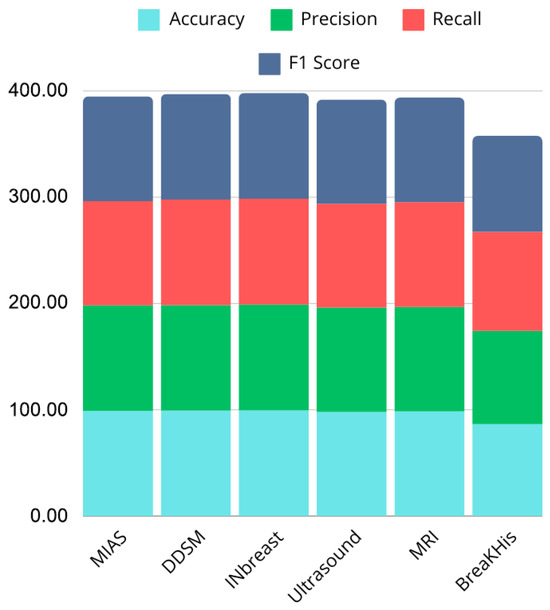

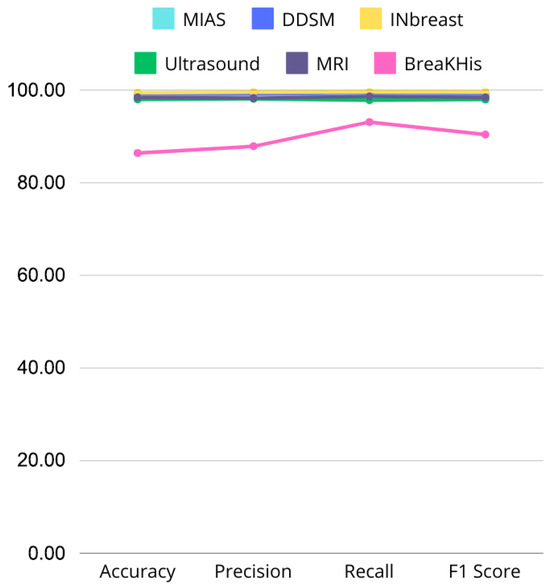

Figure 20 and Figure 21 compare performance across datasets and key metrics (Accuracy, Precision, Recall, F1 Score), revealing consistent performance on most modalities but a noticeable drop on histopathological images. This suggests that while the model effectively captures discriminative features in mammography, ultrasound, and MRI, additional architectural adaptations or domain-specific pre-processing may be required for histopathology tasks.

Figure 20.

Bar chart showing performance comparison across datasets.

Figure 21.

Line graph comparing Accuracy, Precision, Recall, and F1 Score across MIAS, DDSM, INbreast, Ultrasound, and MRI datasets, highlighting performance variations.

The simplicity of the CNN architecture, avoiding complex ensembles or hybrid models, contributes to computational efficiency and scalability. However, this design choice may also limit the model’s ability to capture more subtle or high-dimensional features in certain datasets, such as BreaKHis. Nonetheless, the model reliably extracts essential imaging features and provides robust classification outcomes, demonstrating its potential as a practical tool for clinical decision support.

These findings underline the model’s versatility and potential to enhance early breast cancer detection. However, additional validation on larger, multi-center datasets is essential to confirm its generalizability and address modality-specific limitations. Future work could explore hybrid architectures, multi-scale feature extraction, or integration with clinical metadata to further improve performance, particularly in challenging histopathology datasets. Overall, the proposed CNN provides a solid foundation for advancing deep learning applications in medical imaging, bridging methodological innovation with clinical relevance.

5. Limitations and Future Work

This study has several limitations that should be addressed in future research. First, performance on the BreaKHis dataset is lower than on the other datasets, indicating the need for further optimization on histopathology data. Second, the datasets used, including MIAS, INbreast, DDSM, and Ultrasound, are small; although widely used in the field, they may not fully capture clinical heterogeneity. Class imbalance and limited data availability restrict the model’s ability to represent the full spectrum of breast cancer imaging, thereby limiting its generalizability. Third, image quality issues, such as noise and low resolution, affect the model’s ability to learn generalized features. To overcome these challenges, future studies should incorporate larger, more diverse datasets from multiple medical institutions, imaging modalities, and patient demographics to enhance the model’s robustness and real-world applicability.

There also remains room for improvement, especially on histopathological images. Future research may investigate architectural improvements and hybrid models to improve performance and ensure robustness across datasets and imaging modalities. One potential approach is transfer learning, in which a model pre-trained on a larger dataset, such as those used for general object detection or medical imaging, is fine-tuned on the target data to improve accuracy, especially in low-data scenarios. A multi-modal model combining clinical and genomic data could further enhance prognostic capabilities by integrating patient history, biopsy results, and genetic information [39]. Finally, testing the model in real-time clinical settings and optimizing it for rapid inference would help doctors make quick, informed decisions during the diagnostic process.

6. Conclusions

This research presents a CNN-based approach for the automated prediction and diagnosis of breast cancer using various imaging modalities, including mammography, ultrasound, MRI, and histopathological datasets. The model achieved high accuracy on most datasets, demonstrating its ability to identify significant features and provide reliable predictions. Its simplicity, efficiency, and adaptability make it suitable for clinical applications, especially in contrast to more complex ensemble or hybrid models. The model’s capacity to generalize across diverse imaging datasets positions it as a promising tool in computer-aided diagnosis, offering valuable support to radiologists in the early detection of breast cancer.

While the model performs well, the study identified key limitations, particularly related to dataset size, diversity, and image quality. These factors may hinder the model’s clinical utility despite its strong performance. These findings suggest the need for further research to evaluate the model on larger, more diverse datasets, and to explore enhancements such as integrating clinical and genomic data for more personalized predictions. In conclusion, this study lays a strong foundation for deep learning-based breast cancer diagnosis. By addressing its limitations and leveraging the model’s scalability, future research can improve early detection and patient outcomes, facilitating its integration into clinical workflows.

Author Contributions

Conceptualization, K.A. and Y.J.; Methodology, Y.J., M.A. and A.H.E.H.; Formal analysis, K.A., Y.J. and M.H.; Investigation, Y.J. and M.A.; Writing original draft, K.A. and Y.J.; Writing review & editing, M.H. and A.H.E.H.; Supervision, Y.J. and A.H.E.H. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

The original data presented in the study are openly available in the following public repositories: sciencedirect: https://doi.org/10.1016/j.dib.2020.105928 (accessed on 12 November 2024), kaggle: https://www.kaggle.com/datasets/uzairkhan45/breast-cancer-patients-mris (accessed on 12 November 2024), https://www.kaggle.com/datasets/rehelzannat/breakhis-total (accessed on 12 November 2024).

Conflicts of Interest

The authors declare no conflict of interest.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Definition |
|---|---|
| CNN | Convolutional Neural Network |
| DCNNs | Deep Convolutional Neural Networks |
| DL | Deep Learning |
| MIAS | Mammographic Image Analysis Society |
| DDSM | Digital Database for Screening Mammography |
| MRI | Magnetic Resonance Imaging |
| BreaKHis | Breast Cancer Histopathological Database |

References

1. Sadoughi, F.; Kazemy, Z.; Hamedan, F.; Owji, L.; Rahmanikatigari, M.; Azadboni, T. Artificial intelligence methods for the diagnosis of breast cancer by image processing: A review. In Breast Cancer Targets And Therapy; Taylor & Francis: Abingdon, UK, 2018; Volume 12, pp. 219–230.

2. Gupta, A.; Shridhar, K.; Dhillon, P. A review of breast cancer awareness among women in India: Cancer literate or awareness deficit? Eur. J. Cancer 2015, 51, 2058–2066.

3. Wang, Y.; Sun, L.; Ma, K.; Fang, J. Breast Cancer Microscope Image Classification Based on CNN with Image Deformation. In Lecture Notes In Computer Science; Springer: Berlin/Heidelberg, Germany, 2018; pp. 845–852.

4. Zhou, J.; Luo, L.; Dou, Q.; Chen, H.; Chen, C.; Li, G.; Jiang, Z.; Heng, P. Weakly supervised 3D deep learning for breast cancer classification and localization of the lesions in MR images. J. Magn. Reson. Imaging 2019, 50, 1144–1151.

5. Lima, Z.; Ebadi, M.; Amjad, G.; Younesi, L. Application of Imaging Technologies in Breast Cancer Detection: A Review Article. Open Access Maced. J. Med. Sci. 2019, 7, 838–848.

6. Alakhras, M.; Mousa, D.S.A.; Alqadi, A.K.; Sabaneh, H.A.; Karasneh, R.M.; Spuur, K. The influence of breast density and key demographics of radiographers on mammography reporting performance—A pilot study. J. Med. Radiat. Sci. 2021, 69, 30–36.

7. Aguerchi, K.; Jabrane, Y.; Habba, M.; Hassani, A. A CNN Hyperparameters Optimization Based on Particle Swarm Optimization for Mammography Breast Cancer Classification. J. Imaging 2024, 10, 30.

8. Filipović-Grčić, L.; Đerke, F. Artificial intelligence in radiology. Rad Hrvat. Akad. Znan. Umjet. Med. Znan. 2019, 537, 55–59.

9. Deepa, S. A survey on artificial intelligence approaches for medical image classification. Indian J. Sci. Technol. 2011, 4, 1583–1595.

10. Talimi, H.; Retmi, K.; Fissoune, R.; Lemrani, M. Artificial Intelligence in Cutaneous Leishmaniasis Diagnosis: Current Developments and Future Perspectives. Diagnostics 2024, 14, 963.

11. Aguerchi, K.; Jabrane, Y.; Habba, M.; Ameur, M. On the Use of Transfer Learning to Improve Breast Cancer Detection. Int. J. Sci. Res. Innov. Stud. 2024, 3, 11217339.

12. Litjens, G.; Kooi, T.; Bejnordi, B.; Setio, A.; Ciompi, F.; Ghafoorian, M.; Van Der Laak, J.; Van Ginneken, B.; Sánchez, C. A survey on deep learning in medical image analysis. Med. Image Anal. 2017, 42, 60–88.

13. Niola, V.; Quaremba, G. Pattern recognition and feature extraction: A comparative study. Comput. Sci. 2005, 4, 109–114.

14. Zhu, W.; Xie, L.; Han, J.; Guo, X. The Application of Deep Learning in Cancer Prognosis Prediction. Cancers 2020, 12, 603.

15. Sechopoulos, I.; Teuwen, J.; Mann, R. Artificial intelligence for breast cancer detection in mammography and digital breast tomosynthesis: State of the art. Semin. Cancer Biol. 2021, 72, 214–225.

16. Nassif, A.B.; Talib, M.A.; Nasir, Q.; Afadar, Y.; Elgendy, O. Breast cancer detection using artificial intelligence techniques: A systematic literature review. Artif. Intell. Med. 2022, 127, 102276.

17. Abu Abeelh, E.; Abuabeileh, Z. Screening Mammography and Artificial Intelligence: A Comprehensive Systematic Review. Cureus 2025, 17, e79353.

18. Jairam, M.P.; Ha, R. A review of artificial intelligence in mammography. Clin. Imaging 2022, 88, 36–44.

19. Hussain, S.I.; Toscano, E. Optimized Deep Learning for Mammography: Augmentation and Tailored Architectures. Information 2025, 16, 359.

20. Castro-Tapia, S.; Castañeda-Miranda, C.; Olvera-Olvera, C.; Guerrero-Osuna, H.; Ortiz-Rodriguez, J.; Del Rosario Martínez-Blanco, M.; Díaz-Florez, G.; Mendiola-Santibañez, J.; Solís-Sánchez, L. Classification of Breast Cancer in Mammograms with Deep Learning Adding a Fifth Class. Appl. Sci. 2021, 11, 11398.

21. Chougrad, H.; Zouaki, H.; Alheyane, O. Deep Convolutional Neural Networks for breast cancer screening. Comput. Methods Programs Biomed. 2018, 157, 19–30.

22. Rahman, A.; Belhaouari, S.; Bouzerdoum, A.; Baali, H.; Alam, T.; Eldaraa, A. Breast Mass Tumor Classification using Deep Learning. In Proceedings of the 2020 IEEE International Conference On Informatics, IoT, And Enabling Technologies (ICIoT), Doha, Qatar, 2–5 February 2020; pp. 271–276.

23. Sun, L.; Wang, J.; Hu, Z.; Xu, Y.; Cui, Z. Multi-View Convolutional Neural Networks for Mammographic Image Classification. IEEE Access 2019, 7, 126273–126282.

24. Jafari, Z.; Karami, E. Breast Cancer Detection in Mammography Images: A CNN-Based Approach with Feature Selection. Information 2023, 14, 410.

25. Muduli, D.; Dash, R.; Majhi, B. Automated diagnosis of breast cancer using multi-modal datasets: A deep convolution neural network based approach. Biomed. Signal Process. Control 2021, 71, 102825.

26. Rouhi, R.; Jafari, M. Classification of benign and malignant breast tumors based on hybrid level set segmentation. Expert Syst. Appl. 2015, 46, 45–59.

27. Dong, M.; Wang, Z.; Dong, C.; Mu, X.; Ma, Y. Classification of Region of Interest in Mammograms Using Dual Contourlet Transform and Improved KNN. J. Sens. 2017, 2017, 1–15.

28. Arora, R.; Rai, P.; Raman, B. Deep feature–based automatic classification of mammograms. Med. Biol. Eng. Comput. 2020, 58, 1199–1211.

29. Mansour, R. A Robust Deep Neural Network Based Breast Cancer Detection and Classification. Int. J. Comput. Intell. Appl. 2020, 19, 2050007.

30. Agarwal, P.; Yadav, A.; Mathur, P. Breast Cancer Prediction on BreakHis Dataset Using Deep CNN and Transfer Learning Model. In Lecture Notes In Networks And Systems; Springer: Cham, Switzerland, 2021; pp. 77–88.

31. Spanhol, F.; Oliveira, L.; Petitjean, C.; Heutte, L. A Dataset for Breast Cancer Histopathological Image Classification. IEEE Trans. Biomed. Eng. 2015, 63, 1455–1462.

32. Yurttakal, A.; Erbay, H.; İkizceli, T.; Karaçavuş, S. Detection of breast cancer via deep convolution neural networks using MRI images. Multimed. Tools Appl. 2019, 79, 15555–15573.

33. Ragab, M.; Albukhari, A.; Alyami, J.; Mansour, R. Ensemble Deep-Learning-Enabled Clinical Decision Support System for Breast Cancer Diagnosis and Classification on Ultrasound Images. Biology 2022, 11, 439.

34. Eroğlu, Y.; Yildirim, M.; Çinar, A. Convolutional Neural Networks based classification of breast ultrasonography images by hybrid method with respect to benign, malignant, and normal using mRMR. Comput. Biol. Med. 2021, 133, 104407.

35. Al-Dhabyani, W.; Gomaa, M.; Khaled, H.; Fahmy, A. Deep Learning Approaches for Data Augmentation and Classification of Breast Masses using Ultrasound Images. Int. J. Adv. Comput. Sci. Appl. 2019, 10, 1–11.

36. Huang, M.; Lin, T. Dataset of breast mammography images with masses. Data Brief 2020, 31, 105928.

37. Available online: https://www.kaggle.com/datasets/uzairkhan45/breast-cancer-patients-mris (accessed on 12 November 2024).

38. Available online: https://www.kaggle.com/datasets/rehelzannat/breakhis-total (accessed on 12 November 2024).

39. Talimi, H.; Daoui, O.; Bussotti, G.; Mhaidi, I.; Boland, A.; Deleuze, J.; Fissoune, R.; Lemrani, M.; Späth, G. A comparative genomics approach reveals a local genetic signature of Leishmania tropica in Morocco. Microb. Genom. 2024, 10, 001230.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).