1. Introduction

The construction industry stands at a pivotal juncture, grappling with the dual challenge of escalating demand for quality inspection and a diminishing pool of available inspectors. Traditional inspection mechanisms, long reliable in controlled manufacturing environments, face redefinition in response to evolving industry dynamics, particularly in construction.

This transformation stems from a growing emphasis on post-installation quality assessments, notably in uncontrolled environments like bustling construction sites. Traditional machine vision systems, foundational to industrial processes, reveal their limitations in these dynamic settings, characterized by unpredictable variables and ceaseless activity. These systems often require extensive parameter tuning and can falter, especially in uncontrolled environments marked by fluctuating lighting conditions and unexpected defects [1,2,3].

In parallel, machine and deep learning (ML/DL) promise to overcome these challenges by offering a more adaptable approach to defect detection. However, the effectiveness of ML/DL critically hinges on the availability of extensive, high-quality datasets, a rarity in the context of construction quality inspection. Even advanced DL models can underperform due to the scarcity of comprehensive training data and the influence of unpredictable external factors, as exemplified by notable studies [1,2,4,5,6].

To bridge this critical gap, data augmentation emerges as a first remedy [7,8]. Furthermore, image enhancement techniques aim to bolster DL models by enhancing image clarity, mitigating shadows, and accentuating defects. The synergistic fusion of DL with a judicious image enhancement (IE) strategy can revolutionize defect identification and reshape the industrial landscape [9]. Recent research, exemplified by Wu et al. [10] and Tang et al. [11], has effectively utilized these strategies to improve segmentation networks. Wu et al. notably enhanced crack segmentation accuracy using MobileNetV2_DeepLabV3, while Tang et al. employed image refinement post-processing with U-Net for similar gains. These studies showcase the applicability of these strategies in current research. Alongside technological challenges, the construction sector faces an escalating demand for rigorous quality inspections and a diminishing pool of human inspectors. Even seemingly minor defects can tarnish a brand’s reputation and compromise functional aesthetics [3].

This paper introduces a novel amalgamation of advanced image processing, data augmentation techniques, and DL methodologies to address the challenges posed by intricate lighting conditions and limited data availability on construction sites. We aim to ensure consistent inspections in uncontrolled domains and establish a benchmark for construction quality assessments. Additionally, through a rigorous comparison with a sophisticated segmentation model, we underscore the potential of our proposed methodology, particularly in the burgeoning domain of automated building inspection [7,8].

Our primary research objective is to develop an advanced defect detection method utilizing deep learning (DL). Specifically, we present an innovative DL-based framework for detecting defects in window frames. This framework combines data augmentation, customized image enhancement techniques, and a detection model designed to enhance the quality of defect detection.

In this context, we operate under the assumption that construction sites often present intricate lighting conditions and suffer from limited data availability. These inherent challenges in uncontrolled environments require a more robust defect detection solution. Our research addresses these assumptions by establishing a new benchmark for quality inspections in construction sites.

The subsequent sections of this paper are structured as follows:

Section 2 delves into the relevant literature,

Section 3 outlines our approach,

Section 4 unveils our experimental design and findings, and

Section 5 offers conclusions and insights from our research.

2. Related Work

This section provides a comprehensive overview of prior research and methodologies in defect detection, tracking the historical evolution of techniques and highlighting recent advancements.

2.1. Traditional Computer Vision Approaches

Defect detection in construction and manufacturing has been a prominent research focus. Traditional machine vision systems, relying on predefined algorithms, have played a pivotal role in quality control across diverse industries [12].

Threshold Techniques: Automatic thresholding has been crucial in industries such as glass manufacturing [13,14] and textiles [15]. Dynamic thresholding has found applications in road crack segmentation [16].

Edge Detection and Morphological Processing: The Retinex algorithm has been prominent in edge detection for defect identification [17,18], and combining morphological processing with genetic algorithms has introduced new dimensions to defect detection strategies [19].

Fourier and Texture Analysis: Fourier series are useful in line defect detection [20], while texture analysis proves reliable in laboratory settings [18,21,22,23].

Innovative Approaches: Combining impulse/response testing with statistical pattern recognition has helped to detect defects in concrete plates [24]. However, traditional machine vision systems have limitations in complex, dynamic settings like construction sites, where unpredictable variables such as complex lighting challenge their efficacy.

2.2. Challenges in Conventional Machine Vision Methods

Traditional machine vision methods excel in controlled environments but face significant hurdles in complex settings like construction sites. Key challenges include the following:

Variable Lighting: Fluctuating natural and artificial lighting conditions impact system performance [1,2,3].

Dynamic Environments: Rapid changes in construction sites challenge machine vision adaptability, reducing accuracy.

Noise and Interference: Visual noise and electromagnetic interference disrupt defect detection [1].

Scale and Perspective Variations: Varying object sizes and perspectives require extensive system adjustments.

Real-Time Demands: Meeting real-time requirements can be challenging for traditional methods.

Data Annotation: Creating and maintaining labeled datasets is labor-intensive and complex in dynamic environments.

Innovative approaches, including deep learning and adaptive algorithms, are needed to enhance defect detection in construction.

2.3. Machine Learning and Deep Learning-Based Methods

This subsection explores recent advancements in machine learning, particularly deep learning (DL) models, for defect detection. With increased computing power and data availability, DL, particularly convolutional neural networks (CNNs), has gained popularity in quality inspection.

Supervised Object Detection: Supervised defect detection relies on labeled datasets with defect-free and defective samples, resulting in high detection rates. During training, each object is annotated with its class label and bounding box coordinates. Various datasets are used in supervised learning, including fabric defect datasets [25] and rail defect datasets [26,27].

Unsupervised Object Detection: Unsupervised methods aim to overcome the limitations of supervised learning by leveraging inherent data characteristics for classification. These approaches detect defects and objects in images without using labeled training data. Instead, they rely on patterns, structures, or anomalies in the data to identify objects. Techniques like clustering, anomaly detection, and feature extraction are commonly used for unsupervised defect detection.

Object Detection Models: Current object detection in deep learning falls into two main categories. The first encompasses two-stage object detection models, which include R-CNN [28], Fast R-CNN [29], and Faster R-CNN [30]. The second category features one-stage models like YOLO [32] and SSD [33]. Segmentation networks are also used for defect detection; for example, Boikov et al. [31] applied U-Net to defect segmentation using synthetic data.

2.4. Challenges in DL-Based Defect Detection

In the realm of deep learning-based defect detection in construction, formidable challenges persist despite significant advancements in computer vision for monitoring structural health and identifying unsafe behaviors [34]. These challenges encompass a range of issues, including identifying multiple defects or concurrent unsafe behaviors, which remain problematic due to the high noise levels inherent in construction settings. Balancing datasets for deep learning training poses difficulties, particularly when certain defects or behaviors occur infrequently, leading to unbalanced data [35]. Moreover, the scarcity of labeled data, especially for rare defects, hampers the development of robust models. Pursuing real-time performance without compromising accuracy is an ongoing challenge, and inaccurate or inconsistent labeling adversely affects model precision. Furthermore, the variability in inspection standards hinders integrating deep learning models with human inspectors, while environmental factors like changing lighting conditions introduce variability in model accuracy in the dynamic construction environment.

2.5. Hybrid Models for Defect Detection

Hybrid models for defect detection represent a compelling approach by integrating image processing techniques (IPTs) and image enhancement techniques (IETs) with machine learning (ML) and deep learning (DL) methodologies. This fusion has demonstrated significant advantages across various domains, including defect identification. This section delves into such hybrid models’ underlying principles and practical applications. One avenue of exploration involves the integration of IPTs with ML techniques, offering a robust framework for defect detection. For instance, combining edge detection algorithms with convolutional neural networks (CNNs) has yielded substantial improvements in tasks like weld segmentation, resulting in enhanced accuracy and efficiency in defect identification processes. Additionally, the domain of deep learning has witnessed a transformative impact on defect detection, particularly through models like CNNs. However, integrating image enhancement techniques can further elevate their performance, making them increasingly applicable in industries such as construction. Techniques like data augmentation, which generates diverse training data, and image enhancement algorithms that enhance image clarity, reduce shadows, and accentuate defects, contribute to fine-tuning DL models for more effective defect identification tasks, ultimately facilitating their integration into real-world applications such as construction [36].

These hybrid models find applications across industries, from manufacturing to construction, demonstrating their ability to handle complex image data and enhance defect identification in challenging settings. However, challenges remain, including parameter optimization and dataset availability. Some recent research used image enhancement and refinement to improve crack segmentation [10,11]. Our research addresses these challenges, focusing on the domain-specific context of cosmetic quality inspection for window frames. We aim to uncover the most effective image enhancement strategies and data augmentation techniques to enhance defect detection systems’ precision, robustness, and real-world applicability.

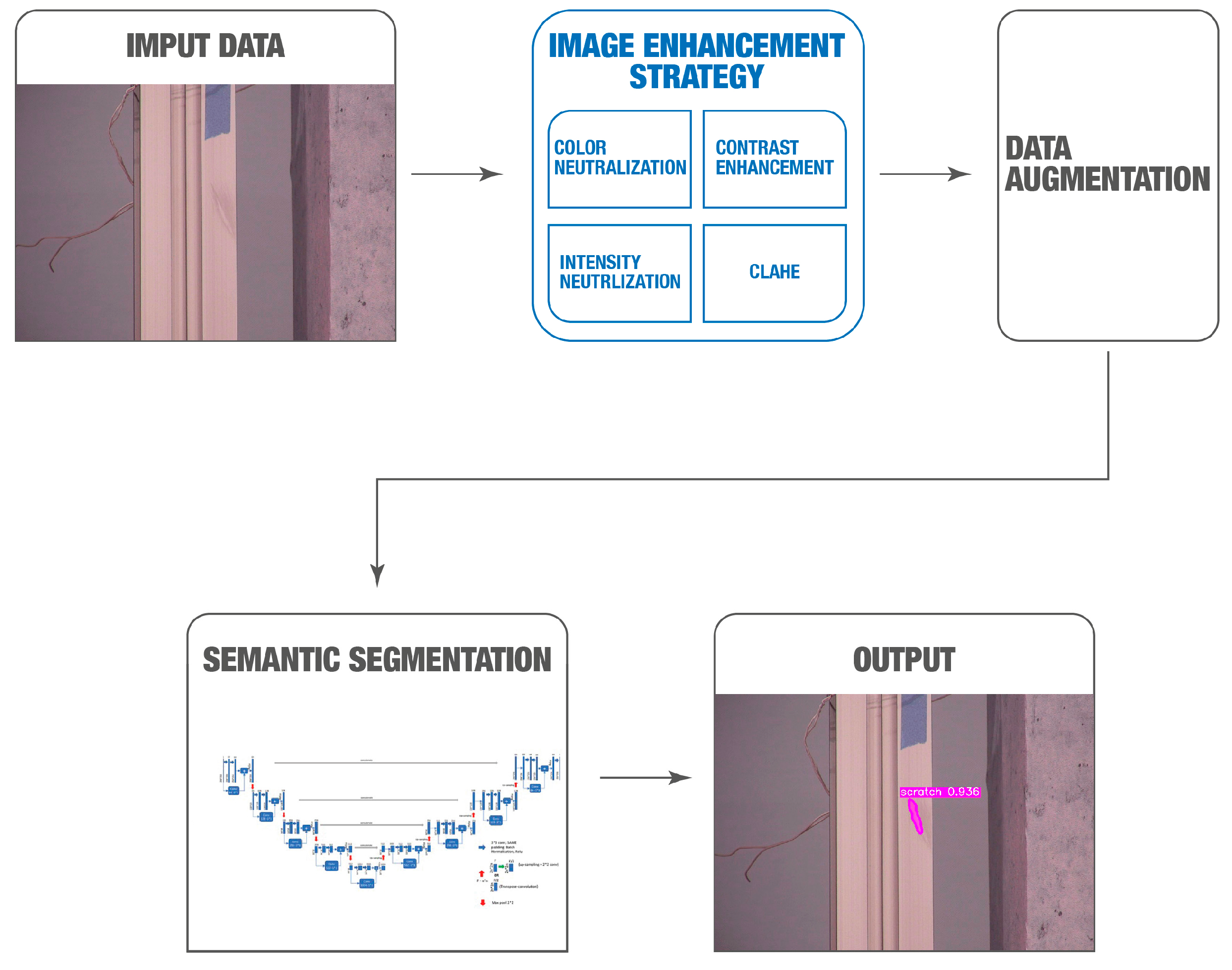

3. Proposed Method

The demand for robust defect detection becomes paramount in industrial environments marked by unpredictable lighting conditions. Our proposed method is a comprehensive solution that integrates an image quality assessment tool, a sophisticated image enhancement strategy, advanced data augmentation processes, and a deep learning-based defect detection model. The framework of our approach, illustrated in Figure 1, visually represents the various components and their interplay.

Our system takes as input RGB images captured by the Spot Robot. The data augmentation module then applies a combination of geometric operations and a range of image enhancement techniques to generate a large volume of enhanced data.

The preprocessing module further enhances the defect detection model’s performance, ensuring optimal results. Finally, within the detection module, defects are identified among all detected window frames, with the output showcasing U-Net-generated segmentation blobs, ultimately providing a comprehensive overview of detected defects within the industrial setting. This holistic approach empowers the system to operate effectively in dynamic and challenging environments, setting new standards for defect detection accuracy and reliability.

3.1. Data Collection

Our dataset consists of 1356 original images sourced from five distinct datasets:

Cellphone Dataset (441 Images): This dataset comprises color images capturing non-installed window frames both indoors and outdoors (see Figure 2).

Construction Site Dataset (235 Images): Captured on a real-world construction site using inspector cellphone cameras, this dataset includes various installed window frame types and diverse conditions (see Figure 3).

Lab-1 Dataset (100 Images): Collected in a controlled lab environment using the Spot Robot’s PTZ camera, this dataset features a range of window frame samples with variations in colors, lighting conditions and angles (see Figure 4).

Lab-2 Dataset (80 Images): Focused on a single window frame within a cluttered lab setting, this dataset offers images captured at different zoom levels (see Figure 5).

Demo Site Dataset (500 Images): Captured at a construction site using the Spot Robot, this dataset encompasses multiple window frame types and a variety of lighting conditions (see Figure 6).

3.2. Data Labeling

Our data labeling process was designed to accurately identify defects within the images. The images were labeled manually using the Roboflow platform [37]. Examples of labeled defects, including dents and scratches, are shown in Figure 5 and Figure 6.

The labeling process is visualized in Figure 7. After labeling, images were stored in COCO format at a resolution of 500 × 500 pixels. This curated labeled dataset served as the reference standard for our defect detection methodology.

3.3. Geometric Data Augmentation

In the first stage, we leveraged geometric data augmentation techniques to enhance dataset diversity and size. This process involved applying three geometric transformations: anti-clockwise rotation, clockwise rotation, and horizontal flipping. By incorporating these transformations, we expanded our dataset threefold, enriching the variety of samples available for training and validation.
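The three transformations can be sketched in a few lines. This is a minimal illustration only: the rotation angle is not stated above, so 90 degrees is assumed here, and the helper names are hypothetical. For segmentation data, the same transformation would also have to be applied to each label mask so that images and annotations stay aligned.

```python
from typing import List

Image = List[List[int]]  # grayscale image as rows of pixel values


def rotate_clockwise(img: Image) -> Image:
    """Rotate by 90 degrees clockwise (assumed angle)."""
    return [list(row) for row in zip(*img[::-1])]


def rotate_anticlockwise(img: Image) -> Image:
    """Rotate by 90 degrees anti-clockwise (assumed angle)."""
    return [list(row) for row in zip(*img)][::-1]


def flip_horizontal(img: Image) -> Image:
    """Mirror the image left to right."""
    return [row[::-1] for row in img]


def augment(img: Image) -> List[Image]:
    """Return the three geometrically transformed variants of one image."""
    return [rotate_anticlockwise(img), rotate_clockwise(img), flip_horizontal(img)]


if __name__ == "__main__":
    sample = [[1, 2], [3, 4]]
    for variant in augment(sample):
        print(variant)
```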

3.4. Image Enhancement Techniques (IETs)

Our defect detection methodology incorporates a range of image enhancement techniques (IETs) selected to improve the quality of images before they are passed to the segmentation network. These techniques accentuate object details, reduce noise, and optimize overall image quality, thus facilitating more precise detection. Below is an overview of the IETs employed in our approach:

Shadow Removal (SR): To address shadow removal, we adopted the pre-trained dual hierarchical aggregation network (DHAN) developed by Cun et al. [38], which is based on VGG16 with the context aggregation network (CAN). This approach effectively mitigates shadows, a common challenge in image quality.

Color Neutralization (CN): CN plays a crucial role in ensuring a consistent foundation for subsequent processing by harmonizing color variations across images, promoting uniformity.

Contrast Enhancement (CE): CE significantly enhances image clarity, making even subtle defects more discernible. This enhancement aids in the accurate identification of defects.

Intensity Level Neutralization (IN): IN standardizes intensity levels across the dataset, reducing disparities that could otherwise affect the analysis. This step contributes to data consistency.

Contrast-Limited Adaptive Histogram Equalization (CLAHE): CLAHE, a localized contrast enhancement technique, enhances small-scale details while preserving overall image contrast. It improves the visibility of fine details without oversaturating the image.

The choice of these five image enhancement techniques and our data augmentation strategy was informed by extensive experimentation, explained in Section 4. We rigorously assessed various combinations of techniques to ensure they collectively enhanced image quality without introducing noise or misleading information. This careful selection process ensures the reliability and effectiveness of our approach in the context of defect detection.

3.4.1. Shadow Removal (SR) Process

Using the pre-trained dual hierarchical aggregation network (DHAN) proposed by Cun et al. [38], we addressed the challenge of shadow removal. DHAN builds on the VGG16 convolutional neural network and employs the context aggregation network (CAN) for encoding.

DHAN locates and removes shadows by combining dilated convolutions with hierarchical aggregation of multi-context features. Using the weights provided by the original authors, we ran the network in inference mode to remove shadows from the entire dataset, as shown in Figure 8.

3.4.2. Color Neutralization (CN)

It was imperative to refine the color definition within the dataset. Our approach relies on the Von Kries chromatic adaptation transform [39], a widely used chromatic adaptation method. This technique maps source colors to target colors within the LMS (long, medium, short) color space. By adapting the RGB illuminant color of dataset samples to varied illuminants, it preserves the appearance of white. This yields enhanced color consistency and bolsters feature extraction, as illustrated in Figure 9.
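For reference, the Von Kries transform is commonly written as a diagonal scaling in LMS space. The notation below is introduced here for illustration: the subscripts s and t denote the source and target white points, and A stands for whichever RGB-to-LMS matrix is used, which is not specified in this section.

```latex
\begin{pmatrix} L' \\ M' \\ S' \end{pmatrix}
=
\begin{pmatrix}
L_{w,t}/L_{w,s} & 0 & 0 \\
0 & M_{w,t}/M_{w,s} & 0 \\
0 & 0 & S_{w,t}/S_{w,s}
\end{pmatrix}
\begin{pmatrix} L \\ M \\ S \end{pmatrix},
\qquad
\mathbf{c}_{\mathrm{out}} = A^{-1} D \, A \, \mathbf{c}_{\mathrm{in}}
```

Here D is the diagonal matrix on the left, and the second expression applies the adaptation to an RGB pixel by converting to LMS, scaling, and converting back. Because each cone response is scaled independently, the source white maps exactly onto the target white.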

3.4.3. Contrast Enhancement (CE)

Our image processing strategy concluded with contrast enhancement. After splitting each dataset sample into its RGB channels, we applied histogram equalization [40]. This technique improves the visual clarity of the image, making defects easier to spot. Flattening the histogram increases the overall image contrast, which yields a richer feature representation for the deep learning phase, as shown in Figure 10.
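A minimal sketch of per-channel histogram equalization follows, assuming 8-bit channels stored as nested lists. The function names are hypothetical and this is the textbook CDF-based mapping rather than necessarily the exact implementation used in our pipeline. Equalizing R, G, and B independently can shift color balance, which is one reason it is paired with the other enhancement steps.

```python
from typing import List, Tuple

Channel = List[List[int]]  # one 8-bit channel as rows of intensities


def equalize_channel(channel: Channel, levels: int = 256) -> Channel:
    """Histogram-equalize a single channel with the standard CDF mapping."""
    hist = [0] * levels
    for row in channel:
        for value in row:
            hist[value] += 1

    # Cumulative distribution of pixel intensities.
    cdf, running = [], 0
    for count in hist:
        running += count
        cdf.append(running)

    total = cdf[-1]
    cdf_min = next(c for c in cdf if c > 0)
    if total == cdf_min:  # constant image: nothing to stretch
        return [row[:] for row in channel]

    # Spread the CDF over the full intensity range.
    lut = [max(0, round((c - cdf_min) / (total - cdf_min) * (levels - 1))) for c in cdf]
    return [[lut[value] for value in row] for row in channel]


def equalize_rgb(r: Channel, g: Channel, b: Channel) -> Tuple[Channel, Channel, Channel]:
    """Equalize each RGB channel independently."""
    return equalize_channel(r), equalize_channel(g), equalize_channel(b)
```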

3.4.4. Intensity Level Neutralization (IN)

The multi-scale Retinex (MSR) algorithm was applied to refine the intensity channel. The output colors were adjusted so that the chromaticity matched that of the original image. This filtering helps preserve relative lightness, yielding a harmonized image intensity without distorting chromaticity or color composition.

Land and McCann’s research [41] laid the groundwork for this approach. They postulated that the visual cortex discerns relative, not absolute, lightness, that is, variations within localized image segments.

Following this principle, we suppressed intensity variations that might otherwise hamper feature extraction and image data processing, as shown in Figure 11.
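For reference, MSR is usually formulated as a weighted sum of single-scale Retinex outputs. The symbols below are introduced here for illustration; the number of scales K, the Gaussian widths, and the weights are not specified in this section.

```latex
R_{\mathrm{MSR}}(x, y) = \sum_{k=1}^{K} w_k \left[ \log I(x, y) - \log\big( (G_{\sigma_k} * I)(x, y) \big) \right],
\qquad \sum_{k=1}^{K} w_k = 1
```

Here I is the intensity channel, G with subscript sigma_k is a Gaussian surround of width sigma_k, and the asterisk denotes convolution. Each term compares a pixel with its local neighborhood, which is how the method captures relative rather than absolute lightness.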

3.4.5. Contrast-Limited Adaptive Histogram Equalization (CLAHE)

CLAHE plays a pivotal role in our novel strategy, enhancing image contrast by equalizing the histogram for each contextual region or tile in an image. This technique effectively limits histogram amplification via clipping at a predefined limit. The crucial steps of the CLAHE algorithm are as follows:

Divide the input image into non-overlapping tiles of size m × n, resulting in M × N tiles.

Perform histogram equalization on each tile, using the probability density function (PDF) and cumulative distribution function (CDF) to distribute pixel intensities effectively.

Apply contrast limiting by clipping the histogram at a predefined limit, CL, to prevent excessive amplification.

Conduct bilinear interpolation to eliminate artificial boundaries between tiles, resulting in a smoothly enhanced output image.

Our study applied CLAHE to the luminance (L) channel within the Lab color space. Results are shown in Figure 12.
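The histogram clipping and per-tile equalization steps above can be sketched as follows. This is a simplified illustration with hypothetical function names: it handles a single tile, redistributes the clipped excess uniformly in one pass (so a bin may end slightly above the limit), and omits the tiling and bilinear interpolation steps.

```python
from typing import List


def clip_histogram(hist: List[int], clip_limit: int) -> List[int]:
    """Clip each bin at clip_limit and spread the excess uniformly over all bins."""
    clipped = [min(h, clip_limit) for h in hist]
    excess = sum(hist) - sum(clipped)
    share, remainder = divmod(excess, len(hist))
    # The first `remainder` bins receive one extra count so the total is preserved.
    return [c + share + (1 if i < remainder else 0) for i, c in enumerate(clipped)]


def equalize_tile(tile: List[List[int]], clip_limit: int, levels: int = 256) -> List[List[int]]:
    """Equalize one tile using the CDF of its clipped histogram."""
    hist = [0] * levels
    for row in tile:
        for value in row:
            hist[value] += 1

    hist = clip_histogram(hist, clip_limit)
    total = sum(hist)

    # Map each intensity through the cumulative distribution of the clipped histogram.
    lut, running = [], 0
    for count in hist:
        running += count
        lut.append(round(running / total * (levels - 1)))
    return [[lut[value] for value in row] for row in tile]
```

A lower clip limit flattens the histogram more aggressively before equalization, which caps how much contrast, and therefore noise, can be amplified within a tile.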

3.5. IE-Enhanced Data Augmentation

We implemented a second, IE-enhanced stage of data augmentation. For this stage, we used 40 IE combinations, including the ‘Normal’ dataset and results from five image enhancement techniques (IETs) where the order of application was irrelevant. This approach created 40 distinct datasets, each representing a unique IE combination. Training on these augmented datasets allowed our model to learn from a variety of enhanced variations, improving its defect detection capabilities. Notably, these 40 comprise the 4! (4 factorial) = 24 combinations drawn from the four techniques other than CLAHE, plus 16 additional datasets created by inserting CLAHE into the best-performing ones.
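The count of 24 base combinations can be reproduced by enumerating the arrangements of the four non-CLAHE techniques, which is how Section 4 describes them. The sketch below is illustrative only: the helper names are hypothetical, and the position at which CLAHE is inserted is an assumption, since it is not specified here.

```python
from itertools import permutations
from typing import List

BASE_TECHNIQUES = ("SR", "CN", "CE", "IN")


def base_pipelines() -> List[str]:
    """Enumerate all 4! = 24 arrangements of the four base techniques."""
    return [" + ".join(order) for order in permutations(BASE_TECHNIQUES)]


def with_clahe(pipeline: str, position: int) -> str:
    """Insert CLAHE at a given step index (the position is a hypothetical choice)."""
    steps = pipeline.split(" + ")
    steps.insert(position, "CLAHE")
    return " + ".join(steps)


if __name__ == "__main__":
    pipelines = base_pipelines()
    print(len(pipelines))
    print(with_clahe("SR + CN + IN + CE", 4))
```

Selecting which 16 pipelines receive CLAHE depends on measured performance, so that step is left out of the sketch.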

3.6. Defect Detection Model

Our defect detection model builds on the data augmentation and image enhancement strategies described above. The model leverages a U-Net neural network architecture to segment defects within the input images effectively. A detailed breakdown of our defect detection process is described as follows:

Data Preprocessing: Following data augmentation and IPT application, we preprocess the images to prepare them for defect detection. These preprocessed images, post-IPT, were resized to a standardized 500 × 500 pixel format.

Ground Truth-Guided Learning: During the training phase, our U-Net neural network relied on ground truth masks. These masks serve as invaluable references, guiding the network to detect defects with exceptional precision.

Intersection of Classes: Our defect detection is specifically tailored to identify defects within window frames. We utilize the concept of the intersection of classes, ensuring that our results exclusively represent defects inside the window frames.

Neural Network Architecture: Our neural network architecture is a fusion of two powerful components: ResNet152 and U-Net. We employ transfer learning to harness the feature extraction capabilities of ResNet152. Its encoder is utilized, with the last layer discarded and then integrated with a decoder. This fusion results in an expansive feature map that excels in defect localization.

Semantic Segmentation Model: Our deep learning-based semantic segmentation model combines the robustness of ResNet152 with the precision of U-Net, providing a balance between feature extraction and localization.

Integrating U-Net with the feature-rich ResNet152 encoder enhances the model’s ability to accurately detect defects within window frames, making it a formidable tool for defect detection in industrial environments.
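The intersection-of-classes step described above can be read as a pixel-wise logical AND between the predicted defect mask and the predicted window-frame mask. The sketch below assumes that reading and uses binary masks stored as nested lists; the function name is hypothetical.

```python
from typing import List

Mask = List[List[int]]  # binary mask: 1 = class present, 0 = absent


def defects_inside_frames(defect_mask: Mask, frame_mask: Mask) -> Mask:
    """Keep a defect pixel only where the window-frame mask is also set."""
    return [
        [d & f for d, f in zip(defect_row, frame_row)]
        for defect_row, frame_row in zip(defect_mask, frame_mask)
    ]


if __name__ == "__main__":
    defects = [[1, 1, 0], [0, 1, 1]]
    frames = [[1, 0, 0], [0, 1, 0]]
    print(defects_inside_frames(defects, frames))
```

Defect pixels predicted outside any window frame, for example on a wall or floor, are discarded by this step.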

4. Experiments and Results

In this section, we describe the practical aspects of our experiments, which aimed to optimize defect detection in window frames through the strategic deployment of image enhancement techniques (IETs). Our objective was to evaluate the performance enhancements achieved by these strategies, comparing them against a baseline machine learning (ML) model. To quantitatively assess the efficacy of our approach, we employed two key metrics: the F1 score and IoU (intersection over union). The F1 score provides a balanced measure of precision and recall, allowing us to gauge the accuracy of our defect detection model. Additionally, IoU quantifies the overlap between predicted and ground truth defect regions, offering insights into the model’s localization precision. Together, these metrics enable us to comprehensively evaluate and present the results of our defect detection system.
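Both metrics can be computed from the same true positive, false positive, and false negative counts. The sketch below assumes pixel-level evaluation on binary masks, which is one common convention for segmentation and may differ from the exact protocol used in our experiments. Returning 1.0 when both masks are empty is likewise an assumption.

```python
from typing import List, Tuple

Mask = List[List[int]]  # binary mask: 1 = defect, 0 = background


def confusion_counts(pred: Mask, truth: Mask) -> Tuple[int, int, int]:
    """Count true positives, false positives, and false negatives per pixel."""
    tp = fp = fn = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            if p and t:
                tp += 1
            elif p:
                fp += 1
            elif t:
                fn += 1
    return tp, fp, fn


def f1_score(pred: Mask, truth: Mask) -> float:
    """F1 = 2TP / (2TP + FP + FN), the harmonic mean of precision and recall."""
    tp, fp, fn = confusion_counts(pred, truth)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 1.0  # assumed convention for empty masks


def iou(pred: Mask, truth: Mask) -> float:
    """IoU = TP / (TP + FP + FN), the overlap divided by the union."""
    tp, fp, fn = confusion_counts(pred, truth)
    denom = tp + fp + fn
    return tp / denom if denom else 1.0  # assumed convention for empty masks
```

For the same prediction, IoU is never higher than F1, since F1 = 2 IoU / (1 + IoU); this is why the two metrics can rank enhancement strategies similarly while reporting different magnitudes.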

4.1. Data Collection

To assess the efficacy of our IET-enhanced deep learning-based defect detection model, we used the Spot Robot from Boston Dynamics in our experiments. This robotic platform allowed us to capture high-resolution images of window frames under various lighting conditions, enabling a comprehensive examination of defect detection in complex real-world environments.

4.2. Experimental Setup

At the heart of our defect detection model lies the integration of image enhancement techniques (IETs). Informed by insights from our experiments, we devised four distinct methods and their synergistic combinations tailored for robust industrial-scale deployment. These strategies draw from our findings in Experiments 1 and 2, wherein specialized techniques and IET-based data augmentation played pivotal roles in improving the defect detection accuracy.

4.3. Experiment 1: Integration of Image Enhancement Techniques

This experiment aimed to improve defect detection accuracy by optimizing the sequence of image enhancement techniques (IETs) in our preprocessing pipeline. We evaluated IETs individually and in various combinations.

4.3.1. Experiment 1 Results

To explain our results, particularly the performance disparities across defect categories, we explored over 40 image enhancement (IE) combinations. This set was derived from all 24 permutations (4!) of the four enhancement techniques, each permutation applying the techniques in a different order. Additionally, we introduced the contrast-limited adaptive histogram equalization (CLAHE) technique into the best 16 combinations, further enriching our analysis.

We present the top 10 image enhancement (IE) combinations for each defect out of the over 40 combinations tested.

Bend Detection Results

Table 3 shows the top 10 IE combinations with the highest F1 scores for bend detection.

Dent Detection Results

Table 4 presents the top 10 IE combinations with the highest F1 scores for dent detection.

Scratch Detection Results

Table 5 displays the top 10 IE combinations with the highest F1 scores for scratch detection.

4.3.2. Experiment 1 Insights

This thorough exploration allowed us to systematically evaluate a broad spectrum of enhancement strategies and their influence on defect detection performance. From this exhaustive analysis, the following key insights emerged:

IE Strategy Effectiveness: The most notable discovery revolves around the substantial enhancements observed in F1 and IoU scores across all defect categories (bend, dent, and scratch) when implementing the ‘Best IE Strategy’. The improvement in dent detection is particularly striking, showcasing a remarkable 9.92% increase in the F1 score and an impressive 10.70% surge in the IoU score. This underscores the pivotal role of tailored image enhancement strategies in effectively addressing specific defect characteristics, particularly those highly susceptible to lighting conditions, as exemplified by the dent defects.

Overall Improvement: On average, our model featuring the “Best IE Strategy” consistently outperformed the baseline U-Net model, achieving a noteworthy 7.67% improvement in F1 scores and an impressive 8.60% enhancement in IoU scores. This serves as compelling evidence for the efficacy of integrating IE techniques into the defect detection pipeline.

Performance Variability: It is crucial to acknowledge that the magnitude of improvement varied across defect categories. This variability emphasizes the need for adaptable defect detection systems capable of accommodating the diverse characteristics and challenges associated with different defect types.

Enhancement Strategies: A consistent trend emerges in our findings, revealing that the combination of image enhancement techniques consistently enhances F1 scores and precision. This underscores the intrinsic value of systematic experimentation in optimizing image enhancement for defect detection.

CLAHE Success: The “CLAHE” technique consistently played a pivotal role in enhancing F1 scores and precision across various defect categories. This reaffirms its significance in improving detection accuracy and highlights its effectiveness.

Trade-offs and Context: It is essential to strike a balance between accuracy and localization precision, as certain enhancements may influence IoU values differently. This trade-off consideration underscores the need for a nuanced approach in selecting and fine-tuning enhancement techniques based on specific detection requirements.

Fine-tuning Opportunities: The results underscore the potential for further customization by exploring enhancement combinations and adjustments to model architectures. This fine-tuning process holds the key to optimizing defect detection systems for specific application contexts.

In summary, our meticulous exploration of over 40 IE combinations, driven by 24 permutations of the 4 enhancement techniques and incorporating “CLAHE” into the best 16 combinations, provides a robust foundation for understanding the intricate relationship between image enhancement strategies and defect detection performance. These findings offer valuable insights into the dynamic interplay of enhancement techniques in optimizing the accuracy and precision across diverse defect categories.

4.4. Experiment 2: IE-Data Augmentation and Results

In Experiment 2, we investigated the impact of IE (image enhancement) as a data augmentation technique on object detection performance across various categories, including bend, dent, and scratch. IE-based data augmentation involves enhancing the quality and features of input images before applying object detection algorithms.

4.4.1. Experiment 2 Results

This subsection discusses the findings and insights gained from this experiment. These results are visualized in Table 6 and Table 7.

We exhaustively explored 30 IE combinations to identify the most effective strategies. The outcomes of the top 10 IE combinations are presented in Table 8, Table 9 and Table 10. We initially generated 24 combinations from the permutations of the four enhancement techniques (4!) and subsequently inserted CLAHE into the 6 best-performing combinations, giving 30 combinations in total. This approach ensured a thorough evaluation of enhancement strategies, considering their individual and collective impact on defect detection performance.

Table 8 presents the top 10 combinations of image enhancement (IE) techniques that yield the highest F1 scores for detecting bends. Similarly, Table 9 shows the top 10 IE combinations with the highest F1 scores for scratch detection, and Table 10 shows the top 10 IE combinations with the best F1 scores for detecting dents.

4.4.2. Experiment 2 Insights

Experiment 2 introduced a distinct dimension to our exploration by employing image enhancement (IE) techniques not only to enhance the quality of the existing data but also to create new data samples through augmentation. This innovative approach provided valuable insights into the interplay between IE techniques and defect detection performance, particularly in the context of industrial applications.

Impact of Image Enhancement Techniques: We assessed the influence of individual image enhancement techniques (e.g., CE, IN, CN, SR, and CLAHE) on object detection accuracy. These techniques exhibited varying effects on IoU scores, highlighting trade-offs between localization accuracy and detection precision.

Combination Strategies: Combinations of enhancement techniques, such as “CN + CE” and “SR + IN”, were explored to evaluate their impact on detection performance. Different combinations produced diverse outcomes, emphasizing the complexity of selecting the right strategy.

CLAHE Effectiveness: CLAHE consistently improved IoU values across multiple detection categories, underscoring its importance in enhancing accuracy and precision.

Comprehensive Combinations: Comprehensive combinations like “SR + CN + IN + CE + CLAHE” were investigated to identify strategies with strong overall performance regarding IoU scores, but their complexity warrants careful evaluation.

Balancing Trade-offs: IE-based data augmentation involves balancing improved IoU scores and detection accuracies. Some techniques may favor one aspect, requiring thoughtful adaptations to specific detection needs.

Comparison of F1 and IoU Scores: We compared F1 and IoU scores for bend, dent, and scratch detection under “Normal” and “Best IE” strategies. Our strategy consistently improved both scores, enhancing detection accuracy.
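For readers who want to reproduce this kind of comparison, the two metrics can be computed from a predicted and a ground-truth binary mask as sketched below. The sketch assumes pixel-wise definitions (F1 = 2TP / (2TP + FP + FN), IoU = TP / (TP + FP + FN)); whether the reported scores were computed per pixel or per object is not stated here, and the function name `f1_and_iou` is hypothetical.

```python
import numpy as np


def f1_and_iou(pred: np.ndarray, target: np.ndarray):
    """Pixel-wise F1 and IoU for two binary masks of the same shape."""
    pred = pred.astype(bool)
    target = target.astype(bool)
    tp = int(np.logical_and(pred, target).sum())   # defect in both masks
    fp = int(np.logical_and(pred, ~target).sum())  # predicted only
    fn = int(np.logical_and(~pred, target).sum())  # ground truth only
    f1_denom = 2 * tp + fp + fn
    iou_denom = tp + fp + fn
    # Convention (an assumption): two empty masks count as a perfect match.
    f1 = 2 * tp / f1_denom if f1_denom else 1.0
    iou = tp / iou_denom if iou_denom else 1.0
    return f1, iou


pred = np.array([[1, 1, 0],
                 [0, 1, 0]])
target = np.array([[1, 0, 0],
                   [0, 1, 1]])
f1, iou = f1_and_iou(pred, target)
print(round(f1, 4), round(iou, 4))  # 0.6667 0.5
```

For a single pair of masks the two metrics are tied by IoU = F1 / (2 − F1), so IoU is never higher than F1 and penalizes partial overlap more heavily.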

In summary, IE-based data augmentation significantly enhances object detection accuracy and precision. The enhancement techniques and combinations should align with the specific detection goals and trade-offs that are set. Experiment 2 highlights the importance of IE-based data augmentation and its potential to improve object detection performance substantially.

4.5. Experiment 1 vs. Experiment 2: A Comparison

4.5.1. Experiment 1 Insights

Category-Specific Improvement: Experiment 1 showed significant F1 score improvements for bend (5.70%), dent (9.92%), and scratch (8.11%) detection, highlighting the importance of tailored enhancement strategies.

IoU Improvement: IoU scores improved by 6.49% for bend, 10.70% for dent, and 9.60% for scratch.

Model Architecture Impact: Our model consistently outperformed U-Net, emphasizing the role of the model’s architecture.

4.5.2. Experiment 2 Insights

IE-Based Data Augmentation: Experiment 2 introduced IE-based data augmentation, resulting in substantial F1 and IoU score improvements across all defect categories. Notably, the scratch detection F1 score improved by 9.82%.

Category-Specific Improvement: Gains were uneven across categories, with the bend F1 score increasing by 11.65% and the dent F1 score by only 0.83%.

IoU Improvement: IoU scores improved by 12.33% for bend and 1.13% for dent, while scratch IoU showed a remarkable 31.84% improvement.

Overall Model Performance: Our model enhanced with IE-based data augmentation outperformed the baseline U-Net model, with a 7.43% improvement in the F1 score and a substantial 15.10% improvement in IoU scores.
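The overall figures appear consistent with a simple arithmetic mean of the three per-category improvements reported above. The check below is a sketch under that assumption; the text does not state how the overall values were aggregated.

```python
from statistics import mean

# Per-category improvements (%) reported for Experiment 2.
f1_gain = {"bend": 11.65, "dent": 0.83, "scratch": 9.82}
iou_gain = {"bend": 12.33, "dent": 1.13, "scratch": 31.84}

# Assumption: the overall improvement is the unweighted category mean.
mean_f1_gain = mean(f1_gain.values())
mean_iou_gain = mean(iou_gain.values())

print(f"{mean_f1_gain:.2f} {mean_iou_gain:.2f}")  # 7.43 15.10
```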

4.5.3. Comparative Insights

Both experiments emphasize the importance of tailored strategies and model architecture choices in defect detection. Experiment 2’s IE-based data augmentation approach demonstrated superior results, particularly in improving IoU scores, making it a promising avenue for enhancing defect detection accuracy in complex environments. The insights from both experiments contribute to the understanding of how image enhancement techniques can be seamlessly integrated into deep learning architectures for defect detection.
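To make the comparison concrete, the reported per-category improvements from Sections 4.5.1 and 4.5.2 can be placed side by side. The sketch below assumes the two sets of percentages are measured against comparable baselines, which the text does not state explicitly; the helper name `gain_difference` is hypothetical.

```python
# Reported improvements in percent, copied from Sections 4.5.1 and 4.5.2.
GAINS = {
    "Experiment 1": {
        "F1": {"bend": 5.70, "dent": 9.92, "scratch": 8.11},
        "IoU": {"bend": 6.49, "dent": 10.70, "scratch": 9.60},
    },
    "Experiment 2": {
        "F1": {"bend": 11.65, "dent": 0.83, "scratch": 9.82},
        "IoU": {"bend": 12.33, "dent": 1.13, "scratch": 31.84},
    },
}


def gain_difference(metric):
    """Experiment 2 gain minus Experiment 1 gain, per defect category."""
    exp1 = GAINS["Experiment 1"][metric]
    exp2 = GAINS["Experiment 2"][metric]
    return {cat: round(exp2[cat] - exp1[cat], 2) for cat in exp1}


for metric in ("F1", "IoU"):
    print(metric, gain_difference(metric))
```

With these numbers, the Experiment 2 gains exceed those of Experiment 1 for bend and scratch on both metrics, while the dent gains are smaller than in Experiment 1.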

5. Discussion

Our experiments have underscored the significant impact of image enhancement (IE) techniques on defect detection accuracy and offered insights for their application in industrial contexts. The implications of our findings extend to industrial quality control, where precise defect identification is paramount.

In Experiment 1, we demonstrated the effectiveness of tailored enhancement strategies, which improved both F1 and IoU scores across all defect categories. The largest gain was in dent detection, with a 9.92% increase in the F1 score and a 10.70% increase in the IoU score, which emphasizes the value of category-specific enhancement techniques. These findings underscore the importance of making informed choices when selecting enhancement methods, thereby optimizing defect detection accuracy.

Building on the insights from Experiment 1, Experiment 2 introduced IE-based data augmentation. This approach further elevated detection performance, particularly in improving IoU scores. The results suggest a promising avenue for enhancing defect detection accuracy in complex industrial environments. In industrial settings where precise localization of defects is critical, the improved IoU scores offer a compelling advantage.

The practical implications of these insights for industrial applications are significant. Our research highlights the transformative potential of seamlessly integrating IE techniques into deep learning architectures for defect detection. By doing so, we not only enhance the accuracy of defect detection but also open valuable opportunities for fine-tuning and optimizing industrial quality control processes. Our findings offer a path to more reliable and effective quality control in the industrial sector, where even minor defects can compromise product quality, reputation, and safety. The ability to detect defects accurately, particularly in challenging environments with varying lighting conditions and defect types, is essential for ensuring product excellence and safety.

Overall, our experiments provide valuable guidance for implementing IE techniques in industrial defect detection. These insights promise to enhance the accuracy and efficiency of quality control processes, ultimately benefiting industrial applications and contributing to improved product quality and safety.

6. Conclusions

In conclusion, our study has illuminated a path of profound significance in industrial defect detection by integrating image enhancement (IE) techniques with deep learning. Our research is not just a scientific endeavor; it is a practical solution that holds transformative implications for industries relying on precise defect identification in challenging operational environments.

Across two comprehensive experiments, we have emphasized the critical importance of customization, underlining the necessity of tailoring enhancement strategies to specific defect categories. Furthermore, we have highlighted the pivotal role played by the selection of model architecture, showcasing how it can influence the accuracy and precision of defect detection. Experiment 2, introducing IE-based data augmentation, has emerged as an innovative development. It has yielded remarkable improvements in F1 and IoU scores, offering a novel method for enhancing detection accuracy in complex industrial settings. This innovative approach unlocks the potential for industries to significantly elevate their product quality and bolster their production efficiency by ensuring precise defect detection.

Our research is not confined to theoretical insights; it provides practical solutions for real-world challenges. We have demonstrated that industries can achieve more accurate and reliable defect detection with the right combination of enhancement techniques and thoughtful adaptations. As computer vision continues to evolve, our findings offer a clear roadmap for implementing advanced defect detection systems, ultimately enhancing product quality, safety, and operational efficiency within industrial settings. There are numerous avenues for future research, including expanding datasets to encompass a broader range of defect types and operational conditions, exploring adaptive strategies for automatic technique selection, and integrating our enhanced defect detection approach into real-time industrial quality inspection using mobile robots.

In summary, our research signifies a pivotal moment in the domain of image enhancement techniques for industrial defect detection. It provides practical solutions and novel insights that empower industries to embrace cutting-edge technology, ensuring product excellence, reputation protection, and efficient production processes. Our journey has just begun, and we look forward to further advancing the field and driving excellence in industrial quality control.