1. Introduction

Affective computing, defined by Picard [1], is a multidisciplinary research field that relates to computer science, psychology, neuroscience, and cognitive science. Levenson [2] argued that emotions were preserved during natural selection because rapid response mechanisms are needed when facing different environmental threats. Emotion plays a central role in human behavior, such as perception, attention, decision-making, and communication [3]. Positive emotions contribute to a healthy life and efficient work, while negative emotions may result in health problems [4].

Emotion recognition methods fall into two main categories according to how humans communicate emotions: body expressions and physiological signals. Body expressions are physical manifestations and are easy to collect. Theorists argue that each emotion corresponds to its own somatic response [1]. However, physical manifestations are easily affected by the user's cultural background and social environment [4]. Physiological signals [3,4] are internal signals, such as the electroencephalogram (EEG), electrocardiogram (ECG), heart rate (HR), electromyogram (EMG), and galvanic skin response (GSR). According to Cannon's theory [5], emotion changes are associated with quick responses in physiological signals coordinated by the autonomic nervous system (ANS). As a result, physiological signals are not easily controlled voluntarily, which overcomes the shortcomings of body expressions [4]. Physiological signals have been widely applied in many emotion recognition studies [3,4]. However, some of these physiological signals, including ECG and EMG, are still not a direct reaction to emotion changes. According to psychology and neurophysiology, emotion generation and activity are closely related to the activity of the cerebral cortex. EEG signals effectively reflect the electrical activity of the brain and have been widely applied in many fields, including cognitive performance prediction [6], mental load analysis [7,8], mental fatigue assessment [9], recommendation systems [10], and decoding visual stimuli [11,12].

Recently, the field of EEG-based emotion recognition has attracted considerable interest, spanning Brain-Computer Interaction (BCI) systems, basic emotion theories, and machine learning algorithms [13,14]. In machine learning, an emotion model must be defined in order to specify the objective function of the algorithms. There are mainly two kinds of models [3]: discrete emotion spaces and continuous emotion models. Among these models, the valence-arousal model by Russell [15] has been widely used in emotion recognition because it makes assessment criteria simple to establish. The process of EEG-based emotion recognition also includes feature extraction, feature selection, dimension reduction, and classification algorithms [13,14]. After the original EEG signals are pre-processed, the next task is to extract and select informative features that enhance the discriminative characteristics of the signal. Traditionally, feature extraction and selection are based on neuroscience and cognitive science [16]. For example, frontal asymmetry in Alpha band power for differentiating valence levels has attracted much interest in neuroscience research [17]. Besides neuroscientific assumptions, computational methods from machine learning are also applied for feature extraction and selection in EEG-based emotion recognition [16,18]. Several studies transformed the pre-processed EEG signal into various analysis domains, including the time, frequency, statistical, and spectral domains [19]. It should be noted that no single feature extraction method is suitable for all applications and BCI systems [19]. Although the most informative EEG features for emotion classification are still being researched, power features obtained from different frequency bands are the most widely used. In several studies [20,21,22], the power spectral density (PSD) of EEG signals worked well for identifying emotional states. However, feature extraction usually generates high-dimensional and abundant features. Feature selection and dimension reduction are necessary to avoid over-specification and to reduce the computational burden [3]. Compared to filter and wrapper methods for feature selection, dimension reduction methods, e.g., principal component analysis (PCA) and Fisher linear discriminant (FLD), are more efficient. For further information about feature selection and dimension reduction, we refer the reader to [23,24].

Many machine learning algorithms have been introduced as EEG-based emotion classifiers, such as support vector machine (SVM) [25,26], Naive Bayes (NB) [27], K-nearest neighbors (KNN), linear discriminant analysis (LDA), random forest (RF), and artificial neural networks (ANN). Among these methods, SVM based on spectral features, e.g., PSD, is the most widely applied approach. In [25], SVM was used to classify joy, sadness, anger, and pleasure based on the EEG signals from 12 symmetric electrode pairs. SVM was used in [26] for emotion recognition with accuracies of 32% and 37% in the valence and arousal dimensions, respectively. A Gaussian NB in [27] was used to classify low/high valence and low/high arousal with precisions of 57.6% and 62.0%, respectively.

Recently, deep learning (DL) methods have been introduced for EEG-based emotion classification [28,29]. The studies [30,31] proposed a deep belief network (DBN) to discriminate positive, neutral, and negative emotions. The experimental results show that the DBN performs better than SVM and KNN. In [32], an effective pre-processing method replaces traditional feature extraction, and a hybrid neural network combining a convolutional neural network (CNN) and a recurrent neural network (RNN) is proposed to learn spatial-temporal representations from the pre-processed EEG recordings. The proposed pre-processing strategy improves the emotion recognition accuracies by about 33% and 30% for the valence and arousal dimensions, respectively. In [33], a deep CNN (DCNN) model is introduced to learn discriminative representations from the combined features in the raw time domain, after normalization, and in the frequency domain. The obtained emotion classification accuracies are higher than those of the bagging tree (BT) classifier, traditionally the best performer. The study [34] proposed a hierarchical bidirectional gated recurrent unit (GRU) network with an attention mechanism. The proposed scheme learned more significant representations from EEG sequences, and its accuracies on the cross-subject emotion classification task exceeded those of the long short-term memory (LSTM) network by 4.2% and 4.6% in the valence and arousal dimensions, respectively. Compared to traditional shallow methodologies, DL models remove the signal pre-processing and feature extraction/selection processes and are more suitable for affective representation [35,36]. However, DL methods behave like a black box and cannot reveal the relationship between emotional states and EEG signals [37]. Moreover, training DL networks is computationally expensive and time-consuming, which limits their practical application in real-time emotion recognition [3].

As mentioned above, the field of affective computing has developed considerably over the past several years, including the incorporation of DL methodologies. However, the modeling and recognition of emotional states remain an open problem [13,14]. EEG-based emotion recognition still faces several challenges, including fuzzy boundaries between emotions.

Note that logistic regression (LR) [38] has been widely used as a statistical learning model in pattern recognition and machine learning, as well as in EEG signal processing. In [39], LR trained with EEG power spectral features was used for automatic epilepsy diagnosis. The work in [40] further used the wavelet transform to extract effective representations from non-stationary EEG records and adopted LR as a classifier to identify epileptic and non-epileptic seizures. In [41], regularized linear LR was trained on the raw EEG signal without feature extraction to classify imaginary movements. In [42], LR with L2-penalization to avoid overfitting was trained on spectral power features from intracranial EEG (iEEG) signals for the analysis of the brain's encoding states and memory performance. The study in [43] further incorporated t-distributed stochastic neighbor embedding (tSNE) for dimension reduction of iEEG signals, and the learned L2-regularized LR classifier was used to predict memory encoding success. Despite the above studies, the potential of the LR model for EEG-based emotion recognition is still not fully explored.

In the present study, we systematically introduce the logistic regression (LR) algorithm with a Gaussian kernel and a Laplacian prior [44,45,46] for EEG-based emotion recognition. Different from the LR classifiers above, a Gaussian radial basis function (RBF) kernel is used to enhance data separability in the transformed space [46]. Moreover, the Laplacian prior, which promotes the sparsity of the logistic regressors, acts as L1-regularization [44]. This prior forces many components of the logistic regressors to be zero. The resulting sparse regressors control the complexity of the LR classifier and consequently avoid over-specification in EEG-based emotion recognition. The logistic regression via variable splitting and augmented Lagrangian (LORSAL) algorithm [45] is used to optimize the logistic regressors at lower computational complexity. Thus, the introduced LR method is abbreviated as LORSAL. For an overall evaluation of the LORSAL classifier, various power spectral features and features calculated from combinations of electrodes were used as classifier inputs. The conventional NB, SVM, linear LR with L1-regularization (LR_L1), and linear LR with L2-regularization (LR_L2) classifiers were used for comparison. This paper also presents an investigation of critical frequency bands [47,48] and an analysis of the effect of the extracted features on EEG-based emotion classification.
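To make the roles of the Gaussian kernel and the sparsity-promoting prior concrete, the following is a minimal sketch of a binary, L1-penalized kernel LR. It is not the LORSAL algorithm: the optimizer is a plain proximal gradient method (ISTA) swapped in for brevity, and the function names, the kernel parametrization `exp(-gamma * ||a - b||^2)`, and the values of `gamma`, `lam`, `step`, and `iters` are illustrative assumptions.

```python
import math


def rbf_kernel(a, b, gamma):
    """Gaussian RBF kernel exp(-gamma * ||a - b||^2)."""
    sq_dist = sum((ai - bi) ** 2 for ai, bi in zip(a, b))
    return math.exp(-gamma * sq_dist)


def kernel_features(x, train_x, gamma):
    """Map x to [1, K(x, x_1), ..., K(x, x_L)]: a bias term plus L kernel values."""
    return [1.0] + [rbf_kernel(x, t, gamma) for t in train_x]


def sigmoid(z):
    # Numerically stable form: never exponentiates a large positive number.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def soft_threshold(v, t):
    """Proximal operator of t * |v|; small weights become exactly zero."""
    if v > t:
        return v - t
    if v < -t:
        return v + t
    return 0.0


def fit_sparse_kernel_lr(train_x, train_y, gamma=1.0, lam=0.01, step=0.5, iters=500):
    """Fit binary L1-penalized kernel LR by proximal gradient descent (ISTA)."""
    feats = [kernel_features(x, train_x, gamma) for x in train_x]
    n, dim = len(feats), len(feats[0])
    w = [0.0] * dim
    for _ in range(iters):
        grad = [0.0] * dim
        for h, y in zip(feats, train_y):
            err = sigmoid(sum(wj * hj for wj, hj in zip(w, h))) - y
            for j in range(dim):
                grad[j] += err * h[j] / n
        # Gradient step on the mean log-loss; shrinkage on all weights but the bias.
        w = [w[0] - step * grad[0]] + [
            soft_threshold(w[j] - step * grad[j], step * lam) for j in range(1, dim)
        ]
    return w


def predict(x, train_x, w, gamma=1.0):
    """Return class 1 if the decision score is positive, else class 0."""
    h = kernel_features(x, train_x, gamma)
    return 1 if sum(wj * hj for wj, hj in zip(w, h)) > 0 else 0


if __name__ == "__main__":
    # Toy XOR-like data: not linearly separable in the input space.
    xs = [(0.0, 0.0), (1.0, 1.0), (0.0, 1.0), (1.0, 0.0)]
    ys = [0, 0, 1, 1]
    weights = fit_sparse_kernel_lr(xs, ys, gamma=2.0)
    print([predict(x, xs, weights, gamma=2.0) for x in xs])
```

The toy data are separable only after the kernel mapping, which is the effect attributed to the Gaussian kernel above. Raising `lam` drives more kernel weights exactly to zero, which is the complexity control attributed to the Laplacian prior.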

The rest of this paper is organized as follows. Section 2 presents the materials and methods, including the dataset for emotion analysis using EEG, physiological and video signals (DEAP), the various features extracted from the EEG signals, the introduced LR model with Gaussian kernel and Laplacian prior, and the LORSAL algorithm used to learn the LR regressors. The experimental results are shown in Section 3. The introduced method is evaluated on the task of subject-dependent emotion recognition in the valence and arousal dimensions, and the compared methods include NB, SVM, LR_L1, and LR_L2. Section 4 gives the discussion and a further comparison of LORSAL and the DL methods. Conclusions and future work are presented in Section 5.

4. Discussion

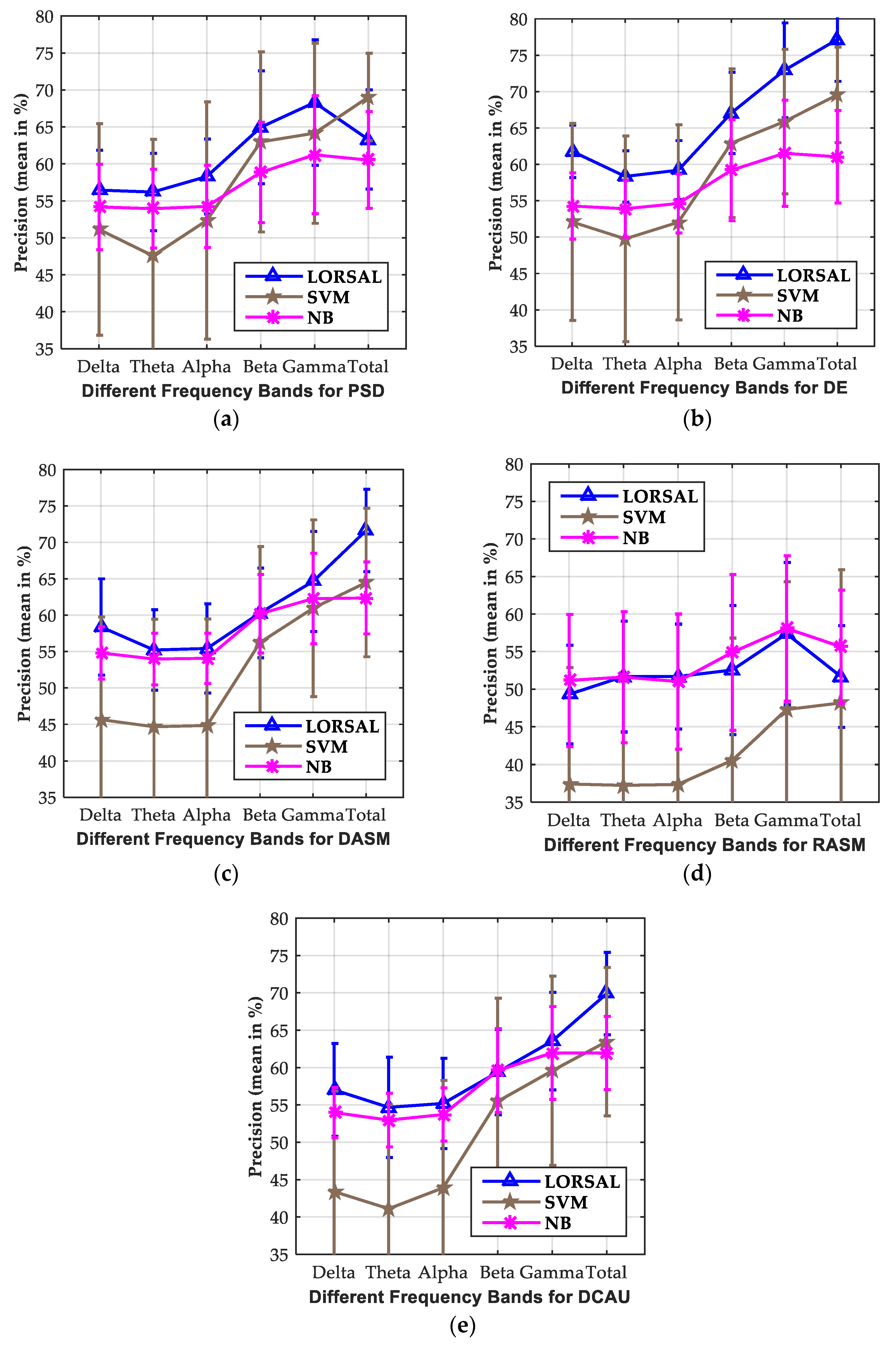

Although the area of affective computing has developed considerably over the past years, EEG-based emotion recognition is still a challenging problem. This paper introduced LR with a Gaussian kernel and a Laplacian prior for EEG-based emotion recognition. The Gaussian kernel enhances the separability of the EEG data in the transformed space, and the Laplacian prior controls the complexity of the learned LR regressor during training. The LORSAL algorithm was introduced to optimize the LR with Gaussian kernel and Laplacian prior because of its low computational complexity. Spectral power features in the frequency domain and features obtained by combining asymmetrical electrodes (PSD, DE, DASM, RASM, and DCAU) were extracted for the Delta, Theta, Alpha, Beta, Gamma, and Total frequency bands using a 256-point STFT on segmented 1 s EEG epochs.
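The band-wise feature extraction summarized above can be sketched for a single channel and a single 1 s epoch as follows. Several details are assumptions that this section does not state: the 128 Hz sampling rate, the Hann window, the band edges, and the forms DE = 0.5 ln(2πe · band power), DASM = DE(left) - DE(right), and RASM = DE(left) / DE(right), which follow definitions commonly used in the EEG literature. A direct DFT is used so that the sketch depends only on the standard library.

```python
import cmath
import math

FS = 128      # assumed sampling rate in Hz (not stated in this section)
N_FFT = 256   # 256-point transform, as in the text
BANDS = {     # assumed band edges in Hz; the paper's exact edges may differ
    "delta": (1, 3), "theta": (4, 7), "alpha": (8, 13),
    "beta": (14, 30), "gamma": (31, 50), "total": (1, 50),
}


def power_spectrum(epoch):
    """Hann-window a 1 s epoch, zero-pad to N_FFT, return |X[k]|^2 / N_FFT."""
    n = len(epoch)
    windowed = [x * (0.5 - 0.5 * math.cos(2 * math.pi * i / (n - 1)))
                for i, x in enumerate(epoch)]
    padded = windowed + [0.0] * (N_FFT - n)
    spectrum = []
    for k in range(N_FFT // 2 + 1):
        xk = sum(v * cmath.exp(-2j * math.pi * k * i / N_FFT)
                 for i, v in enumerate(padded))
        spectrum.append(abs(xk) ** 2 / N_FFT)
    return spectrum


def band_features(epoch):
    """Return {band: (psd, de)} for one channel of one epoch."""
    spec = power_spectrum(epoch)
    hz_per_bin = FS / N_FFT
    feats = {}
    for name, (lo, hi) in BANDS.items():
        bins = [p for k, p in enumerate(spec) if lo <= k * hz_per_bin <= hi]
        psd = sum(bins) / len(bins)  # mean power over the band's bins
        de = 0.5 * math.log(2 * math.pi * math.e * psd)
        feats[name] = (psd, de)
    return feats


def asymmetry(de_left, de_right):
    """Return (DASM, RASM) for one symmetric left/right electrode pair."""
    return de_left - de_right, de_left / de_right


if __name__ == "__main__":
    # Synthetic 10 Hz oscillation standing in for one EEG channel.
    epoch = [math.sin(2 * math.pi * 10 * i / FS) for i in range(FS)]
    for band, (psd, de) in band_features(epoch).items():
        print(f"{band:>5}: psd={psd:.4g} de={de:.3f}")
```

Under these assumptions, 32 channels and 5 bands give 160 PSD or DE values per epoch, while the asymmetry features have one value per electrode pair and band, which is consistent with their lower dimensionality.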

The experiments were conducted on the publicly available DEAP dataset, and the performance of the introduced LORSAL method was compared with that of the NB, SVM, LR_L1, and LR_L2 classifiers. The experimental results showed that LORSAL achieved the best accuracies of 77.17% and 77.03% in the valence and arousal dimensions, respectively, on the DE features from the total frequency bands, while the SVM classifiers obtained the second-highest accuracies of 69.55% and 69.92%. The other evaluation metrics obtained by LORSAL, SVM, and NB were also tabulated in the paper. The introduced LORSAL method also achieved the best Recall (76.79% and 77.03% in valence and arousal, respectively) and F1 (76.90% and 76.47% in valence and arousal, respectively). These experimental results showed the superiority of the introduced LORSAL method for EEG-based emotion recognition over the NB, SVM, LR_L1, and LR_L2 approaches.
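For reference, the evaluation metrics named above can be computed from binary predictions as in the following sketch. It assumes that the high class (HV or HA) is coded as 1 and treated as the positive class; the paper may instead average the metrics over both classes, and the function name is illustrative.

```python
def binary_metrics(y_true, y_pred):
    """Return accuracy, precision, recall, and F1 with class 1 as positive."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    # Guard against empty denominators (e.g., no positive predictions).
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


if __name__ == "__main__":
    labels = [1, 1, 1, 0, 0, 0, 1, 0]       # illustrative ground truth
    predictions = [1, 1, 0, 0, 0, 1, 1, 0]  # illustrative classifier output
    print(binary_metrics(labels, predictions))
```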

This paper also investigated the critical frequency bands for EEG-based emotion recognition. In this study, the informative features are captured from different frequency bands: Delta, Theta, Alpha, Beta, Gamma, and Total. Previous neuroscience studies showed that specific frequency bands are associated with specific brain activities. For example, the EEG Alpha band is related to attentional processing, whereas the Beta band reflects emotional and cognitive processing. The experimental results showed that the LORSAL, SVM, and NB classifiers performed better on the Gamma and Beta frequency bands than on the other bands for different features. The comparison of the Fisher ratio also showed the effectiveness of the Gamma and Beta bands in emotion recognition. These findings are in accordance with previous work on critical band investigation [47,48].
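As an illustration of how a Fisher ratio can rank frequency bands, the sketch below uses the common two-class form, the squared difference of the class means divided by the sum of the class variances. This form is an assumption, since the exact definition used in the experiments is not restated in this section, and the feature values in the usage part are made up for illustration only.

```python
from statistics import fmean, pvariance


def fisher_ratio(class_a, class_b):
    """Two-class Fisher ratio of one scalar feature: (mu_a - mu_b)^2 / (var_a + var_b)."""
    between = (fmean(class_a) - fmean(class_b)) ** 2
    within = pvariance(class_a) + pvariance(class_b)
    if within == 0:
        raise ValueError("both classes have zero variance")
    return between / within


def rank_bands(features_by_band):
    """Sort band names by decreasing Fisher ratio of their (low, high) class samples."""
    scores = {band: fisher_ratio(low, high)
              for band, (low, high) in features_by_band.items()}
    return sorted(scores, key=scores.get, reverse=True)


if __name__ == "__main__":
    # Hypothetical per-epoch feature values for two classes in two bands.
    toy = {
        "alpha": ([1.0, 1.2, 0.9, 1.1], [1.1, 1.0, 1.2, 0.9]),
        "gamma": ([0.5, 0.6, 0.4, 0.5], [1.5, 1.4, 1.6, 1.5]),
    }
    print(rank_bands(toy))
```

A larger ratio means the two classes are better separated along that feature, so bands whose features score higher are the more discriminative ones under this criterion.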

Additionally, the effects of the different features (PSD, DE, DASM, RASM, and DCAU) on the emotion classification results were analyzed in this paper. The experimental results show that the compared approaches, LORSAL, SVM, and NB, obtained better precision on the DE features than on the other features. This shows the effectiveness of the DE features in distinguishing low- and high-frequency energy in EEG sequences. Meanwhile, the DASM and DCAU features yielded relatively good classification accuracies compared to the PSD features. Note that DASM and DCAU have the advantage of lower time consumption, since their dimensionality is lower than that of PSD and DE.

For a more comprehensive analysis, Table 5 shows a comparison of the introduced LORSAL method, the other shallow classifiers, and the deep learning approaches for EEG-based emotion recognition of LV/HV and LA/HA on the DEAP dataset. In single-trial classification by Koelstra et al. [27], the NB classifier, after feature selection using Fisher's linear discriminant, obtained accuracies of 57.6% and 62.0% in the valence and arousal dimensions. In [72], the Bayesian weighted-log-posterior function optimized with the perceptron convergence algorithm gave average precisions of 70.9% and 70.1% for valence and arousal. For within-subject emotion recognition of LV/HV and LA/HA, Atkinson et al. [73] reported accuracies of 73.41% and 73.06% using minimum-Redundancy-Maximum-Relevance (mRMR) for feature selection. Rozgić et al. [74] performed classification using segment-level decision fusion and reported precisions of 76.9% and 69.4% in discriminating LV/HV and LA/HA emotions. In the studies by Zheng et al. [48], the discriminative graph regularized extreme learning machine (GELM) with DE features achieved the highest average accuracy of 69.67% for 4-class classification in the VA emotion space. The introduced LORSAL classifier achieved competitive evaluation metrics for EEG emotion recognition relative to these shallow methods, including the NB, SVM, LR_L1, and LR_L2 methods compared in the experiments.

Recently, deep learning (DL) methods have been used for EEG-based emotion classification [28,29]. In [75], a hybrid DL model combining CNN and RNN learned task-related features from grid-like EEG frames and achieved accuracies of 72.06% and 74.12% for valence and arousal. The DNN and CNN models by Tripathi et al. [76] achieved precisions of 75.78% and 73.12% (DNN) and 81.41% and 73.36% (CNN) along the valence and arousal dimensions, respectively. The classification accuracies for valence and arousal were over 85% using LSTM-RNN by Alhagry et al. [77], and over 87% using 3D-CNN by Salama et al. [78]. More recently, Chen et al. [33,34] have extensively studied the combination of DL models and various features. As tabulated in Table 5, the computer vision CNN (CVCNN), global spatial filter CNN (GSCNN), and global space local time filter CNN (GSLTCNN) [33] showed clear improvements when PSD, raw EEG features, and normalized EEG signals were concatenated. In [34], the proposed hierarchical bidirectional gated recurrent unit (H-ATT-BGRU) network performed better on raw EEG signals than CNN and LSTM, and the accuracies obtained in the valence and arousal dimensions were 67.9% and 66.5% for 2-class cross-subject emotion recognition. For more details about the DL architectures applied to the DEAP data, readers may refer to the literature [33,34,75,76,77,78]. Compared to traditional shallow methods, DL schemes remove the signal pre-processing and feature extraction/selection processes and are more suitable for affective representation [35,36]. However, DL methods behave like a black box and cannot reveal the relationship between emotional states and EEG signals [37].

More importantly, the training of DL networks is extremely time-consuming, which limits their practical application in real-time emotion recognition [3]. Craik et al. [28] stated that, from a practical standpoint, DL methods suffer from very long computation times and vanishing/exploding gradients, and that their practical application requires an additional graphics processing unit (GPU). Roy et al. [29] pointed out that, from a practical point of view, the hyperparameter search of a DL algorithm often takes up a lot of training time. Additionally, Craik et al. [28] and Roy et al. [29] provide comprehensive reviews of recent DL schemes.

To illustrate the time efficiency, the average training times of the compared NB, SVM, LR_L1, LR_L2, and LORSAL methods are shown in Table 6. The average running time for STFT-based feature extraction is 68.15 s. In our experiment, all programs were run on a computer with an Intel Core i5-4590 CPU at 3.30 GHz and 8.00 GB of RAM. LORSAL takes no more than 4 s for training, and its computing time is of the same order as that of the compared traditional shallow methods. As mentioned earlier, the complexity of LORSAL is $O((L+1)^2 K)$ for each quadratic problem, where $L$ is the number of EEG epochs used for training and $K$ is the number of emotion classes. As shown in Table 5, the time consumption of LORSAL on DE, PSD, DASM, RASM, and DCAU (with dimensions 160, 160, 70, 70, and 55, respectively) is nearly the same. Given limited computational resources, or on portable devices, the introduced LORSAL algorithm has higher time efficiency than DL methods and can deliver better performance than the compared shallow methods.
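The near-identical training times across feature types follow from the kernel mapping, as the short derivation below sketches. The notation is an assumption made for illustration: $d$ is the feature dimension, $h(\cdot)$ the kernel feature vector, $\sigma$ the kernel width, and $\omega$ the matrix of logistic regressors.

```latex
% x_i \in \mathbb{R}^{d}: feature vector of the i-th training epoch (d = 160, 70, or 55)
h(x_i) = \bigl[\, 1,\; K(x_i, x_1),\; \dots,\; K(x_i, x_L) \,\bigr]^{\top} \in \mathbb{R}^{L+1},
\qquad
K(x_i, x_j) = \exp\!\left( -\frac{\lVert x_i - x_j \rVert^{2}}{2\sigma^{2}} \right)

% The regressors act on h(x_i), not on x_i, so their size depends on L and K only:
\omega \in \mathbb{R}^{(L+1) \times K}
\quad\Longrightarrow\quad
\text{cost per quadratic problem} = O\!\bigl( (L+1)^{2} K \bigr), \ \text{independent of } d
```

Under this reading, $d$ enters only when the kernel matrix is formed, which costs $O(L^{2} d)$ once, so changing the feature type changes the optimization cost very little.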