In this section, we first survey existing low-power technologies and methods and then state our assumption on improving energy efficiency.

2.1. Related Work

We searched combinations of keywords such as ‘embedded system AND low power’, ‘embedded system energy saving’, ‘embedded system extend battery life’, and ‘embedded system AND energy efficient’ to retrieve peer-reviewed journal articles, conference proceedings, book chapters, and reports from the ACM Digital Library, IEEE Xplore, Engineering Village, and Web of Science databases. We also used Google Scholar as a complementary search engine to find other related documents, including those not formally published. Research on saving energy and extending battery life in embedded systems can be roughly divided into two directions: increasing the energy harvested and decreasing the energy consumed.

Energy harvesting is a promising technique that takes advantage of environmental or other energy sources such as solar, wind, thermal gradients, and radio-frequency (RF) radiation. Chandrakasan et al. [8] stated that the ultimate goal of micro-power systems is to power microsystem devices using energy harvesting techniques such as vibration-to-electric conversion or through wireless power transmission. Gollakota et al. [9] pointed out that, with the development of computers, it is possible to power small computing devices using only incident RF signals. Gudan et al. [10] presented a system for measuring ambient RF energy in the 2.4 GHz ISM band and suggested that there is enough energy to support a low-duty-cycle wireless sensor node system. Li et al. [11] proposed SolarCore, a solar-energy-driven power management scheme for multi-core architectures, which autonomously achieves the optimal operating condition of solar panels under various environmental conditions with a high green energy utilization of 82% on average. Raghunathan et al. [12] presented the design, implementation, and performance evaluation of Heliomote, a prototype that addresses the key issues and tradeoffs that arise in the design of solar energy harvesting wireless embedded systems.

Dynamic voltage and frequency scaling (DVFS), power mode management (also known as dynamic power management), and software-layer low-power optimization techniques are the most widely used power-saving approaches. Among these, dynamically scaling the processor frequency is widely used to decrease power consumption. Hua et al. [13] studied the optimal number and values of voltage levels for achieving energy efficiency with minimal area and power overhead from voltage regulators and voltage transitions. Gheorghita et al. [14] proposed a technique for saving energy in embedded systems by using a lower voltage when less computation power is required.

Choi et al. [15] proposed a DVFS technique that achieves a precise energy-performance tradeoff by using runtime statistics on external memory accesses to choose the optimal CPU clock frequency and the corresponding minimum voltage level, based on the ratio of on-chip computation time to off-chip access time. Saewong et al. [16] proposed four DVFS techniques for saving energy in embedded systems: using a single frequency for the entire execution; using slack to save extra energy in low-priority tasks, both of which are suitable for systems where the overhead of DVFS is very high; using a different optimal frequency for every task; and minimizing the energy consumption by monitoring actual execution times; however, these are unsuitable for online use because of their high complexity. Quan et al. [17] proposed two DVFS algorithms for saving energy in real-time embedded systems: the first finds the minimum constant speed and shuts the processor down when idle, and the second produces both a constant speed and a schedule of variable voltages to minimize the energy. Kan et al. [18] proposed a DVFS technique for saving energy in soft real-time embedded systems by choosing the available frequency that is closest to the optimal frequency for a task.
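The frequency-selection idea described above can be illustrated with a minimal sketch. It assumes a simplified model that is not taken from the cited papers: the ideal frequency of a task is its cycle count divided by its deadline, and the processor offers only a small set of discrete frequencies. All names and numbers are hypothetical.

```python
from typing import Sequence


def ideal_frequency_hz(cycles: int, deadline_s: float) -> float:
    """Lowest frequency that finishes `cycles` within `deadline_s` (assumed model)."""
    if deadline_s <= 0:
        raise ValueError("deadline must be positive")
    return cycles / deadline_s


def closest_available(ideal_hz: float, available_hz: Sequence[int]) -> int:
    """Pick the supported frequency nearest to the ideal one.

    This may be below the ideal value, which is only acceptable when
    deadlines are soft.
    """
    return min(available_hz, key=lambda f: abs(f - ideal_hz))


def lowest_feasible(ideal_hz: float, available_hz: Sequence[int]) -> int:
    """Pick the lowest supported frequency that still meets the deadline.

    Falls back to the maximum frequency if no supported value is high enough.
    """
    feasible = [f for f in available_hz if f >= ideal_hz]
    return min(feasible) if feasible else max(available_hz)


# Hypothetical frequency table and task parameters.
AVAILABLE_HZ = [48_000_000, 96_000_000, 168_000_000]
ideal = ideal_frequency_hz(cycles=7_500_000, deadline_s=0.0625)
soft_choice = closest_available(ideal, AVAILABLE_HZ)
hard_choice = lowest_feasible(ideal, AVAILABLE_HZ)
```

The two selectors differ only in how they treat a deadline miss: the nearest-frequency rule trades occasional lateness for lower power, while the feasible rule never runs slower than the task requires.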

Embedded SoCs usually provide several operating modes or power modes that can be used to save energy. Different modes draw different currents and take different amounts of time to return to the normal mode. Generally, modes that consume less energy take longer to return to the normal mode [19]. A large amount of research has been performed to find the balance between energy savings and real-time performance. Li et al. [20] proposed a method for selecting power modes for the optimal power management of embedded systems under timing and power constraints. Hoeller et al. [21] proposed an interface for the power management of hardware and software components. Huang et al. [22] proposed an energy-saving technique that adaptively controls the power mode of the embedded system according to the historical arrival of tasks. Bhatti et al. [23] presented an online framework that integrates DVFS with a power-mode management (PMM) scheme to save energy in embedded systems. Niu et al. [24] proposed a technique to save both leakage and dynamic energy in embedded systems by integrating DVFS and PMM. In 2013, Liang et al. [25] proposed a method to save energy in user equipment, whereby the user equipment switches to sleep mode during its inactive periods and wakes up when required. They also discussed a strategy to prolong the sleep period of the sensors for better energy efficiency.
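The tradeoff between a mode's power draw and its wake-up cost can be sketched as follows. This is an illustrative model under stated assumptions, not the method of any cited work: each mode has a fixed power, a wake-up time, and a wake-up energy, and the length of the idle period is known in advance. The mode table and all numbers are hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class PowerMode:
    name: str
    power_mw: float          # power drawn while staying in the mode
    wakeup_ms: float         # time needed to return to the normal mode
    wakeup_energy_uj: float  # energy spent on the transition back


def idle_energy_uj(mode: PowerMode, idle_ms: float) -> float:
    """Energy spent over one idle period (mW * ms = uJ).

    Assumes the system stays in the mode until it must start waking up.
    """
    return mode.power_mw * (idle_ms - mode.wakeup_ms) + mode.wakeup_energy_uj


def select_mode(modes: Sequence[PowerMode], idle_ms: float,
                max_wakeup_ms: float) -> PowerMode:
    """Choose the cheapest mode whose wake-up fits the idle period and latency bound."""
    limit = min(idle_ms, max_wakeup_ms)
    candidates = [m for m in modes if m.wakeup_ms <= limit]
    if not candidates:
        raise ValueError("no mode satisfies the wake-up constraint")
    return min(candidates, key=lambda m: idle_energy_uj(m, idle_ms))


# Hypothetical mode table: deeper modes draw less power but wake up more slowly.
MODES = [
    PowerMode("idle", power_mw=30.0, wakeup_ms=0.0, wakeup_energy_uj=0.0),
    PowerMode("sleep", power_mw=3.0, wakeup_ms=0.5, wakeup_energy_uj=20.0),
    PowerMode("deep_sleep", power_mw=0.05, wakeup_ms=5.0, wakeup_energy_uj=400.0),
]
```

With these numbers, a short idle period favors the shallow mode because the wake-up energy of a deeper mode is not recovered, while a long idle period favors the deepest mode that the latency bound allows.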

Ahmad et al. [26] assumed that different ARM instructions have similar power consumption and proposed a framework for static-analysis-based energy estimation of smartphone applications. Chen [27] tested different data types, operators, and function types on the ARM926EJS and found that implementing the same function with different instructions can result in different energy consumption. In addition, modern compilers such as the GNU C Compiler (GCC) [28] and ARM Compiler (ARMCC) [29] provide optimization options with which the compiler attempts to optimize for small code size or high performance.

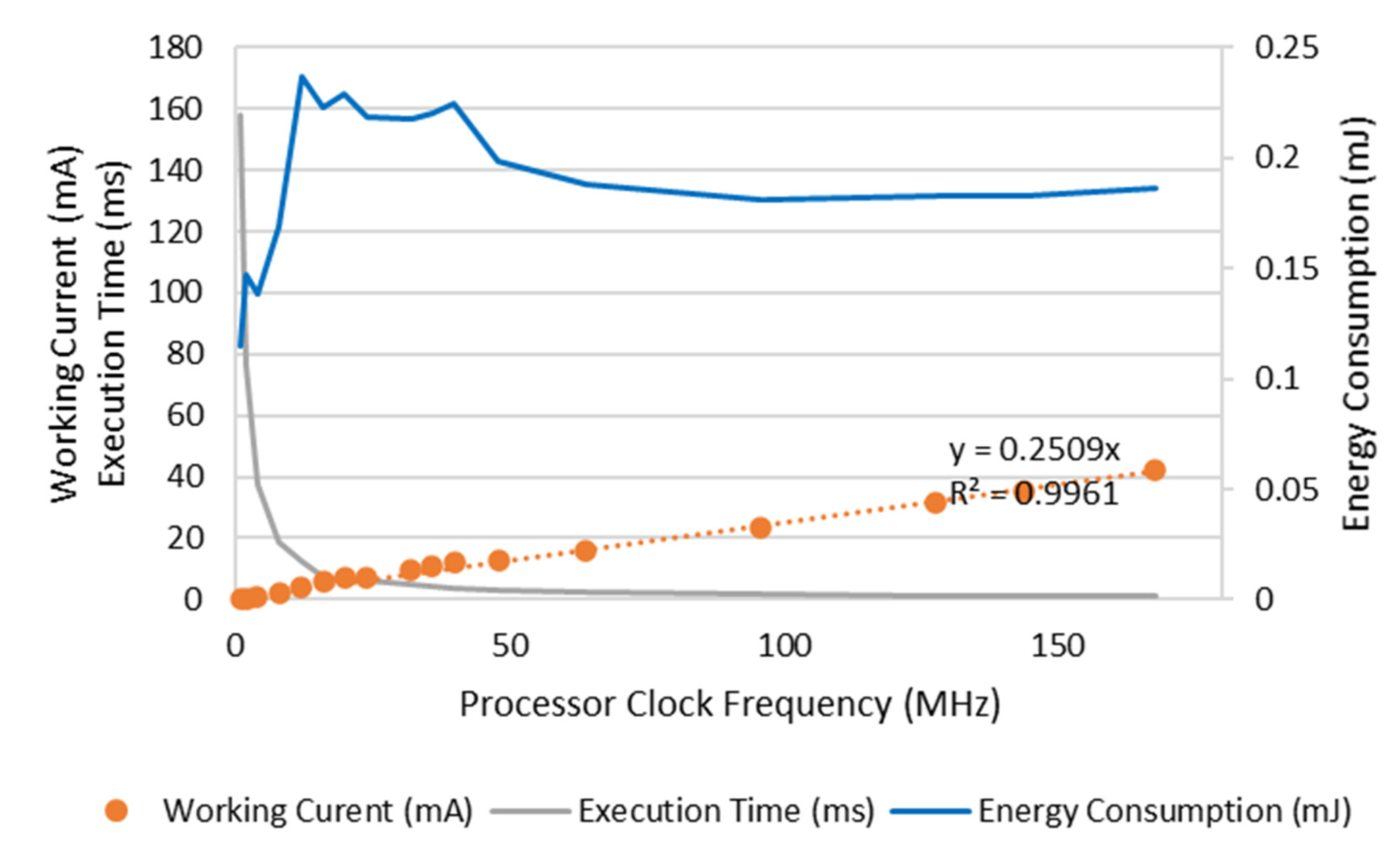

However, lowering power consumption does not necessarily mean saving energy. Chung et al. [30] studied the relationship between energy consumption and program execution efficiency and pointed out that, in some situations, the growth rate of the power consumption is not always as high as that of the program execution efficiency. In such situations, the energy consumption can be lowered by improving the program execution efficiency. Dzhagaryan et al. [31] studied the relationship between the processor frequency and the energy consumed by program execution and pointed out that, as the processor frequency increases, the power consumed by the processor increases while the execution time of a program decreases. The energy consumed by program execution can therefore increase or decrease, depending on the processor frequency. They proposed that the most energy-efficient processor clock frequency is below the designed maximum frequency and that the relationship between energy consumption and frequency follows a U-shaped curve. In addition, because voltage transitions can take on the order of tens of microseconds [32], Jiangwei et al. [33], Pinheiro et al. [34], and Kuehn et al. [35] pointed out that processor frequency scaling can itself introduce additional energy consumption through the transition time overhead.
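A U-shaped energy curve and the effect of switching overhead can be reproduced with a small sketch. The model is a common textbook simplification and an assumption here, not the measurement method of the cited studies: power is a constant static term plus a dynamic term proportional to the cube of the frequency, execution time is the cycle count divided by the frequency, and each frequency change costs a fixed amount of energy. All parameter values are hypothetical.

```python
from typing import Optional, Sequence


def execution_energy_j(freq_hz: int, cycles: int, static_w: float, k: float) -> float:
    """Energy to run `cycles` at `freq_hz` under P(f) = static_w + k * f**3."""
    time_s = cycles / freq_hz
    power_w = static_w + k * freq_hz ** 3
    return power_w * time_s


def best_frequency(freqs_hz: Sequence[int], cycles: int, static_w: float, k: float,
                   current_hz: Optional[int] = None,
                   switch_energy_j: float = 0.0) -> int:
    """Frequency with the lowest total energy, charging a fixed cost for switching."""
    def total(f: int) -> float:
        energy = execution_energy_j(f, cycles, static_w, k)
        if current_hz is not None and f != current_hz:
            energy += switch_energy_j  # assumed fixed transition overhead
        return energy
    return min(freqs_hz, key=total)


# Hypothetical parameters: 50 mW static power, 200 mW dynamic power at 200 MHz.
FREQS_HZ = [25_000_000, 50_000_000, 100_000_000, 150_000_000, 200_000_000]
STATIC_W = 0.05
K = 0.2 / (200_000_000 ** 3)
CYCLES = 100_000_000

no_overhead = best_frequency(FREQS_HZ, CYCLES, STATIC_W, K)
with_overhead = best_frequency(FREQS_HZ, CYCLES, STATIC_W, K,
                               current_hz=150_000_000, switch_energy_j=0.02)
```

Under these assumptions, static energy dominates at low frequencies and dynamic energy at high frequencies, so the minimum lies at an intermediate frequency. Once a switching cost is charged, staying at the current frequency can be cheaper than moving to the nominally optimal one.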

These studies take the overhead introduced by applying low-power technologies into account, but they focus on designing low-power strategies from the perspective of system performance, especially system capacity and real-time performance. We believe that the overhead is not only a matter of system performance and energy consumption; it also affects the DVFS strategy itself, because it can be significant enough that it cannot be ignored. In addition, we want to find a convenient way to determine when and which low-power technique has the best energy efficiency under specified circumstances.