Age Prediction from Low Resolution, Dual-Energy X-ray Images Using Convolutional Neural Networks

Abstract

1. Introduction

- We built a dataset of low-resolution dual-energy X-ray absorptiometry (DXA) images annotated by specialists. To the best of our knowledge, it is one of the first datasets containing whole-body DXA images.

- We proposed a CNN-based age prediction framework for low-resolution, whole-body, dual-energy X-ray absorptiometry images.

- Our experiments demonstrated that age can be predicted from low-resolution images with a mean absolute error of about 15 months, which suggests that an even lower radiation dose could potentially be used during data acquisition.

2. Materials and Methods

2.1. Data Acquisition

2.2. Dataset

Data Preparation

2.3. Tested Model and Training Procedure

3. Results

3.1. Experiment I—DXA Dataset

3.2. Experiment II—augDXA Dataset

3.3. Experiment III—RSNA 2017 Challenge Dataset and Attention Model

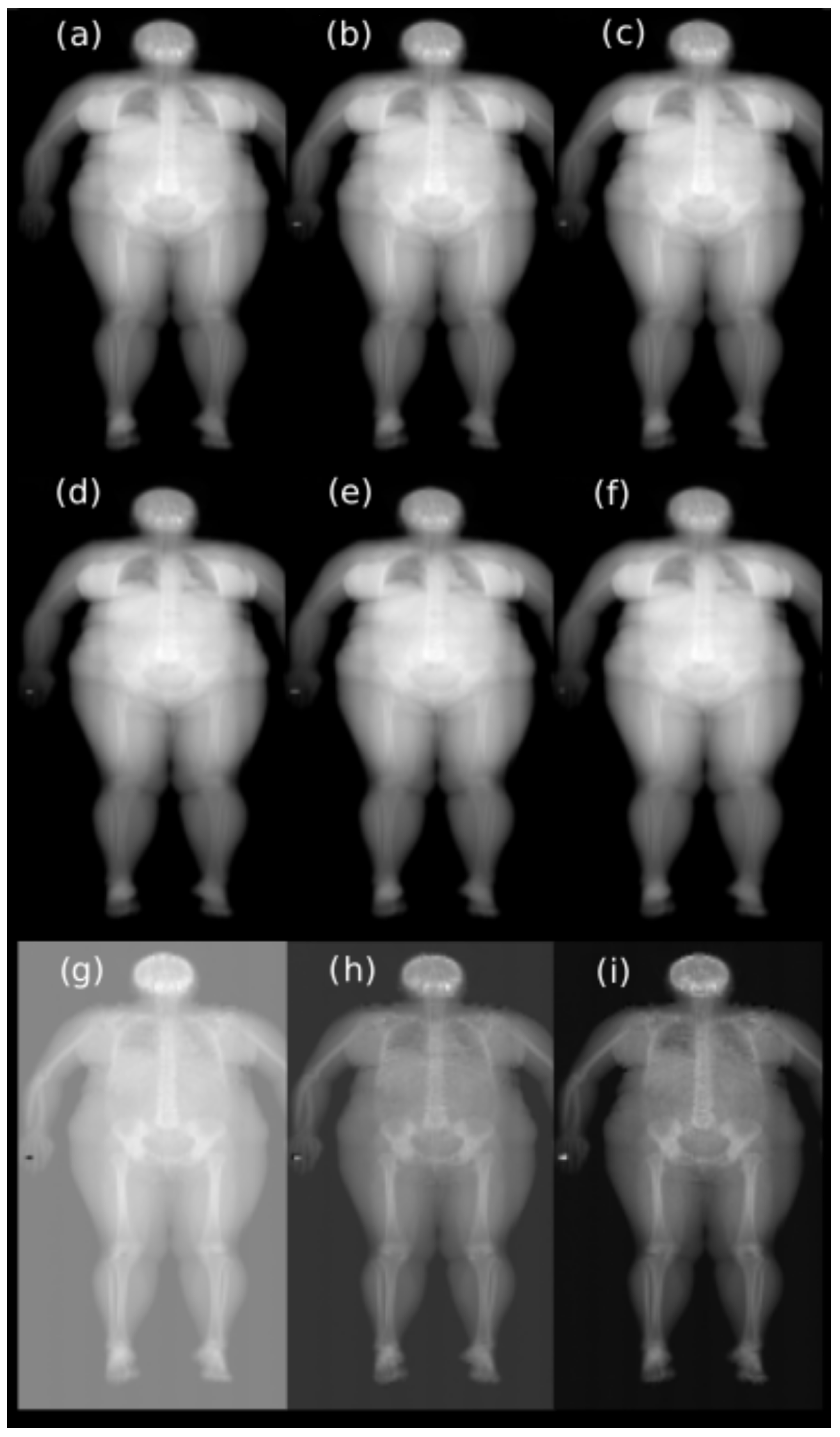

3.4. Experiment IV—The Single Energy X-ray Images

3.5. Vanilla Gradient

4. Discussion

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Meaning |
|---|---|
| DXA | dual-energy X-ray absorptiometry |
| MAE | mean absolute error |
| CNN | convolutional neural network |
| ROI | regions of interest |
| BMD | bone mineral density |
| BMC | bone mineral content |
| RSNA | Radiological Society of North America |

References

1. Briot, K. DXA Parameters: Beyond Bone Mineral Density. Jt. Bone Spine 2013, 80, 265–269.

2. Heppe, D.H.M.; Taal, H.R.; Ernst, G.D.S.; Akker, E.L.T.V.D.; Lequin, M.M.H.; Hokken-Koelega, A.C.S.; Geelhoed, J.J.M.; Jaddoe, V.W.V. Bone Age Assessment by Dual-Energy X-ray Absorptiometry in Children: An Alternative for X-ray? Br. J. Radiol. 2012, 85, 114–120.

3. Castillo, R.F.; Ruiz, M.D.C.L. Assessment of Age and Sex by Means of DXA Bone Densitometry: Application in Forensic Anthropology. Forensic Sci. Int. 2011, 209, 53–58.

4. Navega, D.; Coelho, J.D.O.; Cunha, E.; Curate, F. DXAGE: A New Method for Age at Death Estimation Based on Femoral Bone Mineral Density and Artificial Neural Networks. J. Forensic Sci. 2017, 63, 497–503.

5. Lee, H.; Tajmir, S.; Lee, J.; Zissen, M.; Yeshiwas, B.A.; Alkasab, T.K.; Choy, G.; Do, S. Fully Automated Deep Learning System for Bone Age Assessment. J. Digit. Imaging 2017, 30, 427–441.

6. Iglovikov, V.I.; Rakhlin, A.; Kalinin, A.A.; Shvets, A.A. Paediatric bone age assessment using deep convolutional neural networks. In Deep Learning in Medical Image Analysis and Multimodal Learning for Clinical Decision Support; Springer: Cham, Switzerland, 2018; pp. 300–308.

7. Karargyris, A.; Kashyap, S.; Wu, J.T.; Sharma, A.; Moradi, M.; Syeda-Mahmood, T. Age prediction using a large chest X-ray dataset. In Proceedings of the Medical Imaging 2019: Computer-Aided Diagnosis, San Diego, CA, USA, 16–21 February 2019; Volume 10950.

8. Xue, Z.; Antani, S.; Long, L.R.; Thoma, G.R. Using deep learning for detecting gender in adult chest radiographs. In Proceedings of the Medical Imaging 2018: Imaging Informatics for Healthcare, Research, and Applications, Houston, TX, USA, 10–15 February 2018; Volume 10579.

9. Xue, Z.; Rajaraman, S.; Long, R.; Antani, S.; Thoma, G. Gender Detection from Spine X-ray Images Using Deep Learning. In Proceedings of the 2018 IEEE 31st International Symposium on Computer-Based Medical Systems (CBMS), Karlstad, Sweden, 18–21 June 2018; pp. 54–58.

10. Marouf, M.; Siddiqi, R.; Bashir, F.; Vohra, B. Automated Hand X-ray Based Gender Classification and Bone Age Assessment Using Convolutional Neural Network. In Proceedings of the 2020 3rd International Conference on Computing, Mathematics and Engineering Technologies (iCoMET), Sukkur, Pakistan, 29–30 January 2020; pp. 1–5.

11. Kaloi, M.A.; He, K. Child Gender Determination with Convolutional Neural Networks on Hand Radio-Graphs. arXiv 2018, arXiv:1811.05180.

12. Nguyen, H.; Soohyung, K. Automatic Whole-body Bone Age Assessment Using Deep Hierarchical Features. arXiv 2019, arXiv:1901.10237.

13. Castillo, J.; Tong, Y.; Zhao, J.; Zhu, F. RSNA Bone-age Detection using Transfer Learning and Attention Mapping. 2018. Available online: http://noiselab.ucsd.edu/ECE228_2018/Reports/Report6.pdf (accessed on 7 April 2021).

14. Liu, B.; Zhang, Y.; Chu, M.; Bai, X.; Zhou, F. Bone Age Assessment Based on Rank-Monotonicity Enhanced Ranking CNN. IEEE Access 2019, 7, 120976–120983.

15. Salim, I.; Ben Hamza, A. Ridge Regression Neural Network for Pediatric Bone Age Assessment. Multimed. Tools Appl. 2021, 80, 30461–30478.

16. Satoh, M. Bone Age: Assessment Methods and Clinical Applications. Clin. Pediatr. Endocrinol. 2015, 24, 143–152.

17. Sandler, M.; Howard, A.; Zhu, M.; Zhmoginov, A.; Chen, L.C. MobileNetV2: Inverted Residuals and Linear Bottlenecks. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–22 June 2018.

18. Simonyan, K.; Zisserman, A. Very Deep Convolutional Networks for Large-Scale Image Recognition. arXiv 2014, arXiv:1409.1556.

19. Szegedy, C.; Vanhoucke, V.; Ioffe, S.; Shlens, J.; Wojna, Z. Rethinking the Inception Architecture for Computer Vision. In Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016.

20. He, K.; Zhang, X.; Ren, S.; Sun, J. Identity Mappings in Deep Residual Networks. arXiv 2016, arXiv:1603.05027.

21. Szegedy, C.; Ioffe, S.; Vanhoucke, V.; Alemi, A. Inception-v4, Inception-ResNet and the Impact of Residual Connections on Learning. In Proceedings of the AAAI Conference on Artificial Intelligence, San Francisco, CA, USA, 4–9 February 2017.

22. Halabi, S.S.; Prevedello, L.M.; Kalpathy-Cramer, J.; Mamonov, A.B.; Bilbily, A.; Cicero, M.; Pan, I.; Pereira, L.A.; Sousa, R.T.; Abdala, N.; et al. The RSNA Pediatric Bone Age Machine Learning Challenge. Radiology 2019, 290, 498–503.

23. Géron, A. Hands-On Machine Learning with Scikit-Learn, Keras and TensorFlow: Concepts, Tools, and Techniques to Build Intelligent Systems, 2nd ed.; O’Reilly: Sebastopol, CA, USA, 2019.

24. Sicara/Tf-Explain, GitHub. Available online: github.com/sicara/tf-explain (accessed on 7 April 2021).

25. Williams, G.R. Actions of thyroid hormones in bone. Endokrynol. Pol. 2009, 60, 380–388.

26. Hill, R.J.; Brookes, D.S.; Lewindon, P.J.; Withers, G.D.; Ee, L.C.; Connor, F.L.; Cleghorn, G.J.; Davies, P.S.W. Bone Health in Children with Inflammatory Bowel Disease: Adjusting for Bone Age. J. Pediatr. Gastroenterol. Nutr. 2009, 48, 538–543.

| Adapted Model | Total Parameters | Trainable Parameters |
|---|---|---|
| MobileNetV2 DW | 2,443,841 | 185,857 |
| MobileNetV2 GA | 2,422,081 | 164,097 |
| VGG16 DW | 14,789,185 | 74,497 |
| InceptionV3 DW | 22,075,425 | 272,641 |
| ResNet50V2 DW | 23,862,017 | 297,217 |
| InceptionResNetV2 DW | 54,541,281 | 204,545 |
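The gap between the total and trainable counts is the number of parameters that stay fixed during training. The short Python sketch below computes it from the table; reading this fixed part as a frozen, pretrained backbone is our assumption, since the table itself does not say which layers were frozen.

```python
# Parameter counts copied from the table above: (total, trainable).
PARAMS = {
    "MobileNetV2 DW": (2_443_841, 185_857),
    "MobileNetV2 GA": (2_422_081, 164_097),
    "VGG16 DW": (14_789_185, 74_497),
    "InceptionV3 DW": (22_075_425, 272_641),
    "ResNet50V2 DW": (23_862_017, 297_217),
    "InceptionResNetV2 DW": (54_541_281, 204_545),
}


def non_trainable(total: int, trainable: int) -> int:
    """Parameters not updated during training (total minus trainable)."""
    return total - trainable


def trainable_share(total: int, trainable: int) -> float:
    """Share of all parameters that are trained, in percent."""
    return 100.0 * trainable / total


for name, (total, trainable) in PARAMS.items():
    print(f"{name:22s} fixed={non_trainable(total, trainable):>11,d} "
          f"trainable={trainable_share(total, trainable):5.2f}%")
```

Both MobileNetV2 variants end up with the same fixed count (2,257,984), which is consistent with one shared backbone topped by two different heads, and in every model fewer than 8% of the parameters are trainable.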

| Fold | MobileNetV2 DW | MobileNetV2 GA | VGG16 DW | InceptionV3 DW | ResNet50V2 DW | InceptionResNetV2 DW |
|---|---|---|---|---|---|---|
| 1 | 13.39 ± 0.11 | 15.70 ± 0.09 | 15.64 ± 0.10 | 15.63 ± 0.07 | 14.67 ± 0.16 | 15.27 ± 0.10 |
| 2 | 16.29 ± 0.09 | 17.73 ± 0.06 | 16.25 ± 0.05 | 16.72 ± 0.13 | 15.72 ± 0.15 | 16.99 ± 0.14 |
| 3 | 16.58 ± 0.07 | 17.58 ± 0.42 | 16.49 ± 0.13 | 17.14 ± 0.37 | 16.03 ± 0.10 | 17.56 ± 0.08 |
| 4 | 14.85 ± 0.01 | 16.31 ± 0.07 | 16.74 ± 0.05 | 17.09 ± 0.03 | 15.95 ± 0.10 | 17.69 ± 0.12 |
| 5 | 16.67 ± 0.07 | 18.11 ± 0.07 | 18.30 ± 0.03 | 18.80 ± 0.065 | 16.39 ± 0.11 | 18.17 ± 0.07 |
| Mean | 15.56 ± 1.26 | 17.08 ± 0.92 | 16.69 ± 0.89 | 17.09 ± 1.04 | 15.75 ± 0.58 | 17.14 ± 1.00 |

MAE [months]: mean and standard deviation.
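The last row of this table summarizes the five folds. The Python sketch below recomputes it from the rounded per-fold means. We assume the reported spread is the population standard deviation (`statistics.pstdev`) of the fold means: that choice reproduces the reported row to within about 0.02 months, presumably because the authors worked from unrounded values, whereas the sample standard deviation (`statistics.stdev`) would give clearly larger values (about 1.42 instead of 1.26 for MobileNetV2 DW).

```python
from statistics import mean, pstdev, stdev

# Per-fold mean MAE [months], copied from the table above.
FOLD_MAE = {
    "MobileNetV2 DW": [13.39, 16.29, 16.58, 14.85, 16.67],
    "MobileNetV2 GA": [15.70, 17.73, 17.58, 16.31, 18.11],
    "VGG16 DW": [15.64, 16.25, 16.49, 16.74, 18.30],
    "InceptionV3 DW": [15.63, 16.72, 17.14, 17.09, 18.80],
    "ResNet50V2 DW": [14.67, 15.72, 16.03, 15.95, 16.39],
    "InceptionResNetV2 DW": [15.27, 16.99, 17.56, 17.69, 18.17],
}

# Summary row as reported in the table: (mean, SD).
REPORTED = {
    "MobileNetV2 DW": (15.56, 1.26),
    "MobileNetV2 GA": (17.08, 0.92),
    "VGG16 DW": (16.69, 0.89),
    "InceptionV3 DW": (17.09, 1.04),
    "ResNet50V2 DW": (15.75, 0.58),
    "InceptionResNetV2 DW": (17.14, 1.00),
}


def summarize(values):
    """Return (mean, population SD, sample SD) of the per-fold MAEs."""
    return mean(values), pstdev(values), stdev(values)


for name, values in FOLD_MAE.items():
    m, p_sd, s_sd = summarize(values)
    rep_m, rep_sd = REPORTED[name]
    print(f"{name:22s} {m:.2f} +/- {p_sd:.2f} (sample SD {s_sd:.2f}); "
          f"reported {rep_m:.2f} +/- {rep_sd:.2f}")
```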

| Fold | MAE [Months]: Mean and SD |
|---|---|
| 1 | 13.77 ± 0.12 |
| 2 | 15.82 ± 0.09 |
| 3 | 15.75 ± 0.11 |
| 4 | 14.30 ± 0.04 |
| 5 | 15.47 ± 0.09 |
| Mean | 15.02 ± 0.83 |
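Because the same five folds appear for MobileNetV2 DW on the DXA and augDXA datasets, the effect of augmentation can be checked fold by fold. The sketch below does this in Python, assuming (the tables do not state it explicitly) that fold k refers to the same data split in both experiments.

```python
from statistics import mean

# Per-fold mean MAE [months] of MobileNetV2 DW, copied from the DXA and augDXA tables.
DXA = [13.39, 16.29, 16.58, 14.85, 16.67]
AUG_DXA = [13.77, 15.82, 15.75, 14.30, 15.47]


def paired_differences(before, after):
    """Fold-wise change in MAE; negative values mean augmentation lowered the error."""
    if len(before) != len(after):
        raise ValueError("both experiments must report the same folds")
    return [a - b for b, a in zip(before, after)]


diffs = paired_differences(DXA, AUG_DXA)
improved = sum(d < 0 for d in diffs)
print([round(d, 2) for d in diffs])
print(f"MAE lower with augDXA in {improved} of {len(diffs)} folds; "
      f"mean change {mean(diffs):+.3f} months")
```

Under that assumption, augmentation lowers the MAE in four of the five folds (by 0.534 months on average); fold 1 is the only one where the error rises, by about 0.38 months.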

Summary

| Model | Dataset | MAE [Months]: Mean and SD |
|---|---|---|
| MobileNetV2 DW | DXA | 15.56 ± 1.26 |
| MobileNetV2 DW | augDXA | 15.02 ± 0.83 |
| MobileNetV2 DW | RSNA 2017 | 16.29 ± 0.19 |
| Attention Model | DXA | 19.48 ± 0.77 |
| Attention Model | augDXA | 17.05 ± 0.55 |
| Attention Model | RSNA 2017 | 11.45 [13] |
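The DXA and augDXA rows allow a direct comparison of how much augmentation helps each model; the RSNA 2017 value for the attention model is cited from [13] rather than measured in the same way, so it is left out. The Python sketch below computes the absolute and relative MAE reduction from the summary values.

```python
# Mean MAE [months] on the DXA and augDXA datasets, copied from the summary table.
SUMMARY = {
    "MobileNetV2 DW": {"DXA": 15.56, "augDXA": 15.02},
    "Attention Model": {"DXA": 19.48, "augDXA": 17.05},
}


def relative_reduction(before: float, after: float) -> float:
    """Percentage by which the MAE drops when moving from `before` to `after`."""
    return 100.0 * (before - after) / before


for model, mae in SUMMARY.items():
    drop = mae["DXA"] - mae["augDXA"]
    pct = relative_reduction(mae["DXA"], mae["augDXA"])
    print(f"{model:16s} MAE lower by {drop:.2f} months ({pct:.1f}%)")
```

Augmentation reduces the error of the attention model much more in relative terms (about 12.5% versus 3.5%), yet MobileNetV2 DW still has the lower MAE on both DXA datasets.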

| Images | MAE [Months]: Mean and SD |
|---|---|
| DXA | 15.56 ± 1.26 |
| High energy | 15.78 ± 1.13 |
| Low energy | 15.43 ± 1.04 |
| High air | 15.93 ± 1.15 |
| Low air | 15.61 ± 1.27 |
| High tissue | 15.74 ± 1.13 |
| Low tissue | 15.52 ± 1.29 |
| High bone | 15.89 ± 1.04 |
| Low bone | 15.47 ± 1.04 |
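A quick consistency check on this table: the Python sketch below compares each low-energy image type with its high-energy counterpart and sets the spread of all means against the fold-to-fold standard deviations. Interpreting the result as "no clear difference between image types" is our reading of the numbers, not a statistical test.

```python
# (mean, SD) of MAE [months] per image type, copied from the table above.
RESULTS = {
    "DXA": (15.56, 1.26),
    "High energy": (15.78, 1.13),
    "Low energy": (15.43, 1.04),
    "High air": (15.93, 1.15),
    "Low air": (15.61, 1.27),
    "High tissue": (15.74, 1.13),
    "Low tissue": (15.52, 1.29),
    "High bone": (15.89, 1.04),
    "Low bone": (15.47, 1.04),
}


def low_beats_high(results):
    """For each component, True if the low-energy image has the lower mean MAE."""
    out = {}
    for name, (mae, _) in results.items():
        if name.startswith("Low "):
            part = name[len("Low "):]
            out[part] = mae < results["High " + part][0]
    return out


means = [m for m, _ in RESULTS.values()]
spread = max(means) - min(means)
smallest_sd = min(sd for _, sd in RESULTS.values())
print(low_beats_high(RESULTS))
print(f"range of means {spread:.2f} months vs smallest SD {smallest_sd:.2f} months")
```

Every low-energy variant scores slightly better than its high-energy counterpart, but the whole range of means (0.50 months) is about half of the smallest standard deviation (1.04 months), so these differences should be read with caution.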

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Janczyk, K.; Rumiński, J.; Neumann, T.; Głowacka, N.; Wiśniewski, P. Age Prediction from Low Resolution, Dual-Energy X-ray Images Using Convolutional Neural Networks. Appl. Sci. 2022, 12, 6608. https://doi.org/10.3390/app12136608
